Aliyun Bailian (2): The Qwen LLM API in Production

Picking a Qwen variant by latency and cost, function calling done right, JSON mode without tears, and the enable_thinking + streaming requirement that the docs gloss over.

This article in the series covers most of the production wins. While the other models are interesting, the LLMs are what every product I’ve shipped on Bailian calls every minute of every day. The official Qwen API reference is dense and complete; this article is the readable companion that guides you through it.

Pick the right Qwen variant for the workload

The Qwen family is large. Some teams overspend by defaulting to qwen-max everywhere; others underspend on quality by defaulting to qwen-turbo. The right answer is “match variant to job”.

My production rules of thumb:

- qwen-turbo: classification, intent detection, short summarization, anything you call >10× per user request. It’s the cheapest sane Qwen and surprisingly good at extraction.

- qwen-plus: daily driver for chat, RAG synthesis, multi-step reasoning. The cost-vs-quality knee.

- qwen-max / qwen3-max: code review, complex reasoning, anything where being wrong costs more than being slow.

- qwen3-coder-plus: every code task. It is meaningfully better at code than general qwen-plus even at the same parameter scale.

- qwen3-vl-plus / qwen3-omni-flash: image / video / audio in. Article 3 is dedicated to this.

Tip: A common mistake is using qwen-max for embedding-style classification. Don’t. Use qwen-turbo with a tight system prompt and you’ll cut cost 10× with no quality loss on tasks where you only need a label.
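
The rules of thumb above can be captured as a small routing table so that no call site hard-codes a model. This is a minimal sketch: the task labels and the pick_model helper are hypothetical names, and only the model IDs come from the list above.

```python
# Hypothetical task labels mapped to the model IDs from the rules of thumb.
MODEL_BY_TASK = {
    "classification": "qwen-turbo",
    "intent": "qwen-turbo",
    "short_summary": "qwen-turbo",
    "chat": "qwen-plus",
    "rag_synthesis": "qwen-plus",
    "code_review": "qwen3-max",
    "complex_reasoning": "qwen3-max",
    "code": "qwen3-coder-plus",
    # Assumption: one multimodal default; qwen3-omni-flash is the alternative.
    "multimodal": "qwen3-vl-plus",
}


def pick_model(task: str, default: str = "qwen-plus") -> str:
    """Return the model ID for a task label, falling back to the daily driver."""
    return MODEL_BY_TASK.get(task, default)


print(pick_model("classification"))
print(pick_model("something_new"))
```

Keeping the mapping in one place also makes it cheap to trial a different variant for a single task without touching callers.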

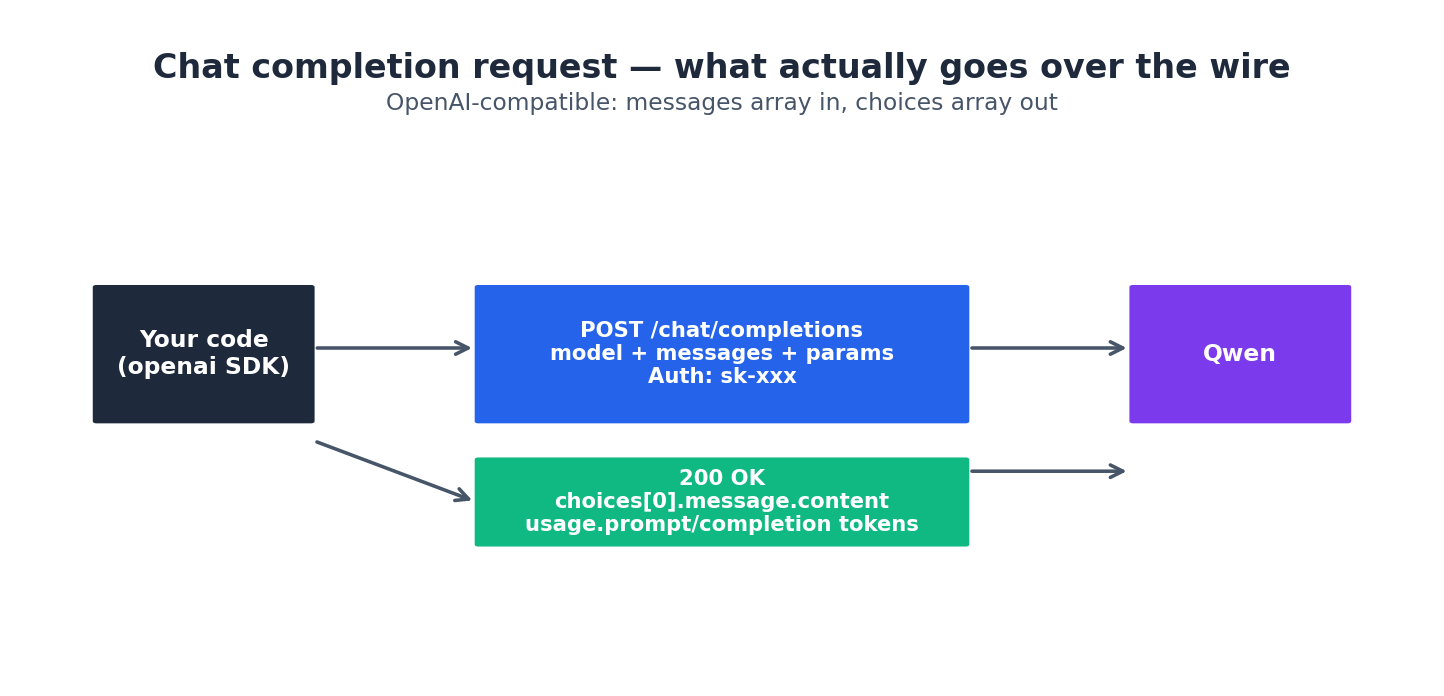

What actually goes over the wire

Whether you use the OpenAI compat layer or DashScope native, the core of a chat-completion request remains the same: a model ID, a messages array, and a parameter block.

The fields you’ll touch most often:

- messages: an array of {role, content}. Role is system / user / assistant / tool. The official docs note that for multimodal models content can be an array of typed parts (text, image_url, input_audio, video_url); see article 3.

- temperature: 0.0-2.0. I use 0.0 for extraction / classification, 0.2-0.4 for default chat, 0.7+ only for creative writing. The official docs default is around 0.7, which is too high for most agentic uses.

- top_p: leave it at default unless you know exactly why you want to change it. Tweaking both temperature and top_p at once is a recipe for confusion.

- max_tokens (compat) / parameters.max_tokens (native): this is the output token cap, not total. Set it. Otherwise a runaway can cost you.

- stream: toggle SSE streaming. See below.

- response_format={"type": "json_object"}: JSON mode. Strongly recommended over “please return JSON” prompting.

- tools / tool_choice: function calling.
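
To make the field list concrete, here is a sketch of a request body for the OpenAI-compatible layer. The build_chat_request helper and its defaults are illustrative assumptions, not part of any SDK; the resulting dict is meant to be passed as keyword arguments to an OpenAI-style client.chat.completions.create call.

```python
import json


def build_chat_request(user_text: str, *, model: str = "qwen-plus",
                       system: str = "You are a helpful assistant.",
                       temperature: float = 0.2, max_tokens: int = 512,
                       stream: bool = False, json_mode: bool = False) -> dict:
    """Assemble the fields discussed above into one request body."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user_text},
        ],
        "temperature": temperature,
        "max_tokens": max_tokens,  # output cap only, not prompt + output
        "stream": stream,
        # top_p is deliberately left out so the service default applies.
    }
    if json_mode:
        payload["response_format"] = {"type": "json_object"}
    return payload


request = build_chat_request("Classify: 'refund please'",
                             model="qwen-turbo", temperature=0.0)
print(json.dumps(request, indent=2))
```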

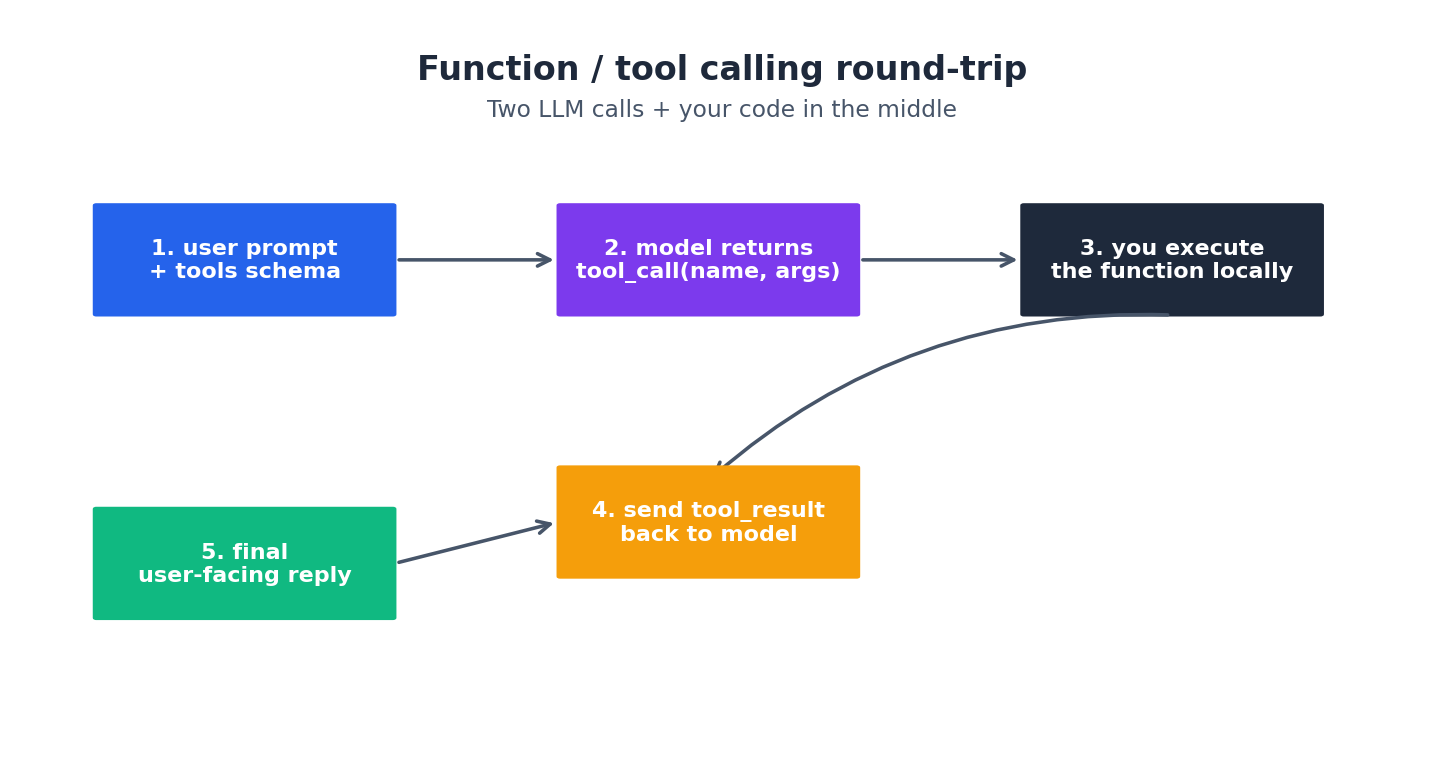

Function calling: the round trip

The Qwen function-calling protocol follows the OpenAI tool-calls protocol. It involves two LLM calls and your code in between.

A complete worked example — a tiny agent that can look up the weather:

| |
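
The original listing did not survive here, so what follows is a stand-in sketch of the two-call round trip rather than the author's code. It assumes an OpenAI-style client object (anything exposing chat.completions.create); the get_weather tool and its canned reply are hypothetical.

```python
import json

# Tool schema in the OpenAI tool-calls format.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def get_weather(city: str) -> str:
    # Placeholder data; a real tool would query a weather service here.
    return json.dumps({"city": city, "condition": "sunny", "temp_c": 22})


def ask_with_weather(client, question: str, model: str = "qwen-plus") -> str:
    messages = [{"role": "user", "content": question}]
    # Call 1: the model either answers directly or requests a tool.
    first = client.chat.completions.create(
        model=model, messages=messages, tools=[WEATHER_TOOL])
    msg = first.choices[0].message
    if not msg.tool_calls:
        return msg.content
    # The assistant message carrying tool_calls must be in the history
    # before any tool result is appended.
    messages.append(msg)
    for call in msg.tool_calls:
        args = json.loads(call.function.arguments)
        messages.append({"role": "tool", "tool_call_id": call.id,
                         "content": get_weather(**args)})
    # Call 2: the model turns the tool output into a user-facing answer.
    second = client.chat.completions.create(
        model=model, messages=messages, tools=[WEATHER_TOOL])
    return second.choices[0].message.content

# Usage (assumes the openai package and the compat endpoint):
#   from openai import OpenAI
#   client = OpenAI(api_key=os.environ["DASHSCOPE_API_KEY"],
#                   base_url="https://dashscope.aliyuncs.com/compatible-mode/v1")
#   print(ask_with_weather(client, "What's the weather in Hangzhou?"))
```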

Three things that bite:

- messages.append(msg) is required between the first response and the tool result. The model needs to see its own tool_call message in the history, otherwise the second call returns a 400 about an “orphan tool result”.

- tool_choice="auto" is the default. Force a specific tool with tool_choice={"type": "function", "function": {"name": "..."}} when you must; this is useful for the first call in a workflow.

- parallel_tool_calls=True is supported. Use it when you have independent tools: the model will return multiple tool_calls in one shot.

JSON mode

For structured output, don’t rely on prompting. Use:

| |
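
The snippet that belonged here was lost, so this is a hedged reconstruction of a JSON-mode call, not the original. It assumes an OpenAI-style client; the extract_contact helper and its system prompt are hypothetical.

```python
import json


def extract_contact(client, text: str, model: str = "qwen-turbo") -> dict:
    """Ask for a JSON object and parse it; raises if the reply is not JSON."""
    resp = client.chat.completions.create(
        model=model,
        messages=[
            {"role": "system",
             "content": "Extract name and email. Reply with a JSON object only."},
            {"role": "user", "content": text},
        ],
        response_format={"type": "json_object"},  # JSON mode
        temperature=0.0,   # extraction, so no sampling noise
        max_tokens=256,    # always cap the output
    )
    return json.loads(resp.choices[0].message.content)
```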

Two caveats from production:

- The model will sometimes wrap JSON in Markdown code fences (three backticks plus json) anyway. Defensive parsing with json.loads after stripping fences is wise.

- For structured JSON (Pydantic schema) use the function-calling pattern instead. It’s stricter and the failure modes are easier to debug.
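
A minimal sketch of the defensive parsing mentioned in the first caveat. The parse_model_json name is made up for illustration; it strips one surrounding code fence if present and otherwise parses the text as-is.

```python
import json
import re

# Built from single backticks so this example contains no literal fence.
TICKS = "`" * 3
_FENCED = re.compile(
    r"^\s*" + TICKS + r"(?:json)?\s*(.*?)\s*" + TICKS + r"\s*$",
    re.DOTALL | re.IGNORECASE,
)


def parse_model_json(raw: str):
    """Parse model output as JSON, tolerating one wrapping code fence."""
    match = _FENCED.match(raw)
    body = match.group(1) if match else raw
    return json.loads(body.strip())


fenced = TICKS + 'json\n{"label": "refund"}\n' + TICKS
print(parse_model_json(fenced))
print(parse_model_json('{"label": "refund"}'))
```

Anything that still fails json.loads after this step is better treated as a failed call and retried than patched up further.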

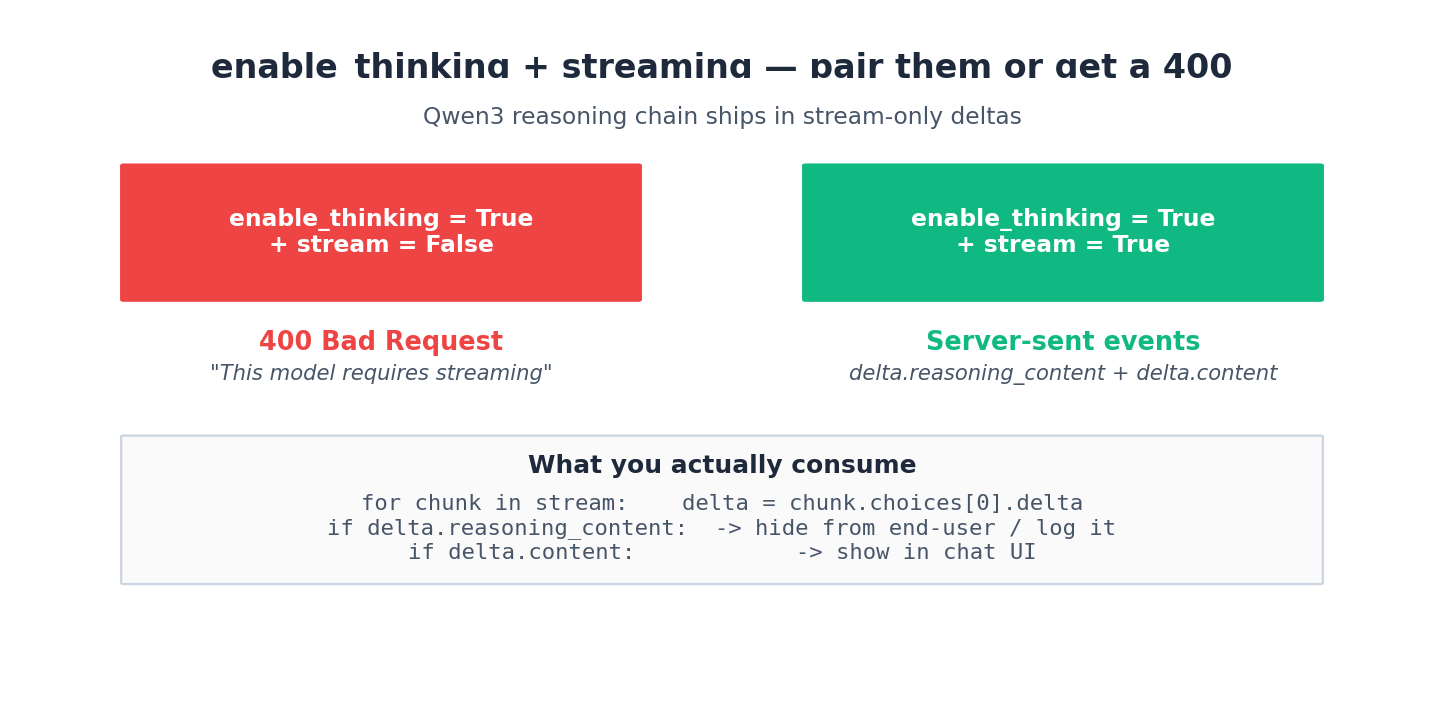

enable_thinking and the streaming trap

Qwen3 series models support enable_thinking=True — it asks the model to produce a reasoning chain before the final answer. Quality goes up, especially on reasoning-heavy tasks. But you must use streaming. Non-stream returns a 400.

Practical pattern — collect the reasoning into a side log and stream the answer to your UI:

| |
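
The original snippet is missing, so here is a sketch of the pattern under stated assumptions: an OpenAI-style client, enable_thinking passed through extra_body, and reasoning text arriving on each chunk's delta as reasoning_content. Check those names against the current API reference before relying on them.

```python
def stream_with_thinking(client, messages, on_answer, model: str = "qwen-plus"):
    """Stream the answer to on_answer and return (answer, reasoning).

    The reasoning is only collected, never forwarded, so the caller can
    send it to a log instead of the UI.
    """
    stream = client.chat.completions.create(
        model=model,              # any thinking-capable model ID
        messages=messages,
        stream=True,              # required when thinking is enabled
        extra_body={"enable_thinking": True},
    )
    answer_parts, reasoning_parts = [], []
    for chunk in stream:
        if not chunk.choices:
            continue  # skip chunks that carry no delta (e.g. usage only)
        delta = chunk.choices[0].delta
        reasoning = getattr(delta, "reasoning_content", None)
        if reasoning:
            reasoning_parts.append(reasoning)
        content = getattr(delta, "content", None)
        if content:
            answer_parts.append(content)
            on_answer(content)
    return "".join(answer_parts), "".join(reasoning_parts)
```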

I forward reasoning to my logging system and never to the user. Three reasons: (1) it leaks chain-of-thought IP if customers ever see it, (2) it confuses non-technical readers, (3) it doubles your visible response length.

Async chat (rare but useful)

If you have a very long-running chat (e.g. a 30k-token RAG synthesis), you can submit it async with X-DashScope-Async: enable and poll, same pattern as Wanxiang. The Qwen API reference documents this under “Asynchronous calling”. I use it for cron-batch summarization jobs that don’t need an immediate user-facing response.

Cost controls that actually work

- Always set max_tokens. Default cap of “model max” means a runaway loop costs you a fortune.

- Use a workspace key per environment. Set a hard daily budget on the prod key in the console under the workspace.

- Log token counts. usage.prompt_tokens and usage.completion_tokens are in every response. Aggregate them weekly and you’ll spot the prompt that bloated by 3x without anyone noticing.

- Cache identical prompts at your edge. DashScope does not currently expose explicit prompt-cache controls the way Anthropic does, so cache yourself for high-volume identical-prefix patterns.
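
For the token-logging item, a small in-memory ledger is enough to start with. This is an illustrative sketch: UsageLedger and the endpoint labels are hypothetical, and in production the totals would go to your metrics system rather than a dict.

```python
from collections import defaultdict


class UsageLedger:
    """Accumulate token usage per endpoint from response.usage objects."""

    def __init__(self):
        self.totals = defaultdict(
            lambda: {"calls": 0, "prompt_tokens": 0, "completion_tokens": 0})

    def record(self, endpoint: str, usage) -> None:
        # usage is expected to expose prompt_tokens and completion_tokens.
        row = self.totals[endpoint]
        row["calls"] += 1
        row["prompt_tokens"] += usage.prompt_tokens
        row["completion_tokens"] += usage.completion_tokens

    def avg_prompt_tokens(self, endpoint: str) -> float:
        row = self.totals[endpoint]
        return row["prompt_tokens"] / row["calls"] if row["calls"] else 0.0

# Usage: ledger.record("summarize", resp.usage) after each call, then
# compare avg_prompt_tokens week over week to catch prompt bloat.
```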

Token counting: DashScope vs tiktoken, and the CJK bloat

The single biggest surprise for teams coming from the OpenAI ecosystem: tiktoken lies about Qwen token counts. The Qwen tokenizer’s vocabulary is not compatible with cl100k_base or o200k_base. If you size your context budget with tiktoken.encoding_for_model("gpt-4o"), you’ll be off by 20-40% on Chinese, 5-10% on English. I lost a Friday night to a RAG pipeline that was “definitely under the 32k context” by tiktoken count and was actually 41k by Qwen’s count.

The right move is to use the official Qwen tokenizer locally:

| |
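
The listing is missing here; the sketch below shows one way to count locally. It assumes a Hugging Face style tokenizer object with an encode method, and the helper names and the 1024-token output reserve are illustrative choices. Raw-text counts exclude whatever the chat template adds per message, so leave some headroom.

```python
def count_tokens(tokenizer, text: str) -> int:
    """Count tokens for raw text using the supplied tokenizer."""
    return len(tokenizer.encode(text, add_special_tokens=False))


def fits_context(tokenizer, texts, context_window: int,
                 reserve_for_output: int = 1024) -> bool:
    """True if all texts plus an output reserve fit in the window."""
    used = sum(count_tokens(tokenizer, t) for t in texts)
    return used + reserve_for_output <= context_window

# Loading a Qwen tokenizer (assumes the transformers package is installed
# and the model repo is reachable):
#   from transformers import AutoTokenizer
#   tok = AutoTokenizer.from_pretrained("Qwen/Qwen2.5-7B-Instruct")
#   print(count_tokens(tok, "Aliyun Bailian in production"))
```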

The tokenizer ships in the Hugging Face repo for any Qwen model. Qwen2.5-7B, Qwen3-8B, etc. share a tokenizer family, so loading any of them gives you a count that matches what DashScope will charge you within ±1 token. After the fact, usage.prompt_tokens and usage.completion_tokens in the response are authoritative; trust those over local estimates when you have them.

The CJK bloat problem is real and worth pricing in. A typical Chinese sentence runs about 1.5 tokens per character on Qwen. English runs about 0.25 tokens per character (1 token per ~4 chars). So a 1000-character Chinese RAG context costs you 1500 tokens; the same in English costs you 250. When you size context windows, plan in tokens, not in characters, and use the local tokenizer. I’ve watched a “use 100k chars of context” plan turn into “we need a 150k context model” exactly once.
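
The arithmetic in that paragraph, written out. The ratios are the rough per-character figures quoted above, not measured constants, and estimate_tokens is a hypothetical helper for back-of-envelope planning only; use the real tokenizer for anything that matters.

```python
import math

# Rough tokens-per-character figures quoted in the text above.
TOKENS_PER_CHAR = {"zh": 1.5, "en": 0.25}


def estimate_tokens(char_count: int, lang: str) -> int:
    """Back-of-envelope token estimate from a character count."""
    return math.ceil(char_count * TOKENS_PER_CHAR[lang])


print(estimate_tokens(1_000, "zh"))    # 1500
print(estimate_tokens(1_000, "en"))    # 250
print(estimate_tokens(100_000, "zh"))  # 150000
```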

Streaming with backpressure: drain pattern, partial JSON

Naïve streaming code looks like the snippet from earlier — iterate the stream, append, done. That works for a CLI demo. In a production HTTP service you have two extra problems: backpressure (your downstream is slower than the model produces) and partial parsing (the user wants structured output but your buffer is mid-token).

Backpressure: when you forward stream chunks to a slow client (a mobile browser on 4G), the chunks pile up in your process memory until either you OOM or the upstream connection times out. The fix is to drain the upstream into a bounded queue and apply pushback to your client connection:

| |
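
The original code is missing, so this is a sketch of the bounded-queue idea using asyncio. It assumes the upstream is an async iterator of chunks and send is an async callable that writes to the client; both names are placeholders. When the queue is full the producer stops reading upstream, which is where the pushback comes from.

```python
import asyncio
import contextlib

_DONE = object()  # sentinel marking the end of the upstream


async def relay(upstream, send, max_buffered: int = 32) -> int:
    """Forward chunks from upstream to send with a bounded buffer.

    Returns the number of chunks delivered.
    """
    queue: asyncio.Queue = asyncio.Queue(maxsize=max_buffered)

    async def drain() -> None:
        try:
            async for chunk in upstream:
                await queue.put(chunk)  # waits while the queue is full
        except Exception as exc:
            await queue.put(exc)        # surface upstream errors downstream
        else:
            await queue.put(_DONE)

    producer = asyncio.create_task(drain())
    sent = 0
    try:
        while True:
            item = await queue.get()
            if item is _DONE:
                break
            if isinstance(item, Exception):
                raise item
            await send(item)            # a slow client slows this loop
            sent += 1
    finally:
        # Stop reading upstream if the client went away mid-stream.
        producer.cancel()
        with contextlib.suppress(asyncio.CancelledError):
            await producer
    return sent
```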

The queue size (32 here) is the depth of in-flight buffer you allow. Smaller means more responsive backpressure but slightly choppier delivery. 32 is what I’ve found works for an SSE-to-WebSocket relay over the public internet.

Partial JSON: if your output is JSON and you want to render fields as they arrive (live-updating a form, for example), you can’t just json.loads until the stream ends. The trick is a streaming JSON parser like json-stream or partial_json_parser:

| |
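
The listing that used json-stream or partial_json_parser is missing. As a stand-in, here is a standard-library sketch of the same idea, which is a swapped-in technique and not either library's API: close any open string and brackets, try json.loads, and keep the last snapshot that parsed. All function names are hypothetical.

```python
import json


def close_partial_json(buf: str) -> str:
    """Append the quote and brackets needed to close a JSON prefix."""
    closers = []
    in_string = False
    escaped = False
    for ch in buf:
        if in_string:
            if escaped:
                escaped = False
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
        elif ch == '"':
            in_string = True
        elif ch in "{[":
            closers.append("}" if ch == "{" else "]")
        elif ch in "}]" and closers:
            closers.pop()
    if escaped:
        buf = buf[:-1]  # drop a dangling backslash
    return buf + ('"' if in_string else "") + "".join(reversed(closers))


def try_parse_partial(buf: str):
    """Return the parsed prefix, or None if it cannot be closed cleanly."""
    try:
        return json.loads(close_partial_json(buf))
    except json.JSONDecodeError:
        return None


def snapshots(chunks):
    """Yield each new parseable state as streamed text accumulates."""
    buf, last = "", None
    for piece in chunks:
        buf += piece
        parsed = try_parse_partial(buf)
        if parsed is not None and parsed != last:
            last = parsed
            yield parsed


for state in snapshots(['{"name": "Al', 'ice", "age"', ': 30}']):
    print(state)
```

One caveat with this approach: a number that is split across chunks can briefly show up truncated, so render numeric fields only once the key after them has started or the stream has ended.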

This unlocks “the form is filling in front of the user” UX without waiting for the model to finish. I use this for the structured-extraction endpoints in our marketing tool — perceived latency drops from 4 seconds to under 500ms even though wall-clock is identical.

Function calling deep dive: multi-round, parallel, the tool_choice="auto" trap

The basic round trip shown earlier handles the simple case. Real agents loop. The pattern is a while loop until the model stops emitting tool_calls:

| |
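
The loop listing is missing, so this is a sketch rather than the author's code. It assumes an OpenAI-style client and a hypothetical handlers dict mapping tool names to Python callables. It caps the tool rounds and then makes one answer-only request with tool_choice="none", and it appends a single assistant message followed by one tool message per call, which also covers the parallel case.

```python
import json


def run_agent(client, messages, tools, handlers,
              model: str = "qwen-plus", max_rounds: int = 3) -> str:
    """Loop until the model answers, with a hard cap on tool rounds."""
    for _ in range(max_rounds):
        resp = client.chat.completions.create(
            model=model, messages=messages, tools=tools, tool_choice="auto")
        msg = resp.choices[0].message
        if not msg.tool_calls:
            return msg.content
        # One assistant message, even when it carries several tool_calls.
        messages.append(msg)
        for call in msg.tool_calls:
            args = json.loads(call.function.arguments or "{}")
            result = handlers[call.function.name](**args)
            messages.append({"role": "tool", "tool_call_id": call.id,
                             "content": json.dumps(result)})
    # Tool budget spent: force a final answer-only round.
    final = client.chat.completions.create(
        model=model, messages=messages, tools=tools, tool_choice="none")
    return final.choices[0].message.content
```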

Three things the docs do not flag clearly:

The tool_choice="auto" infinite-loop trap. With auto, the model decides whether to call a tool or answer. On Qwen-Plus I have repeatedly seen it call get_weather four rounds in a row for the same city, each time deciding the previous answer wasn’t enough. The fix is either (a) add a strict max_rounds cap (which I do), (b) after round 3 force tool_choice="none" so the model must answer, or (c) detect identical tool calls and short-circuit. Option (b) is what I use in production — once the agent has had 3 chances to tool-up, it gets one final answer-only round.

Parallel tool calls return in one assistant message. With parallel_tool_calls=True, msg.tool_calls is a list of multiple calls. You must append the single assistant message containing all of them, then append one tool message per call, then make the next request. If you append per-call tool messages without the assistant message between them, you get the orphan-tool-result 400.

Tool argument schemas: keep them flat and required. Qwen’s tool calling is meaningfully less reliable on deeply nested arguments than GPT-4. A tool with {type: object, properties: {filter: {type: object, properties: {date: {...}, region: {...}}}}} will get the date right and forget the region about 15% of the time. Flatten to top-level required params and you get to >99% reliability. I learned this the hard way after a week of “the model is dumb today” complaints that were really “your schema is too nested”.
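
To illustrate the flattening advice, here are two versions of the same tool schema. The search_orders tool and its fields are hypothetical; only the shape matters.

```python
# Nested: date and region live inside a "filter" object.
NESTED_TOOL = {
    "type": "function",
    "function": {
        "name": "search_orders",
        "description": "Search orders by date and region.",
        "parameters": {
            "type": "object",
            "properties": {
                "filter": {
                    "type": "object",
                    "properties": {
                        "date": {"type": "string"},
                        "region": {"type": "string"},
                    },
                },
            },
        },
    },
}

# Flat: every argument is a top-level, required scalar.
FLAT_TOOL = {
    "type": "function",
    "function": {
        "name": "search_orders",
        "description": "Search orders by date and region.",
        "parameters": {
            "type": "object",
            "properties": {
                "date": {"type": "string", "description": "ISO date, e.g. 2025-01-31"},
                "region": {"type": "string", "description": "Region code"},
            },
            "required": ["date", "region"],
        },
    },
}
```

If your internal function really does need the nested shape, rebuild it from the flat arguments inside the handler instead of asking the model to produce it.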

enable_thinking nuances: when CoT helps, when it hurts, what it costs

enable_thinking=True is sold as “free quality boost”. It is not free, and it is not always a boost. After running it in production for six months on different workloads, my taxonomy:

Where it helps:

- Multi-step reasoning (math word problems, logic puzzles, code-execution traces).

- “Did the user request X and Y but not Z” classification with multiple constraints.

- Code review with multiple files in context.

- Anything where you’d want to write down intermediate steps yourself.

Where it hurts (or is wasted):

- Pure extraction (“pull these 5 fields from this text”). The reasoning chain just rephrases the input.

- Short factual lookups. The model thinks for 2 seconds about a one-word answer.

- High-temperature creative writing. Reasoning collapses style toward neutral.

- Tool-calling agents. The reasoning content interferes with the tool-call decision in subtle ways; I’ve seen calls drop the tool_choice signal entirely when thinking is on.

Latency cost: TTFT (time to first token of the answer) goes from ~400ms to ~1.5-3 seconds because the reasoning chain has to finish before the answer streams. Total tokens roughly double: you pay for the reasoning content even though you don’t show it to the user. For a chat UI the perceived “is it working” gap during the thinking pause is bad UX; I either show a “thinking…” spinner or stream the reasoning content into a collapsible side panel.

How I decide: if the task is the kind I’d want to write notes on before answering, I enable thinking. If I’d just blurt out the answer, I leave it off. For agentic loops I leave it off on every round except the final synthesis.

Long context: cache hit rate and truncation strategy

Qwen-Plus has a 128k context window. Qwen-Max goes to 32k by default with 1M-token long-context variants. Just because the window is big doesn’t mean you should fill it.

The implicit prompt cache I mentioned in article 1 has one critical property: it caches by exact prefix match. If your system prompt is identical for every user but the user message changes, the system-prompt prefix is cached. If you put dynamic data in the middle of the prompt (e.g. “today is {date}”), every variant breaks the cache. The fix is to keep all dynamic content at the end of the messages array: put dynamic dates / user IDs / timestamps in the user message, not in the system prompt.

I instrument cache hit rate per endpoint:

| |
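
The instrumentation snippet is missing; the sketch below shows one way to compute a per-endpoint hit rate. It assumes the usage block reports cached input tokens under prompt_tokens_details.cached_tokens, as OpenAI-style responses do; confirm the field name against an actual response. CacheStats is a hypothetical helper.

```python
from collections import defaultdict


def cached_tokens(usage: dict) -> int:
    """Read the cached-token count from a usage dict, defaulting to 0."""
    details = usage.get("prompt_tokens_details") or {}
    return details.get("cached_tokens") or 0


class CacheStats:
    """Track cached vs total prompt tokens per endpoint."""

    def __init__(self):
        self._prompt = defaultdict(int)
        self._cached = defaultdict(int)

    def record(self, endpoint: str, usage: dict) -> None:
        self._prompt[endpoint] += usage.get("prompt_tokens", 0)
        self._cached[endpoint] += cached_tokens(usage)

    def hit_rate(self, endpoint: str) -> float:
        total = self._prompt[endpoint]
        return self._cached[endpoint] / total if total else 0.0

# Usage with an SDK response object (assumes a pydantic-style model):
#   stats.record("rag_answer", resp.usage.model_dump())
```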

A well-structured RAG endpoint with a stable system prompt hits 70-80%. An endpoint that interpolates request-specific metadata into the system prompt hits 0%. The bill difference is a factor of 2 on input tokens.

For truncation when you do exceed the window: the safe pattern is “preserve the system prompt and the most recent user/assistant pair, then sliding-window the middle”. I keep the first system message and the last 6 messages verbatim, and summarize anything in between with a cheap qwen-turbo call when the conversation crosses a threshold. The summary goes back into the messages array as a synthetic system message ("role": "system", "content": "Earlier in this conversation: ..."). Quality loss is small for chat-style workloads, dramatic for code-context workloads where you can’t lossy-compress the file content — for those, prefer a longer-context model over summarization.
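
The truncation pattern above, as a sketch. compact_history is a hypothetical helper; the summarize argument stands in for the cheap qwen-turbo call, and the message-count threshold of 20 is an assumption, since the text does not say where the threshold sits.

```python
def compact_history(messages, summarize, keep_last: int = 6,
                    threshold: int = 20):
    """Keep the system prompt and recent turns; summarize the middle.

    summarize is a callable taking a list of messages and returning text.
    """
    if len(messages) <= threshold:
        return list(messages)
    has_system = bool(messages) and messages[0].get("role") == "system"
    head = messages[:1] if has_system else []
    rest = messages[len(head):]
    middle, tail = rest[:-keep_last], rest[-keep_last:]
    if not middle:
        return list(messages)
    summary = {
        "role": "system",
        "content": "Earlier in this conversation: " + summarize(middle),
    }
    return head + [summary] + tail
```

If the history contains tool calls, widen the tail so it never starts on a tool message whose assistant message was summarized away; otherwise you are back to the orphan-tool-result error.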

What’s Next

Article 3 is Qwen-Omni — the multimodal sibling. The big differences are: streaming is required (not optional), the content array gets typed parts for image / audio / video, and you have to think about pixel budgets and frame rates. It’s the highest-leverage capability in Bailian if your product touches non-text content.
