Aliyun PAI (5): Designer vs Model Gallery — When the GUIs Actually Earn Their Keep

PAI-Designer for tabular ML pipelines, Model Gallery for one-click open-source model deploy/fine-tune. The honest decision matrix for when to skip the SDK and let the GUI ship for you.

The first four articles covered the underlying primitives — DSW, DLC, EAS — that you orchestrate with Python. This one focuses on two GUI products that wrap these primitives and provide a runnable solution for users who don’t want to write Python: PAI-Designer for drag-and-drop tabular pipelines, and Model Gallery for zero-code open-source model deployment and fine-tuning. While serious engineers might not use them first, they are the right choice in two specific situations.

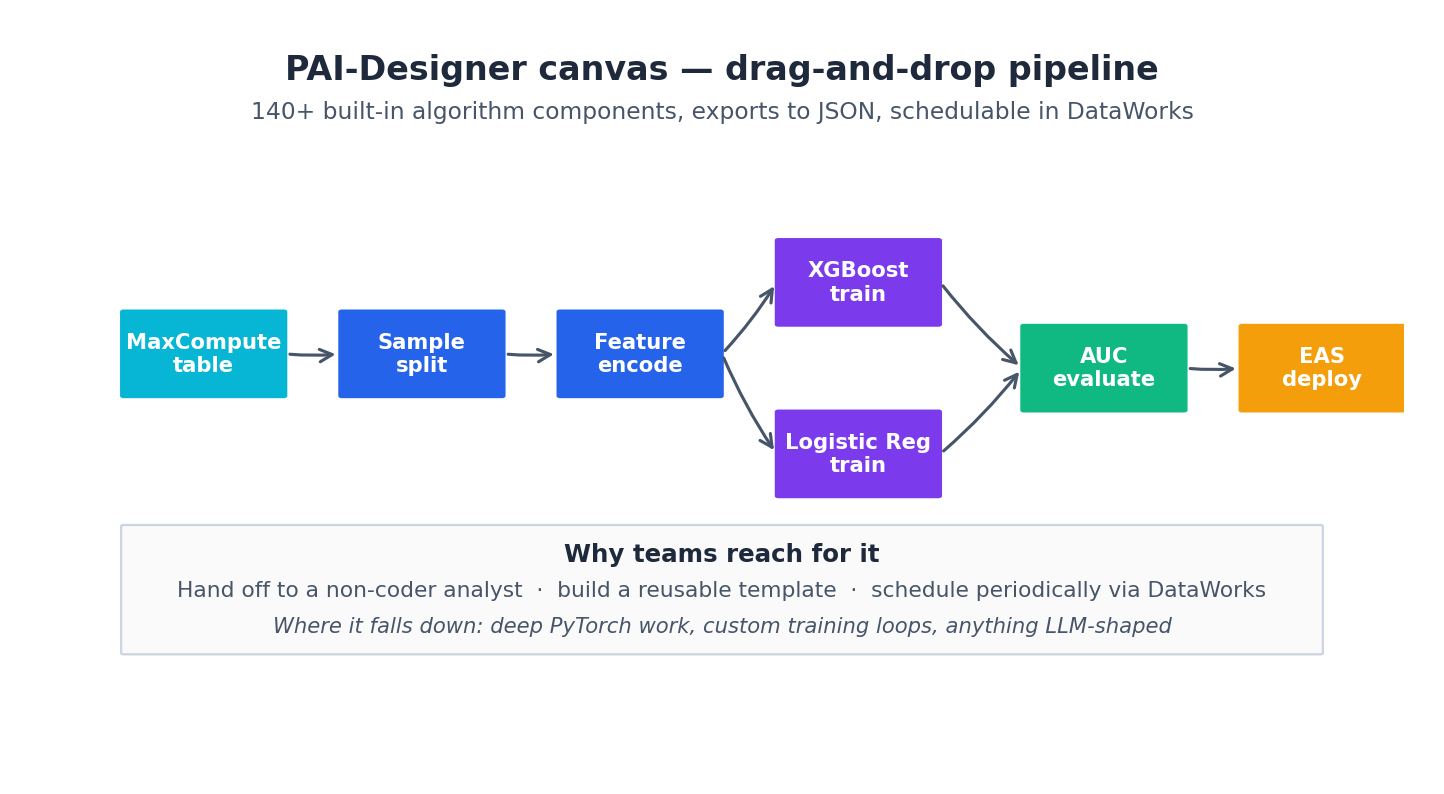

Designer — the drag-and-drop pipeline composer#

According to the docs, Designer implements modeling and model debugging through workflows. Users can build AI development processes by dragging and dropping different components, like building blocks. Key features include over 140 built-in algorithm components, JSON export, scheduling in DataWorks, and support for custom SQL, Python, and PyAlink scripts as nodes.

Where it shines:

- Tabular ML at MaxCompute scale. Designer is tightly bound to MaxCompute. If your training data is a 200-million-row partitioned table on MaxCompute, Designer’s built-in source / split / encode / train components run inside MaxCompute itself, not over the wire to a Python pod. You are paying for MaxCompute compute, not GPU pods sitting idle waiting on data.

- Hand-off to a non-coder analyst. Recommendation, churn, and risk-scoring teams often have a domain expert who can’t write Python but understands the pipeline. Designer canvases are something they can read, modify, and own.

- Built-in templates. The docs list ready-to-run cases for product recommendation, news classification, financial risk control, smog prediction, heart disease prediction, agricultural loans, census analysis. These are useful as starting points even if you tear them down and replace half the nodes.

- Scheduled offline runs. Export the workflow to JSON, hand it to DataWorks, get a daily/hourly cron with retries.

Where it loses:

- Anything LLM-shaped. Designer’s strength is feature engineering + classical ML; it is not a place to write a custom PyTorch training loop.

- Custom CUDA work, novel losses, anything where “the algorithm IS the thing”.

I ship Designer pipelines for the tabular workloads I’d otherwise have built in DLC and SQL, and I ship custom-trained models in DLC for everything else.

Model Gallery — the zero-code MaaS shortcut#

Model Gallery is the tooling that wraps DLC + EAS, allowing non-MLOps users to fine-tune and deploy open-source models with just a few clicks. According to the docs, it encapsulates PAI-DLC and PAI-EAS, providing a zero-code solution for deploying and training open-source large language models.

The Quick Start walks through Qwen3-0.6B end-to-end:

- Search “Qwen3-0.6B” in Model Gallery → click Deploy.

- Default GPU type, default vLLM image, defaults everywhere → OK.

- ~5 minutes later the status flips to

Running. - View Call Information → grab the

Internet Endpointand token. - Plug into Cherry Studio (or Claude Code MCP, or the Python SDK with the OpenAI-compatible base URL) and chat.

For fine-tuning, the docs provide a logistics-information-extraction example: feed it a JSON dataset, select LoRA hyperparameters from a dropdown, and it submits a DLC job for you. The Quick Start highlights the distillation pattern — using a large teacher (Qwen3-235B) to label data and a small student (Qwen3-0.6B) to learn from it. This pattern is worth internalizing; it’s the most cost-effective fine-tuning method I know.

Where Gallery shines:

- Evaluating a new model in 10 minutes. When DeepSeek-V3 dropped, my team had it deployed and chatting in the time it took to refill a coffee. That is impossible from

vllm serveif you also need to set up the OSS bucket, the security group, and the SSL cert. - Demos for non-engineering stakeholders. Click → endpoint → Cherry Studio chat → board meeting.

- One-click LoRA fine-tunes. For most domain-adaptation work, the defaults the Gallery picks (LR, epochs, LoRA rank) are within 5% of optimal.

Where Gallery loses:

- Custom architectures. If you’ve modified the model code, you need DSW + DLC.

- Tight latency targets. The defaults Gallery picks for serving are sensible, not optimized. If you need <100ms p99, you’re going to want to write the EAS deployment yourself with the right batching config.

- Air-gapped or cross-region deploys. Gallery assumes “deploy in the region you’re in”.

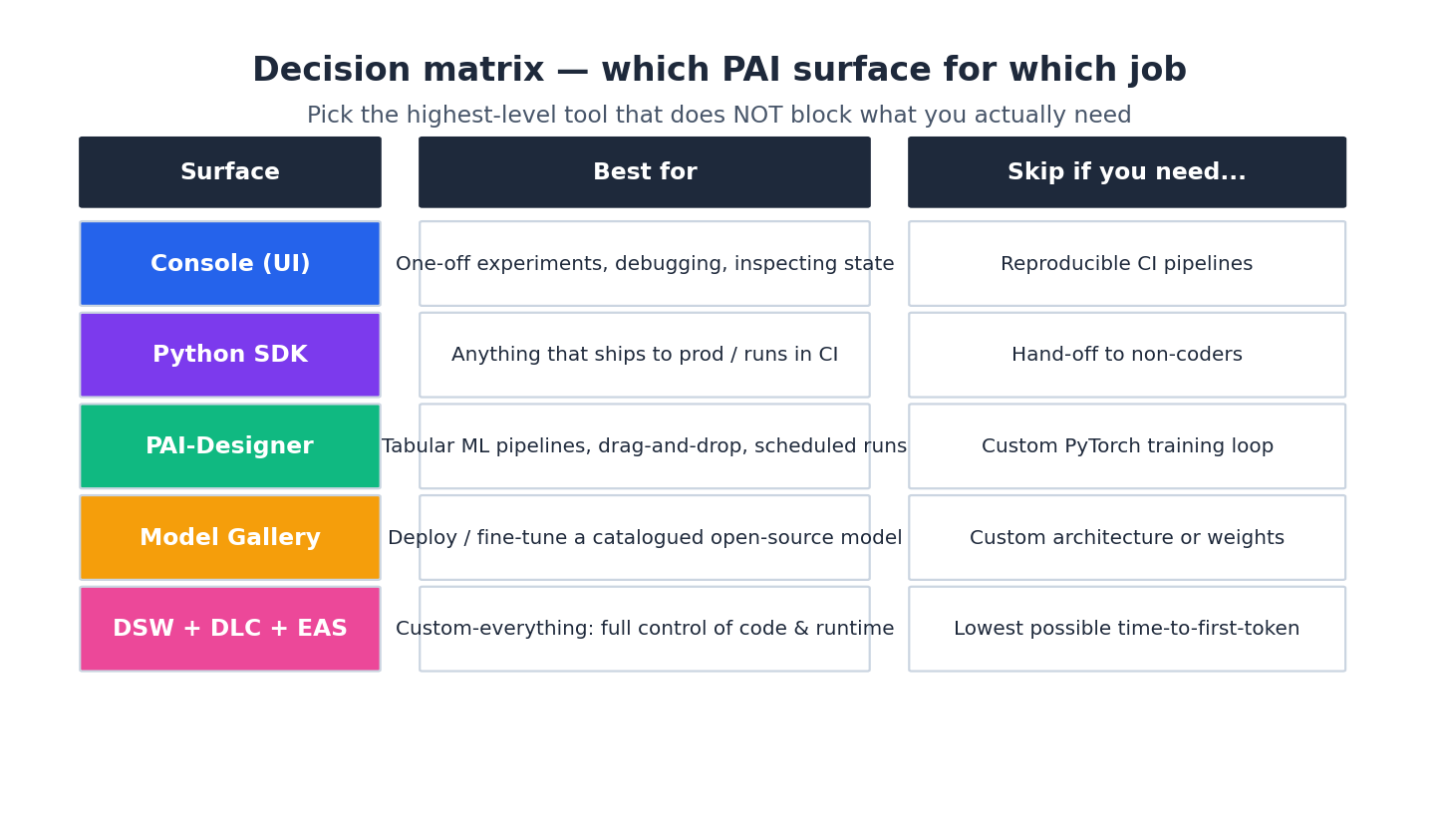

When to pick what#

The decision matrix that has held up for me:

Summary heuristic: start as high up the stack as the requirements allow. Many teams over-engineer on day one, building custom DLC + EAS pipelines when a Model Gallery deploy would suffice. Optimize for time-to-first-token, then refactor once you have real traffic and metrics to design against.

A worked example: when Designer beat custom code#

A real ticket I saw: marketing wanted weekly user-segmentation runs on a 60M-row table from MaxCompute. The data scientist’s first instinct was a DLC job in PySpark + scikit-learn, with code in OSS, scheduled via SLS-callback-to-EventBridge. Three days of work.

Designer version: source node → sample → encode → KMeans → write back to MaxCompute. Exported to JSON, scheduled in DataWorks. Two hours, including the meeting where they explained it to the marketing PM. Same output table, half the cost (no GPU pod), one-tenth the maintenance.

A worked example: when Model Gallery saved a week#

We needed to test whether Qwen3-Coder was good enough to replace an internal qwen-plus-based code-review bot. Pre-Gallery this would have been: read vLLM docs, set up an EAS deployment, write the OpenAI-compatible bridge, hand it to the team. Post-Gallery: search → deploy → endpoint into our existing client → done by lunch. We could focus on the actual question (was the model better?) rather than on the plumbing.

A concrete decision tree#

The matrix above is a heuristic; this is the actual decision tree I run when a teammate asks “should I use Designer / Gallery / DLC / EAS for this?”

A few non-obvious branches:

- MaxCompute-resident data is the strongest signal for Designer. Not size, not algorithm — where the data lives. Pulling 50 M rows from MaxCompute to a Python pod via

pyodpsis slow and expensive; running the same model inside MaxCompute via Designer’s components is fast and cheap. If you ever find yourself writing “first I need to export the table” in a design doc, you’ve already lost the argument for not using Designer. - “Quick eval” is a Gallery-strength even if you’ll later self-host. Spending 10 min in Gallery to confirm Qwen3-Coder is good enough for your use case, then 2 days writing the optimized EAS deployment, is strictly faster than spending 2 days writing the EAS deployment first only to find out the model isn’t good enough.

- The EAS branch never goes to Gallery. Gallery’s auto-deployed serving config is fine (median latency, no surprises), but for any production-tier service you’re going to want to write the EAS spec by hand — pinning vLLM batching params, choosing the GPU type, configuring autoscaling. Gallery is for dev, EAS-by-hand is for prod.

The mistake I see most: “let’s stay on Gallery to ship fast” past the point where it makes sense, then a frantic re-deploy three months later when the bill comes in or latency starts mattering. Plan to break out of Gallery to hand-written EAS the moment you have real users.

Cost comparison per use case#

Numbers from real workloads I’ve run, normalized to monthly RMB. Exact prices vary by region and season; the ratios are what matter.

Use case A: Weekly user segmentation on 60 M-row MaxCompute table.

| Approach | Time-to-build | Per-run cost | Monthly cost (4 runs) | Maintenance |

|---|---|---|---|---|

| Designer | 2 hours | ~6 RMB (MaxCompute spot) | ~24 RMB | Negligible |

| DLC + PySpark | 3 days | ~38 RMB (4-node cluster, 40 min) | ~152 RMB | One engineer-day per quarter for image bumps |

| Hand-written EMR job | 1 week | ~45 RMB | ~180 RMB | Multiple engineer-days |

Designer wins on every axis here. Don’t even argue.

Use case B: 10-minute eval of a new open-source LLM (Qwen3-VL-7B, just released).

| Approach | Time-to-eval | Cost during eval | Cost if abandoned | Cost if shipped |

|---|---|---|---|---|

| Model Gallery | 15 min (deploy) + actual eval time | ~5 RMB/h × eval hours | 0 (delete service) | re-deploy via EAS for prod |

| Hand-rolled EAS | 1-2 days (figure out vLLM, mount weights, debug) | ~5 RMB/h × eval + setup time | ~50 RMB sunk | already there |

| DSW notebook | 1 hour (download model, run inference loop) | ~5 RMB/h × eval hours | 0 | nope, can’t serve from DSW |

Gallery wins on time-to-eval. If you abandon, no further work; if you ship, you re-deploy via EAS anyway because Gallery’s defaults aren’t production-ready. Hand-rolled EAS only wins if you’re sure you’ll ship and don’t mind the upfront cost.

Use case C: Production LLM chat endpoint, 5 QPS average, 30 QPS peak.

| Approach | Setup | Monthly cost | Latency p99 | Notes |

|---|---|---|---|---|

| Model Gallery (default) | 5 min | ~14,000 RMB | ~2.5 s | min_replicas=2 default, no batching tuning |

| EAS hand-written (optimized) | 1-2 days | ~10,500 RMB | ~1.2 s | tuned vLLM, scheduled scaling, weights baked |

| Bailian managed Qwen-Plus | 0 (it’s an API) | varies — typically ~3-8 RMB per 1M tokens | ~1.5 s | someone else’s GPU, someone else’s problem |

This is the conversation that should happen at every “should we self-host?” planning meeting. If your usage is 50 K-100 K requests/month, Bailian wins on cost and operational burden. Cross 1 M requests/month or have data-residency requirements, self-hosted EAS pulls ahead.

Use case D: Fine-tune Qwen3-7B on a 5K-example JSON dataset.

| Approach | Setup | Run cost | Quality |

|---|---|---|---|

| Model Gallery default LoRA | 10 min | ~80 RMB (single A100, ~3 h) | Within 5% of optimal for most tasks |

| DLC custom Megatron + LoRA | 2-3 days | ~60-100 RMB | Tunable to optimal, worth it for >50K examples |

| DSW manual run | half day | ~80 RMB | Same as Gallery, more inspectable |

Gallery wins here too unless you have a specific reason to tune the loop. The “default LoRA hyperparameters” in Gallery are surprisingly good — I’ve benchmarked them against hand-tuned configs and the gap is consistently small.

The pattern across all four: Designer and Gallery win on time-to-something, lose marginally on cost optimization or quality at scale. Use them for the first 80% of the work; break out to hand-written DLC / EAS for the last 20% only when you have evidence it’s needed.

Breaking out: Designer/Gallery → raw PAI resources#

Eventually you’ll outgrow the GUI for a specific service. The migration paths I’ve actually run:

From Designer canvas to raw DLC + DataWorks. The painful path is “rewrite from scratch in PySpark”; the cheap path is “export the JSON, replace each Python node with the equivalent DLC submission, keep the MaxCompute steps as DataWorks SQL nodes”. Steps:

- Export the workflow JSON from Designer (top-right menu).

- Identify the MaxCompute-only subgraph; lift those nodes into a DataWorks workflow as ODPS SQL nodes (1:1 conversion, mostly).

- Identify the Python node(s) that need custom logic; for each, write a

train.pyand aTrainingJobSDK submission (chapter 3). - Wire the DataWorks workflow to trigger the DLC job via a “Shell node + pai SDK call”.

- Delete the Designer workflow only after the DataWorks version has run cleanly for 2 weeks.

The migration takes 1-2 days for a typical Designer canvas. The win is observability — DataWorks gives you per-stage logs, retries, and proper alerting in a way the Designer canvas does not.

From Gallery deployment to hand-written EAS. This is the one I do most often, and the “right” sequence is:

- From the Gallery service detail page, click View configuration — it shows you the underlying EAS service spec (image, model path, command, resources, autoscaling).

- Copy that spec into a YAML file in your repo. It’s now a normal EAS deployment, version-controllable.

- Deploy a new EAS service (separate name) from the YAML. Verify it serves identically to the Gallery one.

- Tune: bake weights into a custom image, switch metric to

concurrent_requests, add scheduled scaling, configure mirror on the service group. Each change is one PR. - Migrate traffic from Gallery → hand-written via the service group (chapter 4 pattern).

- Delete the Gallery deployment.

The pattern: don’t rewrite, export and refactor. Both Designer and Gallery emit configurations that are valid PAI primitives — they’re not magic. Treat them as starting templates, not as endpoints you’re stuck inside.

The reverse migration (raw → Gallery) is rare but possible. If you’ve built a custom EAS deployment for what is fundamentally an open-source model with a standard config, you can collapse it back to a Gallery deployment to reduce operational surface. I’ve done this once: a custom DeepSeek deployment that grew to 600 lines of YAML, simplified back to a Gallery service plus a small EAS-side proxy for the parts Gallery couldn’t express. Nobody on the team missed the YAML.

What’s Next#

That is the series. To recap:

- Article 1 — what PAI is and how the pieces fit.

- Article 2 — DSW for dev.

- Article 3 — DLC for training.

- Article 4 — EAS for production serving.

- Article 5 — Designer / Model Gallery for the cases where the GUI is correct.

The companion Aliyun Bailian series covers DashScope, Qwen, Wanxiang and Qwen-TTS — the managed MaaS layer that sits on top of the same PAI-EAS infrastructure described here. Many teams use both: PAI when they need their own models on their own GPUs, Bailian when they need someone else’s model behind an API key. Choose by what you need to control.

Aliyun PAI 5 parts

- 01 Aliyun PAI (1): Platform Overview and the Product Family Map

- 02 Aliyun PAI (2): PAI-DSW — Notebooks That Don't Eat Your Weights

- 03 Aliyun PAI (3): PAI-DLC — Distributed Training Without the Cluster Pain

- 04 Aliyun PAI (4): PAI-EAS — Model Serving, Cold Starts, and the TPS Lie

- 05 Aliyun PAI (5): Designer vs Model Gallery — When the GUIs Actually Earn Their Keep you are here