Computer Fundamentals (6): Deep Dive and System Integration

Series finale. We zoom out from individual components to the whole system: how CPU, cache, memory, storage, IO, and accelerators are wired together; how hardware-aware software wins by 100×; how to read performance counters; and where computing is heading next — chiplets, photonics, and quantum.

We’ve spent five chapters exploring one component at a time — the CPU, cache hierarchy, storage, motherboard and GPU, network, and power supply. Each part is interesting on its own, but a computer is more than just its components. It’s about how these parts must agree, every nanosecond, on what to do next.

This finale is about that conversation. We’ll wire everything together into a single picture, look at the system through the eyes of a profiler, revisit the 80-year-old design tension that still shapes every chip you buy, and end by looking forward — chiplets, photonic interconnects, and the quietly arriving quantum era.

If you stick with one chapter from this series, make it this one. This is where the mental model finally solidifies.

Part 1 — The whole machine, in one picture#

When you press the power button, a precisely choreographed sequence begins and continues until you shut down. The motherboard’s BIOS/UEFI powers up the rails in sequence, the CPU fetches from a hardcoded reset vector, the memory controller initializes the DRAM, the boot SSD loads the kernel, the GPU sets up its display engine, and the NIC establishes a link. Within a few seconds, billions of transistors work together to show you a login screen.

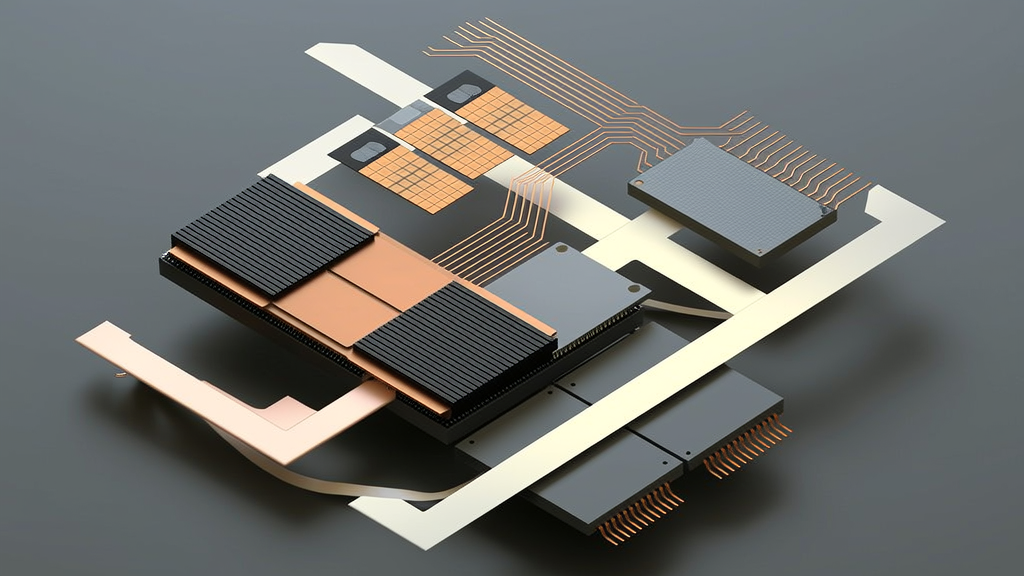

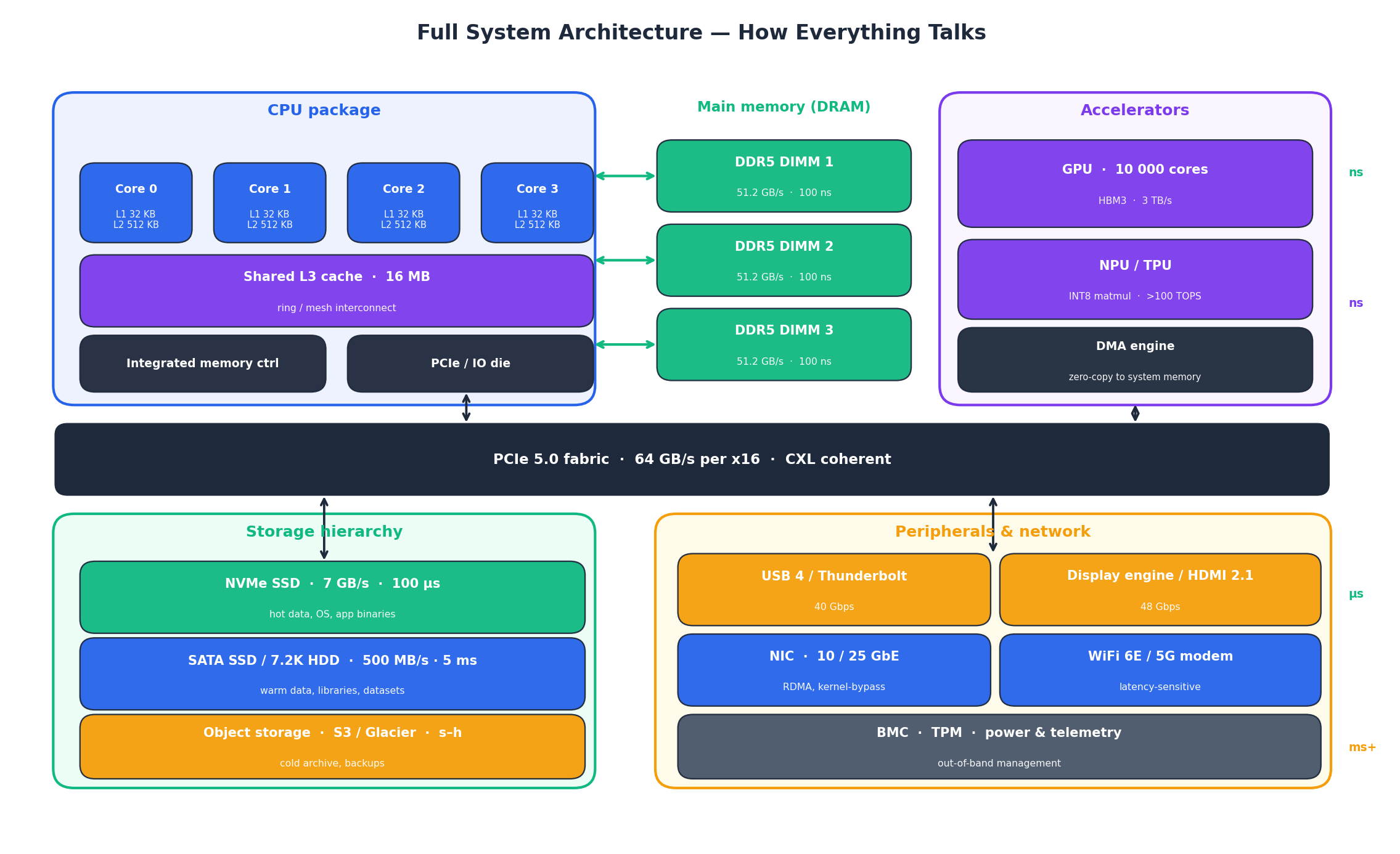

Here’s what’s actually inside that machine, drawn as one block diagram:

A few things worth noticing in that picture:

- The latency gradient runs left-to-right and top-to-bottom. Caches inside the CPU answer in nanoseconds. DRAM answers in tens of nanoseconds. The PCIe fabric and accelerators answer in hundreds of nanoseconds to microseconds. Storage and the network answer in microseconds to milliseconds. Five orders of magnitude live on one motherboard.

- The PCIe fabric is the spine. Almost everything outside the CPU package — the GPU, the NVMe SSD, the NIC, USB controllers — talks through it. CXL is starting to extend that fabric with cache-coherent semantics, which is why “memory pooling” suddenly looks plausible at rack scale.

- There is no single bus anymore. Inside the CPU package the cores talk over a ring or mesh. The memory controllers and IO die are separate tiles. The GPU has its own HBM. What used to be one shared highway is now a city of overlapping networks.

Once you see the machine this way, a lot of folklore stops being mysterious. “Why is my game faster after I move the SSD to the other M.2 slot?” Because that slot wires straight to the CPU instead of through the chipset. “Why does my AI workload love HBM?” Because the GPU needs 3 TB/s and DDR5 can only give it 50 GB/s. “Why is mmap so fast on small files?” Because the file is already sitting in DRAM as page cache; you’re paying nanoseconds, not microseconds.

Part 2 — Hardware-aware software wins by 100×#

Hardware engineers have spent fifty years building impressive machines, but most software still treats them like 1990s PCs. Bridging this gap is the most high-leverage skill in performance engineering.

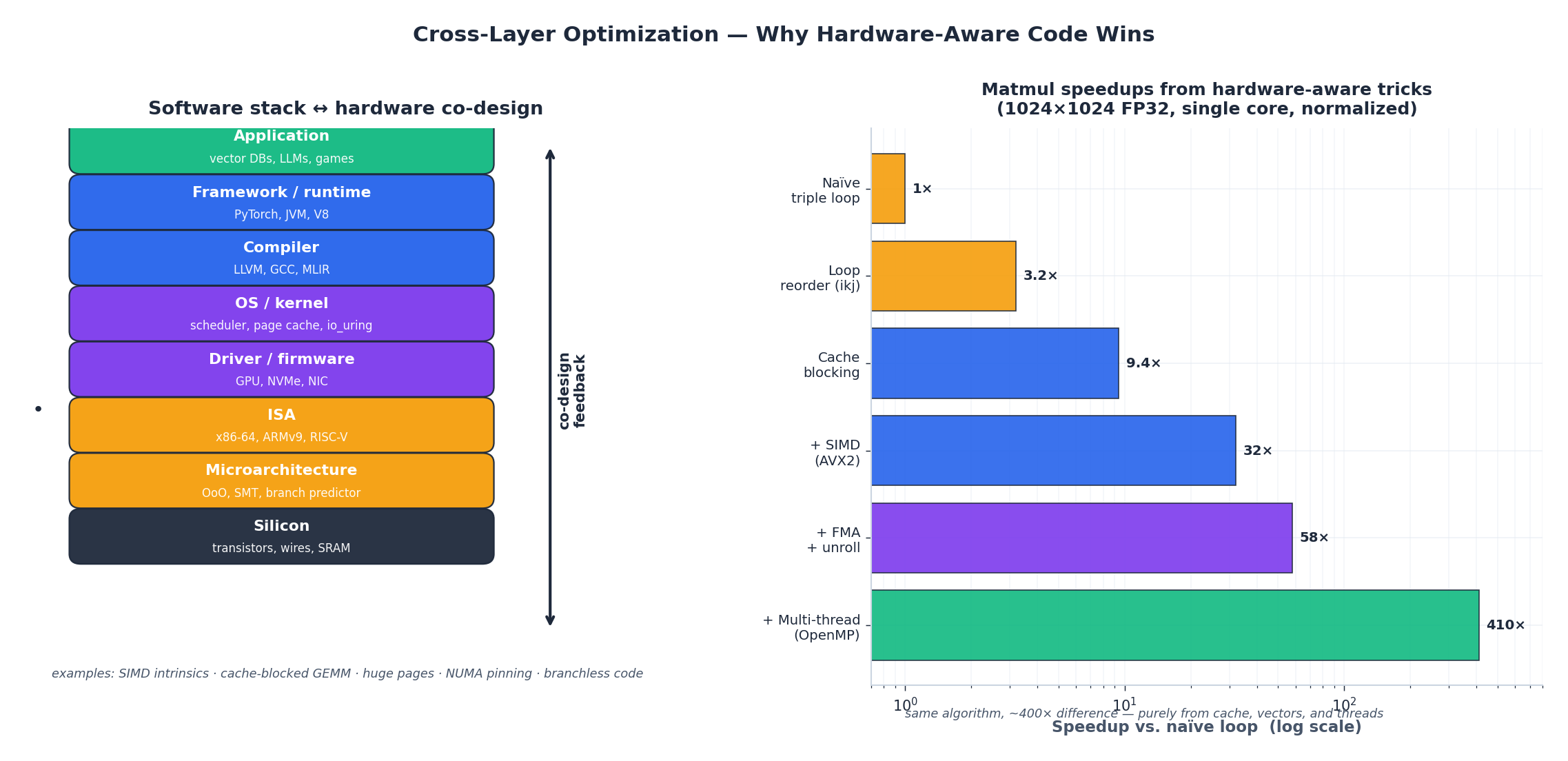

The left side of the figure shows the familiar stack: application, framework, compiler, OS, driver, ISA, microarchitecture, and silicon. The right side shows what happens when you allow information to flow up and down the stack, rather than treating it as a sealed pipe.

The bars show a classic experiment: multiply two 1024×1024 matrices, single core, FP32. The naïve triple loop is the baseline. Reorder the loops so the inner stride is unit-stride and you pick up ~3× from cache friendliness. Block the matrix to fit L1 and you get to ~10×. Drop in AVX2 vector instructions: ~32×. Add fused multiply-add and unrolling so the out-of-order engine has work to chew on: ~58×. Spread across cores with OpenMP: ~400×. Same algorithm. Same FLOPs. The 400× came entirely from telling the hardware what you actually wanted.

This is the key takeaway of the series. The CPU isn’t slow. The memory isn’t slow. Most software simply leaves the machine idle.

A few patterns are worth memorizing because they appear everywhere:

- Stride matters more than count. A loop that touches one byte per cache line is 64× more expensive than a loop that touches all 64.

- Branches you can’t predict are worse than work you don’t do. Branchless code, or code with predictable branches, often beats “smart” code that shortcuts.

- Allocation is a memory access too. A

mallocdeep in a hot loop can dominate the loop’s actual work. - Concurrency is a bandwidth problem, not a CPU problem. Eight threads contending for one cache line are slower than one thread.

The concrete tools are unglamorous: perf, vtune, flamegraph, valgrind --tool=cachegrind. They tell you which line of your code is actually the bottleneck. Which is almost never the line you guessed.

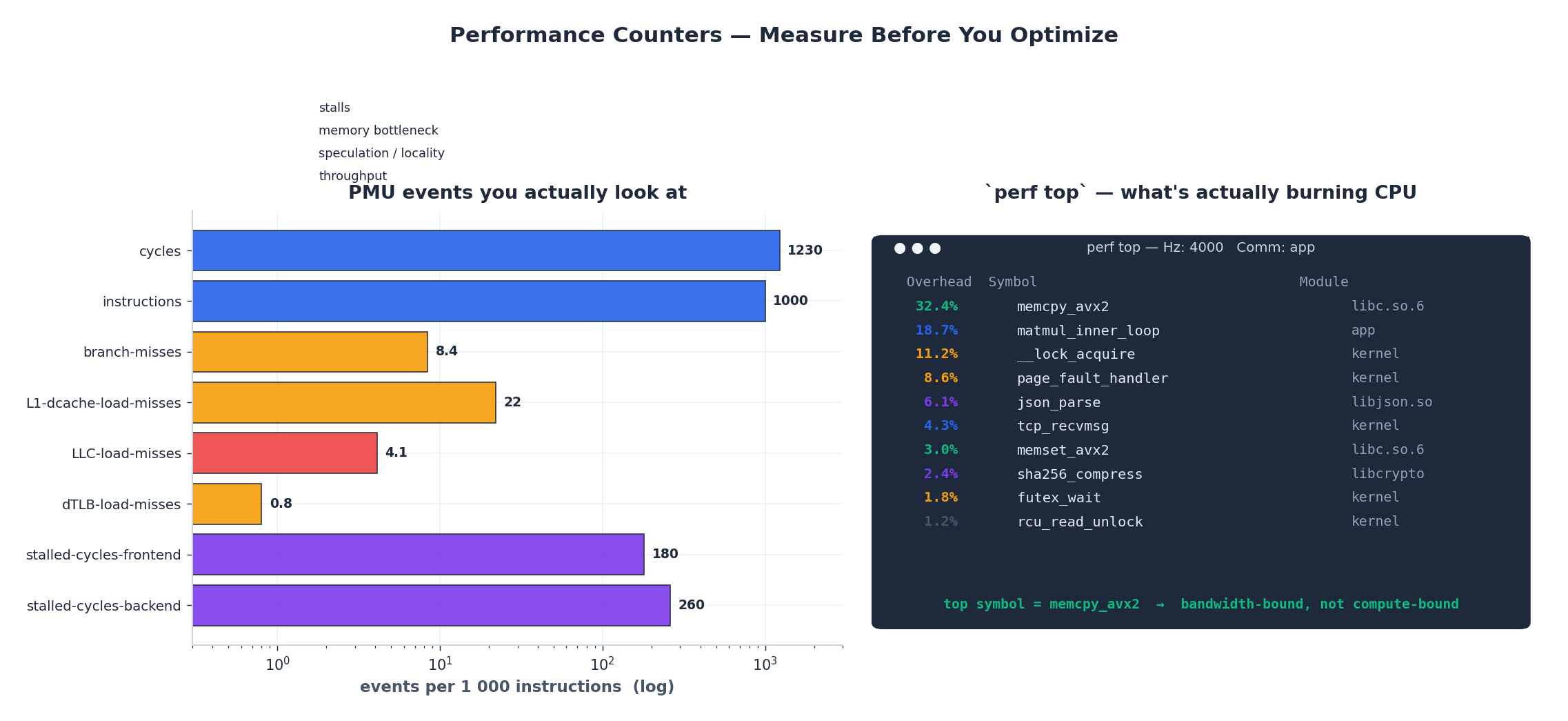

Part 3 — Counters: how the machine tells you what it’s doing#

Every modern CPU ships with a Performance Monitoring Unit (PMU) — a small bank of registers that count microarchitectural events. They are the difference between guessing and measuring.

The left panel shows the events you actually look at, normalised to events per 1000 instructions. The right panel is what perf top looks like on a typical hot workload — a live view of which symbols are burning CPU right now.

A short field guide to the events:

cyclesandinstructionsgive you IPC (instructions per cycle). On a modern Intel/AMD core with no stalls you can hit 3–4 IPC. If you’re seeing 0.3 IPC, the core is sitting idle and you have a problem.branch-missesat more than ~5 per 1000 instructions usually means a hot, unpredictable branch. Sometimes the fix is__builtin_expect. More often it’s restructuring the data so the branch goes the same way most of the time.L1-dcache-load-missesandLLC-load-misseslocalise where your cache problem lives. L1 misses that hit L2 cost a few cycles. LLC misses that hit DRAM cost a few hundred. One LLC miss is roughly one thousand wasted instructions.dTLB-load-missespoint at working sets that don’t fit your TLB reach. The fix is often huge pages — one 2 MB page covers what 512 4 KB pages would.stalled-cycles-frontendvsstalled-cycles-backendtell you whether the core is waiting for instructions to arrive (frontend — usually I-cache or branch prediction) or for results to come back from memory (backend — almost always memory bandwidth).

The simulated perf top panel illustrates the most common surprise of a first profile: the top symbol is almost never your business logic. It’s memcpy, or a lock, or a page fault handler. Real programs are bandwidth-bound and synchronisation-bound long before they are compute-bound. That is not a failure of your code; it is the shape of modern hardware.

A workflow that has never let me down:

- Reproduce the slow workload in a tight loop.

perf stat -d ./app— look at IPC, cache miss rate, branch miss rate.perf record -g ./app && perf report— find the hot symbol.- Read the disassembly of that symbol with

perf annotate. Look for the line where samples pile up. - Form a hypothesis, change one thing, repeat from step 2.

Most performance work is just refusing to skip step 4.

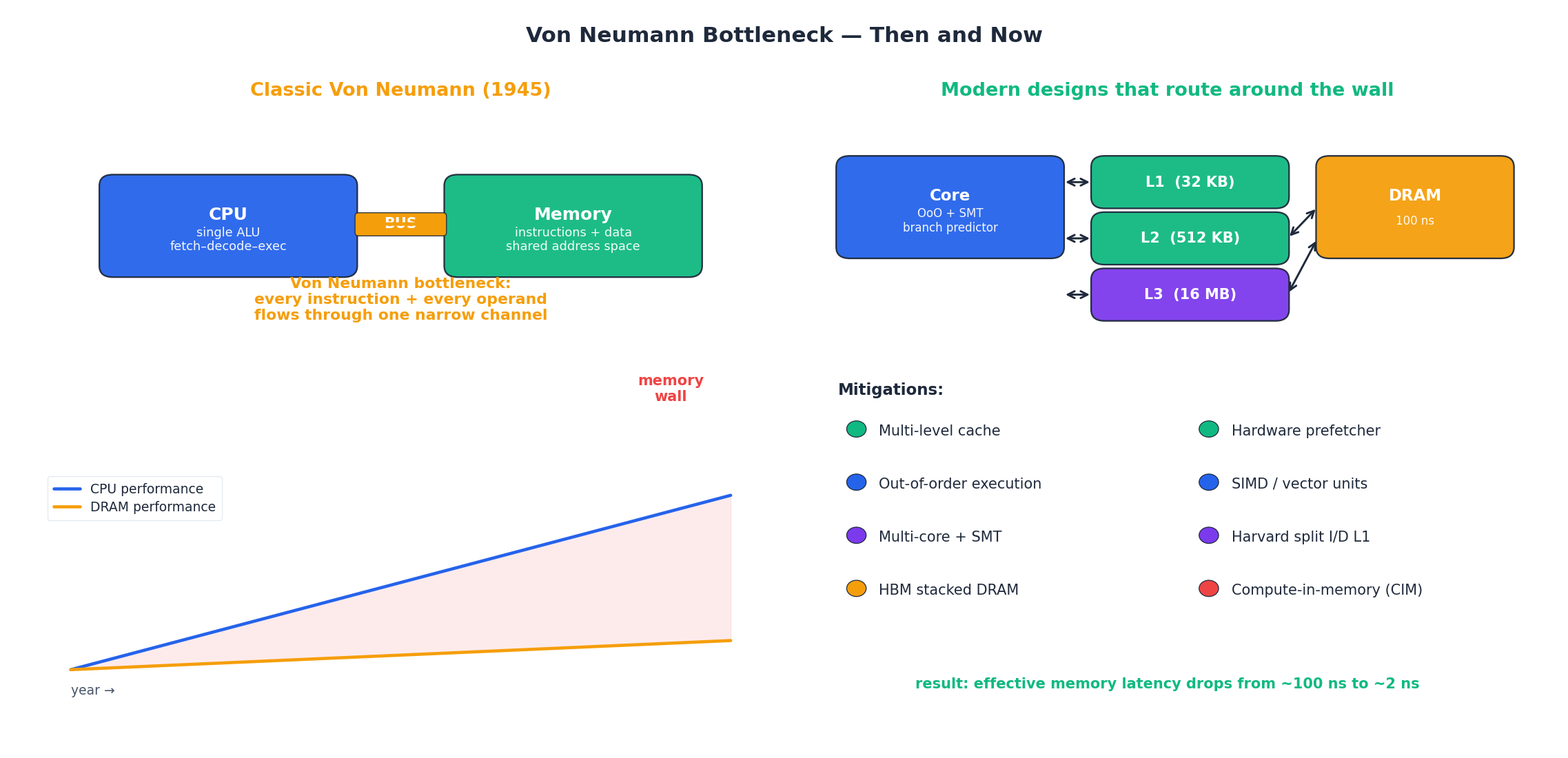

Part 4 — The Von Neumann bottleneck, then and now#

In 1945, John von Neumann sketched the architecture that almost every computer still uses: a CPU, a memory, and a bus between them, with instructions and data living in the same address space. The design is brilliant and general — and it carries an inherent limitation that has shaped computing ever since.

The classic problem is on the left. There is one bus. Every instruction the CPU executes has to come down that bus. Every operand it loads has to come down that bus. Every result it writes has to go back up that bus. As CPU clock speeds raced ahead through the 1990s and 2000s and DRAM speeds did not, the gap between them — the memory wall — became the dominant cost. Today, a load that misses all caches and goes to DRAM costs the CPU about 300 instructions of wasted work.

The right side shows what fifty years of mitigation looks like:

- Multi-level caches put smaller, faster copies of memory inside the chip. L1 hits at <1 ns. The whole hierarchy is what makes 3 GHz cores possible at all.

- Hardware prefetchers notice you’re walking through memory linearly and start loading ahead before you ask.

- Out-of-order execution lets the core keep working on independent instructions while it waits for a slow load to finish.

- SIMD does eight or sixteen FP operations per instruction, amortising the cost of going to memory.

- SMT (hyper-threading) keeps the core busy by giving it two instruction streams to choose from.

- Harvard split L1 for instructions and data lets the front end and back end fetch in parallel.

- HBM stacks DRAM dies directly on top of the compute and gets to terabytes per second of bandwidth.

- Compute-in-memory is the most radical: do the arithmetic inside the memory array itself, so data never has to move.

The combined effect is striking. The raw DRAM latency hasn’t improved much in twenty years — it’s still around 80 ns. But the effective latency a well-written program sees is closer to 2 ns, because almost everything is served from cache, prefetched ahead of time, or hidden behind out-of-order execution. We didn’t break the memory wall. We routed around it.

Knowing this changes how you write code. Algorithms that look optimal on paper can be slow on real hardware because they jump around memory; algorithms that look wasteful (extra arithmetic, redundant loads) can be fast because they stay inside the cache. Modern performance is a memory game disguised as a compute game.

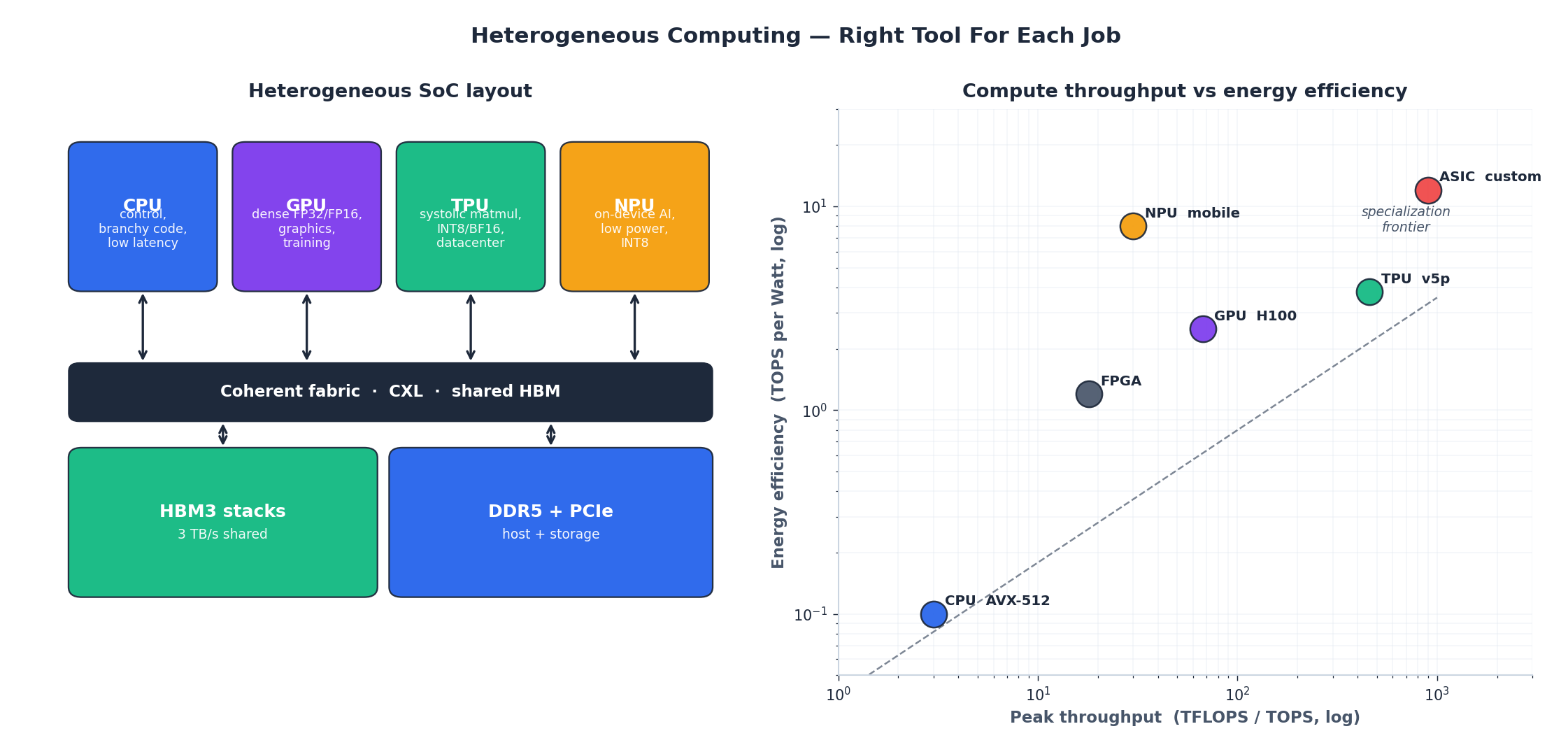

Part 5 — Heterogeneous computing: the right tool for the job#

For decades, “the computer” meant the CPU. Every workload paid the same architectural tax. That era is ending. A 2026 laptop has a CPU, a GPU, an NPU, a video codec block, and a secure enclave on the same die. A datacenter rack has CPUs, GPUs, TPUs, FPGAs, and SmartNICs, and the interesting question is no longer “how fast is your CPU” but “did you put the workload on the right thing”.

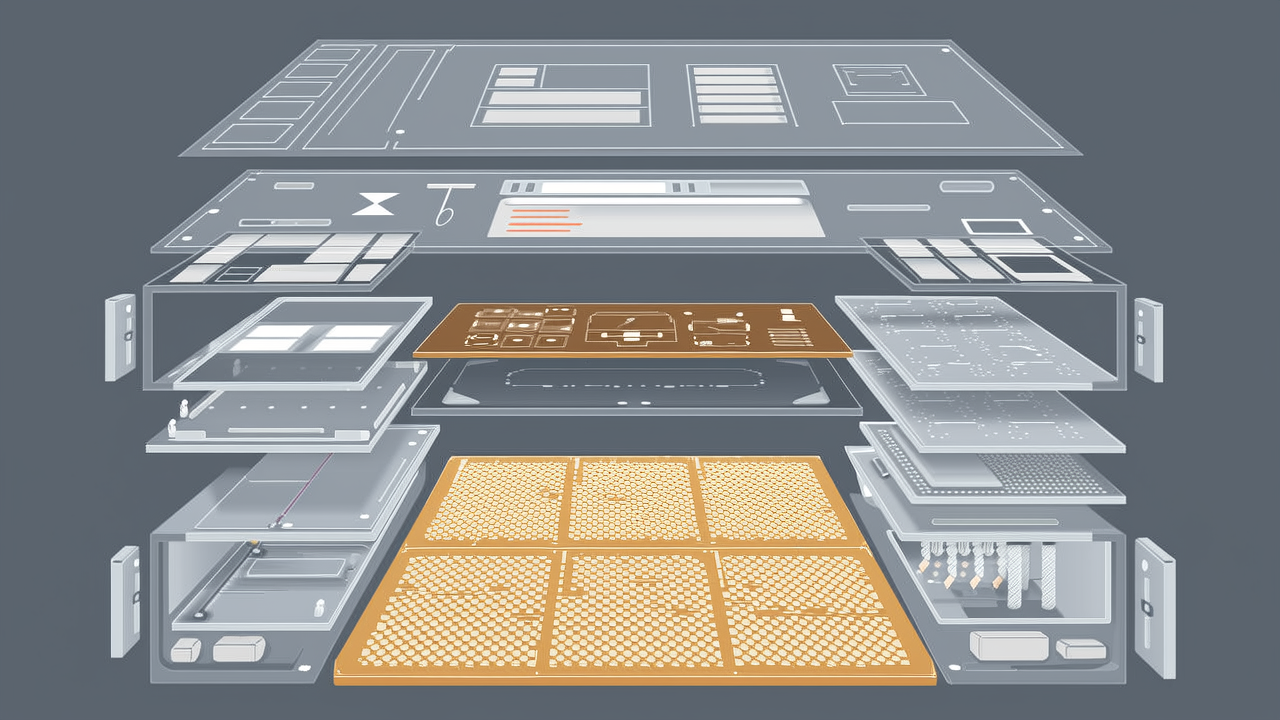

The left panel shows what a modern SoC actually looks like. Specialised tiles — CPU for control flow, GPU for dense floating point, TPU for INT8 matrix multiplies, NPU for low-power on-device AI — share a coherent fabric and a pool of HBM. The fabric is starting to extend across packages with CXL, so accelerators in different sockets can share memory without a software round-trip.

The right panel makes the trade-off concrete. The x-axis is peak throughput, in TFLOPS or TOPS, log scale. The y-axis is energy efficiency, in TOPS per Watt, also log. A few takeaways:

- A modern CPU with AVX-512 delivers a few TFLOPS at ~0.1 TOPS/W. Excellent for branchy, latency-sensitive code. Terrible for matmul.

- A datacenter GPU like H100 delivers ~67 TFLOPS at 2.5 TOPS/W. General enough to train any neural net you can describe, fast enough to make it the default for training.

- A TPU v5p is roughly 7× the throughput at 1.5× the efficiency — but only if your workload looks like a giant systolic matmul.

- Mobile NPUs win on efficiency by 80× versus a CPU, which is why your phone can do real-time speech recognition without burning the battery.

- Custom ASICs sit at the frontier: maximum throughput, maximum efficiency, near-zero flexibility. Bitcoin mining, video encoding, network packet processing.

The dashed “specialization frontier” is a real curve. Every step toward more specialization buys you throughput and efficiency at the cost of generality. There is no free lunch — there’s just a menu, and good engineering is choosing wisely.

The practical advice is short: profile first, then choose hardware, then choose algorithm. Putting a branchy graph traversal on a GPU is just as wasteful as running BERT on a CPU.

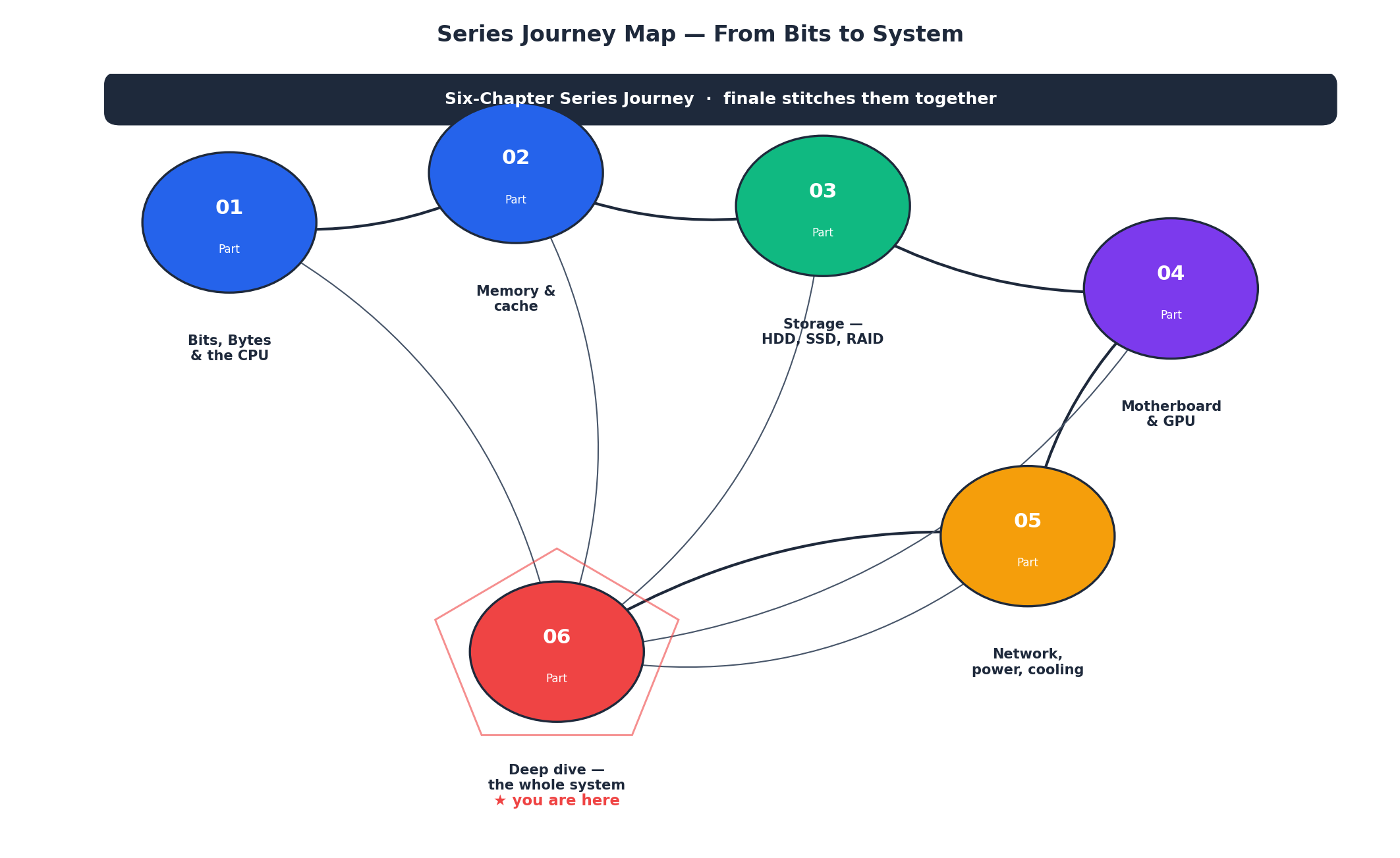

Part 6 — The journey: from bits to system#

Stepping back from the technical detail, here is the path this series has walked. Each chapter built one piece of the mental model. This finale wires them together.

- Part 1 — Bits, Bytes, and the CPU. We started where everything starts: a bit is two states, a byte is eight bits, ASCII gives you English, UTF-8 gives you the world. From there we walked into the CPU itself: cores, threads, clock speed, the difference between Intel and AMD, why a 32-bit OS can’t address 4 GB of RAM.

- Part 2 — Memory and cache. DDR generations, dual-channel, the three-level cache hierarchy, hit rates, why doubling your channels matters more than doubling your frequency.

- Part 3 — Storage. HDD platters and heads, NAND flash cells from SLC to QLC, SATA versus NVMe, RAID levels, the storage tiering pyramid that runs from DRAM down to Glacier.

- Part 4 — Motherboard and GPU. Chipsets, PCIe lanes and generations, M.2 slot wiring, VRM phases, GPU memory hierarchy, the SM/CUDA-core architecture that makes massive parallelism possible.

- Part 5 — Network, power, and cooling. NIC speeds and RDMA, the OSI model in practice, PSU wattage and 80 PLUS, fan curves and thermal throttling, the full assembly checklist.

- Part 6 — This chapter. The whole picture. Cross-layer optimisation. Performance counters. The Von Neumann story. Heterogeneous computing. And what comes next.

Each part is a building block; none of them is the building. The building is the system you’re sitting in front of, and the moment you can see all six pieces at once is the moment hardware stops being mysterious.

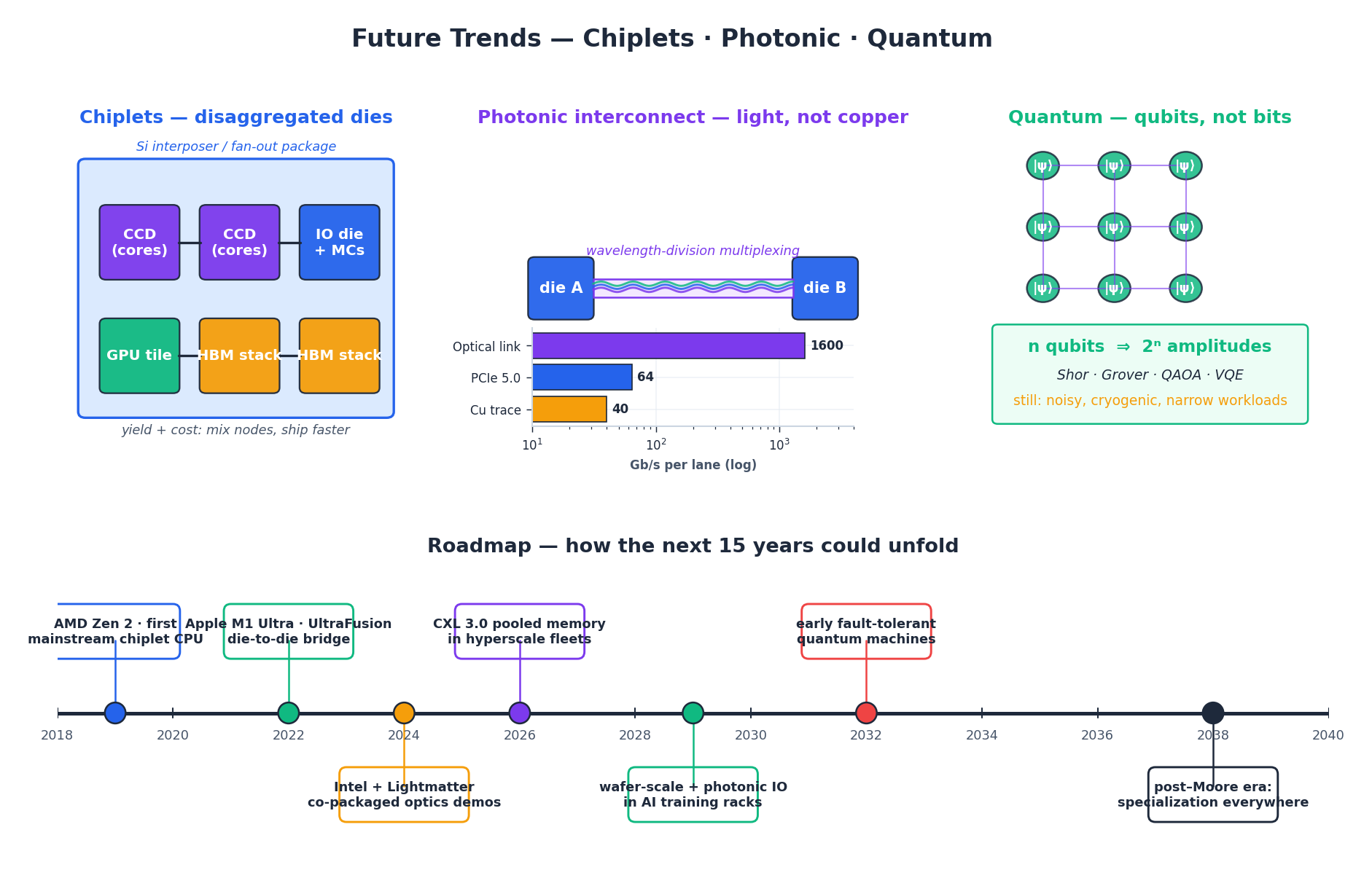

Part 7 — What comes next: chiplets, photonics, quantum#

Moore’s Law as the press knew it — transistor count doubling every two years on the same monolithic die — is over. Transistors keep shrinking, but the economics, yields, and physics no longer support a single, ever-larger chip. The interesting question is what replaces it. Three threads are already visible.

Chiplets — disaggregated dies on a shared package. Instead of one giant die that has to yield perfectly, you build a package out of small chiplets, each fabbed on the process that suits it best. AMD’s Zen 2 was the mainstream debut in 2019 — separate Core Complex Dies and an IO die, talking through Infinity Fabric. Apple’s M1 Ultra glued two M1 Maxes together with the UltraFusion bridge. Intel’s Meteor Lake mixes a CPU tile, a GPU tile, an SoC tile, and an IO tile on a single package. The benefit is brutal economics: a 600 mm² monolithic die yields badly; six 100 mm² chiplets yield well, can be mixed and matched, and let different parts ride different process nodes. The cost is interconnect — those chiplets have to talk to each other at low latency and high bandwidth, which is why standards like UCIe (Universal Chiplet Interconnect Express) have suddenly become urgent.

Photonic interconnects — light instead of copper. Copper traces hit a wall around a few tens of Gb/s per lane before signal integrity collapses. Light does not. Co-packaged optics put a tiny laser and a waveguide right next to the compute die, and wavelength-division multiplexing lets one fibre carry dozens of channels in parallel. The early use case is the escape problem — getting data off a GPU package fast enough to feed AI training fabrics that span thousands of accelerators. Lightmatter, Ayar Labs, and the hyperscalers are all building this; the first commercial deployments are landing in 2024–2026. By 2030 it’s likely the default way data leaves a high-end chip.

Quantum — qubits, not bits. A qubit isn’t a faster bit; it’s a different kind of object. n qubits represent 2ⁿ amplitudes simultaneously, and a quantum algorithm steers those amplitudes through interference toward an answer. For a narrow set of problems — factoring (Shor), unstructured search (Grover), some chemistry and optimisation — that’s an exponential or quadratic speedup. The current hardware is noisy, cryogenic, and small. The 2032-ish milestone everyone is racing toward is fault-tolerant quantum computing — enough qubits and enough error correction to run the famous algorithms on real-world inputs. It will not replace your laptop. It will replace specific compute jobs that are intractable today.

The bottom of the figure ties these threads onto a single timeline. The big picture: the next fifteen years of computing won’t be one big jump. They’ll be many small ones, all in the direction of specialisation — custom silicon, custom interconnects, custom physics — held together by software that knows what’s underneath it.

Closing — what to do with all this#

You’ve now seen the machine end to end. A few habits will compound this knowledge for you for years:

- When something is slow, measure before you guess. A

perf statand a flamegraph will save you days of wrong intuition. - Read the datasheet of the hardware you actually own. Cache sizes, memory channels, PCIe lane wiring — they’re all in there, and they explain most of what you’ll see.

- Keep one mental model and refine it. Every new technology — CXL, photonic IO, MI300 chiplets — fits somewhere on the system diagram in Part 1 . Find where, and you understand it.

- Build something that pushes the hardware. A toy database, a small ray tracer, a profiler of your own. There is no substitute for the moment a counter goes from 0.3 IPC to 2.8 IPC because of a change you made.

Computer hardware is not a shopping list. It’s a system that has been refined for eighty years, by tens of thousands of engineers, into the most intricate machine humans have ever built. Knowing how it works is one of the highest-leverage things you can know.

Thanks for reading this series. See you in the next one.

— End of the Computer Fundamentals series —

Appendix — Three Things to Try This Week#

The whole series argued that measuring is the lever. Here are three concrete experiments, each takes under an hour, and each will leave you with a number you didn’t have before.

Measure your own machine’s memory wall#

| |

The first run streams large blocks — it measures sustained DRAM bandwidth. The second run touches 64-byte cache lines randomly — it measures latency-bound throughput. The ratio between them is your machine’s memory wall: typically 5× to 20×. That ratio is the reason cache locality matters.

Watch a branch predictor learn#

| |

The sorted run is typically 1.4–1.8× faster on the same data. The CPU’s branch predictor learns “always taken” then “always not taken” instead of fighting random noise. This is the entire point of Mike Acton’s “data-oriented design” talk made small.

Find your machine’s real PCIe bandwidth#

| |

PCIe Gen4 x16 is theoretically 32 GB/s, in practice you’ll see 24-28. If you get half of that, you’re plugged into a Gen3 slot or a x8 lane. The chipset diagram in your motherboard manual will tell you which slot is which — most boards have exactly one CPU-direct x16 slot.

Appendix — Cache Lines and ML Workloads#

The cache line is 64 bytes on every modern CPU. In ML pipelines this single number explains a lot:

- A

float32tensor: 16 floats per cache line. Iterating row-major over(B, C, H, W)data hits the line that’s already loaded; iterating column-major across the batch dimension misses every time. - The same model can train 2–3× faster with

channels_lastmemory format on convolutional networks, because the convolution kernel reads channels contiguously. torch.compileand XLA fuse operations partly to keep tensors in cache between ops. The win isn’t theoretical FLOPs — it’s avoiding round-trips to DRAM at 80 ns each.- A 256 KB tile of activations fits in L2. A 24 MB layer of weights does not. This is why FlashAttention tiles the attention matrix into L2-sized blocks: the algorithm hasn’t changed, the memory traffic has.

If you take one thing from the deep-dive: the math operations are usually free; the memory movement is the cost. This is true on CPU, more true on GPU, and almost the entire reason custom AI silicon exists.

Computer Fundamentals 6 parts

- 01 Computer Fundamentals (1): CPU and the Computing Core

- 02 Computer Fundamentals (2): Memory and Cache Systems

- 03 Computer Fundamentals (3): Storage Systems (HDD vs SSD)

- 04 Computer Fundamentals (4): Motherboard, Graphics, and Expansion

- 05 Computer Fundamentals (5): Network, Power, and Troubleshooting

- 06 Computer Fundamentals (6): Deep Dive and System Integration you are here