LeetCode (9): Patterns — Greedy Algorithms

When does picking the locally best option give the global optimum? A working tour of greedy algorithms with proofs (exchange argument), classic problems (Jump Game, Gas Station, Interval Scheduling, Task Scheduler, Partition Labels), and a clear rule for when greedy fails.

The greedy algorithm paradigm seems too good to be true: at every step, choose the option that looks best right now, never look back, and somehow reach the global optimum. When it works, the code is almost embarrassingly short. When it doesn’t, it produces confidently wrong answers — which is why the real skill is recognizing when greedy is allowed.

This article walks through the structural reason greedy is correct on some problems and broken on others, then applies that lens to seven LeetCode classics: Jump Game, Jump Game II, Gas Station, Best Time to Buy and Sell Stock II, Non-overlapping Intervals, Task Scheduler, and Partition Labels.

What Makes a Problem “Greedy”?#

A greedy algorithm builds a solution incrementally by committing, at each step, to the choice that is locally best according to some criterion — and never undoing it. That last clause is what separates greedy from backtracking and from DP: there is no undo, no memo table of alternative branches.

For this strategy to be correct, the problem must have two structural properties:

- Greedy choice property. There exists a locally optimal choice that is part of some globally optimal solution. (Equivalently: making this choice never destroys the best possible future.)

- Optimal substructure. Once that choice is committed, the remainder of the problem is itself a smaller instance of the same problem, and an optimal solution for it combines with the greedy choice into an optimal solution for the original.

Most problems satisfy optimal substructure. The greedy choice property is rarer and requires a proof. If you cannot prove the greedy choice property, you do not have a greedy algorithm — you have a guess.

Greedy vs DP vs Backtracking#

| Property | Greedy | Dynamic Programming | Backtracking |

|---|---|---|---|

| Decision rule | One locally optimal pick | Try all, keep optimum | Try all, prune infeasible |

| Revocability | Never revoked | N/A (computes all states) | Revoked on backtrack |

| Typical time | O(n) or O(n log n) | O(n²)–O(n·k) | Exponential |

| Required structure | Greedy choice + optimal substructure | Optimal substructure + overlapping subproblems | Verifiable solution check |

| Proof burden | Heavy (must prove correctness) | Mechanical (transition + base case) | None (it tries everything) |

The takeaway: greedy trades correctness for runtime. You pay in proof effort and get linear or near-linear time in return.

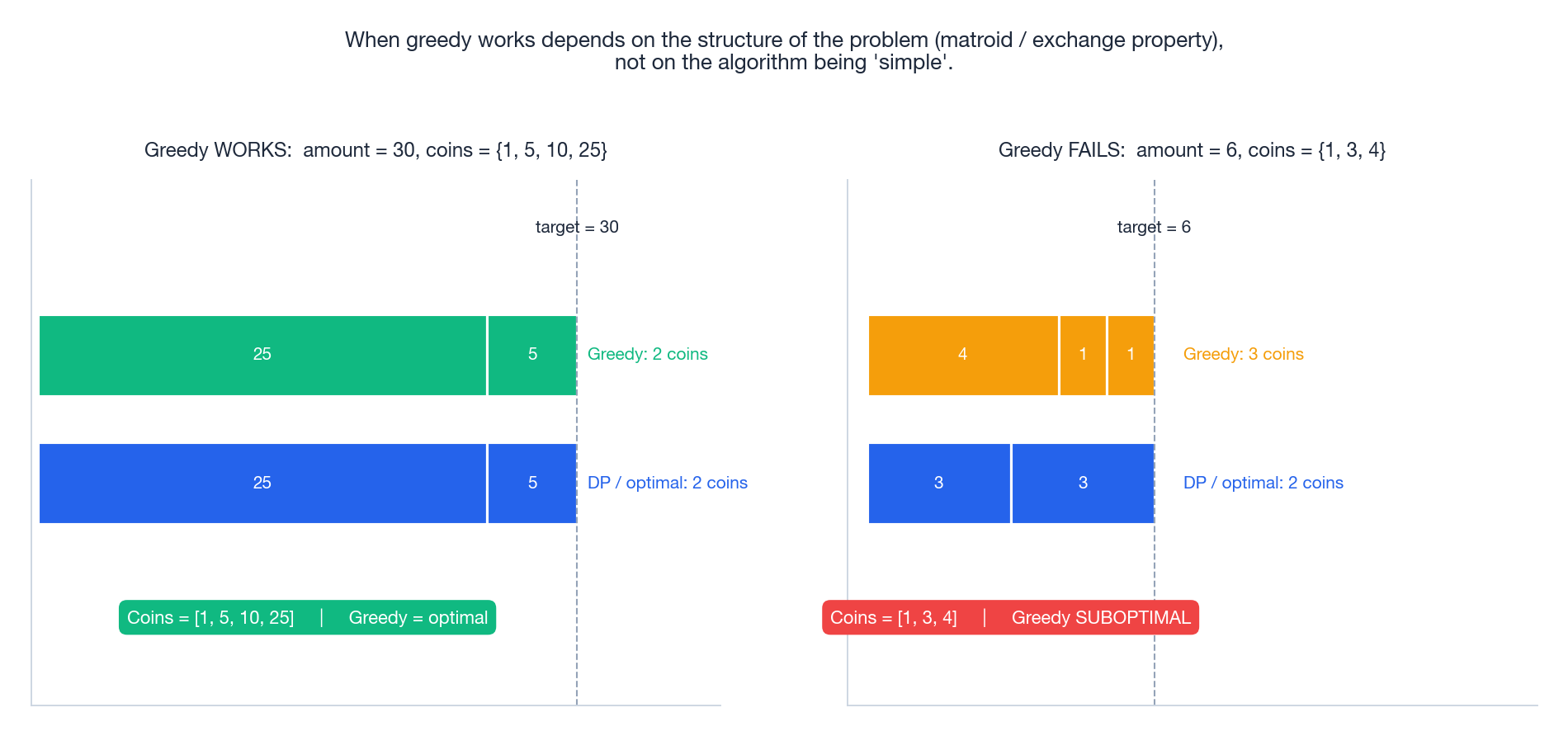

When greedy fails: a concrete warning#

Coin change is the canonical cautionary tale. With the canonical US coin set {1, 5, 10, 25}, “always take the largest coin that fits” produces an optimal answer for every amount. With a slightly different set {1, 3, 4} and amount 6, greedy picks 4 + 1 + 1 (three coins) while the optimal is 3 + 3 (two coins). Same algorithm, same intuition — but the second instance lacks the structural property (technically: the coin set is not a matroid) that makes greedy correct.

The lesson: greedy correctness is a property of the problem, not the algorithm. Always prove it or recognize it from a known pattern.

A Vocabulary of Greedy Strategies#

Across LeetCode, greedy solutions tend to fall into a small number of recurring shapes:

- Sort by end — interval scheduling, non-overlapping intervals, minimum-arrows.

- Sort by start — meeting-room allocation, merging intervals.

- Sort by ratio / value density — fractional knapsack, profit-weighted scheduling.

- Maintain a running extremum — Jump Game (

max_reach), Best Time to Buy/Sell Stock (min_priceso far), Maximum Subarray (max_ending_here). - Prefix-sum reset — Gas Station, Maximum Subarray (Kadane), Partition Labels (last-occurrence sweep).

- Frequency / heap-based scheduling — Task Scheduler, Reorganize String.

- Local adjustment in two passes — Candy, Wiggle Subsequence.

Most of the problems below are instances of one of these patterns. Recognizing the pattern is 90% of the solving work.

How to Prove Greedy Correctness: Exchange Argument#

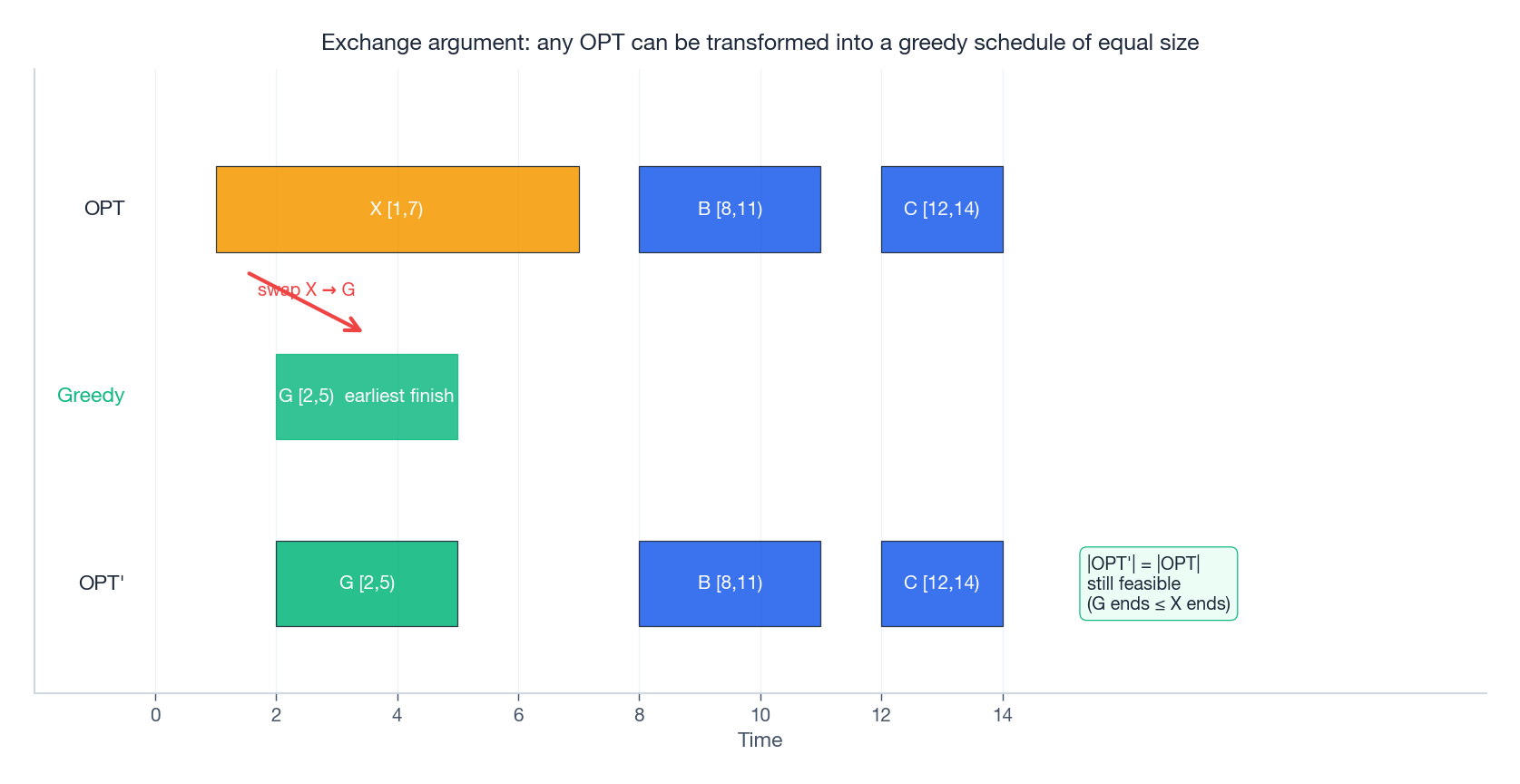

The most common proof technique for greedy is the exchange argument:

- Take any optimal solution

OPT. - Show that you can replace the first non-greedy choice in

OPTwith the greedy choice, producing a new solutionOPT'that is no worse. - By induction,

OPT'can be transformed step by step into the greedy solution without losing optimality. - Therefore the greedy solution is itself optimal.

Worked example for interval scheduling: OPT schedules some interval X first, but the greedy choice G is the interval with the earliest finish time. Because G.end ≤ X.end, every interval that was compatible with X (and came after it) is still compatible with G. Swap X for G; size is unchanged, feasibility is preserved. Repeat for the second pick, the third, and so on. The greedy schedule has the same size as OPT, so it is optimal.

The same template proves correctness for Jump Game II, Task Scheduler’s lower-bound argument, and many others.

LeetCode 55 — Jump Game#

Given a non-negative integer array

numswherenums[i]is the maximum jump length at positioni, starting from index 0, returntrueif you can reach the last index.

Examples: [2,3,1,1,4] → true, [3,2,1,0,4] → false.

The greedy insight#

You do not need to track which jumps you make — only how far you could possibly have got by now. Maintain max_reach, the farthest index reachable using any sequence of jumps from positions 0..i. Sweep left to right; if at any point i > max_reach, you are stranded.

The figure compares the two cases. On the left, max_reach jumps to 4 at i=1 and we are done. On the right, the zero at index 3 freezes max_reach at 3, and on the next iteration i=4 > max_reach=3 triggers the failure.

Why greedy is correct#

The proof is almost a tautology: if position i is reachable, then every position in [0, i + nums[i]] is reachable through it. max_reach is the union of those intervals up to step i, which is exactly the set of all reachable positions. Local update = exact global state.

Implementation#

| |

Common pitfalls:

- Forgetting the

i > max_reachcheck and updatingmax_reachfrom an unreachable index. The update would be meaningless (we never had the right to be ati), but the algorithm would return wrong answers likeTruefor[0, 1]. - Comparing

max_reach == n - 1instead of>=. The reachable frontier can overshoot the goal in a single jump.

LeetCode 45 — Jump Game II#

Same setup as LC 55, but you are guaranteed to be able to reach the end. Return the minimum number of jumps.

The trick is to think of jumps as levels of a BFS: jump 1 reaches a contiguous prefix [1 .. nums[0]]; from anywhere in that prefix, jump 2 can reach a wider prefix, etc. We do not need an explicit BFS queue — we just need to know the right boundary of the current level.

| |

Why this is optimal. Among all positions reachable in k jumps, picking the one whose i + nums[i] is largest never hurts — by an exchange argument, any optimal sequence using a “shorter” intermediate position can be modified to use the farther-reaching one without increasing the jump count. So always extending farthest is safe.

LeetCode 134 — Gas Station#

A circular route has

ngas stations. Stationihasgas[i]litres; driving fromitoi+1costscost[i]litres. Starting with an empty tank, return the index from which you can complete the loop, or-1if impossible. The answer is unique.

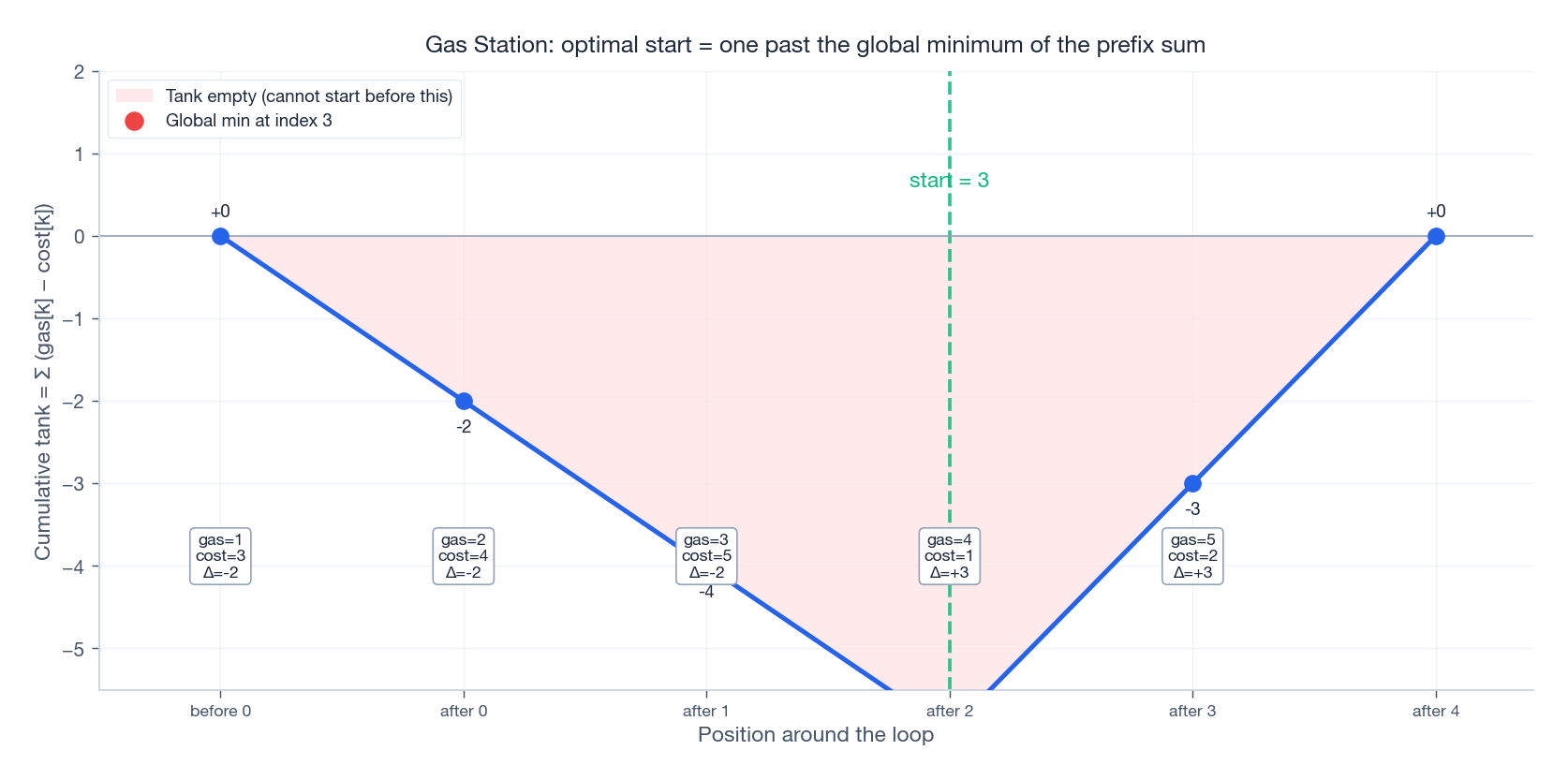

Two facts that drive the algorithm#

- Feasibility test. A solution exists iff

sum(gas) >= sum(cost). If the total deficit is negative, no starting point can compensate. - Localising the start. Define

Δ[i] = gas[i] - cost[i]. Run the prefix sum ofΔstarting from station 0. The optimal start is one position past the global minimum of this prefix sum.

The figure plots the running tank for gas = [1,2,3,4,5], cost = [3,4,5,1,2]. The prefix sum dips to its minimum after station 2, so starting at station 3 makes the tank non-negative everywhere going forward; the surplus from Δ[3]+Δ[4] = +6 covers the earlier deficit on the wrap-around.

Why the greedy reset works#

Suppose we attempted to start at index s and got stuck at index j (current_tank < 0). Then no index in [s, j] can be a valid start: each prefix from s was non-negative until j, so any later starting index s' ∈ (s, j] had even less fuel available at j, and would also fail. The next candidate to try is j + 1. Each station is visited at most twice across the whole sweep, giving O(n) overall.

Implementation#

| |

LeetCode 122 — Best Time to Buy and Sell Stock II#

Given daily stock prices, you can buy and sell as many times as you like (but never hold more than one share). Maximise total profit.

The greedy reframe#

The optimal profit is Σ max(0, prices[i] - prices[i-1]). That is, capture every upward day-over-day move and skip every downward one. There is no need to identify peaks and troughs explicitly.

Why it works. Decompose any buy-low/sell-high pair (p_lo, p_hi) into the telescoping sum of consecutive day differences: p_hi - p_lo = Σ_{i=lo+1}^{hi} (p_i - p_{i-1}). Including the negative differences strictly hurts, so the optimal is to take only the positive ones. Each positive difference is independently realisable because you can always sell at the end of an up day and rebuy at the start of the next.

| |

Pitfall. This is not the algorithm for LC 121 (single transaction) or LC 123 / 188 (limited transactions). Those problems do not have the greedy choice property — multiple buys would interfere — and require DP.

LeetCode 435 — Non-overlapping Intervals#

Return the minimum number of intervals to remove so that the rest do not overlap.

This is the classical activity selection problem in disguise: maximise the number of mutually compatible intervals, then answer = n − max_compatible.

Strategy#

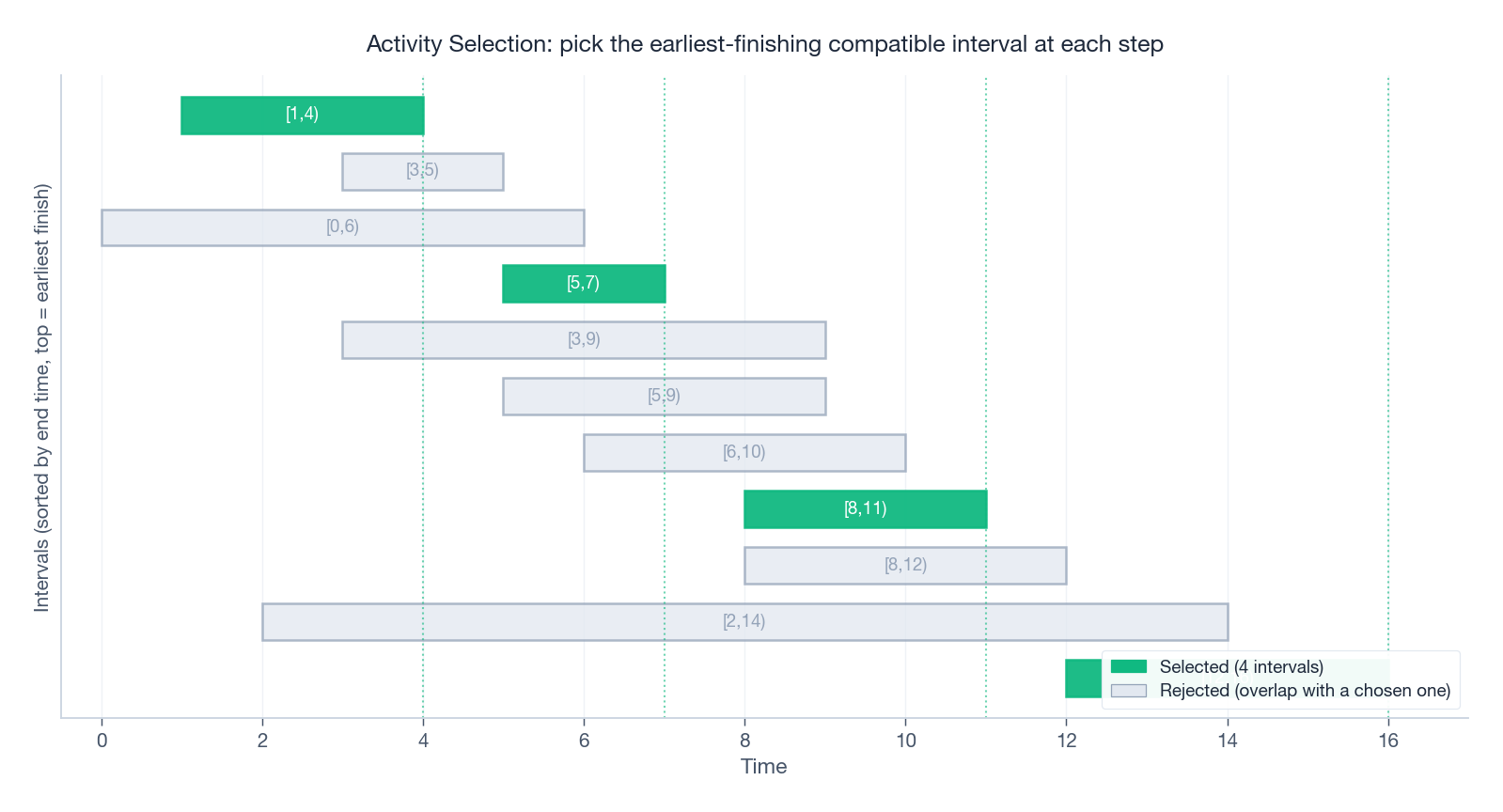

Sort by end time ascending. Greedily keep the next interval whose start is ≥ the last kept interval’s end.

The figure shows 11 intervals after sorting by end time. The greedy sweep keeps [1,4), [5,7), [8,11), [12,16) — four intervals. Every other interval overlaps one of these four and is rejected.

Why “earliest end” and not “earliest start” or “shortest”#

- Earliest start can lock in a hugely long interval (e.g.

[1,10)) and crowd out many short ones — see counterexample[[1,10],[2,3],[4,5]]. - Shortest can fail when a short interval sits in the middle of two longer ones it forces you to drop — counterexample

[[1,5],[4,6],[5,10]]: shortest is[4,6](length 2), picking it kills both neighbours. - Earliest end is provably optimal by the exchange argument from earlier: swapping any first pick for the earliest-ending one preserves feasibility and size.

Implementation#

| |

Pitfall. Use >= (not >) for the overlap test — the problem treats [1,2] and [2,3] as non-overlapping. For LC 452 (arrows / balloon-bursting) the convention flips and you want strict >.

LeetCode 621 — Task Scheduler#

Tasks are letters; identical tasks must be separated by at least

ncooldown slots (any letter oridle). Return the minimum total length of the schedule.

The greedy lower-bound formula#

Let f_max be the frequency of the most common task, and let k be the number of tasks tied at that frequency. Then the answer is

| |

Intuition. Lay out the most frequent task in a grid of n+1 columns. The first f_max - 1 rows are full (length (f_max-1)*(n+1)), and the last row contains exactly k tasks (the ones tied for most frequent). Any other tasks can be slotted into the empty positions of these rows without violating cooldown. If everything fits, the formula above gives the schedule length; if there are more tasks than empty slots, no idles are needed at all and the answer is just len(tasks).

Implementation#

| |

Why no heap is needed. A heap-based simulation also works (O(N log 26)) and is sometimes asked in interviews. The closed-form above is the elegant version once you internalise the row layout argument.

LeetCode 763 — Partition Labels#

Partition the string into as many parts as possible so each letter appears in at most one part. Return the lengths of the parts.

The greedy insight#

For each letter, record its last occurrence. Sweep the string maintaining end = max(end, last[s[i]]). When i hits end, every letter seen so far is fully contained in the current segment, so close it.

This is greedy because: at every position we extend the current segment to be the smallest one that can contain all letters seen so far — closing it any earlier would split a letter across segments; closing it any later wastes characters.

Implementation#

| |

Worked example (s = "ababcbacadefegdehijhklij"):

| |

Summary#

What I would internalise#

- Greedy correctness is structural, not aesthetic. The same

argmaxloop is right on Jump Game and wrong on{1,3,4}coin change. Always identify the structural reason — exchange argument, matroid, prefix-sum monotonicity — before trusting a greedy. - Sort by the right key. End time for scheduling, value/weight for fractional knapsack. The wrong key produces plausible-looking wrong answers.

- Maintain an invariant, not a path. Jump Game’s

max_reach, Gas Station’scurrent_tank, Buy/Sell Stock II’s day-delta — these are running aggregates, not reconstructions. Greedy’s space win comes from this. - The exchange argument is the workhorse proof. Memorise the template; you will reuse it for every new “earliest X / largest Y” pattern.

Problem checklist#

| Pattern | LeetCode | Greedy key | Proof technique |

|---|---|---|---|

| Interval scheduling | 435, 452, 56 | sort by end | exchange argument |

| Reachability frontier | 55, 45 | running max_reach | inductive invariant |

| Prefix-sum reset | 134, 53 | reset on negative tank | total-sum lemma |

| Day-delta capture | 122 | sum positive deltas | telescoping decomposition |

| Frequency layout | 621 | most-frequent-first formula | grid-fitting argument |

| Last-occurrence sweep | 763 | extend end to last[ch] | minimal-segment lemma |

| Counterexample (don’t!) | 322 | greedy ≠ optimal | requires DP |

Common mistakes#

- Picking a sort key that feels right (start time, length) without proving it.

- Forgetting the reachability check (

i > max_reach) before updating an aggregate. - Treating LC 122 as a template for LC 121/123/188 — those need DP.

- Confusing “touching at endpoint” rules:

>=for LC 435,>for LC 452.

When in doubt, try a small adversarial input (often n ≤ 5 is enough) before believing your greedy. If you cannot find a counterexample and you can sketch an exchange argument, you are probably safe.

That completes the LeetCode Patterns series. From hash tables to greedy, the recurring meta-skill has been the same: identify the structural property of the problem first, then pick the algorithm whose preconditions match. Good luck.

LeetCode Patterns 10 parts

- 01 LeetCode (1): Patterns — Hash Tables

- 02 LeetCode (2): Patterns — Two Pointers

- 03 LeetCode (3): Patterns — Linked List Operations

- 04 LeetCode (4): Patterns — Sliding Window Technique

- 05 LeetCode (5): Patterns — Binary Search

- 06 LeetCode (6): Patterns — Binary Tree Traversal and Construction

- 07 LeetCode (7): Patterns — Dynamic Programming Basics

- 08 LeetCode (8): Patterns — Backtracking Algorithms

- 09 LeetCode (9): Patterns — Greedy Algorithms you are here

- 10 LeetCode (10): Patterns — Stack and Queue