LeetCode (8): Patterns — Backtracking Algorithms

Master the universal backtracking template through six classic problems: Permutations, Combinations, Subsets, Word Search, N-Queens, and Sudoku Solver. Learn the choose -> recurse -> un-choose pattern and the pruning techniques that make it fast.

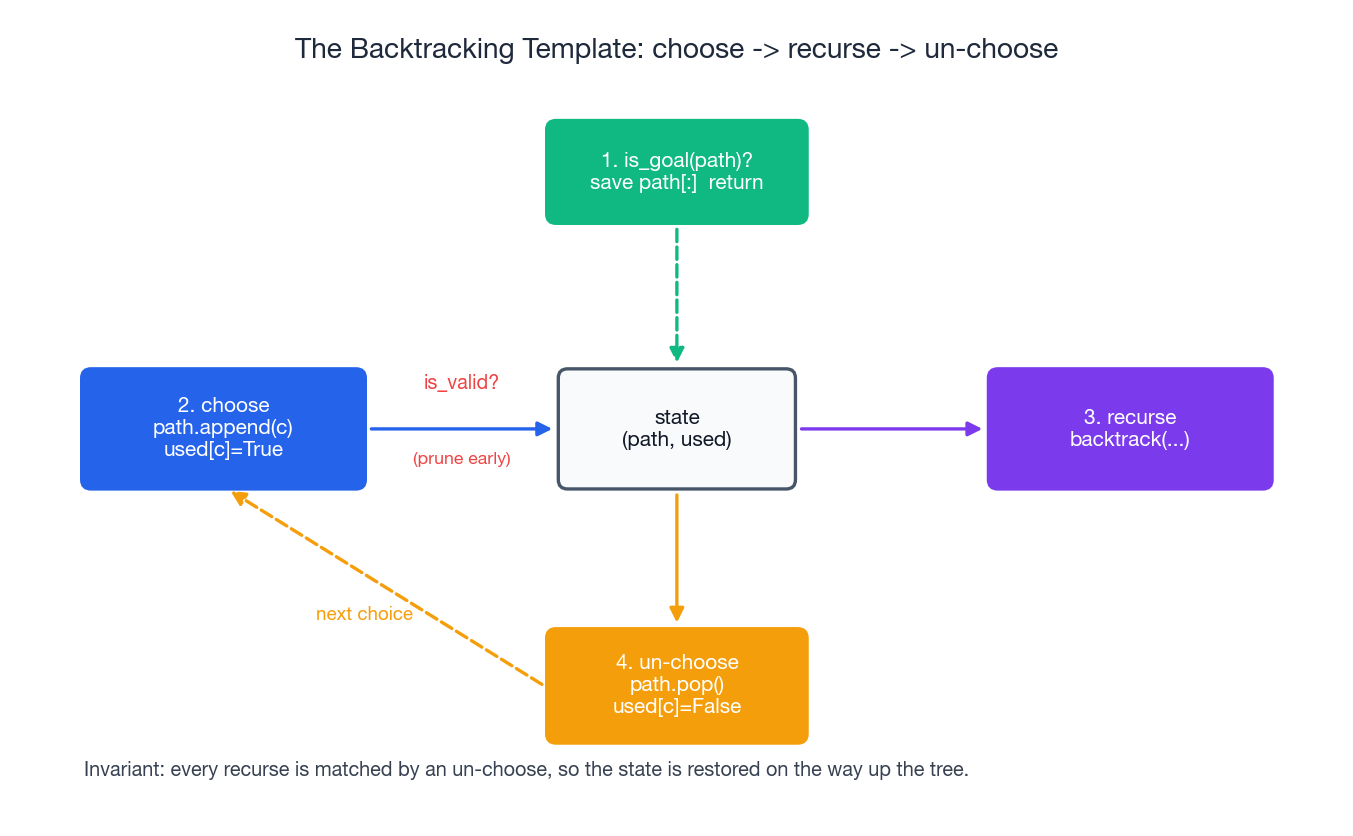

Backtracking is the algorithm you use when a problem asks you to enumerate something: every permutation, every subset, every legal board, every path through a grid. It’s brute force with a brain: you build a candidate solution one decision at a time, abandon it as soon as a constraint says “this won’t work,” and undo your last move so the next branch starts fresh. The whole technique fits in three steps:

choose -> recurse -> un-choose

This simple pattern solves Permutations, Combinations, Subsets, Word Search, N-Queens, and Sudoku, and it’s the same template you’ll use for most “find all…” problems on LeetCode. This article walks you through the template, then applies it to six canonical problems with full implementations, recursion-tree diagrams, complexity analysis, pruning tactics, and a debugging checklist for the bugs you will inevitably hit the first few times you write it.

Series Navigation#

LeetCode Algorithm Masterclass Series (10 Parts):

- Hash Tables (Two Sum, Longest Consecutive, Group Anagrams)

- Two Pointers (Collision pointers, fast/slow, sliding window)

- Linked List Operations (Reverse, cycle detection, merge)

- Binary Tree Traversal & Recursion (Inorder/Preorder/Postorder, LCA)

- Dynamic Programming Intro (1D/2D DP, state transitions)

- Binary Search Advanced (Integer/real binary search, answer search)

- Dynamic Programming continued

- -> Backtracking Algorithms (Permutations, combinations, pruning) <- You are here

- Stack & Queue (Monotonic stack, priority queue, deque)

- Greedy & Bit Manipulation (Greedy strategies, bitwise tricks)

What backtracking actually is#

Backtracking is depth-first search over an implicit tree of partial solutions. Each node is a partial answer (the path so far). Each edge is a single decision (add element x, place a queen on column c, write digit d in this cell). When you reach a leaf that satisfies the goal, you record it. When you reach a node that violates a constraint, you stop descending and back up. The “back” in backtracking is the explicit undoing of the last decision so that the algorithm can try a sibling branch with the state it had before.

That last point is what separates backtracking from a plain DFS: a graph traversal does not need to undo anything because each node is visited once, but a search over partial solutions reuses the same path and used[] data structures across millions of branches and would corrupt them without explicit restoration.

When to reach for backtracking:

- The problem says “return all …” (permutations, combinations, subsets, partitions, parenthesizations).

- It is a constraint-satisfaction problem (N-Queens, Sudoku, graph coloring).

- The search space is exponential but most of it can be eliminated by checking partial constraints early.

- You need a single solution (a witness), and no polynomial-time greedy or DP approach applies.

When not to reach for it:

- You only need a count or an optimal value, and the subproblems overlap -> use DP.

- The search space is small enough that you can enumerate states iteratively.

- The problem reduces to BFS shortest path or a known graph algorithm.

The universal template#

Every backtracking solution in this article is a slight variation of one template. Memorize the structure and adapt the four slots.
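A minimal sketch of that template in Python, assuming the slots are passed in as three callables. The wrapper name backtrack_all and the callable signatures are illustrative choices, not something LeetCode prescribes.

```python
from typing import Callable, Iterable, List


def backtrack_all(
    is_goal: Callable[[List[int]], bool],
    choices: Callable[[List[int]], Iterable[int]],
    is_valid: Callable[[List[int], int], bool],
) -> List[List[int]]:
    """Enumerate every path that satisfies is_goal (hypothetical helper)."""
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack() -> None:
        if is_goal(path):
            result.append(path[:])      # save a copy, not a reference
            return
        for choice in choices(path):
            if not is_valid(path, choice):
                continue                # prune before recursing
            path.append(choice)         # choose (update state)
            backtrack()                 # recurse
            path.pop()                  # un-choose (undo state)

    backtrack()
    return result


# Usage: all 0/1 sequences of length 3 with no two adjacent 1s.
no_adjacent_ones = backtrack_all(
    is_goal=lambda p: len(p) == 3,
    choices=lambda p: (0, 1),
    is_valid=lambda p, c: not (p and p[-1] == 1 and c == 1),
)
```

In real solutions the slots are usually inlined rather than passed as callables; the wrapper only makes the structure visible.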


Four things to get right and you are done:

- is_goal: when is path a complete answer?

- choices: what can we do from this node? (Often controlled by a start index, a used[] array, or both.)

- is_valid: what makes a choice illegal in this branch? (Prune as early as you can.)

- update / undo: every mutation made on the way down must be reversed on the way up. This is the bug that catches everyone the first time.

A subtle point about path[:]: result.append(path) would store a reference to the same list you are about to keep mutating. By the time recursion finishes, every entry in result would point at an empty list. Use path[:], path.copy(), or list(path).

Backtracking vs DFS — the one-line distinction#

DFS traverses: it visits each node once, never modifies what it walked over, and the recursion stack alone holds enough state. Backtracking constructs: the same path is mutated all the way down and restored all the way up, so the algorithm can reuse it across exponentially many branches without copying. Backtracking uses DFS as its motion, but adds the choose/un-choose discipline.

LeetCode 46 — Permutations#

Given an array nums of distinct integers, return all possible permutations.

Example: nums = [1,2,3] -> [[1,2,3],[1,3,2],[2,1,3],[2,3,1],[3,1,2],[3,2,1]].

How the template specializes#

- Goal: len(path) == len(nums).

- Choices: any number that hasn’t been used yet.

- Validity: not used[i].

- State: a used[] boolean array, flipped True on the way down and False on the way up.

The recursion tree for [1,2,3] is a perfect 3-2-1 fan-out — three first choices, two second choices, one final element forced — and produces exactly 3! = 6 leaves.

![Backtracking: exploring the permutations tree for [1,2,3]](https://blog-pic-ck.oss-cn-beijing.aliyuncs.com/posts/gifs/leetcode/backtracking-permutations.gif)

Implementation#
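A minimal sketch of the specialization described above, assuming a standalone function named permute (LeetCode wraps the same logic in a Solution class).

```python
from typing import List


def permute(nums: List[int]) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []
    used = [False] * len(nums)

    def backtrack() -> None:
        if len(path) == len(nums):      # goal: every element placed
            result.append(path[:])      # copy before saving
            return
        for i, x in enumerate(nums):
            if used[i]:                 # validity: each index used once
                continue
            used[i] = True              # choose
            path.append(x)
            backtrack()                 # recurse
            path.pop()                  # un-choose, mirroring the lines above
            used[i] = False

    backtrack()
    return result
```

For nums = [1, 2, 3] this yields the six permutations in the order listed in the example.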


Complexity#

There are n! permutations and each one costs O(n) to copy into the result, so the total time is O(n * n!). The recursion stack and the path array each take O(n) auxiliary space (the output is not counted).

Why n!? At depth 0 there are n legal choices, at depth 1 there are n-1, and so on, so the leaf count is n * (n-1) * ... * 1 = n!.

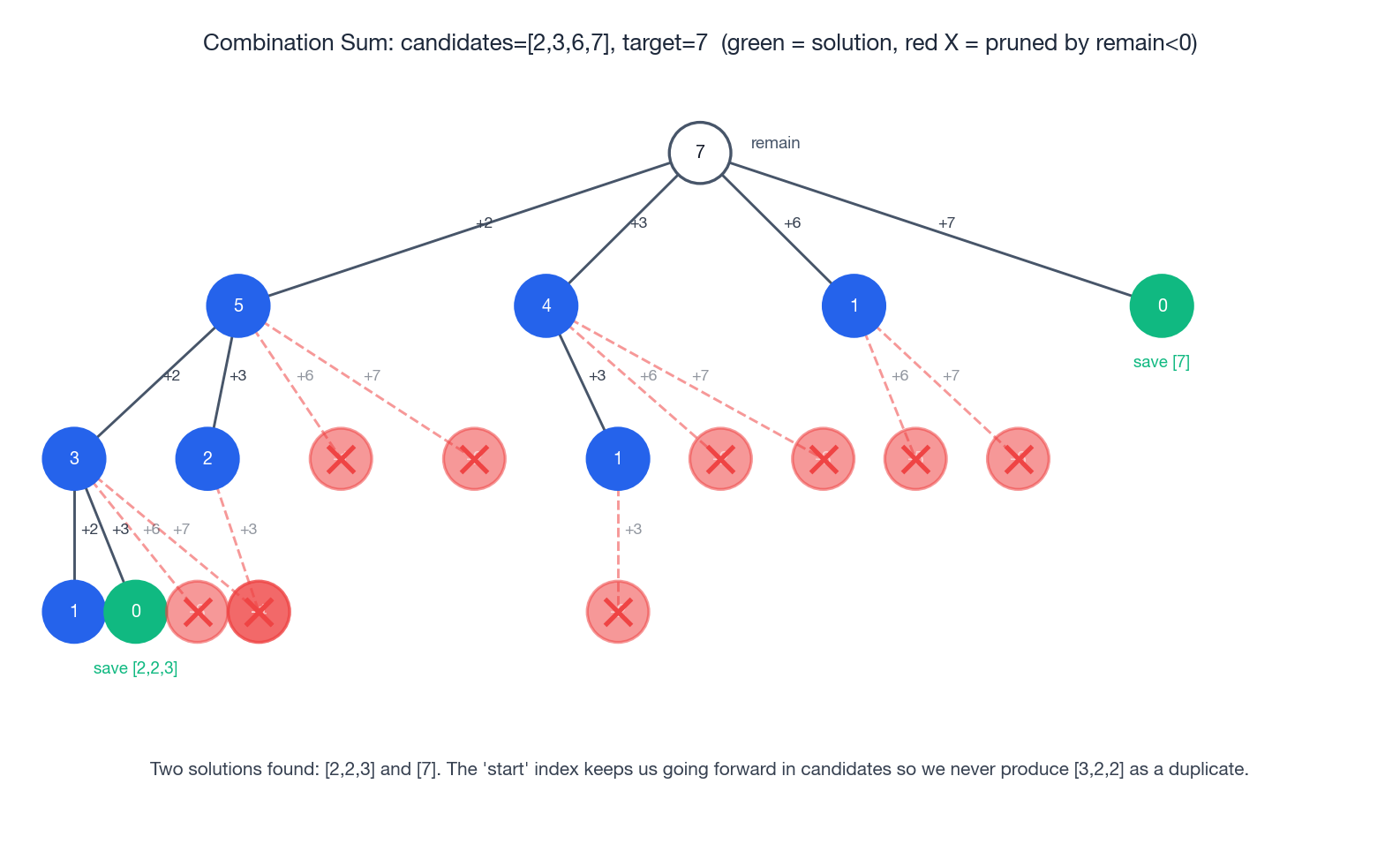

LeetCode 39 — Combinations (Combination Sum)#

Given an array of distinct positive integers candidates and a target target, return all unique combinations whose elements sum to target. The same number may be picked unlimited times.

Example: candidates = [2,3,6,7], target = 7 -> [[2,2,3], [7]].

How the template specializes#

- Goal: remain == 0.

- Constraint pruning: if remain < 0, abandon this branch immediately.

- Avoiding duplicates: this is the hard part. [2,3] and [3,2] are the same combination, since order does not matter. The standard fix is a start index that says “from this branch on, you can only pick candidates at index >= start”. That fixes a canonical ordering on every saved combination, so each one is generated exactly once.

- Reuse: when we recurse we pass i (not i + 1) so the same number can be picked again.

The decision tree for the example above shows pruning in action: every red X is a branch killed by remain < 0. Notice how aggressively the tree shrinks compared to enumerating all 4^k ordered sequences of length k over the four candidates.

Implementation#
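A minimal sketch of the start-index version described above, assuming a standalone function named combination_sum. It checks remain < 0 on entry to each call, so it works on unsorted input.

```python
from typing import List


def combination_sum(candidates: List[int], target: int) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(start: int, remain: int) -> None:
        if remain == 0:                 # goal: exact sum reached
            result.append(path[:])
            return
        if remain < 0:                  # constraint pruning: overshot
            return
        for i in range(start, len(candidates)):
            path.append(candidates[i])                  # choose
            backtrack(i, remain - candidates[i])        # pass i, not i + 1: reuse allowed
            path.pop()                                  # un-choose

    backtrack(0, target)
    return result
```

With candidates = [2, 3, 6, 7] and target = 7 it returns [[2, 2, 3], [7]].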


Sort-then-break optimization#

If you sort candidates first, the inner loop can break (not just continue) as soon as candidates[i] > remain, because every later candidate is even larger. On adversarial inputs this is a huge speedup.
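A sketch of the sorted variant, assuming a function named combination_sum_sorted. It sorts a copy so the caller's list is left untouched, and the break replaces the remain < 0 check because no overshooting candidate is ever chosen.

```python
from typing import List


def combination_sum_sorted(candidates: List[int], target: int) -> List[List[int]]:
    cands = sorted(candidates)          # sorted copy; input is not mutated
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(start: int, remain: int) -> None:
        if remain == 0:
            result.append(path[:])
            return
        for i in range(start, len(cands)):
            if cands[i] > remain:
                break                   # every later candidate is larger still
            path.append(cands[i])
            backtrack(i, remain - cands[i])
            path.pop()

    backtrack(0, target)
    return result
```

The design choice here is to test the bound before choosing, so remain never goes negative and the recursion never enters a doomed call.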


Complexity#

A clean upper bound is hard to write because reuse means paths can be longer than the input. With smallest candidate m, paths have length at most target / m, and at each step there are at most n choices, giving O(n^(target/m)) worst case. Pruning shaves this down dramatically in practice. Space is O(target / m) for the recursion depth.

LeetCode 78 — Subsets#

Given an array nums of unique integers, return all 2^n subsets (the power set).

Example: nums = [1,2,3] -> [[],[1],[2],[1,2],[3],[1,3],[2,3],[1,2,3]].

How the template specializes#

- Goal: there isn’t a single goal — every node of the recursion tree is a valid subset, including the root (empty set). Save on entry to every call.

- Choices: include nums[i] for i >= start, then recurse with start = i + 1.
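A minimal sketch of the save-on-entry formulation, assuming a standalone function named subsets.

```python
from typing import List


def subsets(nums: List[int]) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(start: int) -> None:
        result.append(path[:])          # every node is a valid subset
        for i in range(start, len(nums)):
            path.append(nums[i])        # choose
            backtrack(i + 1)            # later indices only: no reuse, no reorderings
            path.pop()                  # un-choose

    backtrack(0)
    return result
```

For [1, 2, 3] this emits the subsets in depth-first order, [], [1], [1, 2], [1, 2, 3], [1, 3], [2], [2, 3], [3], which is the same set as the example in a different order.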


A second, perhaps more intuitive, formulation is “for each element, include or skip”:
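A sketch of the include-or-skip formulation, assuming a function named subsets_include_skip. Here only the leaves (index past the last element) are saved, because each leaf corresponds to one complete sequence of include/skip decisions.

```python
from typing import List


def subsets_include_skip(nums: List[int]) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(i: int) -> None:
        if i == len(nums):              # a decision has been made for every element
            result.append(path[:])
            return
        path.append(nums[i])            # branch 1: include nums[i]
        backtrack(i + 1)
        path.pop()                      # undo the include
        backtrack(i + 1)                # branch 2: skip nums[i]

    backtrack(0)
    return result
```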


Both run in O(n * 2^n) time and O(n) auxiliary space.

LeetCode 79 — Word Search#

Given an m x n grid of characters and a string word, return True if word can be formed by a sequence of horizontally or vertically adjacent cells, with each cell used at most once.

This is backtracking on a grid. The choice at each step is “which neighbor do I move to next”, the constraint is that the cell must match the next character of the word and not already be on my path, and the goal is matching the final character.

The cleanest way to track “is this cell on my current path” is to mutate the grid in place — write a sentinel like '#' when entering a cell, restore the original character when leaving. That is the choose/un-choose discipline applied to a 2D state.
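A minimal sketch of the in-place marking approach, assuming a function named exist, a non-empty board and word, and that '#' never appears in word (all assumptions of this sketch).

```python
from typing import List


def exist(board: List[List[str]], word: str) -> bool:
    rows, cols = len(board), len(board[0])

    def backtrack(r: int, c: int, k: int) -> bool:
        if board[r][c] != word[k]:      # mismatch, or cell already on the path ('#')
            return False
        if k == len(word) - 1:          # goal: final character matched
            return True
        saved = board[r][c]
        board[r][c] = "#"               # choose: mark the cell as on the current path
        found = False
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and backtrack(nr, nc, k + 1):
                found = True
                break
        board[r][c] = saved             # un-choose: always restore the character
        return found

    return any(backtrack(r, c, 0) for r in range(rows) for c in range(cols))
```

Restoring the cell before returning, even on success, leaves the caller's board unchanged after the search.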


Time is O(m * n * 4^L) where L = len(word) (each path can branch four ways and is at most L long, with m * n starting positions). The early character-mismatch check prunes almost all of that in practice. Space is O(L) for the recursion.

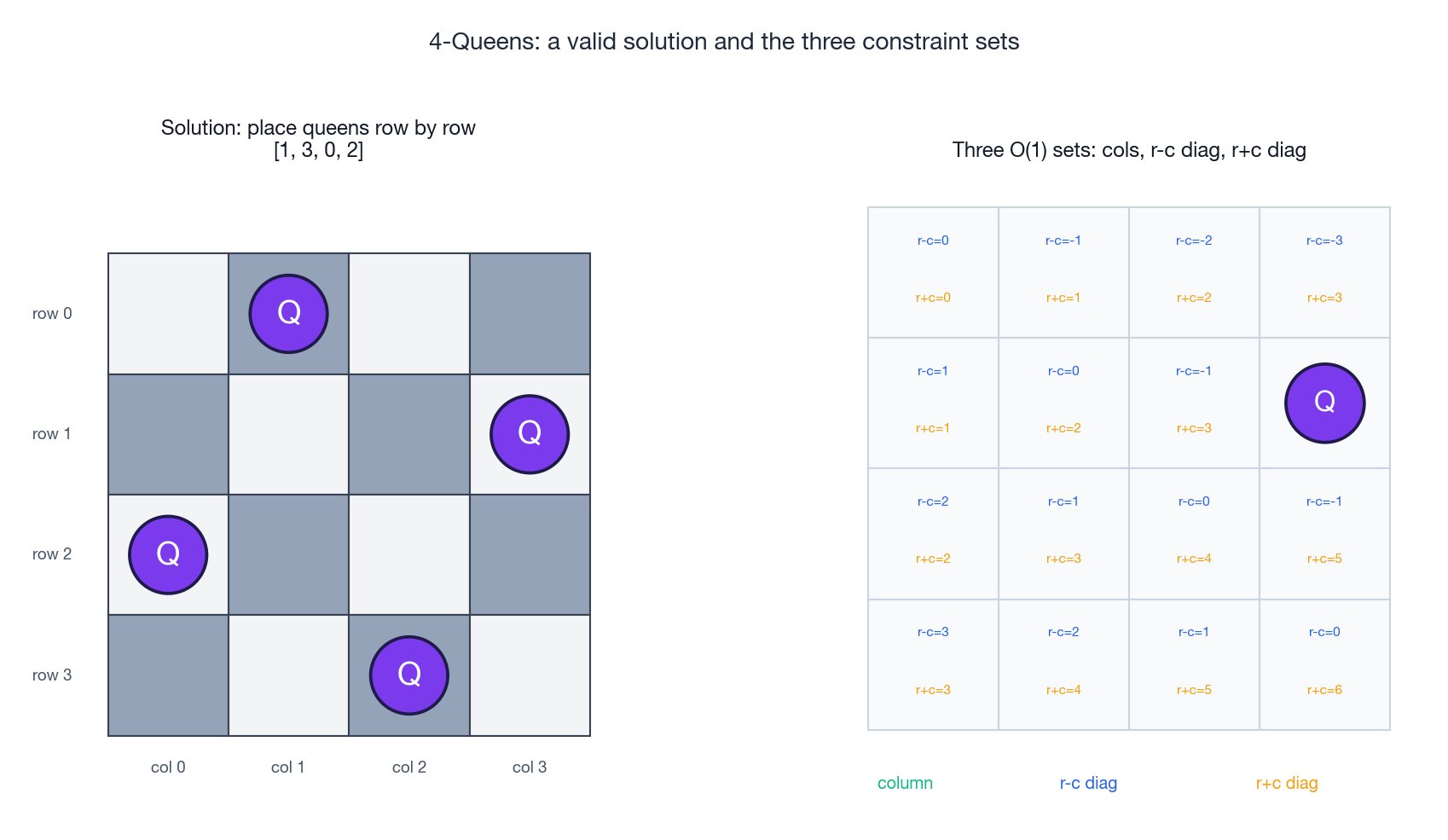

LeetCode 51 — N-Queens#

Place n queens on an n x n board so that no two attack each other (no shared row, column, or diagonal). Return every solution.

The clever piece here is the diagonal trick. For any cell (r, c):

- All cells on its ↘ (main) diagonal share the same value of r - c.

- All cells on its ↙ (anti) diagonal share the same value of r + c.

That means we can encode “is this diagonal taken?” as O(1) set membership instead of scanning the board. Combined with placing one queen per row (which automatically prevents row conflicts), we get three O(1) constraint sets that prune the search to a fraction of the naive n^n brute force.
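A minimal sketch of the row-by-row search with three constraint sets, assuming a function named solve_n_queens and the set names cols, diag, and anti (illustrative names).

```python
from typing import List, Set


def solve_n_queens(n: int) -> List[List[str]]:
    result: List[List[str]] = []
    queens: List[int] = []              # queens[r] = column of the queen in row r
    cols: Set[int] = set()
    diag: Set[int] = set()              # r - c identifies a main diagonal
    anti: Set[int] = set()              # r + c identifies an anti diagonal

    def backtrack(r: int) -> None:
        if r == n:                      # goal: one queen placed in every row
            result.append(["." * c + "Q" + "." * (n - c - 1) for c in queens])
            return
        for c in range(n):
            if c in cols or (r - c) in diag or (r + c) in anti:
                continue                # O(1) attack check
            cols.add(c); diag.add(r - c); anti.add(r + c); queens.append(c)
            backtrack(r + 1)
            queens.pop(); anti.remove(r + c); diag.remove(r - c); cols.remove(c)

    backtrack(0)
    return result
```

Note that the undo line reverses all four mutations made by the choose line; restoring only some of them leaves stale constraints behind.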


The asymptotic complexity is famously hard to write in closed form. A loose upper bound on the number of placements explored is O(n!), because each placed queen removes at least one column from every later row. Diagonal pruning cuts the explored tree well below that in practice, but there is no simple formula for by how much. The number of solutions itself also has no known closed form; the values are tabulated as OEIS A000170.

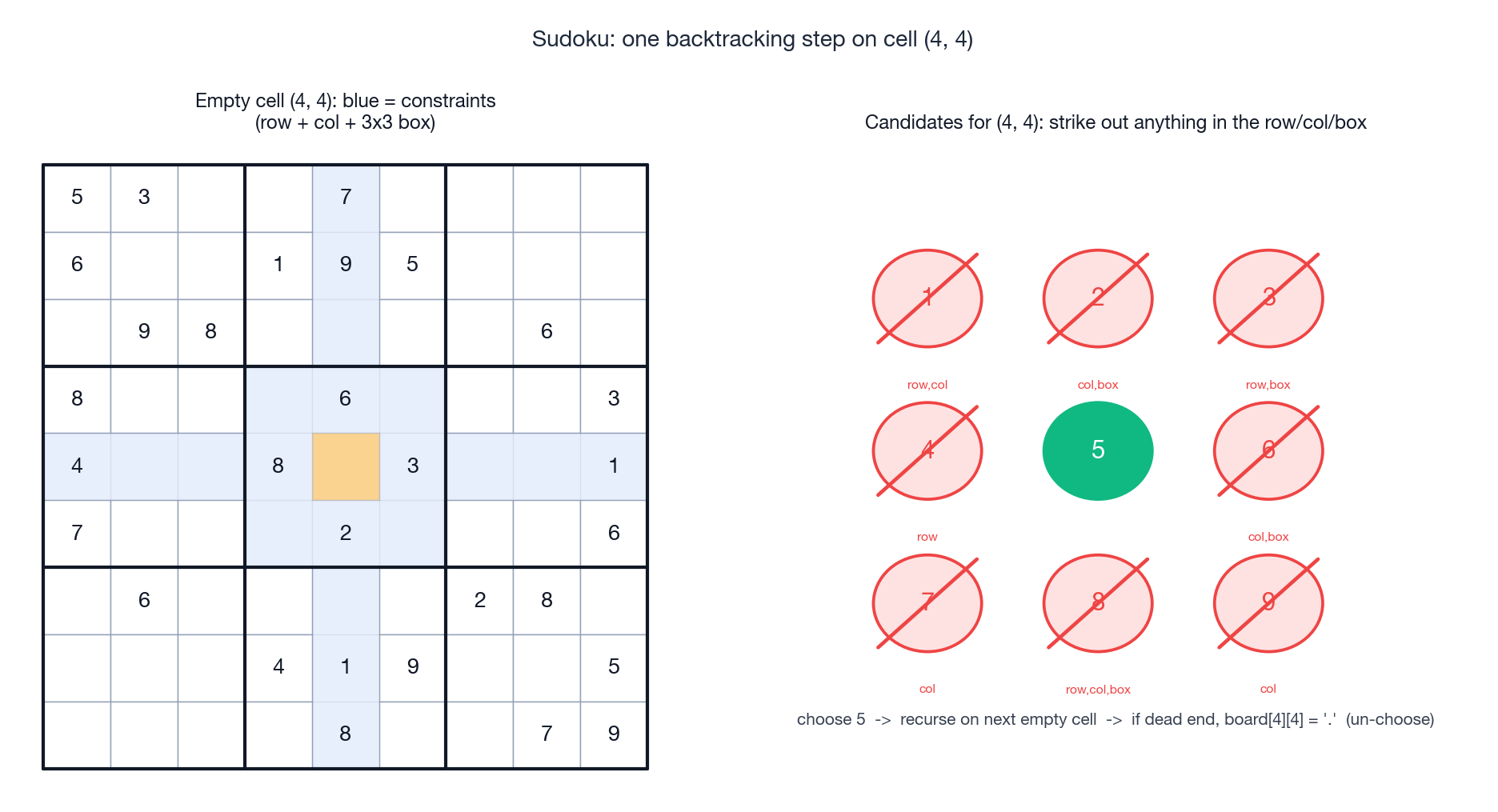

LeetCode 37 — Sudoku Solver#

Solve a Sudoku puzzle in place. The rules: digits 1-9 must appear exactly once per row, column, and 3x3 box.

Sudoku is the cleanest pedagogical example of “backtracking with strong pruning”. Most cells have only one or two legal candidates given the current board, so although the worst-case search tree is astronomical, real puzzles finish in milliseconds.

The pattern is “find the next empty cell, try every legal digit, recurse”. On a dead end, blank the cell and try the next digit; if no digit works, return False so the caller can blank its cell and try the next digit, all the way up.
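A minimal sketch of that pattern, assuming a function named solve_sudoku, a 9x9 board of single-character strings with "." for blanks, and rows/cols/boxes sets for O(1) legality checks (the names are this sketch's choices).

```python
from typing import List, Set, Tuple


def solve_sudoku(board: List[List[str]]) -> bool:
    rows: List[Set[str]] = [set() for _ in range(9)]
    cols: List[Set[str]] = [set() for _ in range(9)]
    boxes: List[Set[str]] = [set() for _ in range(9)]
    empty: List[Tuple[int, int]] = []   # blanks, collected once up front

    for r in range(9):
        for c in range(9):
            d = board[r][c]
            if d == ".":
                empty.append((r, c))
            else:
                rows[r].add(d); cols[c].add(d); boxes[(r // 3) * 3 + c // 3].add(d)

    def backtrack(k: int) -> bool:
        if k == len(empty):             # goal: every blank filled
            return True
        r, c = empty[k]
        b = (r // 3) * 3 + c // 3
        for d in "123456789":
            if d in rows[r] or d in cols[c] or d in boxes[b]:
                continue                # prune illegal digits
            board[r][c] = d             # choose
            rows[r].add(d); cols[c].add(d); boxes[b].add(d)
            if backtrack(k + 1):
                return True             # short-circuit on the first solution
            board[r][c] = "."           # un-choose
            rows[r].remove(d); cols[c].remove(d); boxes[b].remove(d)
        return False                    # dead end: the caller tries its next digit

    return backtrack(0)
```

The board is modified in place; the boolean return value reports whether a solution was found.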


Two things worth pointing out:

- We return True as soon as a solution is found, which short-circuits the whole tree. Backtracking does not have to enumerate everything when the question is “find one”.

- We pre-compute empty[] instead of scanning for the next blank inside backtrack. This turns each step from O(81) into O(1) cell lookup. A further speedup is “MRV”: pick the empty cell with the fewest legal candidates next, which dramatically prunes the branching factor near the leaves.

Worst-case complexity is exponential in the number of empty cells; pragmatically, a 9x9 Sudoku is solved in milliseconds because the constraints prune the tree so aggressively.

Pruning techniques that matter#

Pruning is what separates a brute-force enumeration from a useful algorithm. Five techniques recur:

- Constraint pruning: if not is_valid(choice): continue. This is the bread and butter; do it before recursing, never after.

- Bound pruning: for sum-target problems, abort once remain < 0 or once remain cannot be reached even if you take every remaining element.

- Sort + early break: sort the input; once the current element exceeds the remaining budget, every subsequent one will too, so break instead of continue.

- Duplicate skipping: sort, and inside the loop skip indices where i > start and nums[i] == nums[i-1]. This is the standard fix for “Combination Sum II” and “Subsets II”. “Permutations II” has no start index, so it uses the variant i > 0 and nums[i] == nums[i-1] and not used[i-1].

- Symmetry breaking: for problems with mirror symmetry (e.g. N-Queens with n >= 4), restrict the first-row queen to the left half of the columns and mirror each solution found. For odd n, handle the middle column separately.
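The duplicate-skipping rule from the list above can be sketched on subsets of an input that contains repeats. The function name subsets_with_dup is an assumption of this sketch.

```python
from typing import List


def subsets_with_dup(nums: List[int]) -> List[List[int]]:
    nums = sorted(nums)                 # equal values must be adjacent for the skip to work
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(start: int) -> None:
        result.append(path[:])
        for i in range(start, len(nums)):
            if i > start and nums[i] == nums[i - 1]:
                continue                # equal sibling at this depth: already explored
            path.append(nums[i])
            backtrack(i + 1)
            path.pop()

    backtrack(0)
    return result
```

For [1, 2, 2] this returns [[], [1], [1, 2], [1, 2, 2], [2], [2, 2]]: the second 2 is skipped only as a sibling of the first, never as its child.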

Complexity at a glance#

| Problem | Time | Space (aux) | Why |
|---|---|---|---|
| Permutations | O(n * n!) | O(n) | n! leaves, O(n) to copy each |
| Combination Sum | O(n^(target/m)) worst | O(target/m) | m = min(candidates); pruned heavily |
| Subsets | O(n * 2^n) | O(n) | 2^n subsets, O(n) to copy |
| Word Search | O(m*n * 4^L) | O(L) | start anywhere, branch 4 |
| N-Queens | ~ O(n!) worst | O(n) | row-by-row with diagonal pruning |
| Sudoku | exponential worst | O(81) | strong pruning makes it fast in practice |

Bugs you will hit (and how to fix them)#

Bug 1 — saving a reference instead of a copy.
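A small demonstration of this bug, assuming a hypothetical helper subsets_demo with a save_copy flag that switches between the broken and the fixed behavior.

```python
from typing import List


def subsets_demo(nums: List[int], save_copy: bool) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []

    def backtrack(start: int) -> None:
        # save_copy=False stores the live list itself, which is the bug
        result.append(path[:] if save_copy else path)
        for i in range(start, len(nums)):
            path.append(nums[i])
            backtrack(i + 1)
            path.pop()

    backtrack(0)
    return result


broken = subsets_demo([1, 2], save_copy=False)   # four references to one emptied list
fixed = subsets_demo([1, 2], save_copy=True)     # four independent snapshots
```

Here broken is [[], [], [], []] and fixed is [[], [1], [1, 2], [2]].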


Bug 2 — forgetting to un-choose.
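A small demonstration of what a missing un-choose does, assuming a hypothetical helper permute_demo with an undo flag.

```python
from typing import List


def permute_demo(nums: List[int], undo: bool) -> List[List[int]]:
    result: List[List[int]] = []
    path: List[int] = []
    used = [False] * len(nums)

    def backtrack() -> None:
        if len(path) == len(nums):
            result.append(path[:])
            return
        for i in range(len(nums)):
            if used[i]:
                continue
            used[i] = True
            path.append(nums[i])
            backtrack()
            if undo:                    # undo=False leaves stale state behind
                path.pop()
                used[i] = False

    backtrack()
    return result


without_undo = permute_demo([1, 2, 3], undo=False)
with_undo = permute_demo([1, 2, 3], undo=True)
```

Without the undo, every index stays marked as used after the first leaf, so without_undo contains only [1, 2, 3], while with_undo contains all six permutations.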


Bug 3 — un-choose only restores half the state. If you mutated used[i], the board, or three diagonal sets, you have to undo all of them. Make it a habit: every line below the recursive call mirrors a line above it.

Bug 4 — checking the constraint after appending. Always check is_valid(choice) before path.append(choice). Otherwise pruning saves you nothing.

Bug 5 — wrong loop start in combinations. Combinations need a start parameter; permutations need used[]. Mixing them up either generates duplicates or misses solutions.

Bug 6 — re-scanning instead of indexing in Sudoku. The naive solver finds the next empty cell with a nested loop on every call. Pre-compute the list of empties once.

FAQ#

Q1. Backtracking vs DFS — what is the actual difference? DFS visits each node once and never modifies the structure; backtracking reuses the same path/state across exponentially many branches and must restore that state on the way up. Backtracking uses DFS as its motion.

Q2. Backtracking vs DP — when do I pick which? If you need all solutions, backtracking. If you need a count or an optimum and the subproblems overlap, DP. Sometimes both work and DP is faster (e.g. counting paths instead of listing them).

Q3. Why path[:] and not path? path is a reference to the list you keep mutating. Without the copy, result ends up as a list of references all pointing at the same eventually-empty list.

Q4. How do I avoid duplicates when the input has duplicates? Sort the input, then in the loop write if i > start and nums[i] == nums[i-1]: continue. This skips equal siblings at the same depth without skipping equal elements that appear at different depths.

Q5. Combinations vs permutations — what changes in the code? Permutations track used[] and let the loop start at 0 each call; combinations use a start index that fixes a canonical order on the chosen elements.

Q6. My solution is correct but TLEs. Where to look? First, are you pruning before recursing, not after? Second, are your validity checks O(1) (sets/arrays) instead of O(n) (in path)? Third, can you sort the input and break instead of continue?

Q7. Can I make backtracking iterative? Yes — replace the call stack with an explicit stack of (path, state) pairs. The recursive form is almost always cleaner; the iterative form helps only when you are hitting a recursion-depth limit.

Q8. How do I find just one solution efficiently? Have the recursive function return a bool (or the solution itself). Return True as soon as a goal is reached, and propagate that up the call chain so the caller stops iterating.

Q9. Sudoku takes forever on hard puzzles. What should I add? Use MRV (minimum remaining values, also called the most-constrained-variable heuristic): at each step, fill the empty cell with the fewest legal candidates. Combined with the rows/cols/boxes sets above, this cuts the branching factor near the bottom of the tree dramatically.

Q10. What is the right order to learn these? Permutations -> Subsets -> Combination Sum -> Word Search -> N-Queens -> Sudoku. Each one adds exactly one new wrinkle (used[] -> save-every-node -> start index + reuse -> grid traversal -> multi-set constraints -> early termination + heuristics).

Summary#

Backtracking is a single rhythm (choose, recurse, un-choose) wrapped around a constraint check. The whole skill is recognizing the four slots in the template (goal, choices, validity, state mutation) and filling them in for the problem in front of you. Permutations show off the used[] pattern. Subsets show that “every node is a solution”. Combinations introduce the start index for canonical ordering. Word Search transplants the template onto a 2D grid by mutating cells in place. N-Queens demonstrates the diagonal-set encoding. Sudoku shows how aggressive constraint pruning turns a raw search space of up to 9^81 (about 2 x 10^77) assignments into something that finishes in milliseconds.

If you internalize one thing from this article, make it this: every line of mutation on the way down the tree must be matched by an undoing line on the way up. Get that invariant right and the rest is just bookkeeping.
