Essence of Linear Algebra (1): The Essence of Vectors — More Than Just Arrows

Vectors are everywhere -- from GPS navigation to Netflix recommendations. This chapter builds your intuition from arrows in space to abstract vector spaces, covering addition, scalar multiplication, inner products, norms, and why linearity matters.

Why Vectors, and Why Care?#

A physicist talks about a force. A data scientist talks about a feature. A game programmer talks about a velocity. A quantum theorist talks about a state. Different fields, different terms — but the same underlying object: a vector.

That’s no coincidence. A vector is the simplest mathematical object flexible enough to describe anything you can add together and scale. Once you see this pattern, you’ll see it everywhere.

Most introductory courses give you two answers to “what is a vector?”:

- “An arrow in space, with a length and a direction.”

- “An ordered list of numbers.”

Both are correct but incomplete. The full story is that a vector is whatever lives in a vector space — a set of objects that work well with addition and scaling. Arrows and number lists are the most common examples; functions, signals, polynomials, and quantum states are equally valid.

This chapter moves from the concrete to the abstract:

- Geometric — arrows you can draw.

- Numerical — columns of numbers you can compute with.

- Structural — the inner product, which fuses the two.

- Axiomatic — the rules that make the whole thing work for any such object.

What You Will Learn#

- How to think about vectors geometrically (arrows) and numerically (lists)

- The three operations: addition, scalar multiplication, subtraction

- The dot product — algebra and geometry agreeing on what “alignment” means

- Several ways to measure size (norms), and why we need more than one

- The axiomatic vector-space definition — why functions can be vectors too

Prerequisites#

- High-school algebra

- Coordinate geometry on the $x$–$y$ plane

- Pythagoras’ theorem

The Geometric Picture: Vectors as Arrows#

From Walking Directions to a Vector#

Stand at the centre of a park (call it the origin). A friend says: “Walk 4 steps east, then 3 steps north.”

That instruction is a vector:

$$\vec{v} = \begin{pmatrix} 4 \\ 3 \end{pmatrix},$$

with $4$ pointing east (the $x$ component) and $3$ pointing north (the $y$ component).

Its length follows from Pythagoras’ theorem, and its direction is the angle measured from east:

$$\|\vec{v}\| = \sqrt{4^2 + 3^2} = 5.$$

$$\theta = \arctan\!\left(\frac{3}{4}\right) \approx 37^\circ.$$

So a vector packages two pieces of geometric information, length and direction, into a single object.
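The length and direction of the walking example can be checked in a few lines. This is a minimal sketch using only the Python standard library; the function names `magnitude` and `direction_degrees` are illustrative choices, not part of any library.

```python
import math

def magnitude(v):
    """Euclidean length of a 2D vector given as an (x, y) tuple."""
    return math.hypot(v[0], v[1])

def direction_degrees(v):
    """Angle from the positive x-axis (east), counter-clockwise, in degrees."""
    return math.degrees(math.atan2(v[1], v[0]))

v = (4.0, 3.0)  # 4 steps east, 3 steps north
print(magnitude(v))                    # 5.0
print(round(direction_degrees(v), 2))  # 36.87
```

Using `atan2` rather than `atan(y / x)` avoids a division by zero when the vector points straight north or south.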

Translation Invariance: A Vector Has No Home#

Here is the first idea that surprises beginners: a vector does not care where it starts. Whether you draw “4 east, 3 north” from the park centre or from the northeast corner, it is the same vector. Direction and length are the only invariants.

Velocity is the clearest example. A ship sailing east at 20 knots has the same velocity vector whether it’s in the middle of the Pacific or hugging the coast of Spain. The position changes every minute; the velocity vector does not.

This is why, when drawing vectors, we can anchor them at the origin — a convenience, not a constraint.

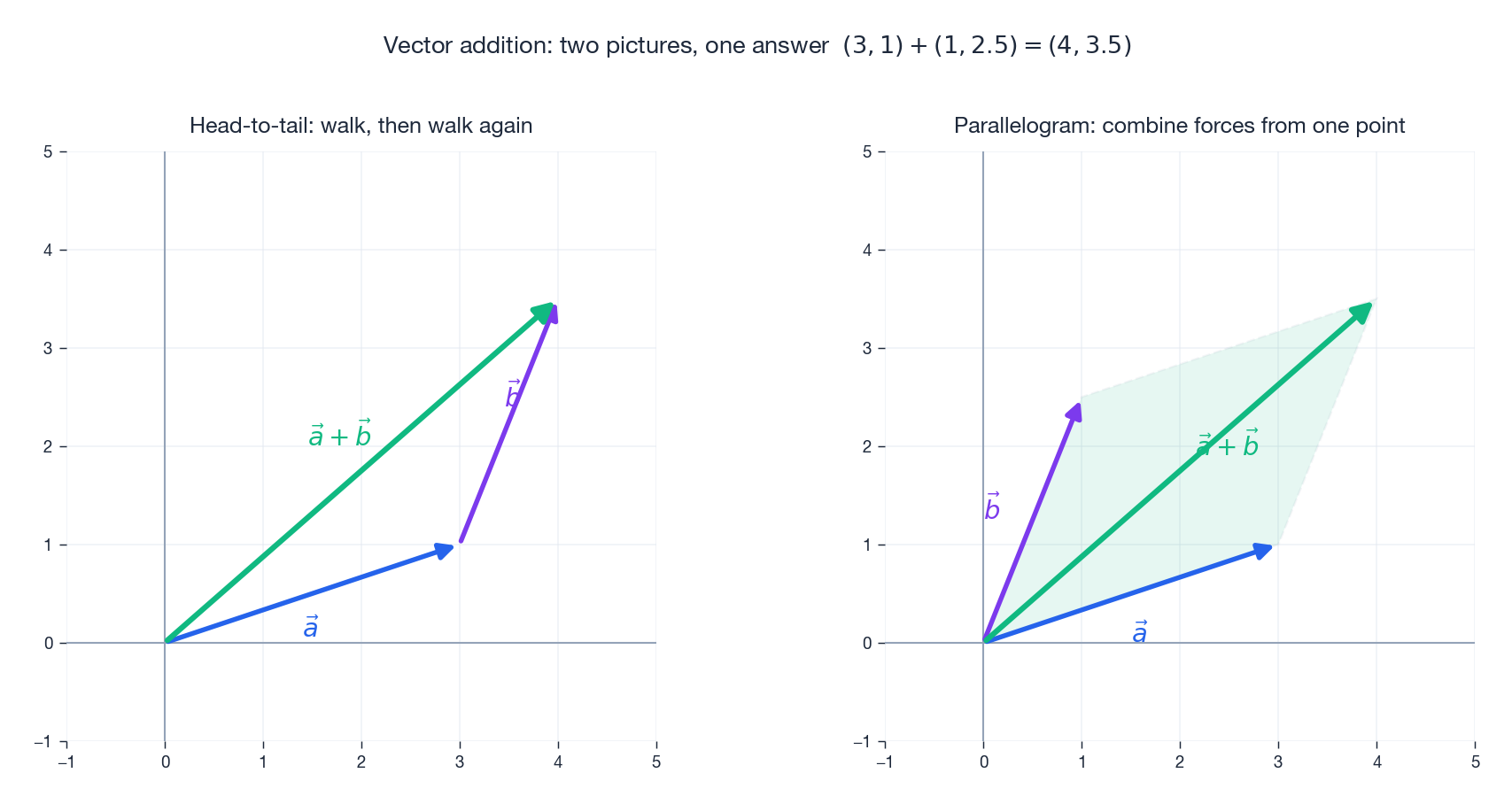

Vector Addition: Three Pictures of the Same Thing#

Given two vectors $\vec{a}$ and $\vec{b}$, the sum $\vec{a} + \vec{b}$ can be visualised in three equivalent ways.

Head-to-tail. Walk along $\vec{a}$, then walk along $\vec{b}$. The arrow from your starting point to your ending point is the sum. This is how we naturally think about successive displacements.

Parallelogram. Draw both vectors from the same point. Complete the parallelogram. The diagonal from that shared point is the sum. This is how physicists combine two forces acting at the same location.

Component-wise. Add the matching components:

$$\begin{pmatrix} 3 \\ 1 \end{pmatrix} + \begin{pmatrix} 1 \\ 2.5 \end{pmatrix} = \begin{pmatrix} 4 \\ 3.5 \end{pmatrix}.$$

Use the geometric pictures to understand; use components to compute. They are two faces of one coin.

Scalar Multiplication: Stretch, Shrink, Reverse#

Multiplying a vector $\vec{v}$ by a number (a scalar) $c$ rescales it along its own line. The behaviour splits into four regimes:

- $c > 1$: the arrow stretches in the same direction.

- $0 < c < 1$: it shrinks in the same direction.

- $c = 0$: it collapses to the zero vector.

- $c < 0$: it reverses direction and rescales by $|c|$.

The set of all scalar multiples of a single non-zero vector traces out a line through the origin. This is your first taste of a span, which we’ll explore in Chapter 2.


A driving analogy makes this stick. If $\vec{v}$ is your current velocity, then $2\vec{v}$ is doubling your speed, $0.5\vec{v}$ is cruising at half-speed, and $-\vec{v}$ is a U-turn at the same speed.
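Addition and scalar multiplication are short enough to write by hand. The sketch below assumes vectors are stored as plain tuples of floats; `add` and `scale` are illustrative helper names.

```python
def add(a, b):
    """Component-wise sum of two equal-length vectors (tuples of floats)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return tuple(x + y for x, y in zip(a, b))

def scale(c, v):
    """Multiply every component of v by the scalar c."""
    return tuple(c * x for x in v)

a, b = (3.0, 1.0), (1.0, 2.5)
print(add(a, b))  # (4.0, 3.5)
print(add(b, a))  # (4.0, 3.5), the order does not matter

v = (4.0, 3.0)
for c in (2.0, 0.5, 0.0, -1.0):  # stretch, shrink, collapse, reverse
    print(c, scale(c, v))
```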

Vector Subtraction: “From Here to There”#

If points $A$ and $B$ have position vectors $\vec{a}$ and $\vec{b}$, then subtracting gives the displacement between them:

$$\vec{b} - \vec{a} \;=\; \text{the vector that takes you from } A \text{ to } B.$$

Game engines use this constantly: the displacement from a player to a target, the direction a bullet should travel, the offset between two waypoints. All are subtractions of position vectors.
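As a small illustration of the game-engine use case, the sketch below computes the offset from a player to a target and the unit direction along it. The positions and the helper names `subtract` and `normalise` are made up for this example.

```python
import math

def subtract(b, a):
    """Displacement from point a to point b, i.e. b - a."""
    return tuple(y - x for x, y in zip(a, b))

def normalise(v):
    """Unit vector in the direction of v; the zero vector has no direction."""
    length = math.sqrt(sum(x * x for x in v))
    if length == 0.0:
        raise ValueError("cannot normalise the zero vector")
    return tuple(x / length for x in v)

player = (1.0, 2.0)  # hypothetical positions
target = (4.0, 6.0)

offset = subtract(target, player)
print(offset)             # (3.0, 4.0)
print(normalise(offset))  # (0.6, 0.8)
```

The explicit zero-length check matters in practice: a target sitting exactly on the player has no direction to aim in.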

The Numerical Picture: Vectors as Data#

Beyond 2D and 3D#

Nothing about the component picture is specific to two or three dimensions. A vector with $n$ components is written

$$\vec{v} = \begin{pmatrix} v_1 \\ v_2 \\ \vdots \\ v_n \end{pmatrix} \in \mathbb{R}^n.$$

You cannot picture a 100-dimensional arrow, but every operation (addition, scaling, dot products, norms) carries over unchanged. This is the bridge from “geometry you can see” to “geometry you can only compute.”

The Same Object, Three Viewpoints#

Here are the same five numbers, $(25.3,\,65.0,\,1013,\,15.2,\,45)$, viewed from three different traditions. They are all the same vector.

Physics. An arrow in physical space, e.g. a velocity or a force.

Computer science. A feature vector: a row of measurements characterising one example — here, weather conditions at one moment (temperature, humidity, pressure, wind speed, cloud cover).

Mathematics. A column of real numbers, an element of $\mathbb{R}^5$.


Once you accept the three viewpoints as one object, very different things become the same calculation. Comparing two users’ movie tastes, or comparing two images for similarity, or comparing two molecular fingerprints — all reduce to the same dot-product computation we are about to define.


A famous NLP example takes this even further: words become 300-dimensional vectors trained so that $\text{king} - \text{man} + \text{woman} \approx \text{queen}$. Vector arithmetic captures meaning.

The Inner Product: Where Geometry Meets Algebra#

The inner product (or dot product in $\mathbb{R}^n$) is the deepest single operation in this chapter. It hides a small miracle: two definitions that look completely unrelated turn out to give the same number.

Two Definitions, One Operation#

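Algebraic. Multiply matching components and add:

$$\vec{a} \cdot \vec{b} \;=\; \sum_{i=1}^{n} a_i b_i \;=\; a_1 b_1 + a_2 b_2 + \cdots + a_n b_n.$$

Geometric. Multiply the two lengths and the cosine of the angle between the vectors:

$$\vec{a} \cdot \vec{b} \;=\; \|\vec{a}\|\,\|\vec{b}\|\,\cos\theta,$$

where $\theta$ is the angle between $\vec{a}$ and $\vec{b}$.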

The algebraic form tells you how to compute. The geometric form tells you what it means: the dot product measures how much two vectors agree in direction. Same direction $\Rightarrow$ large positive number. Perpendicular $\Rightarrow$ exactly zero. Opposite directions $\Rightarrow$ large negative number.
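The two definitions can be tied together in code: compute the dot product algebraically, then divide by the lengths to recover $\cos\theta$. This is a minimal sketch with illustrative helper names (`dot`, `norm`, `cosine_similarity`) and inputs chosen so the arithmetic is exact.

```python
import math

def dot(a, b):
    """Algebraic dot product: sum of products of matching components."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    return sum(x * y for x, y in zip(a, b))

def norm(v):
    """Euclidean length, defined through the dot product."""
    return math.sqrt(dot(v, v))

def cosine_similarity(a, b):
    """cos(theta) from the geometric form; undefined for zero vectors."""
    return dot(a, b) / (norm(a) * norm(b))

a = (4.0, 3.0)
print(cosine_similarity(a, (8.0, 6.0)))    # 1.0  (same direction)
print(cosine_similarity(a, (-3.0, 4.0)))   # 0.0  (perpendicular)
print(cosine_similarity(a, (-4.0, -3.0)))  # -1.0 (opposite direction)
```

Comparing two feature vectors, such as two users' ratings, is the same call with longer tuples.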

Projection: The Best Approximation#

The projection of $\vec{a}$ onto a non-zero vector $\vec{b}$ is the part of $\vec{a}$ that lies along $\vec{b}$:

$$\operatorname{proj}_{\vec{b}}\vec{a} \;=\; \frac{\vec{a}\cdot\vec{b}}{\vec{b}\cdot\vec{b}}\,\vec{b}.$$

This is the closest point on the line through $\vec{b}$ to the tip of $\vec{a}$. That is not a coincidence: it is the same idea behind least-squares regression, principal components, signal filtering, and a dozen other “best linear approximation” problems we will meet again and again.
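A quick way to convince yourself that the projection is the closest point is to check that what is left over, $\vec{a} - \operatorname{proj}_{\vec{b}}\vec{a}$, is perpendicular to $\vec{b}$. The sketch below does that for one hand-picked pair; `project` is an illustrative name.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def project(a, b):
    """Orthogonal projection of a onto the line spanned by a non-zero b."""
    bb = dot(b, b)
    if bb == 0.0:
        raise ValueError("cannot project onto the zero vector")
    coeff = dot(a, b) / bb
    return tuple(coeff * x for x in b)

a = (3.0, 4.0)
b = (2.0, 0.0)

p = project(a, b)
residual = tuple(x - y for x, y in zip(a, p))

print(p)                 # (3.0, 0.0)
print(residual)          # (0.0, 4.0)
print(dot(residual, b))  # 0.0, the leftover is orthogonal to b
```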


Orthogonality: Independence, Made Geometric#

When $\vec{a} \cdot \vec{b} = 0$, the two vectors are orthogonal (perpendicular), written $\vec{a} \perp \vec{b}$. Geometrically that means a 90-degree angle. Why is that worth a name?

- Geometry: orthogonal directions don’t interfere — moving along one does not change the projection onto the other.

- Statistics: for mean-zero random variables, covariance plays the role of the inner product, so uncorrelated variables (covariance $= 0$) correspond to orthogonal vectors.

- Computation: an orthogonal basis decouples a problem into independent one-dimensional pieces. Almost every “this is fast” trick in numerical linear algebra rides on orthogonality.

The Cauchy–Schwarz Inequality#

For any two vectors,

$$|\vec{a}\cdot\vec{b}| \;\leq\; \|\vec{a}\|\,\|\vec{b}\|,$$

with equality exactly when one vector is a scalar multiple of the other. In words: the absolute value of the dot product can never exceed the product of the lengths. It is the algebraic shadow of $|\cos\theta| \leq 1$, and it is one of the most-used inequalities in analysis.

Norms: Several Ways to Measure Size#

The familiar “length”$\sqrt{x_1^2+\cdots+x_n^2}$ is one valid notion of size for a vector. It is the $L^2$ norm. But it is not the only one, and which one you choose changes the geometry of your problem.

The Three Most Common Norms#

| Norm | Formula | Intuition |
|---|---|---|
| $L^2$ (Euclidean) | $\sqrt{\sum_i x_i^2}$ | Straight-line distance |
| $L^1$ (Manhattan) | $\sum_i \lvert x_i \rvert$ | City-block distance, walking only along streets |
| $L^\infty$ (max) | $\max_i \lvert x_i \rvert$ | Worst-case component |

Why three? Because different problems care about different things:

- $L^2$ is smooth, differentiable everywhere away from the origin, and rotation-invariant. It is the safe default and the natural partner of the dot product.

- $L^1$ has corners. That non-smoothness is a feature: it pushes optimisers towards solutions where many coordinates are exactly zero. This is the secret behind LASSO regression and compressed sensing.

- $L^\infty$ is what you reach for when you care about the worst component, not the average one: robust control, error bounds, max-error guarantees.
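The three formulas from the table translate directly into code. This is a minimal sketch for non-empty tuples of floats; the function names are illustrative.

```python
import math

def norm_l1(v):
    """Manhattan norm: sum of absolute components."""
    return sum(abs(x) for x in v)

def norm_l2(v):
    """Euclidean norm: square root of the sum of squares."""
    return math.sqrt(sum(x * x for x in v))

def norm_linf(v):
    """Max norm: largest absolute component (v must be non-empty)."""
    return max(abs(x) for x in v)

v = (3.0, -4.0, 0.0)
print(norm_l1(v))    # 7.0
print(norm_l2(v))    # 5.0
print(norm_linf(v))  # 4.0
```

For any vector the three values are ordered as $\|\vec{v}\|_\infty \le \|\vec{v}\|_2 \le \|\vec{v}\|_1$, which the example (4, 5, 7) illustrates.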

Unit Balls: The Geometric Fingerprint of a Norm#

A clean way to see a norm is to draw its unit ball: the set of all vectors whose length in that norm is at most 1. Three norms, three very different shapes in the plane: a circle for $L^2$, a diamond for $L^1$, and an axis-aligned square for $L^\infty$.

The diamond’s sharp corners on the axes are exactly why $L^1$ produces sparse solutions: when you minimise an objective constrained to that diamond, the optimum tends to land on a corner, which means several coordinates are zero.

A reassuring theorem — norm equivalence — says that in finite dimensions, all of these norms are interchangeable in a qualitative sense: a sequence converges in one if and only if it converges in all of them. The numerical values differ; the topology does not.

The Abstract Picture: Vector Spaces from Axioms#

So far, “vector” has meant “an arrow” or “a column of numbers”. But mathematicians noticed something striking: many wildly different objects — arrows, polynomials, continuous functions, random variables, quantum states — all obey the same rules. So they distilled those rules into a definition.

The Definition#

A vector space $V$ over a field $\mathbb{F}$ (usually $\mathbb{R}$ or $\mathbb{C}$) is a set with two operations,

- vector addition, $\vec{u} + \vec{v} \in V$, and

- scalar multiplication, $c\,\vec{v} \in V$,

satisfying eight further axioms, ten if you count the two closure conditions above: commutativity and associativity of addition, existence of a zero vector and of additive inverses, two distributive laws, associativity of scalar multiplication, and a multiplicative identity. The axioms are the small print; the spirit is one sentence:

Anything you can add together and scale, while obeying the usual algebraic rules, is a vector.

Surprising Vector Spaces#

Continuous functions. All continuous functions on $[0,1]$ form a vector space: add them pointwise, $(f+g)(x) = f(x) + g(x)$; scale them pointwise, $(cf)(x) = c\,f(x)$; the zero vector is the constant function $0$. This space is infinite-dimensional: you can think of a function as a vector with one coordinate for every $x \in [0,1]$.

Polynomials. Polynomials of degree at most $n$ form an $(n+1)$-dimensional vector space, with the natural basis $\{1, x, x^2, \ldots, x^n\}$.

Matrices. The set of $m \times n$ matrices forms an $mn$-dimensional vector space; you add matrices entrywise and scale them entrywise.

Quantum states. A quantum state is a unit vector in a complex Hilbert space. The famous “superposition” of states is just vector addition wearing a tuxedo.
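The function and polynomial examples can be made concrete in a few lines. In this sketch, functions are ordinary Python callables and polynomials are coefficient lists in the basis $\{1, x, x^2, \ldots\}$; all helper names are illustrative.

```python
def add_functions(f, g):
    """Pointwise sum: (f + g)(x) = f(x) + g(x)."""
    return lambda x: f(x) + g(x)

def scale_function(c, f):
    """Pointwise scaling: (c f)(x) = c * f(x)."""
    return lambda x: c * f(x)

def add_polynomials(p, q):
    """Add polynomials stored as coefficient lists [a0, a1, ..., an]."""
    n = max(len(p), len(q))
    p = list(p) + [0.0] * (n - len(p))  # pad the shorter list with zeros
    q = list(q) + [0.0] * (n - len(q))
    return [a + b for a, b in zip(p, q)]

def square(x):
    return x * x

def double(x):
    return 2.0 * x

h = add_functions(square, scale_function(3.0, double))  # h(x) = x^2 + 6x
print(h(0.5))  # 3.25

# (1 + 2x) + (3x + 4x^2) = 1 + 5x + 4x^2
print(add_polynomials([1.0, 2.0], [0.0, 3.0, 4.0]))  # [1.0, 5.0, 4.0]
```

Adding polynomials is exactly adding their coefficient vectors, which is why the space of polynomials of degree at most $n$ behaves like $\mathbb{R}^{n+1}$.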

Why the Abstraction Pays Off#

Once you prove a theorem at the level of axioms, it applies everywhere the axioms hold:

- Cauchy–Schwarz works for column vectors, function spaces, and random variables (where it becomes the covariance inequality $|\operatorname{Cov}(X,Y)| \le \sigma_X \sigma_Y$). One theorem, three subjects.

- Fourier analysis is “decompose a function as a linear combination of orthogonal basis vectors”, exactly what we will do with column vectors in Chapter 7.

- Quantum mechanics is “physics, but in an infinite-dimensional inner-product space.”

This is the deepest pay-off of linear algebra: learn the structure once, apply it forever.

Vectors in the Wild#

Game Physics in Five Lines#

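A minimal sketch of the kind of update loop this section describes, assuming semi-implicit Euler integration (velocity first, then position), constant gravity, and a fixed 60 Hz time step. The function name `step` and the starting values are made up for illustration.

```python
def step(position, velocity, acceleration, dt):
    """One semi-implicit Euler step: update velocity first, then position."""
    velocity = tuple(v + a * dt for v, a in zip(velocity, acceleration))
    position = tuple(p + v * dt for p, v in zip(position, velocity))
    return position, velocity

gravity = (0.0, -9.81)  # m/s^2, assumed constant
dt = 1.0 / 60.0         # one frame at an assumed 60 Hz

position = (0.0, 10.0)  # hypothetical starting state
velocity = (5.0, 0.0)

for _ in range(60):     # simulate one second of motion
    position, velocity = step(position, velocity, gravity, dt)

print(position, velocity)
```

Both lines inside `step` are nothing more than "scale a vector by `dt`, then add it to another vector".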

That is Newtonian mechanics, discretised: acceleration updates velocity, and velocity updates position. Many game physics engines are built around an update of this kind, repeated every frame, typically 60 times per second.

Colour Mixing Is Vector Addition#


RGB colours live in $\mathbb{R}^3$. Mixing them is literally vector arithmetic.
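As a sketch of what that looks like, the function below blends two colours as a weighted sum of their RGB vectors. It assumes simple linear interpolation on raw 0 to 255 channel values and ignores gamma correction; `mix` is an illustrative name.

```python
def mix(c1, c2, t=0.5):
    """Blend two RGB colours: (1 - t) * c1 + t * c2, component-wise."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    return tuple((1.0 - t) * a + t * b for a, b in zip(c1, c2))

red = (255.0, 0.0, 0.0)
blue = (0.0, 0.0, 255.0)

print(mix(red, blue))        # (127.5, 0.0, 127.5), an even purple
print(mix(red, blue, 0.25))  # (191.25, 0.0, 63.75), mostly red
```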

GPS, Reduced to Vector Equations#

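Each satellite $i$ sits at a known position $\vec{s}_i$ and reports a distance $d_i$ to the receiver, so the unknown receiver position $\vec{x}$ must satisfy

$$\|\vec{x} - \vec{s}_i\| \;=\; d_i.$$

In the plane, two such equations leave you with two intersection points; a third pins the answer down. In 3D, you need four satellites (a fourth for clock-bias correction). That is it: billion-dollar infrastructure, expressed as vector equations.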
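For the planar case, the three distance equations can be solved directly. Squaring each one and subtracting the first from the other two cancels the $\|\vec{x}\|^2$ term and leaves a 2x2 linear system. The sketch below assumes exact, noise-free distances and no clock bias; the anchor positions and the name `trilaterate_2d` are made up for illustration.

```python
import math

def trilaterate_2d(s1, s2, s3, d1, d2, d3):
    """Solve |x - s_i| = d_i for x in the plane from three anchors."""
    def sq(v):
        return v[0] * v[0] + v[1] * v[1]

    # Subtracting the squared first equation from the others removes |x|^2
    # and leaves two linear equations A x = b.
    a11, a12 = 2.0 * (s2[0] - s1[0]), 2.0 * (s2[1] - s1[1])
    a21, a22 = 2.0 * (s3[0] - s1[0]), 2.0 * (s3[1] - s1[1])
    b1 = sq(s2) - sq(s1) - d2 * d2 + d1 * d1
    b2 = sq(s3) - sq(s1) - d3 * d3 + d1 * d1

    det = a11 * a22 - a12 * a21
    if det == 0.0:
        raise ValueError("anchors are collinear; position is not unique")
    # Cramer's rule for the 2x2 system.
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det)

anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]  # hypothetical positions
true_x = (3.0, 4.0)
dists = [math.dist(true_x, s) for s in anchors]

print(trilaterate_2d(*anchors, *dists))  # close to (3.0, 4.0)
```

Real receivers work with noisy measurements and an unknown clock offset, so they solve a least-squares version of the same equations rather than this exact system.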

Common Pitfalls#

“A vector must start at the origin.” No. Vectors are translation-invariant; we draw them from the origin only for convenience.

“A vector is just a list of numbers.” Half-true. A list of numbers is one representation of a vector. Continuous functions, polynomials, and quantum states are vectors that cannot be written as finite columns of numbers.

“Dot product and cross product are similar.” They are not. The dot product takes two vectors and returns a scalar; the cross product (which only exists in $\mathbb{R}^3$, with a partial cousin in $\mathbb{R}^7$) takes two vectors and returns a vector. Same kind of inputs, totally different outputs.

“The zero vector points in some direction.” No. The zero vector is the unique vector with no well-defined direction, which is exactly why formulas like $\vec{v}/\|\vec{v}\|$ need a special case.

Summary#

| Concept | Key Idea |
|---|---|
| Vector | An object with magnitude and direction or, more generally, an element of a vector space |
| Addition | Head-to-tail, parallelogram, or component-wise (all equivalent) |
| Scalar multiplication | Stretch, shrink, or reverse along the same line |
| Inner product | Measures alignment; algebra and geometry agree |
| Projection | The best one-dimensional approximation; prototype of least-squares |
| Norm | Measures size; different norms reflect different priorities |
| Vector space | Any set where addition and scaling obey the axioms, including spaces of functions |

The Big Takeaway#

A vector is not an arrow, and it is not a column of numbers. It is a pattern — a small, sharp set of rules for “things that add and scale”. Once you start looking, you will find that pattern in images, signals, quantum states, financial portfolios, neural-network parameters, and probability distributions. Linear algebra is the study of that pattern.

Numerical Stability: When Vector Math Breaks in Code#

I want to show one thing that quietly bites people: the textbook formula $\cos\theta = \vec{u}\cdot\vec{v} / (\|\vec{u}\|\,\|\vec{v}\|)$ is not a safe way to compute the angle between two vectors. Try it on a pair of nearly-parallel unit vectors:

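A minimal sketch of that experiment, with input values chosen here to match the description in the next paragraph. It uses only the standard library, works in 2D, and the function names `angle_acos` and `angle_atan2` are illustrative.

```python
import math

def angle_acos(u, v):
    """Textbook formula: arccos of the normalised dot product (2D)."""
    dot = u[0] * v[0] + u[1] * v[1]
    norm_u = math.sqrt(u[0] * u[0] + u[1] * u[1])
    norm_v = math.sqrt(v[0] * v[0] + v[1] * v[1])
    c = dot / (norm_u * norm_v)
    return math.acos(max(-1.0, min(1.0, c)))  # clamp guards against |c| > 1

def angle_atan2(u, v):
    """Alternative: atan2 of the 2D cross product magnitude and the dot product."""
    cross = u[0] * v[1] - u[1] * v[0]
    dot = u[0] * v[0] + u[1] * v[1]
    return math.atan2(abs(cross), dot)

u = (1.0, 0.0)
v = (1.0, 1e-9)  # unit length to within double precision

print(angle_acos(u, v))   # 0.0, the angle is lost
print(angle_atan2(u, v))  # close to 1e-09, the true angle
```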

What happened? Squaring the components inside norm destroyed the small coordinate ($10^{-9}$ became $10^{-18}$, below double-precision resolution near 1). Then $c$ rounded to exactly $1$, and arccos(1) = 0.

An atan2-based formula, $\theta = \operatorname{atan2}(\|\vec{u}\times\vec{v}\|,\ \vec{u}\cdot\vec{v})$ in 2D and 3D, keeps full precision for both tiny and near-$\pi$ angles. The same lesson applies to Gram-Schmidt (use modified Gram-Schmidt or Householder, never classical), to computing variance (use Welford’s online formula, not $E[X^2] - (E[X])^2$), and to softmax (subtract the max first).
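Two of those fixes are short enough to sketch here. The block below shows softmax with the maximum subtracted first and a one-pass variance using Welford's update; both are minimal standard-library versions for non-empty lists, and the function names are illustrative.

```python
import math

def softmax(scores):
    """Softmax with the maximum subtracted first, so exp never overflows."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def welford_variance(xs):
    """Population variance in one pass using Welford's running update."""
    if not xs:
        raise ValueError("need at least one value")
    mean, m2 = 0.0, 0.0
    for n, x in enumerate(xs, start=1):
        delta = x - mean
        mean += delta / n
        m2 += delta * (x - mean)
    return m2 / len(xs)

# math.exp(1000.0) on its own raises OverflowError; the shifted version is fine.
print(softmax([1000.0, 1000.0]))  # [0.5, 0.5]

# Large offset, small spread: the true population variance is 2/3.
print(welford_variance([1e9 + 1.0, 1e9 + 2.0, 1e9 + 3.0]))
```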

The takeaway: the algebra you learned in this chapter is exact over $\mathbb{R}$, but every line of code lives in $\mathbb{F}_{64}$, a finite set of about $2^{64}$ rational numbers. Cancellation, overflow, and rounding are not edge cases; they can decide whether your model trains.

What numpy Actually Does for np.dot#

When you write np.dot(u, v) for two 1-D float64 arrays, numpy does not run a Python loop. It dispatches to a BLAS Level 1 routine, typically cblas_ddot from OpenBLAS, MKL, or Apple Accelerate, written in hand-tuned C or assembly. For a vector of length $n$, the routine:

- Checks alignment and stride. If both arrays are contiguous, it takes the fast path.

- Splits the loop into chunks of 4 or 8 elements and uses SIMD instructions (AVX2 / AVX-512 on x86, NEON on ARM) so one CPU instruction multiplies-and-adds 4 or 8 doubles in parallel.

- Accumulates partial sums in separate registers to break the dependency chain, then combines them at the end. This is roughly 4-8x faster than a naive sequential sum and, somewhat surprisingly, it can change the bit-exact result, because floating-point addition is not associative.
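The effect of the last step on the result can be imitated in pure Python. This sketch does not call BLAS; it only mimics "several accumulators, combined at the end" with two lanes, on values picked to make the rounding visible.

```python
import math

values = [1e16, 1.0, -1e16, 1.0]

def sequential_sum(xs):
    """Add left to right with a single accumulator."""
    total = 0.0
    for x in xs:
        total += x
    return total

def two_lane_sum(xs):
    """Accumulate even and odd positions separately, then combine."""
    lanes = [0.0, 0.0]
    for i, x in enumerate(xs):
        lanes[i % 2] += x
    return lanes[0] + lanes[1]

print(sequential_sum(values))  # 1.0
print(two_lane_sum(values))    # 2.0
print(math.fsum(values))       # 2.0, correctly rounded reference
```

Same numbers, same mathematical sum, different answers: the first `1.0` is absorbed when it is added to `1e16` in the sequential version, but survives in its own lane.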

The cost: $\Theta(n)$ FLOPs and $\Theta(n)$ memory reads. For a length-$10^6$ vector on a modern laptop you should see roughly 5-10 GFLOPS, which for about $2 \times 10^6$ FLOPs works out to a runtime of a few tenths of a millisecond. If you ever measure much worse than that, numpy is probably off its fast path, usually because of a non-contiguous slice (u[::2]) or an object dtype.

The same reasoning scales up. A @ B for two $n \times n$ float64 matrices calls dgemm (BLAS Level 3), which uses a blocked algorithm to keep data in L1/L2 cache. That’s why a hand-written triple loop in Python is roughly 1000x slower than numpy: the interpreter adds overhead to every multiply, and the naive loop cannot keep the working set in cache the way dgemm can.

So: when this chapter says “the inner product is just $\sum u_i v_i$”, that is true mathematically. Operationally, np.dot is one of the most heavily optimised pieces of software you will ever call. Trust it. Don’t reinvent it.

What’s Next#

In Chapter 2: Linear Combinations and Vector Spaces, we ask the next natural questions:

- How can we build an entire space from a small set of vectors? (span)

- When are some of those vectors redundant? (linear independence)

- What is the smallest possible “complete toolbox” for a space? (basis and dimension)

These three ideas turn the static notion of “a vector” into the dynamic notion of “a coordinate system.”
