Essence of Linear Algebra (4): The Secrets of Determinants

Determinants are not just tedious calculations -- they measure how much a transformation stretches or compresses space. This chapter gives you the geometric intuition behind determinants, their key properties, and practical applications.

Beyond the Formula

Most of us first meet the determinant as a formula:

$$\det\begin{pmatrix}a & b\\ c & d\end{pmatrix} = ad - bc$$

You plug in numbers, compute, and move on. That misses the point entirely.

Here is the real meaning, in one sentence:

The determinant of $A$ is the factor by which $A$ scales area (in 2D) or volume (in 3D).

Once you internalize this, every property of determinants stops being a rule to memorize and starts being something you can see. The product rule $\det(AB) = \det(A)\det(B)$ becomes obvious — two scalings compose multiplicatively. $\det(A) = 0$ means space gets crushed flat. $\det(A^{-1}) = 1/\det(A)$ says the inverse must undo the scaling. The sign of the determinant tells you whether orientation was preserved or flipped.

What you will learn

- The geometric meaning of determinants in 2D and 3D

- What the sign of the determinant tells you (orientation)

- What $\det = 0$ means (singularity, information loss)

- Key properties and why each one is geometrically obvious

- Three ways to actually compute a determinant

- Applications: Cramer’s Rule, area/volume formulas, the Jacobian

Prerequisites#

2D Determinants: An Area Scaling Factor#

Starting from the unit square#

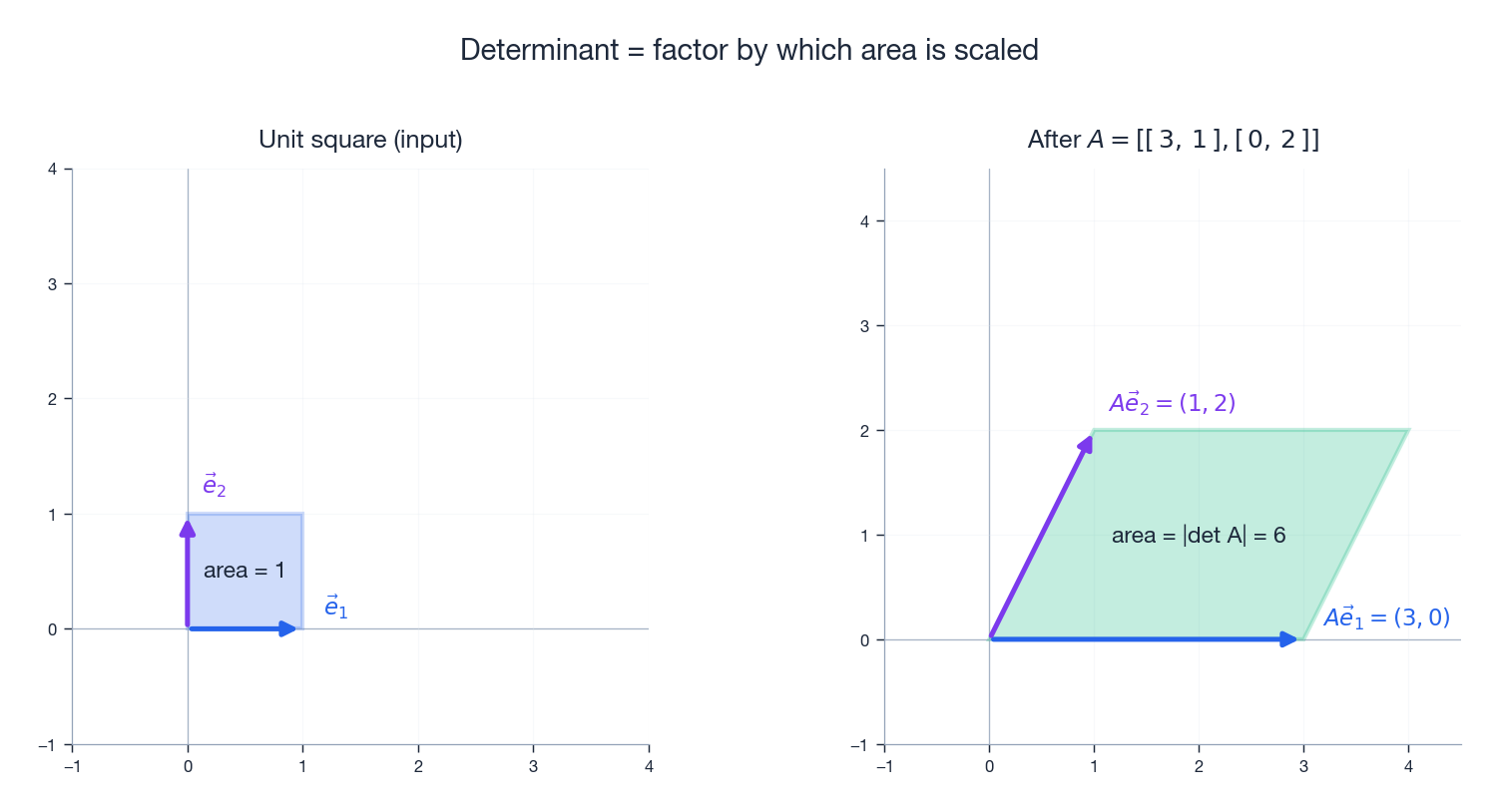

In the plane, the unit square is the square with corners at $(0,0)$ , $(1,0)$ , $(1,1)$ , $(0,1)$ . It is built from the standard basis vectors $\vec{e}_1 = (1, 0)$ and $\vec{e}_2 = (0, 1)$ , and its area is exactly $1$ .

A $2 \times 2$ matrix $A = \begin{pmatrix}a & b\\ c & d\end{pmatrix}$ sends the basis vectors to the columns of $A$ :

- $\vec{e}_1 \;\mapsto\; (a,\,c)$ — the first column

- $\vec{e}_2 \;\mapsto\; (b,\,d)$ — the second column

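So the unit square is carried to the parallelogram spanned by $(a, c)$ and $(b, d)$, and the signed area of that parallelogram is $ad - bc$. That is the whole content of the 2D determinant.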
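To check this numerically, the sketch below pushes the corners of the unit square through $A$ and measures the signed area of the resulting parallelogram with the shoelace formula, then compares it with $ad - bc$. The shoelace formula and the helper names (shoelace_signed_area, image_area) are our own choices for illustration, and numpy is assumed to be available.

```python
import numpy as np

def shoelace_signed_area(points):
    """Signed area of a polygon whose vertices are listed in order.

    Positive for counter-clockwise order, negative for clockwise.
    """
    x, y = points[:, 0], points[:, 1]
    return 0.5 * np.sum(x * np.roll(y, -1) - np.roll(x, -1) * y)

# Corners of the unit square, listed counter-clockwise.
UNIT_SQUARE = np.array([[0, 0], [1, 0], [1, 1], [0, 1]], dtype=float)

def image_area(A):
    """Signed area of the image of the unit square under A."""
    corners = UNIT_SQUARE @ np.asarray(A, dtype=float).T  # row k is A @ corner_k
    return shoelace_signed_area(corners)

a, b, c, d = 3.0, 1.0, -2.0, 4.0   # arbitrary example entries
A = np.array([[a, b], [c, d]])
print(image_area(A), a * d - b * c)   # 14.0 14.0
```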

A worked example

$$A = \begin{pmatrix}3 & 1\\ 0 & 2\end{pmatrix}, \qquad \det(A) = 3\cdot 2 - 1\cdot 0 = 6.$$

The unit square (area $1$) becomes a parallelogram of area $6$. Every shape in the plane is rescaled by the same factor $6$: a circle of area $\pi$ becomes an ellipse of area $6\pi$, a triangle of area $0.5$ becomes a triangle of area $3$, and so on. The matrix does not care about the shape, only about the local area element.
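A minimal sketch of the "every shape scales by the same factor" claim, assuming numpy: a circle is approximated by a 2000-sided polygon (an arbitrary choice), the triangle has area $0.5$, and areas are measured with the shoelace formula. A linear map sends a polygon to the polygon through the images of its vertices, so applying $A$ to the vertices is enough.

```python
import numpy as np

def polygon_area(points):
    """Absolute area of a simple polygon via the shoelace formula."""
    x, y = points[:, 0], points[:, 1]
    return 0.5 * abs(np.sum(x * np.roll(y, -1) - np.roll(x, -1) * y))

A = np.array([[3.0, 1.0], [0.0, 2.0]])

# Unit circle approximated by a 2000-sided polygon, and a triangle of area 0.5.
t = np.linspace(0.0, 2.0 * np.pi, 2000, endpoint=False)
circle = np.column_stack([np.cos(t), np.sin(t)])
triangle = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])

for name, shape in [("circle", circle), ("triangle", triangle)]:
    before = polygon_area(shape)
    after = polygon_area(shape @ A.T)   # apply A to every vertex
    print(f"{name}: {before:.4f} -> {after:.4f}, ratio {after / before:.4f}")
```

Both ratios should print as 6.0000; the circle's own area comes out slightly below $\pi$ because of the polygon approximation.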

The photocopier analogy

Set a photocopier to 200% enlargement. In matrix form that is

$$A = \begin{pmatrix}2 & 0\\ 0 & 2\end{pmatrix}, \qquad \det(A) = 4.$$

Width doubles, height doubles, but area quadruples (not doubles). The determinant gives the area scaling directly, and that "$4$" is the usual surprise: scaling lengths by $2$ scales areas by $2^2$.

Three transformations, three determinants#

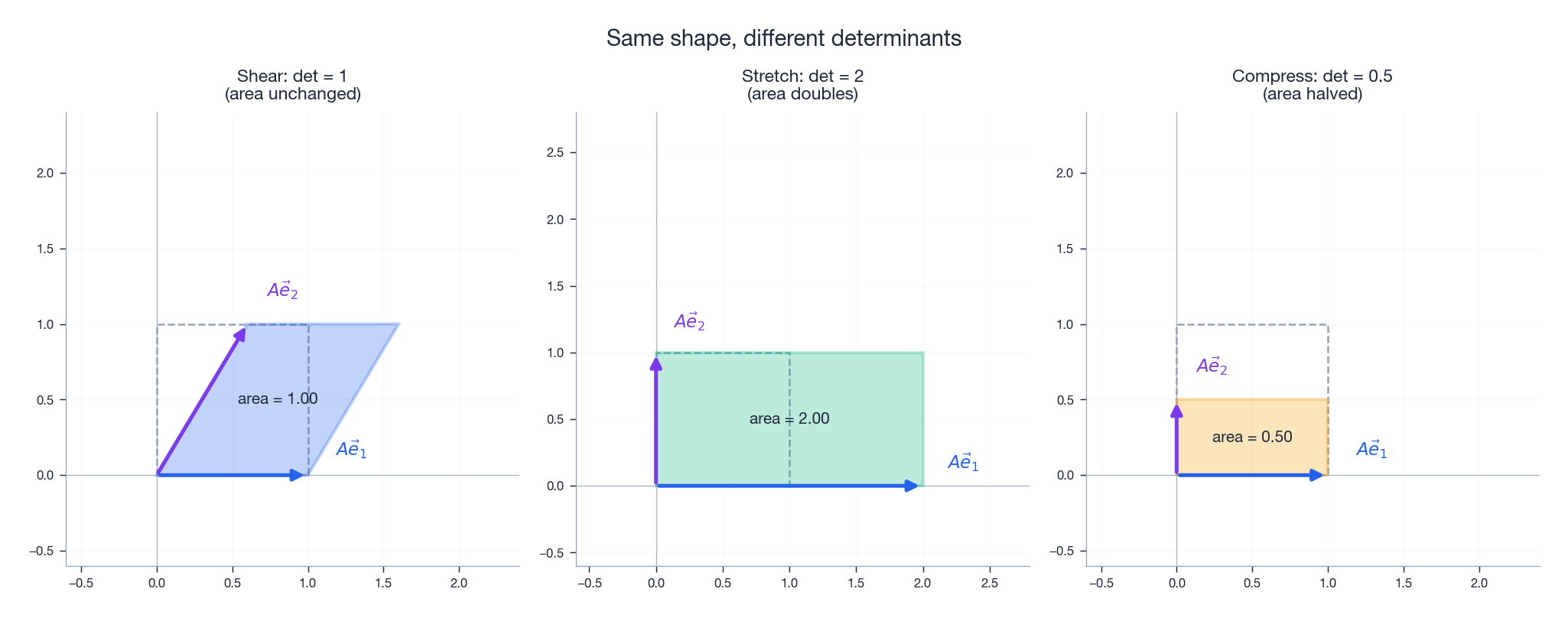

To build intuition, look at three different $A$ ’s acting on the unit square:

- Shear, $\det = 1$: the parallelogram leans, but its area is unchanged. (Imagine pushing the top of a stack of books sideways: the volume of the stack does not change.)

- Stretch, $\det = 2$: one direction is doubled; area doubles.

- Compression, $\det = 0.5$: one direction is halved; area is halved.

The determinant captures the one number that all of these transformations agree on: how much the area changed.
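The three cases can be reproduced with numpy. The specific matrices below are hypothetical examples of a shear, a stretch and a compression, not necessarily the ones drawn in the figure.

```python
import numpy as np

# Hypothetical example matrices for the three cases above.
examples = {
    "shear": np.array([[1.0, 1.0], [0.0, 1.0]]),        # (x, y) -> (x + y, y)
    "stretch": np.array([[2.0, 0.0], [0.0, 1.0]]),      # double the x-direction
    "compression": np.array([[1.0, 0.0], [0.0, 0.5]]),  # halve the y-direction
}

for name, M in examples.items():
    print(f"{name:12s} det = {np.linalg.det(M):.3f}")
```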

The Sign of the Determinant: Orientation#

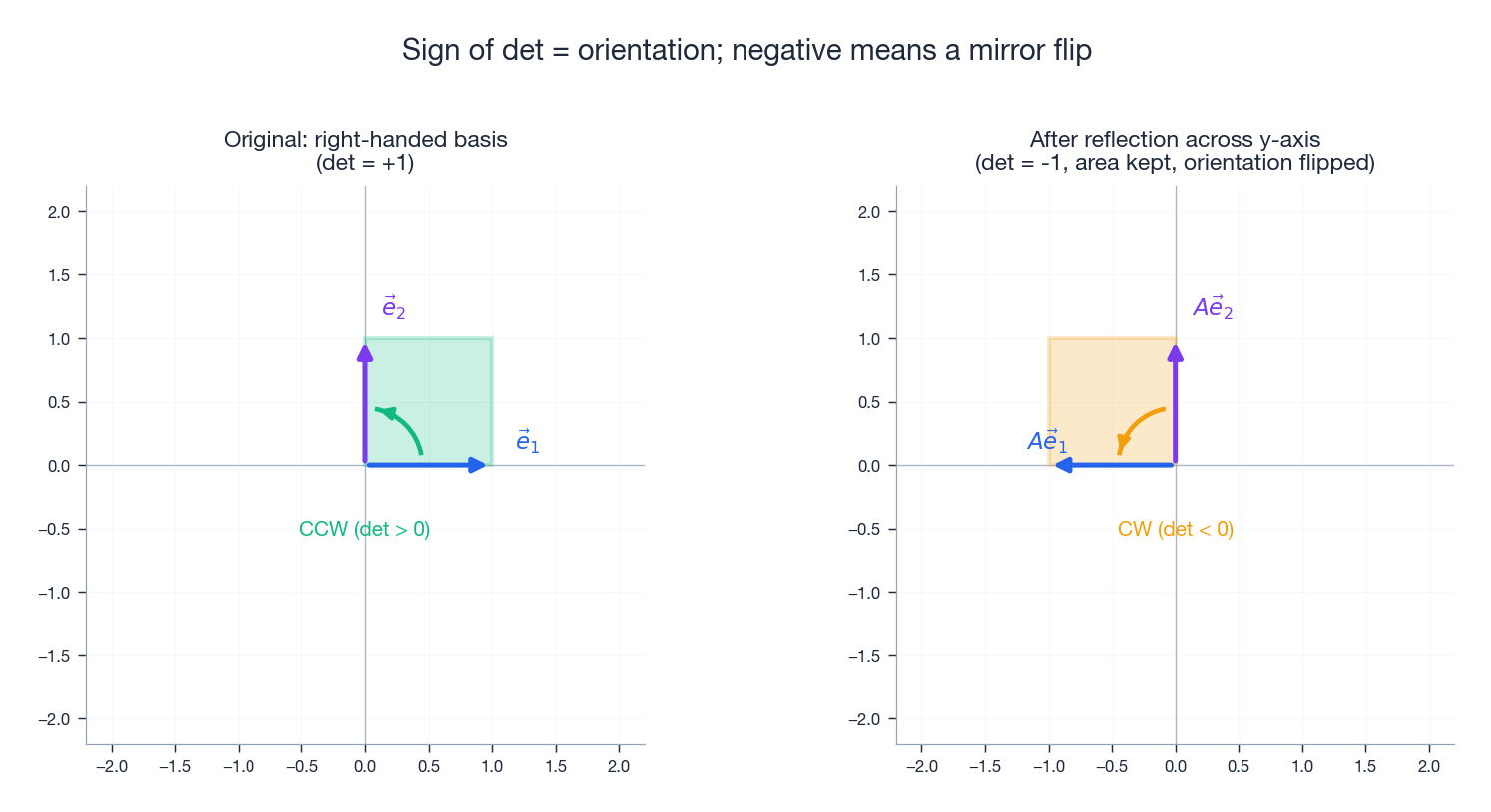

The absolute value $|\det(A)|$ tells you about size. The sign tells you about orientation.

- $\det(A) > 0$ : the transformation preserves orientation. A counter-clockwise loop stays counter-clockwise.

- $\det(A) < 0$ : the transformation flips orientation. A counter-clockwise loop comes out clockwise — exactly what a mirror does.

Example: reflection across the $y$-axis

$$A = \begin{pmatrix}-1 & 0\\ \phantom{-}0 & 1\end{pmatrix}, \qquad \det(A) = -1.$$

- $|\det| = 1$: area is unchanged.

- The negative sign records the flip: write a word on a transparent sheet, hold it up to a mirror, and you see exactly what $A$ does.
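The orientation flip shows up directly in the signed (shoelace) area, which is positive for counter-clockwise vertex order. This sketch, assuming numpy, applies the reflection above and, for contrast, a rotation by an arbitrary angle to a counter-clockwise triangle:

```python
import numpy as np

def signed_area(points):
    """Shoelace signed area: > 0 for counter-clockwise vertex order."""
    x, y = points[:, 0], points[:, 1]
    return 0.5 * np.sum(x * np.roll(y, -1) - np.roll(x, -1) * y)

triangle = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 1.0]])  # counter-clockwise, area 1
reflect_y = np.array([[-1.0, 0.0], [0.0, 1.0]])
theta = 0.7                                                 # any angle works
rotate = np.array([[np.cos(theta), -np.sin(theta)],
                   [np.sin(theta), np.cos(theta)]])

for name, M in [("reflection", reflect_y), ("rotation", rotate)]:
    image = triangle @ M.T                                  # apply M to every vertex
    print(name, "det =", round(np.linalg.det(M), 6),
          "signed area:", signed_area(triangle), "->", round(signed_area(image), 6))
```

The reflection turns the signed area $1$ into $-1$; the rotation leaves it at $1$.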

The glove analogy

Take a right-hand glove. Rotate it, stretch it, squash it: it stays a right-hand glove. But turn it inside out, and it becomes a left-hand glove. That "inside-out" operation plays the role of a transformation with negative determinant. Rotations and stretches keep $\det > 0$; reflections flip the sign.

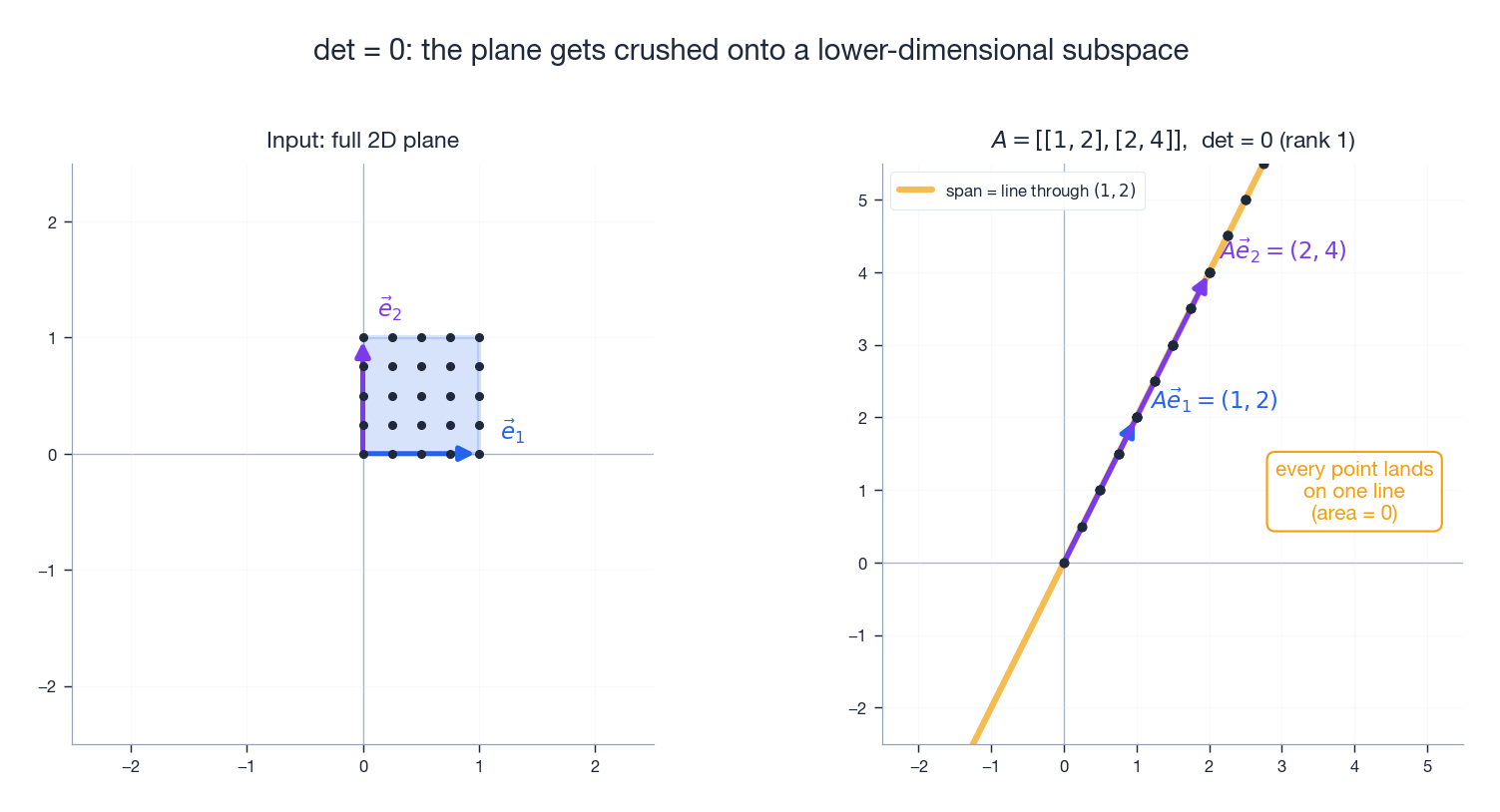

Determinant Zero: Space Gets Crushed

If the area scaling factor is $0$, then every area becomes $0$. In 2D, that can only mean one thing: the entire plane is squashed onto a line (or, in the degenerate case of the zero matrix, onto the origin).

Example

$$A = \begin{pmatrix}1 & 2\\ 2 & 4\end{pmatrix}, \qquad \det(A) = 1\cdot 4 - 2\cdot 2 = 0.$$

The second column $(2, 4)$ is exactly twice the first column $(1, 2)$. Both basis images lie on the same line through the origin (the line spanned by $(1,2)$). Every point of the plane gets sent to that line: the 2D world is collapsed into 1D.

Why this means non-invertible

Take a 2D photo and squash it into a line. Can you reconstruct the photo? No: infinitely many input points now land on the same output point, so the map cannot be undone. Information has been destroyed, so $A^{-1}$ does not exist.

$$\det(A) = 0 \;\Longleftrightarrow\; A\text{ is singular} \;\Longleftrightarrow\; \text{the columns of }A\text{ are linearly dependent}.$$

This also gives a quick test for linear dependence of $n$ vectors in $\mathbb{R}^n$: place them as the columns of a matrix and compute its determinant.
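In floating point, "compute the determinant" needs a tolerance: rounding can leave a tiny nonzero value where the exact answer is $0$. A hedged sketch with numpy, printing both np.linalg.det and np.linalg.matrix_rank (which uses an SVD with a built-in tolerance) for the example above and for the $3\times 3$ matrix that appears later with Sarrus's rule:

```python
import numpy as np

A = np.array([[1.0, 2.0], [2.0, 4.0]])             # second column = 2 x first
B = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0],
              [7.0, 8.0, 9.0]])                     # rows in arithmetic progression

for name, M in [("A", A), ("B", B)]:
    det = np.linalg.det(M)
    rank = np.linalg.matrix_rank(M)    # SVD-based, with a default tolerance
    print(f"{name}: det = {det:.3e}, rank = {rank} of {M.shape[0]}")
```

For the $3\times 3$ matrix, det typically comes out as a number of order $10^{-16}$ rather than exactly $0$, while the rank is reported as $2$. For a numerical dependence test, the rank (or a determinant compared against a tolerance) is the safer signal.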

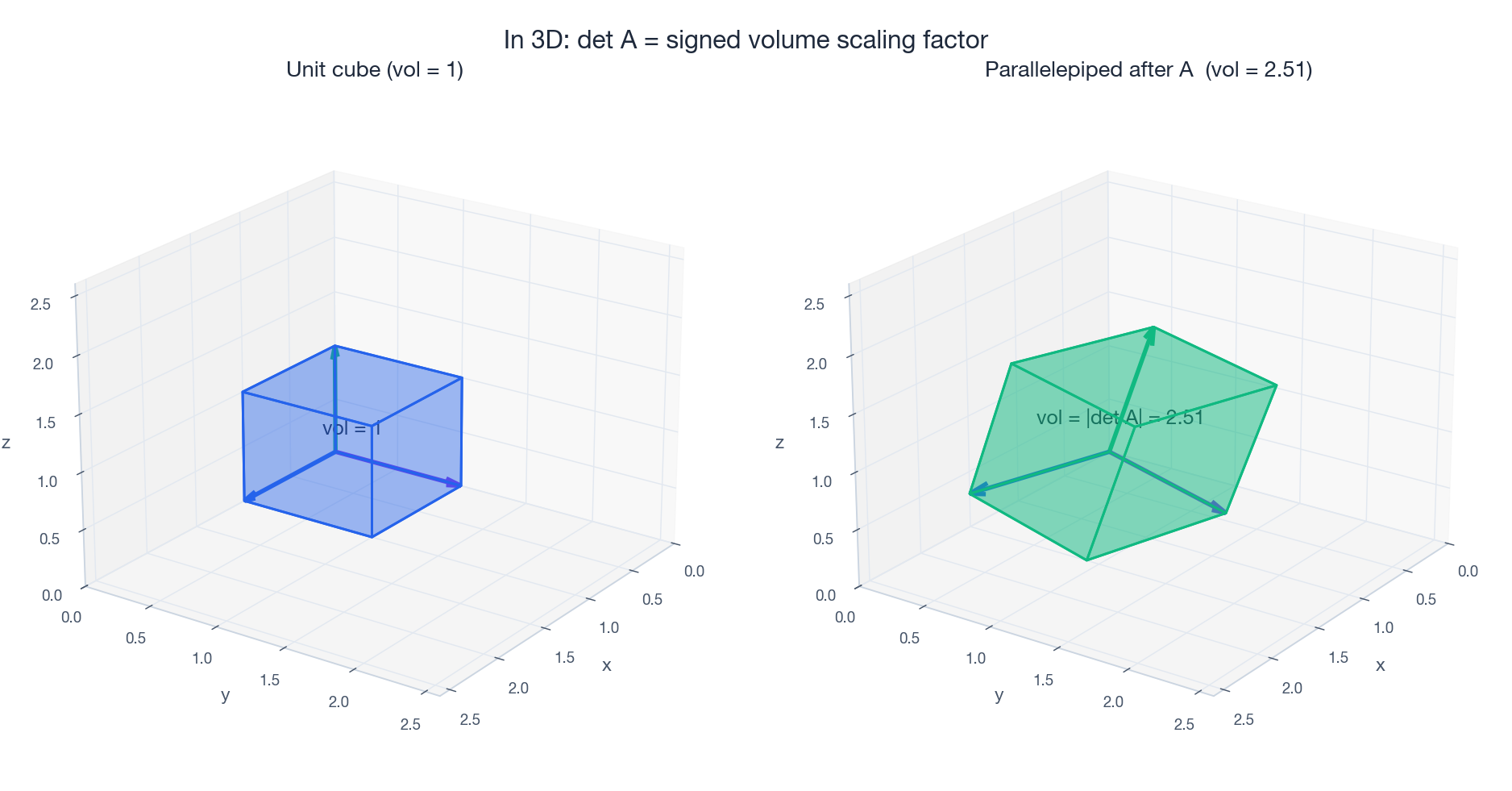

3D Determinants: A Volume Scaling Factor

Everything we said in 2D lifts cleanly to 3D. The unit cube is built from $\vec{e}_1, \vec{e}_2, \vec{e}_3$, and a $3 \times 3$ matrix sends it to a slanted box, a parallelepiped. The determinant gives the (signed) volume of that box.

The formula

$$\det\begin{pmatrix}a & b & c\\ d & e & f\\ g & h & i\end{pmatrix} = a(ei - fh) - b(di - fg) + c(dh - eg).$$

Equivalently, if $\vec{v}_1, \vec{v}_2, \vec{v}_3$ are the columns of $A$, the determinant is the scalar triple product

$$\det(A) = \vec{v}_1 \cdot (\vec{v}_2 \times \vec{v}_3),$$

which is one of the standard formulas for the (signed) volume of a parallelepiped.
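A quick check that the cofactor formula, the triple product and numpy agree on a random matrix (the seed and the use of numpy are arbitrary choices):

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.standard_normal((3, 3))
v1, v2, v3 = A[:, 0], A[:, 1], A[:, 2]         # columns of A

triple = np.dot(v1, np.cross(v2, v3))          # v1 . (v2 x v3)
(a, b, c), (d, e, f), (g, h, i) = A            # entries, row by row
expansion = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

print(triple, expansion, np.linalg.det(A))     # three equal values, up to rounding
```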

Sign in 3D

A negative 3D determinant means a right-handed coordinate system has been turned into a left-handed one (e.g. by reflecting one axis). A single reflection, the point reflection $-I$ through the origin, and in general any composition of an odd number of reflections all give $\det < 0$.

Properties of Determinants: All Geometric

Once you see determinants as scaling factors, the algebraic properties stop looking like a list of rules and start looking like statements about scaling.

Multiplicative: $\det(AB) = \det(A)\det(B)$

$AB$ applies $B$ first, then $A$. $B$ scales volume by $\det(B)$; then $A$ scales the result by $\det(A)$. Total scaling = product. It is like running a copier that scales area by $1.5\times$ and then one that scales area by $3\times$: the total area scaling is $4.5\times$.

Transpose: $\det(A^T) = \det(A)$

Swapping rows for columns leaves the volume scaling unchanged. (Geometrically, the parallelepiped spanned by the rows differs from the one spanned by the columns, but the two have the same volume: a non-trivial fact that is one of the small miracles of the theory.)

Inverse: $\det(A^{-1}) = 1/\det(A)$

If $A$ multiplies volume by $k$, then $A^{-1}$ must divide volume by $k$. Algebraically: $\det(A)\det(A^{-1}) = \det(I) = 1$.
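These identities are easy to spot-check numerically. A sketch with random $4\times 4$ matrices, assuming numpy; each line should print True. (A random Gaussian matrix is invertible with probability 1, which the inverse check relies on.)

```python
import numpy as np

rng = np.random.default_rng(42)
A = rng.standard_normal((4, 4))
B = rng.standard_normal((4, 4))
det = np.linalg.det

print(np.isclose(det(A @ B), det(A) * det(B)))        # multiplicative
print(np.isclose(det(A.T), det(A)))                   # transpose
print(np.isclose(det(np.linalg.inv(A)), 1 / det(A)))  # inverse
```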

Row swap changes sign

Swapping two rows multiplies the determinant by $-1$. By the transpose rule, this has the same effect as swapping two columns, i.e. exchanging the images of two basis vectors. That flips the handedness of the coordinate system, so the sign flips.

Row scaling scales the determinant

Multiplying one row by $k$ multiplies the determinant by $k$. Read it through the transpose again: the rows span a box with the same signed volume, and you stretched one of its edges $k$ times, so the volume is $k$ times as big (with a sign flip if $k < 0$).

Corollary. $\det(kA) = k^n \det(A)$ for an $n\times n$ matrix: $k$ acts on each of the $n$ rows.

Row addition leaves the determinant alone

Adding a multiple of one row to another does not change the determinant.

This is a shear: the parallelogram changes shape, but its area does not. Picture a stack of cards; pushing the top sideways changes the silhouette but not the volume.

Together with the row-swap and row-scaling rules, this fact is why Gaussian elimination changes the determinant only in ways that are easy to track, and it is the reason the elimination method works for computing $\det$.
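The row-operation rules can be spot-checked the same way. This sketch, assuming numpy and an arbitrary random $3\times 3$ matrix, applies each operation to a copy and compares determinants:

```python
import numpy as np

rng = np.random.default_rng(7)
A = rng.standard_normal((3, 3))
det = np.linalg.det
k = 2.5

swapped = A[[1, 0, 2]]            # swap rows 0 and 1 (fancy indexing makes a copy)
scaled = A.copy()
scaled[0] *= k                    # multiply row 0 by k
sheared = A.copy()
sheared[2] += 4.0 * A[0]          # add 4 x row 0 to row 2

print(np.isclose(det(swapped), -det(A)))        # row swap flips the sign
print(np.isclose(det(scaled), k * det(A)))      # row scaling scales det by k
print(np.isclose(det(k * A), k**3 * det(A)))    # det(kA) = k^n det(A), n = 3
print(np.isclose(det(sheared), det(A)))         # row addition changes nothing
```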

Special matrices

| Matrix type | Determinant |
|---|---|
| Identity $I$ | $1$ |
| Diagonal | product of diagonal entries |
| Triangular (upper or lower) | product of diagonal entries |

The triangular case is the workhorse: any square matrix can be reduced to triangular form by elimination (with row swaps where needed), and once it is triangular the determinant is just the product of the diagonal entries.

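Computing Determinants

$2 \times 2$: just the formula

$$\det\begin{pmatrix}a & b\\ c & d\end{pmatrix} = ad - bc.$$

$3 \times 3$: Sarrus’s rule

Copy the first two columns to the right of the matrix, add the three products along the down-right diagonals, and subtract the three products along the up-right diagonals:

$$\det\begin{pmatrix}1 & 2 & 3\\ 4 & 5 & 6\\ 7 & 8 & 9\end{pmatrix} = (1\cdot 5\cdot 9 + 2\cdot 6\cdot 7 + 3\cdot 4\cdot 8) - (3\cdot 5\cdot 7 + 2\cdot 4\cdot 9 + 1\cdot 6\cdot 8) = 0.$$

(The result is $0$ because each row is the previous one plus $(3, 3, 3)$, so $\vec{r}_1 - 2\vec{r}_2 + \vec{r}_3 = \vec{0}$: the rows are linearly dependent.)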

Warning. Sarrus’s rule works only for $3 \times 3$ matrices. Do not try to extend the diagonal pattern to $4 \times 4$ — you will get a wrong answer.
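Sarrus's rule translates directly into code. A minimal pure-Python sketch (the function name sarrus is ours). With integer entries the arithmetic is exact, so the example returns exactly $0$, whereas a floating-point routine such as np.linalg.det may return a tiny nonzero value for the same matrix.

```python
def sarrus(M):
    """3x3 determinant by Sarrus's rule (valid for 3x3 matrices only)."""
    if len(M) != 3 or any(len(row) != 3 for row in M):
        raise ValueError("Sarrus's rule applies to 3x3 matrices only")
    (a, b, c), (d, e, f), (g, h, i) = M
    down = a * e * i + b * f * g + c * d * h   # the three down-right diagonals
    up = c * e * g + b * d * i + a * f * h     # the three up-right diagonals
    return down - up

M = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
print(sarrus(M))   # 0
```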

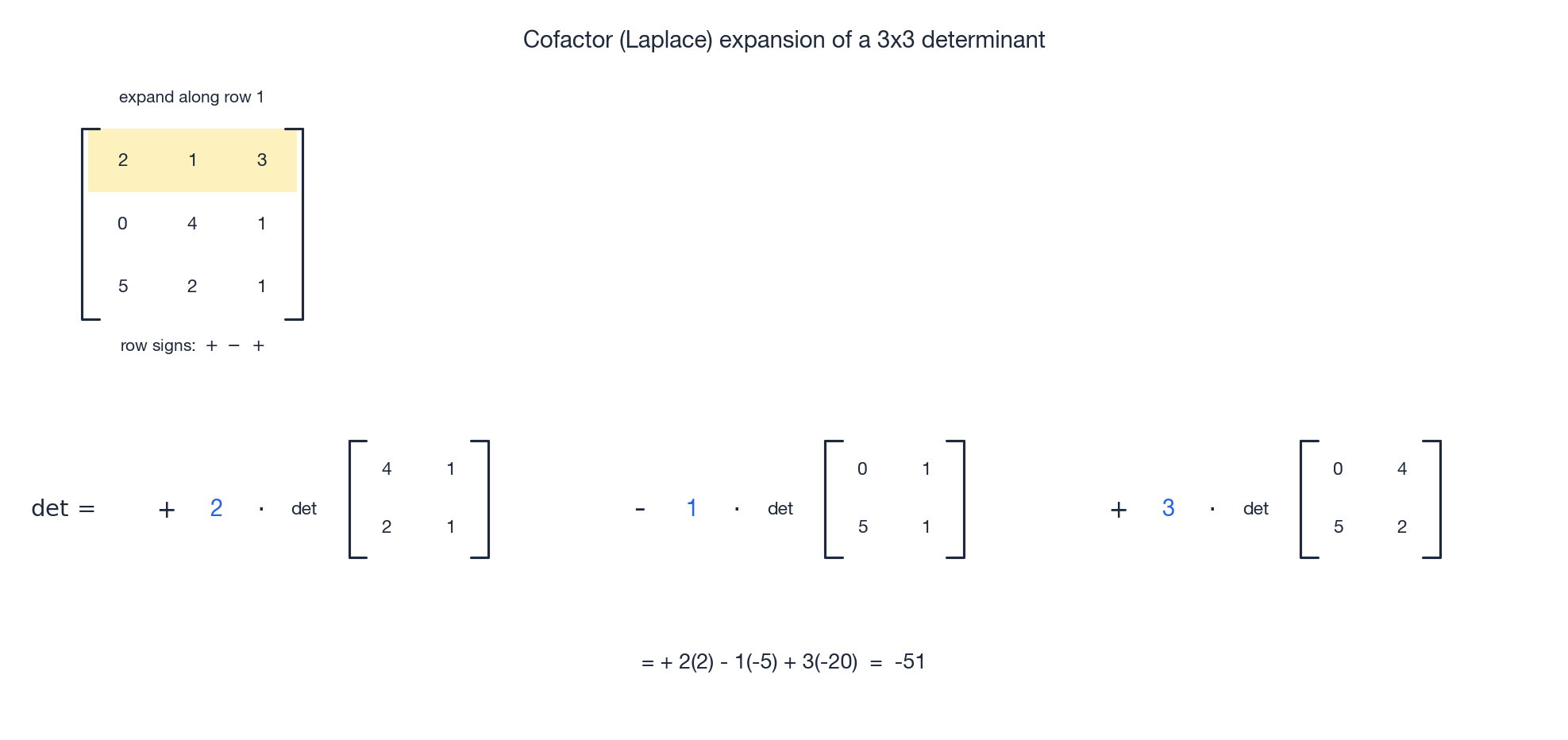

General: cofactor (Laplace) expansion

For an $n \times n$ matrix and any fixed row $i$,

$$\det(A) = \sum_{j=1}^{n} (-1)^{i+j} a_{ij}\, M_{ij},$$

where $M_{ij}$ is the minor: the determinant of the $(n-1)\times(n-1)$ submatrix obtained by deleting row $i$ and column $j$. The sign pattern $(-1)^{i+j}$ alternates like a checkerboard; for a $3\times 3$ the first row gets signs $+,-,+$. Expanding down a fixed column works the same way.

Practical tip. Expand along the row or column with the most zeros — those terms vanish and you do less work.
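A minimal recursive sketch of the expansion in pure Python, following the practical tip by expanding along the row with the most zeros. The function name and the row-choice heuristic are illustrative, and this version only expands along rows. Its cost still grows factorially, so it is for small matrices only.

```python
def cofactor_det(M):
    """Determinant by Laplace (cofactor) expansion; M is a list of lists.

    Expands along the row with the most zeros. Exponential cost:
    for teaching and small matrices only.
    """
    n = len(M)
    if n == 1:
        return M[0][0]
    # Pick the row with the most zero entries (first such row on ties).
    i = max(range(n), key=lambda r: sum(1 for x in M[r] if x == 0))
    total = 0
    for j in range(n):
        if M[i][j] == 0:
            continue  # a zero entry contributes nothing
        minor = [row[:j] + row[j + 1:] for k, row in enumerate(M) if k != i]
        total += (-1) ** (i + j) * M[i][j] * cofactor_det(minor)
    return total

print(cofactor_det([[2, 0, 1], [0, 3, 0], [1, 0, 4]]))   # 21
```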

For real computation: Gaussian elimination

Cofactor expansion takes $O(n!)$ work, which is hopeless past $n = 10$ or so. In practice you reduce $A$ to upper triangular form by elementary row operations (which only multiply the determinant by predictable factors), then multiply the diagonal. That is $O(n^3)$, and it is essentially what numpy.linalg.det does internally: an LU factorisation with partial pivoting, computed by LAPACK.
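Here is a sketch of the elimination approach in numpy, with partial pivoting (move the largest available entry into the pivot position) for numerical stability. It is a teaching version, not numpy's LAPACK-backed implementation, but it uses exactly the bookkeeping from the properties section: swaps flip the sign, row additions change nothing, and the determinant of the triangular result is the product of its diagonal.

```python
import numpy as np

def det_by_elimination(A):
    """Determinant via Gaussian elimination with partial pivoting, O(n^3)."""
    U = np.array(A, dtype=float)              # work on a copy
    n = U.shape[0]
    sign = 1.0
    for k in range(n):
        p = k + np.argmax(np.abs(U[k:, k]))   # largest pivot candidate in column k
        if U[p, k] == 0.0:
            return 0.0                        # no nonzero pivot: singular
        if p != k:
            U[[k, p]] = U[[p, k]]             # row swap -> sign flip
            sign = -sign
        # Eliminate entries below the pivot (row additions leave det unchanged).
        factors = U[k + 1:, k] / U[k, k]
        U[k + 1:, k:] -= np.outer(factors, U[k, k:])
    return sign * np.prod(np.diag(U))         # triangular: product of the diagonal

rng = np.random.default_rng(1)
A = rng.standard_normal((6, 6))
print(det_by_elimination(A), np.linalg.det(A))   # agree up to rounding
```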


Cramer’s Rule

For a square system $A\vec{x} = \vec{b}$ with $\det(A) \neq 0$, each unknown is a ratio of determinants:

$$x_i = \frac{\det(A_i)}{\det(A)},$$

where $A_i$ is $A$ with its $i$-th column replaced by $\vec{b}$. For example,

$$\begin{cases} 2x + y = 5 \\ 3x + 4y = 11 \end{cases}$$

gives

$$\det(A) = 8 - 3 = 5, \quad \det(A_1) = 20 - 11 = 9, \quad \det(A_2) = 22 - 15 = 7,$$

so $x = 9/5,\; y = 7/5$.
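Cramer's rule is a few lines of numpy. This sketch (the function name cramer and the singularity check via np.isclose's default tolerance are our choices) reproduces the example:

```python
import numpy as np

def cramer(A, b):
    """Solve A x = b by Cramer's rule (fine for tiny systems, slow for big ones)."""
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    d = np.linalg.det(A)
    if np.isclose(d, 0.0):
        raise ValueError("det(A) is (numerically) zero; Cramer's rule does not apply")
    x = np.empty(len(b))
    for i in range(len(b)):
        Ai = A.copy()
        Ai[:, i] = b                  # replace column i with b
        x[i] = np.linalg.det(Ai) / d
    return x

print(cramer([[2, 1], [3, 4]], [5, 11]))   # approximately [1.8 1.4]
```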

Caveat. Cramer’s rule is theoretically beautiful but practically slow: computed naively it needs $n+1$ determinants, i.e. $O(n^4)$ work if each is done by elimination, versus $O(n^3)$ for solving the system by elimination directly. It is the right tool for proving things and for $2\times 2$ or $3\times 3$ symbolic problems, not for actually solving big systems.

Applications

Area of a triangle

For a triangle with vertices $(x_1, y_1)$, $(x_2, y_2)$, $(x_3, y_3)$,

$$\text{Area} = \tfrac{1}{2}\left|\det\begin{pmatrix} x_2 - x_1 & x_3 - x_1 \\ y_2 - y_1 & y_3 - y_1 \end{pmatrix}\right|.$$

You are taking half the area of the parallelogram spanned by two edges.
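As a small pure-Python helper (the name triangle_area is ours), the formula is:

```python
def triangle_area(p1, p2, p3):
    """Area of the triangle with vertices p1, p2, p3 (each an (x, y) pair)."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    # 2x2 determinant with the edge vectors p2 - p1 and p3 - p1 as columns.
    det = (x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1)
    return abs(det) / 2

print(triangle_area((0, 0), (4, 0), (0, 3)))   # 6.0
```

The absolute value makes the result independent of vertex order; dropping it gives the signed area, positive for counter-clockwise order.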

Cross product as a determinant

Expanding this formal determinant along its first row gives the cross product:

$$\vec{a} \times \vec{b} = \det\begin{pmatrix} \vec{i} & \vec{j} & \vec{k} \\ a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \end{pmatrix}.$$

Its magnitude $\|\vec{a}\times\vec{b}\|$ is exactly the area of the parallelogram spanned by $\vec{a}$ and $\vec{b}$. When both vectors lie in the $xy$-plane it reduces to $|a_1 b_2 - a_2 b_1|$, a $2 \times 2$ determinant in disguise.
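To see the cofactor expansion at work, this sketch (assuming numpy; the helper name is ours) expands the formal determinant along its first row, compares the result with np.cross, and checks the $xy$-plane case:

```python
import numpy as np

def cross_via_cofactors(a, b):
    """Cross product from the first-row cofactor expansion of the formal determinant."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return np.array([
        a2 * b3 - a3 * b2,       # coefficient of i:  +det [[a2, a3], [b2, b3]]
        -(a1 * b3 - a3 * b1),    # coefficient of j:  -det [[a1, a3], [b1, b3]]
        a1 * b2 - a2 * b1,       # coefficient of k:  +det [[a1, a2], [b1, b2]]
    ], dtype=float)

a = np.array([1.0, -2.0, 3.0])
b = np.array([4.0, 0.5, -1.0])
print(np.allclose(cross_via_cofactors(a, b), np.cross(a, b)))   # True

# In the xy-plane the length reduces to the 2D determinant a1*b2 - a2*b1.
p = np.array([3.0, 1.0, 0.0])
q = np.array([1.0, 2.0, 0.0])
print(np.linalg.norm(cross_via_cofactors(p, q)), p[0] * q[1] - p[1] * q[0])   # 5.0 5.0
```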

The Jacobian determinant

For a change of variables $x = x(u, v)$, $y = y(u, v)$ in a double integral,

$$\iint f(x, y)\, dx\, dy = \iint f\bigl(x(u, v),\, y(u, v)\bigr) \left|\det \frac{\partial(x, y)}{\partial(u, v)}\right| du\, dv.$$

The Jacobian $\left|\det\frac{\partial(x,y)}{\partial(u,v)}\right|$ is the local area scaling factor: the determinant of the linear approximation to the change of variables at each point. Geometrically, you are using our 2D area-scaling theorem at every infinitesimal patch.

For polar coordinates, $x = r\cos\theta$, $y = r\sin\theta$ with $r \ge 0$:

$$\left|\det \frac{\partial(x, y)}{\partial(r, \theta)}\right| = \left|\det\begin{pmatrix} \cos\theta & -r\sin\theta \\ \sin\theta & \phantom{-}r\cos\theta \end{pmatrix}\right| = \left|r\cos^2\theta + r\sin^2\theta\right| = r.$$

That is the famous "$r$" in $dx\,dy = r\,dr\,d\theta$. Calculus students often memorize it; now you can derive it.
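The same determinant can be checked numerically: approximate the Jacobian matrix of the polar map by central finite differences and take its determinant. A sketch assuming numpy; the helper names and the step size h = 1e-6 are arbitrary choices.

```python
import numpy as np

def polar_to_xy(r, theta):
    return np.array([r * np.cos(theta), r * np.sin(theta)])

def jacobian_det(f, u, v, h=1e-6):
    """det of the 2x2 Jacobian of f(u, v), by central finite differences."""
    df_du = (f(u + h, v) - f(u - h, v)) / (2 * h)   # first column
    df_dv = (f(u, v + h) - f(u, v - h)) / (2 * h)   # second column
    return np.linalg.det(np.column_stack([df_du, df_dv]))

for r, theta in [(0.5, 0.3), (2.0, 1.2), (3.0, 4.0)]:
    print(r, jacobian_det(polar_to_xy, r, theta))   # approximately r each time
```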

Determinants and linear systems

For $A\vec{x} = \vec{b}$ with $A$ square:

| Condition | What happens |
|---|---|
| $\det(A) \neq 0$ | unique solution exists |
| $\det(A) = 0$, system homogeneous | non-trivial solutions exist |
| $\det(A) = 0$, $\vec{b} \neq \vec{0}$ | either no solution or infinitely many |
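The determinant alone cannot separate the two outcomes in the last row of the table. One way to do it, sketched here with numpy, is to compare ranks (the number of independent columns, which the next chapter develops): if appending $\vec{b}$ as an extra column raises the rank, $\vec{b}$ is unreachable and there is no solution. The function name and the reliance on np.linalg.matrix_rank's default tolerance are our choices.

```python
import numpy as np

def classify_system(A, b):
    """Classify A x = b for square A, using ranks instead of an exact det == 0 test."""
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float).reshape(-1, 1)
    n = A.shape[0]
    rank_A = np.linalg.matrix_rank(A)
    if rank_A == n:                      # full rank, i.e. det(A) != 0
        return "unique solution"
    rank_Ab = np.linalg.matrix_rank(np.hstack([A, b]))
    if rank_Ab > rank_A:                 # b lies outside the column space
        return "no solution"
    return "infinitely many solutions"

S = [[1, 2], [2, 4]]                                 # det(S) = 0
print(classify_system([[2, 1], [3, 4]], [5, 11]))    # unique solution
print(classify_system(S, [0, 0]))                    # infinitely many solutions
print(classify_system(S, [1, 2]))                    # infinitely many solutions
print(classify_system(S, [1, 0]))                    # no solution
```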

Python: Visualizing the Determinant
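A minimal sketch of such a visualization, assuming numpy and matplotlib. It draws the unit square, its image under $A$, and the images of the two basis vectors (the columns of $A$), labelling the plot with $\det(A)$. The helper names are our own, and matplotlib is imported inside the plotting function so the numeric helpers work without it.

```python
import numpy as np

UNIT_SQUARE = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0]])

def transformed_square(A):
    """Corners of the parallelogram A(unit square), in the same order."""
    return UNIT_SQUARE @ np.asarray(A, dtype=float).T

def signed_area(points):
    """Shoelace signed area of a polygon (positive if counter-clockwise)."""
    x, y = points[:, 0], points[:, 1]
    return 0.5 * np.sum(x * np.roll(y, -1) - np.roll(x, -1) * y)

def plot_transformation(A, ax=None):
    """Draw the unit square and its image under A (requires matplotlib)."""
    import matplotlib.pyplot as plt   # imported here so the helpers above need only numpy

    A = np.asarray(A, dtype=float)
    image = transformed_square(A)
    if ax is None:
        _, ax = plt.subplots(figsize=(5, 5))
    ax.fill(UNIT_SQUARE[:, 0], UNIT_SQUARE[:, 1], alpha=0.3, label="unit square (area 1)")
    ax.fill(image[:, 0], image[:, 1], alpha=0.5,
            label=f"image, det = {np.linalg.det(A):.2f}")
    # Images of the basis vectors: the columns of A.
    ax.quiver([0, 0], [0, 0], A[0], A[1], angles="xy", scale_units="xy", scale=1,
              color=["tab:red", "tab:blue"])
    pts = np.vstack([UNIT_SQUARE, image])
    lo, hi = pts.min() - 0.5, pts.max() + 0.5
    ax.set_xlim(lo, hi)
    ax.set_ylim(lo, hi)
    ax.set_aspect("equal")
    ax.axhline(0, color="gray", lw=0.5)
    ax.axvline(0, color="gray", lw=0.5)
    ax.legend(loc="upper left")
    return ax
```

For example, plot_transformation([[3, 1], [0, 2]]) followed by matplotlib.pyplot.show() should draw the area-$6$ parallelogram from the worked example, and signed_area(transformed_square(A)) returns $\det(A)$ for any $2\times 2$ matrix A.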


Try a few more matrices on your own — in particular, try one with $\det = 0$ and watch the parallelogram collapse to a line.

Summary

The mental model

When you see a determinant, do not think “I need to compute a number.” Think:

“How does this transformation change the size and orientation of space?”

- $|\det(A)|$ — how much area or volume is scaled

- $\det > 0$ — orientation preserved

- $\det < 0$ — orientation flipped (mirror image)

- $\det = 0$ — space crushed flat, information lost, matrix not invertible

Key properties at a glance

| Property | Formula | Intuition |
|---|---|---|
| Multiplicative | $\det(AB) = \det(A)\det(B)$ | scalings multiply |
| Transpose | $\det(A^T) = \det(A)$ | rows and columns equally valid |
| Inverse | $\det(A^{-1}) = 1/\det(A)$ | undo the scaling |
| Scalar | $\det(kA) = k^n \det(A)$ | $k$ scales each of $n$ directions |

Why Nobody Computes the Determinant by Cofactor Expansion

The cofactor formula is beautiful, recursive, and catastrophically slow. Let $T(n)$ be the number of multiplications to expand an $n\times n$ determinant by minors. The recursion $T(n) = n\cdot T(n-1)$ gives $T(n) = n!$ . For $n = 20$ that is $2.4 \times 10^{18}$ multiplications — decades on a single core. For $n = 50$ it is more multiplications than there are atoms on Earth.

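The standard route is LU factorisation with partial pivoting, $PA = LU$, where $P$ records the row swaps, $L$ is lower triangular with ones on its diagonal, and $U$ is upper triangular. Since $\det P = \pm 1$ and $\det L = 1$,

$$\det A = (-1)^{\text{swaps}} \prod_{i=1}^n U_{ii}.$$

The cost of LU is about $\tfrac{2}{3}n^3$ FLOPs, and the cost of multiplying the $n$ diagonal entries is linear. So the determinant costs $\Theta(n^3)$, not $\Theta(n!)$: for $n=20$, a saving of a factor of order $10^{14}$.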
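The orders of magnitude above are easy to reproduce with Python's math module. This sketch uses the same rough cost models as the text: $n!$ for cofactor expansion and $\tfrac{2}{3}n^3$ for LU.

```python
import math

n = 20
cofactor = math.factorial(n)   # ~ n! multiplications for cofactor expansion
lu = 2 * n**3 / 3              # ~ (2/3) n^3 flops for LU
print(f"{cofactor:.2e}  {lu:.0f}  ratio {cofactor / lu:.1e}")
print(f"50! = {math.factorial(50):.2e}")
```

The ratio comes out around $4.6\times 10^{14}$; dividing by $n^3$ instead of $\tfrac{2}{3}n^3$ gives about $3\times 10^{14}$, so either way the saving is of order $10^{14}$.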

Two practical notes:

- The same factorisation that gives you $\det A$ also gives you $A^{-1}$ and the solution to $Ax=b$ for any right-hand side. If you are computing both the determinant and the solution, do not call np.linalg.det and np.linalg.solve separately; factor once with scipy.linalg.lu_factor and reuse the factors.

- For symmetric positive-definite matrices, Cholesky is about twice as fast: $A = LL^T$ in $\tfrac{1}{3}n^3$ FLOPs, and $\det A = (\prod L_{ii})^2$.
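A sketch of the "factor once" advice, assuming scipy and numpy are installed. scipy.linalg.lu_factor returns the packed LU factors and a pivot array in which row i was interchanged with row piv[i]; counting the entries with piv[i] != i gives the number of swaps. The helper det_from_lu is ours, not a scipy function.

```python
import numpy as np
from scipy.linalg import lu_factor, lu_solve

def det_from_lu(lu, piv):
    """Determinant from scipy's packed LU factors: product of U's diagonal,
    with one sign flip per row interchange recorded in piv."""
    n_swaps = np.count_nonzero(piv != np.arange(len(piv)))
    return (-1.0) ** n_swaps * np.prod(np.diag(lu))

rng = np.random.default_rng(3)
A = rng.standard_normal((5, 5))
b = rng.standard_normal(5)

lu, piv = lu_factor(A)         # factor once ...
x = lu_solve((lu, piv), b)     # ... reuse for the solve
d = det_from_lu(lu, piv)       # ... and for the determinant
print(np.allclose(A @ x, b), np.isclose(d, np.linalg.det(A)))   # True True

# Symmetric positive-definite case: Cholesky, det A = (prod of L_ii)^2.
S = A @ A.T + 5 * np.eye(5)
L = np.linalg.cholesky(S)
print(np.isclose(np.prod(np.diag(L)) ** 2, np.linalg.det(S)))    # True
```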

So the cofactor formula stays in the textbook because it is the cleanest statement of what the determinant is. For computing it, we exploit the multiplicative property $\det(LU) = \det L \cdot \det U$ and the trivial fact that the determinant of a triangular matrix is the product of its diagonal.

The slogdet Trick: When the Determinant Itself Underflows

Here is a problem you hit the first time you implement maximum-likelihood estimation for a Gaussian. The log-likelihood involves $\log \det \Sigma$, where $\Sigma$ is a covariance matrix. For a $200 \times 200$ covariance with eigenvalues around $0.01$, the determinant is roughly $0.01^{200} = 10^{-400}$, which is exactly $0$ in double precision (the smallest positive double is $\approx 5\times 10^{-324}$). So np.log(np.linalg.det(Sigma)) returns -inf and your training crashes.

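The fix is to never form the determinant. numpy provides np.linalg.slogdet, which returns the sign of the determinant and the natural log of its absolute value separately, summing logs of the LU diagonal instead of multiplying the entries out.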
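A minimal demonstration with a toy covariance, assuming numpy: $\Sigma = 0.01\,I_{200}$, so every eigenvalue is $0.01$ and the exact log-determinant is $200\log 0.01 \approx -921.03$. The last lines show the Cholesky route for symmetric positive-definite matrices, $\log\det\Sigma = 2\sum_i \log L_{ii}$.

```python
import numpy as np

n = 200
Sigma = 0.01 * np.eye(n)                # toy covariance: every eigenvalue is 0.01

with np.errstate(divide="ignore"):      # silence the log(0) warning for the demo
    naive = np.log(np.linalg.det(Sigma))
print(naive)                            # -inf: det underflowed to 0.0

sign, logdet = np.linalg.slogdet(Sigma)
print(sign, logdet)                     # 1.0 and about -921.03 (= 200 * log 0.01)

# For a covariance (symmetric positive definite) a Cholesky factor works too.
L = np.linalg.cholesky(Sigma)
print(2 * np.sum(np.log(np.diag(L))))   # same log-determinant
```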


The product of $n$ small numbers underflows; the sum of $n$ logs does not. The same trick reappears everywhere: log-probabilities in HMMs, the log-partition function in energy-based models, log-Jacobians in normalising flows. Whenever you see a product over many factors that each could be tiny or huge, work in log space.

A related habit: when comparing two determinants for sign or relative size, compare their logs. When you really need the value, compute $\det A = \mathrm{sign}\cdot e^{\log|\det A|}$ at the very end, ideally never.

What’s Next

Chapter 5: Linear Systems and Column Space. We bring together everything so far (matrices, transformations, and determinants) to understand when $A\vec{x} = \vec{b}$ has solutions, how many, and what their structure looks like. The key concepts are the column space ("what can $A$ reach?"), the null space ("what gets crushed?"), and the rank ("how many effective dimensions remain?"). Determinants will play a starring role in the square case; for non-square or rank-deficient $A$ we will need a more refined toolkit.

Linear Algebra 18 parts

- 01 Essence of Linear Algebra (1): The Essence of Vectors — More Than Just Arrows

- 02 Essence of Linear Algebra (2): Linear Combinations and Vector Spaces

- 03 Essence of Linear Algebra (3): Matrices as Linear Transformations

- 04 Essence of Linear Algebra (4): The Secrets of Determinants (this chapter)

- 05 Essence of Linear Algebra (5): Linear Systems and Column Space

- 06 Essence of Linear Algebra (6): Eigenvalues and Eigenvectors

- 07 Essence of Linear Algebra (7): Orthogonality and Projections — When Vectors Mind Their Own Business

- 08 Essence of Linear Algebra (8): Symmetric Matrices and Quadratic Forms — The Best Matrices in Town

- 09 Essence of Linear Algebra (9): Singular Value Decomposition — The Crown Jewel of Linear Algebra

- 10 Essence of Linear Algebra (10): Matrix Norms and Condition Numbers — Is Your Linear System Healthy?

- 11 Essence of Linear Algebra (11): Matrix Calculus and Optimization — The Engine Behind Machine Learning

- 12 Essence of Linear Algebra (12): Sparse Matrices and Compressed Sensing — Less Is More

- 13 Essence of Linear Algebra (13): Tensors and Multilinear Algebra

- 14 Essence of Linear Algebra (14): Random Matrix Theory

- 15 Essence of Linear Algebra (15): Linear Algebra in Machine Learning

- 16 Essence of Linear Algebra (16): Linear Algebra in Deep Learning

- 17 Essence of Linear Algebra (17): Linear Algebra in Computer Vision

- 18 Essence of Linear Algebra (18): Frontiers and Summary