Essence of Linear Algebra (17): Linear Algebra in Computer Vision

Images are matrices, geometric transformations are matrix multiplications, camera imaging is a projective map, and 3D reconstruction is solving linear systems. Master the linear algebra that quietly powers every corner of computer vision.

Computer vision is the science of teaching machines to see. What is striking is how thoroughly the whole field reduces to linear algebra: an image is a matrix, a geometric transformation is a matrix product, a camera is a $3 \times 4$ projection matrix, two-view geometry is the equation $\mathbf{x}_2^\top \mathbf{F}\, \mathbf{x}_1 = 0$ , and 3D reconstruction is a sparse linear least-squares problem. Once you see the field through that lens, what once looked like a zoo of algorithms turns out to be a small set of linear-algebraic ideas applied repeatedly.

What you will learn

- How images become matrices, tensors, and high-dimensional vectors

- 2D rotation, scale, shear, and translation as matrix multiplication, unified by homogeneous coordinates

- Why perspective requires the homography $\mathbf{H}$ and how to fit it from point correspondences

- The pinhole camera as a $3 \times 4$ projection matrix $\mathbf{P} = \mathbf{K}[\mathbf{R}\,|\,\mathbf{t}]$

- Epipolar geometry, the fundamental and essential matrices, triangulation

- SVD-based image compression and the Eckart-Young theorem

- Convolution as matrix multiplication; the Harris structure tensor and optical flow as $2 \times 2$ linear systems

Prerequisites: linear transformations (Chapter 3 ), eigendecomposition (Chapter 6 ), SVD (Chapter 9 ).

Images as Matrices, Tensors, and Vectors#

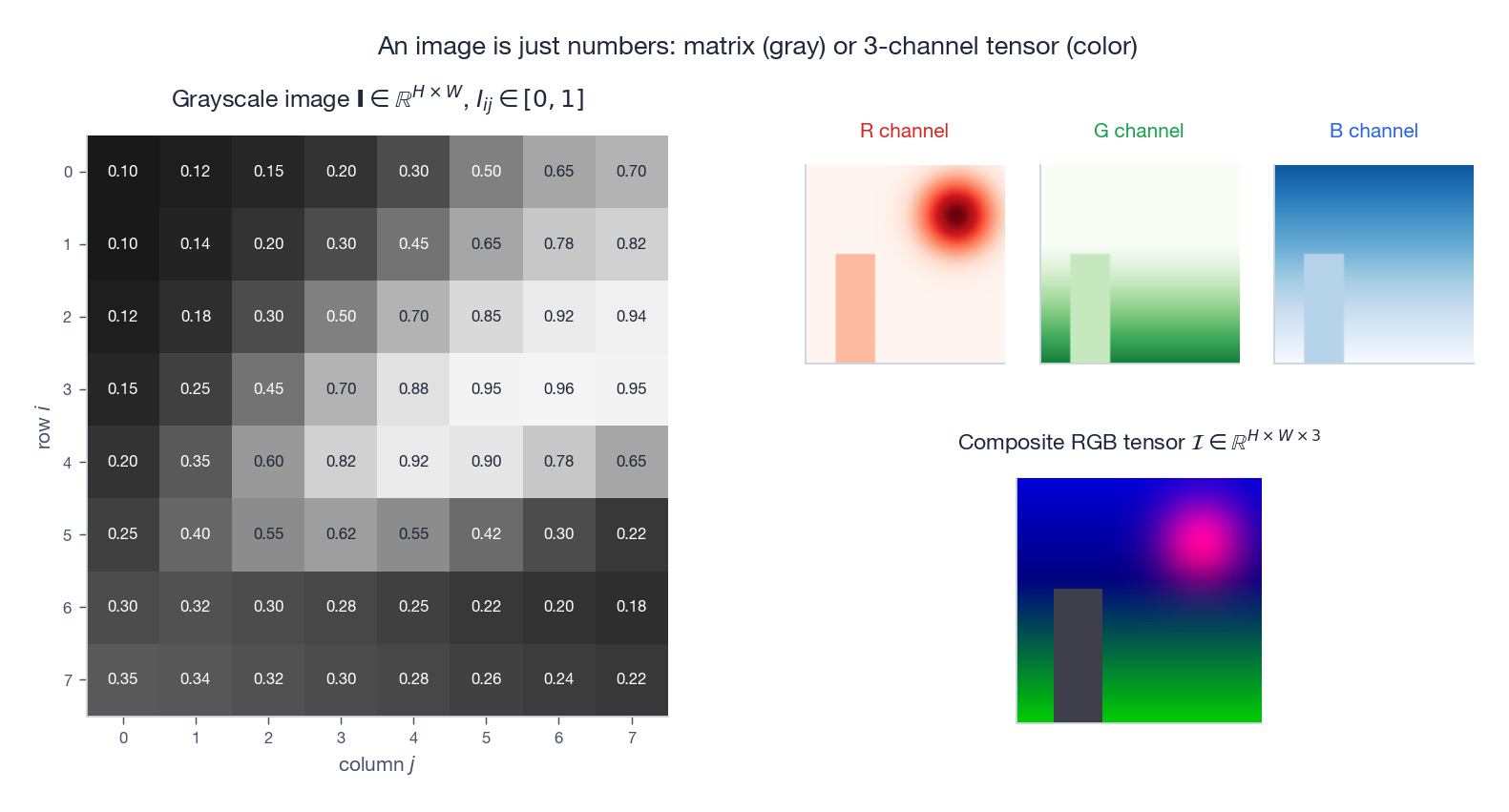

From pixels to a matrix#

$$\mathbf{I} \in \mathbb{R}^{H \times W}, \qquad I_{ij} \in [0, 1].$$That is the entire story. Every operation we will run on the image is some linear-algebraic transformation of this matrix.

Color images are 3-channel tensors#

$$\mathcal{I} \in \mathbb{R}^{H \times W \times 3}, \qquad \mathcal{I}_{ij\,c} \in [0, 1].$$ $$Y = 0.299\,R + 0.587\,G + 0.114\,B.$$ | |

Images as high-dimensional vectors#

$$\mathrm{vec}(\mathbf{I}) \in \mathbb{R}^{HW}.$$ $$\cos\theta = \frac{\mathbf{a}^\top \mathbf{b}}{\lVert\mathbf{a}\rVert\,\lVert\mathbf{b}\rVert},$$a tool used in everything from image retrieval to face recognition. The huge dimension is exactly why we use SVD/PCA to find a much lower-dimensional subspace where similarity actually means something visual.

Geometric Transformations as Matrix Multiplication#

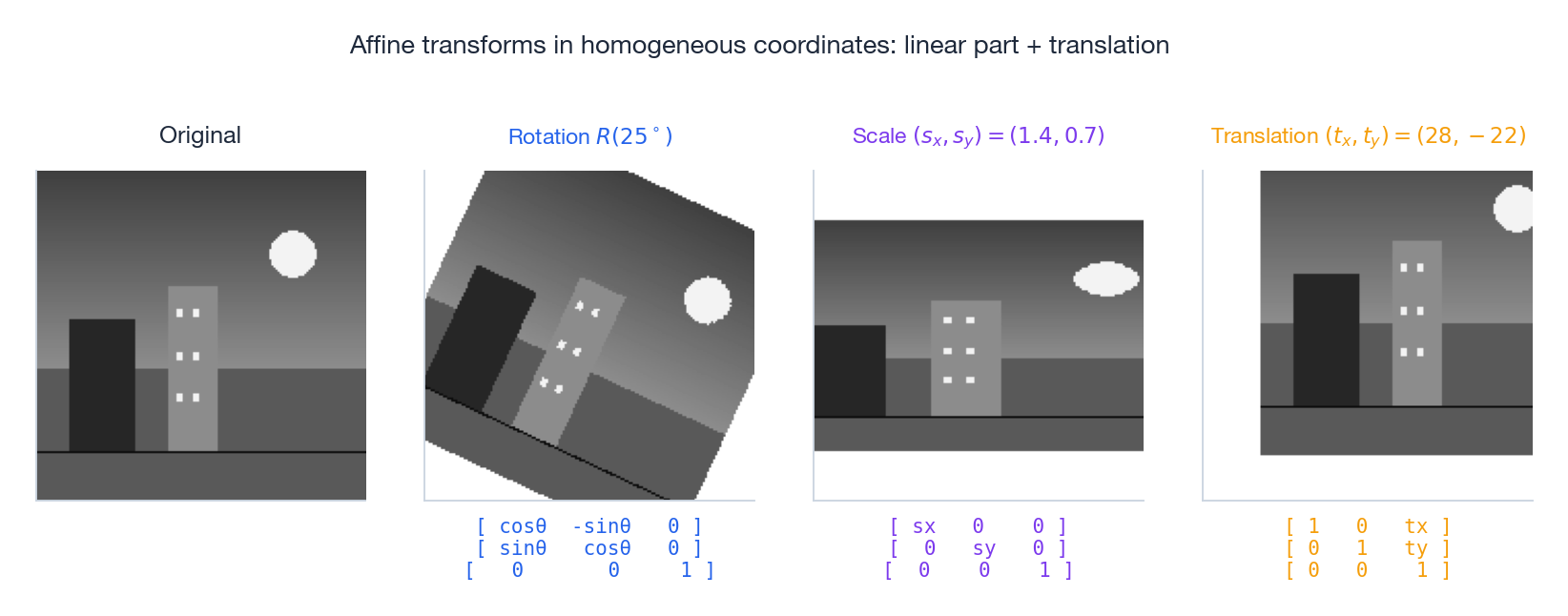

Why matrices#

A geometric transformation maps each pixel coordinate $(x, y)$ to a new location. Storing it as a matrix gains two superpowers:

- Composition is multiplication. The result of applying $\mathbf{A}$ then $\mathbf{B}$ is just $\mathbf{B}\mathbf{A}$ .

- Inversion is matrix inverse. Undoing a transformation never requires re-deriving a formula.

Rotation, scale, and shear#

$$\mathbf{R}(\theta) = \begin{bmatrix}\cos\theta & -\sin\theta \\ \sin\theta & \cos\theta\end{bmatrix}.$$Three properties make rotation matrices unusually pleasant. They are orthogonal ($\mathbf{R}^\top\mathbf{R} = \mathbf{I}$ ), so $\mathbf{R}^{-1} = \mathbf{R}^\top$ ; they have $\det\mathbf{R} = 1$ , so they preserve area and orientation; and they compose by adding angles, $\mathbf{R}(\alpha)\mathbf{R}(\beta) = \mathbf{R}(\alpha + \beta)$ .

$$ \mathbf{S} = \begin{bmatrix}s_x & 0 \\ 0 & s_y\end{bmatrix}, \qquad \mathbf{H}_k = \begin{bmatrix}1 & k \\ 0 & 1\end{bmatrix}. $$Order matters#

Composition reads right-to-left: $\mathbf{T} = \mathbf{R}\,\mathbf{S}$ means “first scale, then rotate”. Reverse the order and you generally get a different image (the only safe case is uniform scaling, which commutes with rotation).

| |

Homogeneous Coordinates: Making Translation Linear#

The translation problem#

Rotation, scaling, and shear all fix the origin and so are linear maps $\mathbf{y} = \mathbf{A}\mathbf{x}$ . Translation $\mathbf{y} = \mathbf{x} + \mathbf{t}$ does not — it sends the origin somewhere else, breaking linearity. We would like every transformation in the same matrix-multiplication framework.

The trick: lift to one extra dimension#

$$\begin{bmatrix} x \\ y \end{bmatrix} \;\longrightarrow\; \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}.$$ $$ \begin{bmatrix} x' \\ y' \\ 1 \end{bmatrix} = \begin{bmatrix} 1 & 0 & t_x \\ 0 & 1 & t_y \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}. $$ $$ \mathbf{M} = \begin{bmatrix} a_{11} & a_{12} & t_x \\ a_{21} & a_{22} & t_y \\ 0 & 0 & 1 \end{bmatrix}. $$The upper-left $2 \times 2$ block is the linear part; the right column is the translation; the bottom row identifies “this is still affine”.

Rotating around an arbitrary point#

$$\mathbf{M} = \mathbf{T}(c_x, c_y)\,\mathbf{R}(\theta)\,\mathbf{T}(-c_x, -c_y).$$ | |

Perspective and the Homography#

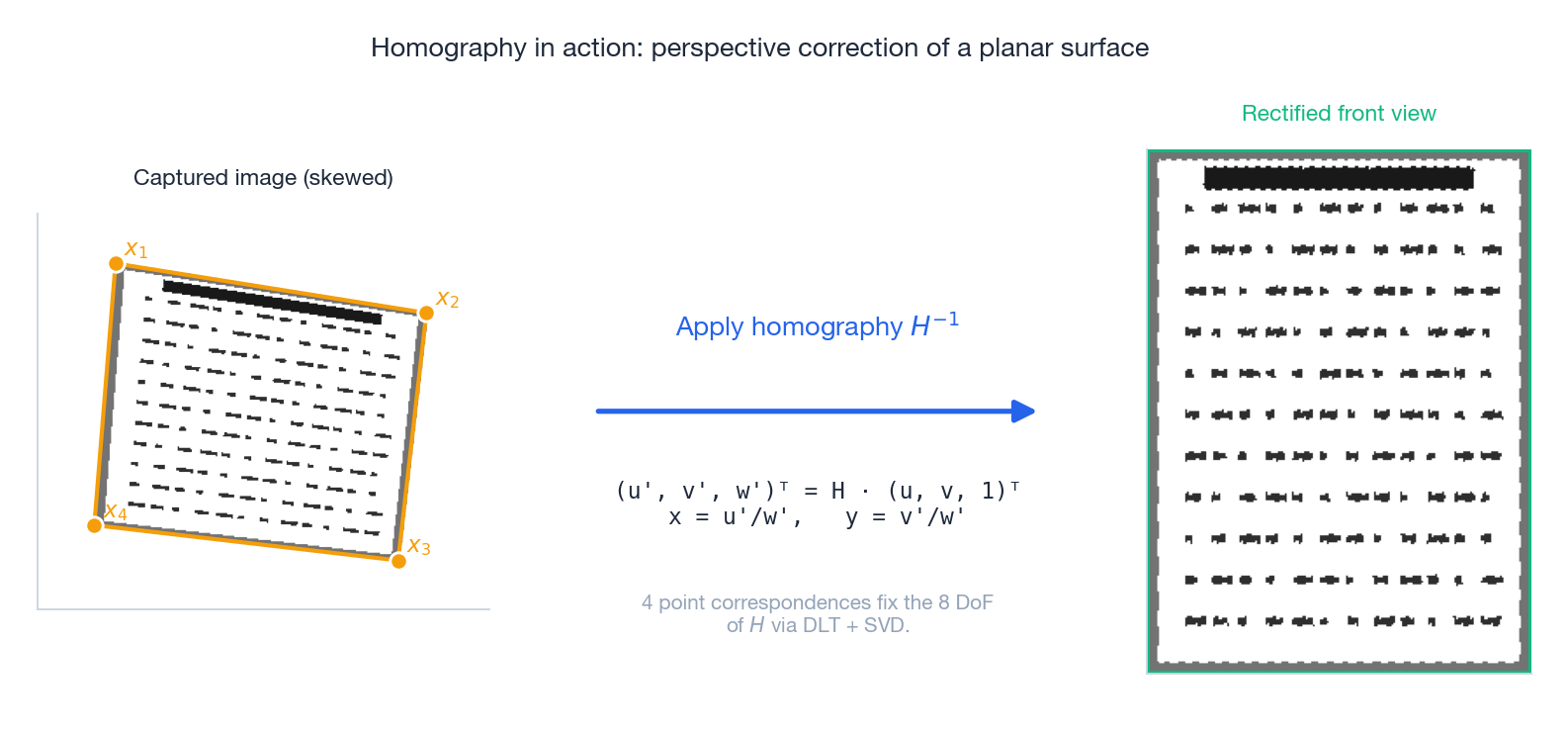

Why affine is not enough#

Affine maps preserve parallelism: parallel lines stay parallel. The real world disagrees — railway tracks converge to the horizon. Photographs of a flat object taken from an angle exhibit this convergence as well, and we need a more flexible map to undo it.

The $3 \times 3$ homography#

$$ \begin{bmatrix} x' \\ y' \\ w' \end{bmatrix} = \mathbf{H} \begin{bmatrix} x \\ y \\ 1 \end{bmatrix}, \qquad u = x'/w', \quad v = y'/w'. $$The crucial difference from an affine map is the bottom row: in an affine matrix it is $(0,\,0,\,1)$ , whereas a homography allows nonzero entries there. Those entries produce the perspective division $w'$ that bends parallel lines together.

$$\mathbf{A}\,\mathbf{h} = \mathbf{0}$$with SVD — taking the singular vector with the smallest singular value as $\mathbf{h}$ . This is the Direct Linear Transform (DLT), and it is the prototype of every projective estimation algorithm in this chapter.

| |

Where this shows up. Panorama stitching (one $\mathbf{H}$ per image pair when the camera only rotates), document scanners (rectifying skewed photos of paper), augmented reality (placing a virtual object on a tracked planar marker), top-down “bird’s-eye” views in autonomous driving.

The Pinhole Camera and Its Projection Matrix#

Pinhole geometry#

$$u = f\,\frac{X}{Z}, \qquad v = f\,\frac{Y}{Z}.$$The division by depth $Z$ is the source of perspective: distant objects project to smaller image coordinates.

Intrinsics: from millimetres to pixels#

$$ \mathbf{K} = \begin{bmatrix} f_x & s & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}, $$where $f_x, f_y$ are focal lengths in pixels, $(c_x, c_y)$ is the principal point, and $s$ is sensor skew (essentially zero on modern cameras).

Extrinsics and the full projection#

$$ \lambda \begin{bmatrix} u \\ v \\ 1 \end{bmatrix} = \mathbf{K}\,[\mathbf{R}\,|\,\mathbf{t}] \begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix} = \mathbf{P}\,\mathbf{X}_w, $$where $\mathbf{P} \in \mathbb{R}^{3 \times 4}$ is the projection matrix and $\lambda$ absorbs the scalar coming from perspective division. Eleven parameters live in $\mathbf{P}$ : five intrinsics plus six pose parameters (three rotation angles, three translations).

![Pinhole camera projection: 3D world points become 2D pixels via P = K[R|t].](https://blog-pic-ck.oss-cn-beijing.aliyuncs.com/posts/en/linear-algebra/17-linear-algebra-in-computer-vision/fig5_camera_projection.png)

| |

Calibration and Zhang’s method#

Estimating $\mathbf{K}$

and the lens distortion coefficients from images of a known checkerboard at several poses is camera calibration. Zhang’s algorithm (1999) reduces it to estimating one homography per board image, extracting linear constraints on $\mathbf{K}^{-\top}\mathbf{K}^{-1}$

from the orthonormality of a rotation matrix, and refining with a small nonlinear optimisation. OpenCV’s calibrateCamera does the whole pipeline in one call.

Two Views: Epipolar Geometry#

The epipolar constraint#

$$\mathbf{x}_2^\top\,\mathbf{F}\,\mathbf{x}_1 = 0,$$where $\mathbf{F}$ is the $3 \times 3$ fundamental matrix. It depends only on the relative pose of the two cameras, not on the scene. From this constraint, given a point $\mathbf{x}_1$ in image 1, the corresponding point in image 2 must lie on the epipolar line $\boldsymbol{\ell}_2 = \mathbf{F}\,\mathbf{x}_1$ . Stereo matching reduces from a 2D search to a 1D search.

Essential vs fundamental#

$$\hat{\mathbf{x}}_2^\top\,\mathbf{E}\,\hat{\mathbf{x}}_1 = 0, \qquad \mathbf{F} = \mathbf{K}_2^{-\top}\,\mathbf{E}\,\mathbf{K}_1^{-1}.$$The essential matrix has the special form $\mathbf{E} = [\mathbf{t}]_\times \mathbf{R}$ , where $[\mathbf{t}]_\times$ is the skew-symmetric matrix of the translation vector. Decomposing $\mathbf{E}$ via SVD recovers the relative camera pose $(\mathbf{R}, \mathbf{t})$ up to a sign and the unknowable absolute scale of monocular vision.

| |

3D Reconstruction: Triangulation, SfM, and Bundle Adjustment#

Triangulation as a linear system#

Given two known projection matrices $\mathbf{P}_1, \mathbf{P}_2$ and a corresponding pair $\mathbf{x}_1 \leftrightarrow \mathbf{x}_2$ , each image equation $\lambda_i \mathbf{x}_i = \mathbf{P}_i \mathbf{X}$ contributes two linear constraints on the 3D point $\mathbf{X}$ after eliminating the unknown depth $\lambda_i$ . Stacking gives a $4 \times 4$ linear system $\mathbf{A}\mathbf{X} = \mathbf{0}$ , solved by SVD: $\mathbf{X}$ is the right singular vector with the smallest singular value, and the 3D coordinates are recovered after the homogeneous division.

| |

Structure from Motion#

SfM scales triangulation up to many views. The standard incremental pipeline is:

- Detect and match features (SIFT, ORB) between every pair of overlapping images.

- Initialise with two views: estimate $\mathbf{E}$ via the 5-point algorithm + RANSAC, recover relative pose, triangulate an initial point cloud.

- Grow by adding one image at a time: solve PnP (Perspective-n-Point) to register the new camera, then triangulate any newly observable 3D points.

- Refine globally with bundle adjustment.

Bundle adjustment#

$$\min_{\{\mathbf{P}_i\},\,\{\mathbf{X}_j\}} \;\sum_{(i, j) \in \mathcal{V}} \rho\!\left(\lVert \mathbf{x}_{ij} - \pi(\mathbf{P}_i, \mathbf{X}_j)\rVert^2\right),$$where $\pi$ is the projection function and $\rho$ is a robust kernel (Huber) for outliers. This is a giant nonlinear least-squares problem — millions of variables in modern reconstructions — but the Jacobian is block-sparse: every observation involves exactly one camera and one point. Levenberg-Marquardt with the Schur complement exploits that sparsity and makes the problem solvable on a laptop.

SLAM: Linear Algebra in Real-Time#

The SLAM problem#

A robot moves through an unknown environment, reading sensors as it goes. SLAM asks it to simultaneously estimate its own trajectory and a map of landmarks. Modern SLAM systems are essentially online bundle adjustment, with two distinctive linear-algebraic ingredients.

Lie groups for rotations#

$$\mathbf{T} = \begin{bmatrix}\mathbf{R} & \mathbf{t} \\ \mathbf{0}^\top & 1\end{bmatrix} \in SE(3),$$with $\mathbf{R} \in SO(3)$ . Optimising $\mathbf{R}$ as nine numbers is awkward because we must enforce $\mathbf{R}^\top\mathbf{R} = \mathbf{I}$ . The Lie algebra $\mathfrak{se}(3) \cong \mathbb{R}^6$ is an unconstrained vector space, and the exponential map $\mathbf{T} = \exp(\boldsymbol{\xi}^\wedge)$ moves between them. We do gradient steps in $\mathbb{R}^6$ and lift back to $SE(3)$ — clean, constraint-free optimisation.

| |

Pose-graph optimisation#

$$\min \;\sum_k \lVert \mathbf{e}_k\rVert^2_{\boldsymbol{\Omega}_k},$$ $$\mathbf{H}\,\Delta\mathbf{x} = -\mathbf{b},$$where $\mathbf{H} = \mathbf{J}^\top \boldsymbol{\Omega}\,\mathbf{J}$ inherits the graph’s sparsity. Sparse Cholesky on $\mathbf{H}$ is the inner loop of essentially every modern SLAM stack.

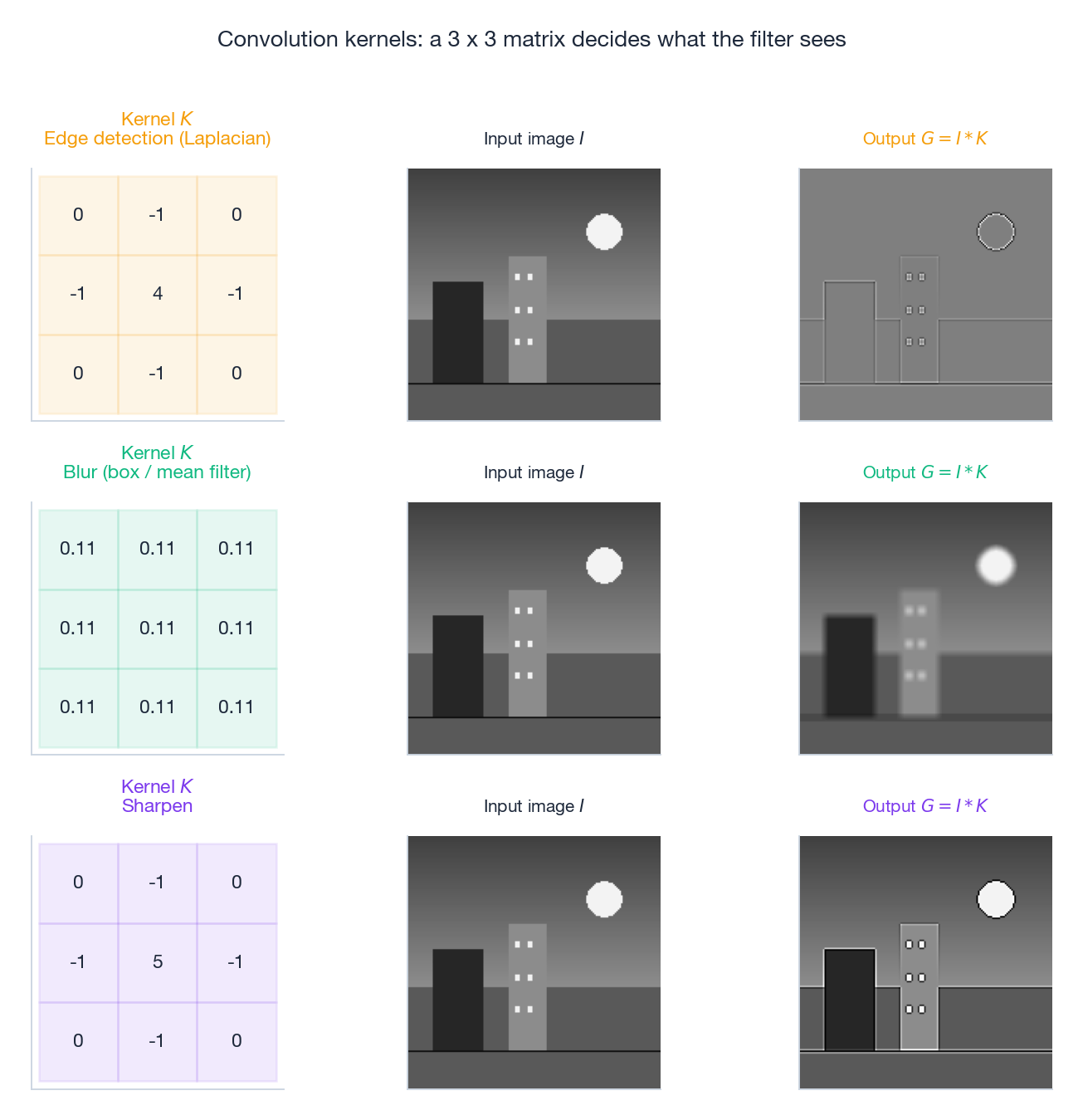

Image Filtering as Matrix Multiplication#

Convolution is a (huge, sparse, structured) linear map#

A 2D convolution $\mathbf{G} = \mathbf{I} \ast \mathbf{K}$ is, when written in vector form, a matrix multiplication $\mathbf{g} = \mathbf{T}\mathbf{i}$ where $\mathbf{T}$ is a doubly block Toeplitz matrix built from the kernel. We never form $\mathbf{T}$ explicitly — it would have $(HW)^2$ entries — but its existence justifies analysing convolutions with linear-algebraic tools (eigenvalues of $\mathbf{T}$ are precisely the discrete Fourier transform of the kernel).

Three kernels you should know by heart#

$$\mathbf{K}_{\text{blur}} = \tfrac{1}{9}\begin{bmatrix}1 & 1 & 1\\ 1 & 1 & 1\\ 1 & 1 & 1\end{bmatrix}$$ $$\mathbf{K}_{\text{edge}} = \begin{bmatrix}0 & -1 & 0\\ -1 & 4 & -1\\ 0 & -1 & 0\end{bmatrix}$$ $$\mathbf{K}_{\text{sharp}} = \begin{bmatrix}0 & -1 & 0\\ -1 & 5 & -1\\ 0 & -1 & 0\end{bmatrix} = \mathbf{I} + \mathbf{K}_{\text{edge}}.$$Convolution theorem#

$$\mathcal{F}[\mathbf{I} \ast \mathbf{K}] = \mathcal{F}[\mathbf{I}] \cdot \mathcal{F}[\mathbf{K}].$$Designing a filter becomes shaping its frequency response: low-pass for noise removal, high-pass for edges, band-pass for textures.

Two Vision Algorithms That Are Just $2 \times 2$ Eigenvalue Problems#

Harris corners and the structure tensor#

$$ \mathbf{M} = \sum_{(x, y) \in W} w(x, y) \begin{bmatrix} I_x^2 & I_x I_y \\ I_x I_y & I_y^2 \end{bmatrix}, $$a $2 \times 2$ symmetric positive-semidefinite matrix. Its eigenvalues $\lambda_1 \ge \lambda_2 \ge 0$ describe how the image varies in the two principal directions of that window:

| eigenvalues | meaning |

|---|---|

| both small | flat region |

| one large, one small | edge |

| both large | corner |

a corner score that is large only when both eigenvalues are large.

| |

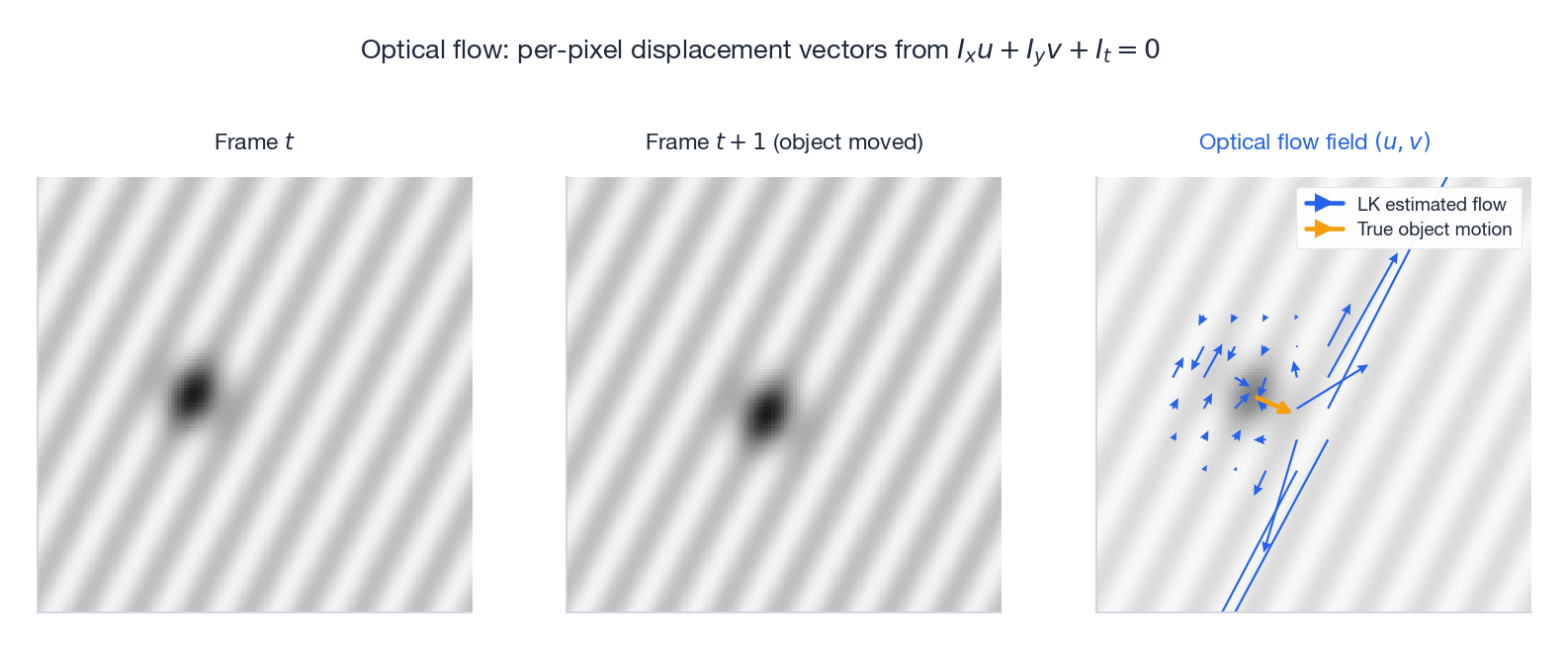

Optical flow: the same matrix again#

$$I_x\,u + I_y\,v + I_t = 0,$$ $$ \underbrace{\begin{bmatrix} \sum I_x^2 & \sum I_x I_y \\ \sum I_x I_y & \sum I_y^2 \end{bmatrix}}_{\text{exactly the structure tensor } \mathbf{M}} \begin{bmatrix} u \\ v \end{bmatrix} = -\begin{bmatrix} \sum I_x I_t \\ \sum I_y I_t \end{bmatrix}. $$The system is well-posed precisely when $\mathbf{M}$ is well-conditioned — that is, at corners. The same eigenvalue analysis tells Harris where corners live and tells Lucas-Kanade where it can trust its flow estimate.

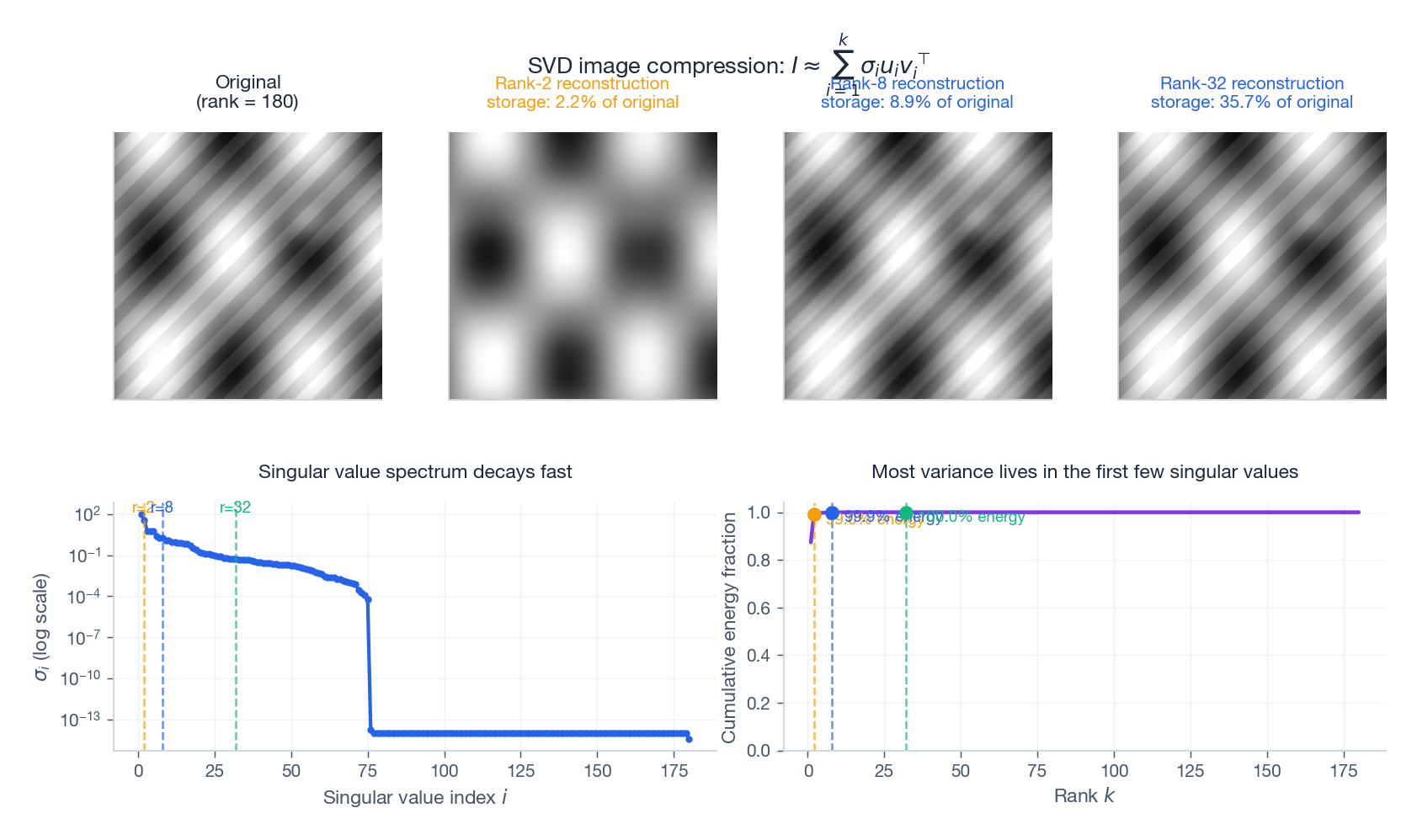

SVD for Image Compression#

$$\mathbf{I} = \sum_{i=1}^{r} \sigma_i\,\mathbf{u}_i\,\mathbf{v}_i^\top, \qquad \sigma_1 \ge \sigma_2 \ge \cdots \ge 0.$$The Eckart-Young theorem (Chapter 9 ) says the truncation to the first $k$ terms is the best rank-$k$ approximation under both Frobenius and spectral norms. For natural images the singular values decay rapidly — most of the visual energy lives in the first few dozen components — so a tiny $k$ already produces a recognisable picture.

Storage drops from $HW$ to $k(H + W + 1)$ numbers, a compression ratio of roughly $k(H + W) / (HW)$ . SVD is nowhere near JPEG efficiency on natural images (JPEG exploits perceptual redundancy in DCT coefficients), but it is conceptually clean and the gold standard for low-rank approximation in many other corners of vision: face recognition (Eigenfaces), background subtraction (RPCA), denoising.

Linear Algebra in Modern Deep Vision#

Convolution as matrix multiplication via im2col#

GPUs are extraordinarily good at dense matrix multiplication. The im2col trick rewrites every convolution as one giant gemm: extract every receptive-field patch as a column, stack the kernels as rows, multiply once. The result is reshaped back to a feature map. Every CNN is, mechanically, a sequence of matrix multiplications interleaved with elementwise nonlinearities.

Self-attention is matrix multiplication#

$$\mathrm{Attention}(\mathbf{Q}, \mathbf{K}, \mathbf{V}) = \mathrm{softmax}\!\left(\frac{\mathbf{Q}\mathbf{K}^\top}{\sqrt{d_k}}\right)\mathbf{V},$$where $\mathbf{Q}, \mathbf{K}, \mathbf{V}$ are linear projections of the token sequence. Vision Transformers (ViT) tokenise an image into patches and feed them through this mechanism — the entire forward pass is a tower of matrix products.

Batch normalisation is an affine map#

At inference time, batch norm with stored running statistics and learned $(\gamma, \beta)$ is just an elementwise affine transform $y = \gamma\,\hat{x} + \beta$ . It can be folded into the preceding convolution at deployment time, removing it from the compute graph entirely.

Exercises#

Basics#

- Write matrix expressions for (a) vertical flip, (b) horizontal flip, (c) brightness $+50$ , (d) contrast $\times 1.5$ on a grayscale image $\mathbf{I}$ .

- Prove for 2D rotation matrices: (a) $\det \mathbf{R} = 1$ , (b) $\mathbf{R}^{-1} = \mathbf{R}^\top$ , (c) $\mathbf{R}(\alpha)\mathbf{R}(\beta) = \mathbf{R}(\alpha + \beta)$ .

- Express in $3 \times 3$ homogeneous form and combine into a single matrix: translate by $(2, 3)$ , then rotate $45^\circ$ around the origin, then scale by $2$ .

Advanced#

- Why do four point correspondences suffice (in principle) to estimate a homography? Set up the $\mathbf{A}\mathbf{h} = \mathbf{0}$ system that the DLT algorithm solves.

- Show that the essential matrix factorises as $\mathbf{E} = [\mathbf{t}]_\times \mathbf{R}$ . Use the SVD $\mathbf{E} = \mathbf{U} \boldsymbol{\Sigma} \mathbf{V}^\top$ to recover $\mathbf{R}$ and $\mathbf{t}$ up to sign.

- Given two projection matrices and a corresponding image-point pair, derive the linear system whose solution is the triangulated 3D point.

Programming#

- Implement affine warping from scratch, building the $3 \times 3$ matrix and applying inverse-warping with bilinear interpolation.

- Implement the 8-point algorithm for estimating $\mathbf{F}$ , including normalisation and rank-2 enforcement via SVD.

- Implement Harris corner detection end-to-end with non-maximum suppression.

Applications#

- Design a panorama stitcher. Why is a homography sufficient when the camera only rotates? How would you handle accumulated drift over many images and exposure differences?

- Implement a monocular visual odometry loop: extract features, match between frames, estimate $\mathbf{E}$ , recover relative pose, accumulate trajectory. Discuss scale drift and how to detect tracking failure.

Summary#

Computer vision and linear algebra are inseparable.

Representation. Grayscale images are matrices; colour images are 3-channel tensors; flattened images are vectors in $\mathbb{R}^{HW}$ .

Geometry. Rotation, scaling, and shear are linear maps; homogeneous coordinates promote translation to a linear operation; perspective requires a $3 \times 3$ homography with a non-trivial bottom row.

Cameras and 3D. A pinhole camera is the $3 \times 4$ projection matrix $\mathbf{P} = \mathbf{K}[\mathbf{R}\,|\,\mathbf{t}]$ . Two-view geometry is encoded in the fundamental matrix $\mathbf{F}$ . Triangulation, structure from motion, and bundle adjustment are linear (or sparse nonlinear) least squares.

State estimation. SLAM optimises poses on the manifold $SE(3)$ via Lie algebra; pose-graph optimisation is a sparse Cholesky in the inner loop.

Filtering and features. Convolution is a giant block-Toeplitz multiplication. Harris corners and Lucas-Kanade flow are the same $2 \times 2$ eigenvalue analysis applied to the structure tensor.

Compression and learning. SVD gives optimal low-rank approximations of images. Deep CNNs and Transformers are towers of matrix multiplications, with batch norm reducing to a deployment-time affine map.

Once you internalise this list, the field’s papers stop looking like a heap of unrelated algorithms and start looking like creative re-uses of a small linear-algebraic toolkit.

References#

- Hartley, R., & Zisserman, A. Multiple View Geometry in Computer Vision (2nd ed.). Cambridge University Press, 2004.

- Szeliski, R. Computer Vision: Algorithms and Applications (2nd ed.). Springer, 2022.

- Forsyth, D. A., & Ponce, J. Computer Vision: A Modern Approach (2nd ed.). Pearson, 2011.

- Strang, G. Introduction to Linear Algebra (5th ed.). Wellesley-Cambridge Press, 2016.

- Barfoot, T. D. State Estimation for Robotics. Cambridge University Press, 2017.

- Zhang, Z. “A flexible new technique for camera calibration.” IEEE TPAMI 22(11), 2000.

- Lucas, B., & Kanade, T. “An iterative image registration technique with an application to stereo vision.” IJCAI, 1981.

- Harris, C., & Stephens, M. “A combined corner and edge detector.” Alvey Vision Conference, 1988.

Linear Algebra 18 parts

- 01 Essence of Linear Algebra (1): The Essence of Vectors — More Than Just Arrows

- 02 Essence of Linear Algebra (2): Linear Combinations and Vector Spaces

- 03 Essence of Linear Algebra (3): Matrices as Linear Transformations

- 04 Essence of Linear Algebra (4): The Secrets of Determinants

- 05 Essence of Linear Algebra (5): Linear Systems and Column Space

- 06 Essence of Linear Algebra (6): Eigenvalues and Eigenvectors

- 07 Essence of Linear Algebra (7): Orthogonality and Projections — When Vectors Mind Their Own Business

- 08 Essence of Linear Algebra (8): Symmetric Matrices and Quadratic Forms — The Best Matrices in Town

- 09 Essence of Linear Algebra (9): Singular Value Decomposition — The Crown Jewel of Linear Algebra

- 10 Essence of Linear Algebra (10): Matrix Norms and Condition Numbers — Is Your Linear System Healthy?

- 11 Essence of Linear Algebra (11): Matrix Calculus and Optimization — The Engine Behind Machine Learning

- 12 Essence of Linear Algebra (12): Sparse Matrices and Compressed Sensing — Less Is More

- 13 Essence of Linear Algebra (13): Tensors and Multilinear Algebra

- 14 Essence of Linear Algebra (14): Random Matrix Theory

- 15 Essence of Linear Algebra (15): Linear Algebra in Machine Learning

- 16 Essence of Linear Algebra (16): Linear Algebra in Deep Learning

- 17 Essence of Linear Algebra (17): Linear Algebra in Computer Vision you are here

- 18 Essence of Linear Algebra (18): Frontiers and Summary