ML Math Derivations (1): Introduction and Mathematical Foundations

Why can machines learn from data at all? This first chapter builds the mathematical theory of learning from first principles -- problem formalization, loss surrogates, PAC learning, VC dimension, the bias-variance decomposition and the No Free Lunch theorem.

What this chapter does#

In 2005 Google Research showed, on a public benchmark, that a statistical translation model trained on raw bilingual text could outperform decades of carefully engineered linguistic rules. The conclusion was uncomfortable for the experts of the day, but mathematically liberating: a system that has never been told the rules of a language can still recover them, given enough examples. Why?

The answer is not a trick of engineering — it is a theorem. In this chapter we build, from first principles, the part of mathematics that explains when learning from data is possible, how much data is required, and what fundamentally limits what any algorithm can do.

By the end you will be able to:

- Formalize a learning problem as a probabilistic optimization over a hypothesis class.

- State and derive the PAC learnability condition for finite hypothesis classes.

- Use the VC dimension to extend that argument to infinite classes such as linear classifiers and neural networks.

- Decompose the test error of any predictor into bias, variance and irreducible noise.

- Explain, via the No Free Lunch theorem, why every successful algorithm carries an inductive bias.

Prerequisites. Calculus (derivatives, integrals, Taylor expansion), elementary probability (expectation, variance, Bayes’ rule, independence) and comfort reading mathematical notation. No prior exposure to learning theory is assumed.

The shape of a learning problem#

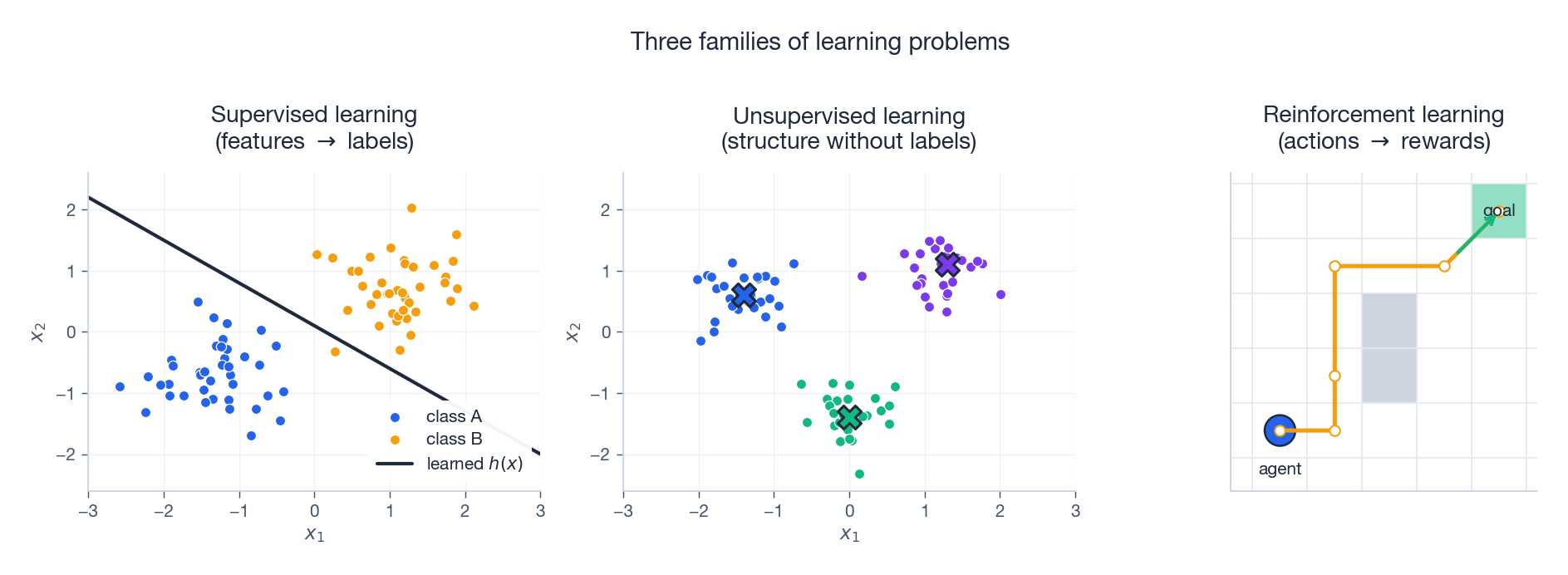

Three families of problems#

Before any theorem, fix the landscape. Almost every problem in this series will fall into one of three families.

- Supervised learning. We are given pairs $(x, y)$ and asked to predict $y$ from $x$ . Classification (discrete $y$ ) and regression (continuous $y$ ) are the two canonical instances.

- Unsupervised learning. Only the inputs $x$ are given. The task is to recover structure — clusters, low-dimensional manifolds, density estimates — without external labels.

- Reinforcement learning. An agent chooses actions in an environment and observes a scalar reward signal. The data is generated by the agent’s own policy, which makes the statistical analysis subtler than in the supervised case.

This chapter develops the theory in the supervised setting, because that is where the foundational results (PAC, VC, bias-variance) are cleanest. The same machinery, with care, lifts to the other two families.

Mathematical formalization#

We assume an unknown joint distribution $\mathcal{D}$ over an input space $\mathcal{X}$ and an output space $\mathcal{Y}$ . A learning algorithm is a procedure that consumes a finite training sample $S = \{(x_i, y_i)\}_{i=1}^m$ drawn i.i.d. from $\mathcal{D}$ and returns a hypothesis $h: \mathcal{X} \to \mathcal{Y}$ . The quality of $h$ is measured by a loss function.

Definition 1 (Loss function). A loss is any measurable map $\ell: \mathcal{Y} \times \mathcal{Y} \to \mathbb{R}_{\geq 0}$ assigning to a prediction $h(x)$ and a truth $y$ a non-negative penalty $\ell(h(x), y)$ .

$$L_{\mathcal{D}}(h) \;=\; \mathbb{E}_{(x,y)\sim \mathcal{D}}\bigl[\ell(h(x), y)\bigr]. \tag{1}$$This is the quantity we care about: average loss on a fresh draw from the same distribution that generated the training data.

$$L_S(h) \;=\; \frac{1}{m}\sum_{i=1}^{m} \ell(h(x_i), y_i). \tag{2}$$This is the quantity we can measure.

The central paradox of statistical learning. We optimize $L_S$ but are graded on $L_{\mathcal{D}}$ . Almost every theorem in this chapter is a statement about how, when, and how much $L_S(h) \approx L_{\mathcal{D}}(h)$ .

Three components of a supervised learner#

Any supervised algorithm is determined by a triple $(\mathcal{H}, \ell, \mathcal{A})$ :

- Hypothesis class $\mathcal{H}$ . The set of functions the algorithm is allowed to output. Larger $\mathcal{H}$ is more expressive but harder to control statistically.

- Loss $\ell$ . Turns prediction quality into a number we can minimize.

- Algorithm $\mathcal{A}$ . A map from samples to hypotheses, $\mathcal{A}: (\mathcal{X} \times \mathcal{Y})^m \to \mathcal{H}$ .

Two paradigmatic algorithms recur throughout the series:

- Empirical Risk Minimization (ERM): $\;h_{\text{ERM}} \;=\; \arg\min_{h\in\mathcal{H}} L_S(h)$ .

- Structural Risk Minimization (SRM): $\;h_{\text{SRM}} \;=\; \arg\min_{h\in\mathcal{H}} \bigl[L_S(h) + \Omega(h)\bigr]$ , where $\Omega$ penalizes complexity.

ERM is the natural starting point. SRM is what we actually use in practice, and we will see why once the bias-variance decomposition makes the need for $\Omega$ explicit.

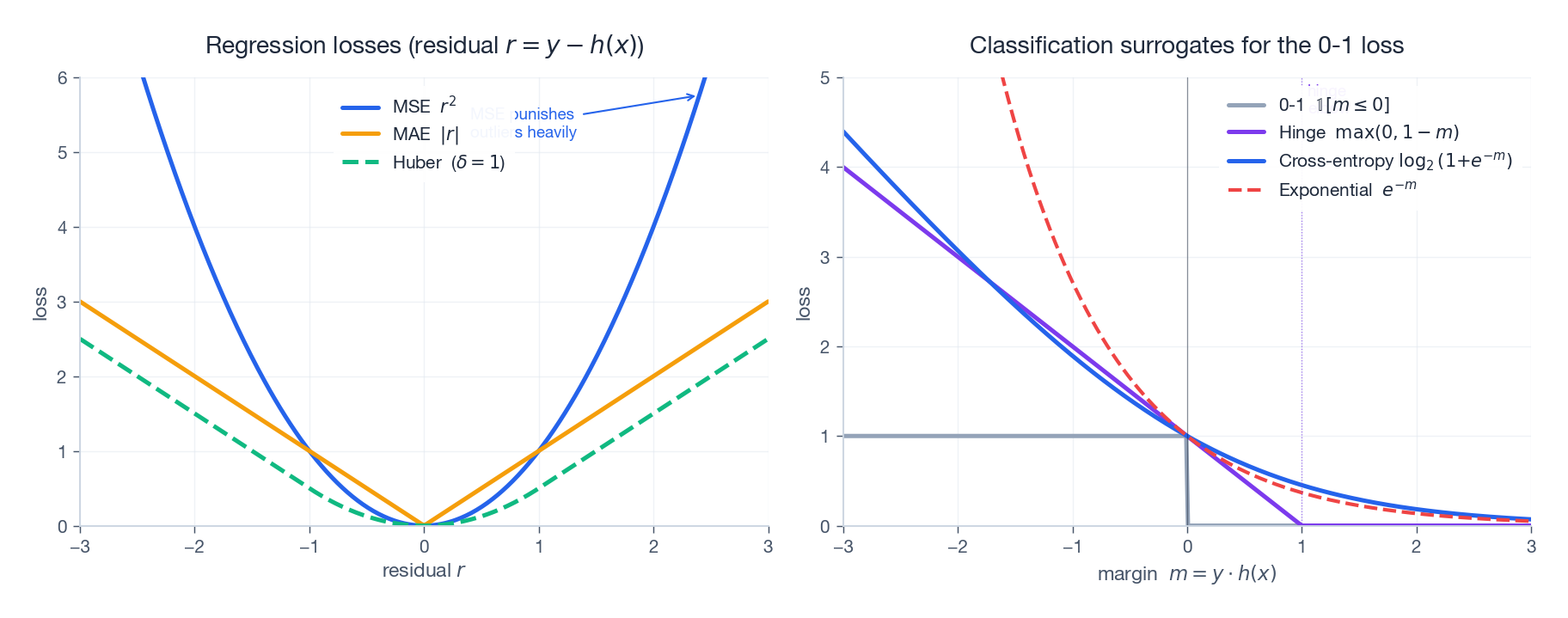

Choosing a loss: regression and classification surrogates#

The choice of loss is not cosmetic: it changes the optimization landscape, the statistical efficiency and the set of optima.

For regression with residual $r = y - h(x)$ :

| Loss | Formula | Properties |

|---|---|---|

| Squared (MSE) | $r^2$ | Smooth, strongly convex, sensitive to outliers. Bayes-optimal predictor is $\mathbb{E}[Y\mid X]$ . |

| Absolute (MAE) | $\lvert r\rvert$ | Robust to outliers, non-smooth at $r=0$ . Bayes-optimal predictor is the conditional median. |

| Huber | $\tfrac12 r^2$ if $\lvert r\rvert \leq \delta$ , else $\delta(\lvert r\rvert - \tfrac{\delta}{2})$ | Quadratic near zero, linear in the tails: combines MSE smoothness with MAE robustness. |

For binary classification with $y \in \{-1, +1\}$ and margin $m = y \cdot h(x)$ :

| Loss | Formula | Used in |

|---|---|---|

| 0-1 | $\mathbb{1}[m \leq 0]$ | The quantity we ultimately care about. NP-hard to minimize directly. |

| Hinge | $\max(0,\, 1 - m)$ | Support vector machines. Convex upper bound on 0-1; sparse subgradients. |

| Logistic / cross-entropy | $\log\bigl(1 + e^{-m}\bigr)$ | Logistic regression, neural classifiers. Smooth, calibrated probabilities. |

| Exponential | $e^{-m}$ | AdaBoost. Most aggressive on misclassified points; sensitive to label noise. |

Every classification surrogate in the table is a convex upper bound on the 0-1 loss that is non-increasing in the margin. Bartlett, Jordan and McAuliffe (2006) showed that minimizing such “classification-calibrated” surrogates is statistically consistent: in the limit of infinite data the resulting classifier converges to a Bayes-optimal one.

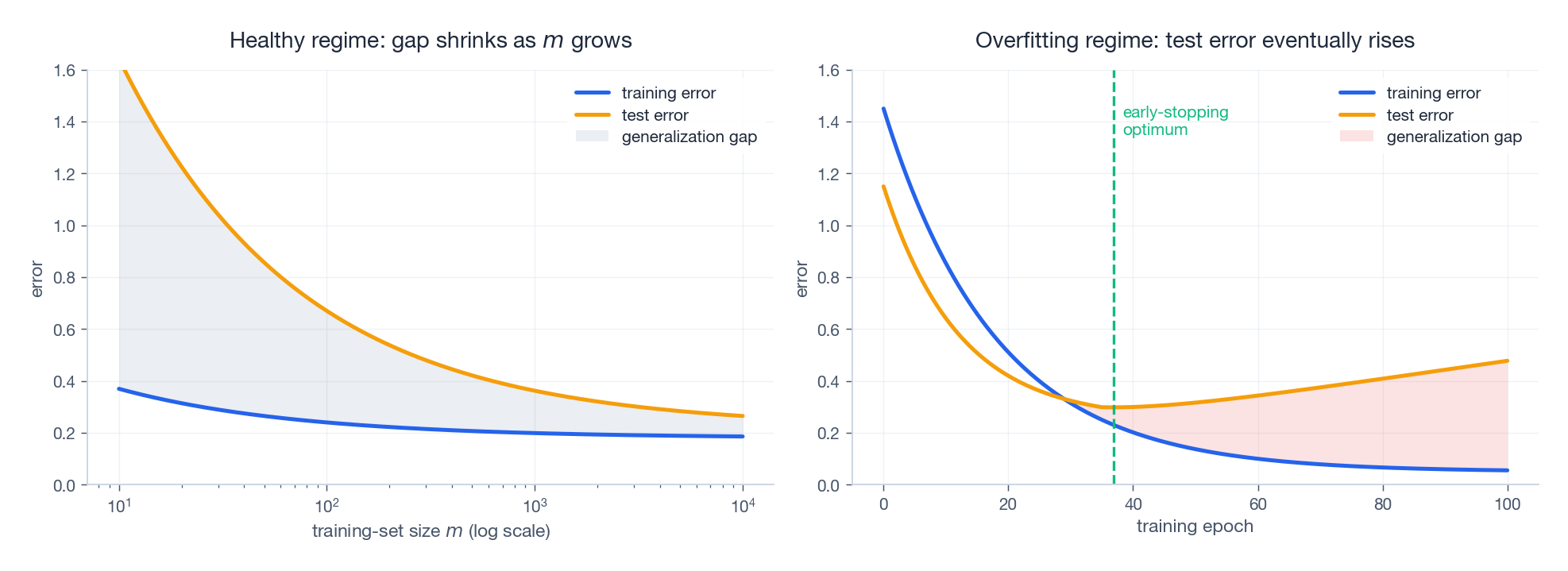

Why theory matters: the generalization gap#

A 100-degree polynomial fit to 10 noisy points achieves $L_S = 0$ but predicts catastrophically at any new $x$ . The size of $L_{\mathcal{D}}(h) - L_S(h)$ is the generalization gap, and its behaviour is the subject of the next two sections.

The left panel shows the healthy regime, where the gap shrinks as the training set grows — this is what PAC learning will quantify. The right panel shows the overfitting regime, where pushing the optimizer further actually hurts test error — this is where regularization, early stopping and the bias-variance tradeoff become essential.

Learning theory gives us tools to:

- Predict when the gap will be small;

- Quantify it via complexity measures (cardinality, VC dimension, Rademacher complexity);

- Control it through model selection and regularization.

The PAC framework#

What “learnable” should mean#

When we say a problem is learnable we are making three concessions:

- We cannot demand a perfect predictor — only one within $\varepsilon$ of optimal.

- We cannot demand certainty — only success with probability at least $1 - \delta$ over the random training sample.

- The error is measured against the true distribution $\mathcal{D}$ , not against the training data.

These three weakenings — Probably Approximately Correct — were formalized by Valiant (1984).

$$\Pr_{S \sim \mathcal{D}^m}\!\bigl[\, L_{\mathcal{D}}(h_S) \;\leq\; \min_{h \in \mathcal{H}} L_{\mathcal{D}}(h) + \varepsilon \,\bigr] \;\geq\; 1 - \delta. \tag{3}$$The function $m_{\mathcal{H}}(\varepsilon, \delta)$ is the sample complexity. Read in plain English: “with at most $m_{\mathcal{H}}(\varepsilon, \delta)$ examples, we can guarantee, with probability $\geq 1 - \delta$ , a hypothesis whose true error is within $\varepsilon$ of the best in $\mathcal{H}$ .”

The realizable case for finite hypothesis classes#

Begin with the simplest non-trivial setting: $\mathcal{H}$ is finite, and there exists $h^\star \in \mathcal{H}$ with $L_{\mathcal{D}}(h^\star) = 0$ (the realizable assumption). We use the 0-1 loss.

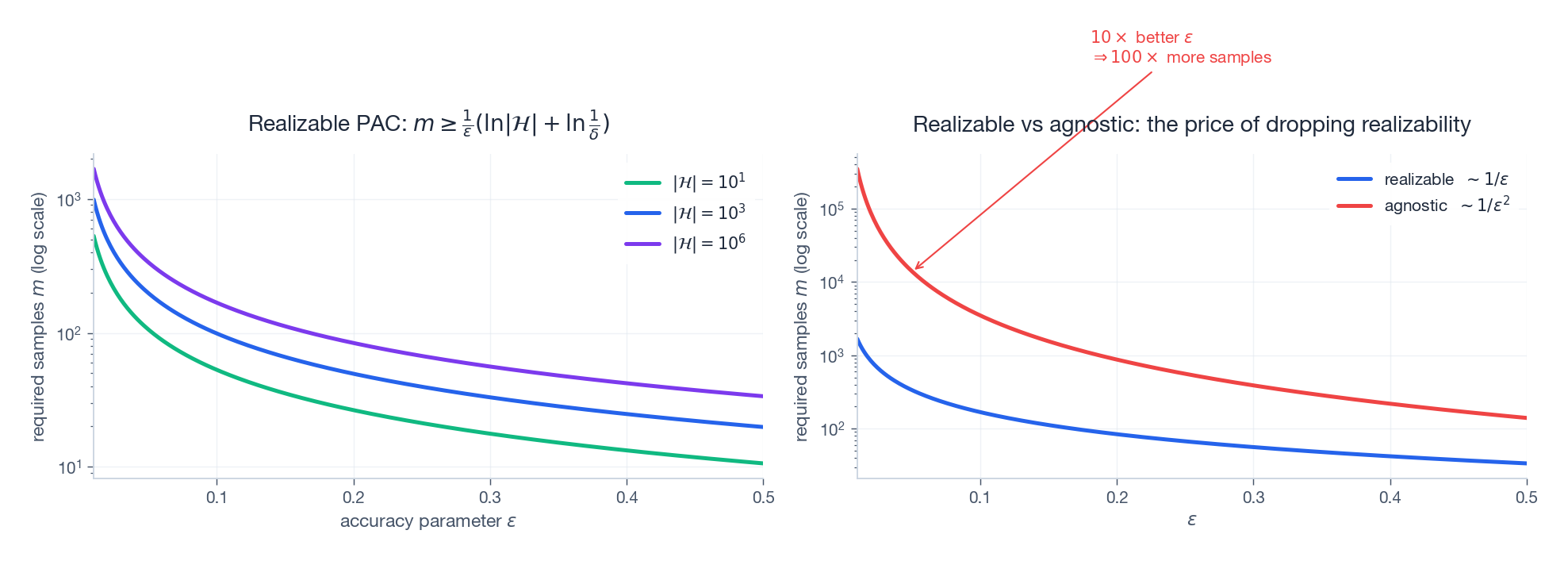

$$m \;\geq\; \frac{1}{\varepsilon}\Bigl(\ln |\mathcal{H}| + \ln \tfrac{1}{\delta}\Bigr), \tag{4}$$then with probability at least $1 - \delta$ over the sample $S$ , every hypothesis $h \in \mathcal{H}$ with $L_S(h) = 0$ satisfies $L_{\mathcal{D}}(h) \leq \varepsilon$ . In particular, ERM is a PAC learner for $\mathcal{H}$ .

Proof. Let $\mathcal{H}_{\text{bad}} = \{h \in \mathcal{H} : L_{\mathcal{D}}(h) > \varepsilon\}$ . We bound the probability of the bad event “ERM returns a bad hypothesis”, which is contained in the event “some bad hypothesis has zero training error”.

$$\Pr_{S}\!\bigl[L_S(h) = 0\bigr] \;=\; \prod_{i=1}^{m} \Pr\!\bigl[h(x_i) = y_i\bigr] \;\leq\; (1 - \varepsilon)^m. \tag{5}$$ $$\Pr_{S}\!\bigl[\exists h \in \mathcal{H}_{\text{bad}} : L_S(h) = 0\bigr] \;\leq\; \sum_{h \in \mathcal{H}_{\text{bad}}} (1 - \varepsilon)^m \;\leq\; |\mathcal{H}|\,(1 - \varepsilon)^m. \tag{6}$$ $$|\mathcal{H}|\,(1 - \varepsilon)^m \;\leq\; |\mathcal{H}|\, e^{-\varepsilon m}. \tag{7}$$ $$ |\mathcal{H}|\, e^{-\varepsilon m} \;\leq\; \delta \;\;\Longleftrightarrow\;\; m \;\geq\; \frac{1}{\varepsilon}\bigl(\ln |\mathcal{H}| + \ln \tfrac{1}{\delta}\bigr). \tag{8} $$When $m$ satisfies (8), the bad event has probability at most $\delta$ , and the complementary event is exactly the conclusion of the theorem. $\blacksquare$

Reading the formula.

- $\ln |\mathcal{H}|$ — richer hypothesis classes need more data, but only logarithmically.

- $\ln(1/\delta)$ — doubling the required confidence costs only an additive $\ln 2$ samples.

- $1/\varepsilon$ — halving $\varepsilon$ doubles $m$ . Linear in accuracy.

The agnostic case#

Realizability is a strong assumption. Agnostic PAC drops it: we no longer require any $h^\star$ to be perfect, only that we want to compete with the best $h^\star \in \mathcal{H}$ .

$$m \;\geq\; \frac{2}{\varepsilon^2}\Bigl(\ln |\mathcal{H}| + \ln \tfrac{2}{\delta}\Bigr) \tag{9}$$suffices for $L_{\mathcal{D}}(h_{\text{ERM}}) \leq \min_{h \in \mathcal{H}} L_{\mathcal{D}}(h) + \varepsilon$ with probability $\geq 1 - \delta$ .

$$\Pr\!\bigl[\, |L_S(h) - L_{\mathcal{D}}(h)| > t \,\bigr] \;\leq\; 2 e^{-2 m t^2}. \tag{10}$$A union bound over $\mathcal{H}$ and a substitution $t = \varepsilon / 2$ yield (9).

Why $1/\varepsilon^2$ , not $1/\varepsilon$ ?#

The shift from realizable to agnostic costs a quadratic factor in $\varepsilon$ . Where does it come from?

In the realizable case, we only need to eliminate bad hypotheses: a single counter-example destroys them, and the probability of having no counter-example decays exponentially in $\varepsilon m$ .

$$\frac{\sigma}{\sqrt{m}} \;\lesssim\; \varepsilon \;\;\Longrightarrow\;\; m \;\gtrsim\; \frac{\sigma^2}{\varepsilon^2}.$$This is the fingerprint of the Central Limit Theorem. Improving $\varepsilon$ by $10\times$ costs $100\times$ more samples. The two regimes are visualized below.

The left panel shows that $\ln|\mathcal{H}|$ enters only as a logarithmic additive term — jumping from $|\mathcal{H}| = 10^3$ to $10^6$ merely doubles it. The right panel shows the price of dropping realizability: the agnostic curve has slope $-2$ on log-log axes, the realizable curve only slope $-1$ .

VC dimension: complexity for infinite classes#

Why a new measure is needed#

The bound $\ln |\mathcal{H}|$ collapses for any reasonable real-valued model: a linear classifier in $\mathbb{R}^d$ has uncountably many hypotheses, so $\ln|\mathcal{H}| = \infty$ and Theorem 2 is vacuous. We need a finite complexity measure that still captures statistical capacity.

The right measure is combinatorial, not cardinal: it counts how many distinct labelings a class can produce on a finite set of points.

Shattering#

$$\bigl|\{(h(x_1), \ldots, h(x_m)) : h \in \mathcal{H}\}\bigr| \;=\; 2^m. \tag{11}$$Intuition: shattering means $\mathcal{H}$ has enough flexibility to produce every dichotomy on those particular points. Its expressive power on $C$ is maximal.

Definition#

$$\mathrm{VCdim}(\mathcal{H}) \;=\; \sup\bigl\{\, m : \exists\, C \subseteq \mathcal{X},\; |C| = m,\; \mathcal{H}\text{ shatters } C \,\bigr\}. \tag{12}$$If the supremum is infinite we write $\mathrm{VCdim}(\mathcal{H}) = \infty$ .

Two important asymmetries.

- Existential lower bound. To prove $\mathrm{VCdim}(\mathcal{H}) \geq d$ it suffices to exhibit one set of size $d$ that $\mathcal{H}$ shatters.

- Universal upper bound. To prove $\mathrm{VCdim}(\mathcal{H}) < d+1$ we must show that every set of size $d+1$ fails to be shattered.

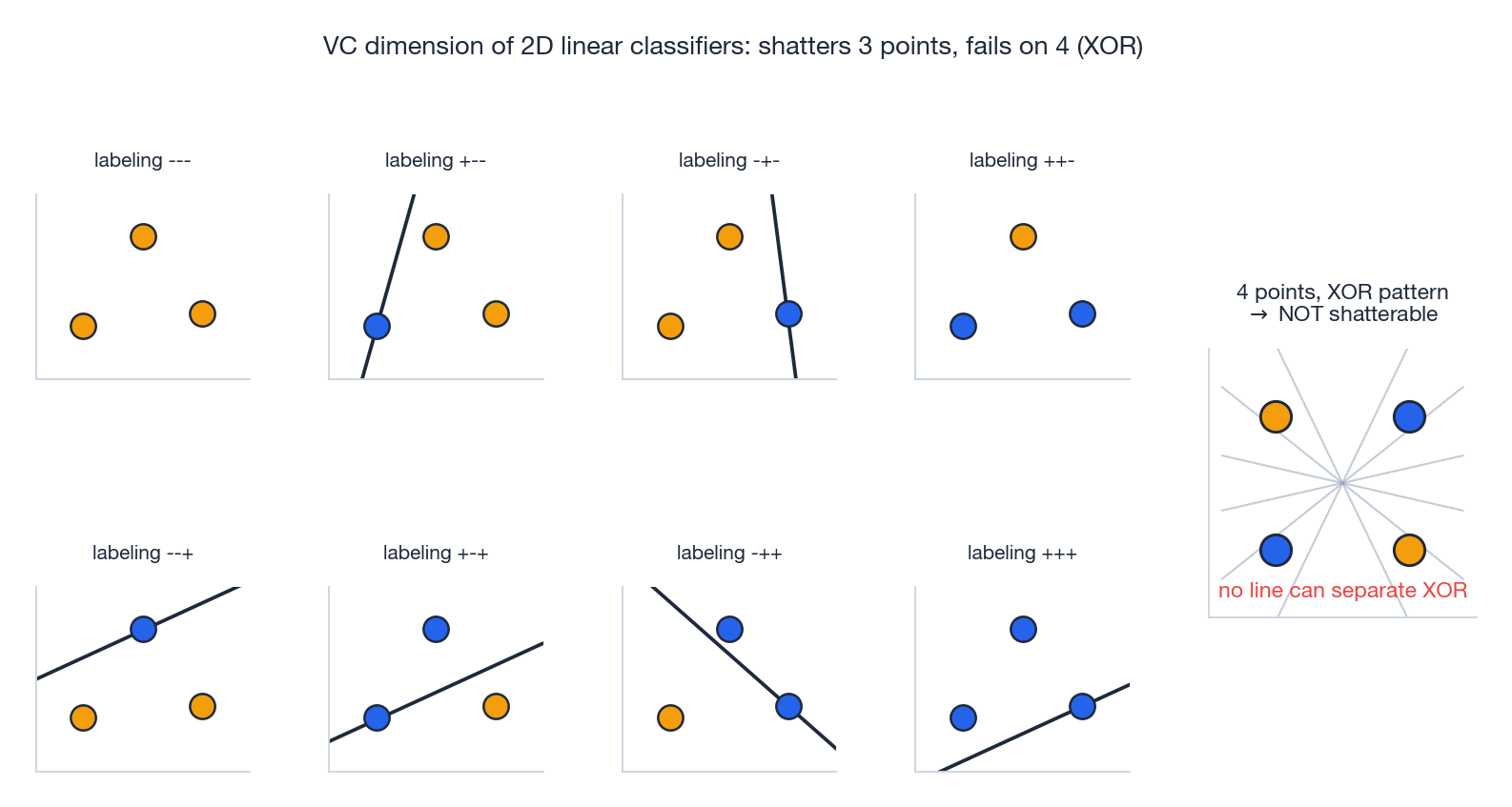

Worked example: linear classifiers in $\mathbb{R}^d$ #

Theorem 3. The class of homogeneous linear classifiers $\mathcal{H} = \{x \mapsto \mathrm{sign}(w^\top x + b) : w \in \mathbb{R}^d, b \in \mathbb{R}\}$ has $\mathrm{VCdim}(\mathcal{H}) = d + 1$ .

Proof.

$$w \;=\; \sum_{i=1}^{d} y_i\, e_i, \qquad b \;=\; \tfrac12 y_0. \tag{13}$$Then $w^\top 0 + b = b = \tfrac12 y_0$ has the sign of $y_0$ , and for $i \geq 1$ , $w^\top e_i + b = y_i + \tfrac12 y_0$ , whose sign is determined by $y_i$ (the $\tfrac12 y_0$ term is too small to flip it). Every labeling is realizable, so the set is shattered.

$$\sum_{i=1}^{d+2} a_i \tilde{x}_i \;=\; 0, \qquad \text{not all } a_i = 0. \tag{14}$$ $$\underbrace{\sum_{i \in I^+} a_i (w^\top x_i + b)}_{>\,0} \;+\; \underbrace{\sum_{i \in I^-} a_i (w^\top x_i + b)}_{>\,0} \;=\; 0,$$because for $i \in I^+$ both factors are positive, and for $i \in I^-$ both factors are negative (so their product is positive). The sum of strictly positive terms equals zero — contradiction. $\blacksquare$

The geometry behind the bound is exactly what one would guess: a hyperplane in $\mathbb{R}^d$ has $d+1$ free parameters, and one parameter “buys” exactly one labeling degree of freedom.

The eight panels on the left enumerate every labeling of three generic points in $\mathbb{R}^2$ ; in each one a separating line exists. The right-hand panel exhibits the XOR pattern of four points: no single line can separate the $+$ ’s from the $-$ ’s, and a counting argument shows that no set of four points in $\mathbb{R}^2$ is shatterable. So $\mathrm{VCdim} = 3$ for 2D linear classifiers, in agreement with the formula $d+1$ .

The VC bound on sample complexity#

$$m_{\mathcal{H}}(\varepsilon, \delta) \;=\; O\!\left(\frac{d + \ln(1/\delta)}{\varepsilon^2}\right). \tag{15}$$ $$\mathrm{VCdim}(\mathcal{H}) = d \;\;\Longrightarrow\;\; \Pi_{\mathcal{H}}(m) \;\leq\; \sum_{i=0}^{d} \binom{m}{i} \;\leq\; \left(\frac{e\,m}{d}\right)^d. \tag{16}$$The exponential $2^m$ collapses to a polynomial $m^d$ as soon as $m$ exceeds $d$ . Plugging this polynomial growth into a uniform-convergence argument (which we will give in the probability chapter) yields (15).

Application. A linear classifier in $\mathbb{R}^{100}$ has $\mathrm{VCdim} = 101$ . To reach $\varepsilon = 0.05$ , $\delta = 0.05$ in the agnostic setting we need on the order of $m \approx 10^5$ samples — entirely feasible. By contrast, a class with $\mathrm{VCdim} = \infty$ (e.g. the class of all measurable functions) is not PAC learnable, regardless of sample size.

Bias-variance decomposition#

Setup#

Even when a class is PAC learnable, the test error of a particular learned predictor decomposes into pieces with very different origins. Understanding the decomposition is what guides model selection in practice.

Fix the squared loss and assume:

- A true regression function $f^\star: \mathcal{X} \to \mathbb{R}$ .

- Observations $y = f^\star(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2$ .

- A learning algorithm that, given a sample $S$ , produces a predictor $\hat{f}_S$ .

Take expectations over both the random sample $S$ and the noise $\varepsilon$ at a fixed test input $x$ .

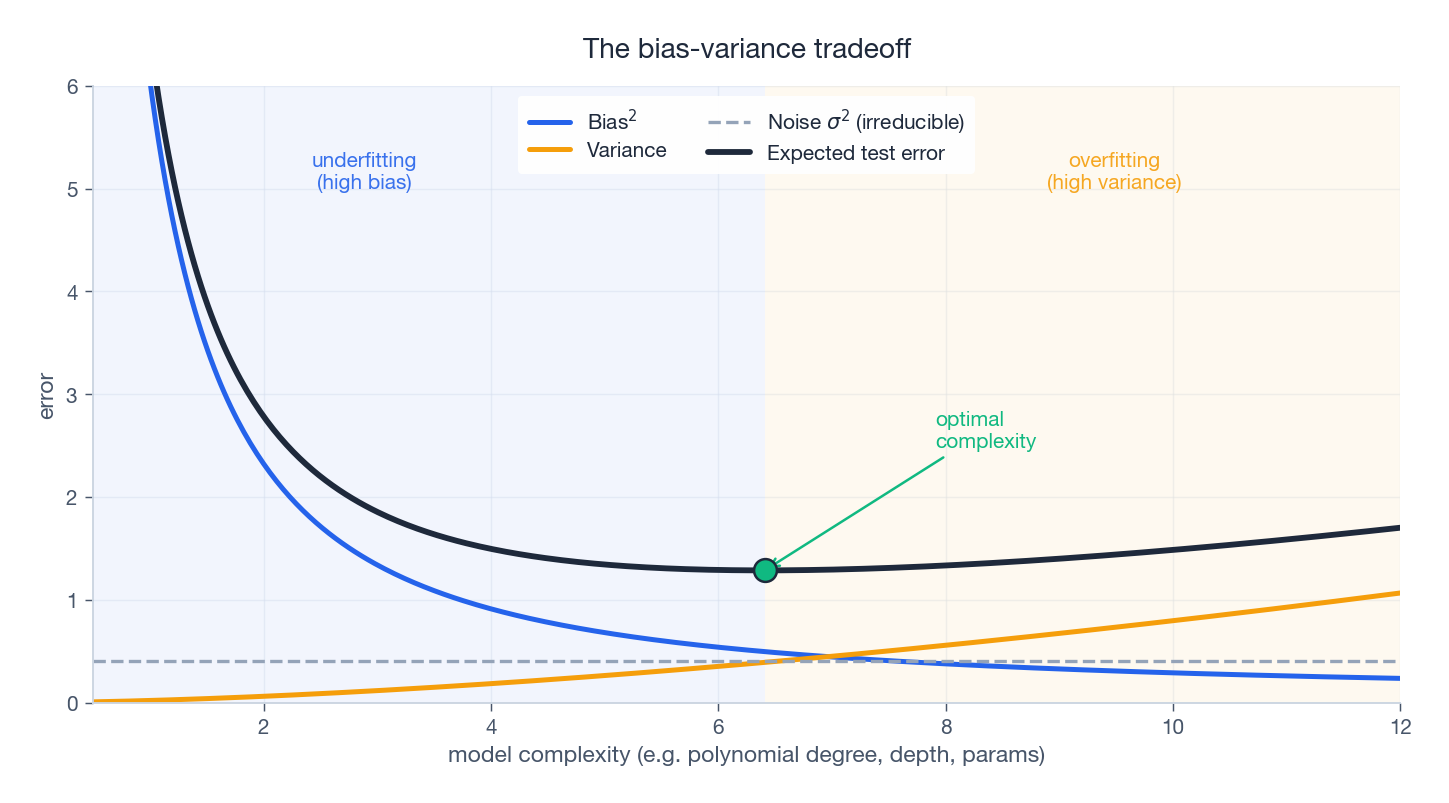

$$ \mathbb{E}_{S,\varepsilon}\!\Bigl[(\hat{f}_S(x) - y)^2\Bigr] \;=\; \underbrace{\bigl(\bar{f}(x) - f^\star(x)\bigr)^2}_{\textstyle\text{Bias}^2} \;+\; \underbrace{\mathbb{E}_S\!\bigl[(\hat{f}_S(x) - \bar{f}(x))^2\bigr]}_{\textstyle\text{Variance}} \;+\; \underbrace{\sigma^2}_{\textstyle\text{Noise}}. \tag{17} $$Proof#

$$\hat{f} - y \;=\; \underbrace{(\hat{f} - \bar{f})}_{A} \;+\; \underbrace{(\bar{f} - f^\star)}_{B} \;+\; \underbrace{(f^\star - y)}_{C}. \tag{18}$$ $$(\hat{f} - y)^2 \;=\; A^2 + B^2 + C^2 + 2AB + 2AC + 2BC.$$Take expectations over $S$ and $\varepsilon$ . The three cross-terms vanish:

- $\mathbb{E}_S[2 A B] = 2 B \cdot \mathbb{E}_S[\hat{f} - \bar{f}] = 2 B \cdot 0 = 0$ because $\mathbb{E}_S[\hat{f}] = \bar{f}$ .

- $\mathbb{E}_{S,\varepsilon}[2 A C] = 2\,\mathbb{E}_S[A] \cdot \mathbb{E}_\varepsilon[C] = 0$ since $S \perp \varepsilon$ and both expectations are zero.

- $\mathbb{E}_\varepsilon[2 B C] = 2 B \cdot \mathbb{E}_\varepsilon[C] = 0$ since $\mathbb{E}[\varepsilon] = 0$ .

What remains is $\mathbb{E}_S[A^2] + B^2 + \mathbb{E}_\varepsilon[C^2]$ , which are precisely Variance, Bias$^2$ and Noise. $\blacksquare$

What each term means#

| Term | Meaning | Decreased by |

|---|---|---|

| Bias$^2$ | How far the average predictor sits from the truth. Symptom: high bias = underfitting. | More expressive class, better features, less regularization. |

| Variance | How much the predictor wobbles when retrained on a fresh sample. Symptom: high variance = overfitting. | More data, a less expressive class, regularization, ensembling. |

| Noise | Irreducible randomness in $y$ given $x$ . Cannot be removed by any algorithm. | Nothing — it is the floor. |

These three forces compete as the model class grows in capacity:

The total expected error follows a U-shape. To the left of the optimum, bias dominates and we are underfitting. To the right, variance dominates and we are overfitting. The minimum is the operating point you are looking for, and finding it — usually by cross-validation — is what model selection is.

A quick numerical sanity check#

Let $f^\star(x) = \sin(\pi x)$ on $[-1, 1]$ with $\sigma = 0.3$ , $m = 20$ , repeated over 100 random training sets:

| Model | Bias$^2$ | Variance | Noise | Total |

|---|---|---|---|---|

| Degree 1 polynomial | 0.42 | 0.002 | 0.09 | 0.51 |

| Degree 3 polynomial | 0.05 | 0.02 | 0.09 | 0.16 |

| Degree 9 polynomial | 0.01 | 0.35 | 0.09 | 0.45 |

The degree-3 polynomial wins because it is the only one that simultaneously keeps both bias and variance small. Note that the noise term is the same in all rows, by definition.

Diagnosing under- and overfitting#

| Train error | Validation error | Diagnosis | What to try |

|---|---|---|---|

| Low | Low | Healthy | Nothing — ship it. |

| High | High | Underfitting | More features, larger model, train longer, less regularization. |

| Low | High | Overfitting | More data, smaller model, regularization, early stopping, dropout. |

| High | Low | Likely a data leak or a bug in your evaluation pipeline. | Audit the splits. |

The No Free Lunch theorem#

Statement#

Wolpert and Macready (1997) and Wolpert (1996) made precise an idea that practitioners had long suspected: there is no universally best learner.

$$\frac{1}{|\mathcal{F}|} \sum_{f \in \mathcal{F}} \mathbb{E}_S\!\bigl[L_f(\mathcal{A}_1(S))\bigr] \;=\; \frac{1}{|\mathcal{F}|} \sum_{f \in \mathcal{F}} \mathbb{E}_S\!\bigl[L_f(\mathcal{A}_2(S))\bigr]. \tag{19}$$The argument is short. For any unseen $x \notin S$ , the labels under a random target $f$ chosen uniformly from $\mathcal{F}$ are an unbiased fair coin: half the targets in $\mathcal{F}$ assign $f(x) = 0$ and half assign $f(x) = 1$ . So any prediction strategy is right on exactly half the targets. Both sides of (19) equal $\tfrac12$ on the unseen part, regardless of the algorithm.

Inductive bias#

If no algorithm can be universally good, every successful algorithm must encode assumptions about the world. These assumptions go by the name inductive bias.

| Inductive bias | Embodied in | Suited to |

|---|---|---|

| Smoothness | $k$ -NN, kernel methods | Continuous regression on physical quantities |

| Linearity | Linear/logistic regression, linear SVM | Roughly linear relationships, high-dim with few samples |

| Sparsity | Lasso, elastic net | High-dim problems with few relevant features |

| Compositionality / hierarchy | Deep networks | Vision, language, anything with parts and wholes |

| Locality + translation equivariance | CNNs | Images, audio spectra |

| Sequential structure | RNNs, transformers | Language, time series |

| Permutation invariance | Set / graph networks | Sets, molecules, social networks |

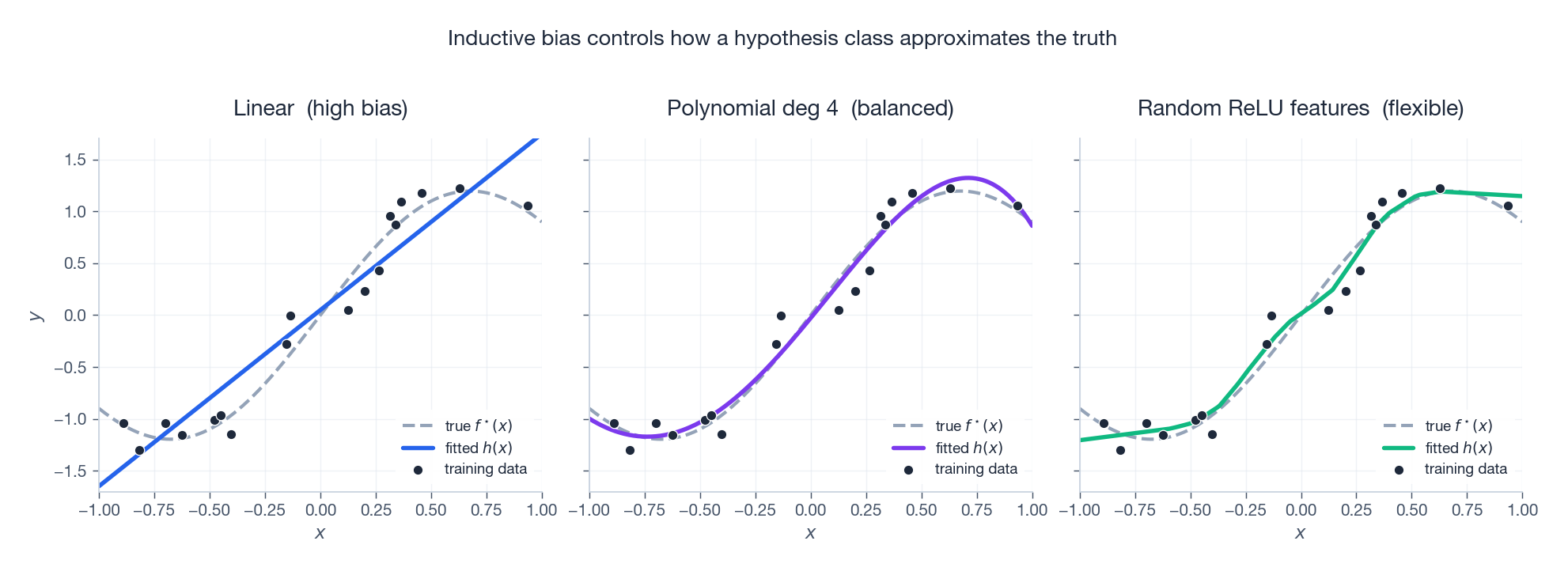

The next figure shows three different inductive biases acting on the same training data:

The linear fit underfits because its bias is too strong; random ReLU features overfit because their bias is too weak; the degree-4 polynomial happens to match the structure of the target. None of them is intrinsically right — the choice depends on what you believe about $f^\star$ .

Why NFL does not make learning useless#

NFL averages over all possible functions. The functions we actually encounter — physical laws, natural language, images of objects — live on a vanishingly small, highly structured submanifold of “all functions”. On that submanifold, some inductive biases dominate others by enormous margins.

Practically, NFL asks four things of you:

- Stop looking for a universal algorithm; it does not exist.

- Identify what structure your problem actually has.

- Pick an inductive bias that matches it.

- Evaluate on the problem class you care about, not on adversarial worst cases.

Code: empirical PAC and bias-variance for linear regression#

The following self-contained script reproduces the central predictions of this chapter on a simple linear-regression problem. It (i) verifies that test error decreases as $1/m$ in the realizable regime, and (ii) measures Bias$^2$ , Variance and Noise separately and checks that they sum to the total expected error.

| |

What you should observe.

- Test error shrinks roughly as $1/m$ — the PAC prediction in the realizable regime, since the squared loss for a well-specified linear model behaves like a parameter-estimation problem.

- Bias$^2$ is essentially zero — the linear hypothesis class contains the truth.

- Variance shrinks like $1/m$ — with more data, the fitted slope and intercept fluctuate less.

- Bias$^2$ + Variance + $\sigma^2$ matches the test MSE to within Monte-Carlo noise, confirming Theorem 5.

FAQ#

Q1. If ERM is so simple, why not just use it?

Because $L_S$ and $L_{\mathcal{D}}$ can disagree arbitrarily on a too-rich $\mathcal{H}$ . The “lookup-table” hypothesis $h(x_i) = y_i$ for $i \leq m$ and $h(x) = 0$ otherwise has $L_S = 0$ and $L_{\mathcal{D}}$ close to chance. PAC theory tells us under what conditions on $\mathcal{H}$ and $m$ the gap $|L_S - L_{\mathcal{D}}|$ is small, and structural risk minimization tells us how to use those conditions to choose $\mathcal{H}$ adaptively.

Q2. How can VC dimension be finite for an uncountable hypothesis class?

VC dimension does not count hypotheses; it counts distinct labelings the class produces on a finite set. The class of all 2D linear classifiers is uncountable, but two classifiers with the same sign on every test point are indistinguishable for our purposes — and the number of distinguishable behaviours on $m$ points is at most $\binom{m}{0} + \binom{m}{1} + \binom{m}{2} \leq O(m^2)$ . The Sauer-Shelah lemma converts this combinatorial fact into a finite complexity bound.

Q3. Is zero training error always bad?

No. The right question is: is your model class large enough to memorize the noise? If the data is essentially noise-free and the class contains the truth (e.g. fitting a linear model to linear data), zero training error is the correct outcome. If $\sigma^2 > 0$ and your class is rich enough to interpolate, zero training error is a red flag. A useful sanity check: if $m / \mathrm{VCdim}(\mathcal{H}) < 10$ , treat low training error with suspicion.

Q4. What are the VC dimensions of common ML models?

| Model | VC dimension |

|---|---|

| Linear classifier in $\mathbb{R}^d$ | $d + 1$ |

| Polynomial of degree $k$ in $\mathbb{R}^d$ | $\binom{d+k}{k}$ |

| Axis-aligned rectangles in $\mathbb{R}^d$ | $2d$ |

| Sigmoid neural net with $W$ weights | $\Theta(W^2)$ (Karpinski-Macintyre) |

| Piecewise-linear net with $W$ weights, $L$ layers | $\Theta(W L \log W)$ (Bartlett et al.) |

| Decision tree of depth $D$ | $\Theta(2^D)$ |

| Class of all measurable functions | $\infty$ (not PAC learnable) |

For modern deep nets the VC bound is loose — networks routinely interpolate random labels (so VC is huge) yet still generalize. Explaining this is an active research area (PAC-Bayes, margin bounds, neural-tangent analyses, implicit-regularization theory).

Q5. Where does Hoeffding’s inequality come from?

It is a sub-Gaussian concentration bound for sums of bounded independent random variables. We will derive it cleanly from the moment-generating function in Chapter 3 and use it as the workhorse for almost every uniform-convergence argument in the rest of the series.

Summary#

| Concept | Key formula | One-line intuition |

|---|---|---|

| PAC sample complexity (realizable, finite) | $m \geq \tfrac{1}{\varepsilon}(\ln \lvert \mathcal{H}\rvert + \ln \tfrac{1}{\delta})$ | Eliminating bad hypotheses is exponentially easy. |

| PAC sample complexity (agnostic, finite) | $m \geq \tfrac{2}{\varepsilon^2}(\ln \lvert \mathcal{H}\rvert + \ln \tfrac{2}{\delta})$ | Estimating expectations costs an extra $1/\varepsilon$ . |

| VC sample complexity | $m = O\!\bigl(\tfrac{d + \ln(1/\delta)}{\varepsilon^2}\bigr)$ | $\mathrm{VCdim}$ replaces $\ln \lvert \mathcal{H}\rvert$ for infinite classes. |

| Sauer-Shelah | $\Pi_{\mathcal{H}}(m) \leq (em/d)^d$ | $2^m$ collapses to $m^d$ once VC is finite. |

| Bias-variance | Test error $=$ Bias$^2 +$ Variance $+\, \sigma^2$ | Three independent sources of error. |

| No Free Lunch | $\sum_f L_f(\mathcal{A}_1) = \sum_f L_f(\mathcal{A}_2)$ | All algorithms tie when averaged over all problems. |

Practical checklist for any new project.

- Plot training and validation errors against $m$ — which regime are you in?

- Estimate $\mathrm{VCdim}(\mathcal{H})$ or count effective parameters; aim for $m / \mathrm{VCdim} \gtrsim 10$ .

- Use cross-validation to pick complexity, not eyeball the training curve.

- Add regularization to make SRM the default, not an afterthought.

- Choose $\mathcal{H}$ whose inductive bias matches your problem — locality for images, sparsity for genomics, smoothness for physics.

What’s next#

So far I have one vocabulary on the table: risk, loss, capacity, bias-variance. It tells me whether learning is possible at all, but it does not tell me what a learner concretely looks like. The next chapter spreads these abstract tools onto reals and vectors — linear algebra and matrix theory.

The reason I put it second rather than in an appendix is that almost every “model” in machine learning eventually reduces to linear-algebra operations: gradients are vectors, parameters are matrices, backpropagation is Jacobian chains, PCA is the eigendecomposition of a covariance matrix. If this chapter handed me a measuring stick, the next one wires that stick into the actual computational machinery. By the end of it, “the gradient of a quadratic form is $2Ax$ ” should stop being a formula and start being a reflex — that reflex is the hard floor on which every later derivation stands.

References#

- Valiant, L. G. (1984). A theory of the learnable. Communications of the ACM, 27(11), 1134-1142.

- Vapnik, V. N. & Chervonenkis, A. Y. (1971). On the uniform convergence of relative frequencies of events to their probabilities. Theory of Probability & Its Applications, 16(2), 264-280.

- Blumer, A., Ehrenfeucht, A., Haussler, D. & Warmuth, M. K. (1989). Learnability and the Vapnik-Chervonenkis dimension. Journal of the ACM, 36(4), 929-965.

- Bartlett, P. L., Jordan, M. I. & McAuliffe, J. D. (2006). Convexity, classification, and risk bounds. Journal of the American Statistical Association, 101(473), 138-156.

- Bartlett, P. L., Harvey, N., Liaw, C. & Mehrabian, A. (2019). Nearly-tight VC-dimension bounds for piecewise linear neural networks. Journal of Machine Learning Research, 20, 1-17.

- Wolpert, D. H. (1996). The lack of a priori distinctions between learning algorithms. Neural Computation, 8(7), 1341-1390.

- Wolpert, D. H. & Macready, W. G. (1997). No free lunch theorems for optimization. IEEE Transactions on Evolutionary Computation, 1(1), 67-82.

- Shalev-Shwartz, S. & Ben-David, S. (2014). Understanding Machine Learning: From Theory to Algorithms. Cambridge University Press.

- Mohri, M., Rostamizadeh, A. & Talwalkar, A. (2018). Foundations of Machine Learning (2nd ed.). MIT Press.

- Zhang, C., Bengio, S., Hardt, M., Recht, B. & Vinyals, O. (2017). Understanding deep learning requires rethinking generalization. ICLR.

ML Math Derivations 20 parts

- 01 ML Math Derivations (1): Introduction and Mathematical Foundations you are here

- 02 ML Math Derivations (2): Linear Algebra and Matrix Theory

- 03 ML Math Derivations (3): Probability Theory and Statistical Inference

- 04 ML Math Derivations (4): Convex Optimization Theory

- 05 ML Math Derivations (5): Linear Regression

- 06 ML Math Derivations (6): Logistic Regression and Classification

- 07 ML Math Derivations (7): Decision Trees

- 08 ML Math Derivations (8): Support Vector Machines

- 09 ML Math Derivations (9): Naive Bayes

- 10 ML Math Derivations (10): Semi-Naive Bayes and Bayesian Networks

- 11 ML Math Derivations (11): Ensemble Learning

- 12 ML Math Derivations (12): XGBoost and LightGBM

- 13 ML Math Derivations (13): EM Algorithm and GMM

- 14 ML Math Derivations (14): Variational Inference and Variational EM

- 15 ML Math Derivations (15): Hidden Markov Models

- 16 ML Math Derivations (16): Conditional Random Fields

- 17 ML Math Derivations (17): Dimensionality Reduction and PCA

- 18 ML Math Derivations (18): Clustering Algorithms

- 19 ML Math Derivations (19): Neural Networks and Backpropagation

- 20 ML Math Derivations (20): Regularization and Model Selection