ML Math Derivations (4): Convex Optimization Theory

Nearly every ML algorithm is an optimization problem. This article derives convex sets, convex functions, gradient descent, Newton's method, KKT conditions, and ADMM -- the optimization toolkit for machine learning.

What You Will Learn#

In 1947, George Dantzig proposed the simplex method for linear programming, and a working theory of optimization was born. Eight decades later, optimization has become the engine of machine learning: every model you train, from a one-line linear regression to a 70B-parameter language model, is the answer to some optimization problem.

Among all such problems, convex optimization holds a privileged place. The defining property is so strong it almost feels like cheating: every local minimum is automatically a global minimum, and a handful of well-understood algorithms come with airtight convergence guarantees. The whole reason we treat “convex” as a green flag and “non-convex” as a yellow one comes down to this single fact.

This article builds the convex toolkit from the ground up. We start with the geometry (convex sets, convex functions, Jensen’s inequality), develop the calculus (subgradients for the non-smooth case), and then derive the algorithms that use that geometry: gradient descent, Nesterov acceleration, Adam, Newton’s method, BFGS, the KKT conditions for constrained problems, and ADMM for problems with separable structure.

What you will learn

- Convex sets and convex functions — the geometric definitions, first- and second-order characterizations, Jensen’s inequality

- Subgradients — extending calculus to non-smooth objectives like the L1 norm

- First-order methods — gradient descent, momentum, Nesterov, Adam, with the convergence rates that explain why each one exists

- Second-order methods — Newton’s method and BFGS, and the cost-vs-convergence tradeoff

- Constrained optimization — the Lagrangian, weak and strong duality, the KKT conditions

- ADMM — splitting a hard joint problem into two easy alternating subproblems

Prerequisites: multivariable calculus (gradients, Hessians, Taylor expansion) and linear algebra (eigenvalues, positive semidefinite matrices). The earlier parts of this series cover both.

Convex Sets and Convex Functions#

Convex Sets#

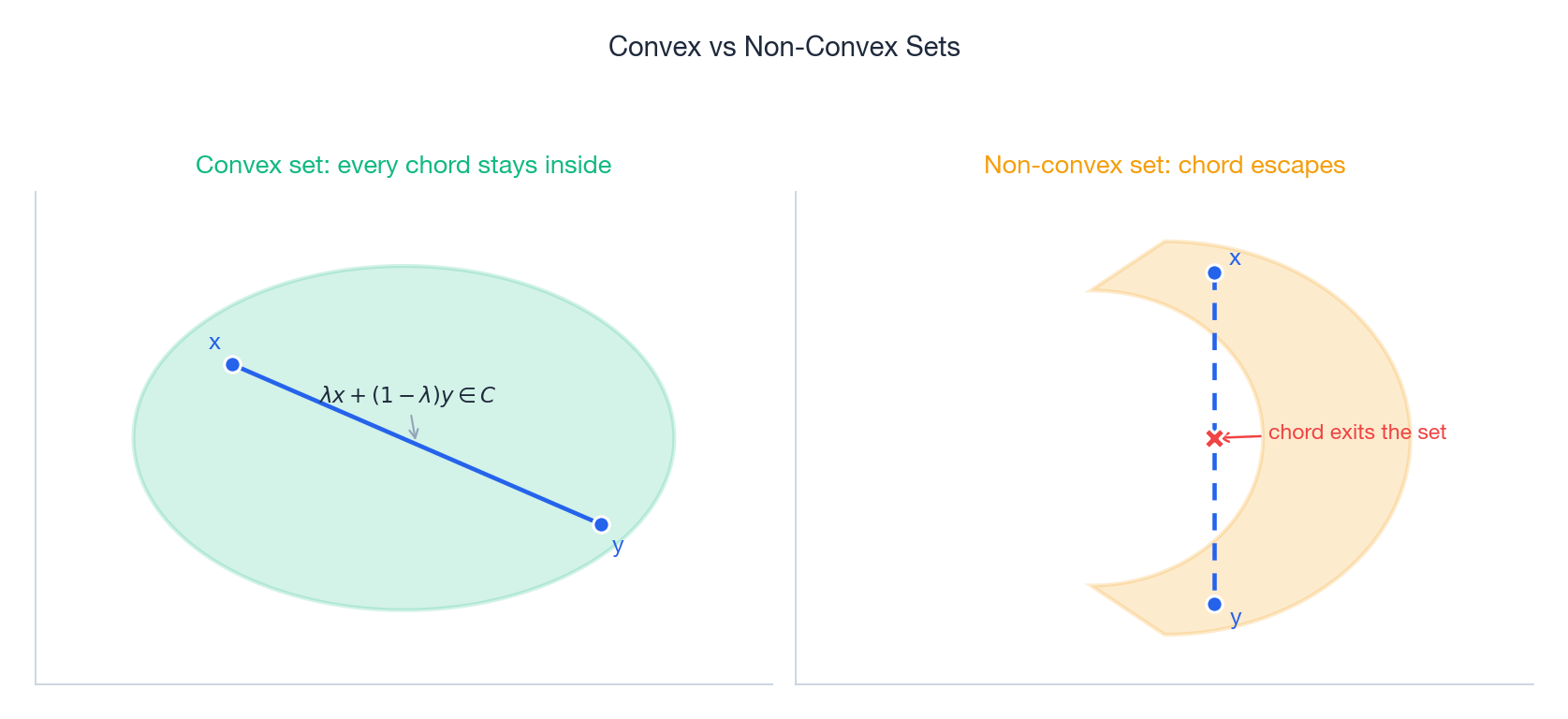

$$\lambda x + (1 - \lambda) y \in C. \tag{1}$$In words: the line segment between any two points of $C$ stays inside $C$ . No dents, no holes, no missing chunks. The picture below makes the definition concrete: a chord across a convex set never escapes; a chord across a non-convex set can poke right through a gap.

A short list of convex sets that you will meet again and again:

- Hyperplanes $\{x : a^T x = b\}$ and halfspaces $\{x : a^T x \leq b\}$ — the building blocks of every linear-constraint problem.

- Norm balls $\{x : \|x\| \leq r\}$ for any norm. The L2 ball is the standard one; the L1 ball gives the famous diamond shape behind sparsity.

- Ellipsoids $\{x : (x - x_0)^T A (x - x_0) \leq 1\}$ for $A \succ 0$ .

- Polyhedra — finite intersections of halfspaces, the natural feasible regions of linear programming.

Theorem 1 (Intersection). Any intersection $\bigcap_i C_i$ of convex sets is convex.

Proof. If $x, y$ lie in every $C_i$ , then $\lambda x + (1-\lambda) y$ lies in every $C_i$ by convexity, hence in their intersection. $\square$

This single fact is the workhorse of practical convex modeling: complicated feasible regions are almost always built by intersecting many simple convex constraints.

Convex Functions#

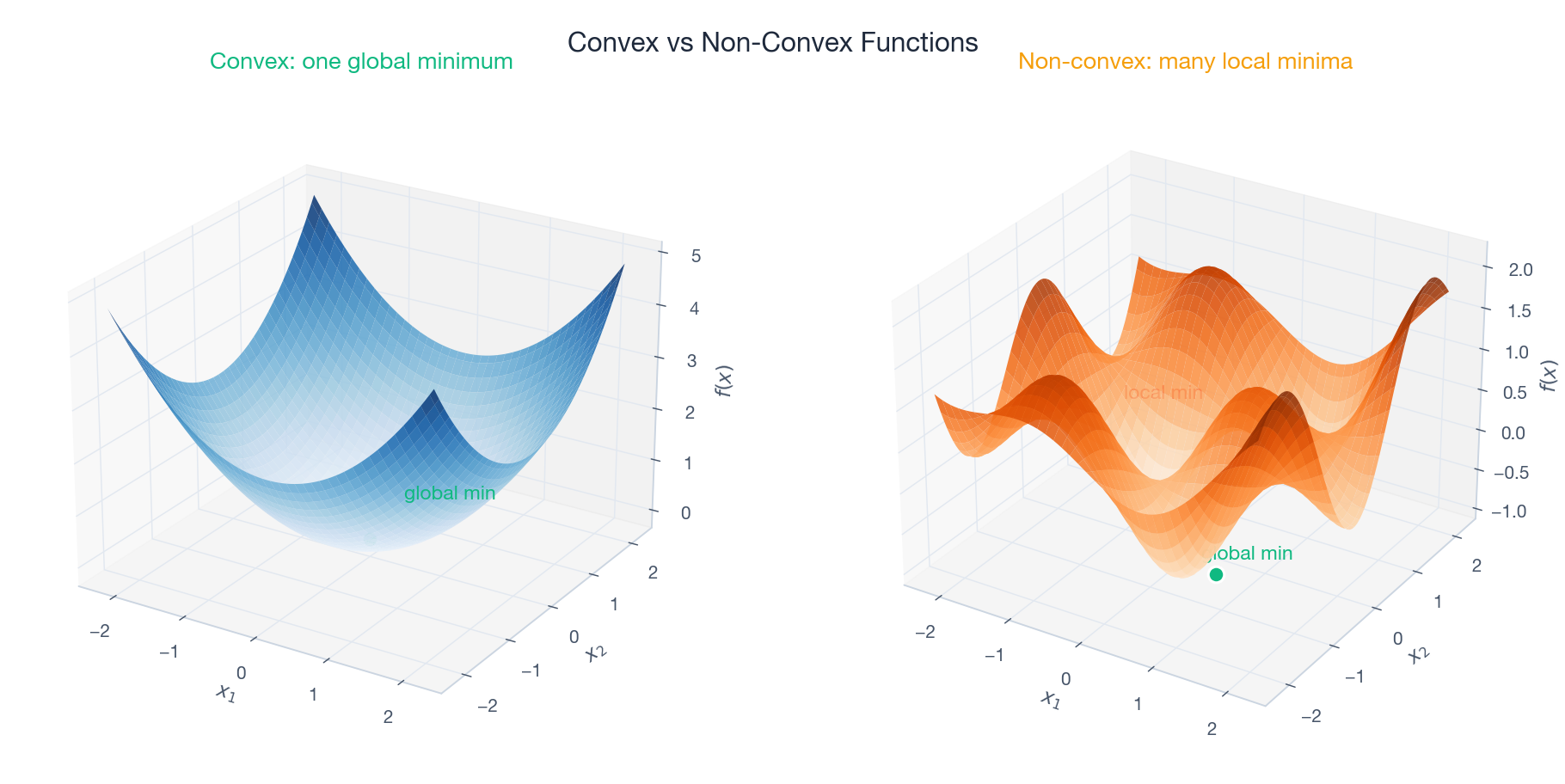

$$f(\lambda x + (1-\lambda)y) \;\leq\; \lambda f(x) + (1-\lambda) f(y). \tag{2}$$Geometrically, the chord between any two points on the graph of $f$ lies on or above the graph itself. The bowl-shaped surface on the left below is convex; the egg-carton on the right is not. The difference is consequential: the convex bowl has exactly one minimum that any descent method will find, while the egg-carton has many local minima where descent methods get permanently stuck.

Two strengthenings of convexity matter often enough to name:

- Strictly convex: the inequality (2) is strict whenever $x \neq y$ and $\lambda \in (0, 1)$ . This rules out flat plateaus and guarantees a unique minimizer (when one exists).

- $\mu$ -strongly convex ($\mu > 0$ ): $f(x) - \tfrac{\mu}{2}\|x\|^2$ is convex. Equivalently, $f$ has at least quadratic curvature in every direction. Strong convexity is what powers the linear convergence rates we will derive shortly.

First-Order and Second-Order Characterizations#

Definition 2 is geometric and clean, but it is hard to check. The next two theorems give equivalent conditions in terms of derivatives, which is what we actually use in practice.

$$f(y) \;\geq\; f(x) + \nabla f(x)^T (y - x). \tag{3}$$In other words, every tangent hyperplane is a global lower bound on $f$ . This is the deep structural reason gradient descent works on convex problems: the linear approximation $f(x) + \nabla f(x)^T (y-x)$ never overestimates $f$ , so following its negative gradient really does decrease $f$ — at least for a small enough step.

$$\nabla^2 f(x) \;\succeq\; 0 \qquad \text{for all } x. \tag{4}$$If $\nabla^2 f \succ 0$ everywhere, $f$ is strictly convex. If $\nabla^2 f \succeq \mu I$ everywhere, $f$ is $\mu$ -strongly convex. The Hessian test is by far the most common way to certify convexity in practice — for instance, the loss in linear regression is $\nabla^2 = X^T X$ , which is automatically PSD.

Jensen’s Inequality#

The convexity inequality (2) is a statement about a two-point average. Pushing it to general probability distributions gives what is arguably the single most-used inequality in machine learning.

$$f(\mathbb{E}[X]) \;\leq\; \mathbb{E}[f(X)]. \tag{5}$$If $f$ is strictly convex and $X$ is non-degenerate, the inequality is strict.

Proof sketch (discrete case). Iterate Definition 2 by induction: if $X$ takes values $x_1, \ldots, x_n$ with probabilities $p_1, \ldots, p_n$ , then $f(\sum p_i x_i) \leq \sum p_i f(x_i)$ by repeated application of the chord inequality. The continuous case follows by approximation. $\square$

Jensen powers an enormous amount of downstream theory. Two appearances you will see again in this series:

- Non-negativity of KL divergence. Apply Jensen to $-\log$ (which is convex): $D_{KL}(p \| q) = \mathbb{E}_p[-\log(q/p)] \geq -\log \mathbb{E}_p[q/p] = 0$ .

- Evidence Lower Bound (ELBO) in variational inference: the log-marginal $\log p(x)$ is bounded below by $\mathbb{E}_q[\log p(x, z) - \log q(z)]$ via Jensen on $\log$ .

A short catalog of convex functions worth memorizing: affine functions $a^T x + b$ (both convex and concave), the exponential $e^{ax}$ , all norms $\|x\|$ , the negative entropy $x \log x$ on $x > 0$ , the squared loss $\tfrac{1}{2}\|x\|^2$ , and the log-sum-exp $\log \sum_i e^{x_i}$ that powers softmax.

Subgradients and Non-Smooth Optimization#

Many of the most useful objectives in machine learning are not differentiable everywhere. The L1 norm $\|x\|_1$ used in Lasso has a kink at every coordinate axis; the hinge loss $\max(0, 1 - y \cdot s)$ used in SVMs has a kink at $s = 1/y$ . To do calculus on these objects we generalize the gradient.

Subgradients#

$$f(y) \;\geq\; f(x) + g^T (y - x). \tag{6}$$The set of all such $g$ is the subdifferential $\partial f(x)$ . When $f$ is differentiable at $x$ , $\partial f(x) = \{\nabla f(x)\}$ — a single element. At non-differentiable points the subdifferential is typically a non-trivial set, encoding all the “valid” linear lower bounds that touch $f$ at $x$ .

$$\partial f(x) = \begin{cases} \{-1\} & x < 0, \\ [-1, 1] & x = 0, \\ \{+1\} & x > 0. \end{cases} \tag{7}$$Optimality. $x^\star$ is a global minimizer of a convex $f$ if and only if $0 \in \partial f(x^\star)$ . This is the non-smooth analogue of $\nabla f(x^\star) = 0$ .

Subgradient Method#

$$x^{(k+1)} = x^{(k)} - \alpha_k g^{(k)}, \quad g^{(k)} \in \partial f(x^{(k)}). \tag{8}$$A subtle but important warning: a subgradient is not in general a descent direction — the function value may go up on a single step. To ensure convergence the step size must shrink, e.g. $\alpha_k = 1/\sqrt{k}$ . The price for working with non-smooth functions is a slower rate, $O(1/\sqrt{k})$ instead of the $O(1/k)$ rate of gradient descent on smooth problems.

For composite problems of the form $f(x) + h(x)$ where $f$ is smooth and $h$ is non-smooth (Lasso fits this exactly), the proximal gradient method recovers the smooth $O(1/k)$ rate by handling $h$ exactly through its proximal operator.

First-Order Optimization Algorithms#

Gradient Descent#

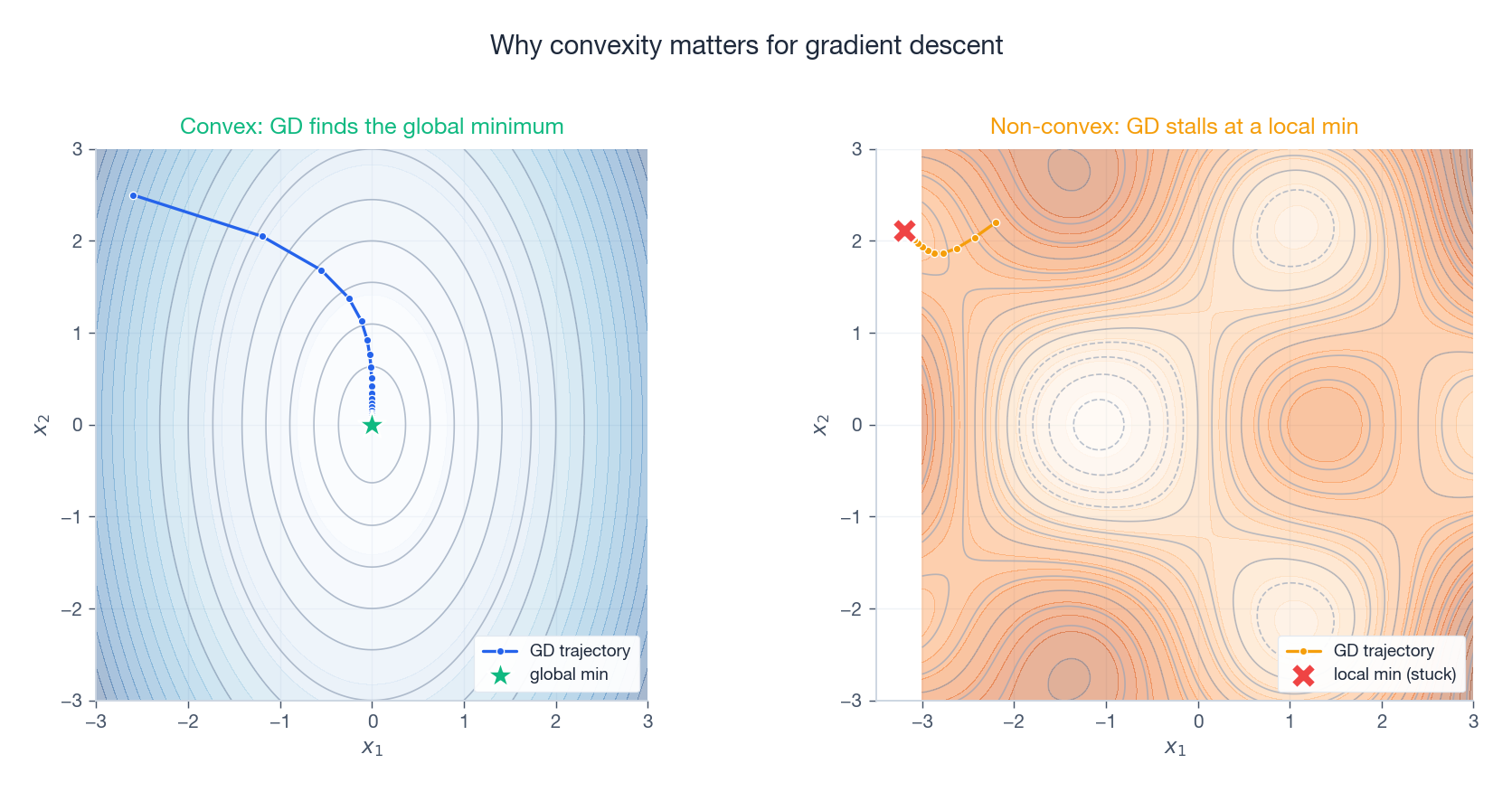

$$x^{(k+1)} \;=\; x^{(k)} - \alpha \, \nabla f(x^{(k)}). \tag{9}$$Why does this work? Because of the first-order condition (3): on a convex function, the negative gradient really is a descent direction, and the linear approximation underestimates $f$ , so we cannot “overshoot the bottom” by following gradients carefully. The next figure shows the consequence in two dimensions — a clean monotone path to the minimum on the convex side, and a path that gets pinned to a local minimum on the non-convex side.

Proof sketch. The Lipschitz-gradient assumption gives the descent inequality $f(y) \leq f(x) + \nabla f(x)^T(y - x) + \tfrac{L}{2}\|y - x\|^2$ . Plug in $y = x - \tfrac{1}{L}\nabla f(x)$ and combine with the convexity bound (3); telescoping over $k$ iterations yields (10). $\square$

$$f(x^{(k)}) - f(x^\star) \;\leq\; \left(1 - \frac{\mu}{L}\right)^k \big[f(x^{(0)}) - f(x^\star)\big]. \tag{11}$$This is linear convergence: the error shrinks by a fixed factor each step. The factor depends on the condition number $\kappa = L / \mu$ . A well-conditioned problem ($\kappa$ near 1) converges in a handful of steps; a badly-conditioned one ($\kappa \sim 10^6$ ) needs millions. Improving conditioning — through preconditioning, normalization, or regularization — is one of the most effective practical tricks in optimization.

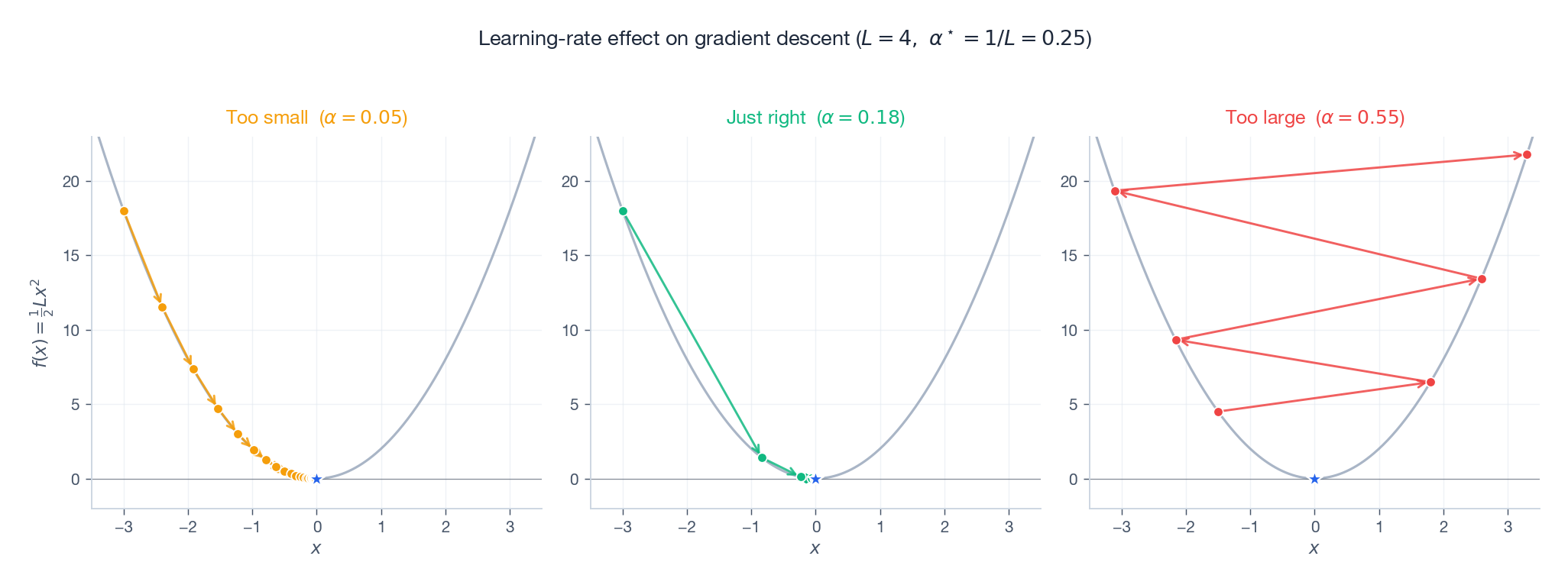

The Learning Rate is a Choice You Cannot Avoid#

Gradient descent has one knob: the step size $\alpha$ . For the same convex quadratic, three choices produce three completely different stories.

The arithmetic for the 1D quadratic $f(x) = \tfrac{L}{2} x^2$ makes the picture exact. The update is $x^{(k+1)} = (1 - \alpha L) x^{(k)}$ , so:

- $0 < \alpha < 1/L$ : contracting but slowly — many tiny steps.

- $\alpha = 1/L$ : optimal one-step contraction; on a 1D quadratic this jumps straight to $0$ .

- $1/L < \alpha < 2/L$ : still convergent but oscillating across the minimum.

- $\alpha > 2/L$ : $|1 - \alpha L| > 1$ , so iterates amplify and diverge.

The same dichotomy holds in many dimensions, with $L$ replaced by the largest Hessian eigenvalue. This is the single most important practical fact about gradient descent: the right learning rate is set by curvature, not by guessing.

Momentum and Nesterov Acceleration#

On long, narrow valleys gradient descent zig-zags across the walls instead of running down the floor. Momentum methods fix this by averaging past gradients, damping the oscillations and accumulating speed in consistent directions.

$$v^{(k+1)} = \beta v^{(k)} - \alpha \nabla f(x^{(k)}), \qquad x^{(k+1)} = x^{(k)} + v^{(k+1)}. \tag{12}$$ $$x^{(k+1)} = y^{(k)} - \alpha \nabla f(y^{(k)}), \qquad y^{(k+1)} = x^{(k+1)} + \beta_k\big(x^{(k+1)} - x^{(k)}\big). \tag{13}$$ $$f(x^{(k)}) - f(x^\star) \;=\; O(1/k^2). \tag{14}$$The bound (14) is provably optimal: no first-order method (one that only queries $\nabla f$ ) can do asymptotically better in the worst case. This is one of the genuine surprises of convex optimization — a tiny algorithmic twist (look ahead, then step) gets you from $O(1/k)$ to $O(1/k^2)$ for free.

Adaptive Learning Rates and Adam#

Plain gradient descent uses one global step size for every coordinate. In practice, different coordinates have wildly different effective curvatures — for instance, in a text model, common-word embeddings receive frequent gradient updates while rare-word embeddings receive almost none. Adaptive methods give each coordinate its own learning rate, scaled by the recent magnitude of its gradient.

$$ \begin{aligned} m^{(k+1)} &= \beta_1 m^{(k)} + (1-\beta_1)\, g^{(k)} \\ v^{(k+1)} &= \beta_2 v^{(k)} + (1-\beta_2)\, (g^{(k)})^{\odot 2} \\ x^{(k+1)} &= x^{(k)} \;-\; \frac{\alpha\, \hat m^{(k+1)}}{\sqrt{\hat v^{(k+1)}} + \varepsilon} \end{aligned} $$with bias-corrected estimates $\hat m^{(k+1)} = m^{(k+1)} / (1 - \beta_1^{k+1})$ and $\hat v^{(k+1)} = v^{(k+1)} / (1 - \beta_2^{k+1})$ . The standard hyperparameters $(\beta_1, \beta_2, \alpha, \varepsilon) = (0.9, 0.999, 10^{-3}, 10^{-8})$ work astonishingly well across deep learning workloads, which is part of why Adam became the default optimizer for transformers.

Stochastic Gradient Descent#

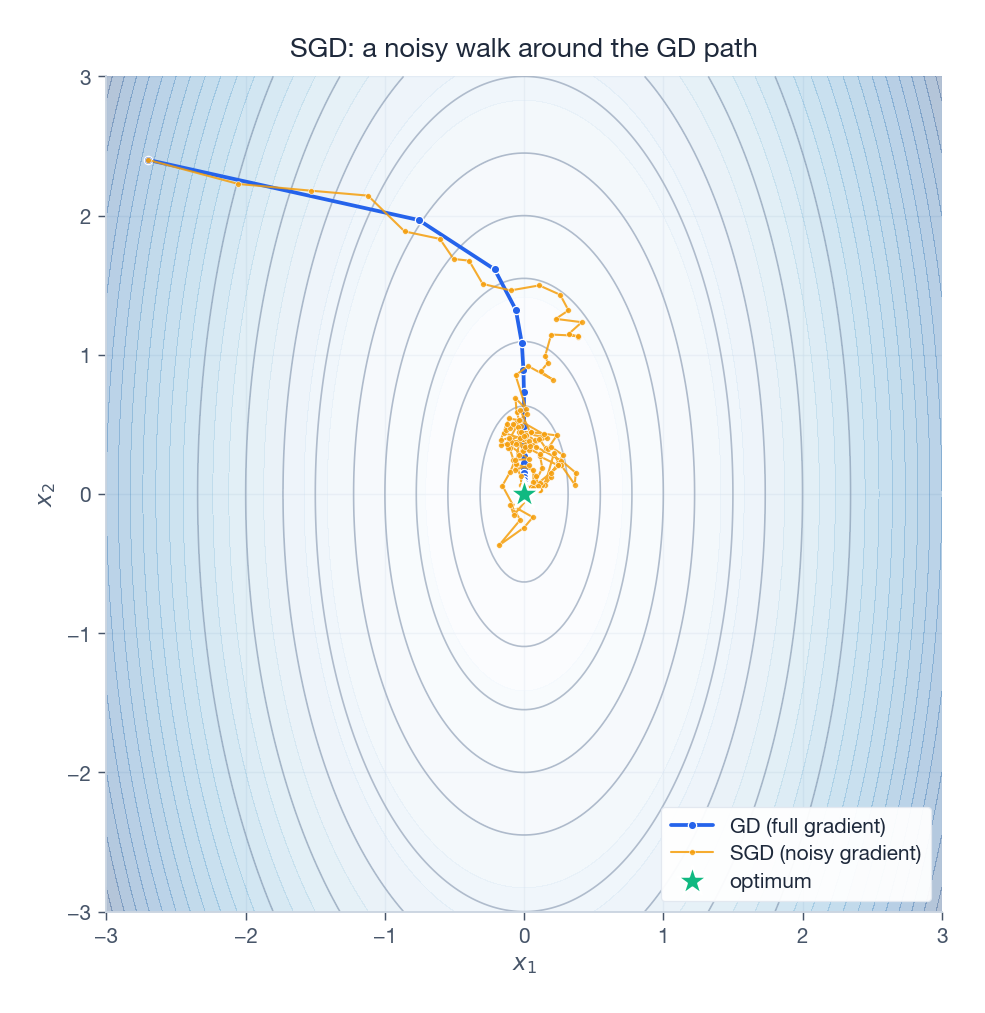

In large-scale ML, computing the full gradient $\nabla f(x) = \tfrac{1}{N}\sum_{i=1}^N \nabla f_i(x)$ over millions of examples is prohibitive. Stochastic gradient descent (SGD) replaces the full sum by a random sample (a mini-batch), trading a noisy step for a much cheaper one.

The picture below shows the qualitative difference: the deterministic GD path is smooth and direct; the SGD path is a noisy walk that stays close to the GD trajectory on average but jiggles around it constantly.

For convex objectives, SGD converges at $O(1/\sqrt{k})$ with constant step size and $O(1/k)$ with diminishing step size — slower per-step than GD, but each step is $N$ times cheaper, so wall-clock convergence is dramatically better. There is also a soft benefit in non-convex settings: noise helps SGD escape shallow saddle points and narrow local minima, which is part of why deep networks trained with SGD generalize better than the same networks trained to a sharper minimum with full-batch methods.

Second-Order Methods#

Newton’s Method#

$$f(x) \;\approx\; f(x^{(k)}) + \nabla f^T (x - x^{(k)}) + \tfrac{1}{2} (x - x^{(k)})^T \nabla^2 f \,(x - x^{(k)}).$$ $$\Delta x = -\big[\nabla^2 f(x^{(k)})\big]^{-1} \nabla f(x^{(k)}). \tag{18}$$ $$\|x^{(k+1)} - x^\star\| \;\leq\; C\, \|x^{(k)} - x^\star\|^2. \tag{19}$$Quadratic convergence is qualitatively different from anything we have seen so far: roughly, the number of correct digits doubles each iteration. Once you are close, Newton finishes in five or six steps to machine precision.

The catch is in three places. (i) Per-iteration cost is $O(n^3)$ to factor the Hessian — prohibitive for $n > 10^4$ . (ii) Storage is $O(n^2)$ for the Hessian itself. (iii) If $\nabla^2 f$ is not PD (which happens away from convex regions), the Newton step may not even decrease $f$ , so it has to be regularized into something like a trust-region step.

Quasi-Newton: BFGS and L-BFGS#

Quasi-Newton methods aim for “almost” Newton-like behavior at first-order cost. Instead of computing the Hessian, they approximate its inverse using only the gradients we have already seen.

$$H^{(k+1)} = (I - \rho_k s_k y_k^T)\, H^{(k)} \,(I - \rho_k y_k s_k^T) + \rho_k s_k s_k^T, \tag{20}$$where $s_k = x^{(k+1)} - x^{(k)}$ , $y_k = \nabla f^{(k+1)} - \nabla f^{(k)}$ , and $\rho_k = 1/(y_k^T s_k)$ . The update is engineered so that $H^{(k+1)}$ satisfies the secant equation $H^{(k+1)} y_k = s_k$ (the inverse-Hessian analogue of how the true inverse Hessian relates gradient differences to step differences) and remains symmetric positive definite.

For large $n$ , even storing $H$ is impractical, so L-BFGS keeps only the last $m \approx 5$ –$20$ pairs $(s_i, y_i)$ and applies $H g$ implicitly through a recursive two-loop formula. This is the standard workhorse for medium-to-large smooth convex problems where you want better-than-GD convergence without the Hessian cost.

| Method | Cost per iter | Convergence | Best for |

|---|---|---|---|

| GD | $O(n)$ | $O(1/k)$ | Large-scale smooth |

| Nesterov | $O(n)$ | $O(1/k^2)$ | Smooth convex |

| Newton | $O(n^3)$ | Quadratic | $n < 10^4$ , high accuracy |

| BFGS | $O(n^2)$ | Superlinear | Medium scale |

| L-BFGS | $O(n)$ | Superlinear | Large smooth convex |

| SGD/Adam | $O(n)$ /step | $O(1/\sqrt{k})$ | Deep learning, huge $N$ |

Constrained Optimization and Duality#

Problem Setup#

$$\min_x f(x) \quad \text{s.t.} \quad g_i(x) \leq 0 \;\; (i = 1, \ldots, m), \quad h_j(x) = 0 \;\; (j = 1, \ldots, p). \tag{21}$$We assume $f$ and $g_i$ are convex, and $h_j$ are affine.

The Lagrangian and the Dual Problem#

$$\mathcal{L}(x, \lambda, \nu) = f(x) + \sum_{i=1}^m \lambda_i g_i(x) + \sum_{j=1}^p \nu_j h_j(x). \tag{22}$$The dual function $d(\lambda, \nu) = \min_x \mathcal{L}(x, \lambda, \nu)$ is concave in $(\lambda, \nu)$ regardless of whether the original problem is convex (it is the pointwise minimum of affine functions of $(\lambda, \nu)$ ).

$$d(\lambda, \nu) \;\leq\; f(x^\star). \tag{23}$$Proof. For any feasible $x$ , $\sum_i \lambda_i g_i(x) \leq 0$ (because $\lambda_i \geq 0$ and $g_i(x) \leq 0$ ) and $\sum_j \nu_j h_j(x) = 0$ , so $\mathcal{L}(x, \lambda, \nu) \leq f(x)$ . Taking the infimum over $x$ gives $d(\lambda, \nu) \leq f(x)$ , and since this holds for every feasible $x$ , $d(\lambda, \nu) \leq f(x^\star)$ . $\square$

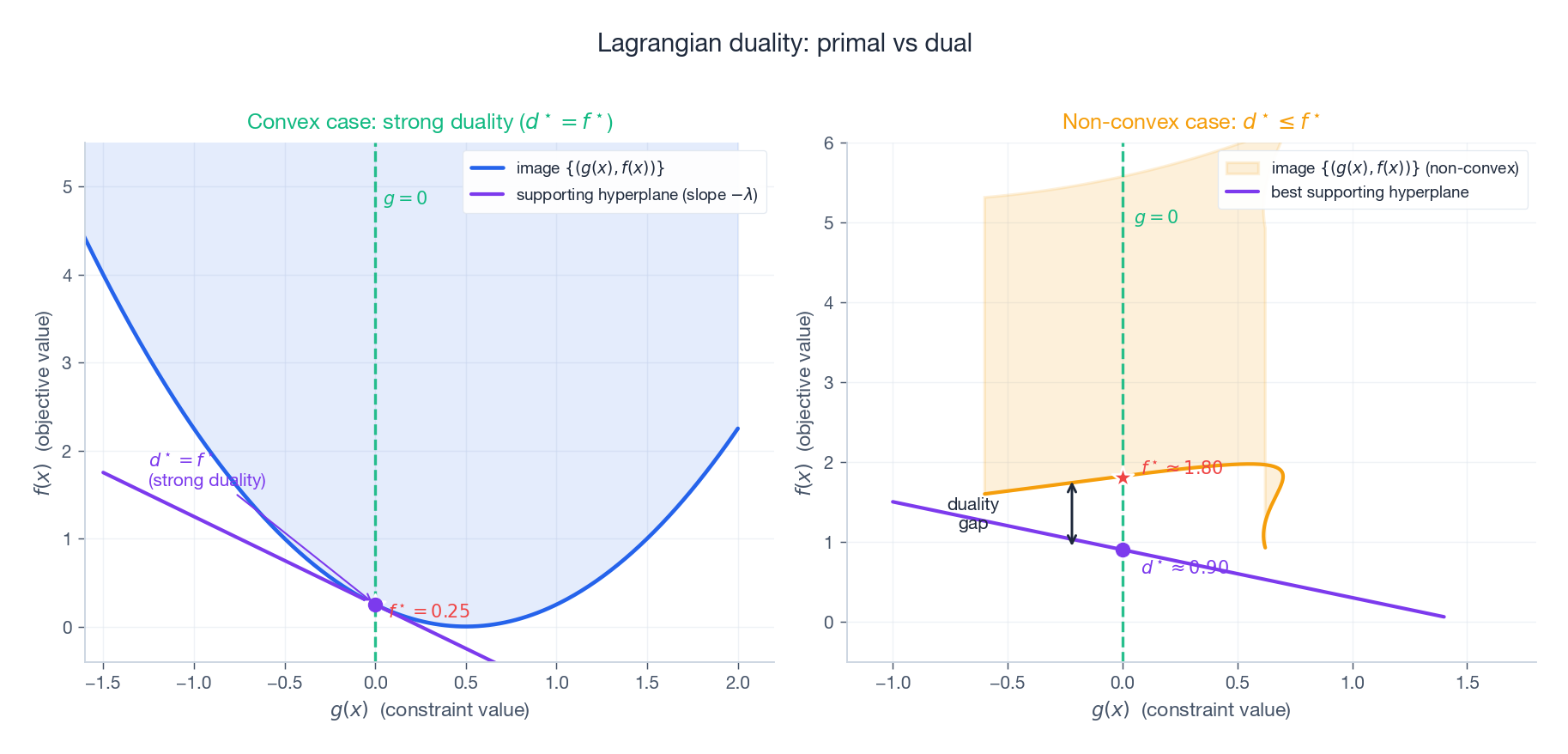

The dual problem $\max_{\lambda \geq 0, \nu}\, d(\lambda, \nu)$ has optimal value $d^\star \leq f^\star$ . The gap $f^\star - d^\star \geq 0$ is the duality gap. When $f^\star = d^\star$ we say strong duality holds.

Slater’s condition. For convex problems, strong duality holds whenever there exists a strictly feasible point — some $\tilde x$ with $g_i(\tilde x) < 0$ for all inequality constraints. (A few mild technical assumptions cover the equality constraints.) Strong duality is what makes the dual problem useful: solving it gives the same optimal value as the primal, often with a simpler structure.

The geometric picture is in the figure below. The image set $\{(g(x), f(x)) : x \in \mathbb{R}^n\}$ tells us everything: $f^\star$ is the smallest $f$ -value attained at $g \leq 0$ , and $d^\star$ is the highest $f$ -axis intercept of any non-vertical hyperplane that supports the image set from below. When the image is convex these two values coincide; when it is not, a “notch” can open above the supporting hyperplane and create a duality gap.

KKT Conditions#

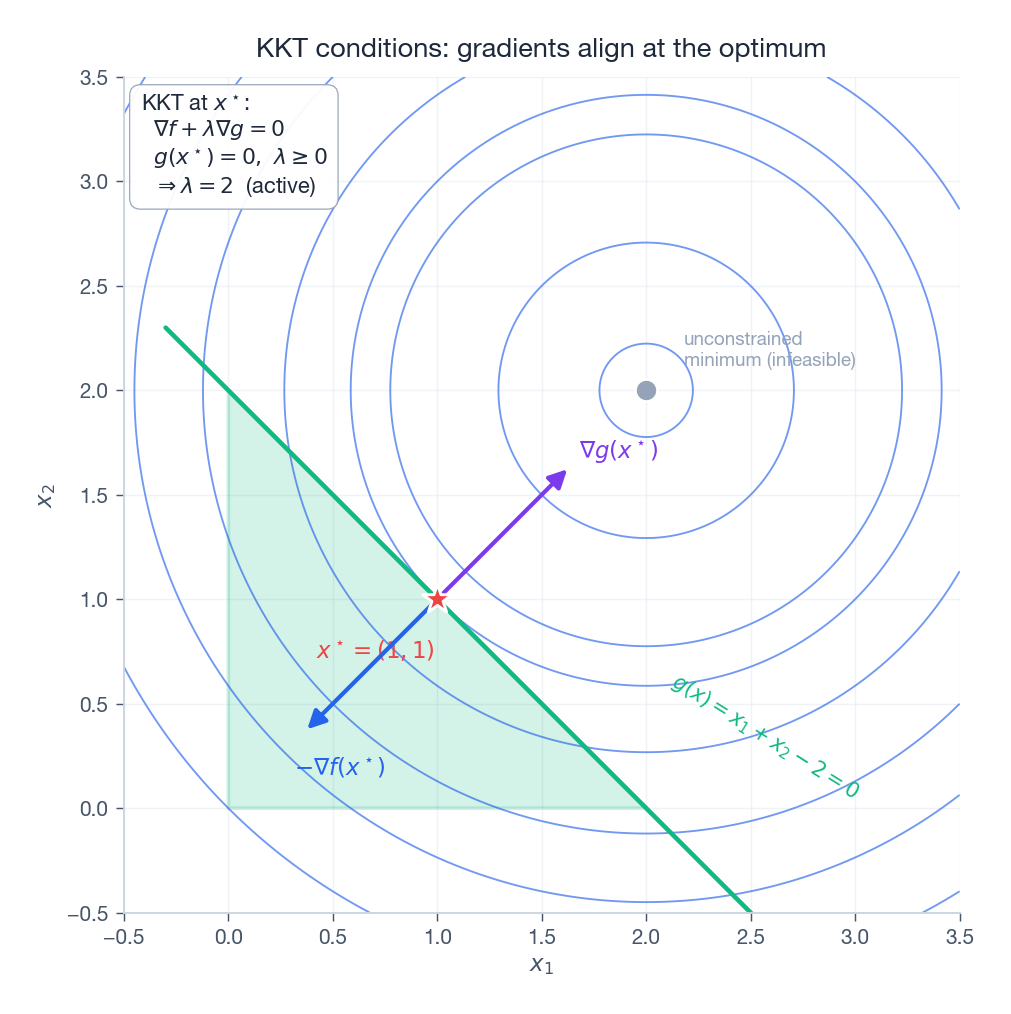

When strong duality holds, the optimal primal and dual variables jointly satisfy a small set of equations and inequalities — the Karush-Kuhn-Tucker (KKT) conditions.

Theorem 10 (KKT). Suppose strong duality holds and all functions are differentiable. Then $(x^\star, \lambda^\star, \nu^\star)$ is primal-dual optimal if and only if

- Primal feasibility: $g_i(x^\star) \leq 0$ and $h_j(x^\star) = 0$ .

- Dual feasibility: $\lambda_i^\star \geq 0$ .

- Complementary slackness: $\lambda_i^\star\, g_i(x^\star) = 0$ for all $i$ .

- Stationarity: $\nabla f(x^\star) + \sum_i \lambda_i^\star \nabla g_i(x^\star) + \sum_j \nu_j^\star \nabla h_j(x^\star) = 0$ .

For convex problems satisfying Slater’s condition, KKT is both necessary and sufficient — solving these equations is equivalent to solving the original optimization problem.

The geometry of the stationarity condition is striking. At the optimum, $-\nabla f(x^\star)$ (the direction in which the objective wants to move) must lie in the cone of active-constraint normals. If it didn’t, you could find a feasible descent direction and the point wouldn’t be optimal. The figure makes this concrete on a 2D example: at the constrained optimum on a half-plane, the negative objective gradient and the constraint gradient point in exactly opposite directions, balanced by the multiplier.

Complementary slackness is the most intuitively powerful KKT clause: for each inequality, either the constraint is active ($g_i(x^\star) = 0$ ) or its multiplier is zero. Inactive constraints contribute nothing to the optimum — they could be dropped without changing the answer. This single observation gives the support-vector machine (SVM) its name: in the SVM dual, only training points exactly on the margin have nonzero multipliers, and those are the support vectors. Everything else is dead weight.

ADMM: Splitting Hard Problems Into Easy Ones#

$$\min_{x, z}\, f(x) + g(z) \quad \text{s.t.} \quad A x + B z = c. \tag{24}$$ $$ \begin{aligned} x^{(k+1)} &= \arg\min_x\, \Big[f(x) + \tfrac{\rho}{2}\|Ax + Bz^{(k)} - c + u^{(k)}\|^2\Big], \\ z^{(k+1)} &= \arg\min_z\, \Big[g(z) + \tfrac{\rho}{2}\|Ax^{(k+1)} + Bz - c + u^{(k)}\|^2\Big], \\ u^{(k+1)} &= u^{(k)} + Ax^{(k+1)} + Bz^{(k+1)} - c. \end{aligned} $$ADMM is not the fastest algorithm in any single regime, but it is remarkably robust and naturally distributable, which is why it dominates large-scale structured problems.

Application: Lasso. The Lasso problem $\min \tfrac{1}{2}\|y - X\beta\|^2 + \lambda\|\beta\|_1$ becomes an ADMM problem by introducing a copy: $\min \tfrac{1}{2}\|y - X\beta\|^2 + \lambda\|z\|_1$ s.t. $\beta = z$ . Then

- the $\beta$ -update is a ridge regression with closed form,

- the $z$ -update is the soft-thresholding operator, applied coordinate-wise:

Both subproblems are essentially free, and the iteration produces the exact Lasso solution. The same recipe works for nuclear-norm minimization, total-variation denoising, consensus optimization across many machines, and more.

Code: Optimization Algorithms#

A minimal but working implementation of GD, Newton, BFGS, and ADMM-Lasso:

| |

FAQ#

Q1: Why study convex optimization if deep learning is non-convex?

For three reasons that compound. First, many of the workhorses of ML — linear and ridge regression, logistic regression, SVMs, most classical statistical estimators — really are convex. Second, near a local minimum, any sufficiently smooth loss looks quadratic, so the convex convergence rates apply locally. Third, modern optimizers like Adam, SGD with momentum, and AdaGrad were all designed and analyzed in the convex setting, then transplanted to deep learning; understanding their convex roots tells you when they are likely to misbehave on a non-convex loss.

Q2: What does the condition number $\kappa$ actually mean?

$\kappa = L / \mu$ measures how stretched the level sets are. $\kappa = 1$ means perfectly spherical contours, and gradient descent finishes in one step. $\kappa = 100$ means the bowl is 100× longer in one direction than another, and gradient descent zig-zags. $\kappa \sim 10^6$ means it bounces nearly perpendicular to the descent direction and crawls. Ridge regression’s secret weapon is conditioning: adding $\lambda I$ to $X^T X$ raises the smallest eigenvalue from near zero to at least $\lambda$ , replacing $\kappa = \lambda_{\max}/\lambda_{\min}$ with $\kappa = (\lambda_{\max}+\lambda)/(\lambda_{\min}+\lambda)$ , which can be many orders of magnitude smaller.

Q3: How do I choose an algorithm for a new problem?

Follow the structure of the problem.

| Situation | Algorithm |

|---|---|

| Small smooth ($n < 10^3$ ) | BFGS or Newton |

| Medium smooth ($n < 10^5$ ) | L-BFGS |

| Large-scale or deep learning | SGD, Adam |

| Non-smooth + structured (e.g. L1) | Proximal gradient, ADMM |

| Distributed / consensus | ADMM |

| Constrained convex | Interior-point (CVXPY) |

When in doubt, start with L-BFGS for smooth problems, ADMM for non-smooth structured problems, and Adam for anything involving a neural network.

Exercises#

Exercise 1. Show that $f(x_1, x_2) = x_1^2 - x_1 x_2 + x_2^2$ is strictly convex.

Solution. The Hessian is $\begin{pmatrix} 2 & -1 \\ -1 & 2\end{pmatrix}$ with eigenvalues $1$ and $3$ . Both are positive, so the Hessian is positive definite and $f$ is strictly convex.

Exercise 2. Solve $\min x_1^2 + x_2^2$ subject to $x_1 + x_2 \geq 1$ via the KKT conditions.

Solution. Write the constraint as $g(x) = 1 - x_1 - x_2 \leq 0$ . Stationarity: $2x_i - \lambda = 0$ , so $x_1 = x_2 = \lambda/2$ . If the constraint were inactive then $\lambda = 0$ and $x = 0$ , which violates the constraint; so the constraint is active, $x_1 + x_2 = 1$ , giving $x_1 = x_2 = 1/2$ and $\lambda = 1$ .

Exercise 3. Derive GD’s convergence rate for $f(x) = \tfrac{1}{2} x^T A x - b^T x$ with $A \succ 0$ .

Solution. The error $e^{(k)} = x^{(k)} - x^\star$ satisfies $e^{(k+1)} = (I - \alpha A)\, e^{(k)}$ . Convergence requires $\|I - \alpha A\| < 1$ in spectral norm, i.e. $|1 - \alpha \lambda_i| < 1$ for all eigenvalues $\lambda_i$ of $A$ , giving $0 < \alpha < 2/\lambda_{\max}$ . The optimal step is $\alpha^\star = 2/(\lambda_{\min} + \lambda_{\max})$ , with rate $(\kappa - 1)/(\kappa + 1)$ .

Exercise 4. Use Jensen’s inequality to prove the AM-GM inequality $\tfrac{1}{n}\sum x_i \geq (\prod x_i)^{1/n}$ for $x_i > 0$ .

Solution. The function $-\log$ is convex on $(0, \infty)$ . By Jensen, $-\log\!\big(\tfrac{1}{n}\sum x_i\big) \leq \tfrac{1}{n}\sum (-\log x_i) = -\log(\prod x_i)^{1/n}$ . Negating and exponentiating gives the AM-GM inequality.

Summary#

| Concept | Key Result | Why it matters |

|---|---|---|

| Convexity | Local min = global min | Optimization becomes tractable |

| First-order condition | $f(y) \geq f(x) + \nabla f(x)^T(y - x)$ | Gradient descent has a global lower bound |

| GD convergence | $O(1/k)$ smooth, $(1 - \mu/L)^k$ strongly convex | Speed is set by the condition number |

| Nesterov | $O(1/k^2)$ — provably optimal first-order | Acceleration for free |

| Newton | Quadratic convergence near $x^\star$ | Few steps if you can afford the Hessian |

| KKT | Necessary and sufficient (convex + Slater) | Characterizes constrained optima |

| ADMM | Splits a hard problem into two easy alternating subproblems | Distributed and structured optimization |

Convex optimization is the core that the rest of this series will build on: linear regression, logistic regression, SVMs, and many EM-style algorithms all reduce to convex problems for which the algorithms above are the right answer.

What’s next#

Four chapters of scaffolding, done: the language of analysis, of vectors, of probability, of optimization. From the next chapter onward I start stacking all four on the same concrete algorithm — linear regression.

Linear regression looks simple, but it is the “microscope slide” of supervised learning: its parameters are vectors (linear algebra), its loss is a Gaussian likelihood (probability), its solution is convex optimization (this chapter), and its generalization decomposes as bias-variance (chapter one). I will keep returning to this four-language stacking — not for show, but because it is only when each algorithm is dissected into these four layers that you can see why “swapping the loss to Huber only touches one layer” or why “adding L1 touches both the prior and the optimizer”. Linear regression is the first full rehearsal of that habit.

References#

- Boyd, S. & Vandenberghe, L. (2004). Convex Optimization. Cambridge University Press.

- Nocedal, J. & Wright, S. J. (2006). Numerical Optimization (2nd ed.). Springer.

- Nesterov, Y. (2004). Introductory Lectures on Convex Optimization. Springer.

- Kingma, D. P. & Ba, J. (2015). Adam: A Method for Stochastic Optimization. ICLR.

- Boyd, S., Parikh, N., Chu, E., Peleato, B., & Eckstein, J. (2011). Distributed Optimization and Statistical Learning via ADMM. Foundations and Trends in ML.

- Bubeck, S. (2015). Convex Optimization: Algorithms and Complexity. Foundations and Trends in ML.

- Bottou, L., Curtis, F. E., & Nocedal, J. (2018). Optimization Methods for Large-Scale Machine Learning. SIAM Review.

ML Math Derivations 20 parts

- 01 ML Math Derivations (1): Introduction and Mathematical Foundations

- 02 ML Math Derivations (2): Linear Algebra and Matrix Theory

- 03 ML Math Derivations (3): Probability Theory and Statistical Inference

- 04 ML Math Derivations (4): Convex Optimization Theory you are here

- 05 ML Math Derivations (5): Linear Regression

- 06 ML Math Derivations (6): Logistic Regression and Classification

- 07 ML Math Derivations (7): Decision Trees

- 08 ML Math Derivations (8): Support Vector Machines

- 09 ML Math Derivations (9): Naive Bayes

- 10 ML Math Derivations (10): Semi-Naive Bayes and Bayesian Networks

- 11 ML Math Derivations (11): Ensemble Learning

- 12 ML Math Derivations (12): XGBoost and LightGBM

- 13 ML Math Derivations (13): EM Algorithm and GMM

- 14 ML Math Derivations (14): Variational Inference and Variational EM

- 15 ML Math Derivations (15): Hidden Markov Models

- 16 ML Math Derivations (16): Conditional Random Fields

- 17 ML Math Derivations (17): Dimensionality Reduction and PCA

- 18 ML Math Derivations (18): Clustering Algorithms

- 19 ML Math Derivations (19): Neural Networks and Backpropagation

- 20 ML Math Derivations (20): Regularization and Model Selection