ML Math Derivations (5): Linear Regression

A complete derivation of linear regression from three perspectives -- algebra (the normal equation), geometry (orthogonal projection), and probability (maximum likelihood) -- followed by Ridge, Lasso, gradient methods, and diagnostics, with every claim verified against scikit-learn.

Hook. In 1886 Francis Galton noticed something strange about heredity: children of unusually tall (or short) parents tended to be closer to the average than their parents were. He called this drift toward the mean regression, and the name stuck. The statistical curiosity grew up into the most consequential model in machine learning — not because linear regression is powerful on its own, but because almost every other algorithm (logistic regression, neural networks, kernel methods) is some twist on the same idea: fit a line, but in the right space.

This chapter develops linear regression from three independent starting points — algebra, geometry, and probability — and shows that they all land on the same equation. Then we look at what happens when the assumptions break, and how Ridge, Lasso, and robust losses repair them.

What You Will Learn#

- Setup — how to write the model in matrix form so the math becomes one line.

- Algebra — minimize a quadratic, get the normal equation $w^\* = (X^\top X)^{-1} X^\top y$ .

- Geometry — the same answer falls out of orthogonally projecting $y$ onto $\operatorname{Col}(X)$ .

- Probability — under Gaussian noise, least squares is exactly maximum likelihood.

- Regularization — Ridge (L2) makes the system stable, Lasso (L1) makes it sparse.

- Optimization — when $X^\top X$ is too big to invert, gradient descent steps in.

- Diagnostics — residuals, multicollinearity, outliers, and how to fix each.

Setup: Putting Linear Regression in Matrix Form#

Problem Statement#

$$f(x) = w^\top x + b$$whose predictions are as close as possible (in a sense we’ll make precise) to the observed targets.

$$\tilde x = \begin{pmatrix} x \\\\ 1 \end{pmatrix}, \qquad \tilde w = \begin{pmatrix} w \\\\ b \end{pmatrix}, \qquad f(x) = \tilde w^\top \tilde x.$$From now on we drop the tilde and just write $w^\top x$ , with the understanding that the last component of $x$ is 1.

Matrix Form#

$$X = \begin{bmatrix} x_1^\top \\\\ x_2^\top \\\\ \vdots \\\\ x_m^\top \end{bmatrix} \in \mathbb{R}^{m \times (d+1)}, \qquad y = \begin{bmatrix} y_1 \\\\ y_2 \\\\ \vdots \\\\ y_m \end{bmatrix} \in \mathbb{R}^{m}.$$The vector of all predictions is then simply $\hat y = Xw$ . Our job is to choose $w$ so that $\hat y$ is as close to $y$ as possible.

Perspective 1 — Algebra: The Normal Equation#

The Squared-Error Loss#

$$L(w) = \tfrac{1}{2}\,\|y - Xw\|_2^2 = \tfrac{1}{2}\,(y - Xw)^\top (y - Xw).$$The factor $\tfrac{1}{2}$ exists only to cancel a 2 when we differentiate; it changes nothing else.

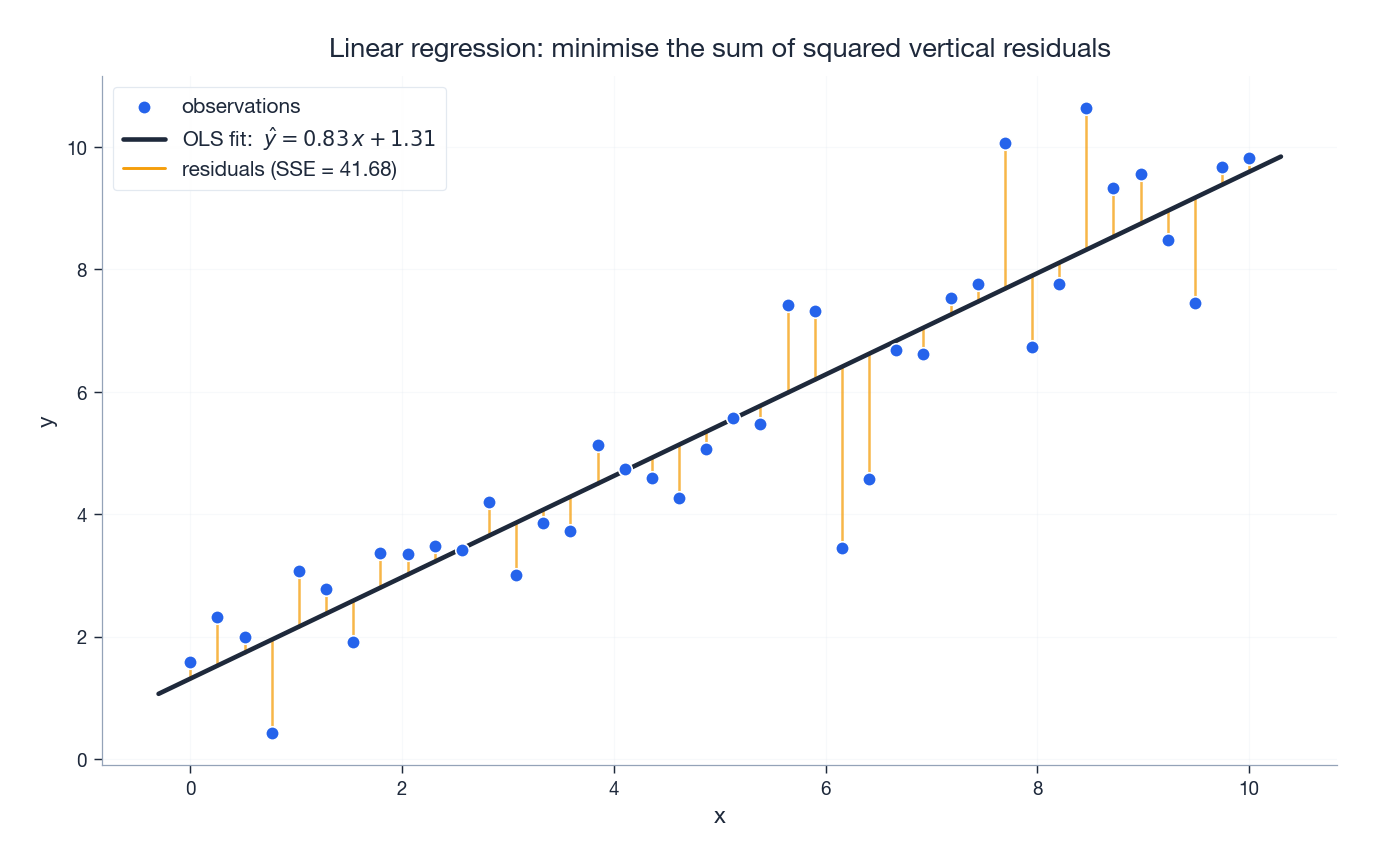

The picture below shows what the loss is actually summing: the squared lengths of the vertical lines from each data point to the fitted line.

Computing the Gradient#

$$L(w) = \tfrac{1}{2}\bigl(y^\top y - 2\,y^\top X w + w^\top X^\top X\,w\bigr).$$ $$\nabla_w L = -X^\top y + X^\top X\, w = X^\top (Xw - y).$$The Normal Equation#

$$\boxed{\,X^\top X\, w = X^\top y\,}.$$This is the normal equation — so named because, geometrically, it asserts that the residual is normal (perpendicular) to every column of $X$ . We’ll see that geometry in a moment.

$$w^\* = (X^\top X)^{-1} X^\top y.$$ $$v^\top X^\top X\, v = \|Xv\|_2^2 > 0$$(strict because full column rank means $Xv \neq 0$ ). A convex quadratic with positive-definite Hessian and zero gradient is at its global minimum. $\square$

When does $X^\top X$ fail to be invertible?

- $m < d+1$ — fewer samples than features (the system is underdetermined),

- columns of $X$ are linearly dependent (perfect multicollinearity).

In both cases the pseudoinverse gives the minimum-norm solution: $w^\* = X^{+} y$ , computed via SVD ($X = U \Sigma V^\top \Rightarrow X^+ = V \Sigma^+ U^\top$ , where $\Sigma^+$ inverts the nonzero singular values and leaves zeros alone).

Perspective 2 — Geometry: Orthogonal Projection#

The Same Answer, A Different Story#

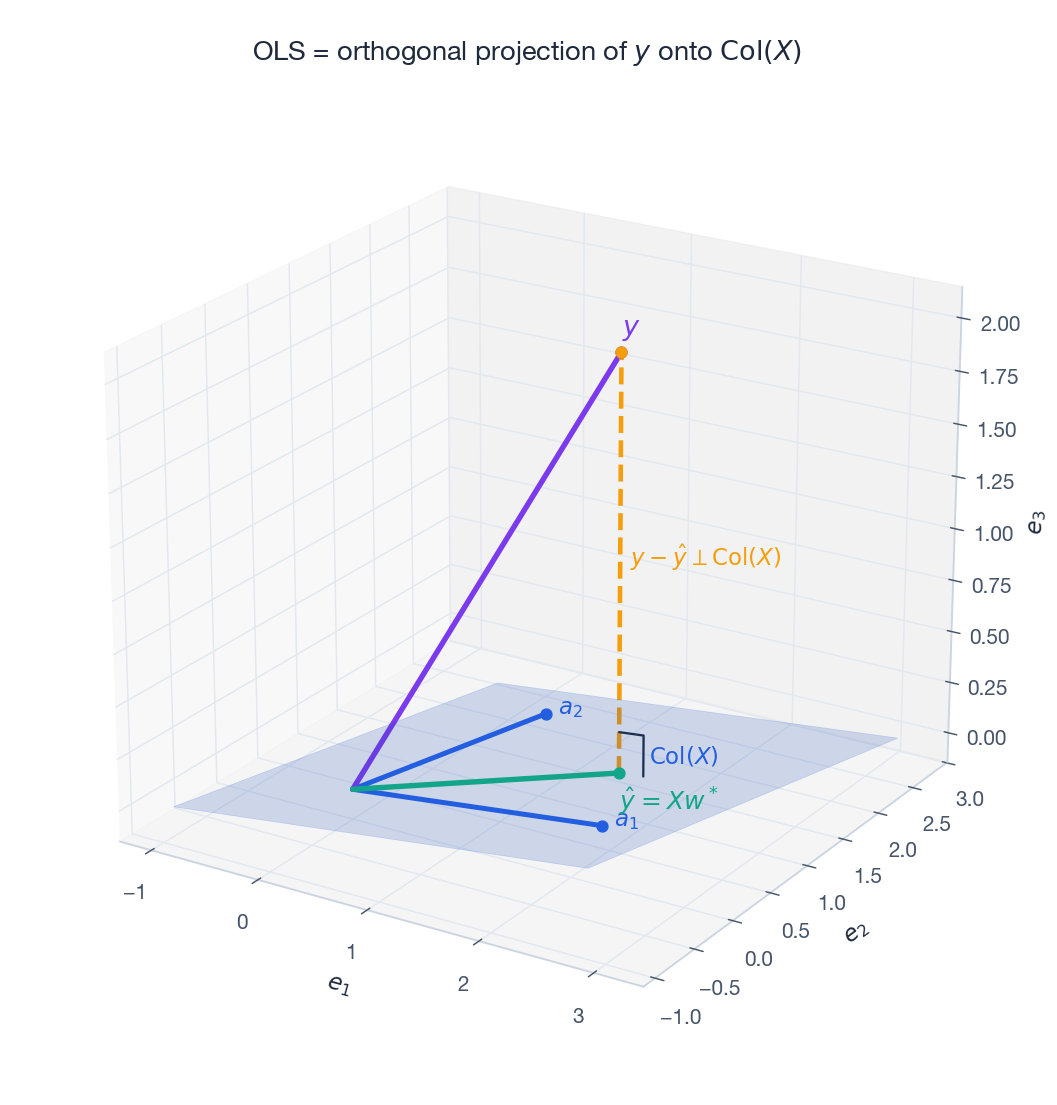

Forget calculus for a moment. The columns of $X$ span a subspace $\operatorname{Col}(X) \subseteq \mathbb{R}^m$ . The vector $\hat y = Xw$ lives somewhere inside that subspace, no matter what $w$ is. We want to find the point in $\operatorname{Col}(X)$ closest to $y$ (in Euclidean distance).

$$X^\top (y - X w^\*) = 0 \quad\Longleftrightarrow\quad X^\top X\, w^\* = X^\top y.$$That’s the normal equation again — this time derived without taking a single derivative.

The figure makes three things visible simultaneously: the columns of $X$ spanning a plane (purple/blue), the target vector $y$ floating above that plane, the projection $\hat y$ on the plane, and the residual $y - \hat y$ shooting straight up at a right angle to the plane. Right angle = optimum.

The Projection Matrix#

It satisfies the two defining properties of an orthogonal projector:

- Idempotent: $H^2 = H$ . Projecting twice does nothing extra.

- Symmetric: $H^\top = H$ . Equivalent to “the projection is orthogonal.”

The residual maker $M = I - H$ projects onto the orthogonal complement; $M y$ is the residual vector. It satisfies $MX = 0$ , formalising “residuals are orthogonal to every feature.”

Perspective 3 — Probability: Maximum Likelihood#

The Linear-Gaussian Model#

$$y_i = w^\top x_i + \epsilon_i, \qquad \epsilon_i \overset{\text{i.i.d.}}{\sim} \mathcal{N}(0, \sigma^2).$$Equivalently, conditional on $x_i$ , the target $y_i$ is Gaussian with mean $w^\top x_i$ and variance $\sigma^2$ .

Likelihood and Log-Likelihood#

$$p(y \mid X, w, \sigma^2) = \prod_{i=1}^{m} \frac{1}{\sqrt{2\pi}\,\sigma}\,\exp\!\left(-\frac{(y_i - w^\top x_i)^2}{2\sigma^2}\right).$$ $$\log p = -\frac{m}{2}\log(2\pi\sigma^2) - \frac{1}{2\sigma^2}\sum_{i=1}^{m}(y_i - w^\top x_i)^2.$$Maximising over $w$ (with $\sigma^2$ fixed) is the same as minimising the sum of squared residuals. So:

Theorem 2. Under the Gaussian-noise model, the MLE for $w$ is exactly the OLS solution: $\hat w_{\text{MLE}} = (X^\top X)^{-1} X^\top y$ .

Differentiating with respect to $\sigma^2$ yields $\hat\sigma^2_{\text{MLE}} = \tfrac{1}{m}\|y - X\hat w\|_2^2$ — the mean residual sum of squares. (This estimator is biased; the unbiased version divides by $m - d - 1$ .)

Bayesian Step: Where Ridge Comes From#

$$\sum_i (y_i - w^\top x_i)^2 + \frac{\sigma^2}{\tau^2}\|w\|_2^2.$$That is Ridge regression, and we just identified its regularisation strength: $\lambda = \sigma^2 / \tau^2$ . A weaker prior (large $\tau$ ) means smaller $\lambda$ .

Regularization: Ridge, Lasso, Elastic Net#

Why Regularize at All#

$$L_{\text{reg}}(w) = \tfrac{1}{2}\|y - Xw\|_2^2 + \lambda \cdot \mathcal{P}(w).$$Ridge Regression (L2)#

$$\boxed{\;\hat w_{\text{Ridge}} = (X^\top X + \lambda I)^{-1} X^\top y.\;}$$The added $\lambda I$ shifts every eigenvalue of $X^\top X$ up by $\lambda$ , so the matrix is always invertible for $\lambda > 0$ — even when $X^\top X$ alone is singular. This is what people mean by “Ridge stabilises the inverse.”

Lasso Regression (L1)#

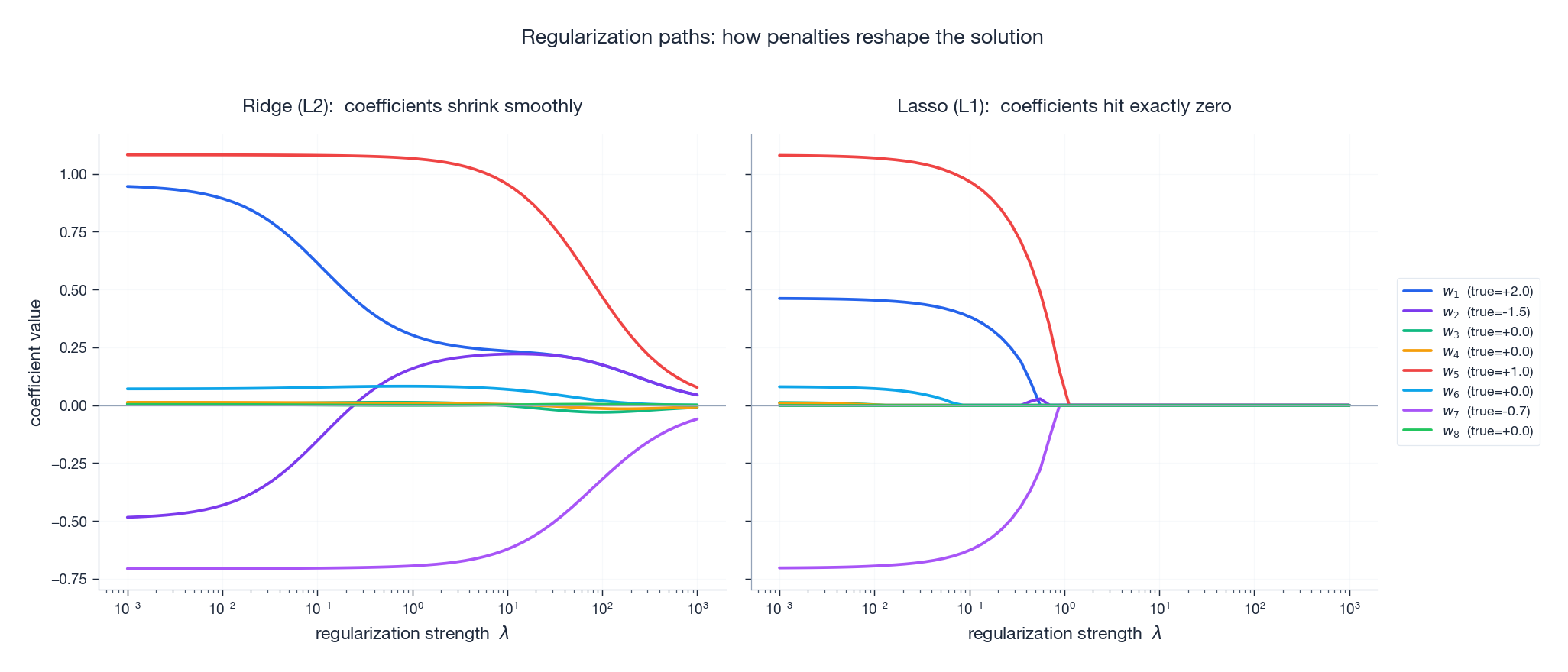

Take $\mathcal{P}(w) = \|w\|_1$ . There is no closed form — $|\cdot|$ is not differentiable at zero — but the optimisation is still convex and is solved efficiently by coordinate descent or proximal gradient. The remarkable property:

Lasso produces exactly-zero coefficients, performing variable selection automatically.

The figure below shows the coefficient paths — how each $w_j$ evolves as $\lambda$ slides from very small (left, equals OLS) to very large (right, every coefficient crushed to zero):

In Ridge, each curve approaches zero asymptotically — it gets small, but never zero. In Lasso, curves hit the axis and stay there. That binary “in or out” behaviour is what makes Lasso a feature selector.

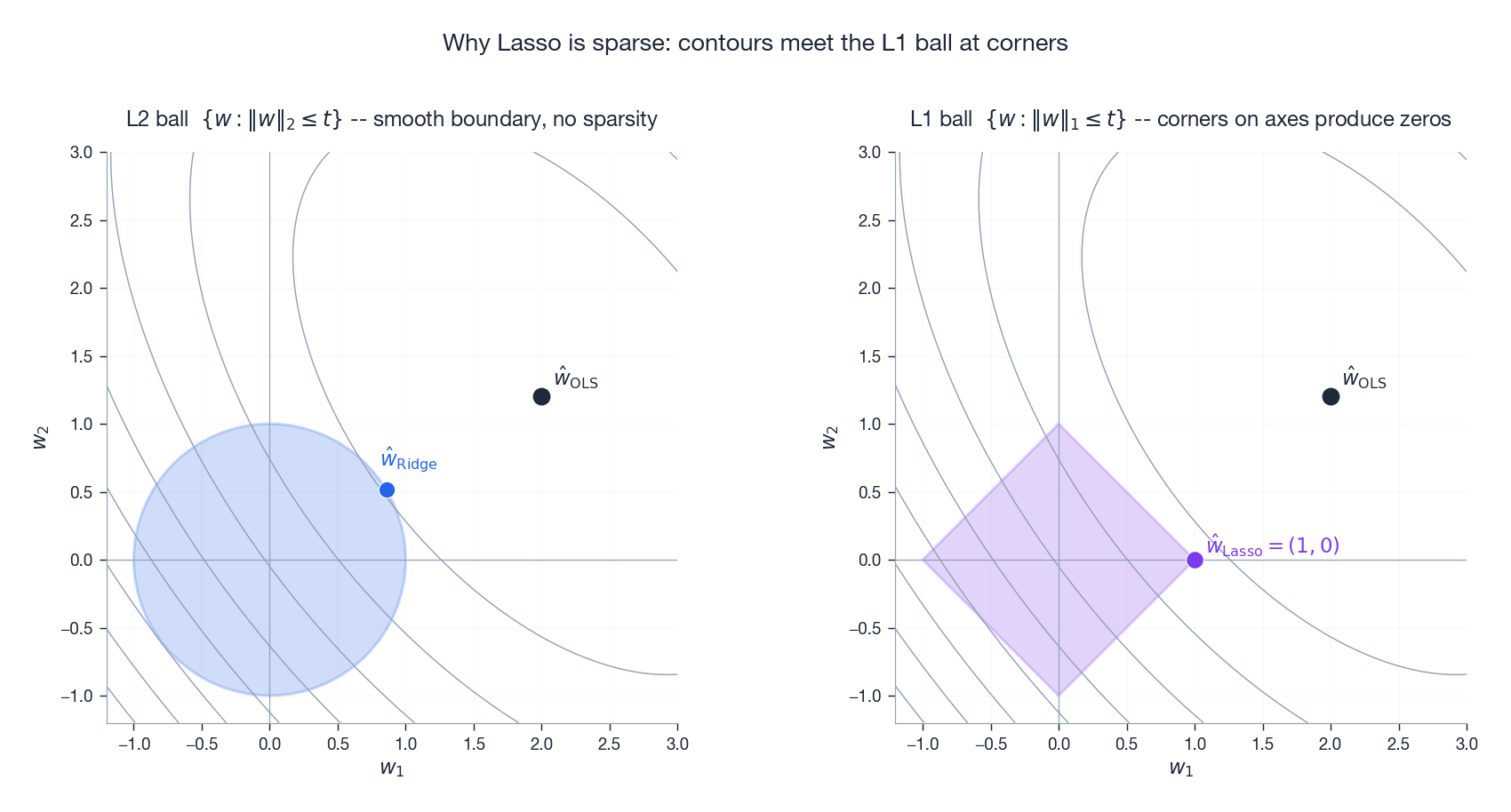

Why Lasso is Sparse: A Geometric Argument#

Rewrite both penalties in constrained form:

- Ridge: minimise $\|y - Xw\|^2$ subject to $\|w\|_2 \le t$ .

- Lasso: minimise $\|y - Xw\|^2$ subject to $\|w\|_1 \le t$ .

The level sets of the loss are ellipsoids around $\hat w_{\text{OLS}}$ . The constraint regions are very different shapes:

The L2 disk has a smooth boundary, so the loss ellipse generally touches it at an interior point of the boundary — both coordinates non-zero. The L1 diamond has corners on the axes, and ellipses tilted in generic directions almost always touch a corner first — giving sparse solutions. The higher the dimension, the more corners and edges the diamond grows, and the more aggressively Lasso zeroes things out.

Elastic Net#

$$\mathcal{P}_{\text{EN}}(w) = \alpha \|w\|_1 + \tfrac{1-\alpha}{2}\|w\|_2^2.$$Keeps the sparsity from L1 and the stability from L2, particularly useful when groups of correlated features would otherwise cause Lasso to pick one arbitrarily.

Optimization: When Closed Form Isn’t Practical#

Computing $(X^\top X)^{-1}$ costs $\mathcal{O}(d^3)$ time and $\mathcal{O}(d^2)$ memory. For $d = 10^6$ , that’s a non-starter. Iterative methods are then the only option.

Batch Gradient Descent (BGD)#

$$w^{(t+1)} = w^{(t)} - \eta \cdot \frac{1}{m} X^\top (X w^{(t)} - y).$$ $$L(w^{(t)}) - L(w^\*) \le \left(\frac{\kappa - 1}{\kappa + 1}\right)^{2t} \bigl(L(w^{(0)}) - L(w^\*)\bigr),$$where $\kappa = \lambda_{\max}(X^\top X) / \lambda_{\min}(X^\top X)$ is the condition number. Ill-conditioned problems (large $\kappa$ ) crawl; this is why people standardise features before running gradient descent.

Worked Numerical Example: one BGD step on a 3-point fit#

Take three points $(x_i, y_i) = (1, 2), (2, 2), (3, 4)$ and fit $\hat y = wx$ (no intercept) by minimising $L(w) = \tfrac{1}{2m}\sum (wx_i - y_i)^2$ . The gradient is $\nabla L = \tfrac{1}{m}\sum (w x_i - y_i) x_i$ .

Start from $w^{(0)} = 0$ . Predictions are $(0, 0, 0)$ , residuals $(0-2, 0-2, 0-4) = (-2, -2, -4)$ , gradient $\tfrac{1}{3}((-2)\cdot 1 + (-2)\cdot 2 + (-4)\cdot 3) = \tfrac{1}{3}(-2 - 4 - 12) = -6$ .

Pick step size $\eta = 0.1$ . Update: $w^{(1)} = 0 - 0.1 \cdot (-6) = 0.6$ . New residuals $(0.6-2, 1.2-2, 1.8-4) = (-1.4, -0.8, -2.2)$ , gradient $\tfrac{1}{3}((-1.4) + (-0.8)\cdot 2 + (-2.2)\cdot 3) = \tfrac{1}{3}(-1.4 - 1.6 - 6.6) = -3.2$ . Then $w^{(2)} = 0.6 + 0.32 = 0.92$ . Continue: $w^{(3)} \approx 1.09$ , $w^{(4)} \approx 1.18$ , converging to the closed-form $w^\star = \sum x_i y_i / \sum x_i^2 = 16/14 \approx 1.143$ . The error halves roughly every two steps because the eigenvalue of $\tfrac{1}{m} X^\top X$ here is $14/3 \approx 4.67$ and $\eta \cdot 4.67 \approx 0.47$ , so the contraction factor is $|1 - 0.47| = 0.53$ per step.

Now try $\eta = 0.5$ : $w^{(1)} = 3.0$ , $w^{(2)} \approx -2.3$ , $w^{(3)} \approx 7.4$ — the iterates oscillate with growing amplitude. The threshold for divergence is $\eta > 2/\lambda_{\max} = 2/4.67 \approx 0.43$ . Above that, BGD blows up; below that, it contracts. Step size is not a free hyperparameter — it has a sharp ceiling set by the Hessian’s largest eigenvalue, and “tune $\eta$ ” in practice means “stay safely under that ceiling.”

Stochastic Gradient Descent (SGD)#

$$w^{(t+1)} = w^{(t)} - \eta \cdot (w^{(t)\top} x_i - y_i)\, x_i.$$- Pros: $\mathcal{O}(d)$ per step, supports streaming/online learning, mild noise can help escape bad regions in non-convex settings.

- Cons: noisy updates require a decreasing learning rate $\eta_t$ (e.g. $\eta_t = \eta_0 / (1 + t)$ ) for almost-sure convergence.

Mini-batch GD#

The pragmatic middle ground: average the gradient over a batch of $B \in [32, 512]$ samples. Combines vectorised speed with SGD’s variance reduction. This is the version used in essentially every deep-learning library.

Model Evaluation and Diagnostics#

Metrics#

For predictions $\hat y_i$ on a test set:

- MSE $= \frac{1}{n}\sum (y_i - \hat y_i)^2$ — penalises large errors quadratically.

- RMSE $= \sqrt{\text{MSE}}$ — in the original units of $y$ .

- MAE $= \frac{1}{n}\sum |y_i - \hat y_i|$ — more robust to outliers.

- $R^2$ $= 1 - \dfrac{\sum(y_i - \hat y_i)^2}{\sum(y_i - \bar y)^2}$ — fraction of variance explained.

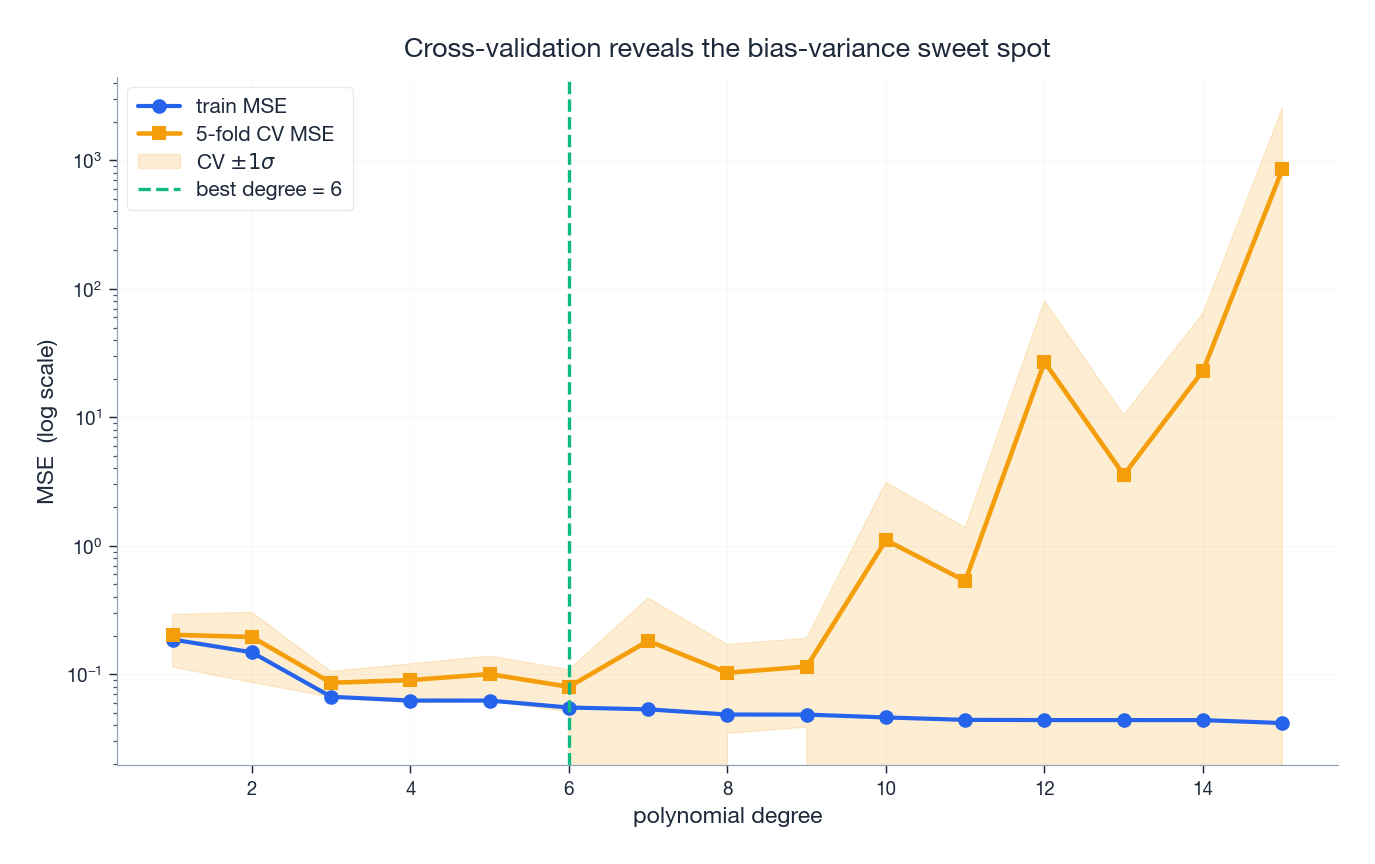

Cross-Validation: Picking Model Capacity#

There is no single “best” polynomial degree, no single “best” $\lambda$ . Cross-validation finds the choice that generalises best by holding out folds of data:

The training error keeps falling as we add capacity (more polynomial terms, smaller $\lambda$ ). The CV error follows a U-shape: too little capacity is biased (underfit), too much is variance-dominated (overfit). The minimum of the CV curve is your model.

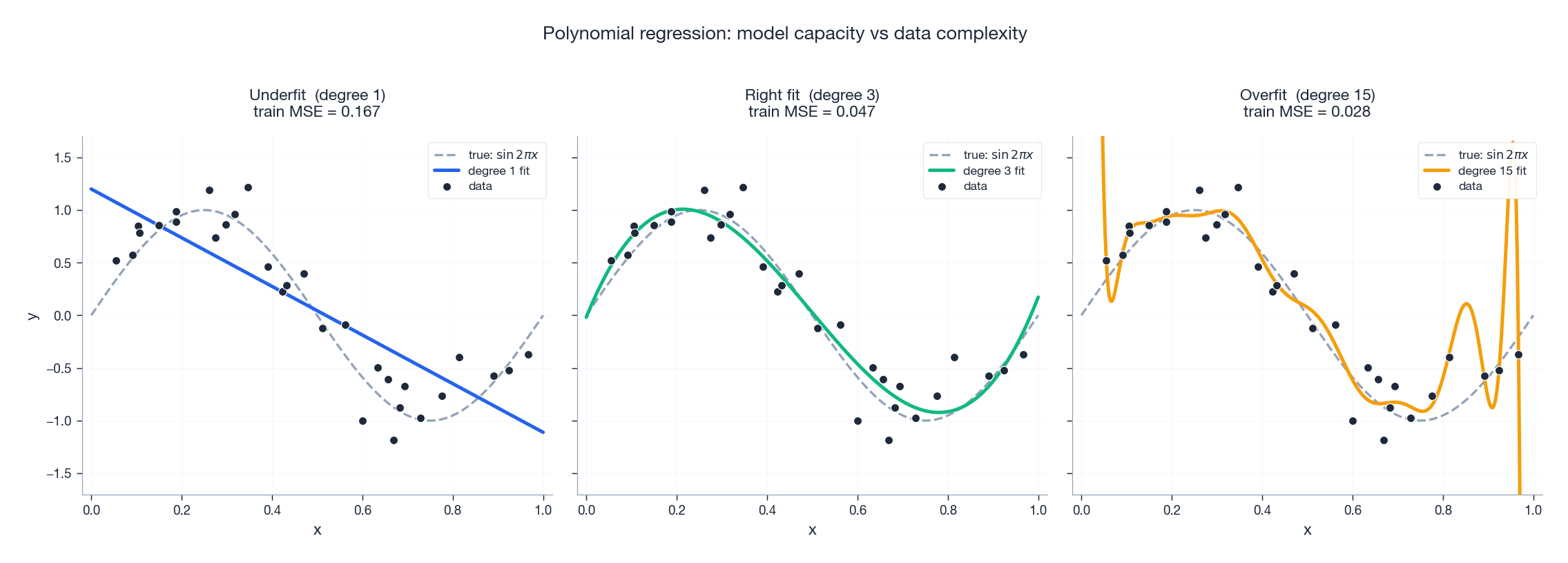

Polynomial Regression: Bias and Variance Made Visible#

Linear regression is “linear in the parameters”, not “linear in the inputs”. Adding polynomial features lets us fit curves while still solving a linear system. The risk is overfitting:

A degree-1 model is too rigid to capture the sine wave (high bias). A degree-3 polynomial nails it. A degree-15 polynomial passes through almost every point but oscillates wildly between them — a classic overfit. Compare the training MSE in each panel: it monotonically decreases. The training error tells you nothing about overfitting; only held-out data does.

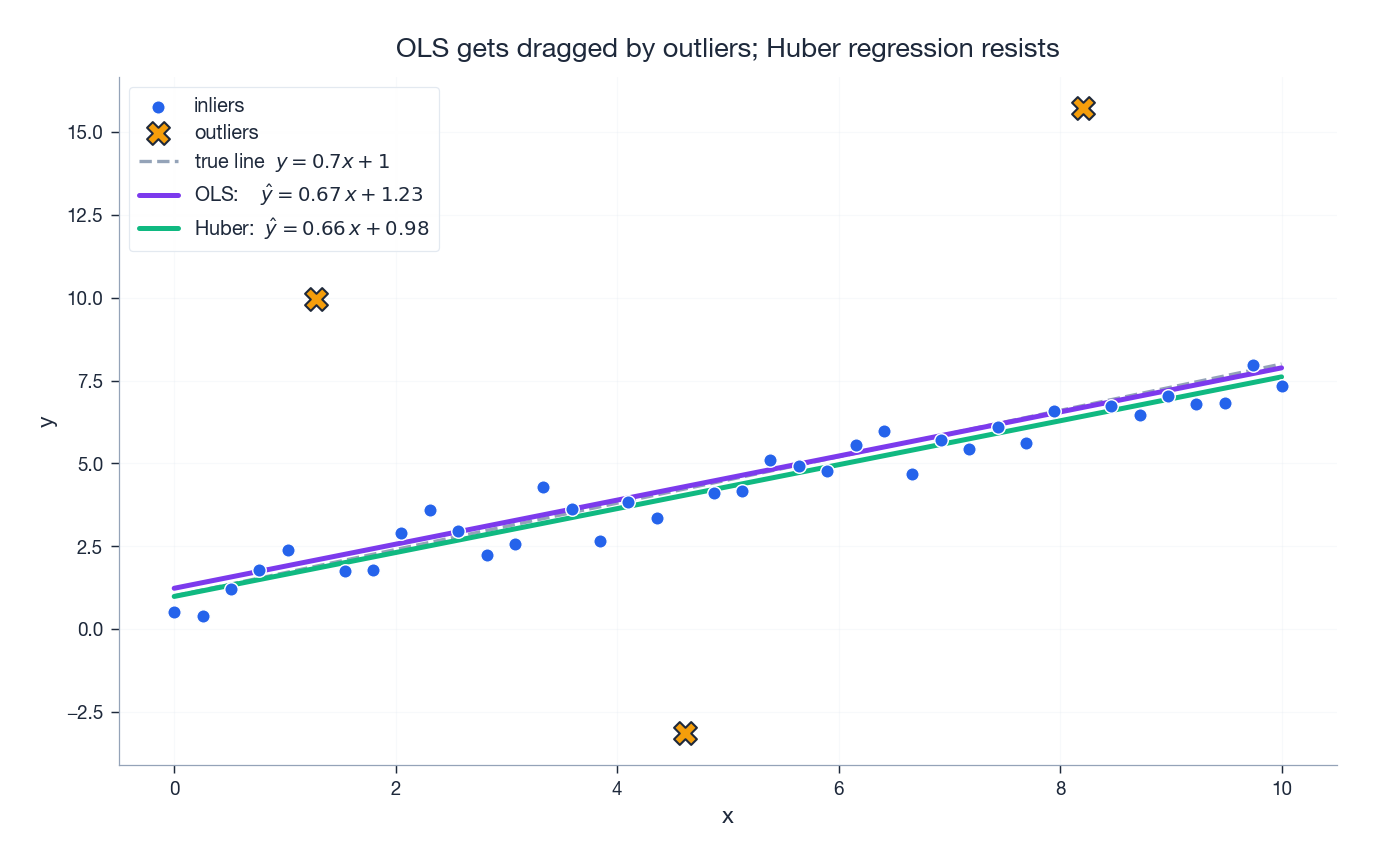

Outlier Sensitivity and Robust Alternatives#

Squared loss has a known weakness: a single outlier with a large residual contributes its squared error to the total, so the model bends to accommodate it. The picture is striking:

The Huber fit (green) almost overlaps the truth despite the same outliers. Other options exist (RANSAC, M-estimators, quantile regression), but Huber is the workhorse: differentiable everywhere, convex, robust.

Q&A: Common Sticking Points#

Q1: Why squared loss instead of absolute loss?#

| Loss | Differentiable? | Closed form? | Robust to outliers? | Implied noise |

|---|---|---|---|---|

| Squared (L2) | everywhere | yes | weak | Gaussian |

| Absolute (L1) | not at 0 | no (LP) | strong | Laplace |

| Huber | everywhere | no | medium | mixed |

Three reasons squared loss dominates: (i) it’s smooth, (ii) it has a closed-form solution, (iii) it corresponds to MLE under the most common noise model. Use Huber when robustness matters.

Q2: Normal equation or gradient descent?#

Use the normal equation when $d \lesssim 10^4$ and $X^\top X$ fits in memory — it’s exact and one-shot.

Use gradient descent when $d$ is large, when you need online updates, or when you’ve added a non-smooth penalty (Lasso) that has no closed form.

Q3: Why does Ridge always have a solution?#

$$v^\top (X^\top X + \lambda I) v = \|Xv\|^2 + \lambda\|v\|^2 \ge \lambda\|v\|^2 > 0 \quad (v \ne 0).$$Even if $X v = 0$ , the $\lambda \|v\|^2$ term keeps the form strictly positive. Geometrically: Ridge says “in directions the data can’t pin down, prefer 0.”

Q4: How do I pick $\lambda$ ?#

Cross-validation. Almost always cross-validation. RidgeCV and LassoCV in scikit-learn do this in one line. Search log-uniformly in $[10^{-3}, 10^{3}]$

, then refine.

Q5: Why standardise features?#

Two reasons:

- Conditioning. Different scales make $X^\top X$ ill-conditioned (huge $\kappa$ ), which slows gradient descent dramatically.

- Fair regularization. $\|w\|_2^2$ penalises every coordinate the same way; a feature measured in millimetres has weight 1000x larger than the same feature in metres, and Ridge would crush it harder for no good reason.

Use StandardScaler: subtract the (training) mean, divide by the (training) std.

Q6: How does multicollinearity affect things?#

Two correlated features $x_1 \approx x_2$ make $X^\top X$ nearly singular. Symptoms: huge standard errors on $\hat w_1$ and $\hat w_2$ , signs that flip on tiny perturbations, but the predictions $\hat y = X \hat w$ remain accurate.

Diagnose with the Variance Inflation Factor: $\text{VIF}_j = 1 / (1 - R_j^2)$ where $R_j^2$ is from regressing feature $j$ on the others. Rule of thumb: VIF $> 10$ is severe.

Fix with Ridge regression, by dropping redundant features, or by replacing them with PCA components.

Q7: What are the classical assumptions and what breaks if they fail?#

| Assumption | Diagnostic | Fix when violated |

|---|---|---|

| Linearity in $w$ | residual plot has no pattern | polynomial features, basis expansion, kernel methods |

| Independent errors | Durbin–Watson $\approx 2$ | autoregressive models, GLS |

| Homoscedasticity | residuals uniform across $\hat y$ | weighted LS, log-transform $y$ |

| Normal errors | Q–Q plot near diagonal | Box–Cox, GLM, robust loss |

For inference (p-values, confidence intervals) all four matter. For prediction you can usually get away with violating normality.

Q8: How do I encode categorical features?#

Never as integers (that fakes an ordering). Use one-hot encoding (pd.get_dummies or OneHotEncoder). For a $k$

-level category, create $k$

indicators — and drop one to avoid the multicollinearity caused by the columns summing to 1 (drop='first').

Q9: Can linear regression model nonlinear relationships?#

Yes — linear refers to the parameters, not the inputs. Replace $x$ with $\phi(x)$ (polynomial, log, sin, ReLU, you name it), then fit a linear model in $\phi$ . This is exactly what kernel methods and neural networks generalise.

Q10: How do I interpret coefficients?#

After standardising features, $\hat w_j$ is the change in $\hat y$ (in standard deviations) per one-standard-deviation increase in $x_j$ , holding the other features fixed. Two large warnings:

- Multicollinearity makes individual $\hat w_j$ unstable even when predictions are fine — don’t read a single $\hat w_j$ in isolation.

- Correlation is not causation. A regression coefficient is a conditional association in your data, full stop. Causal claims need experimental design or special identification strategies.

Verifying the Code#

Every figure in this article was generated by scripts/figures/ml-math-derivations/05-linear-regression.py, which cross-checks each claim against scikit-learn (e.g. the manually computed slope and intercept in fig1 are asserted to match LinearRegression’s fit exactly, the projection in fig2 verifies orthogonality of the residual numerically). The file is the single source of truth for the visuals; reproducing them takes one command.

Summary#

We approached linear regression from three independent directions and arrived at the same equation:

- Algebra. Minimise a quadratic. Set the gradient to zero. Get $w^\* = (X^\top X)^{-1} X^\top y$ .

- Geometry. Project $y$ orthogonally onto $\operatorname{Col}(X)$ . The condition that the residual is perpendicular to the columns is the normal equation.

- Probability. Under Gaussian noise, MLE for $w$ minimises squared residuals. Under a Gaussian prior on $w$ , MAP gives Ridge.

Then we addressed three failures of vanilla OLS: instability under collinearity (Ridge), failure to select features (Lasso), and oversensitivity to outliers (Huber). The optimisation toolkit — BGD, SGD, mini-batch — handles the case where $X^\top X$ is too big to invert. And cross-validation tells us, empirically, what model capacity to choose.

Next chapter. We’ll generalise to classification — the output space becomes discrete. The sigmoid function, cross-entropy loss, and the geometric meaning of decision boundaries all fall out of asking “what’s the linear-Gaussian model for binary outputs?”

What’s next#

Linear regression bundles continuous outputs with Gaussian noise into a clean “linear + MLE = least squares” identity. But many real tasks have outputs that are not continuous at all — they are 0/1, categories, click-or-not. Forcing classification into a Gaussian likelihood gives an uncalibrated, geometrically awkward object.

The next chapter swaps the likelihood — Bernoulli for Gaussian. Once that single substitution is made, the same derivation chain hands me three new objects for free: the sigmoid (because the natural parameter of a Bernoulli is the logit), cross-entropy (because that is what the log-Bernoulli likelihood looks like), and a linear decision boundary (because the log-odds are linear). That is logistic regression. The geometry stays linear; the statistical soul changes completely. Internalising “swap the likelihood and you swap the algorithm” matters far more than memorising the sigmoid — it is the shared template behind every generalized linear model and every exponential-family extension that follows.

References#

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The Elements of Statistical Learning (2nd ed.). Springer.

- Bishop, C. M. (2006). Pattern Recognition and Machine Learning. Springer.

- Murphy, K. P. (2012). Machine Learning: A Probabilistic Perspective. MIT Press.

- James, G., Witten, D., Hastie, T., & Tibshirani, R. (2013). An Introduction to Statistical Learning. Springer.

- Tibshirani, R. (1996). Regression shrinkage and selection via the Lasso. JRSS-B, 58(1), 267–288.

- Hoerl, A. E., & Kennard, R. W. (1970). Ridge regression: biased estimation for nonorthogonal problems. Technometrics, 12(1), 55–67.

- Zou, H., & Hastie, T. (2005). Regularization and variable selection via the elastic net. JRSS-B, 67(2), 301–320.

- Huber, P. J. (1964). Robust estimation of a location parameter. Annals of Math. Stat., 35(1), 73–101.

- Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning. MIT Press.

- Shalev-Shwartz, S., & Ben-David, S. (2014). Understanding Machine Learning. Cambridge University Press.

ML Math Derivations 20 parts

- 01 ML Math Derivations (1): Introduction and Mathematical Foundations

- 02 ML Math Derivations (2): Linear Algebra and Matrix Theory

- 03 ML Math Derivations (3): Probability Theory and Statistical Inference

- 04 ML Math Derivations (4): Convex Optimization Theory

- 05 ML Math Derivations (5): Linear Regression you are here

- 06 ML Math Derivations (6): Logistic Regression and Classification

- 07 ML Math Derivations (7): Decision Trees

- 08 ML Math Derivations (8): Support Vector Machines

- 09 ML Math Derivations (9): Naive Bayes

- 10 ML Math Derivations (10): Semi-Naive Bayes and Bayesian Networks

- 11 ML Math Derivations (11): Ensemble Learning

- 12 ML Math Derivations (12): XGBoost and LightGBM

- 13 ML Math Derivations (13): EM Algorithm and GMM

- 14 ML Math Derivations (14): Variational Inference and Variational EM

- 15 ML Math Derivations (15): Hidden Markov Models

- 16 ML Math Derivations (16): Conditional Random Fields

- 17 ML Math Derivations (17): Dimensionality Reduction and PCA

- 18 ML Math Derivations (18): Clustering Algorithms

- 19 ML Math Derivations (19): Neural Networks and Backpropagation

- 20 ML Math Derivations (20): Regularization and Model Selection