ML Math Derivations (6): Logistic Regression and Classification

Complete derivation of logistic regression from sigmoid to softmax, cross-entropy loss, gradient computation, regularization, and multi-class extension with Python verification.

Hook. Linear regression maps inputs to any real number — but what if the output has to be a probability between 0 and 1? Logistic regression solves this with one elegant trick: a sigmoid squashing function. Despite its name, logistic regression is a classification algorithm, and its math underpins every neuron in every modern neural network.

What You Will Learn#

- Why sigmoid is the natural way to turn a real-valued score into a probability, and why its derivative is so clean.

- How cross-entropy loss falls out of maximum likelihood estimation in two lines.

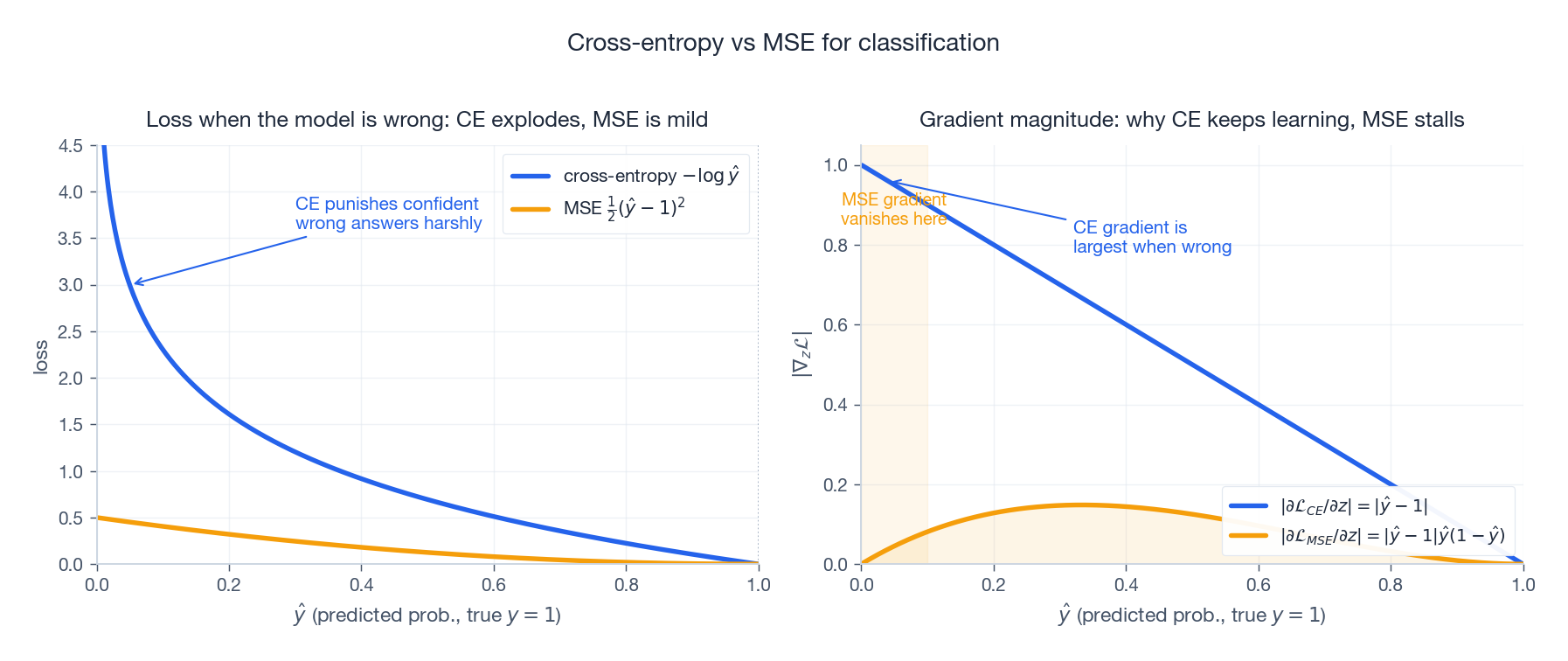

- Why cross-entropy beats MSE for classification — a vanishing-gradient argument made visible.

- The full gradient and Hessian for both binary and multi-class (softmax) cases, and why the loss is convex.

- L1, L2 and elastic-net regularization, and the Bayesian priors hiding behind them.

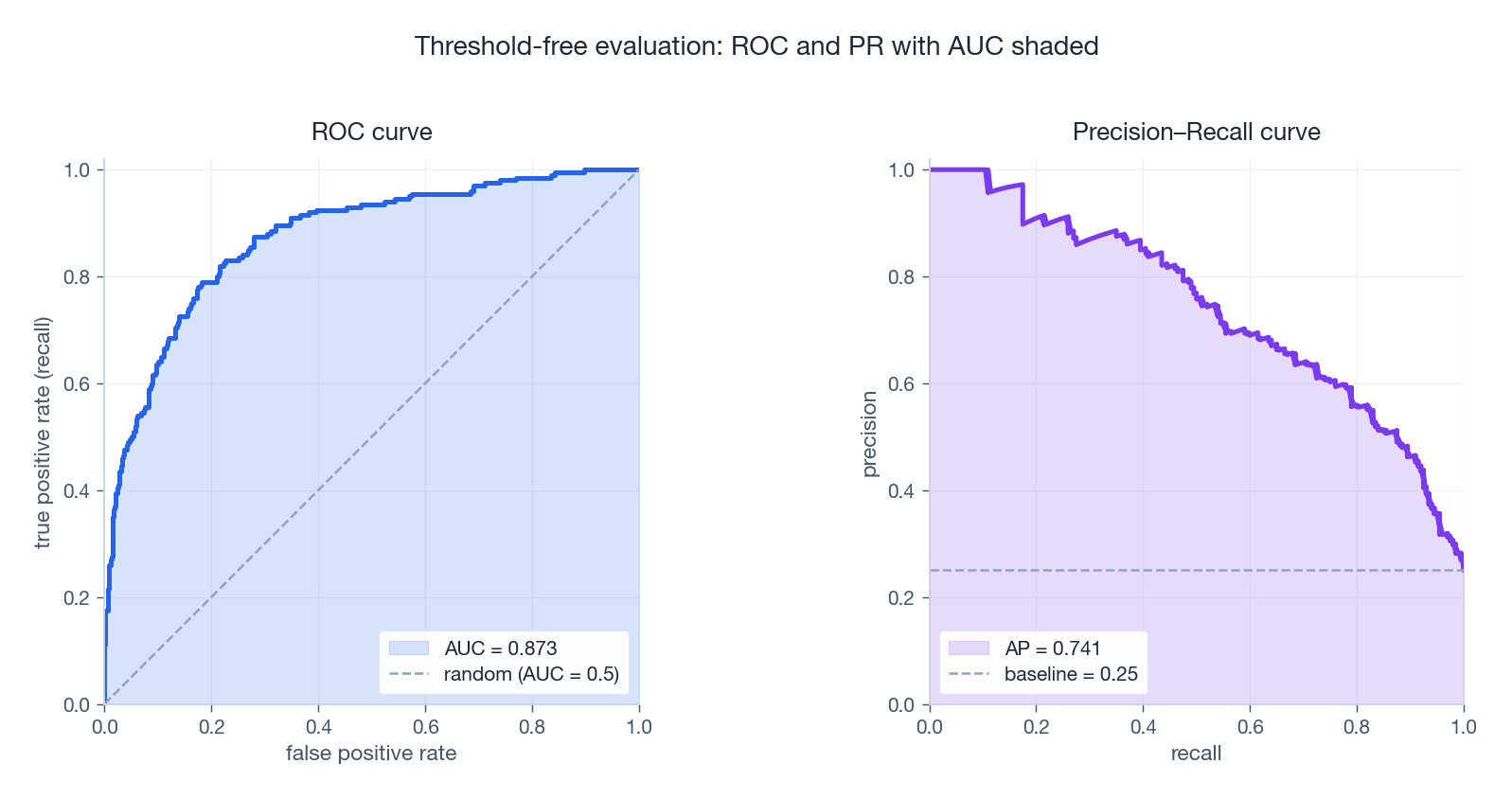

- Decision-boundary geometry and the threshold-free metrics (ROC / PR / AUC) that you actually need under class imbalance.

Prerequisites#

- Calculus: chain rule, partial derivatives.

- Linear algebra: matrix multiplication, transpose.

- Probability: Bernoulli and categorical distributions, likelihood.

- Familiarity with Part 5: Linear Regression .

From Linear Models to Probabilistic Classification#

The Problem with Raw Linear Output#

Linear regression gives us $\hat y = \mathbf{w}^\top \mathbf{x}$ , which is unbounded. For classification, two things go wrong:

- Unconstrained range. $\mathbf{w}^\top \mathbf{x} \in (-\infty, +\infty)$ , but a class label lives in a finite set.

- No probability semantics. “How sure are you this email is spam?” has no answer in $\mathbb{R}$ .

The fix is a link function that squashes the linear score into $[0, 1]$ . The canonical choice is the sigmoid.

The Sigmoid Function#

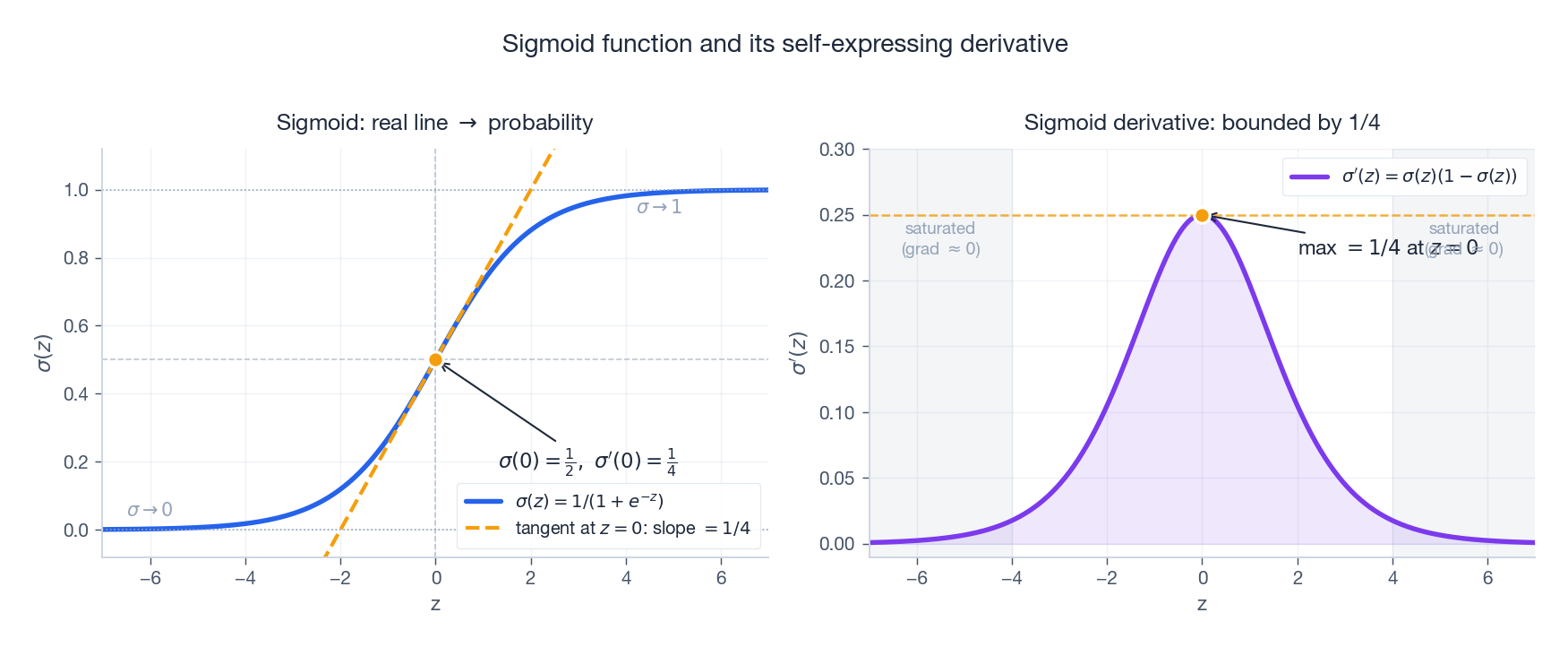

Think of it as a “soft switch”: it is essentially $0$ for very negative $z$ , essentially $1$ for very positive $z$ , and crosses $0.5$ at the origin. The picture above also shows the tangent at $z = 0$ , whose slope is exactly $1/4$ — this is the steepest the sigmoid can ever be, a fact we will use over and over.

Three properties make the sigmoid mathematically delightful.

Property 1 — Range. For all $z \in \mathbb{R}$ , $0 < \sigma(z) < 1$ , so the output is a valid probability.

Property 2 — Symmetry. $\sigma(-z) = 1 - \sigma(z)$ . This is what lets us write $P(y=0\mid \mathbf{x})$ in the same form as $P(y=1\mid \mathbf{x})$ .

$$\sigma(-z) = \frac{1}{1 + e^{z}} = \frac{e^{-z}}{1 + e^{-z}} = 1 - \sigma(z). \quad\square$$Property 3 — Self-expressing derivative. $\sigma'(z) = \sigma(z)\bigl(1 - \sigma(z)\bigr)$ . This is the property that makes the cross-entropy gradient collapse to one line.

$$ \sigma'(z) = \frac{e^{-z}}{(1 + e^{-z})^2} = \frac{1}{1 + e^{-z}} \cdot \frac{e^{-z}}{1 + e^{-z}} = \sigma(z)\bigl(1 - \sigma(z)\bigr). \quad\square $$ | |

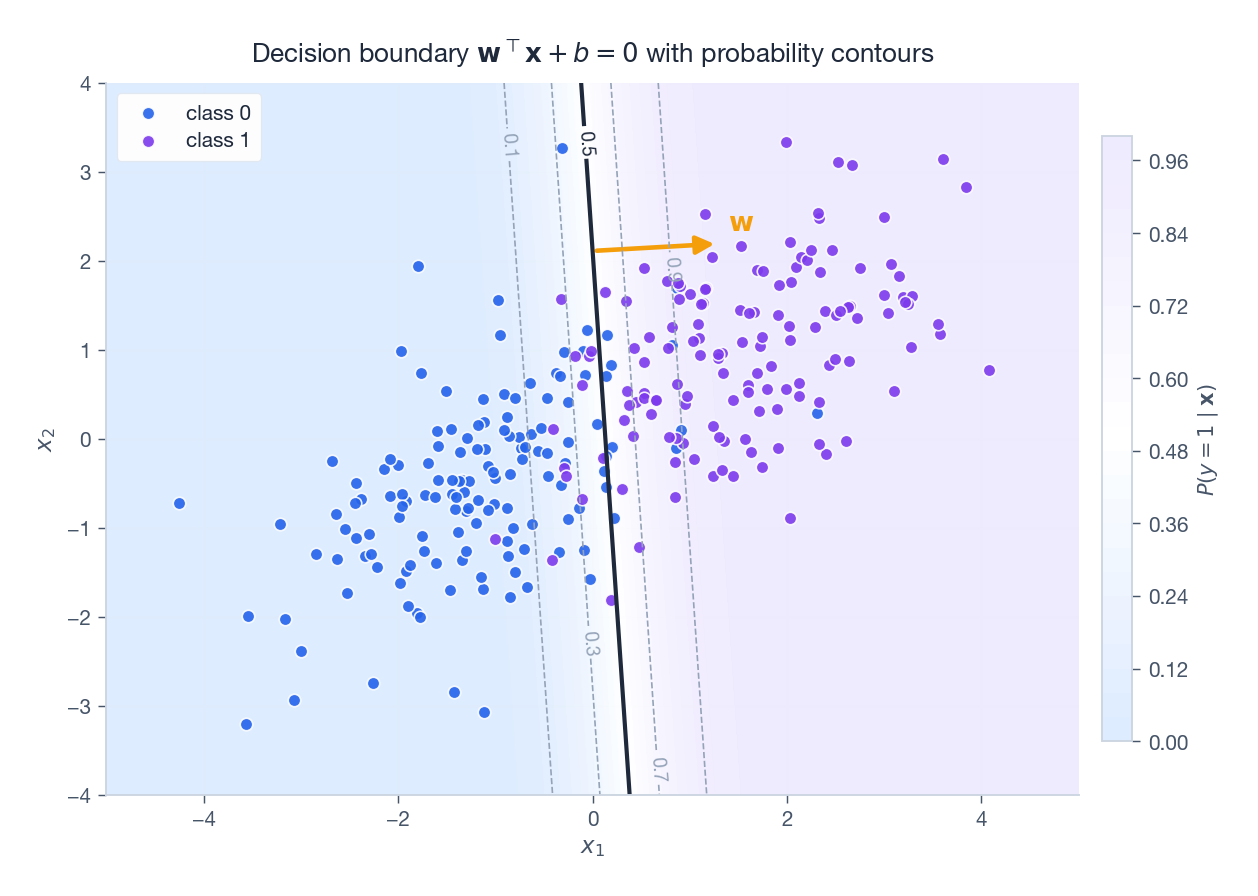

Logistic Regression Model#

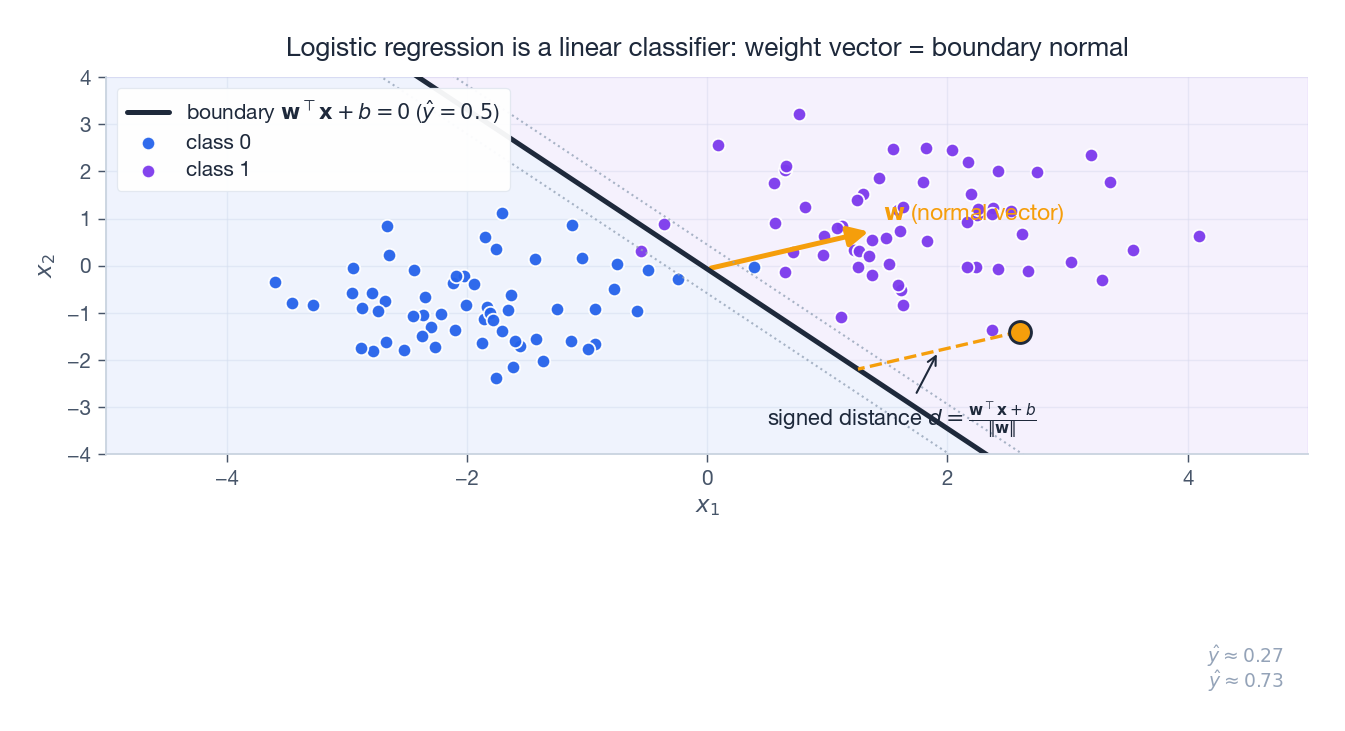

When $y = 1$ this returns $\hat y$ ; when $y = 0$ it returns $1 - \hat y$ . The figure above shows what this model looks like geometrically: the boundary $\mathbf{w}^\top\mathbf{x} = 0$ is a hyperplane, and the orange arrow $\mathbf{w}$ is its normal — moving along $\mathbf{w}$ pushes the predicted probability from $0.5$ toward $1$ .

Maximum Likelihood and the Cross-Entropy Loss#

Building the Likelihood#

$$ L(\mathbf{w}) = \prod_{i=1}^N P(y_i \mid \mathbf{x}_i; \mathbf{w}) = \prod_{i=1}^N \hat y_i^{\,y_i}(1 - \hat y_i)^{1 - y_i}. $$We want the $\mathbf{w}$ that makes the observed labels most probable.

From Log-Likelihood to Cross-Entropy#

$$\ell(\mathbf{w}) = \sum_{i=1}^N \bigl[\, y_i \ln \hat y_i + (1 - y_i)\ln(1 - \hat y_i) \,\bigr].$$ $$\boxed{\;\mathcal{L}(\mathbf{w}) = -\frac{1}{N} \sum_{i=1}^N \bigl[\, y_i \ln \hat y_i + (1 - y_i)\ln(1 - \hat y_i) \,\bigr].\;}$$Information-theoretic view. Cross-entropy $H(p, q) = -\sum_x p(x) \ln q(x)$ measures the extra bits needed to encode samples from $p$ using a code optimised for $q$ . Here $p$ is the hard one-hot label and $q$ is the sigmoid output, so minimising $\mathcal{L}$ is literally pulling our predicted distribution toward the data distribution.

Why Not MSE?#

The extra factor $\hat y(1-\hat y)$ is bounded by $1/4$ and vanishes when $\hat y$ is near $0$ or $1$ . So if the model is confidently wrong ($\hat y \approx 0$ but $y = 1$ ), MSE produces almost no gradient and learning stalls.

$$\frac{\partial \mathcal{L}_{\text{CE}}}{\partial z} = \hat y - y.$$The right panel above makes this concrete: when $y = 1$ and the model predicts $\hat y \approx 0$ , the CE gradient is near its maximum (push hard!) while the MSE gradient is essentially zero (give up).

| |

Gradient Derivation and Optimisation#

The Key Cancellation#

$$ \frac{\partial \mathcal{L}}{\partial \mathbf{w}} = \frac{\partial \mathcal{L}}{\partial \hat y} \cdot \frac{\partial \hat y}{\partial z} \cdot \frac{\partial z}{\partial \mathbf{w}}. $$- Loss w.r.t. prediction: $\dfrac{\partial \mathcal{L}}{\partial \hat y} = -\dfrac{y}{\hat y} + \dfrac{1 - y}{1 - \hat y}$ .

- Sigmoid derivative (Property 3): $\dfrac{\partial \hat y}{\partial z} = \hat y(1 - \hat y)$ .

- Linear part: $\dfrac{\partial z}{\partial \mathbf{w}} = \mathbf{x}$ .

Full Batch Gradient#

$$\nabla_{\mathbf{w}} \mathcal{L} = \frac{1}{N}\sum_{i=1}^N (\hat y_i - y_i)\,\mathbf{x}_i = \frac{1}{N}\,\mathbf{X}^\top(\hat{\mathbf{y}} - \mathbf{y}),$$where $\mathbf{X} \in \mathbb{R}^{N \times d}$ is the data matrix.

Hessian and Convexity#

$$\nabla^2 \mathcal{L} = \frac{1}{N}\sum_{i=1}^N \hat y_i(1 - \hat y_i)\,\mathbf{x}_i \mathbf{x}_i^\top = \frac{1}{N}\,\mathbf{X}^\top \mathbf{S}\, \mathbf{X},$$ $$\mathbf{v}^\top \nabla^2 \mathcal{L}\, \mathbf{v} = \frac{1}{N} \sum_i \hat y_i(1 - \hat y_i)(\mathbf{v}^\top \mathbf{x}_i)^2 \geq 0,$$so the Hessian is positive semi-definite and the loss is convex. There is a single global optimum and any reasonable optimiser will find it.

| |

Optimisation Variants#

- Batch GD: $\mathbf{w} \leftarrow \mathbf{w} - \eta \cdot \tfrac{1}{N}\mathbf{X}^\top(\hat{\mathbf{y}} - \mathbf{y})$ . Stable, slow per epoch.

- SGD: $\mathbf{w} \leftarrow \mathbf{w} - \eta(\hat y_i - y_i)\mathbf{x}_i$ for one random $i$ . Noisy, scales to massive data.

- Mini-batch GD: average the gradient over a batch of size $b$ . The default in practice.

- Newton / IRLS: use $\nabla^2 \mathcal{L}$ for quadratic convergence — feasible because the Hessian is cheap and PSD.

Multi-Class Extension: Softmax Regression#

From Binary to $K$ Classes#

$$P(y = k \mid \mathbf{x}) = \frac{e^{z_k}}{\sum_{j=1}^K e^{z_j}}.$$Softmax is a soft argmax: it exponentiates each score (so they are positive) and normalises (so they sum to one). The biggest score wins the most mass, but every class still gets a non-zero share.

Worked Numerical Example: softmax + cross-entropy on $K=3$ classes#

Take logits $\mathbf{z} = (2, 1, 0)$ and the true class $c = 0$ (one-hot $\mathbf{t} = (1, 0, 0)$ ).

Numerical stabilisation. Subtract $\max_j z_j = 2$ first: $\mathbf{z}' = (0, -1, -2)$ . Same softmax, no overflow.

Exponentiate. $e^{0} = 1$ , $e^{-1} \approx 0.3679$ , $e^{-2} \approx 0.1353$ . Sum $Z = 1 + 0.3679 + 0.1353 = 1.5032$ .

Normalise. $P_0 = 1/1.5032 \approx 0.6652$ , $P_1 = 0.3679/1.5032 \approx 0.2447$ , $P_2 = 0.1353/1.5032 \approx 0.0900$ . They sum to $1.0$ (round-off aside).

Cross-entropy. $\mathcal{L} = -\ln P_0 = -\ln 0.6652 \approx 0.4076$ nats. Equivalently, $\mathcal{L} = -z_0 + \ln \sum_j e^{z_j} = -2 + \ln(7.389 + 2.718 + 1.000) = -2 + \ln 11.107 = -2 + 2.408 = 0.408$ .

Gradient. $\partial \mathcal{L}/\partial \mathbf{z} = \mathbf{P} - \mathbf{t} = (0.6652 - 1,\, 0.2447 - 0,\, 0.0900 - 0) = (-0.3348,\, 0.2447,\, 0.0900)$ . Three things are visible from this single number: the gradient on the correct class is negative (push $z_0$ up), the gradients on the other two are positive (push $z_1, z_2$ down), and the magnitudes sum to zero (because $\sum_k P_k = \sum_k t_k = 1$ — the gradient never moves probability mass off the simplex). If the model were already confident — say $\mathbf{z} = (10, 1, 0)$ giving $P_0 \approx 0.9999$ — the gradient would shrink to $\approx (-10^{-4}, 7 \cdot 10^{-5}, 3 \cdot 10^{-5})$ and learning would stall on this example, which is exactly the desired behaviour: don’t waste capacity on points already classified correctly.

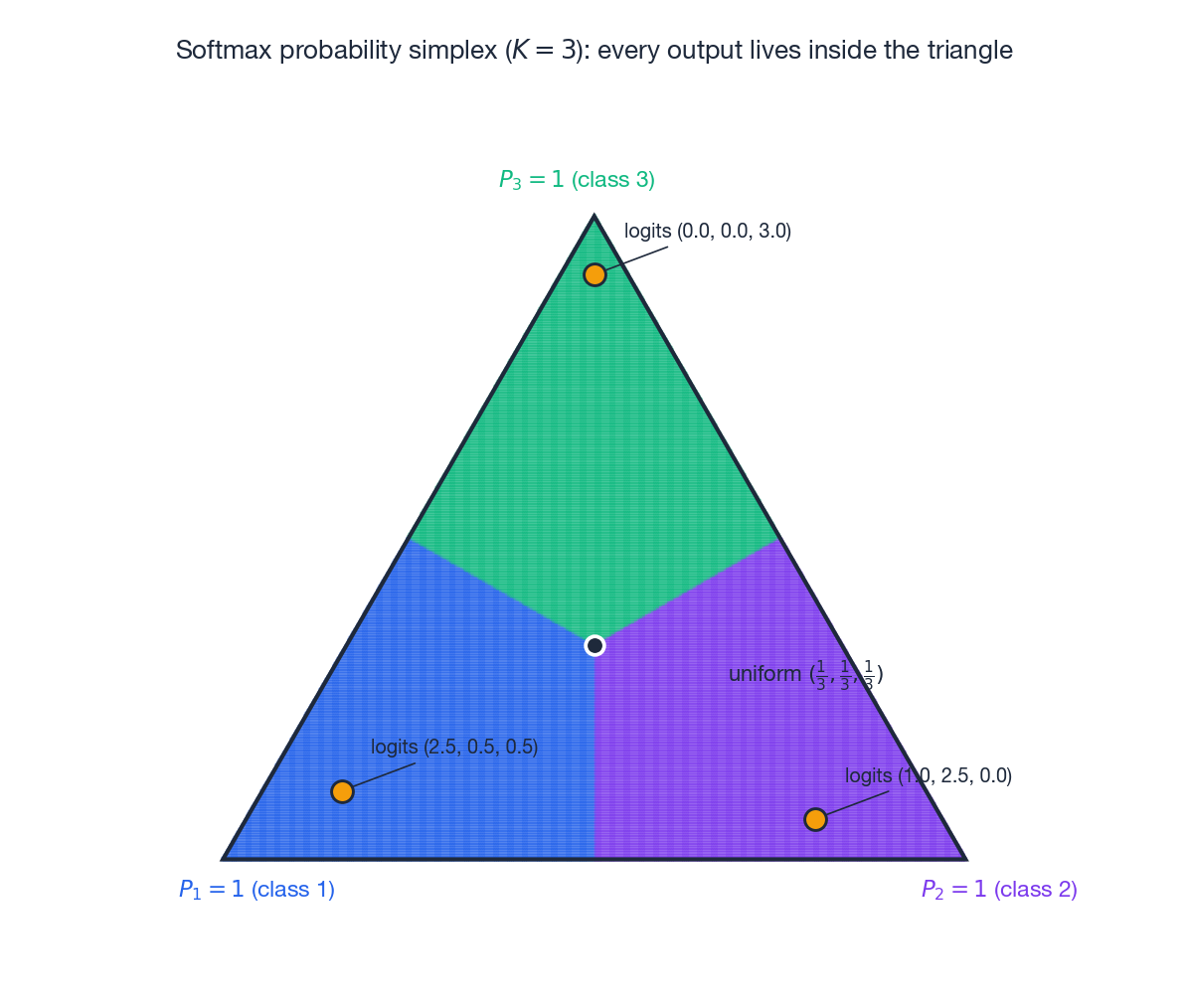

Geometrically, every softmax output is a point in the probability simplex — the triangle above for $K = 3$ . Vertices are deterministic predictions; the centre is maximal uncertainty $(1/3, 1/3, 1/3)$ ; example logits are projected to show how concentrated mass corresponds to clearer decisions.

Cross-Entropy with One-Hot Labels#

$$ \mathcal{L} = -\sum_{k=1}^K t_k \ln P(y = k \mid \mathbf{x}) = -\ln P(y = c \mid \mathbf{x}) = -z_c + \ln \sum_{j=1}^K e^{z_j}. $$This is the multi-class negative log-likelihood.

The Softmax Gradient — Same Form Again#

Differentiating $\mathcal{L}$ w.r.t. $z_k$ :

- If $k = c$ : $\dfrac{\partial \mathcal{L}}{\partial z_c} = -1 + P_c$ .

- If $k \neq c$ : $\dfrac{\partial \mathcal{L}}{\partial z_k} = P_k$ .

where $\mathbf{W} \in \mathbb{R}^{d \times K}$ stacks the per-class weight vectors.

| |

Regularisation#

L2 (Ridge)#

$$\mathcal{L}_{\text{reg}} = \mathcal{L} + \frac{\lambda}{2}\|\mathbf{w}\|_2^2.$$ $$\mathbf{w} \leftarrow (1 - \eta\lambda)\mathbf{w} - \frac{\eta}{N}\mathbf{X}^\top(\hat{\mathbf{y}} - \mathbf{y}).$$Bayesian view. L2 is MAP estimation under a Gaussian prior $\mathbf{w} \sim \mathcal{N}(\mathbf{0}, \tfrac{1}{\lambda}\mathbf{I})$ .

L1 (Lasso)#

$$\mathcal{L}_{\text{reg}} = \mathcal{L} + \lambda \|\mathbf{w}\|_1.$$L1 is non-differentiable at zero; using the subgradient $\partial_{w_j}\|\mathbf{w}\|_1 = \operatorname{sign}(w_j)$ gives the proximal / soft-thresholding update. The penalty’s sharp corner at the origin pushes many coefficients exactly to zero, producing automatic feature selection.

Bayesian view. L1 corresponds to a Laplace prior $p(w_j) \propto e^{-\lambda |w_j|}$ , whose peak at zero is the source of sparsity.

Elastic Net#

$$\mathcal{L}_{\text{reg}} = \mathcal{L} + \lambda_1 \|\mathbf{w}\|_1 + \frac{\lambda_2}{2}\|\mathbf{w}\|_2^2.$$Combines L1’s sparsity with L2’s stability when features are correlated.

Decision Boundary and Geometry#

Binary Boundary#

$$\mathbf{w}^\top \mathbf{x} + b = 0.$$ $$d = \frac{\mathbf{w}^\top \mathbf{x}_0 + b}{\|\mathbf{w}\|},$$and $|d|$ is exactly how confidently the model classifies $\mathbf{x}_0$ .

The figure makes three things explicit:

- The boundary is a hyperplane (a line in 2D).

- The weight vector $\mathbf{w}$ is the normal to that hyperplane.

- The norm $\|\mathbf{w}\|$ controls the steepness of the probability transition: a larger $\|\mathbf{w}\|$ collapses the $\hat y \approx 0.27 \to 0.73$ band into a thin strip.

Multi-Class Regions#

$$(\mathbf{w}_j - \mathbf{w}_k)^\top \mathbf{x} + (b_j - b_k) = 0,$$so all pairwise boundaries are still linear.

Model Evaluation#

Confusion Matrix and Headline Metrics#

For binary classification:

| Predicted Positive | Predicted Negative | |

|---|---|---|

| Actually Positive | TP | FN |

| Actually Negative | FP | TN |

Read precision as “of those I flagged, how many were real?” and recall as “of the real ones, how many did I catch?” Optimising one without the other is almost always wrong.

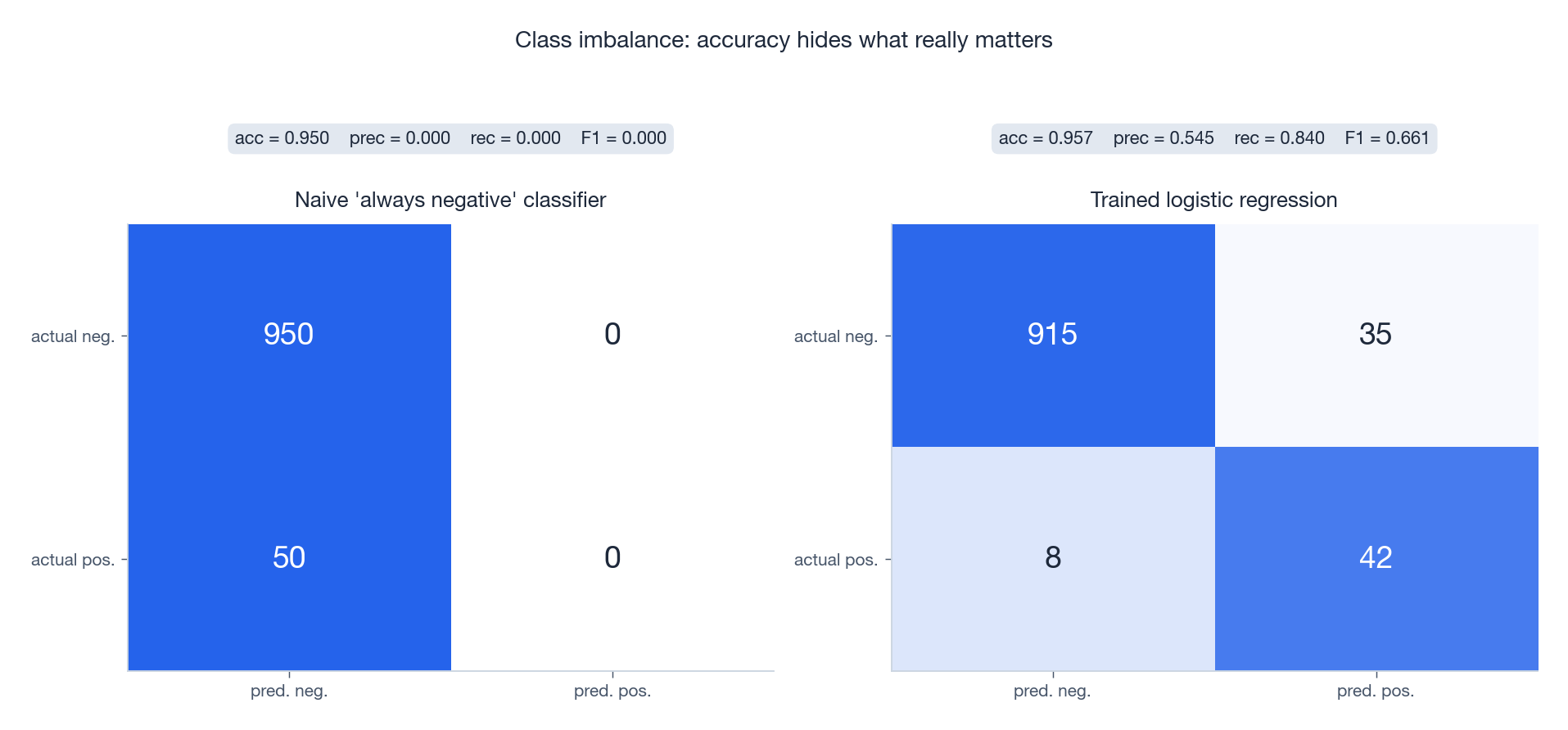

Class Imbalance: Why Accuracy Lies#

When the positive class is rare (95% negative, 5% positive), a trivial “always negative” classifier scores 95% accuracy and is completely useless: it never finds a single positive case. The trained model in the right panel sacrifices a little accuracy to actually solve the problem — its $F_1$ is dramatically higher even though its accuracy is lower. Lesson: always pair accuracy with precision, recall, and $F_1$ when classes are imbalanced.

ROC, PR, and AUC#

Sweeping the threshold $\tau$ (“predict positive when $\hat y \geq \tau$ ”) traces out two curves:

- ROC: TPR ($= \text{Recall}$ ) vs FPR ($= FP / (FP + TN)$ ).

- PR: precision vs recall.

AUC = area under the ROC curve. AUC = 1 is perfect ranking, AUC = 0.5 is random. There is a clean probabilistic reading: AUC equals the probability that a uniformly drawn positive scores higher than a uniformly drawn negative.

When positives are very rare, the PR curve and average precision (AP) are usually more informative than ROC, because the FPR denominator $FP + TN$ is dominated by the (huge) negative class and masks model differences.

Implementation#

Numerically Stable Sigmoid#

When $z$ is very negative, $e^{-z}$ overflows. Use a branched form:

| |

Numerically Stable Softmax#

Direct $e^{z_k}$ overflows for large logits. Use the shift-invariance $\operatorname{softmax}(z) = \operatorname{softmax}(z - \max_j z_j)$ :

| |

Complete Binary Classifier#

| |

Complete Multi-Class Classifier#

| |

Exercises#

Exercise 1 — Sigmoid Properties#

Problem. Prove $\sigma'(z) = \sigma(z)(1 - \sigma(z))$ and $\sigma(-z) = 1 - \sigma(z)$ .

$$ \sigma'(z) = \frac{e^{-z}}{(1+e^{-z})^2} = \sigma(z)\bigl(1-\sigma(z)\bigr), \qquad \sigma(-z) = \frac{1}{1+e^{z}} = 1 - \sigma(z). \quad\square $$Exercise 2 — Cross-Entropy from MLE#

Problem. Derive binary cross-entropy from maximum likelihood estimation.

$$\mathcal{L} = -\frac{1}{N}\sum_i \bigl[y_i \ln \hat y_i + (1 - y_i)\ln(1 - \hat y_i)\bigr]. \quad\square$$Exercise 3 — Softmax Gradient#

Problem. Derive $\partial \mathcal{L} / \partial z_k$ for softmax cross-entropy.

$$\frac{\partial \mathcal{L}}{\partial z_k} = P_k - \mathbb{1}[k = c] = P_k - t_k,$$structurally identical to the binary gradient.

Exercise 4 — Regularisation as a Bayesian Prior#

Problem. What priors do L2 and L1 correspond to?

Solution. L2 ↔ Gaussian prior $\mathbf{w} \sim \mathcal{N}(\mathbf{0}, \lambda^{-1}\mathbf{I})$ , since $-\ln p(\mathbf{w}) \propto \tfrac{\lambda}{2}\|\mathbf{w}\|^2$ . L1 ↔ Laplace prior $p(w_j) \propto e^{-\lambda|w_j|}$ , whose sharp peak at $0$ is what produces sparse MAP solutions.

Exercise 5 — Decision Boundary#

Problem. Show that $\mathbf{w}^\top\mathbf{x} + b = 0$ is a hyperplane and explain the role of $\mathbf{w}$ and $\|\mathbf{w}\|$ .

Solution. The set is an affine hyperplane with normal vector $\mathbf{w}$ and offset controlled by $b$ . The direction of $\mathbf{w}$ orients the boundary; the magnitude $\|\mathbf{w}\|$ controls the steepness of the sigmoid transition (large $\|\mathbf{w}\|$ ⇒ sharp jump from $\hat y \approx 0$ to $\hat y \approx 1$ near the boundary). Signed distance from $\mathbf{x}_0$ to the boundary is $d = (\mathbf{w}^\top\mathbf{x}_0 + b)/\|\mathbf{w}\|$ .

FAQ#

Why is it called “logistic regression” if it does classification?#

Historical accident. The logistic function was first used to regress a probability; classification was a later use case. The name stuck.

What is the essential difference from linear regression?#

Output space and likelihood. Linear regression assumes Gaussian noise on a continuous target (MSE = $-\ln$ Gaussian likelihood). Logistic regression assumes Bernoulli labels (CE = $-\ln$ Bernoulli likelihood). Both are special cases of generalised linear models, differing only in their link function and noise distribution.

Can logistic regression handle nonlinear boundaries?#

Not by itself — its boundary is a hyperplane in input space. But (a) polynomial features, (b) kernels, or (c) stacking inside a neural network give you arbitrarily nonlinear boundaries while keeping the cross-entropy loss intact.

Softmax vs. independent sigmoids?#

Softmax enforces $\sum_k P_k = 1$ — use it for mutually exclusive classes (single-label). Independent sigmoids let labels coexist — use them for multi-label problems (an image being both “outdoor” and “sunny”).

How do I pick the regularisation strength $\lambda$ ?#

Cross-validation. Sweep $\lambda \in \{10^{-4}, 10^{-3}, \dots, 10^{2}\}$ and pick the one with the lowest validation loss (or best validation AUC for imbalanced problems).

Why is logistic regression convex?#

The Hessian $\nabla^2 \mathcal{L} = \tfrac{1}{N}\mathbf{X}^\top \mathbf{S}\,\mathbf{X}$ is PSD because each diagonal entry $\hat y_i(1 - \hat y_i) \in (0, 1/4]$ is non-negative. Convexity ⇒ any local minimum is global.

Logistic regression vs. SVM?#

| Aspect | Logistic Regression | SVM |

|---|---|---|

| Loss | Cross-entropy | Hinge loss |

| Output | Calibrated probability | Decision value |

| Sparsity | Every sample contributes a gradient | Only support vectors do |

| Kernel trick | Needs adaptation | Native |

| Best for | Probability estimates, downstream calibration | Hard classification, complex nonlinear boundaries |

What’s next#

Logistic regression turns classification into a convex problem, but its inductive bias is rigid: the decision boundary is linear, and any nonlinear interaction between features has to be smuggled in via hand-crafted features or kernel tricks. When the problem is genuinely nonlinear, when features interact, when the data is tabular rather than vectorial, jamming it into logistic regression is uphill work.

The next chapter switches to a completely different inductive bias — decision trees. Trees do not assume linearity, do not assume differentiability, do not even assume features live on the same scale: each feature is split independently along an axis, growing greedily by information gain (or Gini). That “recursively cut the space into rectangles” strategy turns out to be a near-optimal prior for tabular data. Understanding entropy, Gini, pruning, and why trees are insensitive to monotone transforms of inputs is the entry ticket for everything that comes next — random forests, GBDT, XGBoost, LightGBM all stack variance reduction or gradient boosting on top of the tree.

References#

- Bishop, C. M. (2006). Pattern Recognition and Machine Learning. Springer. Chapter 4.

- Hastie, T., Tibshirani, R., & Friedman, J. (2009). The Elements of Statistical Learning (2nd ed.). Springer. Chapter 4.

- Murphy, K. P. (2012). Machine Learning: A Probabilistic Perspective. MIT Press. Chapter 8.

- Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning. MIT Press. Chapter 5.

- Hosmer, D. W., Lemeshow, S., & Sturdivant, R. X. (2013). Applied Logistic Regression (3rd ed.). Wiley.

ML Mathematical Derivations Series

< Part 5: Linear Regression | Part 6: Logistic Regression | Part 7: Decision Trees >

ML Math Derivations 20 parts

- 01 ML Math Derivations (1): Introduction and Mathematical Foundations

- 02 ML Math Derivations (2): Linear Algebra and Matrix Theory

- 03 ML Math Derivations (3): Probability Theory and Statistical Inference

- 04 ML Math Derivations (4): Convex Optimization Theory

- 05 ML Math Derivations (5): Linear Regression

- 06 ML Math Derivations (6): Logistic Regression and Classification you are here

- 07 ML Math Derivations (7): Decision Trees

- 08 ML Math Derivations (8): Support Vector Machines

- 09 ML Math Derivations (9): Naive Bayes

- 10 ML Math Derivations (10): Semi-Naive Bayes and Bayesian Networks

- 11 ML Math Derivations (11): Ensemble Learning

- 12 ML Math Derivations (12): XGBoost and LightGBM

- 13 ML Math Derivations (13): EM Algorithm and GMM

- 14 ML Math Derivations (14): Variational Inference and Variational EM

- 15 ML Math Derivations (15): Hidden Markov Models

- 16 ML Math Derivations (16): Conditional Random Fields

- 17 ML Math Derivations (17): Dimensionality Reduction and PCA

- 18 ML Math Derivations (18): Clustering Algorithms

- 19 ML Math Derivations (19): Neural Networks and Backpropagation

- 20 ML Math Derivations (20): Regularization and Model Selection