NLP (5): BERT and Pretrained Models

How BERT made bidirectional pretraining the default in NLP. We unpack the architecture, the 80/10/10 masking rule, fine-tuning recipes, and the RoBERTa/ALBERT/ELECTRA family with HuggingFace code.

In October 2018, Google released BERT and broke eleven NLP benchmarks at once. The recipe is almost embarrassingly simple: take a Transformer encoder, train it to predict words that have been randomly hidden using both left and right context, and then fine-tune the same pretrained model for whatever downstream task you have. Before BERT, every task came with its own from-scratch model. After BERT, “pretrain once, fine-tune everywhere” became the default mental model for the entire field.

If you have used a sentiment-analysis API, a search engine that understands intent, or a customer-support bot in the last few years, there is a very good chance BERT or one of its descendants is doing the heavy lifting underneath.

What You Will Learn#

- How pretraining evolved: Word2Vec to ELMo to GPT-1 to BERT

- BERT’s architecture: a bidirectional Transformer encoder with WordPiece input

- Masked Language Modeling (MLM) and Next Sentence Prediction (NSP), and why the 80/10/10 mask split exists

- Fine-tuning BERT for classification, NER, QA and sentence-pair tasks

- The BERT family: RoBERTa, ALBERT, ELECTRA, and when to pick each

- Practical fine-tuning recipes (learning rate, warmup, gradient accumulation)

- A complete HuggingFace pipeline you can copy-paste

Prerequisites: Part 4 (Transformer architecture) and basic PyTorch.

The rise of pretrain-then-finetune#

Before BERT, every NLP task started from a freshly initialized model trained on its own labeled dataset. That was expensive (compute), wasteful (no knowledge sharing across tasks), and brittle (small datasets gave shaky models). The story of how the field escaped this trap runs through four landmark systems.

A short evolution#

Word2Vec (2013). Static word embeddings learned from raw text. The same vector represented “bank” in river bank and in bank account — there was no way for context to change a word’s meaning.

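ELMo (2018). Contextual embeddings taken from a deep bidirectional LSTM language model. The representation of token $k$ is a learned weighted sum of the hidden states across layers:

$$\text{ELMo}_k = \gamma \sum_{j=0}^{L} s_j \, h_{k,j}$$

where $h_{k,j}$ is the hidden state of layer $j$ at token position $k$, $s_j$ are learned softmax weights, and $\gamma$ is a learned task-specific scale. ELMo proved that contextual representations dramatically improve almost every downstream task, but it was still RNN-based, so training was slow and hard to parallelize.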

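GPT-1 (June 2018). A Transformer decoder pretrained as a left-to-right language model, which factorizes the probability of a sequence one token at a time:

$$P(w_1, \ldots, w_n) = \prod_{i=1}^{n} P(w_i \mid w_1, \ldots, w_{i-1})$$

GPT-1 was strong but unidirectional: when reading “the bank is closed,” it could not use “closed” to disambiguate “bank,” because at the position of “bank” the model has not yet seen “closed.”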

BERT (October 2018). The breakthrough: change the pretraining objective so every token can attend to its full context, in both directions, simultaneously. That single decision unlocked an across-the-board jump in benchmark scores.

Why the paradigm shift matters#

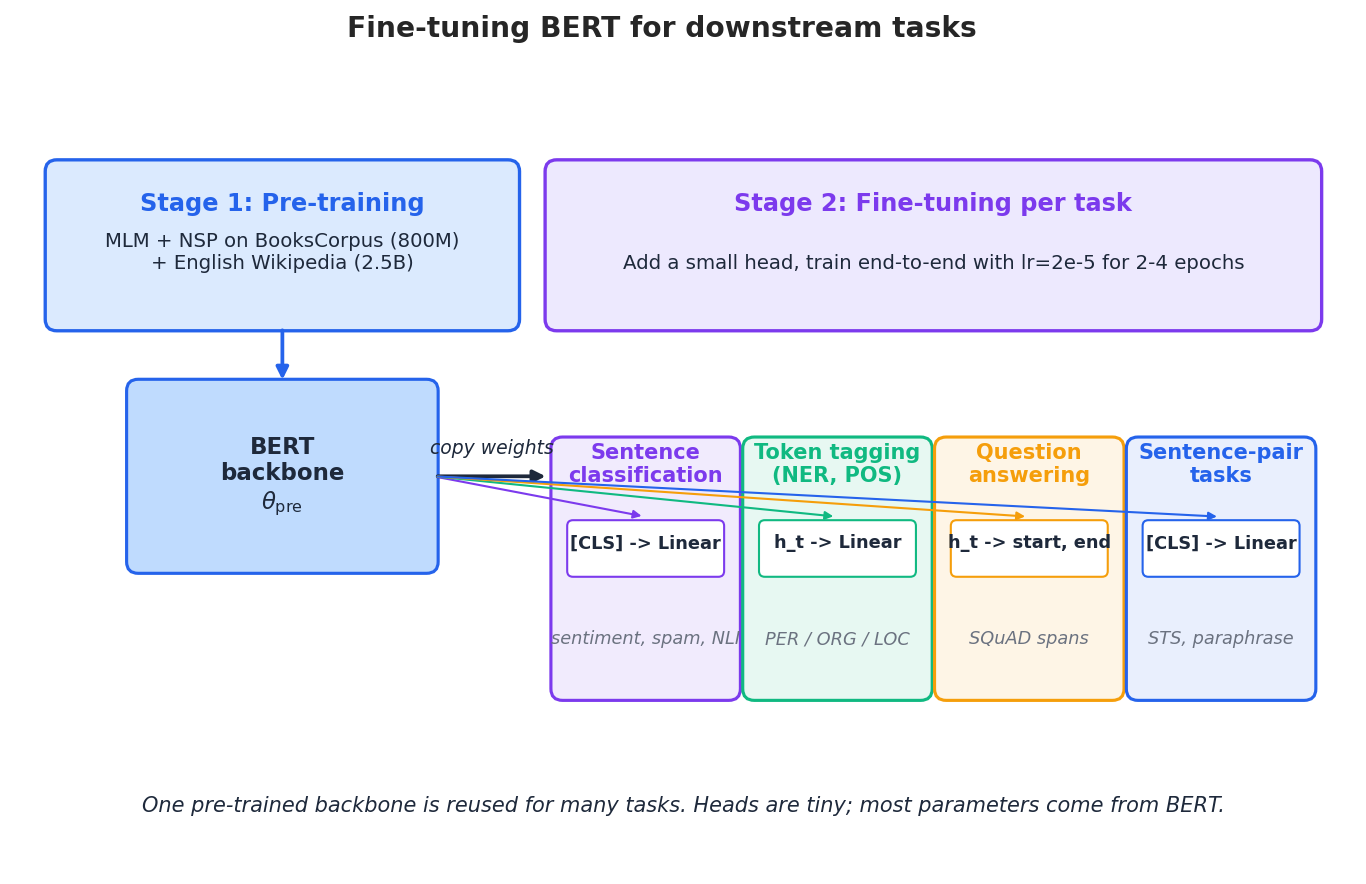

The pretrain-then-finetune pipeline has two stages:

- Pretrain on massive unlabeled text (books, Wikipedia, the web) using a self-supervised objective. This is expensive but you do it once.

- Fine-tune on a small labeled dataset for each downstream task by adding a tiny head and training end-to-end with a small learning rate.

The wins are concrete:

- Data-efficient. The backbone already “knows” syntax and a lot of world knowledge, so you usually need only hundreds to a few thousand labeled examples per task.

- Universal. The same backbone serves classification, tagging, span extraction, and pairwise tasks.

- Strong baselines. Plain BERT fine-tuning routinely beats the bespoke architectures it replaced.

BERT’s architecture#

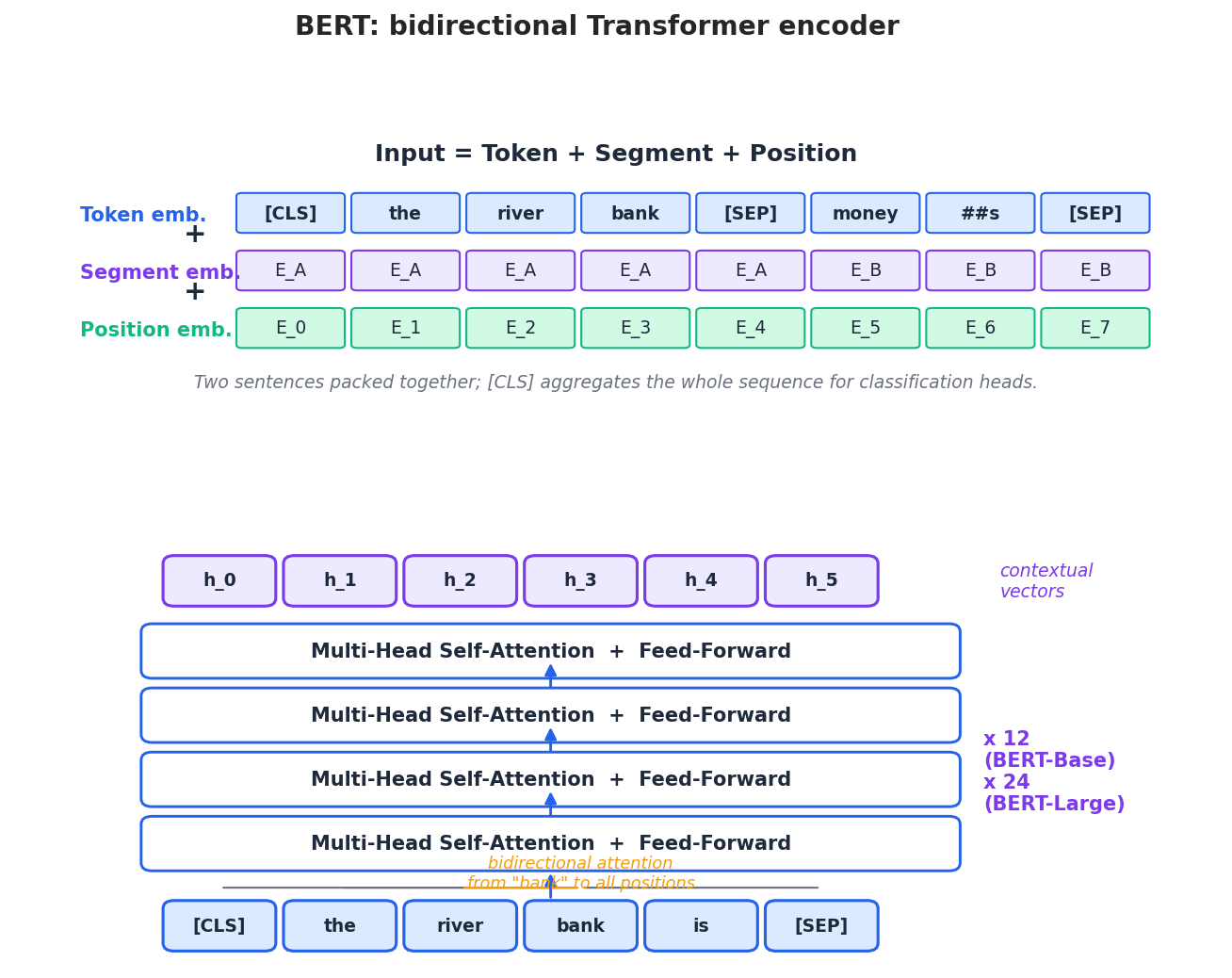

BERT is a stack of Transformer encoder layers: 12 in BERT-Base and 24 in BERT-Large. There is no decoder, no causal mask, and no autoregressive generation, just a bidirectional stack of self-attention layers that turn a sequence of tokens into a sequence of contextual vectors.

Input representation: three embeddings, summed#

Each input position is represented by the sum of three embeddings:

$$\text{Input}_i = E^{\text{tok}}_{w_i} + E^{\text{seg}}_{s_i} + E^{\text{pos}}_{i}$$

- Token embedding: the WordPiece sub-token id, drawn from a 30K vocabulary.

- Segment embedding: $E_A$ for tokens belonging to the first sentence, $E_B$ for the second. This lets BERT model sentence-pair tasks (NLI, QA) without any architectural change.

- Position embedding: a learned vector for each absolute position from 0 to 511. (Unlike the original Transformer’s sinusoidal positions, BERT learns its own.)

Two special tokens carry most of the protocol:

- [CLS] is prepended to every input. After all layers, its hidden state is treated as a pooled summary of the whole sequence and is the input to classification heads.

- [SEP] separates sentence A from sentence B and marks the end of the input.
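The packing protocol can be sketched in a few lines of plain Python. This is a minimal illustration, not the reference implementation: the function name `pack_pair` and the `max_len` parameter are hypothetical, and the inputs are assumed to be already split into WordPiece tokens.

```python
def pack_pair(tokens_a, tokens_b=None, max_len=512):
    """Build BERT-style inputs: [CLS] A [SEP] for one sentence,
    [CLS] A [SEP] B [SEP] for a pair."""
    tokens = ["[CLS]"] + list(tokens_a) + ["[SEP]"]
    # Segment A covers [CLS], sentence A, and the first [SEP].
    segment_ids = [0] * len(tokens)
    if tokens_b is not None:
        tokens += list(tokens_b) + ["[SEP]"]
        # Segment B covers sentence B and the final [SEP].
        segment_ids += [1] * (len(tokens_b) + 1)
    if len(tokens) > max_len:
        raise ValueError(f"sequence of {len(tokens)} tokens exceeds {max_len}")
    # Absolute positions index the learned position embeddings.
    position_ids = list(range(len(tokens)))
    return tokens, segment_ids, position_ids


tokens, segment_ids, position_ids = pack_pair(["my", "dog"], ["he", "barks"])
for tok, seg, pos in zip(tokens, segment_ids, position_ids):
    print(f"{pos:>2}  seg={seg}  {tok}")
```

Each of the three returned lists indexes one of the three embedding tables, and the model sums the looked-up vectors position by position.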

Bidirectional self-attention#

Each layer applies scaled dot-product attention:

$$\text{Attention}(Q, K, V) = \text{softmax}\!\left(\frac{Q K^\top}{\sqrt{d_k}}\right) V$$

Crucially, $Q$, $K$, and $V$ all come from the same input sequence (self-attention) and there is no causal mask (bidirectional). So the representation of “bank” at position 3 can simultaneously incorporate “river” on its left and “is closed” on its right within a single forward pass.
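To make the effect of the missing causal mask concrete, here is a small sketch in plain Python that computes only the softmax weights, with and without a mask. The scores are uniform placeholders chosen for illustration, and the helper name `attention_weights` is hypothetical.

```python
import math


def attention_weights(scores, causal=False):
    """Row-wise softmax over raw attention scores (the QK^T / sqrt(d_k) matrix)."""
    n = len(scores)
    weights = []
    for i, row in enumerate(scores):
        # With a causal mask, position i may only attend to positions j <= i.
        visible = [j for j in range(n) if not causal or j <= i]
        top = max(row[j] for j in visible)  # subtract the max for stability
        exps = {j: math.exp(row[j] - top) for j in visible}
        total = sum(exps.values())
        weights.append([exps.get(j, 0.0) / total for j in range(n)])
    return weights


tokens = ["the", "river", "bank", "is", "closed"]
scores = [[0.0] * len(tokens) for _ in tokens]  # placeholder scores
bank = tokens.index("bank")

bidirectional = attention_weights(scores)
causal = attention_weights(scores, causal=True)
print("BERT-style row for 'bank':", bidirectional[bank])
print("GPT-style row for 'bank': ", causal[bank])
```

In the causal row the weights on “is” and “closed” are exactly zero, which is the limitation described for GPT-1 above. In the bidirectional row every token contributes.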

Two sizes#

The original paper released two configurations that are still the reference points today:

| | BERT-Base | BERT-Large |
|---|---|---|
| Layers | 12 | 24 |
| Hidden size | 768 | 1024 |
| Attention heads | 12 | 16 |
| Parameters | 110M | 340M |

BERT-Base fits on a single consumer GPU for inference. BERT-Large was the workhorse that set most of the 2018 records.
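The parameter counts in the table can be roughly reproduced from the layer sizes. The sketch below makes several assumptions not stated above: a vocabulary of 30,522 entries (the size of the released uncased vocabulary), a feed-forward width of four times the hidden size, and a pooler layer on top of [CLS]. The function name `bert_param_count` is hypothetical.

```python
def bert_param_count(vocab_size, hidden, layers, max_positions=512, segments=2):
    """Approximate parameter count of a BERT encoder (no task head)."""
    ffn = 4 * hidden               # assumed feed-forward width
    layer_norm = 2 * hidden        # scale and bias
    # Token, position, and segment tables plus the embedding LayerNorm.
    embeddings = (vocab_size + max_positions + segments) * hidden + layer_norm
    # Query, key, value, and output projections, each with a bias.
    attention = 4 * (hidden * hidden + hidden)
    # Two dense layers: hidden -> ffn -> hidden.
    feed_forward = (hidden * ffn + ffn) + (ffn * hidden + hidden)
    per_layer = attention + feed_forward + 2 * layer_norm
    pooler = hidden * hidden + hidden
    return embeddings + layers * per_layer + pooler


base = bert_param_count(30522, 768, 12)
large = bert_param_count(30522, 1024, 24)
print(f"BERT-Base:  {base / 1e6:.1f}M")   # 109.5M
print(f"BERT-Large: {large / 1e6:.1f}M")  # 335.1M
```

Under these assumptions the totals land close to the rounded 110M and 340M figures. The number of attention heads does not appear because splitting the hidden size across heads does not change the size of the projection matrices.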

Pretraining objectives#

BERT’s pretraining combines two self-supervised tasks. The first is the famous one; the second turned out to be optional.

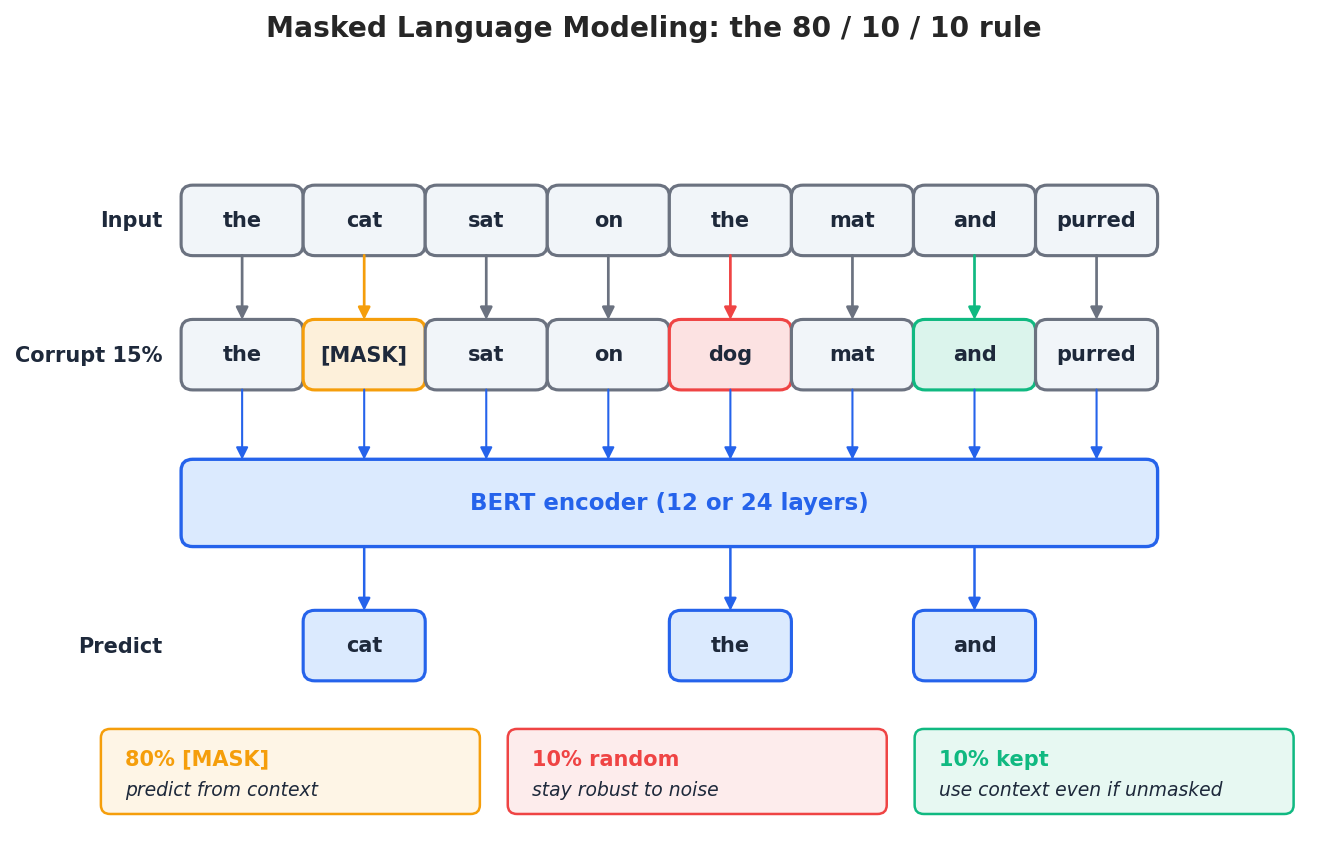

Masked Language Modeling (MLM)#

For each input sequence, randomly select 15% of the token positions. At each chosen position:

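- with probability 80%, replace the token with [MASK],

- with probability 10%, replace it with a random vocabulary token,

- with probability 10%, leave it unchanged.

The model is then trained to recover the original token at each chosen position by minimizing the cross-entropy

$$\mathcal{L}_{\text{MLM}} = -\sum_{i \in \mathcal{M}} \log P(x_i \mid \tilde{x})$$

where $\mathcal{M}$ is the set of masked positions and $\tilde{x}$ is the corrupted input.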

Why the 80/10/10 mix? It is engineered to prevent two failure modes:

- If you only used [MASK], the model would see [MASK] at every prediction position during pretraining but never during fine-tuning (downstream inputs have no masks), creating a train/test mismatch.

- If you only replaced tokens with random ones, the model could not trust any input token and would underuse local information.

- Leaving 10% unchanged forces the model to use context even when the surface form looks correct. Otherwise it could learn the shortcut “if a token is not weird-looking, just copy it.”

The MLM objective is what makes BERT bidirectional in a clean way: predicting the masked word from both sides requires the encoder to fuse information from the entire sequence at every position.
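The corruption procedure is short enough to sketch directly. The version below is an illustration under stated assumptions: each position is selected independently with probability 15% (the original implementation samples a fixed number of positions per sequence), special tokens are never selected, and the names `mask_tokens` and `select_prob` are hypothetical.

```python
import random

MASK = "[MASK]"
SPECIAL = {"[CLS]", "[SEP]"}


def mask_tokens(tokens, vocab, rng, select_prob=0.15):
    """Return (corrupted, labels). labels[i] is the original token at
    selected positions and None elsewhere (no loss is computed there)."""
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if tok in SPECIAL or rng.random() >= select_prob:
            continue
        labels[i] = tok                       # the model must predict this
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK               # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: random vocabulary token
        # remaining 10%: keep the token unchanged
    return corrupted, labels


rng = random.Random(0)
vocab = ["the", "river", "bank", "is", "closed", "today"]
sentence = ["[CLS]", "the", "river", "bank", "is", "closed", "[SEP]"]
print(mask_tokens(sentence, vocab, rng))
```

The `labels` list plays the role of $\mathcal{M}$: the loss is summed only over positions where it is not `None`, including the positions whose token was left unchanged.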

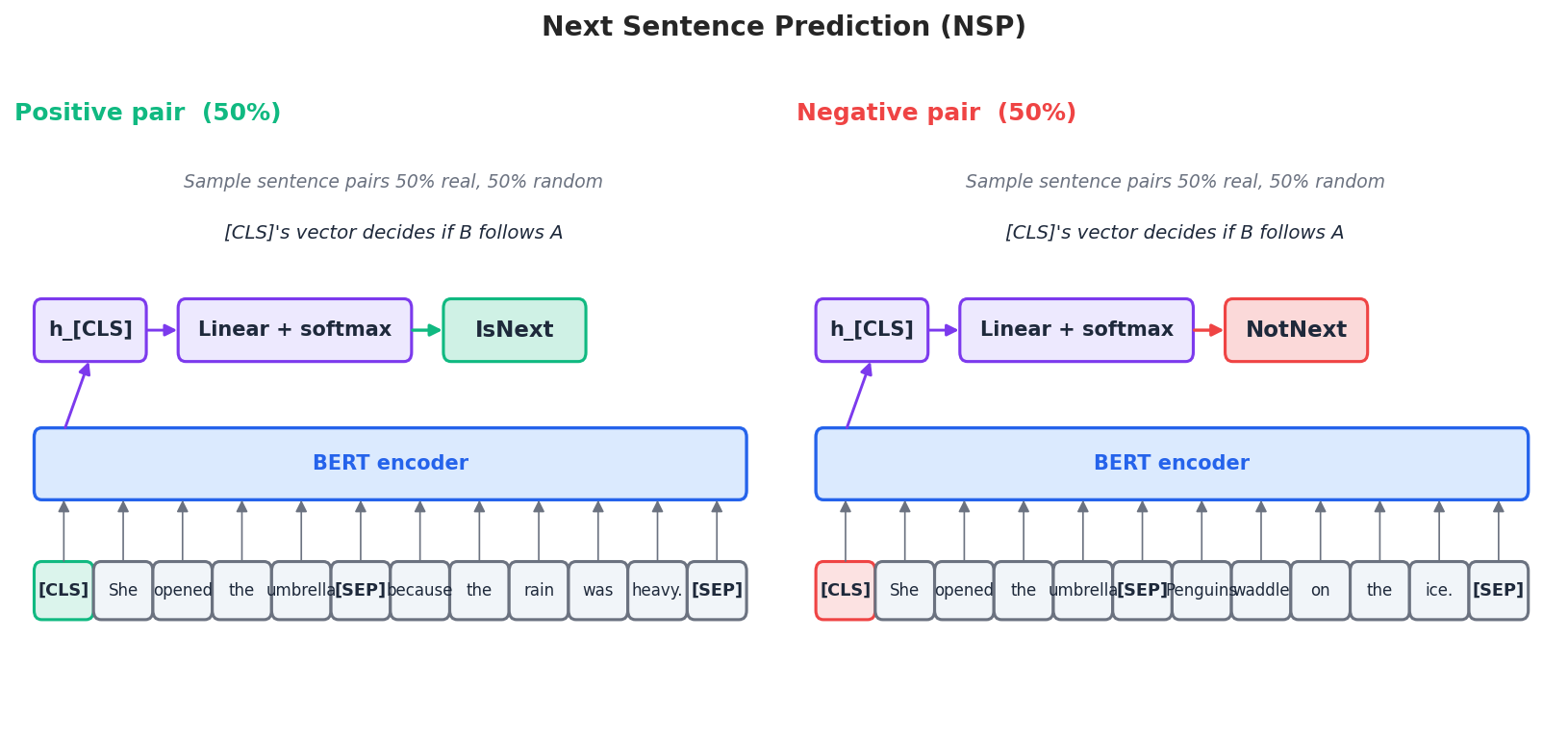

Next Sentence Prediction (NSP)#

NSP was added so that BERT could learn sentence-pair semantics for tasks like NLI and QA. Each training example packs two sentences as [CLS] A [SEP] B [SEP], with the label generated by a coin flip:

- 50% of the time, B is the actual sentence that followed A in the corpus (label IsNext).

- 50% of the time, B is a random sentence from a different document (label NotNext).

The total pretraining loss is just the sum of the MLM and NSP losses.

A footnote that aged badly: subsequent work (RoBERTa, ALBERT) found NSP contributes very little, and removing or replacing it actually helps. We will return to this in the variants section.

Pretraining corpus#

BERT was trained on BooksCorpus (about 800M words) and English Wikipedia (about 2.5B words), totalling roughly 3.3B words. By 2026 standards that is tiny — modern LLMs train on trillions of tokens — but it was already enough to set a new bar.

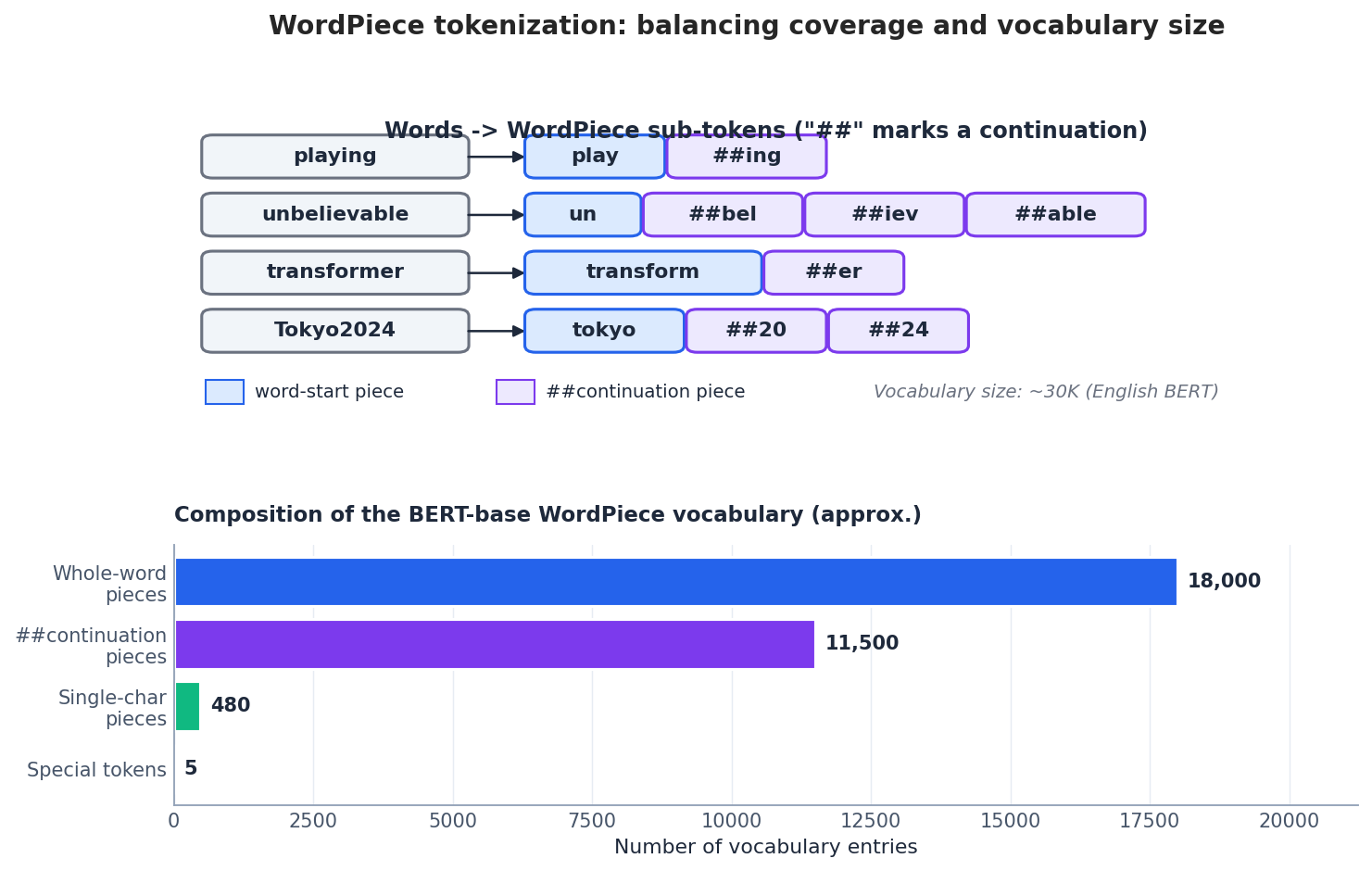

WordPiece tokenization#

BERT does not work with whole words. Instead, it uses WordPiece, a subword scheme that strikes a balance between two extremes:

- Whole-word vocabularies need millions of entries to cover any real corpus and still hit out-of-vocabulary tokens at inference time.

- Pure character vocabularies are tiny but force the model to reconstruct word meaning from scratch every sequence.

WordPiece picks a 30K-token vocabulary by greedily merging the character pairs whose merger most increases the likelihood of the training corpus. At tokenization time, it segments each word greedily, left to right, into the longest pieces found in the vocabulary; pieces inside a word are prefixed with ## to mark them as continuations. For example, with a suitable vocabulary the word “unaffable” is split into un, ##aff, ##able.

This all but eliminates out-of-vocabulary words: any word decomposes into known pieces, down to single characters if necessary, while common words stay single tokens for efficiency. Only a word containing a character that is missing from the vocabulary falls back to [UNK].
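The segmentation step (not the vocabulary training) is a greedy longest-match-first loop. Here is a minimal sketch with a toy vocabulary. The function name `wordpiece` and the vocabulary contents are made up for illustration, and a real tokenizer also handles lowercasing, punctuation splitting, and a maximum word length.

```python
def wordpiece(word, vocab, unk="[UNK]"):
    """Greedy longest-match-first segmentation of a single word."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink from the right.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # continuation piece
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk]  # no piece matches, the whole word is unknown
        pieces.append(piece)
        start = end
    return pieces


toy_vocab = {"un", "##aff", "##able", "play", "##ing", "##s"}
for w in ["unaffable", "playing", "plays", "xyz"]:
    print(w, "->", wordpiece(w, toy_vocab))
```

With a real 30K vocabulary every common character is itself a piece, so the `[UNK]` branch is taken only for characters the vocabulary has never seen.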

Fine-tuning BERT for downstream tasks#

The big idea behind fine-tuning is that the same backbone serves nearly every task; only the head differs.

Text classification#

For a sentence-level label (sentiment, spam, intent), feed the input through BERT and project the final [CLS] vector through a linear layer.

Internally:

- Tokenize and add [CLS] and [SEP].

- Pass through 12 encoder layers.

- Take the [CLS] hidden state (768-dim for BERT-Base).

- Apply a single linear layer mapping 768 -> num_labels.

Named entity recognition (NER)#

Token-level tasks use the per-token vectors instead of [CLS], with one label predicted for each token.

A subtlety: WordPiece can split a word (“Hawaii” might stay whole, but “Tokyo2024” splits into three pieces). When converting back to word-level entities, you typically take the prediction at the first sub-token of each word and ignore the continuation pieces.
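The first-sub-token rule can be sketched as a small post-processing helper. It assumes you have, for each token, the index of the word it came from, with `None` for special tokens. Hugging Face fast tokenizers expose such a list through `word_ids()` on their output, but here it is simply passed in. The function name `first_subtoken_labels` and the example labels are hypothetical.

```python
def first_subtoken_labels(word_ids, token_labels):
    """Collapse per-token predictions to one label per word by keeping
    the prediction at each word's first sub-token."""
    word_labels = []
    previous = None
    for word_id, label in zip(word_ids, token_labels):
        if word_id is None:          # [CLS], [SEP], padding
            previous = None
            continue
        if word_id != previous:      # first piece of a new word
            word_labels.append(label)
        previous = word_id           # continuation pieces are skipped
    return word_labels


# Illustrative input: "Tokyo2024 hosts games" with the first word split in three.
tokens = ["[CLS]", "tokyo", "##20", "##24", "hosts", "games", "[SEP]"]
word_ids = [None, 0, 0, 0, 1, 2, None]
token_labels = ["O", "B-LOC", "I-LOC", "O", "O", "O", "O"]
print(first_subtoken_labels(word_ids, token_labels))
```

The same word-index list is useful during training, where the labels of continuation pieces are typically excluded from the loss so that each word counts once.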

Question answering (extractive)#

For SQuAD-style QA, the model predicts the start and end positions of the answer span inside the context.

The two heads are linear layers on top of every token vector, producing one start logit and one end logit per position. The predicted span is the contiguous range that maximizes start + end logits subject to start <= end.
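The span search is a small constrained argmax. A minimal sketch over plain lists of logits follows. The `max_answer_len` cap is an added assumption (a common practical safeguard, not something stated above), and the function name `best_span` is hypothetical.

```python
def best_span(start_logits, end_logits, max_answer_len=30):
    """Return (start, end, score) maximizing start_logit + end_logit
    subject to start <= end and a bounded span length."""
    best_score, best_start, best_end = float("-inf"), 0, 0
    for s, s_logit in enumerate(start_logits):
        last = min(len(end_logits), s + max_answer_len)
        for e in range(s, last):  # enforces start <= end
            score = s_logit + end_logits[e]
            if score > best_score:
                best_score, best_start, best_end = score, s, e
    return best_start, best_end, best_score


start_logits = [0.1, 2.0, 0.3, 0.0]
end_logits = [3.0, 0.2, 1.5, 0.1]
print(best_span(start_logits, end_logits))  # (1, 2, 3.5)
```

Taking the two argmaxes independently would give start 1 and end 0 here, which is not a valid span. Searching over pairs avoids that failure.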

Sentence-pair classification (NLI, paraphrase)#

Pack the two sentences with a [SEP] between them and use the [CLS] head.

Notice how minimal the architectural change is: the same BertForSequenceClassification class handles both single-sentence and sentence-pair classification just by tokenizing differently.

Fine-tuning recipes that actually work#

Fine-tuning a 110M-parameter model with a few thousand labels is fundamentally different from training from scratch. The defaults that work for ResNet from scratch will overshoot and destroy the pretrained weights here.

Use a small learning rate, with weight decay#

The pretrained weights live in a good basin; you want to nudge them, not bulldoze them. The standard trick is AdamW with separate parameter groups so that bias and LayerNorm parameters are not decayed.
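A sketch of the grouping logic follows. It assumes parameter names that contain `bias` or `LayerNorm.weight` for the undecayed group, which matches the naming in Hugging Face's PyTorch BERT implementation but may differ for other models. The helper names `build_param_groups` and `make_optimizer` are hypothetical.

```python
NO_DECAY = ("bias", "LayerNorm.weight")


def build_param_groups(named_parameters, weight_decay=0.01):
    """Split (name, parameter) pairs into a decayed and an undecayed group."""
    decay, no_decay = [], []
    for name, param in named_parameters:
        if any(key in name for key in NO_DECAY):
            no_decay.append(param)
        else:
            decay.append(param)
    return [
        {"params": decay, "weight_decay": weight_decay},
        {"params": no_decay, "weight_decay": 0.0},
    ]


def make_optimizer(model, lr=2e-5, weight_decay=0.01):
    """Build AdamW over the two groups. Requires PyTorch."""
    import torch  # imported lazily so the grouping helper has no dependencies

    groups = build_param_groups(model.named_parameters(), weight_decay)
    return torch.optim.AdamW(groups, lr=lr)
```

With a loaded model, `optimizer = make_optimizer(model)` would give a 2e-5 learning rate with decay applied only to the weight matrices.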

Warm up, then linearly decay#

Even a tiny learning rate is too large if applied to the very first step on a freshly added head. Warmup ramps the LR up over the first ~10% of steps, then a linear schedule cools it back to zero.
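The schedule is just a piecewise-linear multiplier on the peak learning rate. A dependency-free sketch, with the hypothetical name `linear_warmup_decay`:

```python
def linear_warmup_decay(step, warmup_steps, total_steps):
    """Multiplier in [0, 1] applied to the peak learning rate at a given step."""
    if step < warmup_steps:
        # Linear ramp from 0 up to 1 during warmup.
        return step / max(1, warmup_steps)
    # Linear decay from 1 at the end of warmup down to 0 at total_steps.
    return max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


peak_lr = 2e-5
total_steps = 1000
warmup_steps = int(0.1 * total_steps)  # 10% warmup
for step in (0, 50, 100, 550, 1000):
    lr = peak_lr * linear_warmup_decay(step, warmup_steps, total_steps)
    print(f"step {step:>4}: lr = {lr:.2e}")
```

In PyTorch, a function of this shape can be passed to `torch.optim.lr_scheduler.LambdaLR`, and the `transformers` library ships `get_linear_schedule_with_warmup`, which builds the same kind of schedule for an existing optimizer.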

Gradient accumulation if memory is tight#

When you cannot fit batch size 32 on your GPU, simulate it by accumulating gradients across several smaller forward/backward passes.
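A sketch of the accumulation loop follows. It is written against the interface that PyTorch optimizers and Hugging Face models expose (`model(**batch).loss`, `loss.backward()`, `optimizer.step()`, `optimizer.zero_grad()`), but it imports nothing itself. The function name `train_epoch` and the parameter `accum_steps` are hypothetical.

```python
def train_epoch(model, optimizer, batches, accum_steps=4):
    """One pass over `batches`, updating weights once every `accum_steps`
    micro-batches. Returns the number of optimizer updates performed."""
    optimizer.zero_grad()
    updates = 0
    pending = 0
    for batch in batches:
        # Scale the loss so the accumulated gradient is an average, not a sum.
        loss = model(**batch).loss / accum_steps
        loss.backward()  # gradients add up across micro-batches
        pending += 1
        if pending == accum_steps:
            optimizer.step()
            optimizer.zero_grad()
            updates += 1
            pending = 0
    if pending:
        # Flush a trailing partial group (its gradient is slightly under-scaled).
        optimizer.step()
        optimizer.zero_grad()
        updates += 1
    return updates
```

With micro-batches of 8 and `accum_steps=4`, each update sees the gradient of an effective batch of 32. If a learning-rate scheduler is in use, it would be stepped alongside the optimizer rather than once per micro-batch.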

Default recipe#

When in doubt, start here — it is what most papers use:

| Setting | Recommended |
|---|---|
| Learning rate | 2e-5 to 5e-5 |
| Batch size | 16-32 (use gradient accumulation if needed) |
| Epochs | 2-4 (BERT fine-tunes fast; more epochs risk overfitting) |
| Warmup | 10% of total steps |
| Max sequence length | 128-512 (shorter is faster; pick the smallest that fits inputs) |
| Optimizer | AdamW with weight decay 0.01 on weights, 0.0 on bias/LayerNorm |

A complete HuggingFace pipeline#

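Putting it all together, an end-to-end fine-tuning pipeline on the IMDB sentiment dataset combines the pieces above: tokenize the reviews, load BERT with a two-class classification head, and train with the default recipe.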
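What follows is a sketch of such a pipeline using the Hugging Face `datasets` and `transformers` libraries. It is one reasonable arrangement rather than a canonical one: the checkpoint name, `max_length=256`, the output directory, and the helper names `accuracy` and `main` are illustrative choices, and the `TrainingArguments` names follow the 4.x releases of `transformers`, so they may need adjusting for other versions. Nothing runs until `main()` is called.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the gold labels."""
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return correct / len(labels)


def main(model_name="bert-base-uncased", output_dir="bert-imdb"):
    # Heavy imports are deferred so the helper above works without them.
    from datasets import load_dataset
    from transformers import (
        AutoModelForSequenceClassification,
        AutoTokenizer,
        DataCollatorWithPadding,
        Trainer,
        TrainingArguments,
    )

    dataset = load_dataset("imdb")  # columns: "text", "label"
    tokenizer = AutoTokenizer.from_pretrained(model_name)

    def tokenize(batch):
        # Truncate long reviews; padding is done per batch by the collator.
        return tokenizer(batch["text"], truncation=True, max_length=256)

    encoded = dataset.map(tokenize, batched=True)
    model = AutoModelForSequenceClassification.from_pretrained(
        model_name, num_labels=2
    )

    def compute_metrics(eval_pred):
        preds = eval_pred.predictions.argmax(axis=-1)
        return {"accuracy": accuracy(preds.tolist(), eval_pred.label_ids.tolist())}

    args = TrainingArguments(
        output_dir=output_dir,
        learning_rate=2e-5,
        per_device_train_batch_size=16,
        per_device_eval_batch_size=32,
        num_train_epochs=3,
        weight_decay=0.01,
        warmup_ratio=0.1,
    )
    trainer = Trainer(
        model=model,
        args=args,
        train_dataset=encoded["train"],
        eval_dataset=encoded["test"],
        data_collator=DataCollatorWithPadding(tokenizer),
        compute_metrics=compute_metrics,
    )
    trainer.train()
    return trainer.evaluate()
```

Calling `main()` downloads the dataset and checkpoint, trains for three epochs, and returns the evaluation metrics on the IMDB test split. The hyperparameters mirror the default recipe table.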

On a single modern GPU this trains in a few hours and reaches around 92-94% accuracy on IMDB — a number that took years of hand-engineered features to hit before BERT.

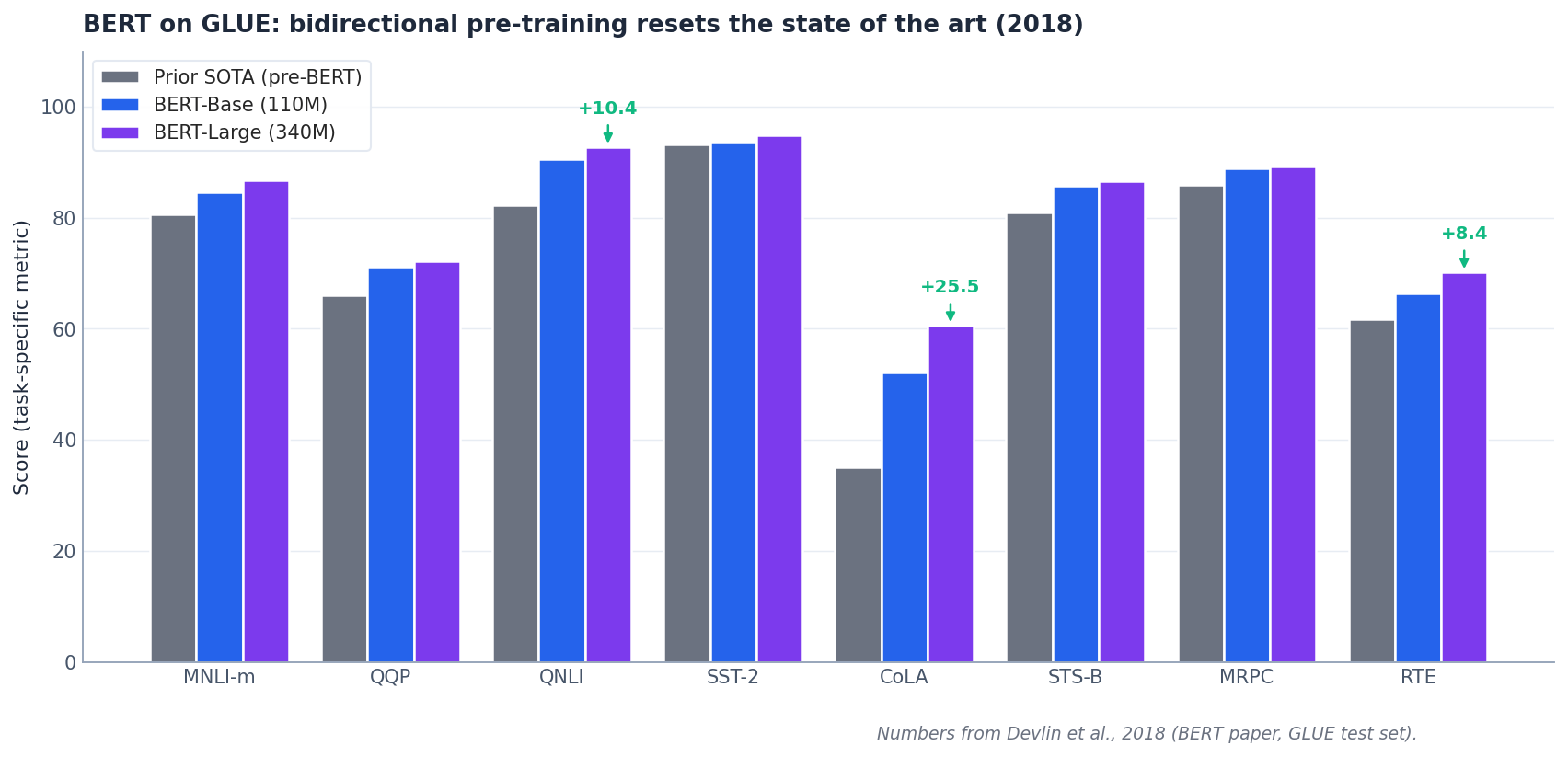

How big was the jump? GLUE in 2018#

To appreciate why BERT shocked the field, look at the eight-task GLUE benchmark from the original paper.

The bars compare the previous task-specific best (gray) with BERT-Base (blue) and BERT-Large (purple). On structurally hard tasks like CoLA (linguistic acceptability) and the small RTE (textual entailment) dataset, the absolute gain was double-digit. A single pretrained model, fine-tuned with a few epochs and a tiny head, beat years of bespoke architectures simultaneously on every task.

The BERT family: RoBERTa, ALBERT, ELECTRA#

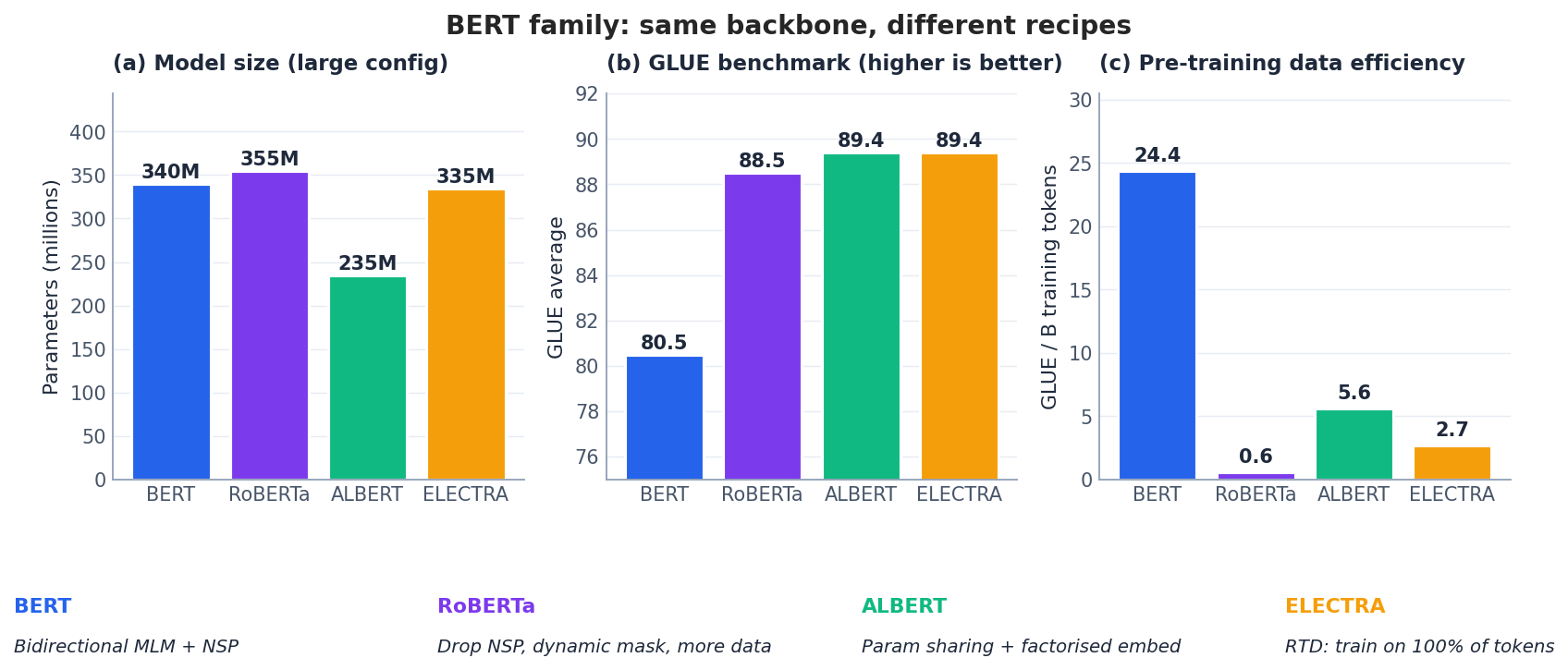

BERT was the start, not the end. Within two years a small family of variants improved on it along orthogonal axes.

RoBERTa (Facebook, 2019): just train it properly#

Liu et al. showed that BERT was significantly under-trained. Without changing the architecture, RoBERTa changes the recipe:

- Drop NSP. It does not help and may hurt.

- Dynamic masking. Generate a fresh mask for each epoch instead of reusing a static one created during preprocessing.

- Bigger batches. 8K instead of 256.

- Much more data. Add CC-News, OpenWebText, and Stories on top of BooksCorpus + Wikipedia (about 160 GB of text versus BERT’s roughly 16 GB).

The result is a 2-3 GLUE point jump using exactly the BERT-Large architecture.

ALBERT (Google, 2019): squeeze the parameters#

ALBERT achieves competitive scores with far fewer unique parameters:

- Factorized embeddings. Rather than a single $V \times H$ embedding matrix, decompose it into $V \times E$ and $E \times H$ with $E \ll H$. Token embeddings live in a small space and are projected up to the hidden size.

- Cross-layer parameter sharing. All Transformer layers share the same weights, so depth no longer multiplies parameter count.

- Sentence Order Prediction (SOP) replaces NSP with a harder task: given two adjacent sentences, decide whether their order has been swapped. This is harder than detecting random pairs and turns out to be a more useful signal.

ALBERT-xxlarge has roughly 235M unique parameters (compare with BERT-Large’s 340M) but matches or beats it on GLUE.
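A quick calculation shows how much the factorization saves. The sizes below are assumptions chosen to resemble ALBERT-xxlarge (a 30,000-token vocabulary, hidden size 4096, embedding size 128), and the helper name `embedding_params` is hypothetical.

```python
def embedding_params(vocab_size, hidden, embed=None):
    """Token-embedding parameter count, with or without factorization."""
    if embed is None:
        return vocab_size * hidden                 # single V x H matrix
    return vocab_size * embed + embed * hidden     # V x E plus E x H


V, H, E = 30_000, 4096, 128
full = embedding_params(V, H)
factorized = embedding_params(V, H, E)
print(f"V x H:         {full:,}")        # 122,880,000
print(f"V x E + E x H: {factorized:,}")  # 4,364,288
print(f"reduction:     {full / factorized:.1f}x")
```

The saving grows with the hidden size, which is why the trick matters most for the widest ALBERT configurations.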

ELECTRA (Google, 2020): use every token#

MLM only computes a loss at 15% of positions, which is wasteful. ELECTRA replaces MLM with Replaced Token Detection (RTD):

- A small generator (a tiny MLM) plausibly replaces some tokens.

- A larger discriminator examines every token and decides: was this the original, or did the generator swap it in?

- We throw away the generator and keep only the discriminator.

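Because the discriminator gets a loss at every position, ELECTRA reaches strong scores with much less compute. The paper reports that ELECTRA-Small, trained on a single GPU for four days, outperforms GPT-1 on GLUE, and that ELECTRA-Large performs comparably to RoBERTa with less than a quarter of the pretraining compute.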
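The discriminator's training targets are easy to sketch: one binary label per position, marking whether the token differs from the original. In the example below the corrupted sentence is written by hand rather than sampled from a generator, and the function name `rtd_labels` is hypothetical.

```python
def rtd_labels(original, corrupted):
    """1 where the token was replaced, 0 where it matches the original.
    A generator sample that happens to equal the original counts as 0."""
    if len(original) != len(corrupted):
        raise ValueError("sequences must have the same length")
    return [int(o != c) for o, c in zip(original, corrupted)]


original = ["the", "chef", "cooked", "the", "meal"]
corrupted = ["the", "chef", "ate", "the", "meal"]
labels = rtd_labels(original, corrupted)
print(labels)  # [0, 0, 1, 0, 0]
print(f"positions with a loss: {len(labels)} of {len(original)}")
```

Every position carries a label, so every position contributes to the loss, in contrast to the roughly 15% of positions that MLM learns from.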

Comparison#

| Model | Key innovation | Parameters | Relative GLUE |
|---|---|---|---|
| BERT | Bidirectional MLM + NSP | 110M / 340M | Baseline |
| RoBERTa | Drop NSP, dynamic masking, far more data | 125M / 355M | +2-3 |
| ALBERT | Factorized embeddings + parameter sharing | 12M to 235M | +1-2 |
| ELECTRA | RTD (loss on 100% of tokens) | 14M to 335M | +2-3 |

Picking among them is a recipe choice, not an architecture one: all four are encoder-only Transformers with very similar shape.

What BERT cannot do#

It is just as important to know BERT’s limits.

- Cost. 110-340M parameters and quadratic attention make real-time inference uncomfortable without distillation (DistilBERT, TinyBERT) or quantization.

- No generation. BERT is encoder-only with bidirectional attention. There is no sensible way to autoregressively decode text from it. For generation you need GPT-style decoder models, the topic of Part 6.

- 512-token ceiling. Position embeddings are learned for positions 0-511. Long documents need sliding windows, hierarchical aggregation, or a different architecture (Longformer, BigBird).

- English-centric. The original BERT was trained on English text only. Multilingual BERT covers 100+ languages but underperforms language-specific models (BERT-Chinese, CamemBERT, etc.) on their target language.

FAQ#

Why use [CLS] for classification? It is placed at position 0 of every input, so attention naturally lets it aggregate information from the whole sequence by the final layer. The pretraining NSP objective also conditions [CLS] to act as a sequence summary.

BERT or RoBERTa? If you want the highest score and have a few extra GPU-hours, RoBERTa. If you want the largest ecosystem, the most tutorials, and the most checkpoints to choose from, BERT remains the safest baseline.

How do I pick a variant? Use BERT for general baselines, RoBERTa for pushing accuracy, ALBERT when parameter count matters (mobile, embedded), ELECTRA when training compute is the bottleneck.

What about non-English languages? Use mBERT or XLM-RoBERTa as multilingual baselines. For best per-language performance, use a dedicated checkpoint — BERT-wwm-ext or MacBERT for Chinese, CamemBERT for French, BERTje for Dutch, and so on.

Can BERT be used for sentence embeddings? Naively averaging BERT token vectors gives mediocre sentence embeddings. Use Sentence-BERT (a fine-tuned variant trained with a Siamese contrastive loss) when you need similarity scoring.

Summary#

- BERT introduced bidirectional pretraining via Masked Language Modeling, letting every token see its full context in a single forward pass.

- The 80/10/10 mask split is engineered to avoid train-test mismatch and to force the model to use context even when the input looks unmasked.

- The pretrain-then-finetune paradigm means one expensive pretraining run amortizes across every downstream task; fine-tuning needs only a tiny head, a small learning rate, and a few epochs.

- RoBERTa, ALBERT, and ELECTRA show that the recipe (data, masking, parameter sharing, training objective) matters as much as the architecture.

- BERT excels at understanding tasks but cannot generate text. For that we turn to GPT (Part 6).
