NLP (12): Frontiers and Practical Applications

Series finale: agents and tool use (Function Calling, ReAct), code generation (Code Llama, Codex), long-context attention (Longformer, Infini-attention), reasoning models (o1, R1), safety and alignment, evaluation, and production deployment with FastAPI, vLLM and Docker.

We have spent eleven chapters climbing from raw text to multimodal foundation models. This twelfth and final chapter sits at the frontier and at the runway. It is where research stops being a paper and starts being a service: an LLM that calls tools, writes and debugs code, reasons through hundred-step problems, ingests a 200K-token contract, and serves a thousand concurrent users behind a FastAPI endpoint with p95 latency under 300 ms.

Capability brings new failure modes. Models hallucinate confidently, generate harmful content if pushed, leak training data, and cost a small fortune to run badly. So this chapter is split in two halves. The first half — agents, code generation, long context, reasoning — is the frontier of what models can do. The second half — safety, evaluation, deployment — is the engineering needed to put that frontier into production without burning users or budgets.

What You Will Learn#

- Agents: the Function Calling protocol and the ReAct reason-act loop, with worked Python.

- Code generation: where Codex / Code Llama / DeepSeek-Coder sit on HumanEval, and how a self-repair loop works.

- Long context: sliding-window, dilated and Infini-attention masks, and when to use which.

- Reasoning models: how o1 and DeepSeek-R1 trade test-time compute for accuracy, and why CoT is now policy-conditioned.

- Safety: hallucination taxonomy, RLHF / DPO / Constitutional AI alignment, content guardrails.

- Evaluation: capability, safety and efficiency benchmarks, and what each of them does not tell you.

- Production: a FastAPI + vLLM + Docker reference stack, observability, and concrete latency targets.

Prerequisites#

- The previous eleven chapters of this series; we lean especially on Parts 4 (Transformer), 6 (GPT), 8 (PEFT), 9 (LLM internals) and 10 (RAG).

- Comfort with Python, basic asyncio, and the rough shape of a Docker container.

- A passing familiarity with reinforcement learning helps for the alignment section; see RL Part 12 — RLHF and LLM Applications for the long version.

Agents and tool use#

The single biggest capability jump after instruction-tuning was teaching models to call functions. A vanilla LLM is a frozen approximator: whatever it knew at training time is all it knows. An agentic LLM is a controller that can ask the world for facts, run code, query databases, and then continue generating. That changes the system from a clever autocomplete into a programmable executor.

Function Calling — the protocol#

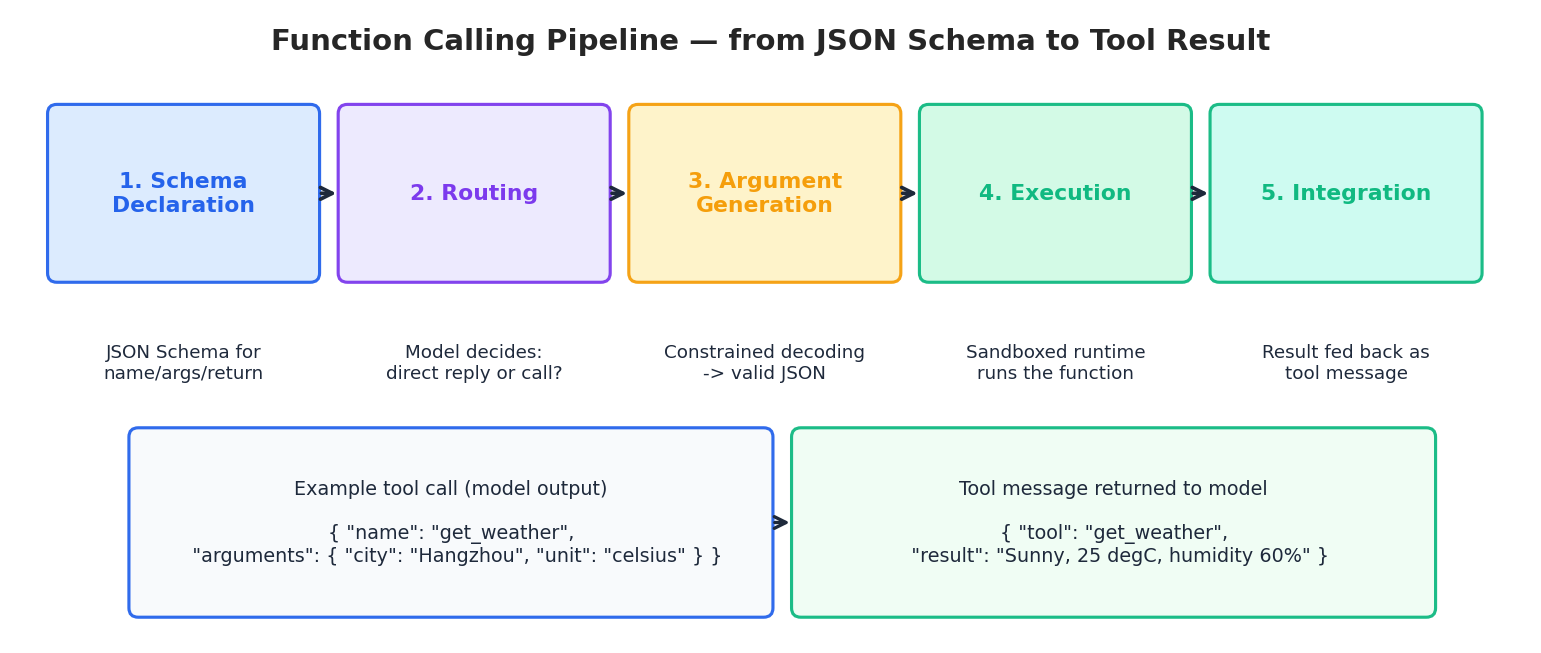

Function Calling, popularised by OpenAI in mid-2023 and now standard across Claude, Gemini, Llama-3 and Qwen, is a typed protocol layered on top of chat completion. The application declares tools as JSON Schema; the model is trained to either reply directly or emit a structured tool call. Five stages, no magic:

- Schema declaration — the application registers each tool with a name, a docstring, and a JSON-Schema for arguments.

- Routing — given the user message, the model decides whether to answer or call.

- Argument generation — constrained decoding produces a JSON object that validates against the schema.

- Execution — the application, not the model, runs the function, ideally in a sandbox.

- Integration — the result is appended to the conversation as a

toolmessage; the model then composes the final reply.

| |

Three details people get wrong. First, let the model decide whether to call (tool_choice="auto"); forcing a call when none is needed produces silly arguments. Second, always sandbox execution — the model can hallucinate a delete_all_users() call if your schema lets it. Third, the tool description is a prompt: rewrite it until the model picks the right tool reliably.

ReAct — reasoning + acting in a loop#

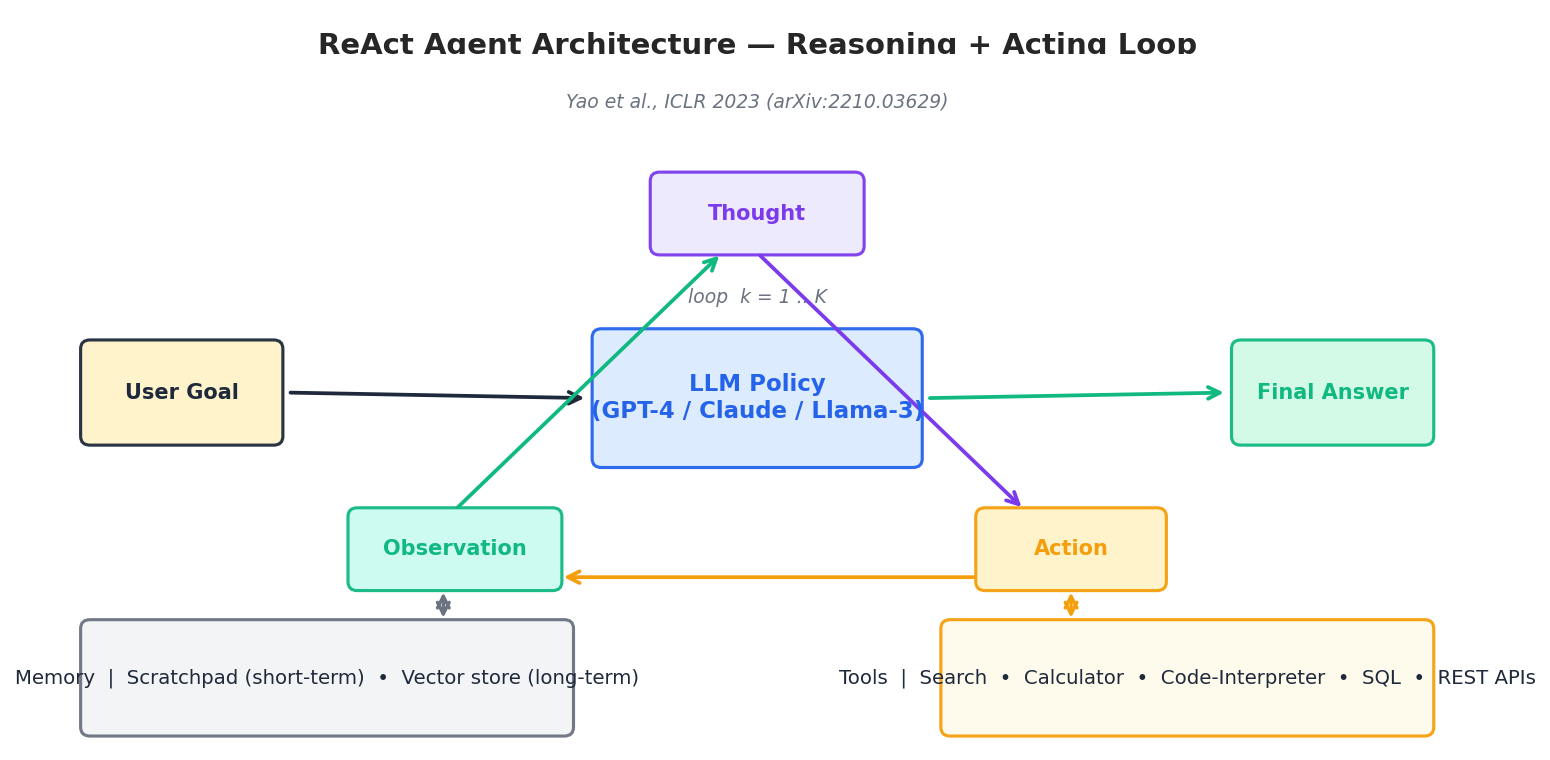

Function Calling is a single-shot interface. ReAct (Yao et al., ICLR 2023, arXiv:2210.03629

) generalises it into an iterative Thought -> Action -> Observation loop, so the model can decompose, branch, and re-plan. This is the architectural backbone of LangChain, AutoGPT, OpenAI’s Assistants, Claude’s tool-use mode and most production agents in 2025.

| |

When to choose what. Function Calling is the right default: it is structured, the prompt is short, and one round-trip is cheap. ReAct earns its overhead when the task needs multi-step planning, branching on intermediate observations, or recovering from tool failures — research summarisation, data analysis, multi-hop QA, complex bookings. For everything in between, modern frameworks let you express either as a graph of Function Calling steps with explicit state, which is usually the most maintainable choice.

Code generation#

Code is the application area where LLMs have most clearly crossed from “interesting demo” to “indispensable tool”. GitHub reports that Copilot users accept roughly a third of suggestions and complete tasks ~55% faster on benchmark tasks. The technical recipe behind that shift is straightforward: pretrain on code, fine-tune on instructions, evaluate by running the output, and add a self-repair loop.

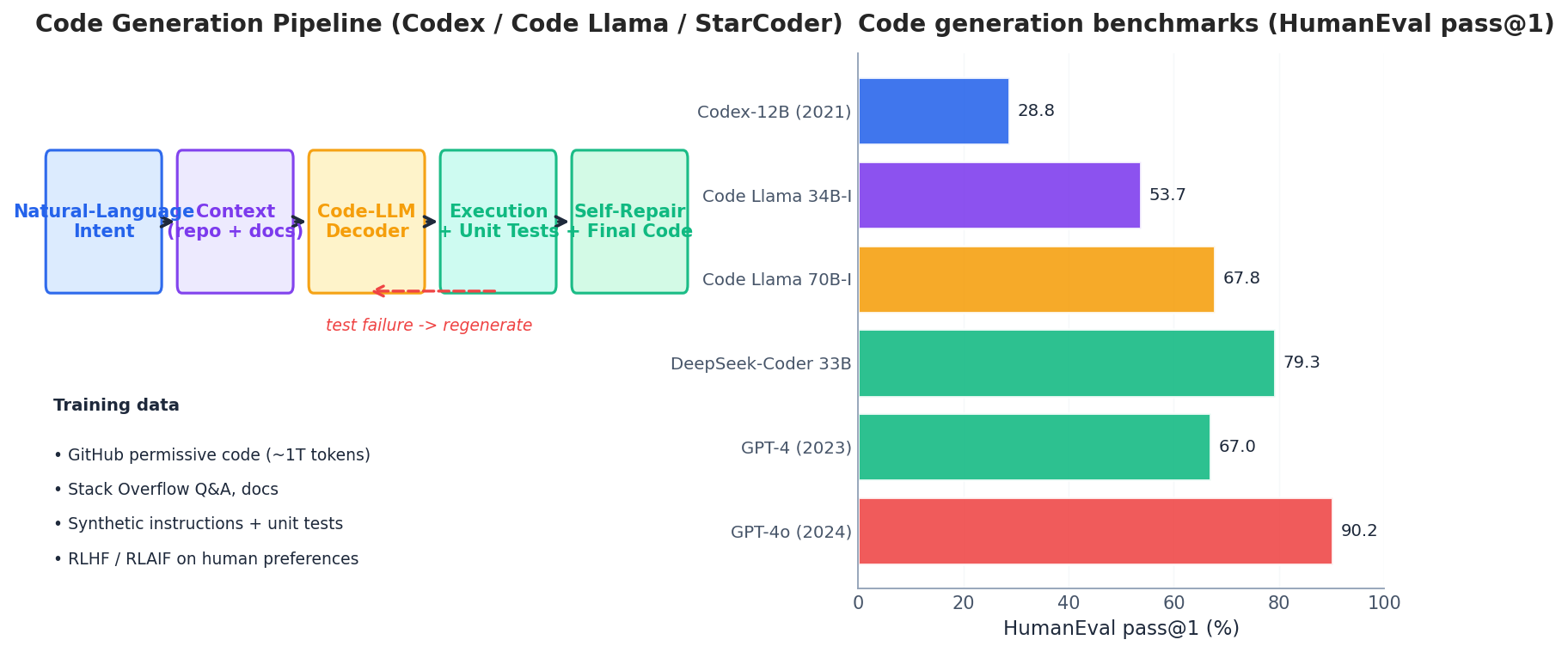

The pipeline#

A modern code-LLM pipeline has five stages: an intent in natural language, an enriched context (open files, repository symbols, test stubs, retrieved API docs), a decoder trained on code, an executor that compiles and runs unit tests, and a self-repair step that feeds the failure back to the model. The repair loop is what turns 65% pass@1 into 85% pass@5. AlphaCode, Reflexion and Code Llama’s instruction variants all use a version of it.

Models and benchmarks#

The standard benchmark is HumanEval (Chen et al., arXiv:2107.03374 , 2021): 164 hand-written Python problems graded by execution. pass@k is the probability that at least one of $k$ samples passes all unit tests; $\mathrm{pass}@1$ is the strict, single-shot measure. Two notes of caution: HumanEval is short, single-file and Python-only, so it overestimates real-world performance; and it has been heavily contaminated, so 2024+ scores should be cross-checked against MBPP, LiveCodeBench, SWE-bench Verified or CRUXEval.

| |

For Python-only work in 2025, DeepSeek-Coder-V2, Qwen2.5-Coder and GPT-4o sit at the top; for repo-scale tasks involving real PRs, SWE-bench Verified is a much better proxy than HumanEval and the leaderboard looks completely different — Claude 3.5 / 3.7 Sonnet, GPT-4o and Devin agents lead, often with execution agents on top of weaker base models.

Long-context modeling#

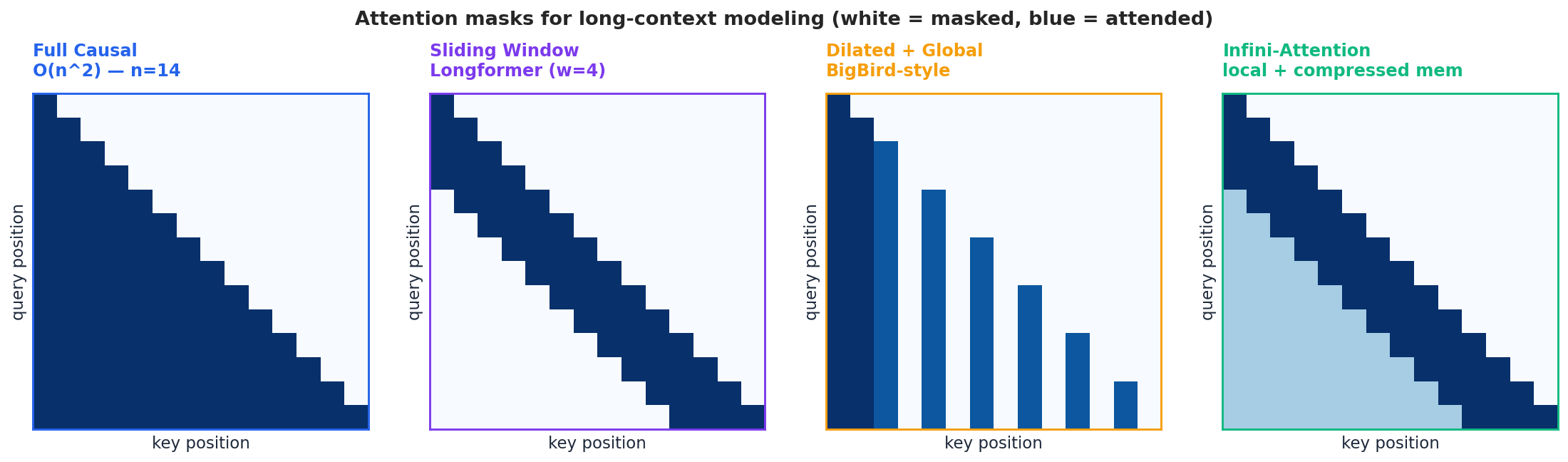

Standard self-attention costs $O(n^2)$ in time and memory. Doubling context from 4K to 8K is barely noticeable; going from 32K to 128K is a memory cliff. Several families of techniques push past it; you usually combine two or three.

- Sliding window (Longformer, Beltagy et al., 2020 ) — each query attends only to the last $w$ keys, dropping cost to $O(nw)$ . Captures local structure; relies on stacked layers to propagate distant information.

- Dilated + global tokens (BigBird, Longformer-global) — sparse strided attention plus a few “global” tokens that everyone attends to. Good for QA where the question token must see the whole document.

- Infini-attention (Munkhdalai et al., 2024 ) — keep a small local window for precision plus a compressive memory that summarises everything older. Bounded memory, unbounded effective context.

- Position-encoding extension — RoPE base scaling, NTK-aware interpolation, YaRN, LongRoPE — extend a model trained at 4K to 128K with little or no fine-tuning by reparameterising the rotary frequencies.

- Parameter-efficient long-context fine-tuning — LongLoRA combines shifted sparse attention with LoRA so that a 7B model can be extended to 100K context on two A100s.

In practice, modern long-context LLMs combine RoPE extension during pretraining, sliding-window or grouped-query attention in the kernel, and continued training on long-document mixtures. At inference time, FlashAttention-2/3 and PagedAttention (vLLM) keep the constant factors sane.

Reasoning models#

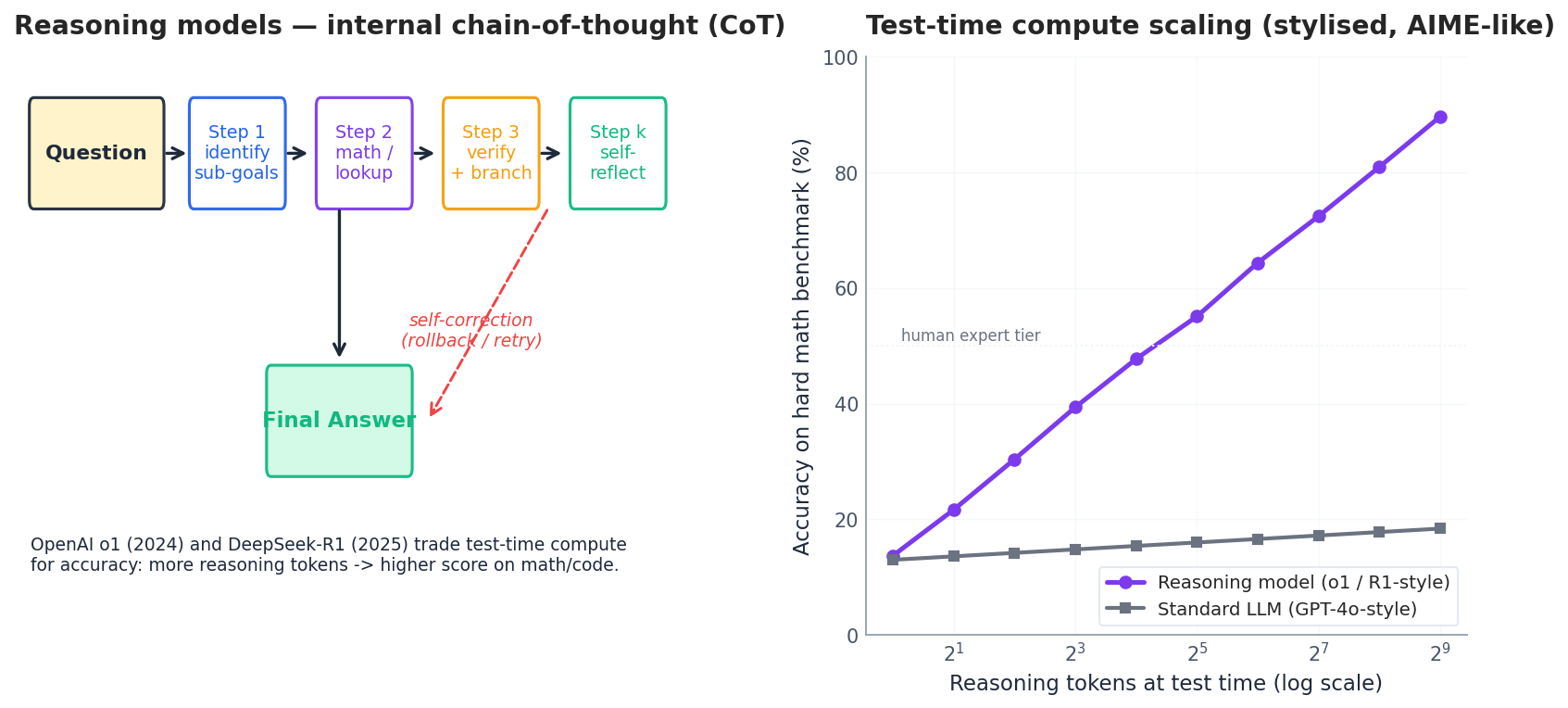

The 2024-2025 inflection point in NLP was test-time compute: instead of making the base model bigger, train it to think for longer before answering. OpenAI’s o1 (Sep 2024) and DeepSeek-R1 (arXiv:2501.12948 , Jan 2025) both do this: an internal chain-of-thought is generated, scored, sometimes rolled back, and only the final answer is returned to the user.

Two ideas drive the gain. First, process supervision — train a reward model to score intermediate steps, not just the final answer (Lightman et al., Let’s Verify Step by Step, 2023). Second, outcome RL on verifiable tasks — for math and code where success is checkable, run large-scale RL with the verifier as the reward. DeepSeek-R1’s “R1-Zero” recipe shows that reasoning emerges from pure RL on a strong base model, with no SFT step at all; aha moments and self-correction appear spontaneously.

The cost. Reasoning models are slow and token-hungry — a single AIME problem can consume 10K-100K reasoning tokens. They also hide their CoT (o1 charges for hidden reasoning tokens), which raises new evaluation and trust questions. The right design pattern in 2025 is a router: send easy queries to a fast model (GPT-4o, Claude Haiku), hard reasoning queries to o1 / R1 / Claude Sonnet thinking mode. The cost-quality difference is often 10-50x.

Safety, alignment and hallucinations#

A model that is helpful but unsafe is unshippable. A model that is safe but unhelpful is worse than no model. Alignment is the engineering discipline that tries to land in the narrow strip in between.

The hallucination taxonomy#

Hallucinations are not a single failure mode. The useful split (Huang et al., Survey of Hallucination, 2023) is:

- Factuality — the output contradicts a verifiable fact (“Marie Curie won the Fields Medal”).

- Faithfulness — the output contradicts the provided context in a RAG or summarisation setting (“the contract says payment is due in 30 days” when it actually says 60).

- Logical / arithmetic — invalid reasoning steps that happen to look fluent.

- Self-consistency — the model contradicts an earlier statement in the same conversation.

Mitigations stack: RAG for factuality (Part 10 ), constrained decoding and JSON schemas for structural faithfulness, self-consistency sampling plus majority vote for arithmetic, process supervision and reasoning models for logical errors, citation-required prompting for verifiability, and abstention training (“say I don’t know”) as a last line of defence.

Alignment — RLHF, DPO, Constitutional AI#

The dominant alignment recipe is still a three-stage pipeline: SFT on demonstrations, train a reward model on human preference pairs, then RL (PPO) against that reward. DPO (Rafailov et al., 2023) collapses the last two stages into a single supervised loss and is now the default in many open recipes because it is much simpler and cheaper. Constitutional AI (Bai et al., Anthropic, 2022) replaces most human labels with model-generated critiques against a written list of principles, making large-scale safety tuning tractable.

| |

In production, the input filter usually catches jailbreak attempts and prompt injection (especially from RAG sources, which is now the dominant attack vector); the output filter catches PII, toxicity and policy violations. Both filters are themselves small classifiers (Llama-Guard-3, ShieldGemma, OpenAI Moderation) — cheaper and more predictable than asking the main model to police itself.

Evaluation#

Benchmarks are how we lie to ourselves least. Three axes matter, and most teams under-invest in two of them.

| Axis | Public benchmarks | What it actually measures |

|---|---|---|

| Capability | MMLU, GPQA-Diamond, GSM8K, MATH, HumanEval, MBPP, SWE-bench, MT-Bench, Arena-Hard, C-Eval (zh) | Knowledge + reasoning + generation, mostly under contamination risk |

| Safety | TruthfulQA, ToxiGen, HarmBench, JailbreakBench, BBQ | Refusal behaviour, bias, jailbreak robustness |

| Efficiency | MLPerf-Inference, vLLM bench, MMLU-Pro tokens/answer | Throughput, p50/p95 latency, cost per million tokens |

Three habits that pay off. Build a private eval set of 100-500 prompts from your real users; it is the only number that correlates with launch outcomes. Run pairwise comparison (LLM-as-judge with chain-of-thought, plus periodic human spot-checks) — absolute scores drift, pairwise wins do not. Track regressions per release in a fixed harness (lm-evaluation-harness, evalplus, inspect_ai); a 2-point drop on your private eval should block a deploy even if MMLU went up.

Production deployment#

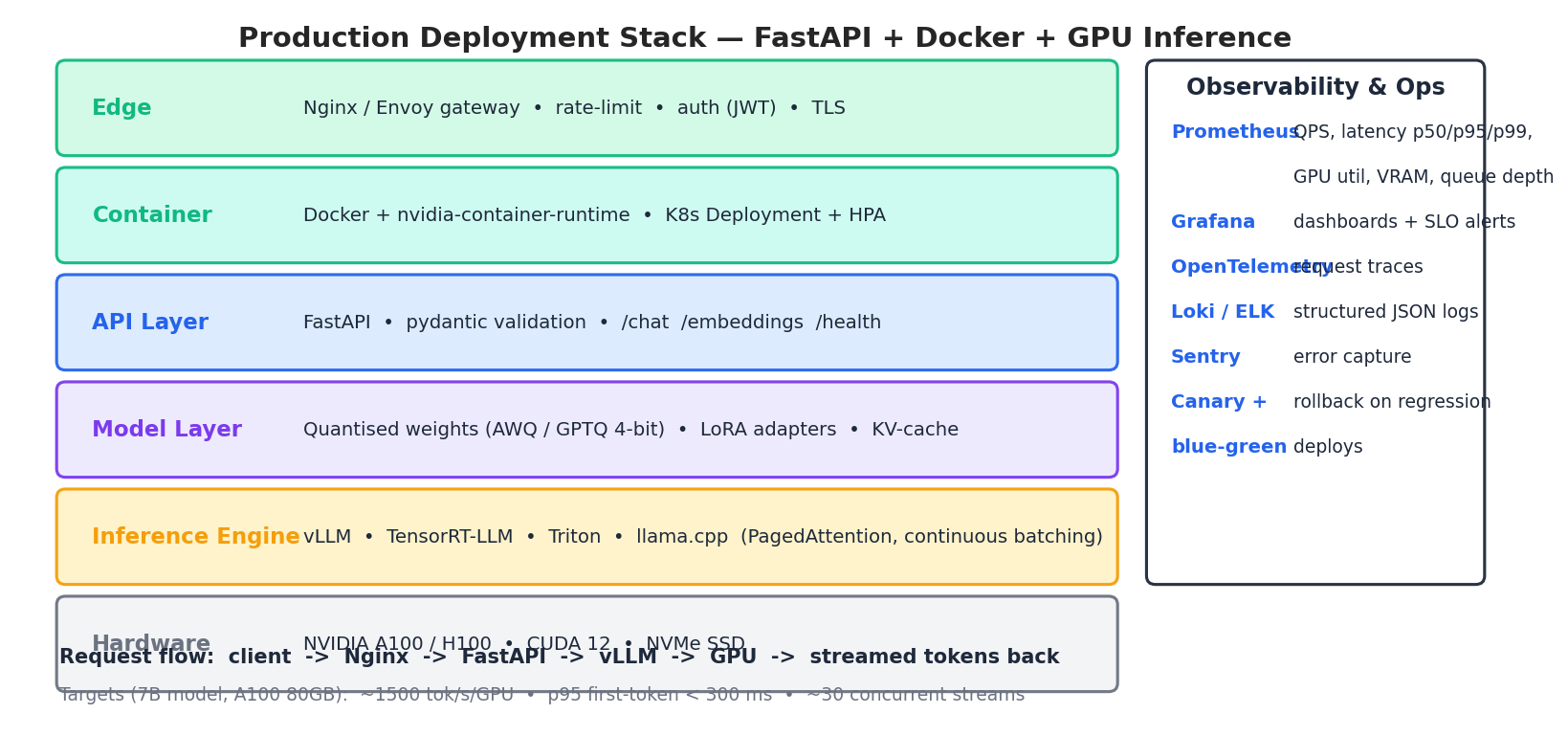

This is where the pretty notebook meets reality. The reference stack we will sketch — FastAPI in front, vLLM in the middle, Docker + K8s wrapping the lot, Prometheus watching it all — is the same pattern used by most production teams in 2025, modulo religious preferences about Triton vs. TGI vs. TensorRT-LLM.

The serving layer#

Forget transformers.generate for serving. It is fine for prototypes and disastrous in production: no continuous batching, no PagedAttention, naive KV-cache management. Use vLLM (the open-source default), TensorRT-LLM (NVIDIA, fastest on H100), or TGI (Hugging Face, simplest ops). All three implement continuous batching, paged KV-cache, prefix caching and speculative decoding.

| |

The API layer#

FastAPI is the path of least resistance: async, pydantic validation, OpenAPI for free, and it streams Server-Sent Events without ceremony. The non-obvious bit is request hygiene — strict input limits, request IDs, structured logging, and a fallback path when the GPU pool is saturated.

| |

Containerisation#

| |

For Kubernetes, schedule the Pod onto a GPU node with nvidia.com/gpu: 1, set tight CPU/memory requests, configure a livenessProbe on /healthz and a readinessProbe that only flips to ready after the model is loaded (the most common deployment bug is taking traffic during the 30-90 s warm-up).

Observability and SLOs#

You cannot fix what you do not measure. The minimum viable instrumentation:

- Metrics (Prometheus + Grafana): QPS, latency p50/p95/p99, GPU utilisation, VRAM, queue depth, cache hit rate, tokens-per-second.

- Tracing (OpenTelemetry): one span per request, with sub-spans for retrieval, generation, post-processing.

- Logs (Loki / ELK): structured JSON, every log line carries the request ID.

- Errors (Sentry): grouped by exception class, alert on rate spikes.

Sensible launch targets for a 7-8B model on a single A100 80GB with vLLM and bf16: ~1500-2500 tokens/s/GPU aggregate throughput, p95 first-token < 300 ms, p95 inter-token < 80 ms, ~30 concurrent streams. Quantising to 4-bit AWQ doubles throughput at the cost of 1-2 points on most benchmarks; ship a small private eval to confirm the trade is acceptable.

FAQ#

When should I pick Function Calling vs. ReAct vs. a graph framework? Single tool, deterministic invocation: Function Calling. Multi-step tasks with branching, retries and intermediate inspection: ReAct or a graph framework like LangGraph. If you find yourself writing a state machine on top of ReAct strings, switch to a graph — it makes the control flow explicit and testable.

Open-source model or hosted API? Hosted APIs win on time-to-market, capability ceiling and the cost of low request volumes. Open-source self-hosting wins on data sovereignty, fine-tuning freedom and cost at high sustained throughput (the crossover is roughly 50-100 M tokens/day for a 7-13B model on owned hardware).

Do I really need a reasoning model? For routine chat, summarisation, classification and short code: no, a fast non-reasoning model is 10-50x cheaper and good enough. For competition math, complex code, multi-hop research and any “system 2” task: yes, the gap is qualitative. Route by query.

Why does my agent loop forever? Almost always one of: (1) tool descriptions are ambiguous and the model keeps re-trying the wrong one; (2) you forgot a Final Answer sentinel; (3) the observation is too long and pushes the original goal out of context. Cap iterations, log every step, and shrink observations to the relevant fields.

What’s the cheapest way to extend context? If you control fine-tuning: RoPE base scaling + a small LongLoRA run. If not: chunk + RAG (Part 10 ). True 1M-token context is rarely the right answer — retrieval is cheaper, more debuggable, and usually more accurate.

Series Wrap-Up#

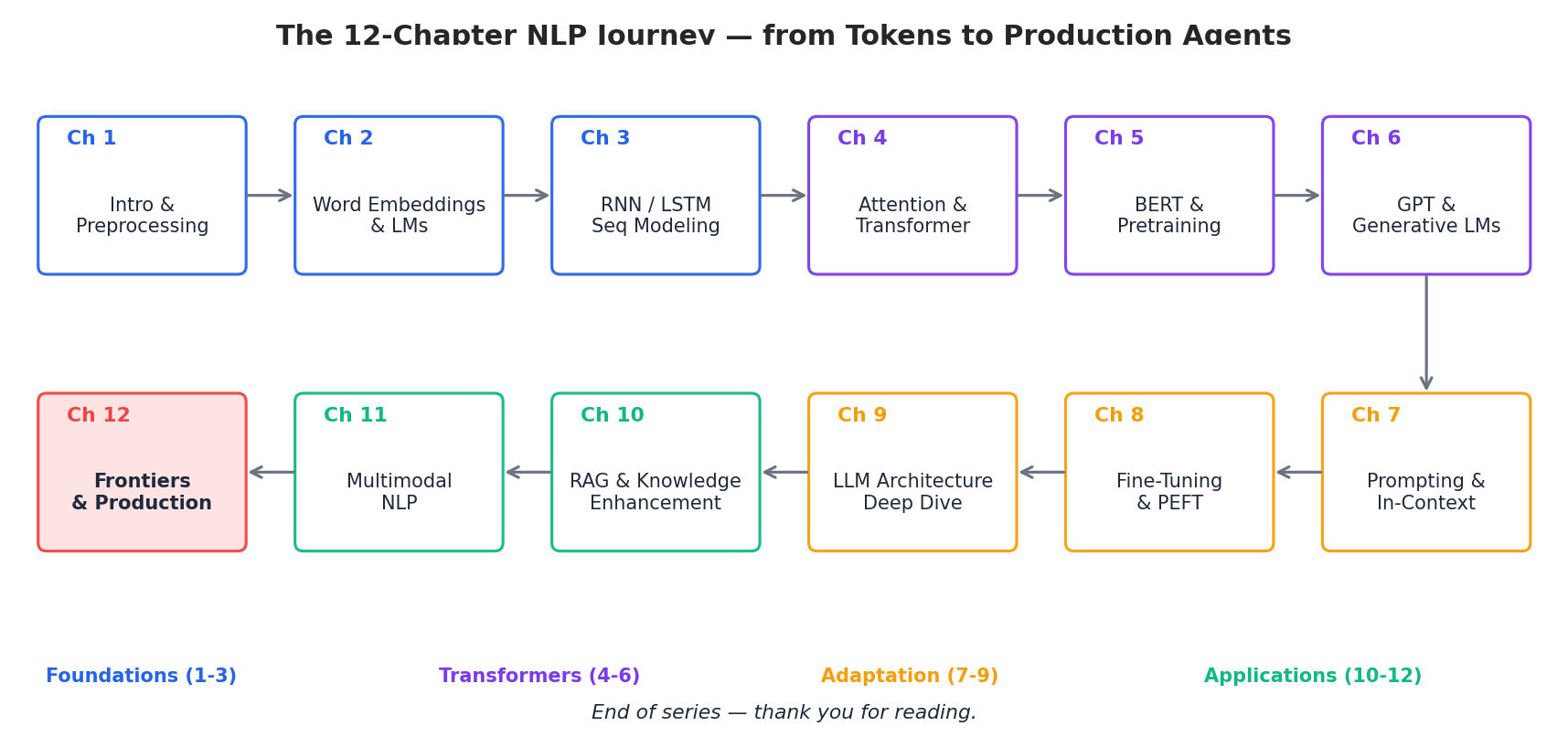

We started this series with whitespace tokenisation and ended with reasoning agents serving streamed tokens behind a load balancer. Looking at the map, four arcs are visible:

- Foundations (Parts 1-3) — text becomes vectors; sequences become hidden states.

- Transformers (Parts 4-6) — attention replaces recurrence; pretraining splits into BERT-style understanding and GPT-style generation.

- Adaptation (Parts 7-9) — prompting, PEFT and LLM internals turn pretrained models into tools we can shape.

- Applications (Parts 10-12) — RAG anchors models to knowledge, multimodality breaks the text-only ceiling, and agents + production engineering close the loop into a deployable system.

If you take three things from the whole series, take these. Architecture matters less than data and objectives — the Transformer is now table stakes; the differentiation is in pretraining mix, instruction data and evaluation. Engineering discipline beats model size — a careful 8B-model deployment with RAG, evals and guardrails will outperform a sloppy 70B service every time. The frontier moves fast, but the fundamentals are stable — embeddings, attention, fine-tuning, retrieval, alignment and serving will still be the right vocabulary in 2027, even when the specific model names are different.

Thank you for reading all twelve parts. Now go build something.

References#

- Yao et al., ReAct: Synergizing Reasoning and Acting in Language Models, ICLR 2023, arXiv:2210.03629 .

- Schick et al., Toolformer: Language Models Can Teach Themselves to Use Tools, NeurIPS 2023, arXiv:2302.04761 .

- Roziere et al., Code Llama: Open Foundation Models for Code, Meta AI, 2023, arXiv:2308.12950 .

- Chen et al., Evaluating Large Language Models Trained on Code (HumanEval / Codex), 2021, arXiv:2107.03374 .

- Beltagy et al., Longformer: The Long-Document Transformer, 2020, arXiv:2004.05150 .

- Munkhdalai et al., Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention, 2024, arXiv:2404.07143 .

- Chen et al., LongLoRA: Efficient Fine-tuning of Long-Context LLMs, ICLR 2024, arXiv:2309.12307 .

- Lightman et al., Let’s Verify Step by Step, 2023, arXiv:2305.20050 .

- OpenAI, Learning to Reason with LLMs (o1 system card), 2024.

- DeepSeek-AI, DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via RL, 2025, arXiv:2501.12948 .

- Rafailov et al., Direct Preference Optimization, NeurIPS 2023, arXiv:2305.18290 .

- Bai et al., Constitutional AI: Harmlessness from AI Feedback, Anthropic, 2022, arXiv:2212.08073 .

- Kwon et al., Efficient Memory Management for Large Language Model Serving with PagedAttention (vLLM), SOSP 2023, arXiv:2309.06180 .

- Dao, FlashAttention-2, 2023, arXiv:2307.08691 .

Series Navigation#

- Previous: Part 11 — Multimodal NLP

- Series complete! This concludes the 12-part NLP series.

- View all 12 parts in the NLP series

NLP 12 parts

- 01 NLP (1): Introduction and Text Preprocessing

- 02 NLP (2): Word Embeddings and Language Models

- 03 NLP (3): RNN and Sequence Modeling

- 04 NLP (4): Attention Mechanism and Transformer

- 05 NLP (5): BERT and Pretrained Models

- 06 NLP (6): GPT and Generative Language Models

- 07 NLP (7): Prompt Engineering and In-Context Learning

- 08 NLP (8): Model Fine-tuning and PEFT

- 09 NLP (9): Deep Dive into LLM Architecture

- 10 NLP (10): RAG and Knowledge Enhancement Systems

- 11 NLP (11): Multimodal Large Language Models

- 12 NLP (12): Frontiers and Practical Applications you are here