NLP (1): Introduction and Text Preprocessing

A first-principles introduction to NLP and text preprocessing. We trace the four eras of the field, build the cleaning-to-vectorization pipeline by hand, and unpack the math behind tokenization, TF-IDF, n-grams, and distributed representations.

Every time you ask Claude a question, autocomplete a sentence in Gmail, or read a Google Translate page, you’re using a stack that took seventy years to build. Natural Language Processing (NLP) is the field that taught machines to read, score, transform, and write human language. Surprisingly, much of the modern NLP stack still relies on preprocessing techniques from decades ago.

This first article in the series does two things. First, it maps out the field’s history, current scope, and the reasons behind the tools we use. Second, it builds the foundational layer — cleaning, tokenization, normalization, and feature extraction — with code you can use directly in a project. By the end, you’ll have a reusable preprocessing pipeline and, more importantly, an understanding of when each step is helpful and when it can destroy signal.

What You Will Learn

- The four paradigms of NLP and the technical reason each one displaced the previous

- A precise vocabulary for tokenization: characters, words, subwords, and why BPE won

- How to build a configurable preprocessing pipeline in Python with NLTK, spaCy, and scikit-learn

- The math behind Bag-of-Words and TF-IDF, and how to read the resulting matrices

- Zipf’s law, n-gram language models, and why one-hot vectors fail

- A decision table for when to apply (or skip) each preprocessing step

Prerequisites: Comfortable Python, light familiarity with NumPy and pandas, no prior NLP exposure required.

Four Eras of NLP

NLP did not advance smoothly. It moved in jumps, each driven by a new representation of language. Knowing the sequence helps you choose the right tool: rule systems still beat neural nets for narrow form-filling, statistical methods still drive search ranking, and embeddings dominate everything else.

Symbolic Era (1950s–late 1980s)

Early systems treated language as a logic problem. ELIZA (1966) matched user input against hand-crafted keyword patterns and rephrased the captured fragments; SHRDLU (1970) parsed instructions about a blocks world using a hand-written grammar. These systems were precise within their domain and brittle outside it: a synonym or a typo broke them. The lesson, in hindsight, is that language has too many surface forms for any human to enumerate.

Statistical Revolution (1990s)#

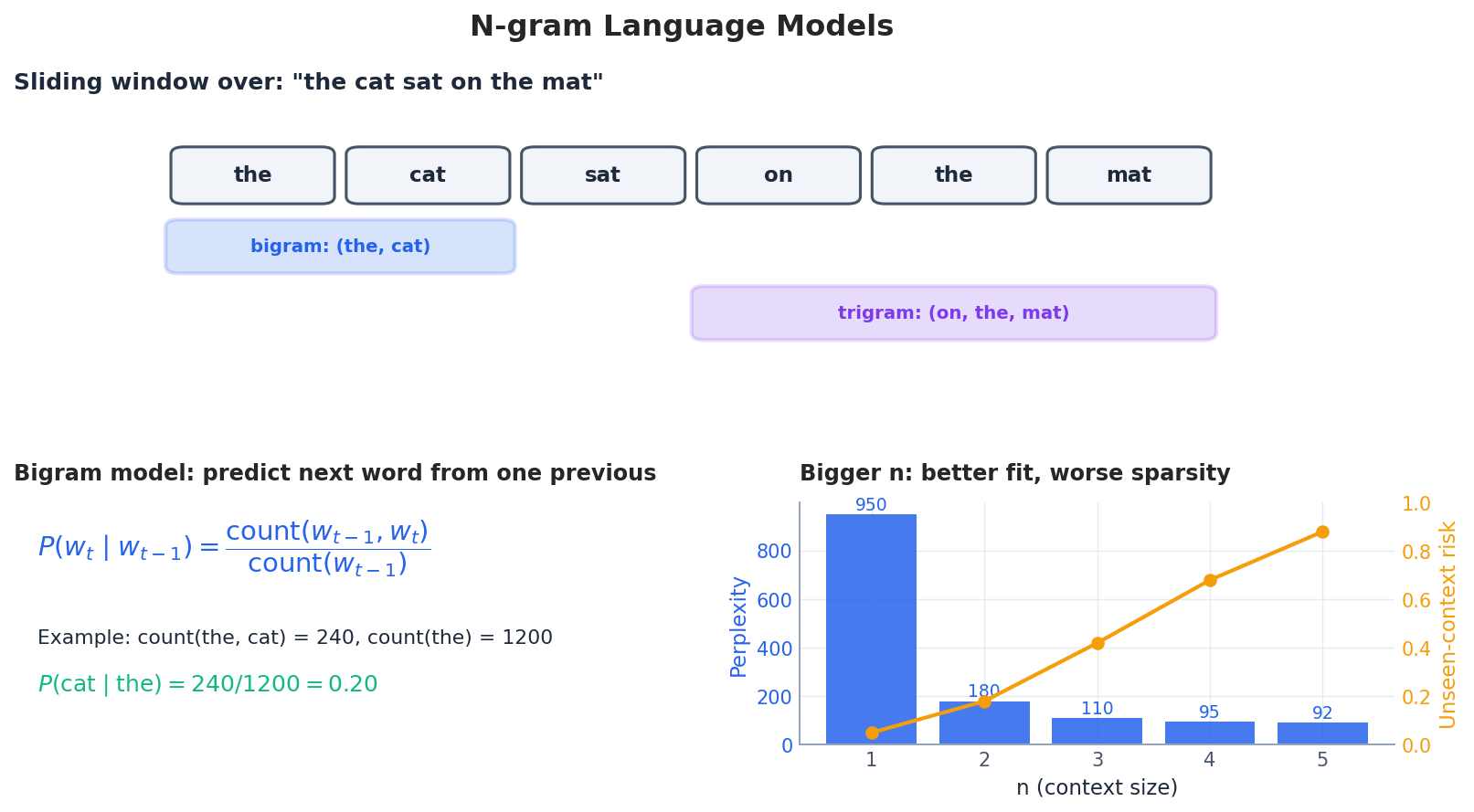

$$P(w_t \mid w_{t-1}) = \frac{\text{count}(w_{t-1}, w_t)}{\text{count}(w_{t-1})}$$This single formula powered IBM’s statistical machine translation, the first viable speech recognizers, and probabilistic part-of-speech taggers. Hidden Markov Models extended the same idea to latent state, and probabilistic context-free grammars handled syntax. Features were still hand-engineered, but the rules were learned.

Deep Learning Era (2013 — 2016)#

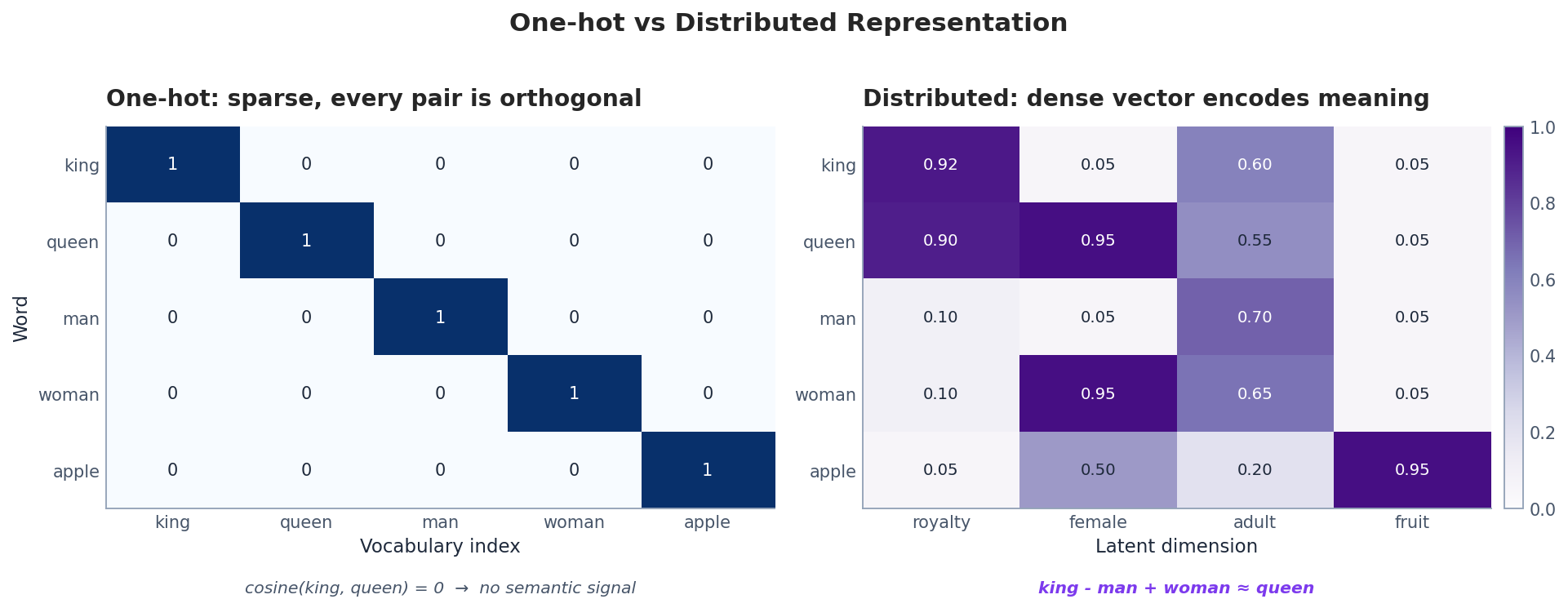

$$\vec{\text{king}} - \vec{\text{man}} + \vec{\text{woman}} \approx \vec{\text{queen}}$$For the first time, words were no longer atomic identifiers. They lived in a continuous space where similarity was a cosine away. RNNs and LSTMs followed, letting models thread context through a sequence and finally learn from order, not just bag-of-tokens counts.

Transformer Revolution (2017–present)

$$\text{Attention}(Q, K, V) = \text{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right) V$$

Two practical consequences mattered. First, all positions are processed in parallel during training, so throughput is limited by hardware rather than by step-by-step recurrence (the price is compute and memory that grow quadratically with sequence length). Second, every token can attend directly to every other token, so information no longer has to survive a long chain of recurrent steps, which largely removed the long-range dependency bottleneck. BERT, GPT, and every modern LLM are direct descendants.
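To make the formula concrete, here is a minimal pure-Python sketch of scaled dot-product attention for a single head. The function names `softmax` and `attention` and the list-of-lists matrix layout are choices made for this illustration; real implementations use batched tensor libraries.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, with matrices given as lists of rows."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # One score per key: dot(q, k) scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # The output row is the weighted average of the value rows.
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out

# With identical keys every value gets the same weight, so the
# output is simply the mean of the value rows.
result = attention([[1.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]], [[1.0, 2.0], [3.0, 4.0]])
```

Each query row is scored against every key row, which is where both the direct token-to-token access and the quadratic cost come from.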

| Era | Years | Core idea | What broke it |
|---|---|---|---|
| Symbolic | 1950s–1980s | Hand-written rules and grammars | Cannot enumerate surface forms |
| Statistical | 1990s–2010s | Estimate probabilities from corpora | Hand-engineered features hit a ceiling |
| Deep learning | 2013–2016 | Learn dense representations end-to-end | Recurrence is sequential, slow to train |
| Transformer | 2017–now | Self-attention over the whole sequence | (Still being explored) |

Insight: each shift solved the previous era’s bottleneck without throwing away the layer below. Even today, an LLM tokenizer is a statistical artifact, and your retrieval system probably uses TF-IDF as a fallback.

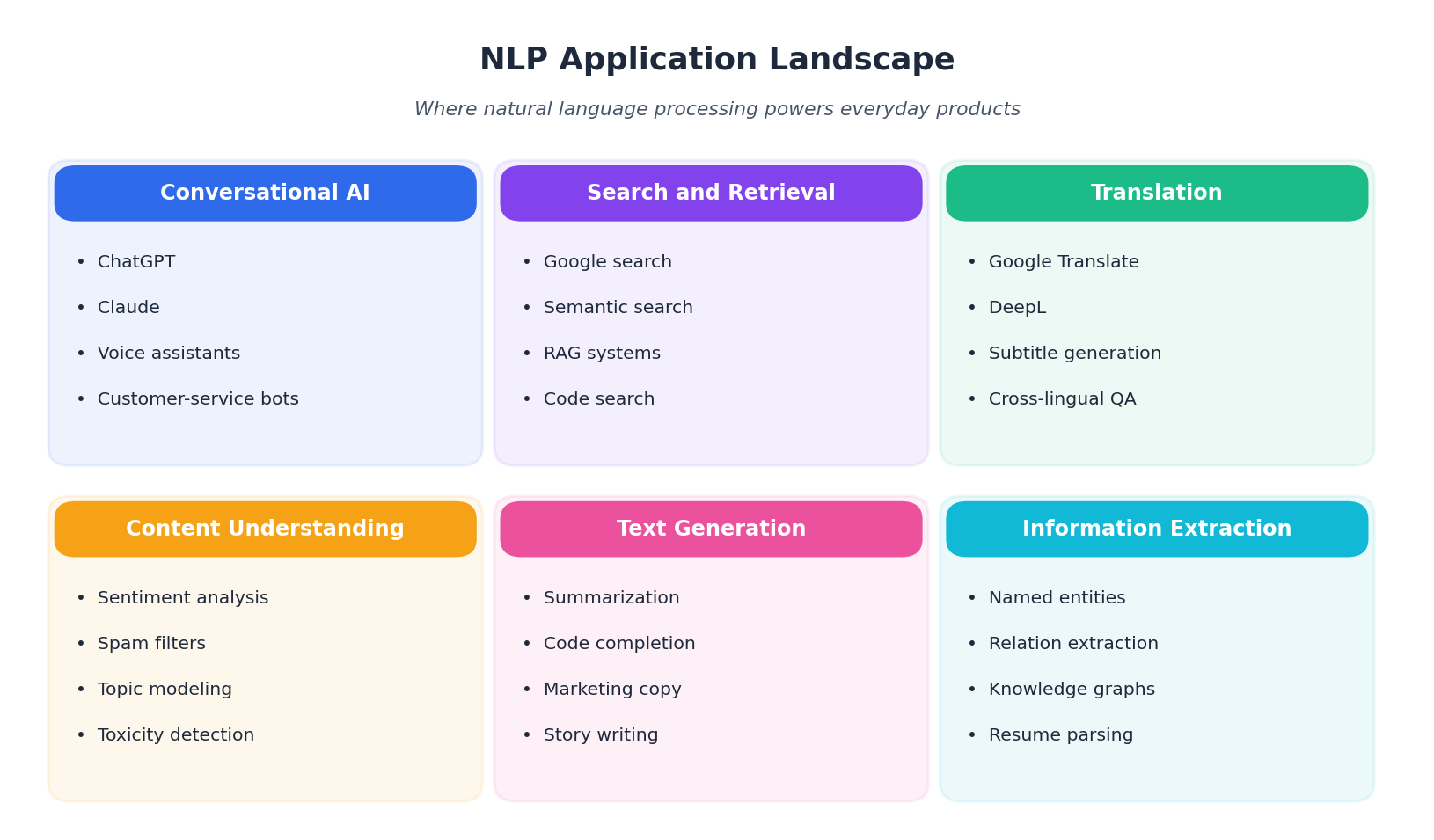

Where NLP Shows Up Today

| Domain | Examples |
|---|---|
| Text classification | Sentiment, spam, intent routing |
| Information extraction | Named entities, relations, knowledge graphs |
| Generation | Translation, summarization, code |
| Conversational AI | ChatGPT, Claude, voice assistants |
| Search and analysis | Semantic search, topic modeling, RAG |

The figure above arranges these into six clusters. Notice that almost every cluster ultimately consumes a vector — which is exactly what preprocessing produces.

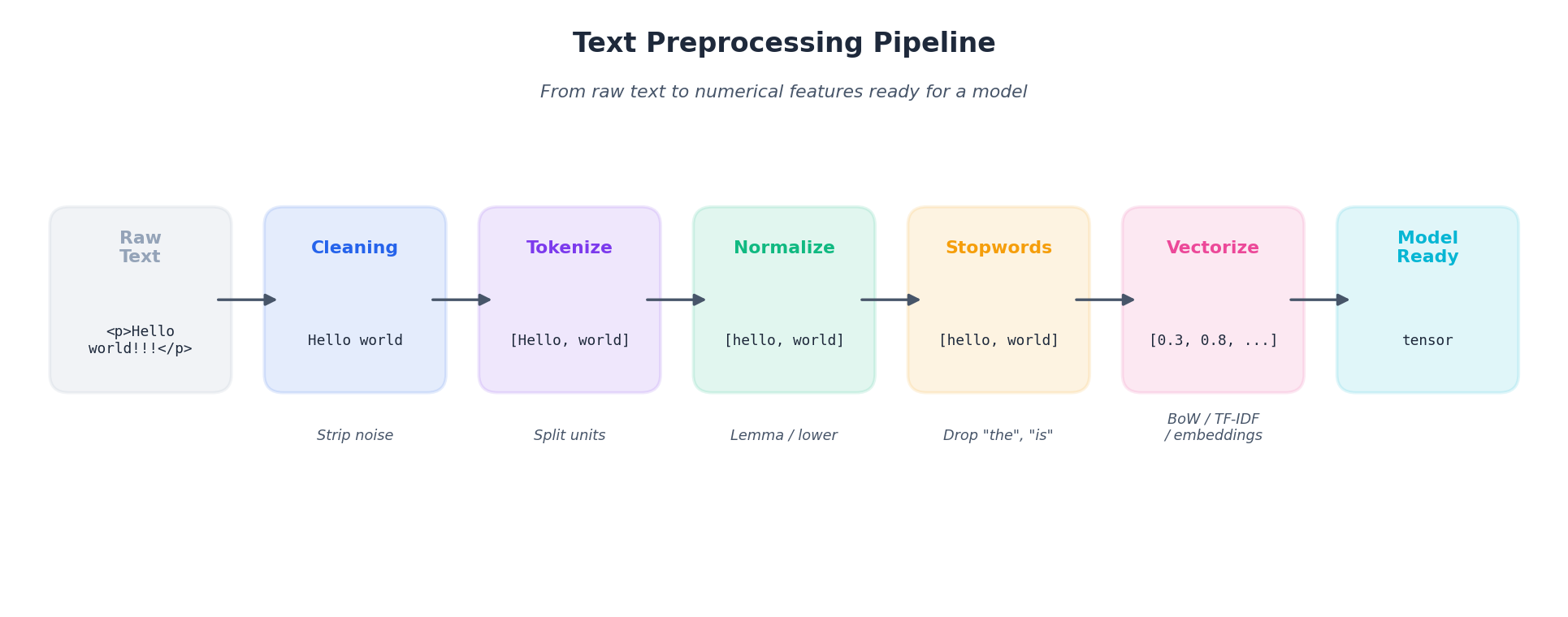

The Preprocessing Pipeline at a Glance

Before any model, raw text has to become numerical features. The standard pipeline has six stages, and each one is a deliberate choice that trades information for regularity.

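In the order this article covers them, the stages are: text cleaning, tokenization, normalization, stopword filtering, vectorization, and n-gram modeling.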

A common mistake is to apply every step by reflex. The right framing is: each stage should remove noise that downstream cannot handle and preserve everything else. We will revisit this trade-off at every step.

Environment Setup

The libraries referenced throughout (NLTK, spaCy, and scikit-learn) are all installable with pip.

Step 1: Text Cleaning

Web text comes wrapped in HTML, peppered with URLs, and littered with control characters. Cleaning removes the obvious noise without touching meaning.
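A minimal sketch of an aggressive cleaner, using only the standard library. The function name `clean_text` and the specific patterns are assumptions chosen for illustration; note that it deliberately drops digits and punctuation, which is not always what you want.

```python
import html
import re

def clean_text(text: str) -> str:
    """Strip HTML tags, URLs, digits, and punctuation, then collapse whitespace."""
    text = html.unescape(text)                          # "&amp;" becomes "&"
    text = re.sub(r"<[^>]+>", " ", text)                # drop HTML tags
    text = re.sub(r"https?://\S+|www\.\S+", " ", text)  # drop URLs
    text = re.sub(r"[^A-Za-z\s]", " ", text)            # drop digits, punctuation, control chars
    return re.sub(r"\s+", " ", text).strip()            # collapse runs of whitespace

raw = "<p>Visit https://example.com &amp; save $29.99 today!!!</p>"
cleaned = clean_text(raw)
print(cleaned)  # Visit save today
```

The price and the exclamation marks are gone, which is exactly the information loss to weigh against the task at hand.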


The aggressive cleaning trade-off. The function above also deletes digits and punctuation. That is fine for topic modeling, where numbers add noise, but it is wrong for:

- Sentiment analysis: `!!!` and `?!` carry emotion.

- Named entity recognition: “Apple Inc.” needs the period and the capitalization.

- Financial NLP: `$29.99` is the actual signal you care about.

Always tailor the regex set to the task; do not apply a one-size-fits-all cleaner.

Performance tip. Compile patterns once if you process millions of documents:
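One way this might look, assuming the same illustrative patterns as a basic cleaner (the names `TAG_RE`, `URL_RE`, `SPACE_RE`, and `clean_fast` are hypothetical):

```python
import re

# Compiled once at import time and reused for every document.
TAG_RE = re.compile(r"<[^>]+>")
URL_RE = re.compile(r"https?://\S+|www\.\S+")
SPACE_RE = re.compile(r"\s+")

def clean_fast(text: str) -> str:
    """Remove tags and URLs, then collapse whitespace, using precompiled patterns."""
    text = TAG_RE.sub(" ", text)
    text = URL_RE.sub(" ", text)
    return SPACE_RE.sub(" ", text).strip()

docs = ["<b>Hello</b>   world", "See www.example.com now"]
cleaned = [clean_fast(d) for d in docs]
print(cleaned)  # ['Hello world', 'See now']
```

Python's `re` module already caches recently compiled patterns internally, so the gain is usually modest; precompiling mainly removes the per-call cache lookup and keeps the patterns named and in one place.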


For HTML in the wild (malformed tags, embedded scripts), regex is fragile. Reach for a parser:
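A dedicated library such as BeautifulSoup is the usual choice here. As a dependency-free stand-in, the sketch below uses the standard library's `html.parser`; the class name `TextExtractor` and the helper `html_to_text` are made up for this example.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of <script> and <style>."""
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())

def html_to_text(markup: str) -> str:
    parser = TextExtractor()
    parser.feed(markup)
    parser.close()  # flush any text still buffered at end of input
    return " ".join(parser.parts)

# Note the unclosed <div> and the dangling final <p>.
page = "<div><script>var x = 1;</script><p>Hello <b>world</b></p><p>Unclosed"
text = html_to_text(page)
print(text)  # Hello world Unclosed
```

Unlike a tag-stripping regex, the parser tolerates the unclosed tags and never leaks the script body into the output.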


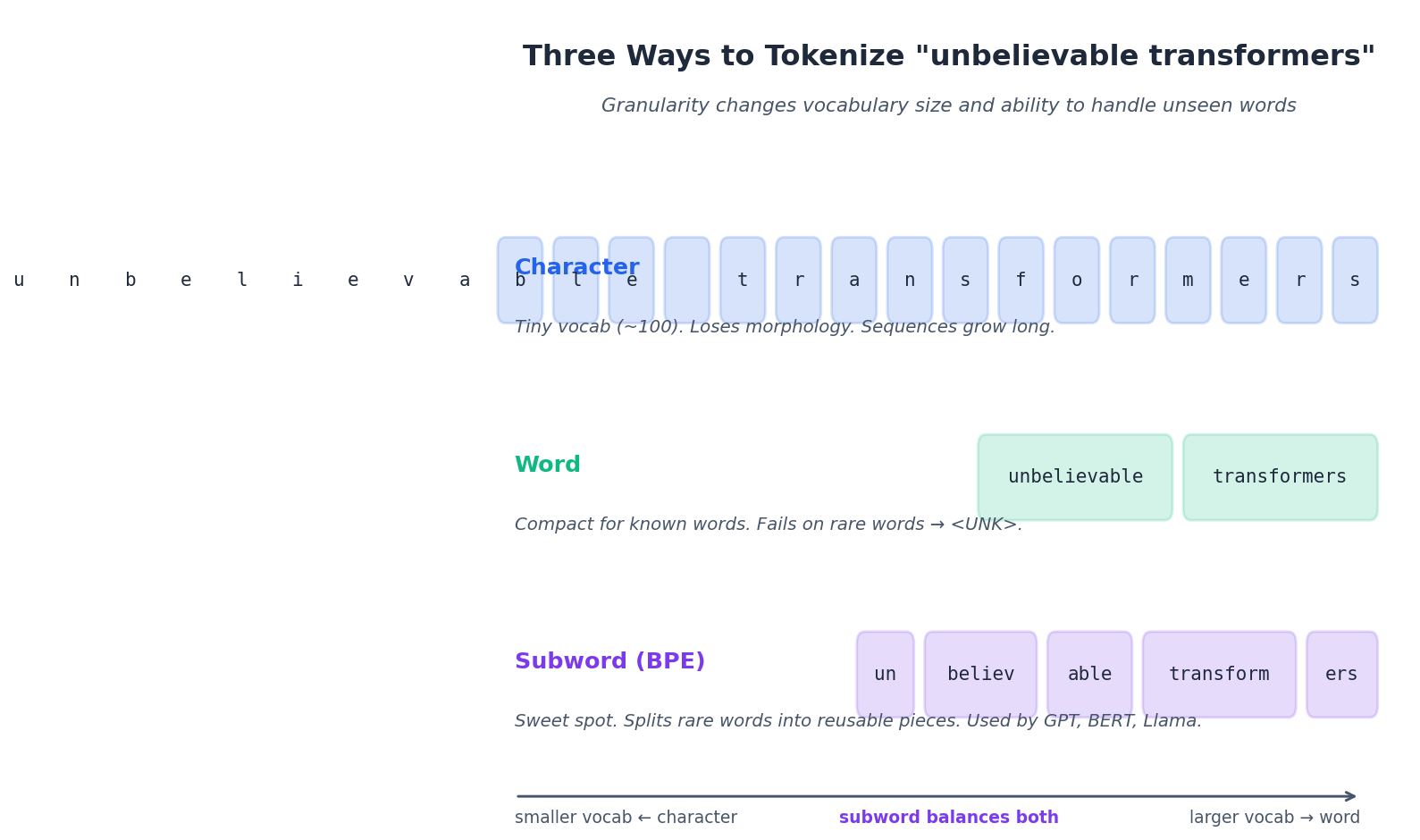

Step 2: Tokenization

Tokenization splits text into the atomic units a model will see. The boundary you choose — characters, words, subwords — determines vocabulary size, sequence length, and how gracefully the model handles words it has never seen.

Word Tokenization


NLTK keeps Dr. as one token, separates punctuation, and splits the contraction Isn't into Is + n't. Each of those decisions is a hard-coded English convention — which is exactly why word tokenization is brittle across languages.
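To see how much English-specific convention hides in those three decisions, here is a hand-rolled approximation. It is not NLTK's algorithm: the abbreviation list, the regex, and the name `simple_word_tokenize` are all assumptions made for this sketch.

```python
import re

# Hypothetical, deliberately tiny abbreviation list.
ABBREVIATIONS = {"dr.", "mr.", "mrs.", "ms.", "prof."}

# Order matters: try "word before n't", then the n't clitic itself,
# then a plain word, then a single punctuation mark.
PIECE_RE = re.compile(r"\w+(?=n't)|n't|\w+|[^\w\s]")

def simple_word_tokenize(text: str) -> list[str]:
    tokens = []
    for chunk in text.split():
        if chunk.lower() in ABBREVIATIONS:
            tokens.append(chunk)  # keep "Dr." as one token
        else:
            tokens.extend(PIECE_RE.findall(chunk))
    return tokens

tokens = simple_word_tokenize("Dr. Lee isn't home.")
print(tokens)  # ['Dr.', 'Lee', 'is', "n't", 'home', '.']
```

Every rule here encodes a fact about English spelling, and none of them transfers to a language without spaces or with different clitics.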

Sentence Tokenization


NLTK’s Punkt model learns from data which periods end sentences and which mark abbreviations.

Subword Tokenization (BPE)

Modern models (GPT, BERT, Llama, Claude) do not tokenize on words. They use subword tokenization, most often Byte-Pair Encoding (BPE) or a close relative such as WordPiece. BPE training works like this:

- Start with a vocabulary of individual characters.

- Count how often each pair of adjacent symbols occurs across the corpus.

- Merge the most frequent pair into a new symbol.

- Repeat until the vocabulary reaches a target size (commonly 30k–100k).


Why BPE matters in practice:

- Rare words decompose: `unbelievable` becomes `un + believ + able`, all of which have appeared elsewhere.

- Vocabulary stays bounded: a 50k subword vocabulary covers any English text and most code.

- Cross-lingual transfer: the same tokenizer handles English, French, and Mandarin if trained on a multilingual corpus.

Here is a minimal, runnable implementation:
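The sketch below follows the textbook BPE training loop on a toy word-frequency table. The corpus, the `</w>` end-of-word marker, and the function names are assumptions made for the example; production tokenizers add byte-level fallback and much faster data structures.

```python
from collections import Counter

def get_pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in vocab.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(pair, vocab):
    """Replace every occurrence of `pair` with the concatenated symbol."""
    a, b = pair
    merged = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and symbols[i] == a and symbols[i + 1] == b:
                out.append(a + b)
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def train_bpe(word_freqs, num_merges):
    # Each word starts as a tuple of characters plus an end-of-word marker.
    vocab = {tuple(word) + ("</w>",): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # on ties, the first pair seen wins
        vocab = merge_pair(best, vocab)
        merges.append(best)
    return merges, vocab

corpus = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, vocab = train_bpe(corpus, num_merges=4)
print(merges)
# [('e', 's'), ('es', 't'), ('est', '</w>'), ('l', 'o')]
```

After three merges the suffix `est</w>` has become a single symbol shared by `newest` and `widest`, which is the mechanism that lets rare words decompose into familiar pieces.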


For production, use Hugging Face’s tokenizers library, which implements BPE, WordPiece, and Unigram (the model used by SentencePiece) behind a unified API.

Step 3: Normalization

Normalization collapses surface variants of the same word into a single form, which shrinks vocabulary and improves matching. It also throws information away, so apply it deliberately.

Lowercasing


Lowercasing helps search and topic modeling. It hurts named-entity recognition (Apple the company collapses with apple the fruit) and any task where capitalization signals emphasis.

Stemming vs Lemmatization

Stemming chops suffixes with deterministic rules. Fast, crude, sometimes wrong:


easili is not a word — the Porter stemmer optimizes for matching, not for legibility.

Lemmatization uses a dictionary plus part-of-speech information to return the actual lemma:


| Aspect | Stemming | Lemmatization |
|---|---|---|
| Speed | Microseconds | Milliseconds (POS-tagged) |
| Output | May not be a real word | Always a dictionary form |
| Accuracy | Lower | Higher |
| Best for | Search and IR | NLU and QA |

A useful default: use lemmatization unless you are running a high-throughput retrieval system where the latency budget is tight.
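The difference in mechanism can be sketched with toy versions of both. Neither function below is the Porter stemmer or a real lemmatizer: the suffix list, the four-entry lexicon, and the names `toy_stem` and `toy_lemmatize` are hypothetical and exist only to show rules versus lookup.

```python
SUFFIXES = ("ing", "ly", "ed", "es", "s")

def toy_stem(word: str) -> str:
    """Strip the first matching suffix; no dictionary, no POS information."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

# Hypothetical mini-lexicon keyed by (surface form, part of speech).
LEMMAS = {
    ("running", "VERB"): "run",
    ("studies", "NOUN"): "study",
    ("better", "ADJ"): "good",
    ("was", "VERB"): "be",
}

def toy_lemmatize(word: str, pos: str) -> str:
    """Look the form up; fall back to the word itself when it is unknown."""
    return LEMMAS.get((word, pos), word)

for word, pos in [("running", "VERB"), ("studies", "NOUN"), ("better", "ADJ")]:
    print(word, toy_stem(word), toy_lemmatize(word, pos))
# running runn run
# studies studi study
# better better good
```

The stemmer is a few string operations and produces non-words; the lemmatizer needs a lexicon and a POS tag but can map `better` to `good`, which no suffix rule will ever do.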

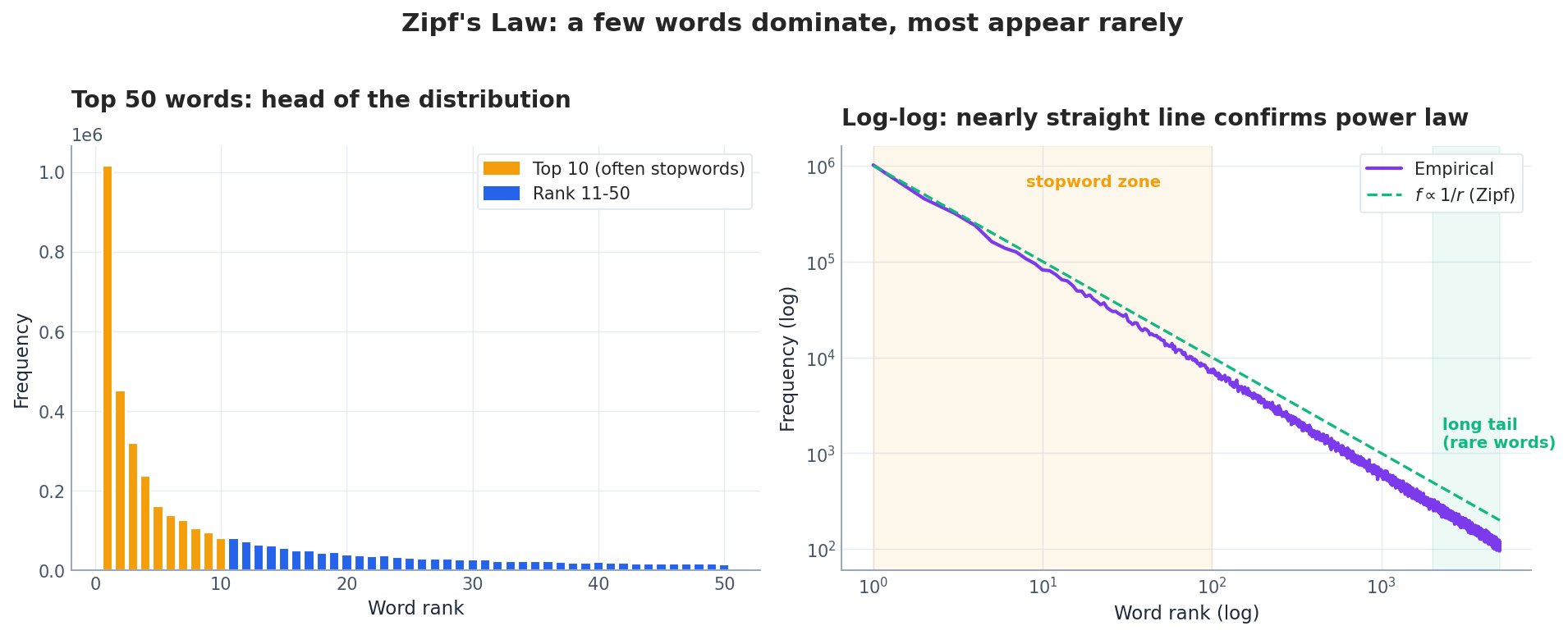

Step 4: Stopwords and Zipf’s Law

Stopwords are common closed-class words such as the, is, and at that carry little task-specific meaning. Because a stopword list is only a few hundred entries long, removing them barely changes the vocabulary size, but it often cuts the number of tokens by a third or more and concentrates signal in content words.

Zipf’s law explains why: a word’s frequency is roughly inversely proportional to its frequency rank, $f(r) \propto 1/r$. The top ten words alone often account for 25–30% of all tokens. That is the head of the distribution, and it is mostly stopwords. The tail (thousands of words appearing once or twice) is where most semantic content lives, but it is also where models struggle and where subword tokenization earns its keep.
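A quick way to inspect the head of the distribution on your own corpus is to tabulate rank against frequency. The helper names `rank_frequency` and `head_coverage` are invented for this sketch, and the one-sentence text is far too small to show the law itself; it only demonstrates the bookkeeping.

```python
from collections import Counter

def rank_frequency(tokens):
    """Return (rank, word, freq, rank * freq) rows, most frequent first."""
    counts = Counter(tokens)
    return [(rank, word, freq, rank * freq)
            for rank, (word, freq) in enumerate(counts.most_common(), start=1)]

def head_coverage(tokens, k):
    """Fraction of all tokens accounted for by the k most frequent words."""
    counts = Counter(tokens)
    top = sum(freq for _, freq in counts.most_common(k))
    return top / len(tokens)

tokens = "the cat sat on the mat and the dog sat on the log".split()
rows = rank_frequency(tokens)
print(rows[0])                   # (1, 'the', 4, 4)
print(head_coverage(tokens, 3))  # 8 of 13 tokens
```

On a real corpus, the `rank * freq` column stays within the same order of magnitude across many ranks, which is the signature of a Zipfian distribution.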


When to remove stopwords:

- Yes: bag-of-words and topic models, search indexing.

- No: sentiment (`not good` is not the same as `good`), QA (function words carry the question), any deep model that learns to weight tokens itself.
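The sentiment failure is easy to reproduce. The stopword set below is a small hypothetical sample; many stock lists, NLTK's English list among them, do include negations such as `not`, so a `keep` override is a common workaround.

```python
# Hypothetical mini stopword list that, like many stock lists, contains "not".
STOPWORDS = {"the", "is", "at", "a", "an", "of", "was", "this", "not"}

def remove_stopwords(tokens, stopwords=STOPWORDS, keep=()):
    """Drop stopwords, except those explicitly whitelisted in `keep`."""
    keep = set(keep)
    return [t for t in tokens
            if t.lower() not in stopwords or t.lower() in keep]

tokens = "the movie was not good".split()
print(remove_stopwords(tokens))                # ['movie', 'good']
print(remove_stopwords(tokens, keep={"not"}))  # ['movie', 'not', 'good']
```

The first call flips the meaning of the review; the second preserves it at the cost of one extra token.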

Step 5: From Tokens to Vectors

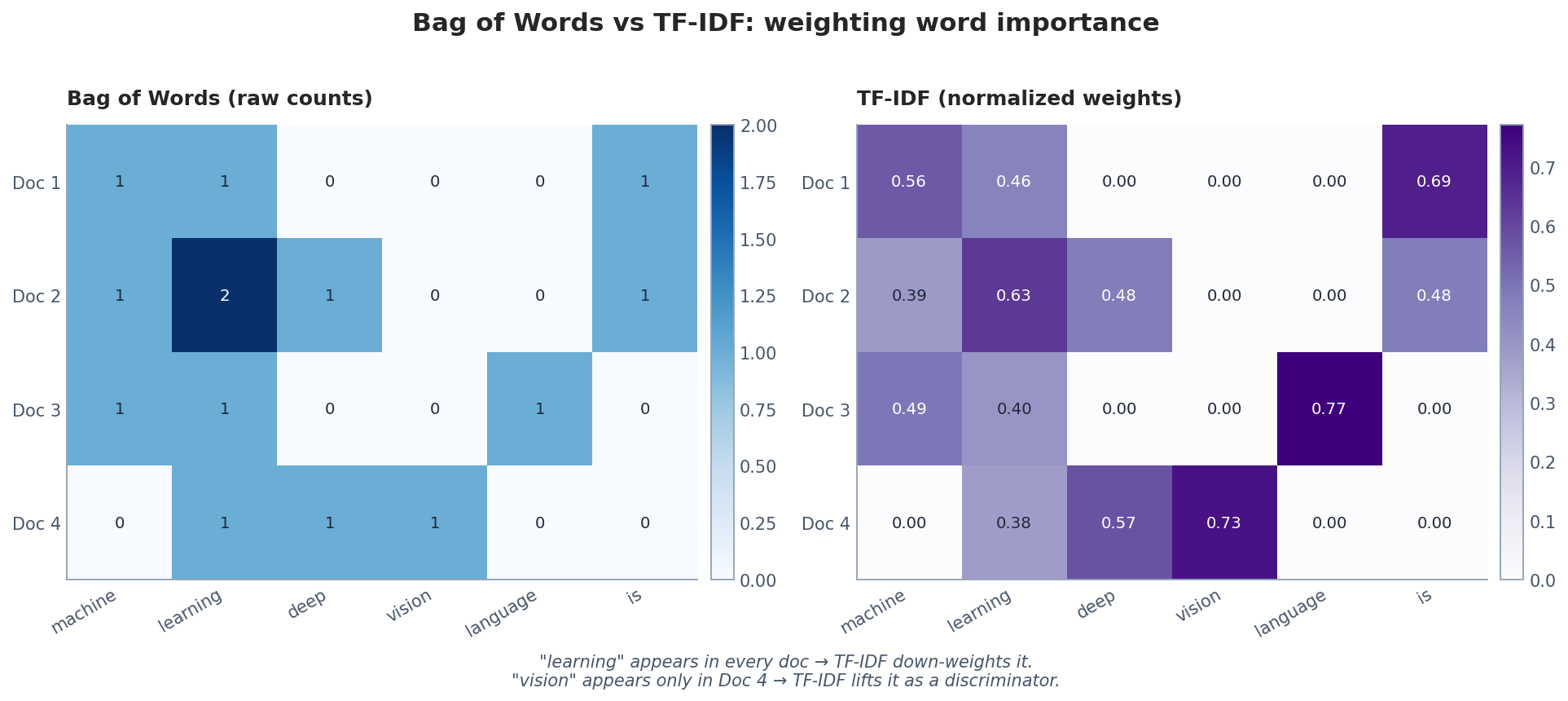

A model needs numbers. Two classical encodings — Bag-of-Words and TF-IDF — still anchor most retrieval systems and are the right baseline for any new task.

One-hot vs Distributed Representations

Before we get to BoW, it helps to see why naive encodings fail. A one-hot vector assigns each word a unique index, with a 1 in that position and 0 everywhere else. Every pair of words is orthogonal, which means the encoding carries zero similarity information.
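The orthogonality claim can be checked in a few lines. The three-word vocabulary is an arbitrary example.

```python
def one_hot(index: int, size: int) -> list[int]:
    """Vector of zeros with a single 1 at `index`."""
    vec = [0] * size
    vec[index] = 1
    return vec

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

vocab = ["cat", "dog", "car"]
vectors = {word: one_hot(i, len(vocab)) for i, word in enumerate(vocab)}

print(dot(vectors["cat"], vectors["dog"]))  # 0
print(dot(vectors["cat"], vectors["car"]))  # 0
print(dot(vectors["cat"], vectors["cat"]))  # 1
```

`cat` is exactly as far from `dog` as it is from `car`, so nothing about meaning survives the encoding.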

Distributed representations — which we will build in Part 2 — pack meaning into dense vectors where related words sit near each other. BoW and TF-IDF are a halfway step: each word still gets its own dimension, but the value in that dimension is a frequency, not just a marker.

Bag of Words

Represent each document as a vector of word counts, ignoring order:
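A minimal standard-library sketch of the idea (scikit-learn's `CountVectorizer` is the usual production tool; the helper names `build_vocab` and `bow_vector` and the three documents are assumptions for this example):

```python
from collections import Counter

def build_vocab(docs):
    """Sorted list of every distinct lowercase token in the corpus."""
    return sorted({tok for doc in docs for tok in doc.lower().split()})

def bow_vector(doc, vocab):
    """Count of each vocabulary term in `doc`, in vocabulary order."""
    counts = Counter(doc.lower().split())
    return [counts[term] for term in vocab]

docs = ["dog bites man", "man bites dog", "dog eats food"]
vocab = build_vocab(docs)
matrix = [bow_vector(d, vocab) for d in docs]

print(vocab)      # ['bites', 'dog', 'eats', 'food', 'man']
for row in matrix:
    print(row)
# [1, 1, 0, 0, 1]
# [1, 1, 0, 0, 1]
# [0, 1, 1, 1, 0]
```

Each row is a document and each column a vocabulary term; the first two rows are identical even though the sentences mean opposite things.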


The fatal limitation: dog bites man and man bites dog produce identical vectors. BoW discards order entirely.

TF-IDF

$$\text{TF-IDF}(t, d) = \text{TF}(t, d) \cdot \text{IDF}(t)$$

$$\text{IDF}(t) = \log\!\frac{1 + N}{1 + \text{df}(t)} + 1$$

where $N$ is the number of documents and $\text{df}(t)$ is the number of documents containing term $t$. The +1 terms inside the fraction avoid a division by zero when a term appears in no document, and the trailing +1 keeps a term that appears in every document from receiving a weight of exactly zero.

The figure above shows both matrices side by side. Notice how learning — present in every document — gets weighted down by TF-IDF, while a word like vision that is unique to one document gets lifted. That is exactly the ranking behavior you want for search.
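The smoothed IDF formula can be evaluated directly. In this sketch TF is taken to be the raw count, which is an assumption since the text does not pin it down, and the row normalization that scikit-learn applies by default is omitted. The three documents are invented so that `learning` appears everywhere and `vision` only once.

```python
import math
from collections import Counter

def idf(term, docs):
    """Smoothed IDF: log((1 + N) / (1 + df)) + 1."""
    n = len(docs)
    df = sum(1 for doc in docs if term in doc)
    return math.log((1 + n) / (1 + df)) + 1

def tfidf(term, doc, docs):
    tf = Counter(doc)[term]  # raw count as TF (assumption)
    return tf * idf(term, docs)

docs = [
    "deep learning for vision".split(),
    "machine learning basics".split(),
    "learning to rank".split(),
]

print(idf("learning", docs))  # log(4/4) + 1 = 1.0
print(idf("vision", docs))    # log(4/2) + 1, about 1.693
```

Both words occur once in the first document, yet `vision` ends up with roughly 1.7 times the weight of `learning`, purely because of document frequency.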


Production-grade TF-IDF. The defaults rarely survive contact with a real corpus. Tune at least these knobs:
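Two knobs that often matter are the document-frequency cutoffs, which scikit-learn's `TfidfVectorizer` exposes as `min_df` and `max_df`. The sketch below reimplements only that pruning idea with the standard library so the effect is visible; the function name `prune_vocabulary`, the thresholds, and the four documents are illustrative assumptions, not the library's internals.

```python
from collections import Counter

def prune_vocabulary(docs, min_df=2, max_df=0.9):
    """Keep terms found in at least `min_df` documents (a count) and in
    at most `max_df` of all documents (a fraction)."""
    n = len(docs)
    # set(doc) so a term is counted once per document.
    df = Counter(term for doc in docs for term in set(doc))
    return sorted(t for t, c in df.items() if c >= min_df and c / n <= max_df)

docs = [
    "the cat sat".split(),
    "the cat ran".split(),
    "the dog ran".split(),
    "the bird flew".split(),
]
print(prune_vocabulary(docs))  # ['cat', 'ran']
```

`the` is dropped for being everywhere, and the one-off words are dropped for being too rare to generalize; what remains is the mid-frequency band where TF-IDF is most informative.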


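Step 6: N-gram Language Models

An n-gram model approximates the probability of a sequence by conditioning each word on only the previous $n-1$ words:

$$P(w_1, \ldots, w_T) = \prod_{t=1}^{T} P(w_t \mid w_{t-n+1}, \ldots, w_{t-1})$$

A bigram model uses one word of context, a trigram uses two, and so on.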

The trade-off is sharp:

- Larger n captures more context, which lowers perplexity (perplexity is roughly the model’s effective branching factor — lower is better).

- Larger n also explodes the parameter count and starves on rare contexts. With a vocabulary of size $V$, a trigram model has up to $V^3$ parameters, most of which see zero training examples. This is the sparsity problem, the central pain point of statistical NLP.

Smoothing techniques (Laplace, Kneser-Ney) patch the holes by redistributing probability mass to unseen n-grams. Modern neural language models sidestep the issue by sharing parameters across contexts via embeddings, which is the bridge to Part 2.
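A small bigram model makes both the sparsity problem and the Laplace fix visible. The three training sentences, the `<s>` and `</s>` boundary markers, and the function names are assumptions made for this sketch.

```python
from collections import Counter

def train_bigram(sentences):
    """Collect context counts, bigram counts, and the set of predictable words."""
    contexts, bigrams = Counter(), Counter()
    for sent in sentences:
        tokens = ["<s>"] + sent.split() + ["</s>"]
        contexts.update(tokens[:-1])             # every token that has a successor
        bigrams.update(zip(tokens, tokens[1:]))
    vocab = {w for sent in sentences for w in sent.split()} | {"</s>"}
    return contexts, bigrams, vocab

def bigram_prob(prev, word, contexts, bigrams, vocab, alpha=1.0):
    """alpha=0 is the maximum-likelihood estimate; alpha=1 is Laplace smoothing."""
    return (bigrams[(prev, word)] + alpha) / (contexts[prev] + alpha * len(vocab))

sentences = ["the cat sat", "the dog sat", "the cat ran"]
contexts, bigrams, vocab = train_bigram(sentences)

mle_seen = bigram_prob("the", "cat", contexts, bigrams, vocab, alpha=0)    # 2/3
mle_unseen = bigram_prob("the", "ran", contexts, bigrams, vocab, alpha=0)  # 0.0
lap_seen = bigram_prob("the", "cat", contexts, bigrams, vocab)             # 3/9
lap_unseen = bigram_prob("the", "ran", contexts, bigrams, vocab)           # 1/9
```

Under the unsmoothed estimate, `the ran` has probability zero, which would zero out any sentence containing it. Laplace smoothing gives it 1/9 by taking mass away from `the cat`, which drops from 2/3 to 1/3; that heavy-handed redistribution is why more refined schemes such as Kneser-Ney exist.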

A Reusable Preprocessing Class

Putting the steps together into something you can drop into a project:
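One possible shape for such a class, kept to the standard library. The class name `TextPreprocessor`, its flags, and the regexes are assumptions for this sketch; stemming or lemmatization would plug in as one more optional step.

```python
import re
from dataclasses import dataclass

@dataclass
class TextPreprocessor:
    """Configurable clean -> tokenize -> filter pipeline."""
    lowercase: bool = True
    strip_html: bool = True
    strip_urls: bool = True
    keep_digits: bool = False
    keep_punct: bool = False
    stopwords: frozenset = frozenset()

    def clean(self, text: str) -> str:
        if self.strip_html:
            text = re.sub(r"<[^>]+>", " ", text)
        if self.strip_urls:
            text = re.sub(r"https?://\S+|www\.\S+", " ", text)
        if self.lowercase:
            text = text.lower()
        return text

    def tokenize(self, text: str) -> list[str]:
        parts = [r"[^\W\d_]+"]               # runs of letters
        if self.keep_digits:
            parts.append(r"\d+(?:\.\d+)?")   # integers and decimals
        if self.keep_punct:
            parts.append(r"[^\w\s]")         # single punctuation marks
        return re.findall("|".join(parts), text)

    def __call__(self, text: str) -> list[str]:
        tokens = self.tokenize(self.clean(text))
        return [t for t in tokens if t.lower() not in self.stopwords]

stop = frozenset({"the", "is", "at"})
raw = "<p>The price is $29.99 at https://shop.example!</p>"

topic_prep = TextPreprocessor(stopwords=stop)
finance_prep = TextPreprocessor(stopwords=stop, keep_digits=True)

print(topic_prep(raw))    # ['price']
print(finance_prep(raw))  # ['price', '29.99']
```

Making every step a flag keeps the task-specific decisions explicit: the same input yields different tokens for topic modeling and for financial text, and the difference is visible in the constructor call.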


End-to-End Example: a Minimal Spam Classifier

Combining everything into a working classifier. Use the SMS Spam Collection or any Kaggle spam dataset for real experiments; the snippet below is intentionally tiny so it runs anywhere.
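As a dependency-free stand-in for a library pipeline, the sketch below trains a multinomial Naive Bayes classifier on raw word counts with Laplace smoothing. It is not a TF-IDF model, and the class name `NaiveBayes` and the six training messages are made up for illustration.

```python
import math
from collections import Counter, defaultdict

def tokenize(text):
    return text.lower().split()

class NaiveBayes:
    """Multinomial Naive Bayes over word counts with add-one smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.class_counts.values())
        scores = {}
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)  # Laplace smoothing
            score = math.log(n_docs / total_docs)           # log prior
            for tok in tokenize(text):
                if tok in self.vocab:                       # skip unseen words
                    score += math.log((counts[tok] + 1) / denom)
            scores[label] = score
        return max(scores, key=scores.get)

train = [
    ("win a free prize now", "spam"),
    ("free cash click now", "spam"),
    ("claim your free prize", "spam"),
    ("are we meeting for lunch", "ham"),
    ("see you at the meeting", "ham"),
    ("lunch at noon works for me", "ham"),
]
texts, labels = zip(*train)
model = NaiveBayes().fit(texts, labels)

print(model.predict("free prize now"))         # spam
print(model.predict("lunch meeting at noon"))  # ham
```

The structure is the part that carries over to real data: tokenize, count, smooth, score each class, pick the maximum.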


The point of the example is not the accuracy on ten samples — it is the shape of the pipeline. Swap in 5,000 SMS messages and the same code reaches roughly 97% accuracy with no further engineering. That is the strength of the classical NLP stack: short, transparent, and remarkably hard to beat without a GPU.

Decision Table: Which Steps for Which Task

| Task | Tokenization | Normalization | Stopwords | Features |
|---|---|---|---|---|
| Search / IR | Word | Stem | Remove | TF-IDF |
| Sentiment | Word / subword | Lemma | Keep | TF-IDF or embeddings |
| Topic modeling | Word | Lemma | Remove | BoW or TF-IDF |
| Machine translation | Subword (BPE) | Minimal | Keep | Embeddings |
| NER | Word | None | Keep | Embeddings + context |
| Modern LLMs | Subword (BPE) | None | Keep | Learned embeddings |

Rule of thumb. The more model capacity and data you have, the less preprocessing you should do. Deep models learn their own normalization; aggressive preprocessing destroys signal they could have used. Classical ML benefits from careful feature engineering; LLMs prefer raw text.

Summary

- Preprocessing is task-specific. Search wants aggressive normalization; neural models want raw text.

- Subword tokenization (BPE, WordPiece, SentencePiece) is the modern default because it bounds vocabulary and handles unseen words.

- TF-IDF remains the right baseline. If a TF-IDF + logistic regression baseline beats your fancy model, the fancy model is broken.

- Zipf’s law explains why stopword removal helps classical models and why long-tail words are hard.

- Less is often more. Over-preprocessing hurts representation learning. Always measure.
