NLP (7): Prompt Engineering and In-Context Learning

From prompt anatomy to chain-of-thought, self-consistency and ReAct: a working theory of in-context learning, the variance you have to fight, and the patterns that scale to real systems.

The same model can produce a sharp answer or a confident hallucination. The difference lies in the framing, not the weights. A vague request like “analyze this text” yields a generic summary; a prompt with a role, two clear examples, and a strict output schema produces something a parser can use. Prompt engineering turns that gap into a repeatable system, not just a lucky shot.

In-Context Learning (ICL) is the mechanism that makes this work. When you include a few examples in the prompt, the model doesn’t retrain; it conditions its forward pass on those examples and effectively infers a task from them. Understanding ICL’s capabilities and limitations separates a developer who struggles with the model from one who guides it.

This is the seventh part of the NLP series. It assumes you know roughly how a Transformer decoder generates tokens (Part 4) and what an autoregressive LM is (Part 6). Everything below is grounded in published behaviour, but be warned: the literature on prompt engineering is unusually noisy, and most numbers are model- and dataset-specific. Treat the bars in the figures as illustrative shapes, not benchmark claims.

What You Will Learn#

- Prompt anatomy: the five composable blocks (system, instruction, examples, query, format spec) and what each one buys you.

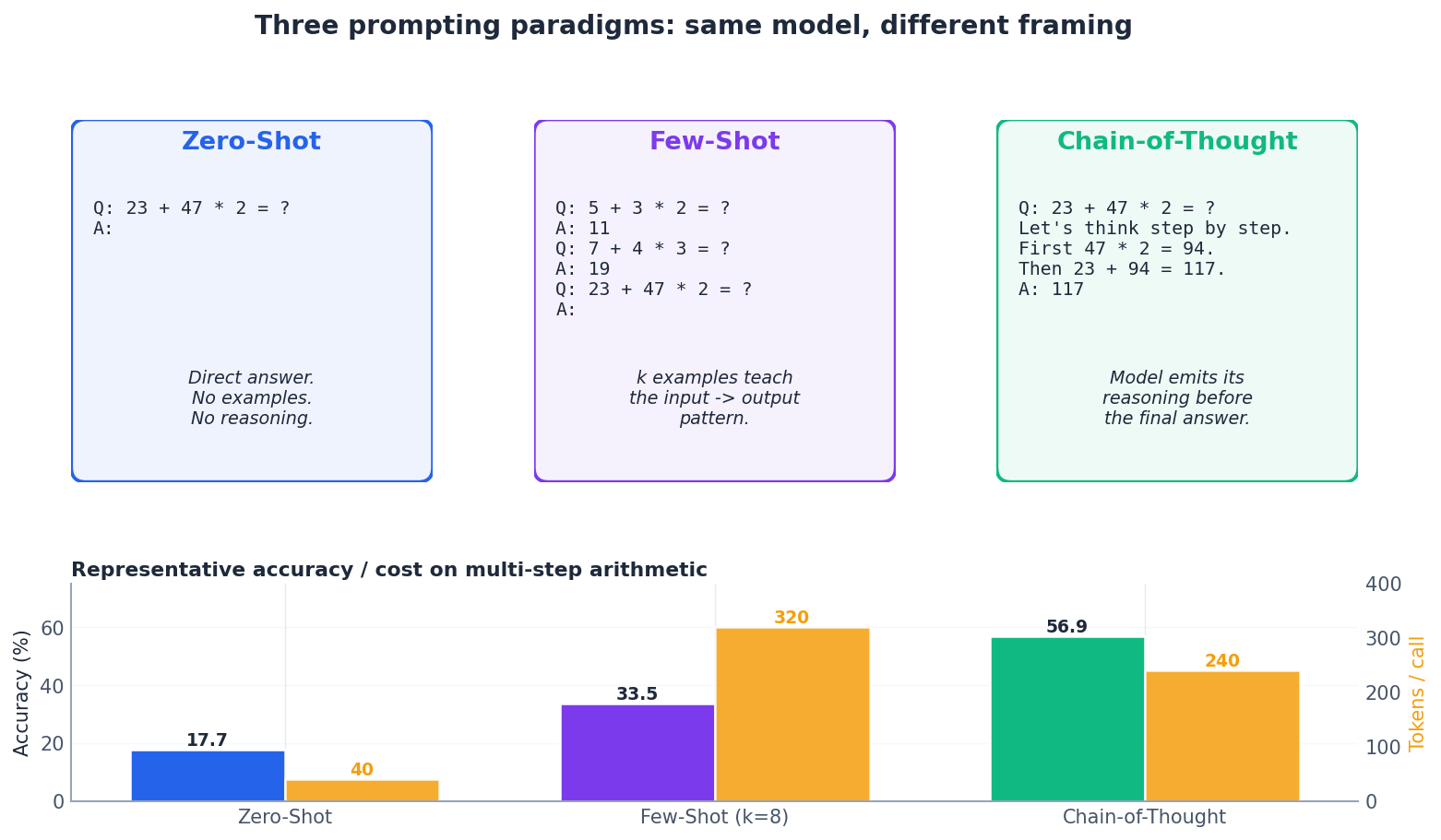

- Three paradigms: zero-shot, few-shot, and chain-of-thought — when each is the right choice, and what it costs in tokens.

- A working theory of ICL: why a non-trained model can still “learn” from in-prompt examples, and which signals it actually picks up.

- The variance problem: how much accuracy can swing from format and ordering alone, and how to measure it.

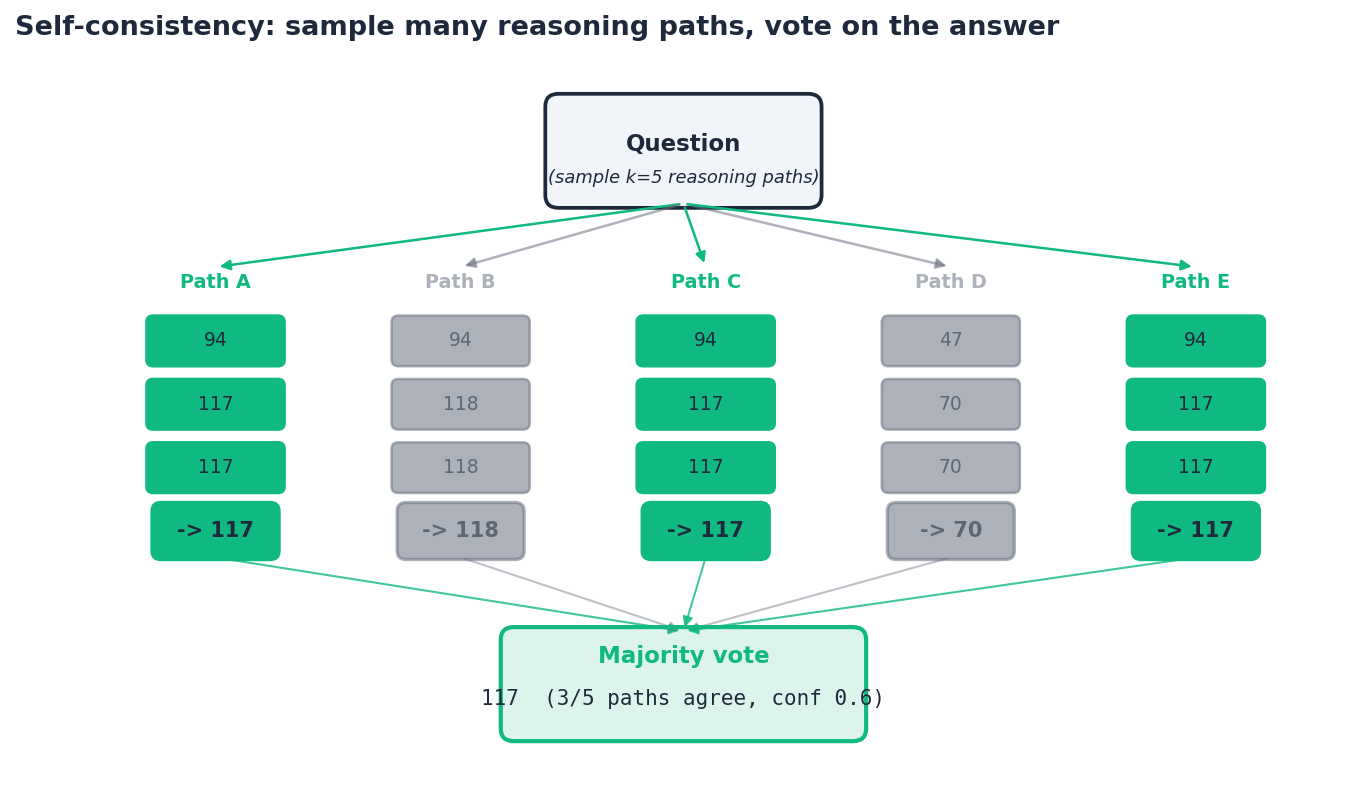

- Self-consistency: turning a stochastic decoder into an ensemble by sampling many reasoning paths.

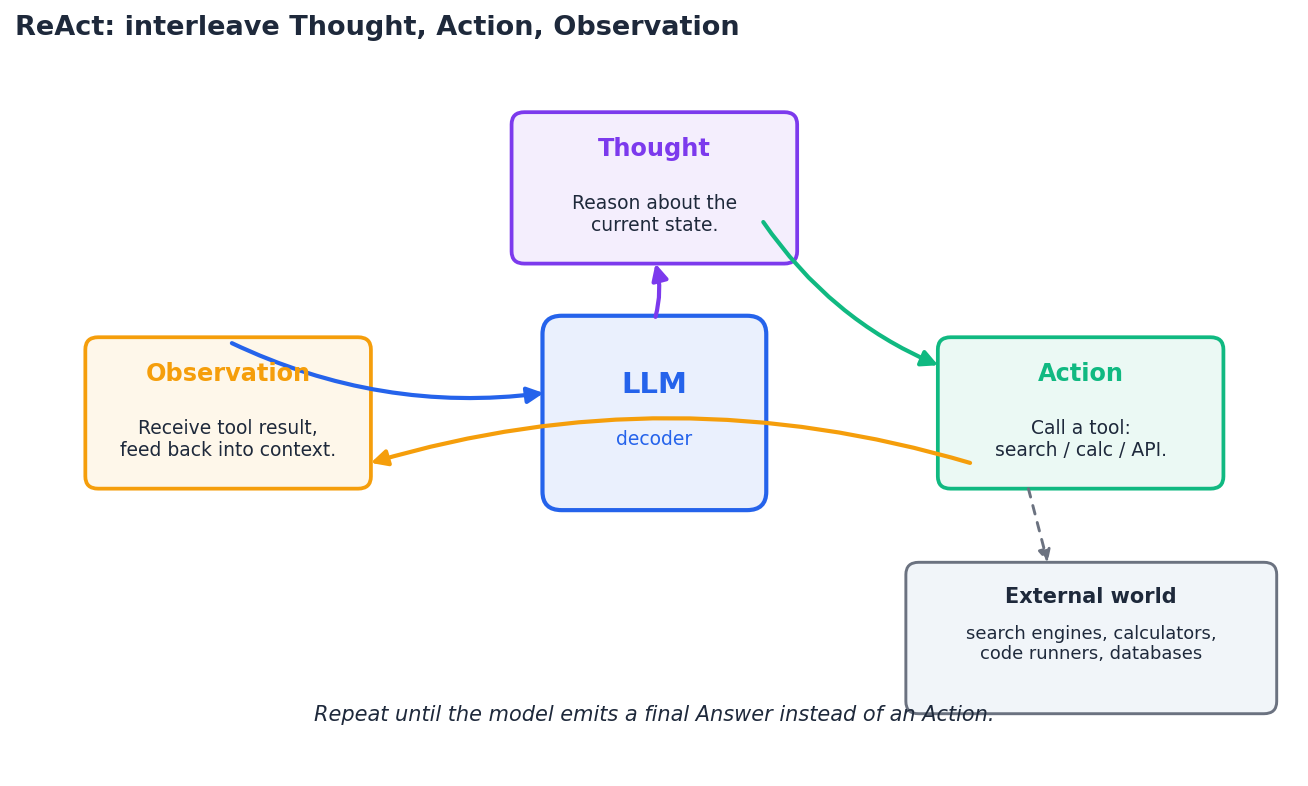

- ReAct: interleaving reasoning with tool calls, the foundation of modern agents.

- A small system: prompt registries, A/B harnesses, and the discipline that keeps prompt sets from rotting.

Prerequisites#

- Familiarity with large language models; see Part 6: GPT and Generative Models.

- Basic Python; comfort reading short snippets.

- Access to any LLM API (OpenAI, Anthropic, an open-weights model).

Anatomy of a prompt#

A prompt is a single text string the model conditions on. Everything else — such as “system” vs. “user” roles, function schemas, and retrieval results — is just structured text the API combines into one sequence before tokenization. Treating a prompt as a flat string with named blocks gives the cleanest mental model.

The five blocks below are not mandatory, but most production prompts include a subset of them, in roughly this order:

- System / role. Sets persona, refusal policy, tone, length budget. Stable across requests, so it caches well.

- Task instruction. One sentence stating the goal in the imperative.

- Few-shot examples. Demonstrations of input -> output pairs. The primary ICL signal.

- User query. The actual input to be processed.

- Format spec. A schema (JSON, regex-able tags, table) that pins the output shape.

A pragmatic prompt builder:
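
A minimal sketch of such a builder, assuming the prompt is a flat string rendered stable-first (system, instruction, format spec, examples) with the query last. The `Prompt` class, its field names, and the `Input:`/`Output:` markers are illustrative choices, not a standard API.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    """Hypothetical container for the stable blocks of a prompt."""
    system: str = ""
    instruction: str = ""
    examples: list[tuple[str, str]] = field(default_factory=list)
    format_spec: str = ""

    def render(self, query: str) -> str:
        """Join the non-empty blocks; the variable query always goes last."""
        parts = []
        if self.system:
            parts.append(self.system)
        if self.instruction:
            parts.append(self.instruction)
        if self.format_spec:
            parts.append(f"Output format: {self.format_spec}")
        for x, y in self.examples:
            parts.append(f"Input: {x}\nOutput: {y}")
        # The trailing "Output:" cues the model to answer in the shot format.
        parts.append(f"Input: {query}\nOutput:")
        return "\n\n".join(parts)
```

Because everything except the final block is identical across requests, two calls to `render` share a long common prefix, which is what prompt caching needs.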


Two design notes that beginners often miss:

- Order matters. Examples placed after the format spec but before the query often work better than examples buried at the top, because recency biases the decoder.

- Stable prefix, variable suffix. Put everything that does not change (system, examples, format) at the top so KV-cache reuse and prompt-cache features can work. Variable input goes last.

Four principles that survive contact with reality#

These are the principles I would still teach today, after a lot of prompts in production:

- Clarity over cleverness. Replace “analyze the text” with “classify the text into {positive, negative, neutral} and return JSON”. You are competing with every plausible interpretation the model could pick up.

- Specificity buys determinism. Say what not to do, and what to output when uncertain (“if you cannot answer from the document, return {"answer": null}”). Models honour negative constraints surprisingly well.

- Context completeness. If the answer needs a definition the model could plausibly not have, include it. Cheaper than a wrong answer.

- Role assignment when relevant. “You are a senior security reviewer” measurably narrows the distribution of outputs on code-review tasks. It is not a magic spell — avoid it for generic tasks where it just adds tokens.

Zero-shot, few-shot, chain-of-thought#

These are the three baseline framings every other technique builds on.

Zero-shot#

Describe the task; provide no examples. The model relies on whatever it learned during pre-training and instruction tuning.


Use zero-shot when the task is something the model already knows well (sentiment, translation, summarization for short inputs) and you care about latency or cost. Its weakness is that the output format is unstable — the model may emit “Positive sentiment”, “POSITIVE”, or a paragraph of analysis. Pin the format with one constrained sentence (“Reply with exactly one word from the set {positive, negative, neutral}.”).
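
A zero-shot prompt with the format pinned might look like the sketch below. The function name and the `Review:`/`Sentiment:` markers are assumptions made for illustration.

```python
def zero_shot_prompt(text: str, labels: list[str]) -> str:
    """Describe the task and pin the output format; no demonstrations."""
    label_set = ", ".join(labels)
    return (
        "Classify the sentiment of the review below.\n"
        # Triple braces: literal "{" + interpolated label_set + literal "}".
        f"Reply with exactly one word from the set {{{label_set}}}.\n\n"
        f"Review: {text}\n"
        "Sentiment:"
    )
```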

Few-shot#

Few-shot prompting puts $k$ examples in front of the query. This is the textbook setting for ICL.
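
As a rough sketch, a few-shot prompt is the same task description with $k$ input/output pairs placed before the query. The function name, default instruction, and markers here are hypothetical.

```python
def few_shot_prompt(examples, query, instruction="Classify the sentiment."):
    """Render k (input, label) demonstrations followed by the open query."""
    shots = "\n\n".join(
        f"Review: {text}\nSentiment: {label}" for text, label in examples
    )
    # The query reuses the exact shot template so the model can copy it.
    return f"{instruction}\n\n{shots}\n\nReview: {query}\nSentiment:"
```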


Three things examples actually do:

- Task identification. They disambiguate what task you mean. “Translate” might mean transliterate, paraphrase, or rewrite — two examples nail it.

- Format alignment. The output side of each shot is a template. The model copies the template.

- Label-space anchoring. The set of labels in your examples becomes the model’s effective output vocabulary, even if you never enumerate them.

The often-cited surprise: the labels themselves matter less than you might think. Min et al. (2022) showed that randomizing the gold labels in few-shot prompts barely hurts on many tasks — what matters is the distribution of inputs and the label space. The takeaway is not “labels do not matter at all” (they do for hard tasks) but “do not over-engineer label correctness; engineer coverage and format”.

Chain-of-thought#

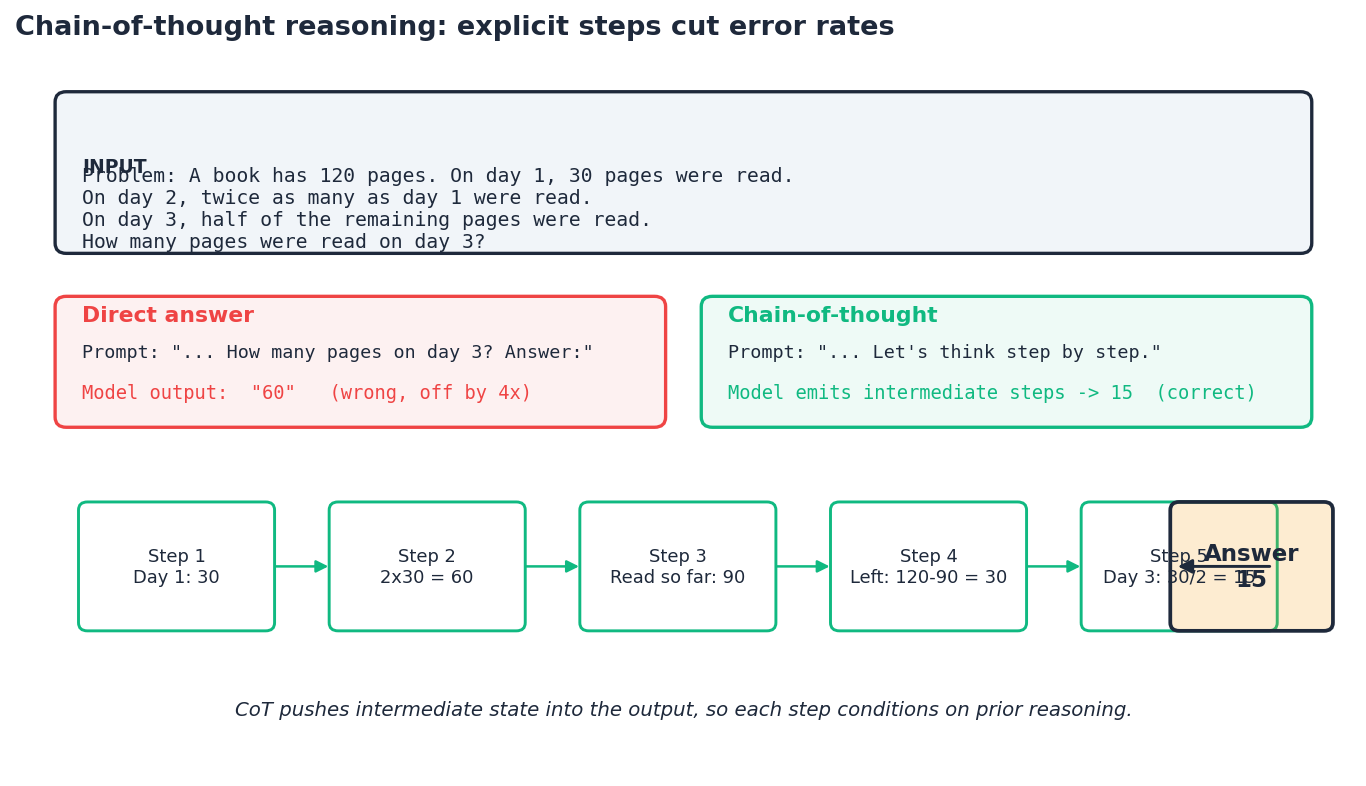

For multi-step problems, ask the model to write its reasoning before the answer.
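
One way to set this up, as a sketch: a single worked demonstration that writes out its intermediate state, plus a small parser for the final line. The reading problem, the `Final answer:` marker, and both names are invented for illustration.

```python
import re
from typing import Optional

# One demonstration whose answer spells out intermediate state.
COT_PROMPT = """\
Q: Sam reads 30 pages a day for 3 days, then 20 pages a day for 2 days. \
How many pages has Sam read?
A: Days 1-3: 3 * 30 = 90. Read so far: 90. Days 4-5: 2 * 20 = 40. \
Total: 90 + 40 = 130.
Final answer: 130

Q: {question}
A:"""


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'Final answer:' marker, if any."""
    matches = re.findall(r"Final answer:\s*(.+)", completion)
    return matches[-1].strip() if matches else None
```

Keeping the answer on its own marked line means the reasoning can be as long as it needs to be without complicating the parser.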


The trick is mechanical, not mystical. Each generated reasoning token changes the conditioning context for the next token. The model is autoregressive, so writing intermediate state (“Read so far: 90”) makes that state available to all later tokens — including the final answer. Without it, the model has to compute every intermediate quantity in a single forward pass through a fixed-depth network. CoT effectively buys extra serial compute, paid in output tokens.

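Formally, treat the reasoning chain $z$ as a latent variable between the input $x$ and the answer $a$: $P(a \mid x) = \sum_z P(z \mid x)\, P(a \mid x, z)$. Greedy CoT picks one $z$ and hopes it is right. Self-consistency (discussed below) approximates the sum by sampling many $z$.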

When CoT helps and when it does not:

- Helps: arithmetic, multi-hop QA, code reasoning, anything with intermediate state.

- Neutral or hurts: simple classification, retrieval, single-fact lookup. The reasoning preamble adds tokens, latency, and a chance for the model to talk itself into the wrong answer.

- Modern models with built-in “thinking” modes (reasoning models trained on CoT-style traces) absorb most of this; explicit CoT prompting can still help, but the gap is smaller than it was for the 2022 generation of GPT-3-class models.

A working theory of in-context learning#

Why does putting examples in the prompt change behaviour at all? The model’s weights are frozen. Three complementary explanations — none alone complete — are the closest thing the field has to consensus.

1. Implicit task inference. The pre-trained LM has seen many tasks expressed in text during training (Q&A pairs, code-comment pairs, translation pairs). At inference, examples in the prompt act as a posterior update over which “task” the rest of the prompt is drawn from. This is the Bayesian view from Xie et al. (2022): few-shot examples sharpen $P(\text{task} \mid \text{prompt})$.

2. Implicit gradient descent inside attention. A line of work (Akyürek et al., von Oswald et al., 2022-2023) shows that attention layers can implement one step of gradient descent on a linear regression task encoded in the prompt. The mechanistic claim is strong only for toy settings, but the suggestive picture, “attention is doing some kind of fast adaptation”, is useful intuition.

3. Pattern matching plus copy. The simplest explanation: induction heads (Olsson et al., 2022) copy patterns from earlier in the context. Few-shot prompts give the model a pattern to copy.

The practical consequences are the same regardless of which story you prefer:

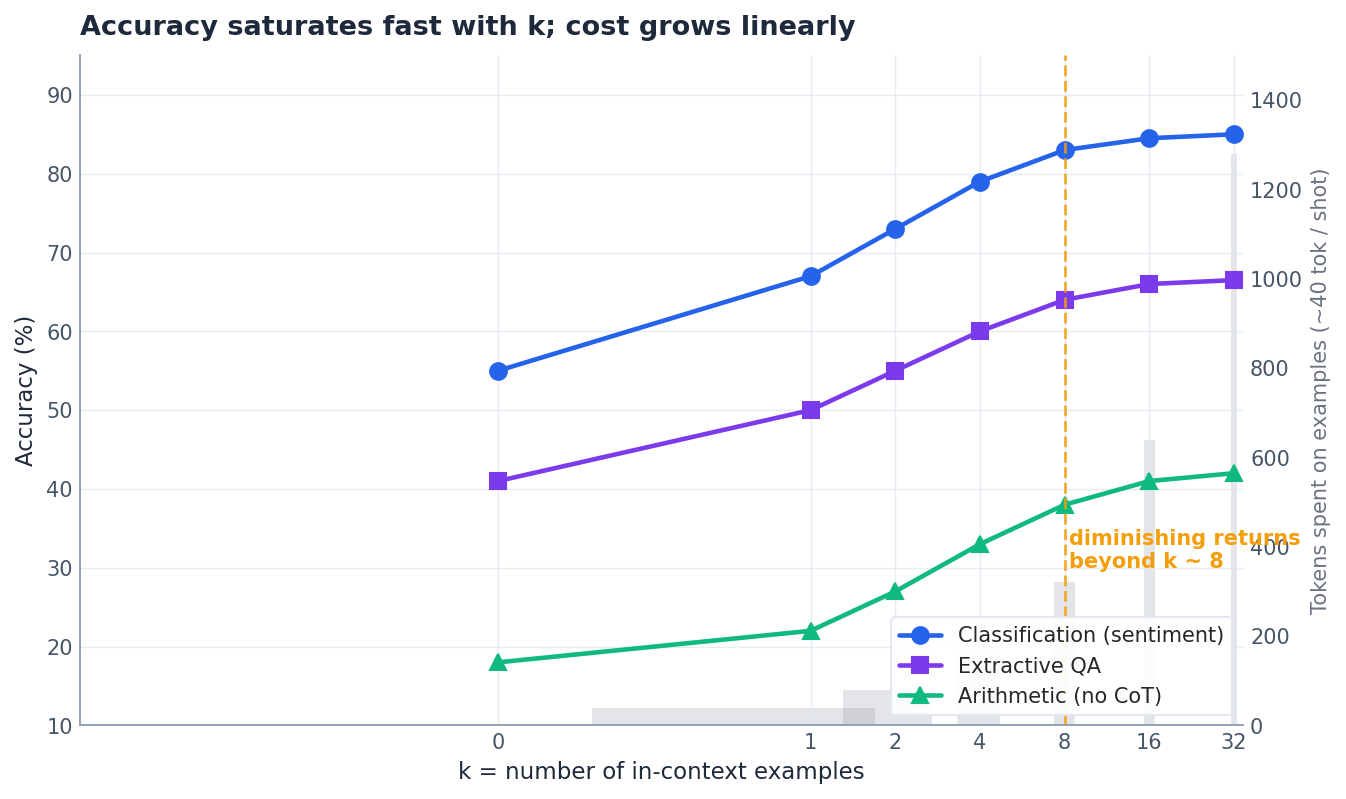

- More examples help, with steeply diminishing returns. Most of the win comes from the first 2-4.

- Distribution matters more than correctness. Examples that cover the input space beat examples that are individually clever.

- Recency wins on ties. The last example in the prompt has outsized influence.

- Position-of-correct-answer biases exist. On multiple-choice tasks, models systematically favour position A or the most recent option, depending on the model.

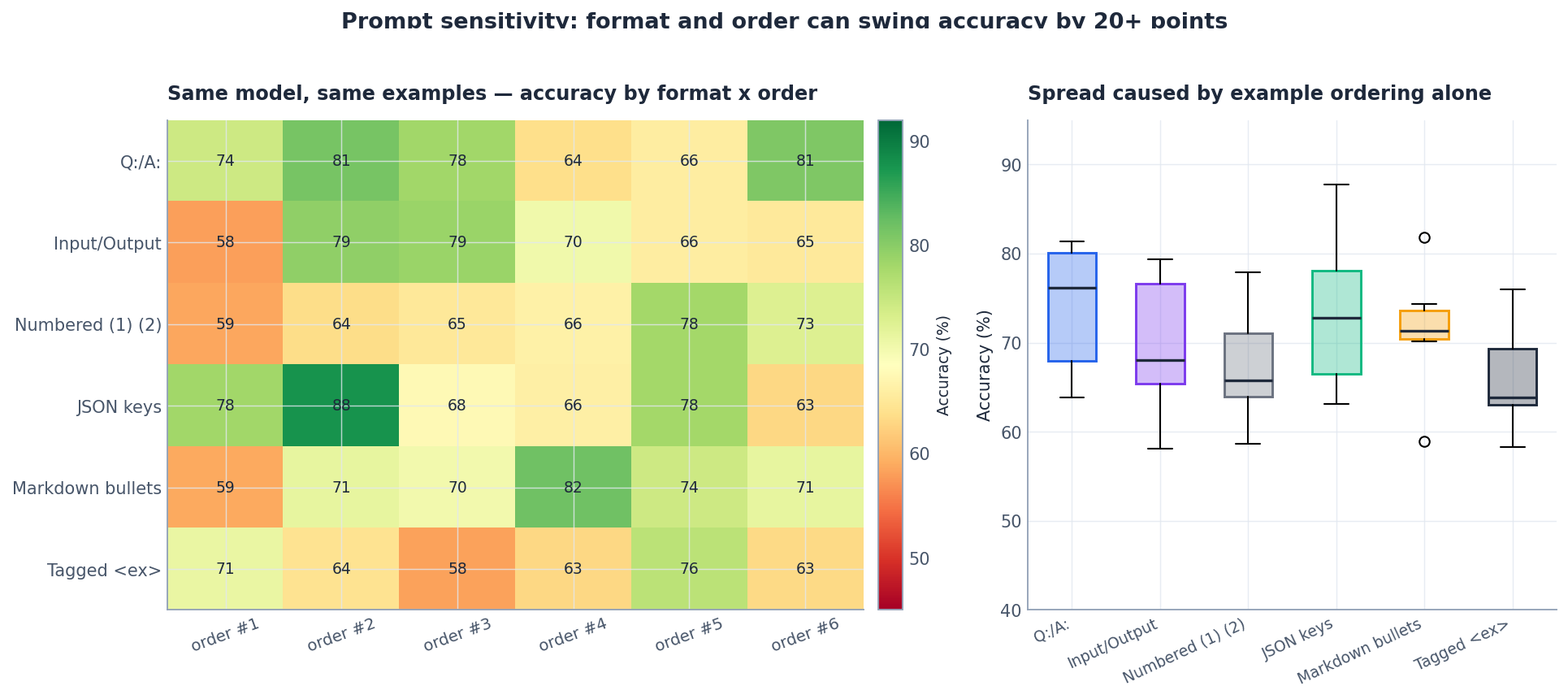

The variance problem#

Here is the uncomfortable truth nobody mentions in the marketing material. Prompt accuracy can swing 10-30 points based on choices that should not matter: the order of your examples, whether you write Q: or Input:, whether you wrap the answer in quotes.

Lu et al. (2022) called this order sensitivity and showed that on classification tasks the same model with the same examples in different orders ranged from near-random to near-state-of-the-art. Sclar et al. (2024) extended this to format sensitivity: swapping Q:/A: for Question:/Answer: can produce double-digit accuracy swings.

What this means in practice:

- Always evaluate prompts on a held-out set of at least 50-100 examples. A single anecdote tells you nothing.

- Run multiple seeds / orders when comparing two prompts. Report the median, not the best.

- Fix the prompt format early and treat changes as a versioned event, not a tweak.

A minimal evaluation harness:
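
The sketch below scores one prompt under several example orderings and reports the median and the spread (max minus min accuracy). It assumes `llm` is any callable mapping a prompt string to a completion and `build_prompt` maps `(examples, query)` to a prompt; both are placeholders for your own client and builder.

```python
import random
import statistics


def evaluate(llm, build_prompt, examples, dataset, n_orders=5, seed=0):
    """Exact-match accuracy of a prompt across shuffled example orders.

    dataset: held-out (query, expected) pairs; expected labels are assumed
    to be lowercase, since completions are lowercased before comparison.
    """
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    accuracies = []
    for _ in range(n_orders):
        shuffled = examples[:]
        rng.shuffle(shuffled)
        correct = sum(
            llm(build_prompt(shuffled, query)).strip().lower() == expected
            for query, expected in dataset
        )
        accuracies.append(correct / len(dataset))
    return {
        "median": statistics.median(accuracies),
        "spread": max(accuracies) - min(accuracies),
        "runs": accuracies,
    }
```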


If spread > 0.05, your prompt is unstable and any single-run number you report is noise.

How many shots is enough?#

The pattern is consistent across task families: the first few shots matter a lot, returns saturate around $k \approx 4$ to $8$, and beyond $k \approx 16$ extra examples mostly cost tokens. Two practical rules:

- For classification with $\le 5$ classes, $k = 2 \times \text{num classes}$ is a good default.

- For generation tasks, $k = 2$ to $3$ high-quality demonstrations usually beat $k = 10$ mediocre ones.

Pick examples that are diverse across the input distribution and clean / unambiguous on the output side — the model will copy your format faithfully, including bugs.

Self-consistency: turn the decoder into an ensemble#

A single CoT chain can take a wrong turn at step 2 and propagate the mistake. Self-consistency (Wang et al., 2022) addresses this with a one-line fix: sample $k$ different reasoning chains, then majority-vote the final answers.

This is a Monte Carlo approximation to the marginal $P(a \mid x) = \sum_z P(z \mid x)\, P(a \mid x, z)$, where $z$ ranges over reasoning chains. It works because errors tend to be diverse but correctness tends to be convergent: many wrong reasoning paths land on different wrong answers, while correct paths agree.
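
The voting step can be sketched as follows. `sample` stands for one LLM call at temperature above zero and `extract` pulls the final answer out of a completion; both are placeholder callables supplied by the caller.

```python
from collections import Counter


def self_consistency(sample, extract, question, k=5):
    """Sample k reasoning chains and majority-vote their final answers.

    Returns (answer, vote_ratio); unparseable chains count against the ratio.
    """
    answers = [extract(sample(question)) for _ in range(k)]
    votes = Counter(a for a in answers if a is not None)
    if not votes:
        return None, 0.0
    answer, count = votes.most_common(1)[0]
    return answer, count / k
```

Dividing by `k` rather than by the number of parseable chains is a deliberate choice here: a run where most chains failed to produce an answer should not look confident.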


Two notes from running this in production:

- Temperature matters. Use $T \in [0.5, 0.9]$. At $T = 0$ all samples collapse to the same chain; the ensemble degenerates.

- The vote ratio is your confidence signal. A 5/5 unanimous answer is much more trustworthy than a 3/5 plurality. Surface this number to downstream consumers; it is one of the cheapest reliability metrics you get.

Tree-of-thought (Yao et al., 2023) generalizes this: instead of sampling independent linear chains, explore a tree of partial reasoning steps, prune low-scoring branches, and search. It is more powerful but more expensive; reach for it only when self-consistency plateaus.

ReAct: reasoning + acting#

Self-consistency improves what the model can do with what it already knows. ReAct (Yao et al., 2022) addresses the harder case: when the model needs external information or actions. The pattern interleaves three blocks in the output:

- Thought. Free-form reasoning about the current state.

- Action. A structured tool call: search("..."); calc("..."); read_file("...").

- Observation. The tool’s output, fed back into the prompt for the next iteration.

The loop terminates when the model emits a final Answer: instead of an Action:. Modern agent frameworks (LangChain agents, OpenAI’s function calling, Anthropic’s tool use) are all variations on this template — often with the structured tool call moved to a JSON-typed API field rather than parsed from free text.

A minimal implementation that captures the essentials:
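
A sketch of the loop, assuming `llm(prompt, stop=[...])` is a caller-supplied function that returns text up to the first stop sequence, and `tools` maps tool names to single-argument functions. The signature and the `Action: name(arg)` syntax are assumptions; a real prompt would also describe the available tools.

```python
import re

ACTION_RE = re.compile(r"Action:\s*(\w+)\((.*)\)")


def react(llm, tools, question, max_steps=5):
    """Run Thought / Action / Observation steps until 'Answer:' or budget."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        # Stop before the model can invent its own observation.
        step = llm(transcript, stop=["\nObservation:"])
        transcript += step + "\n"
        if "Answer:" in step:
            return step.split("Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            observation = "error: expected 'Action: tool(arg)' or 'Answer: ...'"
        else:
            name, arg = match.group(1), match.group(2).strip("\"' ")
            try:
                observation = str(tools[name](arg))
            except Exception as exc:
                # Unknown tools and tool failures go back to the model as text.
                observation = f"error: {exc!r}"
        transcript += f"Observation: {observation}\n"
    return None  # step budget exhausted
```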


Three things that matter for a ReAct agent in production:

- Stop tokens. Stop generation at \nObservation: so the model does not hallucinate the tool’s response.

- Tool error handling. Wrap tool calls; pass the exception message back as an observation. The model will often correct itself.

- A step budget. Always cap iterations. Without a budget, agents loop until they bankrupt you.

Building a prompt system#

A handful of strong prompts is a script. A system of prompts is what survives team turnover, model upgrades, and four months of A/B tests. Three habits separate the two.

Treat prompts like code#

Version them, code-review them, store them in the repo, not in a copy-paste doc. A minimal registry:
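
A registry can be as small as a dictionary keyed by name and version. The prompt texts, the `PROMPTS` table, and `get_prompt` below are illustrative, not a recommended schema.

```python
# Hypothetical in-repo registry: old versions stay until call sites migrate.
PROMPTS = {
    ("sentiment", "v1"): "Classify the sentiment of: {text}",
    ("sentiment", "v2"): (
        "Classify the sentiment of the review as positive, negative, "
        "or neutral.\nReview: {text}\nSentiment:"
    ),
}


def get_prompt(name: str, version: str, **fields: str) -> str:
    """Look up a pinned prompt version and fill in its fields."""
    try:
        template = PROMPTS[(name, version)]
    except KeyError:
        raise KeyError(f"unknown prompt {name!r} version {version!r}") from None
    return template.format(**fields)
```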


Tag each call site with (prompt_name, version). When you change a prompt, bump the version; old code keeps using the old prompt until you migrate.

Evaluate before you ship#

Every prompt that goes to production needs three artefacts:

- A golden set of 50-200 inputs with expected outputs (or a graded rubric).

- An automated evaluator (exact match, JSON schema check, or LLM-as-judge with its own pinned prompt).

- A regression CI step that runs both the current and the candidate prompt and refuses to merge if accuracy drops.
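
The regression step might be sketched like this, assuming exact-match scoring, templates with a single `{text}` field, and a caller-supplied `llm` callable. All names are hypothetical; a CI job would fail the build when `ok` is false.

```python
def accuracy(llm, template, golden):
    """Exact-match accuracy of one prompt template on a golden set.

    golden: (input, expected) pairs with lowercase expected outputs.
    """
    hits = sum(
        llm(template.format(text=x)).strip().lower() == y for x, y in golden
    )
    return hits / len(golden)


def regression_gate(llm, current, candidate, golden, tolerance=0.0):
    """Return (ok, report); ok is False when the candidate scores lower."""
    cur = accuracy(llm, current, golden)
    cand = accuracy(llm, candidate, golden)
    return cand >= cur - tolerance, {"current": cur, "candidate": cand}
```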


Combine techniques deliberately#

The big wins usually come from stacking:

- Role + few-shot + format spec for any structured-output task.

- CoT + self-consistency for any reasoning task you cannot afford to get wrong.

- ReAct + retrieval for any task that needs facts the model does not have.

Avoid stacking for its own sake. Each block costs tokens, increases latency, and adds a place for things to go wrong.


Worked example: a sentiment classifier you can actually deploy#

Putting it all together for a small but realistic task.
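
A compact sketch of such a classifier follows. The system text, the three demonstrations, and every function name are invented for illustration, and `llm` is assumed to be a callable that returns one sampled completion per call.

```python
import json
import re
from collections import Counter

LABELS = ("positive", "negative", "neutral")
SYSTEM = "You are a careful sentiment classifier for product reviews."
FORMAT = 'Return JSON only: {"label": "positive" | "negative" | "neutral"}'
SHOTS = [
    ("Battery lasts two days, love it.", "positive"),
    ("Stopped working after a week.", "negative"),
    ("It arrived on Tuesday.", "neutral"),
]


def build_prompt(text):
    """Stable prefix (role, format, shots) first; the review goes last."""
    lines = [SYSTEM, FORMAT, ""]
    for review, label in SHOTS:
        lines += [f"Review: {review}", "Output: " + json.dumps({"label": label}), ""]
    lines += [f"Review: {text}", "Output:"]
    return "\n".join(lines)


def parse_label(completion):
    """Tolerate extra prose and casing around the JSON object."""
    match = re.search(r"\{.*?\}", completion, flags=re.DOTALL)
    if match:
        try:
            label = str(json.loads(match.group(0)).get("label", "")).lower()
            if label in LABELS:
                return label
        except json.JSONDecodeError:
            pass
    # Fallback: accept a bare label only if exactly one appears in the text.
    found = [l for l in LABELS if re.search(rf"\b{l}\b", completion.lower())]
    return found[0] if len(found) == 1 else None


def classify(llm, text, k=5):
    """Sample k completions and majority-vote the parsed labels."""
    votes = Counter(parse_label(llm(build_prompt(text))) for _ in range(k))
    votes.pop(None, None)  # drop unparseable samples
    if not votes:
        return {"label": None, "confidence": 0.0}
    label, count = votes.most_common(1)[0]
    return {"label": label, "confidence": count / k}
```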


Notice how every section from this article appears: a role (system), a format spec, three diverse few-shot examples, a parser that tolerates the model’s noise, and self-consistency to suppress label flips. None of it is exotic; together it is the difference between a 60% and a 90% pipeline.

FAQ#

Is a longer prompt always better?#

No. After a point, extra context distracts the model and bloats latency and cost. Start short, add only what measurably helps on your eval set.

How many shots should I use?#

Two to five for classification, one to three for generation. More than eight rarely helps and often adds variance from format issues. Always test, do not assume.

Does CoT help on every task?#

No. It shines on multi-step reasoning (math, logic, code, multi-hop QA). On simple classification or fact lookup it adds noise and tokens for no gain.

What temperature should I use?#

For deterministic outputs (classification, extraction): $T \in [0.0, 0.2]$. For balanced generation: $T \in [0.5, 0.7]$. For self-consistency sampling: $T \in [0.7, 0.9]$, since you want path diversity.

Does prompt engineering replace fine-tuning?#

It replaces a lot of the cases that used to need fine-tuning, especially for instruction-following tasks. Reach for fine-tuning when you need (a) consistent behaviour at scale where prompt drift is unacceptable, (b) a smaller / cheaper model that matches the big one on your domain, or (c) a behavioural change the base model resists.

Does example order matter?#

A lot more than it should. The recency bias is real — the last example influences the model most. Always evaluate at multiple orderings and report the median.

Should I worry about prompt injection?#

Yes, the moment your prompt includes any text from outside your control (user input, retrieved documents, tool outputs). Treat untrusted text as data and never let it modify your instructions; this is its own topic and we will return to it in the agents and safety material.
