Ordinary Differential Equations (1): Origins and Intuition

Why do differential equations exist? Starting from cooling coffee and swinging pendulums, build your first ODE intuition and solve one in Python.

Everything around you is changing. Coffee cools, populations grow, pendulums swing, viruses spread, stocks oscillate, planets orbit. None of these systems are described by what something equals — they are described by how fast something changes. That second mode of description is what differential equations are for, and learning to read them is, quite literally, learning to read the language physics and biology are written in.

This chapter rebuilds your intuition from scratch. We start with a single cup of coffee, derive the same equation that governs radioactive decay and capacitor discharge, then climb upward to direction fields, classification, and the existence-and-uniqueness theorem that tells you when an ODE has a sensible answer at all.

What You Will Learn#

- What a differential equation actually is — beyond the symbols

- ODE vs. PDE, order, linearity — and why each distinction earns its keep

- Three classic models (cooling, decay, oscillation) that you will meet again and again

- Direction fields: how to “see” the solution before solving anything

- The existence and uniqueness theorem (Picard–Lindelöf), with a concrete counter-example

- Your first numerical solution in Python with

scipy, and when to trust it

Prerequisites#

- Single-variable calculus: derivatives, integrals, the chain rule

- Basic Python: NumPy arrays and Matplotlib plotting

What is a differential equation? Start with coffee#

You brew coffee at $T_0 = 90^\circ\mathrm{C}$ and put it in a $T_\text{env} = 20^\circ\mathrm{C}$ room. A few minutes later it has cooled. Two questions feel natural:

- What temperature is it now? — an algebraic question.

- How fast is it cooling right now? — a calculus question.

That single line is a differential equation — an equation that relates a function $T(t)$ to its own derivative. The unknown is the function, not a number.

| Symbol | Meaning |

|---|---|

| $T(t)$ | Temperature at time $t$ |

| $dT/dt$ | Instantaneous rate of change |

| $T_\text{env}$ | Ambient temperature ($20^\circ\mathrm{C}$ ) |

| $k > 0$ | Cooling constant — depends on the cup, the surface area, the air |

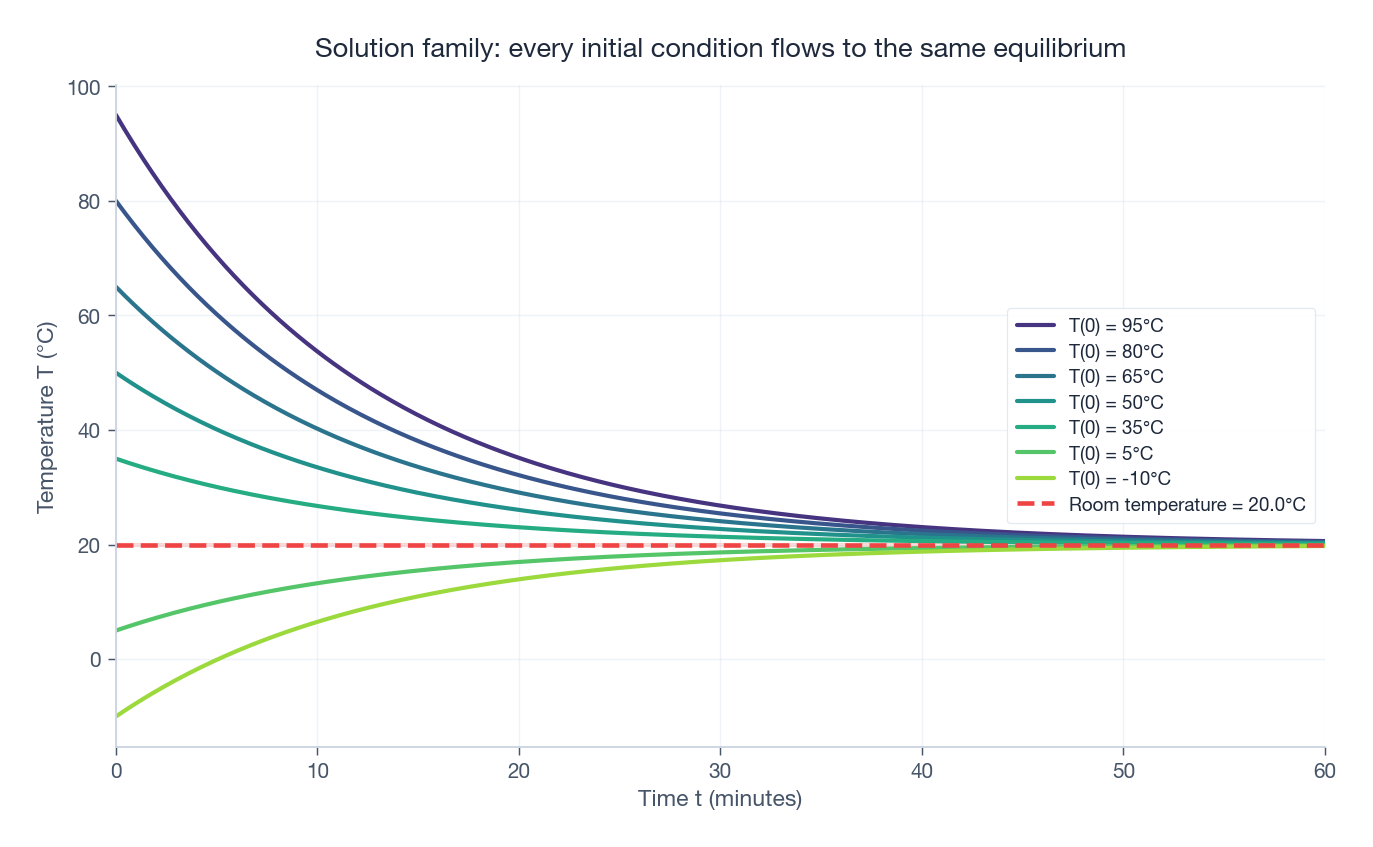

The minus sign matters: when $T > T_\text{env}$ the right-hand side is negative, so $T$ decreases. When $T < T_\text{env}$ it is positive, so $T$ rises. The equation already knows the second law of thermodynamics.

Reading the model before solving it#

We can extract physical predictions without writing a single integral:

- The closer $T$ gets to $T_\text{env}$ , the smaller the gap, the slower the cooling. Cooling decelerates.

- $T = T_\text{env}$ makes the right-hand side zero — once the coffee reaches room temperature, nothing changes. This is an equilibrium.

- A bigger $k$ (thin cup, drafty room) means faster decay; a smaller $k$ (thermos) means slower.

This is the first lesson of the whole subject: a good ODE tells you the qualitative behaviour before you solve it.

Solving it in Python#

| |

The closed-form answer#

$$\boxed{\,T(t) = T_\text{env} + \bigl(T_0 - T_\text{env}\bigr)\,e^{-kt}.\,}$$Three things are worth memorising:

- The departure $T - T_\text{env}$ decays as a pure exponential.

- The decay rate is set entirely by $k$ . Halving $k$ doubles the cooling time.

- $T \to T_\text{env}$ asymptotically — never exactly equal, but close enough that physics stops caring.

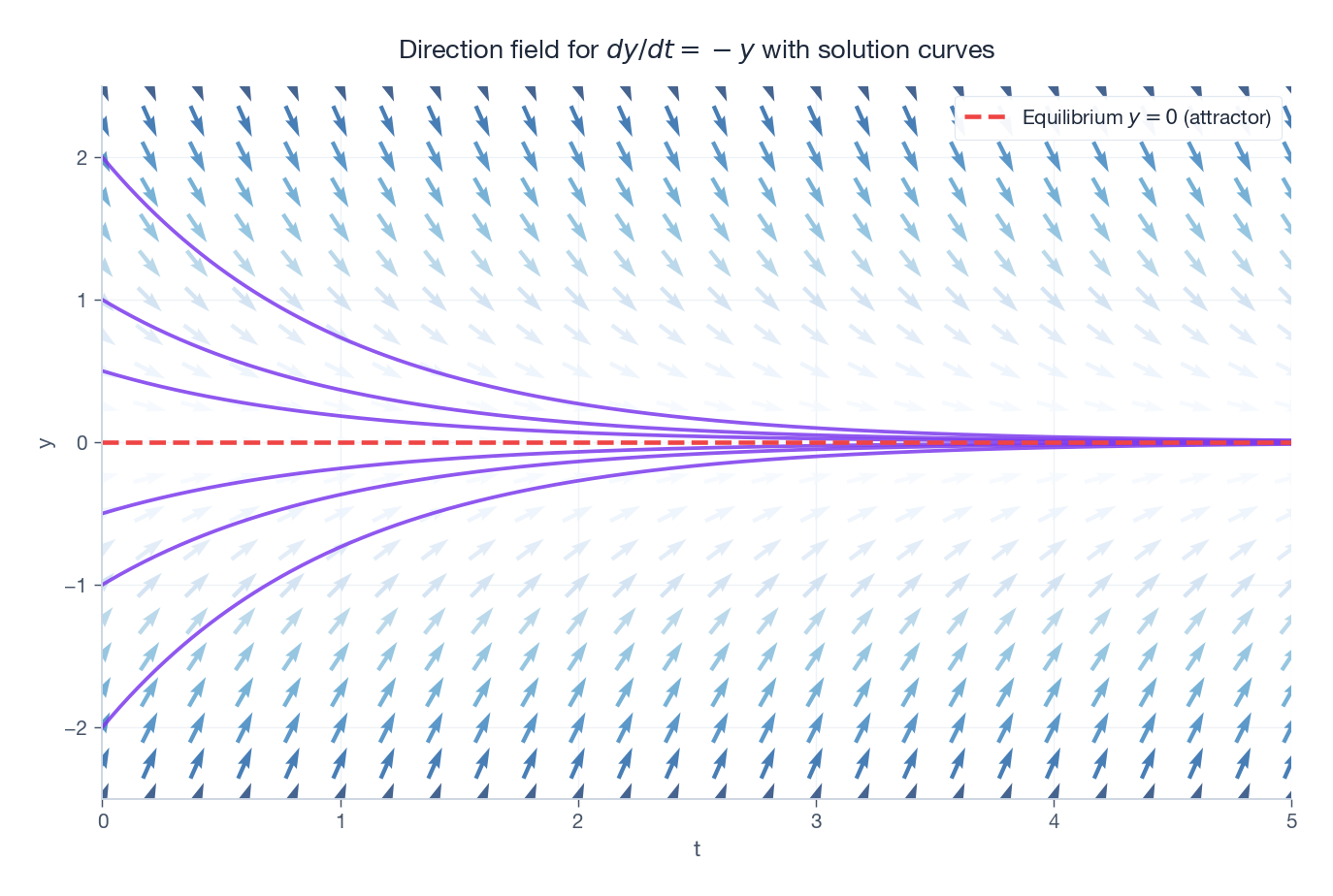

Direction fields: see the solution before you compute it#

the function $f$ tells you the slope of the solution at every point in the $(t, y)$ -plane without actually solving anything. Plot a tiny arrow with that slope at each grid point and you have a direction field (also called a slope field or vector field). Solution curves are the trajectories that flow tangent to the arrows.

This visual lets you reason about systems whose closed-form solution is hopeless. Looking at the field, you can already answer:

- Is there an equilibrium? (Where do arrows go horizontal?)

- Is it attracting or repelling? (Do nearby arrows point toward it or away?)

- Does the solution blow up, oscillate, or settle?

We will lean on direction fields throughout the series — they are the cheapest, most honest way to understand a nonlinear ODE.

Classification: ODE, PDE, order, linearity#

A few labels save us a lot of breath later.

ODE vs. PDE#

| Type | Independent variables | Example |

|---|---|---|

| Ordinary (ODE) | One (usually $t$ ) | $\dfrac{dy}{dt} = -ky$ |

| Partial (PDE) | Several ($x, y, t, \dots$ ) | $\dfrac{\partial u}{\partial t} = k\,\dfrac{\partial^2 u}{\partial x^2}$ |

This series is about ODEs. PDEs (heat, wave, Schrödinger, Navier–Stokes) get their own treatment in Chapter 13 .

Order = highest derivative present#

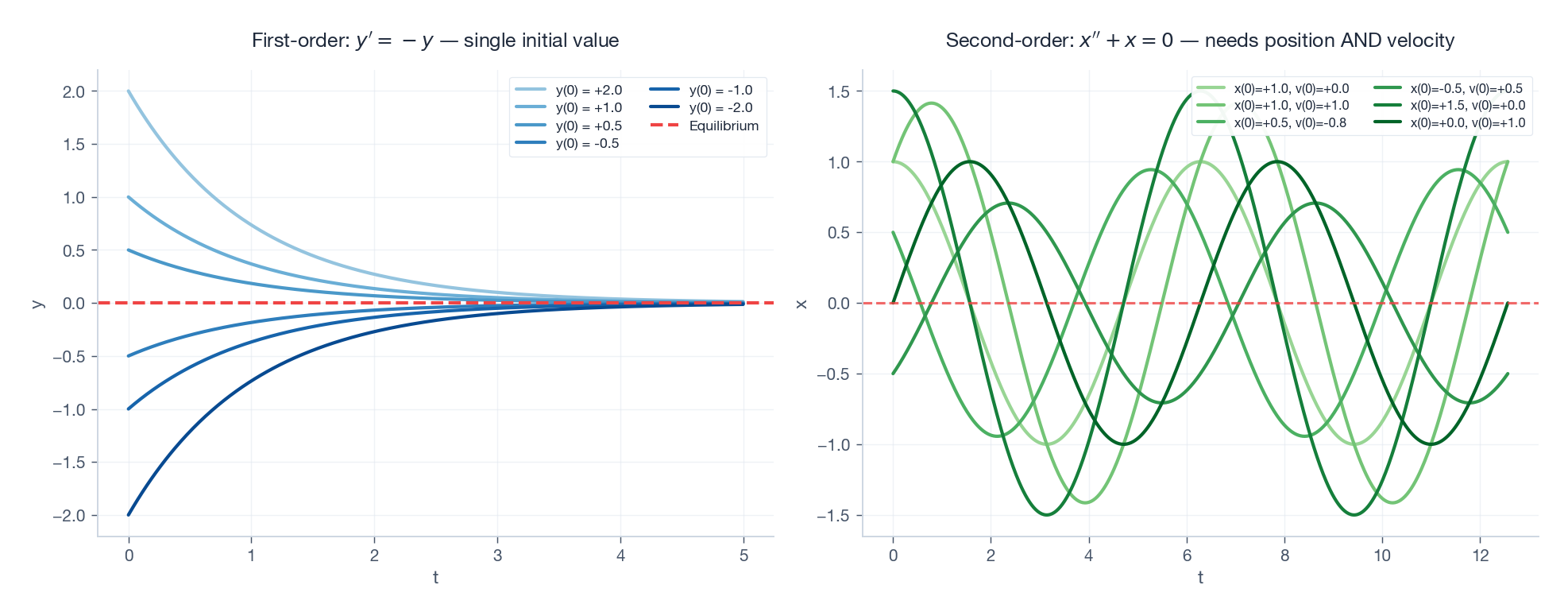

- First-order: $y' = f(t, y)$ — radioactive decay, RC circuits, single-species growth.

- Second-order: $y'' + p(t)y' + q(t)y = g(t)$ — springs, pendulums, RLC circuits, planetary orbits.

Why does the order matter? Because to pin down a unique solution you need exactly that many initial conditions. A first-order ODE needs $y(0)$ . A second-order one needs both $y(0)$ and $y'(0)$ — position and velocity. That is not a quirk; it is Newton’s $F = ma$ telling you that to predict a particle’s future you need its starting position and its starting kick.

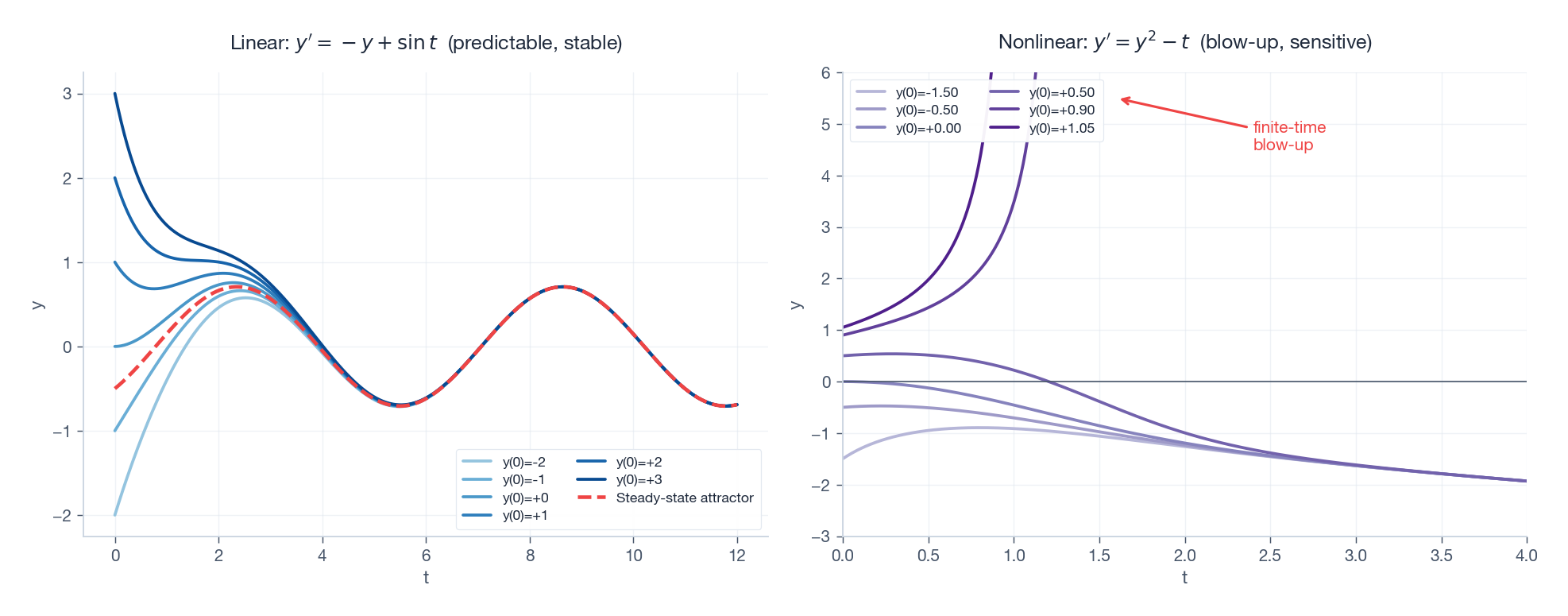

Linear vs. nonlinear#

$$a_n(t)\,y^{(n)} + a_{n-1}(t)\,y^{(n-1)} + \dots + a_0(t)\,y = g(t).$$If anything else shows up — $y^2$ , $\sin y$ , $y\,y'$ , $\sqrt{y}$ — the equation is nonlinear.

Why obsess over the distinction? Because linearity is a superpower:

- Solutions can be added (superposition principle).

- A complete solution decomposes into homogeneous + particular parts.

- The whole machinery of linear algebra (eigenvalues, transforms, Green’s functions) becomes available.

Nonlinear equations have none of this for free. They can do things linear equations cannot — limit cycles, chaos, finite-time blow-up — but each one tends to require its own custom assault.

A brief history#

Differential equations were not “discovered” so much as required by physics. The same century that invented calculus needed it.

| Year | Who | Milestone |

|---|---|---|

| 1666 | Newton | Calculus + the laws of motion. $F = ma$ is itself a second-order ODE. |

| 1690s | Bernoulli family | Catenary, brachistochrone — turning physical questions into ODEs. |

| 1739 | Euler | First systematic ODE theory; Euler’s method (still the seed of modern integrators). |

| 1820s | Cauchy | Existence proofs — when does a solution actually exist? |

| 1890 | Picard, Lindelöf | The uniqueness theorem in essentially modern form. |

| 1880s+ | Poincaré | Qualitative theory: stop chasing formulas, study the shape of solution flows. |

| 1963 | Lorenz | Chaos, born from a three-equation weather toy model. |

The arc is striking: we begin chasing formulas, give up, and learn instead to study the geometry of solutions. Modern ODE theory is much closer in spirit to Poincaré than to Newton.

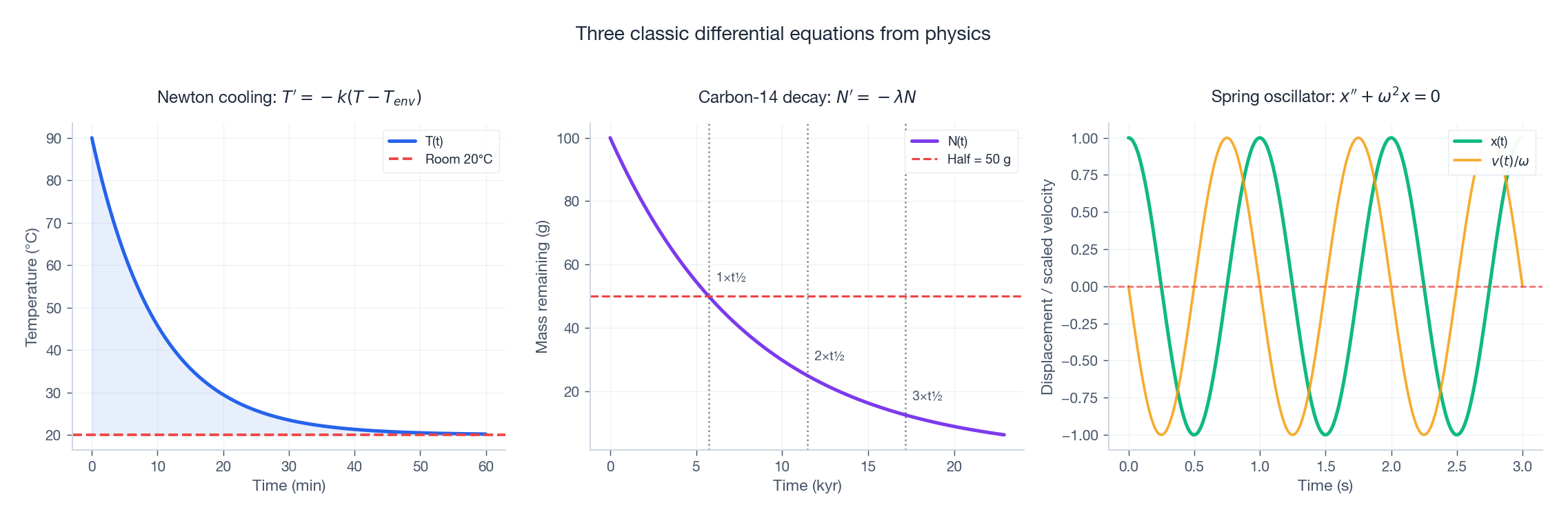

Three classic models you will keep meeting#

The reason ODEs feel ubiquitous is that a small number of equations show up over and over in different physical disguises. Here are three.

Radioactive decay — the universe’s stopwatch#

$$ \frac{dN}{dt} = -\lambda N \qquad\Longrightarrow\qquad N(t) = N_0\,e^{-\lambda t}. $$The half-life $t_{1/2} = (\ln 2)/\lambda$ is a single number that summarises the whole curve. Carbon-14 has $t_{1/2} = 5730\,\text{yr}$ , which is exactly why archaeologists can date a piece of charcoal: measure the surviving $^{14}\mathrm{C}$ ratio, take the log, and read off the age. The same equation also describes capacitor discharge in an RC circuit and drug clearance from the bloodstream.

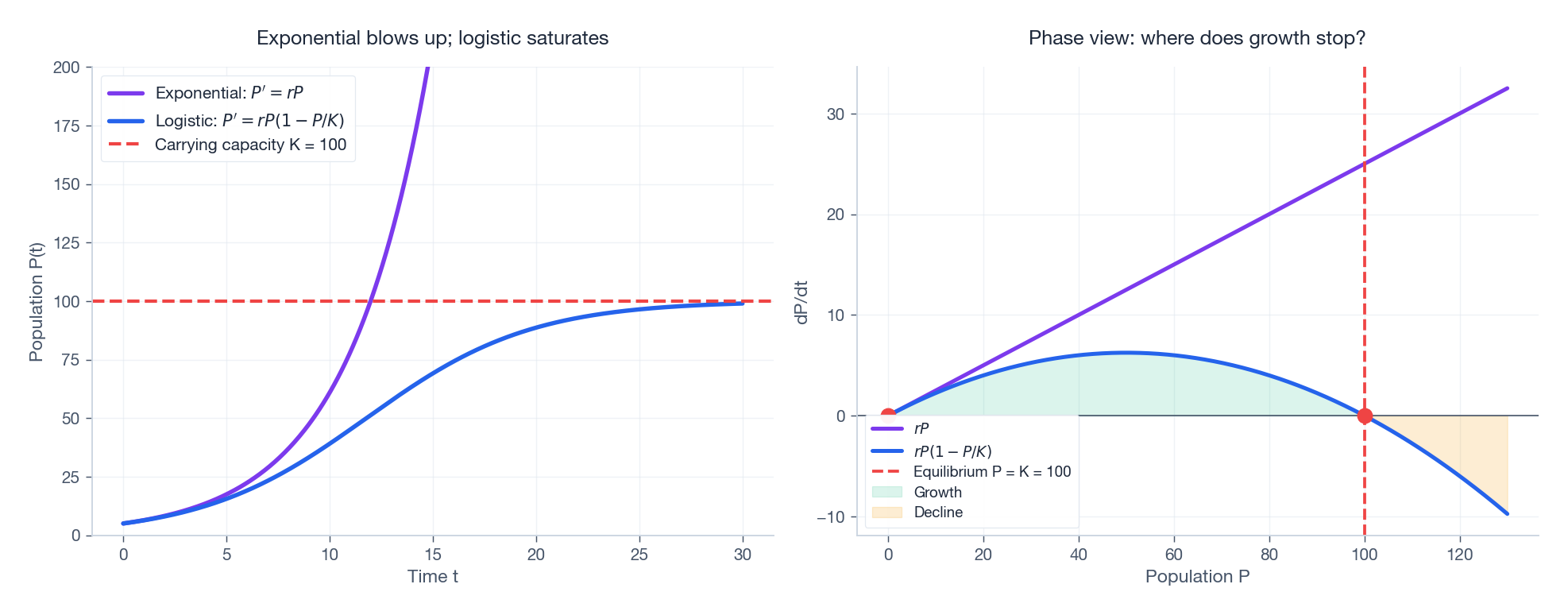

Malthusian population growth#

$$ \frac{dP}{dt} = rP \qquad\Longrightarrow\qquad P(t) = P_0\,e^{rt}. $$This is the exact same equation as decay, with $r$ replacing $-\lambda$ . The mathematics doesn’t care whether something is dying or breeding; it cares about the sign of the rate constant.

It also predicts unbounded exponential growth, which any biologist will tell you is nonsense — eventually you run out of food, space, or hosts. The fix, the logistic equation, is so important we plot it next to its naive cousin.

Simple harmonic motion#

$$ m\,\frac{d^2 x}{dt^2} = -kx \qquad\Longleftrightarrow\qquad x'' + \omega_0^2\,x = 0, \quad \omega_0 = \sqrt{k/m}. $$The general solution is $x(t) = A\cos(\omega_0 t + \varphi)$ — a pure sinusoid with natural angular frequency $\omega_0$ . You meet this equation in pendulums (small swings), LC circuits, vibrating molecules, and the eigenmodes of essentially every linear system in physics.

| |

The right panel is a phase portrait, our first glimpse of how second-order systems live naturally in a 2D space of $(x, v)$ . Energy conservation forces the trajectory to be a closed ellipse; that geometric fact will become the centrepiece of Chapter 7 .

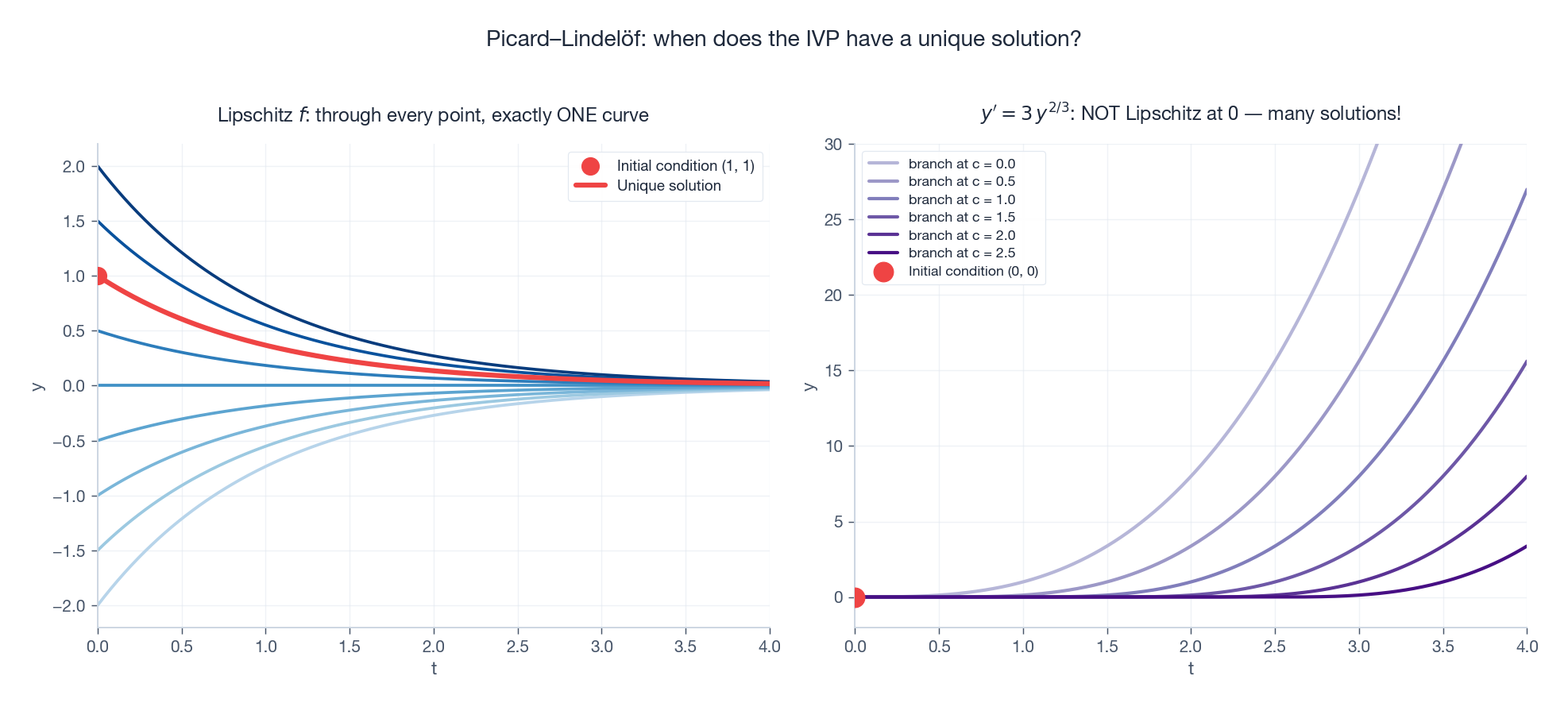

Initial value problems and the question of existence#

$$\frac{dy}{dt} = f(t, y), \qquad y(t_0) = y_0.$$Together they form an initial value problem (IVP). The natural follow-up question is one mathematicians took two centuries to take seriously:

Does the IVP actually have a solution? If so, is it unique?

The answer, in modern form, is the Picard–Lindelöf theorem.

Theorem (Picard–Lindelöf, 1890). If $f(t, y)$ is continuous in $t$ and Lipschitz in $y$ on a rectangle around $(t_0, y_0)$ , then the IVP has a unique solution on some open interval containing $t_0$ .

A function is Lipschitz in $y$ if there exists a constant $L$ with $|f(t, y_1) - f(t, y_2)| \le L\,|y_1 - y_2|$ — informally, the slope of $f$ in the $y$ -direction is bounded. Most “well-behaved” right-hand sides (polynomials, smooth functions) automatically satisfy this.

Why care? Because without this guarantee, the model itself is ambiguous — and that does happen.

The takeaway is sharp: a “law of physics” written as an ODE is only a law if Picard–Lindelöf holds. Otherwise the future is not determined by the present, and the whole point of a deterministic model collapses.

Analytical vs. numerical solutions#

| Analytical | Numerical | |

|---|---|---|

| What you get | A formula: $y(t) = C e^{-kt}$ | A table of $(t_n, y_n)$ values |

| Where it comes from | Pencil, paper, and a closed-form integral | A march in time: $y_{n+1} = y_n + h\,f(t_n, y_n) + \dots$ |

| Pro | Exact; reveals structure (what depends on what) | Works for any ODE you can write down |

| Con | Most ODEs have no closed form | Approximate; needs careful step-size control |

In a graduate physics course you might solve a dozen ODEs by hand. In professional engineering and science, the overwhelming majority of ODEs are solved numerically — there is no shame in this; in fact most modern modelling depends on it.

$$y_{n+1} = y_n + h\,f(t_n, y_n).$$ | |

Euler is the seed of every more sophisticated method (Runge–Kutta, Adams–Bashforth, the symplectic integrators used for orbit propagation). We will spend Chapter 11

unpacking how the modern solvers in scipy.integrate.solve_ivp actually work — but the logic is always the same: estimate the derivative, take a step, repeat, control the error.

Summary#

| Concept | Key takeaway |

|---|---|

| Differential equation | An equation relating an unknown function to its derivatives |

| ODE vs. PDE | One independent variable vs. several |

| Order | How many derivatives appear — equals how many initial conditions you need |

| Linear vs. nonlinear | Linear admits superposition and a complete theory; nonlinear can exhibit chaos |

| Direction field | Visualise the flow before solving |

| IVP + Picard–Lindelöf | Initial condition + Lipschitz $f$ ⇒ unique solution |

| Analytical vs. numerical | Exact when possible; numerical when necessary (almost always) |

The big idea of this chapter, in one sentence: physics writes its laws as differential equations because nature speaks the language of rates of change, and learning ODEs is learning to translate that language back into pictures, formulas, and predictions.

Exercises#

Warm-up

- Verify that $y(t) = C e^{3t}$ solves $y' = 3y$ for any constant $C$ .

- Coffee cools from $90^\circ\mathrm{C}$ to $60^\circ\mathrm{C}$ in 10 minutes in a $20^\circ\mathrm{C}$ room. Find the cooling constant $k$ . Then predict the temperature at $t = 30$ min.

- A radioactive sample has half-life 10 yr. How much of an initial 100 g remains after 25 yr?

Conceptual

- Prove that every solution of $y' = -2y$ approaches 0 as $t \to \infty$ , regardless of $y(0)$ .

- Sketch the direction field of $y' = y(1 - y)$ by hand. Identify the equilibria; predict the long-term behaviour for $y(0) = 0.1$ , $y(0) = 0.5$ , and $y(0) = 1.5$ . (This is the logistic equation.)

- Why is the Malthusian population model unrealistic? Propose at least two physical mechanisms that should modify it.

- Show that the IVP $y' = 3 y^{2/3}$ , $y(0) = 0$ admits more than one solution, and identify which hypothesis of Picard–Lindelöf fails.

Programming

- Implement Euler’s method and solve $y' = -y$ , $y(0) = 1$ on $[0, 5]$ with step sizes $h = 0.5,\,0.1,\,0.01$ . Plot the global error against $h$ on a log–log scale. What slope do you get? Why?

- Plot the direction field and several solution curves for $y' = \sin t - y$ . Conjecture the long-term behaviour and confirm numerically.

- Reproduce Figure 6 (logistic vs exponential): solve both ODEs with

scipy.integrate.solve_ivpand overlay them. At what time does the logistic solution reach 99% of $K$ ?

References#

- Boyce, DiPrima & Meade, Elementary Differential Equations and Boundary Value Problems, Wiley (11th ed., 2017).

- Strogatz, Nonlinear Dynamics and Chaos, CRC Press (2nd ed., 2015) — chapters 1–2 are the perfect companion to this one.

- Tenenbaum & Pollard, Ordinary Differential Equations, Dover (1985) — the classic exhaustive reference.

- Hirsch, Smale & Devaney, Differential Equations, Dynamical Systems, and an Introduction to Chaos, Academic Press (3rd ed., 2012).

- MIT OCW 18.03SC — Differential Equations (free video lectures).

This is Part 1 of the 18-part series on Ordinary Differential Equations.

- Part 1: Origins and Intuition (current)

- Part 2: First-Order Methods

- Part 3: Higher-Order Linear Theory

- Part 4: The Laplace Transform

- Part 5: Power Series and Special Functions

- Part 6: Linear Systems and the Matrix Exponential

- Part 7: Stability Theory

- Part 8: Nonlinear Systems and Phase Portraits

- Part 9: Chaos Theory and the Lorenz System

- Part 10: Bifurcation Theory

- Part 11: Numerical Methods

- Part 12: Boundary Value Problems

- Part 13: Introduction to PDEs

- Part 14: Epidemic Models

- Part 15: Population Dynamics

- Part 16: Fundamentals of Control Theory

- Part 17: Physics and Engineering Applications

- Part 18: Frontiers and Series Finale

ODE Foundations 18 parts

- 01 Ordinary Differential Equations (1): Origins and Intuition you are here

- 02 Ordinary Differential Equations (2): First-Order Methods

- 03 Ordinary Differential Equations (3): Higher-Order Linear Theory

- 04 Ordinary Differential Equations (4): The Laplace Transform

- 05 Ordinary Differential Equations (5): Power Series and Special Functions

- 06 Ordinary Differential Equations (6): Linear Systems and the Matrix Exponential

- 07 Ordinary Differential Equations (7): Stability Theory

- 08 Ordinary Differential Equations (8): Nonlinear Systems and Phase Portraits

- 09 Ordinary Differential Equations (9): Chaos Theory and the Lorenz System

- 10 Ordinary Differential Equations (10): Bifurcation Theory

- 11 Ordinary Differential Equations (11): Numerical Methods

- 12 Ordinary Differential Equations (12): Boundary Value Problems

- 13 Ordinary Differential Equations (13): Introduction to Partial Differential Equations

- 14 Ordinary Differential Equations (14): Epidemic Models and Epidemiology

- 15 Ordinary Differential Equations (15): Population Dynamics

- 16 Ordinary Differential Equations (16): Fundamentals of Control Theory

- 17 Ordinary Differential Equations (17): Physics and Engineering Applications

- 18 Ordinary Differential Equations (18): Frontiers and Series Finale