Ordinary Differential Equations (3): Higher-Order Linear Theory

From springs to RLC circuits, the full theory of higher-order linear ODEs: superposition, the Wronskian, characteristic equations, undetermined coefficients, variation of parameters, and the resonance phenomenon.

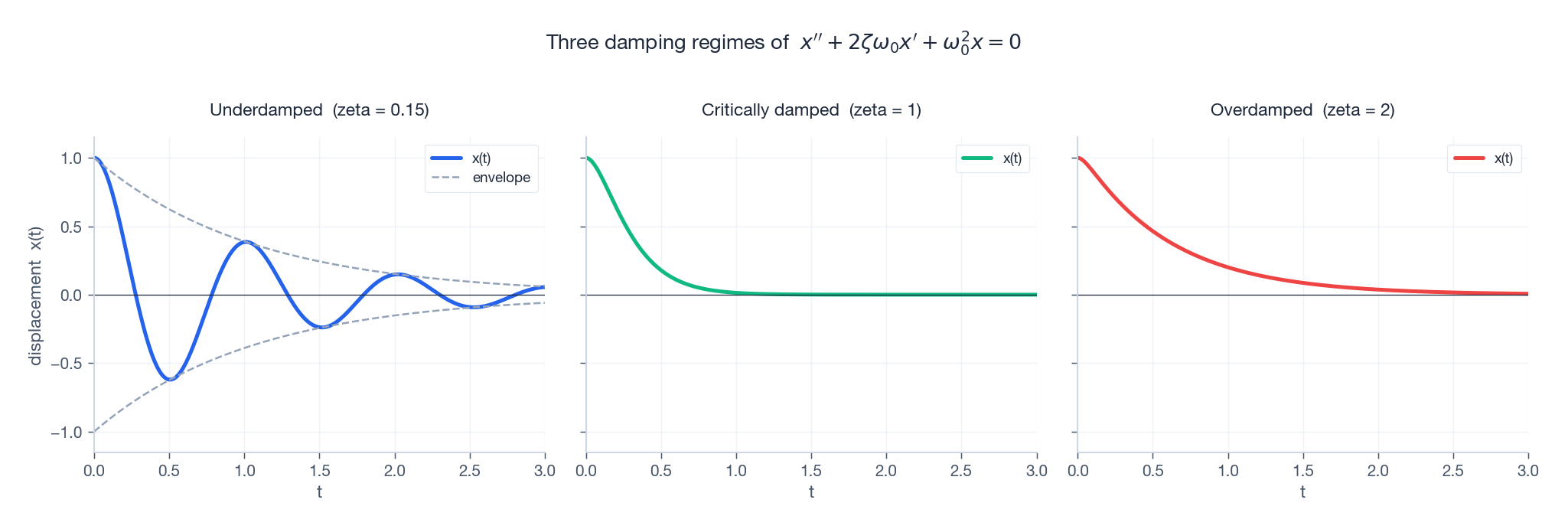

A first-order ODE has memory of one number; a second-order ODE has memory of two. That tiny extra degree of freedom is what lets the same equation describe a plucked guitar string, the suspension of your car, the L-C tank circuit inside an FM radio, and the swaying of a tall building in the wind. In every case the same three regimes appear — oscillate, return-with-a-touch-of-overshoot, or crawl back — and the same algebraic gadget, the characteristic equation, predicts which one happens.

This chapter builds the entire toolkit. We will derive it once, prove the structural theorems, then keep meeting the same picture in different clothing.

What You Will Learn#

- Why second-order shows up the moment Newton’s law meets a restoring force

- The structural theorems: superposition, the Wronskian, $y = y_h + y_p$

- Constant-coefficient homogeneous equations via the characteristic polynomial (real, repeated, complex roots)

- Damping ratio $\zeta$ and the under/critical/over trichotomy

- Forced response by undetermined coefficients (when $f$ is “nice”)

- Variation of parameters (when it is not)

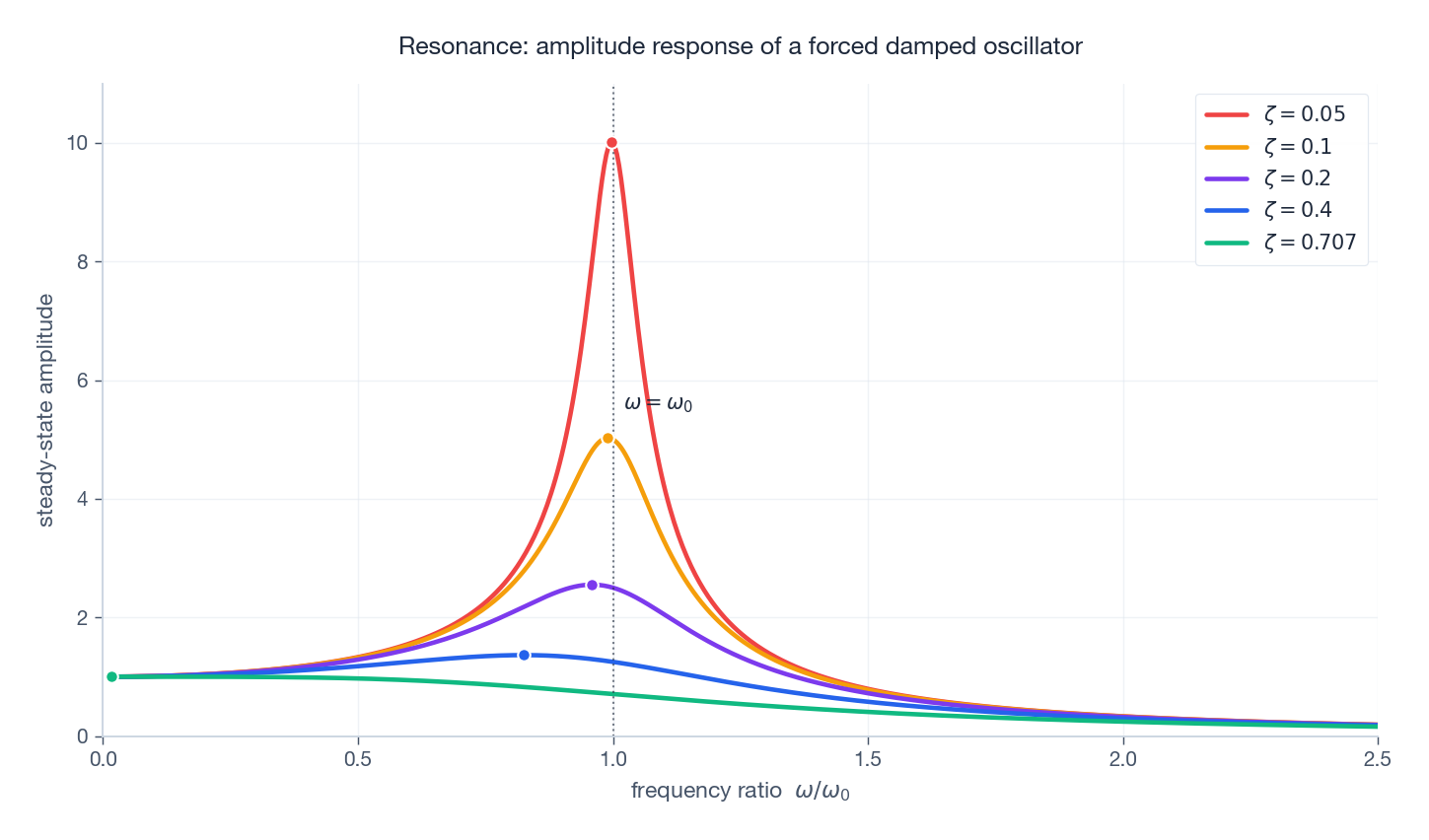

- Resonance and how to read the amplitude curve

- The same equation as an RLC circuit, and how to convert any $n$ -th order ODE into a first-order system

Prerequisites#

- Chapter 2: First-order methods — separable equations, integrating factors

- Linear algebra fluency at the level of $2\times 2$ determinants and complex numbers

Why second-order is the natural unit#

$$m\,\ddot x \;=\; -\,k x \;-\; b\,\dot x \;+\; F_\text{ext}(t).$$ $$\boxed{\;\ddot x + 2\zeta\omega_0\,\dot x + \omega_0^2\,x \;=\; f(t)\;}$$with natural frequency $\omega_0 = \sqrt{k/m}$ and damping ratio $\zeta = b/(2\sqrt{mk})$ . Almost every example in this chapter is a special case.

What second order really buys you. A first-order ODE $\dot x = F(x,t)$ at time $t$ is determined by the single number $x(t)$ . A second-order ODE needs two initial conditions, $x(0)$ and $\dot x(0)$ — you can specify position and velocity independently, so the system can store and exchange two kinds of “stuff” (kinetic and potential energy, voltage and current, etc.). Oscillation is the visible signature of that exchange.

Structure of the solution space#

Standard form#

$$y^{(n)} + p_{n-1}(x)\,y^{(n-1)} + \cdots + p_1(x)\,y' + p_0(x)\,y \;=\; g(x).$$It is homogeneous when $g \equiv 0$ , otherwise non-homogeneous.

Three theorems that organise everything#

(T1) Superposition. If $y_1, y_2$ both solve the homogeneous equation, so does $c_1 y_1 + c_2 y_2$ for any constants $c_1, c_2$ . (Linearity of derivatives — write it out.)

$$y_h(x) \;=\; c_1 y_1(x) + c_2 y_2(x) + \cdots + c_n y_n(x).$$This is the existence-uniqueness theorem in disguise: the $n$ initial conditions $y(x_0), y'(x_0), \dots, y^{(n-1)}(x_0)$ pick out a unique element of an $n$ -dimensional vector space.

$$y \;=\; y_h \;+\; y_p,$$where $y_p$ is any one particular solution. The reason is that if $y, \tilde y$ are two solutions, $L[y - \tilde y] = 0$ , so they differ by a homogeneous solution.

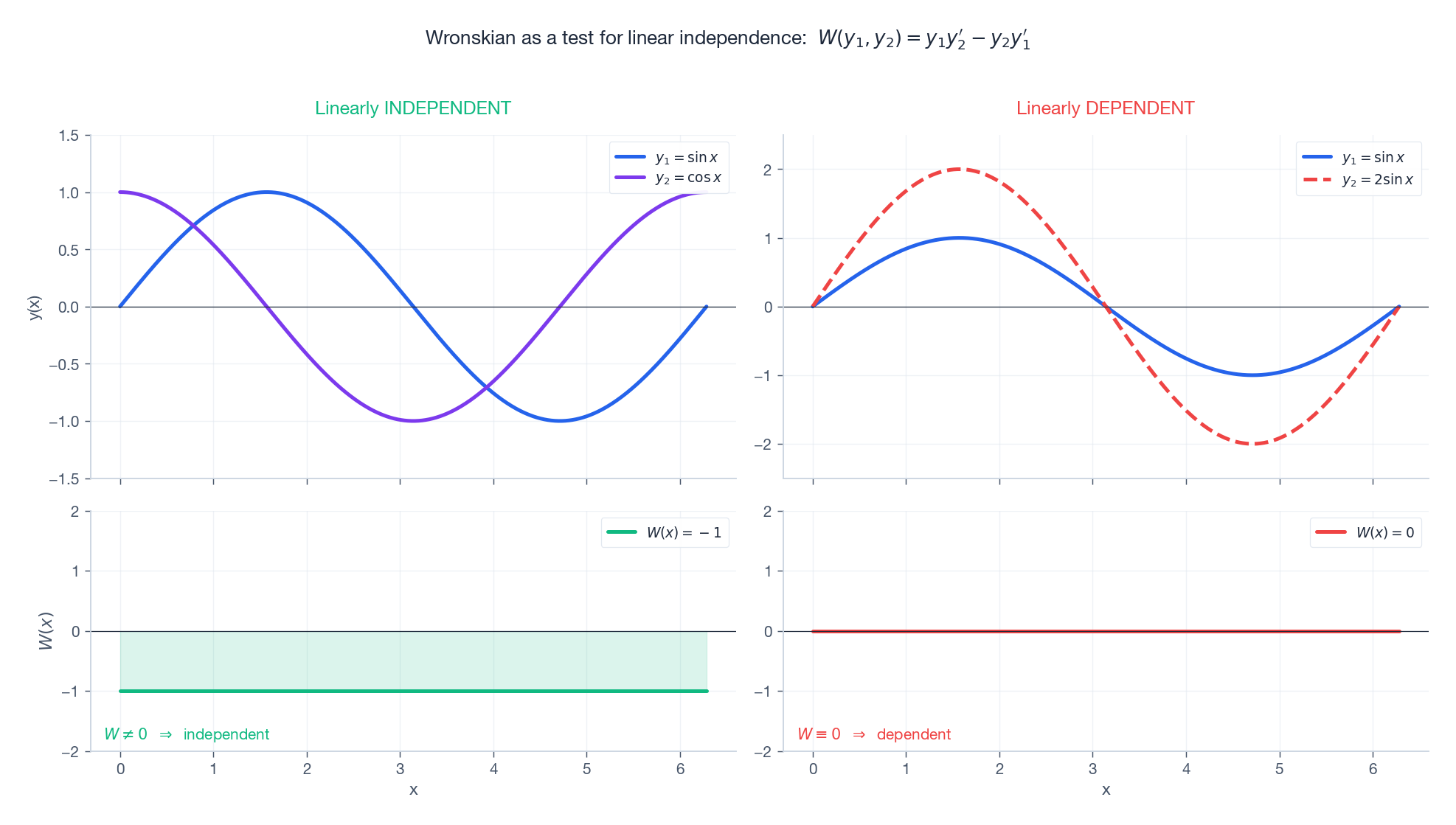

The Wronskian: a determinant test for independence#

$$ W(y_1, \dots, y_n)(x) \;=\; \det \begin{pmatrix} y_1 & y_2 & \cdots & y_n \\ y_1' & y_2' & \cdots & y_n' \\ \vdots & \vdots & & \vdots \\ y_1^{(n-1)} & y_2^{(n-1)} & \cdots & y_n^{(n-1)} \end{pmatrix}. $$For $n = 2$ : $W(y_1, y_2) = y_1 y_2' - y_2 y_1'$ .

The test. If $W(x_0) \neq 0$ at any one point $x_0$ in the interval, the solutions are linearly independent (and Abel’s identity then forces $W \neq 0$ everywhere on the interval).

$$W = \sin x \cdot (-\sin x) - \cos x \cdot \cos x = -1 \neq 0.$$Independent everywhere — they form a basis for solutions of $y'' + y = 0$ .

A dependent example. $y_1 = \sin x, y_2 = 2\sin x$ gives $W = 2\sin x\cos x - 2\sin x\cos x = 0$ identically. They are scalar multiples; the dimension of the span is one, not two.

Constant coefficients: the characteristic equation#

The trick#

$$a_n y^{(n)} + a_{n-1} y^{(n-1)} + \cdots + a_1 y' + a_0 y \;=\; 0$$ $$P(r) \;\equiv\; a_n r^n + a_{n-1} r^{n-1} + \cdots + a_1 r + a_0 \;=\; 0.$$Every root $r$ contributes a building block. The map roots $\to$ basis solutions is the entire content of this section.

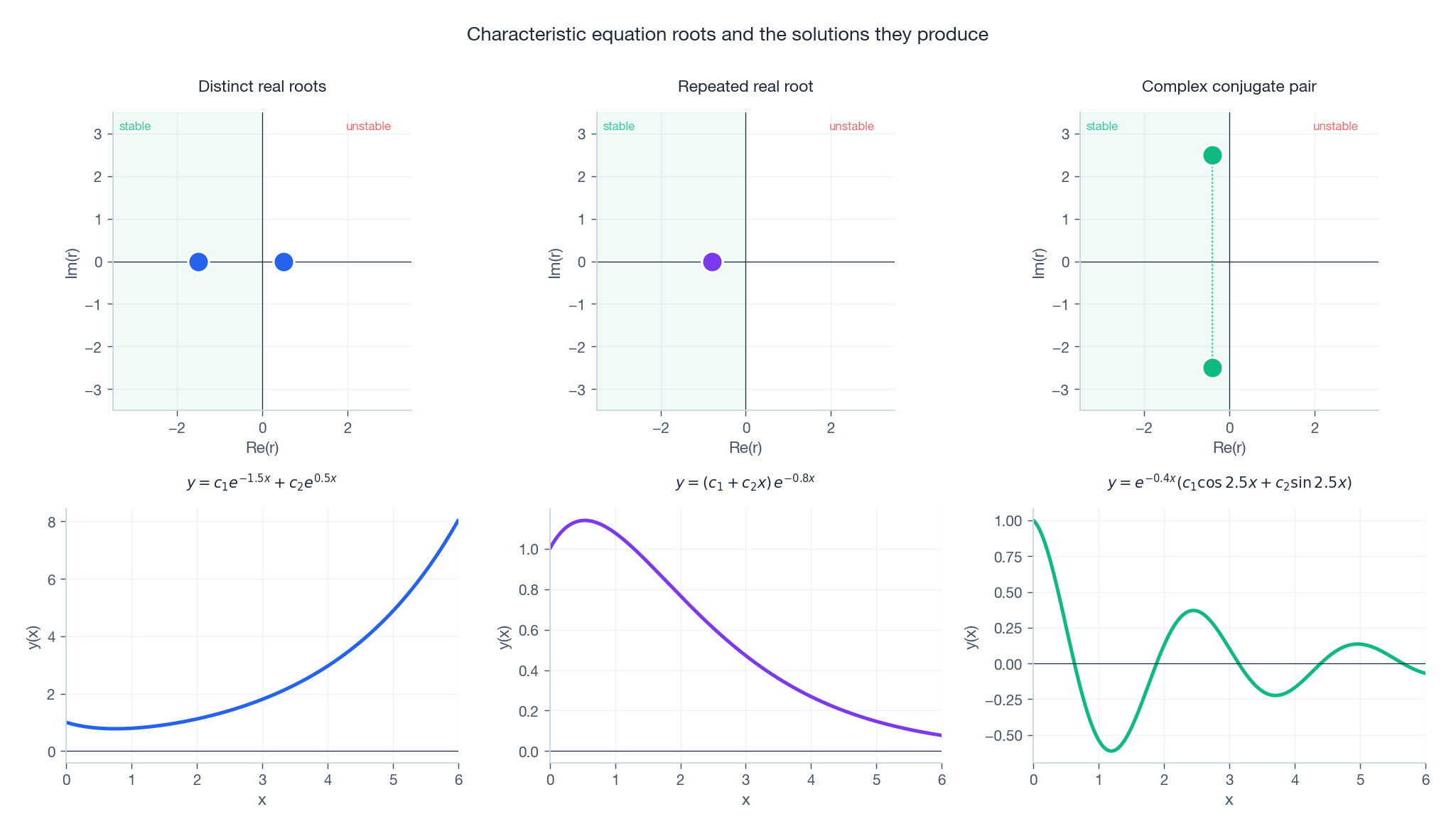

The three cases#

$$y \;=\; c_1 e^{r_1 x} + c_2 e^{r_2 x} + \cdots + c_n e^{r_n x}.$$Example. $y'' - 5y' + 6y = 0$ gives $(r-2)(r-3) = 0$ , so $y = c_1 e^{2x} + c_2 e^{3x}$ .

$$y \;=\; (c_1 + c_2 x + c_3 x^2 + \cdots + c_k x^{k-1})\,e^{rx}.$$The reason: when $P(r)$ has a double root, the operator $L$ factors as $(D - r)^2 \cdot Q(D)$ , and $(D-r)^2[x e^{rx}] = 0$ by direct calculation.

Example. $y'' - 4y' + 4y = 0$ gives $(r-2)^2 = 0$ , so $y = (c_1 + c_2 x)e^{2x}$ .

$$y \;=\; e^{\alpha x}\bigl(c_1 \cos\beta x + c_2 \sin\beta x\bigr).$$Why this works. The complex pair contributes $C_1 e^{(\alpha+i\beta)x} + C_2 e^{(\alpha-i\beta)x}$ , and Euler’s formula $e^{i\beta x} = \cos\beta x + i\sin\beta x$ rearranges this into the real form. Concretely, $\alpha$ controls the exponential envelope and $\beta$ controls the oscillation rate.

Example. $y'' + 2y' + 5y = 0$ gives $r = -1 \pm 2i$ , so $y = e^{-x}(c_1\cos 2x + c_2\sin 2x)$ — a sinusoid trapped inside a shrinking exponential envelope.

Reading roots geometrically#

The location of the roots in the complex plane is the qualitative behaviour:

- Left half-plane ($\Re(r) < 0$ ): solution decays. Stable.

- Right half-plane ($\Re(r) > 0$ ): solution grows. Unstable.

- Imaginary axis ($\Re(r) = 0$ ): pure oscillation, marginal.

- Off-axis ($\Im(r) \neq 0$ ): oscillatory component at frequency $|\Im(r)|$ .

What the characteristic equation is physically. Substituting $y = e^{rx}$ is not a clever guess — it is asking for which exponential rates does the differential operator commute with itself trivially? The operator $D = d/dx$ has each $e^{rx}$ as an eigenfunction with eigenvalue $r$ , so $L[e^{rx}] = P(r)\,e^{rx}$ . Solving $L[y]=0$ is therefore the same as finding the spectrum of $L$ : the rates at which the system can sustain itself without forcing. Each root $r$ is one natural mode. For the spring-mass-damper $\ddot x + 2\zeta\omega_0\dot x + \omega_0^2 x = 0$ , the roots $r = -\zeta\omega_0 \pm i\omega_0\sqrt{1-\zeta^2}$ encode two pieces of physics simultaneously: the real part $-\zeta\omega_0$ is the energy-dissipation rate (how fast friction drains the oscillation), and the imaginary part $\omega_d = \omega_0\sqrt{1-\zeta^2}$ is the actual ringing frequency you would measure on an oscilloscope — slightly slower than $\omega_0$ because damping steals a little from each cycle. The characteristic polynomial is thus a one-line summary of every linear time-invariant system: write down the polynomial, locate its roots, and the dynamics are determined.

Damped oscillation: the trichotomy in pictures#

Its characteristic equation $r^2 + 2\zeta\omega_0 r + \omega_0^2 = 0$ has discriminant $4\omega_0^2(\zeta^2 - 1)$ . The sign of $\zeta^2 - 1$ determines which of the three cases we land in:

| Regime | Condition | Roots | Behaviour |

|---|---|---|---|

| Underdamped | $0 < \zeta < 1$ | $-\zeta\omega_0 \pm i\omega_d$ , $\omega_d = \omega_0\sqrt{1-\zeta^2}$ | Oscillation under a decaying envelope $e^{-\zeta\omega_0 t}$ |

| Critically damped | $\zeta = 1$ | $-\omega_0$ (double) | Fastest non-oscillatory return; $(c_1 + c_2 t)e^{-\omega_0 t}$ |

| Overdamped | $\zeta > 1$ | two real, both negative | Sum of two decaying exponentials, slow return |

Engineering aside. Car suspensions are tuned to $\zeta \approx 0.6\text{--}0.7$ . That is just below critical damping: the car settles fast, but with a small overshoot you do not feel. Pure $\zeta = 1$ would feel “dead” because the response is sluggish near the end; pure $\zeta = 0.1$ would let you bounce for a city block after every pothole. Door closers, by contrast, are deliberately overdamped ($\zeta > 1$ ) to avoid slamming.

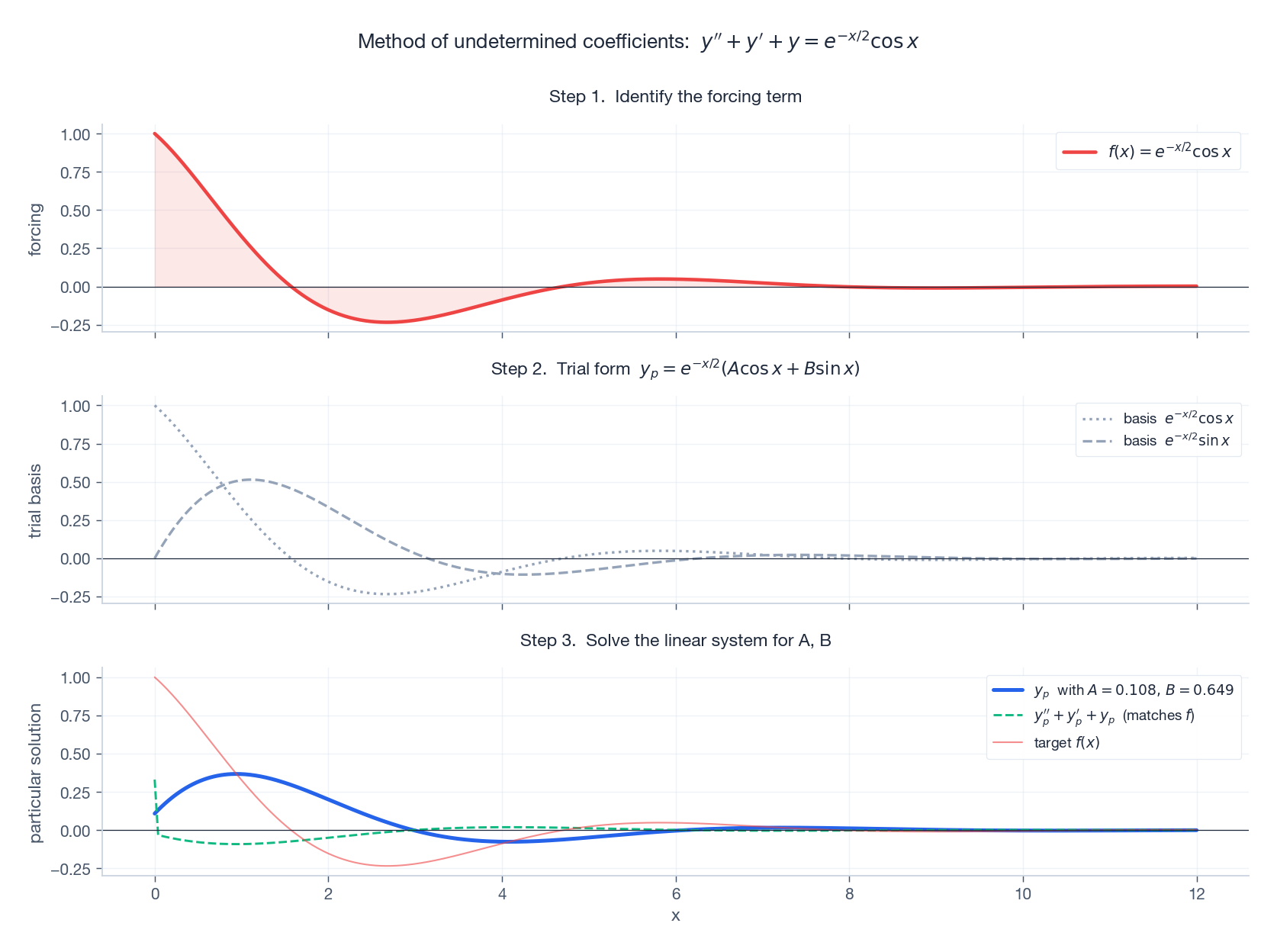

Non-homogeneous: the method of undetermined coefficients#

The recipe#

To solve $L[y] = f(x)$ with constant coefficients:

- Solve $L[y] = 0$ to get $y_h$ .

- Guess a $y_p$ whose form mirrors $f$ .

- Substitute the guess, equate coefficients to determine the unknown constants.

- Combine: $y = y_h + y_p$ .

The guessing table#

| Forcing $f(x)$ | Trial $y_p$ |

|---|---|

| $e^{\alpha x}$ | $A e^{\alpha x}$ |

| $\cos\beta x$ or $\sin\beta x$ | $A\cos\beta x + B\sin\beta x$ |

| polynomial of degree $n$ | polynomial of degree $n$ |

| $e^{\alpha x}\cos\beta x$ | $e^{\alpha x}(A\cos\beta x + B\sin\beta x)$ |

| product / sum of the above | corresponding product / sum |

The resonance correction. If your trial form is itself a homogeneous solution, multiply it by $x$ (or $x^2$ for a double resonance). Otherwise the substitution gives $0 = f$ — a contradiction.

A worked example end-to-end#

Solve $y'' + y' + y = e^{-x/2}\cos x$ .

$$y_h \;=\; e^{-x/2}\bigl(c_1\cos\tfrac{\sqrt 3}{2}x + c_2\sin\tfrac{\sqrt 3}{2}x\bigr).$$ $$y_p \;=\; e^{-x/2}(A\cos x + B\sin x).$$Solving for $A, B$ . Substituting and collecting $\cos$ and $\sin$ terms gives the linear system $\tfrac14 A + \tfrac32 B = 1,\ -\tfrac32 A + \tfrac14 B = 0$ , whose solution is $A = \tfrac{2}{37},\ B = \tfrac{24}{37}$ .

Resonance: when the trial form lies inside $y_h$ #

$$\ddot x + \omega_0^2 x \;=\; F_0\cos\omega_0 t.$$ $$x_p \;=\; \frac{F_0}{2\omega_0}\,t\,\sin\omega_0 t.$$The amplitude grows linearly with time — energy is pumped in every cycle and never removed. Add even a sliver of damping and the unbounded growth becomes a finite peak at $\omega_r = \omega_0\sqrt{1 - 2\zeta^2}$ , with peak amplitude $\propto 1/\zeta$ .

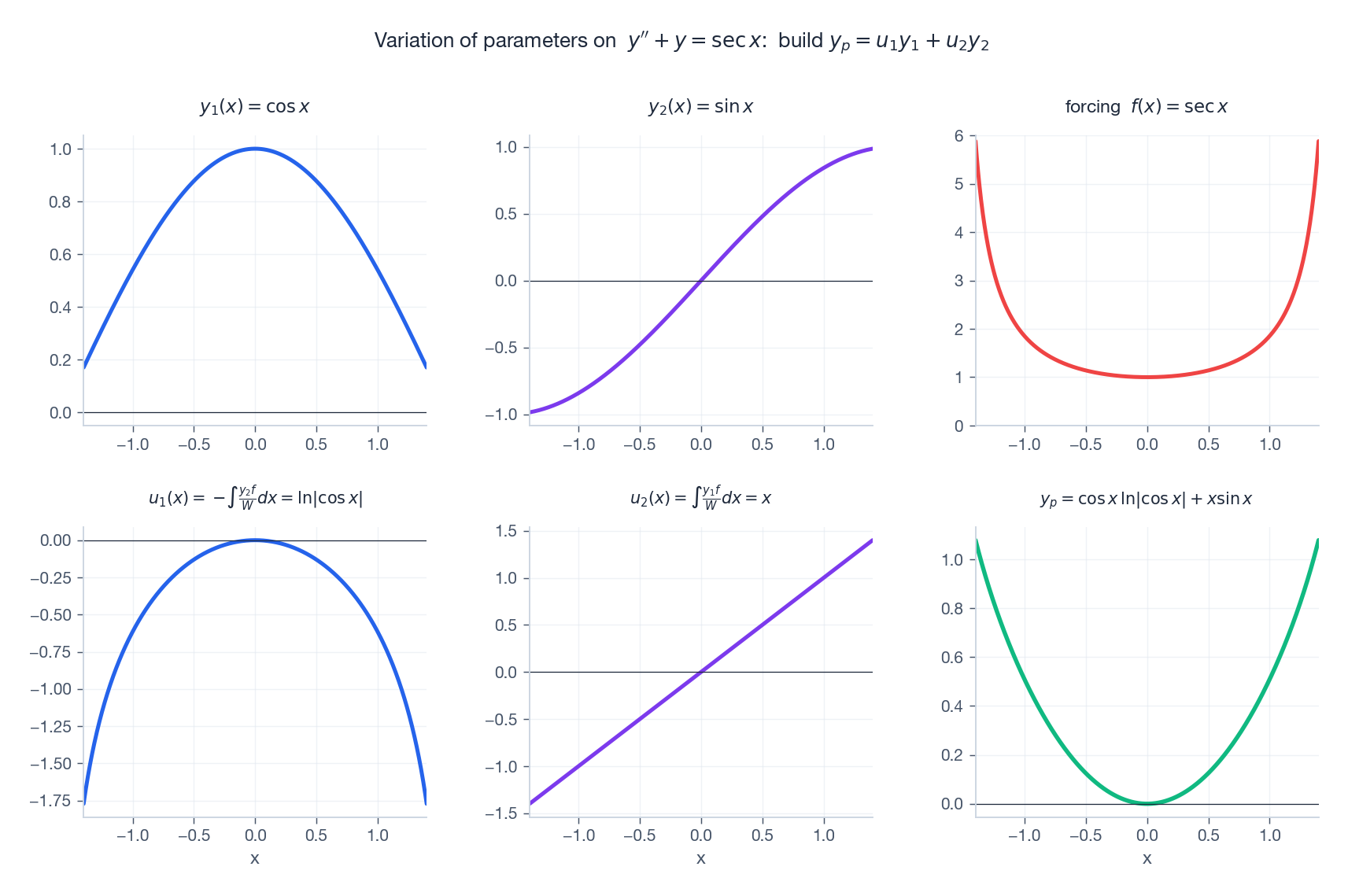

Variation of parameters: when guessing fails#

Why we need it#

The undetermined-coefficients table only contains exponentials, polynomials, sines, cosines, and their products. For $f(x) = \sec x, \tan x, \ln x, e^{x^2}$ , … we need a method that works for any continuous forcing. Variation of parameters is that method.

The formula (second order)#

$$ \begin{pmatrix} y_1 & y_2 \\ y_1' & y_2' \end{pmatrix} \begin{pmatrix} u_1' \\ u_2' \end{pmatrix} = \begin{pmatrix} 0 \\ f \end{pmatrix}, $$ $$\boxed{\;y_p \;=\; -\,y_1 \int \frac{y_2\,f}{W}\,dx \;+\; y_2 \int \frac{y_1\,f}{W}\,dx\;}.$$Worked example: $y'' + y = \sec x$ #

$$ \frac{y_2\,f}{W} = \sin x\,\sec x = \tan x, \qquad \frac{y_1\,f}{W} = \cos x\,\sec x = 1. $$ $$ y_p \;=\; -\cos x\int\tan x\,dx + \sin x\int 1\,dx \;=\; \cos x\,\ln|\cos x| + x\,\sin x. $$

When to use which. Undetermined coefficients is faster when it applies: you guess and solve a small linear system. Variation of parameters always works (with continuous $f$ ) but costs you two integrals — which may themselves be hard. For exponential / trig / polynomial forcings, prefer undetermined coefficients; for everything else, reach for variation of parameters.

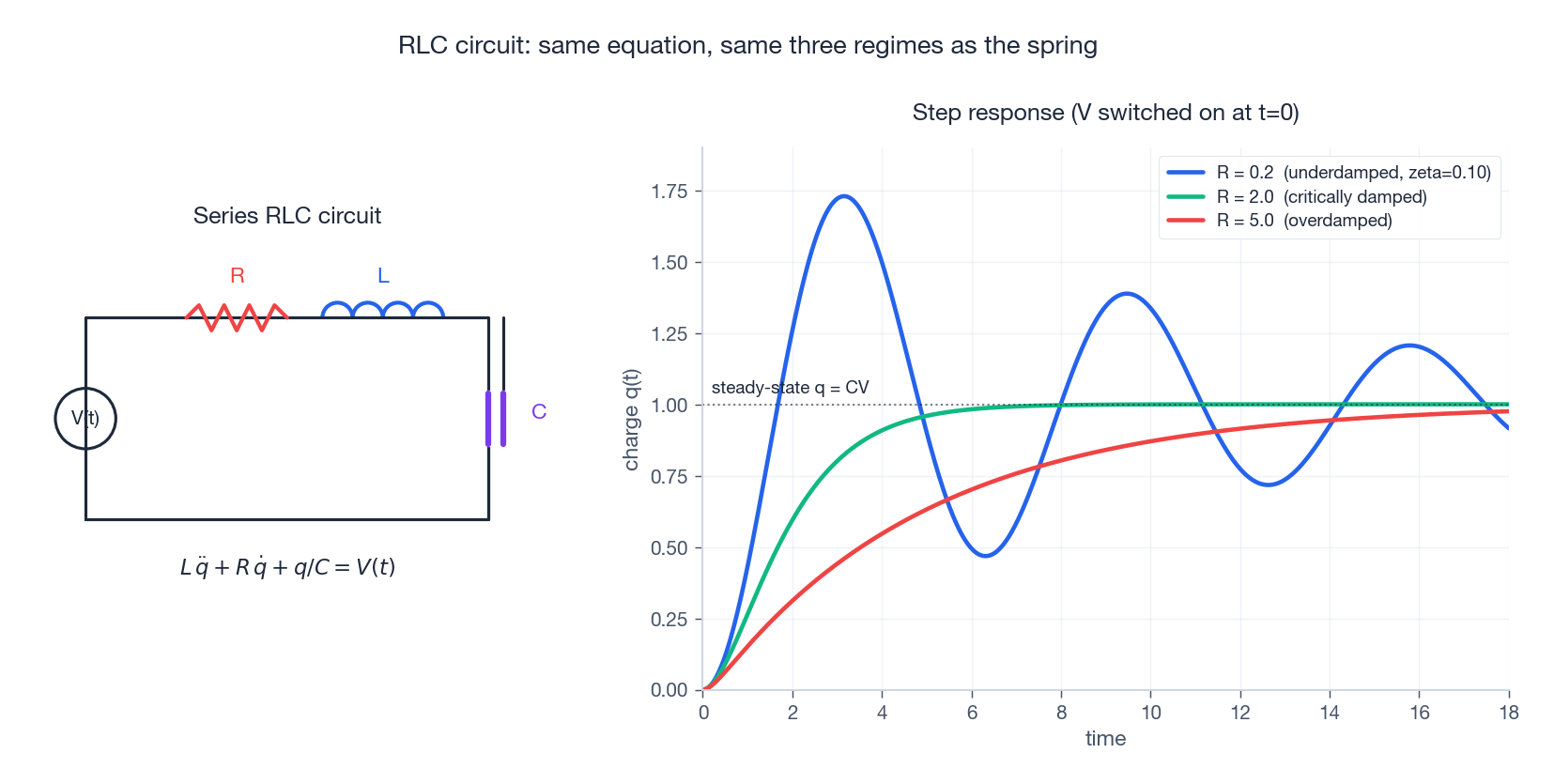

RLC circuits: the same equation in disguise#

$$L\,\ddot q \;+\; R\,\dot q \;+\; \frac{q}{C} \;=\; V(t),$$where $q(t)$ is the charge on the capacitor and $\dot q = i(t)$ is the current. Match coefficients with the mechanical normal form:

| Mechanical | Electrical |

|---|---|

| mass $m$ | inductance $L$ |

| damping $b$ | resistance $R$ |

| stiffness $k$ | inverse capacitance $1/C$ |

| displacement $x$ | charge $q$ |

| forcing $F(t)$ | voltage $V(t)$ |

The standard parameters become $\omega_0 = 1/\sqrt{LC}$ (resonant angular frequency), $\zeta = \tfrac{R}{2}\sqrt{C/L}$ (damping ratio), and $Q = 1/(2\zeta)$ (quality factor; high $Q$ means a sharp resonance peak). The entire under/critical/over trichotomy carries over verbatim.

Reduction to a first-order system#

$$y'' + a\,y' + b\,y = f(x), \qquad y_1 := y,\ y_2 := y',$$ $$ \begin{pmatrix} y_1' \\ y_2' \end{pmatrix} \;=\; \begin{pmatrix} 0 & 1 \\ -b & -a \end{pmatrix} \begin{pmatrix} y_1 \\ y_2 \end{pmatrix} + \begin{pmatrix} 0 \\ f(x) \end{pmatrix}. $$The eigenvalues of the matrix $\bigl(\begin{smallmatrix} 0 & 1 \\ -b & -a \end{smallmatrix}\bigr)$ are exactly the roots of the characteristic polynomial $r^2 + ar + b$ — so everything we said about characteristic roots is just two-dimensional linear algebra in disguise. We will exploit this fully in Chapter 6 .

Summary#

Method selector#

| Equation | First tool to try |

|---|---|

| Constant-coefficient homogeneous | Characteristic equation |

| Constant-coefficient non-homogeneous, “nice” $f$ | Characteristic equation + undetermined coefficients |

| Variable-coefficient or arbitrary continuous $f$ | Find $y_h$ first, then variation of parameters |

| Anything you want to integrate numerically | Reduce to a first-order system |

Roots-to-solutions cheat sheet#

| Root | Contribution to the basis |

|---|---|

| Distinct real $r$ | $e^{rx}$ |

| Real $r$ , multiplicity $k$ | $e^{rx},\ x e^{rx},\ \dots,\ x^{k-1} e^{rx}$ |

| Complex pair $\alpha \pm i\beta$ | $e^{\alpha x}\cos\beta x,\ e^{\alpha x}\sin\beta x$ |

Big ideas to keep#

- A second-order ODE is the natural language of inertia plus restoring force.

- The location of the characteristic roots in $\mathbb{C}$ already tells you the qualitative behaviour.

- The Wronskian is a determinant that detects whether candidate solutions span the full $n$ -dimensional solution space.

- Resonance is what happens when your trial form is a homogeneous solution; multiplying by $x$ both fixes the algebra and explains the unbounded growth physically.

- Mechanical and electrical second-order systems are the same equation; the same intuition transfers without modification.

Exercises#

Warm-up.

- General solution of $y'' - 3y' + 2y = 0$ .

- General solution of $y'' - 4y' + 4y = 0$ (repeated root).

- Solve the IVP $y'' + y = 0,\ y(0) = 1,\ y'(0) = 0$ .

- Solve $y'' + 4y = \sin 2x$ and identify the resonance correction.

Practice.

- Use variation of parameters to find a particular solution of $y'' + y = \tan x$ on $(-\pi/2, \pi/2)$ .

- An RLC circuit has $R = 100\,\Omega,\ L = 0.5\,\text{H},\ C = 10\,\mu\text{F}$ . Compute $\omega_0$ , $\zeta$ , and $Q$ , and decide which regime it is in.

- Show that the steady-state amplitude of $\ddot x + 2\zeta\omega_0\dot x + \omega_0^2 x = F_0\cos\omega t$ is $A(\omega) = F_0/\sqrt{(\omega_0^2 - \omega^2)^2 + (2\zeta\omega_0\omega)^2}$ , and that for $\zeta < 1/\sqrt 2$ the peak occurs at $\omega_r = \omega_0\sqrt{1 - 2\zeta^2}$ .

Programming.

- Reproduce the damping-regimes figure: plot step responses for $\zeta = 0.1, 0.3, 0.7, 1.0, 2.0$

with $\omega_0 = 2\pi$

, using

scipy.integrate.solve_ivp. - Simulate a double pendulum (a non-linear coupled second-order system) and show numerically that two trajectories with initial conditions differing by $10^{-6}$ diverge after a few seconds. This is your first taste of Chapter 9 (chaos).

References#

- Boyce & DiPrima, Elementary Differential Equations and Boundary Value Problems, Wiley (2012). The standard treatment, theorem-by-theorem.

- Kreyszig, Advanced Engineering Mathematics, Wiley (2011). Strong on the engineering applications, especially RLC and vibrations.

- Nagle, Saff & Snider, Fundamentals of Differential Equations, Pearson (2017). Clean exposition of variation of parameters.

- Strogatz, Nonlinear Dynamics and Chaos (1994). Chapters 4 and 5 give the geometric reading of linear stability that anticipates Chapter 7 .

- MIT OpenCourseWare 18.03, Differential Equations — video lectures by Arthur Mattuck.

This is Part 3 of the 18-part series on Ordinary Differential Equations.

- Part 1: Origins and Intuition

- Part 2: First-Order Methods

- Part 3: Higher-Order Linear Theory (current)

- Part 4: The Laplace Transform

- Part 5: Power Series and Special Functions

- Part 6: Linear Systems and the Matrix Exponential

- Part 7: Stability Theory

- Part 8: Nonlinear Systems and Phase Portraits

- Part 9: Chaos Theory and the Lorenz System

- Part 10: Bifurcation Theory

- Part 11: Numerical Methods

- Part 12: Boundary Value Problems

- Part 13: Introduction to PDEs

- Part 14: Epidemic Models

- Part 15: Population Dynamics

- Part 16: Fundamentals of Control Theory

- Part 17: Physics and Engineering Applications

- Part 18: Frontiers and Series Finale

ODE Foundations 18 parts

- 01 Ordinary Differential Equations (1): Origins and Intuition

- 02 Ordinary Differential Equations (2): First-Order Methods

- 03 Ordinary Differential Equations (3): Higher-Order Linear Theory you are here

- 04 Ordinary Differential Equations (4): The Laplace Transform

- 05 Ordinary Differential Equations (5): Power Series and Special Functions

- 06 Ordinary Differential Equations (6): Linear Systems and the Matrix Exponential

- 07 Ordinary Differential Equations (7): Stability Theory

- 08 Ordinary Differential Equations (8): Nonlinear Systems and Phase Portraits

- 09 Ordinary Differential Equations (9): Chaos Theory and the Lorenz System

- 10 Ordinary Differential Equations (10): Bifurcation Theory

- 11 Ordinary Differential Equations (11): Numerical Methods

- 12 Ordinary Differential Equations (12): Boundary Value Problems

- 13 Ordinary Differential Equations (13): Introduction to Partial Differential Equations

- 14 Ordinary Differential Equations (14): Epidemic Models and Epidemiology

- 15 Ordinary Differential Equations (15): Population Dynamics

- 16 Ordinary Differential Equations (16): Fundamentals of Control Theory

- 17 Ordinary Differential Equations (17): Physics and Engineering Applications

- 18 Ordinary Differential Equations (18): Frontiers and Series Finale