Ordinary Differential Equations (6): Linear Systems and the Matrix Exponential

When multiple variables interact, you need systems of ODEs. Learn the matrix exponential, eigenvalue-based solutions, phase portrait classification, and applications to coupled oscillators and RLC circuits.

One equation describes one quantity. The world is rarely that obliging. Predator and prey populations push each other up and down. Currents and voltages in an RLC network oscillate together. Chemical species in a reaction network feed into one another. The moment two unknowns share an equation, you have a system, and a single $y'=ay$ is no longer enough.

The miracle of the linear case is this: the scalar formula $y(t)=e^{at}y_0$ generalizes verbatim once you learn what $e^{At}$ means for a matrix $A$ . Linear algebra and ODEs fuse into one object — the matrix exponential — and its eigenstructure tells you everything about the long-term behavior, the geometry of the flow, and the physics of normal modes and beats.

What You Will Learn#

- Writing ODE systems in matrix form $\mathbf{x}'=A\mathbf{x}$ and why this is more than notation

- The matrix exponential $e^{At}$ : its definition, its properties, and three ways to compute it

- The eigenvalue method, complex eigenvalues, and what to do when eigenvectors run out

- The full classification of 2D phase portraits and the trace–determinant stability map

- Non-homogeneous systems and Duhamel’s formula

- Coupled oscillators: normal modes, beats, and energy transfer

Prerequisites#

- Linear algebra: eigenvalues, eigenvectors, change of basis

- Chapter 3 : second-order linear ODEs (every such equation becomes a 2D system)

From Two Coupled Equations to One Vector Equation#

$$x' = 2x - y, \qquad y' = x + 0.5\,y.$$ $$\mathbf{x}' = A\mathbf{x}, \qquad A = \begin{pmatrix} 2 & -1 \\ 1 & 0.5 \end{pmatrix}.$$This is not just notation. It is a change of viewpoint: a single trajectory $\mathbf{x}(t)$ in the plane replaces two coupled time series. Geometry takes over from algebra.

$$\mathbf{x}(t) = e^{At}\,\mathbf{x}_0,$$but this is only meaningful once we say what the exponential of a matrix is. That is the central object of the chapter.

The Matrix Exponential#

Definition by power series#

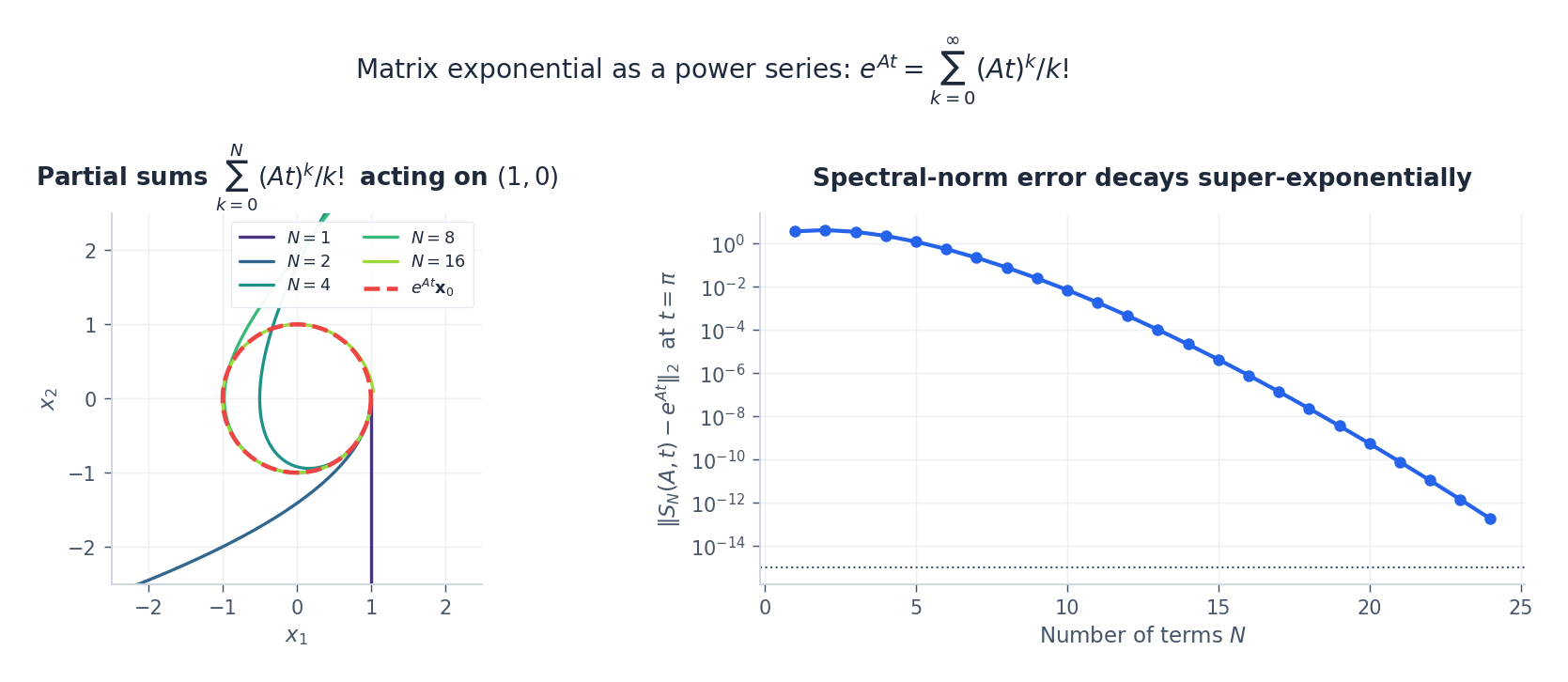

$$e^{At} \;=\; I + At + \frac{(At)^2}{2!} + \frac{(At)^3}{3!} + \cdots \;=\; \sum_{k=0}^{\infty} \frac{(At)^k}{k!}.$$This series converges in operator norm for every square matrix $A$ and every $t\in\mathbb{R}$ , because $\|(At)^k/k!\| \le (\|A\|t)^k/k!$ and the scalar series converges.

The three properties you actually use#

Once the series is in hand, three facts do almost all the work:

| Property | Why it matters |

|---|---|

| $e^{A\cdot 0} = I$ | Initial condition is satisfied automatically |

| $\dfrac{d}{dt}e^{At} = Ae^{At}$ | This is exactly what makes $\mathbf{x}(t)=e^{At}\mathbf{x}_0$ solve $\mathbf{x}'=A\mathbf{x}$ |

| $e^{At}e^{As}=e^{A(t+s)}$ | The flow is a one-parameter group; in particular $(e^{At})^{-1}=e^{-At}$ |

A subtle warning: $e^{A+B} = e^A e^B$ holds only when $AB=BA$ . This is the matrix version of the fact that $\sin(x+y)\ne \sin x+\sin y$ — non-commutativity has consequences.

Three ways to compute it in practice#

- Power series, truncated. Cheap conceptually, terrible numerically when $\|At\|$ is large.

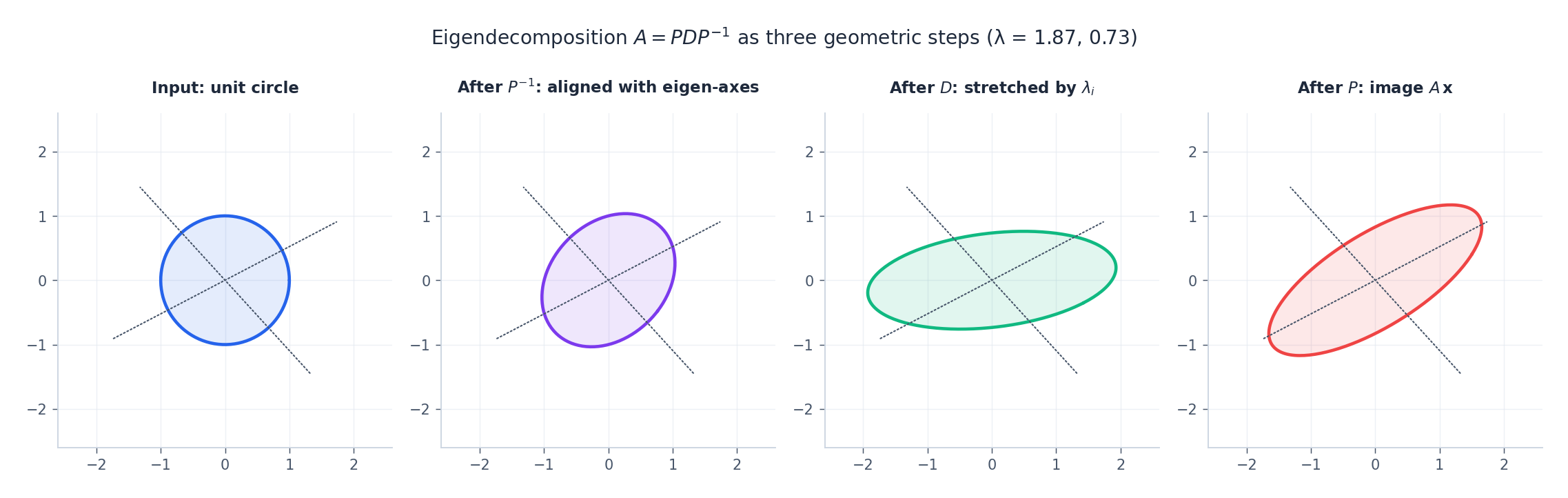

- Eigendecomposition. If $A$ is diagonalizable as $A=PDP^{-1}$ with $D=\mathrm{diag}(\lambda_i)$ , then

This is the structural formula every theoretical argument leans on.

3. Padé with scaling and squaring. The industrial method — used by scipy.linalg.expm, MATLAB’s expm, etc. Compute $e^{At/2^s}$

from a Padé rational approximant, then square $s$

times. Robust for large $\|At\|$

.

In code:

| |

The Eigenvalue Method#

The eigendecomposition formula has a clean re-statement that avoids matrix exponentials altogether.

Theorem. If $A$ has eigenpairs $(\lambda_i,\mathbf{v}_i)$ with linearly independent $\mathbf{v}_i$ , the general solution of $\mathbf{x}'=A\mathbf{x}$ is $\mathbf{x}(t) = c_1 e^{\lambda_1 t}\mathbf{v}_1 + c_2 e^{\lambda_2 t}\mathbf{v}_2 + \cdots + c_n e^{\lambda_n t}\mathbf{v}_n.$

The proof is a one-liner: for each eigenpair, $\frac{d}{dt}\bigl(e^{\lambda t}\mathbf{v}\bigr)=\lambda e^{\lambda t}\mathbf{v}=A\bigl(e^{\lambda t}\mathbf{v}\bigr)$ . Linearity finishes the job.

Geometry: eigenvectors are the natural axes#

The decomposition $A=PDP^{-1}$ has a simple geometric reading. Apply $A$ to a vector by:

- $P^{-1}$ : re-express the vector in the eigenbasis;

- $D$ : stretch along each eigen-axis by the corresponding $\lambda_i$ ;

- $P$ : re-express the stretched vector in the original basis.

The flow $e^{At}$ does the same thing but with $e^{\lambda_i t}$ stretches.

Complex eigenvalues give rotations#

$$\mathbf{x}_1(t) = e^{\alpha t}(\mathbf{a}\cos\beta t - \mathbf{b}\sin\beta t), \qquad \mathbf{x}_2(t) = e^{\alpha t}(\mathbf{a}\sin\beta t + \mathbf{b}\cos\beta t).$$Read this geometrically: $\beta$ is the angular frequency of rotation in the $(\mathbf a,\mathbf b)$ -plane, and $\alpha$ controls whether the orbit spirals outward ($\alpha>0$ ), inward ($\alpha<0$ ), or stays on a closed curve ($\alpha=0$ ).

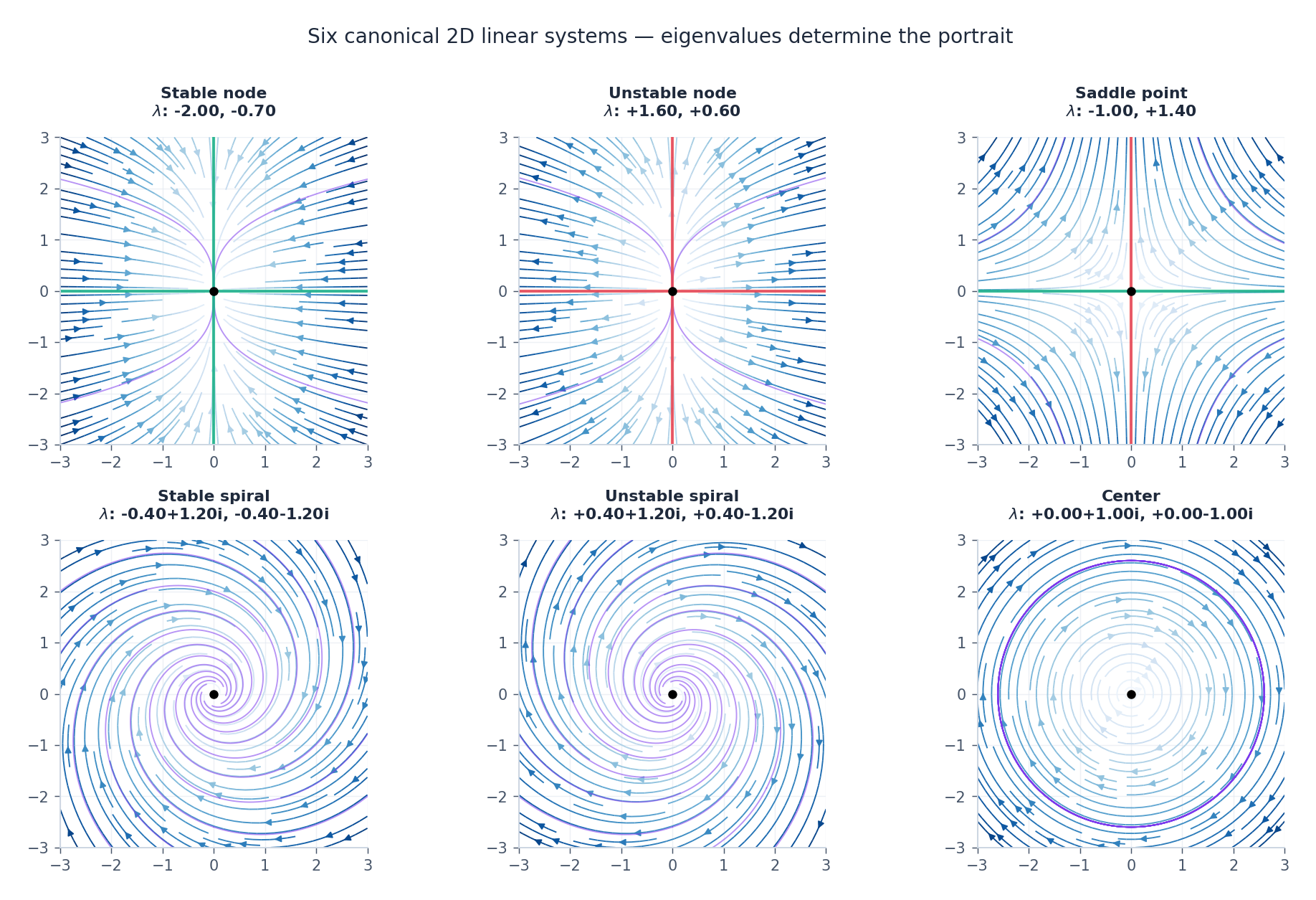

Phase Portraits in 2D#

For $\mathbf{x}'=A\mathbf{x}$ on the plane, the eigenvalues $\lambda_1,\lambda_2$ determine everything about the local geometry near the origin. The taxonomy is short and worth memorizing.

| Eigenvalues | Portrait | Stability |

|---|---|---|

| $\lambda_1<\lambda_2<0$ (real) | Stable node — all orbits enter the origin | Asymptotically stable |

| $0<\lambda_1<\lambda_2$ (real) | Unstable node — all orbits flee | Unstable |

| $\lambda_1<0<\lambda_2$ (real) | Saddle — two stable, two unstable directions | Unstable |

| $\alpha\pm\beta i$ , $\alpha<0$ | Stable spiral | Asymptotically stable |

| $\alpha\pm\beta i$ , $\alpha>0$ | Unstable spiral | Unstable |

| $\pm\beta i$ (purely imaginary) | Center — closed orbits | Lyapunov stable, not asymptotic |

| $\lambda_1=\lambda_2$ , two eigenvectors | Star node | Sign of $\lambda$ |

| $\lambda_1=\lambda_2$ , one eigenvector | Degenerate node | Sign of $\lambda$ |

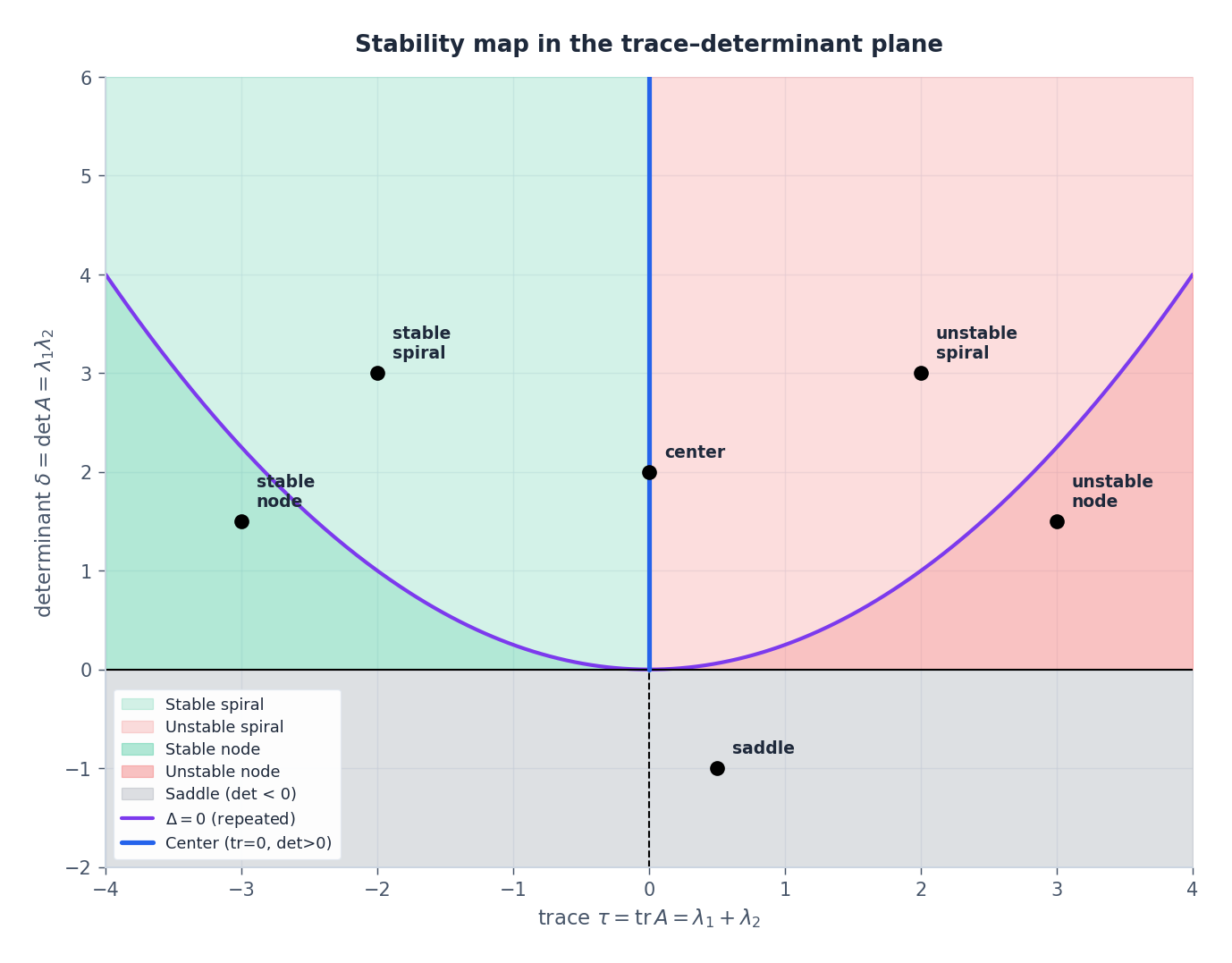

The trace–determinant trick#

$$\lambda^2 - \tau\lambda + \delta = 0, \qquad \tau = \mathrm{tr}\,A = \lambda_1+\lambda_2, \quad \delta = \det A = \lambda_1\lambda_2,$$so the discriminant is $\Delta = \tau^2 - 4\delta$ . The position of $(\tau,\delta)$ in the plane already classifies the equilibrium:

- $\delta < 0$ → real eigenvalues of opposite sign → saddle.

- $\delta > 0,\ \Delta > 0$ → real same-sign eigenvalues → node (sign of $\tau$ gives stability).

- $\delta > 0,\ \Delta < 0$ → complex eigenvalues → spiral (sign of $\tau$ gives stability).

- $\delta > 0,\ \tau = 0$ → purely imaginary → center.

- The parabola $\Delta = 0$ is the locus of repeated eigenvalues.

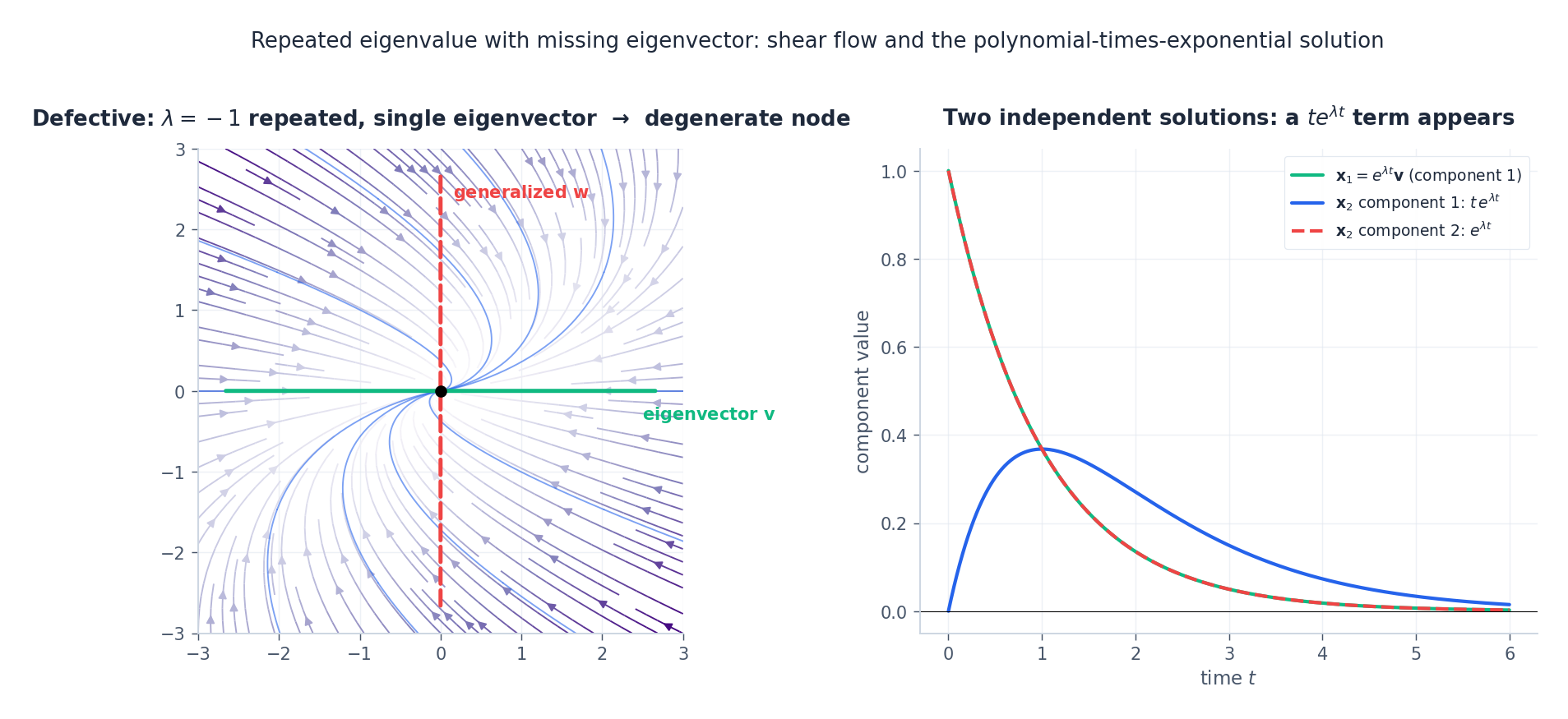

Repeated Eigenvalues and Generalized Eigenvectors#

The classification table above quietly hides a complication. A repeated eigenvalue $\lambda$ with algebraic multiplicity $2$ may have either two linearly independent eigenvectors (rare — this happens iff $A=\lambda I$ on that subspace; the result is a star node) or only one (the generic defective case — a degenerate node).

$$(A - \lambda I)\,\mathbf{w} = \mathbf{v}$$ $$\mathbf{x}_2(t) \;=\; e^{\lambda t}\bigl(t\,\mathbf{v} + \mathbf{w}\bigr)$$is a second, linearly independent solution. The polynomial-times-exponential growth of the $t$ factor is the algebraic fingerprint of degeneracy.

This is exactly the Jordan-block phenomenon: $A$ acts like $\lambda I + N$ where $N$ is nilpotent, and $e^{(\lambda I + N)t} = e^{\lambda t}(I + tN + \tfrac12 t^2 N^2 + \cdots)$ . The series terminates because $N$ is nilpotent, leaving a polynomial in $t$ .

Non-Homogeneous Systems: Duhamel’s Formula#

$$\mathbf{x}' = A\mathbf{x} + \mathbf{g}(t), \qquad \mathbf{x}(0)=\mathbf{x}_0.$$ $$\boxed{\;\mathbf{x}(t) \;=\; e^{At}\mathbf{x}_0 \;+\; \int_0^t e^{A(t-\tau)}\,\mathbf{g}(\tau)\,d\tau.\;}$$This is Duhamel’s formula. Read the integrand $e^{A(t-\tau)}\mathbf{g}(\tau)$ this way: at time $\tau$ the forcing kicks the system by $\mathbf{g}(\tau)d\tau$ , and that kick then propagates freely under the flow $e^{A(t-\tau)}$ for the remaining time. The full state is the superposition of all such delayed responses — a continuous-time impulse response.

$$\mathbf{x}(t) = e^{At}\mathbf{x}_0 + \int_0^t e^{A(t-\tau)} B\,\mathbf u(\tau)\,d\tau,$$and we are one short step from controllability and the matrix $\bigl[B,\,AB,\,A^2B,\dots\bigr]$ .

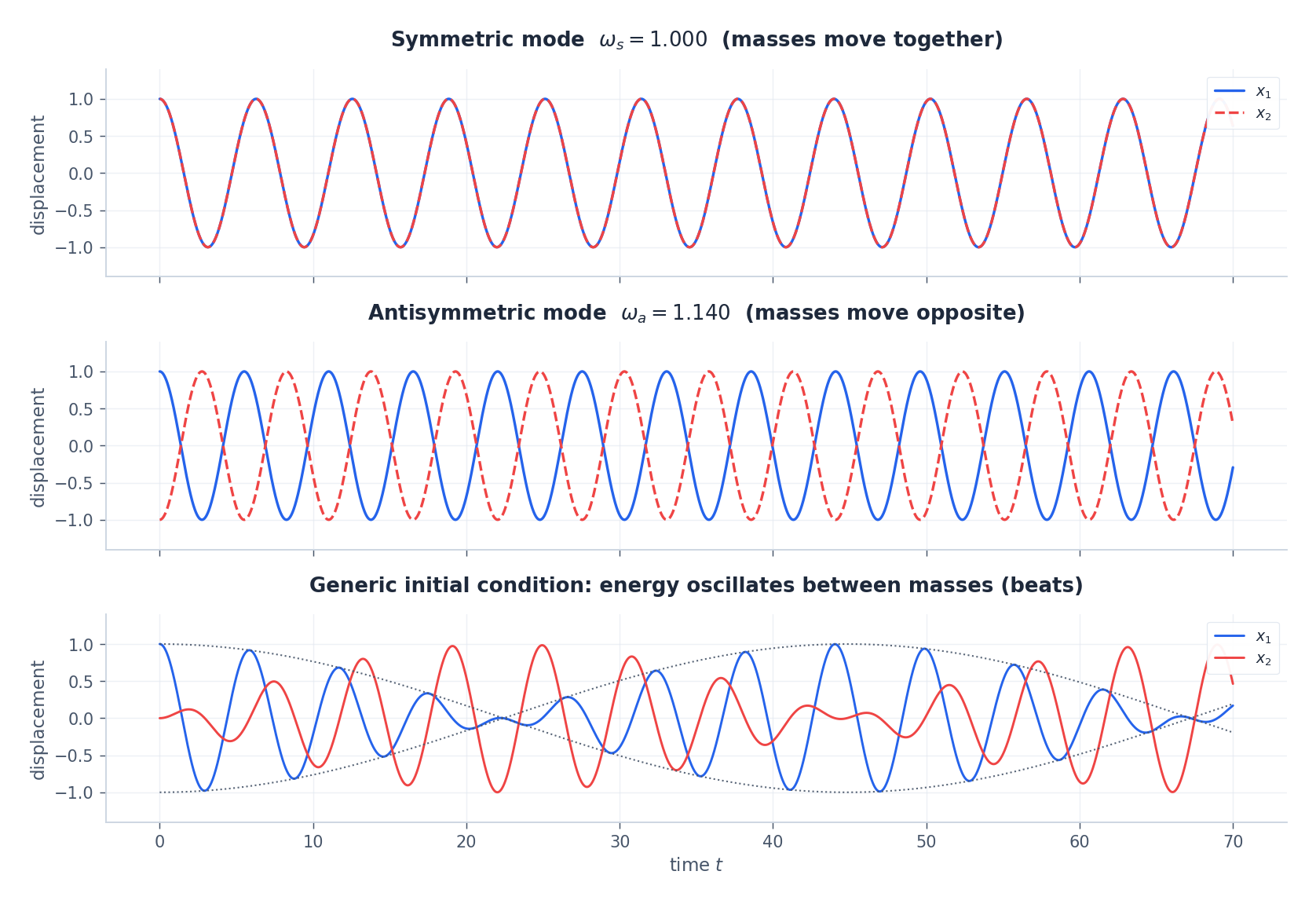

Application: Coupled Oscillators, Normal Modes, and Beats#

Two equal masses on a line, each tied to a wall by a spring of stiffness $k$ and to each other by a coupling spring of stiffness $\kappa$ :

| |

These are the normal modes: an in-phase mode at angular frequency $\omega_s=\sqrt{k}$ (the coupling spring never stretches) and an out-of-phase mode at $\omega_a=\sqrt{k+2\kappa}$ (the coupling spring is doing extra work). Every motion is a superposition of the two.

The drama happens when the modes are nearly degenerate ($\kappa \ll k$ ): displacing only mass 1 excites both modes with equal amplitude, and their slow relative dephasing causes energy to slosh entirely from mass 1 into mass 2 and back. These are beats — the audible rise and fall when two slightly mistuned tuning forks sound together.

This is the same mathematics as Rabi oscillations between two quantum states, the swap of energy in coupled LC circuits, and the synchronization of weakly coupled pendulum clocks.

Stability: The Eigenvalue Criterion#

For the linear flow $\mathbf{x}'=A\mathbf{x}$ , the long-time behavior is decided entirely by the spectrum of $A$ :

Theorem.

- All $\mathrm{Re}(\lambda) < 0\ \Longrightarrow\ e^{At}\to 0$ , and the origin is asymptotically stable.

- Any $\mathrm{Re}(\lambda) > 0\ \Longrightarrow$ origin is unstable.

- The borderline $\mathrm{Re}(\lambda) = 0$ requires a closer look (centers, Jordan blocks).

The liouville formula sharpens this: $\det \Phi(t) = \det \Phi(0)\,\exp\bigl(\int_0^t \mathrm{tr}\,A\,d\tau\bigr)$ . So $\mathrm{tr}\,A < 0$ means phase-space volumes contract (a dissipative system), $\mathrm{tr}\,A = 0$ means volumes are preserved (the Hamiltonian case), and $\mathrm{tr}\,A > 0$ means they expand.

Chapter 7 will lift this entire theory to nonlinear systems via linearization at fixed points — the Hartman–Grobman theorem says that, away from the borderline cases, the local picture of a nonlinear system is the picture of its Jacobian.

Summary#

| Concept | Key Point |

|---|---|

| Vector form $\mathbf{x}'=A\mathbf{x}$ | Solution is $e^{At}\mathbf{x}_0$ |

| Matrix exponential | Power series; computed via eigendecomposition or Padé scaling-and-squaring |

| Eigenvalue method | $\sum c_k e^{\lambda_k t}\mathbf{v}_k$ — linear combinations of eigenmodes |

| Complex eigenvalues | Real solutions are $e^{\alpha t}\bigl(\cos\beta t,\ \sin\beta t\bigr)$ -style — spirals |

| Repeated, defective | Generalized eigenvector adds a $te^{\lambda t}$ solution |

| Phase portraits | Eigenvalues $\to$ node / spiral / saddle / center; trace-det map summarizes |

| Non-homogeneous | Duhamel: $\mathbf{x}=e^{At}\mathbf{x}_0+\int_0^t e^{A(t-\tau)}\mathbf{g}(\tau)d\tau$ |

| Stability | All $\mathrm{Re}(\lambda)<0\ \Leftrightarrow$ asymptotically stable |

Exercises#

Basic

- Compute $e^{At}$ for $A=\bigl(\begin{smallmatrix}0&1\\-1&0\end{smallmatrix}\bigr)$ from the power series and by eigendecomposition. Verify they agree.

- Solve $\mathbf{x}'=\bigl(\begin{smallmatrix}1&2\\0&3\end{smallmatrix}\bigr)\mathbf{x}$ with $\mathbf{x}(0)=(1,1)^\top$ .

- Classify the origin for (a) $A=\bigl(\begin{smallmatrix}-1&2\\-2&-1\end{smallmatrix}\bigr)$ and (b) $A=\bigl(\begin{smallmatrix}1&0\\0&-2\end{smallmatrix}\bigr)$ .

Advanced

- Prove $\det e^A = e^{\mathrm{tr}\,A}$ . Hint: upper-triangularize $A$ over $\mathbb C$ (Schur decomposition).

- Find the normal-mode frequencies of three equal masses in a line, each connected to its neighbour and to the walls by springs of stiffness $k$ . Identify the symmetric, antisymmetric, and “breathing” modes.

- Give a $2\times 2$ counterexample to $e^{A+B}=e^A e^B$ when $AB\ne BA$ .

Programming

- Write a phase-portrait plotter that, given any $2\times 2$ matrix, automatically labels the equilibrium type using the trace–determinant test.

- Simulate the coupled-oscillator system for varying coupling $\kappa$ and plot the beat period $T_{\text{beat}} = 2\pi/(\omega_a - \omega_s)$ as a function of $\kappa$ . Compare against the small-$\kappa$ approximation.

References#

- Hirsch, Smale, & Devaney, Differential Equations, Dynamical Systems, and an Introduction to Chaos, Academic Press (2012)

- Strang, Linear Algebra and Learning from Data, Wellesley-Cambridge (2019)

- Moler & Van Loan, “Nineteen Dubious Ways to Compute the Exponential of a Matrix,” SIAM Review 45(1) (2003)

- Perko, Differential Equations and Dynamical Systems, Springer (2001)

This is Part 6 of the 18-part series on Ordinary Differential Equations.

- Part 1: Origins and Intuition

- Part 2: First-Order Methods

- Part 3: Higher-Order Linear Theory

- Part 4: The Laplace Transform

- Part 5: Power Series and Special Functions

- Part 6: Linear Systems and the Matrix Exponential (current)

- Part 7: Stability Theory

- Part 8: Nonlinear Systems and Phase Portraits

- Part 9: Chaos Theory and the Lorenz System

- Part 10: Bifurcation Theory

- Part 11: Numerical Methods

- Part 12: Boundary Value Problems

- Part 13: Introduction to PDEs

- Part 14: Epidemic Models

- Part 15: Population Dynamics

- Part 16: Fundamentals of Control Theory

- Part 17: Physics and Engineering Applications

- Part 18: Frontiers and Series Finale

ODE Foundations 18 parts

- 01 Ordinary Differential Equations (1): Origins and Intuition

- 02 Ordinary Differential Equations (2): First-Order Methods

- 03 Ordinary Differential Equations (3): Higher-Order Linear Theory

- 04 Ordinary Differential Equations (4): The Laplace Transform

- 05 Ordinary Differential Equations (5): Power Series and Special Functions

- 06 Ordinary Differential Equations (6): Linear Systems and the Matrix Exponential you are here

- 07 Ordinary Differential Equations (7): Stability Theory

- 08 Ordinary Differential Equations (8): Nonlinear Systems and Phase Portraits

- 09 Ordinary Differential Equations (9): Chaos Theory and the Lorenz System

- 10 Ordinary Differential Equations (10): Bifurcation Theory

- 11 Ordinary Differential Equations (11): Numerical Methods

- 12 Ordinary Differential Equations (12): Boundary Value Problems

- 13 Ordinary Differential Equations (13): Introduction to Partial Differential Equations

- 14 Ordinary Differential Equations (14): Epidemic Models and Epidemiology

- 15 Ordinary Differential Equations (15): Population Dynamics

- 16 Ordinary Differential Equations (16): Fundamentals of Control Theory

- 17 Ordinary Differential Equations (17): Physics and Engineering Applications

- 18 Ordinary Differential Equations (18): Frontiers and Series Finale