Ordinary Differential Equations (18): Frontiers and Series Finale

The series finale. Survey four research frontiers reshaping how we model dynamics -- Neural ODEs, delay equations, stochastic differential equations, and fractional calculus -- then take stock of the entire 18-chapter journey with a method-selection flowchart, the deep ODE-ML connection, and a roadmap for what to study next.

The journey ends here. Eighteen chapters ago we picked up a falling apple. Today we’re going to finish in the same vein in which we began — by treating ODEs as the universal language of change — but standing on a much taller mountain.

This chapter does three things. First, it surveys four active research frontiers that are reshaping how we model dynamical systems: Neural ODEs, delay equations, stochastic differential equations, and fractional calculus. Second, it reviews the entire series with a problem-solving flowchart and a chapter-by-chapter map. Third, it draws explicit connections from the classical theory you have just mastered to modern machine learning — the place where ODEs are most alive in 2025.

I’ll keep this chapter readable rather than encyclopaedic. Each frontier gets the intuition and why-it-matters; the references give you the way in.

What You Will Learn

- Neural ODEs: connecting deep learning with continuous dynamics

- Delay differential equations: systems that remember their past

- Stochastic differential equations: noise as a first-class citizen

- Fractional derivatives and anomalous diffusion

- The deeper ODE-ML connection: PINNs, diffusion models, optimal transport

- An 18-chapter concept map and method-selection flowchart

- A study roadmap for what comes after

Prerequisites

This chapter draws on the entire series. Familiarity with Chapters 1-17 will maximize understanding — but if you have made it this far, you are ready.

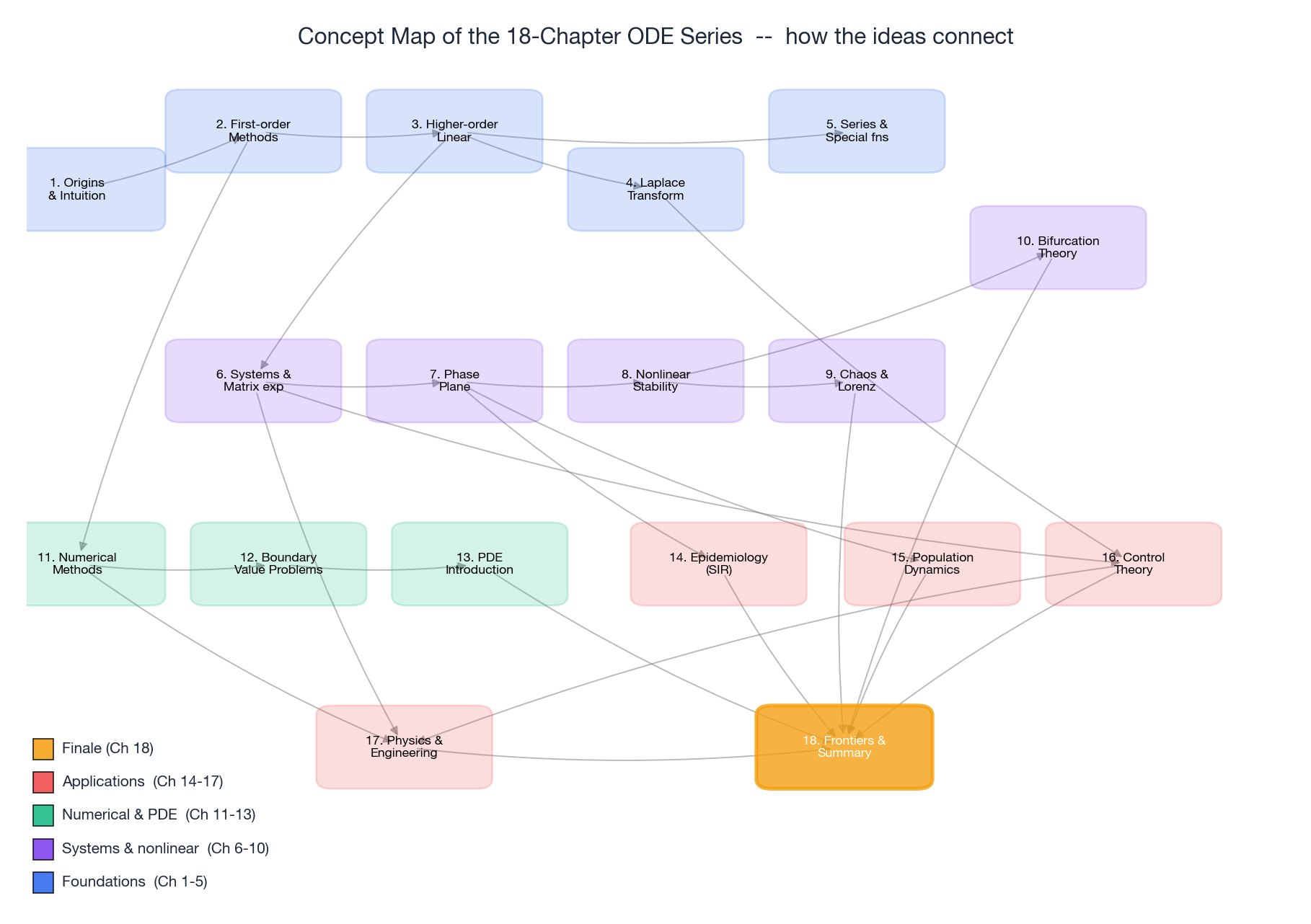

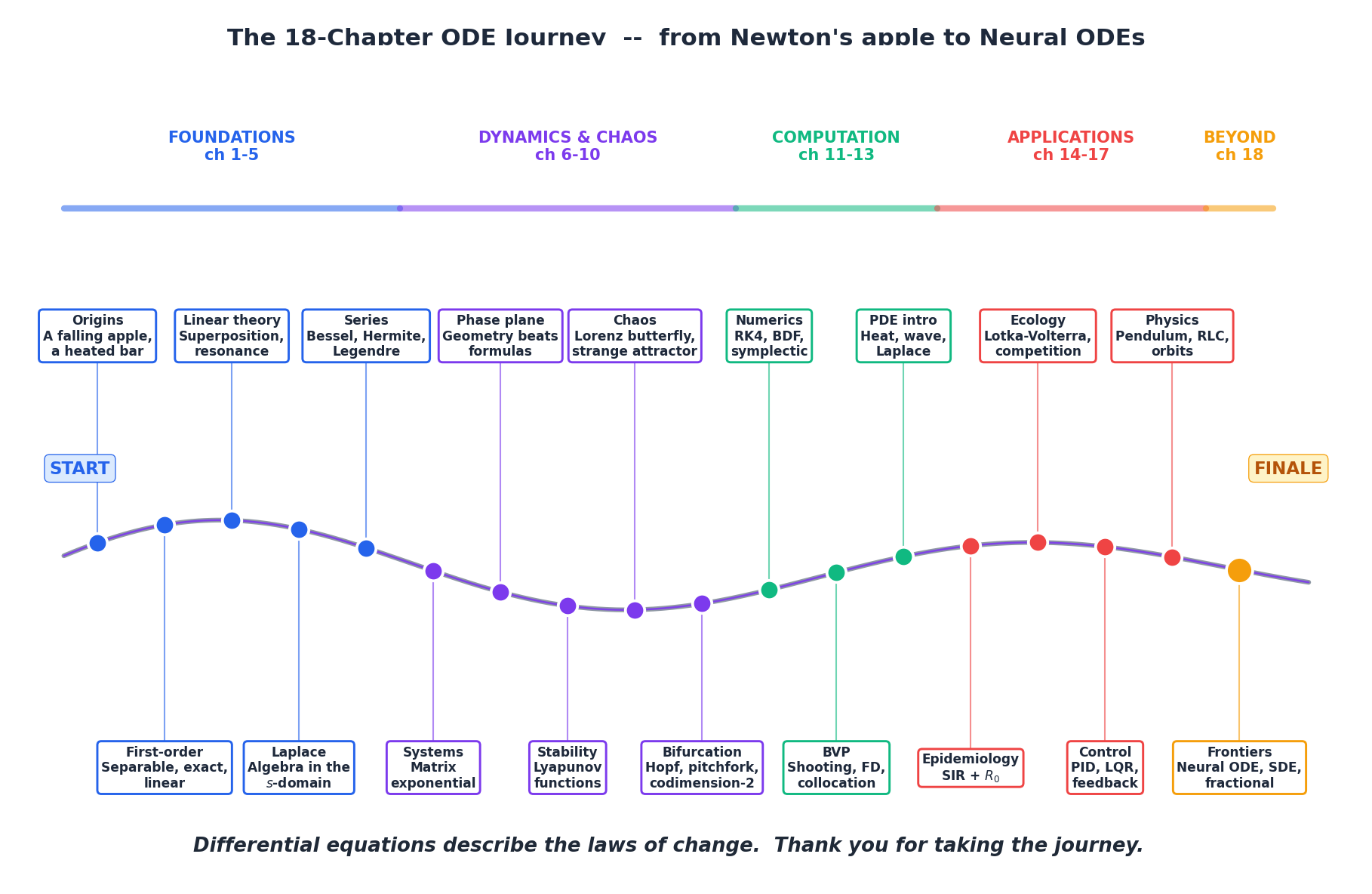

The Whole Course in One Diagram

Before we step out of the classical world, let us see what we’ve built.

Read it as a journey, not a hierarchy:

- Foundations (1-5) — single equations, then linear theory, then transforms and series.

- Dynamics & nonlinear (6-10) — coupled systems, phase planes, stability, chaos, bifurcation.

- Computation (11-13) — numerical methods, BVPs, the bridge to PDEs.

- Applications (14-17) — epidemiology, ecology, control, physics & engineering.

- Frontiers (18) — where today’s research lives.

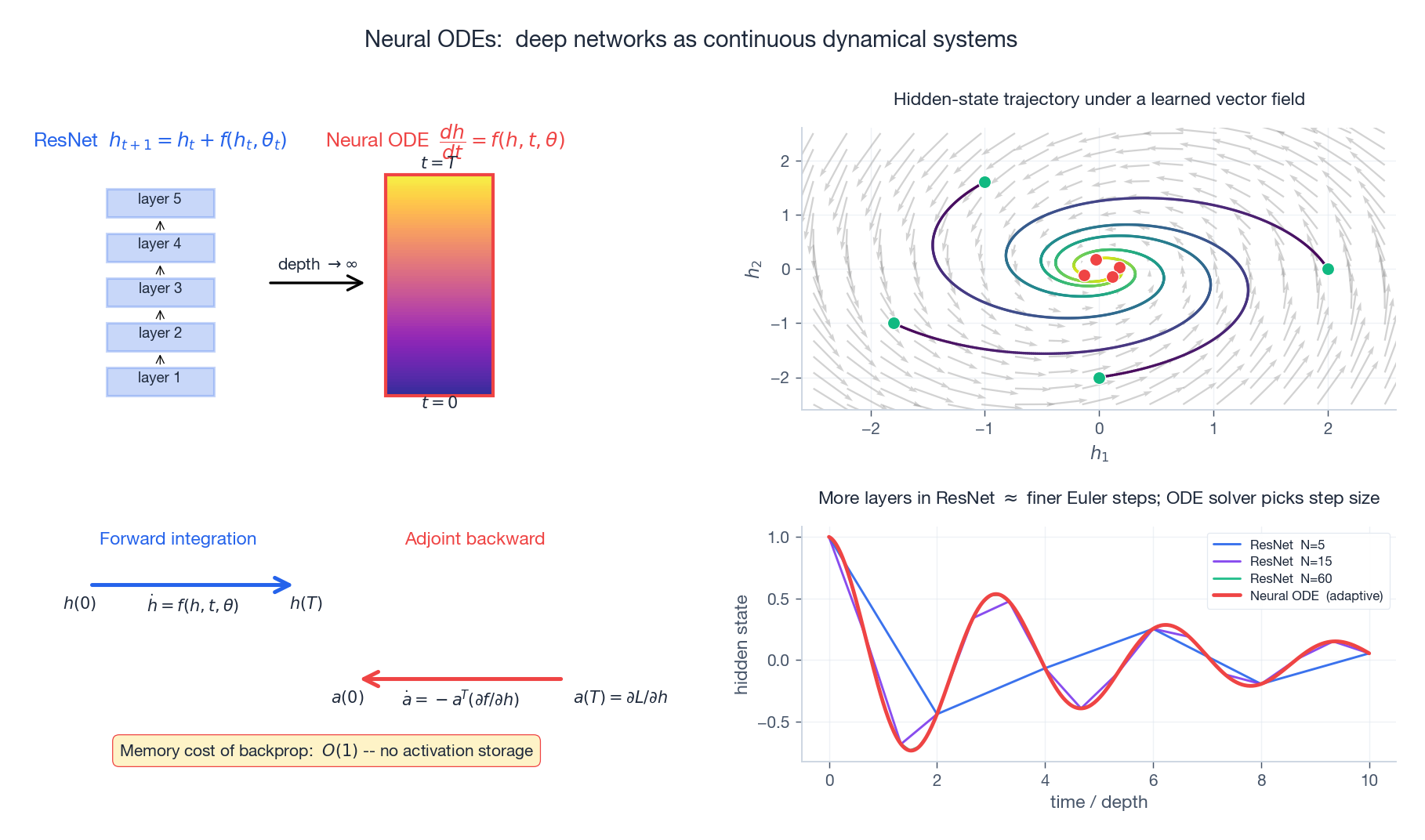

Neural ODEs: depth becomes time

In 2018 a single NeurIPS paper, “Neural Ordinary Differential Equations” by Chen, Rubanova, Bettencourt and Duvenaud, made deep-learning practitioners pick up ODE textbooks. The trick is so clean it is almost unfair.

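A residual network (ResNet) updates its hidden state layer by layer:

$$h_{t+1} = h_t + f(h_t,\; \theta_t).$$

This is one forward-Euler step of size 1. Let the step size shrink to zero, treat the layer index $t$ as continuous time, and share the parameters across layers, and the stack becomes

$$\frac{d h(t)}{dt} = f\!\bigl(h(t),\; t,\; \theta\bigr).$$

That is a learnable ODE. The forward pass is now an ODE solve; an adaptive solver decides how many function evaluations (in effect, how many layers) each input needs; and the adjoint method (the same Pontryagin-style costate equations you would meet in optimal control, Chapter 16) computes gradients by solving a second ODE backwards in time, so the memory cost of backpropagation is $O(1)$ in depth.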


Neural ODEs naturally handle irregularly sampled time series (medical records, sensor logs) and gave rise to continuous normalising flows for density modelling. They are also, philosophically, the cleanest example we have of machine learning rediscovering the calculus tradition — the integrator is no longer a tool, it is the model.
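The passage from the residual update $h_{t+1} = h_t + f(h_t, \theta_t)$ to the ODE can be made concrete in a few lines. This is a minimal NumPy/SciPy sketch under loudly stated assumptions: the residual branch $f(h) = \tanh(Wh + b)$ is a hypothetical toy with random weights shared by every layer (not a trained network), and an $L$-layer network uses step $1/L$ instead of the unit step. As $L$ grows, the network's output approaches the solution of $dh/dt = f(h)$ at $t = 1$.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Hypothetical toy residual branch f(h) = tanh(W h + b); the same weights are used in every layer.
rng = np.random.default_rng(0)
W = rng.normal(scale=0.5, size=(4, 4))
b = rng.normal(scale=0.1, size=4)

def f(h):
    return np.tanh(W @ h + b)

def resnet_forward(h0, n_layers, T=1.0):
    """Weight-shared residual network h <- h + (T/L) f(h), i.e. forward Euler with L steps."""
    h = h0.copy()
    dt = T / n_layers
    for _ in range(n_layers):
        h = h + dt * f(h)
    return h

h0 = np.ones(4)
# Reference: the continuous-depth limit dh/dt = f(h), solved to high accuracy at t = 1.
ref = solve_ivp(lambda t, h: f(h), (0.0, 1.0), h0, rtol=1e-10, atol=1e-12).y[:, -1]

errors = {}
for L in (1, 10, 100, 1000):
    errors[L] = np.linalg.norm(resnet_forward(h0, L) - ref)
    print(f"{L:5d} layers: distance to ODE solution = {errors[L]:.2e}")
```

The distance shrinks roughly in proportion to $1/L$, which is the first-order convergence of forward Euler: a very deep weight-shared ResNet is a finely discretised ODE.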

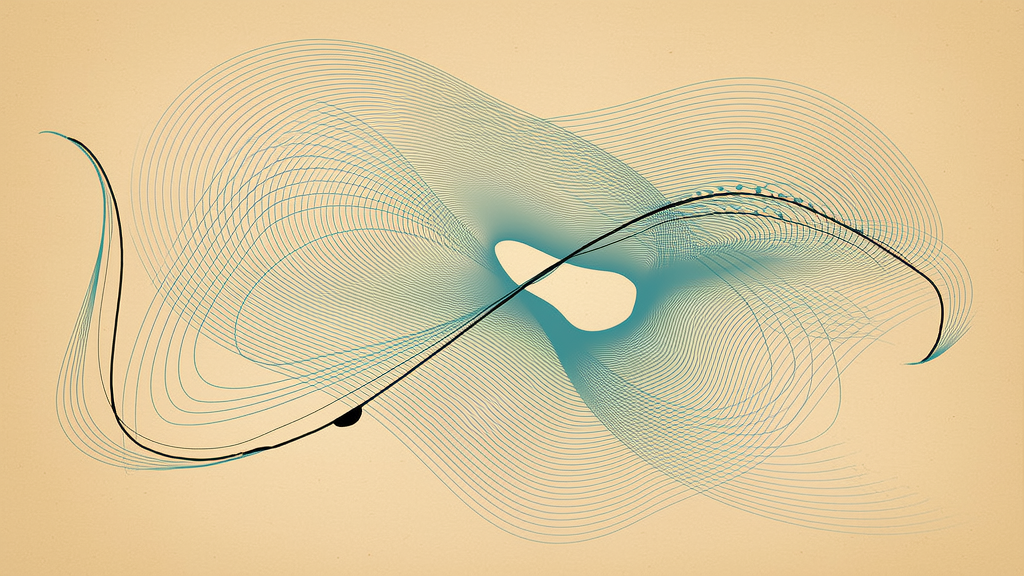

Delay Differential Equations: systems with a memory

Many real systems do not respond to the current state alone; they respond to a delayed state. The delivery van’s response to a price change today depends on the order book of two weeks ago. A red blood cell count today reflects the bone-marrow signal of days past. A laser cavity feeds back light that was emitted picoseconds earlier.

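With a single constant delay $\tau > 0$, the simplest delay differential equation (DDE) reads

$$\dot x(t) = f\bigl(x(t),\; x(t - \tau)\bigr).$$

The state space is now infinite-dimensional: the state at time $t$ is the whole history segment $\{x(s) : s \in [t-\tau, t]\}$, so the initial data is an entire function on $[-\tau, 0]$, not a single number.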

Hutchinson’s equation

Hutchinson's delayed logistic equation is

$$\dot N(t) = r N(t)\,\Bigl(1 - \frac{N(t-\tau)}{K}\Bigr).$$

Without delay ($\tau = 0$) it is the Verhulst logistic equation with its smooth sigmoid solutions. With delay the equilibrium $N = K$ can lose stability: when $r\tau > \pi/2$ it undergoes a Hopf bifurcation (Chapter 10) and sustained oscillations (a limit cycle) emerge.
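Simulating a DDE mostly means keeping a history buffer. The sketch below is a minimal forward-Euler scheme for Hutchinson's equation, assuming a constant history $N(s) = N_0$ on $[-\tau, 0]$ and the illustrative values $r = 1$, $K = 1$, $N_0 = 0.5$ (choices made here, not taken from the text). With $\tau = 1$ ($r\tau = 1 < \pi/2$) the solution settles at $K$; with $\tau = 2$ ($r\tau = 2 > \pi/2$) it keeps oscillating.

```python
import numpy as np

def hutchinson(r, tau, K=1.0, N0=0.5, t_end=300.0, dt=0.01):
    """Forward-Euler solution of dN/dt = r N(t) (1 - N(t - tau) / K),
    with constant history N(s) = N0 for -tau <= s <= 0."""
    lag = int(round(tau / dt))       # the delay expressed in time steps
    n_steps = int(round(t_end / dt))
    N = np.empty(n_steps + 1)
    N[0] = N0
    for k in range(n_steps):
        # Before t = tau the delayed argument still falls inside the initial history.
        N_delayed = N[k - lag] if k >= lag else N0
        N[k + 1] = N[k] + dt * r * N[k] * (1.0 - N_delayed / K)
    t = dt * np.arange(n_steps + 1)
    return t, N

spread = {}
for tau in (1.0, 2.0):
    t, N = hutchinson(r=1.0, tau=tau)
    tail = N[t > 200.0]              # discard the transient
    spread[tau] = tail.max() - tail.min()
    print(f"r*tau = {tau:.1f}: late-time range of N = [{tail.min():.4f}, {tail.max():.4f}]")
```

The only change from an ordinary ODE integrator is the lookup `N[k - lag]`: the right-hand side reads the state $\tau$ time units in the past, which is exactly why the initial data must be a whole function.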


With its standard parameter values and delay $\tau = 17$, the Mackey-Glass equation (originally a model of blood-cell production) produces low-dimensional chaos: a strange attractor born from a single delayed feedback loop. Delays show up in epidemiology (incubation periods), economics (production lead time), and any control loop with a transmission lag.

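Stochastic Differential Equations: when noise has agency

In Itô form, a stochastic differential equation adds a random forcing term to a deterministic drift:

$$dX_t = f(X_t, t)\,dt + g(X_t, t)\,dW_t,$$

where $W_t$ is a standard Wiener process (Brownian motion) and $dW_t$ is the formal Gaussian increment with variance $dt$.

Two canonical examples:

Geometric Brownian motion, the classic model of a stock price, has a closed-form solution:

$$ dS = \mu S\,dt + \sigma S\,dW, \qquad S(t) = S_0\exp\!\Bigl[(\mu - \tfrac12\sigma^2)\,t + \sigma W(t)\Bigr]. $$

The Ornstein-Uhlenbeck process relaxes toward the mean $\mu$ at rate $\theta$ while being kicked by noise:

$$dX = \theta(\mu - X)\,dt + \sigma\,dW.$$

Individual sample paths are random, but the probability density $\rho(x, t)$ of $X_t$ evolves according to the Fokker-Planck equation

$$\partial_t \rho = -\partial_x(f\rho) + \tfrac12\,\partial_x^2(g^2\rho),$$

a deterministic PDE. So the stochastic and deterministic worlds are linked: an SDE for individual trajectories is a PDE for the ensemble.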
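Numerically, the workhorse is the Euler-Maruyama scheme $X_{k+1} = X_k + f(X_k, t_k)\,\Delta t + g(X_k, t_k)\,\Delta W_k$ with $\Delta W_k \sim \mathcal N(0, \Delta t)$. The minimal NumPy sketch below (all parameter values are illustrative assumptions) checks it twice: against the exact geometric Brownian motion solution driven by the same Brownian increments, and against the Fokker-Planck prediction that the Ornstein-Uhlenbeck ensemble settles into a Gaussian with mean $\mu$ and variance $\sigma^2/(2\theta)$.

```python
import numpy as np

def euler_maruyama(f, g, x0, T, n_steps, n_paths, rng):
    """Simulate dX = f(X, t) dt + g(X, t) dW for many independent paths.
    Returns the final states and the total Brownian increment W(T) of each path."""
    dt = T / n_steps
    x = np.full(n_paths, float(x0))
    w = np.zeros(n_paths)
    for k in range(n_steps):
        dW = rng.normal(0.0, np.sqrt(dt), size=n_paths)   # Gaussian increments, variance dt
        x = x + f(x, k * dt) * dt + g(x, k * dt) * dW
        w = w + dW
    return x, w

rng = np.random.default_rng(42)

# Geometric Brownian motion: compare with the exact solution on the same Brownian paths.
S0, mu, sigma, T = 100.0, 0.05, 0.2, 1.0
S_em, W_T = euler_maruyama(lambda x, t: mu * x, lambda x, t: sigma * x,
                           S0, T, n_steps=1000, n_paths=10_000, rng=rng)
S_exact = S0 * np.exp((mu - 0.5 * sigma**2) * T + sigma * W_T)
print("GBM mean |EM - exact|:", np.mean(np.abs(S_em - S_exact)))
print("GBM sample mean:", S_em.mean(), "vs S0*exp(mu*T) =", S0 * np.exp(mu * T))

# Ornstein-Uhlenbeck: the ensemble should match the stationary Fokker-Planck density.
theta, mu_ou, sigma_ou = 2.0, 1.0, 0.5
X, _ = euler_maruyama(lambda x, t: theta * (mu_ou - x),
                      lambda x, t: sigma_ou * np.ones_like(x),
                      0.0, 5.0, n_steps=1000, n_paths=10_000, rng=rng)
print("OU sample mean, var:", X.mean(), X.var(),
      "vs", mu_ou, sigma_ou**2 / (2 * theta))
```

Each trajectory is random, yet the ensemble statistics land on the deterministic prediction: the trajectory/ensemble link described above, seen numerically.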


SDEs are foundational in mathematical finance, statistical physics, and neuroscience. As we will see below, they also sit at the core of modern generative AI.

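Fractional Differential Equations: derivatives of order 0.7

For initial-value problems with $0 < \alpha < 1$, the standard choice is the Caputo derivative, which weights the entire past history of $f'$ with a power-law kernel:

$${}^C\!D^\alpha f(t) \;=\; \frac{1}{\Gamma(1-\alpha)}\int_0^t \frac{f'(s)}{(t-s)^\alpha}\,ds.$$

The simplest fractional ODE is fractional relaxation,

$${}^C\!D^\alpha y(t) = -\lambda\,y(t), \qquad y(0) = 1,$$

whose solution is a Mittag-Leffler function:

$$y(t) = E_\alpha\!\bigl(-\lambda t^\alpha\bigr) = \sum_{k=0}^\infty \frac{(-\lambda t^\alpha)^k}{\Gamma(1 + k\alpha)}.$$

For $\alpha = 1$ this reduces to $e^{-\lambda t}$. For $\alpha < 1$ the early decay is faster than exponential, but the long-time tail is a power law, $y(t) \sim t^{-\alpha}/\bigl(\lambda\,\Gamma(1-\alpha)\bigr)$: the system "remembers" its history.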

That memory makes fractional ODEs the natural language for:

- Viscoelastic materials — creep that is neither Hookean nor Newtonian.

- Anomalous diffusion — where mean-square displacement scales as $\langle x^2\rangle \propto t^\alpha$ with $\alpha \neq 1$ (porous media, biological cells, financial returns at certain scales).

- Power-law relaxation in dielectrics, glasses, and biological tissues.
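Both claims about fractional relaxation can be checked numerically. The sketch below is an illustration under stated assumptions rather than a production solver: it sums the Mittag-Leffler series directly (fine for moderate arguments, but it loses accuracy to cancellation for large $\lambda t^\alpha$) and integrates ${}^C\!D^\alpha y = -\lambda y$ with a first-order implicit Grünwald-Letnikov scheme, one standard discretisation among several. With the illustrative values $\alpha = 0.7$, $\lambda = 1$, the scheme matches the series at $t = 1$, and at $t = 20$ the solution is still a few percent of its initial value, far above $e^{-20}$.

```python
import math
import numpy as np

def mittag_leffler(z, alpha, n_terms=100):
    """Truncated series E_alpha(z) = sum_k z^k / Gamma(1 + alpha k).
    Only trustworthy for moderate |z|: large negative z causes cancellation."""
    return sum(z**k / math.gamma(1.0 + alpha * k) for k in range(n_terms))

def fractional_relaxation(alpha, lam, T, h, y0=1.0):
    """Implicit Grünwald-Letnikov scheme for the Caputo problem
    D^alpha y = -lam * y, y(0) = y0, using
    D^alpha y(t_m) ~ h^(-alpha) * sum_{j=0}^{m} w_j * (y_{m-j} - y0)."""
    n = int(round(T / h))
    w = np.empty(n + 1)              # w_j = (-1)^j * binomial(alpha, j)
    w[0] = 1.0
    for j in range(1, n + 1):
        w[j] = w[j - 1] * (1.0 - (alpha + 1.0) / j)
    c = h ** (-alpha)
    y = np.empty(n + 1)
    y[0] = y0
    for m in range(1, n + 1):
        # Memory term: every earlier value contributes, weighted by w_j.
        history = np.dot(w[1:m + 1], y[m - 1::-1] - y0)
        # Solve c * (y_m - y0 + history) = -lam * y_m for y_m.
        y[m] = c * (y0 - history) / (c + lam)
    return h * np.arange(n + 1), y

alpha, lam = 0.7, 1.0
t, y = fractional_relaxation(alpha, lam, T=20.0, h=0.005)
print("y(1)  scheme:", y[200], " series:", mittag_leffler(-lam * 1.0**alpha, alpha))
print("y(20) scheme:", y[-1], " exp(-20):", math.exp(-20.0))
print("alpha = 1 check:", mittag_leffler(-2.0, 1.0), "vs", math.exp(-2.0))
```

The cost of memory is visible in the code: each step sums over all previous values, so the naive scheme is $O(n^2)$ in the number of steps, unlike the $O(n)$ cost of an ordinary ODE solver.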

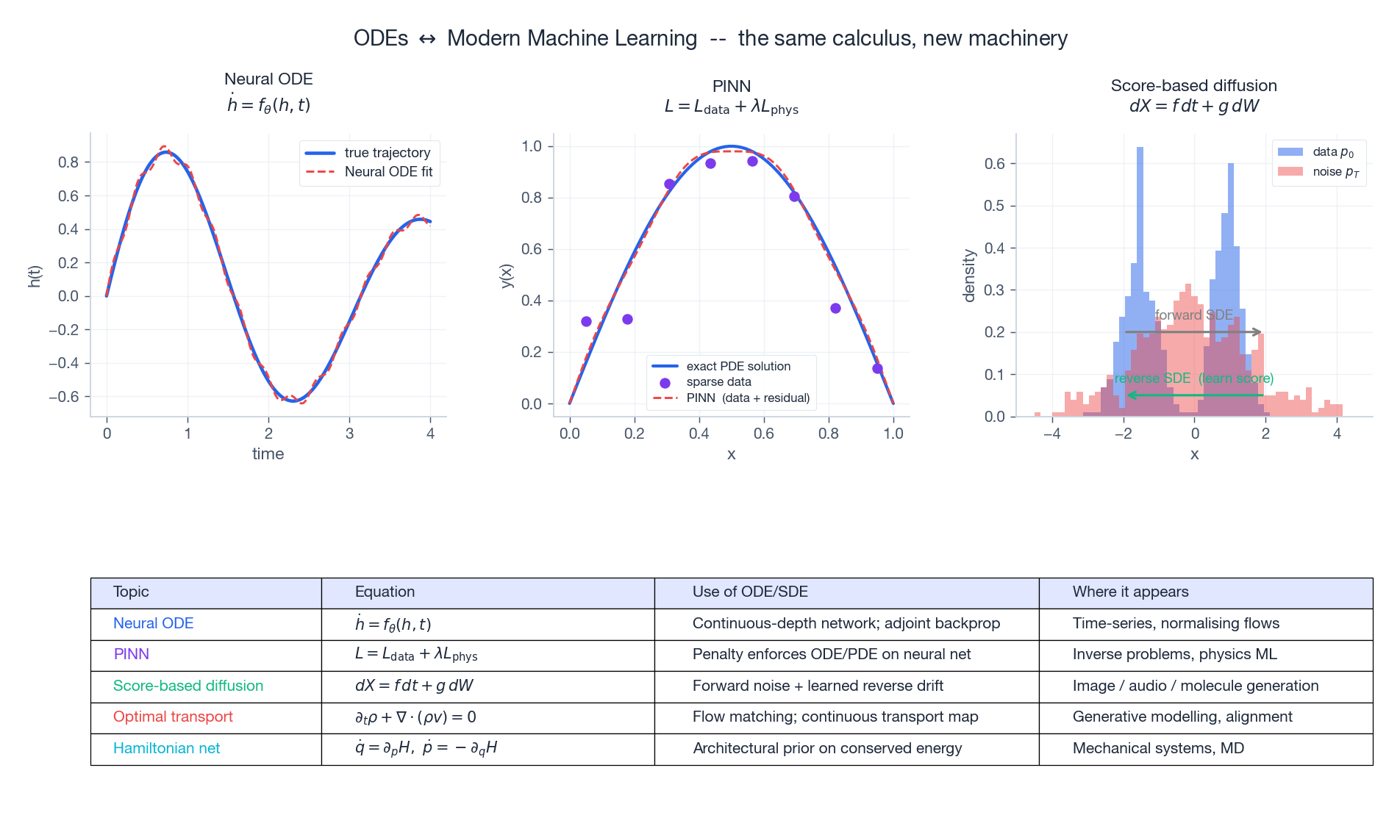

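The ODE-ML Connection: why it is more than a fashion

We have already seen Neural ODEs (continuous-depth networks). Physics-informed neural networks (PINNs) run the connection in the other direction: instead of using an ODE as the model, they train a network to satisfy a known differential equation by adding the equation's residual to the loss. The deeper picture is this: ODEs and SDEs sit at the heart of several of today's most successful generative models.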

Three highlights worth absorbing:

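- Score-based diffusion models (the engine behind Stable Diffusion and its relatives) corrupt data with a forward noising SDE and learn the score $\nabla_x \log p_t(x)$ of the noisy distributions. Generating an image means solving the corresponding reverse-time SDE, whose drift contains the learned score, backwards in time from pure noise.

- Continuous normalising flows transform a base density via an ODE $\dot h = f(h, t)$; along each trajectory the log-density changes by the instantaneous change-of-variables formula $\frac{d}{dt}\log p\bigl(h(t)\bigr) = -\nabla \cdot f$.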

- Optimal transport / flow matching approaches train a vector field that morphs one distribution into another along straight-line trajectories — ODEs as the geometric backbone of generative AI.
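The instantaneous change-of-variables formula can be checked on a one-dimensional toy flow. This SciPy sketch assumes the hypothetical vector field $f(h) = \sin h$ (divergence $\cos h$), integrates $h$ and $\log p$ together as one augmented ODE, which is how continuous normalising flows track densities, and compares the accumulated change in $\log p$ with the classical change of variables $-\log\lvert \partial h(T)/\partial h(0)\rvert$, whose Jacobian is estimated here by central finite differences.

```python
import numpy as np
from scipy.integrate import solve_ivp

def f(h):
    return np.sin(h)             # hypothetical 1-D vector field

def div_f(h):
    return np.cos(h)             # its divergence; in 1-D simply df/dh

def augmented(t, state):
    """Joint dynamics of a sample h and its log-density along the flow."""
    h, _ = state
    return [f(h), -div_f(h)]     # d/dt log p(h(t)) = -div f(h(t))

def flow(h0, T=1.0):
    sol = solve_ivp(augmented, (0.0, T), [h0, 0.0], rtol=1e-10, atol=1e-12)
    return sol.y[0, -1], sol.y[1, -1]   # h(T) and log p(h(T)) - log p(h(0))

h0 = 0.7
hT, delta_logp = flow(h0)

# Classical change of variables: log p_T(h_T) = log p_0(h_0) - log|dh_T/dh_0|.
eps = 1e-4
jacobian = (flow(h0 + eps)[0] - flow(h0 - eps)[0]) / (2 * eps)
print("integrated -div f   :", delta_logp)
print("-log|Jacobian| (FD) :", -np.log(abs(jacobian)))
```

The appeal for generative modelling is that the left-hand route needs only the divergence along one trajectory, never the full Jacobian determinant of the flow map.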

A practical takeaway: a serious ML practitioner in 2025 needs to be comfortable reading $dX = f\,dt + g\,dW$.

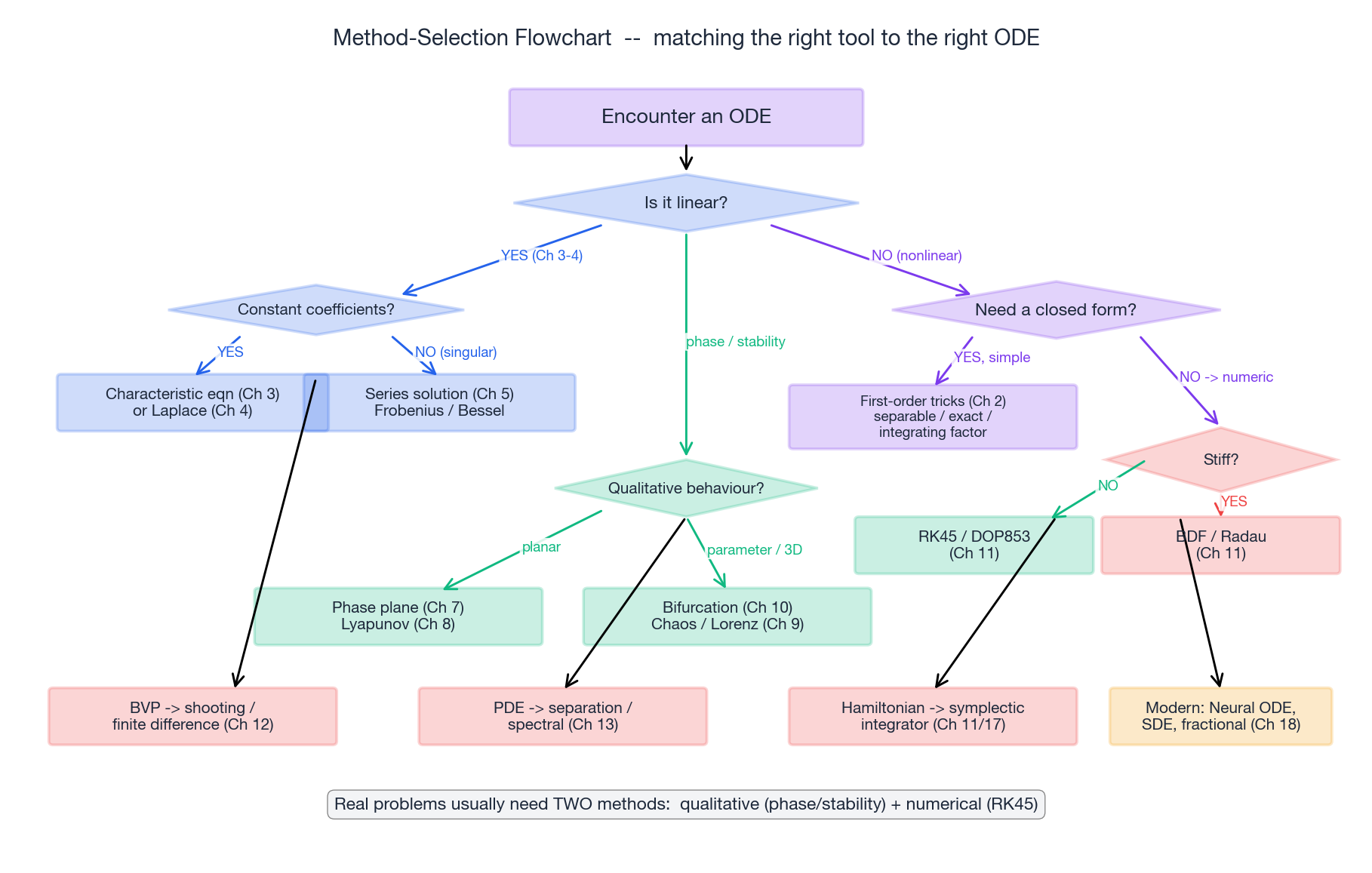

The Method Selection Flowchart

Here is the practical decision tree for any ODE that lands on your desk.

A few concrete tips that the chart cannot show:

- Always check stiffness first. A stiff system run with RK45 will silently take huge numbers of small steps. Use BDF or Radau if you spot rapid transients.

- For Hamiltonian systems, prefer symplectic integrators such as Verlet: their energy error stays bounded over very long times, while the energy under general-purpose RK methods drifts steadily.

- For BVPs, SciPy's `solve_bvp` is excellent. It is a collocation method, which is also what stiff BVPs call for, since simple shooting tends to fail on them.

- For PDEs, discretize in space first (the method of lines), then apply ODE solvers in time.
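The first two tips are easy to see for yourself. This sketch (test problems and step sizes are illustrative choices) first solves the stiff Prothero-Robinson problem $y' = -k(y - \cos t) - \sin t$, whose exact solution is $y = \cos t$, with SciPy's `solve_ivp` using RK45 and BDF, and reports how many right-hand-side evaluations each needs. It then integrates the harmonic oscillator $H = (p^2 + q^2)/2$ for 10,000 steps with two second-order methods at the same step size: velocity Verlet, which is symplectic, and the explicit midpoint rule, a non-symplectic Runge-Kutta method.

```python
import numpy as np
from scipy.integrate import solve_ivp

# Tip 1: stiffness. Prothero-Robinson problem y' = -k (y - cos t) - sin t,
# exact solution y(t) = cos t; the large k = 1000 makes it stiff.
def prothero_robinson(t, y, k=1000.0):
    return -k * (y - np.cos(t)) - np.sin(t)

nfev = {}
for method in ("RK45", "BDF"):
    sol = solve_ivp(prothero_robinson, (0.0, 10.0), [1.0], method=method)
    nfev[method] = sol.nfev
    print(f"{method:>4}: {sol.nfev:6d} RHS evaluations, "
          f"error at t=10: {abs(sol.y[0, -1] - np.cos(10.0)):.1e}")

# Tip 2: symplectic vs non-symplectic, both second order, same step size.
# Harmonic oscillator q' = p, p' = -q with energy H = (p^2 + q^2) / 2.
def velocity_verlet(q, p, dt):
    p_half = p - 0.5 * dt * q                 # half kick (force = -q)
    q_new = q + dt * p_half                   # drift
    return q_new, p_half - 0.5 * dt * q_new   # second half kick

def explicit_midpoint(q, p, dt):
    q_mid, p_mid = q + 0.5 * dt * p, p - 0.5 * dt * q   # half Euler step
    return q + dt * p_mid, p - dt * q_mid               # full step with midpoint slope

def relative_energy_error(step, dt=0.1, n_steps=10_000):
    q, p = 1.0, 0.0                           # initial energy E0 = 0.5
    energy = np.empty(n_steps)
    for i in range(n_steps):
        q, p = step(q, p, dt)
        energy[i] = 0.5 * (p**2 + q**2)
    return np.abs(energy / 0.5 - 1.0)

for step in (velocity_verlet, explicit_midpoint):
    err = relative_energy_error(step)
    print(f"{step.__name__:>17}: max |E/E0 - 1| = {err.max():.3f}, final = {err[-1]:.3f}")
```

Same order, same step, very different long-time behaviour: the midpoint rule multiplies the energy by $1 + h^4/4$ every step, so it grows by roughly 28% over this run, while Verlet's energy error only oscillates at the level of a fraction of a percent.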

The 18-Chapter Map at a Glance

| Chapters | Theme | What you can now do |
|---|---|---|
| 1-2 | First-order equations | Recognise & solve separable / linear / exact / Bernoulli |
| 3-4 | Higher-order linear & Laplace | Closed-form solutions for constant-coefficient LTI systems |
| 5 | Series & special functions | Frobenius near singular points; Bessel / Legendre / Hermite |
| 6-8 | Linear systems, stability & phase portraits | Matrix exponential; Lyapunov functions; classify equilibria |
| 9-10 | Chaos & bifurcation | Lorenz attractor; Hopf / pitchfork |
| 11-13 | Numerical, BVP, PDE | RK4 / BDF / shooting / finite differences |
| 14-15 | Biology applications | SIR + $R_0$, Lotka-Volterra, competition |
| 16-17 | Engineering applications | PID / LQR / pendulum / RLC / Kepler / vibration |
| 18 | Frontiers | Neural ODE, DDE, SDE, fractional, PINN, diffusion |

If you read all of them, you have covered roughly the equivalent of an undergraduate course on ODEs plus a graduate seminar on dynamics, plus the modern interface with ML. Few courses cover this breadth.

The Series Journey

It is worth pausing at each label. Origins gave us the act of writing $F = ma$ as an equation. First-order gave us four canonical tricks that solve a surprising fraction of all problems by hand. Linear theory and Laplace taught us to fold the $n$th-order behaviour of LTI systems into algebra and characteristic polynomials. Series opened the door to special functions when polynomials and exponentials were no longer enough.

Systems generalised the picture into vector form; phase planes gave us geometry as a substitute for closed-form solutions; stability and chaos showed us that beautiful structure persists even when prediction collapses. Bifurcation let us see how qualitative behaviour itself can be a function of parameters.

Numerics taught us the engineering: RK4, BDF, symplectic. BVPs and PDEs widened the horizon to spatial problems. The applications chapters proved the universality of the method by walking through epidemiology, ecology, control, mechanics, electronics, fluids — the same five-step grammar everywhere.

And here, in the finale, the toolset became modern: Neural ODE turned the integrator into a learnable model; SDEs gave noise a starring role; fractional derivatives let us interpolate between integer orders; PINN and diffusion put ODEs into the engine of contemporary AI.

A Closing Word

Differential equations are the laws of change. They describe how a body falls, how a population grows, how a current rings, how a nation converges to herd immunity, how a neural network learns, how the universe expands. Every dynamical claim about the world, formalised, is an equation in this family.

You walked through 18 chapters. You met Newton, Laplace, Lyapunov, Lorenz, Lotka, Volterra, Bode, Riccati, Chen-Rubanova-Bettencourt-Duvenaud. You probably wrote some Python that surprised you with how clean a phase portrait looks. You may have caught yourself, looking at a swinging door or a buffering app, mentally writing down its equation.

If that is so, then the goal is met. Mathematics is not a memorised list of identities; it is a habit of seeing. ODEs train that habit better than almost any other subject because they are the place where rigour meets the world.

Go and use them. Predict, design, control, learn. The journey ends here — and the work begins now.

Thank you for reading.

Where to go from here

Choose a target, not a textbook. Here are five well-defined next steps and the resources that match them.

| Goal | Read next | Why |
|---|---|---|
| Master classical theory | Hirsch, Smale & Devaney, Differential Equations, Dynamical Systems | Cleanest geometric treatment |
| Build numerical chops | Hairer & Wanner, Solving ODEs (vol 1 + 2) | The bible of numerical ODEs |
| Deepen nonlinear dynamics | Strogatz, Nonlinear Dynamics and Chaos | Best-written intuition-first text |
| Move into PDEs | Evans, Partial Differential Equations | Standard graduate reference |
| Bridge to ML | Kidger, On Neural Differential Equations (free PhD thesis) | Modern, code-rich, beautifully clear |

For software: scipy.integrate for Python, DifferentialEquations.jl for Julia (state-of-the-art), diffrax for JAX (autodiff-friendly), torchdiffeq for PyTorch.

References

- Chen, R. T. Q., Rubanova, Y., Bettencourt, J., Duvenaud, D. (2018). “Neural Ordinary Differential Equations.” NeurIPS.

- Kidger, P. (2021). On Neural Differential Equations. PhD thesis, Oxford.

- Kloeden, P. E., Platen, E. (1992). Numerical Solution of Stochastic Differential Equations. Springer.

- Diethelm, K. (2010). The Analysis of Fractional Differential Equations. Springer.

- Hairer, E., Lubich, C., Wanner, G. (2006). Geometric Numerical Integration. Springer.

- Smith, H. (2010). An Introduction to Delay Differential Equations. Springer.

- Song, Y., Ermon, S. (2019). “Generative Modeling by Estimating Gradients of the Data Distribution.” NeurIPS.


This is Part 18 — the final chapter — of the Ordinary Differential Equations series. Thank you for taking the journey.
