OpenClaw QuickStart (7): The Memory System, Without the Magic

MEMORY.md as index, memoryFlush, bge-m3 search, and the v2026.3.7 ContextEngine.

The first six pieces got you to a working OpenClaw with a channel and a skill. This one focuses on the part everyone gets wrong on their first install: memory.

The shape of the workspace

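Open ~/.openclaw/workspace/. The files this piece keeps coming back to are MEMORY.md, projects.md, lessons.md, and the memory/ directory, which holds the daily logs (YYYY-MM-DD.md) and reference files (ref_*.md).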

Two files matter the most. MEMORY.md is the index — the agent reads it on every turn, so it has to be short. lessons.md is where the long tail of “we tried that, it broke because Y” lives. The daily logs are write-heavy and read-rarely; the agent only reaches for them through search.

The mistake I made for two months: dumping everything into MEMORY.md. By the time it hit 200 lines, every turn was paying ~3k tokens just to load the index, and the agent still couldn’t find what it needed. Index ≠ archive.

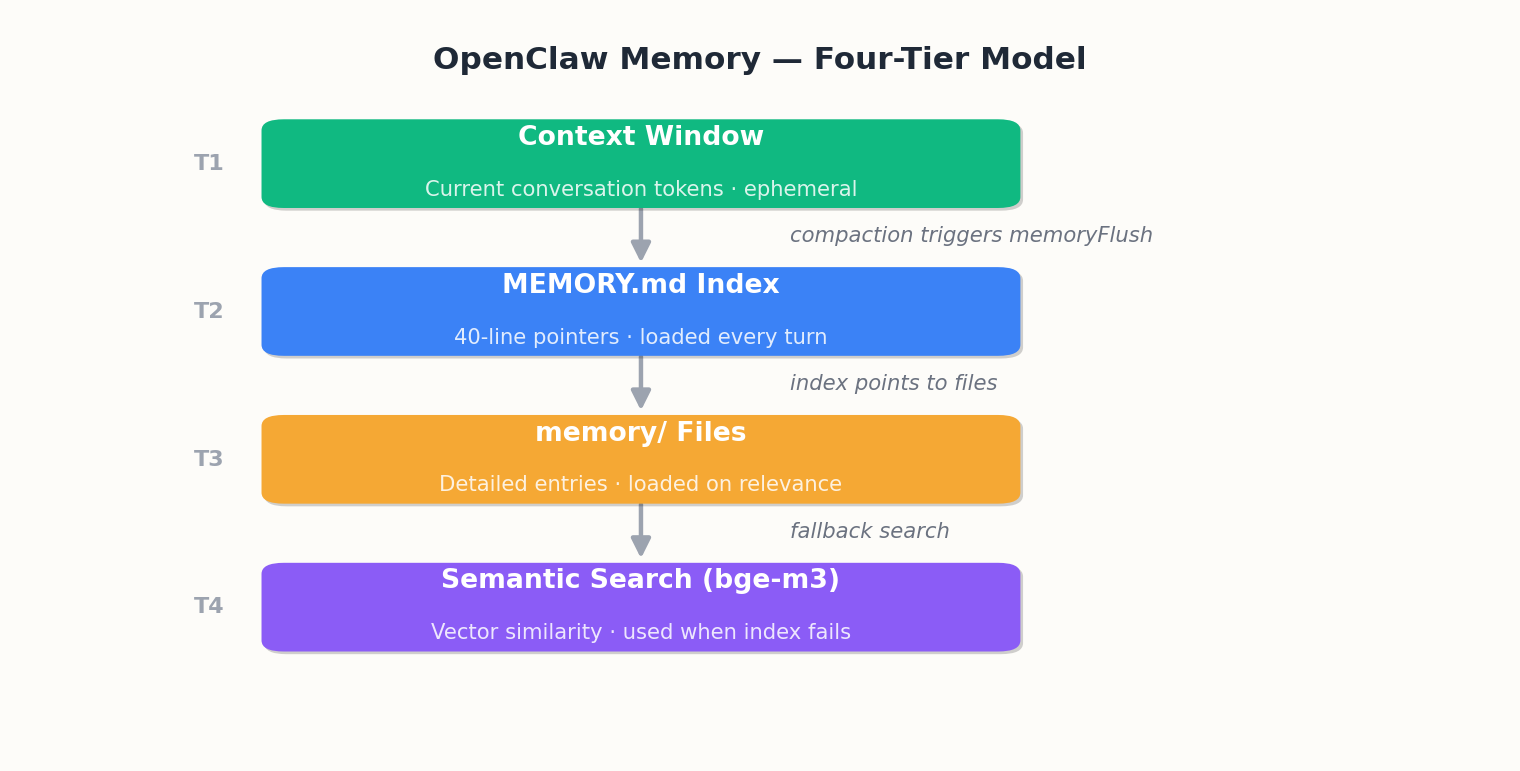

The four-tier mental model

I keep this in mind when deciding where something goes:

| Tier | Lifespan | Goes in |
|---|---|---|
| Identity | forever | MEMORY.md (name, voice, role) |
| Decision | months | projects.md |
| Lesson | years | lessons.md |
| Trace | days | memory/YYYY-MM-DD.md |

Anything that doesn’t fit one of these is probably noise. Discard it.
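The table maps cleanly onto a lookup. A minimal Python sketch, assuming paths are relative to the workspace root; the `Tier` enum and `destination` helper are hypothetical illustrations, not part of OpenClaw.

```python
from datetime import date
from enum import Enum


class Tier(Enum):
    IDENTITY = "identity"  # forever
    DECISION = "decision"  # months
    LESSON = "lesson"      # years
    TRACE = "trace"        # days


# Tier -> file, following the table. Paths are relative to the workspace root.
STATIC_DESTINATIONS = {
    Tier.IDENTITY: "MEMORY.md",
    Tier.DECISION: "projects.md",
    Tier.LESSON: "lessons.md",
}


def destination(tier: Tier, today: date) -> str:
    """Return the file a new entry of the given tier should be written to."""
    if tier is Tier.TRACE:
        # Traces go to the daily log, named memory/YYYY-MM-DD.md.
        return f"memory/{today.isoformat()}.md"
    return STATIC_DESTINATIONS[tier]
```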

Writing good memory entries

The quality of what you store matters more than the quantity. Each memory entry should enable the agent to make a decision without asking you again.

Bad entries — vague, undated, no actionable content:


These tell the agent almost nothing. Which API? When was it broken? What does “short” mean?

Good entries — specific, actionable, dated:


Now the agent can act without guessing. It knows the provider, the constraint, and the exact behavior rule.
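The "dated and specific" rule is easy to lint mechanically. A rough heuristic sketch, assuming one entry per line that starts with an ISO date; the `lint_entry` name, the date-prefix convention, and the word-count floor are illustrative assumptions, not an OpenClaw feature. It catches undated or terse entries, not vague ones that happen to be long.

```python
import re

# Assumed convention: every entry starts with an ISO date, e.g. "2026-03-07: ...".
DATE_PREFIX = re.compile(r"^\d{4}-\d{2}-\d{2}\b")
MIN_WORDS = 8  # arbitrary floor; terse entries usually lack the "why"


def lint_entry(entry: str) -> list[str]:
    """Return a list of problems with a memory entry (empty list means it passes)."""
    problems = []
    text = entry.strip()
    if not DATE_PREFIX.match(text):
        problems.append("undated")
    # Drop the date before counting words so it doesn't pad the count.
    body = DATE_PREFIX.sub("", text).lstrip(" :-")
    if len(body.split()) < MIN_WORDS:
        problems.append("too short to act on")
    return problems
```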

Here’s what a well-structured MEMORY.md looks like — enough for most solo users:


Ten lines. Under 200 tokens to load. The agent knows who you are, what you’re working on, where to look, and what not to do. Everything else is in referenced files and gets pulled via search.

Memory types deep dive

OpenClaw recognizes five memory types internally. You don’t have to use all of them, but understanding the taxonomy helps you route new information correctly.

1. User memory — facts about the person. Name, preferences, style, timezone. MEMORY.md under Identity. Rarely changes.

2. Project memory — current state of work. What’s done, blocked, next. projects.md. Changes weekly.

3. Feedback memory — corrections. “Not like that, like this.” lessons.md with a date.

4. Reference memory — stable facts. Server IPs, endpoints, config. Dedicated ref_*.md files. Changes rarely.

5. Lesson memory — generalizable insights from failure. “If X, then Y breaks.” lessons.md. Long shelf life.

The decision flowchart I use:

- Did the user correct you? That’s feedback: lessons.md, with a date.

- Is it a fact about the user as a person? User memory: MEMORY.md.

- Is it about the current state of a task? Project memory: projects.md.

- Is it a stable reference like an IP or endpoint? Reference: memory/ref_*.md.

- Is it a generalizable insight from failure? Lesson: lessons.md.

- None of the above? Probably noise: daily log or discard.

When in doubt, write it to the daily log. If you find yourself searching for it later, promote it to the right tier.
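The flowchart is an ordered series of checks, so it translates into a function where the first matching question wins. A sketch, assuming you have already classified the new information into the boolean flags shown; the `Observation` type and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical flags describing a new piece of information."""
    is_correction: bool = False        # the user corrected the agent
    about_user: bool = False           # a fact about the user as a person
    about_task_state: bool = False     # current state of a task
    is_stable_reference: bool = False  # IP, endpoint, config value
    is_general_lesson: bool = False    # "if X, then Y breaks"


def route(obs: Observation) -> str:
    """Walk the flowchart top to bottom; the first matching question decides."""
    if obs.is_correction:
        return "lessons.md"       # feedback memory, written with a date
    if obs.about_user:
        return "MEMORY.md"        # user memory
    if obs.about_task_state:
        return "projects.md"      # project memory
    if obs.is_stable_reference:
        return "memory/ref_*.md"  # reference memory: pick or create a ref_ file
    if obs.is_general_lesson:
        return "lessons.md"       # lesson memory
    return "daily log"            # when in doubt; promote it if you search for it later
```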

memoryFlush: the one config I always set

Long conversations get compacted automatically when the context window gets tight. By default, the agent loses whatever it didn’t write down. memoryFlush runs a “save the important stuff” pass before compaction:


softThresholdTokens is how much room the flush itself can take. 4000 is enough for a useful summary; 8000 is wasteful unless you’re doing very long sessions.
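One way to picture the trigger: the flush fires while softThresholdTokens of headroom is still left, so the save pass has room to run before compaction. A sketch under that assumption; the real harness may compute the threshold differently (for example, with an extra reserve), and `should_flush` is a hypothetical name.

```python
def should_flush(used_tokens: int, context_window: int, soft_threshold: int,
                 already_flushed: bool) -> bool:
    """Return True when the pre-compaction flush pass should run.

    Assumption: the flush is reserved `soft_threshold` tokens of headroom, so it
    fires as soon as remaining room drops to that reserve, once per cycle.
    """
    if already_flushed:
        return False
    remaining = context_window - used_tokens
    return remaining <= soft_threshold
```

Under this model, 4000 on a 128k window starts the flush once usage reaches 124k tokens; 8000 would start it at 120k and hand the flush twice the room.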

What happens without it

Here’s a real scenario from before I turned this on. I was 80 turns into a debugging session. Around turn 20, I mentioned switching my embedding provider from OpenAI to SiliconFlow due to rate limits. The agent acknowledged it, and we moved on.

Around turn 60, context got tight. Compaction trimmed the middle (turns 15-45), removing the provider switch. At turn 70, I asked the agent to re-index. It called OpenAI and failed. Ten minutes wasted on something that should have been permanent knowledge.

With memoryFlush enabled, the flush pass fires first — writing “Embedding provider changed to SiliconFlow, OpenAI deprecated” into projects.md before trimming. Post-compaction, the agent reloads and picks it up.

- Without memoryFlush: compaction deletes silently. The agent reverts to stale assumptions.

- With memoryFlush: compaction triggers a write pass first. Critical facts persist.

If you only change one default, change this one.
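The failure in that scenario is mechanical, and a toy model reproduces it. This sketch assumes compaction keeps the first and last few turns and drops the middle, and it fakes the flush pass with a keyword filter where the real harness would ask the model to summarize; `compact` and the `persisted` list are hypothetical stand-ins.

```python
def compact(turns, keep_head, keep_tail, flush, persisted):
    """Drop the middle of the transcript, optionally flushing facts first.

    turns: list of strings, one per turn.
    persisted: list standing in for projects.md; it survives compaction.
    """
    middle = turns[keep_head:len(turns) - keep_tail]
    if flush:
        # Stand-in for the model's "save the important stuff" pass.
        persisted.extend(t for t in middle if "changed to" in t)
    return turns[:keep_head] + turns[len(turns) - keep_tail:]


turns = [f"turn {i}" for i in range(60)]
turns[20] = "turn 20: embedding provider changed to SiliconFlow"

for flush in (False, True):
    projects_md = []
    kept = compact(turns, keep_head=15, keep_tail=15, flush=flush, persisted=projects_md)
    visible = kept + projects_md  # what the agent can see after compaction
    knows = any("SiliconFlow" in t for t in visible)
    print(f"memoryFlush={flush}: agent knows about the switch: {knows}")
```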

memorySearch, and why bge-m3 is fine

You can run semantic search across the memory files, but it needs an embedding API. The cheap path is SiliconFlow’s BAAI/bge-m3, which is free and good enough:


You’ll only see the benefit after about a week of usage. Before that, there’s nothing to search.
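Under the hood, semantic search is nearest-neighbour ranking over chunk embeddings. A minimal pure-Python sketch of the ranking step, assuming the vectors already came back from an embedding model such as bge-m3 (the API call is omitted); `cosine` and `top_k` are hypothetical helpers, not OpenClaw’s retriever.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, chunks, k=3):
    """Rank (chunk_id, vector) pairs by similarity to the query, best first."""
    scored = [(cosine(query_vec, vec), chunk_id) for chunk_id, vec in chunks]
    scored.sort(reverse=True)
    return [chunk_id for _, chunk_id in scored[:k]]


# Toy 3-dimensional vectors; real bge-m3 dense vectors are much larger.
chunks = [
    ("lessons.md#rate-limits", [0.9, 0.1, 0.0]),
    ("projects.md#embeddings", [0.7, 0.7, 0.0]),
    ("memory/2026-03-01.md", [0.0, 0.1, 0.9]),
]
print(top_k([1.0, 0.2, 0.0], chunks, k=2))
```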

Memory budget math

Every token spent on memory is a token not spent on conversation. Here’s the math.

MEMORY.md at 40 lines costs about 600 tokens, your fixed overhead per turn. Each file chunk from memorySearch costs 200-500 tokens. The default retrieval budget is 2000 tokens, which fits roughly 4 to 10 chunks per turn depending on chunk size.

Total overhead per turn: 600 + 2000 = 2600 tokens. On a 128k model, that’s about 2%. Acceptable.
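The same arithmetic as a small helper, handy if you run a different context window. The 15-tokens-per-line figure is only derived from the numbers above (40 lines ≈ 600 tokens, 200 lines ≈ 3k); real counts depend on the tokenizer and how dense your lines are. `per_turn_overhead` is a hypothetical name.

```python
TOKENS_PER_LINE = 15  # rough: 40 lines of MEMORY.md ≈ 600 tokens


def per_turn_overhead(memory_md_lines: int, retrieval_budget: int,
                      context_window: int) -> tuple[int, float]:
    """Return (tokens spent on memory per turn, share of the context window)."""
    index_cost = memory_md_lines * TOKENS_PER_LINE
    total = index_cost + retrieval_budget
    return total, total / context_window


total, share = per_turn_overhead(40, 2000, 128_000)
print(f"{total} tokens per turn, {share:.1%} of a 128k window")
```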

Tune with max_memory_tokens_per_turn:


Why not set it to 4000 or higher? Memory competes with the conversation. At 4000, you’re spending 4600 tokens per turn on overhead (the 600-token index plus retrieval). Against a 6000-token reply budget, that’s 4600 of 10,600 tokens, more than 40% of each turn, spent on memory. The agent’s responses get squeezed.

The sweet spot: 1000 for quick tasks (< 10 turns), 2000 for standard sessions (the default), and 3000 for long research. Never go above 4000 — it crowds out conversation. If the agent misses context at 2000, improve the memory entries, not the budget.
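The sweet-spot rule reads as a tiny policy function. A sketch that mirrors the numbers in the paragraph above; the session categories and the `retrieval_budget` helper are hypothetical, and the returned value is what you would put in max_memory_tokens_per_turn.

```python
from typing import Optional

HARD_CEILING = 4000  # above this, retrieval crowds out the conversation


def retrieval_budget(expected_turns: int, research: bool = False,
                     override: Optional[int] = None) -> int:
    """Suggest a max_memory_tokens_per_turn value for a session."""
    if override is not None:
        # Overrides are capped too: if recall is poor, fix the entries instead.
        return min(override, HARD_CEILING)
    if research:
        return 3000  # long research sessions
    if expected_turns < 10:
        return 1000  # quick tasks
    return 2000      # standard sessions (the default)
```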

ContextEngine: the v2026.3.7 shift

Up to v2026.3.6, memory was an explicit tool the agent had to call. If it forgot, it operated without context. From v2026.3.7, memory moved into a hooks lifecycle the harness runs around the agent:


The agent doesn’t decide when to remember anymore. The harness does. This sounds small; it isn’t. It’s the difference between “the agent remembered to remember” and “the system remembers, full stop.”

The default ContextEngine works for most people. If you want to swap in a RAG-style retriever or a knowledge-graph backend, you can — same agent config, different engine.
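To make the shift concrete, here is a toy harness where memory reads and writes happen in hooks around each turn rather than as tools the agent may or may not call. The hook names (`before_turn`, `after_turn`, `before_compaction`), the `ContextEngine` protocol, and `KeywordEngine` are illustrative assumptions, not OpenClaw’s actual interface. The point is only that the harness drives memory and that swapping engines leaves the agent untouched.

```python
from typing import Callable, Protocol


class ContextEngine(Protocol):
    """Hypothetical engine interface; any object with these methods fits."""
    def before_turn(self, user_msg: str) -> str: ...
    def after_turn(self, user_msg: str, reply: str) -> None: ...
    def before_compaction(self, transcript: list[str]) -> None: ...


class KeywordEngine:
    """Toy engine: stores notes in a list and retrieves them by shared words."""
    def __init__(self) -> None:
        self.notes: list[str] = []

    def before_turn(self, user_msg: str) -> str:
        words = set(user_msg.lower().split())
        return "\n".join(n for n in self.notes if words & set(n.lower().split()))

    def after_turn(self, user_msg: str, reply: str) -> None:
        if user_msg.lower().startswith("note:"):
            self.notes.append(user_msg[5:].strip())

    def before_compaction(self, transcript: list[str]) -> None:
        pass  # a real engine would run the memoryFlush pass here


def run_turn(engine: ContextEngine, agent: Callable[[str, str], str], user_msg: str) -> str:
    """The harness, not the agent, decides when memory is read and written."""
    context = engine.before_turn(user_msg)
    reply = agent(context, user_msg)
    engine.after_turn(user_msg, reply)
    return reply


def echo_agent(context: str, msg: str) -> str:
    """Stand-in for the model: shows what context the harness injected."""
    return f"[context: {context or 'none'}] {msg}"


engine = KeywordEngine()
run_turn(engine, echo_agent, "note: embeddings use siliconflow")
print(run_turn(engine, echo_agent, "which provider do the embeddings use"))
```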

The auto-write controversy

The ContextEngine introduced auto_write: true as a default. The agent writes to memory files without asking — every correction, new fact, or project update just goes in.

For a solo user with a personal assistant, this is great. You never say “remember that.” The agent picks up patterns within a week.

For a shared bot — a team room with five people — auto-write is a disaster. Person A says “always use TypeScript.” Person B says “we’re a Python shop.” Both get written, no attribution. Memory becomes contradictory.

The config:


Set auto_write to false for shared bots. The agent still reads memory but won’t write unless asked. To audit recent writes:


This prints every memory write in the last 7 days — file, line, content, and the triggering turn. I run this weekly. Occasionally the agent writes something wrong (misattributes a preference, or records a one-off as a permanent rule), and catching it early is cheap.
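If you want the same kind of report from your own tooling, the filtering is simple once writes are logged somewhere. A sketch assuming a hypothetical JSON-lines write log where each record has `ts`, `file`, `line`, `content`, and `turn` fields; that format is an assumption, not OpenClaw’s on-disk schema.

```python
import json
from datetime import datetime, timedelta, timezone


def recent_writes(log_lines, days=7, now=None):
    """Yield (file, line, content, turn) for writes newer than `days` days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    for raw in log_lines:
        rec = json.loads(raw)
        ts = datetime.fromisoformat(rec["ts"])  # expects an offset, e.g. +00:00
        if ts >= cutoff:
            yield rec["file"], rec["line"], rec["content"], rec["turn"]


log = [
    '{"ts": "2026-03-01T09:00:00+00:00", "file": "lessons.md", "line": 12, "content": "old", "turn": 3}',
    '{"ts": "2026-03-08T09:00:00+00:00", "file": "projects.md", "line": 4, "content": "provider: SiliconFlow", "turn": 41}',
]
now = datetime(2026, 3, 9, tzinfo=timezone.utc)
for file, line, content, turn in recent_writes(log, now=now):
    print(f"{file}:{line}  turn {turn}  {content}")
```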

My rule of thumb:

- Solo user, personal assistant: auto_write: true. Review weekly.

- Shared bot, team room: auto_write: false. Explicit memory commands only.

- Hybrid: auto_write: true, with a review cron that flags non-primary-user entries.
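The three setups differ only in what happens to a candidate write. A sketch of that decision; `write_decision`, the speaker-versus-primary-user comparison, and the "flag" outcome are hypothetical stand-ins for whatever your review cron actually checks.

```python
def write_decision(auto_write: bool, explicit_request: bool, speaker: str,
                   primary_user: str, hybrid: bool = False) -> str:
    """Return 'write', 'skip', or 'flag' for a candidate memory write."""
    if explicit_request:
        return "write"  # "remember that" always works
    if not auto_write:
        return "skip"   # shared bot: explicit commands only
    if hybrid and speaker != primary_user:
        return "flag"   # written, but queued for the review cron
    return "write"      # solo assistant: write now, review weekly
```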

Migrating memory between agents

At some point you’ll create a second agent, move machines, or upgrade and need to start fresh without losing context. Memory migration is straightforward but has gotchas.

Copying the workspace

The simplest migration: copy ~/.openclaw/workspace/ to the new location. All markdown, all portable.


If you’re creating a second agent on the same machine, copy and prune. A project agent doesn’t need your identity section; a personal assistant doesn’t need another project’s references.
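In Python, "copy and prune" is a copytree followed by deleting what the new agent shouldn’t inherit. A sketch; the destination path and the prune list are placeholders you choose per agent, and pruning here is whole-file only (cutting a section out of MEMORY.md is still a manual edit). `clone_workspace` is a hypothetical helper.

```python
import shutil
from pathlib import Path


def clone_workspace(src: Path, dst: Path, prune: list[str]) -> list[str]:
    """Copy a workspace, then delete files the new agent shouldn't inherit.

    `prune` holds paths relative to the workspace root. Returns what was removed.
    """
    shutil.copytree(src, dst)  # raises if dst exists, which avoids clobbering
    removed = []
    for rel in prune:
        target = dst / rel
        if target.is_dir():
            shutil.rmtree(target)
        elif target.exists():
            target.unlink()
        else:
            continue  # nothing to prune at that path
        removed.append(rel)
    return removed


# Example (paths are placeholders):
# clone_workspace(Path.home() / ".openclaw/workspace",
#                 Path("/tmp/project-agent-workspace"),
#                 prune=["memory/ref_personal.md"])
```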

Re-indexing embeddings

The vector index at ~/.openclaw/index/ is not portable across models. Same model on new machine? Copy it. Different model? Delete and rebuild:


A typical workspace (20-30 files) reindexes in under a minute.
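The copy-or-rebuild rule hinges on one fact: which model produced the vectors. A sketch, assuming you record the embedding model name next to the index yourself; the `model.txt` marker file and `migrate_index` helper are hypothetical, and OpenClaw’s index layout may differ. Rebuilding is left to OpenClaw.

```python
import shutil
from pathlib import Path


def migrate_index(old_index: Path, new_index: Path, new_model: str) -> str:
    """Copy the vector index if the same model built it, else discard it.

    Assumes a hypothetical marker file old_index/model.txt naming the model.
    Returns "copied" or "rebuild".
    """
    marker = old_index / "model.txt"
    old_model = marker.read_text().strip() if marker.exists() else None
    if old_model == new_model:
        shutil.copytree(old_index, new_index)
        return "copied"
    # Different (or unknown) model: stale vectors are worse than none.
    if new_index.exists():
        shutil.rmtree(new_index)
    return "rebuild"
```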

Session-to-memory export

Had a great conversation but forgot to persist the key decisions? Extract them after the fact:


This runs the agent over the transcript and pulls out decisions, preferences, and lessons into memory/staged.md for review. It over-extracts, so don’t trust it blindly — but it’s better than re-reading a 200-turn session.
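The export described above uses the model to do the extraction. As a cheaper first pass, a keyword filter over the transcript can surface candidates. This sketch swaps in that plain heuristic in place of agent-driven extraction; it assumes a hypothetical .jsonl transcript with one `{"role": ..., "content": ...}` object per line, and the marker phrases are arbitrary. Like the real export, it over-extracts, so review memory/staged.md before promoting anything.

```python
import json
from pathlib import Path

# Phrases that tend to mark decisions, preferences, and lessons (tune to taste).
MARKERS = ("decided", "we'll use", "prefer", "always", "never", "broke because")


def stage_candidates(transcript: Path, staged: Path) -> int:
    """Append marker-bearing messages to staged.md; return how many were staged."""
    hits = []
    with transcript.open(encoding="utf-8") as fh:
        for raw in fh:
            if not raw.strip():
                continue
            msg = json.loads(raw)
            text = msg.get("content", "")
            if isinstance(text, str) and any(m in text.lower() for m in MARKERS):
                hits.append(f"- [{msg.get('role', '?')}] {text.strip()}")
    staged.parent.mkdir(parents=True, exist_ok=True)
    with staged.open("a", encoding="utf-8") as out:
        out.writelines(line + "\n" for line in hits)
    return len(hits)
```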

What not to migrate

Skip memory/archive/, session .jsonl files (unless you’ll export from them), and the index/ directory if you changed embedding providers. Stale vectors are worse than none — they return confidently wrong results.
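The skip list maps onto the `ignore` hook of Python’s `shutil.copytree`, which is called with each directory and its entry names and returns the names to leave out. A sketch for copying the whole ~/.openclaw tree (where index/ lives); the directory names come from the paragraph above, `migration_ignore` is a hypothetical helper, and `provider_changed` is a flag you set yourself.

```python
import shutil
from pathlib import Path


def migration_ignore(root: Path, provider_changed: bool):
    """Build a copytree ignore callable that skips what shouldn't migrate."""
    root = root.resolve()

    def ignore(directory: str, names: list[str]) -> set[str]:
        rel = Path(directory).resolve().relative_to(root)
        skipped = {n for n in names if n.endswith(".jsonl")}  # session transcripts
        if rel.name == "memory" and "archive" in names:
            skipped.add("archive")                            # memory/archive/
        if rel == Path(".") and provider_changed and "index" in names:
            skipped.add("index")                              # stale vectors
        return skipped

    return ignore


# Example (paths are placeholders):
# src = Path.home() / ".openclaw"
# shutil.copytree(src, Path("/mnt/new-home/.openclaw"),
#                 ignore=migration_ignore(src, provider_changed=True))
```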

Where it still leaks

Three things still bite me:

- Group chats and sub-agents don’t read MEMORY.md by default. Intentional (sandboxing), but if you forget, you’ll wonder why the team-room bot doesn’t know your name.

- Embedding drift. Switch model and old vectors are useless. Re-index or live with degraded recall.

- The 40-line discipline. Nothing enforces it. Set a weekly cron that fails if wc -l MEMORY.md exceeds 40.

A quick health check I run every Sunday:


If MEMORY.md creeps over 40, something belongs in projects.md or lessons.md instead. If sessions are piling up past a few hundred, archive them — startup time gets slow.
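A version of that Sunday check that returns a non-zero status, so cron (or whatever scheduler you use) surfaces failures. The 40-line limit comes from this piece; the sessions directory location and the 300-file threshold are assumptions to adjust for your install. `health_problems` and `main` are hypothetical names.

```python
from pathlib import Path

MAX_MEMORY_LINES = 40
MAX_SESSION_FILES = 300  # "a few hundred"; pick your own threshold


def health_problems(workspace: Path, sessions_dir: Path) -> list[str]:
    """Return human-readable problems; an empty list means healthy."""
    problems = []
    memory_md = workspace / "MEMORY.md"
    if memory_md.exists():
        lines = len(memory_md.read_text(encoding="utf-8").splitlines())
        if lines > MAX_MEMORY_LINES:
            problems.append(f"MEMORY.md has {lines} lines (> {MAX_MEMORY_LINES}); "
                            "move entries to projects.md or lessons.md")
    else:
        problems.append("MEMORY.md is missing")
    if sessions_dir.is_dir():
        count = sum(1 for _ in sessions_dir.glob("*.jsonl"))
        if count > MAX_SESSION_FILES:
            problems.append(f"{count} session files; archive old ones")
    return problems


def main() -> int:
    home = Path.home() / ".openclaw"
    # Assumed layout: point the sessions path at wherever your install keeps them.
    found = health_problems(home / "workspace", home / "sessions")
    for problem in found:
        print(problem)
    return 1 if found else 0

# To run from cron, end the script with: raise SystemExit(main())
```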

What to take away

- MEMORY.md is an index, not a database.

- Turn on memoryFlush on day one.

- Add memorySearch only after you have something worth searching.

- After v2026.3.7, stop asking the agent to remember; let the engine do it.

- Memory is the part that decides whether the agent feels like a tool or like a colleague. It’s worth the discipline.

OpenClaw QuickStart 10 parts

- 01 OpenClaw QuickStart (1): What This Thing Actually Is

- 02 OpenClaw QuickStart (2): Install and First Chat in 10 Minutes

- 03 OpenClaw QuickStart (3): The Six Layers That Make the Agent Loop Work

- 04 OpenClaw QuickStart (4): Configuration, Model Providers, and the Coding Plan Trick

- 05 OpenClaw QuickStart (5): Wiring Telegram, DingTalk, and the WeChat Reality

- 06 OpenClaw QuickStart (6): Skills, MCP, and Shipping Something Real

- 07 OpenClaw QuickStart (7): The Memory System, Without the Magic

- 08 OpenClaw QuickStart (8): Heartbeat, Cron, and Getting Pinged at 7am

- 09 OpenClaw QuickStart (9): The China IM Picker, with Honest Tradeoffs

- 10 OpenClaw QuickStart (10): Production Deploy and the Failure Modes Nobody Warns You About