PDE and ML (1): Physics-Informed Neural Networks

From finite differences to PINNs: automatic differentiation, PDE residual losses, NTK-based training pathologies, Burgers inverse problems, and a side-by-side comparison with FEM and neural operators. Seven figures included.

Series chapter 1 — about a 35-minute read. This is the foundation of the entire series. Neural operators, variational principles, score matching — every later chapter is, at heart, the same idea: how to encode physical or mathematical constraints directly into the neural network’s optimization objective. Master PINNs, and the rest is just swapping one constraint for another.

Prologue: a metal rod

Suppose you want the temperature distribution $u(x,t)$ along a metal rod. Half a century of numerical analysis offers two standard answers:

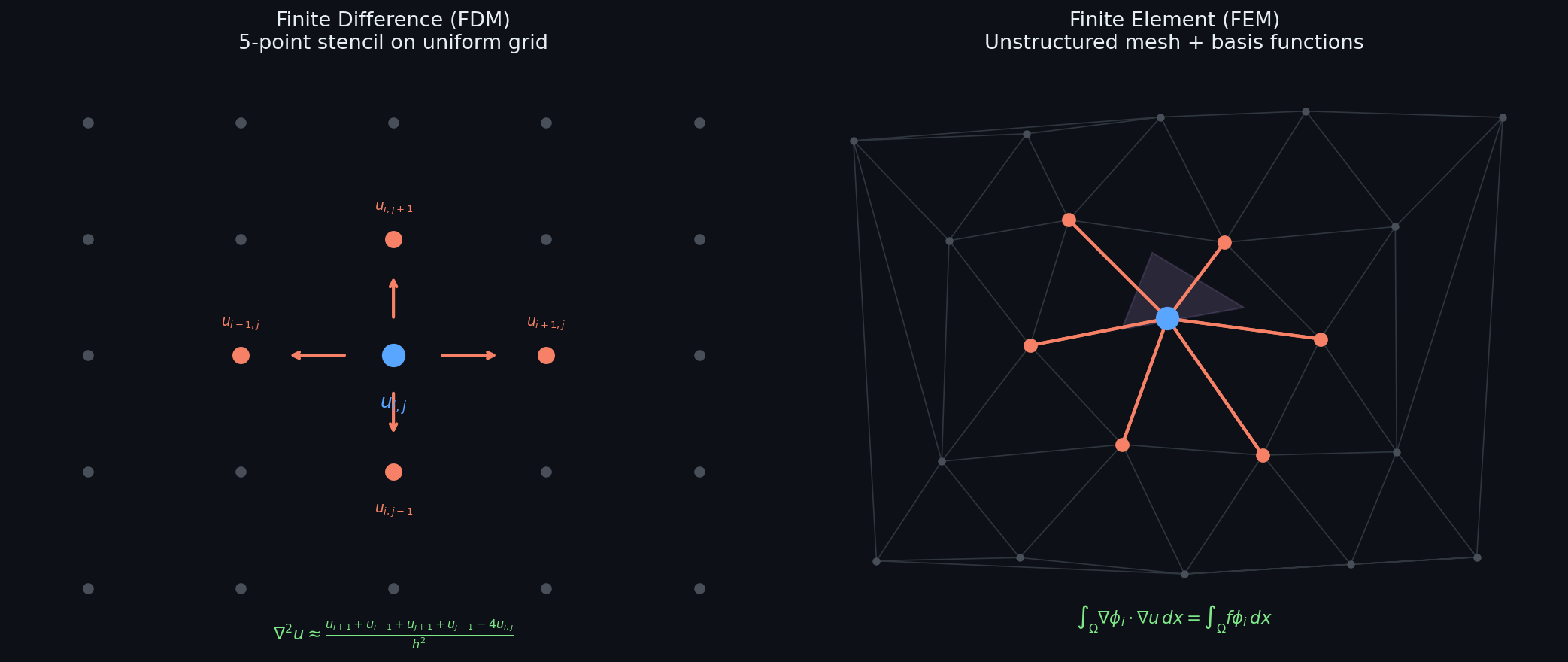

- Finite differences (FDM). Slice $[0,L]$ into $N$ pieces and $[0,T]$ into $M$ pieces, replace the second derivative by a three-point stencil, march forward in time.

- Finite elements (FEM). Triangulate the domain, approximate the solution by a linear polynomial inside each triangle, and require the weak-form residual to be orthogonal to a set of test functions.

Both routes are mature beyond reproach but share one painful prerequisite — first you must build a mesh. A 1-D rod is fine; an aircraft wing is annoying; a 10-dimensional state space is a death sentence (the curse of dimensionality: $N\propto h^{-d}$ explodes).

In 2019, Raissi, Perdikaris, and Karniadakis [1] proposed a third approach in the Journal of Computational Physics:

Skip the mesh. Let a neural network $u_\theta(x,t)$ approximate the solution directly, and write “satisfies the PDE” as a loss function.

The seed of the idea goes back to Lagaris et al. (1998) [2] and, further still, to Walther Ritz (1908), whose variational method recast solving a PDE as minimising a functional over a finite-dimensional function space. Ritz used a handful of smooth global trial functions, FEM uses piecewise polynomials, and PINNs use neural networks. The killer move PINNs add is automatic differentiation: high-order derivatives are evaluated to machine precision in one line of `torch.autograd.grad`, with no truncation error.

This chapter proceeds as follows: §2 quantifies the pain points of classical methods. §3 provides the minimal complete definition of a PINN and shows its equivalence with Ritz–Galerkin. §4, the core of the chapter, offers a Neural Tangent Kernel (NTK) view of why PINNs are often hard to train and presents three effective remedies. §5 walks through a complete Burgers experiment and an inverse-problem demonstration. §6 lists failure modes and limits. §7 places PINNs on the broader SciML map alongside FEM and neural operators.

Classical numerical methods: mature, with edges

Finite differences: intuition at the price of stability

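For the heat equation $u_t=\nu u_{xx}$, the explicit scheme (forward Euler in time, three-point stencil in space, with time step $\tau$ and space step $h$) reads

$$\frac{u_j^{n+1}-u_j^n}{\tau}=\nu\,\frac{u_{j+1}^n-2u_j^n+u_{j-1}^n}{h^2}.$$

A von Neumann analysis yields the stability condition $\tau\le h^2/(2\nu)$: the time step must scale like the square of the space step. Refining $h$ tenfold demands $\tau$ refined a hundredfold, so in 1-D the total cost grows by a factor of one thousand. The implicit Crank–Nicolson scheme is unconditionally stable but pays for it with a tridiagonal solve at every step; in higher dimensions you are at the mercy of sparse direct solvers or multigrid.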
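The stability limit is easy to see numerically. The following is a minimal NumPy sketch, not code from the original article: the function name `explicit_heat_error`, the grid size, and the choice $\nu=1$ are illustrative assumptions. It runs the explicit scheme for $u_t=\nu u_{xx}$ with $u(x,0)=\sin(\pi x)$ at a mesh ratio $r=\nu\tau/h^2$ just below and just above $1/2$.

```python
import numpy as np

def explicit_heat_error(n, r, nu=1.0, t_end=0.5):
    """Forward-Euler / central-difference solve of u_t = nu*u_xx on [0,1]
    with u(0,t)=u(1,t)=0 and u(x,0)=sin(pi x). Returns the max nodal error."""
    h = 1.0 / n
    tau = r * h**2 / nu                    # time step implied by the mesh ratio r
    x = np.linspace(0.0, 1.0, n + 1)
    u = np.sin(np.pi * x)
    u[0] = u[-1] = 0.0                     # Dirichlet boundary values
    steps = int(round(t_end / tau))
    for _ in range(steps):
        # three-point stencil; the boundary entries are never updated
        u[1:-1] = u[1:-1] + r * (u[2:] - 2.0 * u[1:-1] + u[:-2])
    exact = np.exp(-nu * np.pi**2 * steps * tau) * np.sin(np.pi * x)
    return float(np.max(np.abs(u - exact)))

err_stable = explicit_heat_error(n=20, r=0.4)    # r <= 1/2: error stays small
err_unstable = explicit_heat_error(n=20, r=0.6)  # r > 1/2: round-off is amplified
```

With $r=0.6$ the highest grid mode is multiplied by roughly $-1.4$ at every step, so even round-off noise eventually swamps the solution.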

Bottom line. FDM has a clean global error of $O(\tau+h^2)$ guaranteed by Lax equivalence. It is unbeatable on structured grids and completely helpless on irregular geometries.

Finite elements: weak forms and the Ritz functional

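Take the Poisson problem $-\Delta u=f$ in $\Omega$ with $u=0$ on $\partial\Omega$. Multiplying by a test function $v$ and integrating by parts gives the weak form: find $u\in H_0^1(\Omega)$ such that

$$ \underbrace{\int_\Omega\nabla u\cdot\nabla v\,\mathrm dx}_{a(u,v)} =\underbrace{\int_\Omega fv\,\mathrm dx}_{\ell(v)},\qquad\forall v\in H_0^1(\Omega). $$

This is equivalent to minimising the Dirichlet energy $J(u)=\tfrac12 a(u,u)-\ell(u)$. In a piecewise-linear subspace $V_h\subset H_0^1$, write $u_h=\sum_j c_j\phi_j$ and solve $Kc=f$ with $K_{ij}=a(\phi_i,\phi_j)$ and $f_i=\ell(\phi_i)$, where $K$ is a sparse symmetric positive-definite stiffness matrix.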

Céa’s lemma, combined with standard interpolation estimates, supplies the optimal error bound $\|u-u_h\|_{H^1}\le Ch^k\|u\|_{H^{k+1}}$ for elements of degree $k$. FEM’s strengths are textbook: convergence proofs, error control, adaptive mesh refinement. The weakness, again, is the mesh: moving boundaries, porous media and high-dimensional parameter spaces are all hard.
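To make the linear system $Kc=f$ concrete, here is a minimal 1-D sketch with piecewise-linear hat functions for $-u''=\pi^2\sin(\pi x)$ on $(0,1)$, whose exact solution is $\sin(\pi x)$. The function name `fem_poisson_1d`, the dense matrix, and the one-point (lumped) quadrature for the load vector are simplifying assumptions for illustration; a real FEM code would use sparse storage and proper element quadrature.

```python
import numpy as np

def fem_poisson_1d(n):
    """P1 finite elements for -u'' = pi^2 sin(pi x) on (0,1), u(0)=u(1)=0.
    Returns the maximum error at the interior nodes."""
    h = 1.0 / n
    x = np.linspace(0.0, 1.0, n + 1)
    m = n - 1                                   # number of interior nodes
    # Stiffness matrix K_ij = a(phi_i, phi_j) for hat functions: tridiag(-1, 2, -1) / h
    K = (2.0 * np.eye(m) - np.eye(m, k=1) - np.eye(m, k=-1)) / h
    # Load vector f_i = l(phi_i), approximated by h * f(x_i) (lumped quadrature)
    load = h * np.pi**2 * np.sin(np.pi * x[1:-1])
    c = np.linalg.solve(K, load)                # nodal coefficients of u_h
    return float(np.max(np.abs(c - np.sin(np.pi * x[1:-1]))))

e16, e32 = fem_poisson_1d(16), fem_poisson_1d(32)
```

Halving $h$ cuts the nodal error by roughly a factor of four in this example. That is the nodal behaviour for this smooth problem; the $H^1$ bound quoted above gives first order for $k=1$.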

What PINNs are trying to disrupt

Stitching §2.1 and §2.2 together, the shared cost of classical methods is:

| dimension | FDM | FEM |
|---|---|---|
| mesh | required, structured | required, unstructured |
| high-order derivatives | discrete stencil, truncation $O(h^p)$ | weakened to first order via test functions |
| high dimensions | catastrophic | catastrophic |
| complex geometry | hard | moderate (mesh generation expensive) |
| inverse problems | nested optimisation | nested optimisation |
| change boundary / geometry / parameters | recompute everything | recompute everything |

PINNs take aim at the mesh, high-dimension, and inverse-problem rows simultaneously: mesh-free, dimension-friendly, and forward + inverse unified into a single optimisation. The price is the loss of classical convergence guarantees, which have to be replaced by training tricks.

The minimal complete definition of a PINN

The mathematical statement

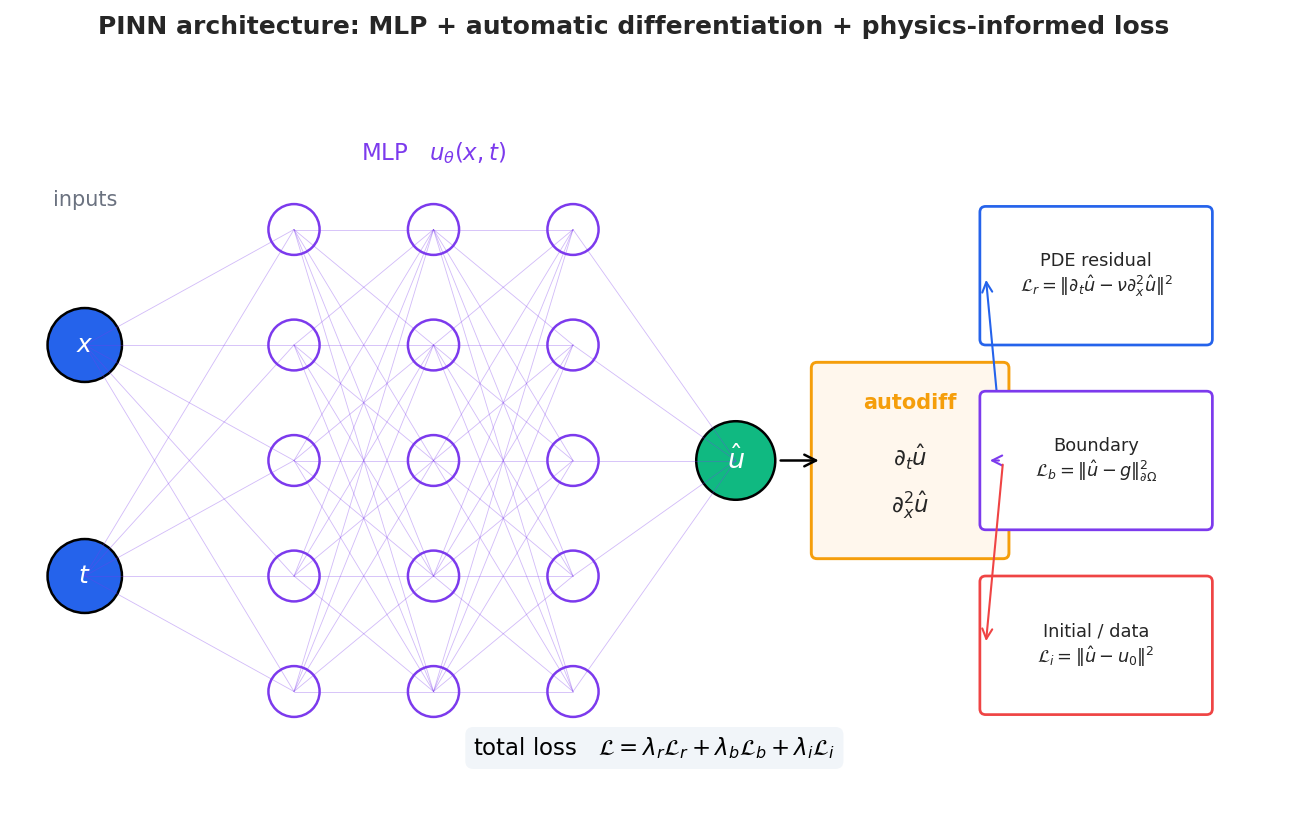

Consider a general time-dependent PDE with boundary and initial conditions:

$$ \mathcal N[u](x,t)=0,\quad(x,t)\in\Omega\times(0,T],\qquad \mathcal B[u]=g\ \text{on}\ \partial\Omega,\qquad u(x,0)=u_0(x). $$

A PINN replaces $u$ by a network $u_\theta$ and minimises a weighted sum of mean-squared violations:

$$ \boxed{\; \mathcal L(\theta)=\lambda_r\underbrace{\frac1{N_r}\sum_{i=1}^{N_r}|\mathcal N[u_\theta](x_i^r,t_i^r)|^2}_{\mathcal L_r:\,\text{PDE residual}} +\lambda_b\underbrace{\frac1{N_b}\sum|\mathcal B[u_\theta]-g|^2}_{\mathcal L_b} +\lambda_i\underbrace{\frac1{N_i}\sum|u_\theta-u_0|^2}_{\mathcal L_i} +\lambda_d\underbrace{\frac1{N_d}\sum|u_\theta-u^{\mathrm{obs}}|^2}_{\mathcal L_d}\;} $$

The last term $\mathcal L_d$ is absent in forward problems but central to inverse problems. Training is $\theta^\star=\arg\min_\theta\mathcal L(\theta)$ via Adam or L-BFGS.

Complete PINN implementation: 1D heat equation

Before we discuss why autodiff matters, let us build a complete PINN from scratch. The 1D heat equation on $[0,1] \times [0,1]$ :

$$\frac{\partial u}{\partial t} = \nu \frac{\partial^2 u}{\partial x^2}, \quad u(x,0) = \sin(\pi x), \quad u(0,t) = u(1,t) = 0$$The exact solution is $u(x,t) = e^{-\nu \pi^2 t}\sin(\pi x)$ . We will train a network to recover it using only the PDE, boundary conditions, and initial condition – no mesh required.
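A minimal PyTorch sketch of such a PINN might look as follows. It is an illustration under stated assumptions rather than a reference implementation: the diffusivity `NU = 0.1`, the 4×32 tanh MLP, the batch sizes, and all function names are hypothetical choices; only the three-term loss with a 10x weight on the boundary and initial terms, the autograd derivatives, and the random collocation sampling follow the description in this section.

```python
import math
import torch

NU = 0.1  # assumed diffusivity; the text does not fix a value

def make_model(width=32, depth=4):
    """Plain tanh MLP mapping (x, t) -> u."""
    layers, d_in = [], 2
    for _ in range(depth):
        layers += [torch.nn.Linear(d_in, width), torch.nn.Tanh()]
        d_in = width
    layers.append(torch.nn.Linear(d_in, 1))
    return torch.nn.Sequential(*layers)

def pde_residual(model, x, t, nu=NU):
    """u_t - nu * u_xx at the points (x, t), via automatic differentiation."""
    x = x.clone().requires_grad_(True)
    t = t.clone().requires_grad_(True)
    u = model(torch.cat([x, t], dim=1))
    ones = torch.ones_like(u)
    u_t = torch.autograd.grad(u, t, ones, create_graph=True)[0]
    u_x = torch.autograd.grad(u, x, ones, create_graph=True)[0]
    u_xx = torch.autograd.grad(u_x, x, ones, create_graph=True)[0]
    return u_t - nu * u_xx

def pinn_loss(model, n_r=1024, n_b=128, n_i=128, w_bc=10.0, w_ic=10.0):
    # Mesh-free sampling: fresh uniform random points in [0,1]^2 on every call
    loss_r = pde_residual(model, torch.rand(n_r, 1), torch.rand(n_r, 1)).pow(2).mean()
    # Boundary condition u(0,t) = u(1,t) = 0: x is randomly 0 or 1
    x_b = torch.randint(0, 2, (n_b, 1)).float()
    loss_b = model(torch.cat([x_b, torch.rand(n_b, 1)], dim=1)).pow(2).mean()
    # Initial condition u(x,0) = sin(pi x)
    x_i = torch.rand(n_i, 1)
    u_i = model(torch.cat([x_i, torch.zeros_like(x_i)], dim=1))
    loss_i = (u_i - torch.sin(math.pi * x_i)).pow(2).mean()
    return loss_r + w_bc * loss_b + w_ic * loss_i

def train(steps=5000, lr=1e-3, seed=0):
    torch.manual_seed(seed)
    model = make_model()
    opt = torch.optim.Adam(model.parameters(), lr=lr)
    history = []
    for _ in range(steps):
        opt.zero_grad()
        loss = pinn_loss(model)
        loss.backward()
        opt.step()
        history.append(loss.item())
    return model, history
```

Calling `train()` returns the trained network and the loss history; the prediction can then be compared against $e^{-\nu\pi^2 t}\sin(\pi x)$ on any set of test points.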

Key design choices visible in this code:

- Three-term loss: The total loss is a weighted sum of PDE residual, boundary, and initial conditions. The weights (here 10x for BC/IC) are critical; §4 explains why.

- Automatic differentiation: We never discretize $\partial u/\partial t$ or $\partial^2 u/\partial x^2$. PyTorch’s autograd computes exact derivatives of the network output with respect to its inputs.

- Mesh-free sampling: Collocation points are simply random samples in $[0,1]^2$. No mesh connectivity, no element assembly.

Why automatic differentiation matters

The naive way to differentiate the network output, a central difference

$$\partial_x u\approx\frac{u(x+\varepsilon)-u(x-\varepsilon)}{2\varepsilon},$$

has two killers: $\varepsilon$ too small drowns in floating-point round-off, and high-order derivatives compound the error. Reverse-mode autodiff avoids both: every elementary operation has a known derivative, the chain rule is composed automatically, and the result equals the analytic derivative up to floating-point round-off.
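A small sketch of the autograd pattern, compared against the central difference above. The tiny network `net`, the step `eps`, and the use of $u_{xx}$ as a stand-in residual are assumptions made only for illustration.

```python
import torch

torch.manual_seed(0)
# Small tanh network in double precision, u = net(x)
net = torch.nn.Sequential(
    torch.nn.Linear(1, 16), torch.nn.Tanh(), torch.nn.Linear(16, 1)
).double()

x = torch.linspace(-1.0, 1.0, 5, dtype=torch.float64).reshape(-1, 1)
x.requires_grad_(True)
u = net(x)

# First and second derivatives of the output with respect to the input
u_x = torch.autograd.grad(u, x, grad_outputs=torch.ones_like(u), create_graph=True)[0]
u_xx = torch.autograd.grad(u_x, x, grad_outputs=torch.ones_like(u_x), create_graph=True)[0]

# Central difference for comparison (truncation error of order eps^2)
eps = 1e-4
with torch.no_grad():
    fd_x = (net(x + eps) - net(x - eps)) / (2 * eps)

# Stand-in residual for the toy equation u'' = 0, only to show the backward pass
residual = u_xx
loss = (residual**2).mean()
loss.backward()  # reaches the network weights because u_xx is still in the graph
```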

The flag `create_graph=True` keeps the derivative itself in the computation graph, so that `loss = (residual**2).mean()` propagates back to $\nabla_\theta$ correctly.

Isomorphism with the Ritz method

Lifting to the abstract level:

- Ritz: minimise $J(u)=\tfrac12 a(u,u)-\ell(u)$ inside a subspace $V_h=\mathrm{span}\{\phi_1,\dots,\phi_n\}$ .

- PINN: minimise $\mathcal L(\theta)$ inside a subspace $V_\theta=\{u_\theta:\theta\in\mathbb R^p\}$ .

Two differences:

- Basis functions — piecewise polynomials vs. neural networks. The latter are nonlinear, smooth, and have a tunable spectrum.

- Testing mechanism — Galerkin uses inner products on test functions; PINNs use collocation + Monte-Carlo approximation of the integral.

Read this way, PINNs are not exotic: they are “Ritz with the finite-dimensional subspace replaced by a neural network.” The Deep Ritz method [3] makes this explicit by minimising the energy functional directly rather than the squared residual, which is better behaved on elliptic problems.
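To make the contrast concrete, here is a sketch of the two losses for the Poisson problem $-\Delta u=f$ with zero Dirichlet data. The penalty weight $\beta$ and the uniform Monte-Carlo samples $x_i\in\Omega$, $y_j\in\partial\Omega$ are notational assumptions introduced here:

$$\mathcal L_{\text{PINN}}(\theta)=\frac1{N}\sum_{i=1}^{N}\bigl|\Delta u_\theta(x_i)+f(x_i)\bigr|^2+\frac{\lambda_b}{N_b}\sum_{j=1}^{N_b}|u_\theta(y_j)|^2,$$

$$\mathcal L_{\text{Ritz}}(\theta)=\frac{|\Omega|}{N}\sum_{i=1}^{N}\Bigl(\tfrac12|\nabla u_\theta(x_i)|^2-f(x_i)\,u_\theta(x_i)\Bigr)+\frac{\beta}{N_b}\sum_{j=1}^{N_b}|u_\theta(y_j)|^2.$$

The Ritz loss needs only first derivatives of the network, whereas the residual loss needs second derivatives.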

Convergence: yes, but weak

Shin, Darbon and Karniadakis (2020) [4] proved asymptotic convergence for linear second-order elliptic and parabolic PDEs: as $N_r\to\infty$, network width $\to\infty$, and $\mathcal L\to 0$, $u_\theta\to u^\star$ in $L^2$. There is no quantitative rate like FEM’s $O(h^k)$, which is the most honest gap between PINNs and classical methods. Subsequent work has supplied Sobolev-norm bounds under restrictive assumptions, but engineering-grade a priori convergence orders remain out of reach.

Training pathologies: the part that’s actually hard

Anyone who has run a PINN has seen the loss drop from 1 to 0.01 and then refuse to move, or seen boundary conditions fail outright. Three diagnoses follow, with engineering fixes for each.

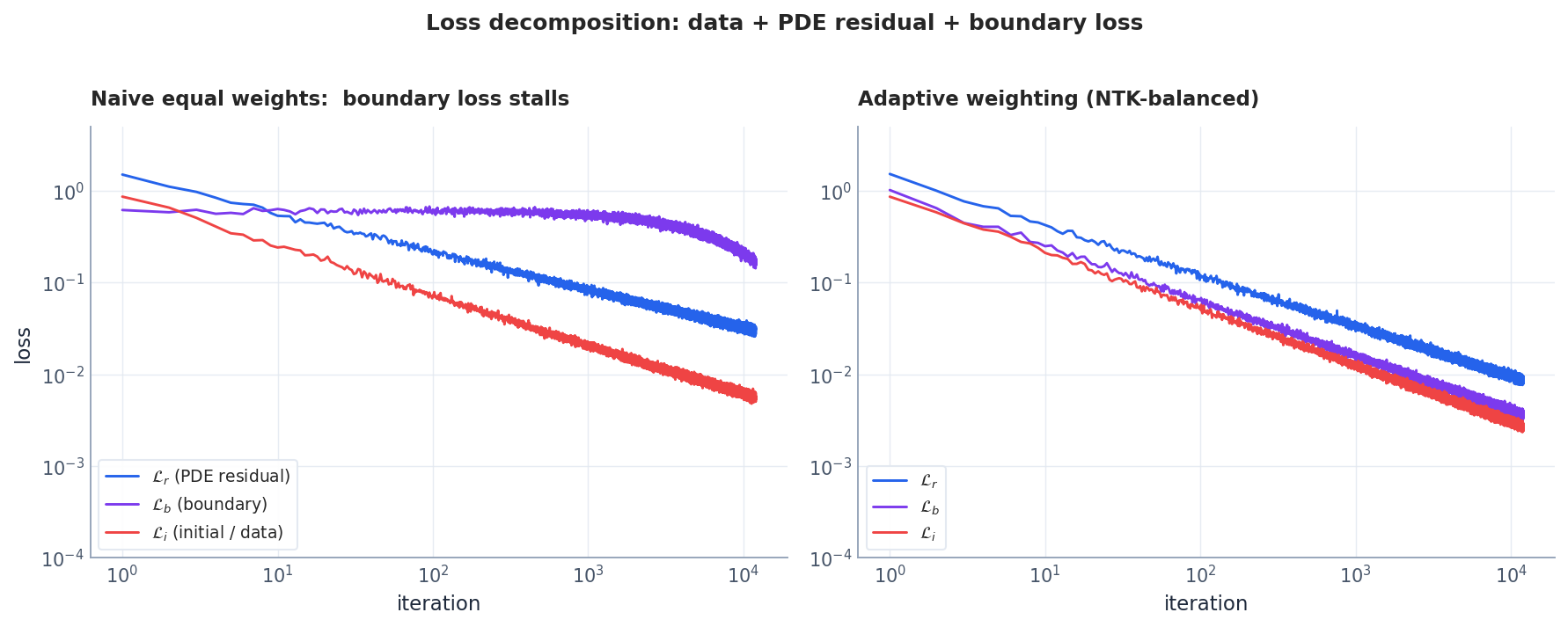

Pathology A: imbalanced loss terms (gradient pathology)

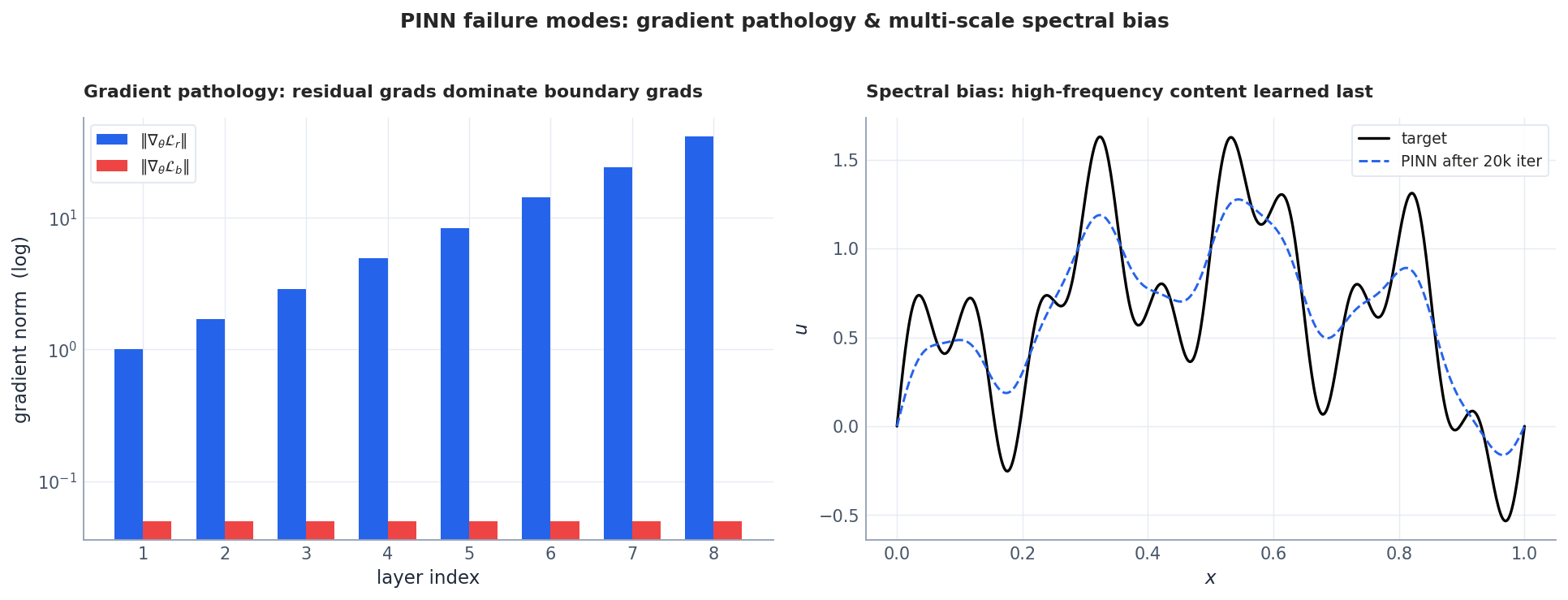

Wang, Teng & Perdikaris (2021) [5] used backprop gradient statistics to expose a widespread phenomenon: $\nabla_\theta\mathcal L_r$ is often several orders of magnitude larger than $\nabla_\theta\mathcal L_b$. Plain Adam follows the dominant gradient, the boundary loss is drowned out, and the network “satisfies the PDE wonderfully in the interior but has no idea what the boundary looks like.”

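Fix 1: adaptive loss weights. Rescale the boundary weight during training so that the two gradients have comparable magnitude,

$$\hat\lambda_b=\frac{\max_\theta|\nabla_\theta\mathcal L_r|}{\overline{|\nabla_\theta\mathcal L_b|}},\qquad \lambda_b\leftarrow(1-\alpha)\lambda_b+\alpha\hat\lambda_b,$$

where the bar denotes the mean over all parameters and $\alpha\in(0,1)$ is a moving-average rate. This is Wang’s learning-rate annealing recipe; a few lines of code turn an unconvergent PINN into a healthy one.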
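One way to sketch the learning-rate annealing update in PyTorch: compare the largest residual-gradient entry with the mean boundary-gradient entry, then smooth the ratio with a moving average. The helper names `grad_stats` and `update_weight`, the default `alpha=0.1`, and the small constant guarding the division are assumptions of this sketch.

```python
import torch

def grad_stats(loss, params):
    """Return (max |g|, mean |g|) of d(loss)/d(params), flattened over all parameters."""
    grads = torch.autograd.grad(loss, params, retain_graph=True, allow_unused=True)
    flat = torch.cat([g.reshape(-1) for g in grads if g is not None])
    return flat.abs().max(), flat.abs().mean()

def update_weight(lam_b, loss_r, loss_b, params, alpha=0.1):
    """Moving-average update of the boundary weight lambda_b."""
    max_r, _ = grad_stats(loss_r, params)      # max |grad of residual loss|
    _, mean_b = grad_stats(loss_b, params)     # mean |grad of boundary loss|
    lam_hat = (max_r / (mean_b + 1e-12)).item()
    return (1.0 - alpha) * lam_b + alpha * lam_hat
```

In a training loop one would call `update_weight` every few hundred steps with `params = list(model.parameters())` and then use the returned value as the multiplier of the boundary loss.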

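Fix 2: hard constraints. Build the boundary condition into the ansatz, for example on $x\in[0,1]$,

$$u_\theta(x,t)=x(1-x)\,\tilde u_\theta(x,t)+B(x,t),$$

where $B$ satisfies the boundary condition by construction and $\tilde u_\theta$ is unconstrained. Then $\mathcal L_b\equiv 0$ and there is nothing to balance.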
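The ansatz $x(1-x)\,\tilde u_\theta+B$ can be wrapped around any network. A minimal sketch, assuming the domain $x\in[0,1]$; the class name `HardBC` and the optional `boundary_fn` argument (playing the role of $B$) are hypothetical.

```python
import torch

class HardBC(torch.nn.Module):
    """u(x,t) = x * (1 - x) * net(x,t) + B(x,t), so u equals B at x = 0 and x = 1."""

    def __init__(self, net, boundary_fn=None):
        super().__init__()
        self.net = net
        self.boundary_fn = boundary_fn   # B(x,t); None means homogeneous data (B = 0)

    def forward(self, x, t):
        raw = self.net(torch.cat([x, t], dim=1))          # unconstrained network output
        lift = 0.0 if self.boundary_fn is None else self.boundary_fn(x, t)
        return x * (1.0 - x) * raw + lift
```

With this wrapper the boundary term can be dropped from the loss entirely, leaving only the residual and initial-condition terms to balance.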

Fix 3: NTK balancing. Wang, Yu and Perdikaris (2022) [6] proved that PINN training dynamics are governed by three Neural Tangent Kernels; weighting each loss term by the trace of its NTK is the principled choice.

Fixing spectral bias: Fourier features and SIREN

Before examining spectral bias in detail, let us see the practical fix. The core idea: if a standard MLP has trouble representing high-frequency functions, we can lift the input into a high-frequency basis before feeding it to the network.

Random Fourier Features (RFF): Map inputs $\mathbf{x}$ to $[\cos(B\mathbf{x}), \sin(B\mathbf{x})]$ where $B$ is a fixed random matrix sampled from $\mathcal{N}(0, \sigma^2)$ . The bandwidth $\sigma$ controls the frequency range.
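A minimal sketch of a random Fourier feature input layer in PyTorch. The class name `FourierFeatures`, the helper `make_rff_mlp`, and the default sizes are assumptions; the mapping itself is the $[\cos(B\mathbf{x}),\sin(B\mathbf{x})]$ embedding described above, with $B$ stored as a fixed buffer rather than a trainable parameter.

```python
import torch

class FourierFeatures(torch.nn.Module):
    """Map x -> [cos(xB), sin(xB)] with a fixed Gaussian matrix B ~ N(0, sigma^2)."""

    def __init__(self, in_dim, n_features=64, sigma=5.0):
        super().__init__()
        # register_buffer: B moves with the module but is not trained
        self.register_buffer("B", sigma * torch.randn(in_dim, n_features))

    def forward(self, x):
        proj = x @ self.B
        return torch.cat([torch.cos(proj), torch.sin(proj)], dim=-1)

def make_rff_mlp(in_dim=2, n_features=64, sigma=5.0, width=64):
    """Tanh MLP whose first stage is the Fourier embedding."""
    return torch.nn.Sequential(
        FourierFeatures(in_dim, n_features, sigma),
        torch.nn.Linear(2 * n_features, width), torch.nn.Tanh(),
        torch.nn.Linear(width, width), torch.nn.Tanh(),
        torch.nn.Linear(width, 1),
    )
```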

SIREN (Sinusoidal Representation Networks): An alternative where every activation is a sine function with a carefully initialized frequency:
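A minimal SIREN sketch, following the initialisation proposed in the SIREN paper as I understand it: first-layer weights uniform in $[-1/n,\,1/n]$ and hidden-layer weights uniform in $[-\sqrt{6/n}/\omega_0,\ \sqrt{6/n}/\omega_0]$, where $n$ is the layer's input width. The class and function names and the default sizes are assumptions.

```python
import math
import torch

class SineLayer(torch.nn.Module):
    """Linear layer followed by sin(omega_0 * z), with SIREN-style weight initialisation."""

    def __init__(self, in_dim, out_dim, omega_0=30.0, is_first=False):
        super().__init__()
        self.omega_0 = omega_0
        self.linear = torch.nn.Linear(in_dim, out_dim)
        bound = 1.0 / in_dim if is_first else math.sqrt(6.0 / in_dim) / omega_0
        with torch.no_grad():
            self.linear.weight.uniform_(-bound, bound)

    def forward(self, x):
        return torch.sin(self.omega_0 * self.linear(x))

def make_siren(in_dim=2, width=64, depth=3, omega_0=30.0):
    """`depth` sine layers followed by a plain linear output layer."""
    layers = [SineLayer(in_dim, width, omega_0, is_first=True)]
    layers += [SineLayer(width, width, omega_0) for _ in range(depth - 1)]
    layers.append(torch.nn.Linear(width, 1))
    return torch.nn.Sequential(*layers)
```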

When to use which:

- RFF ($\sigma = 1$–$10$): Good default for moderately oscillatory solutions. Cheap to implement, no architecture change needed beyond the input layer.

- RFF ($\sigma > 10$): Aggressive high-frequency capture. Risk: if $\sigma$ is too large, optimization becomes harder.

- SIREN ($\omega_0 = 30$): Best for problems where the solution has structure at many scales (e.g., turbulence, wave interference). Requires careful initialization but gives smooth, infinitely differentiable outputs at all frequencies.

Pathology B: spectral bias

Neural network training has a well-known bias: low frequencies are learned first, high frequencies last (Rahaman 2019; Tancik 2020). For PINNs the impact is especially severe because the PDE residual involves second derivatives, which amplify high-frequency error by $k^2$ — the worse the network is at high frequencies, the larger the residual, in a vicious circle.
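A one-line worked example shows the amplification. Suppose the network error contains a single mode $e(x)=\varepsilon\sin(kx)$ (an illustrative assumption). Then

$$e''(x)=-\varepsilon k^2\sin(kx),\qquad \|e''\|_\infty=k^2\,\|e\|_\infty,$$

so an error of amplitude $\varepsilon=10^{-3}$ at wavenumber $k=50$ contributes a residual of amplitude $2.5$ to a second-order operator, while the same amplitude at $k=1$ contributes only $10^{-3}$.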

Fixes:

- Fourier features: map $x$ first to $[\sin(2\pi Bx),\cos(2\pi Bx)]$ with a Gaussian random matrix $B$ ; this flattens the NTK spectrum.

- Sine activations (SIREN [7]): naturally distribute energy across the frequency domain, but require careful initialisation.

Pathology C: violation of causality

Time-dependent PDEs respect “the past determines the future”. But PINNs sample $\Omega\times[0,T]$ all at once, asking the network to fit $t=T$ before $t<T$ has been learned correctly. Krishnapriyan et al. (2021) [8] documented this failure mode on the convection equation.

The fix is causal training: split $[0,T]$ into time slices $t_1<\dots<t_n$ and weight the residual loss of slice $n$ by

$$w_n=\exp\Bigl(-\varepsilon\sum_{k<n}\mathcal L_r(t_k)\Bigr).$$

Late-time residuals are admitted into the loss only after early-time residuals have decayed.
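The weight formula is a shifted cumulative sum followed by an exponential. A minimal NumPy sketch; the function name `causal_weights` and the default `eps=1.0` are assumptions.

```python
import numpy as np

def causal_weights(slice_losses, eps=1.0):
    """w_n = exp(-eps * sum_{k<n} L_r(t_k)); the first time slice always gets weight 1."""
    losses = np.asarray(slice_losses, dtype=float)
    # Sum of the residual losses of all strictly earlier slices
    cum_before = np.concatenate([[0.0], np.cumsum(losses)[:-1]])
    return np.exp(-eps * cum_before)

# Early in training (large residuals everywhere) late slices are almost switched off;
# once the early slices are fitted, the weights approach 1.
w_early = causal_weights([5.0, 5.0, 5.0, 5.0])
w_late = causal_weights([1e-3, 1e-3, 1e-3, 1e-3])
```

In an autograd framework the weights would normally be treated as constants (detached from the graph) so that they gate the loss without being optimised themselves.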

Convergence comparison

Combining the fixes on a Burgers experiment:

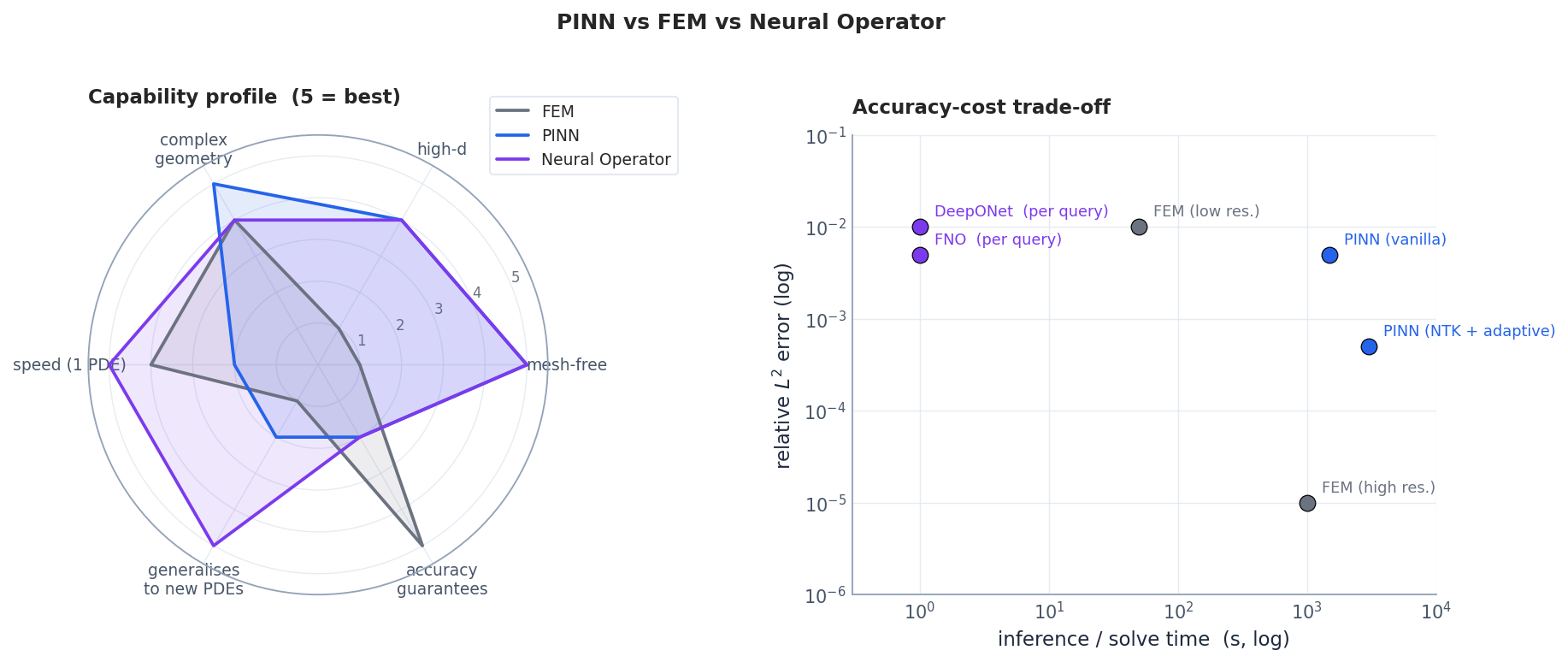

Method comparison: PINNs vs classical solvers vs neural operators

Before diving into experiments, let us position PINNs relative to alternatives. This table distills practical experience from the literature:

| Criterion | FDM | FEM | PINN | Neural Operator (FNO/DeepONet) |
|---|---|---|---|---|
| Mesh required | Yes (structured) | Yes (unstructured) | No | No (at inference) |
| Accuracy (smooth PDE) | $O(h^2)$–$O(h^4)$ | $O(h^{p+1})$ | ~$10^{-3}$–$10^{-4}$ | ~$10^{-2}$–$10^{-3}$ |
| Accuracy (singular/stiff) | Excellent with AMR | Excellent with adaptivity | Poor without tricks | Poor |
| Training/setup cost | Minutes | Minutes–hours | Hours–days | Hours (amortized over queries) |
| Inference cost | N/A (single solve) | N/A (single solve) | Milliseconds | Milliseconds |
| Dimension scaling | Curse of dimensionality | Curse of dimensionality | Graceful (mesh-free) | Graceful |
| Inverse problems | Requires adjoint code | Requires adjoint code | Native (add params to loss) | Requires fine-tuning |
| When to use | Low-dim, need precision | Complex geometry, need precision | High-dim, inverse, no mesh | Many-query (design, UQ) |

Rule of thumb: If you need $10^{-6}$ accuracy in 1D–3D with known geometry, use FEM. If you need fast parametric sweeps over many configurations, use neural operators. PINNs shine in the middle ground: inverse problems, high-dimensional PDEs, and situations where meshing is impractical.

Residual-based adaptive refinement (RAR)

A key trick to improve PINN accuracy without increasing total point count: concentrate collocation points where the PDE residual is large. This is the mesh-free analogue of adaptive mesh refinement (AMR).
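A minimal sketch of one RAR step that keeps the point count fixed by swapping the lowest-residual points for the highest-residual candidates. The function name `rar_refresh`, the unit-cube candidate pool, and the swap-instead-of-append policy are assumptions of this sketch; `residual_fn` stands for any callable that returns the pointwise PDE residual (in a PINN it would wrap the autograd residual and convert it to a NumPy array).

```python
import numpy as np

def rar_refresh(points, residual_fn, k, rng, n_candidates=2000):
    """Replace the k lowest-residual points by the k highest-residual candidates."""
    dim = points.shape[1]
    candidates = rng.uniform(0.0, 1.0, size=(n_candidates, dim))   # pool in [0,1]^dim
    worst = np.argsort(np.abs(residual_fn(candidates)))[-k:]       # largest residuals in the pool
    keep = np.argsort(np.abs(residual_fn(points)))[k:]             # drop the k best-fitted points
    return np.vstack([points[keep], candidates[worst]])

# Toy residual concentrated around x = 0.5, mimicking a sharp internal layer
rng = np.random.default_rng(0)
toy_residual = lambda p: np.exp(-100.0 * (p[:, 0] - 0.5) ** 2)
pts = rng.uniform(0.0, 1.0, size=(200, 2))
new_pts = rar_refresh(pts, toy_residual, k=20, rng=rng)
```

After the call the set still has 200 points, and the 20 new ones cluster around the layer at $x=0.5$.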

RAR typically improves accuracy by 3–10x for problems with localized features (shocks, boundary layers). When the new points replace old ones rather than being appended, the computational cost per epoch is unchanged.

Experiment: Burgers and an inverse problem

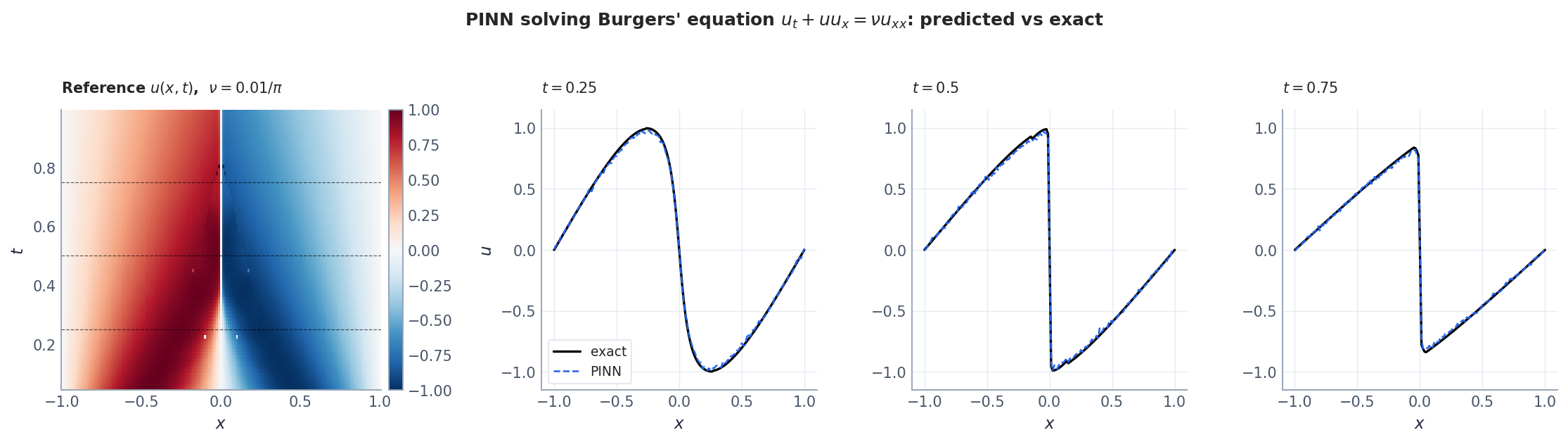

Forward problem: the Burgers shock

The benchmark is the viscous Burgers equation

$$ u_t+uu_x=\nu u_{xx},\quad x\in[-1,1],\ t\in[0,1],\quad u(\pm 1,t)=0,\ u(x,0)=-\sin(\pi x),\ \nu=\frac{0.01}{\pi}. $$

The reference solution is obtained via the Cole–Hopf transform $u=-2\nu(\ln\phi)_x$, which reduces Burgers to the heat equation (the script’s `burgers_cole_hopf` does exactly this). With $\nu$ this small, a near-discontinuous shock forms near $x=0$ from $t\approx 0.4$ onward.
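For readers without the script, a Cole–Hopf reference can be sketched in a few lines. This is an independent illustration, not the author's `burgers_cole_hopf`: the function name `burgers_reference`, the Gauss–Hermite quadrature, and the node count are assumptions. Solving the heat equation for $\phi$ with a Gaussian kernel gives $u$ as a ratio of two integrals against $e^{-\eta^2/(4\nu t)}$, which the substitution $\eta=2\sqrt{\nu t}\,z$ turns into Gauss–Hermite sums.

```python
import numpy as np

def burgers_reference(x, t, nu=0.01 / np.pi, n_quad=100):
    """Cole-Hopf solution of u_t + u u_x = nu u_xx with u(x,0) = -sin(pi x), for t > 0.

    u(x,t) = - int sin(pi(x-eta)) f(x-eta) K(eta) d eta / int f(x-eta) K(eta) d eta,
    with f(y) = exp(-cos(pi y) / (2 pi nu)) and K(eta) = exp(-eta^2 / (4 nu t)).
    """
    z, w = np.polynomial.hermite.hermgauss(n_quad)      # nodes/weights for weight e^{-z^2}
    x = np.atleast_1d(np.asarray(x, dtype=float))
    eta = 2.0 * np.sqrt(nu * t) * z                      # substitution eta = 2 sqrt(nu t) z
    y = x[:, None] - eta[None, :]
    # f is rescaled by the constant exp(-1/(2 pi nu)), which cancels in the ratio
    f = np.exp(-(np.cos(np.pi * y) + 1.0) / (2.0 * np.pi * nu))
    num = np.sum(w * np.sin(np.pi * y) * f, axis=1)
    den = np.sum(w * f, axis=1)
    return -num / den

xs = np.linspace(-1.0, 1.0, 41)
u_half = burgers_reference(xs, 0.5)   # profile after the shock has formed
```

The accuracy near the shock depends on the number of quadrature nodes, so `n_quad` should be increased and checked for convergence before using the values as ground truth.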

Practical recipe:

- An MLP of 8 hidden layers $\times$ 20 units with tanh activations is plenty — do not blindly stack depth.

- Residual collocation $N_r\sim 10^4$. Use residual adaptive refinement (RAR): every few hundred steps, evaluate $|\mathcal N[u_\theta]|$ on a candidate pool and add the top-$k$ points to the training set.

- Run Adam at 1e-3 for 20k steps to settle, then switch to L-BFGS for 5k more to polish.

- Normalise. Map $(x,t)$ to $[-1,1]$ — otherwise the NTK is biased.
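The Adam-then-L-BFGS schedule from the recipe can be sketched as below. The function name `adam_then_lbfgs` and the `loss_fn` callable are assumptions; `torch.optim.LBFGS` needs a closure that re-evaluates the loss, and in this sketch the L-BFGS budget is passed as `max_iter` of a single `step` call. For the L-BFGS phase `loss_fn` should be deterministic, which in a PINN means a fixed collocation set.

```python
import torch

def adam_then_lbfgs(params, loss_fn, adam_steps=20000, lbfgs_steps=5000, lr=1e-3):
    """Run Adam to settle, then polish with L-BFGS. Returns the final loss value."""
    params = list(params)
    adam = torch.optim.Adam(params, lr=lr)
    for _ in range(adam_steps):
        adam.zero_grad()
        loss_fn().backward()
        adam.step()

    lbfgs = torch.optim.LBFGS(params, max_iter=lbfgs_steps, line_search_fn="strong_wolfe")

    def closure():
        # L-BFGS may evaluate the loss several times per iteration
        lbfgs.zero_grad()
        loss = loss_fn()
        loss.backward()
        return loss

    lbfgs.step(closure)
    return loss_fn().item()
```

For a PINN one would pass `model.parameters()` and a function that returns the total loss on the current training points.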

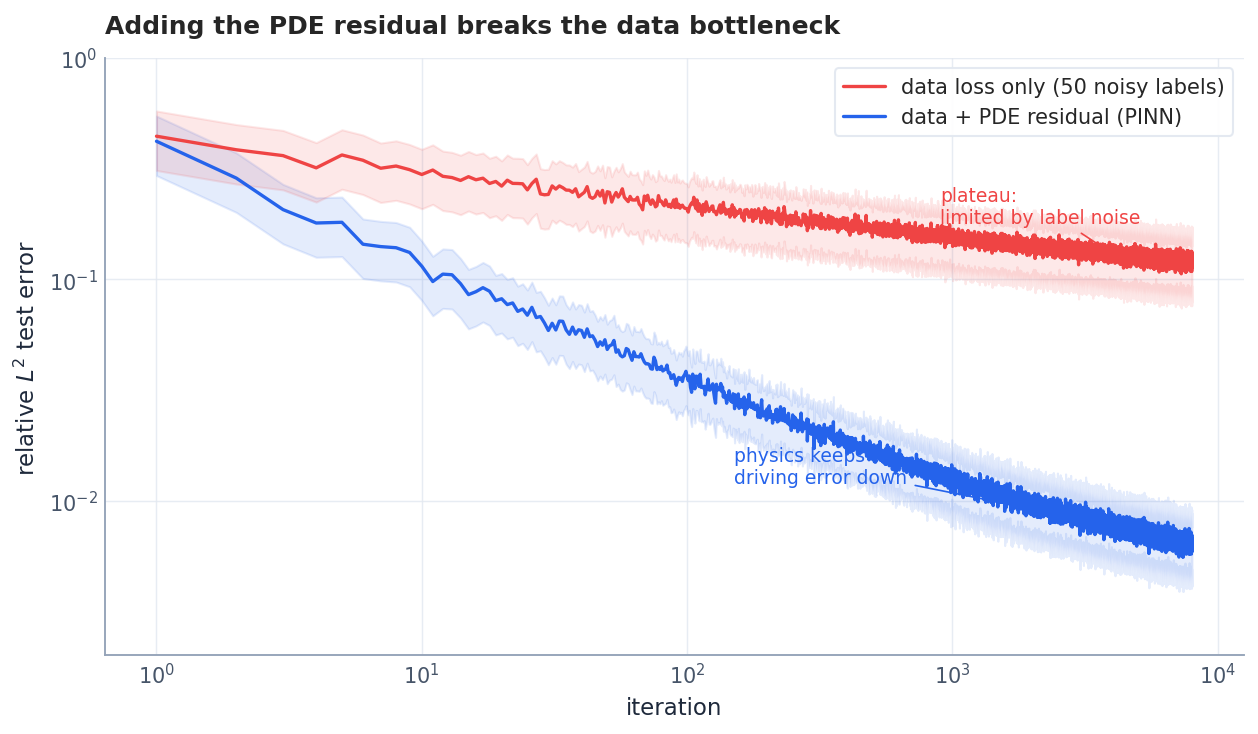

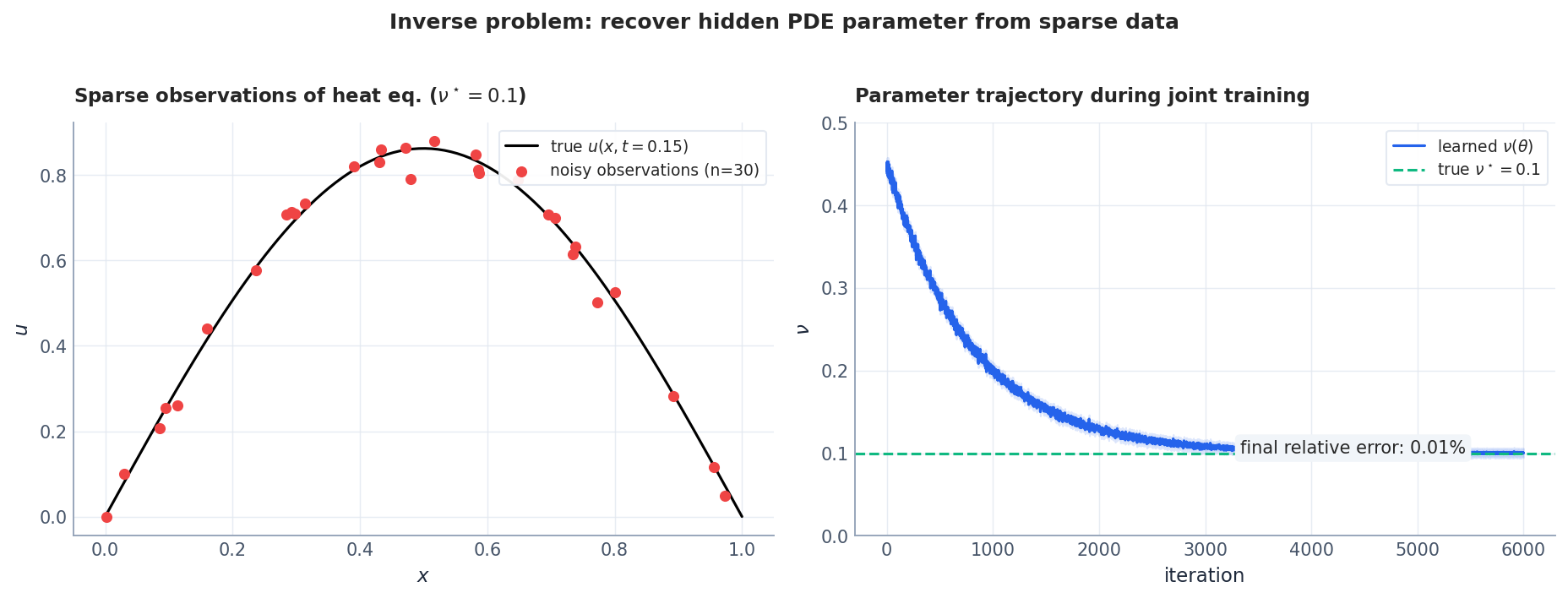

Inverse problem: parameter discovery

Forward problems are unremarkable; inverse problems are where PINNs shine. Append $\mathcal L_d$ to fit sparse observations and treat the unknown PDE parameter $\nu$ as a learnable scalar that joins the gradient descent.

Why is PINN so good at inverse problems? Classical inverse problems require nested optimisation — outer loop on parameters, inner loop on the PDE solver; every parameter change triggers a full forward solve. PINNs put “satisfies the PDE” into the loss, so parameter and $\theta$ live on the same gradient — no outer loop. The price is uncertainty quantification: ensembles or Bayesian PINNs, not a textbook MCMC + FEM stack.

Complete inverse problem: recovering the diffusion coefficient

One of the most compelling PINN applications is parameter discovery – recovering unknown PDE coefficients from sparse, noisy observations. Here we recover the diffusion coefficient $\nu$ in the heat equation from only 50 noisy measurements.

The setup: we observe $u(x_i, t_i) + \epsilon_i$ at scattered points, where $\epsilon_i \sim \mathcal{N}(0, 0.01^2)$ . The true $\nu = 0.01$ is unknown and treated as a trainable parameter.
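A minimal PyTorch sketch of this setup, under stated assumptions: synthetic observations are generated from the exact solution $e^{-\nu\pi^2 t}\sin(\pi x)$, the network is a small tanh MLP, the initial guess is $\nu=0.1$, and the loss uses only the PDE residual plus the data term. The names `make_data` and `train_inverse` are hypothetical; the log-parameterisation, the weight 100.0 on the data loss, and the observation window $t\in[0,0.5]$ follow the text.

```python
import math
import torch

def make_data(n_obs=50, nu_true=0.01, noise=0.01, seed=0):
    """Noisy samples of the exact heat-equation solution, observed only for t in [0, 0.5]."""
    g = torch.Generator().manual_seed(seed)
    x = torch.rand(n_obs, 1, generator=g)
    t = 0.5 * torch.rand(n_obs, 1, generator=g)
    u = torch.exp(-nu_true * math.pi**2 * t) * torch.sin(math.pi * x)
    return x, t, u + noise * torch.randn(n_obs, 1, generator=g)

def train_inverse(steps=10000, lr=1e-3, seed=0):
    torch.manual_seed(seed)
    net = torch.nn.Sequential(
        torch.nn.Linear(2, 32), torch.nn.Tanh(),
        torch.nn.Linear(32, 32), torch.nn.Tanh(),
        torch.nn.Linear(32, 1),
    )
    # Unknown coefficient, optimised as log(nu) so that nu = exp(log_nu) stays positive
    log_nu = torch.nn.Parameter(torch.tensor(math.log(0.1)))   # assumed initial guess
    opt = torch.optim.Adam(list(net.parameters()) + [log_nu], lr=lr)
    x_d, t_d, u_d = make_data(seed=seed)
    for _ in range(steps):
        opt.zero_grad()
        # PDE residual on the full domain [0,1] x [0,1]
        x = torch.rand(512, 1, requires_grad=True)
        t = torch.rand(512, 1, requires_grad=True)
        u = net(torch.cat([x, t], dim=1))
        ones = torch.ones_like(u)
        u_t = torch.autograd.grad(u, t, ones, create_graph=True)[0]
        u_x = torch.autograd.grad(u, x, ones, create_graph=True)[0]
        u_xx = torch.autograd.grad(u_x, x, ones, create_graph=True)[0]
        loss_pde = (u_t - torch.exp(log_nu) * u_xx).pow(2).mean()
        # Data misfit on the sparse observations
        loss_data = (net(torch.cat([x_d, t_d], dim=1)) - u_d).pow(2).mean()
        loss = loss_pde + 100.0 * loss_data
        loss.backward()
        opt.step()
    return net, torch.exp(log_nu).item()
```

`train_inverse()` returns the trained network together with the current estimate of $\nu$; how close that estimate gets to the true value depends on the noise level, the number of steps, and the weighting.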

Key implementation details for inverse problems:

- Log-parameterization (`log_nu`): We optimize $\log \nu$ and exponentiate to ensure positivity. This also improves gradient flow when the true value is small.

- High data weight: The coefficient 100.0 on `loss_data` ensures the network fits observations tightly. Without it, the PDE loss dominates and $\nu$ may not converge.

- Observation window: We only observe $t \in [0, 0.5]$ but enforce the PDE on the full domain. The PDE acts as a physics-informed regularizer that extrapolates beyond the data.

Failure modes and limits

PINNs are not silver bullets. Common industrial pitfalls:

| failure mode | cause | state of the art |
|---|---|---|
| high-frequency / multi-scale (turbulence) | spectral bias + 2nd-derivative amplification | partially mitigated by Fourier features and cPINN domain decomposition |
| near-discontinuities (shocks, phase transitions) | NN bias toward continuous functions | conservative PINN, weak-form PINN |
| long-time integration | causality violation, error accumulation | causal training, time partitioning |
| ill-conditioning (non-unique weak solutions) | non-convex loss landscape | priors, ensembles |
| coupled multi-physics | scale mismatch across loss terms | adaptive weighting, multi-task learning |
| high dimensions ($d>10$) | residual sampling curse | progressive sampling, quasi-MC |

A practitioner’s rule of thumb: complex geometry, high dimensions, parameter inversion required → PINN; rigorous accuracy guarantees, regular geometry, fixed parameters → FEM / spectral; many online queries of the same parametric PDE family → neural operator.

PINNs on the SciML map

Selection mnemonic:

- FEM — classical, accuracy-guaranteed, regular geometry. Industrial-scale simulation still rests on FEM.

- PINN — a Swiss-army knife, especially for inverse problems and prior-fusion research questions.

- Neural operators (chapter 2) — for “same PDE family, varying input function, repeatedly queried” applications: weather, semiconductor simulation, financial pricing.

PINNs do not aim to replace FEM. Their real role is to make prior physics a first-class citizen of deep-learning model design. That theme will run through every subsequent chapter.

Handing off to the next chapters

Reading PINN as “constraint embedded in the loss function” makes the rest of the series fall into place:

- Chapter 2 — Neural operators. From “learn one solution” to “learn the solution operator $\mathcal G:f\mapsto u$ ”.

- Chapter 3 — Variational principles. Replace the residual loss with an energy functional — more stable, closer to the FEM theory.

- Chapter 4 — Variational inference. Push the Fokker–Planck equation into the loss for consistent probabilistic inference.

- Chapter 5 — Symplectic geometry. Encode Hamiltonian conservation directly in the architecture — the extreme case of hard constraints.

- Chapter 6 — Neural ODE / CNF. Replace “residual = 0” with “divergence of the flow = 0”.

- Chapter 7 — Diffusion models / score matching. Run PINN backwards: given data, recover the stochastic process whose Fokker–Planck equation it satisfies.

- Chapter 8 — Reaction-diffusion + GNN. Swap the MLP for a graph network to handle mesh-shaped geometry.

After this chapter, you should be able to give the one-sentence answer:

A PINN is a mesh-free PDE solver that uses neural networks as universal basis functions, treats the PDE residual as a loss function, and uses automatic differentiation to evaluate high-order derivatives to machine precision; its bottleneck is training dynamics — the gradient pathology and spectral bias revealed by NTK analysis — not expressive power.

✅ Checkpoint

- Write down what each of the three terms in the PINN loss represents.

- Explain why NTK scale mismatches cause boundary conditions to be ignored during training.

- Name at least two engineering fixes for spectral bias.

- Given an inverse problem, sketch the code that puts the unknown parameter into the computation graph.

- When should you choose PINN over FEM? Over a neural operator?

What’s next

The handful of core ideas in this series (PDE residual as loss, operators on function spaces, Wasserstein geometry, symplectic structure, scores, diffusion) recur from chapter to chapter. If a section stalls you, jot the question down and keep reading; the next chapter usually re-explains it from a different angle.

The fastest sanity check on your own understanding is to run this chapter’s equation on a minimal example: a 1-D heat equation, a single pendulum, a 2-D Gaussian mixture. The code is short, but it converts “looks right” into “it’s right on my machine.”

Series Navigation

| part | topic |
|---|---|
| 1 | Physics-Informed Neural Networks (this article) |
| 2 | Neural Operator Theory |
| 3 | Variational Principles and Optimisation |
| 4 | Variational Inference and the Fokker–Planck Equation |
| 5 | Symplectic Geometry and Structure-Preserving Networks |
| 6 | Continuous Normalising Flows and Neural ODE |
| 7 | Diffusion Models and Score Matching |
| 8 | Reaction-Diffusion Systems and GNN |

References

[1] M. Raissi, P. Perdikaris, G. E. Karniadakis. Physics-Informed Neural Networks: A Deep Learning Framework for Solving Forward and Inverse Problems Involving Nonlinear Partial Differential Equations. J. Comput. Phys., 378:686–707, 2019. doi:10.1016/j.jcp.2018.10.045

[2] I. E. Lagaris, A. Likas, D. I. Fotiadis. Artificial Neural Networks for Solving Ordinary and Partial Differential Equations. IEEE TNN, 9(5):987–1000, 1998.

[3] W. E, B. Yu. The Deep Ritz Method. Commun. Math. Stat., 6(1):1–12, 2018. arXiv:1710.00211

[4] Y. Shin, J. Darbon, G. E. Karniadakis. On the Convergence of Physics-Informed Neural Networks for Linear Second-Order Elliptic and Parabolic Type PDEs. Commun. Comput. Phys., 28(5):2042–2074, 2020. arXiv:2004.01806

[5] S. Wang, Y. Teng, P. Perdikaris. Understanding and Mitigating Gradient Flow Pathologies in Physics-Informed Neural Networks. SIAM J. Sci. Comput., 43(5):A3055–A3081, 2021. arXiv:2001.04536

[6] S. Wang, X. Yu, P. Perdikaris. When and Why PINNs Fail to Train: A Neural Tangent Kernel Perspective. J. Comput. Phys., 449:110768, 2022. arXiv:2007.14527

[7] V. Sitzmann et al. Implicit Neural Representations with Periodic Activation Functions (SIREN). NeurIPS 2020. arXiv:2006.09661

[8] A. Krishnapriyan et al. Characterizing Possible Failure Modes in Physics-Informed Neural Networks. NeurIPS 2021. arXiv:2109.01050
