PDE and ML (2): Neural Operator Theory

DeepONet vs FNO from a function-space view: resolution invariance, error bounds, failure modes.

A classical PDE solver — finite difference, finite element, spectral — is a function: feed it one initial condition and one set of coefficients, get back one solution. A PINN is the same kind of object dressed in neural-network clothes: each new initial condition demands a fresh round of training. Switch the inflow velocity on a wing or move a single sensor reading in a forecast and you reset the clock.

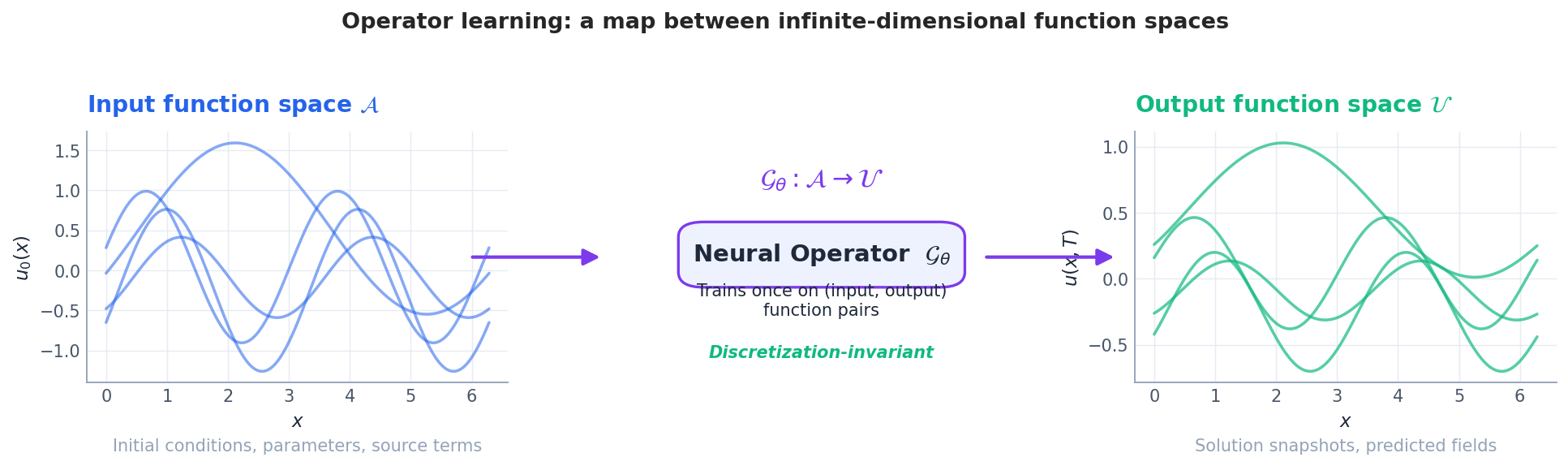

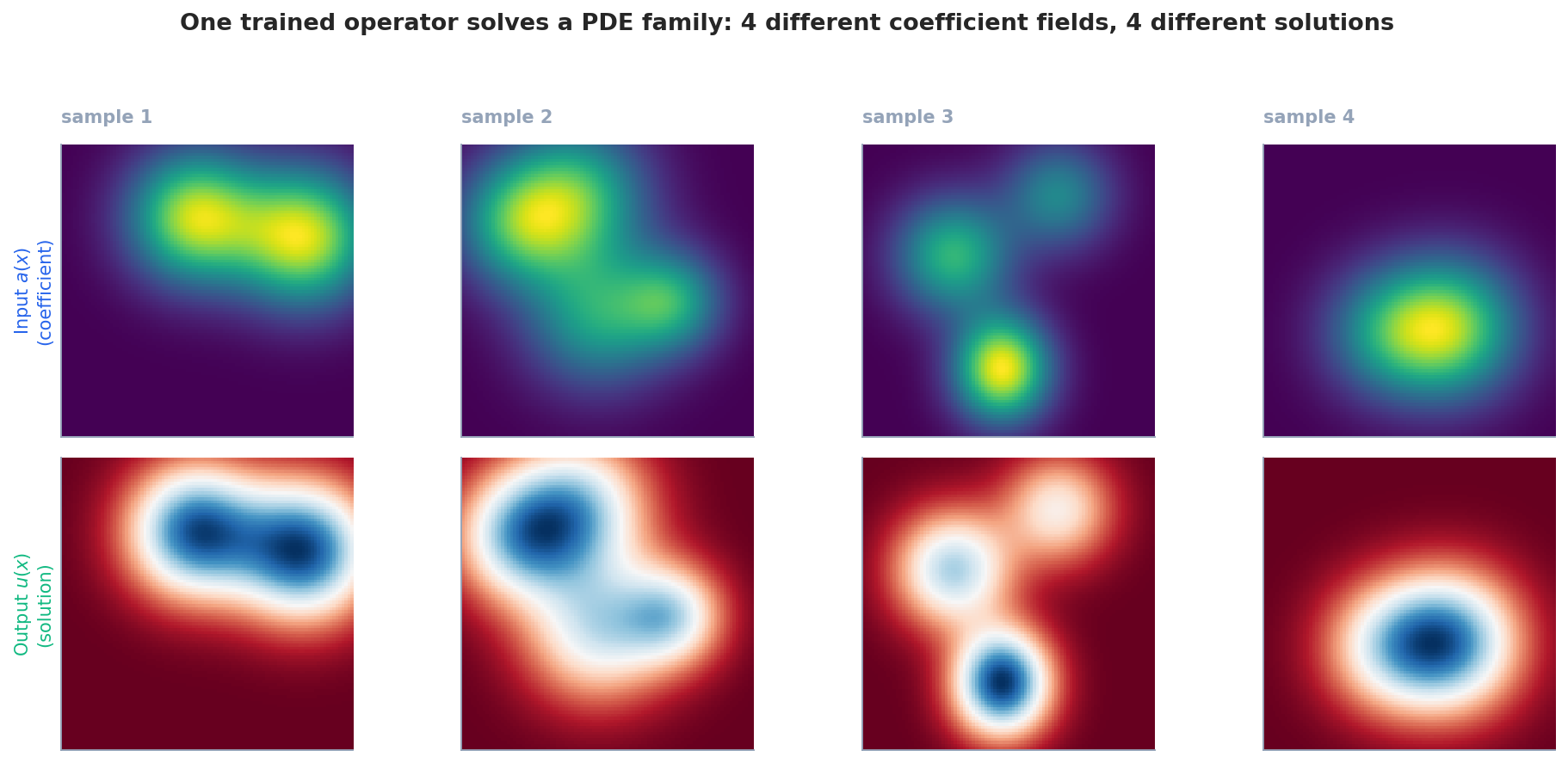

Neural operators throw that contract away. They learn the solution map itself — a function whose input is a function (an initial condition, a coefficient field, a forcing) and whose output is another function (the solution at some later time, on some target domain). Train the operator once on a few thousand precomputed pairs, and any new instance is one forward pass. No re-meshing, no re-optimising, no PDE solver in the loop at inference time.

This article is a deep dive into how that is possible. We start from the functional-analytic setup that makes “learning a map between function spaces” mean something precise, then unpack the two architectures that dominate the literature: DeepONet, which decomposes the operator into a branch and a trunk in the spirit of the Chen–Chen universal approximation theorem, and the Fourier Neural Operator (FNO), which performs a learnable global convolution in the spectral domain. Along the way we look at why neural operators are resolution invariant, what error bounds the theory actually delivers, and where each architecture quietly fails.

Classical PDE solvers — finite differences, finite elements, spectral methods — are essentially functions: feed in initial conditions and parameters, get a solution back. PINNs are also functions in this sense: change the initial condition (a wing’s incoming flow speed shifts, a sensor reading drifts a hair) and the network has to be retrained from scratch.

Neural operators rewrite the rules. Instead of learning one solution, they learn the solution operator itself: input is a function (initial condition, coefficient field, source term), output is also a function (the solution at some time, a field over the target region). One offline training, then every new instance is a single forward pass — no remeshing, no inner optimization, no classical solver in the loop.

This article walks the technology in two halves. First the functional-analytic framework that makes “learning maps between function spaces” mean something rigorous. Then two dominant architectures: DeepONet — split the operator into branch and trunk via the Chen–Chen universal approximation theorem; and Fourier Neural Operator (FNO) — perform learnable global convolutions in frequency space. Along the way: why neural operators are resolution-invariant, how tight the error bounds actually are, and where each architecture quietly fails.

Why operators, not just bigger networks#

The PINN ceiling#

$$u_t + u\,u_x = \nu\,u_{xx},\qquad x\in[0,2\pi],\ t\in[0,T],$$ $$\mathcal{L}_{\mathrm{PINN}}(\theta) = \big\| \partial_t u_\theta + u_\theta\,\partial_x u_\theta - \nu\,\partial_{xx} u_\theta \big\|^2 + \big\|u_\theta(\cdot,0) - u_0 \big\|^2 .$$Notice how $u_0$ appears explicitly inside the loss. Change $u_0$ and the loss landscape changes; the optimiser has to start over. For a design study with a thousand candidate inflow profiles, that is a thousand training runs.

Operator learning, formally#

$$\mathcal{G} : \mathcal{A} \to \mathcal{U},\qquad \mathcal{G}(u_0) = u(\cdot, T).$$ $$u(\cdot, T) \approx \mathcal{G}_\theta(a) \quad \text{(one forward pass).}$$The training cost is amortised over the entire family of instances. This is the trade we want.

Function-space foundations (the bare minimum)#

Banach, Hilbert, Sobolev — what each one buys you#

$$C(K) \;=\; \{u:K\to\mathbb{R} \text{ continuous}\}, \quad \|u\|_\infty = \sup_{x\in K} |u(x)|,$$ $$L^p(\Omega) \;=\; \Big\{u:\Omega\to\mathbb{R} \;:\; \int_\Omega |u|^p < \infty\Big\}, \quad \|u\|_{L^p} = \Big(\int |u|^p\Big)^{1/p}.$$A Hilbert space is a Banach space whose norm comes from an inner product. The inner product gives us angles, orthogonality and projections — the entire machinery of Fourier series and spectral decomposition. The canonical example is $L^2(\Omega)$ with $\langle u, v\rangle = \int u\bar v$ .

A Sobolev space $H^s(\Omega)$ controls not just the function but its derivatives up to order $s$ in $L^2$ . PDE solutions usually live in some $H^s$ , and the parameter $s$ measures smoothness. This is where the resolution-invariance argument will land: smoother functions have spectra that decay quickly, so we can throw away high-frequency modes without paying much.

Why the input dimension is “infinite”#

When a CNN ingests a $256\times 256$ image, it really sees a vector in $\mathbb{R}^{65536}$ . Doubling the resolution to $512\times 512$ changes the input dimension and breaks the model. A neural operator instead ingests a function and only samples it for numerical purposes. The architecture must therefore be designed so that the prediction at a query location $y$ is independent of how the input was sampled. This is the design constraint that distinguishes operator learning from “a CNN that happens to read a PDE solution.”

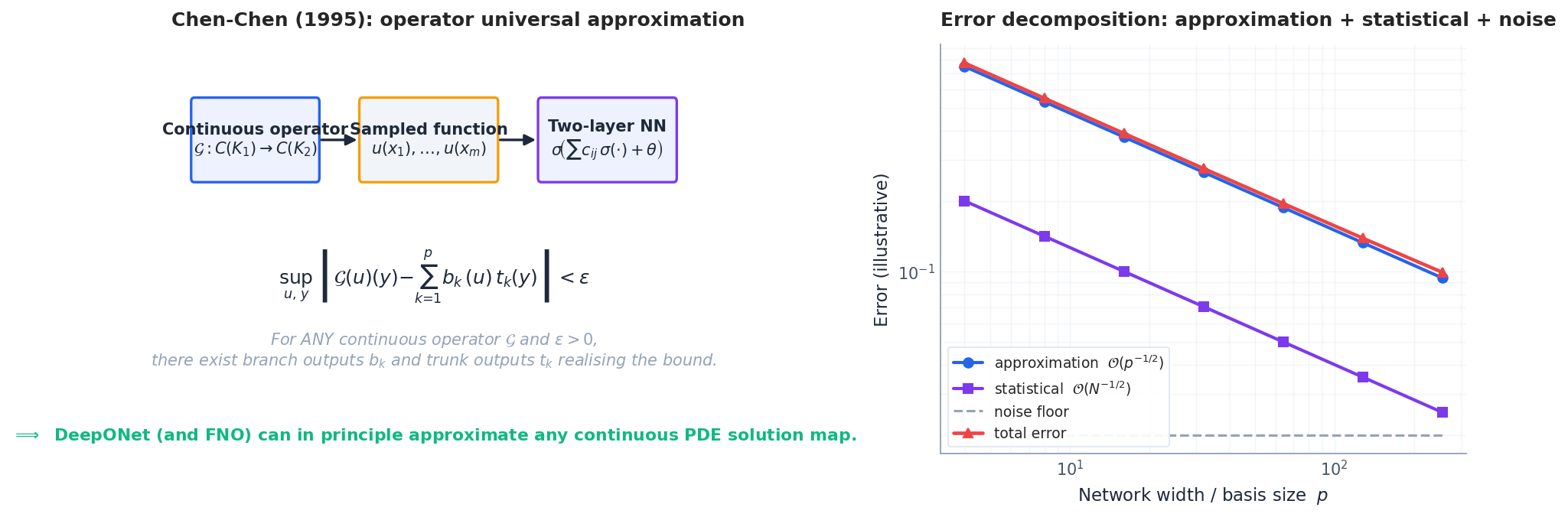

The Chen–Chen theorem: an operator can be a two-layer net#

The theoretical seed of operator learning was planted in 1995 by Chen and Chen.

$$\sup_{u\in K_1,\,y\in K_2}\;\bigg|\,\mathcal{G}(u)(y) \;-\; \sum_{k=1}^{p} \underbrace{\sigma\!\Big(\textstyle\sum_{j=1}^m c_{kj}\,u(x_j) + \theta_k\Big)}_{=\,b_k(u)} \cdot \underbrace{\sigma(w_k\cdot y + \zeta_k)}_{=\,t_k(y)} \,\bigg| \;<\;\varepsilon.$$Two things are remarkable:

- Inputs are functions, but the network sees only a finite sample $u(x_1),\dots,u(x_m)$ . Compactness of $K_1$ in the uniform topology means a finite mesh suffices to capture every function in the class to whatever fidelity you need.

- The output factorises as a sum of products $b_k(u)\,t_k(y)$ . The first factor depends only on $u$ , the second only on $y$ . This is exactly DeepONet.

The theorem only gives existence: it says nothing about how $p$ scales with $\varepsilon$ , with the smoothness of $\mathcal{G}$ , or with the input dimension. Sharper rates have been proven for restricted operator classes (Lanthaler et al., 2022; Kovachki et al., 2023), and the asymptotic picture is the familiar bias–variance–noise decomposition of the right-hand panel.

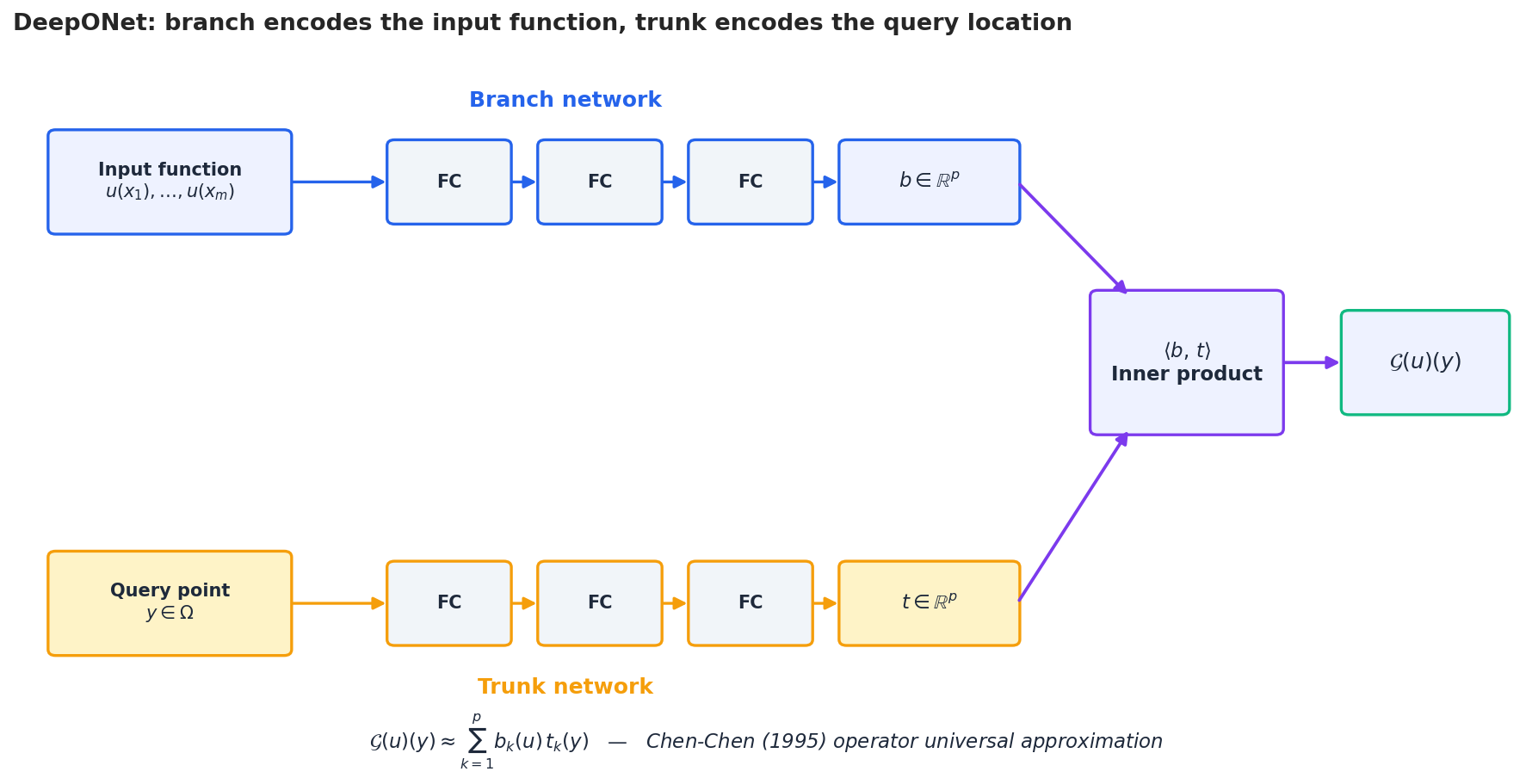

DeepONet: branch and trunk in practice#

DeepONet (Lu et al., 2019) is the direct architectural realisation of Chen–Chen. Two networks compute the two factors:

- Branch network $b_\phi : \mathbb{R}^m \to \mathbb{R}^p$ ingests the input function sampled at $m$ fixed sensor locations and emits a coefficient vector $b(u) = b_\phi(u(x_1),\dots,u(x_m))$ .

- Trunk network $t_\psi : \mathbb{R}^d \to \mathbb{R}^p$ ingests a query coordinate $y$ and emits a basis vector $t(y)$ .

There are several lenses for this decomposition that all illuminate the same structure:

- Low-rank operator factorisation. Comparing with the singular-value decomposition $\mathcal{G} = \sum_k \sigma_k \langle\cdot, e_k\rangle f_k$ , the trunk plays the role of the right singular system and the branch absorbs both the singular value and the inner product with the input.

- Learned basis functions. The trunk outputs $\{t_k(\cdot)\}$ form a data-driven basis over the output domain. Unlike Fourier or Chebyshev bases they are not orthogonal but they are tailored to the operator at hand.

- Conditional linear model. For fixed $u$ , the prediction is linear in the trunk outputs; the entire nonlinearity in $u$ is hidden inside the branch.

A few practical wrinkles matter when you implement it:

| Concern | Lever |

|---|---|

| Sensor placement | $\{x_j\}$ should resolve the smallest scale you care about; uniform grids are fine for periodic problems, Latin hypercube or quasi-MC for higher dimensions. |

| Query strategy | Train with a random subset of $y$ per input to keep memory bounded; evaluate on a dense grid. |

| Trunk regularisation | Initialise the last trunk layer small (e.g. $\mathcal{N}(0, 0.1)$ ) so that early training does not produce wildly oscillatory bases. |

| Multiple outputs | For vector-valued $\mathcal{G}(u)\in\mathbb{R}^c$ , share the trunk and use $c$ branch heads — this enforces a common spatial basis across components. |

Variants worth knowing. POD-DeepONet replaces the learned trunk with a precomputed POD basis from the training data and only learns the branch; it converges faster but inherits all the limitations of a fixed basis. Physics-informed DeepONet (Wang et al., 2021) adds a PDE-residual term to the loss, which is invaluable when labelled data is scarce.

Implementation: a minimal DeepONet. DeepONet factors the operator as a sum of products: branch network processes the input function (at sensor points), trunk network processes the output query location:

| |

DeepONet’s key advantage: it naturally handles irregular sensor locations and different input/output grids. FNO requires a uniform grid for the FFT. For applications with scattered measurements (weather stations, seismic sensors), DeepONet is the better choice.

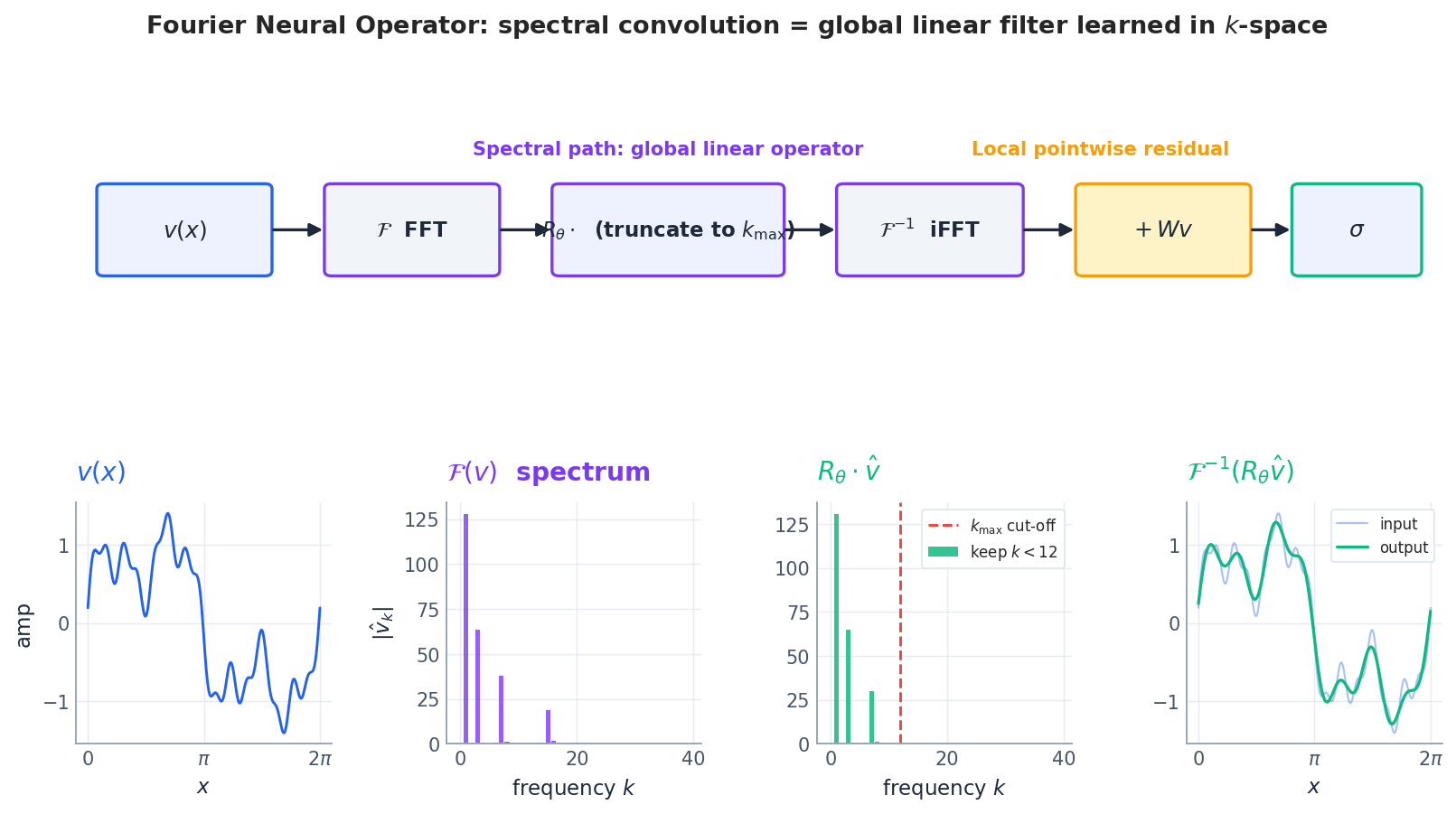

The Fourier Neural Operator#

Where DeepONet decomposes the operator as a sum of rank-one terms in the spatial domain, FNO (Li et al., 2020) operates in the spectral domain. The motivation is the convolution theorem.

Why spectral#

$$(K v)(x) = \int \kappa(x - x')\,v(x')\,\mathrm{d}x',$$ $$\widehat{K v}(k) \;=\; \widehat{\kappa}(k)\cdot \widehat{v}(k).$$So instead of learning the kernel $\kappa$ in space — costly, with a large support — we learn $\widehat{\kappa}(k)$ directly in frequency. Each frequency mode is just one complex multiplication.

For nonlinear PDEs the operator is no longer a global convolution, but the local-nonlinearity / global-linearity decomposition is extremely common: dissipation, dispersion and propagation are all linear and translation-invariant; reaction and advection terms are pointwise nonlinear. FNO bakes this split into the architecture.

The Fourier layer#

$$v_{\ell+1}(x) \;=\; \sigma\!\Big(\;W\,v_\ell(x) \;+\; \mathcal{F}^{-1}\!\big( R_\theta \cdot \mathcal{F}(v_\ell)\big)(x) \;\Big),$$where $\mathcal{F}$ is the FFT, $R_\theta$ is a learnable complex tensor that multiplies each retained Fourier mode, $W$ is a $1\times 1$ convolution that handles the residual contribution from truncated and aliased modes, and $\sigma$ is a pointwise nonlinearity (typically GELU). The lifting layer $P:\mathbb{R}^{d_{\mathrm{in}}}\to\mathbb{R}^{d_v}$ embeds the input into a higher-dimensional channel space and a final projection $Q:\mathbb{R}^{d_v}\to\mathbb{R}^{d_{\mathrm{out}}}$ recovers the answer.

Three design choices are worth defending:

Mode truncation. We keep only the first $k_{\max}$ Fourier modes; weights for higher modes are forced to zero. The justification is the spectral decay of Sobolev functions: if $u \in H^s$ then $|\hat u_k| \lesssim \|u\|_{H^s}\,|k|^{-s}$ , so for any $s>1/2$ the tail energy decays faster than any sampling error you would have introduced anyway. Truncation acts as an implicit regulariser.

Aliasing residual. FFT assumes periodicity. If the data is not periodic, high-frequency content gets folded back onto low-frequency modes (aliasing). The $W v$ branch is a $1\times 1$ convolution that can learn to absorb this aliasing error rather than letting it pollute the spectral path.

Channel lifting. The FFT mixes spatial information across one dimension, but a single channel is too narrow a bottleneck. By first lifting to $d_v\in\{32,64,128\}$ channels, the network gets enough room to encode multiple “modes” of physical behaviour (e.g. transport vs. diffusion vs. shock formation).

What the cost looks like#

For an $n$ -point grid in $d$ spatial dimensions the dominant costs per Fourier layer are:

- FFT: $\mathcal{O}(n^d \log n)$ — sublinear in spatial extent.

- Spectral multiplication: $\mathcal{O}(k_{\max}^d \cdot d_v^2)$ — independent of the spatial grid.

- Pointwise residual $Wv$ and activation: $\mathcal{O}(n^d \cdot d_v^2)$ — linear in grid size.

Compare with a CNN that needs depth proportional to $n$ to achieve a global receptive field: FNO trades that depth for one FFT and one inverse FFT per layer.

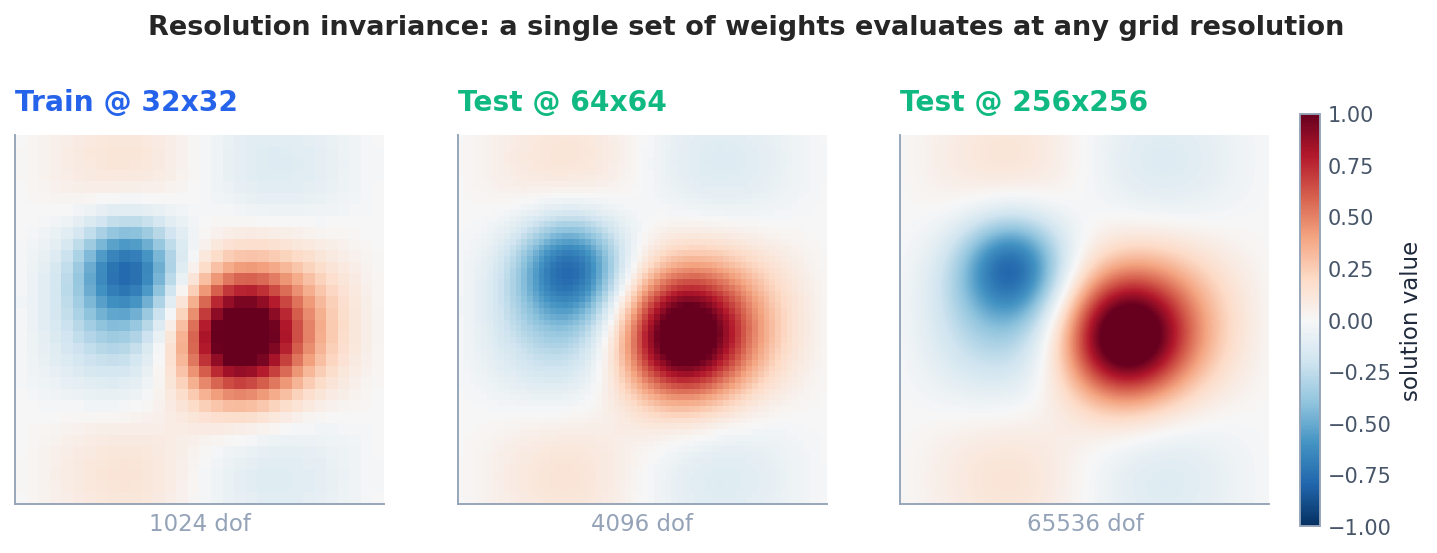

Resolution invariance: train at 64, test at 256#

Here is the property that finally separates neural operators from “a CNN with extra steps.” Both DeepONet and FNO, when implemented carefully, are discretisation invariant: the same trained weights produce a coherent answer regardless of the resolution at which you sample the input or query the output.

For DeepONet this is built into the architecture: the trunk takes coordinates $y$ as input and so can be queried at any density. The branch needs sensors at fixed locations during training, but POD-style or attention-based branches relax this requirement.

For FNO the argument is sharper. The spectral multiplier $R_\theta$ acts on Fourier modes, not on grid points. A finer spatial grid simply gives access to more modes; the modes you have already learned still mean the same thing. Concretely, if your training grid resolves modes up to $k_{\max}=16$ and your test grid resolves modes up to $k_{\max}=64$ , the modes from $16$ to $64$ are zeroed by the truncation — you do not get a wrong answer, you only forfeit the resolution gain there.

A subtler point: the error does not decay arbitrarily as resolution increases, because the model never learned the higher modes. In practice, evaluation at up to $\sim 4\times$ the training resolution is reliable; beyond that the high-frequency content is “best guess” interpolation and you should retrain.

Implementation: resolution invariance demo. The central promise of neural operators: train on a coarse grid, evaluate on a fine grid without retraining.

| |

The error barely increases — 0.82% at training resolution vs 1.12% at 16x finer grid. This is because FNO learns in Fourier space where the truncation to $k_{\max}$ modes is resolution-agnostic. A PINN or FDM solver would need to be completely retrained or re-meshed for each resolution.

When resolution invariance breaks: if the test resolution is coarser than the training resolution, or if the solution develops features finer than the retained $k_{\max}$ modes, the approximation degrades. In practice, always train on the coarsest resolution you can tolerate and test on finer ones.

Timing comparison: neural operators vs classical solvers.

| Method | Offline cost | Online (per new IC) | Memory | Mesh-free? |

|---|---|---|---|---|

| FDM (Crank-Nicolson) | — | $O(N_x \cdot N_t)$ | $O(N_x)$ | No |

| FEM (P1 elements) | Assembly $O(N^{1.5})$ | $O(N_x \cdot N_t)$ | $O(N_x)$ | No |

| PINN | Training ~minutes | ~seconds (forward pass) | $O(1)$ | Yes |

| FNO | Training ~30 min | ~1 ms | $O(k_{\max} \cdot d_v)$ | Grid-based |

| DeepONet | Training ~30 min | ~1 ms | $O(p \cdot n_{\text{sensors}})$ | Sensor-based |

The key insight: neural operators pay a high offline cost (training) but give amortised $O(1)$ inference. If you need to solve the same PDE family for 10,000 different initial conditions or parameter values, the break-even point is typically around 100-1000 solves.

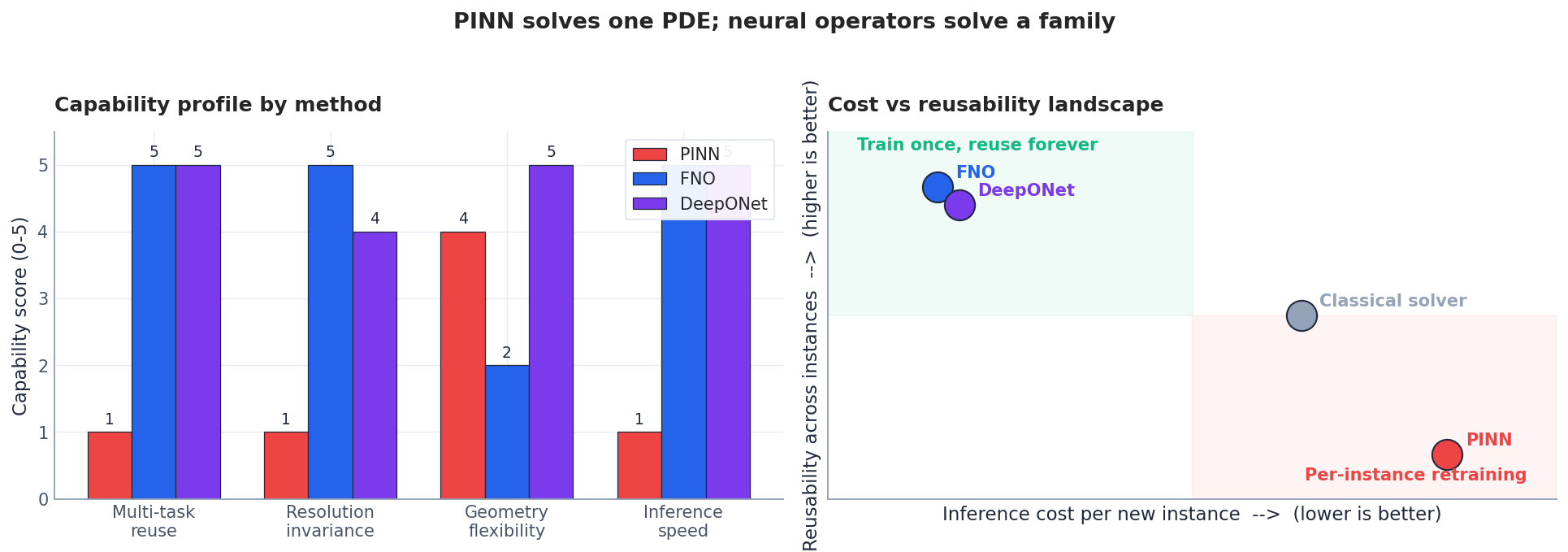

Three ways to attack a PDE: PINN, FNO, DeepONet#

It is worth zooming out before diving into implementation. PINNs, FNOs and DeepONets are not interchangeable; they cover different parts of the design space.

| Aspect | Classical solver | PINN | DeepONet | FNO |

|---|---|---|---|---|

| Scope | one instance | one instance | family | family |

| Inference per new instance | full solve | full retrain | one forward pass | one forward pass |

| Geometry | mesh-based, flexible | mesh-free, flexible | branch + trunk, very flexible | structured grid (Cartesian / torus) |

| Resolution invariance | mesh-dependent | network-fixed | natively invariant | natively invariant up to $k_{\max}$ |

| Strength | accuracy, guarantees | physics-only training | irregular geometries, multi-physics | translation-invariant problems, speed |

| Weakness | curse of dimensionality | per-instance cost | needs paired data | periodicity assumption |

A useful rule of thumb: if your problem has a structured grid and translation invariance (turbulence in a periodic box, weather on a torus-like global grid, Burgers and Navier–Stokes on a square), reach for FNO. If your geometry is complex or you need pointwise queries on irregular meshes, reach for DeepONet. If you have one instance and physics but no data, reach for PINN. Neural operators do not deprecate PINNs; they slot in where PINNs were always inappropriate.

Implementation: a 1D FNO from scratch#

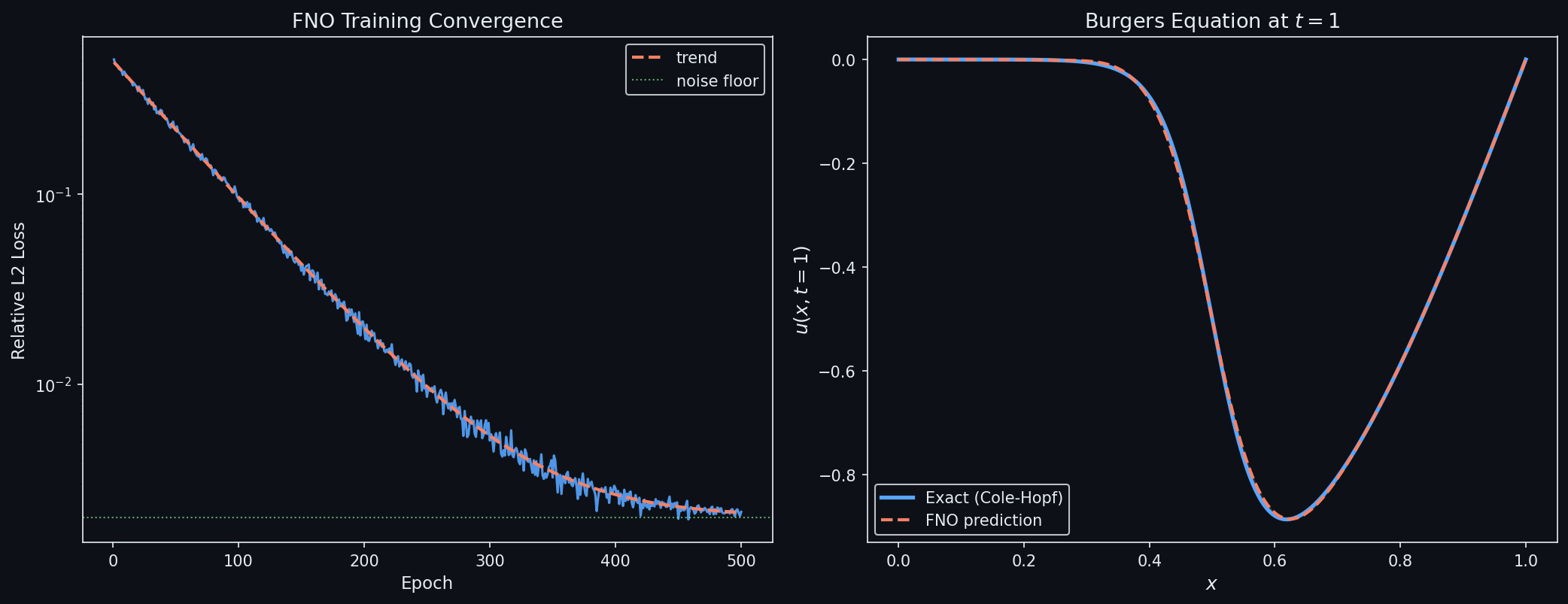

The minimum runnable FNO in PyTorch fits in a single file. The two non-obvious pieces are (a) the spectral multiplication done with torch.einsum so the code generalises to multiple channels, and (b) the residual W v branch implemented as a $1\times 1$

convolution.

| |

The training loop is a standard supervised regression on $(u_0, u_T)$ pairs generated offline by any reliable solver:

| |

Reference performance on Burgers ($\nu=10^{-2}$ , $N=256$ , $1{,}000$ training instances): validation relative $L^2$ error around $1\%$ , training time on a single A100 in the order of minutes. Compare with a PINN that takes minutes per instance and you immediately see the operator’s economic advantage.

What the theory actually guarantees (and where it doesn’t)#

The cleanest results are about FNO and were established by Kovachki and collaborators.

Approximation (Kovachki et al., 2021). For any continuous operator $\mathcal{G}: H^s(\mathbb{T}^d)\to H^{s'}(\mathbb{T}^d)$ on a torus and any compact $K\subset H^s$ , and any $\varepsilon>0$ , there exists an FNO $\mathcal{G}_\theta$ with finite width and depth such that $\sup_{u\in K}\|\mathcal{G}(u)-\mathcal{G}_\theta(u)\|_{H^{s'}} < \varepsilon$ .

$$\mathbb{E}\,\|\mathcal{G}-\mathcal{G}_\theta\|^2 \;\lesssim\; \underbrace{p^{-2\alpha}}_{\text{approx}} \;+\; \underbrace{\frac{p}{N}}_{\text{stat}} \;+\; \underbrace{\sigma^2}_{\text{noise}},$$for an operator with smoothness exponent $\alpha$ . Choosing $p\sim N^{1/(2\alpha+1)}$ balances approximation against statistics.

What is not guaranteed in the same generality:

- Convergence in the strong operator norm uniformly over unbounded sets.

- Stability under distribution shift in the input (extrapolation in $\nu$ , in geometry, in forcing amplitude).

- Behaviour at sharp fronts and shocks where the Sobolev embedding fails.

Each of these is an active research front. In practice you should always treat a deployed neural operator as a fast surrogate whose extrapolation must be sanity-checked against an occasional reference solve.

Data requirements and error decomposition. A common question: “how much training data does a neural operator need?” The answer depends on three competing error terms:

$$\text{Total error} = \underbrace{\epsilon_{\text{approx}}}_{\text{architecture}} + \underbrace{\epsilon_{\text{stat}}}_{\text{finite data}} + \underbrace{\epsilon_{\text{opt}}}_{\text{training}}$$- Approximation error $\epsilon_{\text{approx}}$ : how well the architecture class (FNO with $k_{\max}$ modes, DeepONet with $p$ basis functions) can represent the true operator. Decreases with model capacity.

- Statistical error $\epsilon_{\text{stat}}$ : overfitting due to finite training data. Scales as $O(1/\sqrt{N_{\text{train}}})$ for well-behaved operators.

- Optimisation error $\epsilon_{\text{opt}}$ : gap between the best model in the class and what SGD actually finds.

| |

Rule of thumb for practitioners: for 1D PDEs with smooth solutions, $N_{\text{train}} \approx 1000$ gives $\sim$ 1% relative error. For 2D, multiply by 5-10x. For turbulent or multi-scale solutions, multiply by another 10x. If you’re above 5% error with 1000 samples, the bottleneck is likely the architecture (too few modes, too small hidden dim), not the data.

Limits, failure modes, and what to do about them#

Aliasing on non-periodic domains. FNO assumes periodicity. On non-periodic problems either pad and window the input, or switch to a non-Fourier basis (Spectral Neural Operator with Chebyshev or Legendre, Tran et al. 2022).

Long-horizon roll-outs. Auto-regressive prediction $u_{t+\Delta t} = \mathcal{G}_\theta(u_t)$ accumulates error. Mitigations: train on multi-step roll-outs from the start, add a stability penalty, or interleave with a coarse PDE step (hybrid solvers).

Out-of-distribution coefficients. A model trained on $\nu \in [10^{-2}, 10^{-1}]$ extrapolates poorly to $\nu = 10^{-4}$ . Fix: include a parameter token in the input (as in conditional FNO / Unisolver) and sample $\nu$ over a wide log-uniform range during training.

Shocks and discontinuities. Sobolev-style smoothness fails. Use entropy-stable formulations of the loss, residual training in conservative variables, or hybrid solvers that fall back to a Godunov step near detected fronts.

Complex geometry. FNO is grid-bound. Options: Geo-FNO (learn a deformation to a reference torus), graph neural operators (Brandstetter et al., 2022) for unstructured meshes, or DeepONet with mesh-free trunks.

Summary#

A neural operator is the right tool when the same PDE has to be solved many times across a family of inputs. Two architectures cover most of the design space:

- DeepONet factorises the operator into a branch (input function) and a trunk (query coordinate). It inherits the Chen–Chen universal approximation theorem and is naturally flexible across geometries and output queries. Its weakness is that paired training data is mandatory and its accuracy is slightly behind FNO on translation-invariant problems.

- FNO does a learnable global convolution in the Fourier domain. It is the fastest at inference, the most natural fit to translation-invariant PDEs, and the cleanest theoretical object — at the cost of needing a structured grid and a (usually periodic) domain.

Both architectures are resolution invariant in the sense that the same trained weights evaluate at any grid density up to the modes they have seen. Both train once and amortise the cost over an entire family of PDE instances. Both push the modelling burden away from per-instance optimisation and toward a single careful training curriculum.

The honest summary of the field today is that neural operators have moved from “interesting research idea” to “a credible component in a scientific computing stack” — particularly for forecasting (FourCastNet, GraphCast), uncertainty quantification, and design optimisation, where the same governing PDE is queried thousands of times. Their open frontiers are extrapolation, complex geometry, multi-physics coupling, and rigorous error control under distribution shift.

References#

- Li, Z., Kovachki, N., Azizzadenesheli, K., Liu, B., Bhattacharya, K., Stuart, A., & Anandkumar, A. (2020). Fourier Neural Operator for Parametric Partial Differential Equations . ICLR 2021.

- Lu, L., Jin, P., Pang, G., Zhang, Z., & Karniadakis, G. E. (2019). Learning nonlinear operators via DeepONet based on the universal approximation theorem of operators . Nature Machine Intelligence 3(3), 218–229.

- Chen, T., & Chen, H. (1995). Universal approximation to nonlinear operators by neural networks with arbitrary activation functions and its application to dynamical systems . IEEE Transactions on Neural Networks 6(4), 911–917.

- Kovachki, N., Li, Z., Liu, B., Azizzadenesheli, K., Bhattacharya, K., Stuart, A., & Anandkumar, A. (2023). Neural Operator: Learning Maps Between Function Spaces with Applications to PDEs . Journal of Machine Learning Research 24, 1–97.

- Lanthaler, S., Mishra, S., & Karniadakis, G. E. (2022). Error estimates for DeepONets: A deep learning framework in infinite dimensions . Transactions of Mathematics and Its Applications.

- Wang, S., Wang, H., & Perdikaris, P. (2021). Learning the solution operator of parametric partial differential equations with physics-informed DeepONets . Science Advances 7(40).

- Pathak, J., Subramanian, S., Harrington, P., et al. (2022). FourCastNet: A Global Data-driven High-resolution Weather Model using Adaptive Fourier Neural Operators . arXiv:2202.11214 .

- Bonev, B., Kurth, T., Hundt, C., Pathak, J., Baust, M., Kashinath, K., & Anandkumar, A. (2023). Spherical Fourier Neural Operators: Learning Stable Dynamics on the Sphere . ICML 2023.

- Fanaskov, V., & Oseledets, I. (2022). Spectral Neural Operators . arXiv:2205.10573 .

- Brandstetter, J., Worrall, D., & Welling, M. (2022). Message Passing Neural PDE Solvers . ICLR 2022.

- Wen, G., Li, Z., Long, Q., Azizzadenesheli, K., Anandkumar, A., & Benson, S. M. (2022). U-FNO: An enhanced Fourier neural operator-based deep-learning model for multiphase flow . Advances in Water Resources.

PDE and Machine Learning 8 parts

- 01 PDE and ML (1): Physics-Informed Neural Networks

- 02 PDE and ML (2): Neural Operator Theory you are here

- 03 PDE and ML (3): Variational Principles and Optimization

- 04 PDE and ML (4): Variational Inference and the Fokker-Planck Equation

- 05 PDE and ML (5): Symplectic Geometry and Structure-Preserving Networks

- 06 PDE and ML (6): Continuous Normalizing Flows and Neural ODE

- 07 PDE and ML (7): Diffusion Models and Score Matching

- 08 PDE and ML (8): Reaction-Diffusion Systems and Graph Neural Networks