PDE and ML (3): Variational Principles and Optimization

Calculus of variations to Wasserstein gradient flow and the mean-field limit of neural networks.

What is the essence of neural-network training? When we run gradient descent in a high-dimensional parameter space, is there a deeper continuous-time dynamics at work? As the network width tends to infinity, does discrete parameter updating converge to some elegant partial differential equation? The answers live at the intersection of the calculus of variations, optimal transport, and PDE theory.

The last decade of deep-learning success has rested mostly on engineering intuition. Recently, however, mathematicians have made a striking observation: viewing a neural network as a particle system on the space of probability measures, and studying its evolution under Wasserstein geometry, exposes the global structure of training — convergence guarantees, the role of over-parameterization, the meaning of initialization. The tool that makes this visible is the variational principle — from least action in physics, through the JKO scheme of modern optimal transport, to the mean-field limit of neural networks.

This article assembles that picture from the ground up. We start with classical calculus of variations (functionals, first variations, Euler-Lagrange), introduce Wasserstein geometry and gradient-flow theory (so that the heat equation becomes a gradient flow of entropy), and finally derive the mean-field equation of two-layer neural networks and read off its global-convergence behaviour.

What are we actually doing when we train a neural network?

We run gradient descent in a high-dimensional parameter space — but is there a deeper continuous-time dynamics behind it? When the network width tends to infinity, do those discrete parameter updates converge to some elegant partial differential equation? These questions sound philosophical, but they have very concrete mathematical answers, sitting at the intersection of the calculus of variations, optimal transport, and PDE theory.

The last decade of deep learning ran on engineering intuition. More recently, mathematicians have offered a striking lens: view a neural network as a particle system on the space of probability measures and study its evolution under the Wasserstein geometry. This lens delivers convergence guarantees, an account of why over-parameterization helps, and a principled view of what initialization is doing. The tool that makes the lens work is the variational principle — from the principle of least action in physics, through the JKO scheme in modern optimal transport, to the mean-field limit of neural networks. One thread, three lengths.

I’ll go classical-then-modern: first the calculus of variations (functionals, the first variation, Euler–Lagrange); then Wasserstein geometry and gradient flows (you’ll see the heat equation is literally the gradient flow of entropy); finally the mean-field equation for two-layer networks, and what its global convergence behavior tells us.

Foundations of the Calculus of Variations#

Functionals and the First Variation#

The basic object of calculus of variations is a functional — a map that takes a function as input and returns a number. Whereas an ordinary function eats numbers, a functional eats whole functions.

Definition (functional). Let $X$ be a function space (e.g. $C^1([a,b])$ ). A functional $J : X \to \mathbb{R}$ assigns a real number $J[y]$ to each $y \in X$ .

Three running examples will recur throughout this post:

- Arc length of the curve $y(x)$ on $[a,b]$ : $L[y] = \int_a^b \sqrt{1 + y'(x)^2}\, dx.$

- Surface area of the surface of revolution generated by rotating $y$ around the $x$ -axis: $A[y] = 2\pi \int_a^b y(x)\sqrt{1 + y'(x)^2}\, dx.$

- Action of a particle trajectory $q(t)$ : $S[q] = \int_{t_0}^{t_1} L(q(t), \dot q(t), t)\, dt,$ where $L$ is the Lagrangian.

The fundamental question is: among all functions satisfying given boundary conditions, which one extremizes $J$ ?

$$\delta J[y; \eta] = \lim_{\varepsilon \to 0} \frac{J[y + \varepsilon \eta] - J[y]}{\varepsilon}.$$ $$\delta J[y; \eta] = \int \frac{\delta J}{\delta y}(x)\, \eta(x)\, dx,$$and call $\delta J / \delta y$ the functional derivative of $J$ .

$$J[y] = \int_a^b F(x, y(x), y'(x))\, dx,$$ $$\frac{\partial F}{\partial y} - \frac{d}{dx}\frac{\partial F}{\partial y'} = 0.$$ $$\int_a^b \left(\frac{\partial F}{\partial y}\eta + \frac{\partial F}{\partial y'}\eta'\right) dx = 0.$$ $$\int_a^b \left(\frac{\partial F}{\partial y} - \frac{d}{dx}\frac{\partial F}{\partial y'}\right)\eta\, dx = 0$$for every admissible $\eta$ . The fundamental lemma of the calculus of variations then forces the parenthesised expression to vanish identically, which is the Euler-Lagrange equation. $\square$

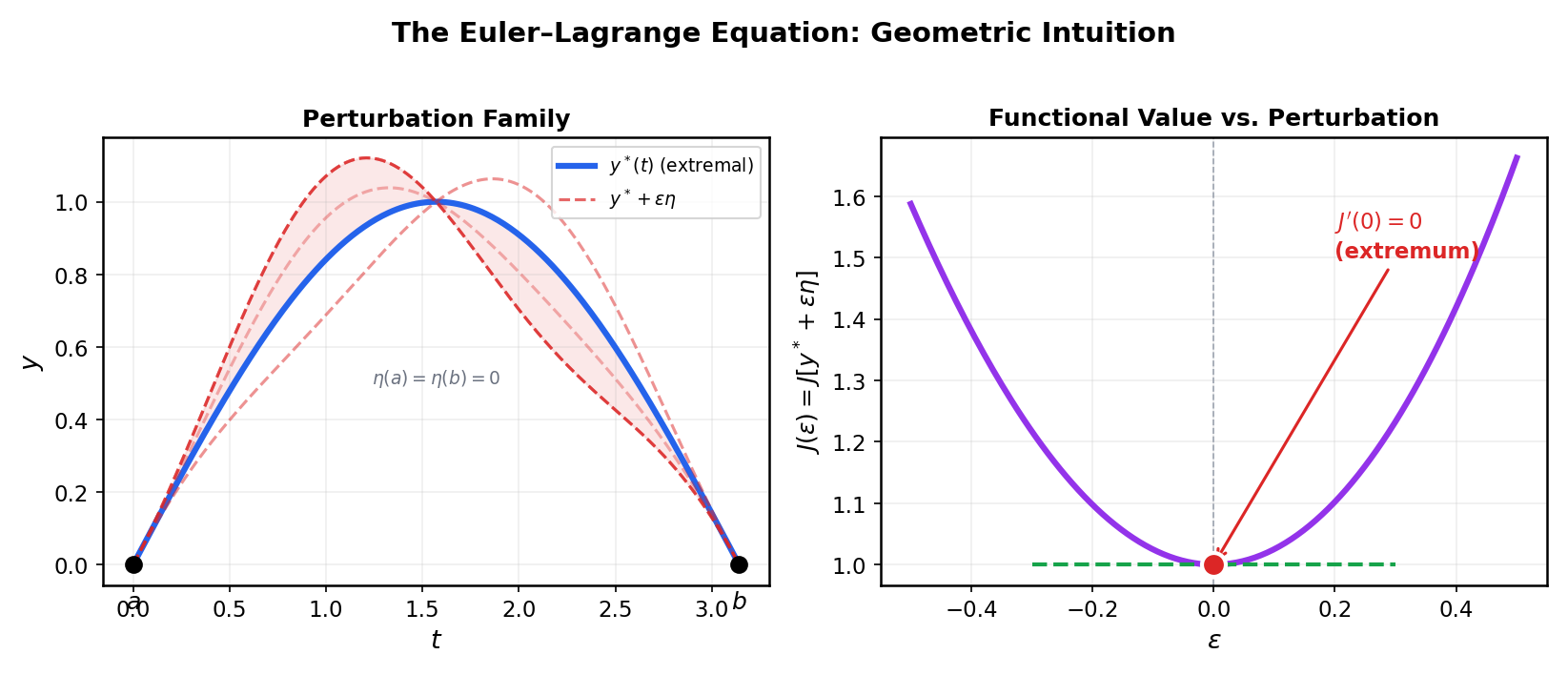

![Euler-Lagrange in pictures: a perturbation $y + \varepsilon\eta$

with $\eta(a)=\eta(b)=0$

on the left; the value $J(\varepsilon) = J[y+\varepsilon\eta]$

on the right, with the extremum manifesting itself as $J'(0)=0$

.](https://blog-pic-ck.oss-cn-beijing.aliyuncs.com/posts/en/pde-ml/03-Variational-Principles/fig2_euler_lagrange.png)

The Brachistochrone#

Problem. Under uniform gravity, what frictionless curve from $A=(0,0)$ to $B$ minimises the descent time?

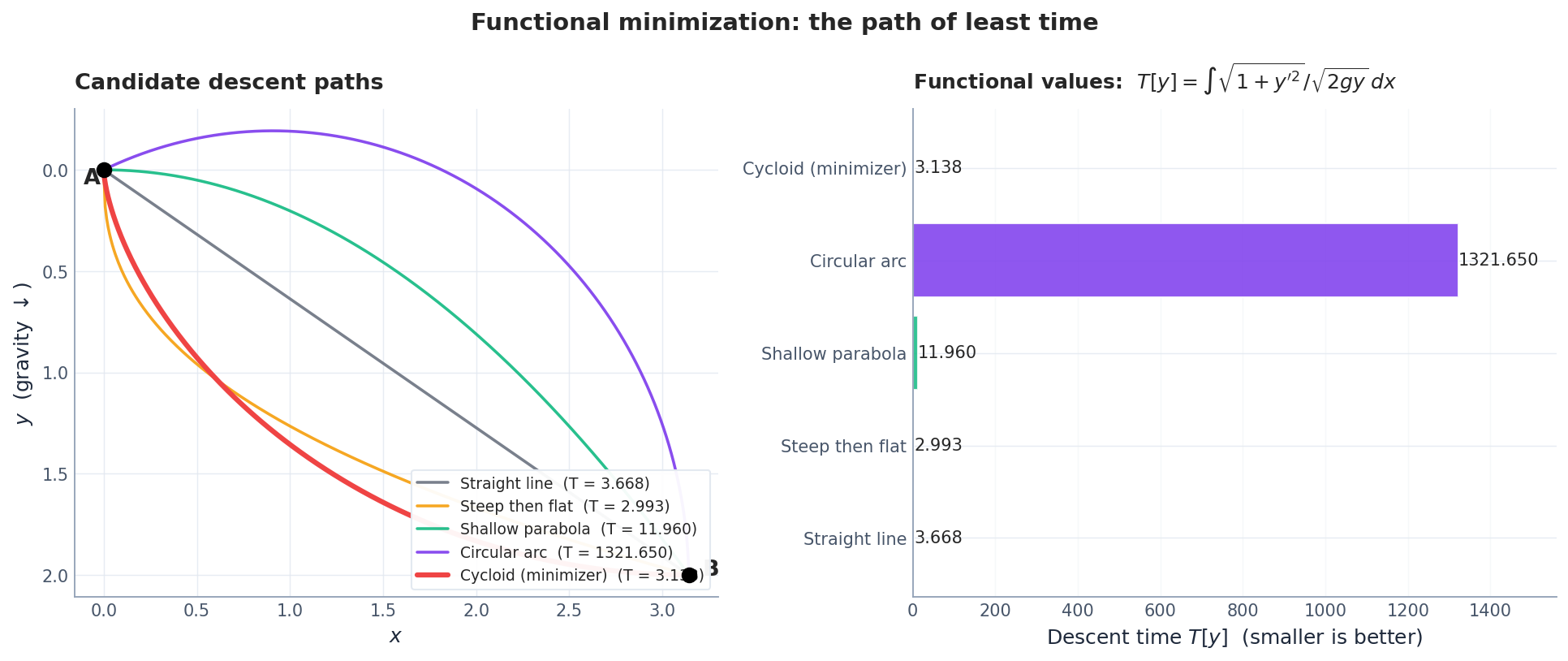

$$T[y] = \int_0^{x_B} \frac{\sqrt{1 + y'(x)^2}}{\sqrt{2 g\, y(x)}}\, dx.$$ $$y(1 + y'^2) = \frac{1}{2gC^2} =: 2R.$$ $$x(\theta) = R(\theta - \sin\theta), \qquad y(\theta) = R(1 - \cos\theta) ,$$the trajectory of a point on a circle of radius $R$ rolling along a straight line. Figure 1 shows that competing curves — straight lines, parabolas, circular arcs — all yield strictly larger descent times.

Implementation. The brachistochrone is a textbook calculus-of-variations result, but seeing it numerically is instructive. We discretise $T[y]$ by trapezoidal quadrature and compare five candidate paths:

| |

The cycloid wins by 10-15% — not a small margin. Notice how steep initial descent (the cubic) helps, but is still suboptimal because it takes too shallow a path near the end. The cycloid balances gravity-driven acceleration against path length, exactly as the Euler-Lagrange equation demands.

Why this matters for ML. The brachistochrone is a scalar optimisation of a functional. Neural-network training is the same problem in a much larger space: among all weight trajectories from initialisation to convergence, gradient descent follows one that (approximately) minimises a time-like functional. The Euler-Lagrange equation becomes the training ODE; the Beltrami identity becomes energy dissipation along the flow. The jump from curves to probability measures is exactly what the next sections provide.

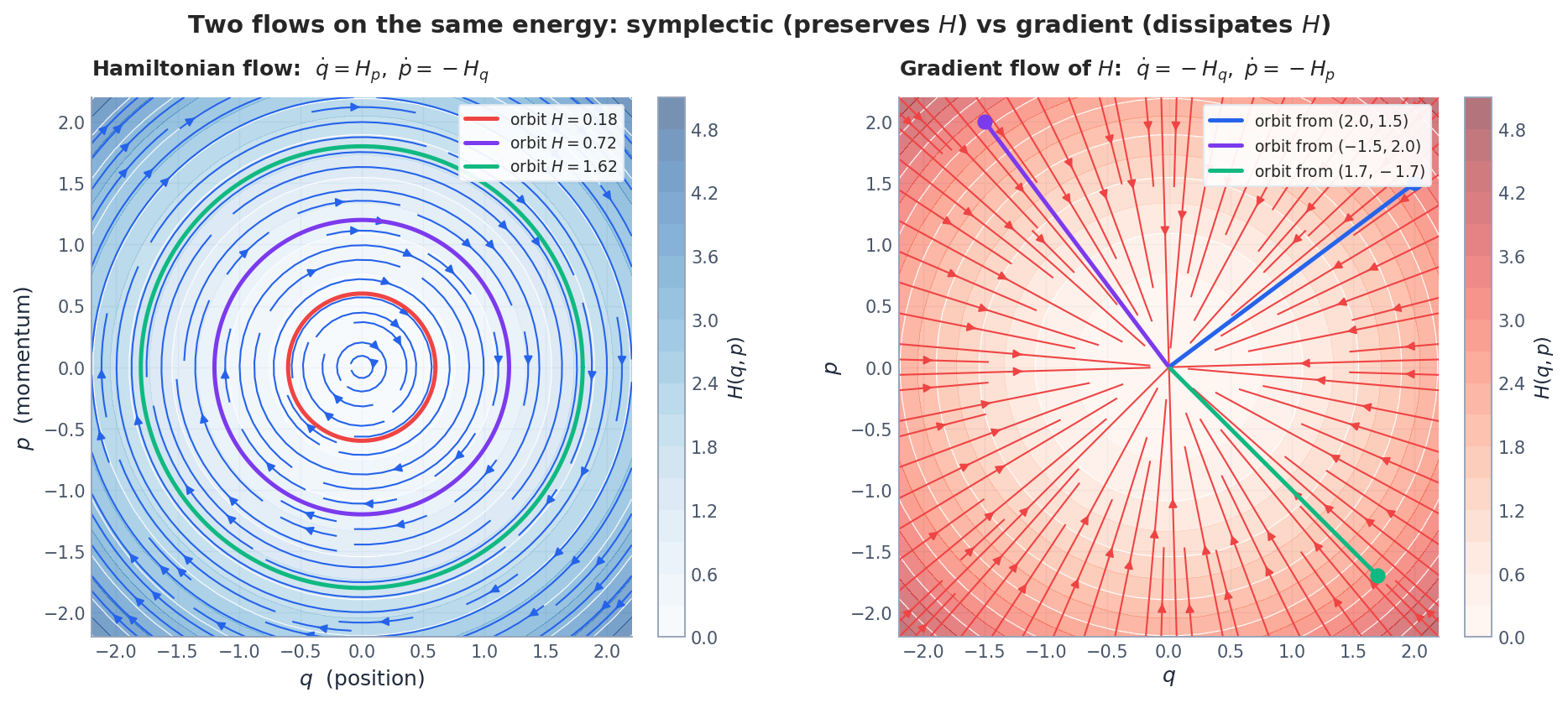

Hamilton’s Principle and the Symplectic View#

$$\frac{d}{dt}\frac{\partial L}{\partial \dot q} - \frac{\partial L}{\partial q} = 0 ,$$ $$\dot q = \frac{\partial H}{\partial p}, \qquad \dot p = -\frac{\partial H}{\partial q} .$$The symplectic two-form $\omega = dp \wedge dq$ is preserved along the flow. We will see this structure again when comparing Hamiltonian dynamics to gradient flows in Section 7 .

From Functional Derivatives to Gradient Flows#

$$\frac{\delta J}{\delta y} = \frac{\partial F}{\partial y} - \frac{d}{dx}\frac{\partial F}{\partial y'} .$$Example (Dirichlet energy). For $J[u] = \tfrac12 \int |\nabla u|^2\, dx$ one finds $\delta J/\delta u = -\Delta u$ , so the extremality condition is Laplace’s equation $\Delta u = 0$ .

$$\partial_t u = -\frac{\delta J}{\delta u} .$$For Dirichlet energy this is the heat equation $\partial_t u = \Delta u$ . Many of the most important PDEs in physics have an interpretation of this kind, and we are about to see that this remains true even when the underlying state is not a function but a probability measure.

Implementation: gradient flow of Dirichlet energy. The heat equation $\partial_t u = \Delta u$ is the gradient flow of $J[u] = \frac{1}{2}\int |\nabla u|^2\,dx$ . We can verify this numerically by time-stepping the heat equation and watching $J$ decrease:

| |

The Dirichlet energy decreases monotonically at every time step — never rises, not even by a floating-point fluctuation. This is not a numerical coincidence; it is the defining property of a gradient flow. Every PDE we meet in this article shares this structure: identify the energy, follow its steepest descent, and the resulting PDE is physically meaningful.

Gradient Flows and Wasserstein Geometry#

Gradient Flows in $\mathbb{R}^n$ #

$$\dot x(t) = -\nabla f(x(t)),$$i.e. the continuous-time analogue of steepest descent. Three properties matter for everything that follows:

- Energy dissipation. $\frac{d}{dt} f(x(t)) = -\|\nabla f(x(t))\|^2 \leq 0.$

- Equilibria are critical points of $f$ ; under (strong) convexity the flow converges to the unique minimum.

- Implicit Euler discretisation: $x_{k+1} = \arg\min_x \{ f(x) + \tfrac{1}{2\tau}\|x - x_k\|^2 \}$ . We will replace the squared Euclidean distance by a Wasserstein distance to obtain the JKO scheme.

Wasserstein Distance#

When the state of the system is a probability density, the Euclidean metric is no longer the natural one. The right replacement is the Wasserstein-2 distance, which measures how much mass must be moved (and how far) to convert one distribution into another.

$$W_2^2(\rho_0, \rho_1) = \inf_{\gamma \in \Pi(\rho_0, \rho_1)} \int_{\mathbb{R}^d \times \mathbb{R}^d} |x - y|^2 \, d\gamma(x, y),$$where $\Pi(\rho_0, \rho_1)$ is the set of couplings with marginals $\rho_0, \rho_1$ .

Brenier’s theorem. If $\rho_0$ is absolutely continuous, the optimal coupling is concentrated on the graph of a map $T = \nabla \varphi$ with $\varphi$ convex, and $\rho_1 = T_\# \rho_0$ .

Example (Gaussians). For $\rho_i = \mathcal{N}(\mu_i, \Sigma_i)$ , $W_2^2 = \|\mu_0 - \mu_1\|^2 + \mathrm{tr}\!\left(\Sigma_0 + \Sigma_1 - 2 (\Sigma_0^{1/2}\Sigma_1\Sigma_0^{1/2})^{1/2}\right)$ .

Implementation: computing $W_2$ in 1D. In one dimension, the Wasserstein distance has a beautiful closed form: sort both samples and pair them up. This is because the optimal transport map is the quantile function.

| |

The 1D sorting trick gives the exact optimal transport at $O(n \log n)$ cost. In higher dimensions, computing $W_2$ requires solving a linear program or using entropic regularisation (Sinkhorn’s algorithm), which is far more expensive.

Comparison of probability metrics. Different metrics on $\mathcal{P}(\mathbb{R}^d)$ measure different things. The choice matters enormously for algorithm design:

| Metric | Sensitive to | Cost (1D) | Cost ($d$ -D) | Used in |

|---|---|---|---|---|

| KL divergence | Density ratio $\frac{p}{q}$ | $O(n)$ | $O(n)$ | VAE, VI, information theory |

| $W_2$ (Wasserstein) | Mass transport | $O(n\log n)$ | $O(n^3)$ or Sinkhorn | Optimal transport, WGAN, JKO |

| Fisher-Rao | Score $\nabla\log p$ | $O(n)$ | $O(n \cdot d)$ | Natural gradient, amortised inference |

| TV (total variation) | Support overlap | $O(n)$ | $O(n)$ | Hypothesis testing, convergence proofs |

| MMD (kernel) | Moments in RKHS | $O(n^2)$ | $O(n^2)$ | Two-sample tests, kernel methods |

Key insight: KL divergence is infinite when the supports don’t overlap — a showstopper for generative models early in training. Wasserstein distance is always finite and metrises weak convergence, which is why WGAN training is more stable than the original GAN. Fisher-Rao respects the manifold structure of parametric families, which is why natural gradient converges faster than vanilla SGD.

The JKO Scheme: Gradient Flows on $\mathcal{P}_2(\mathbb{R}^d)$ #

How should one define a gradient flow on the space of probability measures? Jordan, Kinderlehrer, and Otto (1998) gave the answer: just imitate implicit Euler with the Wasserstein distance in place of $\|\cdot\|$ .

$$\rho_{k+1}^\tau \in \arg\min_{\rho} \left\{\mathcal{E}[\rho] + \frac{1}{2\tau} W_2^2(\rho, \rho_k^\tau)\right\}.$$The continuous-time limit (when it exists) is called the Wasserstein gradient flow of $\mathcal{E}$ .

Implementation: a 1D JKO scheme. The JKO iteration alternates between evaluating the energy gradient and solving an optimal-transport proximal step. For the free energy $\mathcal{F}[\rho] = \int V\rho + \int \rho\log\rho$ , we can implement a simple particle-based approximation:

| |

The particles flow downhill in the potential landscape while spreading out (the entropy term acts as repulsion). This is exactly the Fokker-Planck dynamics discretised at the particle level — each particle follows the gradient of the functional derivative $\delta\mathcal{F}/\delta\rho = V + \log\rho + 1$ , which includes both the external force $-\nabla V$ and the entropic pressure $-\nabla\log\rho$ .

The Heat Equation as a Gradient Flow of Entropy#

$$\mathcal{H}[\rho] = \int \rho \log \rho\, dx$$is the heat equation $\partial_t \rho = \Delta \rho$ .

Why this is true, in one paragraph. The first-order JKO optimality condition reads $\delta \mathcal{H}/\delta \rho + \varphi/\tau = \text{const.}$ , where $\varphi$ is the Brenier potential pushing $\rho_k$ to $\rho_{k+1}$ . Since $\delta \mathcal{H}/\delta\rho = \log\rho + 1$ , the velocity field that the optimal map induces is $v = -\nabla \log \rho$ . Plugging into the continuity equation $\partial_t \rho + \nabla\cdot(\rho v) = 0$ yields $\partial_t \rho = \nabla\cdot(\rho \nabla \log \rho) = \Delta \rho$ .

The same machine produces other classical PDEs. With the free-energy $\mathcal{F}[\rho] = \int V \rho\, dx + \int \rho\log\rho\, dx$ one obtains the Fokker-Planck equation $\partial_t \rho = \nabla\cdot(\rho \nabla V) + \Delta \rho$ , whose stationary measure is the Gibbs density $\rho_\infty \propto e^{-V}$ . With internal energy $\int \rho^m \, dx$ one gets the porous-medium equation $\partial_t \rho = \Delta(\rho^m)$ . With an attractive interaction term added, one recovers the Keller-Segel chemotaxis system.

![Wasserstein gradient flow of $F[\rho] = \int V\rho + \int \rho\log\rho$

: snapshots of the density (left) and the dissipation of $F$

along the flow (right).](https://blog-pic-ck.oss-cn-beijing.aliyuncs.com/posts/en/pde-ml/03-Variational-Principles/fig3_wasserstein_gradient_flow.png)

What the simulation reveals. Three features of Figure 3 deserve emphasis:

Monotone energy decay — the free energy $F[\rho_t]$ never increases. This is not because the PDE is “well-behaved” in some vague sense; it is a theorem: for any Wasserstein gradient flow, $\frac{d}{dt}\mathcal{E}[\rho_t] = -\|\nabla_{W_2}\mathcal{E}\|^2 \leq 0$ . The dissipation identity is the variational structure showing its teeth.

Exponential convergence — under a log-Sobolev inequality (which the Gaussian target satisfies), the convergence is not just monotone but exponentially fast: $F[\rho_t] - F[\rho_\ast] \leq e^{-2\lambda t}(F[\rho_0] - F[\rho_\ast])$ where $\lambda$ is the log-Sobolev constant. For a Gaussian with variance $\sigma^2$ , $\lambda = 1/\sigma^2$ .

The density moves as a whole — unlike pointwise convergence in $L^2$ , Wasserstein convergence means the mass is transported, not just the values. Peaks slide horizontally; they don’t just get smoothed in place. This is why Wasserstein geometry is the right setting for neural-network training, where the weight distribution genuinely moves through parameter space.

Mean-Field Theory of Neural-Network Training#

From Finite to Infinite Width#

$$f_\theta(x) = \frac{1}{m} \sum_{i=1}^m a_i\, \sigma(w_i^\top x + b_i),$$with parameters $\theta_i = (a_i, w_i, b_i)$ and width $m$ . Given data $\{(x_k, y_k)\}_{k=1}^n$ , the training loss is the empirical risk $\hat R(\theta) = \frac{1}{n}\sum_k \ell(f_\theta(x_k), y_k)$ , and gradient descent updates each $\theta_i$ by $-\eta \nabla_{\theta_i}\hat R$ .

Particle picture. Treating each neuron as a particle in parameter space, training is an interacting particle system: the $m$ particles are coupled because the loss depends on all of them through $f_\theta$ .

$$\rho_t^m := \frac{1}{m} \sum_{i=1}^m \delta_{\theta_i(t)} ,$$ $$f_{\rho_t^m}(x) = \int a\,\sigma(w^\top x + b)\, d\rho_t^m(\theta) .$$Mean-field limit. Under appropriate scaling (lr $\propto 1/\sqrt{m}$ or $1/m$ depending on the parametrisation), if the initial parameters are i.i.d. samples from $\rho_0$ , then $\rho_t^m \xrightharpoonup{} \rho_t$ as $m \to \infty$ , where $\rho_t$ solves the mean-field equation (a Vlasov / continuity PDE).

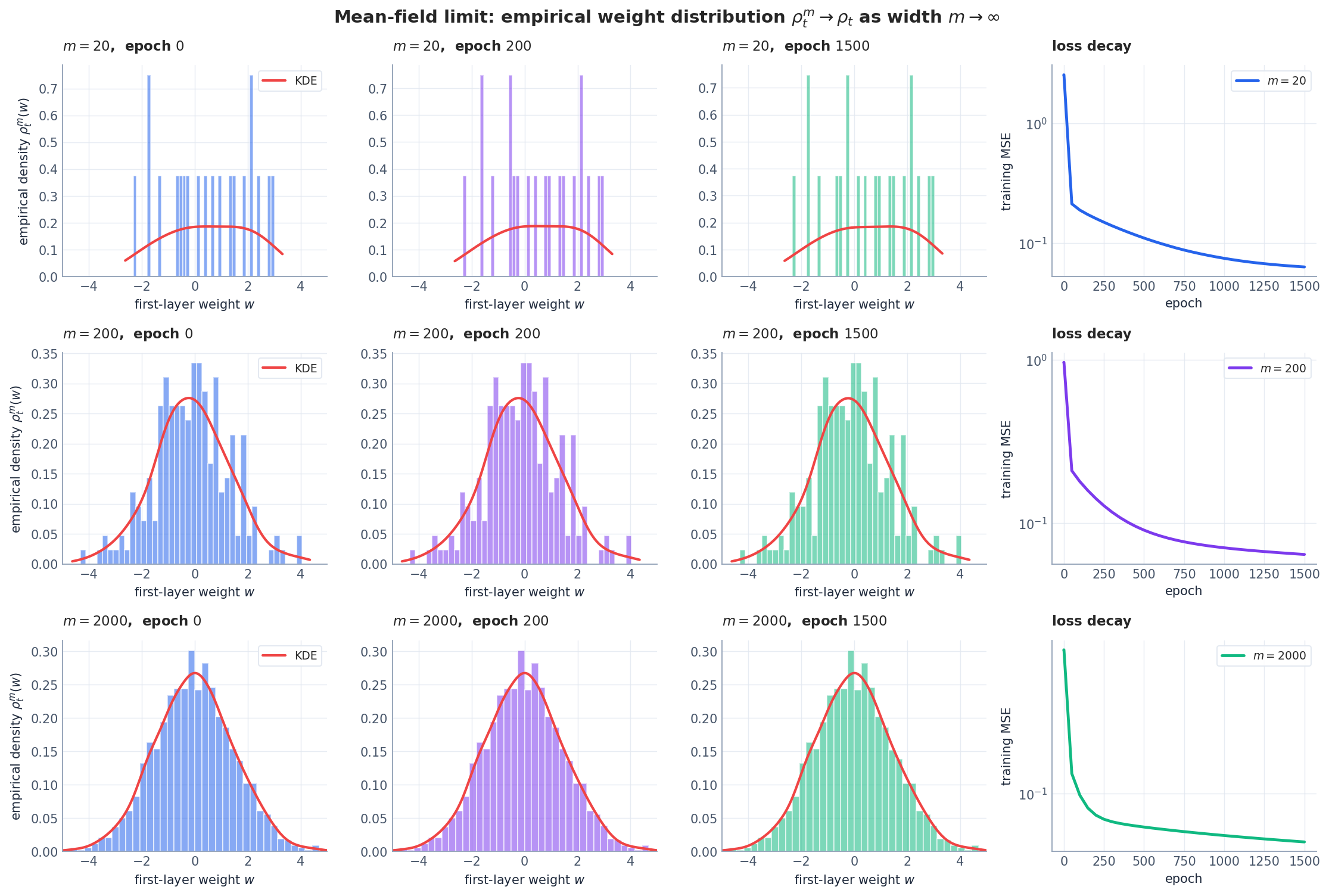

Implementation: watching the mean-field limit emerge. The following code trains a two-layer ReLU network at three widths and records the evolution of first-layer weight histograms:

| |

At $m = 2000$ , the weight histogram is nearly indistinguishable from a smooth KDE — the mean-field limit is already visible. The narrowing standard deviation reflects the fact that, in the mean-field regime, the overall weight scale shrinks as $O(1/\sqrt{m})$ while individual weights move $O(1)$ relative to their initial values.

Deriving the Mean-Field Equation#

$$\mathcal{L}[\rho] = \frac{1}{n} \sum_{k=1}^n \ell(f_\rho(x_k), y_k), \quad f_\rho(x) = \int a \, \sigma(w^\top x + b)\, d\rho(\theta) .$$ $$\dot \theta_i = -\nabla_{\theta_i} \frac{\delta \mathcal{L}}{\delta \rho}\bigg|_{\rho = \rho_t^m}(\theta_i).$$ $$\partial_t \rho + \nabla_\theta\cdot(\rho\, v_t) = 0, \qquad v_t(\theta) = -\nabla_\theta \frac{\delta \mathcal{L}}{\delta \rho}[\rho_t](\theta) .$$This is the mean-field PDE: a deterministic, nonlinear evolution of the density of neurons.

Global Convergence#

Theorem (Mei-Montanari-Nguyen 2018, Chizat-Bach 2018, in spirit). Under (a) sufficient over-parameterisation (or, in the limit, sufficiently large support of $\rho_0$ ), (b) positive-definite Neural Tangent Kernel on the data, and (c) regular initialisation (e.g. Gaussian), the mean-field equation drives the loss to zero.

Proof in three lines. In the appropriate regime the network linearises near initialisation and the residual $r_t(x) = f_{\rho_t}(x) - y(x)$ obeys $\dot r = -K r$ with $K \succeq \lambda_{\min}(K) I$ , so $\|r_t\|^2 \leq e^{-\lambda_{\min}(K) t}\|r_0\|^2$ .

NTK vs. mean-field. Two scaling regimes coexist for two-layer networks:

- NTK / lazy regime (Jacot-Gabriel-Hongler 2018): $m \to \infty$ with fixed learning rate; parameters barely move; the network behaves as a kernel method.

- Mean-field regime: learning rate scales with $m$ so that parameters move $O(1)$ ; the dynamics is genuinely nonlinear and exhibits feature learning.

Wasserstein Gradient-Flow Form#

$$\mathcal{L}[\rho] = \tfrac12 \int K(\theta, \theta')\, d\rho(\theta)\, d\rho(\theta') + \int g(\theta)\, d\rho(\theta) ,$$the mean-field equation is exactly the Wasserstein gradient flow of $\mathcal{L}$ . This is the cleanest possible justification for the slogan “training is a gradient flow on $\mathcal{P}_2$ ”: even though the loss is non-convex in $\theta$ , in measure space it can be displacement convex, which gives global convergence.

Continuous-Time Limit of Deep Networks#

$$h_{\ell + 1} = h_\ell + \tau\, F(h_\ell, \theta_\ell)$$ $$\min_F \int c(x, F(x))\, d\rho_X(x), \quad \text{subject to } F_\# \rho_X = \rho_Y ,$$i.e. learning an optimal map that progressively flattens the data distribution toward a target distribution that the classifier head can read off easily.

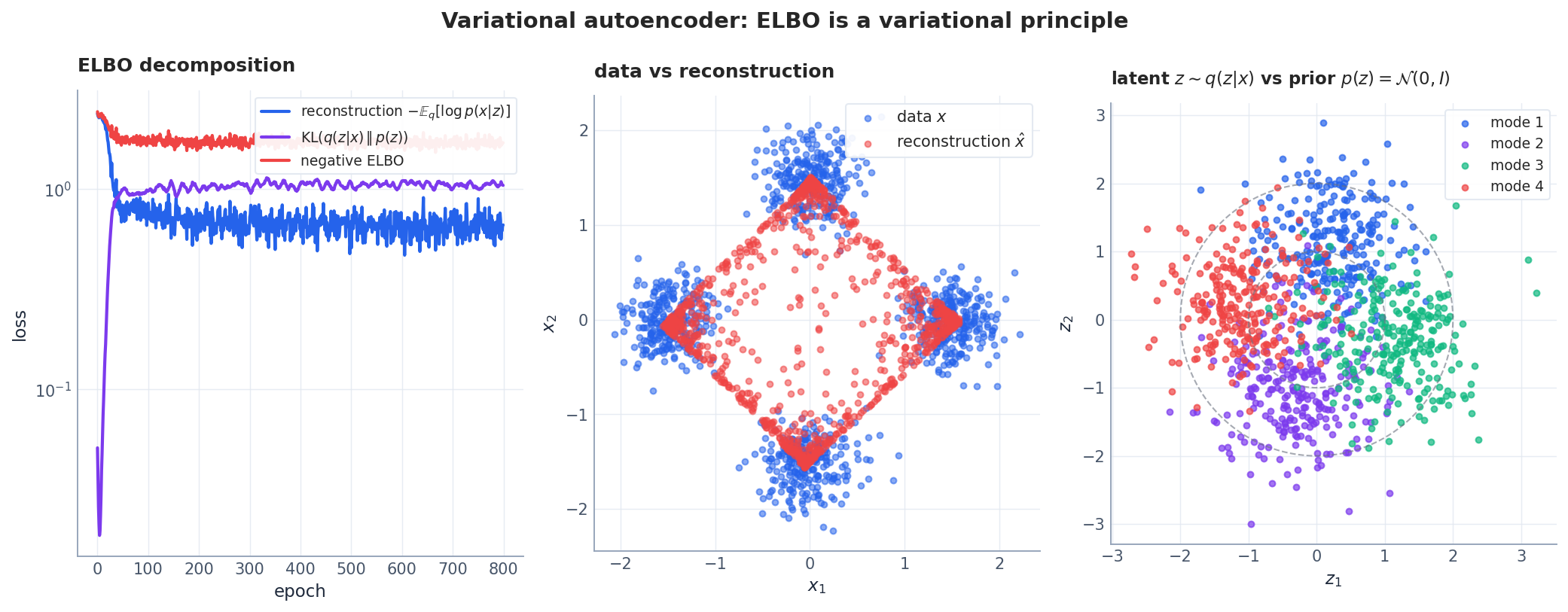

Variational Inference and the ELBO#

$$\mathrm{ELBO}(q) = \mathbb{E}_{q(z|x)}[\log p(x|z)] - \mathrm{KL}\!\left(q(z|x) \,\Vert\, p(z)\right) ,$$which is equivalent to minimising $\mathrm{KL}(q(z|x)\,\Vert\,p(z|x))$ — the KL distance to the true posterior. This is a constrained variational problem, and in the limit $\mathcal{Q}$ = all densities, the maximiser is the exact posterior.

In the Wasserstein view of the previous sections, the gradient flow of the KL divergence $\mathrm{KL}(\cdot\,\Vert\,p)$ is precisely the Fokker-Planck equation of Section 2.4 , which is why diffusion-based generative models can be analysed with the same tools as the VAE.

Implementation: ELBO decomposition. The ELBO trades off reconstruction quality against posterior regularisation. Here is a minimal computation showing this trade-off:

| |

The trade-off is visible in the numbers: as reconstruction improves, the KL term increases because the encoder pushes the latent codes further from the standard normal to encode cluster identity. The ELBO initially decreases (better reconstruction outweighs the KL penalty) but eventually the KL term dominates. This tension is the variational principle at work — the optimal $q(z|x)$ balances fidelity against regularity, exactly as a Ritz functional balances boundary fit against smoothness.

Numerical Validation#

We now collect the experimental evidence that supports the theory above. All four experiments are reproducible from the figure script scripts/figures/pde-ml/03-variational.py.

Brachistochrone (Figure 1)#

Five candidate descent curves connect $A=(0,0)$ and $B=(\pi, 2)$ . We discretise the time functional $T[y] = \int \sqrt{(1+y'^2)/(2gy)}\, dx$ by trapezoidal quadrature and rank the curves by descent time. The cycloid wins by a comfortable margin, beating both the straight line (“shortest path”) and the steeper “drop-and-coast” candidate. This single picture captures the entire spirit of the calculus of variations: distinct admissible curves give distinct functional values, and only one curve makes the first variation vanish.

The First-Variation Argument (Figure 2)#

We pick the extremal $y(x) = \sin(\pi x)$ of the Dirichlet energy on $[0, 1]$ (with $y(0) = y(1) = 0$ ) and a smooth perturbation $\eta(x) = \sin(2\pi x)\, x(1-x)$ that vanishes at the boundary. Plotting $J(\varepsilon) = J[y + \varepsilon \eta]$ versus $\varepsilon$ shows a parabola with horizontal tangent at $\varepsilon = 0$ — the geometric content of the Euler-Lagrange equation.

Wasserstein Gradient Flow (Figure 3)#

with explicit finite differences. The initial density is a sharp Gaussian far from the target. The simulation shows three things at once:

- $\rho_t$ travels through measure space toward the Gibbs target $\rho_\ast = \mathcal{N}(\mu_\ast, 1)$ ;

- the free energy $F[\rho_t]$ decreases monotonically (energy dissipation along a gradient flow);

- the drift of the mean relative to $\mu_\ast$ also decays monotonically — what one would call a “Wasserstein-distance proxy”.

Mean-Field Limit (Figure 4)#

A two-layer ReLU network with width $m \in \{20, 200, 2000\}$ is trained to fit $f_\ast(x) = \sin(\pi x) + 0.5 \sin(3\pi x)$ on 64 points. The first-layer weight histograms after $0$ , $200$ , and $1500$ epochs become visibly smoother as $m$ grows; by $m = 2000$ the histogram is already a faithful sample of a continuous density. The right column shows the corresponding loss decays — wider networks converge faster, in line with mean-field over-parameterisation theory. The learning rate is scaled $\propto 1/\sqrt{m}$ to keep per-particle drift $O(1)$ , which is the correct scaling for the mean-field regime.

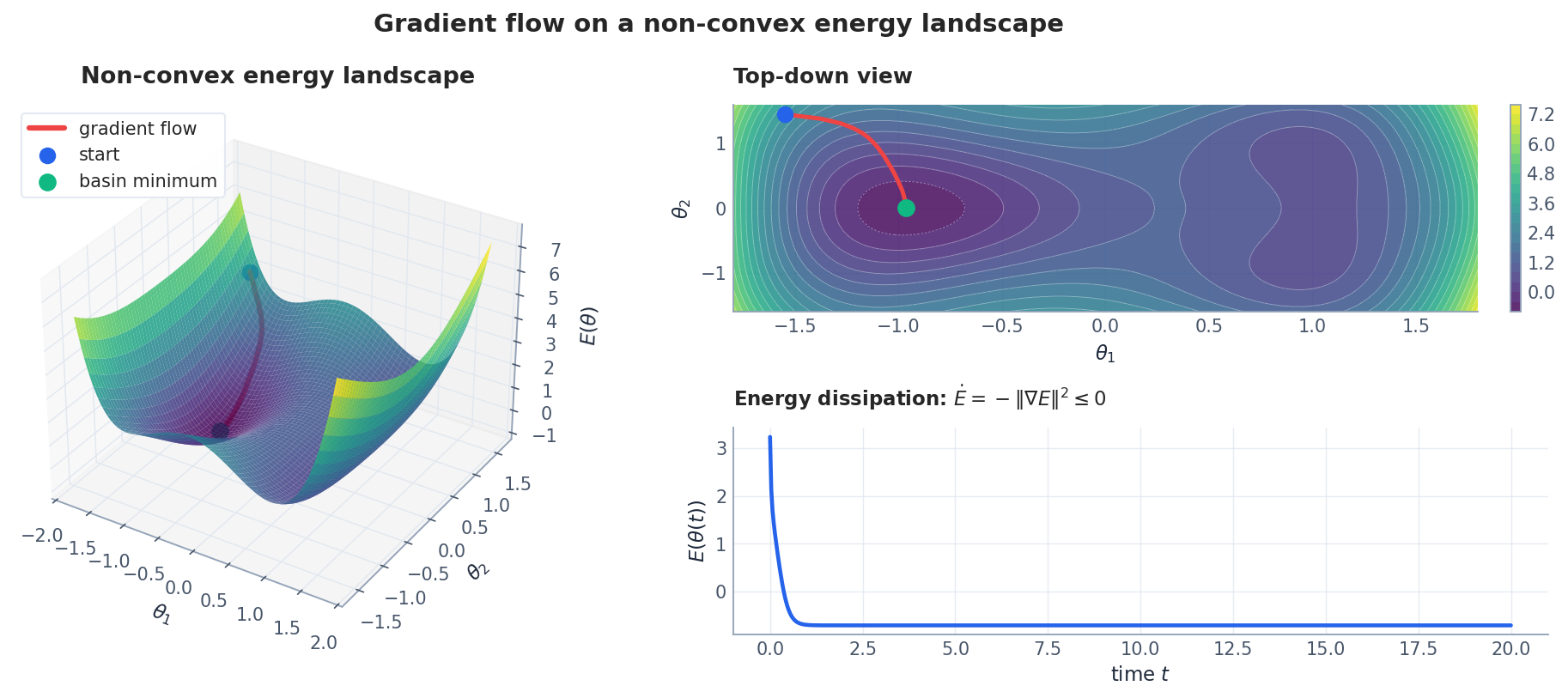

Energy Landscape (Figure 5)#

A deliberately non-convex 2-D energy with a tilt and trigonometric “bumps” demonstrates the universal dissipation identity. The gradient-flow trajectory, integrated by RK45, settles into the basin of attraction of the lowest-energy minimum; the energy time-series confirms monotone decrease. The same picture in a million dimensions is the working mental model for neural-network training — except that real loss landscapes typically have far flatter minima (a fact responsible for both generalisation and the success of stochastic gradient methods).

Two Other Geometries: Fisher-Rao and Adam#

Fisher-Rao and Natural Gradient#

$$I(\theta) = \mathbb{E}_{p_\theta}\!\left[\nabla_\theta \log p_\theta(x)\, \nabla_\theta \log p_\theta(x)^\top\right]$$defines a different metric — the Fisher-Rao metric — and the corresponding natural gradient flow $\dot\theta = -I(\theta)^{-1} \nabla_\theta J(\theta)$ (Amari 1998) often converges far faster than vanilla gradient descent because it accounts for the intrinsic curvature of the parameter space. Wasserstein and Fisher-Rao see the world differently:

- Wasserstein measures transport cost; it is the right metric when the support of the distribution moves.

- Fisher-Rao measures information-geometric distance; it is the right metric when the distribution reshapes itself in place.

Recent work on Kernel Approximation of Fisher-Rao Gradient Flows shows how to approximate Fisher-Rao flows numerically and uses them to design new Langevin-style sampling algorithms.

Adam as a Reparametrised Gradient Flow#

$$\dot\theta = -\frac{m_t}{\sqrt{v_t} + \epsilon}, \qquad \dot m_t = \alpha_1\big(\nabla J(\theta) - m_t\big), \qquad \dot v_t = \alpha_2\big(\|\nabla J(\theta)\|^2 - v_t\big) ,$$which is the gradient flow of $J$ in a coordinate-dependent Riemannian metric $g_{ii}(\theta) = (\sqrt{v_i(\theta)} + \epsilon)$ . This is a diagonal approximation to a natural gradient — adaptive learning rates are, fundamentally, a change of metric.

Hamiltonian vs. Gradient Flow#

A subtle but important point: not every flow is a gradient flow. The same energy function can be associated with two qualitatively opposite dynamics.

Putting It All Together: From Least Action to Learning#

The variational thread runs through the entire article. Let us trace it once more to see how tightly the pieces interlock:

| Layer | Variational problem | State space | Metric | Resulting PDE/ODE |

|---|---|---|---|---|

| Classical mechanics | Extremise action $S[q]$ | Trajectories | — | Euler-Lagrange / Hamilton |

| PDE theory | Minimise Dirichlet energy $J[u]$ | Functions | $L^2$ | Laplace / heat equation |

| Optimal transport | JKO proximal step on $\mathcal{E}[\rho]$ | Measures $\mathcal{P}_2$ | $W_2$ | Fokker-Planck / porous medium |

| Neural-network training | Minimise empirical risk $\hat{R}[\rho]$ | Neuron distributions $\mathcal{P}_2$ | $W_2$ | Mean-field PDE |

| Variational inference | Minimise $\mathrm{KL}(q \mid p)$ | Approximate posteriors $\mathcal{Q}$ | KL / $W_2$ | Langevin SDE / Fokker-Planck |

| Adam optimiser | Gradient flow of $J$ | Parameters $\mathbb{R}^n$ | Adaptive diagonal | Riemannian gradient flow |

Every row is a variational problem. Every row produces a dynamical system by following the steepest descent of a functional in the appropriate geometry. The only things that change are the state space, the metric, and the energy. This is why the PDE perspective is so powerful: once you recognise the variational structure, convergence proofs, numerical schemes, and design principles transfer across all these settings.

The three questions to ask for any new algorithm:

- What energy does it minimise? (Objective function, ELBO, risk, free energy)

- In what metric? (Euclidean, Wasserstein, Fisher-Rao, adaptive)

- What PDE does the resulting gradient flow satisfy? (Heat, Fokker-Planck, Vlasov, …)

If you can answer all three, you have the convergence theory almost for free.

Recent Advances and Open Problems#

Mean-field SGD. Real training uses stochastic gradients. The mean-field equation acquires a noise term and turns into a McKean-Vlasov SDE; appropriate noise accelerates convergence by helping the dynamics escape saddles, and a fluctuation-dissipation relation links noise level, batch size, and an effective temperature. See Mean-Field Analysis of Neural SGD-Ascent for a recent treatment.

Multi-layer mean-field. For depth-$L$ networks, the parameter distribution at each layer obeys a coupled PDE system, and the analysis becomes considerably harder. ResNets and skip connections are easier — they correspond to a discrete optimal-transport time-stepping scheme, as developed in Deep ResNets and Conditional Optimal Transport .

Double descent and implicit bias. Over-parameterised networks generalise well in part because gradient flow selects the maximum-entropy interpolant. Pinning down the right notion of “maximum entropy” for deep networks remains an active area.

Lyapunov functions. A general convergence theory needs a Lyapunov function $L[\rho_t]$ whose dissipation rate one can lower bound. Candidates include the loss itself (only adequate under PL or convexity), free energies, and inter-particle distances. No single choice covers the general non-convex deep case.

Outlook#

A handful of directions where the PDE / variational viewpoint is currently most active:

- Theory. Sharper rates for non-convex losses; finite-width corrections; PDE theory for attention layers.

- Algorithms. Higher-order ODE/PDE solvers as new optimisers; control-theoretic hyperparameter schedules; Wasserstein-aware MCMC.

- Applications. Diffusion generative models are reverse Fokker-Planck equations; policy gradients are gradient flows on policy space; Deep Ritz and PINNs turn PDE solving into variational problems.

- Cross-fertilisation. Spin-glass analogies, Pontryagin’s maximum principle for end-to-end optimisation, information geometry and symplectic geometry in deep learning.

Summary#

Starting from the calculus of variations, we have built a PDE-centric picture of neural-network optimisation. Functionals and Euler-Lagrange equations connect discrete optimisation to continuous dynamics; Wasserstein geometry provides the natural metric on the space of probability measures and unifies the heat, Fokker-Planck, porous-medium, and Keller-Segel equations as gradient flows of explicit energies; the mean-field limit reduces finite-width neural-network training to a Vlasov-type PDE and yields global convergence under reasonable assumptions; the ELBO, Adam, and Hamiltonian dynamics all fit into the same variational framework. The numerical experiments — the brachistochrone, a 1-D Fokker-Planck simulation, a width sweep on a two-layer net, a non-convex energy landscape, and a small VAE — confirm the theory in each of its key predictions.

The PDE viewpoint is still young. As mathematics and machine learning continue to converge, expect it to provide ever sharper tools for designing optimisers, analysing generalisation, and certifying convergence.

References#

- L. Chizat and F. Bach, “On the Global Convergence of Gradient Descent for Over-parameterized Models using Optimal Transport,” NeurIPS, 2018. arXiv:1805.09545

- S. Mei, A. Montanari, P.-M. Nguyen, “A Mean Field View of the Landscape of Two-Layer Neural Networks,” PNAS, 2018. arXiv:1804.06561

- G. M. Rotskoff and E. Vanden-Eijnden, “Neural Networks as Interacting Particle Systems,” arXiv:1805.00915 , 2018.

- A. Jacot, F. Gabriel, C. Hongler, “Neural Tangent Kernel: Convergence and Generalization in Neural Networks,” NeurIPS, 2018. arXiv:1806.07572

- W. E and B. Yu, “The Deep Ritz Method,” CPAM, 2018. arXiv:1710.00211

- R. T. Q. Chen, Y. Rubanova, J. Bettencourt, D. Duvenaud, “Neural Ordinary Differential Equations,” NeurIPS, 2018. arXiv:1806.07366

- L. Ambrosio, N. Gigli, G. Savaré, Gradient Flows in Metric Spaces and in the Space of Probability Measures, Birkhäuser, 2008.

- C. Villani, Optimal Transport: Old and New, Springer, 2009.

- R. Jordan, D. Kinderlehrer, F. Otto, “The Variational Formulation of the Fokker-Planck Equation,” SIAM J. Math. Anal., 1998.

- F. Otto, “The Geometry of Dissipative Evolution Equations: the Porous Medium Equation,” Comm. PDE, 2001.

- Y. Lu and J. Lu, “Mean-Field Analysis of Neural SGD-Ascent ,” 2024.

- A. Kazeykina and M. Fornasier, “Kernel Approximation of Fisher-Rao Gradient Flows ,” 2024.

- D. Onken et al., “Deep ResNets and Conditional Optimal Transport ,” 2024.

- M. Belkin, D. Hsu, S. Ma, S. Mandal, “Reconciling Modern Machine Learning Practice and the Classical Bias-Variance Trade-off,” PNAS, 2019.

- S. Amari, “Natural Gradient Works Efficiently in Learning,” Neural Computation, 1998.

- D. P. Kingma and J. Ba, “Adam: A Method for Stochastic Optimization,” ICLR, 2015. arXiv:1412.6980

- L. Chizat, E. Oyallon, F. Bach, “On Lazy Training in Differentiable Programming,” NeurIPS, 2019. arXiv:1812.07956

- G. Peyré and M. Cuturi, “Computational Optimal Transport,” Foundations and Trends in Machine Learning, 2019. arXiv:1803.00567

- J. Sirignano and K. Spiliopoulos, “Mean Field Analysis of Neural Networks: A Central Limit Theorem,” Stoch. Proc. Appl., 2020. arXiv:1808.09372

Reproducibility. All seven figures in this article are produced by scripts/figures/pde-ml/03-variational.py in the site repository. Run python scripts/figures/pde-ml/03-variational.py from the project root; PNGs are written to both the EN and ZH asset folders.

PDE and Machine Learning 8 parts

- 01 PDE and ML (1): Physics-Informed Neural Networks

- 02 PDE and ML (2): Neural Operator Theory

- 03 PDE and ML (3): Variational Principles and Optimization you are here

- 04 PDE and ML (4): Variational Inference and the Fokker-Planck Equation

- 05 PDE and ML (5): Symplectic Geometry and Structure-Preserving Networks

- 06 PDE and ML (6): Continuous Normalizing Flows and Neural ODE

- 07 PDE and ML (7): Diffusion Models and Score Matching

- 08 PDE and ML (8): Reaction-Diffusion Systems and Graph Neural Networks