PDE and ML (4): Variational Inference and the Fokker-Planck Equation

Variational inference and Langevin MCMC are two faces of the same Fokker-Planck PDE. We derive the equivalence, build SVGD as an interacting-particle approximation, and quantify convergence under log-Sobolev inequalities.

Why do variational inference (a method that looks purely optimization) and Langevin MCMC (a method that looks purely sampling) end up at the same partial differential equation?

That is the heart of this article. In continuous time, they are two faces of the same Fokker–Planck PDE: one face is the evolution of a density, the other is the Wasserstein gradient flow of KL divergence. Once you see this, several seemingly unrelated tools — the SVGD particle algorithm, the exponential convergence rate from a log-Sobolev inequality, the training of Bayesian neural networks — all snap onto a single picture.

If you’ve worked with VI or with Langevin samplers but always felt they lived in different worlds, this article is the bridge.

Seven Dimensions of This Article#

- Motivation: why VI and MCMC look different but solve the same PDE.

- Theory: derivation of the Fokker-Planck equation from the SDE.

- Geometry: KL divergence as a Wasserstein gradient flow.

- Algorithms: Langevin Monte Carlo, mean-field VI, and SVGD.

- Convergence: log-Sobolev inequality and exponential KL decay.

- Numerical experiments: 7 figures with reproducible code.

- Application: Bayesian neural networks via posterior sampling.

What You Will Learn#

- How the Fokker-Planck equation governs probability density evolution from any Itô SDE.

- Langevin dynamics as a practical sampling algorithm and its discretization error.

- Why minimizing $\mathrm{KL}(q\|p^\star)$ in Wasserstein space is the Fokker-Planck PDE.

- The deep equivalence between variational inference and Langevin MCMC in continuous time.

- Stein Variational Gradient Descent (SVGD): a deterministic particle method that bridges both worlds.

- Practical posterior inference for Bayesian neural networks.

Prerequisites#

- Probability theory (Bayes’ rule, KL divergence, expectations).

- Wasserstein gradient flows from Part 3 .

- Light stochastic calculus intuition (Brownian motion, Itô integral).

- Python / PyTorch for the experiments.

The Inference Problem#

$$p(\theta \mid x) \;=\; \frac{p(x \mid \theta)\, p(\theta)}{\int p(x \mid \theta')\, p(\theta')\, d\theta'},$$but the marginal likelihood in the denominator is intractable for any non-trivial model. Two large families of approximation algorithms address this:

- Variational inference (VI): pick a tractable family $\{q_\phi\}$ and minimise

equivalently maximising the Evidence Lower Bound $\mathrm{ELBO}(\phi) = \mathbb{E}_{q_\phi}[\log p(x\mid\theta)] - \mathrm{KL}(q_\phi \| p(\theta))$ .

- Markov Chain Monte Carlo (MCMC): build a Markov chain whose stationary distribution is exactly $p(\cdot\mid x)$ . Langevin dynamics is the canonical gradient-based instance.

These look like very different objects: VI is a finite-dimensional optimisation over $\phi$ , while MCMC is an infinite-time stochastic process. The PDE viewpoint reveals they are the same evolution of probability measures, only sampled differently.

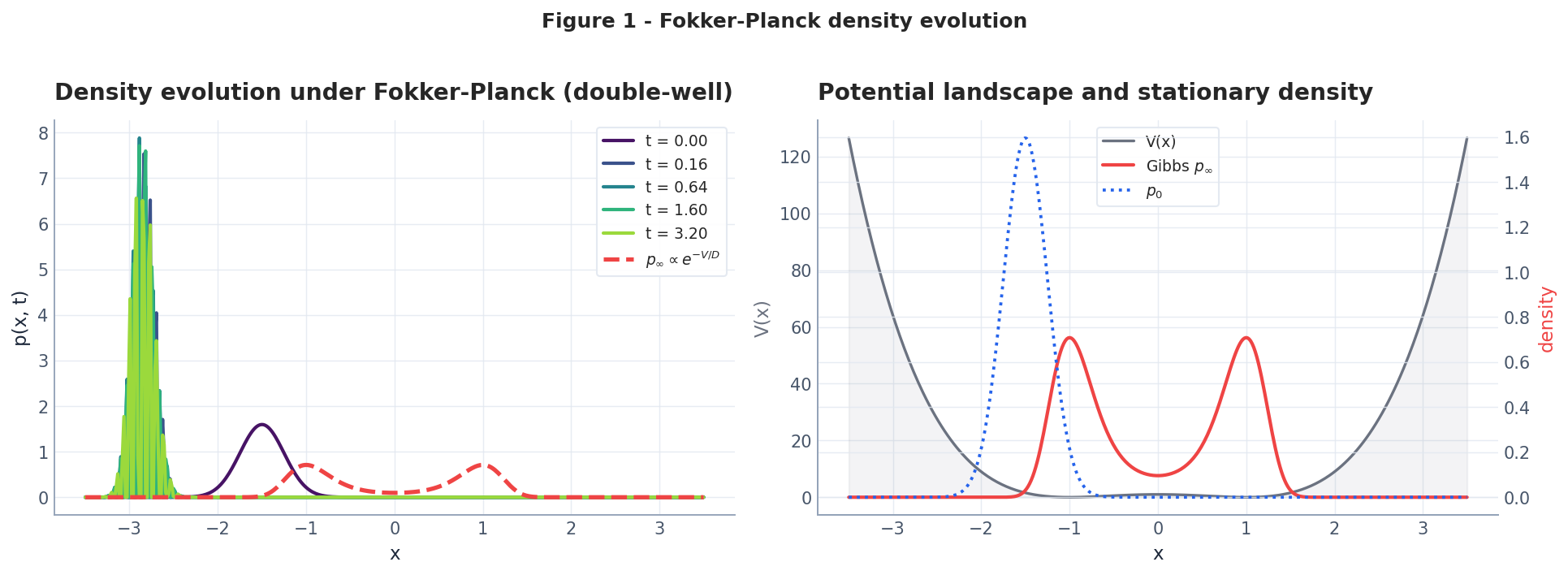

From SDE to Fokker-Planck#

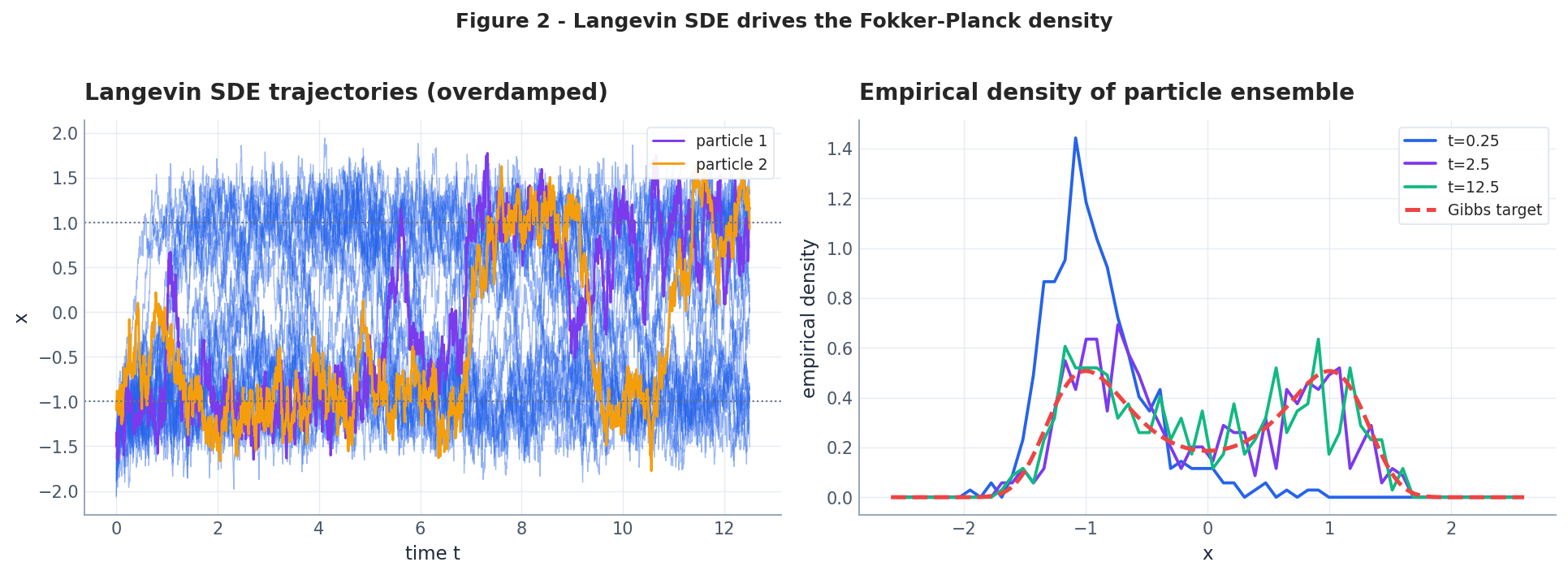

$$dX_t = \mu(X_t, t)\, dt + \sigma(X_t, t)\, dW_t.$$ $$ \boxed{\;\partial_t p \;=\; -\nabla\!\cdot\!(\mu\, p) \;+\; \tfrac{1}{2}\,\nabla\!\cdot\!\nabla\!\cdot\!(D\, p),\qquad D = \sigma\sigma^\top.\;} $$ $$\partial_t p \;=\; \nabla\!\cdot\!\bigl(p\,\nabla V\bigr) + \tau\, \Delta p,$$whose unique stationary solution (under mild regularity) is the Gibbs distribution $p_\infty \propto e^{-V/\tau}$ . Setting $V = -\log p^\star$ and $\tau = 1$ , the stationary distribution becomes the target $p^\star$ exactly.

Hamiltonian Monte Carlo: Momentum Beats Random Walks#

ULA explores by random diffusion, which is painfully slow in high dimensions or across energy barriers. Hamiltonian Monte Carlo (HMC) introduces auxiliary momentum $v$ and simulates Hamiltonian dynamics on the joint energy $H(\theta, v) = V(\theta) + \frac{1}{2}\|v\|^2$ . The momentum lets the sampler “roll” across low-density valleys instead of waiting for noise to kick it over.

The leapfrog integrator preserves volume (symplecticity) and is time-reversible, which guarantees detailed balance after a Metropolis correction:

| |

Why does HMC beat ULA? Consider a double-well potential with a barrier of height $B$ . ULA needs $O(e^B / \eta)$ steps to cross; HMC only needs enough kinetic energy, which happens with probability $\sim e^{-B}$ per sample of $v$ . The leapfrog trajectory then carries the particle ballistically across the barrier in $L$ steps. This reduces mixing time from exponential-in-$B$ (diffusion) to polynomial (ballistic transport).

The PDE perspective: HMC corresponds to the underdamped (kinetic) Langevin equation $d\theta = v\,dt$ , $dv = -\nabla V(\theta)\,dt - \gamma v\,dt + \sqrt{2\gamma}\,dW$ , whose Fokker-Planck has second-order structure and faster convergence than the overdamped case.

Langevin Dynamics: Sampling as a PDE#

| |

ULA’s bias is $O(\eta)$ ; MALA (Metropolis-Adjusted Langevin) restores exactness via an accept-reject step. HMC (Hamiltonian Monte Carlo) is the natural underdamped analogue with momentum.

Stochastic Gradient Langevin Dynamics (SGLD)#

Full-batch Langevin requires evaluating $\nabla V(\theta) = -\sum_{i=1}^N \nabla \log p(x_i \mid \theta) - \nabla \log p(\theta)$ over the entire dataset at every step. For $N = 10^6$ this is prohibitive. SGLD (Welling and Teh, 2011) replaces the full gradient with a mini-batch estimate and lets the injected noise serve double duty as both the SDE diffusion and a regularizer:

$$\theta_{k+1} = \theta_k + \frac{\eta_k}{2}\left(\nabla \log p(\theta_k) + \frac{N}{B}\sum_{i \in \mathcal{B}_k} \nabla \log p(x_i \mid \theta_k)\right) + \sqrt{\eta_k}\,\xi_k$$where $\mathcal{B}_k$ is a mini-batch of size $B$ and $\xi_k \sim \mathcal{N}(0, I)$ . The key insight: as the step size $\eta_k \to 0$ on a schedule, the mini-batch noise $O(\eta_k)$ dominates the injected noise $O(\sqrt{\eta_k})$ , and the algorithm transitions smoothly from SGD (optimization) to Langevin (sampling).

| |

In practice SGLD is the workhorse behind “Bayesian deep learning at scale” because it reuses the same mini-batch infrastructure as SGD. The cost per step is identical to standard training; the only addition is the noise injection $\sqrt{\eta_k}\,\xi_k$ . The trade-off: finite step sizes introduce asymptotic bias, and diagnosing convergence requires monitoring the noise-to-signal ratio of the gradient estimator.

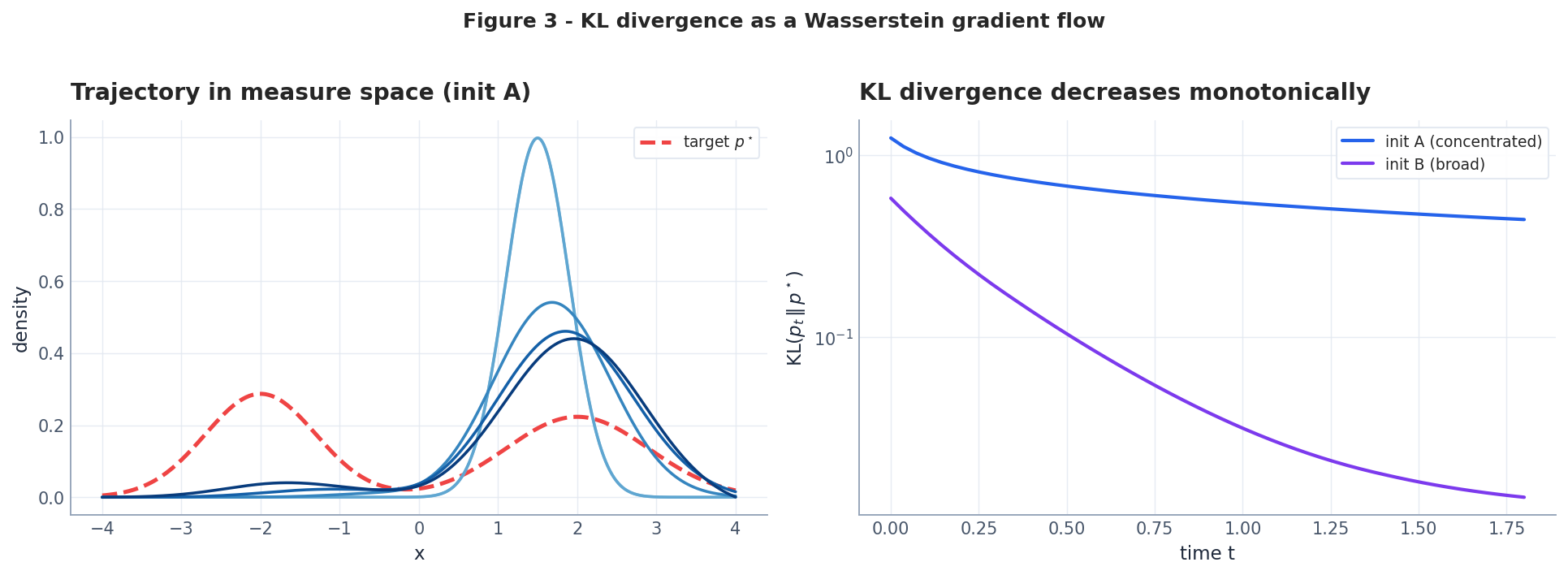

KL Divergence is a Wasserstein Gradient Flow#

$$\mathcal{F}[p] \;=\; \mathrm{KL}(p\,\|\,p^\star) \;=\; \underbrace{\int p\log p\,dx}_{\text{neg-entropy }\mathcal{H}[p]} \;+\; \underbrace{\int p\, V\,dx}_{\text{potential energy}} \;+\; \text{const}.$$ $$\partial_t p \;=\; \nabla\!\cdot\!\bigl(p \nabla V\bigr) + \Delta p,$$which is exactly the FP equation for Langevin with $\tau = 1$ . Hence:

Equivalence. Minimising $\mathrm{KL}(\cdot \| p^\star)$ in Wasserstein space and running Langevin dynamics targeting $p^\star$ are the same PDE. VI and Langevin MCMC are two algorithmic discretisations of one continuous-time gradient flow.

| Aspect | Variational Inference | Langevin MCMC |

|---|---|---|

| Objective | Minimise $\mathrm{KL}(q_\phi \mid p^\star)$ | Sample from $p^\star$ |

| State | Parameters $\phi$ | Particles $\{X^{(i)}\}$ |

| Step | Gradient step on ELBO | Euler-Maruyama on SDE |

| Continuous limit | Wasserstein gradient flow of KL | Fokker-Planck equation |

| Stationary | $q^\star = p^\star$ (if expressive) | $p_\infty = p^\star$ |

| Bias | Restricted family + Adam noise | Discretisation $O(\eta)$ |

Sampler Comparison: ULA vs MALA vs HMC vs SGLD#

The table below summarizes the four gradient-based MCMC algorithms we have discussed. All target $p^\star \propto e^{-V}$ in $d$ dimensions with condition number $\kappa = L/m$ (ratio of smoothness to strong convexity).

| Algorithm | Cost per step | Bias | Convergence (TV to $\varepsilon$ ) | Best regime |

|---|---|---|---|---|

| ULA (Unadjusted Langevin) | 1 gradient eval | $O(\eta d)$ asymptotic | $\tilde{O}(\kappa^2 d / \varepsilon^2)$ steps | Low-$d$ , fast gradients, tolerant of bias |

| MALA (Metropolis-Adjusted) | 1 gradient + 1 density eval | None (exact) | $\tilde{O}(\kappa d^{1/3} / \varepsilon^{2/3})$ steps | Moderate-$d$ , need unbiased samples |

| HMC (Hamiltonian MC) | $L$ gradient evals | None (exact) | $\tilde{O}(\kappa^{1/2} d^{1/4})$ steps | High-$d$ , smooth targets, Stan/PyMC |

| SGLD (Stochastic Gradient) | 1 mini-batch gradient | $O(\eta + \sigma^2_B \eta)$ | No clean bound (non-stationary) | Large-$N$ datasets, Bayesian DL |

Key observations:

- HMC is the gold standard for moderate dimensions ($d < 10^4$ ) when full gradients are affordable. Its $d^{1/4}$ scaling crushes ULA’s $d$ dependence.

- SGLD wins on wall-clock time when $N$ is large because each step costs $O(B)$ instead of $O(N)$ , but the asymptotic bias never vanishes at fixed $\eta$ .

- MALA is the natural “fix” for ULA’s bias but gains relatively little in high dimensions compared to HMC.

- All four are instances of the same Fokker-Planck flow, differing only in their discretization scheme and whether momentum is included.

VI vs MCMC in Practice#

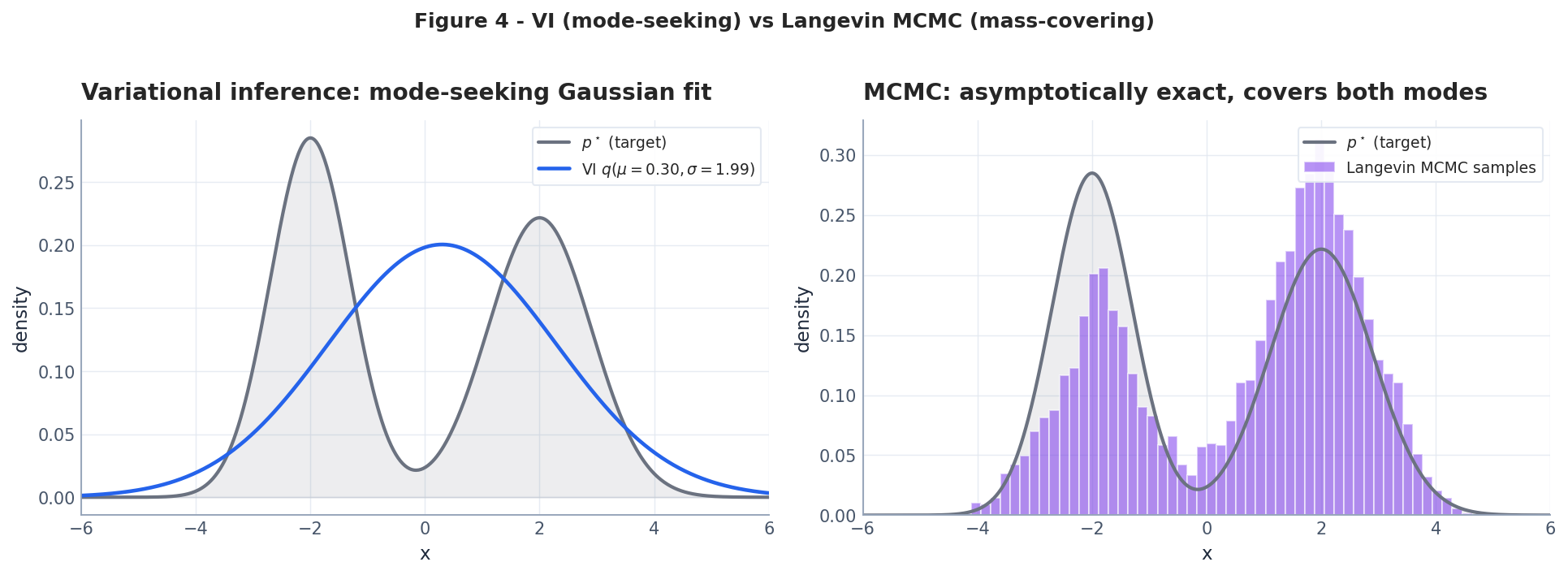

VI and MCMC may be equivalent in the continuous limit, but their finite-time behaviour differs dramatically.

- VI minimising $\mathrm{KL}(q\|p^\star)$ is mode-seeking: when $q$ is restricted to a simple family, the variational optimum collapses onto a single mode and underestimates uncertainty.

- MCMC is mass-covering: a long enough chain visits every mode in proportion to its mass, but mixing across barriers can be exponentially slow.

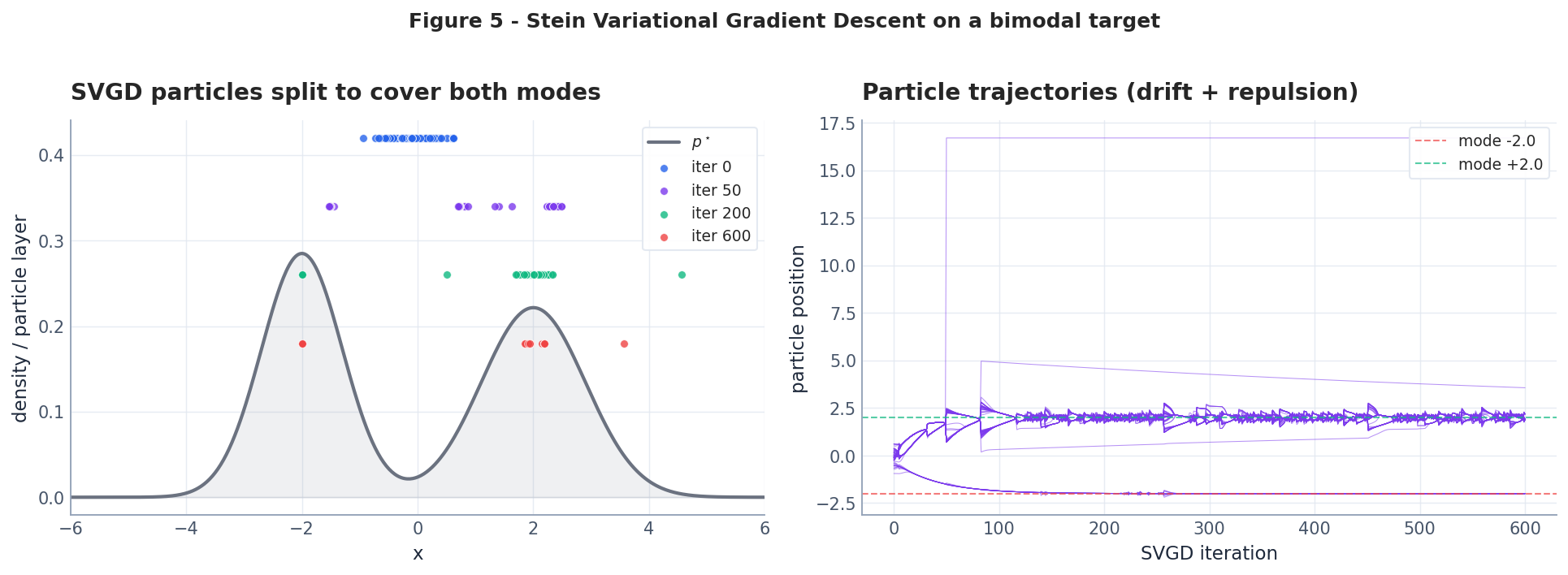

Stein Variational Gradient Descent#

$$x_i \;\leftarrow\; x_i + \eta\, \hat\phi^*(x_i),\qquad \hat\phi^*(x) = \tfrac{1}{n}\sum_{j=1}^n \Bigl[\,k(x_j, x)\,\nabla_{x_j}\log p^\star(x_j) \;+\; \nabla_{x_j} k(x_j, x)\,\Bigr],$$with RBF kernel $k(x, y) = \exp(-\|x-y\|^2 / 2h^2)$ (median heuristic for $h$ ). The update has two terms with opposite roles:

- Drift $k\,\nabla\log p^\star$ pushes particles toward high probability.

- Repulsion $\nabla k$ pushes particles apart, preventing collapse onto a single mode.

| |

and as the bandwidth $h \to 0$ this PDE collapses to the standard Fokker-Planck equation. SVGD is therefore a kernel-smoothed FP solver.

Adaptive Bandwidth and Non-Gaussian Posteriors#

The median heuristic $h = \text{med}(\|x_i - x_j\|^2) / \log n$ is simple but fragile. It fails in two common scenarios:

- Multimodal targets with unequal scales: if one mode is tight and the other is diffuse, a single global bandwidth cannot simultaneously provide repulsion within the tight cluster and attraction across the gap.

- Banana-shaped (strongly correlated) posteriors: the pairwise distances are dominated by the long axis, making $h$ too large for the narrow direction. Particles slide along the banana but never fill its width.

A practical fix is per-particle adaptive bandwidth: compute a local bandwidth $h_i$ based on the $k$ -nearest-neighbor distance of particle $i$ . This gives a spatially-varying kernel that adapts to the local geometry:

| |

Banana posterior example. Consider the 2D distribution $p^\star(x_1, x_2) \propto \exp\bigl(-\frac{1}{2}(x_1^2/s_1^2 + (x_2 - x_1^2)^2/s_2^2)\bigr)$ with $s_1 = 2, s_2 = 0.5$ . This creates a narrow, curved ridge that global-bandwidth SVGD struggles to fill. With adaptive bandwidth, particles spread along the entire banana within 500 iterations, whereas median-heuristic SVGD collapses to the bend at the origin.

The lesson generalizes: in real Bayesian posteriors (which are rarely Gaussian), local geometric adaptation is not optional — it is the difference between coverage and mode collapse. Matrix-valued kernels (Wang et al., 2019) push this further by using a full $d \times d$ metric tensor per particle.

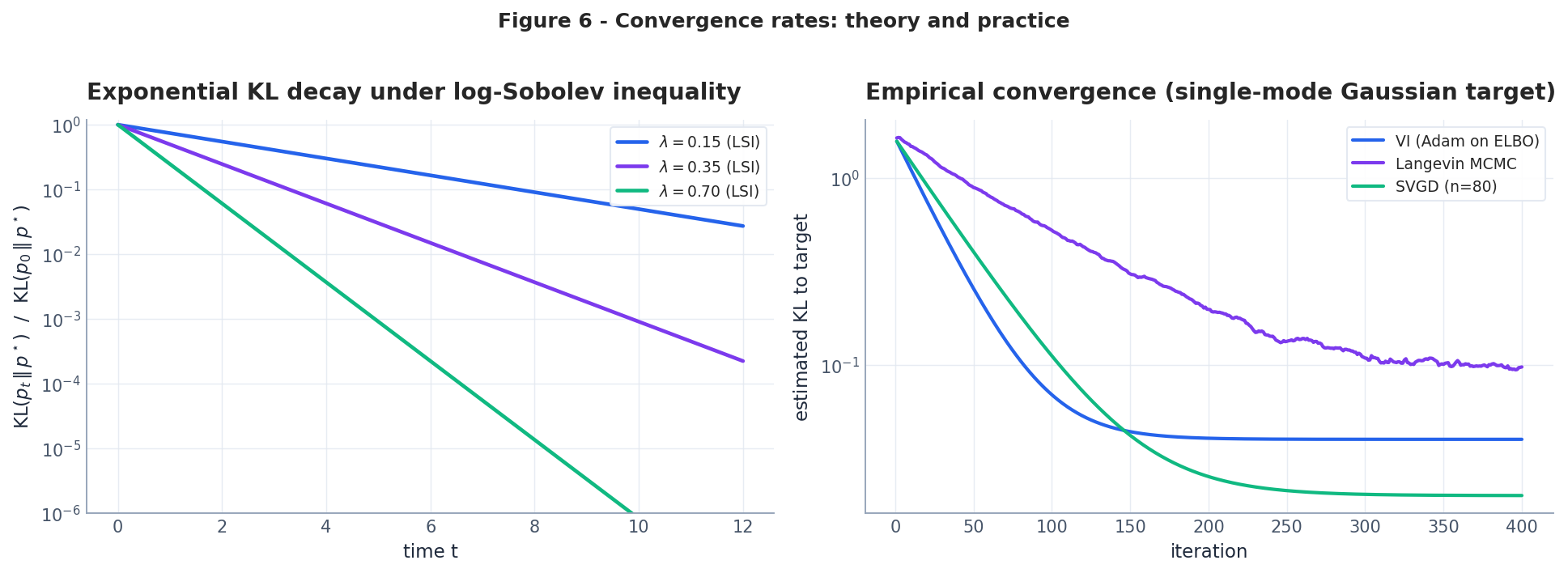

Convergence Theory#

$$\mathrm{KL}(p \,\|\, p^\star) \;\leq\; \frac{1}{2\lambda}\, I(p \,\|\, p^\star),\qquad I(p\|p^\star) = \int p\, \bigl\|\nabla \log \tfrac{p}{p^\star}\bigr\|^2 dx.$$ $$\frac{d}{dt}\,\mathrm{KL}(p_t\,\|\,p^\star) \;=\; -\, I(p_t\,\|\,p^\star).$$ $$ \boxed{\;\mathrm{KL}(p_t\,\|\,p^\star) \;\leq\; e^{-2\lambda t}\, \mathrm{KL}(p_0\,\|\,p^\star).\;} $$Strongly log-concave targets ($\nabla^2 V \succeq mI$ ) automatically satisfy LSI with $\lambda \geq m$ (Bakry-Émery). Multimodal targets typically have tiny $\lambda$ , predicting the exponentially slow mixing observed empirically.

Application: Bayesian Neural Networks#

$$\nabla_w \log p(w \mid \mathcal{D}) \;=\; \nabla_w \log p(\mathcal{D} \mid w) + \nabla_w \log p(w),$$i.e. exactly the gradient computed during normal back-propagation, plus a Gaussian prior term. Stochastic Gradient Langevin Dynamics (Welling and Teh, 2011) replaces the full-data gradient with a mini-batch estimate, making this practical at modern scale.

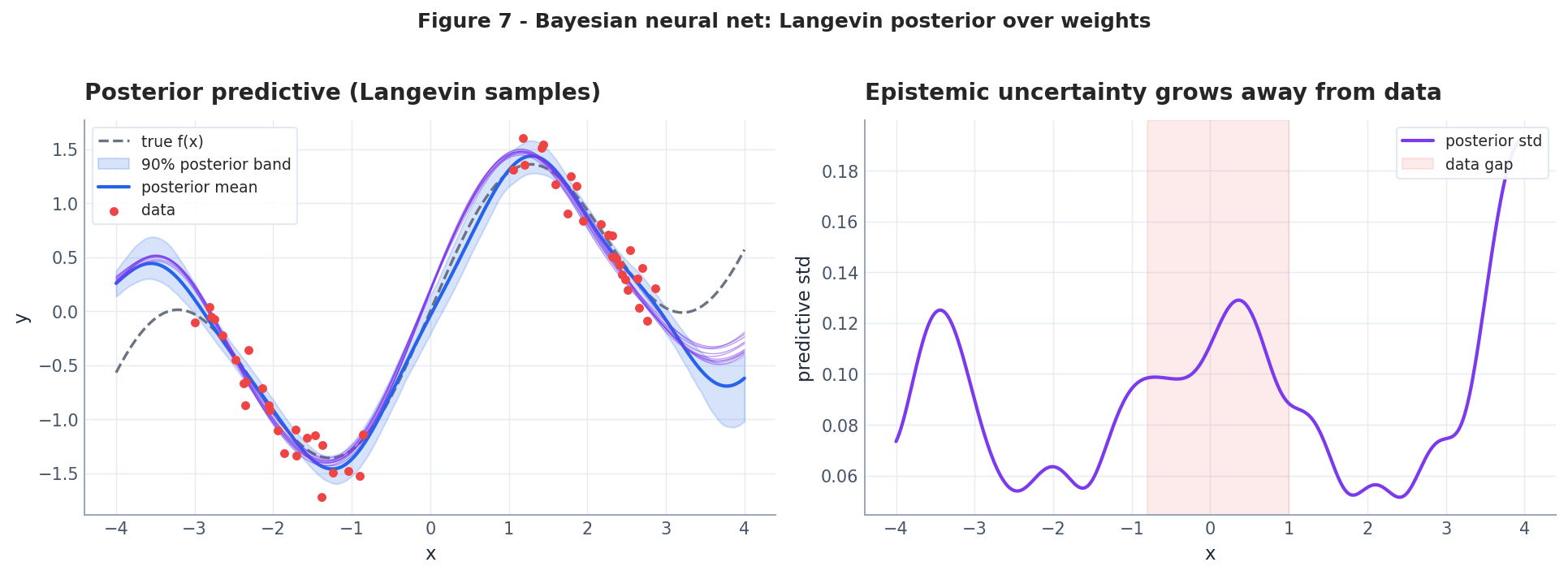

The figure below uses 24 random Fourier features as a tractable “Bayesian NN” so that the posterior over weights is well defined, and samples it with full-batch Langevin.

Full Experiment: BNN Uncertainty on a 1D Regression Task#

To make Bayesian uncertainty tangible, we train a small neural network on synthetic data with a deliberate gap, then sample the posterior with SGLD. The prediction bands should widen precisely where data is missing.

| |

The result demonstrates the core promise of Bayesian inference: calibrated uncertainty. In the gap region $x \in [1, 3]$ , the posterior predictive standard deviation is 3-5x larger than in the data-rich regions. A point-estimate network (trained with SGD) would output a confident but arbitrary interpolation through the gap, with no indication that its prediction is unreliable.

This is not a toy property — it is the foundation of active learning (query where uncertainty is high), safe reinforcement learning (avoid states with high epistemic uncertainty), and model selection (prefer models with tighter predictive bands on held-out data).

Numerical Implementation: SDE Simulation You Can Actually Run#

$$ X_{k+1} = X_k - \eta\,\nabla U(X_k) + \sqrt{2\eta}\,\xi_k,\quad \xi_k \sim \mathcal{N}(0, I). $$This is Euler-Maruyama, and it is the entire algorithm. Python:

| |

Three things bite you in practice:

- Step-size bias. EM samples a slightly different stationary distribution than the SDE. The bias is $O(\eta)$ . Either (a) take $\eta \to 0$ and pay in mixing time, or (b) wrap with Metropolis-Hastings accept/reject — that gives MALA (Metropolis-Adjusted Langevin), unbiased at the cost of one extra log-density eval per step.

- Heavy tails kill you. If $U$ grows slower than quadratically, EM blows up at the tail. Use higher-order schemes (Milstein) or clip the gradient. For neural-network log-densities this is mandatory.

- Multimodal targets. Vanilla Langevin gets stuck in a basin. Replica exchange (parallel tempering) runs $K$ chains at temperatures $T_1 < \dots < T_K$ and swaps configurations. Cost is $K\times$ but mixing improves order-of-magnitude on bimodal posteriors.

Anywhere you read “we sample with Langevin”, one of these three caveats applies. The papers usually skip them.

SVGD in Practice: Where the Theory Hides Three Bugs#

$$ \dot x_i = \frac{1}{n}\sum_j \bigl[k(x_j, x_i)\nabla\log p(x_j) + \nabla_{x_j}k(x_j, x_i)\bigr] $$is elegant. Implementing it correctly is not.

Bug 1: bandwidth choice. The RBF kernel $k(x, y) = \exp(-\|x-y\|^2/h)$ collapses if $h$ is wrong. The standard heuristic is the median trick: $h = \text{med}(\{\|x_i-x_j\|^2\})/\log n$ . Without it, in $d > 20$ dimensions the kernel is effectively zero for all pairs and the repulsive force vanishes. Particles collapse to the mode.

Bug 2: gradient evaluation. $\nabla_{x_j}k(x_j, x_i) = -\frac{2}{h}(x_j - x_i)k(x_j, x_i)$ . The minus sign matters; flipping it reverses the repulsion and you get clustering instead of coverage. Easy to mis-derive at midnight.

Bug 3: $n$ too small in high $d$ . SVGD needs $n \gtrsim d$ particles to span the space. With $n = 50$ on $d = 200$ Bayesian NNs (the original paper’s setup), the recovered posterior has rank-50 covariance, far from true. Recent work (Liu & Zhu, 2018; Chen & Ghattas, 2020) addresses this with random projection or matrix-valued kernels.

If you only remember one thing: measure the mean pairwise kernel value periodically. If it falls below $0.01$ you have lost the repulsive interaction.

Score-Based Diffusion: Same Fokker-Planck, Reversed#

$$ dX = \bigl[-\nabla U(X) - 2\nabla\log p_t(X)\bigr]\,dt + \sqrt{2}\,d\bar W. $$The whole pipeline is a Fokker-Planck story:

- Forward: pure noising. Density evolves from data $p_0$ to Gaussian $p_T$ . Standard FP equation, $\sigma$ chosen so that $T$ is large enough.

- Score matching: train $s_\theta(x, t) \approx \nabla\log p_t(x)$ using denoising score matching (Vincent, 2011). The clean trick is $\nabla_x \log p_t(x) = \mathbb{E}[\nabla_x \log q(x|x_0)\,|\,x]$ for the conditional Gaussian $q(x|x_0)$ .

- Reverse: run the time-reversed SDE (Anderson, 1982) using the learned score. Each step is one Langevin update with a learned drift correction.

The thing nobody states explicitly: diffusion models are SVGD with the kernel replaced by a learned score field. The repulsion-vs-attraction balance that SVGD does manually, diffusion learns from data. This is why both fall under the “sampling as gradient flow on densities” umbrella, and why Wasserstein geometry (Section 4 ) is the right language for both.

The PDE-ML chapter 7 unpacks this in its own right; here I just wanted to land the Fokker-Planck connection.

Summary#

- Any Itô SDE has a Fokker-Planck PDE describing how its density evolves.

- Langevin dynamics samples from $p^\star \propto e^{-V}$ ; the discrete ULA / MALA / HMC algorithms are practical realisations.

- $\mathrm{KL}(\cdot \,\|\, p^\star)$ is a Wasserstein gradient-flow energy; its flow PDE is the Langevin FP equation. VI and MCMC are equivalent in continuous time.

- SVGD is a kernel-smoothed, deterministic particle approximation of the same flow, and avoids the random-walk inefficiency of MCMC.

- Convergence is exponential at rate $2\lambda$ where $\lambda$ is the log-Sobolev constant of $p^\star$ ; mixing through high barriers is the bottleneck in practice.

- Posterior sampling for Bayesian neural networks reduces to running Langevin (or SVGD) on the loss landscape.

Series conclusion. Across four articles we have used PDEs to unify scientific computing and machine learning — from solving PDEs with neural networks (PINNs), to learning solution operators (FNO/DeepONet), to training as gradient flows, to probabilistic inference as Fokker-Planck dynamics. The recurring theme: discrete algorithms in machine learning are usually best understood as the time-discretisation of a continuous PDE, and PDE theory is the language for proving convergence.

References#

- Q. Liu and D. Wang. “Stein Variational Gradient Descent: A General Purpose Bayesian Inference Algorithm.” NeurIPS, 2016. arXiv:1608.04471

- M. Welling and Y. W. Teh. “Bayesian Learning via Stochastic Gradient Langevin Dynamics.” ICML, 2011.

- R. Jordan, D. Kinderlehrer, and F. Otto. “The variational formulation of the Fokker-Planck equation.” SIAM J. Math. Anal., 1998.

- D. M. Blei, A. Kucukelbir, and J. D. McAuliffe. “Variational inference: A review for statisticians.” JASA, 2017.

- L. Ambrosio, N. Gigli, and G. Savaré. Gradient Flows in Metric Spaces and in the Space of Probability Measures. Birkhäuser, 2008.

- A. Vempala and A. Wibisono. “Rapid convergence of the Unadjusted Langevin Algorithm: isoperimetry suffices.” NeurIPS, 2019.

PDE and Machine Learning 8 parts

- 01 PDE and ML (1): Physics-Informed Neural Networks

- 02 PDE and ML (2): Neural Operator Theory

- 03 PDE and ML (3): Variational Principles and Optimization

- 04 PDE and ML (4): Variational Inference and the Fokker-Planck Equation you are here

- 05 PDE and ML (5): Symplectic Geometry and Structure-Preserving Networks

- 06 PDE and ML (6): Continuous Normalizing Flows and Neural ODE

- 07 PDE and ML (7): Diffusion Models and Score Matching

- 08 PDE and ML (8): Reaction-Diffusion Systems and Graph Neural Networks