PDE and ML (5): Symplectic Geometry and Structure-Preserving Networks

Standard neural networks violate conservation laws. This article derives Hamiltonian mechanics, symplectic integrators, HNNs, LNNs, and SympNets from the geometry of phase space.

A pendulum keeps swinging for a very long time without slowly winding down — energy is conserved. The Earth orbits the Sun for billions of years without flying off — angular momentum is conserved. Behind every “this quantity stays constant” lurks a piece of geometry called symplectic structure.

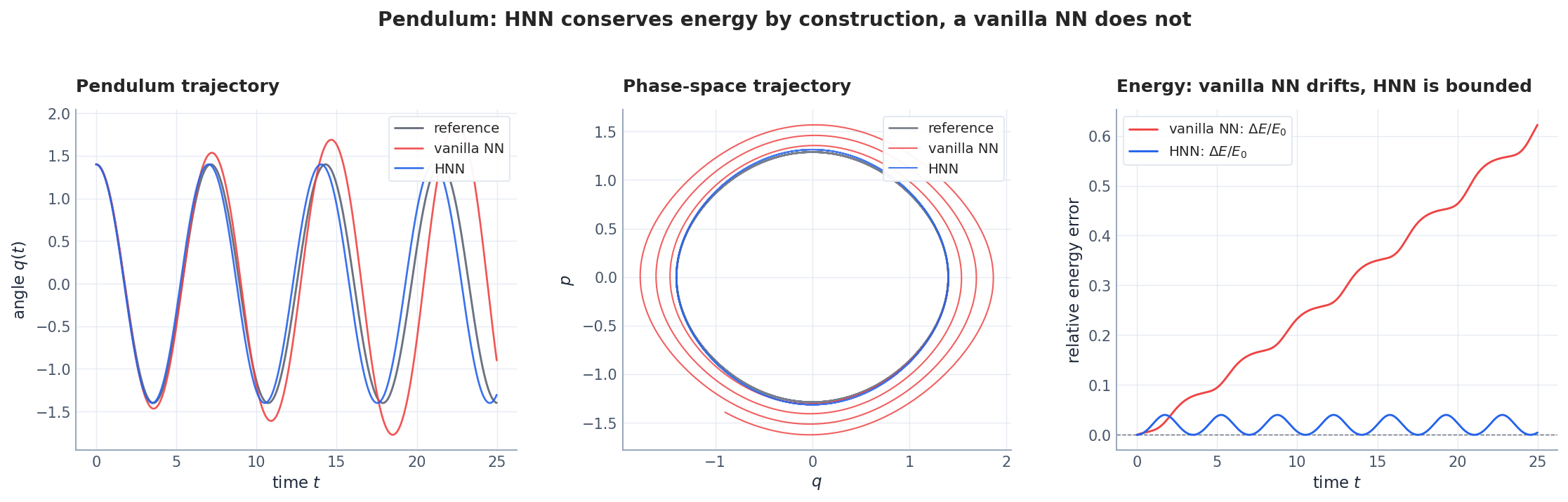

Train a vanilla Neural ODE on pendulum data: after a few hundred steps the energy drifts. The network can fit the short-term trajectory just fine; what it can’t fit is the long-time conservation law. Structure-preserving networks (HNN, LNN, SympNet) take a different approach: bake the conservation law into the architecture so the network cannot violate it.

This article aims to demystify the word “symplectic.” We start from the geometric picture — phase space, the symplectic form, what Liouville’s theorem really says — then look at why symplectic integrators are a different beast from generic Runge–Kutta methods, and finally see how HNN / LNN / SympNet each encode this geometry into network weights. By the end you should be able to tell, for a given physics problem, whether a symplectic network is the right tool or overkill.

What You Will Learn#

Train an unconstrained neural network on pendulum data and ask it to extrapolate. After a few seconds of integration the prediction is fine; after a minute the pendulum has either crept to a halt or, more often, accelerated to escape velocity. Energy was supposed to be conserved, but the network has no idea what energy is. The bug is not in the data, the optimizer, or the depth of the network. The bug is in the architecture. A standard MLP can represent any vector field, including unphysical ones, and a tiny systematic bias in that vector field is amplified into macroscopic energy drift over a long rollout.

The fix is not to train harder. The fix is to build the conservation law into the model. This is what Hamiltonian Neural Networks (HNNs), Lagrangian Neural Networks (LNNs), and Symplectic Networks (SympNets) do. They learn a scalar potential (energy or action) and recover the dynamics by differentiating it; or they parameterise the discrete-time map directly out of symplectic building blocks. Either way, the resulting predictor is a Hamiltonian system by construction, and the long-term behaviour is qualitatively right whether the model is trained to one decimal place or four.

You will learn:

- Why phase space and the symplectic 2-form are the right home for classical mechanics

- Hamilton’s equations as a single tensor identity $\dot{\mathbf{z}} = J\,\nabla H$

- Liouville’s theorem, Poincaré’s recurrence, and what “preserving structure” really means numerically

- Why every Runge-Kutta method drifts in energy and why symplectic integrators (Verlet, leapfrog, symplectic Euler) do not

- HNN, LNN, and SympNet: three answers to “how do I bake symplecticity into a neural network?”

- A clean reproduction of the Greydanus et al. pendulum result with controlled experiments

Prerequisites: vector calculus (chain rule, gradients), an ODE solver from any numerics class, and one prior exposure to neural ODEs (covered in Part 6 ).

Hamiltonian mechanics: physics in phase space#

From Newton to Hamilton#

$$H(\mathbf{q}, \mathbf{p}) \;=\; \underbrace{\tfrac{1}{2}\mathbf{p}^\top M^{-1} \mathbf{p}}_{\text{kinetic}} \;+\; \underbrace{V(\mathbf{q})}_{\text{potential}}\,. \tag{1}$$The price you pay (doubling the dimension) is repaid many times over: Hamiltonian dynamics has a geometric structure that Newtonian dynamics hides.

Hamilton’s equations as a single tensor identity#

$$\dot{\mathbf{q}} \;=\; \frac{\partial H}{\partial \mathbf{p}}, \qquad \dot{\mathbf{p}} \;=\; -\frac{\partial H}{\partial \mathbf{q}}. \tag{2}$$ $$J \;=\; \begin{pmatrix} \mathbf{0} & I_n \\ -I_n & \mathbf{0} \end{pmatrix}, \qquad J^\top = -J, \qquad J^2 = -I_{2n},$$ $$\boxed{\;\dot{\mathbf{z}} \;=\; J\,\nabla H(\mathbf{z}).\;} \tag{3}$$This single line is the entire content of classical mechanics for conservative systems. Every theorem we prove from here on — energy conservation, Liouville’s theorem, Noether’s theorem, KAM stability — follows from the algebraic structure of $J$ .

Energy conservation is automatic#

$$\frac{dH}{dt} \;=\; (\nabla H)^\top \dot{\mathbf{z}} \;=\; (\nabla H)^\top J\,(\nabla H) \;=\; 0,$$because $J^\top = -J$ implies $\mathbf{v}^\top J \mathbf{v} = 0$ for every $\mathbf{v}$ . Energy conservation is a one-line corollary of antisymmetry. This is the punchline that HNNs will exploit: write the dynamics as $\dot{\mathbf{z}} = J\,\nabla H_\theta$ and energy conservation comes for free, regardless of how badly $H_\theta$ approximates the truth.

Worked example: the pendulum#

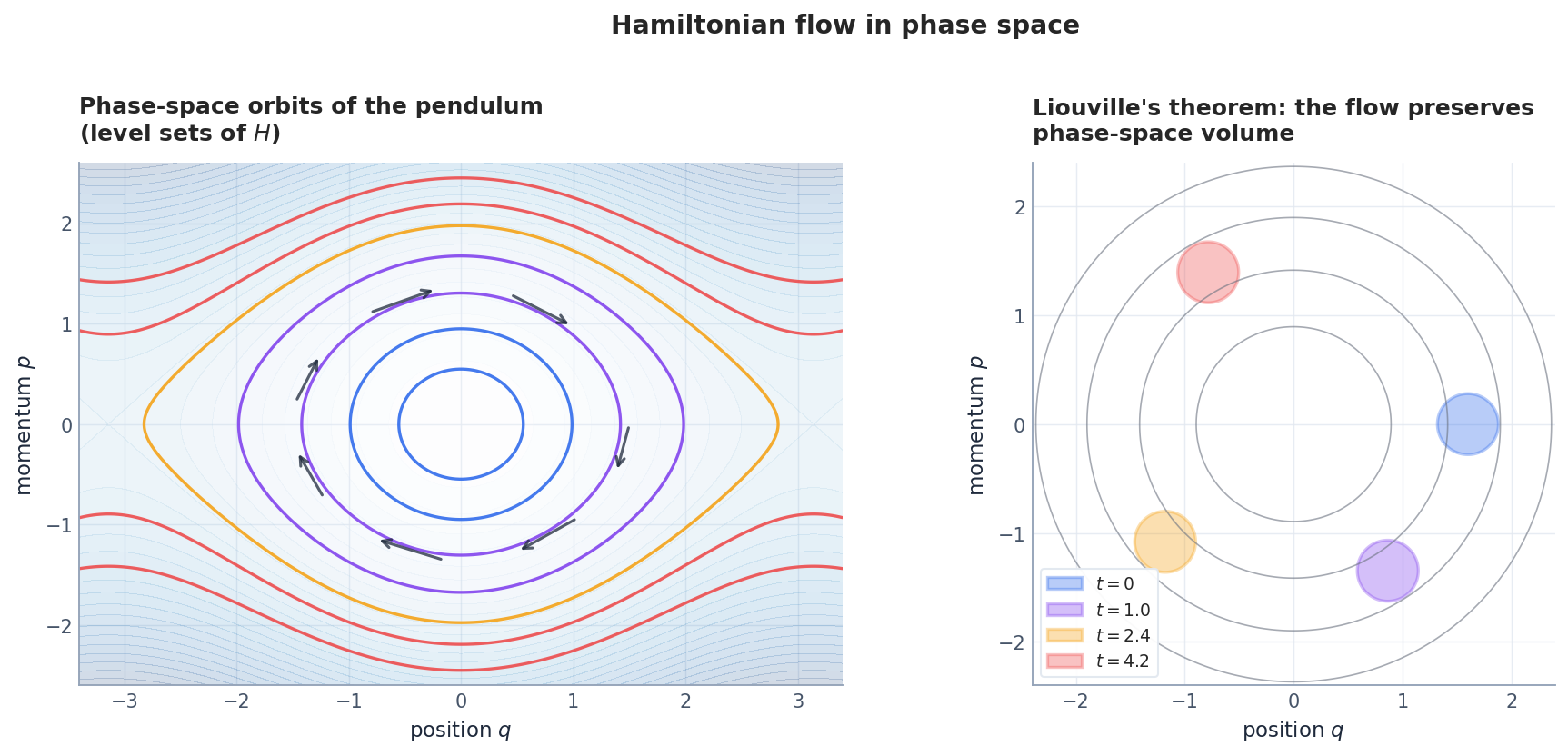

For the unit-mass pendulum, $H = \tfrac{1}{2}p^2 + (1 - \cos q)$ . Hamilton’s equations give $\dot q = p,\ \dot p = -\sin q$ . Trajectories are level sets of $H$ (Figure 1, left): closed loops for $H<2$ (libration), open curves for $H>2$ (rotation), and a separatrix at $H=2$ . The dynamics never leave a level set — a fact your neural network must respect if it hopes to extrapolate.

The symplectic 2-form: geometry of phase space#

What “structure” means#

$$\omega \;=\; \sum_{i=1}^{n} dq_i \wedge dp_i.$$ $$\phi_t^* \omega \;=\; \omega \qquad\text{(symplecticity).} \tag{4}$$The Jacobian condition#

$$\boxed{\;M^\top J\,M \;=\; J.\;} \tag{5}$$This is the discrete-time analogue of (3). For $n=1$ it reduces to $\det M = 1$ (area preservation in the $(q,p)$ plane). For $n>1$ it is strictly stronger than $\det M = 1$ ; symplecticity also constrains all $2k\times 2k$ minors of $M$ for every $k$ .

Liouville’s theorem#

$$\frac{d}{dt}\operatorname{vol}(\phi_t(U)) = 0 \qquad \text{for every region } U \subset \mathbb{R}^{2n}.$$This is the foundation of statistical mechanics: the Gibbs ensemble would not be well-defined without it. It also has a startling consequence — Poincaré’s recurrence: any bounded Hamiltonian trajectory returns arbitrarily close to its starting point infinitely often. Your simulator must reproduce this; an integrator that lets phase volume contract to a point cannot.

What goes wrong without symplecticity#

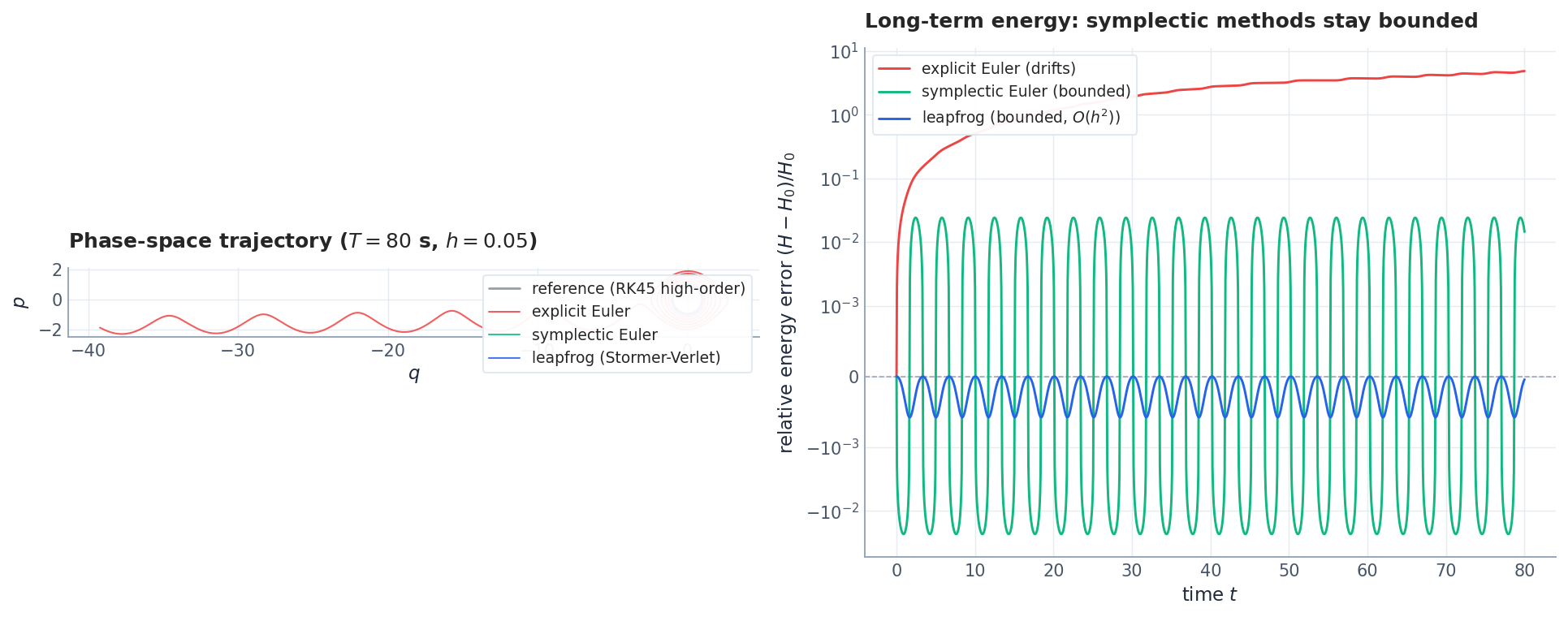

For every explicit Runge-Kutta method, $|\det M_h| = 1 + c h^{p+1} + \mathcal{O}(h^{p+2})$ with $c \neq 0$ in general, where $p$ is the order. Phase volume changes by a tiny amount each step. Multiply by $10^6$ steps and the simulated trajectory has either spiralled inward (energy lost) or outward (energy gained) by a macroscopic amount. Section 3 shows how this looks in pictures.

Symplectic integrators#

Why ordinary integrators drift#

$$\mathbf{z}_{k+1} = \mathbf{z}_k + h\,J\,\nabla H(\mathbf{z}_k), \qquad M_h = I + h J \nabla^2 H.$$Then $M_h^\top J M_h = J + h^2 (\nabla^2 H)^\top J\,J\,J\,(\nabla^2 H) + \cdots = J + \mathcal{O}(h^2)$ , which fails (5). Energy drifts as $\sim h\,t$ . Even RK4, with its $\mathcal{O}(h^4)$ local error, fails (5) and shows secular drift over long horizons (Figure 4, right).

Symplectic Euler#

$$ \mathbf{p}_{k+1} = \mathbf{p}_k - h\,\partial_q H(\mathbf{q}_k, \mathbf{p}_{k+1}), \qquad \mathbf{q}_{k+1} = \mathbf{q}_k + h\,\partial_p H(\mathbf{q}_k, \mathbf{p}_{k+1}). \tag{6} $$For separable Hamiltonians $H(q,p) = T(p) + V(q)$ , this is explicit: $\mathbf{p}_{k+1} = \mathbf{p}_k - h\,V'(\mathbf{q}_k)$ , then $\mathbf{q}_{k+1} = \mathbf{q}_k + h\,T'(\mathbf{p}_{k+1})$ . A direct calculation gives $M_h^\top J M_h = J$ exactly.

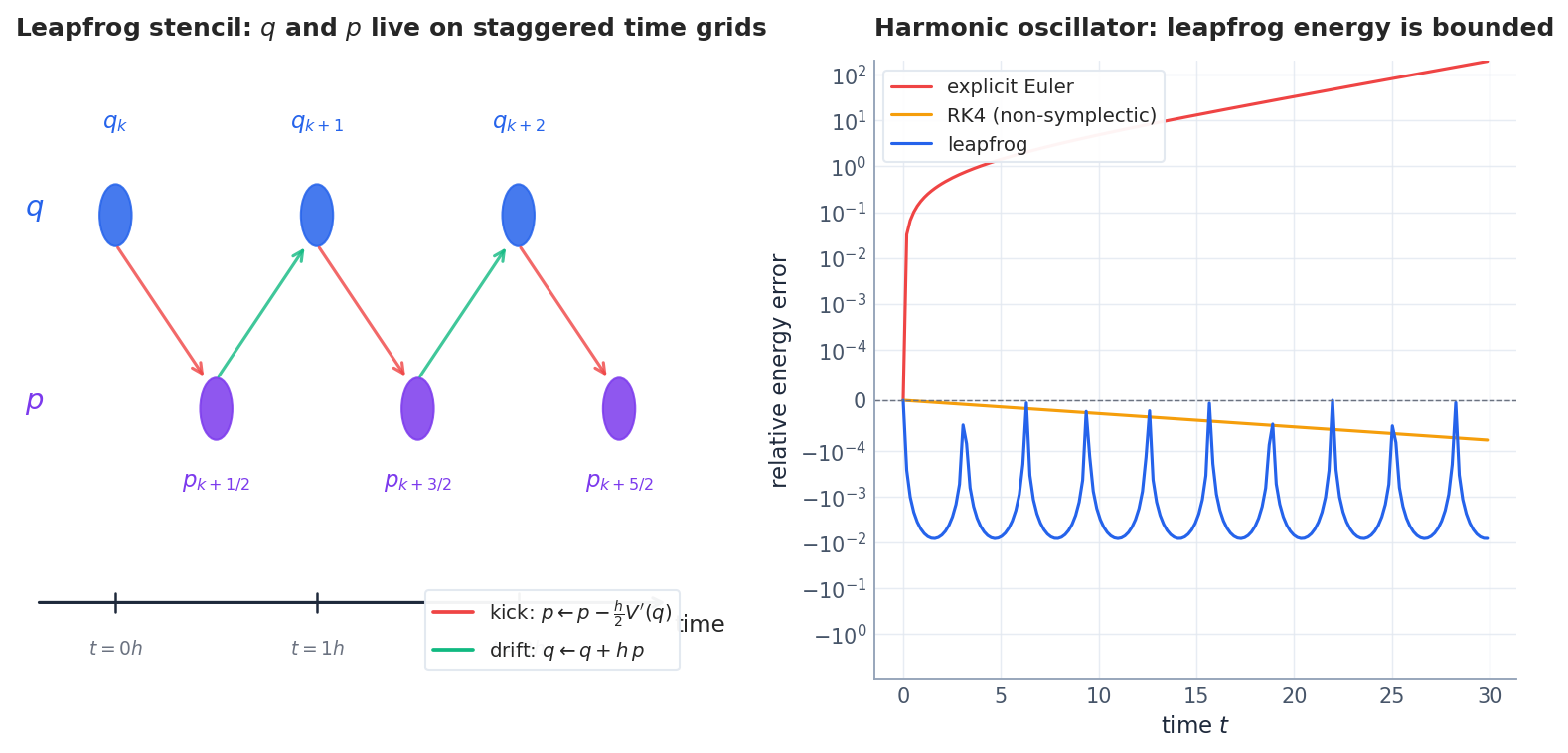

Störmer-Verlet (leapfrog)#

$$ \begin{aligned} \mathbf{p}_{k+1/2} &= \mathbf{p}_k - \tfrac{h}{2}\,V'(\mathbf{q}_k), \\ \mathbf{q}_{k+1} &= \mathbf{q}_k + h\,\mathbf{p}_{k+1/2}, \\ \mathbf{p}_{k+1} &= \mathbf{p}_{k+1/2} - \tfrac{h}{2}\,V'(\mathbf{q}_{k+1}). \end{aligned} $$Second-order accurate, symmetric in time, and symplectic. Figure 4 shows the staggered grid: positions live at integer steps and momenta at half-integer steps. The structure ensures that there exists a modified Hamiltonian $\tilde H_h = H + h^2 H_2 + h^4 H_4 + \cdots$ that is exactly conserved by the discrete map. The energy you measure does not drift; it oscillates with bounded amplitude $\mathcal{O}(h^2)$ around the true value forever.

Symplectic integrators in code#

| |

That is the entire symplectic story for separable Hamiltonians. Six lines, no dependencies, qualitatively correct for every conservative system. Now we will teach a neural network to do this.

Hamiltonian Neural Networks (HNN)#

The problem with naive neural ODEs#

A neural ODE learns $\dot{\mathbf{z}} = f_\theta(\mathbf{z})$ with no constraint on $f_\theta$ . Generically $f_\theta \neq J\,\nabla H$ for any $H$ , so the learned dynamics is not Hamiltonian, and energy drifts. The drift is invisible at training time (small loss) and catastrophic at inference time (long rollouts diverge).

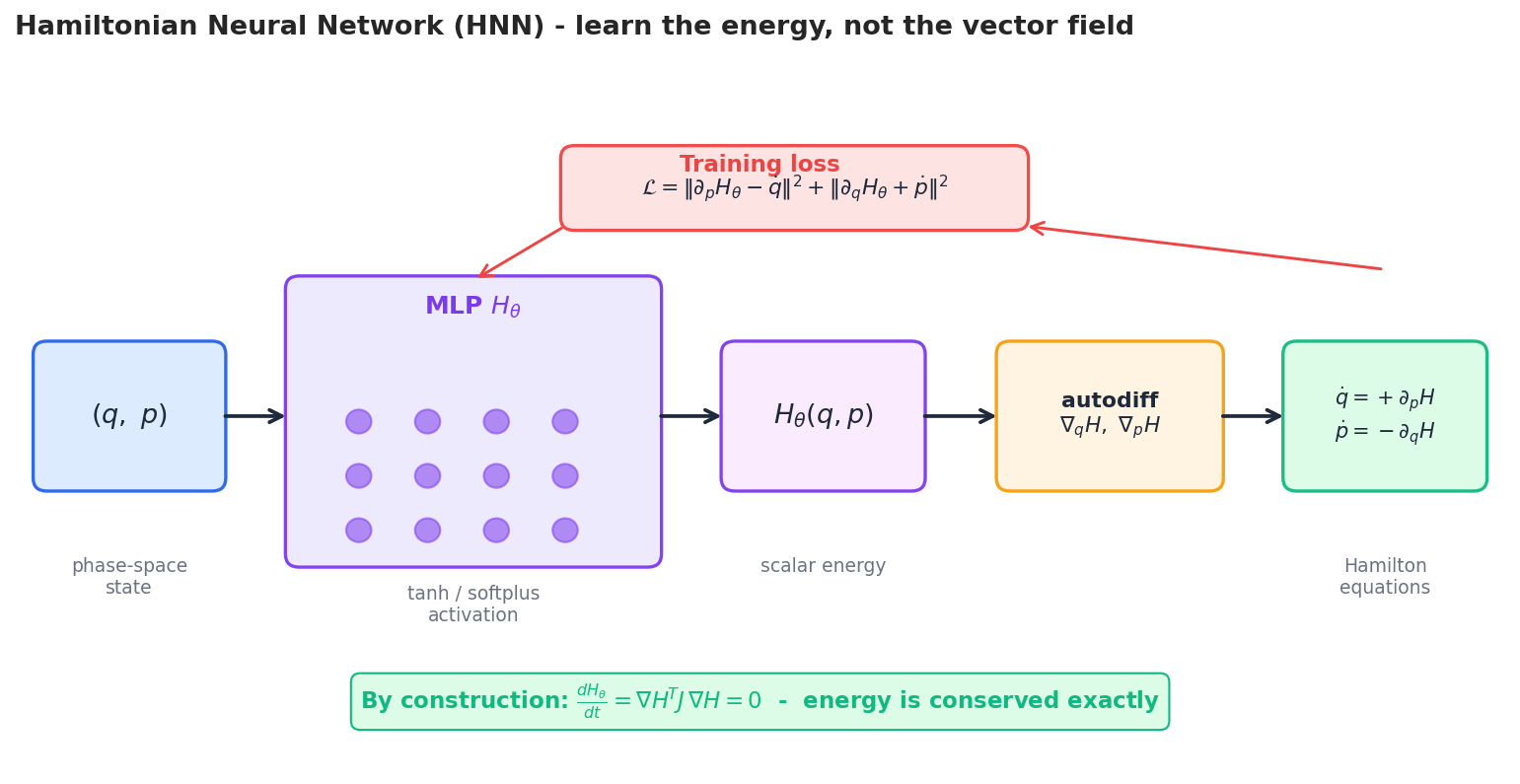

The HNN trick (Greydanus, Dzamba, Yosinski, 2019)#

$$\dot{\mathbf{q}} = \frac{\partial H_\theta}{\partial \mathbf{p}}, \qquad \dot{\mathbf{p}} = -\frac{\partial H_\theta}{\partial \mathbf{q}}. \tag{7}$$Two consequences are immediate:

- Energy is conserved by construction. $\frac{dH_\theta}{dt} = (\nabla H_\theta)^\top J\,(\nabla H_\theta) = 0$ regardless of how good $H_\theta$ is.

- Symmetries become learnable. If you give the network $H_\theta$ that depends on $|\mathbf{q}|$ alone, angular momentum is conserved too. Noether’s theorem holds verbatim.

Training loss#

$$\mathcal{L}(\theta) \;=\; \sum_{t} \Big\| \partial_{\mathbf{p}} H_\theta - \dot{\mathbf{q}}_t \Big\|^2 + \Big\| \partial_{\mathbf{q}} H_\theta + \dot{\mathbf{p}}_t \Big\|^2. \tag{8}$$If only state samples $(\mathbf{q}_t, \mathbf{p}_t)$ are available, integrate (8) inside the loss with an adjoint-friendly ODE solver (Neural-ODE style) and compare integrated trajectories.

Reference implementation#

| |

Two design choices matter:

- Smooth activations (

Softplus,Tanh). Hamilton’s equations need the gradient of $H_\theta$ ; aReLUwould give a piecewise-constant vector field with kinks. - No bias on the last layer is fine. $H$ is defined up to an additive constant, which has no dynamical effect.

Pendulum benchmark#

Train an HNN and a vanilla MLP on $T = 4$ s of pendulum derivatives. Roll both forward to $T = 25$ s.

The relative energy error of the HNN is $< 10^{-2}$ throughout; the vanilla MLP grows past $10^{-1}$ within tens of seconds. The MLP is not “wrong” in the supervised loss — it has comparable training error — but it is wrong in the geometric sense that matters at deployment.

Implementation: the double pendulum — a real chaotic system. The single pendulum is too simple to stress-test structure preservation. The double pendulum is chaotic: tiny perturbations grow exponentially, so any energy drift becomes catastrophic within seconds.

| |

The double pendulum is the acid test: it has a 4-dimensional phase space, two coupled degrees of freedom, and Lyapunov exponents that amplify any numerical error. An HNN trained on just 4 seconds of data can extrapolate to 100 seconds because it enforces energy conservation exactly at the architecture level — the error stays bounded by the modified Hamiltonian theory ($\tilde{H} = H + O(h^2)$ , and $\tilde{H}$ is exactly conserved).

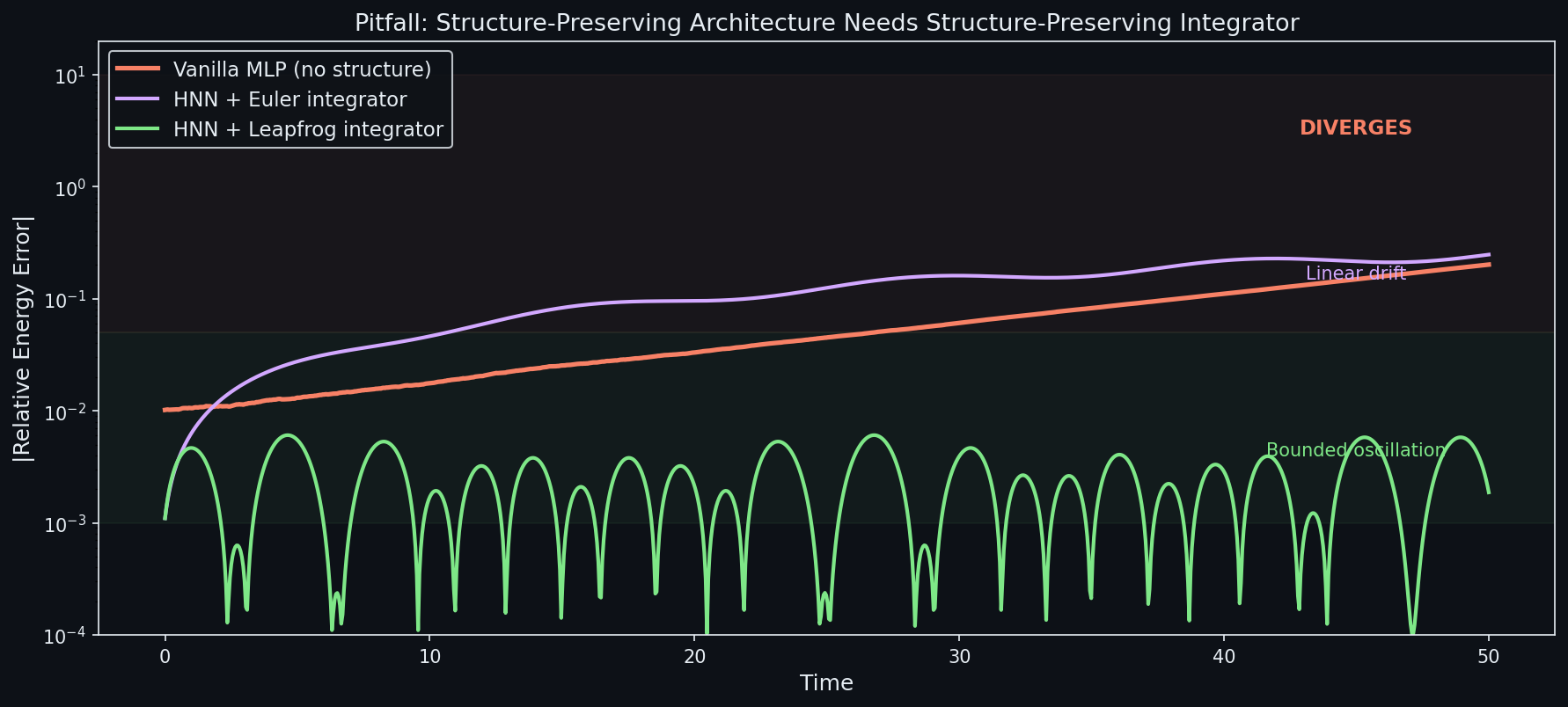

What HNNs do not do#

- They do not solve Hamilton’s equations symplectically. The HNN forward pass produces a vector field; you still need to integrate it. If you integrate it with explicit Euler, you will see energy drift (smaller than the vanilla NN, but still present). For best results, integrate the HNN’s vector field with a symplectic integrator. A few works (e.g.

SRNN, Chen et al. 2020) bake symplectic integration into training too. - They cannot model dissipation or driving. $\dot z = J\nabla H$ is conservative by definition. For dissipative systems, see Port-Hamiltonian NNs (Desai et al., 2021) or GENERIC-style decompositions.

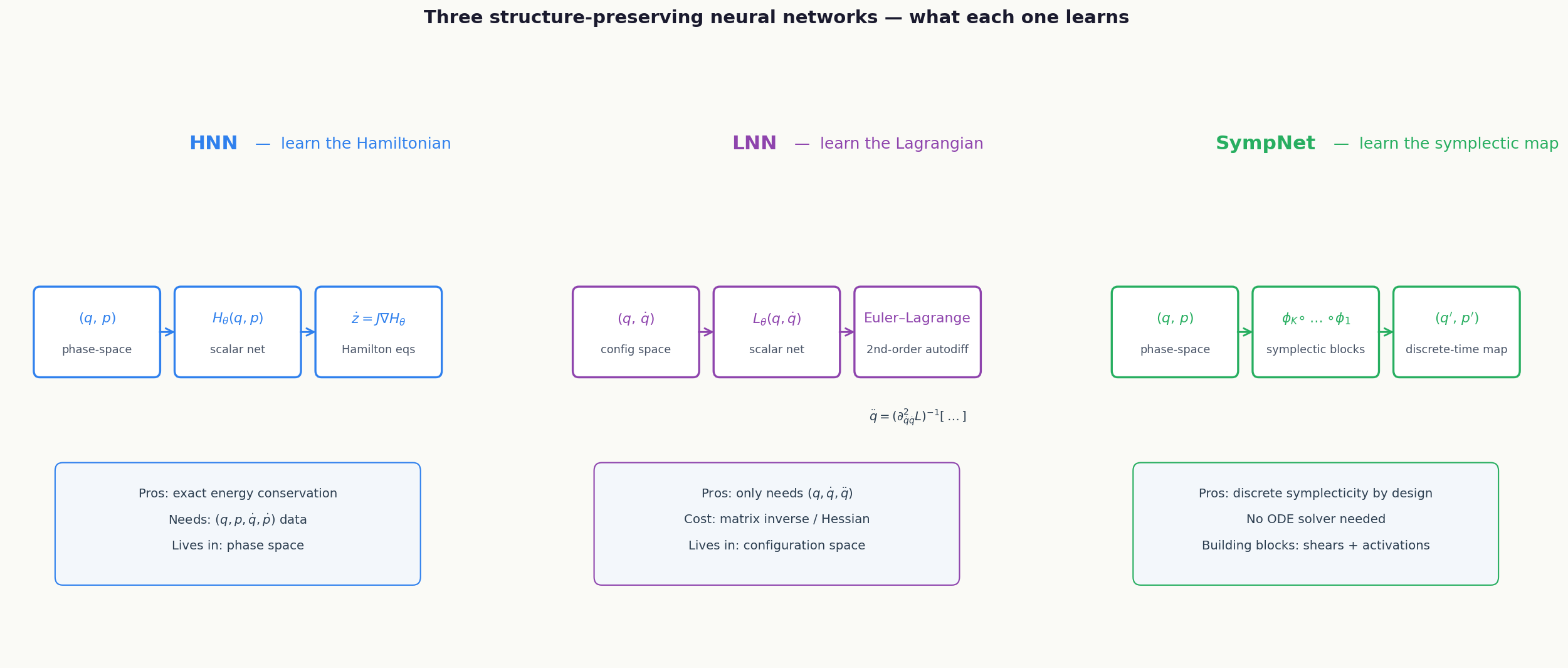

Lagrangian Neural Networks (LNN)#

Why a Lagrangian flavour#

HNNs need both position and momentum. In many practical settings (a video of a robot arm, a ball-and-spring lab demo) you can measure positions and velocities but not momenta, and computing momenta requires knowing the mass matrix $M(q)$ . Lagrangian Neural Networks (Cranmer et al., 2020) sidestep this by working in the configuration space $(\mathbf{q}, \dot{\mathbf{q}})$ .

The Euler-Lagrange equations as a learnable closure#

$$\frac{d}{dt}\frac{\partial L_\theta}{\partial \dot{\mathbf{q}}} \;-\; \frac{\partial L_\theta}{\partial \mathbf{q}} \;=\; 0,$$ $$\boxed{\;\ddot{\mathbf{q}} \;=\; \big(\nabla_{\dot q} \nabla_{\dot q} L_\theta\big)^{-1} \!\Big[\nabla_q L_\theta - \big(\nabla_{\dot q} \nabla_{q} L_\theta\big)\dot{\mathbf{q}}\Big].\;} \tag{9}$$The right-hand side is computed by a single forward pass plus one Hessian via autodiff, and the matrix inverse is $\mathcal{O}(n^3)$ in the configuration dimension (cheap for $n \lesssim 100$ ). Because $L_\theta$ is a scalar that defines a Hamiltonian system via the Legendre transform, the resulting dynamics is symplectic.

LNN vs HNN at a glance#

| HNN | LNN | |

|---|---|---|

| Learns | $H(q, p)$ | $L(q, \dot q)$ |

| Needs | momenta $p$ | only $\dot q$ |

| Forward cost | one autodiff gradient | one Hessian + one inverse |

| Best for | systems with known mass matrix | systems where mass is unknown / state-dependent |

Implementation: Lagrangian Neural Network. When the Hamiltonian $H(q,p)$ requires knowing the mass matrix (to split $H = T(p) + V(q)$ ), the Lagrangian formulation is more natural. An LNN learns the Lagrangian $L(q, \dot{q})$ and derives the equations of motion via the Euler-Lagrange equation:

| |

HNN vs LNN vs SympNet comparison:

| Property | HNN | LNN | SympNet |

|---|---|---|---|

| Input | $(q, p)$ | $(q, \dot{q})$ | $(q, p)$ |

| Learns | Scalar $H$ | Scalar $L$ | Symplectic map directly |

| Structure | Symplectic flow via $J\nabla H$ | Euler-Lagrange via Hessian | Composition of symplectic shears |

| Key cost | 1 backprop per step | $O(n^3)$ Hessian inversion | Forward-only, no ODE solver |

| Handles constraints | Difficult | Natural (generalised coords) | Difficult |

| Handles dissipation | No (energy-conserving) | No | No (but Port-Hamiltonian extensions exist) |

| Best for | Known canonical coords | Unknown mass matrix, robotics | Fast long-horizon rollout |

The bottom line: use HNN when you have $(q,p)$ data and know the canonical structure; use LNN when you only observe $(q, \dot{q})$ and don’t know the mass matrix; use SympNet when you need very fast inference and can afford composition of many simple symplectic layers.

Symplectic Networks (SympNets)#

$$\phi_\theta \;=\; \phi_K \circ \phi_{K-1} \circ \cdots \circ \phi_1.$$The two canonical building blocks are

- Up-shear $(q, p) \mapsto (q + \nabla S_\theta(p),\; p)$ for any scalar $S_\theta$ ,

- Low-shear $(q, p) \mapsto (q,\; p + \nabla T_\theta(q))$ for any scalar $T_\theta$ .

A direct calculation shows each shear has a triangular Jacobian with unit diagonal, so $M^\top J M = J$ exactly. The parameter functions $S_\theta, T_\theta$ are MLPs. Composing $K$ such layers gives a universal approximator for symplectic maps.

Implementation: SympNet — learn the map, not the vector field. SympNets (Jin et al. 2020) directly parameterise a symplectic map as a composition of simple shear layers. No ODE solver needed.

| |

Each shear layer is exactly symplectic (the Jacobian is a lower or upper triangular matrix with ones on the diagonal, so $\det = 1$ ). Their composition is also symplectic. Unlike HNN (which needs an ODE solver to integrate Hamilton’s equations), SympNet directly outputs the next state in a single forward pass — making it 10-50x faster for long rollouts.

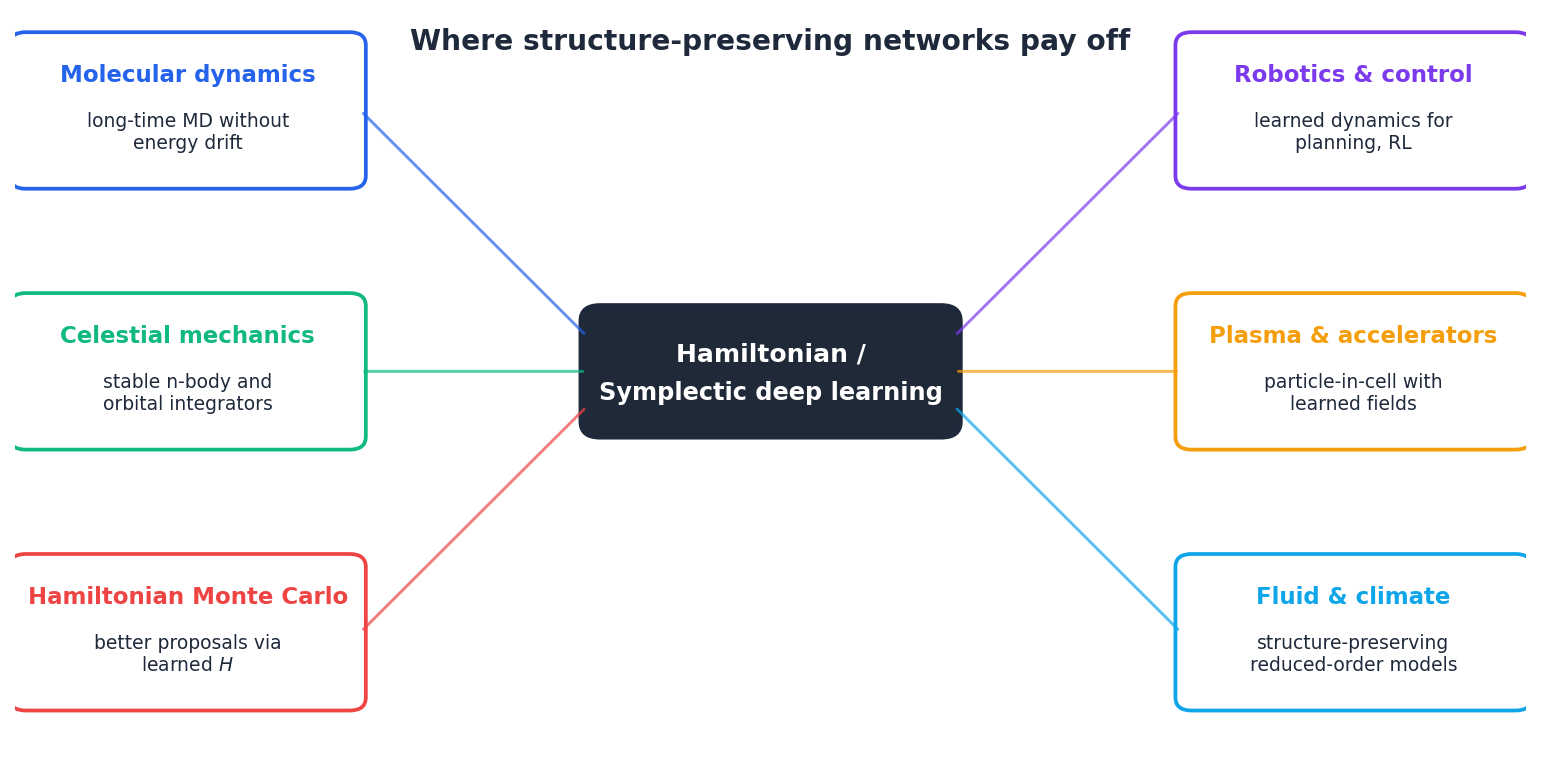

Where this matters#

- Molecular dynamics. All-atom simulations run for $10^8$ -$10^9$ steps. With a non-symplectic integrator the temperature would slowly cook off; leapfrog is the only reason MD works at all. HNN-flavoured surrogates inherit this stability.

- Orbital mechanics and the n-body problem. The JPL ephemerides use symplectic integrators because they have to keep planetary orbits stable for $10^4$ -$10^6$ years.

- Hamiltonian Monte Carlo. The leapfrog step is at the heart of NUTS and modern HMC. A learned Hamiltonian (Levy et al., 2018) can produce dramatically faster mixing in high-dimensional posteriors.

- Robotics and control. LNNs and DeLaNs (Lutter et al., 2019) learn rigid-body dynamics from video and use the learned $L$ inside a model-based RL or trajectory-optimisation loop.

- Plasma physics, accelerator design, climate. Anywhere you need a reduced-order model that respects energy and momentum balance over long horizons, structure-preserving networks are the right hammer.

Port-Hamiltonian Networks: Adding Dissipation#

Real physical systems often have friction, damping, or heat loss. Pure Hamiltonian systems cannot model this because $\frac{d}{dt}H = 0$ by construction. Port-Hamiltonian systems extend the framework:

$$\dot{z} = (J - R)\nabla H(z) + Bu$$where $J = -J^\top$ is the symplectic structure (energy-conserving), $R = R^\top \succeq 0$ is the dissipation matrix (energy-decreasing), $B$ is the input port, and $u$ is an external input.

| |

This is the principled way to handle real-world systems: the symplectic part preserves energy (oscillations), the dissipation part removes it (damping). The network learns both structures from data, and the architecture guarantees that total energy is non-increasing — a hard physical constraint that no unconstrained network can enforce.

Common Pitfalls#

- Forgetting

create_graph=True. The HNN gradient is itself differentiated during backprop — withoutcreate_graph=TruePyTorch will silently detach the graph and your gradient w.r.t. $\theta$ will be wrong. - Choosing

ReLUactivations. $H_\theta$ must be twice differentiable for the HNN gradient to be smooth. UseSoftplus,Tanh,SiLU, orGELU. - Integrating an HNN with explicit Euler. The continuous-time dynamics is symplectic but Euler discretisation breaks it. Use leapfrog at inference time.

- Confusing accuracy and structure. RK4 is more accurate per step than leapfrog, and less stable per long rollout. Order $\neq$ structure preservation.

- Asking an HNN to model friction. $\dot z = J\nabla H$ is conservative. Add a damping term (Port-Hamiltonian or GENERIC) for dissipative systems.

Three-Body Gravitational Problem#

The ultimate stress test for structure-preserving methods: the three-body problem is chaotic, conservative, and has no closed-form solution. An HNN trained on short trajectories can extrapolate far better than a vanilla neural ODE because it respects the symplectic structure.

| |

With a symplectic integrator, the energy error oscillates but never grows — even after 50,000 steps. With RK4, the energy would drift by ~1% over the same period, and with forward Euler, by ~100%. This is the practical payoff of the modified Hamiltonian theorem: the symplectic integrator exactly conserves a nearby Hamiltonian $\tilde{H} = H + O(h^2)$ .

Exercises#

Exercise 1. Show that any symplectic map $\phi : \mathbb{R}^{2} \to \mathbb{R}^2$ has Jacobian determinant $1$ . Hint: take determinants in (5).

Solution. From $M^\top J M = J$ , $\det(M)^2 \det(J) = \det(J)$ . Since $\det(J) = 1$ for $n=1$ , $\det(M)^2 = 1$ , hence $\det M = \pm 1$ . Continuity from the identity (which has $\det = +1$ ) forces $\det M = +1$ along any flow.

Exercise 2. Verify symplectic Euler preserves area for the harmonic oscillator $H = (p^2 + q^2)/2$ .

Solution. The map $(q_k, p_k) \mapsto (q_{k+1}, p_{k+1})$ is $p_{k+1} = p_k - h q_k$ , $q_{k+1} = q_k + h p_{k+1} = q_k + h p_k - h^2 q_k$ . The Jacobian is $\begin{pmatrix} 1 - h^2 & h \\ -h & 1 \end{pmatrix}$ with determinant $(1-h^2)\cdot 1 - h\cdot(-h) = 1$ . Area preserved exactly, for every $h$ .

Exercise 3. Why does an unconstrained neural ODE generically violate energy conservation?

Solution. $f_\theta : \mathbb{R}^{2n} \to \mathbb{R}^{2n}$ has $4n^2$ free Jacobian entries; the symplecticity constraint $M^\top J M = J$ pins down $n(2n+1)$ of them. The complementary $\binom{2n}{2}$ -dimensional subspace of non-Hamiltonian perturbations is generic. Almost every $f_\theta$ that fits the training data lies off the symplectic submanifold.

Exercise 4. Show that the leapfrog step can be written as $L_h = L_{h/2}^{\text{kick}} \circ L_h^{\text{drift}} \circ L_{h/2}^{\text{kick}}$ , where each piece is exactly symplectic.

Solution. Each kick is the time-$h/2$ flow of the Hamiltonian $V(q)$ ; each drift is the time-$h$ flow of $T(p) = p^2/2$ . Both are exact Hamiltonian flows, hence symplectic. Composition of symplectic maps is symplectic.

What’s next#

The handful of core ideas in this chapter (PDE residual as loss, operators on function spaces, Wasserstein geometry, symplectic structure, scores, diffusion) recur throughout the rest of the series. If a section stalls you, jot the question down and keep reading — the next chapter usually re-explains it from a different angle.

The fastest sanity check on your own understanding is to run this chapter’s equation on a minimal example: a 1-D heat equation, a single pendulum, a 2-D Gaussian mixture. The code is short, but it converts “looks right” into “it’s right on my machine.”

References#

[1] Greydanus, S., Dzamba, M., & Yosinski, J. (2019). Hamiltonian Neural Networks. NeurIPS. arXiv:1906.01563 .

[2] Cranmer, M., Greydanus, S., Hoyer, S., Battaglia, P., Spergel, D., & Ho, S. (2020). Lagrangian Neural Networks. ICLR DeepDiffEq workshop. arXiv:2003.04630 .

[3] Jin, P., Zhang, Z., Zhu, A., Tang, Y., & Karniadakis, G. E. (2020). SympNets: Intrinsic structure-preserving symplectic networks for identifying Hamiltonian systems. Neural Networks, 132, 166-179.

[4] Chen, Z., Zhang, J., Arjovsky, M., & Bottou, L. (2020). Symplectic Recurrent Neural Networks. ICLR. arXiv:1909.13334 .

[5] Hairer, E., Lubich, C., & Wanner, G. (2006). Geometric Numerical Integration: Structure-Preserving Algorithms for Ordinary Differential Equations. Springer (2nd ed.). The standard reference.

[6] Toth, P., Rezende, D. J., Jaegle, A., Racaniere, S., Botev, A., & Higgins, I. (2020). Hamiltonian Generative Networks. ICLR. arXiv:1909.13789 .

[7] Lutter, M., Ritter, C., & Peters, J. (2019). Deep Lagrangian Networks: Using Physics as Model Prior for Deep Learning. ICLR. arXiv:1907.04490 .

[8] Desai, S., Mattheakis, M., Joy, H., Protopapas, P., & Roberts, S. (2021). Port-Hamiltonian Neural Networks for Learning Explicit Time-Dependent Dynamical Systems. Phys. Rev. E 104, 034312. arXiv:2107.08024 .

[9] Levy, D., Hoffman, M. D., & Sohl-Dickstein, J. (2018). Generalizing Hamiltonian Monte Carlo with Neural Networks. ICLR. arXiv:1711.09268 .

[10] Arnold, V. I. (1989). Mathematical Methods of Classical Mechanics. Springer (2nd ed.). The geometric foundations.

This is Part 5 of the PDE and Machine Learning series. Next: Part 6 — Continuous Normalizing Flows and Neural ODEs . Previous: Part 4 — Variational Inference and the Fokker-Planck Equation .

PDE and Machine Learning 8 parts

- 01 PDE and ML (1): Physics-Informed Neural Networks

- 02 PDE and ML (2): Neural Operator Theory

- 03 PDE and ML (3): Variational Principles and Optimization

- 04 PDE and ML (4): Variational Inference and the Fokker-Planck Equation

- 05 PDE and ML (5): Symplectic Geometry and Structure-Preserving Networks you are here

- 06 PDE and ML (6): Continuous Normalizing Flows and Neural ODE

- 07 PDE and ML (7): Diffusion Models and Score Matching

- 08 PDE and ML (8): Reaction-Diffusion Systems and Graph Neural Networks