Recommendation Systems (1): Fundamentals and Core Concepts

A beginner-friendly guide to recommendation systems: the three core paradigms (collaborative filtering, content-based, hybrid), evaluation metrics, the multi-stage funnel architecture used in production, and the open challenges of cold-start, sparsity, and the long tail. With working Python implementations.

Open Netflix and the homepage somehow knows you. Scroll TikTok and the next video is the one you didn’t realise you wanted. Drop into Spotify on a Monday morning and Discover Weekly serves up thirty songs you’ve never heard of, and you save half of them.

None of this is magic. It is one of the most commercially successful applications of machine learning, quietly running behind almost every consumer product you use: the recommendation system.

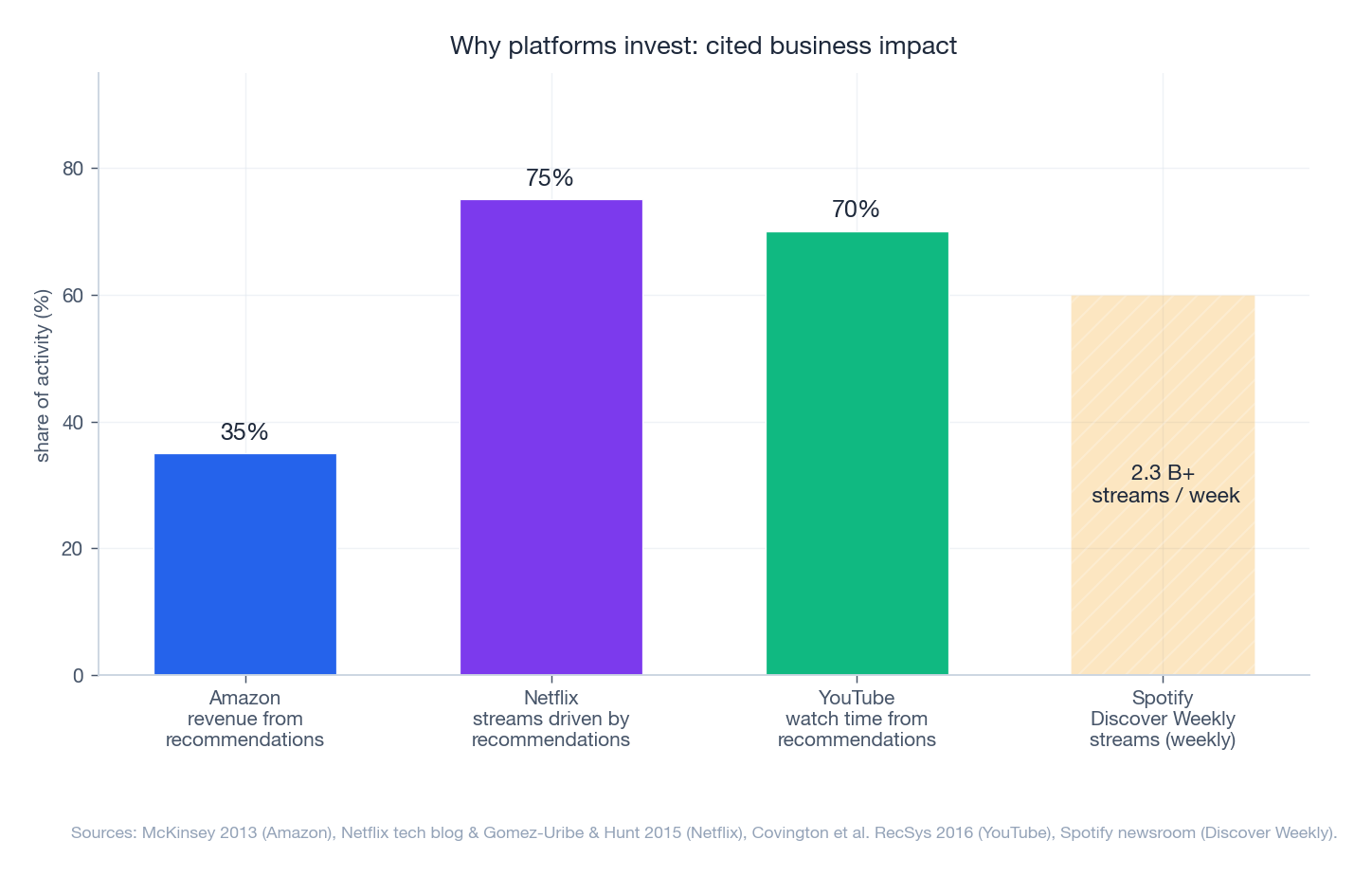

The numbers explain why every major platform invests so heavily here. McKinsey reports that 35% of Amazon’s purchases come from its recommendation engine. YouTube engineers, in their RecSys 2016 paper, attribute about 70% of watch time to recommendations. Netflix’s product leads have publicly stated that around 75% of what people stream is driven by their recommender.

This article is the first in a 16-part series. Its job is to give you a complete and honest mental model of how these systems work — enough to understand any modern recommender paper or production architecture you encounter later.

What you will learn

- The three foundational paradigms — collaborative filtering, content-based, hybrid — and when each wins

- Matrix factorization, the workhorse that won the Netflix Prize, with full math and code

- The evaluation metrics that actually matter: Precision, Recall, MAP, NDCG, plus diversity and coverage

- The multi-stage funnel architecture every production system uses to go from millions of items to a top-10 list in 100 ms

- The five open challenges every recommender engineer wrestles with: cold-start, sparsity, long tail, temporal drift, scale

- Working Python implementations of User-CF, Item-CF and matrix factorization that you can run today

Prerequisites: comfort with Python, basic linear algebra (dot products, matrices), and willingness to read a few formulas. No prior machine-learning experience required.

Why Recommendation Systems Matter#

Before any algorithm, it helps to be clear about what problem we are solving.

The shape of the problem#

Every modern catalog is too large for a human to browse:

- Netflix carries ~17,000 titles worldwide

- YouTube ingests 500 hours of video per minute

- Spotify hosts over 100 million tracks

- Amazon lists hundreds of millions of products

Without filtering, a user spends more time searching than consuming, and the paradox of choice kicks in — more options, worse decisions, less satisfaction. A recommender’s job is to act as a personalised lens that surfaces the tiny fraction of content that matters to you.

The business case#

The dollars are large enough to drive entire org charts.

A few representative numbers, with sources:

| Platform | Impact | Source |

|---|---|---|

| Amazon | ~35% of purchases driven by recommendations | MGI / McKinsey 2013 |

| Netflix | ~75% of streams driven by the recommender | Gomez-Uribe & Hunt, ACM TMIS 2015 |

| YouTube | ~70% of watch time from recommendations | Covington et al., RecSys 2016 |

| Spotify | Discover Weekly surpassed 2.3 B streams within a year | Spotify Newsroom |

These are not marginal lifts. For a platform at scale, a 1% relative improvement in click-through rate is often worth eight or nine figures a year, which is why a recommender team can easily justify hundreds of engineers.

Where you encounter them daily#

Different surfaces demand different recipes:

- E-commerce — “Customers who bought this also bought…”, personalised home rows, complete-the-look bundles.

- Streaming video — taste-clustered home rows, “Because you watched…”, auto-play the next episode.

- Music — Discover Weekly, Daily Mix, radio stations seeded from a single track.

- Social feeds — engagement-optimised ranking, “People you may know”, For You pages.

All of these are powered, at the bottom, by a small set of ideas. Let us look at them.

The Three Paradigms#

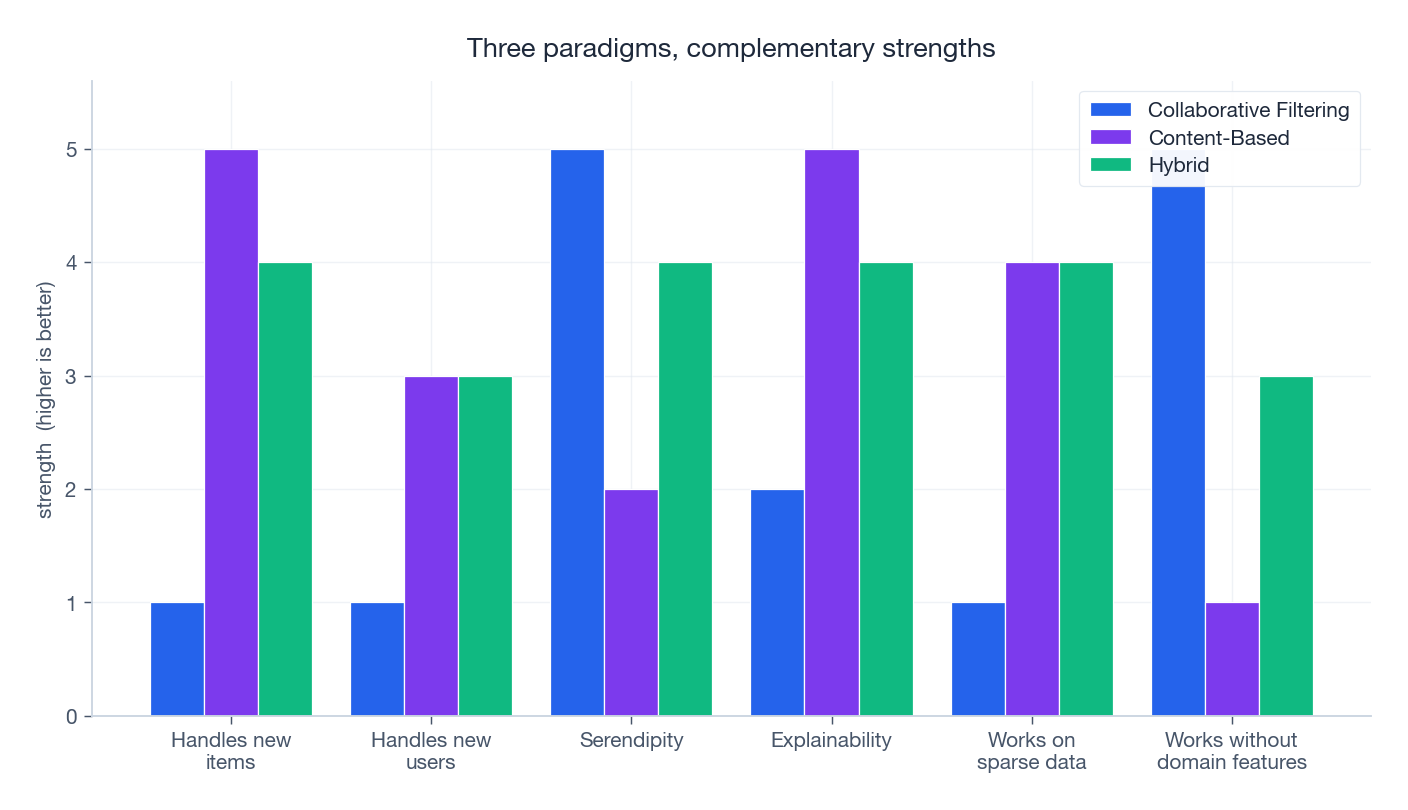

Every recommender, from a 50-line script to YouTube’s stack, builds on one of three philosophies:

- Collaborative filtering — learn from who liked what.

- Content-based filtering — learn from what items are made of.

- Hybrid — combine both, because each has blind spots.

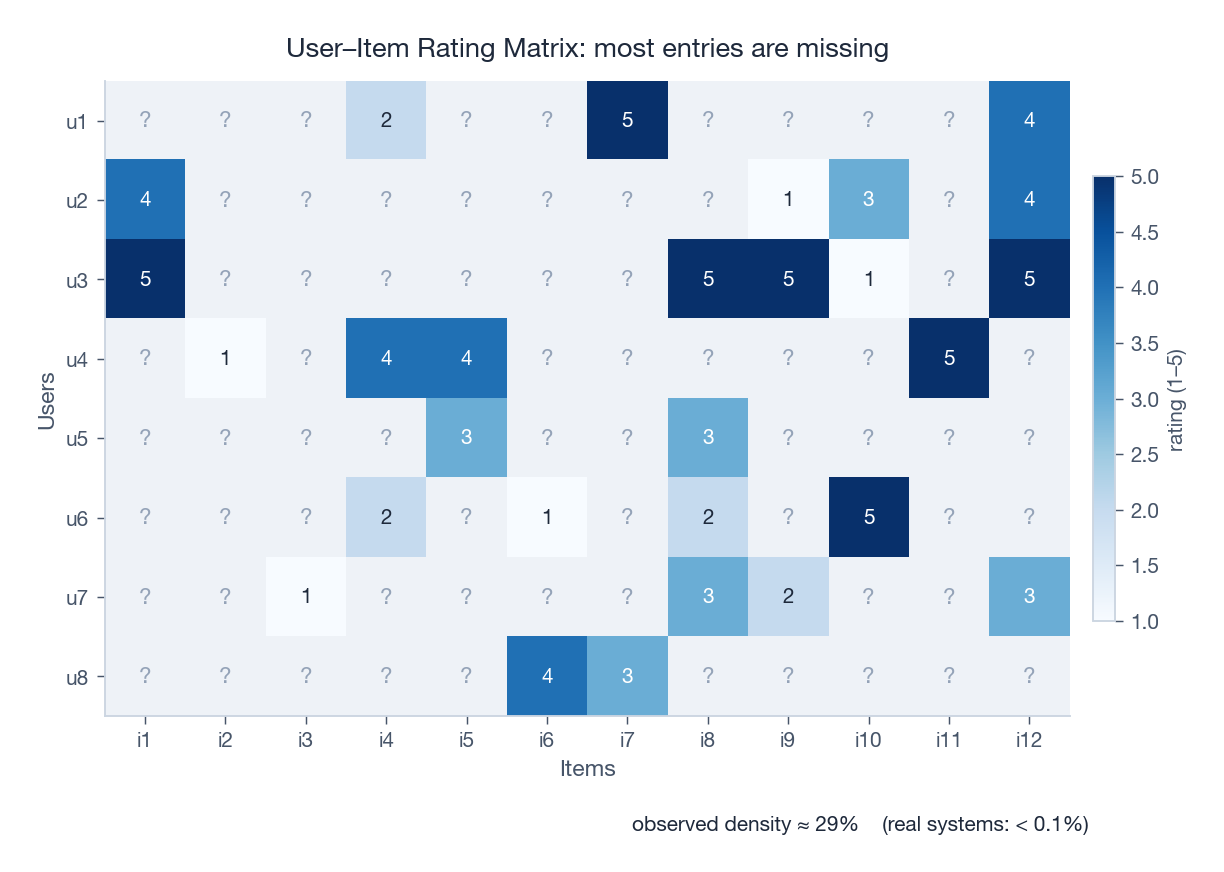

The data we start from looks like this:

Most entries are unknown. The job of any recommender is, in essence, to fill in the blanks.
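To make that concrete, here is a toy rating matrix. The users, titles, and ratings are invented for illustration; `np.nan` stands for "not rated yet".

```python
import numpy as np

# Hypothetical 4-user x 5-item rating matrix; np.nan marks an unknown rating.
users = ["alice", "bob", "carol", "dave"]
items = ["Inception", "Titanic", "Alien", "Up", "Heat"]
R = np.array([
    [5.0, np.nan, 4.0, np.nan, np.nan],
    [np.nan, 3.0, np.nan, 4.0, np.nan],
    [4.0, np.nan, 5.0, np.nan, 2.0],
    [np.nan, np.nan, np.nan, 5.0, np.nan],
])

observed = ~np.isnan(R)              # boolean mask of known ratings
density = observed.sum() / R.size    # fraction of cells that are filled in
print(f"{observed.sum()} of {R.size} entries observed (density {density:.0%})")
```

Even this small example is mostly blanks, and real matrices are far emptier.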

Collaborative Filtering — “users like you liked this”#

Collaborative filtering (CF) rests on a beautifully simple intuition: if two users agreed in the past, they are likely to agree in the future. The algorithm needs to know nothing about what an item is. It learns purely from the pattern of who interacted with what.

The taste-twin analogy. You and a friend have rated 50 movies almost identically — she is your taste twin. She watches a new film and loves it. You haven’t seen it. The reasonable bet is that you will love it too, even if neither of you can articulate why.

Two flavours. CF comes in two practical variants:

- User-based CF — find users similar to you, recommend what they liked.

- Item-based CF — find items similar to ones you already liked.

Item-based CF tends to win in production, for two reasons. Items move slowly (a movie’s neighbours barely change), so similarities can be precomputed and cached. And on most platforms there are far fewer items than users, making item–item more scalable.

The math. Let:

- $U = \{u_1, \dots, u_m\}$ be the set of $m$ users

- $I = \{i_1, \dots, i_n\}$ be the set of $n$ items

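- $R \in \mathbb{R}^{m \times n}$ be the user-item rating matrix, where $r_{ui}$ is user $u$'s rating for item $i$

User-based CF predicts an unknown rating from the $k$ most similar users $N_k(u)$ who have rated item $i$:

$$\hat{r}_{ui} = \bar r_u + \frac{\sum_{v \in N_k(u)} \text{sim}(u, v)\,(r_{vi} - \bar r_v)}{\sum_{v \in N_k(u)} |\text{sim}(u, v)|}$$

In words: start from user $u$'s personal baseline $\bar r_u$ (their mean rating), then nudge it by what similar users thought, weighted by how similar they are.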

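Measuring similarity. Two measures dominate, both computed over $I_{uv}$, the set of items that users $u$ and $v$ have both rated:

$$\text{sim}_{\cos}(u, v) = \frac{\sum_{i \in I_{uv}} r_{ui} r_{vi}}{\sqrt{\sum_{i \in I_{uv}} r_{ui}^2}\sqrt{\sum_{i \in I_{uv}} r_{vi}^2}}$$

$$\text{sim}_{\text{pearson}}(u, v) = \frac{\sum_{i \in I_{uv}} (r_{ui} - \bar r_u)(r_{vi} - \bar r_v)}{\sqrt{\sum_{i \in I_{uv}} (r_{ui} - \bar r_u)^2}\sqrt{\sum_{i \in I_{uv}} (r_{vi} - \bar r_v)^2}}$$

The crucial difference: suppose Alice rates everything 4–5 and Bob rates everything 2–3. Raw cosine does not correct for that offset. Because all ratings are positive, it scores almost every pair of users close to 1, so it says little about whether their tastes actually agree. Pearson centres each user on their own mean first, so it compares relative preferences: if Alice and Bob rank the films in the same order, Pearson rates them as twins despite the different scales.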
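A small numerical check of the two similarity measures. The three rating vectors are made up, and all three users are assumed to have rated the same four items.

```python
import numpy as np

def cosine_sim(a, b):
    """Cosine similarity on raw rating vectors (no mean-centring)."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def pearson_sim(a, b):
    """Pearson correlation: cosine similarity after centring each vector."""
    return cosine_sim(a - a.mean(), b - b.mean())

# Ratings on the same four co-rated items (illustrative values).
alice = np.array([5.0, 4.0, 5.0, 4.0])   # generous rater
bob   = np.array([3.0, 2.0, 3.0, 2.0])   # harsh rater, same ordering as Alice
carol = np.array([4.0, 5.0, 4.0, 5.0])   # generous rater, opposite ordering

for name, other in [("bob", bob), ("carol", carol)]:
    print(name,
          "cosine:", round(cosine_sim(alice, other), 3),
          "pearson:", round(pearson_sim(alice, other), 3))
```

On these vectors raw cosine stays above 0.97 for both pairs, while Pearson returns +1 for Bob (same ordering) and -1 for Carol (reversed ordering).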

Strengths and weaknesses.

| Strengths | Weaknesses |

|---|---|

| Domain-agnostic — no item features needed | Cold start: useless for brand-new users or items |

| Captures serendipity | Suffers when data is sparse |

| Improves automatically with more data | Popularity bias — popular items get recommended more |

Content-Based Filtering — “you’ll like this because it’s similar”#

Where CF asks “who else liked this?”, content-based filtering asks “what is this thing, and have you liked similar things before?”

The algorithm builds a profile of your taste from the attributes of items you’ve engaged with, then ranks unseen items by how well they match that profile. It does not care what other users think.

Representing items. The choice of feature representation depends entirely on the medium:

- Text (news, articles): TF-IDF vectors, sentence embeddings, topic models

- Images (products, art): CNN embeddings, colour histograms, visual attributes

- Audio (music): MFCCs, learned audio embeddings, metadata (tempo, key, genre)

- Structured (movies, products): one-hot genres, prices, knowledge-graph features

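Learning the profile. A simple way to learn the profile is ridge regression: given a feature vector $\mathbf{x}_i$ for each item and the set $I_u$ of items user $u$ has rated, fit a weight vector $\mathbf{w}_u$ by minimising

$$\min_{\mathbf{w}_u} \sum_{i \in I_u} (r_{ui} - \mathbf{w}_u^\top \mathbf{x}_i)^2 + \lambda \|\mathbf{w}_u\|^2$$

The $\lambda \|\mathbf{w}_u\|^2$ term is L2 regularisation: a tax on large weights that prevents the model from over-fitting on a handful of ratings.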
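A minimal sketch of fitting one user's weight vector with L2-regularised least squares and using it to score unseen items. The three genre features, the ratings, and the helper name `fit_user_profile` are assumptions made for this example.

```python
import numpy as np

def fit_user_profile(X, r, lam=1.0):
    """Closed-form ridge regression: w = (X^T X + lam * I)^-1 X^T r."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ r)

# Hypothetical item features: [action, romance, sci-fi] genre indicators.
X_rated = np.array([
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
])
r = np.array([5.0, 2.0, 4.0, 3.0])   # this user's ratings of those four items

w_u = fit_user_profile(X_rated, r, lam=0.1)

# Score unseen items by how well their features match the learned profile.
X_new = np.array([[1.0, 0.0, 1.0],    # action + sci-fi
                  [0.0, 1.0, 0.0]])   # pure romance
scores = X_new @ w_u
print("profile weights:", np.round(w_u, 2))
print("scores for unseen items:", np.round(scores, 2))
```

Raising `lam` shrinks the weights toward zero, which matters most when a user has rated only a handful of items.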

Strengths and weaknesses.

| Strengths | Weaknesses |

|---|---|

| Handles new items as soon as features exist | Requires good features, which means feature engineering |

| Recommendations are easy to explain | Tends to over-specialise — keeps recommending the same kind of thing |

| Works with a single user’s history | New users still cold-start (no profile yet) |

Hybrid Methods — combining what each does best#

Pure CF and pure content-based each have predictable failure modes. Hybrids combine them so that when one approach falters, the other takes over.

There are five common ways to combine them:

1. Weighted. Blend the two predicted scores linearly.

$$\hat{r}_{ui} = \alpha \cdot \hat{r}_{ui}^{\text{CF}} + (1 - \alpha) \cdot \hat{r}_{ui}^{\text{CB}}$$

The weight $\alpha$ can be fixed, learned on a validation set, or made adaptive (more CF for power users, more content for newcomers).

2. Switching. Pick a method based on context.


3. Feature combination. Treat content features as additional signals inside a single CF model.

4. Cascade. Use a cheap method to generate candidates, then a precise method to re-rank. This is the dominant pattern in industry — and it generalises into the funnel architecture we’ll see in §5.

5. Meta-level. Use one model’s outputs as another’s inputs. Modern deep recommenders that learn item embeddings from content and then apply CF on those embeddings fall into this bucket.
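The weighted blend and the switching rule from the list above can be sketched in a few lines. The thresholds (`min_history`, `saturation`) and the example scores are made-up illustrations, not recommended settings.

```python
def weighted_hybrid(cf_score, cb_score, alpha=0.7):
    """Linear blend: alpha * CF + (1 - alpha) * content-based."""
    return alpha * cf_score + (1.0 - alpha) * cb_score

def switching_hybrid(cf_score, cb_score, n_user_interactions, min_history=5):
    """Use content-based scores until the user has enough history for CF."""
    if n_user_interactions < min_history:
        return cb_score
    return cf_score

def adaptive_alpha(n_user_interactions, saturation=50):
    """Hypothetical schedule: trust CF more as the user's history grows."""
    return min(n_user_interactions / saturation, 1.0)

# A newcomer (2 interactions) versus a power user (200 interactions).
for n in (2, 200):
    a = adaptive_alpha(n)
    print(n,
          "switching:", switching_hybrid(4.5, 3.0, n),
          "weighted:", round(weighted_hybrid(4.5, 3.0, a), 2))
```

For the newcomer both strategies stay close to the content-based score; for the power user both end up at the CF score.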

A real example. Netflix’s production recommender is a sophisticated hybrid: matrix factorization on viewing history, deep nets that fuse text/image/audio features, context signals (time of day, device), business constraints (recency, diversity), and a final ensemble across dozens of models. Almost no major platform runs a single algorithm any more.

Matrix Factorization: The Workhorse#

Neighbourhood methods like User-CF and Item-CF are intuitive, but matrix factorization (MF) is the technique that won the Netflix Prize and underpins much of what came after, including modern two-tower deep models.

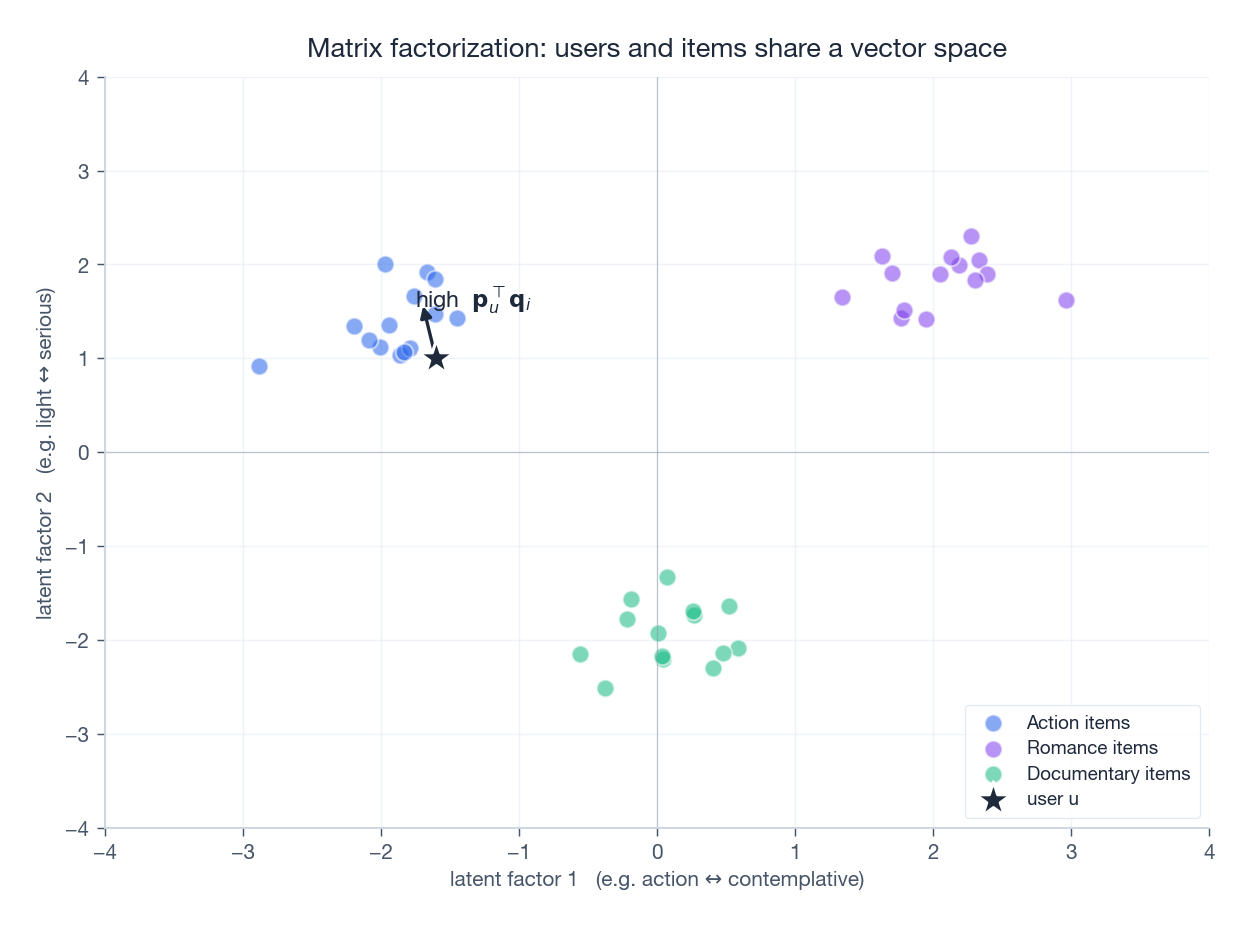

The geometric idea#

MF assumes user preferences and item characteristics can be summarised by a small number of latent factors — hidden axes that emerge from the data rather than being labelled by hand.

The picture says it best: every user and every item lives at a point in a $k$-dimensional space (typically $k = 20$–$200$ in practice). For movies, the axes might end up looking like blockbuster ↔ art-house or light ↔ serious, but you never tell the model that; it discovers them.

Each user $u$ gets a vector $\mathbf{p}_u \in \mathbb{R}^k$ and each item $i$ a vector $\mathbf{q}_i \in \mathbb{R}^k$. The predicted rating is their dot product:

$$\hat{r}_{ui} = \mathbf{p}_u^\top \mathbf{q}_i$$

If user and item vectors point in similar directions, the dot product is large, and the model predicts a high rating.

The optimisation problem#

Let $\Omega$ be the set of observed (user, item) pairs. We learn the factor matrices $P$ and $Q$ by minimising regularised squared error over those observations:

$$\min_{P, Q} \sum_{(u, i) \in \Omega} \left[ (r_{ui} - \mathbf{p}_u^\top \mathbf{q}_i)^2 + \lambda (\|\mathbf{p}_u\|^2 + \|\mathbf{q}_i\|^2) \right]$$

In practice the prediction also carries bias terms:

$$\hat{r}_{ui} = \mu + b_u + b_i + \mathbf{p}_u^\top \mathbf{q}_i$$

where $\mu$ is the global mean, $b_u$ is “this user rates everything high”, and $b_i$ is “this item is universally loved (or hated)”. With those biases pulled out, the latent factors only have to model the interaction, which is what we actually want them to learn.

Two ways to optimise#

Stochastic gradient descent (SGD). Loop over the observed ratings. For each one, compute the prediction error $e_{ui} = r_{ui} - \hat{r}_{ui}$ and take a small step with learning rate $\eta$:

$$\mathbf{p}_u \leftarrow \mathbf{p}_u + \eta (e_{ui} \mathbf{q}_i - \lambda \mathbf{p}_u), \quad \mathbf{q}_i \leftarrow \mathbf{q}_i + \eta (e_{ui} \mathbf{p}_u - \lambda \mathbf{q}_i)$$

Alternating Least Squares (ALS). Fix $Q$, solve a closed-form least-squares problem for each $\mathbf{p}_u$, then swap. ALS parallelises trivially across users (and items), which is why Spark MLlib’s recommender ships with it.

Implementation#

Here is a minimal but complete MF with biases, trained by SGD:
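The sketch below is one way to write it. The class name `BiasedMF`, the hyperparameter defaults, and the twelve toy ratings are illustrative assumptions, not values from a real system.

```python
import numpy as np

class BiasedMF:
    """Biased matrix factorization trained with SGD (illustrative sketch)."""

    def __init__(self, n_users, n_items, n_factors=10, lr=0.01, reg=0.05,
                 n_epochs=200, seed=0):
        self.rng = np.random.default_rng(seed)
        self.P = self.rng.normal(0, 0.1, (n_users, n_factors))  # user factors p_u
        self.Q = self.rng.normal(0, 0.1, (n_items, n_factors))  # item factors q_i
        self.bu = np.zeros(n_users)                              # user biases b_u
        self.bi = np.zeros(n_items)                              # item biases b_i
        self.mu = 0.0                                            # global mean
        self.lr, self.reg, self.n_epochs = lr, reg, n_epochs

    def predict(self, u, i):
        return self.mu + self.bu[u] + self.bi[i] + self.P[u] @ self.Q[i]

    def fit(self, ratings):
        """ratings: list of (user_index, item_index, rating) triples."""
        ratings = list(ratings)
        self.mu = float(np.mean([r for _, _, r in ratings]))
        for _ in range(self.n_epochs):
            for idx in self.rng.permutation(len(ratings)):   # shuffle each epoch
                u, i, r = ratings[idx]
                e = r - self.predict(u, i)                   # error e_ui
                self.bu[u] += self.lr * (e - self.reg * self.bu[u])
                self.bi[i] += self.lr * (e - self.reg * self.bi[i])
                pu = self.P[u].copy()                        # old p_u for q_i's update
                self.P[u] += self.lr * (e * self.Q[i] - self.reg * pu)
                self.Q[i] += self.lr * (e * pu - self.reg * self.Q[i])
        return self

    def rmse(self, ratings):
        errs = [(r - self.predict(u, i)) ** 2 for u, i, r in ratings]
        return float(np.sqrt(np.mean(errs)))

# Tiny made-up dataset: 4 users x 5 items, 12 observed ratings.
train = [(0, 0, 5), (0, 1, 3), (0, 3, 1), (1, 0, 4), (1, 3, 1), (1, 4, 2),
         (2, 0, 1), (2, 1, 1), (2, 3, 5), (3, 1, 1), (3, 3, 4), (3, 4, 5)]
model = BiasedMF(n_users=4, n_items=5, n_factors=2).fit(train)
print("train RMSE:", round(model.rmse(train), 3))
print("predicted rating, user 0 on item 4:", round(float(model.predict(0, 4)), 2))
```

The RMSE printed here is measured on the training ratings only. To see over-fitting you would hold out some ratings and evaluate on those instead.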


Try it. Sweep `n_factors` from 2 to 50 and watch RMSE: too few and the model under-fits, too many and it over-fits this tiny dataset. The right value on real data is almost always in the 20–200 range.

Implicit feedback#

In production you rarely have explicit 1-to-5 ratings. You have implicit feedback: clicks, watch time, dwell time, purchases. The trouble is that no interaction doesn’t mean dislike — the user may simply never have seen the item.

The standard answer is weighted matrix factorization (Hu, Koren & Volinsky, 2008), which replaces each rating with a binary preference and a confidence weight:

$$\min_{P, Q} \sum_{u, i} c_{ui} (p_{ui} - \mathbf{p}_u^\top \mathbf{q}_i)^2 + \lambda(\|\mathbf{p}_u\|^2 + \|\mathbf{q}_i\|^2)$$

with $p_{ui} \in \{0, 1\}$ (interacted or not; a scalar, not to be confused with the user vector $\mathbf{p}_u$) and $c_{ui} = 1 + \alpha f_{ui}$ a confidence that grows with interaction frequency $f_{ui}$. We optimise over all user-item pairs, but observed interactions get high confidence (“definitely a positive”) and unobserved ones get low confidence (“probably negative, but we are not sure”).
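A short sketch of how raw interaction counts turn into preferences and confidences, and how the weighted loss is evaluated. The play counts, the default `alpha`, and the function names are assumptions for illustration; the L2 penalty is applied once per user and item vector here, which is one of several possible conventions.

```python
import numpy as np

def implicit_targets(F, alpha=40.0):
    """Turn interaction counts f_ui into preferences p_ui and confidences c_ui."""
    P = (F > 0).astype(float)     # p_ui = 1 if the user interacted at all
    C = 1.0 + alpha * F           # c_ui = 1 + alpha * f_ui
    return P, C

def weighted_loss(F, U, V, alpha=40.0, lam=0.1):
    """Confidence-weighted squared error over ALL user-item pairs, plus L2."""
    P, C = implicit_targets(F, alpha)
    err = P - U @ V.T             # U holds user vectors, V holds item vectors
    return float((C * err ** 2).sum() + lam * ((U ** 2).sum() + (V ** 2).sum()))

# Hypothetical play counts: 3 users x 4 items.
F = np.array([[3, 0, 0, 1],
              [0, 0, 5, 0],
              [1, 2, 0, 0]], dtype=float)
P, C = implicit_targets(F)
print(P)
print(C)
```

Unobserved cells keep a confidence of 1, so they still pull predictions toward zero, but far more gently than a cell with five plays.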

Evaluation: Measuring What Matters#

Building a recommender is half the battle. Knowing whether it is actually good is the other half — and it is genuinely harder than people assume.

Why a single accuracy number lies#

If your model has 95% accuracy at predicting ratings, is it good? Maybe — but consider:

- Users only rate things they like. Predicting “high” for every item can score well and recommend nothing useful.

- If your test set is dominated by popular items, you’ve measured the easy cases.

- If recommendations lack diversity, users may be satisfied today and churn next month.

Honest evaluation needs multiple metrics measuring different things.

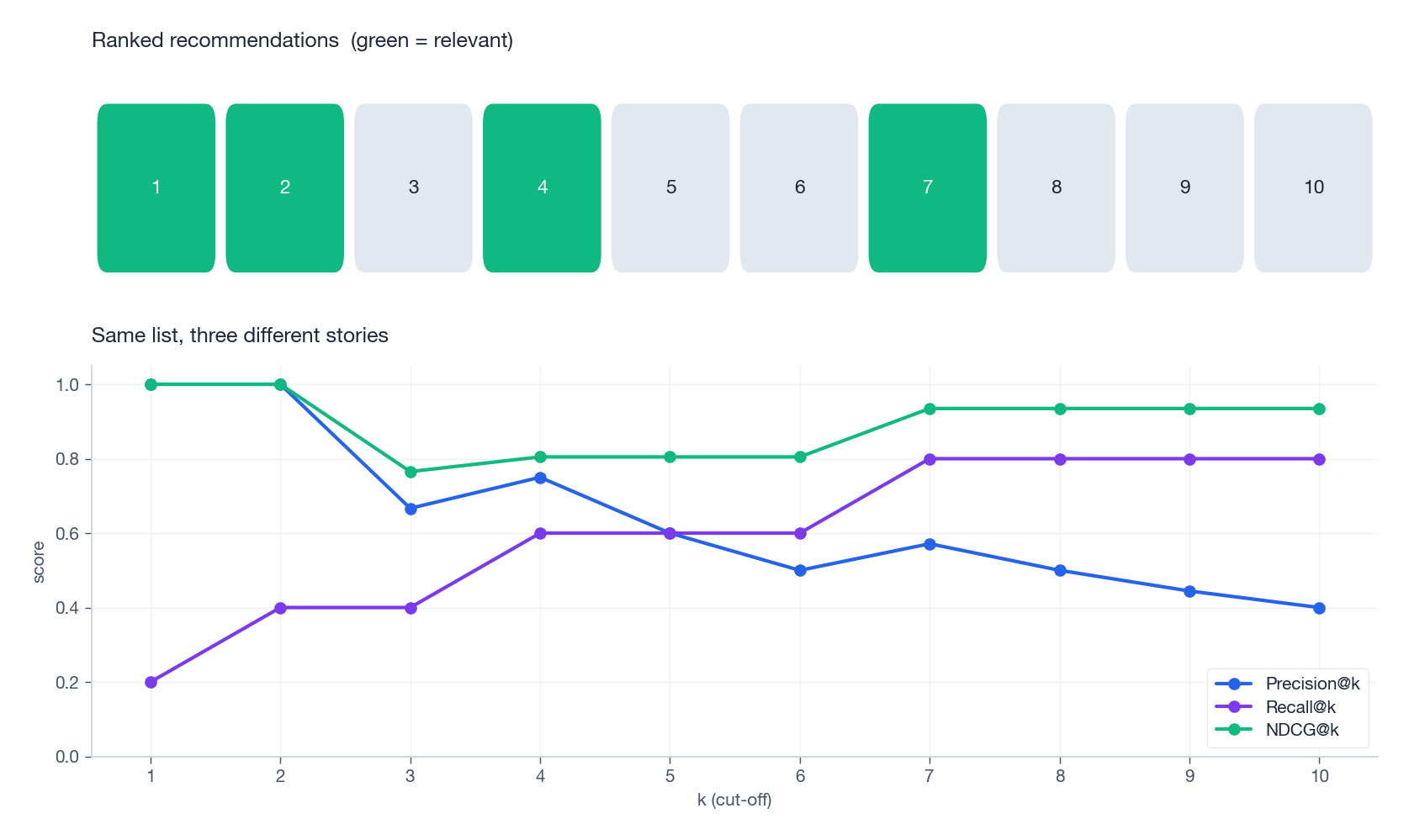

Classification metrics#

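For a user $u$, let $R_u$ be the top-$K$ recommended items and $T_u$ the items that are actually relevant (for example, held-out positives in the test set).

$$\text{Precision@}K = \frac{|R_u \cap T_u|}{|R_u|}, \quad \text{Recall@}K = \frac{|R_u \cap T_u|}{|T_u|}$$

Precision@K asks: of what we recommended, how much was relevant?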

Recall@K asks: of what was relevant, how much did we surface?

These are intuitive but they ignore order within the top-$K$ list — a relevant item at position 1 counts the same as at position 10.

Ranking metrics — position matters#

In real interfaces, position 1 is worth dramatically more than position 10. Two metrics dominate.

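Mean Average Precision (MAP). Average precision for one user sums the precision at every rank where a hit occurs:

$$\text{AP@}K = \frac{1}{|T_u|} \sum_{k=1}^{K} \text{Precision@}k \cdot \text{rel}(k)$$

where $\text{rel}(k) = 1$ when the item at rank $k$ is relevant and $0$ otherwise. MAP is the mean of AP over users. It rewards putting hits early.

Normalised Discounted Cumulative Gain (NDCG). NDCG supports graded relevance $\text{rel}_k$ and discounts each gain by the logarithm of its position:

$$\text{DCG@}K = \sum_{k=1}^{K} \frac{2^{\text{rel}_k} - 1}{\log_2(k + 1)}, \quad \text{NDCG@}K = \frac{\text{DCG@}K}{\text{IDCG@}K}$$

The $\log_2(k+1)$ term discounts lower positions: rank 1 gets full credit, rank 10 gets $1/\log_2 11 \approx 0.29$ of it. IDCG is the DCG of the perfectly sorted list, so NDCG always lands in $[0, 1]$.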

The same ranked list can look very different through these three lenses:

A reference implementation:
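One possible version, assuming binary relevance for Precision, Recall and AP, and a dictionary of graded gains for NDCG. The function names and the example list are illustrative.

```python
import math

def precision_at_k(recommended, relevant, k):
    top = recommended[:k]
    hits = sum(item in relevant for item in top)
    return hits / len(top) if top else 0.0

def recall_at_k(recommended, relevant, k):
    hits = sum(item in relevant for item in recommended[:k])
    return hits / len(relevant) if relevant else 0.0

def average_precision_at_k(recommended, relevant, k):
    """AP@K = (1 / |T_u|) * sum of Precision@rank over the ranks that are hits."""
    hits, total = 0, 0.0
    for rank, item in enumerate(recommended[:k], start=1):
        if item in relevant:
            hits += 1
            total += hits / rank
    return total / len(relevant) if relevant else 0.0

def ndcg_at_k(recommended, gains, k):
    """gains maps item -> graded relevance; items not in gains count as 0."""
    def dcg(rels):
        return sum((2 ** rel - 1) / math.log2(pos + 1)
                   for pos, rel in enumerate(rels, start=1))
    actual = dcg([gains.get(item, 0) for item in recommended[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0

# Made-up example: 5 recommendations, 3 truly relevant items ("z" is never shown).
recommended = ["a", "b", "c", "d", "e"]
relevant = {"a", "c", "z"}
gains = {item: 1 for item in relevant}   # binary relevance expressed as gains

print("P@5   ", precision_at_k(recommended, relevant, 5))
print("R@5   ", round(recall_at_k(recommended, relevant, 5), 3))
print("AP@5  ", round(average_precision_at_k(recommended, relevant, 5), 3))
print("NDCG@5", round(ndcg_at_k(recommended, gains, 5), 3))
```

To get MAP or mean NDCG, average the per-user values over all test users.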


Beyond accuracy: coverage, diversity, serendipity#

A recommender that always serves the global top 10 will score well on precision and ruin your product. Three accuracy-orthogonal metrics keep you honest:

- Catalog coverage — fraction of items the system ever recommends. If you only ever surface 1% of your catalog, the other 99% might as well not exist.

- Intra-list diversity — average pairwise dissimilarity inside a recommendation list. Ten near-duplicate sequels are not a great list.

- Serendipity — items that are both relevant and unexpected. The “wow, how did it know I’d like this?” effect.

These objectives often conflict with raw accuracy. Picking the trade-off is a product decision, not an algorithmic one.
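Coverage and intra-list diversity are both easy to compute. The sketch below assumes each item has an embedding vector and uses one minus cosine similarity as the dissimilarity; the embeddings and recommendation lists are invented.

```python
import numpy as np
from itertools import combinations

def catalog_coverage(rec_lists, n_items):
    """Fraction of the catalog that appears in at least one user's list."""
    shown = set()
    for recs in rec_lists:
        shown.update(recs)
    return len(shown) / n_items

def intra_list_diversity(recs, item_vecs):
    """Mean pairwise (1 - cosine similarity) between the items in one list."""
    dists = []
    for i, j in combinations(recs, 2):
        a, b = item_vecs[i], item_vecs[j]
        cos = a @ b / (np.linalg.norm(a) * np.linalg.norm(b))
        dists.append(1.0 - cos)
    return float(np.mean(dists)) if dists else 0.0

# Hypothetical 2-d item embeddings for a 6-item catalog.
item_vecs = np.array([[1, 0], [1, 0.1], [0, 1], [0.1, 1], [1, 1], [-1, 0]],
                     dtype=float)
rec_lists = [[0, 1, 4], [0, 2, 4]]      # top-3 lists for two users

print("coverage:", round(catalog_coverage(rec_lists, n_items=6), 3))
print("ILD of near-duplicates [0, 1]:", round(intra_list_diversity([0, 1], item_vecs), 4))
print("ILD of distinct items  [0, 2]:", round(intra_list_diversity([0, 2], item_vecs), 4))
```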

Online metrics — what really decides#

Offline metrics guide development. Online metrics decide promotions. The ones that matter in production:

- CTR — clicks / impressions

- Conversion rate — purchases / clicks

- Engagement — watch time, session length, return rate

- Business — revenue per user, LTV, churn

The tool is A/B testing: split users randomly, run the new system against the old, ship the winner if (and only if) the lift survives statistical scrutiny.
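As a sketch of what "statistical scrutiny" can mean for a CTR experiment, here is a two-sided two-proportion z-test written with only the standard library. The click and impression counts are made up, and real experiments usually also need a pre-registered sample size and corrections for multiple metrics.

```python
import math

def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a CTR difference between control (A) and treatment (B)."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    p_pool = (clicks_a + clicks_b) / (n_a + n_b)          # pooled CTR under H0
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value; the standard normal CDF is 0.5 * (1 + erf(x / sqrt(2))).
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value

# Made-up experiment: 100k impressions per arm, CTR 5.0% vs 5.2%.
z, p = two_proportion_z_test(clicks_a=5000, n_a=100_000,
                             clicks_b=5200, n_b=100_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these numbers a 4% relative lift is only borderline significant at the 5% level, which is why large platforms run experiments on millions of impressions.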

System Architecture: From Millions to Top-10#

A real recommender has a daunting job: pick 10 items from a catalog of 10⁷, in <100 ms, hundreds of thousands of times per second. No single model can do that. The answer everyone has converged on is a multi-stage funnel that progressively narrows the candidate set while applying increasingly expensive models.

Stage 1 — Recall (a.k.a. candidate generation)#

Goal: collapse 10⁷ items down to a few thousand likely-relevant candidates, in well under 10 ms.

Multiple cheap recall channels run in parallel — Item-CF, embedding ANN search (FAISS, ScaNN, HNSW), trending content, social graph, content match — and their outputs are merged. This stage is engineered for recall: it is fine to pass through many false positives, the next stages will weed them out. Missing a true positive here is fatal — no later stage can recover an item that was never proposed.
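The merge step can be as simple as a de-duplicating union that remembers which channel proposed each item. This is a toy sketch: the channel names, quotas, and item ids are hypothetical, and production quotas are in the hundreds or thousands per channel.

```python
def merge_recall_channels(channels, quota_per_channel=3, max_candidates=8):
    """Union candidates from several recall channels, remembering their sources.

    channels: dict mapping channel name -> list of item ids, best first.
    Returns a list of (item_id, [channel names]) in first-seen order.
    """
    merged = {}
    for name, items in channels.items():
        for item in items[:quota_per_channel]:
            merged.setdefault(item, []).append(name)
    return list(merged.items())[:max_candidates]

# Hypothetical outputs of three recall channels for one request.
channels = {
    "item_cf":   [101, 102, 103, 104],
    "embedding": [102, 205, 206],
    "trending":  [301, 101, 302],
}
candidates = merge_recall_channels(channels)
for item, sources in candidates:
    print(item, sources)
```

Keeping the list of source channels is useful later: the coarse ranker can use "which channels proposed this item" as a feature.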


Stage 2 — Coarse ranking#

Goal: cut a few thousand candidates to a few hundred, with a cheap-but-not-tiny model.

Typical choices: logistic regression, small MLPs, or a distilled deep model. The features are coarse — user demographics, item popularity, recall scores, the recall channel itself — because we cannot afford rich features at this scale.

Stage 3 — Fine ranking#

Goal: rank the remaining few hundred candidates with the best, most expensive model in the stack.

This is where the deep nets live: Wide & Deep, DCN, DIN, transformer-based sequence models, two-tower architectures. They consume rich features (long user history, real-time session, item embeddings, cross features) and often optimise multiple objectives at once — click and conversion and watch time.


Stage 4 — Rerank and policy#

Goal: turn a relevance-sorted list into a good final list.

This is where business logic lives: enforce diversity (often via Maximal Marginal Relevance), inject freshness, demote items the user just saw, apply content-policy filters, honour sponsored placements, satisfy fairness constraints.
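A greedy Maximal Marginal Relevance reranker fits in about twenty lines. This sketch assumes relevance scores from the fine ranker and one embedding per item, and uses cosine similarity as the redundancy measure; the scores and vectors are made up.

```python
import numpy as np

def mmr_rerank(scores, item_vecs, k, lam=0.7):
    """Greedy Maximal Marginal Relevance.

    Each step picks the item maximising
    lam * relevance - (1 - lam) * (max cosine similarity to items already picked).
    """
    unit = item_vecs / np.linalg.norm(item_vecs, axis=1, keepdims=True)
    sim = unit @ unit.T
    selected, remaining = [], list(range(len(scores)))
    while remaining and len(selected) < k:
        def mmr(i):
            redundancy = max((sim[i, j] for j in selected), default=0.0)
            return lam * scores[i] - (1 - lam) * redundancy
        best = max(remaining, key=mmr)
        selected.append(best)
        remaining.remove(best)
    return selected

# Made-up candidates: items 0 and 1 are near-duplicates, item 2 is different.
scores = np.array([0.90, 0.85, 0.60])
item_vecs = np.array([[1.0, 0.0], [0.99, 0.1], [0.0, 1.0]])

print("lam=1.0 (pure relevance):", mmr_rerank(scores, item_vecs, k=2, lam=1.0))
print("lam=0.5 (with diversity):", mmr_rerank(scores, item_vecs, k=2, lam=0.5))
```

With `lam=1.0` the two near-duplicates take both slots; with `lam=0.5` the second slot goes to the lower-scored but different item.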


The funnel’s discipline is what makes it possible to combine “deep learning quality” with “100 ms latency”. Cheap models cast a wide net; expensive models do the careful work on a small set.

The Five Open Challenges#

Decades of research later, every recommender team still wrestles with the same five problems. There is no clean solution to any of them.

Cold start#

A new user has no history. A new item has no interactions. Both look invisible to CF.

For new users — onboard with a few quick taste choices, fall back to demographics, lean on popular and trending until a few signals accumulate.

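For new items: lean on content features so the item can be matched before it has any interactions, and add an exploration bonus in the style of an upper confidence bound:

$$\text{score}(i) = \hat{r}_i + \beta \sqrt{\frac{\log N}{n_i}}$$

where $n_i$ is the number of impressions item $i$ has received, $N$ is the total number of impressions, and $\beta$ sets how much exploration you allow, so items with few impressions get a chance to prove themselves.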
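The exploration bonus is easy to compute. In this sketch the impression counts and predicted scores are invented, and clamping $n_i$ to at least 1 is an added assumption to avoid dividing by zero for items that have never been shown.

```python
import math

def ucb_score(pred_rating, n_impressions, total_impressions, beta=1.0):
    """Predicted score plus an exploration bonus that shrinks as n_i grows."""
    n_i = max(n_impressions, 1)                 # avoid division by zero
    return pred_rating + beta * math.sqrt(math.log(total_impressions) / n_i)

N = 1_000_000   # hypothetical total impressions served so far
new_item = ucb_score(3.5, n_impressions=10, total_impressions=N)
old_item = ucb_score(3.8, n_impressions=100_000, total_impressions=N)
print("new item:        ", round(new_item, 3))
print("established item:", round(old_item, 3))
```

The barely shown item outranks the established one despite a lower predicted score, and the advantage fades as its impressions accumulate.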


Sparsity#

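Netflix has on the order of 200M users and 17K titles, which makes about 3.4 × 10¹² possible user-item pairs. The average user rates a few dozen titles, so density is a fraction of one percent (50 ratings out of 17,000 titles is about 0.3%), and well over 99% of the matrix is empty.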

Tools that help: matrix factorization (shares statistical strength via the latent space), implicit feedback (clicks and dwell time densify the matrix dramatically), cross-domain transfer (use music taste to seed movie taste), and aggressive regularisation.

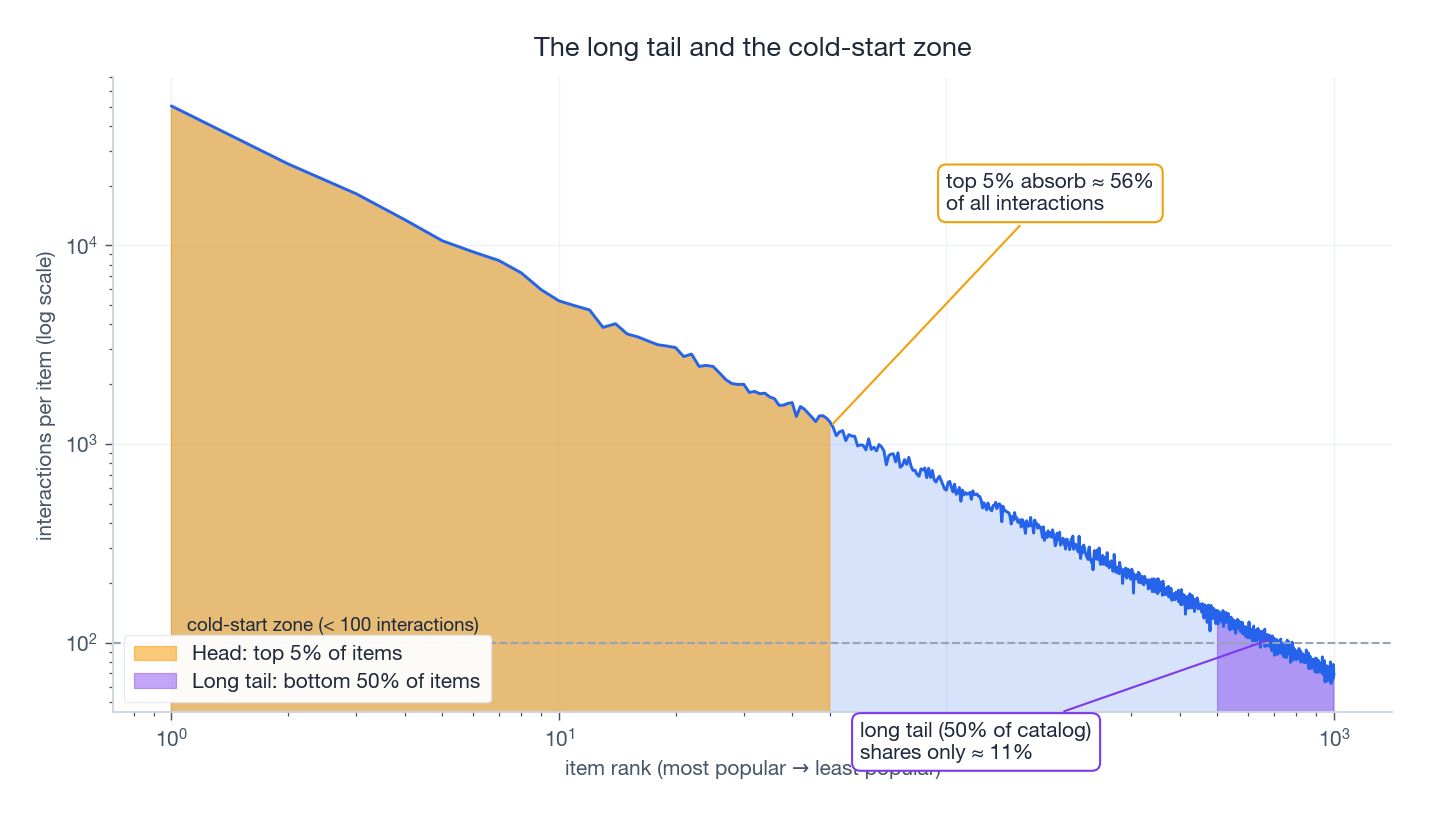

The long tail#

Item popularity follows a power law. The picture is always the same:

The top few percent of items absorb the majority of interactions. The bottom half lives in the cold-start zone — too few signals for CF to do anything.

This causes three real harms: popularity bias (rich-get-richer feedback loops), creator unfairness (niche creators stay invisible), and user dissatisfaction (niche tastes are poorly served). Mitigations include inverse-propensity weighting (down-weight popular items in training), explicit diversity constraints in reranking, and contextual bandits that learn which tail items resonate with which user clusters.

Temporal drift#

Tastes evolve. Items age. Trends explode and burn out. A model trained on last quarter’s behaviour can be confidently wrong about today.

Standard remedies: time-decayed training weights $w(t) = e^{-\lambda(t_{\text{now}} - t)}$, online learning with small incremental updates, time-of-day and freshness features, and session-based sequence models that condition on the last few minutes of behaviour rather than the last few months.
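A small sketch of time-decayed weights. Expressing $\lambda$ through a half-life in days is a convenience assumed here, and the timestamps are hypothetical Unix seconds.

```python
import numpy as np

def time_decay_weights(event_times, now, half_life_days=30.0):
    """w(t) = exp(-lambda * (now - t)), with lambda derived from a half-life."""
    lam = np.log(2) / half_life_days                      # decay rate per day
    age_days = (now - np.asarray(event_times, dtype=float)) / 86_400.0
    return np.exp(-lam * age_days)

now = 1_700_000_000                                       # hypothetical "current" time
events = [now, now - 30 * 86_400, now - 90 * 86_400]      # today, 30 and 90 days ago
weights = time_decay_weights(events, now)
print(weights)
```

These weights can be passed as per-sample weights to the training loss, so an interaction from three months ago counts for one eighth of one from today.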

Scale#

Pinterest, ByteDance, Meta — the largest systems serve millions of QPS at sub-100 ms p99. That is a brutal engineering constraint, and it shapes algorithm choices as much as accuracy does.

The standard toolkit: aggressive caching, ANN libraries (FAISS, ScaNN, HNSW), model quantisation and distillation, sharding by user, and a strict offline/online split — pre-compute everything you can the night before, and only do request-specific work at serve time.

Implementations from Scratch#

Reading code teaches things that prose does not. Two reference implementations follow: User-CF and Item-CF, both fully runnable.

User-Based Collaborative Filtering#
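A compact sketch of User-CF with Pearson similarity and mean-centred prediction, following the formulas in the collaborative filtering section. The class name, the choice to keep only positively correlated neighbours, and the 4 x 4 toy matrix are assumptions for illustration.

```python
import numpy as np

class UserCF:
    """User-based CF with Pearson similarity over co-rated items (sketch)."""

    def __init__(self, k=2):
        self.k = k                                   # neighbours per prediction

    def fit(self, R):
        """R: users x items array with np.nan for missing ratings."""
        self.R = np.asarray(R, dtype=float)
        self.means = np.nanmean(self.R, axis=1)      # each user's baseline
        return self

    def _sim(self, u, v):
        both = ~np.isnan(self.R[u]) & ~np.isnan(self.R[v])
        if both.sum() < 2:
            return 0.0                               # too little overlap to judge
        a = self.R[u, both] - self.means[u]
        b = self.R[v, both] - self.means[v]
        denom = np.linalg.norm(a) * np.linalg.norm(b)
        return float(a @ b / denom) if denom > 0 else 0.0

    def predict(self, u, i):
        # Candidate neighbours: other users who have rated item i.
        sims = [(self._sim(u, v), v) for v in range(len(self.R))
                if v != u and not np.isnan(self.R[v, i])]
        top = sorted(sims, reverse=True)[: self.k]
        top = [(s, v) for s, v in top if s > 0]      # keep positive neighbours only
        norm = sum(abs(s) for s, _ in top)
        if norm == 0:
            return float(self.means[u])              # fall back to the baseline
        nudge = sum(s * (self.R[v, i] - self.means[v]) for s, v in top)
        return float(self.means[u] + nudge / norm)

nan = np.nan
R = np.array([[5, 4, nan, 1],
              [4, 5, 5, 1],
              [1, 2, 1, 5],
              [5, 5, 4, nan]])
model = UserCF(k=2).fit(R)
print("user 0, item 2:", round(model.predict(0, 2), 2))
```

User 2 has the opposite taste to user 0 and is excluded, so the prediction is driven by users 1 and 3.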


Item-Based Collaborative Filtering#

The architecture mirrors User-CF but flips the question: instead of “which users are like me?”, we ask “which items are like the ones I already liked?” The standard similarity is adjusted cosine — cosine after subtracting each user’s mean, which removes their personal rating scale.
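A sketch of Item-CF with adjusted cosine. The item-item matrix is precomputed in `fit`, which mirrors how similarities are cached in practice; the class name, the positive-neighbour filter, and the toy data are illustrative assumptions.

```python
import numpy as np

class ItemCF:
    """Item-based CF with adjusted-cosine similarity (illustrative sketch)."""

    def __init__(self, k=2):
        self.k = k

    def fit(self, R):
        self.R = np.asarray(R, dtype=float)
        rated = ~np.isnan(self.R)
        user_means = np.nanmean(self.R, axis=1, keepdims=True)
        # Subtract each user's mean; unrated cells become 0 so they add nothing.
        C = np.where(rated, self.R - user_means, 0.0)
        n_items = self.R.shape[1]
        self.S = np.zeros((n_items, n_items))
        for i in range(n_items):
            for j in range(i + 1, n_items):
                both = rated[:, i] & rated[:, j]     # users who rated both items
                a, b = C[both, i], C[both, j]
                denom = np.linalg.norm(a) * np.linalg.norm(b)
                if denom > 0:
                    self.S[i, j] = self.S[j, i] = a @ b / denom
        return self

    def predict(self, u, i):
        rated_by_u = np.flatnonzero(~np.isnan(self.R[u]))
        # The k items most similar to i among those user u has rated.
        nbrs = sorted(rated_by_u, key=lambda j: self.S[i, j], reverse=True)[: self.k]
        nbrs = [j for j in nbrs if self.S[i, j] > 0]
        if not nbrs:
            return float(np.nanmean(self.R[u]))      # fall back to the user's mean
        w = np.array([self.S[i, j] for j in nbrs])
        return float(w @ self.R[u, nbrs] / w.sum())  # similarity-weighted average

    def recommend(self, u, n=3):
        unseen = np.flatnonzero(np.isnan(self.R[u]))
        scored = [(self.predict(u, i), int(i)) for i in unseen]
        return sorted(scored, reverse=True)[:n]

nan = np.nan
R = np.array([[5, 4, nan, 1],
              [4, 5, 5, 1],
              [1, 2, 1, 5],
              [5, 5, 4, nan]])
model = ItemCF(k=2).fit(R)
print(model.recommend(0))
```

The double loop over items is quadratic in catalog size, so a real system would compute similarities only for co-occurring pairs and keep the top neighbours per item.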


FAQ#

When should I use CF vs. content-based?

Use CF when you have abundant interaction data, items are hard to describe with features (what makes a song “good”?), and you want serendipity. Use content-based when item metadata is rich but interactions are sparse, when items are constantly new, when you need explainable recommendations, or when privacy precludes broad behavioural data. In production, ship a hybrid.

How do I handle implicit feedback (clicks, views) instead of explicit ratings?

Implicit data is more abundant but noisier — absence of interaction is not dislike. Three standard approaches: weighted matrix factorization (treat observed as positive with high confidence, unobserved as negative with low confidence), negative sampling (sample a few unobserved items per positive), or pairwise ranking losses such as BPR.

What dimensionality should I use for matrix factorization?

Typical range: 20–200. Below 10 you usually under-fit. Above 500 you get diminishing returns, longer training, and overfitting risk. Start at 50–100 and tune on validation.

How often should I retrain?

Depends on data velocity. TikTok-like systems do online learning with sub-second updates. Netflix and Spotify retrain daily or weekly. Long-lived catalogs like Amazon products can get away with weekly to monthly retraining for the heavy components, with online updates for user state.

How do I balance exploration vs. exploitation?

Pure exploitation creates filter bubbles; pure exploration annoys users. Practical recipes: ε-greedy with $\epsilon \in [0.05, 0.15]$, Thompson sampling for a Bayesian flavour that adapts automatically, or simply reserving 1–2 slots in the top-10 for exploratory items chosen by an upper-confidence-bound rule.
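The ε-greedy recipe can be sketched as a slate builder in which each slot is exploratory with probability ε. The function name, the item ids, and the way the exploration pool is supplied are assumptions for this example.

```python
import random

def epsilon_greedy_slate(ranked_items, explore_pool, k=10, epsilon=0.1, rng=None):
    """Fill k slots; each slot is exploratory with probability epsilon."""
    rng = rng or random.Random()
    ranked = list(ranked_items)
    # Only explore with items that would not make the top-k anyway.
    pool = [x for x in explore_pool if x not in ranked[:k]]
    slate = []
    for _ in range(k):
        if pool and rng.random() < epsilon:
            slate.append(pool.pop(rng.randrange(len(pool))))   # explore
        else:
            # Exploit: the best-ranked item not already in the slate.
            nxt = next((x for x in ranked if x not in slate), None)
            if nxt is None:
                break
            slate.append(nxt)
    return slate

ranked = [f"top{i}" for i in range(20)]      # hypothetical ranker output, best first
pool = [f"new{i}" for i in range(5)]         # hypothetical fresh or tail items
print(epsilon_greedy_slate(ranked, pool, k=10, epsilon=0.1, rng=random.Random(42)))
```

Logging which slots were exploratory matters as much as the policy itself, because those impressions are the least biased training data the system collects.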

Takeaways and What’s Next#

Five things to remember from this article:

- No single algorithm wins. The right approach depends on data, scale, and product goals. Hybrids dominate in practice.

- Architecture beats algorithms at scale. The funnel — recall → coarse rank → fine rank → rerank — is what lets a deep model serve in 100 ms.

- Evaluation is multi-objective. Accuracy, ranking quality, diversity, coverage, and online business metrics all matter, and they often disagree.

- The hard problems are perennial. Cold-start, sparsity, the long tail, temporal drift, scale — every team works on these forever.

- Read code. The intuition lives in the implementations as much as in the equations.

In the rest of this series we go deep on each piece:

- Part 2 — collaborative filtering and matrix factorization in full detail

- Part 3 — deep-learning building blocks for recommenders

- Part 4 — CTR prediction (Wide & Deep, DCN, DeepFM)

- Part 5 — embedding techniques (Word2Vec, item2vec, graph embeddings)

- Parts 6–10 — sequential models, GNNs, knowledge graphs, multi-task, DIN

- Parts 11–16 — contrastive learning, LLM-based recommenders, fairness, cross-domain, real-time, industrial practice

Further reading

- Aggarwal, Recommender Systems: The Textbook — the most comprehensive single reference

- Koren, Bell & Volinsky, Matrix Factorization Techniques for Recommender Systems (IEEE Computer 2009) — the Netflix Prize paper

- Hu, Koren & Volinsky, Collaborative Filtering for Implicit Feedback Datasets (ICDM 2008)

- Cheng et al., Wide & Deep Learning for Recommender Systems (DLRS 2016)

- Covington, Adams & Sargin, Deep Neural Networks for YouTube Recommendations (RecSys 2016)

- He et al., Neural Collaborative Filtering (WWW 2017)

Open-source libraries to play with

- Surprise — small, classroom-friendly

- implicit — fast ALS / BPR for implicit feedback

- LightFM — clean hybrid models

- RecBole — comprehensive research toolkit

- TensorFlow Recommenders — production-grade

You are reading Part 1 of 16 in the Recommendation Systems series.

- Part 1: Fundamentals and Core Concepts (you are here)

- Part 2: Collaborative Filtering and Matrix Factorization

- Part 3: Deep Learning Foundation Models

- Part 4: CTR Prediction Models

- Part 5: Embedding Techniques

- Part 6: Sequential Recommendation

- Part 7: Graph Neural Networks

- Part 8: Knowledge Graph Integration

- Part 9: Multi-Task Learning

- Part 10: Deep Interest Networks

- Part 11: Contrastive Learning

- Part 12: LLM-Based Recommendation

- Part 13: Fairness and Explainability

- Part 14: Cross-Domain and Cold Start

- Part 15: Real-Time and Online Learning

- Part 16: Industrial Practice
