Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

A practical guide to CTR prediction models -- from Logistic Regression and Factorization Machines to DeepFM, xDeepFM, DCN, AutoInt, and FiBiNet -- with intuitive explanations and PyTorch implementations.

Every time you scroll through a social-media feed, click a product recommendation, or watch a suggested video, a CTR (click-through rate) model decides what to show you. These models answer one deceptively small question:

“What is the probability that this specific user will click on this specific item, right now?”

Behind that question lies one of the most economically valuable problems in machine learning. A 1% lift in CTR translates into millions of dollars at the scale of Google, Amazon, or Alibaba — and the same models also drive video feeds, app stores, news apps, and dating apps. CTR prediction sits at the heart of the ranking stage: candidate generation gives you a few thousand items, and the CTR model decides which dozen actually reach the user.

This article tours the decade-long evolution of CTR models, from a single-line logistic regression to attention-based architectures. We won’t just look at formulas. For each model, we’ll ask three questions:

- What problem in the previous model forced this design?

- What is the geometric or probabilistic intuition?

- How would you actually implement and ship it?

By the end, you should be able to read any modern CTR paper, sketch its architecture from memory, and pick the right baseline for your own system.

What You Will Learn#

- The CTR prediction problem and why it is uniquely hard (it is not just classification with imbalanced labels)

- Logistic Regression as both a baseline and a sanity check — and exactly where it breaks

- Factorization Machines (FM) and Field-aware FM (FFM) for automatic pairwise interactions on sparse data

- DeepFM — the industry workhorse that combines FM and a deep network

- xDeepFM — explicit high-order interactions through the Compressed Interaction Network

- DCN — bounded-degree feature crosses with linear parameter cost

- AutoInt — self-attention applied to feature interactions

- FiBiNet — learning which features matter with SENet plus richer bilinear interactions

- Training reality: class imbalance, calibration, AUC vs Logloss, and how to evaluate offline before A/B tests

Prerequisites#

- Comfortable Python and PyTorch (nn.Module, training loops, embeddings)

- Basic deep-learning concepts and the embedding view of categorical features (Part 3)

- Familiarity with binary classification, sigmoid, and cross-entropy

Understanding the CTR Prediction Problem#

What Is CTR Prediction?#

Formally, CTR prediction estimates the conditional click probability

$$P(y = 1 \mid \mathbf{x}) \quad\text{where } y \in \{0, 1\},\;\; 1 = \text{click}.$$

The feature vector $\mathbf{x}$ is the concatenation of three families:

| Family | Examples |

|---|---|

| User | user id, age bucket, gender, history, country |

| Item | item id, brand, category, price band, freshness |

| Context | hour of day, device, network, query, position |

Empirically, $\text{CTR} = \text{clicks} / \text{impressions}$ , and the model output is later used to rank candidates, filter low-quality ones, and feed a downstream business objective (e.g. eCPM = CTR x bid for ads, or a multi-objective score for feeds).

Why CTR Prediction Is Hard#

Five properties make CTR prediction look like a standard classification task but behave very differently:

1. Extreme class imbalance. Display ads sit at 0.1-2%, e-commerce at 1-5%, news feeds at 2-10%. A “predict no” model gets 95%+ accuracy and is useless — AUC and Logloss replace accuracy.

2. High-dimensional, ultra-sparse features. After one-hot encoding, the feature space is $10^6$ to $10^9$ dimensions. Each sample lights up only dozens of them. Storing a weight per feature pair is impossible.

3. The signal lives in interactions. “Young user” alone is a weak signal; “young user x action movie x evening” is gold. Capturing those crosses automatically and cheaply is the central modelling problem.

4. Distribution shift is constant. New items, viral trends, and weekday/weekend cycles. Models retrain daily or hourly, and offline AUC alone doesn’t tell the full story.

5. Hard latency budget. Ranking must score thousands of candidates in under 100 ms (often under 10 ms p99). Model size, embedding lookup, and batching are as important as architecture.

The CTR Prediction Pipeline#

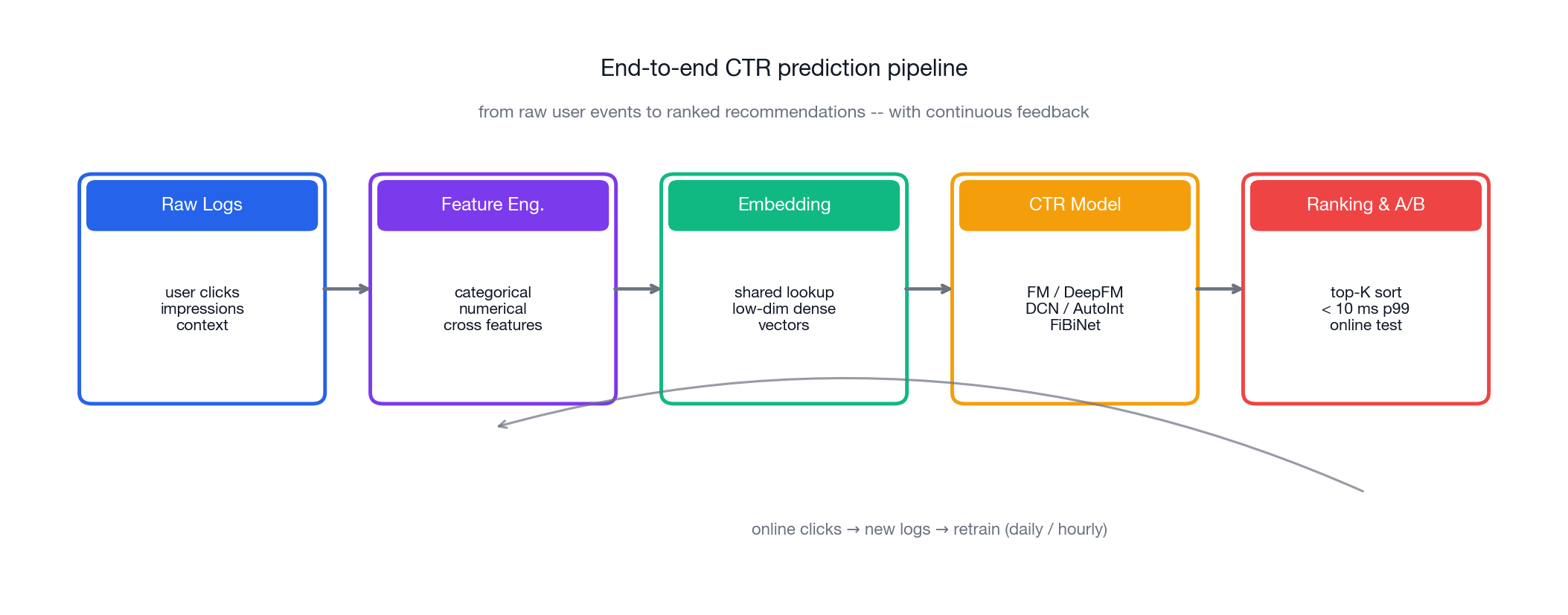

The end-to-end view from a raw click log to a ranked list and back to model retraining looks like this:

A few things to notice in the pipeline:

- Feature engineering still dominates real systems. Embeddings learn what they can, but explicit cross features and statistical features (rolling CTR per user, per item, per slot) routinely win the largest A/B tests.

- Embeddings are shared infrastructure. Every embedding-based CTR model in this article (FM, DeepFM, xDeepFM, DCN, AutoInt, FiBiNet) starts from the same kind of embedding lookup. The architecture mostly defines how the embeddings interact.

- Online feedback closes the loop. Yesterday’s serving log is today’s training data. Model freshness often beats model sophistication.

With that mental map, let us walk the architecture timeline.

Logistic Regression: The Foundation (and the Reason FM Exists)#

Despite living next to giant neural networks in production, Logistic Regression (LR) refuses to die. It is the universal baseline, the calibration anchor, and — in latency-bounded systems — still the actual scorer for a non-trivial fraction of requests.

How It Works#

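LR models the click probability as a sigmoid of a linear score:

$$P(y = 1 \mid \mathbf{x}) = \sigma(\mathbf{w}^\top \mathbf{x} + b) = \frac{1}{1 + e^{-(\mathbf{w}^\top \mathbf{x} + b)}}.$$

It is trained by minimising the binary cross-entropy (Logloss) over $N$ samples, where $\hat{y}_i$ is the predicted probability for sample $i$:

$$\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \big[ y_i \log \hat{y}_i + (1 - y_i) \log(1 - \hat{y}_i) \big].$$

Plain English: “Take a weighted sum of every feature, add a bias, then squash to $(0, 1)$.”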

Why LR Is Both Beloved and Insufficient#

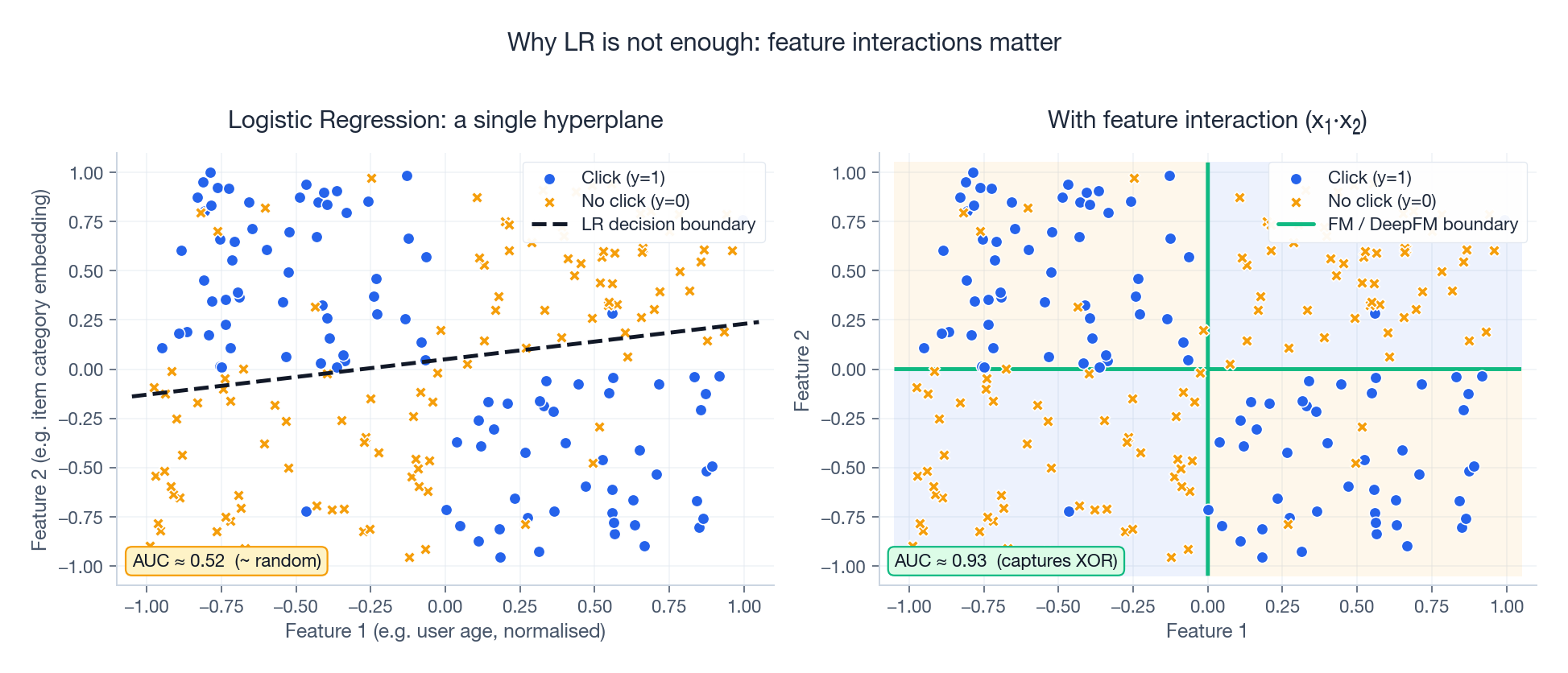

The geometry tells the whole story. LR can only learn a hyperplane in feature space. Any pattern that requires “feature A is good only when feature B is also active” is invisible to it. The classic illustration is XOR-shaped click behaviour:

In the left panel, “young + action” and “old + comedy” both click, but “young + comedy” and “old + action” do not. No linear boundary works — AUC stays near 0.5. The right panel adds a single interaction term ($x_1 \cdot x_2$ ) and instantly recovers the structure. Every CTR model after LR is, at heart, an answer to the question:

“How do we discover and represent useful feature crosses, automatically and at scale?”

Implementation#
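A minimal sketch of the model above, assuming each sample arrives as the integer ids of its active one-hot features, so that $x_i = 1$ for every listed id. The class name `LogisticRegressionCTR`, the argument `feature_ids`, and the toy sizes are illustrative choices, not part of any library.

```python
import torch
import torch.nn as nn


class LogisticRegressionCTR(nn.Module):
    """LR over one-hot features: one scalar weight per feature id."""

    def __init__(self, num_features: int):
        super().__init__()
        # An embedding of size 1 is a sparse lookup of the weight w_i.
        self.weight = nn.Embedding(num_features, 1)
        self.bias = nn.Parameter(torch.zeros(1))

    def forward(self, feature_ids: torch.Tensor) -> torch.Tensor:
        # feature_ids: (batch, num_active) global ids of the active features.
        # Summing the looked-up weights equals w^T x for a one-hot x.
        return self.weight(feature_ids).sum(dim=1).squeeze(-1) + self.bias


torch.manual_seed(0)
model = LogisticRegressionCTR(num_features=1000)
ids = torch.randint(0, 1000, (4, 3))          # 4 samples, 3 active features each
labels = torch.tensor([1.0, 0.0, 0.0, 1.0])

logits = model(ids)                            # raw scores, shape (4,)
probs = torch.sigmoid(logits)                  # predicted click probabilities
loss = nn.functional.binary_cross_entropy_with_logits(logits, labels)
```

Returning the logit and applying the sigmoid inside the loss is the numerically safer pattern; the sigmoid is only needed explicitly at serving time.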


Where LR Falls Short — Concretely#

- No feature interactions. Treats every feature as independent.

- Manual feature engineering. To capture interactions you must hand-craft user_age x item_category columns, which becomes impossible past two- or three-way crosses.

- Linear decision boundary. Visible above; no representation power for XOR-style structure.

These three failures motivate every subsequent architecture in this article.

Factorization Machines (FM): Automatic Pairwise Interactions#

Steffen Rendle’s 2010 Factorization Machines were the first model that made automatic pairwise interactions both practical and statistically efficient on sparse data.

The Core Insight#

A naive “interaction-aware LR” would learn a separate weight $w_{ij}$ for every pair of features. With $d$ features that is $O(d^2)$ parameters — and most pairs are never observed together in the training set, so they cannot be learned anyway.

FM's fix is to factorise the interaction weight: give every feature $i$ a $k$-dimensional latent vector $\mathbf{v}_i$ and model the pair weight as a dot product:

$$w_{ij} \approx \langle \mathbf{v}_i, \mathbf{v}_j \rangle = \sum_{f=1}^{k} v_{i,f} \, v_{j,f}.$$

Analogy. Imagine 1,000 movies. Storing a weight for every unordered pair needs roughly half a million numbers, most never observed. Instead, give each movie a $k$-dimensional “personality vector”. Two movies interact strongly when their vectors point in similar directions. We now have $1000 \cdot k$ numbers, and we can predict an interaction even for a pair we have never seen together, because each vector was learned from many other co-occurrences.

That last property — generalisation to unseen pairs — is the real magic. It is why FM still works on extreme sparsity where decision trees and linear models stall.

Mathematical Formulation#

$$\hat{y}(\mathbf{x}) = \underbrace{w_0}_{\text{bias}} + \underbrace{\sum_{i=1}^{d} w_i x_i}_{\text{linear}} + \underbrace{\sum_{i=1}^{d} \sum_{j=i+1}^{d} \langle \mathbf{v}_i, \mathbf{v}_j \rangle x_i x_j}_{\text{pairwise interactions}}.$$

Computed naively, the pairwise term costs $O(k d^2)$. Rewriting it per latent dimension brings the cost down to $O(kd)$:

$$\sum_{i<j} \langle \mathbf{v}_i, \mathbf{v}_j \rangle x_i x_j = \frac{1}{2} \sum_{f=1}^{k} \left[ \left(\sum_{i=1}^{d} v_{i,f} \, x_i \right)^2 - \sum_{i=1}^{d} v_{i,f}^2 \, x_i^2 \right].$$

Why this works. For each latent dimension $f$, squaring the sum gives all $(i, j)$ products including $i = j$; subtracting the sum of squares removes the diagonal; halving removes the double-count.

Implementation#
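A minimal sketch of an FM scorer, assuming one-hot inputs given as integer feature ids (so $x_i = 1$ for active features and the $x_i$ factors drop out). The names `FactorizationMachine`, `pairwise`, and `brute_force_pairwise` are illustrative. The brute-force double loop is included only to check the $O(kd)$ square-of-sum formulation against the definition.

```python
import torch
import torch.nn as nn


class FactorizationMachine(nn.Module):
    def __init__(self, num_features: int, embed_dim: int = 8):
        super().__init__()
        self.bias = nn.Parameter(torch.zeros(1))              # w_0
        self.linear = nn.Embedding(num_features, 1)           # w_i
        self.embedding = nn.Embedding(num_features, embed_dim)  # v_i

    @staticmethod
    def pairwise(v: torch.Tensor) -> torch.Tensor:
        # v: (batch, num_active, k) latent vectors of the active features.
        square_of_sum = v.sum(dim=1).pow(2)      # (batch, k)
        sum_of_square = v.pow(2).sum(dim=1)      # (batch, k)
        return 0.5 * (square_of_sum - sum_of_square).sum(dim=1)

    def forward(self, feature_ids: torch.Tensor) -> torch.Tensor:
        linear = self.linear(feature_ids).sum(dim=1).squeeze(-1)
        pair = self.pairwise(self.embedding(feature_ids))
        return self.bias + linear + pair         # raw logit


def brute_force_pairwise(v: torch.Tensor) -> torch.Tensor:
    """Reference O(k * d^2) version: sum of <v_i, v_j> over all i < j."""
    batch, n, _ = v.shape
    total = torch.zeros(batch)
    for i in range(n):
        for j in range(i + 1, n):
            total = total + (v[:, i] * v[:, j]).sum(dim=1)
    return total


torch.manual_seed(0)
fm = FactorizationMachine(num_features=100, embed_dim=4)
ids = torch.randint(0, 100, (5, 6))              # 5 samples, 6 active features
with torch.no_grad():
    v = fm.embedding(ids)
    fast = FactorizationMachine.pairwise(v)
    slow = brute_force_pairwise(v)
    logits = fm(ids)
```

Here `fast` and `slow` agree up to floating-point error, which is the identity from the formulation above applied to the active features of each sample.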


FM: Strengths and Limitations#

Strengths. Pairwise interactions for free, $O(kd)$ compute, and statistical generalisation to unseen pairs.

Limitations. Only pairwise, and a feature uses the same embedding regardless of which other field it is interacting with — which is sometimes wrong. That single observation gave us FFM.

Field-aware Factorization Machines (FFM)#

FFM (2016) extends FM with one targeted change: each feature gets a separate embedding for each field it is interacting with.

The Intuition#

$$\hat{y}(\mathbf{x}) = w_0 + \sum_i w_i x_i + \sum_{i<j} \langle \mathbf{v}_{i, f_j}, \mathbf{v}_{j, f_i} \rangle x_i x_j.$$

The notation $\mathbf{v}_{i, f_j}$ reads “feature $i$’s embedding when interacting with field $f_j$”, where $f_j$ is the field that feature $j$ belongs to.

Implementation#
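A minimal sketch of FFM, assuming exactly one active categorical value per field and inputs given as per-field local ids. The class name `FieldAwareFM`, the `field_dims` argument, and the toy sizes are illustrative. It keeps one embedding table per target field, so `tables[f]` applied to feature $i$ plays the role of $\mathbf{v}_{i,f}$.

```python
import torch
import torch.nn as nn


class FieldAwareFM(nn.Module):
    def __init__(self, field_dims, embed_dim: int = 4):
        super().__init__()
        self.num_fields = len(field_dims)
        num_features = sum(field_dims)
        # Offsets map a per-field local id to a global feature id.
        offsets = torch.tensor([0] + list(field_dims[:-1])).cumsum(0)
        self.register_buffer("offsets", offsets)
        self.bias = nn.Parameter(torch.zeros(1))
        self.linear = nn.Embedding(num_features, 1)
        # One table per target field: tables[f](i) is v_{i, f}.
        self.tables = nn.ModuleList(
            [nn.Embedding(num_features, embed_dim) for _ in range(self.num_fields)]
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        ids = x + self.offsets                           # (batch, F)
        # views[f][:, i] is the feature of field i as seen by field f.
        views = [table(ids) for table in self.tables]    # F tensors (batch, F, k)
        pairwise = 0
        for i in range(self.num_fields - 1):
            for j in range(i + 1, self.num_fields):
                # <v_{i, f_j}, v_{j, f_i}>
                pairwise = pairwise + (views[j][:, i] * views[i][:, j]).sum(dim=1)
        linear = self.linear(ids).sum(dim=1).squeeze(-1)
        return self.bias + linear + pairwise             # raw logit


field_dims = [10, 20, 5]                                 # vocabulary size per field
torch.manual_seed(0)
x = torch.stack([torch.randint(0, n, (4,)) for n in field_dims], dim=1)
ffm = FieldAwareFM(field_dims, embed_dim=4)
logits = ffm(x)
num_params = sum(p.numel() for p in ffm.parameters())
```

With 35 features, 3 fields, and $k = 4$, the latent tables alone hold $35 \cdot 3 \cdot 4 = 420$ parameters, against $35 \cdot 4 = 140$ for plain FM. That factor of $F$ is the parameter cost listed in the trade-off table.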


FFM vs FM Trade-offs#

| Aspect | FM | FFM |

|---|---|---|

| Parameters | $O(d \cdot k)$ | $O(d \cdot F \cdot k)$ , with $F$ fields |

| Expressiveness | Same embedding for all interactions | Field-aware embeddings |

| Domain knowledge | Not required | Need a field schema |

| Typical use | First baseline | Won early Criteo / Avazu Kaggle competitions |

Both stop at pairwise interactions. To go higher we have two options: stack non-linearities (deep networks) or build interactions explicitly (CIN, Cross). DeepFM does the first; xDeepFM and DCN do the second.

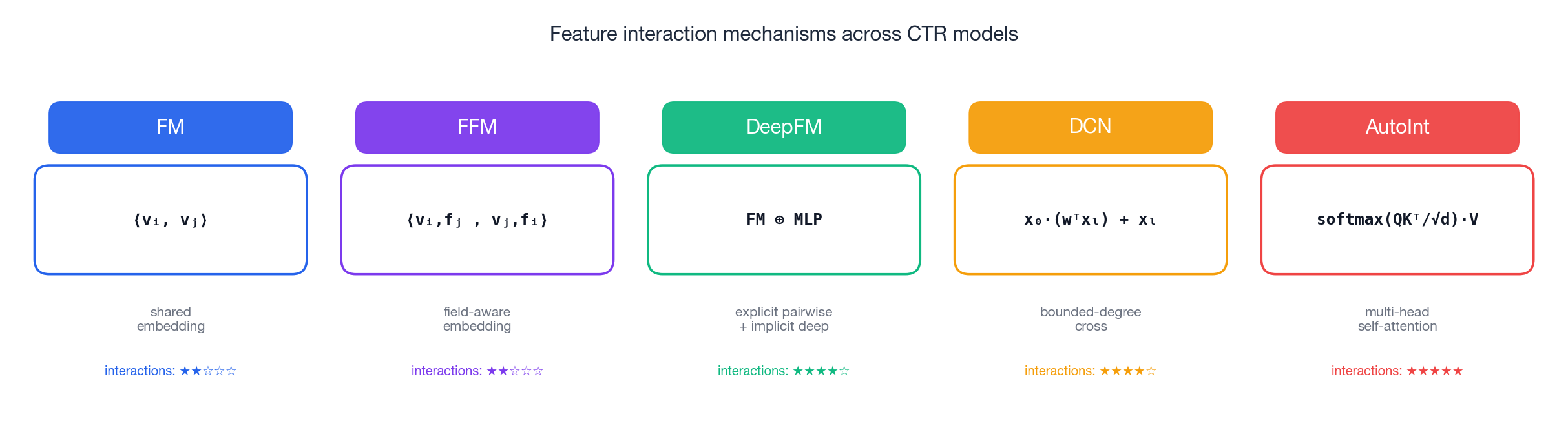

Before continuing, here is a one-look summary of the interaction primitives used by the rest of the article:

DeepFM: Combining FM with Deep Learning#

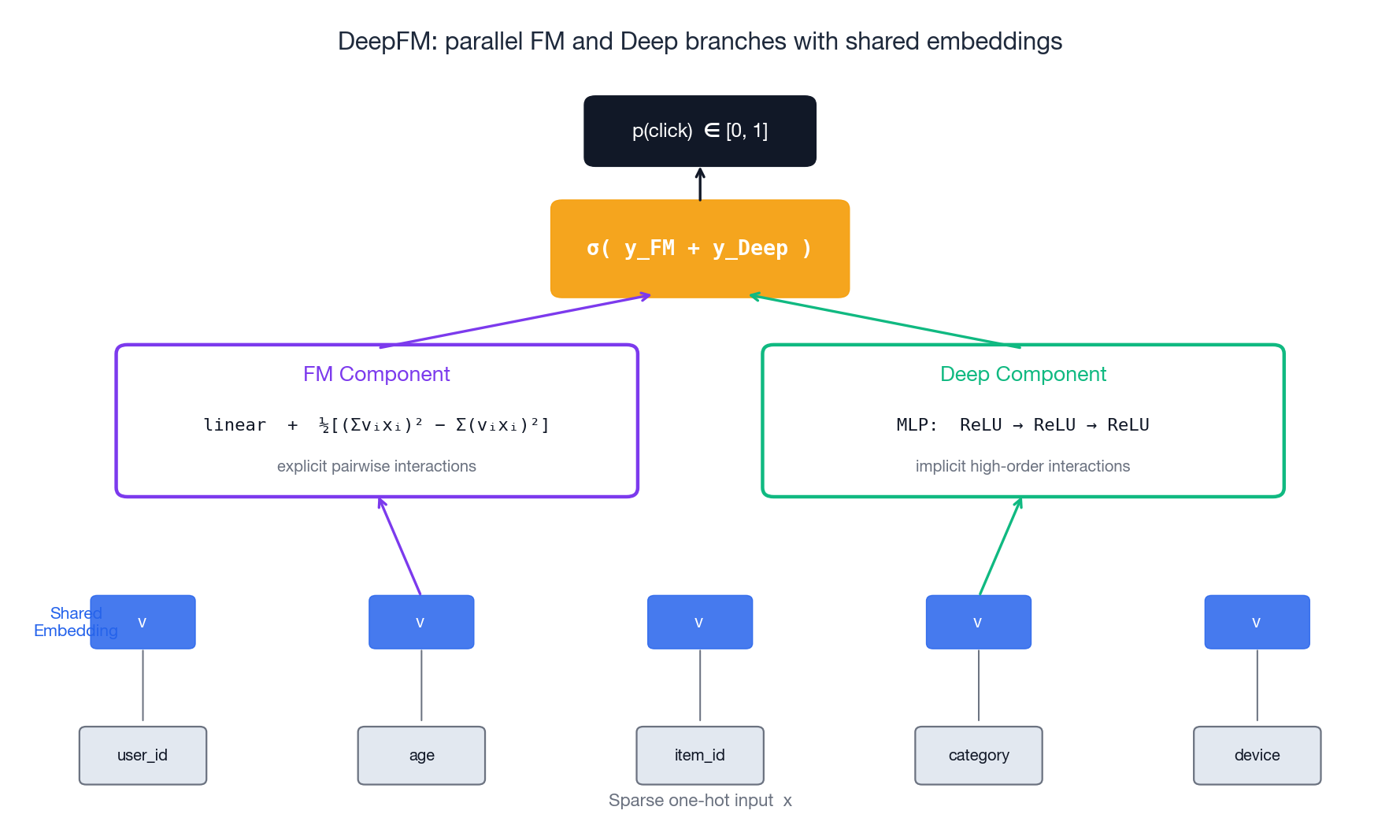

DeepFM (Huawei, 2017) is, with little exaggeration, the default starting point for deep CTR models. Its idea is structurally simple: run an FM and a deep network in parallel, sharing the embedding table.

Why This Combination Works#

- The FM branch captures pairwise (low-order) interactions explicitly.

- The Deep branch captures higher-order interactions implicitly through stacked non-linearities.

- Shared embeddings halve the parameter count and force both branches to agree on what each feature means.

Analogy. Two detectives on the same case. FM is the rule-based investigator who is great with simple clues (“these two features always co-occur with clicks”). The deep MLP is the pattern-matcher who finds long, fuzzy chains of evidence. They argue, then add up their scores.

The architecture diagram makes the parallel structure obvious:

Mathematical Formulation#

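$$\hat{y}(\mathbf{x}) = \sigma\big(y_{\text{FM}} + y_{\text{Deep}}\big),$$

where $y_{\text{FM}}$ is the FM score from the previous section (bias, linear, and pairwise terms) and the deep branch is an MLP over the concatenated field embeddings:

$$\mathbf{h}_0 = [\mathbf{v}_1; \mathbf{v}_2; \ldots; \mathbf{v}_m], \quad \mathbf{h}_l = \text{ReLU}(\mathbf{W}_l \mathbf{h}_{l-1} + \mathbf{b}_l), \quad y_{\text{Deep}} = \mathbf{w}^\top \mathbf{h}_L + b.$$

Implementation#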
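A minimal sketch of the two-branch structure, assuming one categorical value per field and per-field local ids as input. The class name `DeepFM`, the hidden sizes, and the dropout rate are illustrative defaults, not tuned values. The single `embedding` table feeds both the FM term and the MLP.

```python
import torch
import torch.nn as nn


class DeepFM(nn.Module):
    def __init__(self, field_dims, embed_dim=8, hidden=(64, 32), dropout=0.2):
        super().__init__()
        num_features = sum(field_dims)
        offsets = torch.tensor([0] + list(field_dims[:-1])).cumsum(0)
        self.register_buffer("offsets", offsets)
        self.bias = nn.Parameter(torch.zeros(1))
        self.linear = nn.Embedding(num_features, 1)             # first-order weights
        self.embedding = nn.Embedding(num_features, embed_dim)  # shared by both branches
        layers, in_dim = [], len(field_dims) * embed_dim
        for width in hidden:
            layers += [nn.Linear(in_dim, width), nn.ReLU(), nn.Dropout(dropout)]
            in_dim = width
        layers.append(nn.Linear(in_dim, 1))
        self.mlp = nn.Sequential(*layers)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        ids = x + self.offsets                    # (batch, F) global ids
        v = self.embedding(ids)                   # (batch, F, k)
        first_order = self.linear(ids).sum(dim=1).squeeze(-1)
        # FM branch: square-of-sum minus sum-of-squares over the fields.
        fm = 0.5 * (v.sum(dim=1).pow(2) - v.pow(2).sum(dim=1)).sum(dim=1)
        # Deep branch: MLP over the concatenated embeddings h_0.
        deep = self.mlp(v.flatten(start_dim=1)).squeeze(-1)
        return self.bias + first_order + fm + deep   # logit; sigmoid gives y_hat


field_dims = [10, 20, 5]
torch.manual_seed(0)
x = torch.stack([torch.randint(0, n, (4,)) for n in field_dims], dim=1)
model = DeepFM(field_dims).eval()                 # eval() disables dropout
with torch.no_grad():
    probs = torch.sigmoid(model(x))
```

Dropping the `fm` term or the `deep` term from the returned sum gives the two ablations that isolate each branch's contribution.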


DeepFM is the go-to baseline. If you are bootstrapping a new CTR system, start here, then ablate the FM branch, ablate the Deep branch, and only invest in something more exotic if either ablation costs you AUC.

The next two models came out of an honest observation: a deep MLP learns interactions implicitly, and you cannot tell which interactions it actually learned. That motivates xDeepFM (CIN) and DCN (cross network), which both make the high-order structure explicit.

xDeepFM: Explicit High-Order Feature Interactions#

xDeepFM (eXtreme Deep Factorization Machine, 2018) introduces the Compressed Interaction Network (CIN), which builds higher-order interactions layer by layer in the embedding space.

How CIN Works#

Think of CIN as a pyramid of interactions:

- Layer 0: the original embeddings (degree-1 features).

- Layer 1: every Layer-0 feature crossed elementwise with every original embedding (degree 2).

- Layer 2: every Layer-1 feature crossed with every original embedding (degree 3).

- …

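Formally, the $h$-th feature map of layer $k$ is

$$\mathbf{X}^{k}_{h,*} = \sum_{i=1}^{H_{k-1}} \sum_{j=1}^{m} W^{k,h}_{ij} \left( \mathbf{X}^{k-1}_{i,*} \circ \mathbf{X}^{0}_{j,*} \right),$$

where $\mathbf{X}^{0} \in \mathbb{R}^{m \times D}$ stacks the $m$ field embeddings of size $D$, $\mathbf{X}^{k}$ holds the $H_k$ feature maps of layer $k$ (with $H_0 = m$), $\circ$ is the Hadamard (elementwise) product and $W$ are learned weights.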

Plain English. “Take every feature map from the previous layer, cross it elementwise with every original embedding, then apply a learned 1x1 convolution to compress all those crosses back down to a manageable number of feature maps. Stack.”

The full xDeepFM is Linear + CIN + Deep MLP — a three-tower model, summed before the sigmoid.

Implementation#
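A minimal sketch of the CIN tower only (the linear and MLP towers are omitted), assuming the field embeddings are already looked up as a `(batch, m, D)` tensor. The class name `CIN` and the layer sizes are illustrative. The sketch uses a bias-free 1x1 `Conv1d` and no activation between layers so that each layer is a pure polynomial of the inputs; other implementations make different choices here.

```python
import torch
import torch.nn as nn


class CIN(nn.Module):
    def __init__(self, num_fields: int, layer_sizes=(16, 16)):
        super().__init__()
        self.convs = nn.ModuleList()
        prev = num_fields
        for size in layer_sizes:
            # 1x1 convolution compresses the prev * m crossed maps to `size` maps.
            self.convs.append(
                nn.Conv1d(prev * num_fields, size, kernel_size=1, bias=False)
            )
            prev = size
        self.out = nn.Linear(sum(layer_sizes), 1)

    def forward(self, x0: torch.Tensor) -> torch.Tensor:
        batch, _, dim = x0.shape                     # x0: (batch, m, D)
        xk, pooled = x0, []
        for conv in self.convs:
            # Cross every previous feature map with every original embedding,
            # elementwise along the embedding axis: (batch, H_prev, m, D).
            z = xk.unsqueeze(2) * x0.unsqueeze(1)
            z = z.reshape(batch, -1, dim)            # (batch, H_prev * m, D)
            xk = conv(z)                             # (batch, H_k, D)
            pooled.append(xk.sum(dim=2))             # sum-pool each feature map
        return self.out(torch.cat(pooled, dim=1)).squeeze(-1)


torch.manual_seed(0)
x0 = torch.randn(3, 5, 4)                            # 3 samples, 5 fields, D = 4
cin = CIN(num_fields=5, layer_sizes=(6, 6))
logit = cin(x0)

# Degree check on a one-layer CIN: doubling the input should quadruple
# the (bias-free) output, because layer 1 is degree 2 in the embeddings.
cin1 = CIN(num_fields=5, layer_sizes=(6,))
with torch.no_grad():
    base = cin1(x0) - cin1.out.bias
    doubled = cin1(2 * x0) - cin1.out.bias
```

The intermediate tensor `z` has `H_prev * m` channels per layer, which is why CIN tends to dominate the cost of the full model.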


xDeepFM vs DeepFM#

| Aspect | DeepFM | xDeepFM |

|---|---|---|

| Low-order interactions | Explicit (FM) | Explicit (Linear + first CIN layer) |

| High-order interactions | Implicit (deep MLP only) | Explicit (CIN) + Implicit (deep) |

| Interpretability | Limited | Better — you can probe CIN feature maps |

| Inference cost | Lower | Higher (CIN dominates) |

| When to pick it | Default starting point | Complex datasets where DeepFM plateaus |

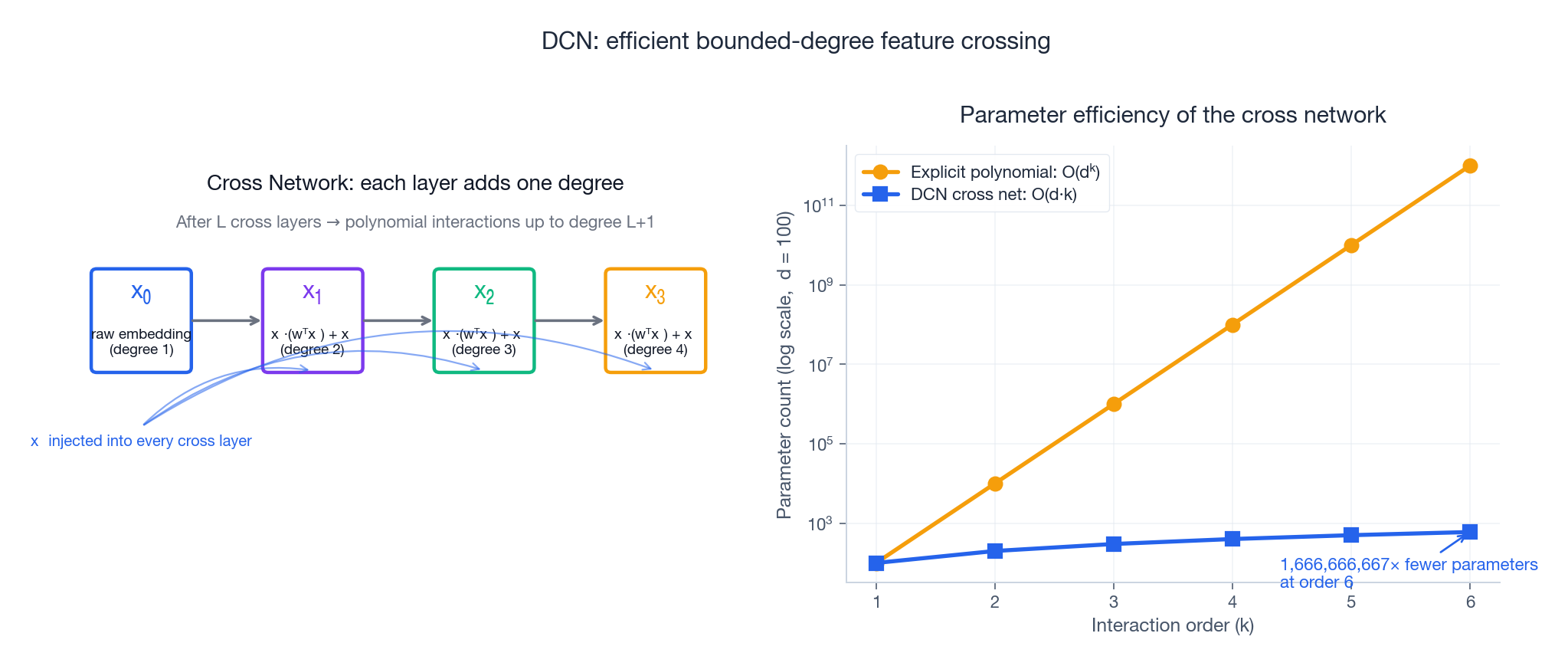

Deep & Cross Network (DCN): Bounded-Degree Cross Features#

DCN (Google, 2017) takes a different route. Instead of stacking elementwise products with learnable convolutions, it adds a tiny module called the Cross Network that increases the polynomial degree of the interaction by exactly one per layer, with O($d$ ) parameters per layer.

The Cross Layer#

$$\mathbf{x}_{l+1} = \mathbf{x}_0 \cdot (\mathbf{w}_l^\top \mathbf{x}_l) + \mathbf{b}_l + \mathbf{x}_l.$$Plain English. “Take a learned scalar projection of the current state, multiply it by the original input vector, add a bias, plus a residual.” Each step injects $\mathbf{x}_0$ once more, raising the interaction degree by one.

After $L$ cross layers you have learned a polynomial of degree $L+1$ in the original features — but with only $L \cdot d$ parameters in the cross stack.

The picture below shows both ideas: how each cross layer adds degree, and how dramatically cheaper this is than naively expanding all polynomial monomials.

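The right panel is the punchline. With 100 input features, an explicit expansion that stores one weight per ordered degree-6 product needs $100^6 = 10^{12}$ parameters. A cross network needs at most six layers to cover that degree: 600 weights, plus 600 bias terms.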

Implementation#
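A minimal sketch of the cross stack alone, written directly from the cross-layer formula above. The class name `CrossNetwork` and the initial weight scale are illustrative; a full DCN would run this next to an MLP and combine the two outputs before the final sigmoid.

```python
import torch
import torch.nn as nn


class CrossNetwork(nn.Module):
    def __init__(self, input_dim: int, num_layers: int = 3):
        super().__init__()
        self.weights = nn.ParameterList(
            [nn.Parameter(torch.randn(input_dim) * 0.01) for _ in range(num_layers)]
        )
        self.biases = nn.ParameterList(
            [nn.Parameter(torch.zeros(input_dim)) for _ in range(num_layers)]
        )

    def forward(self, x0: torch.Tensor) -> torch.Tensor:
        x = x0                                        # (batch, d)
        for w, b in zip(self.weights, self.biases):
            # (x @ w) is the scalar projection w_l^T x_l, one value per sample.
            x = x0 * (x @ w).unsqueeze(-1) + b + x    # cross term + bias + residual
        return x


torch.manual_seed(0)
x0 = torch.randn(4, 100)
cross = CrossNetwork(input_dim=100, num_layers=6)
out = cross(x0)
num_params = sum(p.numel() for p in cross.parameters())   # 2 * L * d

# One layer written out by hand, for comparison with the module.
one = CrossNetwork(input_dim=100, num_layers=1)
manual = x0 * (x0 @ one.weights[0]).unsqueeze(-1) + one.biases[0] + x0
```

The output keeps the input dimension `d`, so the cross stack can be made deeper without changing the layers that consume it.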


DCN advantages.

- Explicit, bounded interaction degree — no surprises in production.

- Cross stack is tiny relative to the deep MLP, so latency stays close to a plain MLP.

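- Successfully deployed at Google scale; the follow-up DCN-V2 paper replaces the weight vector with a full weight matrix and adds a mixture of low-rank experts variant (DCN-Mix) for higher capacity at lower cost.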

AutoInt: Attention as a Feature-Interaction Engine#

AutoInt (2019) brings multi-head self-attention — the engine inside Transformers — to feature interactions. The key claim: not all interactions matter equally, and attention can learn which feature pairs to focus on, with multiple heads learning multiple notions of “related”.

How It Works#

Each field embedding is projected to a query, a key, and a value, and the fields attend to one another with scaled dot-product attention:

$$\text{Attention}(\mathbf{Q}, \mathbf{K}, \mathbf{V}) = \text{softmax}\!\left(\frac{\mathbf{Q} \mathbf{K}^\top}{\sqrt{d_k}}\right) \mathbf{V}.$$

Plain English. “Each feature asks ‘whose embedding should I read from?’ (Q), advertises what it knows (K), and offers content to be aggregated (V). Softmax over similarity gives the routing weights.”

With $H$ heads, the model learns $H$ parallel notions of feature relatedness. Stacking $L$ AutoInt blocks lets information flow more than once, building deeper compositions.

Implementation#
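A minimal sketch of one interacting layer, treating the fields of a sample as the tokens of a sequence. It leans on PyTorch's built-in `nn.MultiheadAttention`, which applies an extra output projection after concatenating the heads, so it is an approximation of the AutoInt layer rather than a faithful reproduction. The class name `AutoIntLayer` and the residual projection layout are assumptions of this sketch.

```python
import torch
import torch.nn as nn


class AutoIntLayer(nn.Module):
    def __init__(self, embed_dim: int, num_heads: int = 2):
        super().__init__()
        # batch_first=True so inputs are (batch, fields, embed_dim).
        self.attn = nn.MultiheadAttention(embed_dim, num_heads, batch_first=True)
        self.residual = nn.Linear(embed_dim, embed_dim, bias=False)

    def forward(self, x: torch.Tensor):
        # Self-attention across fields: queries, keys and values all come from x.
        attended, weights = self.attn(x, x, x, need_weights=True)
        # Residual connection keeps the original (lower-order) information.
        return torch.relu(attended + self.residual(x)), weights


torch.manual_seed(0)
x = torch.randn(4, 5, 8)                 # 4 samples, 5 fields, embedding size 8
layer = AutoIntLayer(embed_dim=8, num_heads=2)
out, weights = layer(x)                  # weights: (4, 5, 5), averaged over heads
```

Each row of `weights` sums to one and says how much a field reads from every other field. Stacking several such layers and feeding the flattened output to a linear scorer gives the full interaction stack.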


AutoInt advantages.

- Discovers which interactions matter without manual schema design.

- Attention weights are inspectable, which helps debugging and reporting.

- Multi-head structure naturally captures multiple flavours of feature relationship.

FiBiNet: Feature Importance + Bilinear Interactions#

FiBiNet (2019) tackles two assumptions other models bake in silently:

- All features deserve equal attention. They do not. Some carry strong signal; some are noise. FiBiNet uses SENet to learn a per-field importance gate.

- Interactions are well captured by elementwise products. Sometimes they are not. FiBiNet replaces the Hadamard product with a bilinear form that can model asymmetric, richer interactions.

SENet: Learning Feature Importance#

Three steps:

- Squeeze. Average each field’s embedding along the embedding dimension to a scalar — one importance score per field.

- Excitation. A two-layer MLP (bottleneck) maps the scalars to per-field gates.

- Reweight. Multiply each embedding by its gate.

Analogy. A DJ adjusting volume sliders for each track based on what is currently playing.

Bilinear Interaction#

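Replace the Hadamard product $\mathbf{v}_i \odot \mathbf{v}_j$ with the bilinear interaction $(\mathbf{v}_i \mathbf{W}) \odot \mathbf{v}_j$, where $\mathbf{W} \in \mathbb{R}^{k \times k}$ is learned. The result is still a $k$-dimensional vector, and it is asymmetric in $i$ and $j$. Variants share one $\mathbf{W}$ across all field pairs (Field-All), use one $\mathbf{W}_i$ per field (Field-Each), or one $\mathbf{W}_{ij}$ per pair (Field-Interaction).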

Implementation#
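A minimal sketch of the two FiBiNet building blocks: the SENet gate (squeeze, excitation, reweight) and the Field-All bilinear interaction. The class names `SENetGate` and `BilinearFieldAll`, the reduction ratio, and the ReLU activations in the excitation MLP are choices made for this sketch. The final MLP that consumes the interaction vectors is omitted.

```python
import torch
import torch.nn as nn


class SENetGate(nn.Module):
    def __init__(self, num_fields: int, reduction: int = 2):
        super().__init__()
        hidden = max(1, num_fields // reduction)
        self.excite = nn.Sequential(
            nn.Linear(num_fields, hidden, bias=False), nn.ReLU(),
            nn.Linear(hidden, num_fields, bias=False), nn.ReLU(),
        )

    def forward(self, v: torch.Tensor) -> torch.Tensor:
        z = v.mean(dim=2)                 # squeeze: one scalar per field, (batch, F)
        gates = self.excite(z)            # excitation: per-field gates, (batch, F)
        return v * gates.unsqueeze(-1)    # reweight every field embedding


class BilinearFieldAll(nn.Module):
    """Field-All variant: a single W shared by every field pair."""

    def __init__(self, embed_dim: int):
        super().__init__()
        self.W = nn.Linear(embed_dim, embed_dim, bias=False)

    def forward(self, v: torch.Tensor) -> torch.Tensor:
        num_fields = v.size(1)
        # Index pairs (i, j) with i < j.
        rows, cols = torch.triu_indices(num_fields, num_fields, offset=1)
        return self.W(v[:, rows]) * v[:, cols]    # (batch, F(F-1)/2, k)


torch.manual_seed(0)
v = torch.randn(3, 4, 8)                  # 3 samples, 4 fields, k = 8
gated = SENetGate(num_fields=4)(v)
bilinear = BilinearFieldAll(embed_dim=8)
pairs = bilinear(v)                       # 6 pairwise interaction vectors

# Sanity check: with W set to the identity, the layer reduces to the
# plain Hadamard product used by FM-style models.
with torch.no_grad():
    bilinear.W.weight.copy_(torch.eye(8))
    hadamard_like = bilinear(v)
```

FiBiNet applies the bilinear layer to both the original and the SENet-gated embeddings and concatenates the results before the MLP.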


Model Comparison and Selection Guide#

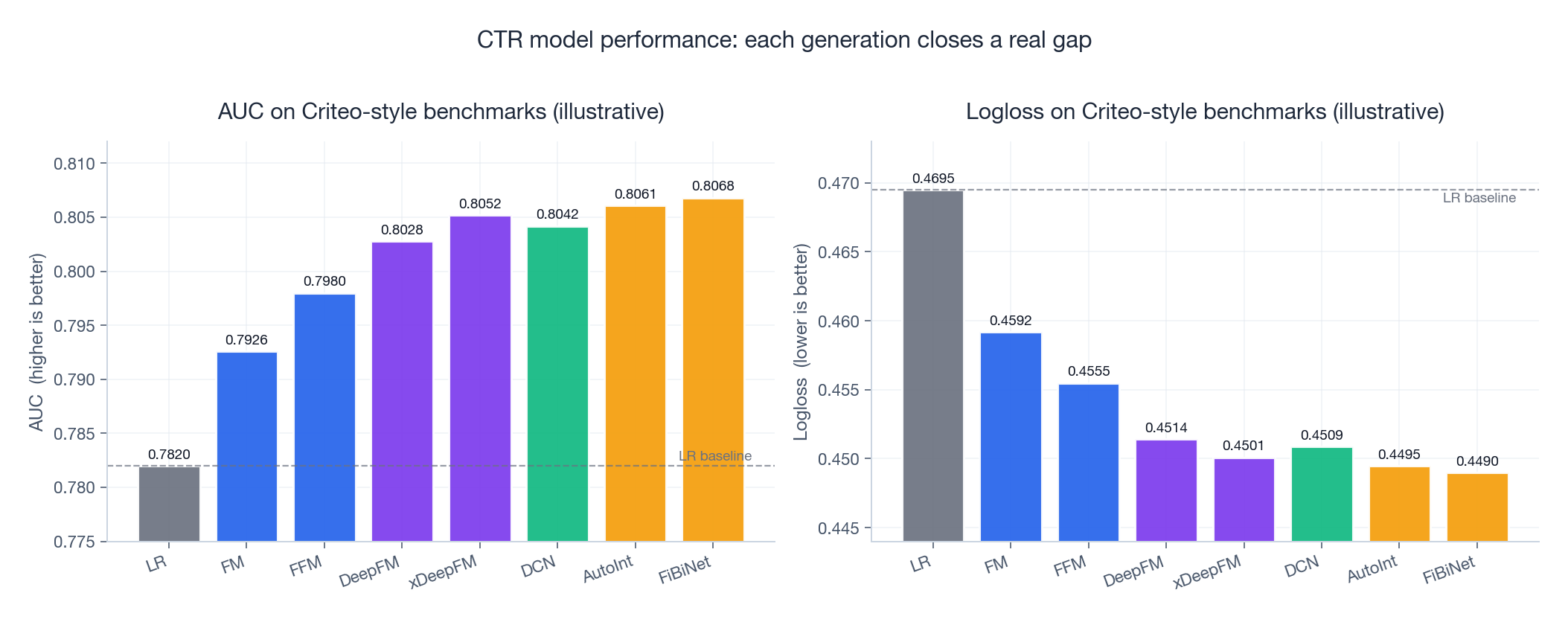

Now the question every practitioner asks: does any of this actually move AUC?

The figure below summarises typical relative ordering on Criteo-style benchmarks. Numbers are illustrative — absolute values vary by dataset, embedding size, and training budget — but the gap pattern is consistent across published reports.

Two observations matter more than the absolute numbers:

- The biggest single jump is LR -> FM. Adding pairwise interactions, even cheaply, is worth more AUC than any later architectural refinement.

- DeepFM and beyond live within ~0.005 AUC of each other. That sounds tiny. At Google or Meta scale, even a fraction of that gap is real money. At a startup with 1M users, it is in the noise, and features and freshness will dominate.

Computational Complexity#

| Model | Parameters | Training Speed | Inference Speed |

|---|---|---|---|

| LR | $O(d)$ | very fast | very fast |

| FM | $O(d \cdot k)$ | fast | fast |

| FFM | $O(d \cdot F \cdot k)$ | medium | medium |

| DeepFM | $O(d \cdot k + \text{MLP})$ | medium | medium |

| xDeepFM | $O(d \cdot k + \text{CIN} + \text{MLP})$ | slow | slow (CIN dominates) |

| DCN | $O(d \cdot k + L \cdot d + \text{MLP})$ | medium | medium |

| AutoInt | $O(d \cdot k + L \cdot \text{Attn} + \text{MLP})$ | medium | medium |

| FiBiNet | $O(d \cdot k + \text{SE} + \text{Bilinear} + \text{MLP})$ | medium | medium |

Feature Interaction Capabilities#

| Model | Low-Order | High-Order | Explicit | Implicit |

|---|---|---|---|---|

| LR | linear only | no | no | no |

| FM | pairwise | no | yes | no |

| FFM | pairwise (field-aware) | no | yes | no |

| DeepFM | pairwise | yes | yes (FM) | yes (DNN) |

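| xDeepFM | pairwise (first CIN layer) | yes, bounded by CIN depth | yes (CIN) | yes (DNN) |

| DCN | pairwise (first cross layer) | yes, up to degree $L+1$ | yes (Cross) | yes (DNN) |

| AutoInt | pairwise (first attention layer) | yes, grows with stacked layers | yes (Attention) | yes (DNN) |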

| FiBiNet | bilinear pairs | yes | yes (Bilinear) | yes (DNN) |

A Decision Flowchart You Can Actually Use#

- First system / proof of concept. Use LR or FM. Get the pipeline, evaluation, and serving right before you add layers.

- First “real” model. DeepFM. Strongest performance-to-effort ratio in the table.

- DeepFM plateaued and you have GPU budget. Try DCN (cheaper) or xDeepFM (richer), not both at once.

- Heterogeneous fields, want interpretability of interaction weights. AutoInt.

- Long, noisy feature lists where you suspect feature-importance varies a lot. FiBiNet.

- Ultra-low latency, edge serving. LR or FM for online; deeper model offline for re-ranking or retrieval bootstrapping.

Training Strategies and Best Practices#

Handling Class Imbalance#

CTR data is brutally imbalanced. Three reliable tools:

1. Weighted BCE loss.
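A minimal sketch using the `pos_weight` argument of `nn.BCEWithLogitsLoss`, assuming the weight is set to the negative-to-positive ratio of the training data. The toy labels are made up. Like downsampling, up-weighting positives shifts the predicted probabilities upward, so the scores need calibrating afterwards.

```python
import torch
import torch.nn as nn

# Toy batch: 1 click out of 10 impressions, and an untrained model (logit 0).
labels = torch.tensor([1.0, 0, 0, 0, 0, 0, 0, 0, 0, 0])
logits = torch.zeros(10)

num_pos = labels.sum()
num_neg = labels.numel() - num_pos
pos_weight = (num_neg / num_pos).reshape(1)      # tensor([9.])

plain = nn.BCEWithLogitsLoss()(logits, labels)
weighted = nn.BCEWithLogitsLoss(pos_weight=pos_weight)(logits, labels)
```

With this weight the single positive contributes as much loss as the nine negatives together, which is the intended rebalancing.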


2. Negative downsampling. Standard at Facebook-scale; just remember that downsampling miscalibrates the predicted probability and you have to re-calibrate before serving.
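A minimal sketch of downsampling plus the standard correction $q = p / (p + (1 - p) / w)$, where $p$ is the probability predicted by the model trained on downsampled data and $w$ is the fraction of negatives that was kept. The function names and the `click` key are illustrative.

```python
import random


def downsample_negatives(rows, keep_rate, seed=0):
    """Keep every positive and a random `keep_rate` fraction of negatives."""
    rng = random.Random(seed)
    return [r for r in rows if r["click"] == 1 or rng.random() < keep_rate]


def recalibrate(p, keep_rate):
    """Map a probability learned on downsampled data back to the original scale."""
    return p / (p + (1.0 - p) / keep_rate)


rows = [{"click": 1}] * 10 + [{"click": 0}] * 990      # true CTR = 1%
sampled = downsample_negatives(rows, keep_rate=0.1)
kept_clicks = sum(r["click"] for r in sampled)

# A perfectly fitted model on the downsampled data would predict the
# inflated rate below; recalibration recovers the original 1%.
p_sampled = 0.01 / (0.01 + 0.99 * 0.1)                 # about 0.092
p_original = recalibrate(p_sampled, keep_rate=0.1)
```

The correction only undoes the shift in the class prior. Any remaining miscalibration from the model itself still needs Platt scaling or isotonic regression.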


3. Focal loss. Down-weights easy examples so the gradient focuses on the few hard ones.
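A minimal sketch of the binary focal loss on logits. The function name `binary_focal_loss` and the defaults `gamma=2.0`, `alpha=0.25` are illustrative starting points, not tuned values. It returns per-example losses so the down-weighting is visible; take `.mean()` for training.

```python
import torch
import torch.nn.functional as F


def binary_focal_loss(logits, targets, gamma=2.0, alpha=0.25):
    """Per-example focal loss: alpha_t * (1 - p_t)^gamma * BCE."""
    bce = F.binary_cross_entropy_with_logits(logits, targets, reduction="none")
    p = torch.sigmoid(logits)
    # p_t is the probability the model assigns to the true class.
    p_t = p * targets + (1 - p) * (1 - targets)
    alpha_t = alpha * targets + (1 - alpha) * (1 - targets)
    return alpha_t * (1 - p_t).pow(gamma) * bce


logits = torch.tensor([4.0, -4.0, 0.0])
targets = torch.tensor([1.0, 0.0, 1.0])          # easy positive, easy negative, hard positive
per_example = binary_focal_loss(logits, targets)
loss = per_example.mean()

# With gamma = 0 and alpha = 0.5 the focal loss is just half the plain BCE.
half_bce = binary_focal_loss(logits, targets, gamma=0.0, alpha=0.5)
plain_bce = F.binary_cross_entropy_with_logits(logits, targets, reduction="none")
```

Focal loss also distorts the predicted probabilities, so the same calibration step applies before serving.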


Regularisation#

- Dropout 0.2-0.5 in MLP layers; never on embedding lookups directly.

- L2 (weight decay 1e-6 to 1e-5) on dense weights; embedding tables often need less regularisation than the dense layers.

- Early stopping on validation AUC, patience 3-10 epochs.
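A minimal sketch of the three settings above: dropout inside the MLP, separate weight decay for embeddings and dense layers through optimizer parameter groups, and a small early-stopping helper. The `EarlyStopping` class, the toy model, and the validation AUC sequence are all made up for illustration.

```python
import torch
import torch.nn as nn

model = nn.ModuleDict({
    "embedding": nn.Embedding(1000, 8),
    "mlp": nn.Sequential(
        nn.Linear(24, 32), nn.ReLU(), nn.Dropout(0.3), nn.Linear(32, 1)
    ),
})

# Lighter weight decay on the embedding table than on the dense weights.
optimizer = torch.optim.Adam(
    [
        {"params": model["embedding"].parameters(), "weight_decay": 1e-6},
        {"params": model["mlp"].parameters(), "weight_decay": 1e-5},
    ],
    lr=1e-3,
)


class EarlyStopping:
    """Stop when validation AUC has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=1e-4):
        self.patience, self.min_delta = patience, min_delta
        self.best, self.bad_epochs = float("-inf"), 0

    def step(self, val_auc: float) -> bool:
        if val_auc > self.best + self.min_delta:
            self.best, self.bad_epochs = val_auc, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Simulated validation AUC per epoch (hypothetical numbers).
val_aucs = [0.70, 0.72, 0.73, 0.729, 0.7301, 0.728, 0.74]
stopper = EarlyStopping(patience=3, min_delta=1e-3)
stopped_at = None
for epoch, auc in enumerate(val_aucs):
    if stopper.step(auc):
        stopped_at = epoch
        break
```

In a real loop the model checkpoint from the best epoch is saved whenever `stopper.best` improves and restored once training stops.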


Evaluation Metrics#

AUC-ROC is the headline metric. It measures the probability that a random positive sample is scored above a random negative one — by construction, it is invariant to the label imbalance.
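A minimal sketch that computes AUC straight from the definition above (the fraction of positive-negative pairs ranked correctly, ties counted as half) together with Logloss. The pairwise loop is quadratic and only meant for illustration; the toy labels and scores are made up. In practice `sklearn.metrics.roc_auc_score` and `sklearn.metrics.log_loss` do the same job efficiently.

```python
import math


def auc_score(labels, scores):
    """P(random positive is scored above random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def log_loss(labels, probs, eps=1e-12):
    total = 0.0
    for y, p in zip(labels, probs):
        p = min(max(p, eps), 1 - eps)            # avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(labels)


labels = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.1]
auc = auc_score(labels, scores)                  # 5 of 6 pairs ranked correctly

# Ten times more negatives with the same score distribution: AUC is unchanged.
labels_10x = [1, 1] + [0] * 30
scores_10x = [0.9, 0.7] + [0.8, 0.3, 0.1] * 10
auc_10x = auc_score(labels_10x, scores_10x)

# A constant "predict no" model carries no ranking information.
auc_constant = auc_score(labels, [0.0] * 5)
ll = log_loss(labels, scores)
```

AUC only looks at the ordering of the scores, so it says nothing about whether the predicted probabilities are correct in absolute terms. Logloss does, which is why the two are reported together.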


Calibration matters too, often more than AUC for downstream auctions. Predicted CTR of 0.05 should match an empirical 5% click rate inside that probability bucket. Use sklearn.calibration.calibration_curve to plot reliability diagrams; fix systematic over/under-prediction with Platt scaling or isotonic regression before serving.
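A minimal sketch of the bucketed comparison behind a reliability diagram, written in plain Python so the mechanics are visible. The function name `reliability_table` and the toy data are illustrative. Each output row is (mean predicted CTR, empirical CTR, sample count) for one probability bucket.

```python
def reliability_table(labels, probs, n_bins=10):
    counts = [0] * n_bins
    pred_sum = [0.0] * n_bins
    clicks = [0] * n_bins
    for y, p in zip(labels, probs):
        b = min(int(p * n_bins), n_bins - 1)     # p == 1.0 falls in the last bin
        counts[b] += 1
        pred_sum[b] += p
        clicks[b] += y
    return [
        (pred_sum[b] / counts[b], clicks[b] / counts[b], counts[b])
        for b in range(n_bins)
        if counts[b] > 0
    ]


# Toy data: the 0.05 bucket is well calibrated (5 clicks in 100),
# the 0.25 bucket over-predicts (only 10 clicks in 100).
probs = [0.05] * 100 + [0.25] * 100
labels = [1] * 5 + [0] * 95 + [1] * 10 + [0] * 90
table = reliability_table(labels, probs)
```

A well-calibrated model has the first two numbers of every row close to each other. Systematic gaps like the one in the second bucket are what Platt scaling or isotonic regression is meant to remove.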

FAQ#

Why is CTR prediction binary classification, not regression?#

The target is a probability (the chance of a click), and Bernoulli is the right likelihood. Binary classification has well-established metrics (AUC, Logloss), handles imbalance gracefully, and produces interpretable scores between 0 and 1. Regression on click counts is sometimes used for revenue or watch-time estimation, but for click prediction specifically, BCE is the standard.

How do I choose the embedding dimension?#

Start with 16. For small datasets (< 1M samples), 4-8 is usually enough. For huge datasets (> 100M), try 16-64. Run a quick ablation: if doubling the dimension lifts AUC by less than 0.001, go back to the smaller value. Embedding tables dominate model memory and serving cost.

What is the difference between FM and matrix factorisation?#

Matrix factorisation decomposes a single user-item rating matrix into user and item embeddings. FM is strictly more general: it factorises pairwise interactions among any features, so it can absorb side information (age, city, time of day) into the same factorised form. MF is the special case of FM whose only features are the one-hot user id and item id.

When should I use DeepFM vs xDeepFM?#

Default to DeepFM. Try xDeepFM only after DeepFM clearly plateaus and your dataset is rich enough that the third- and fourth-order interactions plausibly matter. The CIN component nearly doubles inference cost.

How do I handle cold-start items?#

Four levers, usually combined: (1) initialise embeddings from content features (text/image encoders); (2) fall back to popularity for the first few impressions; (3) explore via a contextual bandit so new items get some impressions; (4) pre-train embeddings on a related task. The rule of thumb: never let a model see only the item id of a new item.

Feature engineering vs model architecture — which matters more?#

Almost always feature engineering, often by 2-3x as a rule of thumb. Good cross features, sensible bucketisation, proper missing-value handling, and rolling user/item statistics routinely deliver the largest gains, while switching architectures within the deep CTR family typically moves AUC by only a few thousandths (see the model comparison above). Do feature engineering first; reach for fancier architectures last.

How do I handle missing features?#

Four options: (1) default value (0, mean, mode); (2) add a binary is_missing indicator; (3) reserve a special “missing” embedding for categorical features; (4) impute with KNN or a simple model. Choose based on whether missingness itself is informative — if a logged-out user is missing demographics, that fact predicts behaviour and should be a feature.

How do I evaluate offline vs online?#

Offline: time-based train/validation/test split (never random!). Metrics: AUC and Logloss. Fast and cheap, but downstream effects (diversity, freshness, position bias) are invisible. Online: A/B test with real users. Metrics: realised CTR, conversions, revenue, retention. Slow and expensive but the only authoritative signal. Always validate offline first; never ship without an online test.

How do I deploy CTR models in production?#

The big four: (1) serve from TorchServe / Triton / TF-Serving with batched requests; (2) target < 10 ms p99 via INT8 quantisation, embedding sharding, and pre-fetching; (3) monitor predicted-CTR distribution drift — if the histogram shifts, retrain or roll back; (4) version your models and your feature pipelines together — a feature schema mismatch silently destroys AUC.

What are the latest trends (2024-2025)?#

Transformer-based interaction stacks at scale, multi-task learning (jointly predicting CTR + conversion + watch time), graph neural networks over user-item graphs, AutoML for embedding dimension and architecture search, debiasing via causal inference and inverse-propensity weighting, and federated learning for privacy. The fundamentals — feature quality, interaction modelling, calibration, freshness — remain the dominant levers regardless of the trend.

Summary#

CTR prediction is the heart of modern ranking. We walked the architecture timeline from a single linear layer to attention-based interaction discovery:

- LR — simple, calibrated, but blind to feature interactions.

- FM / FFM — automatic pairwise interactions on sparse data; FFM adds field awareness at a parameter cost.

- DeepFM — the industry workhorse: explicit pairwise (FM) + implicit deep, sharing one embedding table.

- xDeepFM — explicit higher-order interactions through CIN.

- DCN — bounded-degree polynomial crosses with linear parameter cost.

- AutoInt — multi-head self-attention for interaction discovery and inspection.

- FiBiNet — learnable feature importance (SENet) plus bilinear interactions.

Practical takeaways.

- Start simple. LR -> FM -> DeepFM, in that order. Stop the moment you stop improving AUC.

- Features first, architecture second. A new cross-feature usually beats a new model.

- Handle imbalance deliberately. Pick one of pos_weight, downsampling+calibration, or focal loss — and stick with it.

- Evaluate honestly. Time-based splits offline, A/B tests online, and watch calibration alongside AUC.

- Iterate forever. CTR systems are never done. Distributions shift, items churn, and yesterday’s model is today’s baseline.

The “best” model is the one that wins your A/B test under your latency budget on your data. Understand the problem first; pick the smallest tool that solves it.
