Recommendation Systems (5): Embedding and Representation Learning

How modern recommenders learn dense vector representations for users and items: Word2Vec / Item2Vec, Node2Vec, two-tower DSSM and YouTube DNN, negative sampling, FAISS/HNSW serving, and how to evaluate embedding quality. Concept, math, code and production guidance in one place.

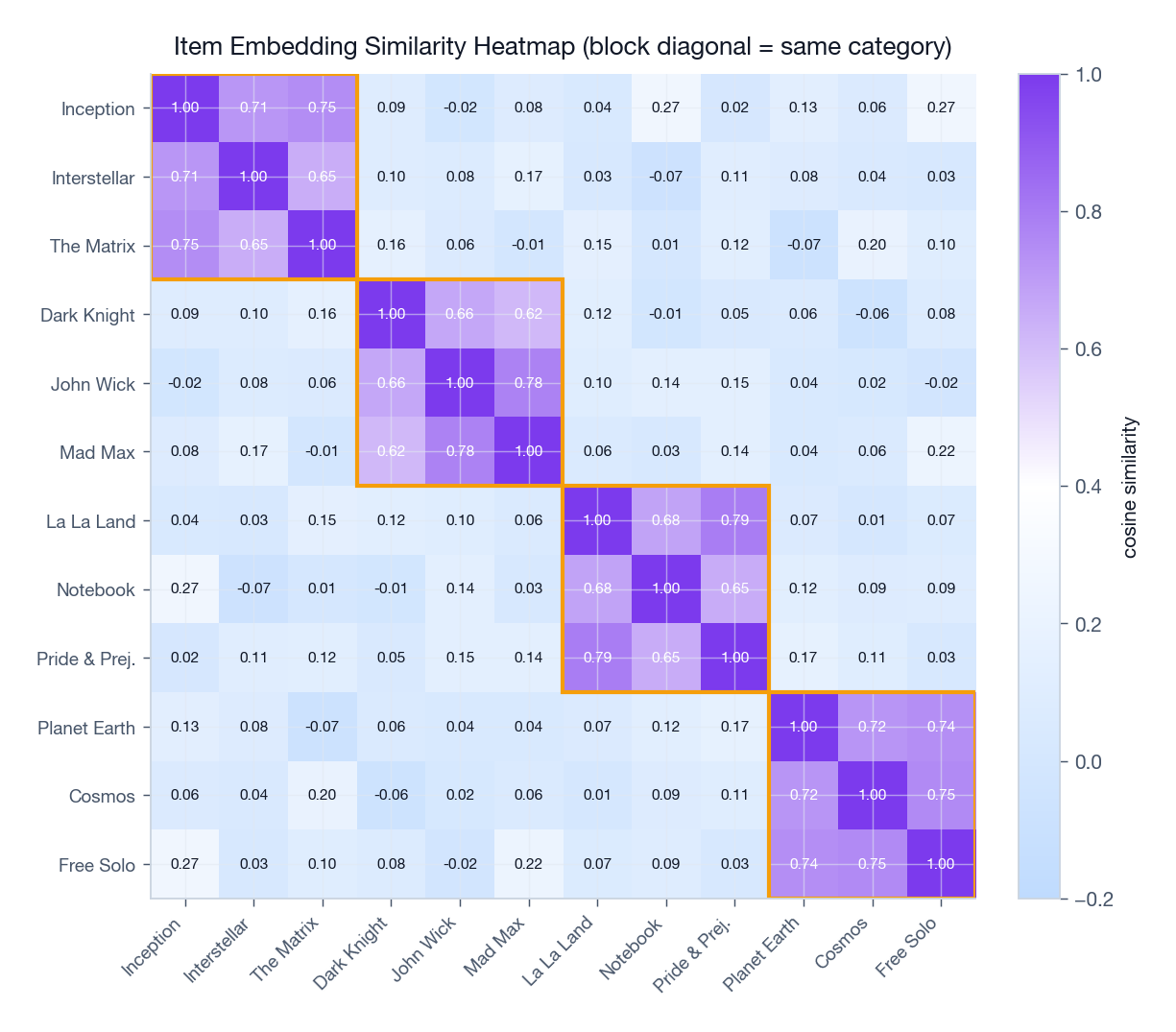

When Netflix suggests Inception to someone who just finished The Dark Knight, the magic is not a hand-crafted “if-watched-Nolan-then” rule. It is geometry. Both films sit close together in a 128-dimensional embedding space that the model has learned from billions of viewing events. Geometry replaces enumeration: instead of comparing a movie to fifteen thousand others through brittle similarity rules, the system asks a single question — how far apart are these two vectors?

This article unpacks how those vectors get learned and served at production scale. We will move from the underlying intuition through five families of techniques (sequence, graph, two-tower, attention-pooled, contrastive), the engineering of negative sampling, and the millisecond-level realities of approximate nearest-neighbour search. Every section is paired with code that compiles and runs.

What You Will Learn#

- What an embedding actually is — and why “low-dimensional” is the entire point

- Sequence-based learning with Word2Vec and Item2Vec, including the Skip-gram derivation

- Graph-based learning with Node2Vec’s biased random walks

- Two-tower architectures (DSSM, YouTube DNN) that dominate large-scale retrieval

- Negative sampling strategies — uniform, popularity-aware, hard, in-batch

- ANN serving with FAISS, HNSW and Annoy, and how to read the latency / recall trade-off

- Evaluation through both intrinsic (coherence, clustering) and extrinsic (HR@K, NDCG) metrics

Prerequisites#

- Linear algebra basics (vectors, dot products, matrix multiplication)

- Familiarity with neural networks and PyTorch

- Recommendation system fundamentals — see Part 1

- Helpful but not required: deep learning for recommendations — see Part 3

Foundations: what an embedding is, and why it matters#

The compression view#

An embedding is a learned function $f : \mathcal{I} \to \mathbb{R}^{d}$ that maps a discrete object — a user, a movie, a SKU, a node in a graph — to a dense vector of $d$ real numbers, where $d$ is much smaller than the catalogue size.

Analogy. Picture a Netflix-style catalogue with a million titles. A naïve representation would need a million-dimensional one-hot vector per movie. Embeddings replace that with, say, 128 numbers per movie — a vector of latent qualities that nobody labelled but the model discovered: how cerebral, how violent, how visually dark, how 1990s.

Three properties make embeddings the lingua franca of modern recommenders:

| Property | What it gives you |

|---|---|

| Density | Every dimension carries information; no wasted bits compared to one-hot vectors. |

| Geometry | “Similar” becomes a measurable quantity — a dot product or a cosine. |

| Composability | Embeddings can be averaged, concatenated, attended over, and indexed for retrieval. |

Why this beats sparse representations#

Real interaction matrices are absurdly sparse. A platform with $10^{8}$ users and $10^{7}$ items has $10^{15}$ possible cells but typically observes fewer than $10^{11}$ — under 0.01% density. Storing or factoring that matrix directly is infeasible. Compressing each row and column into a $d$ -dimensional vector turns the problem into something modern hardware loves: dense matrix multiplications.

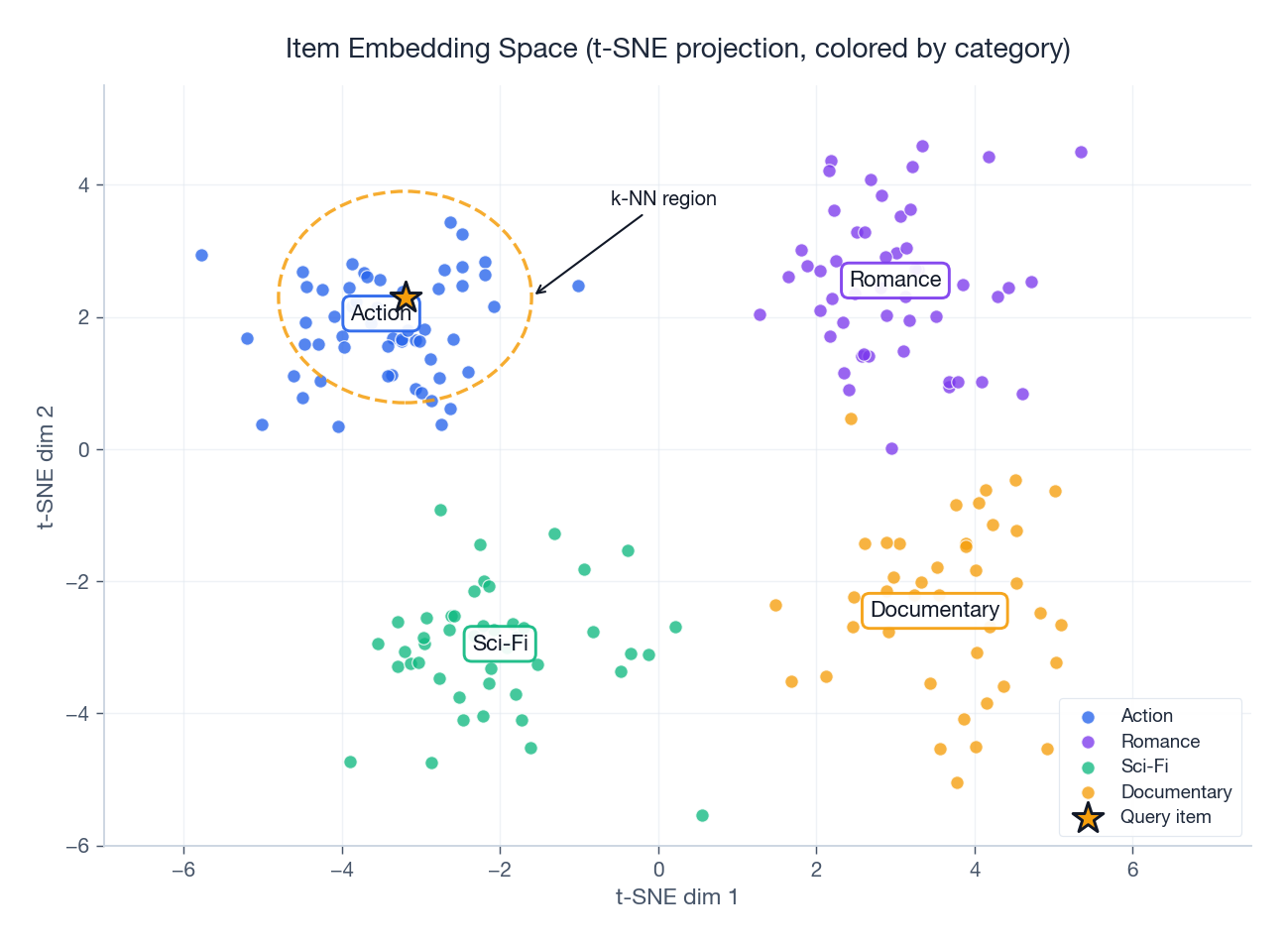

The picture above is the central intuition of this entire article. Items belonging to the same category form clusters; the query item’s neighbours in vector space are exactly the items we want to recommend. Recommendation reduces to nearest-neighbour search in an embedding space.

The learning objective in one sentence#

Almost every embedding method, from matrix factorisation to BERT4Rec, optimises the same idea:

Items that appear in similar contexts should end up with similar vectors; items that do not should be pushed apart.

This is the distributional hypothesis borrowed from linguistics — “you shall know a word by the company it keeps.” In recommender land, “context” can be:

- a co-occurrence in a user session (Item2Vec)

- adjacency in a graph (Node2Vec, GraphSAGE)

- a click on the same query (DSSM)

- a positive label in a CTR log (DeepFM, DLRM)

The choice of context defines the method.

Sequence-based embeddings: Word2Vec and Item2Vec#

From words to items#

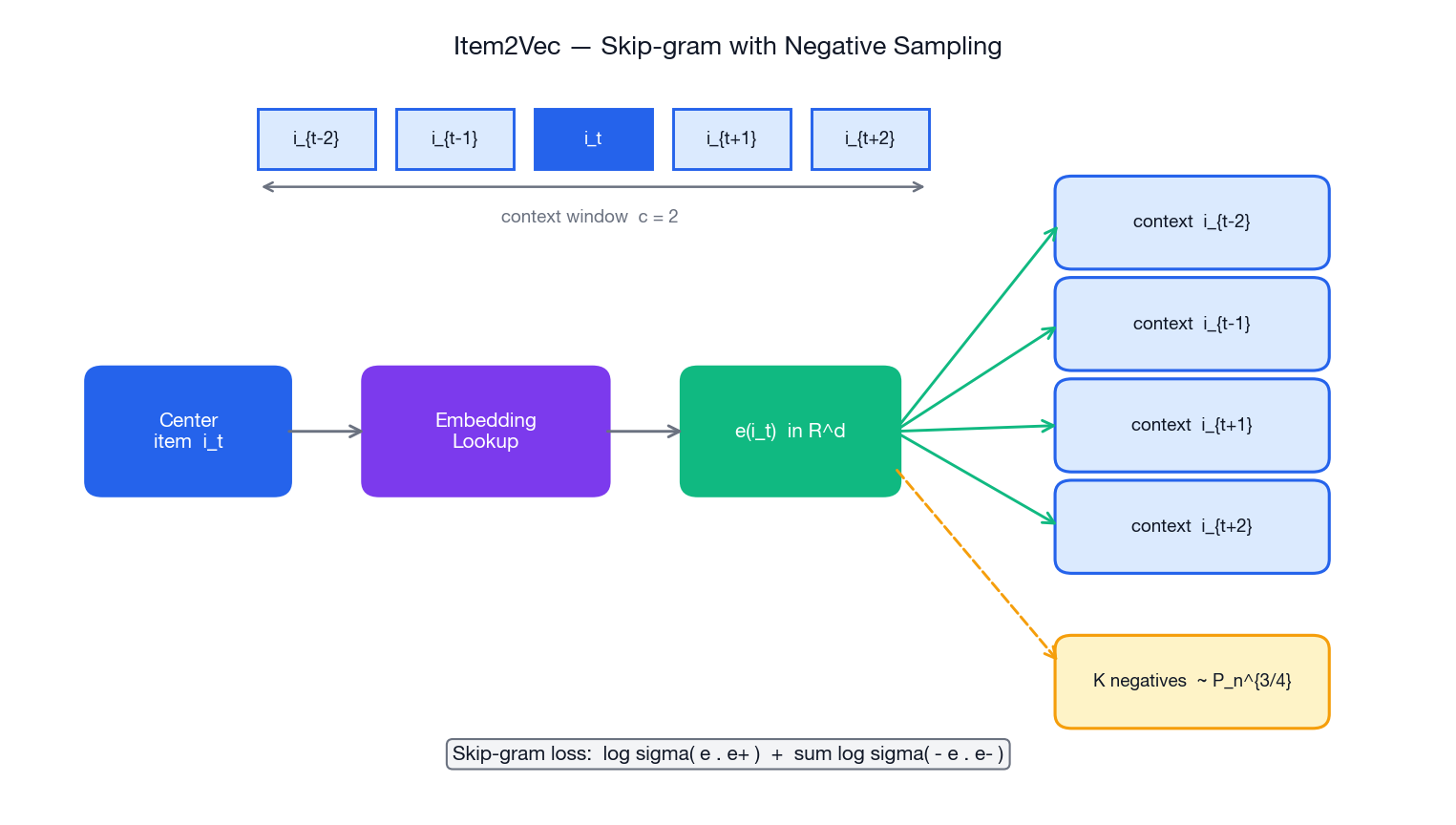

Word2Vec (Mikolov et al., 2013) had two flavours:

- Skip-gram — given a centre word, predict its surrounding context.

- CBOW (continuous bag-of-words) — given the surrounding context, predict the centre word.

Analogy. Skip-gram is like handing someone a single jigsaw piece and asking what surrounds it. CBOW is the reverse — show the surrounding pieces and ask what the missing centre piece is.

Item2Vec (Barkan & Koenigstein, 2016) is the trivial-looking but powerful adaptation: treat a user’s interaction sequence as a sentence and each item as a word. All the Word2Vec machinery transfers verbatim.

The Skip-gram objective, derived#

$$\mathcal{L} \;=\; \sum_{t=1}^{T} \;\sum_{\substack{-c \le j \le c \\ j \ne 0}} \log p\!\left(i_{t+j}\,\mid dle|\,i_t\right).$$ $$p\!\left(i_{t+j}\,\mid dle|\,i_t\right) \;=\; \frac{\exp\!\left(\mathbf{e}_{i_t}^{\top}\mathbf{e}'_{i_{t+j}}\right)}{\displaystyle\sum_{k=1}^{|\mathcal{I}|} \exp\!\left(\mathbf{e}_{i_t}^{\top}\mathbf{e}'_{k}\right)}.$$Here $\mathbf{e}_i$ is the input (centre) embedding and $\mathbf{e}'_i$ is the output (context) embedding. Two sets of vectors per item — a small surprise the first time you see it.

$$ \mathcal{L} \;=\; \sum_{(i_t,\,i_c)} \!\left[\, \log\sigma(\mathbf{e}_{i_t}^{\top}\mathbf{e}'_{i_c}) \;+\; \sum_{k=1}^{K} \mathbb{E}_{i_k \sim P_n}\!\big[\log\sigma(-\mathbf{e}_{i_t}^{\top}\mathbf{e}'_{i_k})\big]\right], $$where $\sigma$ is the sigmoid. The noise distribution $P_n$ is the unigram frequency raised to the $3/4$ power — a famous Mikolov heuristic that nudges rarer items into the negative pool more than pure frequency would.

Implementation#

A self-contained Item2Vec with Skip-gram + negative sampling. Read it once paying attention to the two embedding tables, the score clamping, and the sigmoid trick.

| |

Design decisions worth defending#

| Decision | Choice | Why it matters |

|---|---|---|

| Two embedding tables | Separate centre / context | Gives the model more room to encode “what I am” vs. “what I appear next to”. Standard in Word2Vec. |

| Negative count $K$ | 5 – 20 | Small $K$ trains fast; large $K$ better discriminates long-tail items. Diminishing returns past 20. |

| Window size $c$ | 2 – 5 | Bigger windows pick up more co-occurrence noise. Sessions are short; a small window is usually enough. |

| Negative distribution | $P_n \propto f^{0.75}$ | Pure-frequency over-samples blockbusters; uniform over-samples obscure long tails. The 3/4 exponent is the empirical sweet spot Mikolov found. |

clamp(-10, 10) on dot products | Numerical guard | A single overflowed sigmoid can NaN the whole epoch. Cheap insurance. |

Field-tested gotchas#

- Cold-start items. An item with zero training interactions has no embedding. Three remedies: (1) train a side network from item content (text, image) to predict its embedding; (2) initialise from the average of categorically similar items; (3) accept randomness and let early online traffic pull it into place.

- Variable session length. Truncate to the most recent $N$ items (typically 50 – 100) and pad shorter sessions. For very long sessions, segment into windows.

- Negative collisions. With a million-item catalogue, drawing a “false negative” that is actually positive happens with probability $< 0.1\%$ — ignore it. With a 1k-item catalogue, explicitly exclude positives.

- Repeated items. Power users replay the same song fifty times. De-duplicate consecutive repeats before building pairs, otherwise the model just learns “this song is similar to itself.”

CBOW: the symmetric variant#

CBOW averages the surrounding context embeddings into one vector and uses that to predict the centre. It trains faster and tends to be slightly worse on long-tail items — Skip-gram sees each context item independently while CBOW averages everything into a single shot.

| |

Pick Skip-gram for recommenders by default. Item popularity follows a long tail; Skip-gram allocates gradient to rare items more generously than CBOW.

Graph-based embeddings: Node2Vec#

When sequences are not enough#

Item2Vec only sees what is adjacent in time. But the relationships in a marketplace look more like a graph: items are co-purchased, co-viewed, share a category, share a brand. The same item can be relevant in two completely different “neighbourhoods” — a hiking tent is near “camping” and near “cycling gear”. Sequence models flatten that structure; graph models preserve it.

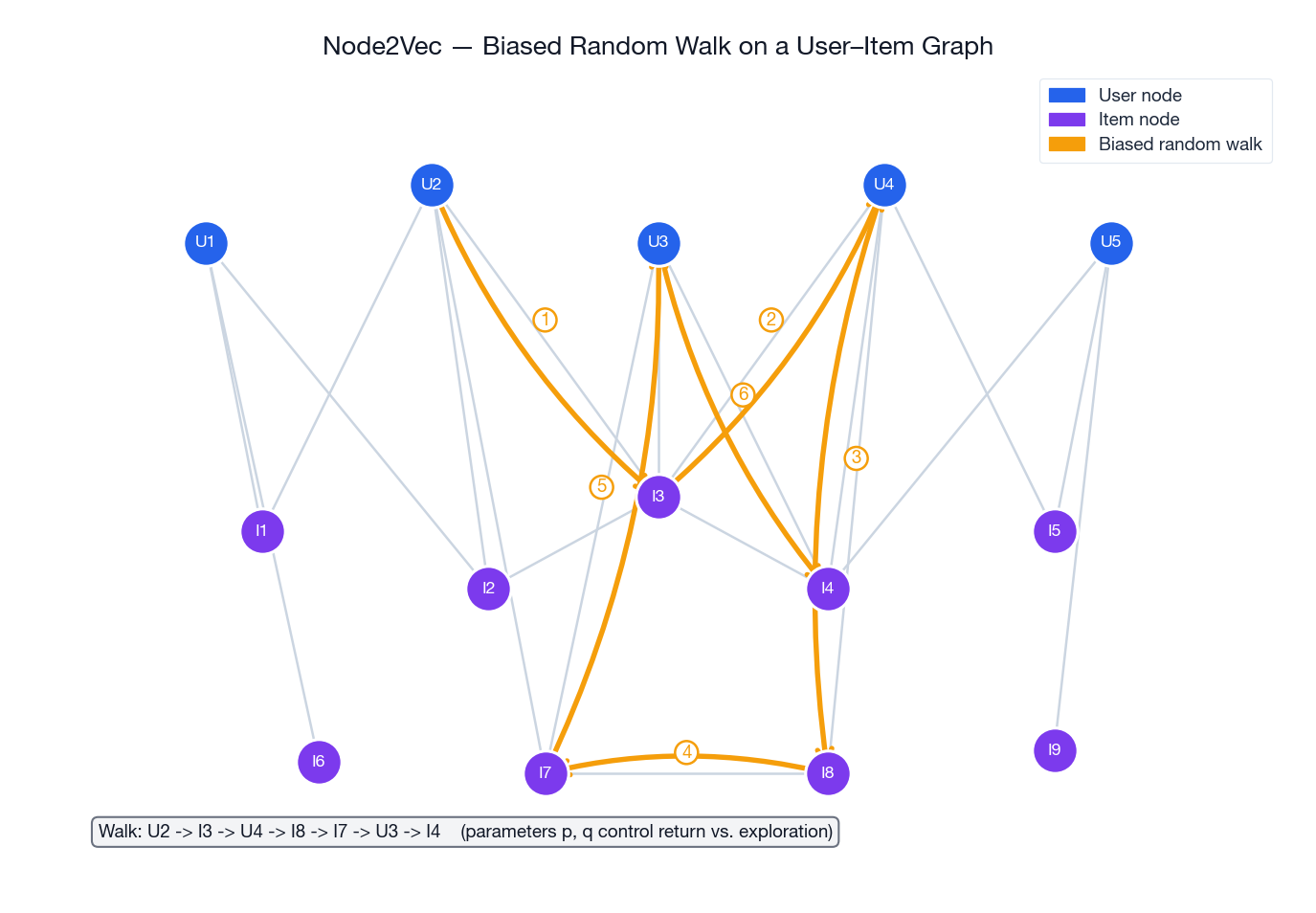

The biased random walk#

Node2Vec (Grover & Leskovec, 2016) takes a graph and produces “sentences” by walking from node to node. The trick is how it walks: a tunable bias makes the walker prefer either staying close to home (BFS-like, captures community) or wandering far (DFS-like, captures structural roles).

$$ \alpha_{p, q}(t, x) \;=\; \begin{cases} 1/p & \text{if } d_{t,x} = 0 \;\;\text{(go back to } t\text{)} \\ 1 & \text{if } d_{t,x} = 1 \;\;\text{(}x\text{ is also a neighbour of } t\text{)} \\ 1/q & \text{if } d_{t,x} = 2 \;\;\text{(}x\text{ is one step further away)} \end{cases} $$Analogy. Imagine exploring a city. BFS-style is “every shop on this street, then the next street” — you map out one neighbourhood thoroughly. DFS-style is “follow the main road for ten kilometres” — you discover how neighbourhoods connect to each other. Node2Vec lets you dial between the two.

| Setting | Walk behaviour | Captures |

|---|---|---|

| small $p$ , large $q$ | BFS-like | Community / cluster structure |

| large $p$ , small $q$ | DFS-like | Structural equivalence, hub-spoke patterns |

| $p = q = 1$ | Plain random walk (DeepWalk) | Mix of both |

Once you have a corpus of walks, you feed them into Skip-gram exactly as in Item2Vec — the algorithm does not care whether the “sentence” came from user history or graph traversal.

Implementation#

| |

Building the graph in the first place#

Most production deployments do not start with a clean graph; they construct one from interaction logs. A common recipe uses Jaccard similarity over user sets to weight edges:

| |

Practitioner tip. The pairwise loop above is $O(N^2)$ in items. For a real catalogue, build an inverted index by user, iterate per user, and only generate candidate pairs that share at least one user. That brings it down to $O(\sum_u |I_u|^2)$ , which is dramatically smaller in practice.

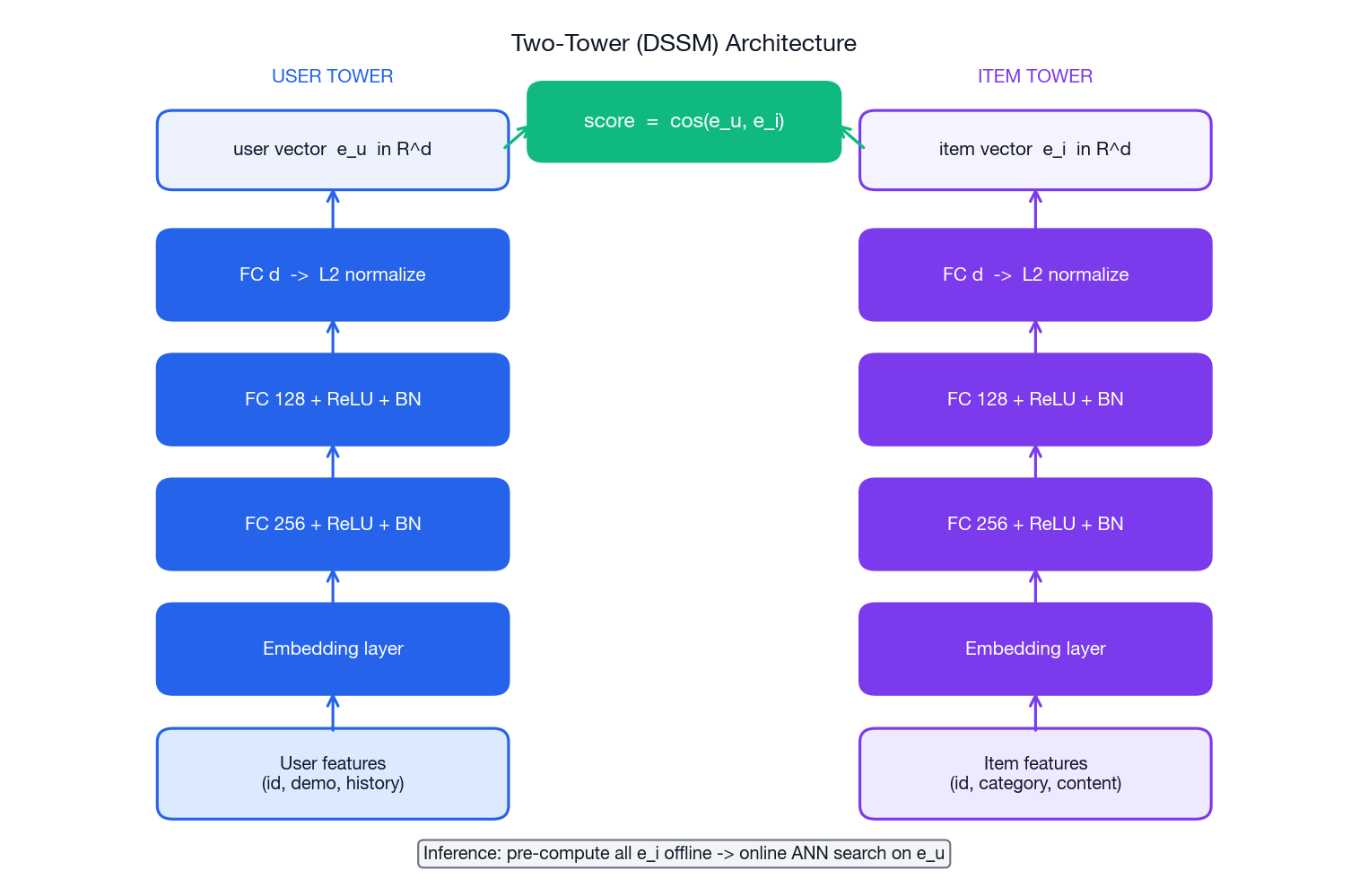

Two-tower models: separate user and item encoders#

Why two towers, not one#

Concatenate user features with item features, push them through a single network, get a score. Why not? Because at serving time you would have to run the network for every (user, candidate) pair. With ten million candidates that is ten million forward passes per request.

$$ \mathbf{e}_u = f_u(\mathbf{x}_u; \theta_u), \qquad \mathbf{e}_i = f_i(\mathbf{x}_i; \theta_i), \qquad s(u, i) = \cos(\mathbf{e}_u, \mathbf{e}_i). $$Analogy. Think of a dating app. One tower writes everyone’s profile; the other tower writes everyone’s “what I look for” preferences. At match time you just compare profiles — you do not re-run the matching algorithm from scratch for every potential pair.

The architectural payoff is enormous:

- Pre-compute all item vectors offline. Run the item tower once per item and store the result.

- At serving time, run only the user tower. One forward pass per request.

- Use ANN search to find the top-K nearest item vectors in milliseconds.

This is the canonical recipe for the retrieval (a.k.a. recall) stage of a modern recommendation pipeline.

DSSM in code#

DSSM (Huang et al., Microsoft, 2013) is the canonical two-tower model. Originally designed for web search, it ships in essentially every large recommender today.

| |

A few non-obvious choices baked into this 30-line implementation:

- L2-normalise the outputs. Cosine similarity equals an inner product on unit-norm vectors, so this lets you index with FAISS’s inner-product flavour.

- Temperature. Dividing logits by a small $\tau \in [0.05, 0.2]$ makes the softmax sharper. Without it, cosine similarities live in $[-1, 1]$ — a very flat distribution that learns slowly.

BatchNormbetween layers. Keeps activations on a sane scale through the depth of the tower; especially important when input features mix wildly different magnitudes.- Cross-entropy with the positive at index 0. Cleanly extends to in-batch negatives by using every other item in the batch as a negative — see Section 5 .

YouTube DNN: pooling a user’s history#

The setup#

YouTube DNN (Covington et al., 2016) is a celebrated two-tower variant tailored to video recommendation, where each user is described by a sequence of recently watched videos rather than a flat feature vector. The user tower’s job is to pool that sequence into one vector.

Original YouTube DNN does the simplest possible thing: mean-pool the embeddings of the watched videos, then concatenate with demographics, then push through a 2-layer MLP. Average pooling is unbeatable on latency, and at YouTube’s scale every saved millisecond adds up to data-centre money.

| |

When pooling is too crude: attention#

Average pooling treats every watched video equally. In reality, a user who just watched ten cooking videos and one ten-second car ad probably wants more cooking, not more cars. Self-attention lets the model learn the weighting:

| |

The classic trade-off. Mean pooling is $O(T)$ in history length and trivially fast. Self-attention is $O(T^2)$ in compute and $O(T)$ in memory but typically improves recall by a few percent. For real-time retrieval over a long history, target attention against the candidate item (DIN, Zhou et al., 2018 ) is often a better engineering compromise.

Negative sampling strategies#

Positives are expensive — they come from real user behaviour. Negatives are cheap — there are millions of them. The art is picking the right cheap negatives, because random ones are too easy and trivially-hard ones destabilise training.

The four strategies#

| Strategy | Mechanism | When to use |

|---|---|---|

| Uniform | Pick negatives uniformly at random from the catalogue. | Simple baseline; works on small catalogues. |

| Popularity-aware | Sample with $P_n \propto f^{0.75}$ . | Default for large catalogues; counters popularity bias. |

| Hard-negative mining | Pick items that look relevant to the user but were not interacted with. | Late-stage fine-tuning; sharpens the decision boundary. |

| In-batch negatives | Use other positives in the same minibatch as your negatives. | Two-tower training at scale; effectively free. |

Why in-batch negatives are the workhorse#

In a batch of $B$ (user, positive item) pairs, every other positive’s item embedding can serve as a negative for every user. That gives you $B - 1$ negatives per row at no extra cost. Variant: add a popularity correction term to undo the bias of in-batch sampling — popular items appear in batches more often and get penalised too hard. The standard correction (Yi et al., 2019, “Sampling-Bias-Corrected Neural Modeling”) subtracts $\log p(i)$ from the logit before softmax.

Implementation snippets#

| |

Curriculum. Start training with uniform / popularity-aware negatives for stability. After a few epochs, mix in 10 – 30% hard negatives to sharpen the boundary. Pure hard-negative training from scratch tends to collapse — the model has not learned coarse separation yet, so “hard” is just “noise.”

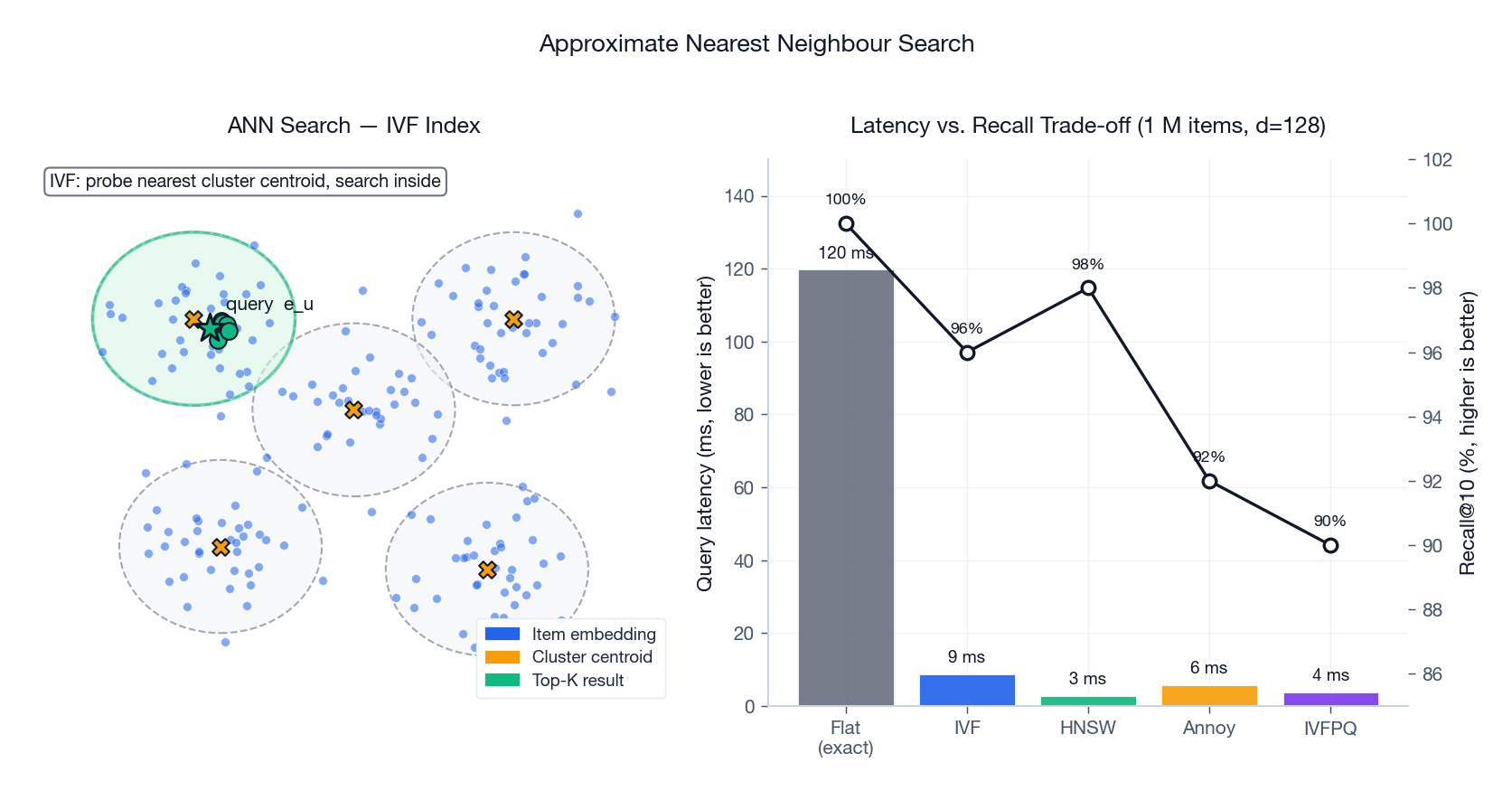

Approximate nearest-neighbour search#

The serving problem#

You have a one-million-item index and a hundred-millisecond budget. A brute-force scan is $O(Nd)$ per query — about $1.3 \times 10^{8}$ multiply-adds for $d=128$ . Doable on a beefy CPU, undoable on a fleet of them at QPS in the tens of thousands. Approximate nearest-neighbour search trades 1 – 5% recall for a 10 – 100× speedup.

The three index families#

| Family | Idea | Strength |

|---|---|---|

| Inverted file (IVF) | k-means clusters the vectors; at query time only the nearest few centroids are scanned. | Tunable speed / recall; great for medium-to-large indexes. |

| Hierarchical Navigable Small World (HNSW) | A multi-layer proximity graph with greedy navigation. | Best raw query speed at high recall; modest memory cost. |

| Product Quantisation (PQ) | Compresses vectors into a few bytes by quantising sub-vectors independently. Usually layered onto IVF (IVFPQ). | Massive memory savings; mandatory at billion-scale. |

Annoy (Spotify) uses random projection trees — simpler API, excellent for quick prototypes but typically slower than HNSW at the same recall.

FAISS in production#

| |

Benchmarks at a glance#

| Index | Build time | Query time | Memory | Recall@10 | Best for |

|---|---|---|---|---|---|

| Flat | seconds | $O(N)$ — slow | $4Nd$ B | 100% | Ground truth, small catalogues |

| IVF | minutes | fast | $4Nd$ B | 95 – 99% | General large-scale, tunable |

| HNSW | tens of minutes | very fast | $4Nd + $ graph | 95 – 99% | Tight latency budgets |

| IVFPQ | minutes | fast | $\sim N$ B (8× compression) | 90 – 95% | Billion-scale, RAM-bound |

| Annoy | minutes | fast | medium | 90 – 95% | Static indexes, simple deployment |

Operational rules of thumb. Use IVF with

nprobe = sqrt(nlist)as a starting point. Setnlist ≈ sqrt(N). For an HNSW graph,M = 32andefSearch = 100are sane defaults. Always benchmark on your vectors — recall numbers from generic benchmarks are surprisingly volatile when the cluster geometry changes.

Evaluating embedding quality#

Embeddings are not directly visible to users, so you need proxies. Use both intrinsic metrics (purely about the vectors) and extrinsic metrics (about the recommendations they produce).

Intrinsic — does the geometry look right?#

| |

Extrinsic — does the system recommend better?#

| |

A real evaluation suite tracks more than accuracy. Coverage (what fraction of the catalogue ever gets recommended), diversity (intra-list dissimilarity), serendipity (recommendations far from the user’s history that still convert), and freshness all matter. A model that wins HR@10 but only ever recommends the top 1% of items is a popularity baseline in disguise.

FAQ#

Q1. Item2Vec vs. matrix factorisation — when do I pick which?#

Matrix factorisation (MF) learns from the whole user-item matrix and captures global preference patterns. Item2Vec learns from sequences and captures temporal co-occurrence. If your data is naturally sequential (playlists, sessions, watch logs) Item2Vec is usually stronger; if you have explicit ratings or stable long-term preferences, MF is competitive and far simpler to train.

Q2. How do I pick the embedding dimension?#

| Catalogue size | Typical $d$ |

|---|---|

| < 10K items | 32 – 64 |

| 10K – 1M items | 64 – 128 |

| > 1M items | 128 – 512 |

Doubling $d$ doubles index size and query latency without doubling quality — diminishing returns set in fast. Validate on HR@K and NDCG, not on training loss.

Q3. Skip-gram or CBOW for items?#

Skip-gram for almost everything in recommendations. It allocates more gradient to long-tail items, which is exactly the regime where recommenders need help.

Q4. When is Node2Vec strictly better than Item2Vec?#

When your relationships are not naturally sequential. Co-purchase graphs, social networks, knowledge graphs, item-attribute graphs — anything where “neighbour” has a meaning beyond “appeared next to in time.”

Q5. Why not just train one big model end-to-end?#

A single-tower model that takes both user and item features cannot factor into independent encoders, so retrieval becomes $O(N)$ per query. Two-tower trades a bit of expressiveness for the ability to pre-compute and index — at scale, that trade is non-negotiable.

Q6. How do I handle cold-start?#

- New users: rely on demographic / context features in the user tower (no ID needed).

- New items: use a content-based item tower (text, image, category embeddings).

- Hybrid: sum a learned ID embedding with a content embedding; the ID part learns once data accumulates, the content part carries the new item.

Q7. What is the right negative sampling recipe?#

Start with uniform or popularity-aware sampling for stability. After a few epochs, blend in 10 – 30% hard negatives. Use in-batch negatives whenever you can — they are essentially free.

Q8. FAISS vs. HNSW vs. Annoy?#

- FAISS is the production standard for very large indexes (> 10M items), supports GPUs, and has the richest set of index types.

- HNSW (via

hnswlibor FAISS’sIndexHNSWFlat) gives the best raw query speed at high recall and is the right default for tight latency budgets. - Annoy is great for prototyping or static indexes you can rebuild offline.

Q9. How do I sanity-check embeddings without labels?#

Inspect nearest neighbours visually for a handful of items you know well. Plot a t-SNE / UMAP and check whether known categories cluster. Compute a silhouette score against any auxiliary categorical label.

Q10. Dot product or cosine similarity?#

Cosine similarity is direction-only; magnitudes cancel. Dot product also rewards larger magnitudes — which can be useful if you want popular items to score higher (their embeddings tend to grow longer during training). For most retrieval setups, L2-normalise everything and use dot product = cosine. That also lets you index with FAISS’s inner-product mode.

Series Navigation#

- Previous: Part 4 — CTR Prediction and Click-Through Rate Modeling

- Next: Part 6 — Sequential Recommendation and Session-based Modeling

- Back to Series Overview (Part 1)

Summary#

- Embeddings are dense, learned vectors that turn discrete identity into geometry. Recommendation becomes nearest-neighbour search.

- Choose the method by the shape of your data. Sequences → Item2Vec. Graphs → Node2Vec. Rich features and large catalogues → two-tower (DSSM, YouTube DNN).

- Negative sampling is half of the model. Mix uniform / popularity-aware / hard / in-batch and watch curriculum effects.

- Two-tower architectures are the production default for retrieval because they make item vectors pre-computable and indexable.

- ANN search makes serving feasible. Pick FAISS-IVF, HNSW or Annoy by your latency / recall / memory budget; benchmark on your data.

- Evaluate with intrinsic and extrinsic metrics. Track coverage and diversity alongside HR@K and NDCG, or you will silently drift toward popularity.

Recommendation Systems 16 parts

- 01 Recommendation Systems (1): Fundamentals and Core Concepts

- 02 Recommendation Systems (2): Collaborative Filtering and Matrix Factorization

- 03 Recommendation Systems (3): Deep Learning Foundations

- 04 Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

- 05 Recommendation Systems (5): Embedding and Representation Learning you are here

- 06 Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

- 07 Recommendation Systems (7): Graph Neural Networks and Social Recommendation

- 08 Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

- 09 Recommendation Systems (9): Multi-Task Learning and Multi-Objective Optimization

- 10 Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

- 11 Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

- 12 Recommendation Systems (12): Large Language Models and Recommendation

- 13 Recommendation Systems (13): Fairness, Debiasing, and Explainability

- 14 Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

- 15 Recommendation Systems (15): Real-Time Recommendation and Online Learning

- 16 Recommendation Systems (16): Industrial Architecture and Best Practices