Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

How recommenders use the order of user actions to predict the next one. Markov chains, GRU4Rec, Caser, SASRec, BERT4Rec, BST, and SR-GNN, with implementations and intuition.

When you scroll TikTok, every recommendation feels eerily on-point — not because the system reads your mind, but because it reads the order of what you just watched. A cooking video followed by a travel vlog tells a different story than the same two clips in reverse. That ordering is exactly the signal that sequential recommenders are built to exploit.

Compare two friends recommending shows. The first knows your favourite genres but never asks what you watched last week. The second says, “You just finished three sci-fi thrillers in a row — try this one.” Traditional collaborative filtering is friend one. Sequential recommendation is friend two.

What You Will Learn#

- Why order matters, and how sequential models depart from set-based collaborative filtering

- Markov chains as the simplest sequential baseline — interpretable, sparse, and surprisingly resilient

- GRU4Rec, the first deep-learning model to take session-based recommendation seriously

- Caser, which treats the sequence as an “image” and runs CNN filters across it

- SASRec and BERT4Rec, the Transformer-era unidirectional and bidirectional models

- BST, the Behavior Sequence Transformer that pulls in side features

- SR-GNN, which represents a session as a directed graph

- Evaluation metrics (HR@K, NDCG@K, MRR) and production tradeoffs

Prerequisites#

- Comfort with neural networks (RNNs, CNNs, Transformers)

- Basic PyTorch

- Recommendation fundamentals from Part 1

- Embedding techniques from Part 5 help

What is sequential recommendation?#

Definition#

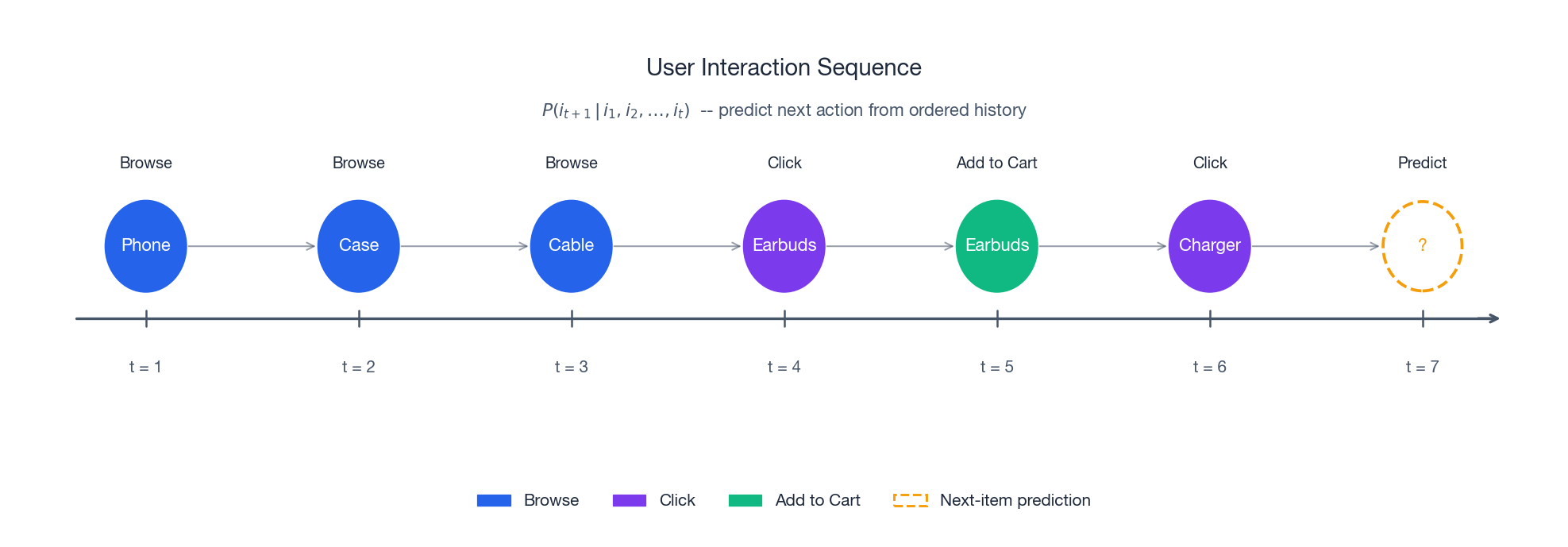

A sequential recommender models user preferences using the temporal order of interactions. Where traditional collaborative filtering treats a user’s history as a bag of items, a sequential model treats it as a stream — and that stream carries information.

Formally, for a user $u$ with interaction sequence $S_u = (i_1, i_2, \dots, i_t)$, the model estimates:

$$P(i_{t+1} \mid S_u) = P(i_{t+1} \mid i_1, i_2, \dots, i_t).$$

In plain English: given everything the user has done so far, in order, what comes next? The probability depends not just on which items appeared but on how they were arranged in time.

Why this matters#

There are four reasons sequential modelling pays off in production:

- Drifting taste. A user who binged action films last month might be on a documentary kick this week. Static models miss the shift; sequential ones absorb it.

- Local context. After watching a movie trailer, the next thing a user wants is the movie itself, not a random recommendation. Sequential context captures that.

- Session intent. On a shopping site, a user browsing laptops will probably look at laptop bags next — a pattern that lives in the transition, not in the marginal popularity of bags.

- Cold-start dampening. Even users with three interactions carry signal in their order; set-based models throw that signal away.

Sequential vs. classical recommendation#

| Aspect | Classical CF | Sequential |

|---|---|---|

| Input | Unordered set of interactions | Ordered sequence |

| Temporal modelling | Ignored | First-class |

| Prediction target | $P(i \mid u)$ | $P(i_{t+1} \mid S_u)$ |

| Strength | Long-term taste | Next-action prediction |

| Typical pitch | “Users like you also liked…” | “Based on your last few actions…” |

Three flavours#

- User-based sequential. Treats the user’s entire history as one long sequence. Good for capturing slow taste evolution (months of music listening).

- Session-based. Treats each short session as an independent sequence. Ideal when users are anonymous or intent is short-lived (a single shopping session).

- Hybrid. Uses long-term embeddings for “who you are” and the current session for “what you want right now.” Production systems usually land here.

Markov chain models#

First-order chains#

A first-order chain assumes the next item depends only on the current one:

$$P(i_{t+1} \mid i_1, \dots, i_t) = P(i_{t+1} \mid i_t).$$

Analogy. This is like predicting the next word in a sentence using only the current word. If the current word is “ice,” you might guess “cream,” but you have no idea whether the conversation is about dessert or hockey.

The transition probabilities are estimated from counts:

$$M_{ij} = \frac{\text{count}(i \to j)}{\text{count}(i)}.$$

In words: how often $j$ follows $i$, divided by how often $i$ appears (excluding final positions, where nothing follows).

Higher-order chains#

A $k$-th order chain conditions on the last $k$ items:

$$P(i_{t+1} \mid i_1, \dots, i_t) = P(i_{t+1} \mid i_{t-k+1}, \dots, i_t).$$

Higher orders capture more context but burn through your data: with 10,000 items, a second-order model conditions on $10^8$ possible item pairs and has $10^{12}$ possible transitions, almost all of which are never observed. This is the curse of dimensionality in disguise.

Implementation#

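A minimal sketch of a first-order chain in plain Python, assuming sessions arrive as lists of hashable item IDs. The class name `FirstOrderMarkov` and its methods are illustrative and not taken from any library, and no smoothing is applied.

```python
from collections import Counter, defaultdict


class FirstOrderMarkov:
    """First-order Markov chain recommender estimated from transition counts."""

    def __init__(self):
        # counts[i][j] = number of times item j directly followed item i
        self.counts = defaultdict(Counter)

    def fit(self, sessions):
        for session in sessions:
            # zip pairs each item with its successor, so the final item of a
            # session is never counted as a source (matches the M_ij definition).
            for cur, nxt in zip(session, session[1:]):
                self.counts[cur][nxt] += 1
        return self

    def transition_prob(self, i, j):
        followers = self.counts.get(i)
        if not followers:
            return 0.0  # item i was never observed with a successor
        return followers[j] / sum(followers.values())

    def recommend(self, session, k=3):
        # Only the last item of the session matters for a first-order chain.
        followers = self.counts.get(session[-1]) if session else None
        if not followers:
            return []
        return [item for item, _ in followers.most_common(k)]


sessions = [["a", "b", "c"], ["a", "b", "d"], ["a", "c"]]
mc = FirstOrderMarkov().fit(sessions)
print(mc.transition_prob("a", "b"), mc.recommend(["x", "a"], k=2))
```

An unseen last item returns an empty list here; in practice one would likely fall back to popular items, which is the sparsity problem discussed next.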

Where Markov chains break#

Sparsity. For large catalogues most transitions never appear. Smoothing helps but does not fix the underlying problem.

Tunnel vision. Even a high-order chain only sees a fixed window. It cannot represent a statement like “the user has been into photography for the past month.”

No generalization. If items A and B are functionally similar, the chain still treats them as completely independent — there is no shared representation.

These limitations are exactly the gap that neural sequence models fill.

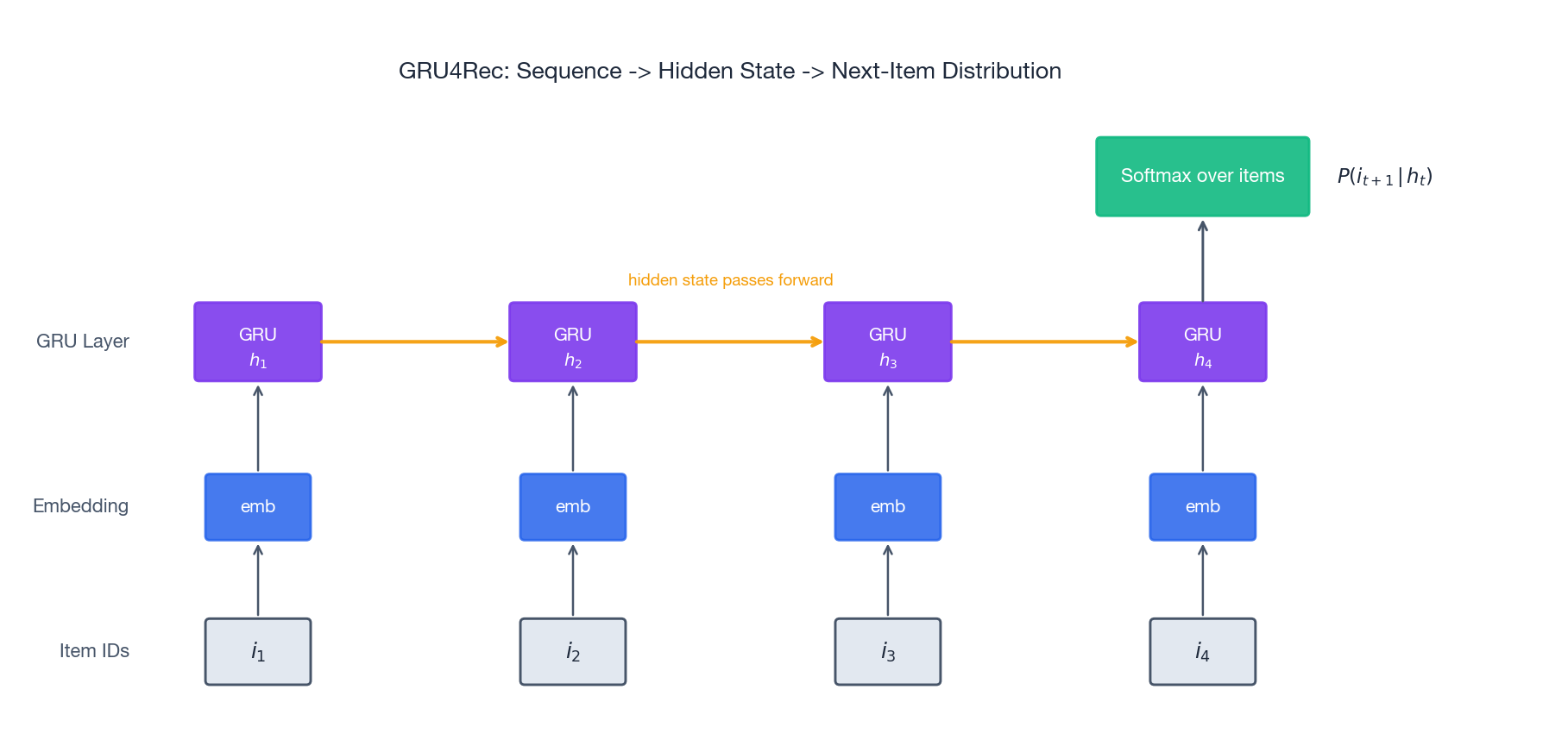

GRU4Rec: RNN-based sequential recommendation#

Architecture overview#

GRU4Rec (Hidasi et al., 2015) was the first deep model to take session-based recommendation seriously. It uses Gated Recurrent Units to consume items one at a time and maintain a running summary of the session.

Key idea. Instead of treating sessions as bags of items, GRU4Rec walks through them sequentially, updating a hidden state that compresses everything seen so far.

How a GRU updates its state#

A GRU cell maintains a hidden state $h_t$. Given the input $x_t$ (the embedding of item $i_t$) and the previous hidden state $h_{t-1}$:

$$r_t = \sigma(W_r x_t + U_r h_{t-1} + b_r)$$

$$z_t = \sigma(W_z x_t + U_z h_{t-1} + b_z)$$

$$\tilde{h}_t = \tanh(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h)$$

$$h_t = (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t$$

Plain English. The GRU reads items one at a time and keeps a “memory” vector. The reset gate decides how much of the old memory to wipe; the update gate decides how much new information to mix in. The final hidden state is a learned summary of the whole session.

The full pipeline is: Embedding → GRU → linear projection → one score per item in the vocabulary. For implicit feedback the original paper trains with a pairwise ranking loss (BPR or TOP1); softmax cross-entropy over the scores is a common alternative.

Implementation#

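A minimal PyTorch sketch of the pipeline above, not the authors' reference code. It assumes item IDs run from 1 to `num_items` with 0 reserved for padding, and that sessions are right-padded so the hidden state at the last real item can be picked out by length. The names `GRU4Rec` and `bpr_loss` and all hyperparameters are illustrative.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class GRU4Rec(nn.Module):
    def __init__(self, num_items, embed_dim=64, hidden_dim=128, num_layers=1):
        super().__init__()
        # Index 0 is the padding item; real items are 1..num_items.
        self.embedding = nn.Embedding(num_items + 1, embed_dim, padding_idx=0)
        self.gru = nn.GRU(embed_dim, hidden_dim, num_layers=num_layers,
                          batch_first=True)
        self.output = nn.Linear(hidden_dim, num_items + 1)

    def forward(self, item_seq, lengths):
        # item_seq: (batch, seq_len), right-padded with 0
        # lengths:  (batch,) number of real items in each session
        emb = self.embedding(item_seq)
        hidden_states, _ = self.gru(emb)            # (batch, seq_len, hidden)
        # Gather the hidden state at the last real item of each session.
        idx = (lengths - 1).view(-1, 1, 1).expand(-1, 1, hidden_states.size(-1))
        last = hidden_states.gather(1, idx).squeeze(1)
        return self.output(last)                    # (batch, num_items + 1)


def bpr_loss(scores, pos_items, neg_items):
    """Pairwise BPR loss: push the positive item above sampled negatives."""
    pos = scores.gather(1, pos_items.unsqueeze(1))  # (batch, 1)
    neg = scores.gather(1, neg_items)               # (batch, n_neg)
    return -F.logsigmoid(pos - neg).mean()


model = GRU4Rec(num_items=50)
seq = torch.tensor([[3, 7, 9, 0], [5, 2, 0, 0]])    # two right-padded sessions
scores = model(seq, lengths=torch.tensor([3, 2]))
loss = bpr_loss(scores, torch.tensor([4, 8]), torch.tensor([[1, 2], [3, 6]]))
```

Because a GRU only looks backwards, padding placed after the real items cannot influence the gathered state. At serving time the hidden state can instead be carried across requests and updated one click at a time.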

Strengths and limitations#

| Strengths | Limitations |

|---|---|

| Captures sequential dependencies naturally | Sequential by construction — no parallelism over time steps |

| Handles variable-length sequences gracefully | Long-range dependencies fade with distance |

| Compact, well-understood architecture | Fixed hidden state caps memory capacity |

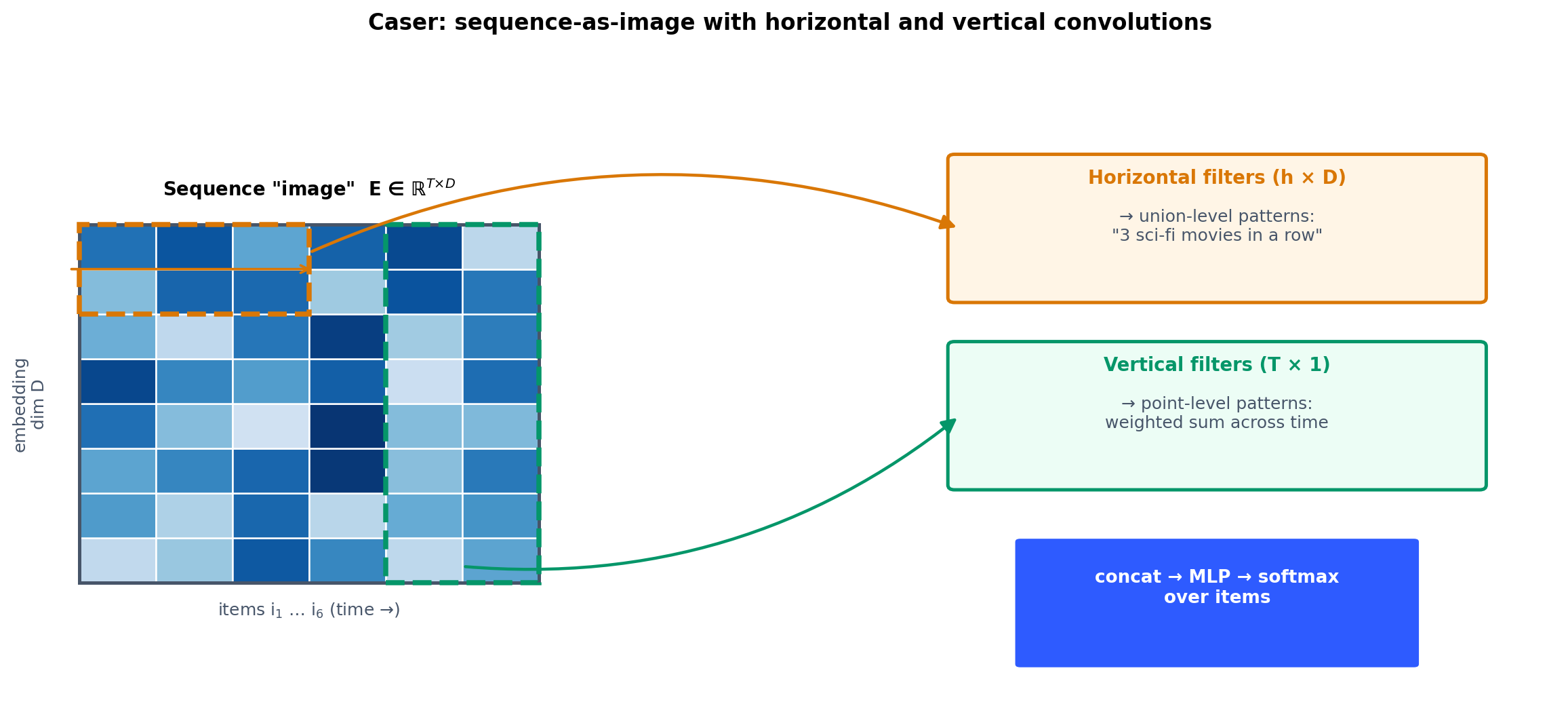

Caser: convolutional sequence embedding#

Motivation#

Caser (Tang and Wang, 2018) flips the problem on its head. Instead of walking through the sequence step by step, it lays the embedded sequence out as an image and runs convolutions over it. Different filter sizes catch patterns of different lengths in parallel.

Analogy. If GRU4Rec reads a sentence word by word, Caser looks at it all at once with a set of differently-sized magnifying glasses — one for pairs, one for triplets, one for longer sub-phrases.

Architecture#

Caser applies two filter families in parallel to the $t \times d$ embedding matrix $\mathbf{E}$ (sequence length $t$, embedding dimension $d$):

- Horizontal filters slide along the sequence to capture union-level n-gram patterns. Heights of 2, 3, 4 give bigram, trigram, and 4-gram detectors.

- Vertical filters sweep the embedding dimension to capture point-level patterns: latent features of individual items aggregated across time.

For a horizontal filter of height $h$:

$$\mathbf{c}_h = \text{ReLU}(\text{Conv}_h(\mathbf{E}))$$

The two outputs are pooled, concatenated, and pushed through a fully-connected head.

Implementation#

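A compact PyTorch sketch of the two filter families, under simplifying assumptions: fixed-length input windows, 0 as the padding ID, and no user embedding (the published model also concatenates one). The class name `Caser` and the filter counts `n_h` and `n_v` are illustrative choices.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Caser(nn.Module):
    def __init__(self, num_items, seq_len=5, embed_dim=32,
                 heights=(2, 3, 4), n_h=16, n_v=4):
        super().__init__()
        self.embedding = nn.Embedding(num_items + 1, embed_dim, padding_idx=0)
        # Horizontal filters: height h, spanning the full embedding width.
        self.conv_h = nn.ModuleList(
            [nn.Conv2d(1, n_h, kernel_size=(h, embed_dim)) for h in heights]
        )
        # Vertical filters: spanning the full sequence, one embedding column wide.
        self.conv_v = nn.Conv2d(1, n_v, kernel_size=(seq_len, 1))
        self.fc = nn.Linear(n_h * len(heights) + n_v * embed_dim, embed_dim)
        self.output = nn.Linear(embed_dim, num_items + 1)

    def forward(self, item_seq):
        # item_seq: (batch, seq_len) -> "image" of shape (batch, 1, seq_len, d)
        E = self.embedding(item_seq).unsqueeze(1)
        pooled = []
        for conv in self.conv_h:
            c = F.relu(conv(E)).squeeze(3)       # (batch, n_h, seq_len - h + 1)
            pooled.append(c.max(dim=2).values)   # max-pool over time
        v = self.conv_v(E).flatten(1)            # (batch, n_v * d)
        z = F.relu(self.fc(torch.cat(pooled + [v], dim=1)))
        return self.output(z)                    # (batch, num_items + 1)


model = Caser(num_items=100, seq_len=5)
scores = model(torch.randint(1, 101, (4, 5)))    # four windows of five items
```

`seq_len` must be at least as large as the tallest horizontal filter, otherwise the convolution has nothing to slide over.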

Why Caser matters#

- Parallel by design. CNNs process the whole sequence in one forward pass — much faster than RNNs on a GPU.

- Multi-scale patterns. Different filter heights pick up bigrams, trigrams, and longer phrases simultaneously.

- Local pattern detector. Excels at short-range sequential patterns where RNNs are overkill and Transformers are wasteful.

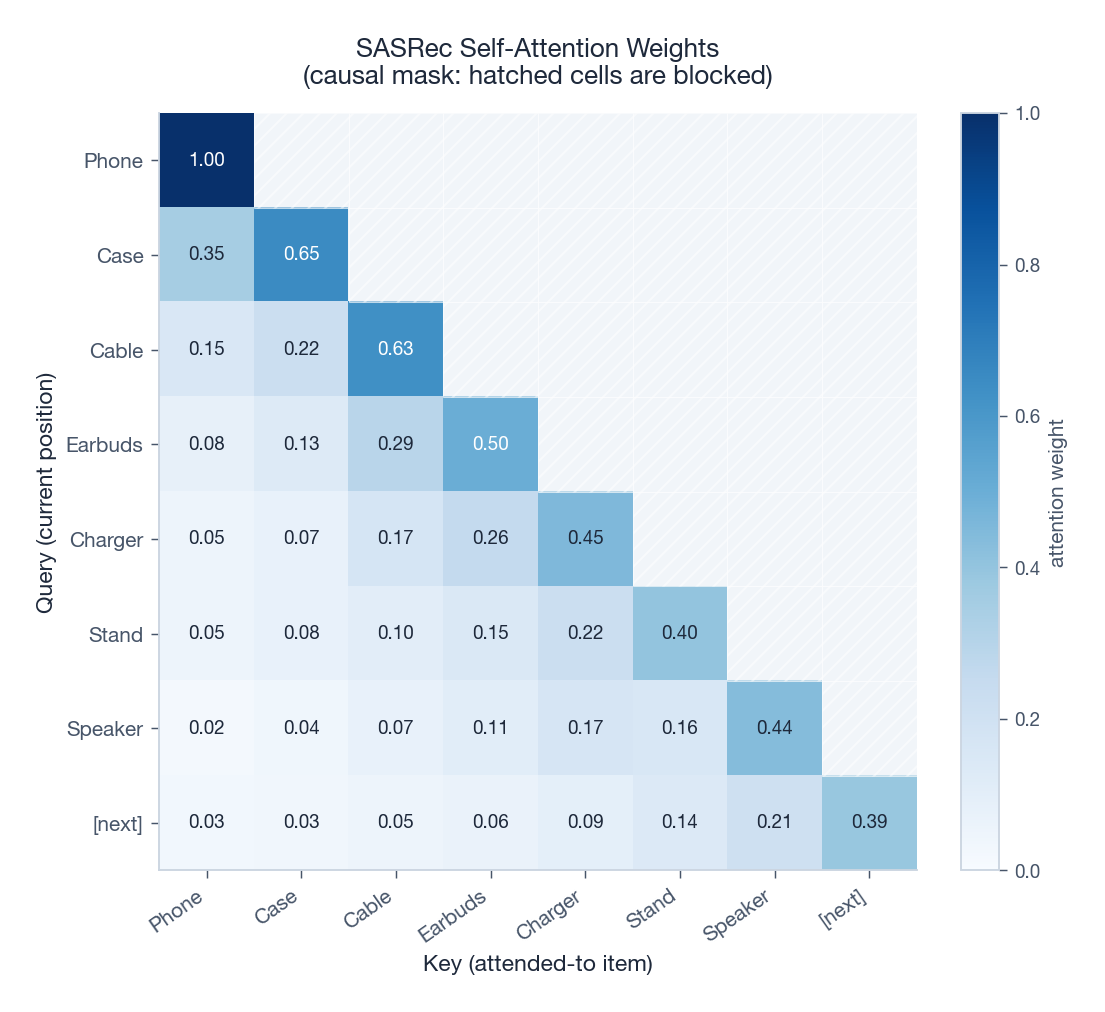

SASRec: self-attention for sequential recommendation#

Why Transformers#

SASRec (Kang and McAuley, 2018) brings the Transformer encoder to sequential recommendation. Self-attention solves two problems that bedevil RNNs at once: it lets every position connect directly to every earlier one (no vanishing gradients), and it processes all positions in parallel.

Why it works. RNNs read one item at a time, which makes them slow and makes it hard to link items that are far apart in time. Self-attention lets each item look at every earlier item in a single matrix multiplication, capturing both nearby and distant relationships at once.

The heatmap above shows a typical attention pattern. Each row is one position acting as the query; the columns are the items it attends to. The diagonal is dark (every position pays attention to itself), recent items get more weight than older ones, and the upper triangle is hatched — those cells are masked out so the model cannot cheat by looking ahead.

Building blocks#

1. Scaled dot-product attention.

$$ \text{Attention}(Q, K, V) = \text{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right) V. $$

The $\sqrt{d_k}$ scaling keeps the dot products from saturating the softmax as the dimension grows.

2. Causal mask. Position $t$ may only attend to positions $1, \dots, t$. Without this mask the model would trivially solve the prediction task by reading the answer.

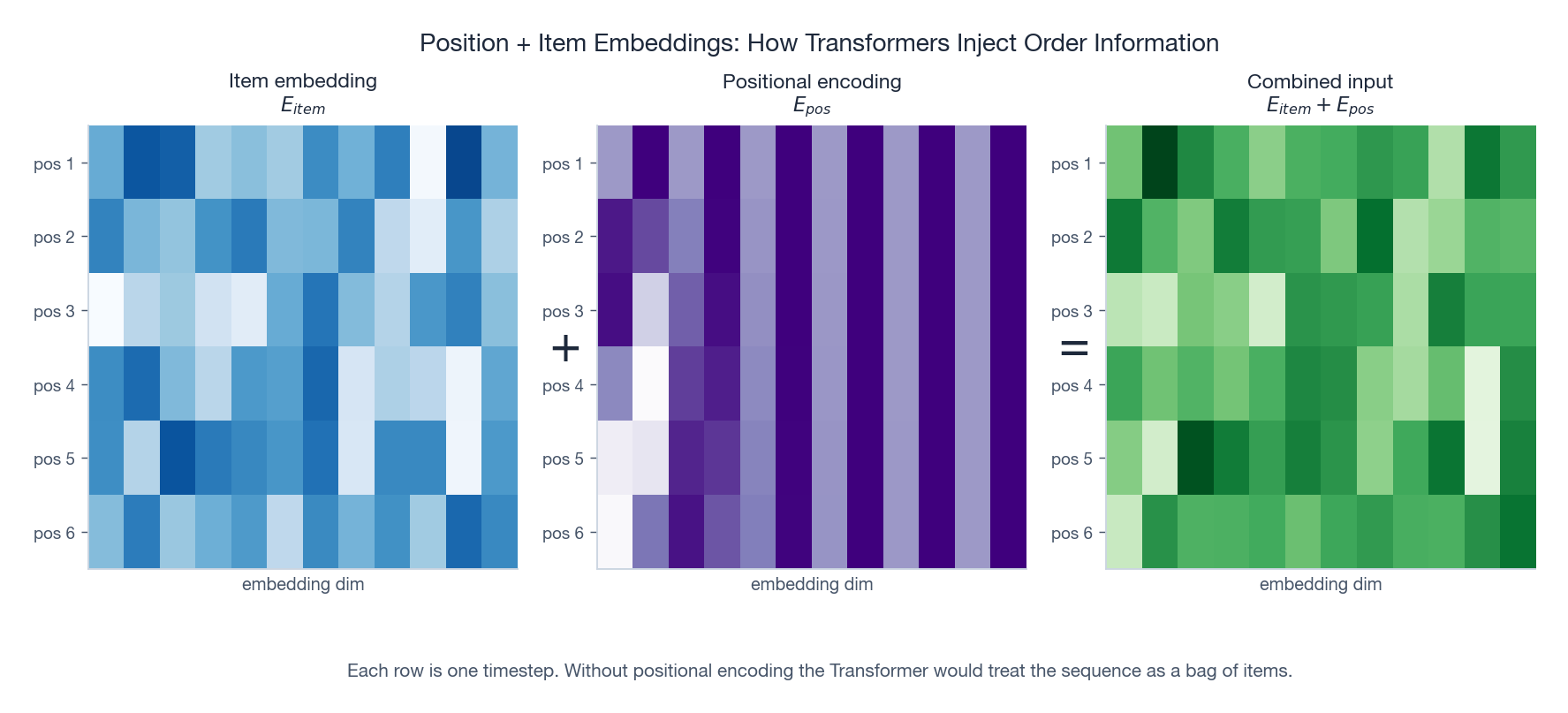

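3. Positional encoding. Self-attention has no built-in notion of order, so a position signal is added to each item embedding. The sinusoidal form is:

$$ PE_{(p, 2i)} = \sin(p / 10000^{2i/d}), \qquad PE_{(p, 2i+1)} = \cos(p / 10000^{2i/d}). $$

SASRec itself uses a learned position embedding, which plays the same role. The next figure shows what this addition looks like in practice.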

4. Residuals and LayerNorm. Standard Transformer plumbing — they make deeper stacks trainable.

Implementation#

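A minimal sketch of the building blocks using PyTorch's stock `nn.TransformerEncoder`, not the authors' code. Assumptions of this sketch: 0 is the padding ID, sequences are right-padded (so the causal mask alone keeps real positions from attending to padding; the prepended padding described later in this article would also need a padding mask), positions are learned, and training uses full softmax cross-entropy rather than the sampled binary loss of the paper. `SASRec` and `next_item_loss` are illustrative names.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class SASRec(nn.Module):
    def __init__(self, num_items, max_len=50, d_model=64, n_heads=2,
                 n_layers=2, dropout=0.2):
        super().__init__()
        self.item_emb = nn.Embedding(num_items + 1, d_model, padding_idx=0)
        self.pos_emb = nn.Embedding(max_len, d_model)   # learned positions
        layer = nn.TransformerEncoderLayer(
            d_model, n_heads, dim_feedforward=4 * d_model,
            dropout=dropout, batch_first=True,
        )
        self.encoder = nn.TransformerEncoder(layer, num_layers=n_layers)
        self.norm = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, item_seq):
        # item_seq: (batch, seq_len) with seq_len <= max_len
        seq_len = item_seq.size(1)
        pos = torch.arange(seq_len, device=item_seq.device).unsqueeze(0)
        x = self.dropout(self.item_emb(item_seq) + self.pos_emb(pos))
        # True above the diagonal means "may not attend": no looking ahead.
        causal = torch.triu(
            torch.ones(seq_len, seq_len, dtype=torch.bool,
                       device=item_seq.device),
            diagonal=1,
        )
        h = self.norm(self.encoder(x, mask=causal))
        # Score all items at every position against the shared item embeddings.
        return h @ self.item_emb.weight.T     # (batch, seq_len, num_items + 1)


def next_item_loss(model, item_seq):
    """Each position predicts the item that follows it; padding targets are ignored."""
    inputs, targets = item_seq[:, :-1], item_seq[:, 1:]
    logits = model(inputs)
    return F.cross_entropy(logits.reshape(-1, logits.size(-1)),
                           targets.reshape(-1), ignore_index=0)


model = SASRec(num_items=20, max_len=10)
loss = next_item_loss(model, torch.tensor([[5, 8, 2, 9, 0]]))
```

At inference, the output at the last real position is the next-item score vector. Changing a later item leaves the outputs at earlier positions untouched, which is a quick way to check that the mask is doing its job.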

Why SASRec became the default#

- Long-range dependencies without vanishing gradients — any two positions are one attention hop apart.

- Parallel training over all positions at once.

- Interpretability for free — attention weights tell you which past actions drove each prediction.

- Strong scaling with sequence length and model size.

BERT4Rec: bidirectional encoder for sequential recommendation#

Motivation#

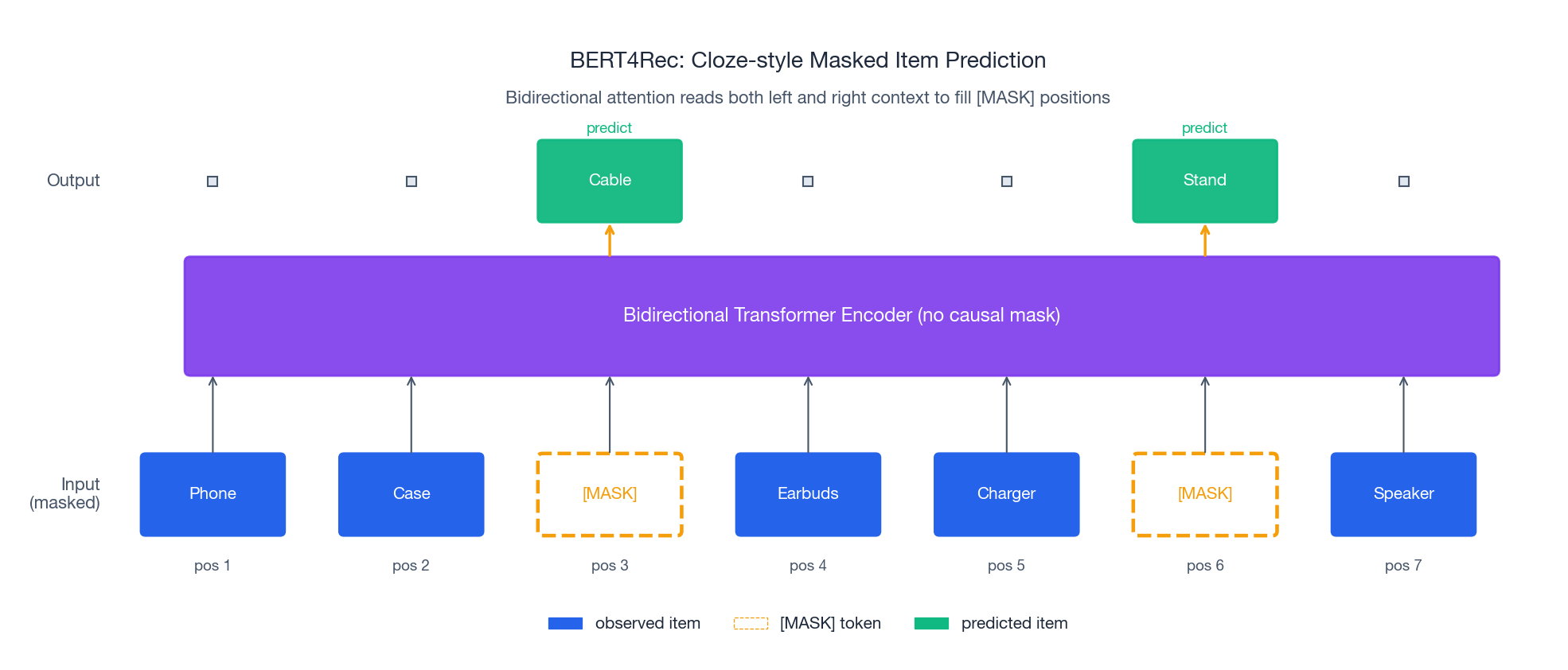

BERT4Rec (Sun et al., 2019) takes the same Transformer backbone but flips the training objective. Instead of next-item prediction with a causal mask, it borrows BERT’s cloze task: randomly mask items in the sequence and ask the model to fill them in using both left and right context.

Wait, how can we look at future items? During training we hide a few positions and let the bidirectional encoder reconstruct them. At inference we append a [MASK] token to the end of the sequence and predict what should go there. Same backbone, different pretext task, richer representations.

Differences from SASRec at a glance#

| Feature | SASRec | BERT4Rec |

|---|---|---|

| Attention direction | Causal (left → right) | Bidirectional |

| Training task | Predict the next item | Predict masked items |

| Masking | Causal attention mask (training and inference) | Random item masking during training |

| Inference | Use the last position’s output | Append [MASK], read its output |

Implementation#

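The encoder itself is a Transformer without the causal mask, so the part worth sketching is the data side: the cloze masking used for training and the [MASK]-append step used for inference. This is a plain-Python sketch under stated assumptions: item IDs start at 1, label 0 means "not masked, ignore in the loss", and `mask_id` is an extra ID outside the item range. The function names and the 0.2 masking rate are illustrative.

```python
import random


def mask_for_training(seq, mask_id, mask_prob=0.2, rng=random):
    """Return (inputs, labels) for the cloze task.

    labels[i] holds the original item where position i was masked, else 0.
    """
    inputs, labels = [], []
    for item in seq:
        if rng.random() < mask_prob:
            inputs.append(mask_id)
            labels.append(item)
        else:
            inputs.append(item)
            labels.append(0)
    if not any(labels):
        # Guarantee at least one masked position so the example has a target.
        pos = rng.randrange(len(seq))
        labels[pos] = seq[pos]
        inputs[pos] = mask_id
    return inputs, labels


def prepare_for_inference(seq, mask_id, max_len):
    """Append [MASK] and keep the most recent max_len tokens."""
    return (seq + [mask_id])[-max_len:]


MASK_ID = 101  # assumes a catalogue of 100 items with IDs 1..100
inputs, labels = mask_for_training([3, 7, 9, 4, 6], MASK_ID,
                                   rng=random.Random(0))
query = prepare_for_inference([3, 7, 9, 4, 6], MASK_ID, max_len=4)
```

The model's output at the appended [MASK] position is read as the next-item distribution. Because training masks random positions rather than only the last one, some implementations also add training examples that mask just the final item to narrow that gap.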

Tradeoffs#

Wins. Bidirectional context produces richer representations, and masked training makes the model robust to missing or noisy items.

Costs. Inference needs the [MASK]-append trick, which is less natural than autoregressive prediction. Training is a touch more complex, and large catalogs often see diminishing returns over a well-tuned SASRec.

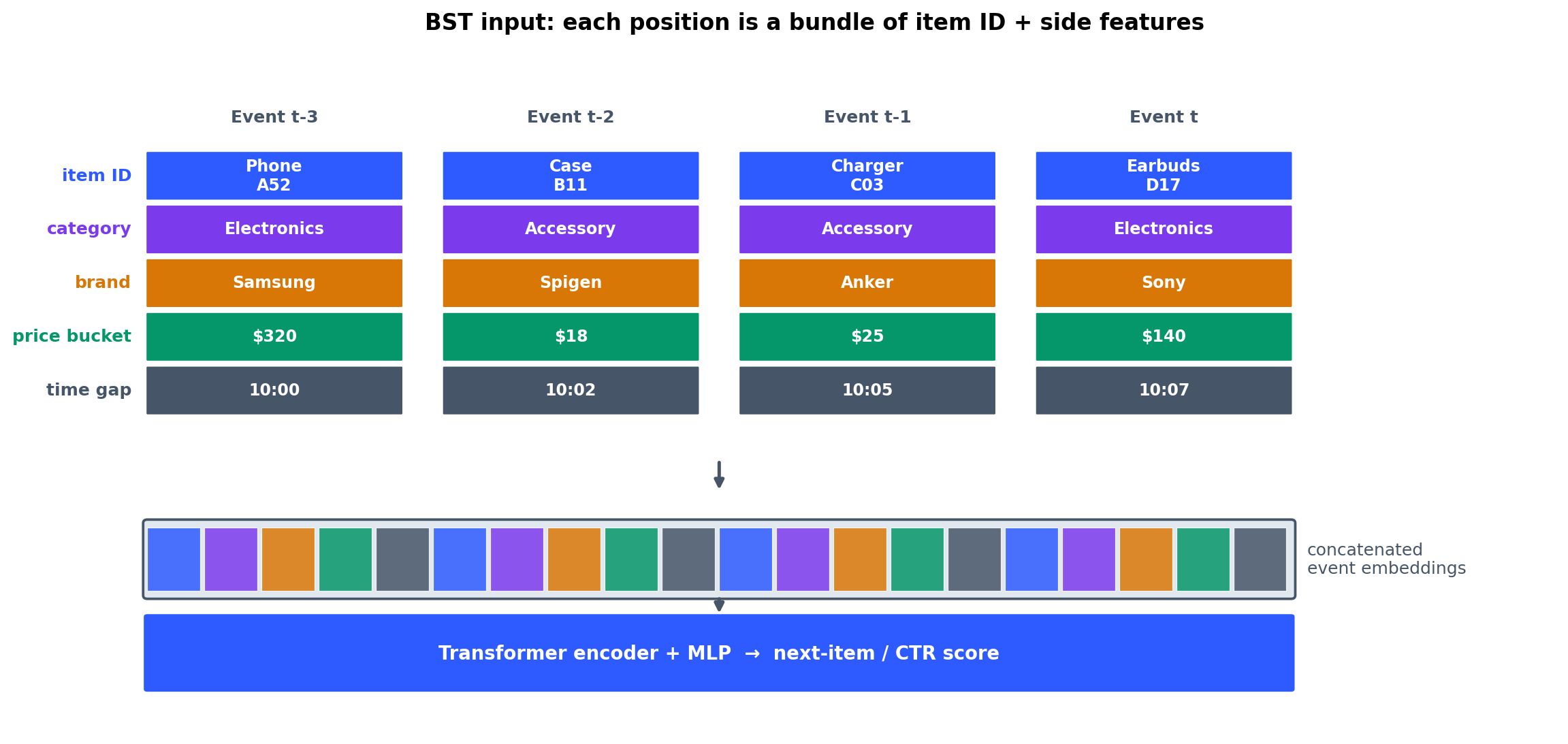

BST: Behavior Sequence Transformer#

What makes BST different#

BST (Chen et al., 2019, Alibaba) extends the Transformer to incorporate rich side features beyond item IDs. In a real e-commerce system you have item categories, brands, prices, shop IDs, user demographics — BST embeds all of them and feeds the concatenation through a Transformer.

Insight. Real user behaviour is not a sequence of IDs, it is a sequence of events. Each event bundles an item with its category, brand, price bucket, time gap, and so on. BST treats the bundle as the unit of input.

Implementation#

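A reduced PyTorch sketch of the "event bundle" idea, not Alibaba's implementation. Each position concatenates an item embedding, a category embedding, and a position embedding; a single Transformer layer mixes the events; an MLP produces a click probability. Assumptions of this sketch: only one side feature (category), a fixed sequence length with the candidate item placed at the last position, and no user or context features. All names and sizes are illustrative.

```python
import torch
import torch.nn as nn


class BST(nn.Module):
    def __init__(self, num_items, num_categories, max_len=20,
                 d_item=32, d_cat=16, d_pos=16, n_heads=4):
        super().__init__()
        d_model = d_item + d_cat + d_pos          # one vector per event
        self.item_emb = nn.Embedding(num_items + 1, d_item, padding_idx=0)
        self.cat_emb = nn.Embedding(num_categories + 1, d_cat, padding_idx=0)
        self.pos_emb = nn.Embedding(max_len, d_pos)
        layer = nn.TransformerEncoderLayer(
            d_model, n_heads, dim_feedforward=2 * d_model, batch_first=True
        )
        self.encoder = nn.TransformerEncoder(layer, num_layers=1)
        self.mlp = nn.Sequential(
            nn.Linear(max_len * d_model, 128),
            nn.LeakyReLU(),
            nn.Linear(128, 1),
        )

    def forward(self, items, cats):
        # items, cats: (batch, max_len); the last position is the candidate item.
        pos = torch.arange(items.size(1), device=items.device)
        pos = pos.unsqueeze(0).expand_as(items)
        x = torch.cat(
            [self.item_emb(items), self.cat_emb(cats), self.pos_emb(pos)],
            dim=-1,
        )
        h = self.encoder(x)                       # no causal mask: a ranking model
        return torch.sigmoid(self.mlp(h.flatten(1))).squeeze(-1)  # (batch,)


model = BST(num_items=100, num_categories=10, max_len=6)
items = torch.randint(1, 101, (3, 6))
cats = torch.randint(1, 11, (3, 6))
click_prob = model(items, cats)
```

Unlike the next-item models above, this sketch scores one candidate at a time, so it fits the ranking stage of a two-stage system better than candidate generation. Adding brand, price bucket, or time-gap features means adding more embedding tables to the concatenation.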

Session-based recommendation#

A session is a short burst of interactions — one shopping visit, one playlist, one news-reading session. Session-based recommendation is sequential recommendation with extra constraints:

- No persistent identity. The user may be anonymous.

- Short sequences. Typically 5 to 20 items.

- Independent. Each session is treated on its own; cross-session history is unavailable or ignored.

- Real-time. Predictions must keep up with the user’s clicks.

| Domain | Example session |

|---|---|

| E-commerce | Laptops → laptop bags → laptop stands |

| News | Politics → sports → weather |

| Music | Jazz → late-night jazz → classical |

| Video | Three cooking tutorials in a row |

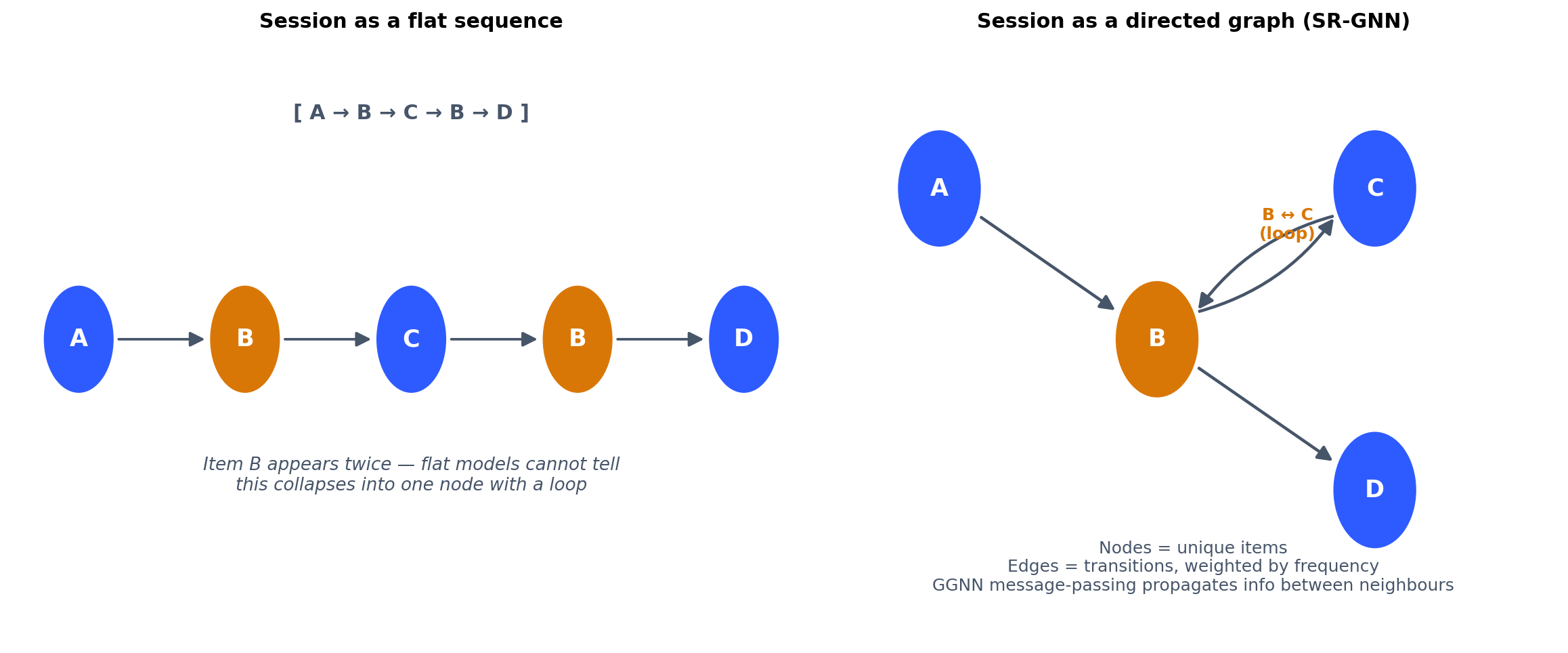

SR-GNN: graph neural networks for session recommendation#

Motivation#

SR-GNN (Wu et al., 2019) takes a step sideways: instead of treating a session as a flat sequence, it models it as a directed graph where nodes are items and edges are transitions.

Why a graph? Consider a session $[A, B, C, B, D]$ where item $B$ appears twice, creating a loop. A graph captures this naturally; a flat sequence model has to fight to represent it.

Graph construction#

For session $S = [i_1, i_2, \dots, i_t]$:

- Nodes are unique items in the session.

- Edges are directed from each item to the next item in the original order.

- Edge weights record the frequency of each transition (handling repeated visits).
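The three construction rules above can be sketched in plain Python. The function names `build_session_graph` and `row_normalize` are illustrative; the out-degree normalisation is included as an optional step because implementations commonly rescale edge weights this way, which is an assumption of this sketch and not something stated above.

```python
from collections import Counter


def build_session_graph(session):
    """Turn a click sequence into (nodes, adjacency matrix, alias list)."""
    nodes = list(dict.fromkeys(session))          # unique items, first-seen order
    index = {item: k for k, item in enumerate(nodes)}
    edges = Counter(zip(session, session[1:]))    # (src, dst) -> frequency
    n = len(nodes)
    adj = [[0.0] * n for _ in range(n)]
    for (src, dst), count in edges.items():
        adj[index[src]][index[dst]] = float(count)
    # alias[t] = node index of the item clicked at step t (restores the order)
    alias = [index[item] for item in session]
    return nodes, adj, alias


def row_normalize(adj):
    """Divide each row by its sum so outgoing weights of a node add up to 1."""
    out = []
    for row in adj:
        total = sum(row)
        out.append([x / total for x in row] if total else row[:])
    return out


nodes, adj, alias = build_session_graph(["A", "B", "C", "B", "D"])
# B is a single node with two outgoing edges (to C and to D);
# the loop B -> C -> B is visible directly in the matrix.
```

The `alias` list is what lets a model map node states back onto click positions after message passing, since the graph itself no longer records which visit to `B` came last.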

How SR-GNN works#

SR-GNN runs a Gated Graph Neural Network to propagate information between neighbouring items. The update equations look like a GRU but operate on graph neighbours:

$$ \mathbf{m}_v^{(l)} = \sum_{u \in \mathcal{N}(v)} \mathbf{A}_{uv}\,\mathbf{h}_u^{(l-1)} $$

$$ \mathbf{z}_v^{(l)} = \sigma(\mathbf{W}_z \mathbf{m}_v^{(l)} + \mathbf{U}_z \mathbf{h}_v^{(l-1)}),\quad \mathbf{r}_v^{(l)} = \sigma(\mathbf{W}_r \mathbf{m}_v^{(l)} + \mathbf{U}_r \mathbf{h}_v^{(l-1)}) $$

$$ \tilde{\mathbf{h}}_v^{(l)} = \tanh(\mathbf{W}_h \mathbf{m}_v^{(l)} + \mathbf{U}_h (\mathbf{r}_v^{(l)} \odot \mathbf{h}_v^{(l-1)})) $$

$$ \mathbf{h}_v^{(l)} = (1 - \mathbf{z}_v^{(l)}) \odot \mathbf{h}_v^{(l-1)} + \mathbf{z}_v^{(l)} \odot \tilde{\mathbf{h}}_v^{(l)} $$

Plain English. Each item “talks” to the items that appeared right before or after it in the session. After a few rounds of message passing, every item’s representation has absorbed information about its local neighbourhood in the session graph. The session is then summarised by attention-pooling the node embeddings.

Implementation (simplified)#

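A single-session, unbatched PyTorch sketch of the gated propagation and attention pooling described above; it is a simplification, not the authors' code. Assumptions: `adj[u, v]` holds the weight of edge u → v, incoming and outgoing messages are computed separately and concatenated, and `nn.GRUCell` stands in for the gate equations (its update gate is defined as the complement of $\mathbf{z}_v$ above, which is equivalent up to relabelling). `GatedGraphLayer` and `SRGNN` are illustrative names.

```python
import torch
import torch.nn as nn


class GatedGraphLayer(nn.Module):
    def __init__(self, d):
        super().__init__()
        self.msg_in = nn.Linear(d, d)
        self.msg_out = nn.Linear(d, d)
        self.gru = nn.GRUCell(2 * d, d)   # nodes are treated as the batch

    def forward(self, h, adj):
        # h: (n, d) node states; adj: (n, n) with adj[u, v] = weight of u -> v
        m_in = adj.T @ self.msg_in(h)     # messages from predecessors
        m_out = adj @ self.msg_out(h)     # messages from successors
        return self.gru(torch.cat([m_in, m_out], dim=-1), h)


class SRGNN(nn.Module):
    def __init__(self, num_items, d=64, steps=1):
        super().__init__()
        self.item_emb = nn.Embedding(num_items + 1, d, padding_idx=0)
        self.ggnn = GatedGraphLayer(d)
        self.steps = steps
        self.w1 = nn.Linear(d, d)
        self.w2 = nn.Linear(d, d)
        self.q = nn.Linear(d, 1, bias=False)
        self.combine = nn.Linear(2 * d, d)

    def forward(self, node_items, adj, alias):
        # node_items: (n,) IDs of the unique items; alias: (t,) node index per click
        h = self.item_emb(node_items)
        for _ in range(self.steps):
            h = self.ggnn(h, adj)
        seq_h = h[alias]                          # (t, d) states in click order
        last = seq_h[-1]                          # current interest
        alpha = self.q(torch.sigmoid(self.w1(last) + self.w2(seq_h)))  # (t, 1)
        global_pref = (alpha * seq_h).sum(dim=0)  # attention-pooled session
        s = self.combine(torch.cat([last, global_pref]))
        return self.item_emb.weight @ s           # (num_items + 1,) item scores


# Session [A, B, C, B, D] with item IDs A=1, B=2, C=3, D=4.
adj = torch.tensor([[0., 1., 0., 0.],
                    [0., 0., 1., 1.],
                    [0., 1., 0., 0.],
                    [0., 0., 0., 0.]])
model = SRGNN(num_items=10, d=16)
scores = model(torch.tensor([1, 2, 3, 4]), adj, torch.tensor([0, 1, 2, 1, 3]))
```

Batching sessions of different sizes requires padding the node lists and adjacency matrices, which is omitted here to keep the propagation step readable.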

Why SR-GNN stands out#

- Graph structure captures complex transition patterns flat sequences miss.

- Repeated items are first-class — they collapse into the same node and accumulate edge weights.

- Local message passing picks up tight neighbourhood signals like “this item is the centre of a small interest cluster within the session.”

How long should the sequence be?#

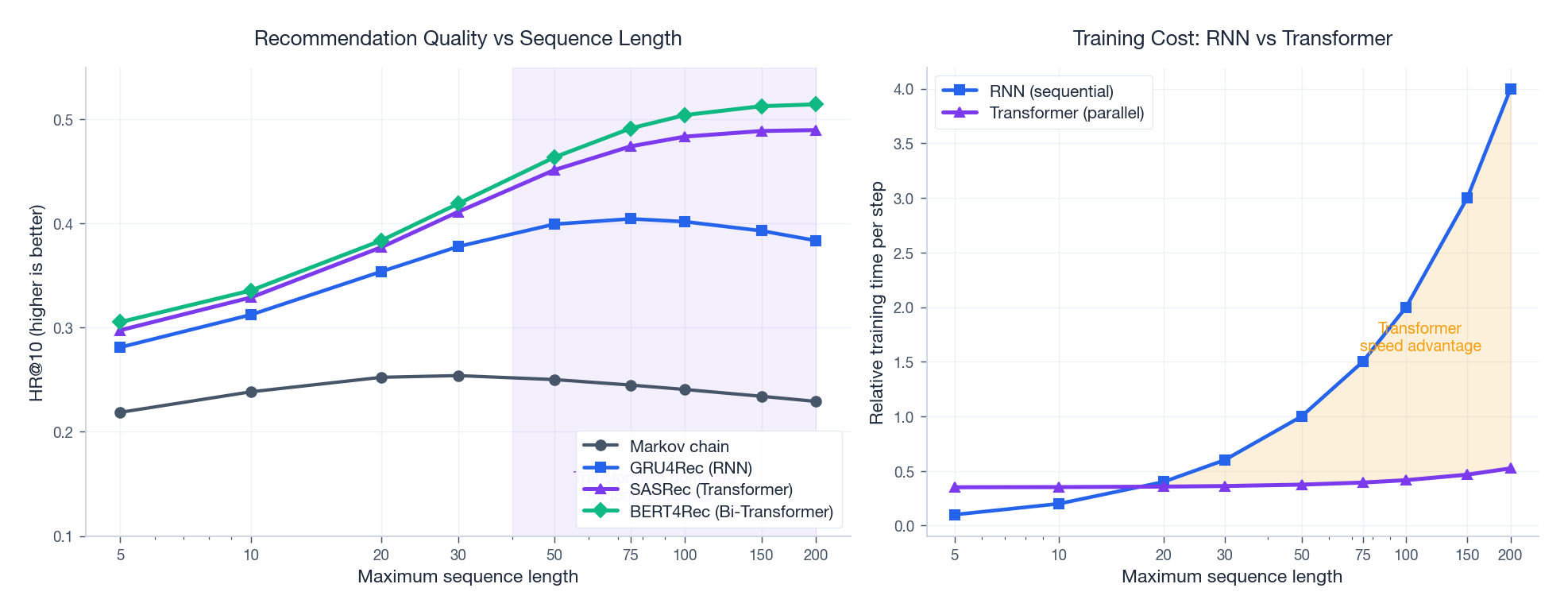

Different model families plateau at very different sequence lengths, and they pay very different prices for it. The figure shows the typical pattern on a benchmark like MovieLens or Amazon Beauty.

Three things stand out:

- Markov chains plateau early. Their fixed-window assumption simply cannot exploit longer context.

- GRU4Rec peaks around 50–75 items, then degrades as the hidden state struggles to compress more history into a fixed-size vector.

- SASRec and BERT4Rec keep climbing. With direct attention between any two positions there is no compression bottleneck — they convert longer context into higher quality.

The cost picture is the mirror image. RNNs cannot parallelize across time, so training and inference latency grow linearly with sequence length. Transformers process all positions in parallel on a GPU, so at moderate lengths the extra wall-clock cost is small even though self-attention compute grows quadratically with length. Both effects together explain why Transformers became the default in production sequential recommenders.

Evaluation metrics#

Three you need to know#

$$ \text{HR@K} = \frac{1}{|T|} \sum_{t \in T} \mathbb{1}\!\left[\text{rank}(i_t^*) \leq K\right] $$

$$ \text{NDCG@K} = \frac{1}{|T|} \sum_{t \in T} \frac{\mathbb{1}\!\left[\text{rank}(i_t^*) \leq K\right]}{\log_2(\text{rank}(i_t^*) + 1)} $$

$$ \text{MRR} = \frac{1}{|T|} \sum_{t \in T} \frac{1}{\text{rank}(i_t^*)} $$

Here $T$ is the set of test cases and $\text{rank}(i_t^*)$ is the 1-based position of the ground-truth item $i_t^*$ in the ranked list. With a single relevant item per test case the ideal DCG is 1, so NDCG@K reduces to the form shown.

Which to use? HR@K is the most interpretable (“did we get it right at all?”). NDCG@K is the right metric for ranking quality (“how high did we put it?”). MRR is great when there is exactly one relevant item per query.

Implementation#

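A plain-Python sketch of the three formulas, assuming one ground-truth item per test case and 1-based ranks. The helper `rank_of_target` and its tie handling (ties rank below the target) are choices of this sketch.

```python
import math


def rank_of_target(scores, target):
    """1-based rank of `target` given a dict mapping item -> score."""
    target_score = scores[target]
    return 1 + sum(s > target_score for s in scores.values())


def hit_rate_at_k(ranks, k):
    return sum(r <= k for r in ranks) / len(ranks)


def ndcg_at_k(ranks, k):
    # With one relevant item the ideal DCG is 1, so no extra normalisation.
    return sum(1.0 / math.log2(r + 1) for r in ranks if r <= k) / len(ranks)


def mrr(ranks):
    return sum(1.0 / r for r in ranks) / len(ranks)


ranks = [1, 3, 12]   # ground-truth ranks for three test cases
print(hit_rate_at_k(ranks, 10), ndcg_at_k(ranks, 10), mrr(ranks))
```

For these three ranks, HR@10 is 2/3 (the rank-12 case misses), NDCG@10 is (1 + 1/2 + 0) / 3 = 0.5, and MRR is (1 + 1/3 + 1/12) / 3 = 17/36. How ties are broken can shift reported numbers noticeably when many items share a score, so it is worth fixing one convention and stating it.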

Practical considerations#

Data preprocessing#

Padding and truncation. Most pipelines pad short sequences with zeros (prepended) and truncate long ones to keep the most recent items. Dynamic batching by length minimizes wasted compute. For very long histories, a sliding window of overlapping fixed-length subsequences works well.
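The padding, truncation, and sliding-window steps just described can be sketched as two small helpers. The function names, the default `pad_id=0`, and the `stride` parameter are assumptions of this sketch.

```python
def pad_or_truncate(seq, max_len, pad_id=0):
    """Keep the most recent max_len items and prepend padding if short."""
    seq = seq[-max_len:]
    return [pad_id] * (max_len - len(seq)) + seq


def sliding_windows(seq, max_len, stride):
    """Split a long history into overlapping windows of length max_len."""
    if len(seq) <= max_len:
        return [seq]
    last_start = len(seq) - max_len
    starts = list(range(0, last_start + 1, stride))
    if starts[-1] != last_start:
        starts.append(last_start)   # make sure the newest items are covered
    return [seq[s:s + max_len] for s in starts]


print(pad_or_truncate([1, 2, 3], 5))
print(pad_or_truncate([1, 2, 3, 4, 5, 6], 4))
print(sliding_windows(list(range(1, 8)), max_len=4, stride=2))
```

A stride smaller than `max_len` gives overlapping windows; the final window is forced to end at the most recent item so that recent behaviour is never dropped.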

Negative sampling. Implicit-feedback data only has positive interactions, so you sample negatives. Random sampling is the baseline; popularity-weighted sampling and hard-negative mining produce better gradients but cost more (see Part 5).
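A small sketch of uniform versus popularity-weighted negative sampling using only the standard library. The function name `sample_negatives` and the smoothing exponent `alpha` are assumptions: `alpha=0` gives uniform sampling, `alpha=1` samples proportionally to raw popularity, and 0.75 is a value borrowed from word2vec-style samplers, not something this article prescribes.

```python
import random
from collections import Counter


def sample_negatives(positives, item_counts, n, alpha=0.75, rng=random):
    """Draw n negatives (with replacement) that the user has not interacted with."""
    candidates = [item for item in item_counts if item not in positives]
    # Popular items are sampled more often; alpha < 1 flattens the distribution.
    weights = [item_counts[item] ** alpha for item in candidates]
    return rng.choices(candidates, weights=weights, k=n)


item_counts = Counter({"a": 10, "b": 5, "c": 1, "d": 1})
negatives = sample_negatives({"a"}, item_counts, n=5, rng=random.Random(0))
```

Popular items that a user skipped are more informative negatives than obscure ones the user never saw, which is the usual argument for weighting by popularity.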

Augmentation. Three cheap tricks:

- Sequence cropping — turn each session into many overlapping prefixes.

- Item masking — BERT4Rec-style random masking, even for non-BERT4Rec models.

- Light shuffling — perturb non-adjacent items to encourage robustness.
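The first trick, sequence cropping, is simple enough to show directly: every prefix of a session becomes a training input and the item that follows it becomes the target. The function name `prefix_examples` and the `min_len` parameter are illustrative.

```python
def prefix_examples(session, min_len=1):
    """Expand one session into (prefix, next_item) training pairs."""
    return [(session[:t], session[t]) for t in range(min_len, len(session))]


for prefix, target in prefix_examples(["a", "b", "c", "d"]):
    print(prefix, "->", target)
```

A session of length $n$ yields $n - 1$ examples with the default `min_len=1`. Models that already predict at every position in one pass, such as causally masked Transformers, get this expansion implicitly, so it mainly helps models that emit a single prediction per input sequence.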

Training#

- Loss. Cross-entropy, BPR, and sampled softmax are the standard menu. Sampled softmax is essential for vocabularies in the millions.

- Regularization. Dropout (0.1–0.5), L2 weight decay, and early stopping on validation HR@K cover most cases.

- Optimization. AdamW with a warmup-then-decay schedule is the default for Transformer-based models; gradient clipping at norm 1.0 is good insurance.

Scaling to millions of items#

Six levers, used in roughly this order:

- Negative sampling. Don’t compute over the full vocabulary at training time.

- Approximate nearest neighbour (FAISS, HNSW) for the final retrieval step.

- Two-stage retrieval. Embedding-based candidate generation, then a heavier ranker on the top few hundred.

- Distillation. Train a smaller, faster student that mimics a heavyweight teacher.

- Quantization. FP16 or INT8 inference for tight latency budgets.

- Caching. Item embeddings, popular sequences, and even predictions for stable users.

Model comparison and selection#

| Model | Architecture | Parallel | Long-range | Side features | Best for |

|---|---|---|---|---|---|

| Markov chain | Statistical | n/a | No | No | Baselines, very cold start |

| GRU4Rec | RNN | No | Medium | No | Streaming, simple sessions |

| Caser | CNN | Yes | Short | No | Short sessions, local patterns |

| SASRec | Transformer | Yes | Yes | No | General-purpose default |

| BERT4Rec | Transformer | Yes | Yes | No | When bidirectional context truly helps |

| BST | Transformer | Yes | Yes | Yes | Feature-rich e-commerce |

| SR-GNN | GNN | Partly | Medium | No | Sessions with repeated items |

Picking a starting point:

- Default to SASRec. It is the strongest single bet across most datasets.

- Use GRU4Rec when you need online updates from a streaming session.

- Use Caser when sessions are short and locality dominates.

- Use BST when item-side features are rich and meaningful.

- Use SR-GNN when sessions contain repeated items and complex transitions.

- Use BERT4Rec when you can afford pre-training on a large corpus.

Questions and answers#

Q1. When should I pick session-based over user-based?#

Session-based wins for anonymous users, short and self-contained intent (e-commerce browsing, news), and real-time low-latency settings. User-based wins when you have stable user IDs, long histories, and care about preference evolution (music streaming, video accounts). Most production systems do both — use a long-term embedding for “who you are” and the session for “what you want right now.”

Q2. How do I pick max_len?#

Look at the empirical distribution of session lengths and target the 90th percentile. Common choices: 20–50 for sessions, 50–200 for user histories. Longer is not free: every extra position costs compute, and old interactions often add more noise than signal.

Q3. Why are Transformers usually better than RNNs here?#

Three reasons that matter in practice: parallel training, direct attention between any two positions (no vanishing gradients), and free interpretability via attention weights. RNNs are still useful when memory budgets are tight or when you need true streaming inference with state passed across requests.

Q4. Does BERT4Rec’s bidirectional attention actually help?#

Sometimes. The bidirectional context gives you richer item representations and makes the model robust to gaps. But the [MASK]-append inference trick is awkward, training is more involved, and on many real catalogs a well-tuned SASRec matches it. Try SASRec first; reach for BERT4Rec when you have a large pretraining corpus or noisy logged sessions.

Q5. How do I handle cold start?#

For new items use content features (category, brand, price, text, image) — feature-aware models like BST do this naturally. For new users or sessions start with popular and trending items, lean on session-based models that need no history, or wrap the recommender in an exploration layer (epsilon-greedy or contextual bandits).

Q6. Which evaluation metric should I report?#

For most leaderboards, HR@10 and NDCG@10 together. HR@10 is interpretable; NDCG@10 captures ranking quality. MRR is a good third number when each query has exactly one ground-truth item. In production, always pair offline metrics with online A/B tests on click-through rate, conversion, and revenue — offline wins do not always translate.

Q7. Can I combine sequential models with other approaches?#

Yes — and you usually should. Common hybrids:

- Sequential + collaborative filtering. Combine temporal signals with user-item similarity.

- Sequential + content. Use item features alongside the sequence (BST, content-aware SASRec).

- Sequential + knowledge graph. Inject relational structure between items.

- Multi-task. Predict the next item together with auxiliary targets such as its category or the dwell time.

Q8. What is on the research frontier?#

The 2023–2025 wave is dominated by:

- LLM-based recommenders that prompt or fine-tune a language model on serialized user histories.

- Contrastive learning to produce better-separated item representations.

- Multi-modal sequences mixing IDs with images, text, and audio.

- Linear and sub-quadratic attention for very long histories.

- Causal inference tools to ask why users behave a certain way, not just predict what comes next.

Summary#

Sequential recommendation explicitly models the temporal order of user interactions to predict what comes next. The field has evolved from the simplicity of Markov chains to the sophistication of Transformers and graph neural networks.

Key takeaways:

- Order is signal. The arrangement of past interactions carries information that bag-of-items models throw away.

- Architecture progression. Markov chains → RNNs (GRU4Rec) → CNNs (Caser) → Transformers (SASRec, BERT4Rec) → GNNs (SR-GNN) — each step solved a real limitation of its predecessor.

- Session vs. user. Session-based models thrive on anonymous, short-term intent; user-based models capture taste evolution. Most production systems blend both.

- Picking an architecture depends on sequence length, the value of side features, latency, and how repetitive sessions are.

- Evaluate with HR@K, NDCG@K, MRR, but rely on online A/B tests for the final word.

- Scaling tricks — negative sampling, ANN search, two-stage retrieval, distillation, quantization, caching — are not optional in production.
