Recommendation Systems (7): Graph Neural Networks and Social Recommendation

A deep, intuition-first walkthrough of graph neural networks for recommendation: GCN, GAT, GraphSAGE, PinSage, LightGCN, NGCF, social signals, scalable sampling, and cold start. Diagrams plus working PyTorch.

When Netflix decides what to recommend next, it does not look at your watch history in isolation. Behind the scenes there is a web of relationships: movies that share actors, users with overlapping taste, ratings that ripple through the catalogue. The “graph” view is not a metaphor — every interaction matrix is a graph, and treating it as one unlocks ideas that flat user/item embeddings cannot express.

Graph neural networks (GNNs) are the tool that lets us reason over that graph. Instead of learning each user and each item in isolation, a GNN says: your representation is shaped by the company you keep. That single shift powers Pinterest’s billion-node PinSage, the strikingly simple LightGCN that beats heavier baselines on collaborative filtering, and the social-recommendation systems that fuse “what you watched” with “what your friends watched.”

This article is an intuition-first walk through the landscape. We start from the bipartite user-item graph, build up the message-passing framework, then dissect GCN, GAT, GraphSAGE, PinSage, LightGCN, NGCF, social GNNs, and the sampling tricks that keep all of this tractable at scale. Every model comes with working PyTorch.

What You Will Learn#

- Why recommendation data is naturally graph-shaped, and what that buys you

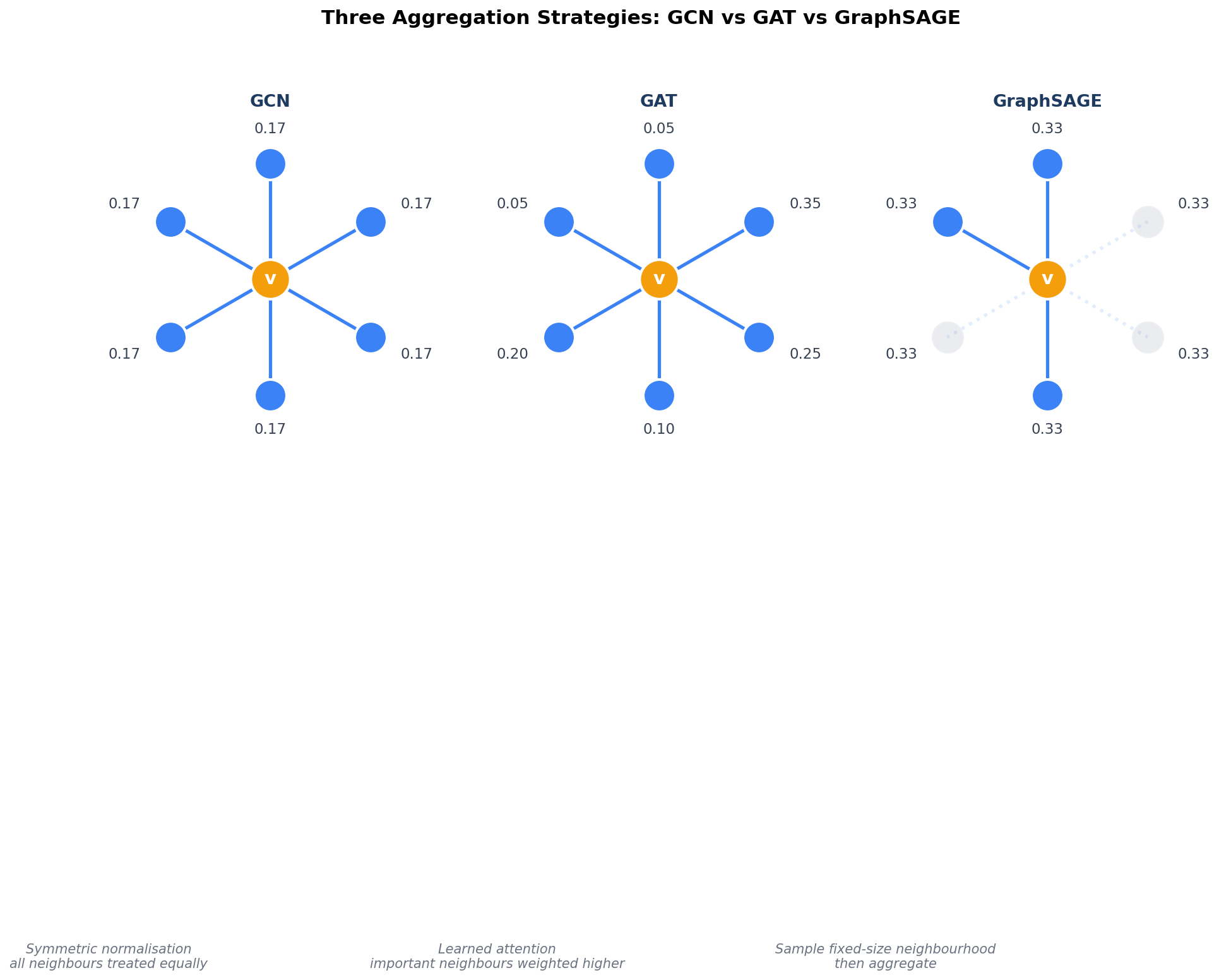

- The core GNN building blocks — GCN, GAT, GraphSAGE — and when to pick which

- How industrial models (PinSage, LightGCN, NGCF) put GNNs into production

- How social signals add 5–15% lift, and the failure mode to watch for

- Mini-batch neighbour sampling that scales GNNs from MovieLens to Pinterest

- A clean recipe for cold-start users via inductive aggregation

Prerequisites#

- Comfort with Python and PyTorch (tensors,

nn.Module, training loops) - Basic embeddings and collaborative filtering (Part 2 )

- Comfort with matrix notation; no spectral graph theory required — we build everything by hand first

Why GNNs for Recommendation?#

Recommendation Data Is Already a Graph#

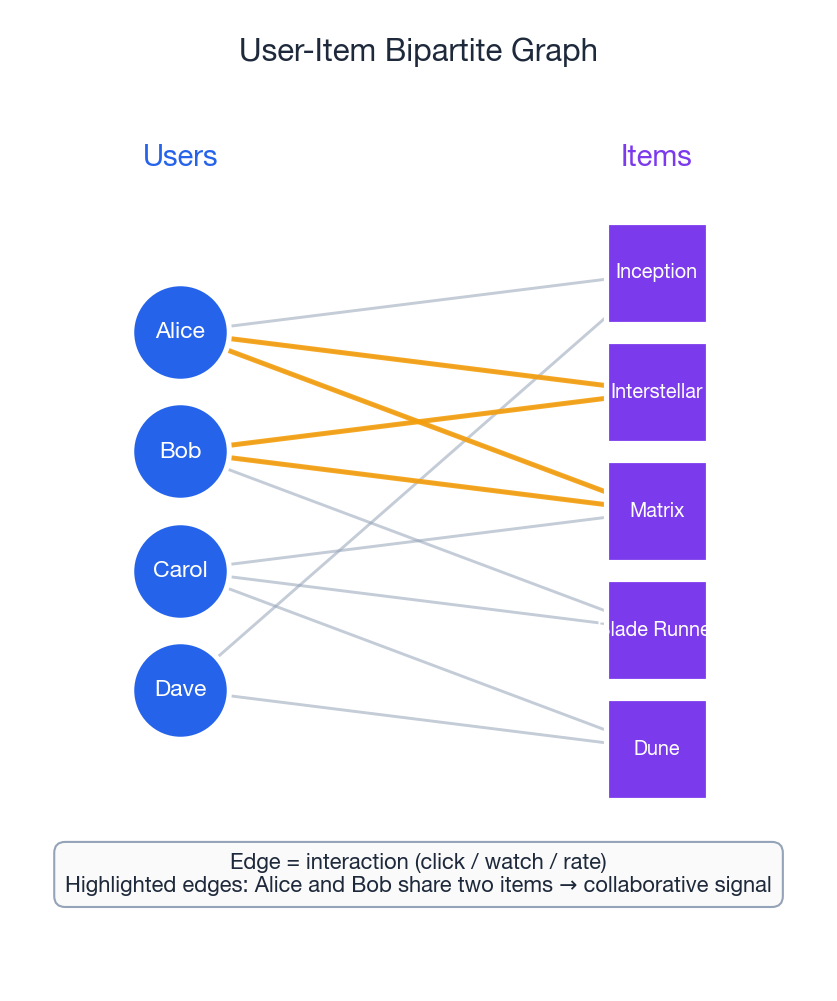

Take any recommendation system and look closely at the interaction matrix: rows are users, columns are items, and each non-zero entry is a click, watch, purchase, or rating. Redraw that matrix as a bipartite graph:

- Nodes are users and items.

- Edges connect a user to every item they interacted with.

- Collaborative signal is hidden in the topology: users who share many edges (interacted with similar items) are implicitly connected through those shared items.

In the figure above, Alice and Bob never met, but the highlighted edges reveal they share two movies. A GNN can propagate this signal across hops: “Bob liked Blade Runner, Bob is graph-similar to Alice through their shared movies, so Alice probably likes Blade Runner too.” Matrix factorisation never asks that question — it only sees individual cells.

What Traditional Approaches Miss#

| Method | What it captures | What it misses |

|---|---|---|

| Matrix factorisation | Latent factors for users/items, dot product score | Each embedding learned in isolation; no notion of “neighbours of neighbours” |

| Neural CF / autoencoders | Nonlinear interactions | Still per-pair; no propagation along the graph |

| GNN | Node embedding shaped by its $L$ -hop neighbourhood | (Eventually: scaling, over-smoothing — we will fix both) |

The slogan: traditional methods learn embeddings in isolation; GNNs learn embeddings in context.

What GNNs Bring to the Table#

- Explicit graph modelling — operate directly on topology.

- Neighbourhood aggregation — collaborative signal propagates as a side-effect of the architecture.

- Multi-hop reasoning — stack two layers and you capture “users who liked items similar to items you liked.”

- Inductive variants (GraphSAGE) — embed brand-new users/items without retraining.

- Heterogeneous graphs — fold in social ties, knowledge graphs, attributes.

Graph Neural Network Fundamentals#

Graph Basics (Quick Refresher)#

A graph $G=(V,E)$ has vertices $V=\{v_1,\dots,v_n\}$ and edges $E\subseteq V\times V$ . The adjacency matrix $A\in\{0,1\}^{n\times n}$ has $A_{ij}=1$ exactly when there is an edge between $v_i$ and $v_j$ .

Three graph flavours show up everywhere in recommendation:

| Type | Description | Example |

|---|---|---|

| Bipartite | Two node types; edges only cross types | User ↔ item |

| Weighted | Edge weights encode strength | Rating, click count |

| Attributed | Nodes carry feature vectors | Demographics, item categories |

Message Passing in One Picture#

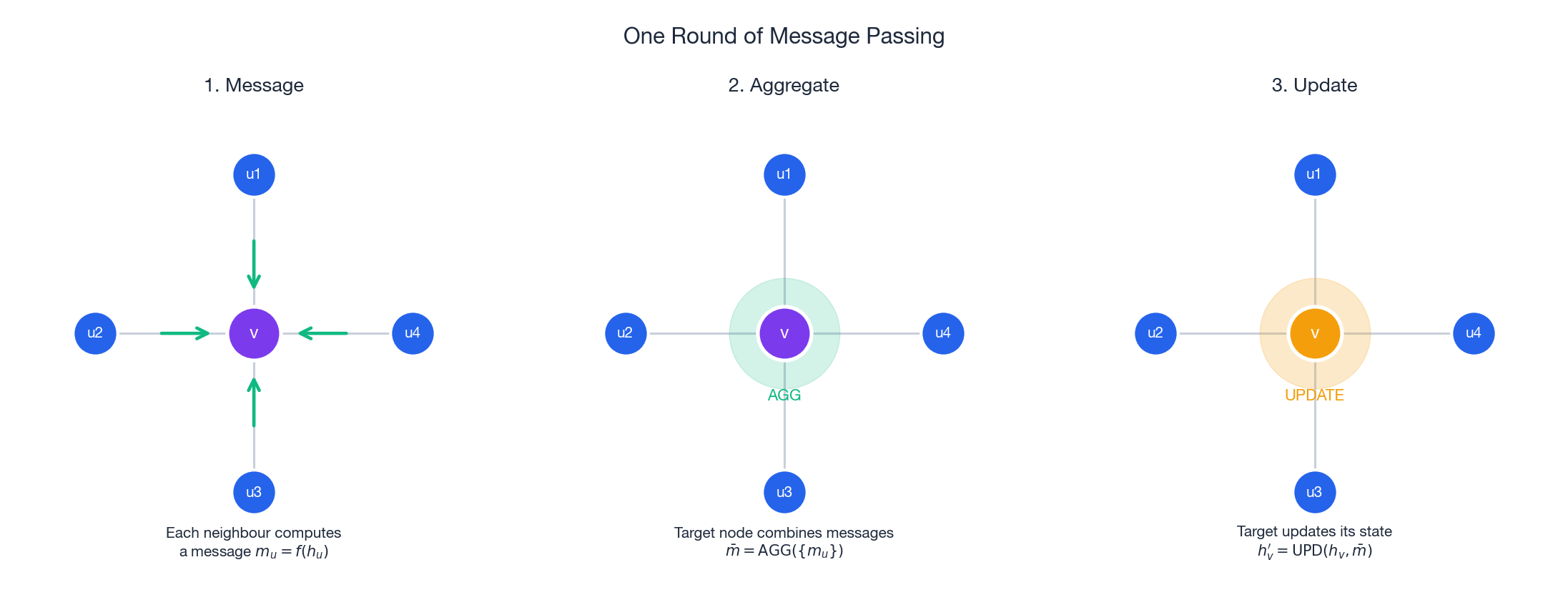

Every GNN, however dressed up, is a variation of the same three-step recipe:

- Message. Each neighbour computes what to send.

- Aggregate. The target combines incoming messages (sum, mean, attention-weighted, …).

- Update. The target blends the aggregate with its own previous state.

In plain English: “Look at what your neighbours know, summarise it, then blend it with what you already know.” Stack $L$ layers and each node sees its $L$ -hop neighbourhood.

Telephone analogy. Imagine a game of telephone where every person whispers to all their friends simultaneously. After one round you know what your friends think; after two, what your friends’ friends think. GNNs do exactly that with learned, differentiable functions.

Graph Convolutional Networks (GCN)#

The Key Idea#

GCN (Kipf & Welling, 2017) defines a convolution-like operation on graphs. Just as a CNN aggregates pixels from a local neighbourhood, a GCN aggregates feature vectors from a node’s graph neighbourhood.

The GCN Layer#

$$\mathbf{H}^{(l+1)} = \sigma\!\Bigl(\tilde{D}^{-1/2}\, \tilde{A}\, \tilde{D}^{-1/2}\, \mathbf{H}^{(l)}\, \mathbf{W}^{(l)}\Bigr)$$That looks dense. Here is what each piece does:

| Symbol | Meaning |

|---|---|

| $\tilde{A} = A + I$ | Adjacency matrix with self-loops added (each node also listens to itself) |

| $\tilde{D}$ | Degree matrix of $\tilde{A}$ |

| $\tilde{D}^{-1/2}\tilde{A}\tilde{D}^{-1/2}$ | Symmetric normalisation — prevents high-degree nodes from dominating |

| $\mathbf{H}^{(l)}$ | Node feature matrix at layer $l$ (each row = one node’s embedding) |

| $\mathbf{W}^{(l)}$ | Learnable weight matrix |

| $\sigma$ | Nonlinearity, typically ReLU |

- Gather the embeddings of all neighbours (plus yourself).

- Normalise each contribution by $1/\sqrt{d_v\cdot d_u}$ — popular nodes are scaled down so they do not shout over everyone else.

- Transform with a shared weight matrix $\mathbf{W}$ .

- Activate with ReLU.

Implementation: GCN Layer#

| |

Multi-Layer GCN#

Stacking layers extends the receptive field. Two layers = two-hop neighbourhood, three layers = three hops. But beware over-smoothing: too many layers and every node’s embedding converges to the same value. Two to three layers is the sweet spot for recommendation.

| |

Graph Attention Networks (GAT)#

Why Attention?#

GCN treats every neighbour equally (up to degree normalisation). But not all neighbours are equally informative — your best friend’s movie taste matters more than a random acquaintance’s. GAT (Veličković et al., 2018) fixes this by learning adaptive attention weights per edge.

How GAT Works#

$$e_{ij} = \mathrm{LeakyReLU}\!\bigl(\mathbf{a}^\top [\mathbf{W}\mathbf{h}_i \,\|\, \mathbf{W}\mathbf{h}_j]\bigr)$$ $$\alpha_{ij} = \frac{\exp(e_{ij})}{\sum_{k\in\mathcal{N}(i)} \exp(e_{ik})}$$ $$\mathbf{h}_i^{(l+1)} = \sigma\!\left(\sum_{j\in\mathcal{N}(i)\cup\{i\}} \alpha_{ij}\, \mathbf{W}^{(l)}\, \mathbf{h}_j^{(l)}\right)$$In plain English: “For each neighbour, learn how relevant they are to me, then take a weighted average of their features.”

Multi-Head Attention#

$$\mathbf{h}_i^{(l+1)} = \big\|_{k=1}^{K}\; \sigma\!\left(\sum_{j\in\mathcal{N}(i)\cup\{i\}} \alpha_{ij}^{(k)}\, \mathbf{W}^{(k)}\, \mathbf{h}_j^{(l)}\right)$$Implementation: GAT Layer#

| |

GraphSAGE: Scalable Inductive Learning#

The Problem with GCN#

GCN is transductive: it needs the entire graph at training time and has no embedding for nodes that appear later. If a new user signs up tomorrow, GCN cannot score them without retraining.

GraphSAGE (Hamilton et al., 2017) fixes this with inductive learning. Instead of memorising one fixed embedding per node, it learns how to aggregate neighbour information. When a new node appears, GraphSAGE runs the same aggregation on its neighbours to produce an embedding on the fly.

Sample and Aggregate#

GraphSAGE has two key ideas:

- Neighbour sampling. Instead of using all neighbours (expensive), sample a fixed number $k$ at each hop.

- Learned aggregation. Apply a trainable function (mean, LSTM, max-pool) to the sampled neighbours.

In plain English: “Sample some neighbours, summarise their features, concatenate with your own, push through a linear layer.”

| Aggregator | How it works |

|---|---|

| Mean | Average neighbour embeddings: $\tfrac{1}{\lvert \mathcal{N}\rvert}\sum_u \mathbf{h}_u$ |

| Max-pool | Element-wise max after a shared MLP: $\max(\sigma(\mathbf{W}_{\text{pool}}\mathbf{h}_u + \mathbf{b}))$ |

| LSTM | Run an LSTM over neighbours (order-sensitive) |

How the Three Differ at a Glance#

GCN gives every neighbour the same (degree-normalised) say. GAT learns who matters and turns up their weight. GraphSAGE picks a manageable subset of neighbours so the whole thing stays affordable on huge graphs.

Implementation: GraphSAGE Layer#

| |

The ability to embed brand-new nodes is the single biggest reason GraphSAGE shows up in production. Pinterest’s PinSage (next section) is a direct descendant.

PinSage: Billion-Scale Recommendation at Pinterest#

PinSage (Ying et al., 2018) is Pinterest’s production GNN, running on 3 billion nodes and 18 billion edges. Three innovations make this possible:

Random Walk Sampling#

Instead of sampling neighbours uniformly, PinSage runs short random walks from each node and keeps the top-$k$ most frequently visited neighbours. This focuses on the most important neighbours, not just the closest ones.

Importance-Weighted Aggregation#

$$\mathbf{h}_v^{(l)} = \sigma\!\Bigl(\mathbf{W}^{(l)}\cdot \bigl[\mathbf{h}_v^{(l-1)} \,\big\|\, \mathrm{AGG}\bigl(\{\alpha_{uv}\, \mathbf{h}_u^{(l-1)} : u\in\mathcal{N}_{\text{top-}k}(v)\}\bigr)\bigr]\Bigr)$$Hard Negative Mining#

During training, PinSage samples hard negatives — items that score high but are not actual positives. This forces the model to make finer distinctions instead of just separating obviously irrelevant items from positives.

Implementation: PinSage Convolution#

| |

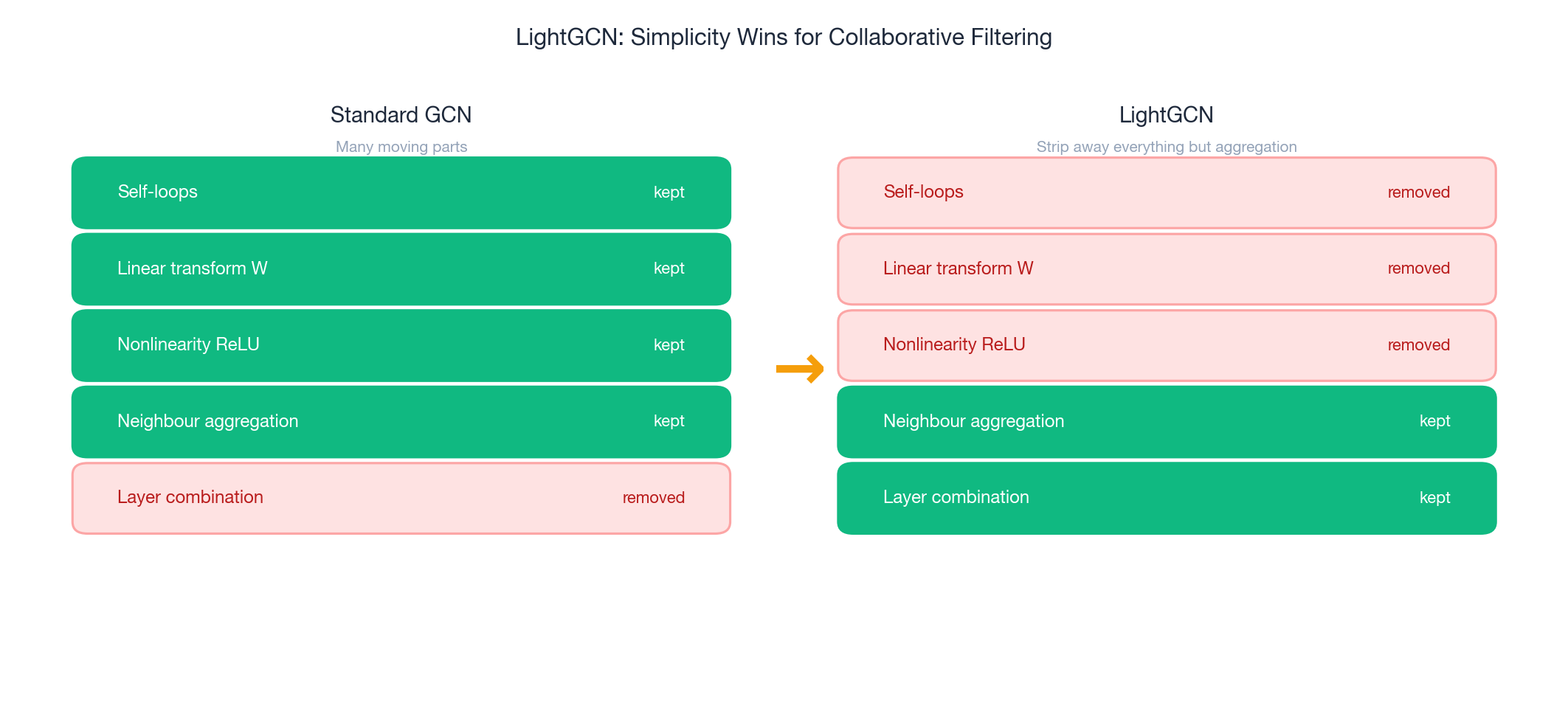

LightGCN: Less Is More#

The Surprising Insight#

LightGCN (He et al., 2020) asks a provocative question: “What if we strip everything out of GCN — no learned weight matrices, no nonlinear activations, no self-loops — and just keep the neighbourhood aggregation?”

The answer, on collaborative filtering, is it works better. The graph structure alone carries enough signal. The feature transforms and activations of a standard GCN actually hurt by adding unnecessary complexity and overfitting risk.

Architecture#

$$\mathbf{e}_u^{(l+1)} = \sum_{i\in\mathcal{N}(u)} \frac{1}{\sqrt{|\mathcal{N}(u)|\cdot|\mathcal{N}(i)|}}\; \mathbf{e}_i^{(l)}$$ $$\mathbf{e}_i^{(l+1)} = \sum_{u\in\mathcal{N}(i)} \frac{1}{\sqrt{|\mathcal{N}(u)|\cdot|\mathcal{N}(i)|}}\; \mathbf{e}_u^{(l)}$$In plain English: “Average your neighbours’ embeddings (normalised by degrees). No activation, no weight matrix. That is one layer.”

$$\mathbf{e}_u = \sum_{l=0}^{L} \alpha_l\, \mathbf{e}_u^{(l)}, \qquad \mathbf{e}_i = \sum_{l=0}^{L} \alpha_l\, \mathbf{e}_i^{(l)}$$with $\alpha_l = \tfrac{1}{L+1}$ (equal weighting). Layer 0 is the raw embedding, layer 1 is one-hop, layer 2 is two-hop, and so on. Combining them gives a multi-scale representation without committing to a single depth.

Why It Works#

- Smoothing effect. Aggregation pulls similar nodes’ embeddings closer — exactly what collaborative filtering wants.

- Layer combination. Mixing layers balances direct signals (layer 0) with higher-order collaborative signals (deeper layers) and dampens over-smoothing.

- Simplicity as regularisation. Fewer parameters means less overfitting on sparse interaction data.

Implementation: LightGCN#

| |

NGCF: Neural Graph Collaborative Filtering#

How It Differs from LightGCN#

NGCF (Wang et al., 2019) takes the opposite philosophy: explicit feature transformations and user-item interaction terms during message passing should give richer embeddings. Where LightGCN strips, NGCF adds.

Message Construction#

$$\mathbf{m}_{u\leftarrow i} = \frac{1}{\sqrt{|\mathcal{N}(u)|\cdot|\mathcal{N}(i)|}}\;\bigl(\mathbf{W}_1\, \mathbf{e}_i^{(l)} + \mathbf{W}_2\, (\mathbf{e}_i^{(l)} \odot \mathbf{e}_u^{(l)})\bigr)$$The $\odot$ (element-wise product) captures how user and item features interact. If a user dimension is high for “action” and the item dimension is high for the same, the product amplifies the signal.

$$\mathbf{e}_u^{(l+1)} = \mathrm{LeakyReLU}\!\bigl(\mathbf{m}_{u\leftarrow u} + \sum_{i\in\mathcal{N}(u)} \mathbf{m}_{u\leftarrow i}\bigr)$$Implementation: NGCF#

| |

LightGCN vs. NGCF: Which Should You Use?#

| LightGCN | NGCF | |

|---|---|---|

| Parameters | Only embeddings | Embeddings + weight matrices |

| Performance | Often better on sparse data | Better when features are rich |

| Overfitting risk | Low | Higher (more parameters) |

| Training speed | Fast | Slower |

| Recommended for | Most CF tasks | Tasks with dense features |

Default choice: LightGCN. Switch to NGCF only with strong item/user features and a large enough dataset to justify the extra parameters.

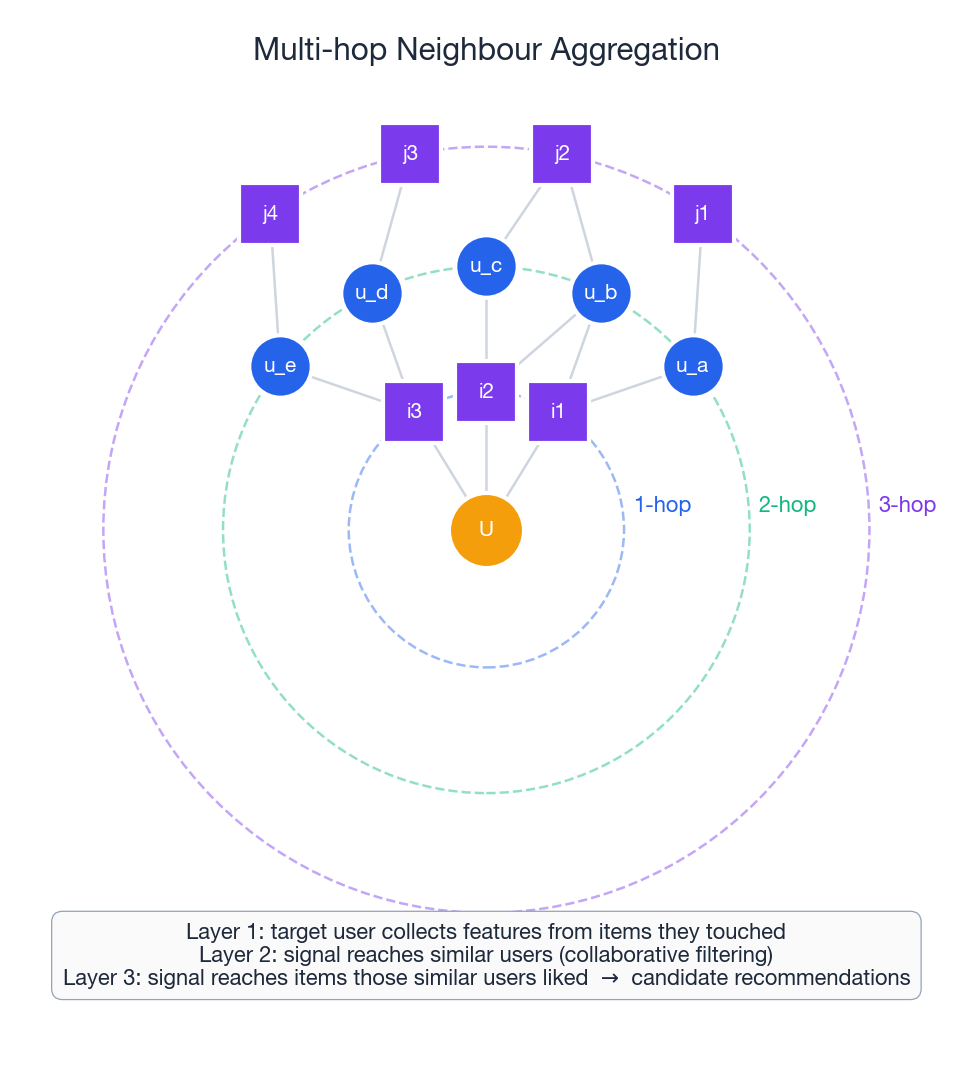

Why Stack Layers? Multi-Hop Aggregation#

The reason recommendation cares about depth at all becomes obvious once you draw the hops:

- Layer 1 — target user collects features from items they touched.

- Layer 2 — signal reaches similar users (the classic collaborative-filtering hop).

- Layer 3 — signal reaches the items those similar users liked: candidate recommendations.

Two layers already capture the “users who liked similar items” pattern that matrix factorisation cannot express. Three layers occasionally help on sparse graphs but flirt with over-smoothing — defer to LightGCN-style layer combination if you want depth without collapse.

Social Recommendation#

The Idea#

Your friends influence what you buy, watch, and listen to. Social recommendation adds social edges (friendships, follows, trust links) to the user-item graph. The bet: socially connected users tend to share preferences.

Graph Structure#

A social recommendation graph has two edge types:

- Interaction edges $(u_i, i_j)$ : user $u_i$ interacted with item $i_j$ .

- Social edges $(u_i, u_j)$ : users $u_i$ and $u_j$ are friends.

Two Mechanisms#

Homophily. Friends have similar tastes because they are friends (shared background, culture).

Social influence. Friends become more similar over time because they influence each other’s choices.

For the model, both reduce to the same prescription: propagate preferences along social edges.

Implementation: Social GCN#

| |

Empirical studies report 5–15% lift in NDCG when social signals are informative — meaningful in production. The failure mode: noisy social ties (random Facebook friends with no shared taste) hurt instead of help. Use attention to down-weight irrelevant social edges, or filter the social graph by interaction overlap before training.

Graph Sampling for Scalability#

The Problem#

Full-batch GNN training means loading the entire graph into memory and computing embeddings for all nodes every forward pass. Fine for MovieLens (100K interactions). Impossible for Pinterest (18 billion edges).

Sampling Strategies#

| Strategy | How it works | Example |

|---|---|---|

| Neighbour sampling | Sample a fixed number of neighbours per node, per layer | GraphSAGE: 10 at hop 1, 5 at hop 2 |

| Node sampling | Sample a subset of nodes, use their neighbourhoods | FastGCN |

| Subgraph sampling | Sample connected subgraphs | Cluster-GCN, GraphSAINT |

| Random walk sampling | Use random walks to find important neighbours | PinSage |

Neighbour sampling is the workhorse: bound the receptive field per node so memory stays predictable, then re-sample every epoch for variance reduction.

Implementation: Neighbour Sampler#

| |

Training Techniques#

BPR Loss (Bayesian Personalised Ranking)#

$$\mathcal{L}_{\text{BPR}} = -\sum_{(u,i,j)} \ln\, \sigma(\hat{r}_{ui} - \hat{r}_{uj}) + \lambda \|\Theta\|^2$$In plain English: “For each user $u$ , make the score of a positive item $i$ (one they interacted with) higher than a negative item $j$ (one they did not). The sigmoid and log turn this into a smooth, differentiable objective.”

- $(u,i,j)$ = (user, positive item, negative item) triplet

- $\hat{r}_{ui} = \mathbf{e}_u^\top \mathbf{e}_i$ = dot-product score

- $\lambda \|\Theta\|^2$ = L2 regularisation

Negative Sampling Strategies#

| Strategy | Description | When to use |

|---|---|---|

| Uniform | Pick random non-interacted items | Baseline |

| Popularity-based | Sample proportional to item popularity | Helps with long-tail |

| Hard negatives | High-scoring negatives | Late-stage refinement |

Training Loop#

| |

Evaluation Metrics#

| |

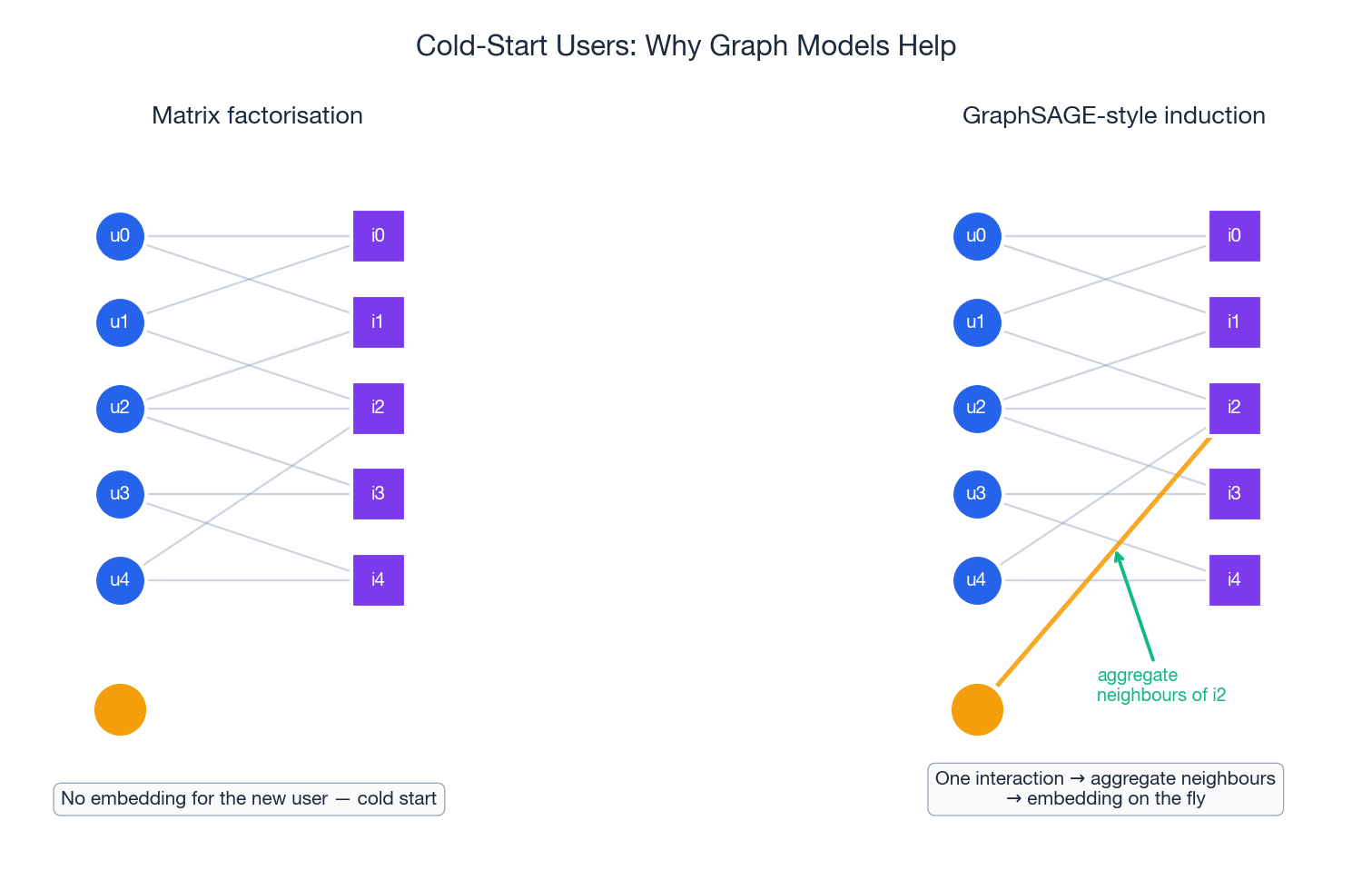

Cold Start: Where Graph Models Shine#

A new user appears with one interaction. Matrix factorisation cannot help — it has no row for them, and adding one means retraining. An inductive GNN like GraphSAGE simply runs the same aggregation function on whatever neighbours the new user has. Even a single interaction yields a meaningful embedding because the aggregation pulls in everything we already know about that item’s other admirers.

A practical recipe for handling cold-start users:

- Inductive aggregation. Use GraphSAGE/PinSage-style models so any node with at least one neighbour gets an embedding for free.

- Feature initialisation. Seed cold-start nodes with side-feature embeddings (demographics, device, registration channel) before any aggregation.

- Mean-of-similar fallback. Until a user has enough interactions, blend their embedding with the mean of users who share the same side features.

- Meta-learning (MAML-style). Train the model to adapt rapidly to new users from a few interactions if you can afford it.

Complete Example: LightGCN on MovieLens#

| |

FAQ#

Why do GNNs outperform matrix factorisation for recommendation?#

Matrix factorisation learns each embedding independently — it never asks “what do my neighbours look like?” GNNs explicitly propagate collaborative signals through the graph, so similar users (connected through shared items) end up with similar embeddings as a side-effect of the architecture. Multi-layer GNNs capture higher-order patterns (“users who liked items similar to items you liked”) that matrix factorisation cannot express in a single dot product.

GCN vs. GAT vs. GraphSAGE — when do I use which?#

| GCN | GAT | GraphSAGE | |

|---|---|---|---|

| Strengths | Simple, efficient | Adaptive neighbour weighting | Scalable, handles new nodes |

| Weaknesses | Needs full graph, fixed weights | Expensive for large neighbourhoods | Sampling introduces variance |

| Best for | Small/medium static graphs | Heterogeneous edges | Large, evolving graphs |

Rule of thumb: start with LightGCN (simplified GCN) for collaborative filtering. Reach for GraphSAGE when you need inductive learning, and GAT when different neighbours carry very different importance.

How many GNN layers should I use?#

Two to three layers is standard for recommendation:

- 1 layer — direct neighbours only; misses collaborative patterns.

- 2 layers — friends-of-friends; sweet spot for most tasks.

- 3 layers — three-hop patterns; slight gain, watch for over-smoothing.

- 4+ layers — over-smoothing dominates; embeddings become indistinguishable.

Use layer combination (LightGCN-style) to get the benefit of multiple scales without committing to a single deep stack.

How do I handle cold-start users in a GNN?#

- GraphSAGE-style induction. Aggregation works for any node with at least one neighbour.

- Feature initialisation. Initialise the new user’s embedding from side features (demographics, device) or the mean of similar users.

- Meta-learning. Use MAML-style approaches to adapt rapidly from a few interactions.

- Hybrid models. Combine GNN embeddings with content-based features so cold nodes still have useful representations.

See the cold-start figure above for the inductive case.

What about computational cost?#

| Model | Forward pass | Memory |

|---|---|---|

| GCN / LightGCN | $O(L\cdot\lvert E\rvert\cdot d)$ | $O(\lvert V\rvert\cdot d + \lvert E\rvert)$ |

| GAT | $O(L\cdot\lvert E\rvert\cdot d\cdot H)$ | $O(\lvert V\rvert\cdot d\cdot H + \lvert E\rvert)$ |

| GraphSAGE (sampled) | $O(L\cdot\lvert V\rvert\cdot k\cdot d)$ | $O(\lvert V\rvert\cdot d + k\cdot\lvert V\rvert)$ |

with $L$ = layers, $|E|$ = edges, $d$ = embedding dim, $H$ = attention heads, $k$ = sampled neighbours. For graphs with billions of edges, sampling (GraphSAGE, PinSage) or subgraph partitioning (Cluster-GCN) is mandatory.

Does social recommendation always help?#

Only when social ties genuinely reflect shared preferences. Empirical studies show 5–15% NDCG lift on informative social graphs. Noisy social connections (random follower lists) can hurt. Use attention to down-weight irrelevant edges, or pre-filter the social graph by interaction overlap.

Can GNNs handle dynamic graphs?#

Yes, with modifications:

- Time-aware aggregation — weight neighbours by recency.

- Temporal encoding — add time embeddings to edges.

- Incremental updates — fine-tune on new edges instead of retraining.

- Temporal GNNs — TGN and friends handle streaming graphs natively.

| |

How do I prevent over-smoothing?#

- Limit depth to 2–3 layers.

- Layer combination (LightGCN-style) — equal-weight all layers so the final representation still contains the original embedding.

- Residual connections — $\mathbf{h}^{(l+1)} = \mathbf{h}^{(l)} + \mathrm{GNN}(\mathbf{h}^{(l)})$ .

- Edge dropout — randomly drop edges during training to reduce structural over-reliance.

Summary#

- Recommendation data is inherently graph-structured. GNNs exploit this structure by propagating collaborative signals through neighbourhood aggregation.

- LightGCN proves simplicity wins. Stripping GCN down to bare aggregation often outperforms heavier architectures on collaborative filtering.

- GraphSAGE enables inductive learning. Critical for production systems where new users and items appear constantly — and the cleanest path to handling cold start.

- PinSage demonstrates billion-scale feasibility. Random-walk sampling and importance weighting make GNNs practical at Pinterest scale.

- Social signals provide 5–15% lift — but only when social connections genuinely reflect shared preferences.

- Two to three layers is the sweet spot. Deeper risks over-smoothing; layer combination buys depth without collapse.

Recommendation Systems 16 parts

- 01 Recommendation Systems (1): Fundamentals and Core Concepts

- 02 Recommendation Systems (2): Collaborative Filtering and Matrix Factorization

- 03 Recommendation Systems (3): Deep Learning Foundations

- 04 Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

- 05 Recommendation Systems (5): Embedding and Representation Learning

- 06 Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

- 07 Recommendation Systems (7): Graph Neural Networks and Social Recommendation you are here

- 08 Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

- 09 Recommendation Systems (9): Multi-Task Learning and Multi-Objective Optimization

- 10 Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

- 11 Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

- 12 Recommendation Systems (12): Large Language Models and Recommendation

- 13 Recommendation Systems (13): Fairness, Debiasing, and Explainability

- 14 Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

- 15 Recommendation Systems (15): Real-Time Recommendation and Online Learning

- 16 Recommendation Systems (16): Industrial Architecture and Best Practices