Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

Learn how knowledge graphs supercharge recommendation systems by adding semantic understanding. Covers RippleNet, KGCN, KGAT, CKE, and path-based reasoning -- with intuitive explanations, real-world analogies, and working Python code.

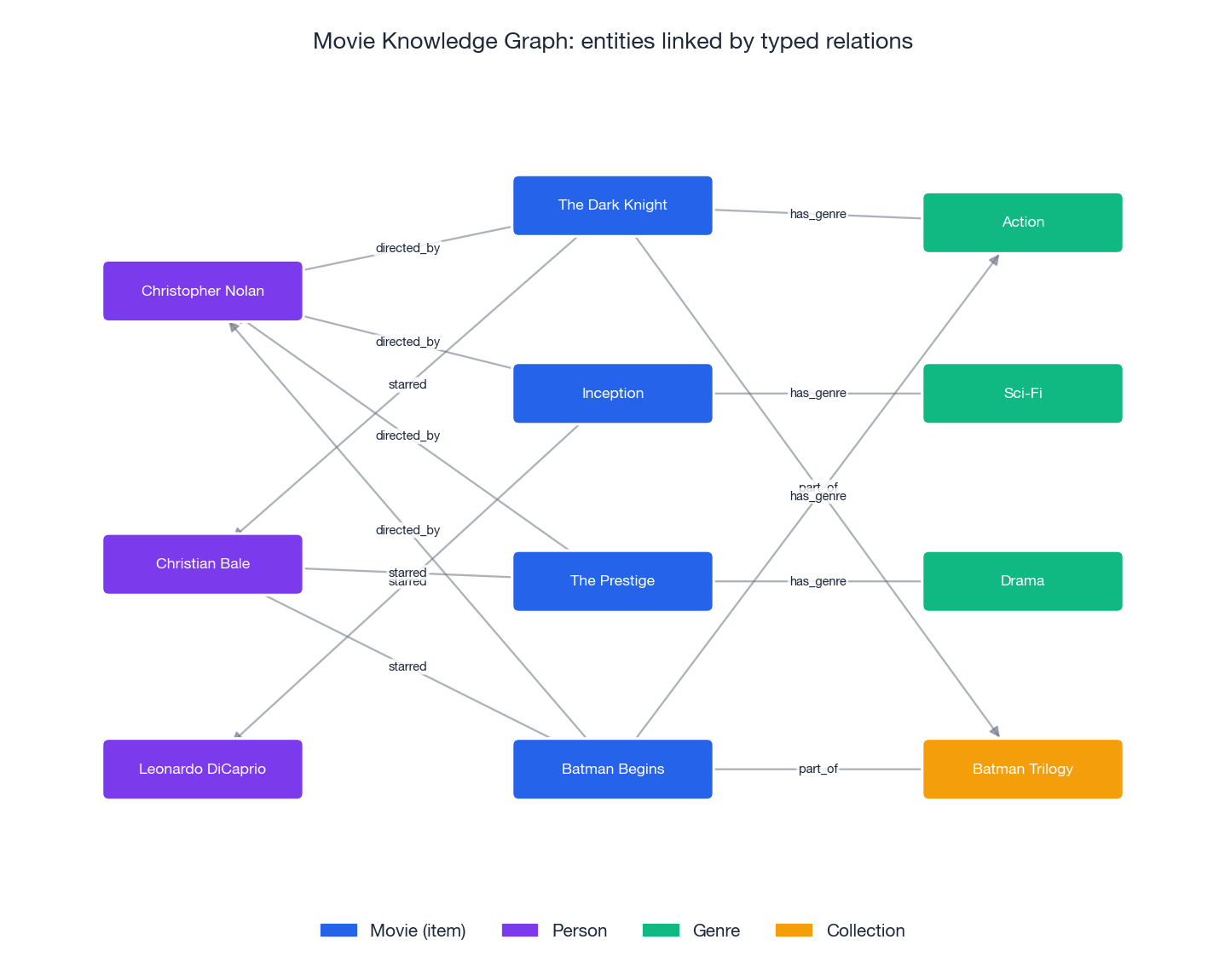

When you watch The Dark Knight on a streaming platform, a knowledge-aware system doesn’t just log that you watched it. It knows that Christian Bale played Batman, Christopher Nolan directed it, it’s part of the Batman trilogy, and it shares cinematic DNA with other cerebral action films. This rich semantic web is a knowledge graph (KG): a structured network of entities (movies, actors, directors, genres) connected by typed relations (acted_in, directed_by, part_of).

Why does this matter for recommendations? Pure collaborative filtering has a blind spot: it can only recommend items with existing interaction history. A brand-new film with zero views is invisible. But if that film shares a director with movies you love, a knowledge graph sees the connection right away. KGs transform recommendations from raw pattern matching into semantic reasoning.

What You Will Learn#

- What knowledge graphs are and how they encode real-world facts

- How KG embeddings (TransE, TransR, DistMult) represent entities and relations as vectors

- Propagation-based methods: RippleNet spreads user preferences like ripples in water

- Graph convolutional methods: KGCN and KGAT learn item representations from KG neighbors

- Embedding fusion: CKE combines collaborative, structural, and textual signals

- Path-based reasoning for explainable recommendations

- Working PyTorch code for every major method

Prerequisites#

- Basic Python and PyTorch (tensors, nn.Module, training loops)

- Familiarity with graph neural networks (Part 7 of this series)

- Comfort with embedding concepts (Part 5)

Foundations of Knowledge Graphs#

What Is a Knowledge Graph?#

A knowledge graph stores facts as (head, relation, tail) triples. Each triple represents one atomic fact:

- (The Dark Knight, directed_by, Christopher Nolan)

- (The Dark Knight, starred, Christian Bale)

- (The Dark Knight, has_genre, Action)

- (Christian Bale, acted_in, The Prestige)

Formally, a knowledge graph is a set $\mathcal{G} = \{(h, r, t)\}$ where $h \in \mathcal{E}$ is the head entity, $r \in \mathcal{R}$ is the relation type, and $t \in \mathcal{E}$ is the tail entity. $\mathcal{E}$ is the set of all entities and $\mathcal{R}$ is the set of all relation types.

Analogy. Think of a knowledge graph as a Wikipedia-scale fact database, but stored as a graph instead of prose. Each article is a node, and every link between articles is a labeled edge — the label explains why they are connected.

Real-World Knowledge Graphs#

| Knowledge Graph | Entities | Facts | Used by |
|---|---|---|---|
| Freebase | 39M | 1.9B | Google (deprecated) |
| Wikidata | 100M+ | 1.4B+ | Wikipedia, Google |
| DBpedia | 6M | 580M | Academic research |
| Amazon Product Graph | Billions | Trillions | Amazon recommendations |

Knowledge Graph Structure for Recommendation#

Recommendation KGs are typically heterogeneous — they contain multiple entity and relation types:

Entity types

- Users: $U = \{u_1, u_2, \ldots, u_m\}$

- Items: $I = \{i_1, i_2, \ldots, i_n\}$

- Attributes: genres, actors, directors, brands, …

Relation types

- User-item: (user, interacted, item)

- Item-attribute: (movie, has_genre, Action), (movie, directed_by, Nolan)

- Attribute-attribute: (Nolan, collaborated_with, Bale)

Knowledge Graph Embeddings#

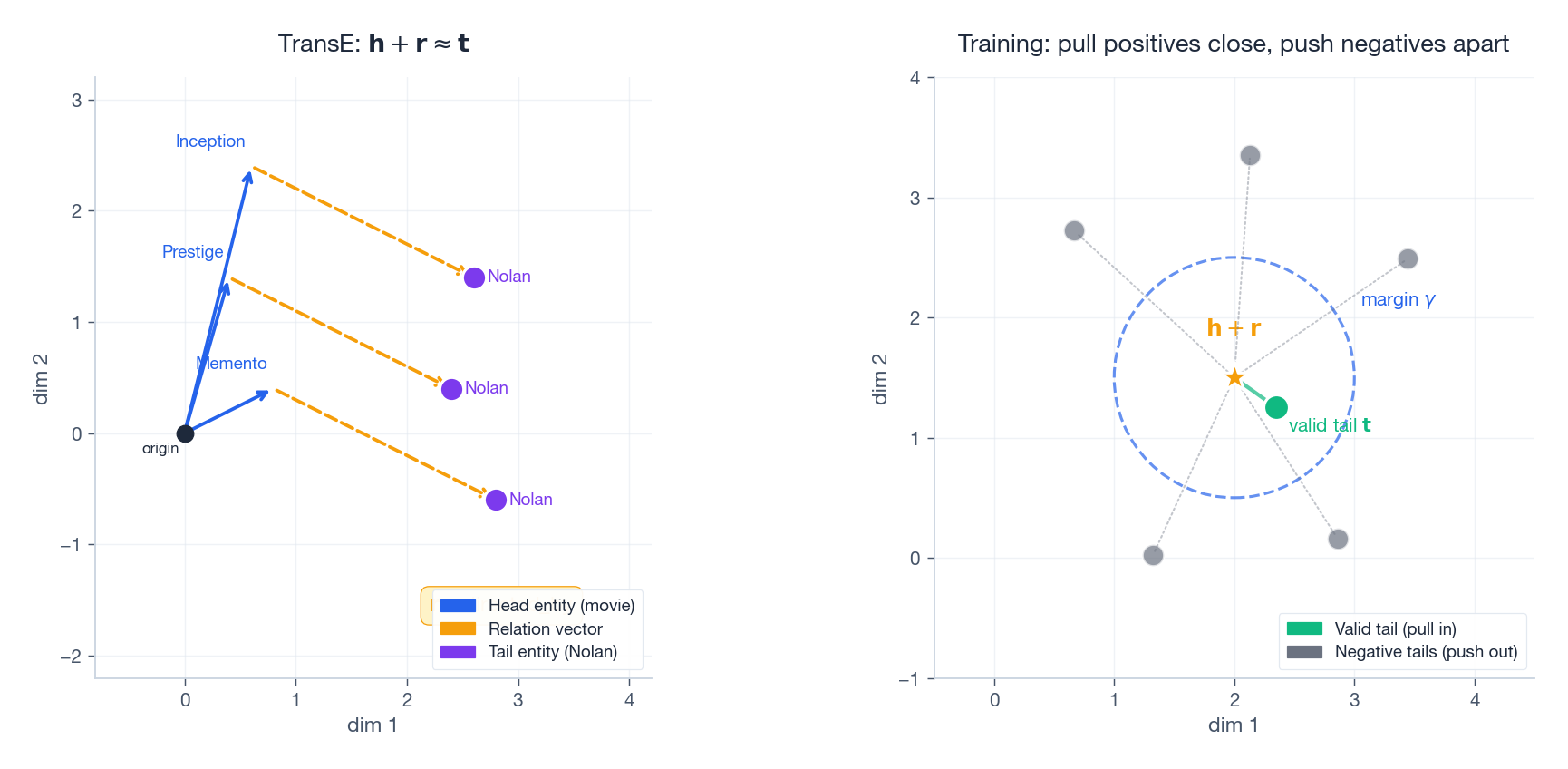

Before feeding a knowledge graph into a recommendation model, we must convert entities and relations into dense vectors. The key question is: how do we train these embeddings to respect the graph’s structure?
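For translation-based models such as TransE, the usual answer is a margin ranking loss over valid triples and corrupted ones. The formula below is the standard textbook form, given here as background; $(h', r, t')$ denotes a corrupted triple obtained by replacing the head or the tail with a random entity:

$$\mathcal{L}_\text{KG} = \sum_{(h,r,t) \in \mathcal{G}} \; \sum_{(h',r,t') \notin \mathcal{G}} \max\bigl(0,\; \gamma + \lVert \mathbf{h} + \mathbf{r} - \mathbf{t} \rVert - \lVert \mathbf{h}' + \mathbf{r} - \mathbf{t}' \rVert\bigr)$$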

Plain English. “Push valid triples close together ($\mathbf{h} + \mathbf{r} \approx \mathbf{t}$) and invalid triples far apart. The margin $\gamma$ controls how much separation you require.” The right panel of the figure above shows this: the green dot (valid tail) is pulled inside the margin circle, while gray negatives are pushed outside.

| Method | Scoring function | Best for |
|---|---|---|
| TransE | $\lVert\mathbf{h} + \mathbf{r} - \mathbf{t}\rVert$ | Simple 1-to-1 relations |
| TransR | $\lVert\mathbf{h} M_r + \mathbf{r} - \mathbf{t} M_r\rVert$ | Relations needing different vector spaces |
| DistMult | $\mathbf{h}^T \text{diag}(\mathbf{r})\, \mathbf{t}$ | Symmetric relations |
| ComplEx | $\text{Re}(\mathbf{h}^T \text{diag}(\mathbf{r})\, \bar{\mathbf{t}})$ | Asymmetric relations |

For TransE and TransR the score is a distance (lower means more plausible). For DistMult and ComplEx it is a similarity (higher means more plausible).

TransE analogy. Think of cities on a map. “Paris” + “fly_east_2000km” should land near “Moscow.” “Paris” + “fly_south_1500km” should land near “Algiers.” The relation vector acts like a displacement on the map.
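To make the map analogy concrete, here is a minimal pure-Python sketch of the TransE distance and the margin loss. The 2-d vectors are hand-picked for illustration, and the function names `transe_distance` and `margin_loss` are hypothetical; in a real model the embeddings would be learned.

```python
import math

def transe_distance(h, r, t):
    """L2 distance between h + r and t (lower means more plausible)."""
    return math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))

def margin_loss(pos_dist, neg_dist, gamma=1.0):
    """Hinge loss: zero once the negative is at least gamma farther away than the positive."""
    return max(0.0, gamma + pos_dist - neg_dist)

# Hypothetical 2-d embeddings chosen by hand to mirror the map analogy.
paris = [0.0, 0.0]
fly_east = [2.0, 0.0]
moscow = [2.0, 0.1]
algiers = [0.0, -1.5]

d_pos = transe_distance(paris, fly_east, moscow)    # valid triple
d_neg = transe_distance(paris, fly_east, algiers)   # corrupted tail
loss = margin_loss(d_pos, d_neg)
print(d_pos, d_neg, loss)
```

The valid tail sits almost exactly at `paris + fly_east`, the corrupted tail is far away, and the hinge loss is already zero because the gap exceeds the margin.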

Implementation: Knowledge Graph Construction#
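The following is a minimal sketch of a triple store, not the original implementation from this post. The class name `KnowledgeGraph`, its methods, and the `_inv` suffix for reverse relations are all assumptions made for illustration. It maps names to integer ids and keeps an adjacency index so that neighbors can be looked up quickly.

```python
from collections import defaultdict

class KnowledgeGraph:
    """Tiny in-memory triple store with integer ids and an adjacency index."""

    def __init__(self):
        self.entity2id = {}
        self.relation2id = {}
        self.triples = []                    # list of (head_id, relation_id, tail_id)
        self.neighbors = defaultdict(list)   # head_id -> [(relation_id, tail_id), ...]

    def _get_id(self, table, name):
        # Assign the next free integer id the first time a name is seen.
        if name not in table:
            table[name] = len(table)
        return table[name]

    def add_triple(self, head, relation, tail, inverse=True):
        h = self._get_id(self.entity2id, head)
        r = self._get_id(self.relation2id, relation)
        t = self._get_id(self.entity2id, tail)
        self.triples.append((h, r, t))
        self.neighbors[h].append((r, t))
        if inverse:
            # Reverse edge, so that multi-hop walks can travel from attributes back to items.
            r_inv = self._get_id(self.relation2id, relation + "_inv")
            self.triples.append((t, r_inv, h))
            self.neighbors[t].append((r_inv, h))


kg = KnowledgeGraph()
for h, r, t in [
    ("The Dark Knight", "directed_by", "Christopher Nolan"),
    ("The Dark Knight", "starred", "Christian Bale"),
    ("The Dark Knight", "has_genre", "Action"),
    ("Christian Bale", "acted_in", "The Prestige"),
]:
    kg.add_triple(h, r, t)

print(len(kg.entity2id), len(kg.relation2id), len(kg.triples))
```

Adding inverse edges doubles the number of triples, but it lets propagation-based models reach "other films by the same director" in two hops.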


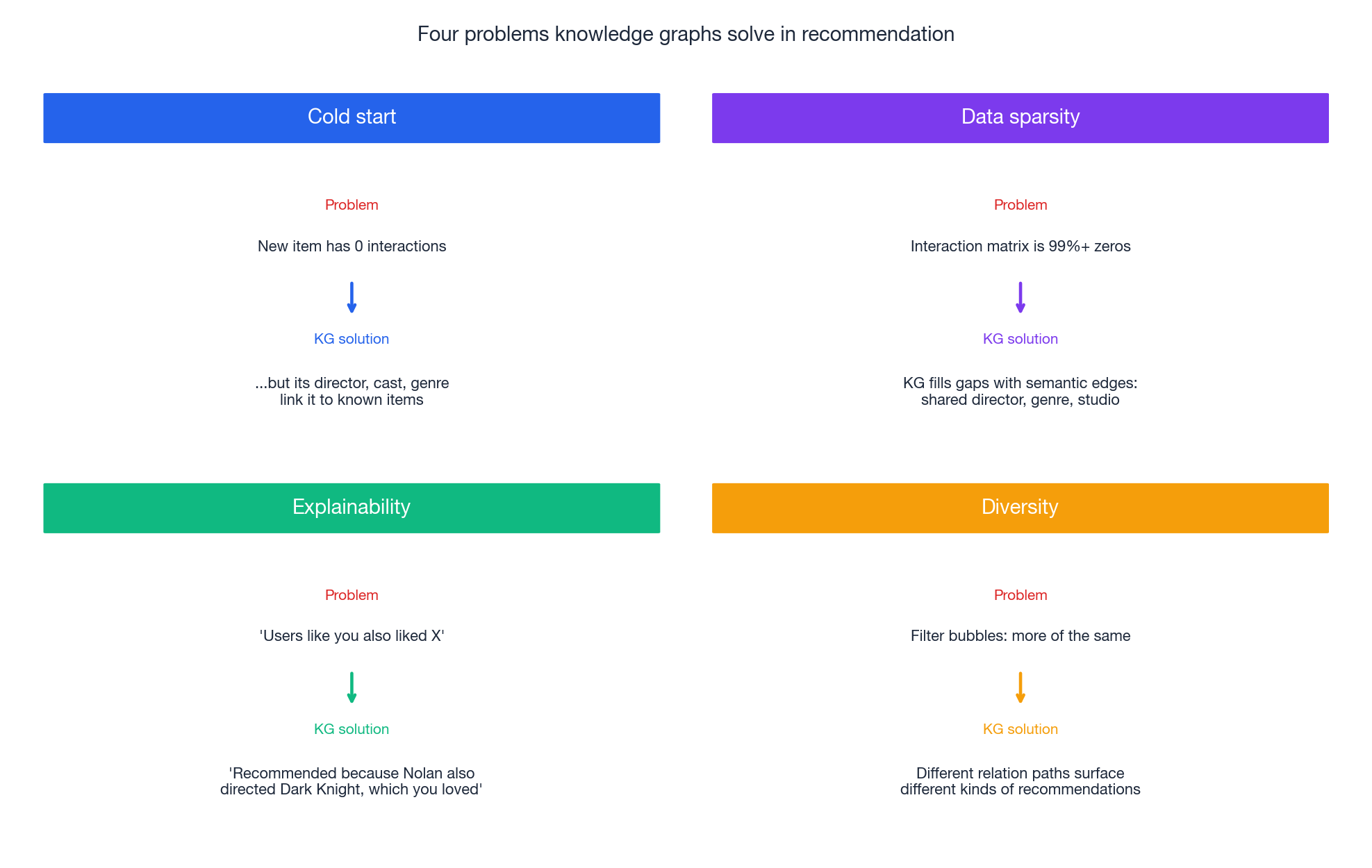

Why Knowledge Graphs Help Recommendation#

Knowledge graphs solve four hard problems that plague pure collaborative filtering.

Cold Start#

Problem. A new movie with zero interactions is invisible to collaborative filtering — there’s nothing for the model to compare against.

KG solution. Even on day one, the new movie has attributes (director, actors, genre) that connect it to the rest of the graph. If you loved Nolan’s other films, the KG can recommend his new movie immediately, without any interaction data.
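As a rough illustration of that idea, the sketch below scores a zero-interaction film purely by attribute overlap with films the user liked. The item names, attribute sets, and the Jaccard-based `cold_start_score` are hypothetical; the models later in this post learn such similarities instead of counting overlaps.

```python
# Hypothetical item -> attribute sets, as they would be read off the KG.
item_attrs = {
    "The Dark Knight": {"Christopher Nolan", "Christian Bale", "Action"},
    "Inception": {"Christopher Nolan", "Leonardo DiCaprio", "Sci-Fi"},
    "New Nolan Film": {"Christopher Nolan", "Action"},   # zero interactions so far
    "Romantic Comedy X": {"Director Y", "Comedy"},
}

def jaccard(a, b):
    """Overlap of two attribute sets, in [0, 1]."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cold_start_score(candidate, liked_items):
    """Mean attribute overlap between a candidate and the user's liked items."""
    overlaps = [jaccard(item_attrs[candidate], item_attrs[i]) for i in liked_items]
    return sum(overlaps) / len(overlaps)

liked = ["The Dark Knight", "Inception"]
scores = {c: cold_start_score(c, liked) for c in ["New Nolan Film", "Romantic Comedy X"]}
print(scores)
```

The new film gets a non-zero score without a single interaction, while the unrelated comedy scores zero.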

Data Sparsity#

Problem. Most users interact with a tiny fraction of items. The interaction matrix is 99%+ zeros, leaving the model with little signal to work with.

KG solution. The knowledge graph fills the gaps with dense semantic connections. Even if two items share no users, they might share a director, genre, or production studio — and that link still carries information.

Explainability#

Problem. “Users who liked X also liked Y” is not a satisfying explanation.

KG solution. “We recommend Inception because it was directed by Christopher Nolan, who also directed The Dark Knight, which you rated 5 stars.” The KG provides concrete, interpretable reasoning paths.

Diversity#

Problem. Collaborative filtering tends to create filter bubbles — recommending more of the same.

KG solution. Different relation paths lead to different kinds of recommendations. Following same_director yields different results than same_genre or same_actor, naturally diversifying the recommendation list.

Concrete Example: After Watching The Dark Knight#

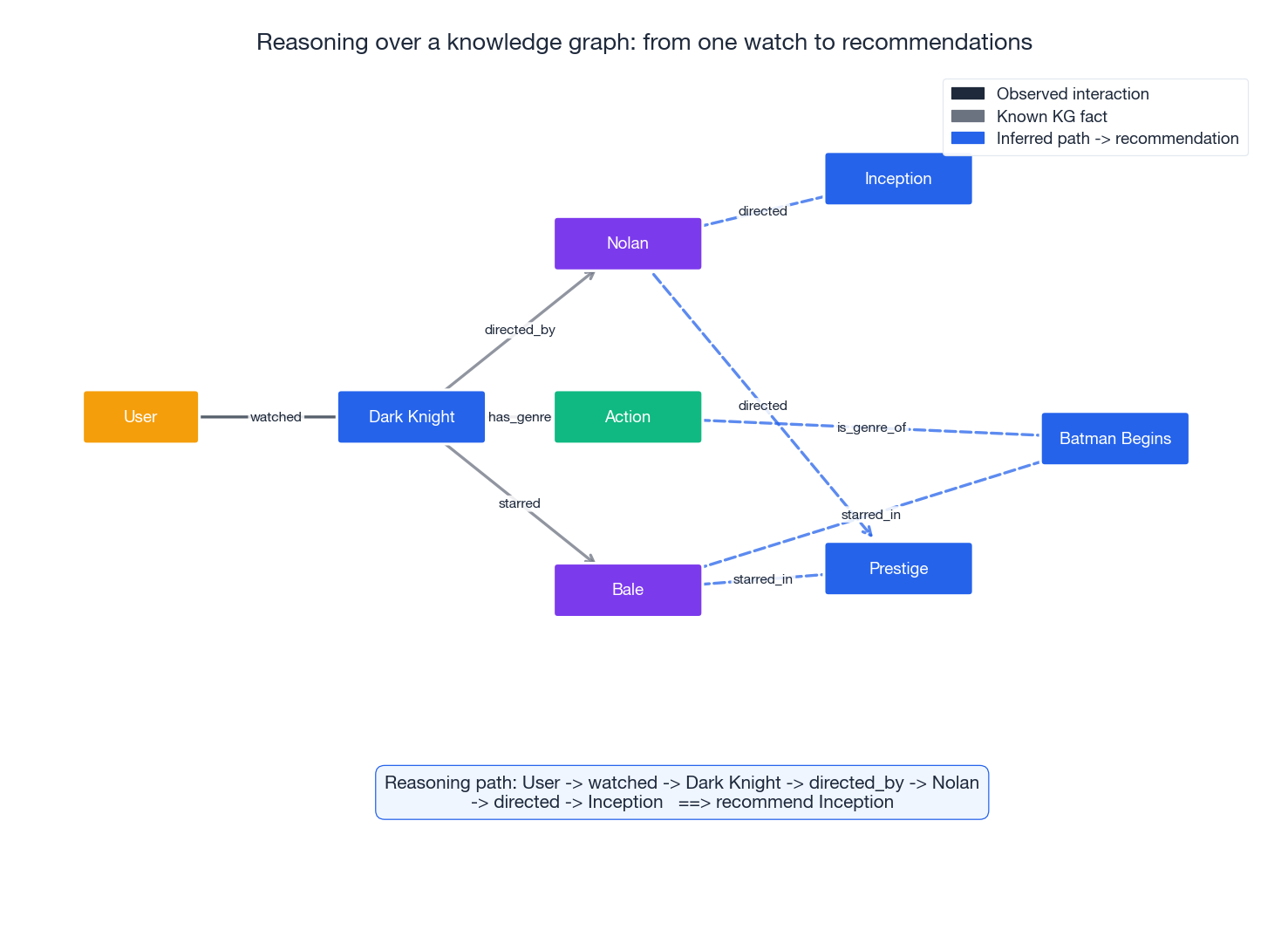

The figure traces explicit paths from a user’s single watch to four candidate movies:

- Inception: via Dark Knight -> directed_by -> Nolan -> directed -> Inception

- The Prestige: two converging paths through Nolan and Bale (a strong signal)

- Batman Begins: via shared cast and shared genre

The system can produce a different ranking and an explanation for each candidate, something pure CF cannot do.

Types of KG-Enhanced Methods#

| Category | Approach | Key models |
|---|---|---|
| Propagation-based | Spread user preferences through the KG | RippleNet |
| Graph convolutional | Learn item embeddings by aggregating KG neighbors | KGCN, KGAT |
| Embedding-based | Learn joint embeddings of users, items, and KG entities | CKE |
| Path-based | Reason over multi-hop paths for explainability | KPRN, PathRec |

RippleNet: Preference Propagation#

The Ripple Analogy#

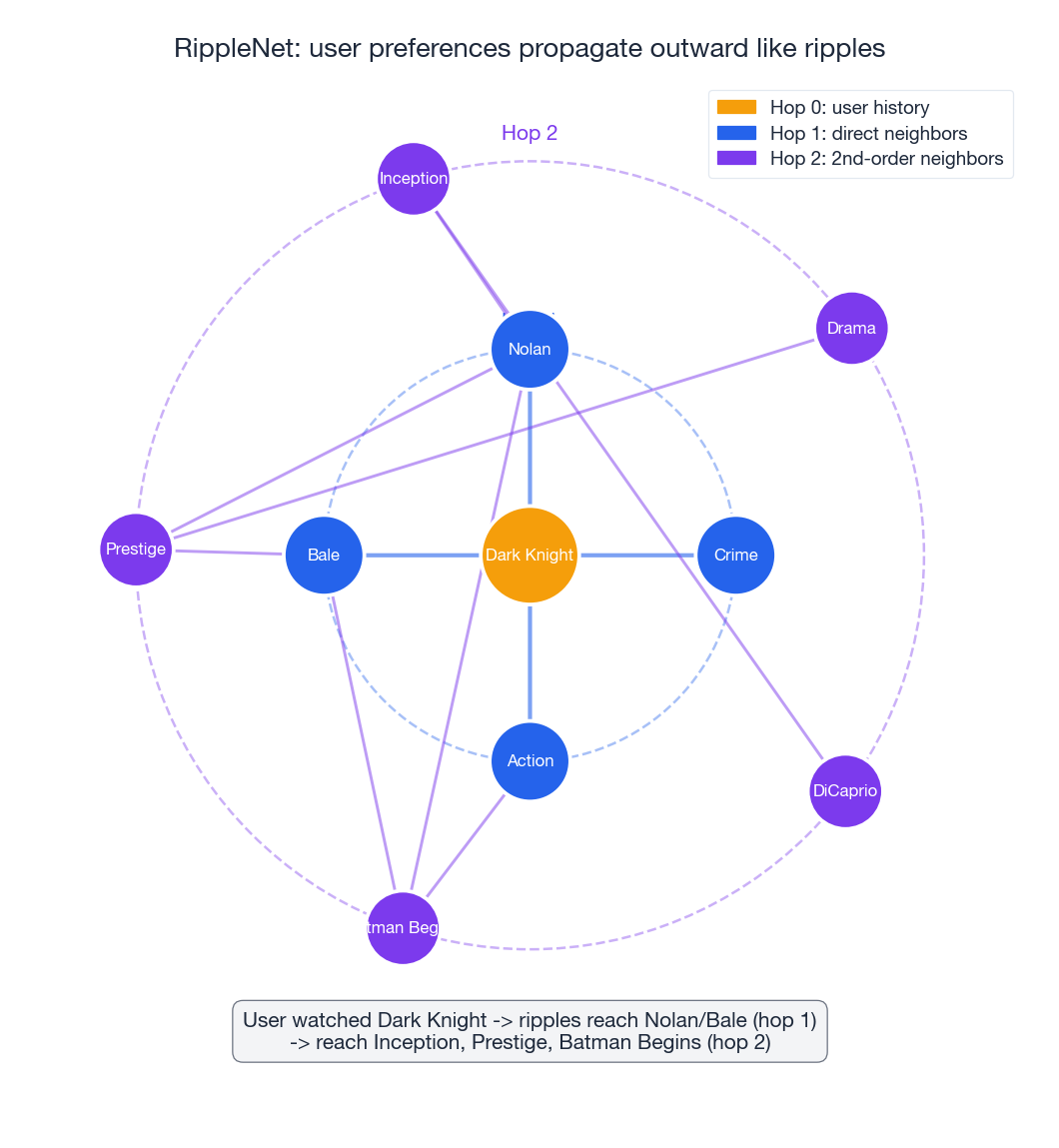

Drop a stone in a pond and ripples spread outward. RippleNet (Wang et al., CIKM 2018) does the same thing with user preferences: when a user interacts with an item, that preference ripples outward through the knowledge graph, activating related entities at increasing distances.

How It Works#

Given user $u$ with historical items $V_u = \{v_1, v_2, \ldots\}$:

- Hop 0: Start with the user’s items as the initial preference set $\mathcal{S}_u^0 = V_u$.

- Hop 1: Find every entity connected to $\mathcal{S}_u^0$ via any relation. These form $\mathcal{S}_u^1$.

- Hop 2: Find every entity connected to $\mathcal{S}_u^1$. These form $\mathcal{S}_u^2$.

- Aggregate: Compute the user’s enhanced embedding by attention-weighting entities at each hop.

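Plain English. “How relevant is this KG entity to the item we are scoring? Use the relation matrix $\mathbf{R}$ to measure compatibility.” For a candidate item embedding $\mathbf{v}$ and the $i$-th triple $(h_i, r_i, t_i)$ at hop $k$ (head in $\mathcal{S}_u^{k-1}$, tail in $\mathcal{S}_u^k$), the relevance weight is a softmax over that hop's triples:

$$p_i^k = \frac{\exp\bigl(\mathbf{v}^T \mathbf{R}_{r_i} \mathbf{h}_i\bigr)}{\sum_{j} \exp\bigl(\mathbf{v}^T \mathbf{R}_{r_j} \mathbf{h}_j\bigr)}$$

The hop-$k$ response is the weighted sum of the tail embeddings, and the user embedding combines the responses of all hops:

$$\mathbf{o}_u^k = \sum_{i} p_i^k\, \mathbf{t}_i$$

$$\mathbf{u} = \sum_{k=1}^{H} \alpha_k\, \mathbf{o}_u^k$$

Hop 0 contains only the user's items and contributes no triples, so the sum starts at $k = 1$. The original paper sums the hop responses directly, which corresponds to $\alpha_k = 1$.

Walkthrough. A user watched The Dark Knight: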

- Hop 0: {Dark Knight}

- Hop 1: {Nolan, Bale, Action, Crime}

- Hop 2: {Inception, Prestige, Batman Begins, DiCaprio, Drama, …}

The ripples discover that this user might like Inception (connected through Nolan) and The Prestige (connected through both Nolan and Bale — a doubly-supported signal).
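The walkthrough can be reproduced with a few lines of Python. This is a sketch on a hand-written toy KG (it omits the Crime genre and a few hop-2 entities for brevity), and `ripple_sets` is a hypothetical helper name.

```python
from collections import defaultdict

# Toy KG from the walkthrough; triples and relation names are illustrative.
triples = [
    ("Dark Knight", "directed_by", "Nolan"),
    ("Dark Knight", "starred", "Bale"),
    ("Dark Knight", "has_genre", "Action"),
    ("Nolan", "directed", "Inception"),
    ("Nolan", "directed", "Prestige"),
    ("Bale", "acted_in", "Prestige"),
    ("Bale", "acted_in", "Batman Begins"),
]

out_edges = defaultdict(list)
for h, r, t in triples:
    out_edges[h].append((h, r, t))

def ripple_sets(seed_items, n_hops):
    """Return, per hop, the triples whose head was reached at the previous hop."""
    frontier, hops = set(seed_items), []
    for _ in range(n_hops):
        hop_triples = [tr for e in sorted(frontier) for tr in out_edges[e]]
        hops.append(hop_triples)
        frontier = {t for _, _, t in hop_triples}
    return hops

hops = ripple_sets(["Dark Knight"], n_hops=2)
hop1_tails = {t for _, _, t in hops[0]}
hop2_tails = [t for _, _, t in hops[1]]
print(hop1_tails, hop2_tails)
```

The Prestige appears twice among the hop-2 tails, once through Nolan and once through Bale, which is the doubly-supported signal described above.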

Implementation: RippleNet#
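What follows is a simplified, dependency-free sketch of the RippleNet scoring step, not the original implementation. Two assumptions keep it short: each relation matrix is diagonal (stored as a vector), and the candidate item embedding `v` is reused as the query at every hop. All embeddings are hand-picked toy values, and the function names are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def hop_response(v, hop_triples, ent, rel):
    """o = sum_i p_i * t_i with p_i = softmax_i(v^T diag(r_i) h_i)."""
    logits = [sum(vj * rj * hj for vj, rj, hj in zip(v, rel[r], ent[h]))
              for h, r, _ in hop_triples]
    probs = softmax(logits)
    return [sum(p * ent[t][j] for p, (_, _, t) in zip(probs, hop_triples))
            for j in range(len(v))]

def ripplenet_score(v, hops, ent, rel):
    """Sum the hop responses into a user vector, then apply sigmoid(u . v)."""
    u = [0.0] * len(v)
    for hop_triples in hops:
        o = hop_response(v, hop_triples, ent, rel)
        u = [a + b for a, b in zip(u, o)]
    return 1.0 / (1.0 + math.exp(-sum(a * b for a, b in zip(u, v))))

# Toy embeddings (assumed values, not learned).
ent = {"Dark Knight": [1.0, 0.0], "Nolan": [0.8, 0.2], "Action": [0.1, 0.9]}
rel = {"directed_by": [2.0, 1.0], "has_genre": [0.0, 1.0]}
hops = [[("Dark Knight", "directed_by", "Nolan"),
         ("Dark Knight", "has_genre", "Action")]]
v = [1.0, 0.0]   # candidate item embedding

o1 = hop_response(v, hops[0], ent, rel)
score = ripplenet_score(v, hops, ent, rel)
print(o1, score)
```

With these values the `directed_by` triple receives most of the attention, so the hop response is pulled toward the Nolan embedding.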


KGCN: Knowledge Graph Convolutional Networks#

A Different Perspective#

While RippleNet propagates user preferences through the KG, KGCN (Wang et al., WWW 2019) takes the opposite stance: it builds better item representations by aggregating information from each item’s KG neighborhood.

Analogy. RippleNet asks, “Starting from what you liked, what is nearby in the knowledge graph?” KGCN asks, “For each candidate item, what does its KG neighborhood tell us about it?”

How KGCN Works#

For an item $i$ with KG neighbors $\mathcal{N}_i$, KGCN:

- Samples $K$ neighbors from the KG.

- Weights each neighbor by a learned, relation-aware attention score.

- Aggregates the weighted neighbor embeddings.

- Combines the aggregate with the item’s own embedding.

Plain English. “Look at what is connected to this item in the KG (actors, directors, genres). Weight each connection by how important that relation type is. Blend the neighborhood signal with the item’s own embedding.”

Implementation: KGCN#
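Below is a minimal single-layer sketch of the four steps (sample, weight, aggregate, combine) in plain Python. It is an illustration under assumptions, not the original code: the relation weight is the dot product between the user and relation embeddings, the combine step is the sum aggregator `tanh(W (v + v_N) + b)`, and all names and toy values are hypothetical.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def sample_neighbors(neighbors, k, rng):
    """Fixed-size neighborhood: without replacement if possible, else with replacement."""
    if len(neighbors) >= k:
        return rng.sample(neighbors, k)
    return [rng.choice(neighbors) for _ in range(k)]

def kgcn_layer(user, item_vec, neighbors, rel_emb, ent_emb, W, b):
    """One KGCN layer with the sum aggregator: tanh(W (v + v_N) + b)."""
    # User-specific relation scores: how much does this user care about each relation?
    weights = softmax([dot(user, rel_emb[r]) for r, _ in neighbors])
    # Weighted neighborhood representation v_N.
    v_n = [sum(w * ent_emb[e][j] for w, (_, e) in zip(weights, neighbors))
           for j in range(len(item_vec))]
    mixed = [a + c for a, c in zip(item_vec, v_n)]
    return [math.tanh(dot(row, mixed) + bias) for row, bias in zip(W, b)]

rng = random.Random(0)
rel_emb = {"directed_by": [3.0, 0.0], "has_genre": [0.0, 0.0]}
ent_emb = {"Nolan": [1.0, 0.0], "Action": [0.0, 1.0]}
kg_neighbors = [("directed_by", "Nolan"), ("has_genre", "Action")]

user = [1.0, 0.0]                       # this user cares about directors
item = [0.0, 0.0]
W, b = [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]

sampled = sample_neighbors(kg_neighbors, k=2, rng=rng)
out = kgcn_layer(user, item, sampled, rel_emb, ent_emb, W, b)
print(out)
```

Because the relation weights depend on the user, the same item gets a different representation for a user who cares about genres rather than directors.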


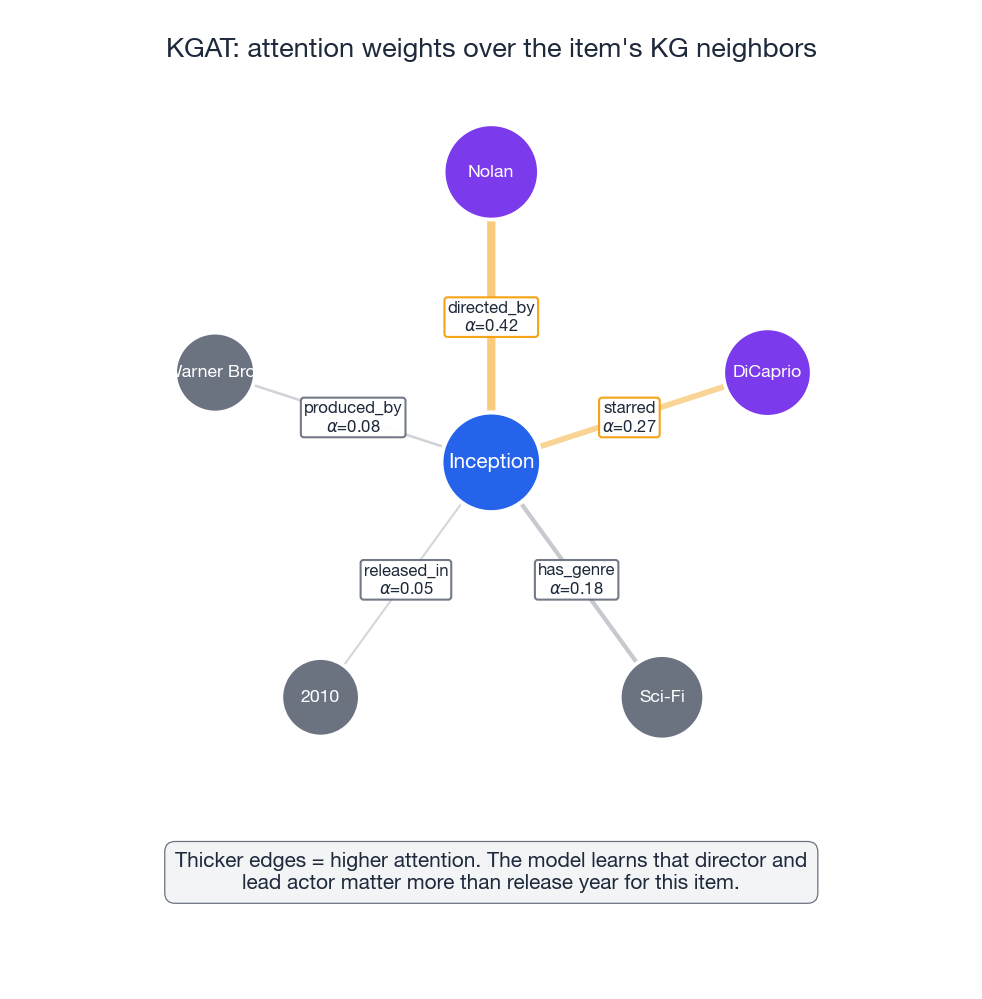

KGAT: Knowledge Graph Attention Network#

What KGAT Adds#

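KGAT (Wang et al., KDD 2019) merges the user-item interaction graph and the knowledge graph into a single Collaborative Knowledge Graph (CKG):

$$\mathcal{G}_\text{CKG} = \underbrace{\{(u, \text{interact}, i)\}}_{\text{user-item edges}} \;\cup\; \underbrace{\{(h, r, t)\}}_{\text{KG edges}}$$

Why this matters. In KGCN, users sit outside the graph: the user embedding only scores relations, while items aggregate their KG neighbors. In KGAT, users, items, and attributes are all nodes in the same graph, so collaborative signals and semantic signals propagate together.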

Attention Mechanism#

$$\pi(e, r, e') = \frac{\exp\!\bigl(\text{LeakyReLU}(\mathbf{a}^T [\mathbf{e}_e \,\|\, \mathbf{e}_r \,\|\, \mathbf{e}_{e'}])\bigr)}{\sum_{(r'', e'') \in \mathcal{N}(e)} \exp\!\bigl(\text{LeakyReLU}(\mathbf{a}^T [\mathbf{e}_e \,\|\, \mathbf{e}_{r''} \,\|\, \mathbf{e}_{e''}])\bigr)}$$

Plain English. “For each neighbor, concatenate the entity, relation, and neighbor embeddings, run them through a learned scoring function, and normalize with softmax. This tells the model which connections matter most.” The figure above visualizes this: edges to Nolan and DiCaprio are thick (high attention) while the edge to 2010 (release year) is thin.

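Each layer then updates an entity by concatenating its previous embedding with the aggregated neighborhood embedding:

$$\mathbf{e}_e^{(l)} = \sigma\!\bigl(\mathbf{W}^{(l)}[\mathbf{e}_e^{(l-1)} \,\|\, \mathbf{e}_{\mathcal{N}(e)}^{(l-1)}] + \mathbf{b}^{(l)}\bigr)$$

Multi-Head Attention#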

With $K$ attention heads, the per-head neighborhood aggregates are concatenated:

$$\mathbf{e}_{\mathcal{N}(e)} = \big\|_{k=1}^{K}\; \sum_{(r,e') \in \mathcal{N}(e)} \pi^{(k)}(e, r, e')\, \mathbf{e}_{e'}^{(k)}$$

Implementation: KGAT#
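The sketch below implements a single attention head in plain Python: score each neighbor with `LeakyReLU(a^T [e || e_r || e_n])`, normalize with softmax, and take the weighted sum of neighbor embeddings. It is a toy illustration with hand-picked vectors and hypothetical function names; the layer update and the multi-head concatenation are left out.

```python
import math

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def attention_weights(e, neighbors, a):
    """Softmax over LeakyReLU(a^T [e || e_r || e_n]) for each (e_r, e_n) neighbor."""
    logits = [leaky_relu(sum(w * x for w, x in zip(a, e + e_r + e_n)))
              for e_r, e_n in neighbors]
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [x / total for x in exps]

def aggregate(e, neighbors, a):
    """Attention-weighted sum of neighbor embeddings, e_N(e)."""
    pi = attention_weights(e, neighbors, a)
    return [sum(p * e_n[j] for p, (_, e_n) in zip(pi, neighbors))
            for j in range(len(e))]

# Toy setup: the entity is a film with two neighbors.
inception = [1.0, 0.0]
neighbors = [
    ([1.0, 0.0], [1.0, 1.0]),   # (directed_by, Nolan)
    ([0.0, 1.0], [0.0, 0.5]),   # (released_in, 2010)
]
# Assumed attention vector: it only looks at the relation part of the concatenation.
a = [0.0, 0.0, 1.0, -1.0, 0.0, 0.0]

pi = attention_weights(inception, neighbors, a)
e_n = aggregate(inception, neighbors, a)
print(pi, e_n)
```

With this attention vector the `directed_by` edge gets the larger weight, mirroring the thick and thin edges in the figure.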


CKE: Collaborative Knowledge Base Embedding#

The Multi-Signal Approach#

CKE (Zhang et al., KDD 2016) builds each item embedding as the sum of three components:

$$\mathbf{i} = \mathbf{i}_\text{CF} + \mathbf{i}_\text{KG} + \mathbf{i}_\text{text}$$

| Component | Source | Learning method |
|---|---|---|
| $\mathbf{i}_\text{CF}$ | User-item interactions | Matrix factorization |
| $\mathbf{i}_\text{KG}$ | Knowledge graph structure | TransR embedding |
| $\mathbf{i}_\text{text}$ | Item descriptions | Text encoder (e.g., a CNN; the original CKE paper uses a stacked denoising autoencoder) |

Why three signals? Each captures something the others miss:

- CF knows who likes what, but not why.

- KG knows semantic relationships, but not user behavior.

- Text captures nuanced descriptions that structured triples cannot express.

Joint Training#

$$\mathcal{L} = \mathcal{L}_\text{CF} + \lambda_1 \mathcal{L}_\text{KG} + \lambda_2 \mathcal{L}_\text{text} + \lambda_3 \mathcal{L}_\text{reg}$$

The CF loss is standard matrix factorization with $\hat{r}_{ui} = \mathbf{u}^T \mathbf{i}$. The KG loss uses TransR: push valid triples together and invalid triples apart.

Implementation: CKE#
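Here is a compact sketch of the CKE objective for a single training example, written in plain Python. It assumes a squared-error CF loss, a TransR margin loss with the row-vector convention $\mathbf{h} M_r$, and a text loss that is passed in as a precomputed number, since the text encoder is out of scope. The function names, the toy vectors, and the $\lambda$ values are all hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def vecmat(v, M):
    """Row vector times matrix: (v M)_j = sum_k v_k * M[k][j]."""
    return [sum(v[k] * M[k][j] for k in range(len(v))) for j in range(len(M[0]))]

def transr_distance(h, r, t, M_r):
    """|| h M_r + r - t M_r ||_2 in the relation-specific space."""
    hp, tp = vecmat(h, M_r), vecmat(t, M_r)
    return math.sqrt(sum((a + b - c) ** 2 for a, b, c in zip(hp, r, tp)))

def cke_loss(u, i_cf, i_kg, i_text, rating, kg_pos, kg_neg, text_loss,
             lam1=0.1, lam2=0.1, lam3=0.01, gamma=1.0):
    """L = L_CF + lam1 * L_KG + lam2 * L_text + lam3 * L_reg for one example."""
    item = [a + b + c for a, b, c in zip(i_cf, i_kg, i_text)]   # i = i_CF + i_KG + i_text
    l_cf = (rating - dot(u, item)) ** 2
    l_kg = max(0.0, gamma + transr_distance(*kg_pos) - transr_distance(*kg_neg))
    l_reg = dot(u, u) + dot(item, item)
    return l_cf + lam1 * l_kg + lam2 * text_loss + lam3 * l_reg

identity = [[1.0, 0.0], [0.0, 1.0]]
kg_pos = ([1.0, 0.0], [0.0, 1.0], [1.0, 1.0], identity)   # valid triple: h + r == t
kg_neg = ([1.0, 0.0], [0.0, 1.0], [0.0, 0.0], identity)   # corrupted tail

loss = cke_loss(
    u=[1.0, 0.0],
    i_cf=[0.5, 0.0], i_kg=[0.3, 0.1], i_text=[0.2, -0.1],
    rating=1.0, kg_pos=kg_pos, kg_neg=kg_neg, text_loss=0.5,
)
print(loss)
```

In this toy case the CF and KG terms are already zero, so the total comes only from the text and regularization terms.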


Path-Based Reasoning for Explainable Recommendations#

Why Paths Matter#

The methods above learn embeddings — dense vectors that are powerful but opaque. Path-based reasoning takes a different route: it finds concrete paths through the knowledge graph from a user’s history to a candidate item, and uses those paths as both features and explanations.

Example path. You rated The Dark Knight highly -> The Dark Knight directed_by Christopher Nolan -> Christopher Nolan directed Inception -> Recommend Inception.

This path is not just a feature for the model — it doubles as a human-readable explanation.

Implementation: Multi-Hop Path Reasoning#
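A minimal sketch of path enumeration on a toy KG follows. It is an illustration rather than the original code: `find_paths` does a depth-first search for simple paths up to a hop limit, and `explain` renders a path as a readable string. Both names, and the idea of using the raw path count as a support score, are assumptions.

```python
from collections import defaultdict

edges = defaultdict(list)
for h, r, t in [
    ("The Dark Knight", "directed_by", "Christopher Nolan"),
    ("Christopher Nolan", "directed", "Inception"),
    ("Christopher Nolan", "directed", "The Prestige"),
    ("The Dark Knight", "starred", "Christian Bale"),
    ("Christian Bale", "acted_in", "The Prestige"),
]:
    edges[h].append((r, t))

def find_paths(start, goal, max_hops):
    """Depth-first enumeration of simple paths from start to goal, at most max_hops edges."""
    results = []

    def walk(node, path, visited):
        if node == goal and path:
            results.append(list(path))
            return
        if len(path) == max_hops:
            return
        for r, t in edges.get(node, []):
            if t not in visited:
                path.append((node, r, t))
                walk(t, path, visited | {t})
                path.pop()

    walk(start, [], {start})
    return results

def explain(path):
    """Render a path as 'A -> relation -> B -> relation -> C'."""
    return " -> ".join([path[0][0]] + [f"{r} -> {t}" for _, r, t in path])

prestige_paths = find_paths("The Dark Knight", "The Prestige", max_hops=2)
inception_paths = find_paths("The Dark Knight", "Inception", max_hops=2)
for p in prestige_paths:
    print(explain(p))
```

The Prestige is supported by two paths and Inception by one, so a simple path-count score would rank The Prestige first. Learned models such as KPRN score each path with a neural network instead of counting.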


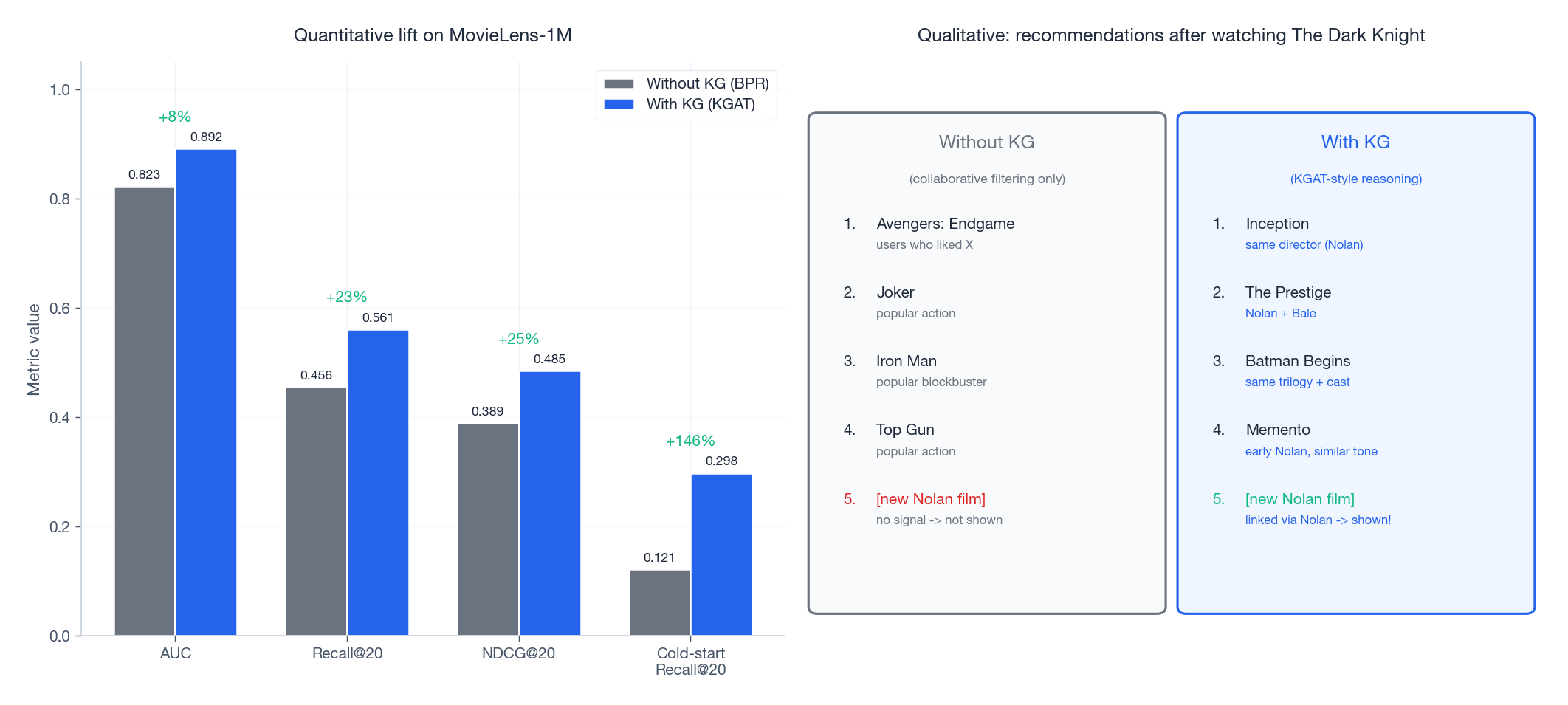

With KG vs Without KG: The Bottom Line#

The left panel shows KGAT delivering double-digit relative gains over a vanilla BPR baseline on every standard metric — and a much larger gain on cold-start Recall@20, which is exactly the regime where collaborative filtering struggles most.

The right panel makes the qualitative difference visceral. Without a KG, the model falls back on raw popularity and surfaces unrelated blockbusters; the brand-new Nolan film is invisible because no user has seen it yet. With a KG, the model surfaces films that are thematically connected — and the new Nolan release is recommended on day one because the graph already knows Nolan directed it.

Practical Tips#

Building a Knowledge Graph#

| Source | Type | Example |
|---|---|---|
| Wikidata / DBpedia | Public structured KGs | Movie metadata, company info |
| Product catalogs | Internal databases | Brand, category, specifications |
| NER + relation extraction | From unstructured text | Reviews, descriptions |

Entity Alignment#

Items in your recommendation system need to be linked to entities in the knowledge graph. Common approaches:

- Exact string matching — fast but brittle.

- Fuzzy matching — handles typos and abbreviations.

- Embedding similarity — learn embeddings on both sides and match nearest neighbors.

- Manual curation — highest quality but expensive.
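The first two approaches can be combined in a few lines using the standard library's `difflib`. This is a sketch under assumptions: the `normalize` and `align` helpers and the 0.85 cutoff are hypothetical choices, and a production system would also compare attributes such as release year to disambiguate titles.

```python
import difflib

def normalize(title):
    """Lowercase and strip punctuation so trivial differences do not block a match."""
    return "".join(ch for ch in title.lower() if ch.isalnum() or ch.isspace()).strip()

def align(item_title, kg_labels, cutoff=0.85):
    """Return the KG label that best matches item_title, or None if nothing clears the cutoff."""
    norm_to_label = {normalize(label): label for label in kg_labels}
    key = normalize(item_title)
    if key in norm_to_label:                       # exact match after normalization
        return norm_to_label[key]
    close = difflib.get_close_matches(key, list(norm_to_label), n=1, cutoff=cutoff)
    return norm_to_label[close[0]] if close else None

kg_labels = ["The Dark Knight", "Inception", "The Prestige"]
print(align("the dark knight!", kg_labels))   # exact after normalization
print(align("The Dark Knigth", kg_labels))    # typo, resolved by fuzzy matching
print(align("Finding Nemo", kg_labels))       # no plausible match
```

A high cutoff favors precision: unmatched items simply fall back to collaborative signals, which is usually safer than linking them to the wrong entity.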

Training Strategies#

| Strategy | When to use |
|---|---|
| Joint training | Enough data to train KG and rec objectives together |
| Pre-train + fine-tune | KG data is much larger than interaction data |
| Multi-task learning | You want shared representations across tasks |

Choosing the Number of Hops#

- 1 hop: direct attributes only. Fast but shallow.

- 2 hops: the sweet spot for most tasks. Captures “same director” or “same actor” patterns.

- 3 hops: richer paths but introduces noise. Use attention to filter.

- 4+ hops: rarely helpful. The signal-to-noise ratio drops sharply.
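One quick way to choose the hop count for your own graph is to count how many new entities each hop reaches from a seed item and then inspect what they are. The sketch below does this on a small hand-written KG, treating edges as undirected; `hop_sizes` is a hypothetical helper and the triples are illustrative.

```python
from collections import defaultdict

def hop_sizes(triples, seed, max_hops):
    """Number of newly reached entities at each hop, treating edges as undirected."""
    adj = defaultdict(set)
    for h, _, t in triples:
        adj[h].add(t)
        adj[t].add(h)
    seen, frontier, sizes = {seed}, {seed}, []
    for _ in range(max_hops):
        frontier = {n for e in frontier for n in adj[e]} - seen
        seen |= frontier
        sizes.append(len(frontier))
    return sizes

triples = [
    ("Dark Knight", "directed_by", "Nolan"),
    ("Dark Knight", "starred", "Bale"),
    ("Dark Knight", "has_genre", "Action"),
    ("Inception", "directed_by", "Nolan"),
    ("Prestige", "directed_by", "Nolan"),
    ("Prestige", "starred", "Bale"),
    ("Inception", "has_genre", "Action"),
    ("Mad Max", "has_genre", "Action"),
    ("Die Hard", "has_genre", "Action"),
    ("Inception", "starred", "DiCaprio"),
    ("Titanic", "starred", "DiCaprio"),
    ("Titanic", "has_genre", "Romance"),
    ("Mad Max", "starred", "Hardy"),
]

sizes = hop_sizes(triples, "Dark Knight", max_hops=4)
print(sizes)
```

Even in this tiny graph, hop 2 already mixes films linked through a specific director or actor with films linked only through the broad Action genre, and later hops drift to Titanic. On a real KG the per-hop counts grow much faster, which is why sampling and attention become necessary beyond two hops.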

Complete Training Pipeline#
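As a stand-in for a full pipeline, the sketch below wires the main stages together in plain Python: toy data, negative sampling, a pairwise BPR objective trained with SGD, and a Recall@K evaluation. It is deliberately small and makes several assumptions: an item is represented as its own embedding plus the mean of its KG attribute embeddings, there is no separate KG loss, and all data, hyperparameters, and names are hypothetical.

```python
import math
import random

rng = random.Random(0)
DIM, LR, EPOCHS = 8, 0.05, 50

# Hypothetical toy data: user -> liked items, item -> KG attribute ids.
train = {0: [0, 1], 1: [1, 2], 2: [3, 4]}
test = {0: 2, 1: 0, 2: 5}                      # one held-out item per user
item_attrs = {0: [0, 2], 1: [0, 2], 2: [0, 3], 3: [1, 4], 4: [1, 4], 5: [1, 3]}
n_users, n_items, n_attrs = 3, 6, 5

def init(n):
    return [[rng.gauss(0, 0.1) for _ in range(DIM)] for _ in range(n)]

U, I, A = init(n_users), init(n_items), init(n_attrs)

def item_vec(i):
    """Item representation: own embedding plus the mean of its KG attribute embeddings."""
    attrs = item_attrs[i]
    return [I[i][d] + sum(A[a][d] for a in attrs) / len(attrs) for d in range(DIM)]

def score(u, i):
    return sum(x * y for x, y in zip(U[u], item_vec(i)))

def train_epoch():
    total, steps = 0.0, 0
    for u, pos_items in train.items():
        for i in pos_items:
            j = rng.choice([x for x in range(n_items) if x not in pos_items])  # negative sample
            x = score(u, i) - score(u, j)
            total += math.log(1.0 + math.exp(-x))      # BPR loss: -log sigmoid(x)
            g = 1.0 / (1.0 + math.exp(x))              # magnitude of d(loss)/dx
            vi, vj, uu = item_vec(i), item_vec(j), list(U[u])
            for d in range(DIM):
                U[u][d] += LR * g * (vi[d] - vj[d])
                I[i][d] += LR * g * uu[d]
                I[j][d] -= LR * g * uu[d]
                for a in item_attrs[i]:
                    A[a][d] += LR * g * uu[d] / len(item_attrs[i])
                for a in item_attrs[j]:
                    A[a][d] -= LR * g * uu[d] / len(item_attrs[j])
            steps += 1
    return total / steps

def recall_at_k(k):
    hits = 0
    for u, held_out in test.items():
        candidates = [i for i in range(n_items) if i not in train[u]]
        ranked = sorted(candidates, key=lambda i: score(u, i), reverse=True)
        hits += held_out in ranked[:k]
    return hits / len(test)

losses = [train_epoch() for _ in range(EPOCHS)]
recall = recall_at_k(2)
print(f"loss {losses[0]:.3f} -> {losses[-1]:.3f}, recall@2 = {recall:.2f}")
```

Because attribute embeddings are shared across items, every update to one film also moves the films that share its director or genre, which is the mechanism that lets KG-aware models generalize to sparsely observed items. A real pipeline would add mini-batching, a KG loss term, a validation split, and early stopping.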


FAQ#

How do knowledge graphs help with the cold-start problem?#

Even when a new item has zero interactions, it still has attributes in the knowledge graph (genre, director, cast). These attributes connect it to other items that do have interaction history. A user who loved Nolan’s films can receive a recommendation for his latest movie on release day, purely through KG connections.

RippleNet vs. KGCN — what is the difference?#

RippleNet is user-centric: it starts from the user’s history and ripples outward through the KG. KGCN is item-centric: it builds enriched item representations by aggregating from each item’s KG neighborhood. In practice, KGCN tends to scale better: item neighborhoods are sampled from the KG and shared by all users, whereas RippleNet must build and store a ripple set per user and refresh it whenever that user’s history changes.

When should I use KGAT over KGCN?#

Use KGAT when you want to model the interaction between collaborative signals and semantic signals in a unified graph. KGAT’s Collaborative Knowledge Graph merges user-item edges with KG edges, so attention can learn across both types. KGCN keeps them separate.

How does CKE compare to graph-based methods?#

CKE is simpler and faster: it learns KG embeddings with a TransR objective and adds them to the CF and text embeddings, with no message passing over the graph. Graph-based methods (KGCN, KGAT) are more expressive because they propagate information across multiple hops, but they are also more expensive to train and serve. Start with CKE for a quick baseline, then move to KGAT if you need better accuracy.

Can knowledge graphs improve recommendation diversity?#

Yes. Different relation types lead to different kinds of connections. Following same_director gives different results than same_genre or same_actor. By exploring multiple relation paths, the system naturally surfaces diverse recommendations instead of creating a filter bubble.

How do I handle noisy or incomplete knowledge graphs?#

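- Attention mechanisms (KGAT) can learn to down-weight noisy connections.

- Margin-based embeddings such as TransR degrade gracefully: each inconsistent triple adds only one term to the loss, although such triples are not filtered out explicitly.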

- Data augmentation through relation inference: predict missing edges.

- Multi-task learning shares information across tasks, making the model more robust to missing data.

What are the computational bottlenecks?#

The main bottleneck is neighbor aggregation on large KGs (millions of entities). Solutions:

- Neighbor sampling — limit to $K$ neighbors per node.

- Hierarchical aggregation — aggregate attributes first, then items.

- Mini-batch training with subgraph sampling.

- Pre-computation of KG embeddings (CKE approach).

Can knowledge graphs provide explainable recommendations?#

This is one of their biggest strengths. Path-based methods generate explanations like: “We recommend Movie X because it shares director Y with Movie Z, which you rated highly.” These explanations are concrete, human-readable, and grounded in factual relationships — much better than “users similar to you also liked this.”

What is the latest in KG-enhanced recommendation?#

Recent trends include:

- Temporal KGs that model how preferences and facts evolve over time.

- Multi-modal KGs combining structured knowledge with images and text.

- Transformer-based KG methods that replace GCN aggregation with self-attention.

- Pre-trained KG embeddings from large-scale foundation models.

- LLM + KG hybrids that use language models to extract from and reason over knowledge graphs.

Summary#

- Knowledge graphs add semantic understanding to recommendation systems, going beyond pure collaborative signals.

- Cold start is the killer app: KGs enable recommendations for items with zero interaction history.

- RippleNet propagates user preferences outward through the KG like ripples in water.

- KGCN and KGAT build enriched item representations by aggregating from KG neighborhoods.

- CKE combines three signal types (collaborative, structural, textual) into a unified item embedding.

- Path-based reasoning provides explainability — concrete, human-readable reasons for each recommendation.

- 2 hops is the sweet spot for most KG-enhanced methods; more hops introduce noise.
