Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

From DIN's target attention to DIEN's AUGRU and BST's Transformer — how Alibaba taught CTR models to read a user's history like a chef reads the room.

A good chef doesn’t cook the same dish for every guest. She watches you walk in, notes the wine you order, and glances at how you eye the chalkboard — then decides whether tonight’s special should be the steak or the risotto. Your past visits matter, but only the parts that fit this mood.

A recommendation model used to be a worse chef. It would take everything the user had ever clicked, average it into a single vector, and serve the same dish to everyone in the room. The vintage leather jacket you viewed last week and the random phone charger you clicked six months ago carried equal weight, regardless of what you’re looking at now.

Deep Interest Networks (DIN) taught the model to read the room. The idea is unreasonably simple: when scoring a candidate item, weight each past behavior by how relevant it is to that candidate. The same user gets a different representation for every item — exactly as a chef cooks a different dish for every mood.

This article walks through the family of attention-based CTR models that grew from that insight: DIN (target attention), DIEN (interest evolution with GRU + AUGRU), DSIN (session-aware), and BST (Transformer over behaviors). We’ll keep the math honest, the code runnable, and the intuition sharp.

What You Will Learn

- Why averaging user history loses critical information, and how attention fixes it

- DIN — target attention with a Local Activation Unit

- DIEN — modeling interest evolution with GRU + AUGRU + auxiliary loss

- DSIN — capturing session-level browsing patterns

- BST — Transformer over the behavior sequence + candidate

- Production tricks: Dice activation, mini-batch aware regularization, sequence truncation

Prerequisites

- PyTorch basics (modules, forward pass, loss computation)

- Embeddings (Part 5)

- Familiarity with RNN/GRU concepts (helpful but not required)

From averaging to attention

The problem with averaging

Consider a user who has clicked five action movies, three rom-coms, two documentaries, and one horror film. When scoring a new action movie, those five action clicks should dominate. A simple average treats all eleven clicks equally, so the rom-coms, the documentaries, and even the lone horror outlier pull the user’s representation away from the very thing you’re recommending.

Average pooling computes the user vector as

$$\mathbf{v}_u = \frac{1}{T} \sum_{j=1}^{T} \mathbf{e}_{b_j}$$

where $\mathbf{e}_{b_j}$ is the embedding of behavior $b_j$ and $T$ is the number of behaviors. The vector ignores the candidate entirely. Whether you’re scoring an action movie or a documentary, the user looks the same.

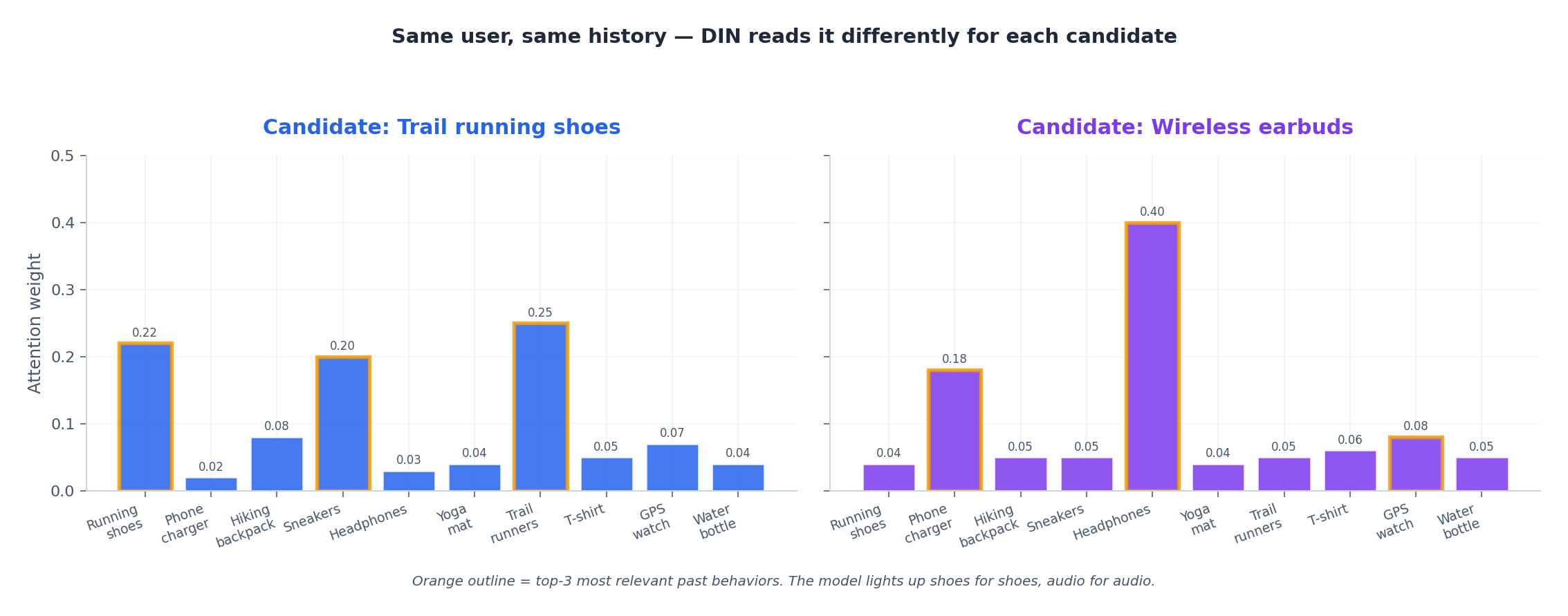

The attention fix

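Attention replaces the uniform $1/T$ with a weight that depends on the candidate item $i$:

$$\mathbf{v}_u(i) = \sum_{j=1}^{T} a(\mathbf{e}_{b_j}, \mathbf{e}_i)\, \mathbf{e}_{b_j}$$

where $a(\cdot, \cdot)$ scores how relevant behavior $b_j$ is to candidate $i$. Now $\mathbf{v}_u(i)$ depends on $i$. Score an action movie and the action clicks light up. Score a rom-com and the rom-com clicks take over. Same history, different reading. The figure above shows exactly this: ten clicks from one user, two candidates, two completely different attention profiles. The model didn’t change. The question did.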
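The sketch below shows the contrast. It assumes hand-picked 2-D toy embeddings and plain dot-product scores with a softmax. DIN itself scores with an MLP and drops the softmax, as discussed below. The helper name `target_attention` is our own, not a library function.

```python
import torch

# Toy 2-D embeddings (hypothetical): axis 0 ~ "action", axis 1 ~ "rom-com".
history = torch.tensor([
    [1.0, 0.0], [0.9, 0.1], [1.0, 0.2],   # three action clicks
    [0.1, 1.0], [0.0, 0.9],               # two rom-com clicks
])
action_movie = torch.tensor([1.0, 0.0])
romcom = torch.tensor([0.0, 1.0])

# Average pooling: one vector, identical for every candidate.
v_avg = history.mean(dim=0)


def target_attention(history: torch.Tensor, candidate: torch.Tensor) -> torch.Tensor:
    """Weight each behavior by its softmax-normalized dot product with the candidate."""
    scores = history @ candidate              # (T,) relevance of each behavior
    weights = torch.softmax(scores, dim=0)    # (T,) sums to 1
    return weights @ history                  # (d,) candidate-specific user vector


v_action = target_attention(history, action_movie)  # leans toward the action clicks
v_romcom = target_attention(history, romcom)        # leans toward the rom-com clicks
```

`v_avg` is the same whichever candidate you score. `v_action` and `v_romcom` move toward the clicks that match their candidate.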

Choosing a scoring function

Three common choices, in order of expressiveness:

- Dot product: $\text{score}(\mathbf{q}, \mathbf{k}) = \mathbf{q}^\top \mathbf{k}$. Cheap. Limited.

- Scaled dot product: $\mathbf{q}^\top \mathbf{k} / \sqrt{d}$, where $d$ is the embedding dimension. The scaling keeps magnitudes stable. Used in Transformers.

- Additive (MLP): $\mathbf{v}^\top \tanh(\mathbf{W}_q \mathbf{q} + \mathbf{W}_k \mathbf{k} + \mathbf{b})$. Most expressive. DIN’s choice.
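Here are the three scoring functions side by side, as a sketch on random toy tensors. `q` plays the candidate (query) and each row of `k` is one behavior (key). The hidden size of 16 for the additive scorer is an arbitrary assumption.

```python
import math

import torch
import torch.nn as nn

torch.manual_seed(0)
d, T, hidden = 8, 5, 16
q = torch.randn(d)       # query: the candidate item embedding
k = torch.randn(T, d)    # keys: T behavior embeddings


def dot_score(q: torch.Tensor, k: torch.Tensor) -> torch.Tensor:
    return k @ q                                   # (T,)


def scaled_dot_score(q: torch.Tensor, k: torch.Tensor) -> torch.Tensor:
    return (k @ q) / math.sqrt(q.shape[-1])        # (T,)


class AdditiveScore(nn.Module):
    """v^T tanh(W_q q + W_k k + b); the bias b lives inside W_k."""

    def __init__(self, d: int, hidden: int):
        super().__init__()
        self.W_q = nn.Linear(d, hidden, bias=False)
        self.W_k = nn.Linear(d, hidden)
        self.v = nn.Linear(hidden, 1, bias=False)

    def forward(self, q: torch.Tensor, k: torch.Tensor) -> torch.Tensor:
        # W_q(q) is (hidden,) and broadcasts across the T rows of W_k(k)
        return self.v(torch.tanh(self.W_q(q) + self.W_k(k))).squeeze(-1)


scores = {
    "dot": dot_score(q, k),
    "scaled": scaled_dot_score(q, k),
    "additive": AdditiveScore(d, hidden)(q, k),
}
```

All three produce one score per behavior. Only the additive form has learnable parameters, which is where its extra expressiveness comes from.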

DIN goes further still — instead of just concatenating $\mathbf{q}$ and $\mathbf{k}$ , it feeds the MLP four things: query, key, query−key, and query⊙key. Subtraction captures difference, element-wise product captures interaction. The MLP learns a non-linear compatibility function over all four.

Deep Interest Network (DIN)

DIN was introduced by Alibaba in 2018 (Zhou et al., KDD'18) and remains the foundational attention-based CTR model. Its workhorse is the Local Activation Unit — a small MLP that scores each historical behavior against the candidate.

How DIN works

Given a user’s behavior sequence $[b_1, b_2, \ldots, b_T]$ and a candidate item $i$:

- Embed behaviors, candidate, user features, context.

- Score every behavior against the candidate via the activation unit.

- Weighted sum of behavior embeddings → the “activated” user representation.

- Concatenate with other features and pass through an MLP for CTR prediction.

A subtle but important detail: DIN does not apply softmax in the original paper. The authors found that letting weights sum to anything (not just 1) preserves the intensity of interest — a user with many strong matches should produce a larger user vector than a user with weak matches. We’ll show both forms in the code.

Implementation

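Below is a minimal PyTorch sketch of the forward pass above. It is not Alibaba’s implementation. Assumptions:

- Item IDs are the only features; user profile and context features are omitted.
- Id 0 is padding.
- PReLU stands in for Dice.
- The layer sizes are illustrative.

The `use_softmax` flag switches between the normalized form and the paper’s unnormalized weights. The class names `LocalActivationUnit` and `DIN` are our own.

```python
import torch
import torch.nn as nn


class LocalActivationUnit(nn.Module):
    """Scores each behavior against the candidate with an MLP over
    [q, k, q - k, q * k]. PReLU stands in for Dice (an assumption)."""

    def __init__(self, d: int, hidden: int = 36):
        super().__init__()
        self.mlp = nn.Sequential(nn.Linear(4 * d, hidden), nn.PReLU(), nn.Linear(hidden, 1))

    def forward(self, cand: torch.Tensor, behaviors: torch.Tensor) -> torch.Tensor:
        # cand: (B, d), behaviors: (B, T, d) -> scores: (B, T)
        q = cand.unsqueeze(1).expand_as(behaviors)
        x = torch.cat([q, behaviors, q - behaviors, q * behaviors], dim=-1)
        return self.mlp(x).squeeze(-1)


class DIN(nn.Module):
    """Item-ID-only DIN sketch; user profile and context features are omitted."""

    def __init__(self, n_items: int, d: int = 32, use_softmax: bool = False):
        super().__init__()
        self.item_emb = nn.Embedding(n_items, d, padding_idx=0)  # id 0 = padding
        self.att = LocalActivationUnit(d)
        self.use_softmax = use_softmax
        self.mlp = nn.Sequential(
            nn.Linear(2 * d, 80), nn.PReLU(),
            nn.Linear(80, 40), nn.PReLU(),
            nn.Linear(40, 1),
        )

    def forward(self, hist_ids: torch.Tensor, cand_ids: torch.Tensor) -> torch.Tensor:
        # hist_ids: (B, T) right-padded with 0; cand_ids: (B,)
        mask = hist_ids != 0                              # (B, T) real behaviors
        beh = self.item_emb(hist_ids)                     # (B, T, d)
        cand = self.item_emb(cand_ids)                    # (B, d)
        scores = self.att(cand, beh)                      # (B, T)
        if self.use_softmax:
            # Normalized form: weights sum to 1 over real behaviors
            w = torch.softmax(scores.masked_fill(~mask, -1e9), dim=-1)
        else:
            # Paper's form: raw scores with padding zeroed, so intensity survives
            w = scores * mask.float()
        user = torch.bmm(w.unsqueeze(1), beh).squeeze(1)  # (B, d) activated user vector
        return self.mlp(torch.cat([user, cand], dim=-1)).squeeze(-1)  # logits (B,)


torch.manual_seed(0)
hist = torch.tensor([[5, 17, 42, 0, 0], [3, 3, 8, 9, 11]])
cand = torch.tensor([42, 7])
logits = DIN(n_items=1000)(hist, cand)                          # shape (2,)
logits_softmax = DIN(n_items=1000, use_softmax=True)(hist, cand)
```

With `use_softmax=False`, a history full of strong matches yields a larger user vector than a history with one weak match. That magnitude is the intensity signal the paper wanted to keep.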

Training and the Alibaba production tricks

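Training minimizes the usual log loss over $N$ examples, where $\hat{y}_i$ is the model’s output logit and $y_i \in \{0, 1\}$ is the click label:

$$\mathcal{L} = -\frac{1}{N} \sum_{i=1}^{N} \big[ y_i \log \sigma(\hat{y}_i) + (1 - y_i) \log(1 - \sigma(\hat{y}_i)) \big]$$

Three tricks matter in training. The first two come from the DIN paper; gradient clipping is common practice on top: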

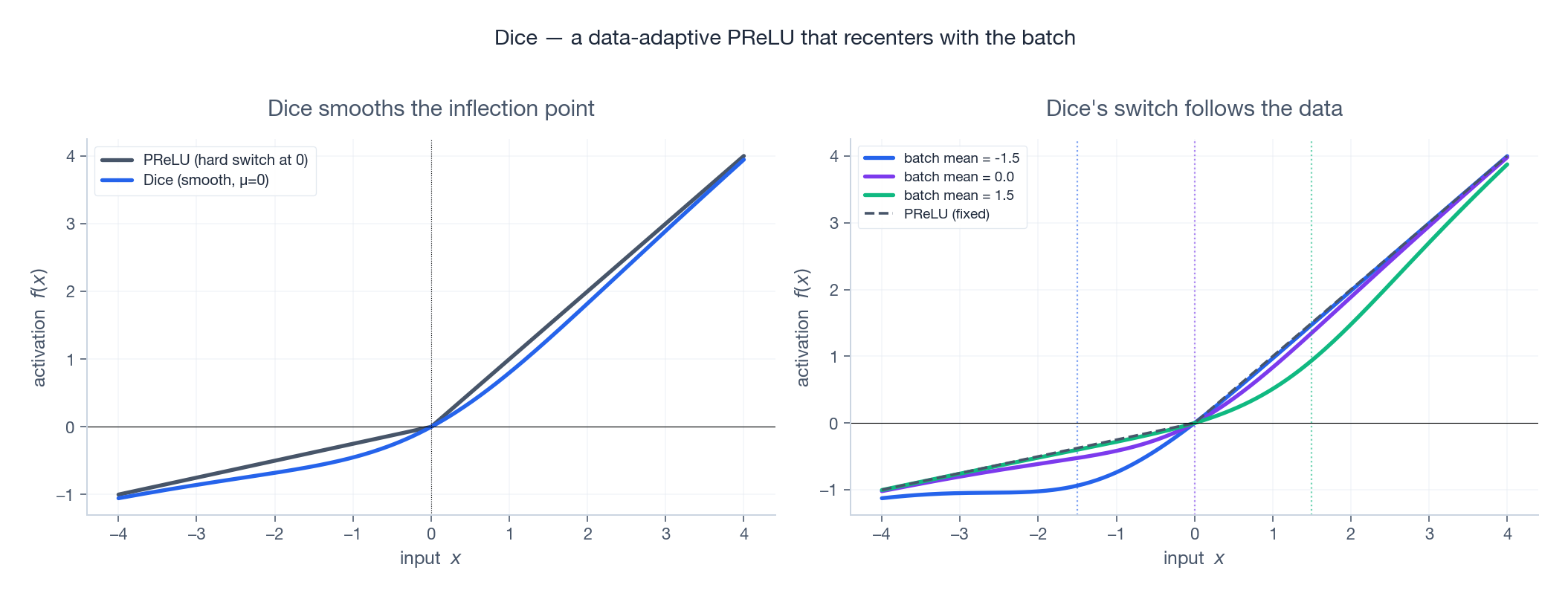

- Dice activation: a data-adaptive PReLU that shifts its inflection point with the batch distribution (covered under the production tricks below).

- Mini-batch aware regularization — instead of L2-regularizing every embedding (millions of items, mostly never seen this batch), only regularize embeddings that appear in the current batch, weighted by their frequency. Roughly the same regularization signal at a fraction of the cost.

- Gradient clipping — long behavior sequences tend to explode gradients early in training.
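Here is a minimal training-step sketch for the log loss above, with gradient clipping. `TinyCTR` is a hypothetical stand-in model (mean-pooled history plus candidate) so the block runs on its own. Any model that returns logits, such as a DIN module, slots in the same way. `BCEWithLogitsLoss` fuses the sigmoid and the log loss for numerical stability. The clip norm of 5.0 and the learning rate are assumptions, not values from the paper.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)


class TinyCTR(nn.Module):
    """Hypothetical stand-in model: mean-pooled history + candidate -> one logit."""

    def __init__(self, n_items: int = 100, d: int = 16):
        super().__init__()
        self.emb = nn.Embedding(n_items, d, padding_idx=0)
        self.out = nn.Linear(2 * d, 1)

    def forward(self, hist: torch.Tensor, cand: torch.Tensor) -> torch.Tensor:
        x = torch.cat([self.emb(hist).mean(dim=1), self.emb(cand)], dim=-1)
        return self.out(x).squeeze(-1)                 # logits, shape (B,)


model = TinyCTR()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)
loss_fn = nn.BCEWithLogitsLoss()                       # sigmoid + log loss in one stable op


def train_step(hist, cand, y, max_norm: float = 5.0) -> float:
    optimizer.zero_grad()
    loss = loss_fn(model(hist, cand), y.float())
    loss.backward()
    # Rescale gradients whose global norm exceeds max_norm (guards early blow-ups)
    torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm)
    optimizer.step()
    return loss.item()


hist = torch.randint(1, 100, (32, 20))                 # toy batch: 32 users, 20 behaviors
cand = torch.randint(1, 100, (32,))
y = torch.randint(0, 2, (32,))
losses = [train_step(hist, cand, y) for _ in range(50)]  # loss falls on this fixed batch
```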

Deep Interest Evolution Network (DIEN)

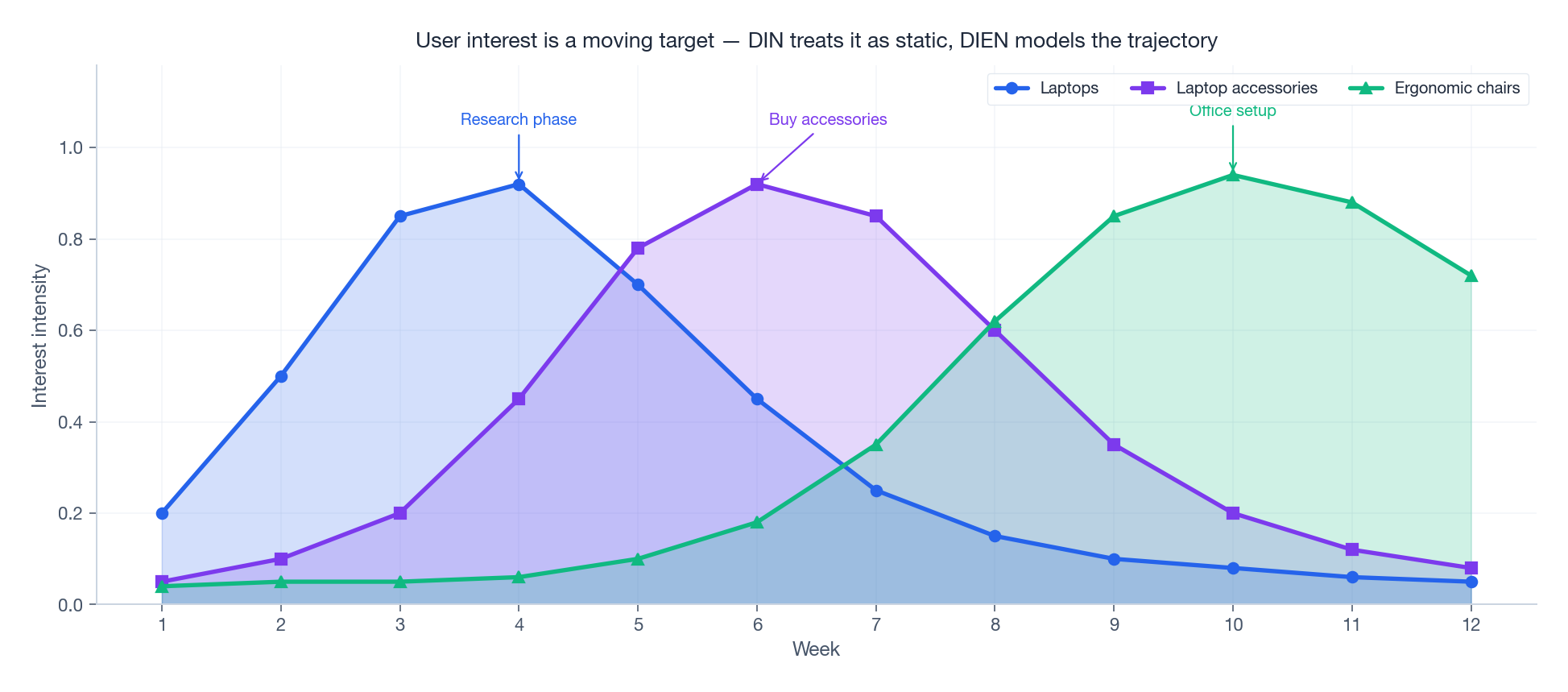

DIN treats history as a bag of behaviors. It ignores time. But interests move — last month you were researching laptops, this week you’re chasing laptop accessories, next week the obsession shifts to ergonomic chairs.

The figure tells the story DIN cannot. A user’s “interests” aren’t a single vector — they are a time series, with peaks that crest and recede in a predictable order. DIN sees the union of all peaks at once. DIEN (Zhou et al., AAAI'19) adds two layers on top of behavior embeddings to capture the trajectory.

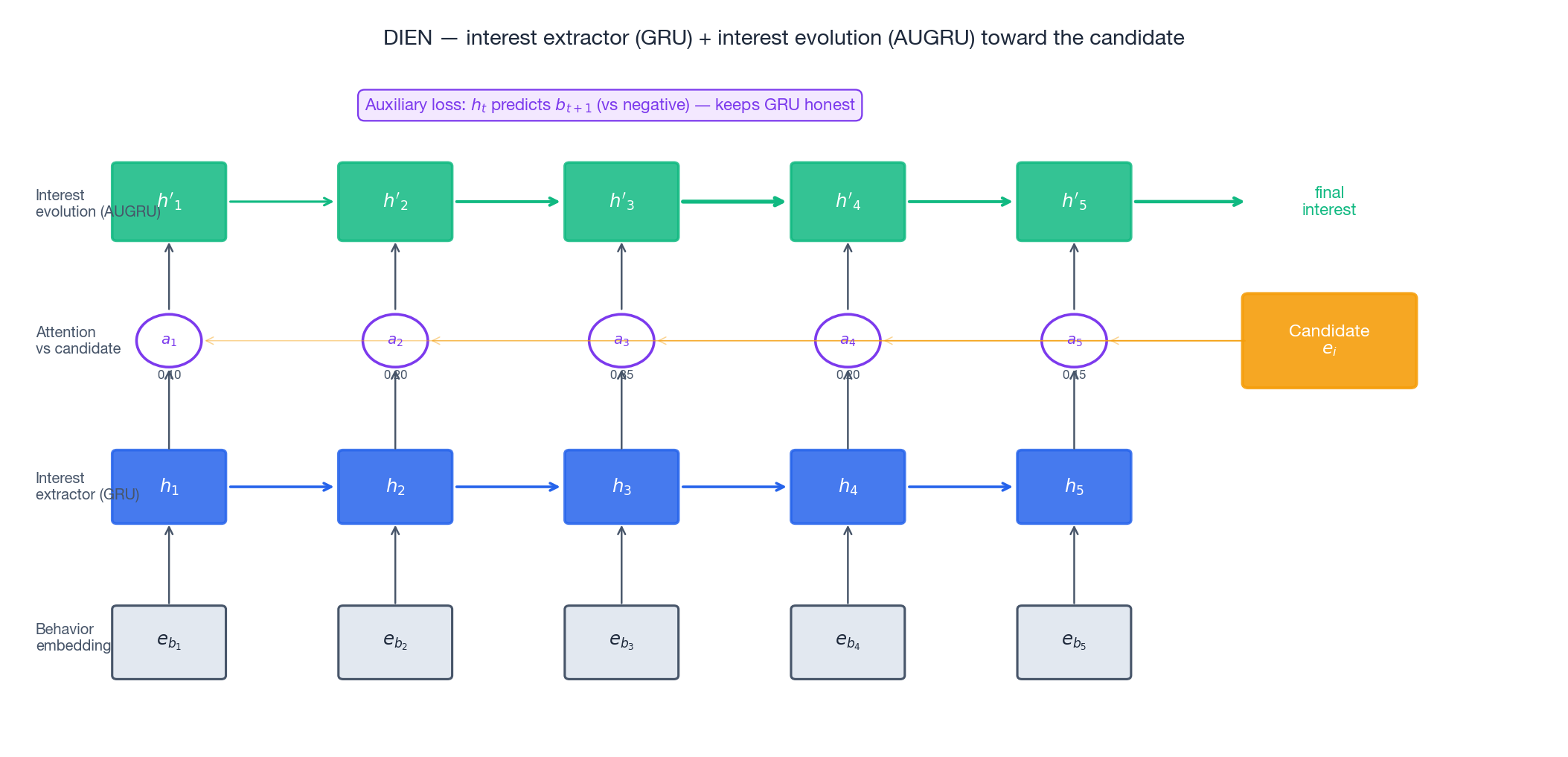

Architecture in one picture

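DIEN stacks two layers on the behavior embeddings. The interest extractor layer runs a GRU over the sequence; each hidden state $\mathbf{h}_t$ is the user’s interest at time $t$. The interest evolving layer then runs an AUGRU (GRU with attentional update gate) over those states. It scales the GRU update gate $u_t$ by $a_t$, the attention score between $\mathbf{h}_t$ and the candidate item: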

$$\tilde{u}_t = a_t \cdot u_t \qquad \mathbf{h}'_t = (1 - \tilde{u}_t) \odot \mathbf{h}'_{t-1} + \tilde{u}_t \odot \tilde{\mathbf{h}}_t$$

Here $\tilde{\mathbf{h}}_t$ is the GRU’s candidate state and $\mathbf{h}'_t$ is the evolved interest. Read it as: when a past interest is highly relevant to the candidate, let it drive the evolution. When it’s irrelevant, freeze the state; don’t let noise wash out the signal. The arrows in the figure are drawn with thickness proportional to $a_t$. Thick arrows pump information forward; thin arrows leave the previous state mostly unchanged.

The auxiliary loss trick

DIEN adds a second loss that supervises the extractor’s hidden states directly:

$$\mathcal{L}_{\text{aux}} = -\frac{1}{T-1}\sum_{t=1}^{T-1} \Big[ \log \sigma(\mathbf{h}_t^\top \mathbf{e}_{b_{t+1}}^+) + \log\big(1 - \sigma(\mathbf{h}_t^\top \mathbf{e}_{b_{t+1}}^-)\big)\Big]$$

Plain English: suppose the hidden state at time $t$ can predict what the user clicks at time $t+1$ (the positive sample $\mathbf{e}_{b_{t+1}}^+$). Suppose it also fails to predict a randomly sampled negative $\mathbf{e}_{b_{t+1}}^-$. Then it has captured something real.

The total objective is $\mathcal{L} = \mathcal{L}_{\text{ctr}} + \lambda \cdot \mathcal{L}_{\text{aux}}$, with $\lambda$ typically in $[0.1, 1.0]$.
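Here is a sketch of the auxiliary loss. It assumes negatives have already been sampled (for example, random catalog items) and aligned position by position with the positives. For numerical stability it uses two identities:

- $\log\sigma(x) = -\mathrm{softplus}(-x)$
- $\log(1-\sigma(x)) = -\mathrm{softplus}(x)$

It averages over real (unpadded) steps rather than dividing by a fixed $T-1$. The function name `dien_aux_loss` is hypothetical.

```python
import torch
import torch.nn.functional as F


def dien_aux_loss(h, pos_emb, neg_emb, mask):
    """h:       (B, T, d) interest-extractor states h_1..h_T
    pos_emb: (B, T, d) embeddings of the behaviors actually clicked
    neg_emb: (B, T, d) embeddings of sampled negatives, same positions
    mask:    (B, T) bool, True where the behavior is real
    Pairs h_t with behavior t+1 for t = 1..T-1."""
    h_t = h[:, :-1]                                # (B, T-1, d)
    pos = (h_t * pos_emb[:, 1:]).sum(-1)           # h_t^T e^+_{t+1}
    neg = (h_t * neg_emb[:, 1:]).sum(-1)           # h_t^T e^-_{t+1}
    m = mask[:, 1:].float()
    # -[log sigma(pos) + log(1 - sigma(neg))] = softplus(-pos) + softplus(neg)
    per_step = F.softplus(-pos) + F.softplus(neg)
    return (per_step * m).sum() / m.sum().clamp(min=1.0)


B, T, d = 2, 4, 3
mask = torch.ones(B, T, dtype=torch.bool)
e_pos = torch.full((B, T, d), 2.0)
uninformed = dien_aux_loss(torch.zeros(B, T, d), e_pos, -e_pos, mask)  # 2*log(2)
aligned = dien_aux_loss(e_pos, e_pos, -e_pos, mask)                    # close to 0
```

A state that carries no information pays $2\log 2$ per step. A state aligned with the next click pays almost nothing. In training, the total objective becomes `ctr_loss + lam * dien_aux_loss(h, pos, neg, mask)`, with `h` taken from the interest extractor GRU.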

AUGRU implementation

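Here is a minimal sketch of the interest extractor GRU feeding an AUGRU, written to match the update equations above. Assumptions:

- The attention score is a bilinear form $\mathbf{h}_t^\top \mathbf{W} \mathbf{e}_i$, softmaxed over time.
- Padded steps keep the previous state.
- All sizes are toy values.

PyTorch’s built-in `nn.GRU` writes its update as $(1 - z)\odot n + z \odot h$, the mirror image of the convention used here. That is why the cell is written by hand.

```python
import torch
import torch.nn as nn


class AUGRUCell(nn.Module):
    """GRU cell whose update gate u_t is scaled by an attention score a_t."""

    def __init__(self, input_size: int, hidden_size: int):
        super().__init__()
        self.x2h = nn.Linear(input_size, 3 * hidden_size)
        self.h2h = nn.Linear(hidden_size, 3 * hidden_size)

    def forward(self, x, h_prev, a):
        # x: (B, input_size), h_prev: (B, hidden_size), a: (B, 1)
        xu, xr, xn = self.x2h(x).chunk(3, dim=-1)
        hu, hr, hn = self.h2h(h_prev).chunk(3, dim=-1)
        u = torch.sigmoid(xu + hu)              # update gate u_t
        r = torch.sigmoid(xr + hr)              # reset gate
        h_tilde = torch.tanh(xn + r * hn)       # candidate state
        u_att = a * u                           # attentional update gate a_t * u_t
        return (1 - u_att) * h_prev + u_att * h_tilde


class AUGRU(nn.Module):
    def __init__(self, input_size: int, hidden_size: int):
        super().__init__()
        self.cell = AUGRUCell(input_size, hidden_size)
        self.hidden_size = hidden_size

    def forward(self, x, att, mask):
        # x: (B, T, input_size), att: (B, T), mask: (B, T) bool
        h = x.new_zeros(x.size(0), self.hidden_size)
        for t in range(x.size(1)):              # the per-timestep loop
            h_new = self.cell(x[:, t], h, att[:, t:t + 1])
            m = mask[:, t:t + 1].float()
            h = m * h_new + (1 - m) * h         # padded steps keep the old state
        return h                                # final evolved interest


torch.manual_seed(0)
B, T, d = 2, 6, 8
behaviors = torch.randn(B, T, d)
candidate = torch.randn(B, d)
extractor = nn.GRU(d, d, batch_first=True)      # interest extractor layer
h, _ = extractor(behaviors)                     # (B, T, d): h_1 .. h_T
W = nn.Linear(d, d, bias=False)
att = torch.softmax((h * W(candidate).unsqueeze(1)).sum(-1), dim=-1)  # a_t over t
mask = torch.ones(B, T, dtype=torch.bool)
augru = AUGRU(d, d)
final = augru(h, att, mask)                     # (B, d)
frozen = augru(h, torch.zeros(B, T), mask)      # a_t = 0: the state never moves
```

The `frozen` call shows the gating at its extreme. With zero attention, the state never leaves its initial value, which is the "freeze the state" behavior described above.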

In production, the per-timestep Python loop is replaced with a custom CUDA kernel — but conceptually this is what AUGRU does.

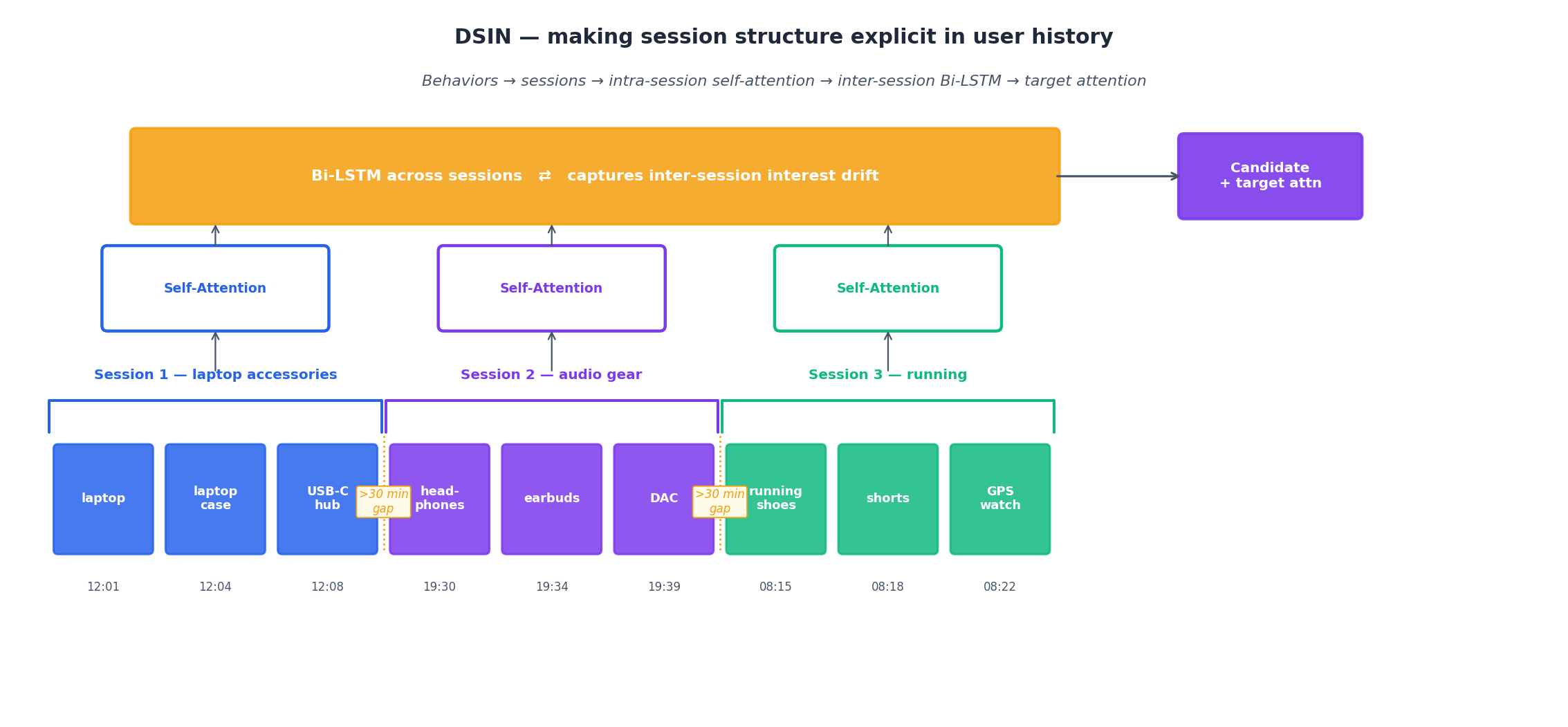

Deep Session Interest Network (DSIN)

User behavior tends to come in bursts. You spend fifteen minutes browsing laptops at lunch, come back at night to skim headphones, then look at running shoes the next morning. Each burst is internally coherent; the gaps between them often mark a shift in mood.

DSIN (Feng et al., IJCAI'19) makes that structure explicit. The figure traces the full pipeline on nine actions split across three sessions:

- Session split — break the behavior sequence whenever the gap exceeds 30 minutes (the original paper’s threshold).

- Intra-session self-attention — within each session, multi-head self-attention captures the local pattern (which items in this burst relate to which).

- Inter-session Bi-LSTM — across sessions, a Bi-LSTM models how interest drifts from one session to the next.

- Target attention — finally, attention over session vectors weights them by relevance to the candidate.
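The session-split step is simple enough to sketch in plain Python. It assumes events arrive as `(timestamp_in_seconds, item_id)` pairs already sorted by time. `split_sessions` is a hypothetical helper name; the 30-minute gap follows the threshold above.

```python
from typing import List, Optional, Tuple

SESSION_GAP_SECONDS = 30 * 60  # 30-minute threshold


def split_sessions(events: List[Tuple[int, int]],
                   gap: int = SESSION_GAP_SECONDS) -> List[List[int]]:
    """Group time-sorted (timestamp, item_id) events into sessions of item ids."""
    sessions: List[List[int]] = []
    last_ts: Optional[int] = None
    for ts, item in events:
        if last_ts is None or ts - last_ts > gap:
            sessions.append([])          # gap exceeded (or first event): new session
        sessions[-1].append(item)
        last_ts = ts
    return sessions


events = [(0, 101), (120, 102), (600, 103),   # lunch: laptops
          (30000, 201), (30300, 202),         # evening: headphones
          (80000, 301)]                       # next morning: running shoes
result = split_sessions(events)               # [[101, 102, 103], [201, 202], [301]]
```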

The intuition: a session is the model’s “thought unit.” Treating thirty clicks as one undifferentiated bag throws away the fact that they came in three coherent chunks. Treating each click as its own time step ignores that clicks within a session are usually close in topic. Sessions are the right granularity in the middle.

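Here is a simplified DSIN sketch in PyTorch. Assumptions:

- Sessions arrive already split and padded to a fixed `(K, L)` shape.
- Item IDs are the only features.
- Each session is pooled by a masked mean after self-attention.
- The paper’s bias encoding and any user-profile features are left out.

Target attention is applied separately to the session vectors and to the Bi-LSTM states, and both results are concatenated with the candidate. Padded sessions still pass through the Bi-LSTM here. Packing them with `pack_padded_sequence` would be cleaner in a real model.

```python
import torch
import torch.nn as nn


class DSINSketch(nn.Module):
    """Simplified DSIN over pre-split sessions of shape (K sessions, L items).
    Omits bias encoding; pools each session by a masked mean."""

    def __init__(self, n_items: int, d: int = 32, heads: int = 4):
        super().__init__()
        self.emb = nn.Embedding(n_items, d, padding_idx=0)           # id 0 = padding
        self.self_att = nn.MultiheadAttention(d, heads, batch_first=True)
        self.bilstm = nn.LSTM(d, d // 2, batch_first=True, bidirectional=True)
        self.att_sess = nn.Linear(d, d, bias=False)   # target attention over session vectors
        self.att_lstm = nn.Linear(d, d, bias=False)   # target attention over Bi-LSTM states
        self.mlp = nn.Sequential(nn.Linear(3 * d, 64), nn.ReLU(), nn.Linear(64, 1))

    @staticmethod
    def target_pool(proj, states, cand, mask):
        # states: (B, K, d), cand: (B, d), mask: (B, K) -> (B, d)
        scores = (proj(states) * cand.unsqueeze(1)).sum(-1)
        w = torch.softmax(scores.masked_fill(~mask, -1e9), dim=-1)
        return torch.bmm(w.unsqueeze(1), states).squeeze(1)

    def forward(self, sess_ids, cand_ids):
        # sess_ids: (B, K, L) item ids; cand_ids: (B,)
        B, K, L = sess_ids.shape
        x = self.emb(sess_ids).view(B * K, L, -1)                  # one row per session
        pad = (sess_ids == 0).view(B * K, L)
        # A fully padded session would mask every key and yield NaN; unmask it instead.
        safe_pad = pad & ~pad.all(dim=1, keepdim=True)
        y, _ = self.self_att(x, x, x, key_padding_mask=safe_pad)   # intra-session self-attention
        keep = (~pad).float().unsqueeze(-1)
        sess_vec = (y * keep).sum(1) / keep.sum(1).clamp(min=1.0)  # masked mean per session
        sess_vec = sess_vec.view(B, K, -1)                         # (B, K, d)
        sess_mask = (sess_ids != 0).any(dim=-1)                    # (B, K) real sessions
        lstm_out, _ = self.bilstm(sess_vec)                        # inter-session Bi-LSTM, (B, K, d)
        cand = self.emb(cand_ids)                                  # (B, d)
        u_sess = self.target_pool(self.att_sess, sess_vec, cand, sess_mask)
        u_lstm = self.target_pool(self.att_lstm, lstm_out, cand, sess_mask)
        return self.mlp(torch.cat([u_sess, u_lstm, cand], dim=-1)).squeeze(-1)


torch.manual_seed(0)
model = DSINSketch(n_items=1000)
# Two users, three session slots of up to three items; the second user has one real session.
sessions = torch.tensor([[[101, 102, 103], [201, 202, 0], [301, 0, 0]],
                         [[7, 8, 0], [0, 0, 0], [0, 0, 0]]])
logits = model(sessions, torch.tensor([205, 9]))               # shape (2,)
```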

When to reach for which model

| Model | Key innovation | Best fit |
|---|---|---|
| DIN | Target attention on flat behavior list | Short histories, no clear time structure |
| DIEN | GRU + AUGRU + auxiliary loss | Long histories where interests evolve smoothly |
| DSIN | Intra-session self-attn + inter-session Bi-LSTM | Browsing patterns with clear session boundaries |
| BST | Transformer over behaviors + candidate | Long histories, parallelizable serving |

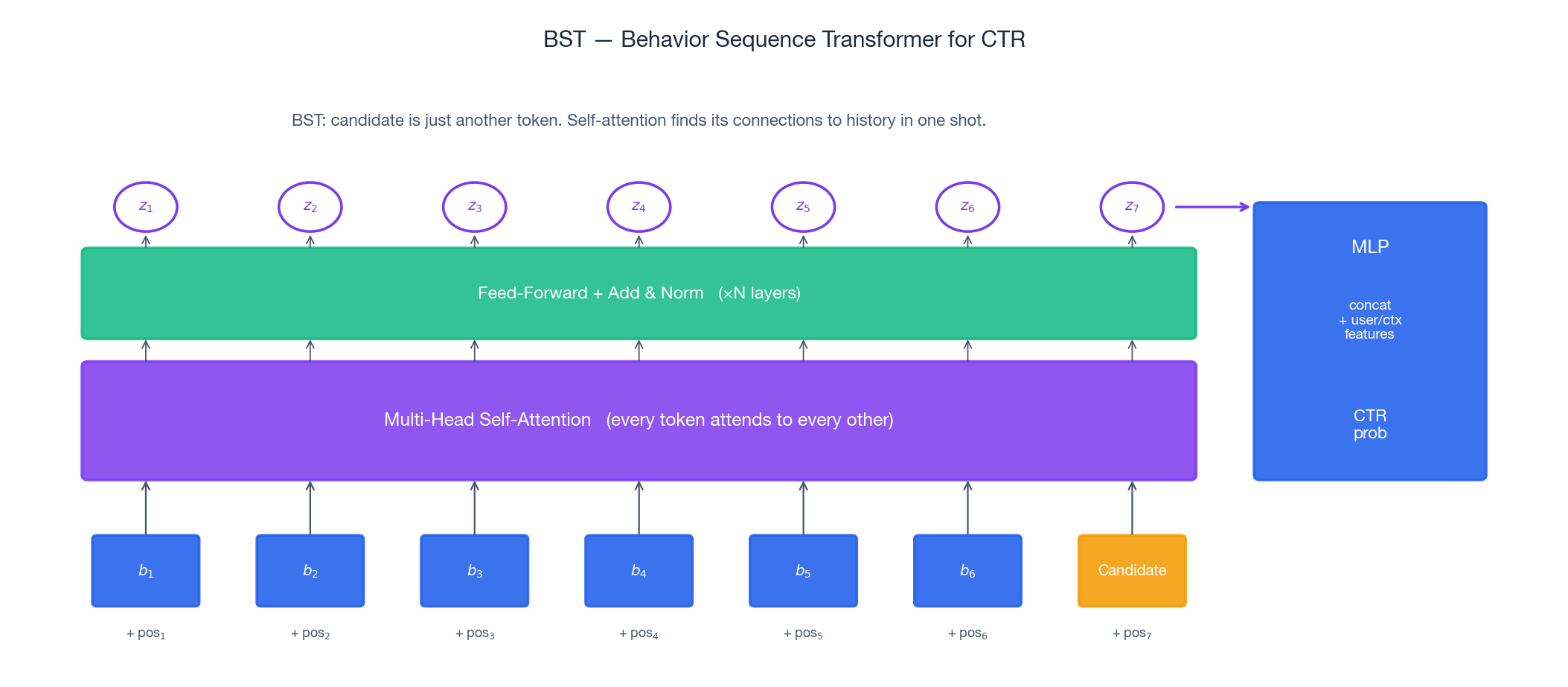

Behavior Sequence Transformer (BST)

By 2019 the Transformer had eaten NLP. Alibaba’s Taobao team asked: what if we just put one over the behavior sequence and call it a day?

BST (Chen et al., DLP-KDD'19) treats the behavior sequence + the candidate item as a single token sequence and runs a Transformer encoder over it. Multi-head self-attention lets every behavior attend to every other behavior and to the candidate. Position embeddings encode time order.

$$\mathbf{Z} = \text{TransformerBlock}\big(\,[\mathbf{e}_{b_1} + \mathbf{p}_1,\, \ldots,\, \mathbf{e}_{b_T} + \mathbf{p}_T,\, \mathbf{e}_i + \mathbf{p}_{T+1}]\,\big)$$

Then concatenate $\mathbf{Z}$ with side features and feed an MLP. The paper reports an online CTR gain of about 7.5% over a WDL baseline on Taobao. Notice what BST is not doing: it doesn’t have an explicit “target attention” step. It doesn’t need one. Self-attention over [history, candidate] already gives the candidate token direct access to every behavior. Less obviously, it also gives every behavior direct access to every other behavior, which DIN never modeled.

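Here is a minimal BST sketch. It is not the Taobao production model. It makes these simplifications:

- One Transformer encoder layer over `[b_1, ..., b_T, candidate]`.
- Index-based position embeddings.
- Item IDs only, with side features omitted.

The encoder output $\mathbf{Z}$ is flattened and fed to an MLP, mirroring the concatenation step above. Histories must be padded or truncated to exactly `max_len`.

```python
import torch
import torch.nn as nn


class BSTSketch(nn.Module):
    """Simplified BST: one Transformer encoder layer over [b_1..b_T, candidate].
    Index-based positions and item IDs only (both simplifications)."""

    def __init__(self, n_items: int, max_len: int = 50, d: int = 32, heads: int = 4):
        super().__init__()
        self.max_len = max_len
        self.item_emb = nn.Embedding(n_items, d, padding_idx=0)    # id 0 = padding
        self.pos_emb = nn.Embedding(max_len + 1, d)                 # +1 slot for the candidate
        layer = nn.TransformerEncoderLayer(d, heads, dim_feedforward=4 * d,
                                           dropout=0.1, batch_first=True)
        self.encoder = nn.TransformerEncoder(layer, num_layers=1)
        self.mlp = nn.Sequential(nn.Linear((max_len + 1) * d, 256), nn.LeakyReLU(),
                                 nn.Linear(256, 1))

    def forward(self, hist_ids: torch.Tensor, cand_ids: torch.Tensor) -> torch.Tensor:
        # hist_ids: (B, max_len) right-padded with 0; cand_ids: (B,), never 0
        assert hist_ids.size(1) == self.max_len, "pad or truncate history to max_len"
        tokens = torch.cat([hist_ids, cand_ids.unsqueeze(1)], dim=1)   # (B, T+1)
        pos = torch.arange(tokens.size(1), device=tokens.device)
        x = self.item_emb(tokens) + self.pos_emb(pos)                  # (B, T+1, d)
        z = self.encoder(x, src_key_padding_mask=tokens == 0)          # (B, T+1, d)
        return self.mlp(z.flatten(1)).squeeze(-1)                      # concat Z -> MLP -> logit


torch.manual_seed(0)
model = BSTSketch(n_items=1000, max_len=5)
hist = torch.tensor([[5, 17, 42, 0, 0], [3, 3, 8, 9, 11]])
logits = model(hist, torch.tensor([42, 7]))                           # shape (2,)
```

There is no separate target-attention module. The candidate is just the last token, and the encoder’s self-attention handles both candidate-to-history and history-to-history interactions.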

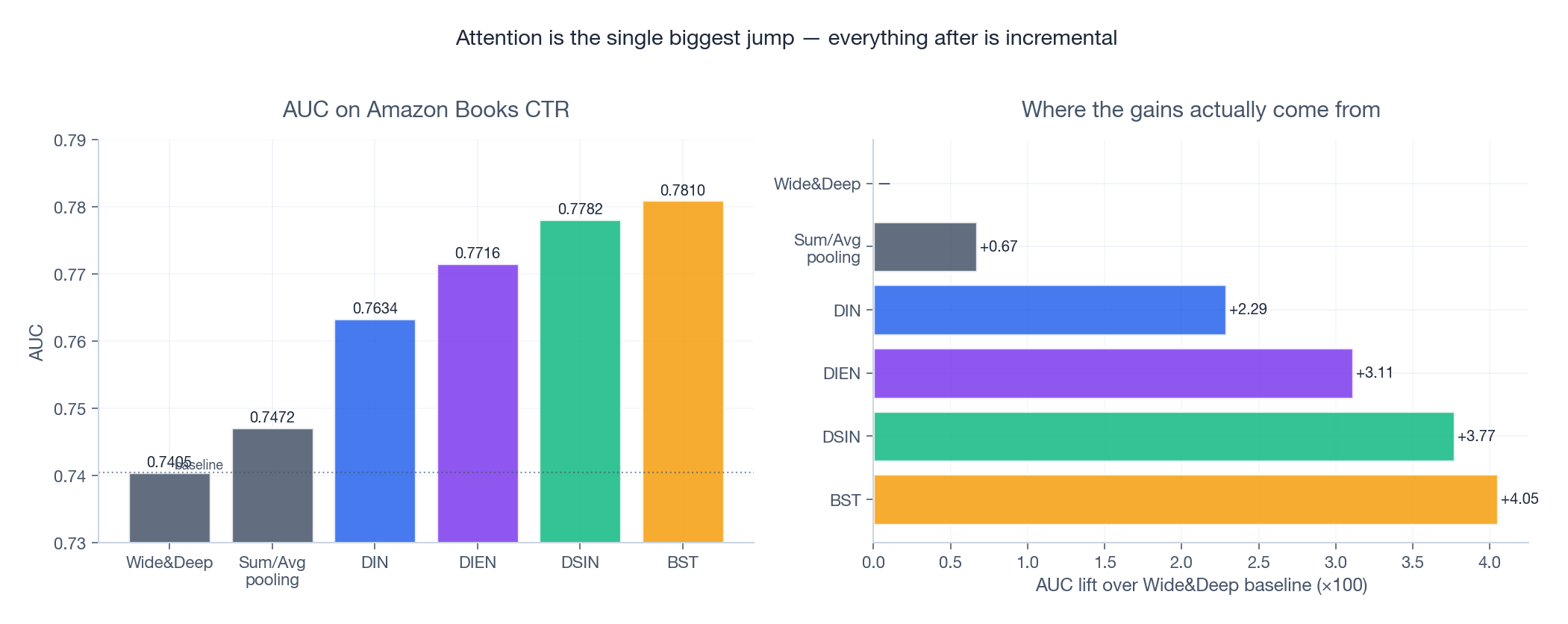

How much do these tricks actually buy you?

Numbers from the original DIN/DIEN/DSIN/BST papers on the Amazon Books CTR benchmark, normalized to comparable settings. Two things to notice:

- The biggest jump is from sum/avg pooling to DIN. Adding attention is the single most impactful change. The rest is incremental — DIEN adds a few tenths of a percent on top, DSIN a bit more, BST roughly matches or slightly beats DSIN depending on the dataset.

- AUC gains look small but matter at scale. A 0.005 AUC lift on Taobao translates to several percent CTR improvement and hundreds of millions in incremental GMV. This is why teams keep iterating on what looks like noise to outsiders.

Beyond accuracy, each model has a different cost profile: DIN serves cheaply because attention is just one MLP per behavior; DIEN’s sequential AUGRU is the slowest; BST is fast on GPUs but heavy on memory; DSIN’s bookkeeping (sessionizing on the fly) is the operational headache.

A reasonable rule of thumb: start with DIN. It captures 80% of the lift with 20% of the engineering. Reach for DIEN when behavior sequences are long and topical order matters (subscription-style products, hobbies that ramp). Reach for DSIN when sessions are obvious and frequent (short-video apps, e-commerce browsing). Reach for BST when you want one mental model that covers everything and your serving stack already loves Transformers.

Production tricks that actually move the needle

Dice: a data-adaptive activation

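PReLU can be written as $f(s) = p(s)\cdot s + (1 - p(s))\cdot \alpha s$ with a hard step $p(s) = \mathbb{1}(s > 0)$. Dice keeps the same form but replaces the step with a smooth gate centered on the data:

$$p(s) = \sigma\!\left(\frac{s - \mathbb{E}[s]}{\sqrt{\mathrm{Var}[s] + \epsilon}}\right)$$

During training, $\mathbb{E}[s]$ and $\mathrm{Var}[s]$ are the mini-batch mean and variance; at inference they are replaced by moving averages. The transition point now follows the data. The right panel of the figure shows three batches with different means; Dice’s inflection rides along, while PReLU’s stays nailed to zero. Different layers, different distributions, different effective activations, all for free.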

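Here is a minimal Dice sketch. It computes $f(s) = p(s)\cdot s + (1 - p(s))\cdot \alpha s$, where $p(s)$ is the sigmoid of the batch-normalized input. It relies on `nn.BatchNorm1d(affine=False)` to supply batch statistics in training mode and running averages in eval mode. The $\epsilon$ default and the zero initialization of $\alpha$ are assumptions.

```python
import torch
import torch.nn as nn


class Dice(nn.Module):
    """f(s) = p(s) * s + (1 - p(s)) * alpha * s,
    p(s) = sigmoid((s - E[s]) / sqrt(Var[s] + eps)).
    BatchNorm1d without affine params gives batch stats in training
    and running (moving-average) stats in eval mode."""

    def __init__(self, num_features: int, eps: float = 1e-8):
        super().__init__()
        self.bn = nn.BatchNorm1d(num_features, eps=eps, affine=False)
        self.alpha = nn.Parameter(torch.zeros(num_features))   # learned slope below the gate

    def forward(self, s: torch.Tensor) -> torch.Tensor:
        # s: (B, num_features); reshape (B, T, C) inputs to (B*T, C) first
        p = torch.sigmoid(self.bn(s))
        return p * s + (1 - p) * self.alpha * s


dice = Dice(1)
batch = torch.tensor([[4.0], [5.0], [6.0]])   # batch mean is 5, not 0
out = dice(batch)
# At s = 5 (the batch mean) p = 0.5, so with alpha = 0: f(5) = 2.5
```

The usage lines show the point of Dice. The gate is centered on the batch mean (5 here), not on zero as in PReLU.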

Mini-batch aware regularization

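Plain L2 regularization touches every row of an embedding table with millions of items, most of which never appear in the current batch. Mini-batch aware regularization penalizes only the rows that do:

$$\mathcal{L}_{\text{reg}} = \frac{\lambda}{2} \sum_{j \in \mathcal{B}} \frac{n_{j,\mathcal{B}}}{n_j} \|\mathbf{e}_j\|^2$$

where $n_{j,\mathcal{B}}$ is item $j$’s count in batch $\mathcal{B}$ and $n_j$ is its global count. Over one epoch, the ratios $n_{j,\mathcal{B}}/n_j$ sum to 1 for every item. The accumulated penalty therefore approximates ordinary L2, while each step only touches rows in the batch. Roughly the same effect, orders of magnitude cheaper.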
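Here is a sketch of the formula above for a single embedding table. It assumes id 0 is padding and that the global counts $n_j$ are precomputed into a tensor indexed by item id. `mba_l2` is a hypothetical helper. Only rows present in the batch enter the penalty, so only they receive gradient.

```python
import torch


def mba_l2(emb_weight: torch.Tensor, batch_ids: torch.Tensor,
           global_counts: torch.Tensor, lam: float = 1e-4) -> torch.Tensor:
    """(lam / 2) * sum_{j in batch} (n_{j,B} / n_j) * ||e_j||^2.
    emb_weight: (V, d) table; batch_ids: item ids seen in this batch (0 = padding);
    global_counts: (V,) global count n_j for each item id."""
    ids = batch_ids[batch_ids != 0]
    uniq, n_in_batch = torch.unique(ids, return_counts=True)   # j in B and n_{j,B}
    sq_norm = emb_weight[uniq].pow(2).sum(dim=-1)              # ||e_j||^2, batch rows only
    return 0.5 * lam * (n_in_batch.float() / global_counts[uniq].float() * sq_norm).sum()


table = torch.ones(5, 2, requires_grad=True)        # ||e_j||^2 = 2 for every row
counts = torch.tensor([1, 4, 2, 1, 1])              # hypothetical global counts n_j
reg = mba_l2(table, torch.tensor([1, 1, 2, 0]), counts, lam=0.1)  # 0.05 * (2/4*2 + 1/2*2) = 0.1
reg.backward()                                      # gradient touches rows 1 and 2 only
```

In a real trainer, `reg` would be added to the CTR loss at every step.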

Variable-length sequences

Real users have wildly different history lengths. Pad to a fixed max, then mask:

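Here is a sketch of the pad-then-mask step. It assumes each history is a Python list ordered oldest to newest and that id 0 is reserved for padding. `pad_and_truncate` and `masked_softmax` are hypothetical helper names. Truncation keeps the most recent behaviors.

```python
import torch


def pad_and_truncate(seqs, max_len, pad_id=0):
    """Keep the most recent max_len ids of each list, right-pad with pad_id,
    and return (ids, mask) with mask True on real positions."""
    ids = torch.full((len(seqs), max_len), pad_id, dtype=torch.long)
    lengths = torch.zeros(len(seqs), dtype=torch.long)
    for i, s in enumerate(seqs):
        recent = s[-max_len:]
        ids[i, :len(recent)] = torch.tensor(recent, dtype=torch.long)
        lengths[i] = len(recent)
    mask = torch.arange(max_len).unsqueeze(0) < lengths.unsqueeze(1)   # (B, max_len)
    return ids, mask


def masked_softmax(scores, mask):
    # Large negative (not -inf) so a fully masked row gives finite weights, not NaN
    return torch.softmax(scores.masked_fill(~mask, -1e9), dim=-1)


ids, mask = pad_and_truncate([[4, 8, 15], [16, 23, 42, 7, 9, 11]], max_len=4)
# ids -> [[4, 8, 15, 0], [42, 7, 9, 11]]
weights = masked_softmax(torch.zeros(2, 4), mask)
# weights[0] -> [1/3, 1/3, 1/3, 0]
```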

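In softmax attention, set masked scores to a large negative number such as $-10^9$ before the softmax; this drives their weights to zero. A finite value avoids the NaNs that $-\infty$ produces when a row is fully masked. For DIN’s unnormalized weights, multiply the scores by the mask instead.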

Serving at scale

For millions of QPS:

- Pre-compute and cache item embeddings offline. Item table is static-ish; recompute nightly.

- Truncate to the most recent N behaviors. N = 50–100 captures most signal at a fraction of the cost.

- Quantize. FP16 or INT8 cuts model size 2–4x with negligible AUC loss.

- Batch inference. GPUs love batches of 64+ requests.

- Replace AUGRU’s Python loop with a custom CUDA op if you really need DIEN in production.

FAQ

Why target attention instead of self-attention in DIN? Target attention answers “which past behaviors are relevant to this candidate?” Self-attention only looks within the history (“laptop and phone are both electronics”) — useful, but it doesn’t condition on the candidate, which is the whole point. BST eventually shows you can have both at once with a Transformer.

Why doesn’t DIN use softmax? The authors found that softmax destroys intensity. With softmax, a user with many strong matches and a user with one weak match both get weights that sum to 1, so their pooled vectors end up on a similar scale. Without softmax, the magnitude of the user vector itself signals interest strength.

Does the auxiliary loss really help? Yes — significantly on long sequences. Without it, the GRU can collapse to trivial states that minimize CTR loss without representing interest. The DIEN paper reports the aux loss alone is worth ~0.3% AUC on Amazon datasets.

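What about computational cost? Self-attention (BST, DSIN’s intra-session layer) is $O(T^2 \cdot d)$ in sequence length $T$: fine for $T \le 100$, painful beyond. DIN’s target attention is only $O(T \cdot d)$ per candidate, but it runs once for every candidate being ranked. For long histories, the options are: truncate (most common), use sparse/linear attention, or use two-stage retrieval (e.g., SIM hard search → DIN).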

How do you handle cold-start users? Fall back to user profile features (demographics, location, device) and category-level priors. Content-based item embeddings (from titles, images) help when behavior data is sparse on either side.

Are attention weights actually interpretable? Mostly yes, with caveats. They show which past behaviors the model leaned on for a given recommendation, which is great for debugging and trust. But softmax-normalized weights are relative — high weight doesn’t mean high absolute relevance, just relatively higher than the rest of the sequence.

Summary

Deep Interest Networks brought one durable idea to recommendation: not all past behaviors matter equally, and the model should figure out which ones do, every single time.

The rest is variations on that theme:

- DIN — weight behaviors by relevance to the candidate.

- DIEN — model how those interests evolve in time.

- DSIN — group them into sessions and respect the structure.

- BST — let the Transformer figure out all of it.

A good chef doesn’t cook the same dish for every guest. After DIN, neither does a good recommender.

Recommendation Systems series (16 parts)

- 01 Recommendation Systems (1): Fundamentals and Core Concepts

- 02 Recommendation Systems (2): Collaborative Filtering and Matrix Factorization

- 03 Recommendation Systems (3): Deep Learning Foundations

- 04 Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

- 05 Recommendation Systems (5): Embedding and Representation Learning

- 06 Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

- 07 Recommendation Systems (7): Graph Neural Networks and Social Recommendation

- 08 Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

- 09 Recommendation Systems (9): Multi-Task Learning and Multi-Objective Optimization

- 10 Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

- 11 Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

- 12 Recommendation Systems (12): Large Language Models and Recommendation

- 13 Recommendation Systems (13): Fairness, Debiasing, and Explainability

- 14 Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

- 15 Recommendation Systems (15): Real-Time Recommendation and Online Learning

- 16 Recommendation Systems (16): Industrial Architecture and Best Practices