Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

A practitioner's guide to contrastive learning for recommendations: InfoNCE and the role of temperature, SimCLR vs MoCo negatives, SGL graph augmentations, CL4SRec sequence augmentations, XSimGCL's noise-only trick, with intuition, math, and clean PyTorch.

Classical recommenders learn from one signal: did a user click, watch, or buy? That signal is precious, but it is also brutally sparse. Most users touch fewer than 1% of the catalogue, most items are touched by fewer than 0.1% of users, and a brand-new item or user has nothing at all. Optimising a model directly against such sparse labels almost guarantees overfitting on the head and silence on the tail.

Contrastive learning offers a different bargain. Instead of asking “what label should this example have?”, it asks “which two examples should look alike, and which two should look different?”. That question is cheap to answer — you can derive it from the data itself by perturbing the same user/item/sequence in two ways and declaring the two perturbations a positive pair. Every other example in the batch is a negative. The model learns geometry: similar things end up close, dissimilar things end up far. Once the geometry is good, the supervised recommendation head only needs a small nudge.

This article walks through the core machinery (InfoNCE, temperature, augmentations) and the four families that matter in practice for recommendations: SimCLR-style in-batch contrast, MoCo-style queue-based contrast, SGL graph augmentations, and CL4SRec sequence augmentations. We finish with XSimGCL, the surprising result that you can throw the augmentations away entirely and just inject noise.

What You Will Learn

- Why contrastive learning attacks the sparsity, cold-start, and popularity-bias problems from a different angle than “more data”

- InfoNCE in detail: where the loss comes from, why temperature $\tau$ matters more than you’d think, and how it shapes gradients

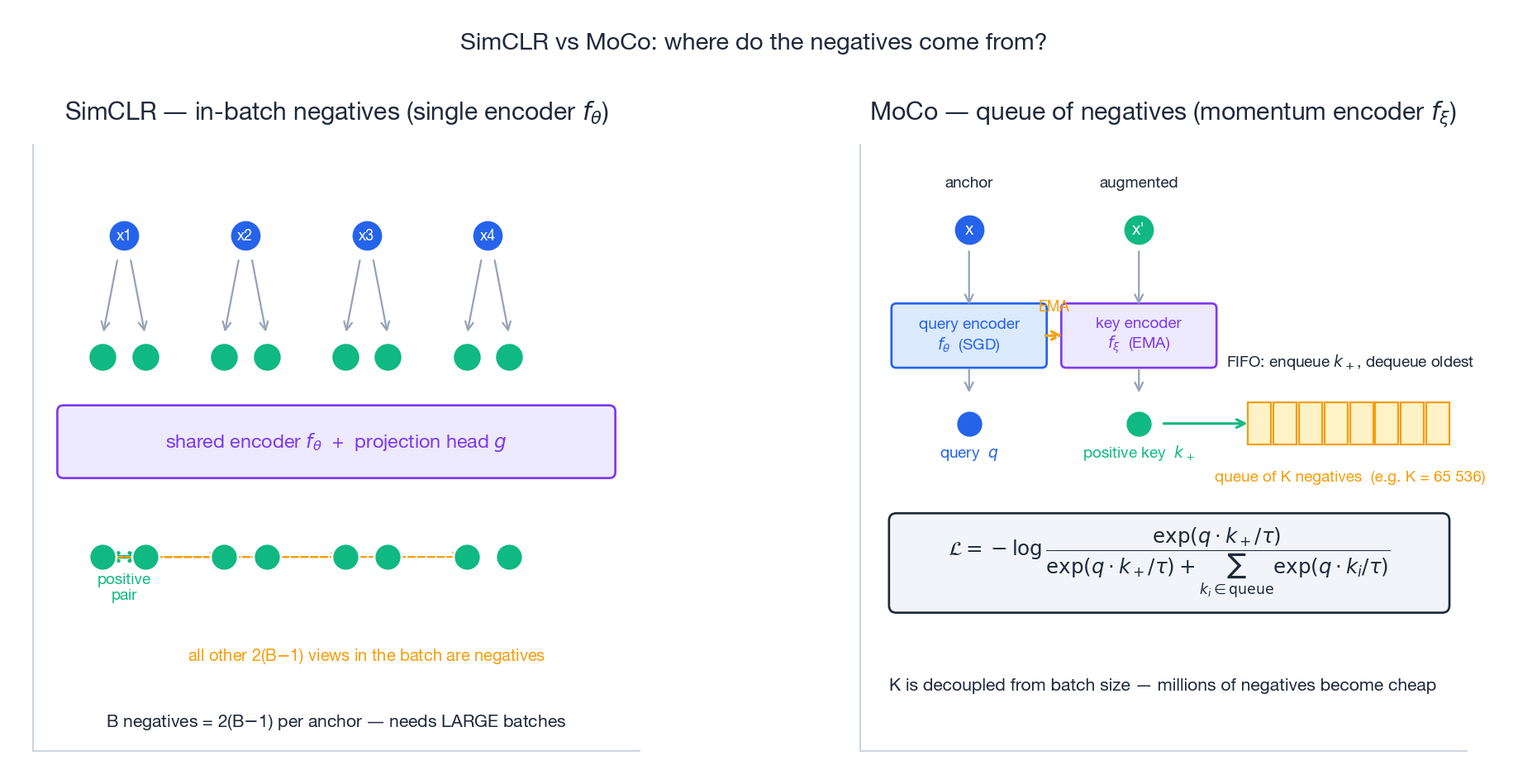

- SimCLR vs MoCo: in-batch negatives vs a momentum-encoder queue, and when each wins

- SGL (Wu et al., SIGIR 2021): node dropout, edge dropout, random-walk subgraphs as graph views

- CL4SRec (Xie et al., ICDE 2022): crop, mask, reorder for sequential recommenders

- SimGCL / XSimGCL (Yu et al., 2022/2023): why a tiny embedding-noise trick beats elaborate graph augmentation

- Working PyTorch for each piece

Prerequisites

- PyTorch fundamentals (modules, autograd, loss functions)

- Graph neural networks, especially LightGCN (Part 7)

- Embedding spaces and similarity (Part 5)

Why contrastive learning for recommendations?

The data sparsity problem, restated

Sparsity is not just “we have few labels”. It is structural:

- Cold start. New users and new items have zero interactions, so any model that depends on them as features (matrix factorisation, two-tower, GNN) cannot place them anywhere meaningful in the embedding space.

- Overfitting on the head. With a long-tailed click distribution, the loss is dominated by a handful of popular items. A model that memorises them looks fine on training metrics and useless on the tail.

- Popularity bias. Even when you serve diverse candidates, the scoring head will rank popular items higher because that is what minimised the training loss. The system collapses toward sameness.

The contrastive bargain

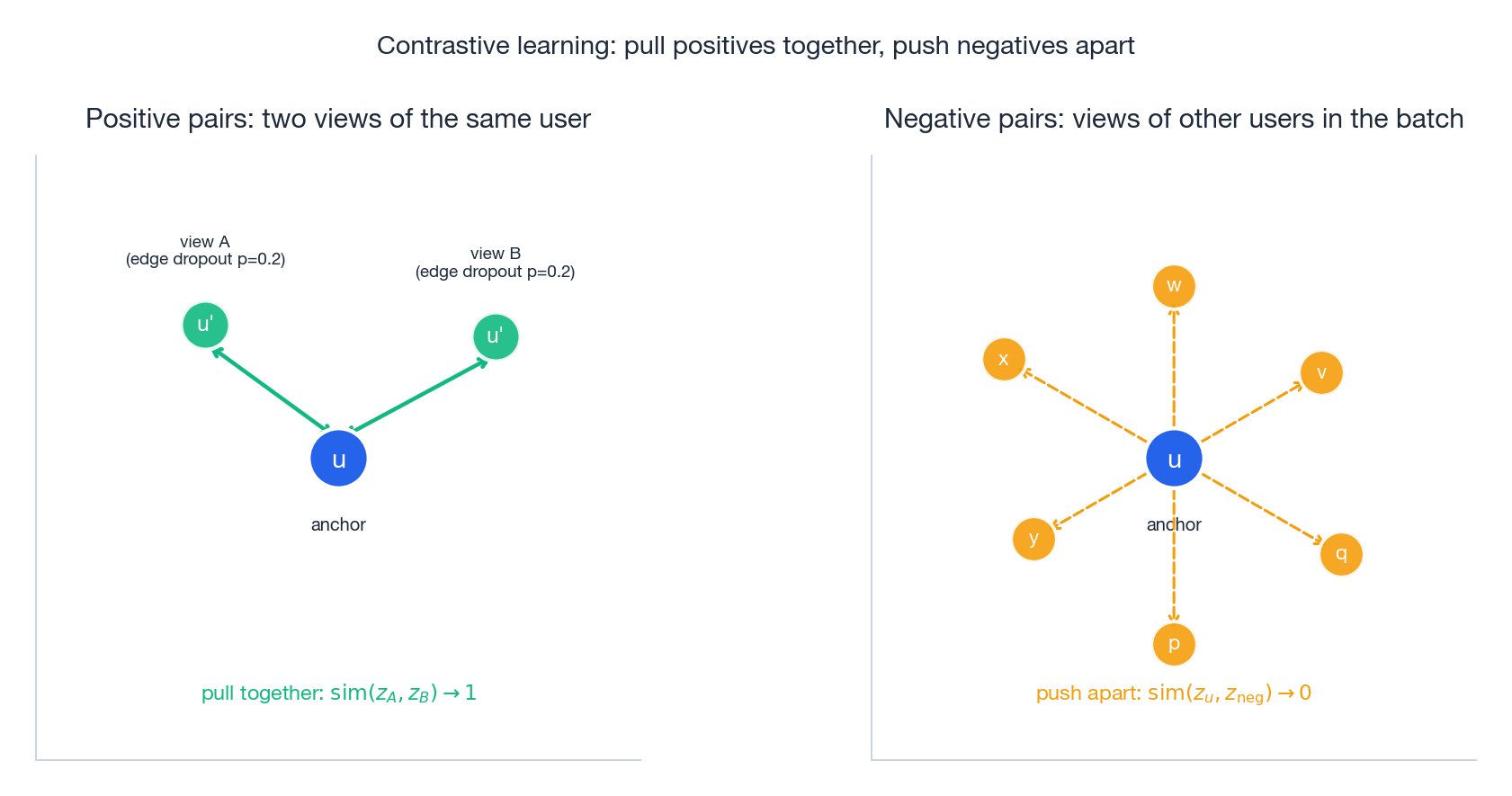

Contrastive learning trades one expensive signal (labels) for a much cheaper one (consistency under perturbation). Suppose you take a user’s behaviour graph, drop 20% of the edges, encode it, then drop a different 20% of the edges and encode again. Two views, same user. The bargain is simple: the two embeddings should be nearly identical, while embeddings for different users in the batch should be different.

That single objective gives you three things at once:

- A free training signal, available in unlimited quantity (any user can be perturbed any number of times).

- A representation prior: the model learns features that survive perturbation, which by construction are the robust ones — exactly what you want for cold-start and tail items.

- A regulariser against collapse onto popular items: the loss explicitly forces different users apart.

The figure above captures the entire intuition. The blue anchor is one user. The two green points are augmented views of that same user, and we pull them in. The amber points are other users from the same training batch, and we push them out. Doing this for every user, every batch, shapes a geometry where semantic similarity equals embedding similarity.

InfoNCE: the loss that does the work

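For an anchor embedding $z$, its positive $z^+$, and $N$ negatives $z_1^-, \dots, z_N^-$, with $\mathrm{sim}(\cdot,\cdot)$ the cosine similarity and $\tau$ the temperature, the InfoNCE loss is

$$\mathcal{L}_{\text{InfoNCE}} = -\log \frac{\exp(\mathrm{sim}(z, z^+)/\tau)}{\exp(\mathrm{sim}(z, z^+)/\tau) + \sum_{j=1}^{N} \exp(\mathrm{sim}(z, z_j^-)/\tau)}$$

This is exactly the cross-entropy of an $(N+1)$-way classifier whose correct class is “the positive”. The numerator says “make the positive likely”; the denominator forces the model to rank the positive above every negative. That ranking pressure is what prevents the trivial solution where all embeddings collapse to the same vector.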
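To make the classifier reading concrete, here is a minimal single-anchor sketch. The function name `info_nce_single` and its argument names are illustrative, and the inputs are assumed to be L2-normalised so that the dot product equals the cosine similarity.

```python
import torch
import torch.nn.functional as F

def info_nce_single(anchor, positive, negatives, tau=0.2):
    """InfoNCE for one anchor, written as an (N+1)-way classification problem.

    anchor: (d,), positive: (d,), negatives: (N, d); all assumed L2-normalised.
    """
    pos_logit = (anchor @ positive).unsqueeze(0)        # (1,)
    neg_logits = negatives @ anchor                     # (N,)
    logits = torch.cat([pos_logit, neg_logits]) / tau   # (N+1,), the positive is class 0
    target = torch.zeros(1, dtype=torch.long)
    return F.cross_entropy(logits.unsqueeze(0), target)

torch.manual_seed(0)
z = F.normalize(torch.randn(6, 8), dim=-1)              # toy data: 1 anchor, 1 positive, 4 negatives
loss = info_nce_single(z[0], z[1], z[2:], tau=0.2)
```

Putting the positive at index 0 is only a convention; any fixed index works as long as the target matches it.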

Temperature: the most under-appreciated knob

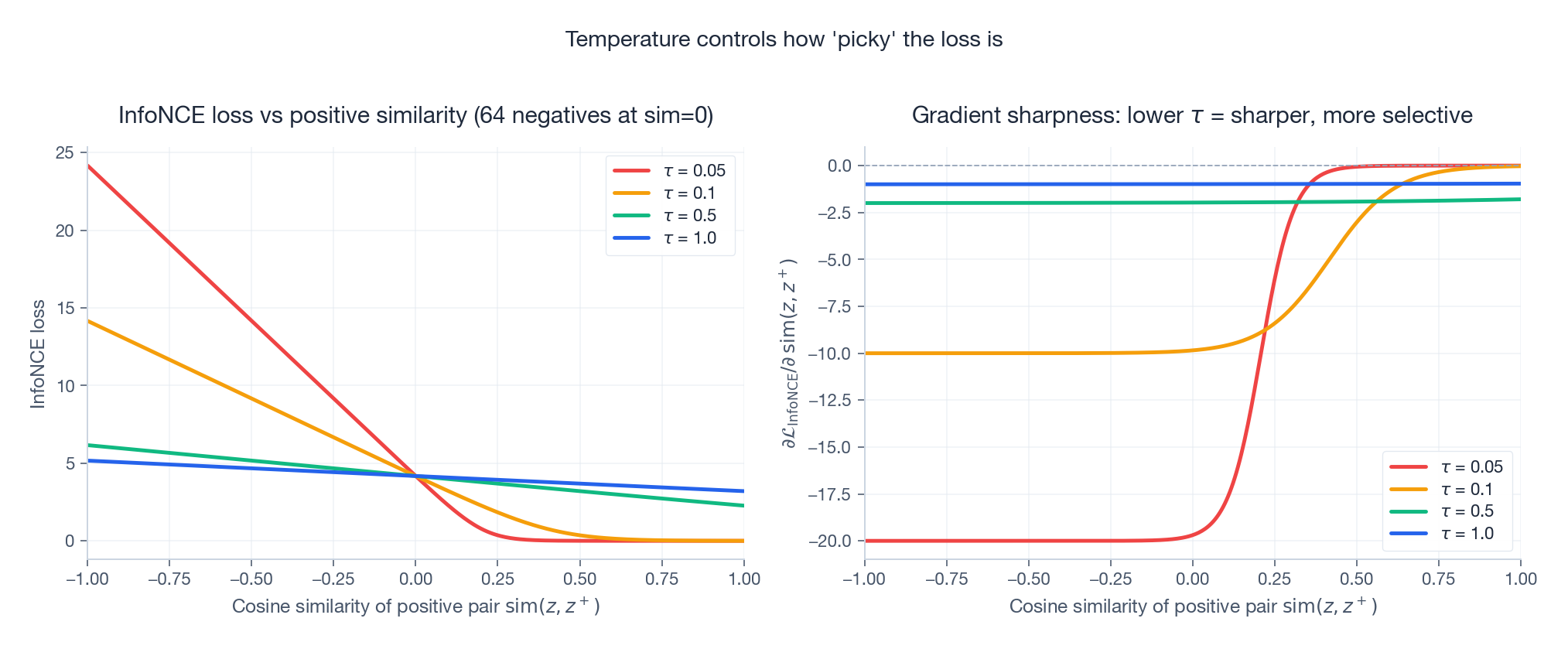

The temperature $\tau$ controls how sharply the softmax distinguishes positives from negatives. It is not a cosmetic hyperparameter; it changes what the loss optimises.

Two things to read off the right-hand panel, plus one practical takeaway:

- Low $\tau$ (0.05) makes the gradient very sharp around the decision boundary. The model focuses essentially all of its capacity on the hardest negatives — the ones nearly as similar as the positive. This is great for fine-grained discrimination but unstable if those hard negatives are actually mislabelled (e.g. a missing-not-at-random click).

- High $\tau$ (1.0) spreads gradient mass across all negatives, even easy ones. The optimisation is smoother but the resulting embeddings cluster less tightly.

- The sweet spot for recommendations is roughly $\tau \in [0.1, 0.2]$ — sharp enough for useful contrast, soft enough not to chase noise.

A useful mental model: $1/\tau$ is a “magnification”. Halving $\tau$ doubles every logit gap $(s_i - s_j)/\tau$, which sharpens the softmax and shifts the gradient pressure toward the negatives that sit closest to the positive.
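The effect is easy to see numerically. For a fixed anchor, the share of the repulsive gradient that each negative receives, relative to the other negatives, is a softmax over their similarities divided by $\tau$. The sketch below uses made-up similarity values and a hypothetical helper `negative_weights`; it is an illustration, not a measurement.

```python
import torch

# Hypothetical cosine similarities between one anchor and five negatives,
# ordered from hardest (0.8) to easiest (-0.2).
sims = torch.tensor([0.8, 0.5, 0.2, 0.0, -0.2])

def negative_weights(sims, tau):
    """Relative share of the repulsive gradient per negative: softmax(sims / tau)."""
    return torch.softmax(sims / tau, dim=0)

for tau in (0.05, 0.2, 1.0):
    w = negative_weights(sims, tau)
    print(f"tau={tau}: hardest-negative share = {w[0].item():.3f}")
```

At low temperature nearly all of the weight lands on the hardest negative; at $\tau = 1$ it is spread much more evenly across the five.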

Why we need negatives at all

If you trained only the numerator — pull positives together — every embedding would collapse to a constant vector and the loss would happily drop to zero. Negatives are not a side dish; they are the load-bearing constraint. This is why batch size matters so much in SimCLR-style setups: a batch of 256 gives you 510 negatives per anchor, a batch of 4096 gives you 8190. More negatives means a denser, more informative denominator.

SimCLR vs MoCo: where do the negatives come from?

Two paradigms dominate self-supervised vision and have been carried over wholesale into recommendations.

SimCLR (Chen et al., ICML 2020) keeps things simple: one encoder, one projection head, two augmentations per example, and every other view in the same minibatch is a negative. The drawback is the obvious one — your number of negatives is bounded by the GPU memory budget for the batch.

MoCo (He et al., CVPR 2020) decouples the number of negatives from the batch size: a query encoder with parameters $\theta$ updated by SGD, a momentum key encoder with parameters $\xi$ updated as an exponential moving average of the query encoder, $\xi \leftarrow m\xi + (1-m)\theta$, and a FIFO queue holding the last $K$ encoded keys (typically $K = 65{,}536$). Negatives come from the queue, so $K$ is independent of batch size.

In recommendation systems, the SimCLR pattern wins more often: minibatches in CTR/CVR training are large anyway, the encoder is usually a GNN whose forward cost is dominated by graph traversal rather than embedding lookup, and a queue of millions of user keys ages quickly because the embedding table itself is being updated. MoCo-style queues do reappear in long-sequence retrieval models, where the encoder is heavy and you genuinely want to amortise its cost across many anchors.
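For readers who do want the queue-based variant, the following is a minimal sketch of the MoCo mechanics described above: momentum update, FIFO queue, and an InfoNCE loss whose negatives come from the queue. The class name `MoCoQueue`, the linear encoders, and the small `K` are assumptions chosen to keep the example short; the queue update also assumes `K` is a multiple of the batch size.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class MoCoQueue(nn.Module):
    """Momentum key encoder plus a FIFO queue of negatives (illustrative sketch)."""

    def __init__(self, encoder_q, encoder_k, dim, K=4096, m=0.999, tau=0.2):
        super().__init__()
        self.encoder_q, self.encoder_k = encoder_q, encoder_k
        self.K, self.m, self.tau = K, m, tau
        # The key encoder starts as a copy of the query encoder and gets no gradients.
        self.encoder_k.load_state_dict(self.encoder_q.state_dict())
        for p in self.encoder_k.parameters():
            p.requires_grad = False
        self.register_buffer("queue", F.normalize(torch.randn(K, dim), dim=-1))
        self.ptr = 0

    @torch.no_grad()
    def _momentum_update(self):
        # xi <- m * xi + (1 - m) * theta
        for pq, pk in zip(self.encoder_q.parameters(), self.encoder_k.parameters()):
            pk.mul_(self.m).add_(pq.detach(), alpha=1.0 - self.m)

    @torch.no_grad()
    def _enqueue(self, keys):
        # Overwrite the oldest keys; assumes K is a multiple of the batch size.
        B = keys.shape[0]
        self.queue[self.ptr:self.ptr + B] = keys
        self.ptr = (self.ptr + B) % self.K

    def forward(self, x_q, x_k):
        q = F.normalize(self.encoder_q(x_q), dim=-1)
        with torch.no_grad():
            self._momentum_update()
            k = F.normalize(self.encoder_k(x_k), dim=-1)
        l_pos = (q * k).sum(dim=-1, keepdim=True)        # (B, 1)
        l_neg = q @ self.queue.clone().t()               # (B, K), negatives from the queue
        logits = torch.cat([l_pos, l_neg], dim=1) / self.tau
        target = torch.zeros(q.shape[0], dtype=torch.long, device=q.device)
        loss = F.cross_entropy(logits, target)
        self._enqueue(k)
        return loss

# Toy usage: two noisy views of the same 8 feature vectors.
torch.manual_seed(0)
moco = MoCoQueue(nn.Linear(16, 8), nn.Linear(16, 8), dim=8, K=64)
x = torch.randn(8, 16)
loss = moco(x + 0.1 * torch.randn_like(x), x + 0.1 * torch.randn_like(x))
loss.backward()
```

Only the query encoder receives gradients; the key encoder drifts slowly behind it, which is what keeps old queue entries roughly comparable to fresh ones.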

A reference SimCLR loss in PyTorch
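
A minimal sketch of an in-batch loss with the properties discussed in this section: pre-normalised inputs, a $-\infty$ diagonal mask, and a symmetric cross-entropy over all $2B$ rows. The name `info_nce` follows the way the article refers to the function later on; the argument names and the default $\tau$ are assumptions.

```python
import torch
import torch.nn.functional as F

def info_nce(z1: torch.Tensor, z2: torch.Tensor, tau: float = 0.2) -> torch.Tensor:
    """SimCLR-style loss. z1, z2: (B, d), L2-normalised; row i of each forms a positive pair."""
    B = z1.shape[0]
    z = torch.cat([z1, z2], dim=0)                          # (2B, d)
    sim = (z @ z.t()) / tau                                 # cosine similarity / temperature
    self_mask = torch.eye(2 * B, dtype=torch.bool, device=z.device)
    sim = sim.masked_fill(self_mask, float("-inf"))         # remove self-similarity from the softmax
    # Row i (first view) targets row i + B (second view), and vice versa.
    targets = torch.cat([torch.arange(B, 2 * B), torch.arange(0, B)]).to(z.device)
    return F.cross_entropy(sim, targets)                    # mean over all 2B rows
```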


Three things worth highlighting in this 10-line implementation:

- The embeddings are assumed pre-normalised. The cosine similarity then is just the dot product, and the temperature $\tau$ has the geometric meaning above.

- We mask the diagonal with $-\infty$, not zero. With `cross_entropy` working in log-softmax space, $-\infty$ disappears from the partition function; zero would silently shift gradients.

- The loss is symmetric: each of the $2B$ rows contributes one cross-entropy term. Half the rows treat the second view as the target, half treat the first view.

SGL: contrastive learning on the user-item graph

SGL (Wu et al., SIGIR 2021, Self-supervised Graph Learning for Recommendation) was the paper that brought contrastive learning into the recommendation mainstream. The idea is to bolt an InfoNCE head onto a LightGCN backbone and treat the two embeddings that each node receives from two perturbed copies of the user-item graph as a positive pair.

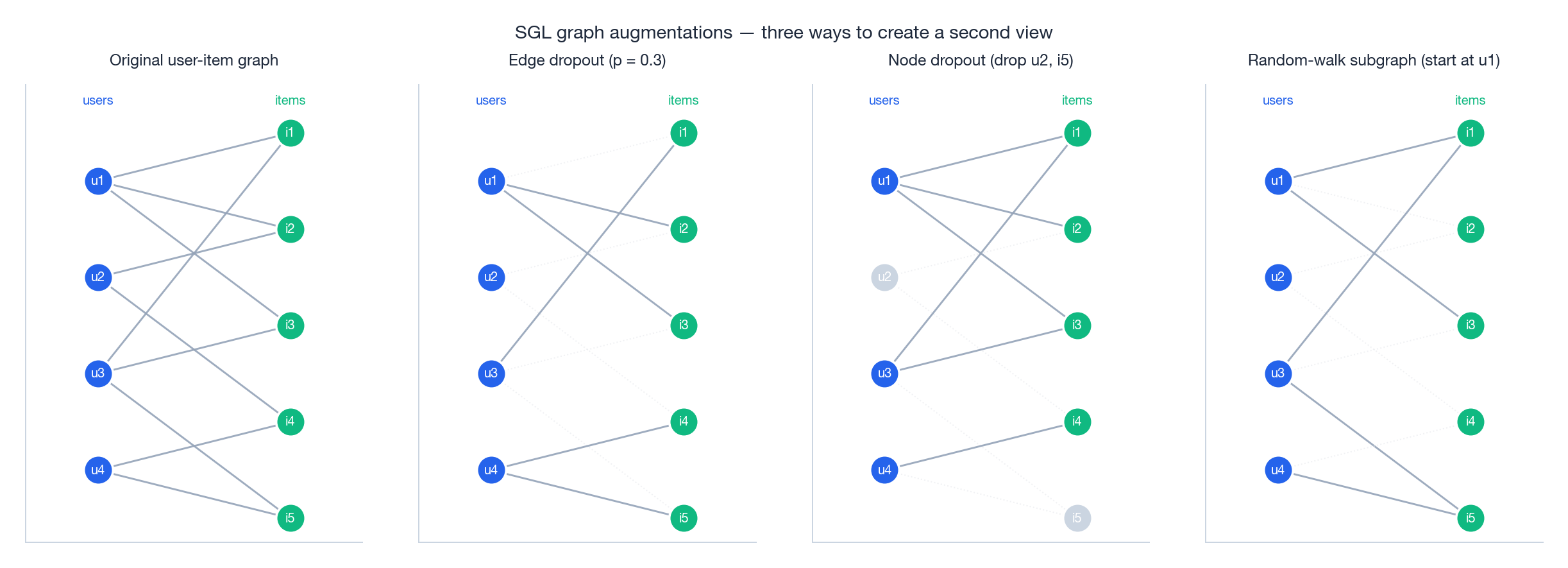

Three ways to perturb a graph

- Edge dropout (ED): each edge survives independently with probability $1-p$. Cheap, structure-preserving, the most-used variant.

- Node dropout (ND): each node (and all of its incident edges) is dropped with probability $p$. Stronger augmentation; can destabilise small-degree nodes.

- Random-walk subgraph (RW): in the SGL paper this is edge dropout with a fresh mask at every propagation layer, so each layer sees a different subgraph. ND and ED, by contrast, share one perturbed graph across all layers.

In the original SGL ablations, edge dropout consistently matches or beats the other two while being trivial to implement. Use ED unless you have a specific reason not to.
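Both shared-graph operators fit in a few lines. The sketch below assumes the graph is stored as a `(2, E)` tensor of edge endpoints with users and items in one unified id space (items offset by the number of users); the function names are illustrative.

```python
import torch

def edge_dropout(edge_index: torch.Tensor, p: float) -> torch.Tensor:
    """Keep each edge independently with probability 1 - p. edge_index: (2, E)."""
    keep = torch.rand(edge_index.shape[1], device=edge_index.device) >= p
    return edge_index[:, keep]

def node_dropout(edge_index: torch.Tensor, num_nodes: int, p: float) -> torch.Tensor:
    """Drop each node with probability p, together with all of its incident edges."""
    node_keep = torch.rand(num_nodes, device=edge_index.device) >= p
    src, dst = edge_index
    keep = node_keep[src] & node_keep[dst]      # an edge survives only if both endpoints do
    return edge_index[:, keep]
```

Calling either function twice on the same `edge_index` gives the two views; the adjacency normalisation has to be recomputed on each dropped graph.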

The full SGL training step
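
A compact sketch of one SGL-style training step: a clean pass for BPR plus two edge-dropped passes for the contrastive term, combined with weight $\lambda$. Everything here is a simplification under stated assumptions: a dense adjacency matrix on CPU, a toy `LightGCN`, and hypothetical helpers `norm_adj`, `drop_edges`, and `sgl_step`. The contrast is taken over the batch's user and item nodes jointly, following the description in this article.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class LightGCN(nn.Module):
    """Tiny dense LightGCN, for illustration only."""
    def __init__(self, n_users, n_items, dim=32, n_layers=2):
        super().__init__()
        self.n_layers = n_layers
        self.emb = nn.Embedding(n_users + n_items, dim)
        nn.init.normal_(self.emb.weight, std=0.1)

    def forward(self, adj):
        x = self.emb.weight
        layers = [x]
        for _ in range(self.n_layers):
            x = adj @ x                       # parameter-free neighbourhood aggregation
            layers.append(x)
        return torch.stack(layers).mean(dim=0)

def norm_adj(edges, n_users, n_items):
    """Symmetrically normalised bipartite adjacency from (2, E) (user, item) ids."""
    n = n_users + n_items
    A = torch.zeros(n, n)
    A[edges[0], edges[1] + n_users] = 1.0
    A = A + A.t()
    d = A.sum(dim=1).clamp(min=1.0).pow(-0.5)
    return d[:, None] * A * d[None, :]

def drop_edges(edges, p):
    return edges[:, torch.rand(edges.shape[1]) >= p]

def info_nce(z1, z2, tau):
    B = z1.shape[0]
    z = torch.cat([z1, z2])
    sim = (z @ z.t() / tau).masked_fill(torch.eye(2 * B, dtype=torch.bool), float("-inf"))
    targets = torch.cat([torch.arange(B, 2 * B), torch.arange(B)])
    return F.cross_entropy(sim, targets)

def sgl_step(model, opt, edges, n_users, n_items, users, pos, neg, p=0.1, tau=0.2, lam=0.1):
    # Pass 1: clean graph, used only for the supervised BPR loss.
    h = model(norm_adj(edges, n_users, n_items))
    u, i, j = h[users], h[n_users + pos], h[n_users + neg]
    bpr = -F.logsigmoid((u * i).sum(-1) - (u * j).sum(-1)).mean()
    # Passes 2 and 3: two independently edge-dropped views for the contrastive loss.
    h1 = model(norm_adj(drop_edges(edges, p), n_users, n_items))
    h2 = model(norm_adj(drop_edges(edges, p), n_users, n_items))
    # Deduplicate so a repeated node never appears as its own negative.
    nodes = torch.unique(torch.cat([users, n_users + pos]))
    cl = info_nce(F.normalize(h1[nodes], dim=-1), F.normalize(h2[nodes], dim=-1), tau)
    loss = bpr + lam * cl
    opt.zero_grad()
    loss.backward()
    opt.step()
    return loss.item()

# Toy usage on a random graph.
torch.manual_seed(0)
n_users, n_items = 6, 8
edges = torch.stack([torch.randint(0, n_users, (30,)), torch.randint(0, n_items, (30,))])
model = LightGCN(n_users, n_items)
opt = torch.optim.Adam(model.parameters(), lr=1e-2)
users, pos = edges[0, :4], edges[1, :4]
neg = torch.randint(0, n_items, (4,))
loss_value = sgl_step(model, opt, edges, n_users, n_items, users, pos, neg)
```

A production version would use sparse adjacency tensors and cache the clean normalised graph, since it does not change between steps.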


A few non-obvious implementation details:

- Three forward passes, not two. The contrastive views are dropped separately from the clean graph used for BPR. Sharing the dropped graph with the recommendation loss biases the supervised signal toward the lucky surviving edges.

- The contrastive loss is computed at the node level, on the concatenation of user and item embeddings. This is what gives both sides of the bipartite graph a self-supervised signal.

- Loss weight $\lambda$ matters. Too small ($<10^{-2}$) and the contrastive signal disappears; too large ($>1$) and BPR loses its grip. The SGL paper sweeps $\lambda \in \{0.005, 0.05, 0.1, 0.5, 1.0\}$ and reports $0.1$ as a robust default, so start there.

CL4SRec: contrastive learning for sequential recommenders

For sequence-based recommenders (SASRec, BERT4Rec, GRU4Rec…), the analogous question is “how do I create two views of the same behaviour sequence?”. CL4SRec (Xie et al., ICDE 2022) proposed three augmentations that have become the defaults.

![Three augmentations on a behaviour sequence: crop a contiguous span, mask a random fraction with [M], reorder a contiguous chunk](https://blog-pic-ck.oss-cn-beijing.aliyuncs.com/posts/en/recommendation-systems/11-contrastive-learning/fig5_cl4srec_augmentations.png)

- Crop: keep a contiguous subsequence of length $\eta L$. Preserves local order; teaches invariance to “starting later” or “stopping earlier”.

- Mask: replace a random $\gamma$ fraction of items with a special `[M]` token. The same idea as masked language modelling: the encoder must infer hidden items from context.

- Reorder: shuffle a contiguous chunk of length $\beta L$. Teaches the model that exact positions inside a session matter less than the bag of items themselves.

CL4SRec randomly samples one of the three augmentations per view, giving nine possible (view A, view B) pairs. This stochastic mixture is itself a regulariser: the encoder cannot overfit to any single augmentation policy.
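The three operators and the random choice between them can be sketched on plain Python lists. The ratios used as defaults, the reserved id `MASK_ID = 0` for `[M]`, and the function names are assumptions for illustration; sequences are assumed to be non-empty lists of item ids.

```python
import random

MASK_ID = 0  # assumed id reserved for the [M] token

def crop(seq, eta=0.6):
    """Keep a contiguous subsequence of length eta * L."""
    n = max(1, int(eta * len(seq)))
    start = random.randint(0, len(seq) - n)
    return seq[start:start + n]

def mask(seq, gamma=0.3):
    """Replace a random gamma fraction of positions with MASK_ID."""
    k = int(gamma * len(seq))
    idx = set(random.sample(range(len(seq)), k))
    return [MASK_ID if t in idx else item for t, item in enumerate(seq)]

def reorder(seq, beta=0.6):
    """Shuffle a contiguous chunk of length beta * L, leaving the rest in place."""
    n = max(1, int(beta * len(seq)))
    start = random.randint(0, len(seq) - n)
    chunk = seq[start:start + n]          # slicing copies, so seq itself is untouched
    random.shuffle(chunk)
    return seq[:start] + chunk + seq[start + n:]

AUGMENTATIONS = (crop, mask, reorder)

def two_views(seq):
    """Sample one augmentation per view: 3 x 3 = 9 possible (view A, view B) pairs."""
    aug_a, aug_b = random.choice(AUGMENTATIONS), random.choice(AUGMENTATIONS)
    return aug_a(seq), aug_b(seq)
```

Cropped views are shorter than the original, so they need the same padding as any other variable-length batch before they reach the encoder.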


The encoder is whatever sequence model you already have (Transformer, GRU, …). Pool the final hidden state, project, normalise, drop into the same `info_nce` we wrote earlier, and add it as an auxiliary loss to your usual next-item prediction.

XSimGCL: when the augmentations themselves don’t matter

A surprising empirical result from Yu et al. (2022/2023) deserves its own section. They asked: how much of SGL’s gain actually comes from the graph augmentations, and how much from the contrastive loss itself? Their answer: almost all of it comes from the loss. Replacing graph dropout with a tiny amount of uniform noise added to the propagated embeddings matches or beats SGL, while removing all the bookkeeping around graph perturbation.

The trick (SimGCL / XSimGCL, the latter being the streamlined variant) is roughly:

- Run LightGCN propagation as usual.

- At each layer, add a small noise $\Delta$ to the embeddings, where $\Delta$ is a random direction rescaled to norm $\epsilon$ (e.g. 0.1) and sign-matched to the embedding in every dimension, so it stays in the same orthant (it perturbs but does not flip).

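- Run the propagation a second time with a different noise sample to get the second view. No graph dropout. This two-pass form is the SimGCL recipe; XSimGCL streamlines it to a single noisy pass and contrasts the final embedding against an intermediate layer's embedding instead.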
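The steps above can be sketched as follows. This is a minimal two-pass illustration under assumptions: a dense normalised adjacency `adj`, hypothetical helper names `perturb` and `noisy_propagate`, and a final embedding that averages the propagated layers only.

```python
import torch
import torch.nn.functional as F

def perturb(emb: torch.Tensor, eps: float = 0.1) -> torch.Tensor:
    """Add sign-matched random noise of L2 norm eps to every row of emb."""
    noise = torch.rand_like(emb)                   # uniform in [0, 1)
    noise = F.normalize(noise, dim=-1) * eps       # rescale each row to norm eps
    return emb + noise * torch.sign(emb)           # stay in the embedding's orthant

def noisy_propagate(adj, emb0, n_layers=2, eps=0.1):
    """LightGCN-style propagation with fresh noise injected after every layer."""
    x, layers = emb0, []
    for _ in range(n_layers):
        x = perturb(adj @ x, eps)
        layers.append(x)
    return torch.stack(layers).mean(dim=0)
```

Two calls to `noisy_propagate` on the same graph give the two views; the graph itself is never modified, which is where the bookkeeping savings come from.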


The deeper lesson, articulated in the SimGCL analysis, is that the contrastive loss is doing two things simultaneously:

- Alignment: pulling positive pairs together (the numerator).

- Uniformity: pushing all embeddings to spread evenly over the unit hypersphere (the denominator).

Uniformity is the regulariser that breaks popularity bias, and it is the dominant effect. Once you have uniformity, the exact mechanism that produces the second view (graph dropout, embedding noise, anything reasonable) hardly matters.

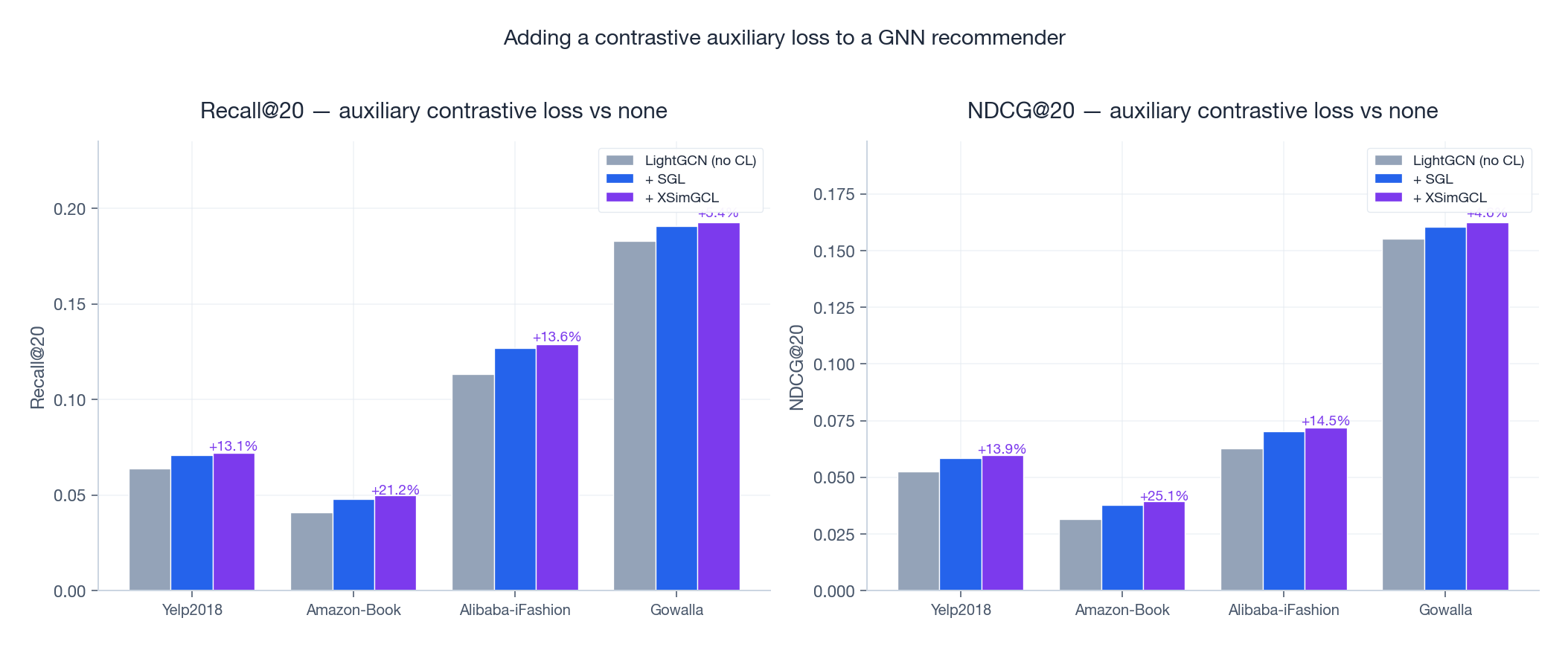

Does this actually help? Reported gains.

The numbers above are illustrative of magnitudes reported in the SGL (Wu et al., 2021) and SimGCL/XSimGCL (Yu et al., 2022/2023) papers on Yelp2018, Amazon-Book, Alibaba-iFashion, and Gowalla. The pattern is consistent: a contrastive auxiliary loss adds a relative 5–20% to Recall@20 and NDCG@20 over the same backbone with no contrastive signal, and the gains are concentrated on tail items and cold users, which is exactly where the sparsity problem bites hardest.

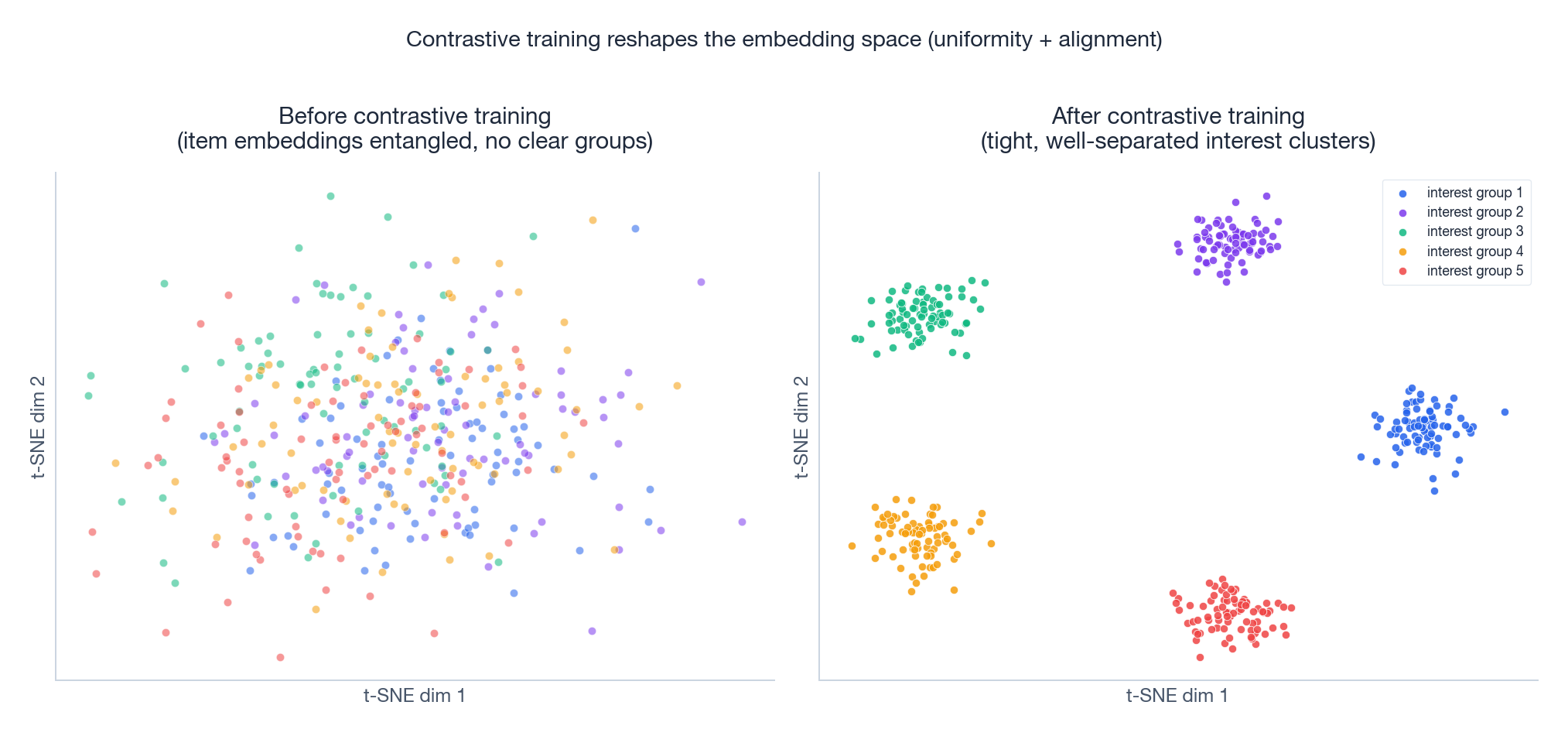

What that gain looks like in the embedding space:

Before contrastive training, the item embeddings cluster weakly — the dominant axis of variation is popularity, and most semantic structure is buried. After contrastive training, the items separate into tight, well-spaced groups corresponding to interest categories. The downstream scoring head then has a much easier job: a near-linear boundary suffices.

FAQ

Why not just use more data instead of contrastive learning?

You can’t get “more data” for cold users — they are cold by definition. You can’t get “more data” for tail items — they are tail because almost no one interacts with them. Contrastive learning manufactures a training signal that does not require new interactions, only new perturbations of the interactions you already have. It is doing something categorically different from collecting more clicks.

How do I choose between graph augmentations and embedding noise?

Default to embedding noise (XSimGCL): it is faster, simpler, and the recent literature consistently finds it competitive with or superior to graph augmentation. Reach for graph augmentations (SGL) when you want to also regularise the GNN’s structural inductive bias, or when you have a good prior on which edges/nodes are most informative.

How do I set the temperature?

Start at $\tau = 0.2$. If your hardest negatives are reliably real negatives (e.g. you have explicit dwell-time signals), drop $\tau$ to 0.05–0.1 to sharpen. If your negatives are noisy (e.g. random in-batch users, some of whom behave almost identically to the anchor), keep $\tau \geq 0.2$ to avoid overfitting to false negatives. Tune on a small grid; the loss landscape is smooth in $\tau$.

Do I need a projection head?

For SimCLR / SGL on top of a GNN, yes — discard it after pretraining. The projection head lets the encoder’s intermediate representation stay general while the head specialises for the contrastive metric. XSimGCL is the notable exception: it contrasts the propagated GNN embeddings directly without a projection head, and works fine.

How do I combine contrastive and recommendation losses?

$$\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{rec}} + \lambda \cdot \mathcal{L}_{\text{CL}}$$

Start with $\lambda = 0.1$. Sweep $\{0.01, 0.05, 0.1, 0.5, 1.0\}$ on validation if results matter. Sensitivity to $\lambda$ is much lower than sensitivity to $\tau$, so don’t burn your hyperparameter budget here.

Does this work with implicit feedback?

Yes, and arguably better than with explicit feedback. The whole framework treats every interaction as a positive label and lets the InfoNCE structure handle negatives implicitly. SGL, SimGCL, XSimGCL, and CL4SRec are all designed for implicit feedback (clicks, plays, purchases).

How small can my dataset be?

Contrastive learning helps most precisely when supervised signal is scarce. SGL’s reported gains on Yelp2018 (sparsity ~99.87%) are larger than on denser datasets. Below ~10K interactions the variance from random augmentation dominates and you may need stronger priors (cross-domain transfer, content features); above ~100K interactions you should expect clean improvements.

How do I evaluate contrastive recommenders?

Standard top-K metrics (Recall@K, NDCG@K, HR@K) for parity with baselines, then additionally:

- Cold-start slice: bucket users/items by interaction count and report metrics per bucket.

- Tail coverage: fraction of recommended items in the long tail (often defined as the bottom 80% by popularity).

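- Embedding diagnostics: visualise with t-SNE/UMAP, or compute the uniformity loss ($\log \mathbb{E}\,e^{-2\|z_i - z_j\|^2}$) and the alignment loss ($\mathbb{E}\,\|z - z^+\|^2$) per Wang & Isola (2020). Both are losses, so lower is better: a strongly negative uniformity value together with a small alignment value indicates healthy embeddings.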
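The two embedding diagnostics take only a few lines. The sketch below follows the formulas in the last bullet, with $t = 2$ for uniformity, and assumes the inputs are L2-normalised; the function names are illustrative.

```python
import torch
import torch.nn.functional as F

def alignment(z: torch.Tensor, z_pos: torch.Tensor) -> torch.Tensor:
    """Mean squared distance between positive pairs; lower means better aligned."""
    return (z - z_pos).pow(2).sum(dim=-1).mean()

def uniformity(z: torch.Tensor, t: float = 2.0) -> torch.Tensor:
    """Log of the mean Gaussian potential over all pairs; lower means more uniform."""
    sq_dist = torch.pdist(z, p=2).pow(2)     # squared distances for all i < j
    return sq_dist.mul(-t).exp().mean().log()
```

A fully collapsed embedding table scores exactly 0 on uniformity, which is the worst possible value, so tracking this number over training gives an early warning of collapse.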

Online vs offline metric divergence

This is the trap I’ve seen burn the most teams trying to ship contrastive recsys.

The offline story for SGL/CL4SRec is consistent: NDCG@20 up 5–15%, Recall@20 up 8–20% on Yelp, Amazon, MovieLens. Beautiful papers. Then you A/B test in production and CTR moves +0.3% with a 95% CI of $\pm 0.4\%$. The contrastive model that crushed offline barely budges live.

What’s going on?

Offline benchmarks evaluate against held-out clicks. Held-out clicks come from a recommender that’s already biased — usually a popularity-tilted CF baseline. When your contrastive model surfaces more long-tail items with sharper embeddings, it gets penalised offline because those items had no chance to be clicked in the historical log. Offline NDCG rewards you for predicting what the previous system already showed.

The contrastive gain concentrates in the cold tail. SGL improves uniformity in the embedding space (Wang & Isola 2020). Items with few interactions get vectors that are more separable from popular items. In offline evaluation, those tail items are mostly absent from positive labels. In live A/B, they show up as new impressions, get some clicks, but the overall CTR is dominated by head items where SGL makes no difference.

Position bias dominates short-term metrics. A new model that reorders the top 5 by 1 position can swing CTR more than a model that fixes the cold tail. Position 1 vs position 2 has a CTR ratio of ~1.6× on most surfaces.

What to do:

- Evaluate offline with a counterfactual estimator (IPS-weighted NDCG) instead of vanilla NDCG. Closes most of the gap.

- Bucket A/B results by item popularity quintile. SGL usually wins +3–8% CTR in the bottom two quintiles even when the overall metric is flat.

- Run the test for 14+ days. Cold-start improvements compound: new items that finally get exposure today generate engagement data that improves the next training cycle.
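As one way to picture the first recommendation, here is a small sketch of a self-normalised IPS-weighted NDCG for a single user. It is an assumption-laden illustration: `propensity` is a hypothetical mapping from item id to the estimated probability that the logging policy exposed that item, the clipping threshold is arbitrary, and real estimators differ in how they normalise.

```python
import math

def ips_dcg(ranked_items, clicked, propensity, k=20, clip=0.01):
    """DCG@k where each held-out click is up-weighted by 1 / propensity."""
    score = 0.0
    for rank, item in enumerate(ranked_items[:k]):
        if item in clicked:
            weight = 1.0 / max(propensity[item], clip)   # clip to bound the variance
            score += weight / math.log2(rank + 2)
    return score

def ips_ndcg(ranked_items, clicked, propensity, k=20, clip=0.01):
    """Normalise by the best achievable IPS-weighted DCG for this user."""
    ideal_weights = sorted((1.0 / max(propensity[i], clip) for i in clicked), reverse=True)[:k]
    ideal = sum(w / math.log2(r + 2) for r, w in enumerate(ideal_weights))
    return ips_dcg(ranked_items, clicked, propensity, k, clip) / ideal if ideal > 0 else 0.0

# Toy example: one head item that was almost always shown, one rarely shown tail item.
propensity = {"head": 0.5, "tail": 0.05}
clicked = {"head", "tail"}
tail_first = ips_ndcg(["tail", "head"], clicked, propensity)
head_first = ips_ndcg(["head", "tail"], clicked, propensity)
```

Vanilla NDCG scores the two rankings identically because both clicked items are in the top two; the weighted version rewards the ranking that puts the under-exposed item first.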

If you only have one number to report, report lift on first-week-active items (items launched in the last 7 days). That’s where contrastive learning earns its keep.

Inference cost: where contrastive doesn’t bite

The training cost story for CL is well-known — InfoNCE with large negative batches is heavy. The inference story is the surprise: contrastive learning costs you almost nothing extra at serving time.

The augmentation, projection head, and contrastive loss are all training-only. At inference, SGL is structurally identical to LightGCN — same number of GNN layers, same embedding dim, same dot-product scoring. Same QPS, same latency, same memory.

The gotcha is training cost, which determines refresh cadence. Contrastive training adds:

- One extra forward pass per augmentation (typically 2 augmentations → 2× forward)

- A negatives matrix of size (batch, batch) for the InfoNCE denominator → quadratic in batch size

On a 100M-interaction dataset, vanilla LightGCN trains in ~3 hours on a single A100. SGL with 2 augmentations and batch 4096 takes ~9 hours. That’s a real cost when you’re retraining every 4 hours in production. Mitigations: smaller batch with momentum queue (MoCo-style), or skip augmentations entirely (XSimGCL — random noise on embeddings).

XSimGCL is what most teams should ship. Same uniformity gains as SGL, ~30% slower than vanilla LightGCN instead of 3×.

Summary

Contrastive learning solves a problem that more data cannot: it gives a model a way to learn geometry from sparse interactions, by demanding consistency under perturbation. The recipe in 2024 is well-understood:

- Pick an augmentation, or none at all (XSimGCL noise).

- Apply InfoNCE with $\tau \approx 0.2$.

- Add it as an auxiliary loss with weight $\lambda \approx 0.1$ on top of your existing supervised objective.

For graph-based recommenders, start with XSimGCL — it is the simplest thing that works. If you want a more interpretable baseline, SGL with edge dropout is still a strong choice. For sequence recommenders, CL4SRec’s three-way augmentation menu is the standard. In every case, expect the largest wins on the cold and the tail.
