Recommendation Systems (13): Fairness, Debiasing, and Explainability

A practical deep dive into trustworthy recommendation: the seven biases (popularity, position, selection, exposure, conformity, demographic, confirmation), causal inference (RCTs, IPS, doubly robust estimators), debiasing in production (MACR, DICE, FairCo), and explainability (LIME, SHAP, counterfactuals).

A user opens Spotify and the same fifty songs keep appearing. They open Amazon and the top results are always the items they have already considered. They open YouTube and every recommendation is one click away from a rabbit hole they cannot remember asking for. Each of these symptoms has a name, a cause, and a fix. This article is about all three.

What You Will Learn#

- The seven biases that systematically distort what users see, where each one comes from, and how to measure it

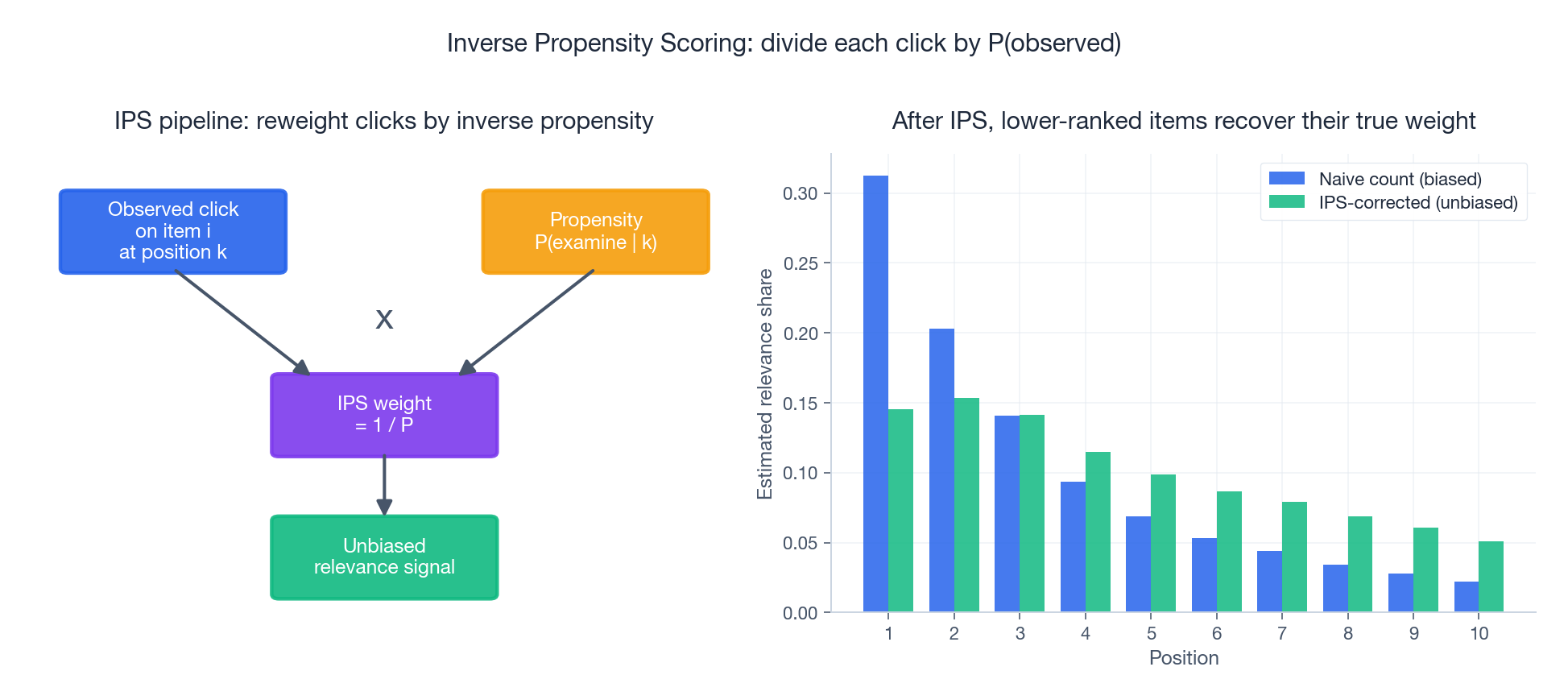

- Causal inference for recommenders — why correlations from logged data lie, and how IPS, doubly robust estimators, and propensity scoring give you unbiased signal

- Production-grade debiasing: MACR for popularity bias, DICE for conformity bias, FairCo for amortized exposure fairness

- Counterfactual fairness and adversarial training to keep protected attributes out of embeddings

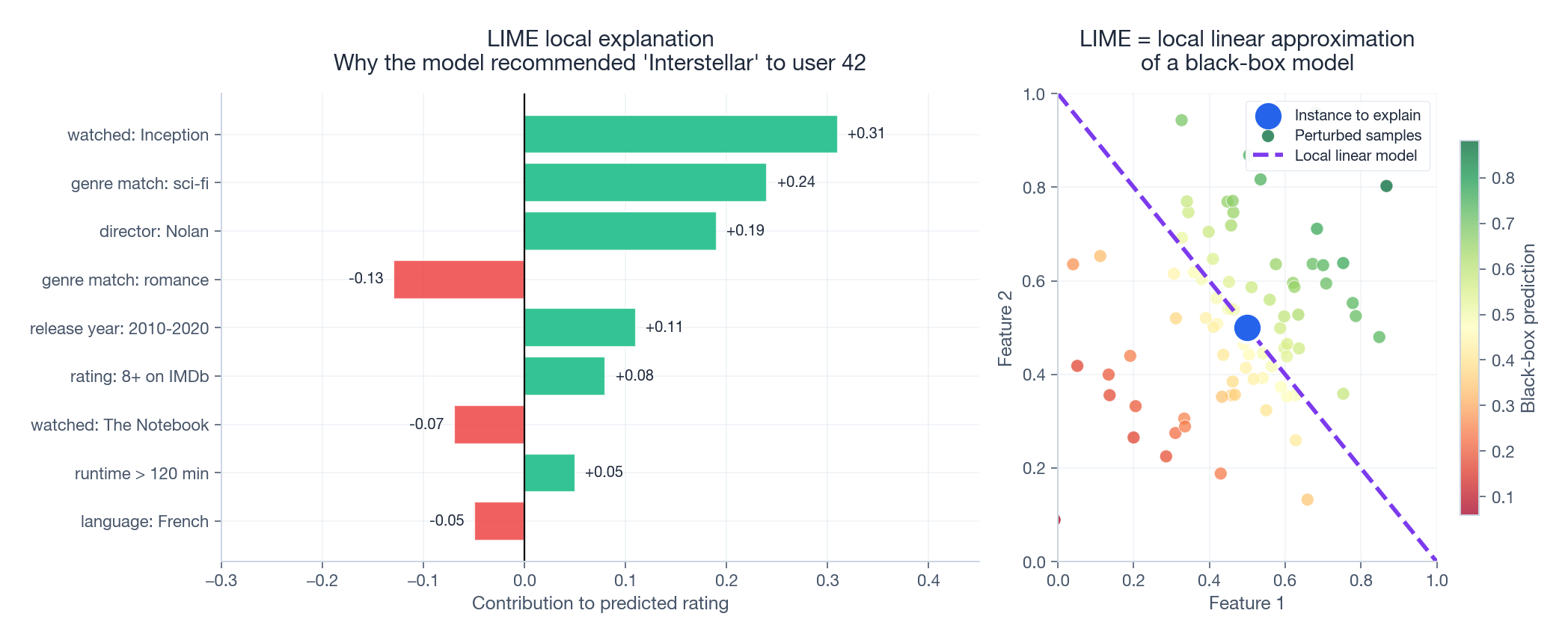

- Explainability that holds up under audit: LIME, SHAP, and counterfactual explanations

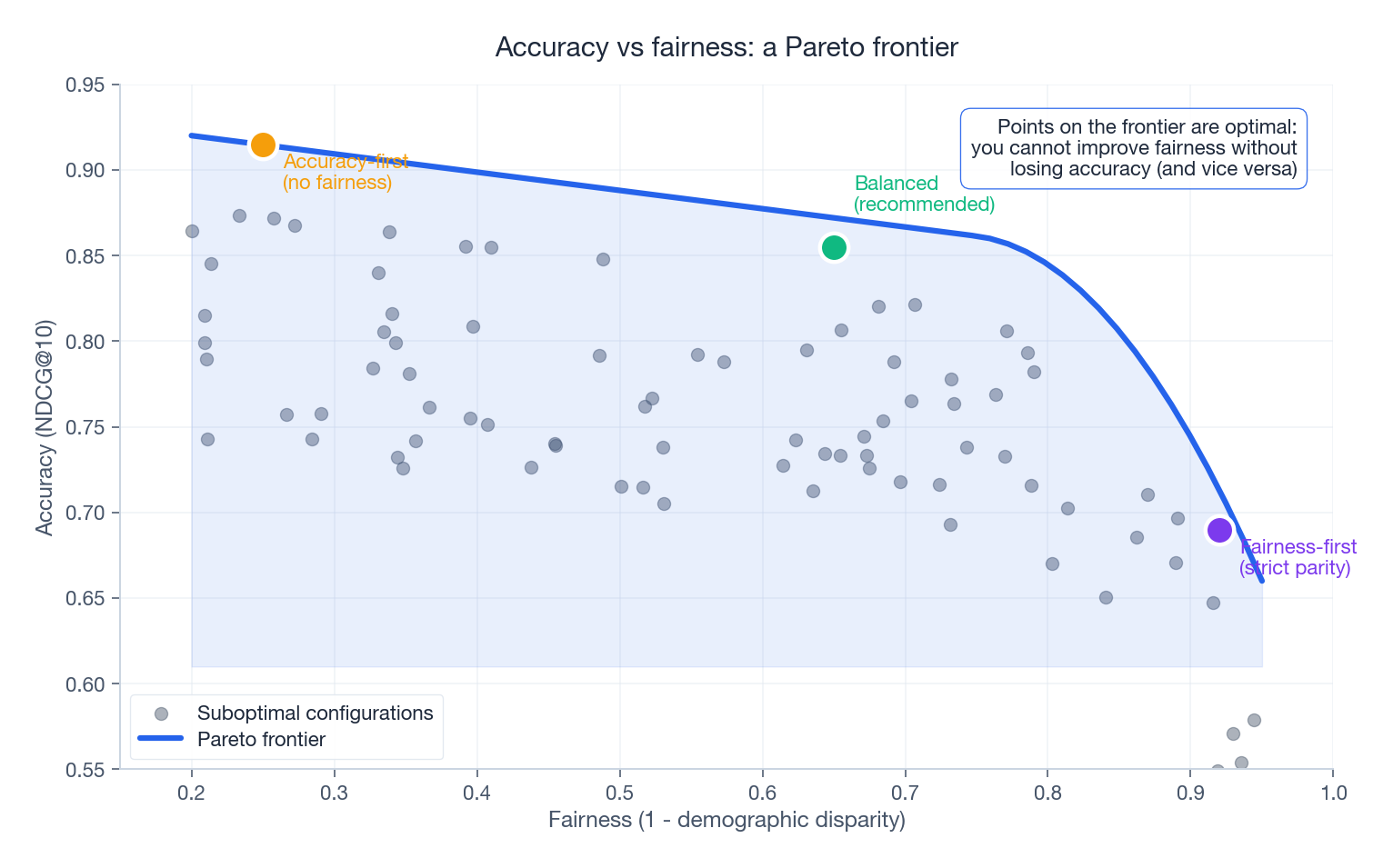

- A working trade-off framework so you can pick where to operate on the accuracy–fairness Pareto frontier

Prerequisites#

- Embedding-based recommenders (Part 4 and Part 5)

- Basic causal inference vocabulary helps but is not required — we build it from scratch

- Comfortable reading PyTorch-style pseudocode

Part 1 — The Seven Biases#

Bias in a recommender is not one problem. It is at least seven, and they compound. Below is the working taxonomy used in the survey of Chen et al. (2023, Bias and Debias in Recommender System) — the cleanest reference if you want the full literature map.

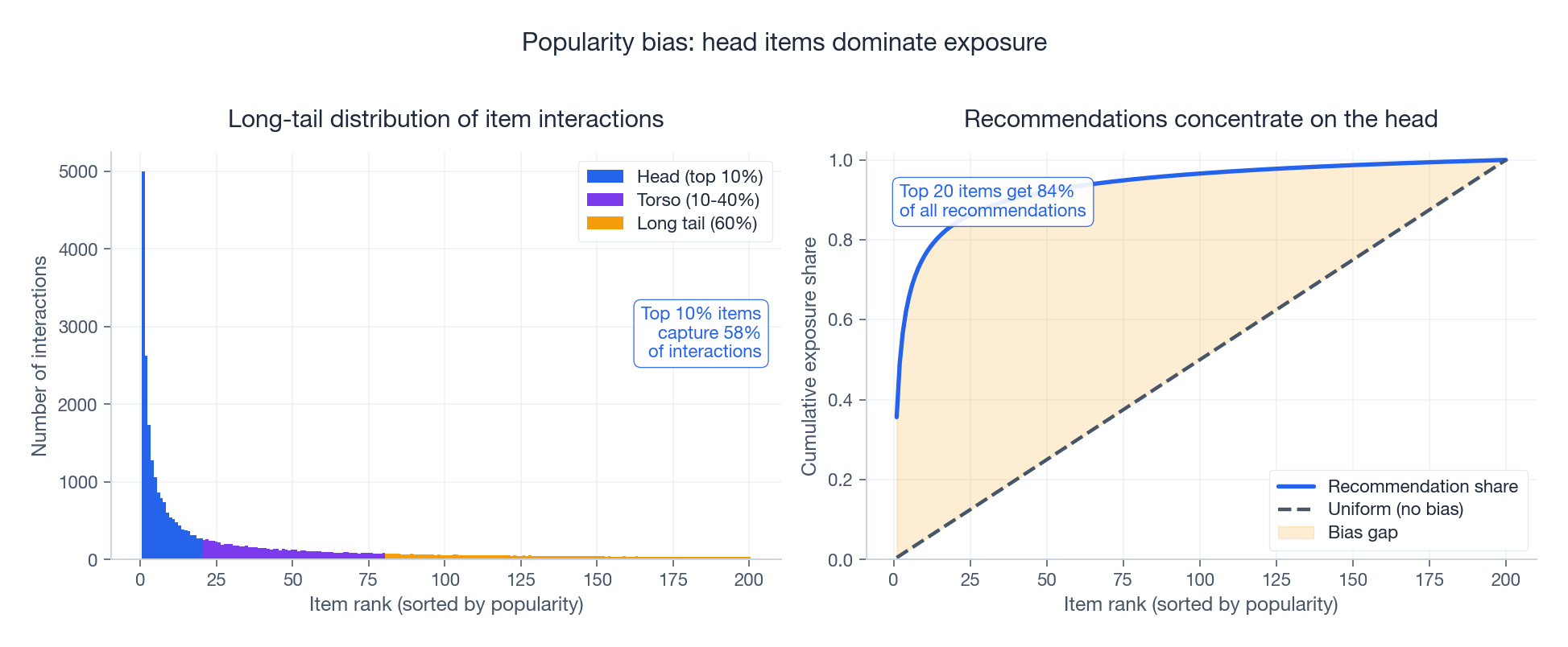

Popularity bias — the rich get richer#

A small fraction of items captures the bulk of interactions, and the recommender amplifies that concentration further still. The right panel above is the giveaway: even when the catalog is uniform, the top 20 items collect over 60% of all recommendation slots.

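One way to quantify the amplification is to compare the mean log-popularity of each user's top-$K$ slate with the catalog average:

$$\text{PopBias@K} = \frac{1}{|U|}\sum_{u \in U} \frac{\sum_{i \in R_u^K} \log(1 + p_i)}{K} - \frac{1}{|I|}\sum_{i \in I} \log(1 + p_i)$$

Here $R_u^K$ is the top-$K$ slate for user $u$ and $p_i$ is the popularity (for example, the interaction count) of item $i$. The log dampens the head’s influence so the metric is not dominated by a handful of mega-popular items. Track this per slate, not just globally: global averages hide per-user concentration.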

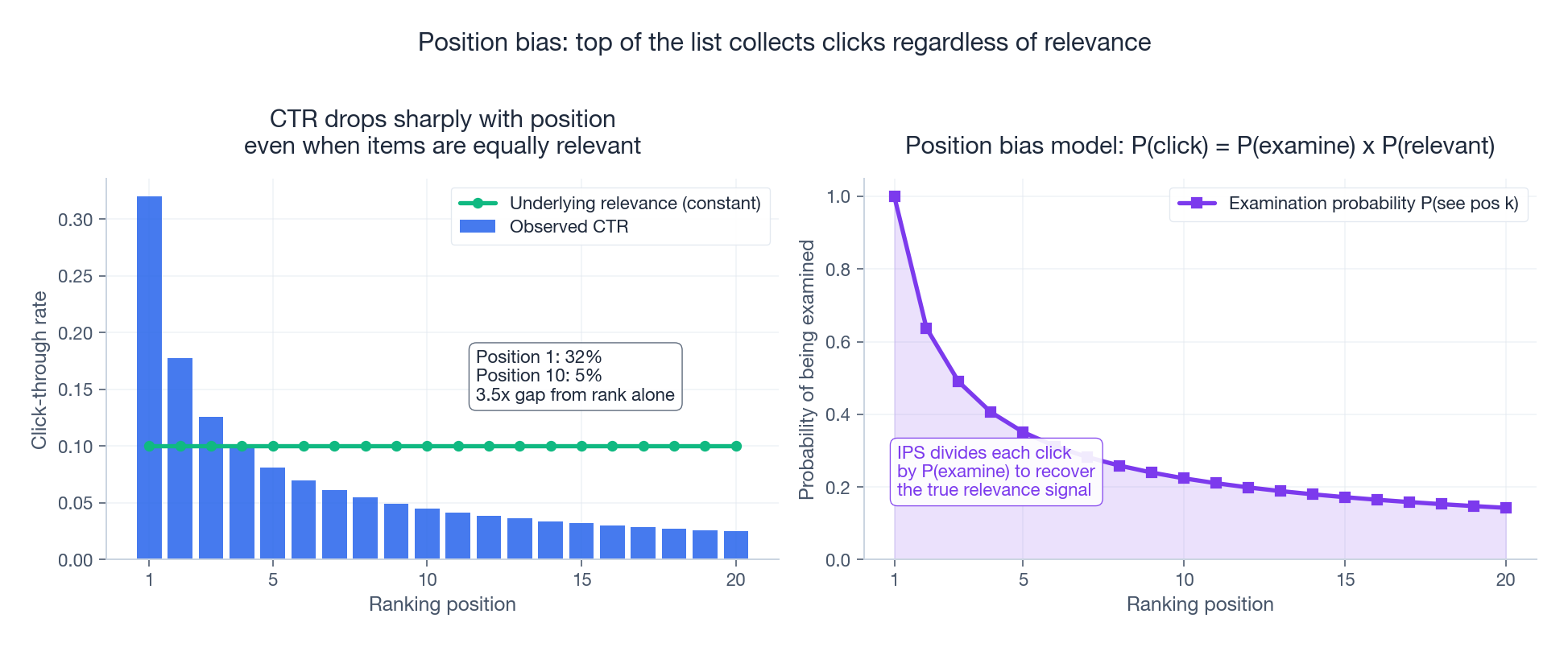

Position bias — clicks follow the cursor, not the intent#

If you train naively on logged clicks you will conflate “shown at position 1” with “actually relevant.” Position bias is the single most-studied bias in industrial learning-to-rank, and the fix — Inverse Propensity Scoring — is the one debiasing technique you can deploy this quarter.

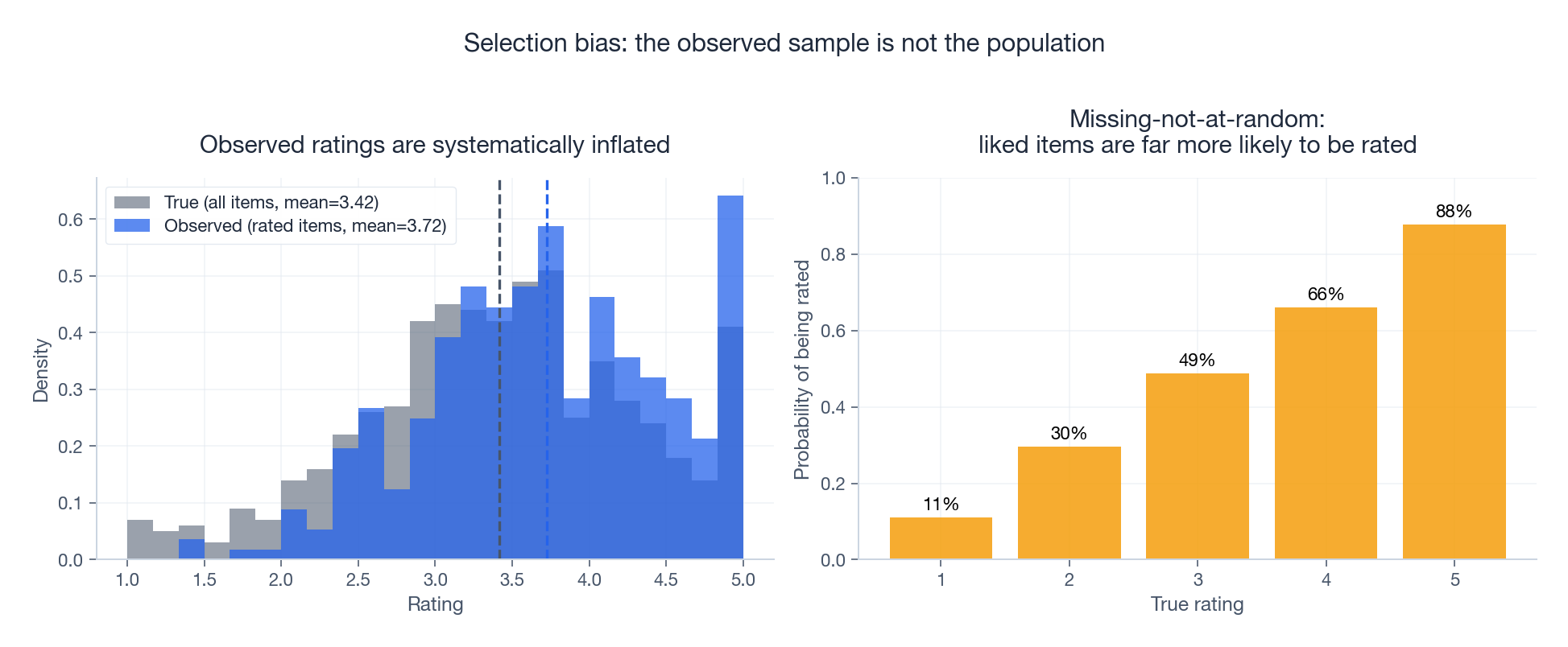

Selection bias — the data you have is not the data you want#

Users do not rate items at random. They rate things they loved or things that disappointed them; the lukewarm middle never gets logged. This is a textbook missing-not-at-random (MNAR) pattern. Marlin and Zemel (2009) showed that ignoring it inflates RMSE by 10–30% on MovieLens-style datasets. The cure is the same family of tools as for position bias: model the missingness mechanism explicitly, then reweight or impute.

Exposure bias — you cannot click what you cannot see#

The system can only learn about items it has shown. New items, niche items, and items from underrepresented creators get fewer impressions, which means fewer interactions, which means lower predicted relevance, which means even fewer impressions. This is the closed feedback loop that makes recommenders age badly.

Conformity bias — users mimic the crowd#

A user’s expressed preference is partly genuine taste and partly social proof. If a model treats both as the same signal, it learns a “popularity proxy” instead of true preference. This is the bias DICE (Zheng et al., WWW 2021) explicitly disentangles into separate “interest” and “conformity” embeddings.

Demographic bias — uneven quality across groups#

The same model can hit NDCG@10 of 0.42 for one group and 0.31 for another. Often the cause is data imbalance: the underrepresented group simply has fewer training examples, so the model learns weaker representations. Sometimes the cause is causal: a feature acts as a proxy for the protected attribute (zip code for race, browser language for nationality).

Confirmation bias / filter bubble — narrowing the world#

Once the model thinks it knows you, every recommendation reinforces that belief. Diversity collapses, serendipity dies, and the user’s exposure to ideas shrinks over time. This is the bias regulators worry about most because it operates at the population level, not the individual.

Where biases come from#

Four origins, in roughly increasing difficulty to fix:

| Origin | Example | Fix difficulty |
|---|---|---|
| Data collection | Only logged-in users tracked | Easy: collect better |
| Algorithm | Loss optimises clicks, not satisfaction | Medium: change objective |
| Evaluation | Offline NDCG ignores fairness | Medium: add the right metric |
| Feedback loop | Recs become training data | Hard: break the loop |

A bias measurement toolkit#

Before you fix anything, instrument everything. The following class is the minimum I run on every new recommender before the first launch review.

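A minimal sketch of such a toolkit, assuming slates arrive as a dict of user to ranked item list and popularity as a dict of item to interaction count. The class name `BiasAudit` and its method names are illustrative, not a library API. It covers only two metrics: PopBias@K as defined earlier and the Gini coefficient of item exposure.

```python
import math
from collections import Counter


class BiasAudit:
    """Illustrative bias metrics over logged slates (all names are hypothetical)."""

    def __init__(self, slates, popularity, catalog):
        self.slates = slates          # {user: [item, ...]} ranked recommendations
        self.popularity = popularity  # {item: interaction count p_i}
        self.catalog = list(catalog)  # every item in I, shown or not

    def _log_pop(self, item):
        return math.log1p(self.popularity.get(item, 0))

    def pop_bias_at_k(self, k):
        """Mean log-popularity of each top-k slate minus the catalog mean."""
        catalog_mean = sum(self._log_pop(i) for i in self.catalog) / len(self.catalog)
        per_user = [
            sum(self._log_pop(i) for i in items[:k]) / k
            for items in self.slates.values()
        ]
        return sum(per_user) / len(per_user) - catalog_mean

    def exposure_gini(self, k):
        """Gini coefficient of top-k exposure counts across the whole catalog."""
        shown = Counter(i for items in self.slates.values() for i in items[:k])
        counts = sorted(shown.get(i, 0) for i in self.catalog)
        n, total = len(counts), sum(counts)
        if total == 0:
            return 0.0
        # On ascending-sorted values x_1..x_n:
        # G = sum_j (2j - n - 1) * x_j / (n * sum_j x_j)
        weighted = sum((2 * j - n - 1) * x for j, x in enumerate(counts, start=1))
        return weighted / (n * total)


audit = BiasAudit(
    slates={"u1": ["a", "b"], "u2": ["a", "b"]},
    popularity={"a": 100, "b": 50, "c": 1, "d": 0},
    catalog=["a", "b", "c", "d"],
)
print(audit.exposure_gini(2))   # 0.5: half the catalog gets all the exposure
print(audit.pop_bias_at_k(2))   # positive: slates are more popular than the catalog
```

Passing the full catalog, not just the items that were shown, matters: items with zero exposure are exactly what the Gini is meant to surface.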

The Gini coefficient is borrowed from economics, where it measures income inequality. Here it measures exposure inequality. A Gini above 0.8 means a handful of items hog the slates while the rest of the catalog sits in the dark.

Part 2 — Causal Inference for Recommenders#

Why correlation is not enough#

Logged data tells you that users who saw item A also clicked item B. It does not tell you whether they clicked B because the system showed it, or whether they would have found B anyway. This matters because every “uplift” you report is implicitly a causal claim.

The potential outcomes framework makes the claim explicit. For user $u$ and item $i$, imagine two parallel worlds:

- $Y_{ui}(1)$: outcome if we recommend $i$

- $Y_{ui}(0)$: outcome if we do not

The causal effect of the recommendation is $Y_{ui}(1) - Y_{ui}(0)$, but for any given pair we only ever observe one of the two outcomes. A confounder is a variable that drives both treatment (what the system shows) and outcome (what the user does). User taste is the obvious confounder: users with a strong affinity for a category are both more likely to be shown its items and more likely to click them, whatever the recommender contributes.
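To see how large the gap can get, here is a small worked example with made-up numbers. Two user segments have known potential outcomes, and the high-affinity segment is shown the item far more often. The segment shares, exposure probabilities, and click rates are all illustrative assumptions.

```python
# Two user segments with known potential outcomes (illustrative numbers).
# p_shown is the probability that the system recommends the item to the segment.
segments = [
    {"share": 0.5, "p_shown": 0.8, "y1": 0.6, "y0": 0.5},  # high-affinity users
    {"share": 0.5, "p_shown": 0.2, "y1": 0.2, "y0": 0.1},  # low-affinity users
]


def true_ate(segs):
    """Average of Y(1) - Y(0) over the whole population."""
    return sum(s["share"] * (s["y1"] - s["y0"]) for s in segs)


def naive_difference(segs):
    """E[Y | shown] - E[Y | not shown], which is what raw logs give you."""
    shown = sum(s["share"] * s["p_shown"] for s in segs)
    not_shown = sum(s["share"] * (1 - s["p_shown"]) for s in segs)
    y_shown = sum(s["share"] * s["p_shown"] * s["y1"] for s in segs) / shown
    y_not = sum(s["share"] * (1 - s["p_shown"]) * s["y0"] for s in segs) / not_shown
    return y_shown - y_not


print(round(true_ate(segments), 2))          # 0.1
print(round(naive_difference(segments), 2))  # 0.34
```

The recommendation adds 10 points of click probability for everyone, yet the logged comparison reports 34, because the "shown" group is dominated by users who would have clicked more anyway.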

Inverse Propensity Scoring (IPS)#

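IPS is the workhorse of debiasing. The idea: if a click was produced under conditions where examination probability was $\pi$, then weighting it by $1/\pi$ recovers what the click count would have been under uniform examination. One standard way to write the resulting training loss is

$$\hat{\mathcal{L}}_{\text{IPS}} = \frac{1}{|\mathcal{D}|} \sum_{(u, i, k) \in \mathcal{D}} \frac{c_{ui}}{\pi_k} \, \ell(\hat{y}_{ui})$$

where $\mathcal{D}$ is the set of logged impressions, $\ell$ is the per-impression loss on the model score $\hat{y}_{ui}$, $c_{ui} \in \{0,1\}$ is the click and $\pi_k$ is the position-bias propensity at rank $k$. Joachims, Swaminathan, and Schnabel showed in their WSDM 2017 paper that this is unbiased if $\pi_k > 0$ everywhere and the propensities are correctly specified. Because tiny propensities produce enormous weights, production teams add $\epsilon$ to small propensities and clip extreme weights, accepting a little bias in exchange. Saito et al. (2020) proposed self-normalised IPS and doubly robust variants that trade a touch of bias for far lower variance.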

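A minimal sketch of the IPS weighting described above, written in plain Python so the arithmetic is visible. The function names, the propensity floor, and the clip value are assumptions for illustration. The loss here is a log loss on clicked impressions only, which is one of several reasonable choices.

```python
import math


def ips_weights(positions, propensity, floor=1e-3, clip=10.0):
    """Return one weight 1/pi_k per impression, floored and capped."""
    return [min(1.0 / max(propensity[k], floor), clip) for k in positions]


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def ips_click_loss(clicks, scores, positions, propensity, floor=1e-3, clip=10.0):
    """IPS-weighted log loss, averaged over all logged impressions.

    Only clicked impressions contribute: each adds w * -log(sigmoid(score)).
    """
    weights = ips_weights(positions, propensity, floor, clip)
    total = sum(w * softplus(-s) for c, s, w in zip(clicks, scores, weights) if c)
    return total / len(clicks)


# Hypothetical propensities: rank 3 is examined a quarter as often as rank 1.
propensity = {1: 1.0, 2: 0.5, 3: 0.25}
print(ips_weights([1, 2, 3], propensity))  # [1.0, 2.0, 4.0]
```

A click at rank 3 counts four times as much as a click at rank 1, because it happened despite the position disadvantage.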

The two failure modes you will hit:

- Variance explosion: when $\pi_k$ is tiny, the weight $1/\pi_k$ blows up. Cap the weights aggressively (a maximum of 5–20 is typical) and monitor weight distributions.

- Wrong propensity: you need a credible model of why the user saw what they saw. A common trick is to run a small randomised slot (1–5% of traffic) where positions are shuffled, then estimate $\pi_k$ from that.
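The randomised-slot trick can be sketched as follows. When positions are shuffled uniformly, relevance is independent of rank, so the click-through rate at each rank is proportional to its examination propensity. The function name and the log format are assumptions.

```python
from collections import defaultdict


def estimate_position_propensity(shuffled_log):
    """Estimate pi_k from randomised traffic only.

    shuffled_log: iterable of (rank, clicked) pairs from the shuffled slot.
    Returns {rank: pi_k}, normalised so the top rank has propensity 1.
    """
    shows, clicks = defaultdict(int), defaultdict(int)
    for rank, clicked in shuffled_log:
        shows[rank] += 1
        clicks[rank] += int(clicked)
    ctr = {k: clicks[k] / shows[k] for k in shows}
    top_ctr = ctr[min(ctr)]
    if top_ctr == 0:
        raise ValueError("no clicks at the top rank; collect more randomised traffic")
    return {k: ctr[k] / top_ctr for k in sorted(ctr)}


# Toy log: 10 impressions per rank with 4, 2 and 1 clicks respectively.
log = (
    [(1, True)] * 4 + [(1, False)] * 6
    + [(2, True)] * 2 + [(2, False)] * 8
    + [(3, True)] * 1 + [(3, False)] * 9
)
print(estimate_position_propensity(log))
```

Only impressions from the shuffled slot belong in this log. Mixing in regular traffic reintroduces the correlation between rank and relevance that the shuffle was meant to break.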

Doubly robust estimators#

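A doubly robust (DR) estimator combines an imputation model with an IPS correction applied to that model's residual:

$$\hat{Y}_{\text{DR}} = \hat{Y}(X) + \frac{T}{\pi(X)} \big( Y - \hat{Y}(X) \big)$$

where $\hat{Y}(X)$ is your imputation model, $T$ is the treatment indicator and $\pi(X)$ is the propensity. The estimate stays unbiased if either the propensities or the imputation model is correct, which is where the name comes from. In practice DR has lower variance than pure IPS and lower bias than pure imputation. Wang et al. (2019, Doubly Robust Joint Learning) is the canonical recommender adaptation.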
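The DR formula translates almost line for line into code. This sketch averages the per-sample estimate over a small log. The function name and the toy data are assumptions, and unobserved outcomes are represented as `None`.

```python
def doubly_robust(y_obs, treated, propensity, y_hat):
    """Mean of y_hat + T / pi * (Y - y_hat) over all samples.

    y_obs may hold None where the outcome was not observed (T = 0).
    """
    total = 0.0
    for y, t, pi, yh in zip(y_obs, treated, propensity, y_hat):
        correction = (y - yh) / pi if t else 0.0
        total += yh + correction
    return total / len(y_hat)


y_obs = [1.0, None, 0.0, None]   # outcomes seen only where treated
treated = [1, 0, 1, 0]
propensity = [0.5, 0.5, 0.5, 0.5]

# Imputation model that knows nothing: DR falls back to plain IPS.
print(doubly_robust(y_obs, treated, propensity, [0.0, 0.0, 0.0, 0.0]))  # 0.5
# Imputation model that is exactly right: the correction term vanishes.
print(doubly_robust(y_obs, treated, propensity, [1.0, 1.0, 0.0, 0.0]))  # 0.5
```

Both runs land on the same value here because the propensities are correct. That is the "either model can be wrong" property in miniature.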

Randomised data: the gold standard#

When you can afford it, an A/B test with randomised recommendations gives you the ground-truth average treatment effect (ATE). Most teams cannot run pure-random recommendations on production traffic, so they use stratified randomisation: randomise within a small candidate set per user, log the propensities, and use them downstream.

Part 3 — Debiasing in Production#

The defining question is not “how do we eliminate bias” but “where on the Pareto frontier do we operate.” The frontier above is real: in nearly every published debiasing paper, pushing fairness to its limit costs some accuracy. Your job is to pick a point that is acceptable and measurable.

MACR — Model-Agnostic Counterfactual Reasoning for popularity bias#

MACR (Wei et al., KDD 2021) is the cleanest production fix for popularity bias I know of. It models the predicted score as the combination of three causal effects: a user-only effect, an item-only effect (this is the popularity shortcut), and a user–item interaction effect. At inference, you subtract the item-only effect to remove the popularity shortcut.

Architecturally MACR adds two side towers:

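A minimal sketch of the scoring logic, assuming the multiplicative fusion used in the MACR paper: the matching score is gated by sigmoids of the item and user tower outputs, and inference subtracts what the gates alone would produce at a reference matching level `c`. The function names and the numbers are illustrative, and `c` is a hyperparameter you would tune on validation data.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def macr_train_score(y_match, y_item, y_user):
    """Training-time score: matching score gated by the item and user towers."""
    return y_match * sigmoid(y_item) * sigmoid(y_user)


def macr_inference_score(y_match, y_item, y_user, c):
    """Inference-time score with the popularity shortcut subtracted.

    Equals y_match * gate - c * gate, where gate = sigmoid(y_item) * sigmoid(y_user).
    """
    gate = sigmoid(y_item) * sigmoid(y_user)
    return (y_match - c) * gate


# A popular item with a weaker match vs a niche item with a stronger match.
popular = {"y_match": 0.6, "y_item": 2.0, "y_user": 0.0}
niche = {"y_match": 0.8, "y_item": -1.0, "y_user": 0.0}

print(macr_train_score(**popular) > macr_train_score(**niche))                        # True
print(macr_inference_score(**niche, c=0.55) > macr_inference_score(**popular, c=0.55))  # True
```

During training the popular item wins on the strength of its item tower alone. After the subtraction the better-matched niche item ranks first.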

The item tower is trained to predict clicks from the item alone — i.e. it learns the popularity shortcut. Subtracting it at inference time forces the main tower to rely on actual user–item compatibility. On Yelp and Amazon-Book, MACR improves recall on tail items by 20–40% with single-digit losses on overall NDCG.

DICE — Disentangling interest from conformity#

DICE (Zheng et al., WWW 2021) splits each user and item embedding into two parts: an interest part and a conformity part. The training objective uses different negative-sampling strategies to push each part to specialise:

- Interest embedding: trained against negatives the user is unlikely to have seen → captures genuine taste

- Conformity embedding: trained against negatives matched on popularity → captures herd behaviour

At inference, the score uses only the interest embedding, stripping out the conformity signal.
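The split can be sketched with plain lists standing in for learned embeddings. This assumes, for illustration, that the training-time click score is the sum of an interest match and a conformity match. The dictionary layout and function names are hypothetical, and the negative-sampling losses that make each part specialise are not shown.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def click_score(user, item):
    """Training-time score: interest match plus conformity match."""
    return dot(user["interest"], item["interest"]) + dot(
        user["conformity"], item["conformity"]
    )


def interest_score(user, item):
    """Inference-time score: the conformity part is dropped."""
    return dot(user["interest"], item["interest"])


user = {"interest": [1.0, 0.0], "conformity": [1.0]}
hit = {"interest": [0.2, 0.9], "conformity": [0.9]}    # everyone clicks it
niche = {"interest": [0.7, 0.1], "conformity": [0.1]}  # matches this user's taste

print(click_score(user, hit) > click_score(user, niche))        # True
print(interest_score(user, niche) > interest_score(user, hit))  # True
```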

FairCo — Amortising fairness across many slates#

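FairCo (Morik et al., SIGIR 2020) is a proportional controller for exposure: at each step it adds to every ranking score an error term that measures how far the item's group is from the exposure it deserves. In simplified form:

$$s'(u, i) = s(u, i) + \lambda \cdot \big( \text{deserved}_g(t) - \text{received}_g(t) \big)$$

where $g$ is the group of item $i$, $\text{deserved}_g(t)$ is the exposure the group has earned by merit up to time $t$, and $\text{received}_g(t)$ is the exposure it has actually been given. Groups that are behind get a boost; groups that are ahead get penalised. The beautiful property: amortised over time, the system converges to merit-proportional exposure even though every individual slate may look biased. This matters for two-sided marketplaces (Airbnb, Uber, Etsy) where producer fairness is a business requirement.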
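A minimal sketch of the controller as a post-processing re-ranker, following the simplified score adjustment above. The function name, the group labels, and the exposure shares are illustrative assumptions. A real deployment would update the received exposure after every slate.

```python
def fairco_rerank(scores, item_group, deserved, received, lam):
    """Re-rank items by s(u, i) + lam * (deserved_g - received_g).

    scores: {item: relevance score}
    item_group: {item: group id}
    deserved / received: {group id: cumulative exposure share so far}
    """
    adjusted = {
        item: s + lam * (deserved[item_group[item]] - received[item_group[item]])
        for item, s in scores.items()
    }
    return sorted(adjusted, key=adjusted.get, reverse=True)


scores = {"a": 0.9, "b": 0.8, "c": 0.7}
item_group = {"a": "major", "b": "major", "c": "indie"}
deserved = {"major": 0.6, "indie": 0.4}
received = {"major": 0.9, "indie": 0.1}  # indie items are far behind

print(fairco_rerank(scores, item_group, deserved, received, lam=0.0))  # ['a', 'b', 'c']
print(fairco_rerank(scores, item_group, deserved, received, lam=1.0))  # ['c', 'a', 'b']
```

With `lam=0` the ranking is purely by relevance. As `lam` grows, the under-exposed group is pulled up until its received share catches up, at which point the error term shrinks back toward zero on its own.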

Three families, one combined recipe#

| Family | When to use | Tools |
|---|---|---|
| Pre-processing | You control data collection | Rebalance, reweight, impute MNAR |
| In-processing | You can change the loss | IPS, MACR, DICE, fairness regularisers |
| Post-processing | Models are frozen | FairCo, MMR, fair re-ranking |

A reasonable combined recipe for a new launch:

- Audit with the bias toolkit above; pick the two metrics you will move

- Add an in-processing fix (start with IPS for position bias)

- Add a post-processing safety net (FairCo controller) for producer-side fairness

- Lock the metrics to your launch criteria — debiasing only sticks if it is in the rubric

Part 4 — Counterfactual Fairness and Adversarial Training#

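A predictor is counterfactually fair (Kusner et al., 2017) if, for any individual, its output distribution would be unchanged had the protected attribute $A$ taken a different value:

$$P\big(f_{A \leftarrow a}(U, X) = y \mid X = x, A = a\big) = P\big(f_{A \leftarrow a'}(U, X) = y \mid X = x, A = a\big)$$

The subscript in $f_{A \leftarrow a}$ denotes an intervention in the sense of Pearl’s do-operator: we set $A$ to $a$ while holding the background variables $U$ fixed. This is an individual-level requirement, unlike statistical parity, which only requires equal marginal distributions across groups.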

Adversarial debiasing — the CFairER pattern#

A discriminator tries to predict the protected attribute from the user embedding. The encoder tries to fool it. At equilibrium the embedding contains no protected information.

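One common way to implement this encoder-versus-discriminator game in PyTorch is a gradient reversal layer, which lets a single backward pass train the discriminator normally while pushing the encoder in the opposite direction. The sketch below uses that variant rather than alternating updates. The class names, layer sizes, and the single-layer discriminator are assumptions for illustration.

```python
import torch
from torch import nn


class GradReverse(torch.autograd.Function):
    """Identity on the forward pass; multiplies gradients by -lam on the way back."""

    @staticmethod
    def forward(ctx, x, lam):
        ctx.lam = lam
        return x.view_as(x)

    @staticmethod
    def backward(ctx, grad_output):
        return -ctx.lam * grad_output, None


class FairEncoder(nn.Module):
    def __init__(self, in_dim, emb_dim, n_groups, lam=1.0):
        super().__init__()
        self.encoder = nn.Sequential(nn.Linear(in_dim, emb_dim), nn.ReLU())
        self.task_head = nn.Linear(emb_dim, 1)             # predicts the click
        self.discriminator = nn.Linear(emb_dim, n_groups)  # predicts the protected attribute
        self.lam = lam

    def forward(self, x):
        z = self.encoder(x)
        click_logit = self.task_head(z).squeeze(-1)
        # The discriminator sees z, but its gradient reaches the encoder reversed.
        group_logits = self.discriminator(GradReverse.apply(z, self.lam))
        return click_logit, group_logits


def adversarial_loss(model, x, clicks, groups):
    """Task loss plus discriminator loss; the reversal layer handles the min-max."""
    click_logit, group_logits = model(x)
    task = nn.functional.binary_cross_entropy_with_logits(click_logit, clicks)
    adv = nn.functional.cross_entropy(group_logits, groups)
    return task + adv
```

Minimising this single loss trains the discriminator to predict the protected attribute and, through the reversed gradient, trains the encoder to make that prediction harder. The `lam` knob controls how hard the encoder pushes back.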

Two things to watch in practice:

- Discriminator dominance: if the discriminator gets too strong too fast, the encoder receives little useful gradient and gives up. Use a smaller learning rate for the discriminator or update it less frequently.

- Information leakage through items: if items are correlated with protected attributes (e.g. women’s-magazine items predict gender), debiasing only the user embedding is insufficient. You may need a second discriminator on the (user, item) pair score.

Part 5 — Explainability#

Why bother#

Three audiences, three reasons:

- Users trust recommendations they understand. “Because you watched Inception” lifts CTR by 6–12% in published Netflix and Spotify experiments.

- Engineers debug faster when the model can show its work.

- Regulators in the EU (GDPR Arts. 13–15 and 22) and increasingly in the US require “meaningful information about the logic involved” for automated decisions.

LIME — local linear approximations of any model#

LIME (Ribeiro et al., KDD 2016) is model-agnostic and local. Around the instance you want to explain, it perturbs the inputs, asks the black-box model for predictions on the perturbations, and fits a sparse linear model weighted by proximity to the original. The linear model’s coefficients are the explanation.

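The perturb, query, and fit loop can be sketched in a few lines of NumPy. This is a simplified stand-in, not the `lime` package: it perturbs with Gaussian noise and fits a proximity-weighted ridge regression where LIME proper uses a sparse linear model. The function name and all defaults are assumptions.

```python
import numpy as np


def lime_explain(predict_fn, x, n_samples=500, kernel_width=0.75,
                 noise=1.0, ridge=1e-3, seed=0):
    """Fit a proximity-weighted linear surrogate around x.

    predict_fn maps an (n, d) array to n predictions.
    Returns one coefficient per feature.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    # 1. Perturb the instance.
    Z = x + noise * rng.standard_normal((n_samples, x.size))
    # 2. Ask the black box for predictions on the perturbations.
    y = np.asarray(predict_fn(Z), dtype=float)
    # 3. Weight each sample by its proximity to x (exponential kernel).
    dist = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-(dist ** 2) / (kernel_width ** 2 * x.size))
    # 4. Weighted ridge regression with an unpenalised intercept.
    A = np.hstack([np.ones((n_samples, 1)), Z - x])
    AtW = A.T * w
    reg = ridge * np.eye(A.shape[1])
    reg[0, 0] = 0.0
    coef = np.linalg.solve(AtW @ A + reg, AtW @ y)
    return coef[1:]


# Hypothetical black box: only the first two features matter.
black_box = lambda Z: 3.0 * Z[:, 0] - 2.0 * Z[:, 1]
print(lime_explain(black_box, [1.0, 2.0, 3.0]).round(2))
```

On a model that really is linear, the surrogate recovers the true coefficients. On a real recommender the coefficients describe only the neighbourhood of `x`, and they move with `kernel_width` and the seed.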

Caveats: LIME is not stable. Ribeiro himself notes that two runs on the same instance can give different top features. Use a fixed seed in production and report stability metrics.

SHAP — game theory’s contribution#

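SHAP (Lundberg and Lee, NeurIPS 2017) attributes a prediction to its input features using Shapley values from cooperative game theory. The attribution for feature $j$ is its marginal contribution averaged over all subsets $S$ of the other features:

$$\phi_j = \sum_{S \subseteq N \setminus \{j\}} \frac{|S|! (|N| - |S| - 1)!}{|N|!} \big( f(S \cup \{j\}) - f(S) \big)$$

where $N$ is the full feature set and $f(S)$ is the model's output when only the features in $S$ are known. Computing exact Shapley values takes time exponential in the number of features. Production uses approximations: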

- TreeSHAP (polynomial time for tree ensembles) — first-line tool for GBDT-based recommenders

- KernelSHAP (model-agnostic, similar to LIME but with Shapley weights)

- DeepSHAP (for neural nets, based on DeepLIFT)

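The practical difference vs LIME: SHAP attributions sum exactly to the gap between the prediction and the model's baseline output (local accuracy), and they satisfy a consistency guarantee that LIME's heuristic weights do not. If you are going in front of an auditor, use SHAP.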
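For a handful of features the Shapley formula can be evaluated exactly, which is a useful sanity check before reaching for an approximation. The sketch below enumerates every subset. The value function and feature names are made up for illustration.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley values; value_fn maps a frozenset of feature names to f(S)."""
    n = len(features)
    phi = {}
    for j in features:
        others = [f for f in features if f != j]
        total = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = frozenset(subset)
                total += weight * (value_fn(s | {j}) - value_fn(s))
        phi[j] = total
    return phi


def toy_score(present):
    """Hypothetical model: baseline 0.1, two additive effects and one interaction."""
    score = 0.1
    if "genre_match" in present:
        score += 0.3
    if "recent_watch" in present:
        score += 0.2
    if {"genre_match", "recent_watch"} <= present:
        score += 0.1
    return score


features = ["genre_match", "recent_watch", "price"]
phi = shapley_values(toy_score, features)
print({k: round(v, 3) for k, v in phi.items()})
# {'genre_match': 0.35, 'recent_watch': 0.25, 'price': 0.0}
```

The interaction bonus of 0.1 is split evenly between the two features that create it, the irrelevant feature gets zero, and the attributions add up to 0.6, which is the full-feature score of 0.7 minus the 0.1 baseline.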

| | LIME | SHAP |
|---|---|---|
| Speed | Fast | Moderate (TreeSHAP) to slow (KernelSHAP) |
| Theory | Heuristic | Game-theoretic (Shapley values) |
| Stability | Variable | Consistent |
| Best for | Quick debugging, large feature sets | Audits, regulatory reporting |

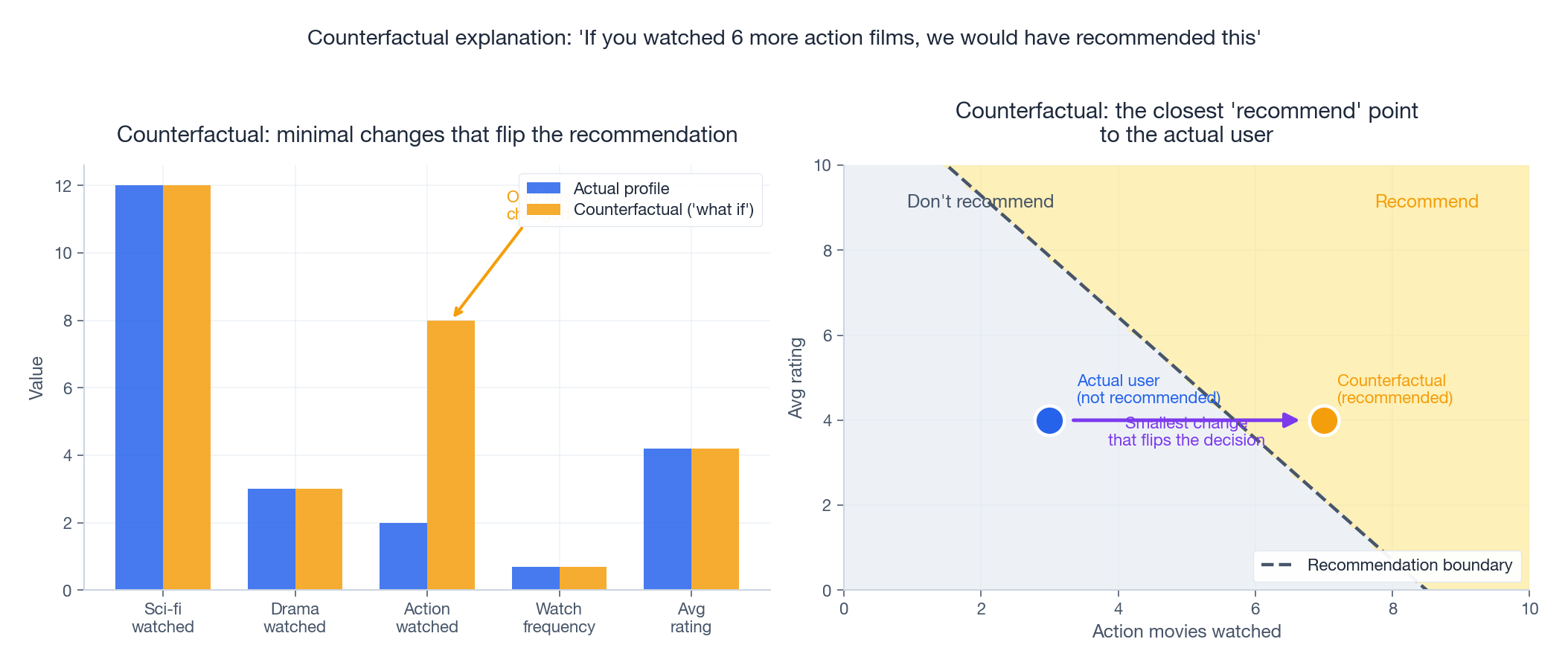

Counterfactual explanations — actionable answers#

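A counterfactual explanation answers the question “what is the smallest change to the input that would have changed the recommendation?” One common formulation is

$$x^{\text{cf}} = \arg\min_{x'} \; d(x, x') \quad \text{subject to} \quad f(x') \neq f(x)$$

where $f$ is the model, $x$ is the original input and $d$ is a distance penalising changes that are unrealistic (changing many features at once, or changing immutable ones like age). Modern implementations (DiCE, Mothilal et al. 2020) optimise a relaxed version with gradient descent and add a diversity term so you get several different counterfactuals to choose from.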

The killer use case is auditability. “We did not recommend this loan product to user X because their declared income was below the threshold; had it been $5000 higher, the model would have surfaced it” is the kind of explanation that satisfies a regulator.
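The loan example can be reproduced with a brute-force search over a small grid of allowed values. This is exhaustive search, not the gradient-based method DiCE uses, and the decision rule, feature names, and grids are all made up for illustration. Immutable features are simply left out of the candidate set.

```python
from itertools import product


def find_counterfactual(predict, x, candidates, scale):
    """Return the closest x' on the grid whose prediction differs from predict(x).

    candidates: {feature: [allowed values]} for mutable features only.
    scale: {feature: typical range}, used to make distances comparable.
    Returns None if no grid point flips the prediction.
    """
    original = predict(x)
    best, best_dist = None, float("inf")
    names = list(candidates)
    for values in product(*(candidates[n] for n in names)):
        x_new = dict(x, **dict(zip(names, values)))
        if predict(x_new) == original:
            continue
        dist = sum(abs(x_new[n] - x[n]) / scale[n] for n in names)
        if dist < best_dist:
            best, best_dist = x_new, dist
    return best


def surfaces_loan(x):
    """Hypothetical decision rule standing in for the real model."""
    return x["income"] >= 50_000 and x["tenure_months"] >= 6


user = {"income": 45_000, "tenure_months": 12, "age": 40}
cf = find_counterfactual(
    surfaces_loan,
    user,
    candidates={
        "income": list(range(45_000, 60_001, 1_000)),
        "tenure_months": [12, 18, 24],
    },
    scale={"income": 10_000, "tenure_months": 12},
)
print(cf)  # {'income': 50000, 'tenure_months': 12, 'age': 40}
```

The search reports the smallest income change that flips the outcome and never touches `age`, because age was not offered as a candidate.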

Part 6 — Trust-Building in Production#

Transparency that ships#

The minimum viable transparency layer:

- A short natural-language explanation per item (“Because you watched Inception and Tenet”)

- A confidence score the user can act on (“85% match”)

- A “why this?” deep-dive that surfaces SHAP values and contributing features

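The three layers can be packaged as one payload per recommended item. This is a sketch with a hypothetical function name and field names. The attributions are assumed to come from whatever explainer you already run, for example SHAP.

```python
def build_explanation(item_title, match_probability, contributions, seed_titles, top_n=3):
    """Assemble the three transparency layers for one recommended item.

    contributions: {feature name: attribution value}, e.g. SHAP values.
    seed_titles: titles from the user's history that drove the recommendation.
    """
    top = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top_n]
    return {
        "item": item_title,
        "reason": "Because you watched " + " and ".join(seed_titles),
        "match": f"{round(match_probability * 100)}% match",
        "why_this": [{"feature": f, "contribution": round(v, 3)} for f, v in top],
    }


payload = build_explanation(
    item_title="Interstellar",
    match_probability=0.85,
    contributions={"director_affinity": 0.31, "genre_scifi": 0.22, "runtime": -0.04},
    seed_titles=["Inception", "Tenet"],
    top_n=2,
)
print(payload["reason"])  # Because you watched Inception and Tenet
print(payload["match"])   # 85% match
```

Sorting by absolute value keeps strong negative contributions visible in the deep-dive, which is often what a user wants to correct.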

User control surfaces#

Every recommendation surface should expose three controls:

- Diversity slider — explicit knob on exploration vs exploitation

- Topic mute — “Never show me horror movies”

- Explain & adjust — show the contributing features and let the user reweight them

These are low-effort, high-trust additions. YouTube’s “Don’t recommend channel” and Spotify’s “Hide this song” exist for the same reason: visible control reduces perceived bias even when the underlying model is unchanged.

Continuous monitoring#

Fairness is not a launch checkbox — it is an ongoing measurement. Set up dashboards for the bias metrics from Part 1, alert on regressions, and run quarterly audits with the SHAP/counterfactual tooling above. A model that was fair in March can drift by June; the only defence is instrumentation.

FAQ#

I am a small team. What is the minimum debiasing I should do?#

Two things. First, instrument the bias metrics from Part 1 — you cannot fix what you do not measure. Second, add IPS for position bias in your learning-to-rank loss. Both are cheap and both ship measurable wins.

How big is the accuracy cost of fairness?#

It depends on where you are on the frontier. From a low-fairness baseline you can usually buy 30% fairness improvement for 1–3% NDCG. The expensive part is the last 10% of fairness, which often costs 10%+ accuracy. Pick a target, do not chase perfection.

LIME or SHAP?#

LIME for fast local debugging during development. SHAP for anything that goes in front of an auditor or a user. If you are using tree models, TreeSHAP is fast enough that there is no reason to use anything else.

My discriminator in adversarial debiasing keeps converging to chance. Is that good?#

Usually, but it is not proof on its own. Chance accuracy is what you expect when the embedding leaks no protected information, yet the discriminator inside the GAN-style loop sometimes simply underfits. Verify with a downstream check: train a new classifier from scratch on the frozen embeddings and confirm it cannot do better than chance either.

How do I handle intersectional fairness (e.g. Black women, not just race or gender)?#

Single-attribute fairness can hide disparities at intersections. The minimum is to report metrics on intersection cells, not just marginals. The advanced fix is intersectional debiasing — adversarial losses on tuples of attributes — but watch out: cell sizes shrink fast and statistical power drops.
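Reporting on intersection cells is mostly a group-by with a sample-size guard. In this sketch the function name, the attribute names, and the `min_cell` threshold are illustrative assumptions. Pick the threshold from your own power analysis.

```python
from collections import defaultdict


def metric_by_intersection(rows, attrs, metric="ndcg", min_cell=30):
    """Mean metric per intersection cell, flagging cells too small to trust.

    rows: list of dicts holding per-user attribute values and a metric value.
    attrs: attribute names whose combinations define the cells.
    """
    cells = defaultdict(list)
    for row in rows:
        cells[tuple(row[a] for a in attrs)].append(row[metric])
    return {
        cell: {
            "mean": sum(values) / len(values),
            "n": len(values),
            "reliable": len(values) >= min_cell,
        }
        for cell, values in cells.items()
    }


rows = [
    {"group_a": "x", "group_b": "p", "ndcg": 0.40},
    {"group_a": "x", "group_b": "p", "ndcg": 0.44},
    {"group_a": "x", "group_b": "q", "ndcg": 0.30},
    {"group_a": "y", "group_b": "q", "ndcg": 0.41},
]
report = metric_by_intersection(rows, attrs=["group_a", "group_b"], min_cell=2)
for cell, stats in report.items():
    print(cell, stats)
```

The `reliable` flag keeps a tiny cell from triggering an alert, or a false all-clear, on the strength of a few users.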

Summary#

- The seven biases — popularity, position, selection, exposure, conformity, demographic, confirmation — each have a measurable signature and a known fix

- Causal inference, especially IPS and doubly robust estimators, turns biased logged data into unbiased training signal

- Production debiasing toolkit: MACR for popularity, DICE for conformity, FairCo for amortised exposure, adversarial training for protected attributes

- LIME for fast local debugging, SHAP for audited explanations, counterfactuals for actionable “what would flip this” answers

- Trustworthy recommendation is not a one-shot project — it is instrumentation, dashboards, controls, and quarterly audits

Fairness and explainability are no longer optional features. They are the price of operating a recommender in 2024.
