Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

Cold-start and cross-domain recommendation in depth: the three faces of cold-start, EMCDR/PTUPCDR cross-domain bridges, MeLU/MAML meta-learning, UCB bandits for exploration, and the cold-to-warm production stack.

When Netflix launches in a new country, it inherits millions of users with no history and a catalog with no local ratings. Amazon faces the same issue each time it opens a new product category. Pure collaborative filtering, the workhorse of warm-state recommendations, has nothing to compute. Techniques that make recommendations work in this scenario include: bootstrap heuristics for the first request, meta-learning after a few interactions, cross-domain transfer when a related domain is rich, and bandits to keep exploring once the model is confident. This post walks through these techniques, anchored to the papers they come from.

What You Will Learn

- The three cold-start regimes — new user, new item, new system — and why each demands a different lever

- Cross-domain bridges — from EMCDR’s shared MLP to PTUPCDR’s per-user meta network

- Meta-learning for recsys — MAML’s bilevel optimization and how MeLU specialises it for users

- Exploration vs exploitation — UCB1, ε-greedy, Thompson sampling and their regret bounds

- The cold-to-warm progression — which method dominates at which interaction count

- Content-based fallback — the always-on safety net for new items

Prerequisites

- Working knowledge of collaborative filtering and matrix factorization (Part 2)

- Comfort with PyTorch and basic gradient descent (Part 7)

- Willingness to read one or two equations carefully

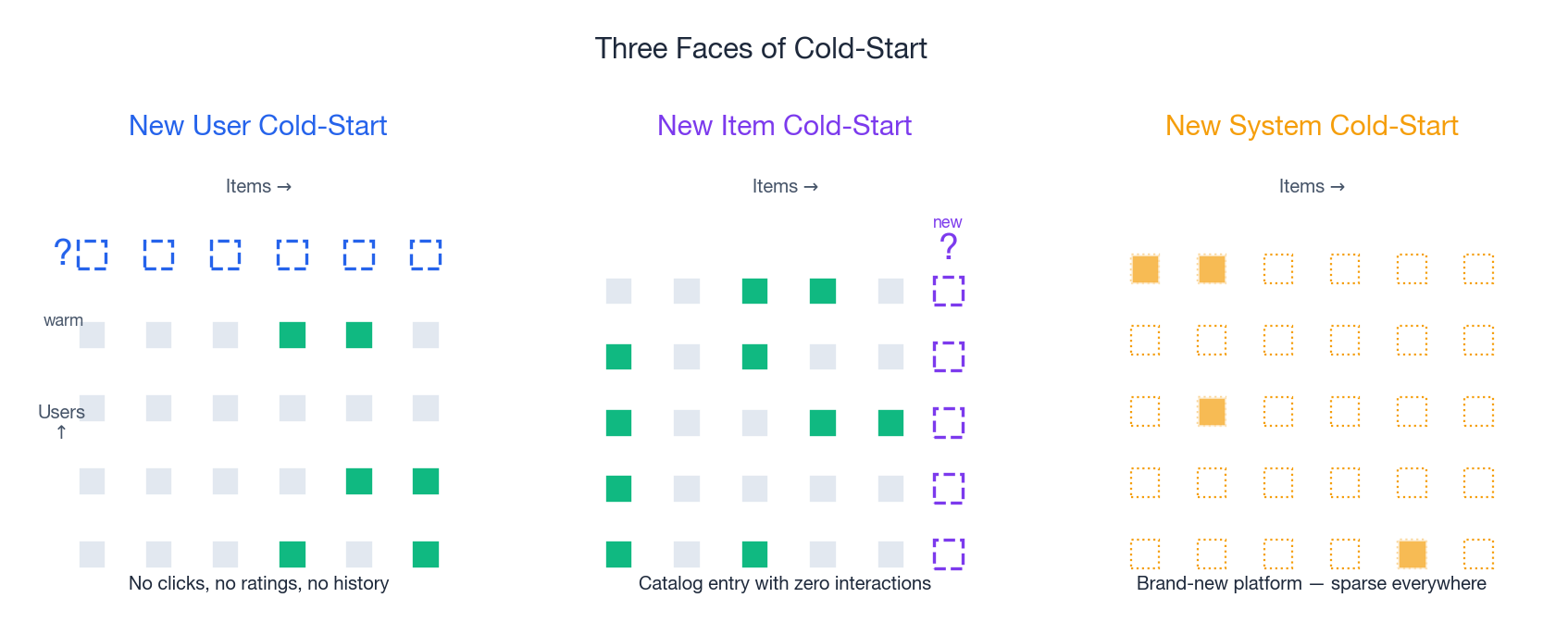

The Three Faces of Cold-Start

A recommendation system trained on history fails in three distinct ways when history is missing. Each looks like a different shape of hole in the user-item matrix.

User cold-start

A user signs up. We may know their device, IP region, and the campaign that brought them in — possibly an age band from a sign-up form. We have zero explicit interactions. Their row in the rating matrix is blank. Industry data shows that poor first-session recommendations correlate with roughly 3× higher 30-day churn on consumer apps. The solution is inference from sparse signals plus aggressive exploration.

Item cold-start

A merchant uploads a new SKU, a studio releases a new film, or a creator publishes a new video. The column is blank. Collaborative filtering can’t retrieve the item because nobody has interacted with it. Without intervention, the item never gets exposure, never accumulates ratings, and stays invisible — the so-called rich-get-richer problem. The solution is content features: title, image, embedding from a pretrained encoder, and seeding via similar warm items.

System cold-start

A new platform launches, or an existing platform expands into a new vertical. The matrix is mostly empty. Both rows and columns are sparse simultaneously. The solution is transfer: borrow embeddings, mappings, or even ranking models from a related, data-rich domain.

A formalism that covers all three

Given users $U$, items $I$, and an interaction matrix $R \in \mathbb{R}^{|U| \times |I|}$, define the cold-start subset $U_{\text{cold}} \subset U$ as the users with fewer than $K$ observed interactions, i.e. $|R_{u,\cdot}| < K$ for $u \in U_{\text{cold}}$, and define $I_{\text{cold}}$ analogously over columns. The objective is to predict $R_{u,i}$ for $(u, i)$ pairs where at least one side is cold. Standard CF learns embeddings $\mathbf{e}_u, \mathbf{e}_i$ from co-occurrence patterns; on cold rows or columns these embeddings either don’t exist or are random initializations with no gradient signal. Every method below is essentially an answer to the question: what do we use instead of $\mathbf{e}_u$ or $\mathbf{e}_i$ when the data isn’t there?
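As a small illustration of the definition, the sketch below counts interactions per user and per item and returns the two cold subsets. The function name `cold_subsets`, the toy IDs, and the threshold `k=2` are illustrative assumptions, not part of any particular library.

```python
from collections import Counter

def cold_subsets(users, items, interactions, k):
    """Return (cold_users, cold_items): entities with fewer than k interactions.

    `interactions` is an iterable of (user_id, item_id) pairs. Users or items
    that never appear in the log count as having zero interactions.
    """
    user_counts = Counter(u for u, _ in interactions)
    item_counts = Counter(i for _, i in interactions)
    cold_users = {u for u in users if user_counts[u] < k}
    cold_items = {i for i in items if item_counts[i] < k}
    return cold_users, cold_items

# Toy log: u1 is warm, u2 has one interaction, u3 has none.
users = ["u1", "u2", "u3"]
items = ["i1", "i2", "i3"]
log = [("u1", "i1"), ("u1", "i2"), ("u1", "i3"), ("u2", "i1")]

cold_users, cold_items = cold_subsets(users, items, log, k=2)
```

Passing the full user and item universes explicitly matters: a brand-new user never shows up in the interaction log, so counting from the log alone would miss exactly the rows the definition is about.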

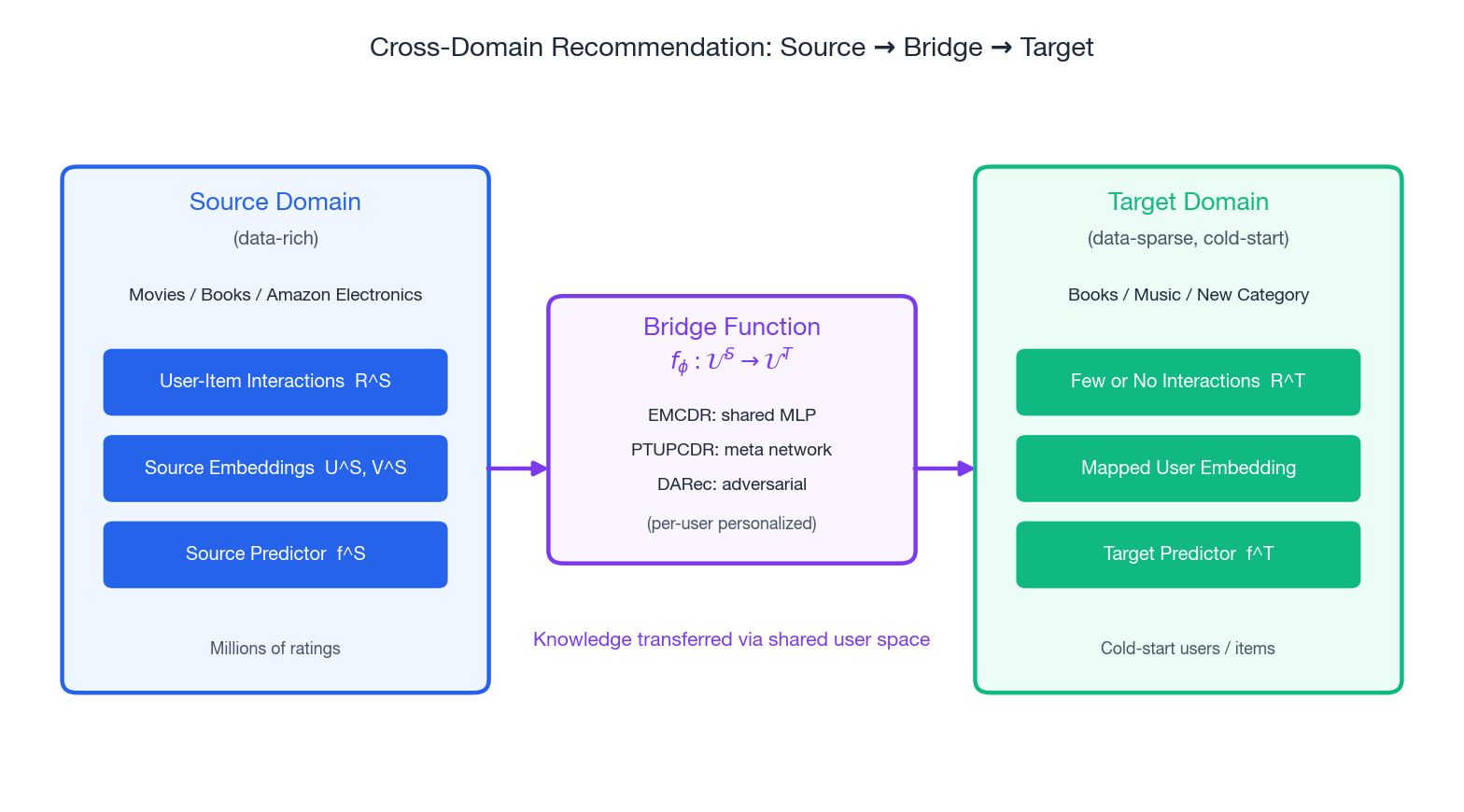

Cross-Domain Recommendation

The intuition is old: a user who loves Stanley Kubrick’s 2001: A Space Odyssey probably reads Arthur C. Clarke. Their movie behavior is a strong prior on their book preferences even though the items are different. The technical question is how to operationalize “strong prior” — what function maps a user’s representation in the movie domain to a representation in the book domain?

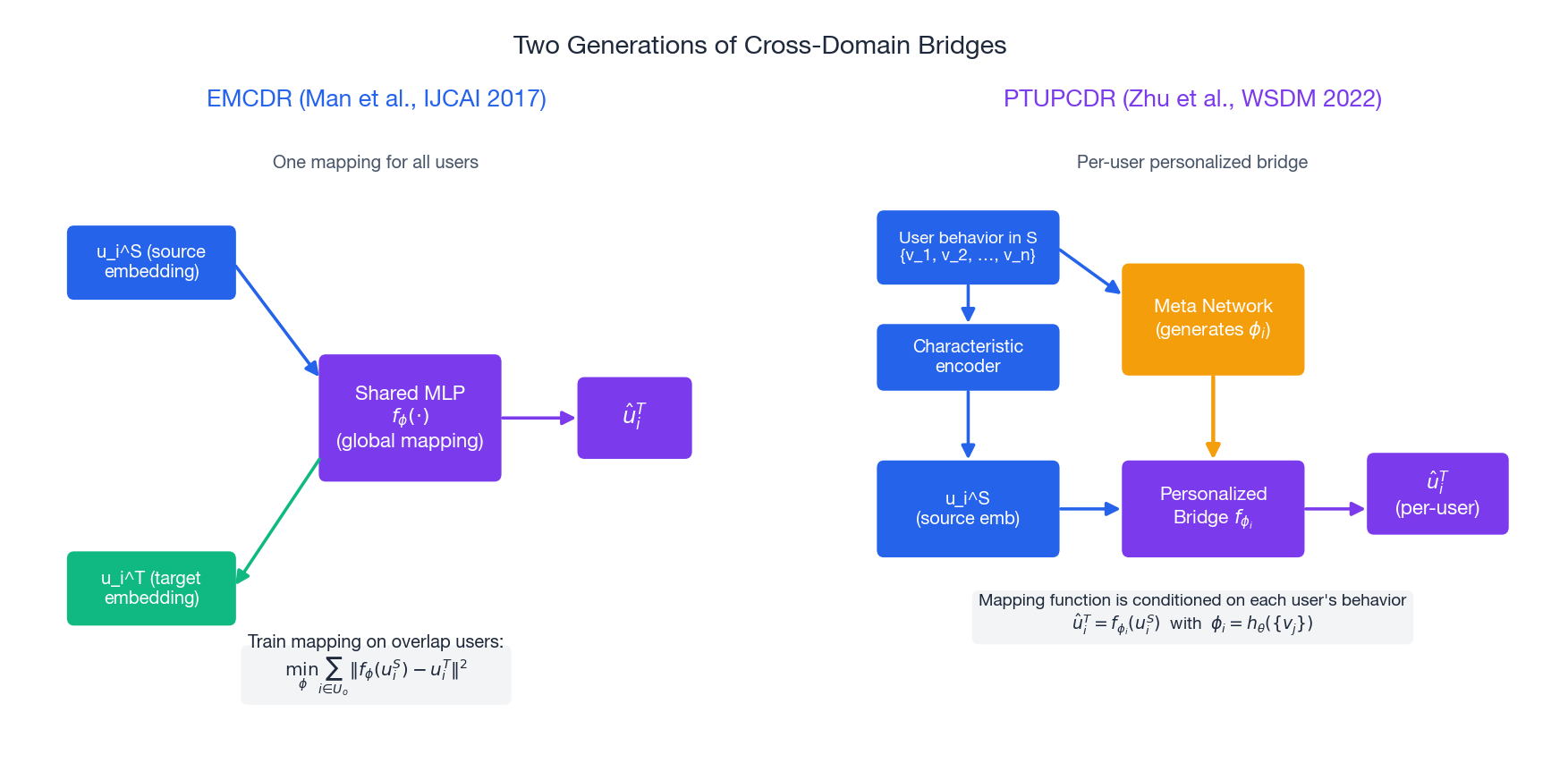

EMCDR: the shared MLP bridge

Man et al. (IJCAI 2017) introduced EMCDR (Embedding and Mapping for Cross-Domain Recommendation), the cleanest formulation of the idea. It has three stages.

- Train domain-specific MF. Run matrix factorization on source interactions $R^S$ and target interactions $R^T$ separately, yielding user embedding matrices $U^S, U^T$ (with rows $\mathbf{u}_i^S, \mathbf{u}_i^T$) and item embeddings $V^S, V^T$.

- Train a mapping on overlap users. For the overlap users $U_o$, i.e. those who are present in both the source and the target domain, learn an MLP $f_\phi$ that minimizes $\sum_{i \in U_o} \|f_\phi(\mathbf{u}_i^S) - \mathbf{u}_i^T\|^2$.

- Predict for cold target users. For a user who only exists in the source, set $\hat{\mathbf{u}}_i^T = f_\phi(\mathbf{u}_i^S)$ and feed it into the target predictor.

The bridge is global: every user is mapped through the same $f_\phi$.

PTUPCDR: personalize the bridge itself

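Zhu et al. (WSDM 2022) replace EMCDR’s single global bridge with one bridge per user. A meta network $h_\theta$ reads the embeddings of the items in user $i$’s source-domain history $\mathcal{H}_i^S$ and outputs the bridge parameters $\phi_i$:

$$\phi_i = h_\theta\bigl(\{\mathbf{v}_j^S : j \in \mathcal{H}_i^S\}\bigr), \qquad \hat{\mathbf{u}}_i^T = f_{\phi_i}(\mathbf{u}_i^S)$$

In words: read the user’s source-domain history, summarize it into a small set of bridge weights, then apply that personalized bridge to the user’s source embedding. PTUPCDR reports MAE reductions of 5–10% over EMCDR on the standard Amazon cross-category benchmarks.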
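The shape of a per-user bridge can be shown with a forward-pass-only sketch. It assumes a linear bridge $f_{\phi_i}$, mean pooling over the history, and a one-layer meta network with untrained random weights; all three are simplifications chosen for brevity, and the names `theta`, `personalized_bridge`, and `map_user` are hypothetical rather than taken from the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
d = 4  # embedding dimension (illustrative)

# Hypothetical meta network h_theta: a single linear layer that turns a pooled
# history vector into the d*d weights of a linear bridge. Untrained here.
theta = rng.normal(scale=0.1, size=(d, d * d))

def personalized_bridge(history_item_embs):
    """phi_i = h_theta(history): pool the source item embeddings, emit bridge weights."""
    pooled = history_item_embs.mean(axis=0)   # shape (d,)
    return (pooled @ theta).reshape(d, d)     # shape (d, d)

def map_user(u_src, history_item_embs):
    """u_hat_T = f_{phi_i}(u_S), with a linear bridge f."""
    return personalized_bridge(history_item_embs) @ u_src

V_src = rng.normal(size=(10, d))   # stand-in source-domain item embeddings
u_src = rng.normal(size=d)         # one source-domain user embedding

# The same source embedding is mapped differently under two different histories.
u_hat_a = map_user(u_src, V_src[[0, 1, 2]])
u_hat_b = map_user(u_src, V_src[[7, 8, 9]])
```

The point of the example is the last two lines: with a global bridge the two outputs would be identical, whereas here the history changes the mapping itself.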

A minimal cross-domain skeleton

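A minimal sketch of the three EMCDR stages follows. It assumes the per-domain MF embeddings already exist (synthetic stand-ins are generated here) and swaps the MLP bridge for a linear map fitted by least squares so the example stays short. All variable names (`U_src`, `U_tgt`, `true_map`, `W`) are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_overlap, n_items_tgt = 8, 200, 50

# Stage 1 (assumed done elsewhere): per-domain MF embeddings.
# Synthetic stand-ins: target embeddings are a noisy linear image of source ones.
U_src = rng.normal(size=(n_overlap, d))
true_map = rng.normal(size=(d, d))
U_tgt = U_src @ true_map + 0.01 * rng.normal(size=(n_overlap, d))
V_tgt = rng.normal(size=(n_items_tgt, d))

# Stage 2: fit the bridge on overlap users by minimizing
# sum_i || u_i^S W - u_i^T ||^2 (a linear stand-in for the MLP f_phi).
W, *_ = np.linalg.lstsq(U_src, U_tgt, rcond=None)

# Stage 3: a user seen only in the source domain gets a mapped target embedding,
# which is then scored against target items with the usual dot product.
u_src_new = rng.normal(size=d)
u_tgt_hat = u_src_new @ W
scores = V_tgt @ u_tgt_hat
top5 = np.argsort(-scores)[:5]
```

Replacing the least-squares fit with a small MLP trained on the same squared-error objective gives the nonlinear bridge described above; the surrounding stages stay the same.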

Negative transfer: the failure mode to watch for

Cross-domain transfer is not free. If the source and target are weakly related (say, news-reading patterns and grocery purchases), the bridge can actively hurt target performance. The signal is straightforward: run an A/B against a target-only baseline and watch the cold-user metric. If transfer doesn’t beat the baseline at the same training cost, the domains are too far apart.
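An offline analogue of that comparison can be sketched as follows, assuming you already have predictions from both models on the same held-out cold users. The helper names and the toy ratings are illustrative, and `min_gain` is a hypothetical threshold you would set from your own noise level.

```python
def mae(y_true, y_pred):
    """Mean absolute error over paired ratings."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def negative_transfer(y_true, pred_target_only, pred_cross_domain, min_gain=0.0):
    """Flag negative transfer when cross-domain does not beat target-only by min_gain."""
    base = mae(y_true, pred_target_only)
    cross = mae(y_true, pred_cross_domain)
    return (base - cross) <= min_gain, base, cross

# Toy cold-user ratings and two sets of predictions.
y = [4.0, 2.0, 5.0, 3.0]
target_only = [3.5, 2.5, 4.0, 3.0]
cross_domain = [3.0, 3.5, 3.5, 4.0]

flag, base_mae, cross_mae = negative_transfer(y, target_only, cross_domain)
```

In this toy case the cross-domain model has the higher error on cold users, so the check flags it.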

Meta-Learning for Cold-Start

The cross-domain story assumes you have a related rich domain. Meta-learning takes a different angle: even within a single domain, can we train the model so that it adapts quickly to a new user from just a handful of interactions?

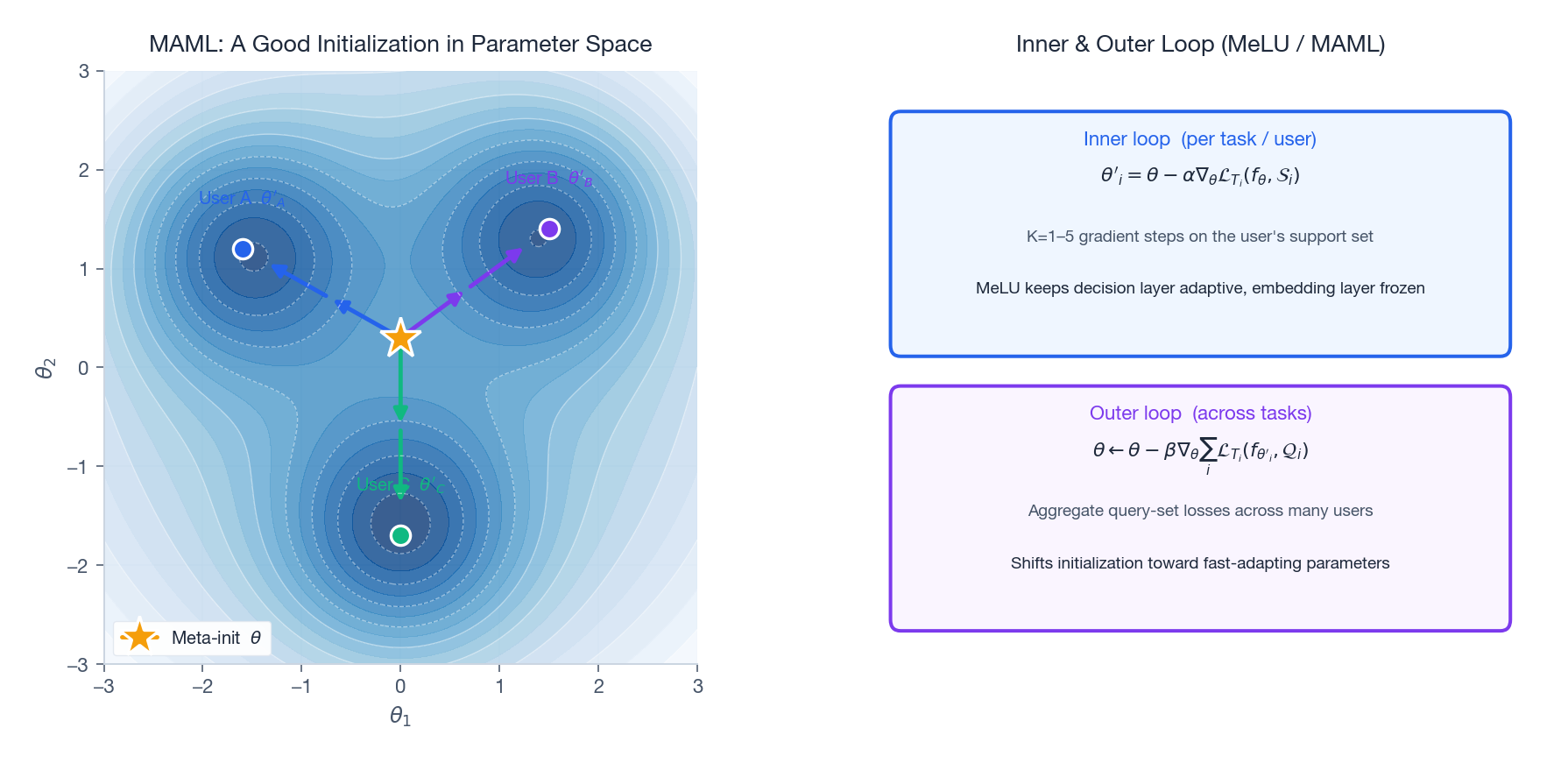

MAML in one paragraph

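Model-Agnostic Meta-Learning (Finn et al., ICML 2017) learns an initialization $\theta$ rather than a final model. Each task $T_i$ comes with a support set $\mathcal{S}_i$ and a query set $\mathcal{Q}_i$. The inner loop adapts $\theta$ to the task with a gradient step of size $\alpha$ on the support set; the outer loop updates $\theta$ with step size $\beta$ so that the adapted parameters $\theta'_i$ do well on the query set:

$$\theta'_i = \theta - \alpha \nabla_\theta \mathcal{L}_{T_i}(f_\theta, \mathcal{S}_i)$$

$$\theta \leftarrow \theta - \beta \nabla_\theta \sum_{T_i \sim p(\mathcal{T})} \mathcal{L}_{T_i}(f_{\theta'_i}, \mathcal{Q}_i)$$

Geometrically, MAML pushes $\theta$ to a region of parameter space where every task’s optimum is just a few gradient steps away.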

MeLU: MAML specialised for recommendation

Lee et al. (KDD 2019) adapt MAML to recommendation as MeLU (Meta-Learned User preference estimator). The key engineering choice: only the decision layers are adapted in the inner loop; the embedding layers stay shared and slow-moving. This matches the inductive bias that item / category embeddings should be stable, while the way a user combines them is what changes per user.

Each “task” is one user. The support set $\mathcal{S}_i$ is the user’s first 1–5 interactions; the query set $\mathcal{Q}_i$ is held out for the outer-loop loss. After meta-training on hundreds of thousands of users, a brand-new user can be handled by:

- Take their first $K$ ratings as the support set.

- Run 1 to 5 gradient steps on the decision layers.

- Score all candidate items with the adapted model.

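The inner/outer structure can be sketched on a deliberately tiny model. This is not the MeLU architecture: it assumes fixed item embeddings `E` (standing in for the shared embedding layers), a single linear "decision layer" `w`, synthetic users, and a first-order outer update instead of full second-order MAML. Every name and hyperparameter here is illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
d, n_items = 4, 30
E = rng.normal(size=(n_items, d))   # shared item embeddings, never adapted per user
w_pop = rng.normal(size=d)          # synthetic population-level preference

def sample_user():
    """Synthetic user: own preference vector, 10 rated items split 5 support / 5 query."""
    w_u = w_pop + 0.5 * rng.normal(size=d)
    idx = rng.choice(n_items, size=10, replace=False)
    ratings = E[idx] @ w_u
    return w_u, (E[idx[:5]], ratings[:5]), (E[idx[5:]], ratings[5:])

def grad_mse(w, X, y):
    """Gradient of mean squared error for the linear decision layer."""
    return 2.0 * X.T @ (X @ w - y) / len(y)

def adapt(w, support, alpha=0.05, steps=3):
    """Inner loop: a few gradient steps on the support set, decision layer only."""
    X, y = support
    for _ in range(steps):
        w = w - alpha * grad_mse(w, X, y)
    return w

# Outer loop: first-order meta-update of the shared initialization w0.
w0, beta = np.zeros(d), 0.01
for _ in range(2000):
    _, support, query = sample_user()
    w_adapted = adapt(w0, support)
    w0 = w0 - beta * grad_mse(w_adapted, *query)

# Serving a brand-new user: adapt from w0 using only the support ratings.
w_true, support, query = sample_user()
w_new = adapt(w0, support)
err_before = np.linalg.norm(w0 - w_true)
err_after = np.linalg.norm(w_new - w_true)
```

After adaptation the decision layer is closer to the new user's true preference vector than the shared initialization was, which is the behavior the three serving steps above rely on.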

When meta-learning is worth the cost

MAML/MeLU costs 3–10× more training compute than a vanilla model because of the inner-loop unrolling and second-order gradients. Use it when:

- You have many users, each with very few interactions (the long-tail user distribution).

- You can identify a clear “task” boundary — one user, one session, one cohort.

- Bootstrap heuristics aren’t enough but you don’t have a related domain to transfer from.

If second-order gradients are too expensive, first-order MAML (FOMAML) drops them and recovers most of the benefit.
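To see where the second-order cost comes from, consider a single inner step. By the chain rule, the outer-loop gradient for one task is

$$\nabla_\theta \mathcal{L}_{T_i}(f_{\theta'_i}, \mathcal{Q}_i) = \bigl(I - \alpha \nabla^2_\theta \mathcal{L}_{T_i}(f_\theta, \mathcal{S}_i)\bigr)\, \nabla_{\theta'_i} \mathcal{L}_{T_i}(f_{\theta'_i}, \mathcal{Q}_i)$$

The Hessian term is what makes full MAML second-order. The first-order approximation treats the Jacobian of $\theta'_i$ with respect to $\theta$ as the identity, which leaves

$$\nabla_\theta \mathcal{L}_{T_i}(f_{\theta'_i}, \mathcal{Q}_i) \approx \nabla_{\theta'_i} \mathcal{L}_{T_i}(f_{\theta'_i}, \mathcal{Q}_i)$$

so each outer step needs only ordinary backward passes.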

Bandits: Exploration vs Exploitation

Once a model has some confidence about a user, the next question is what to actually serve. Always pick the highest-scoring item and you’ll never learn whether the user might love something the model rates lower. Always pick randomly and you’ll burn the user’s session. The textbook framework is the multi-armed bandit.

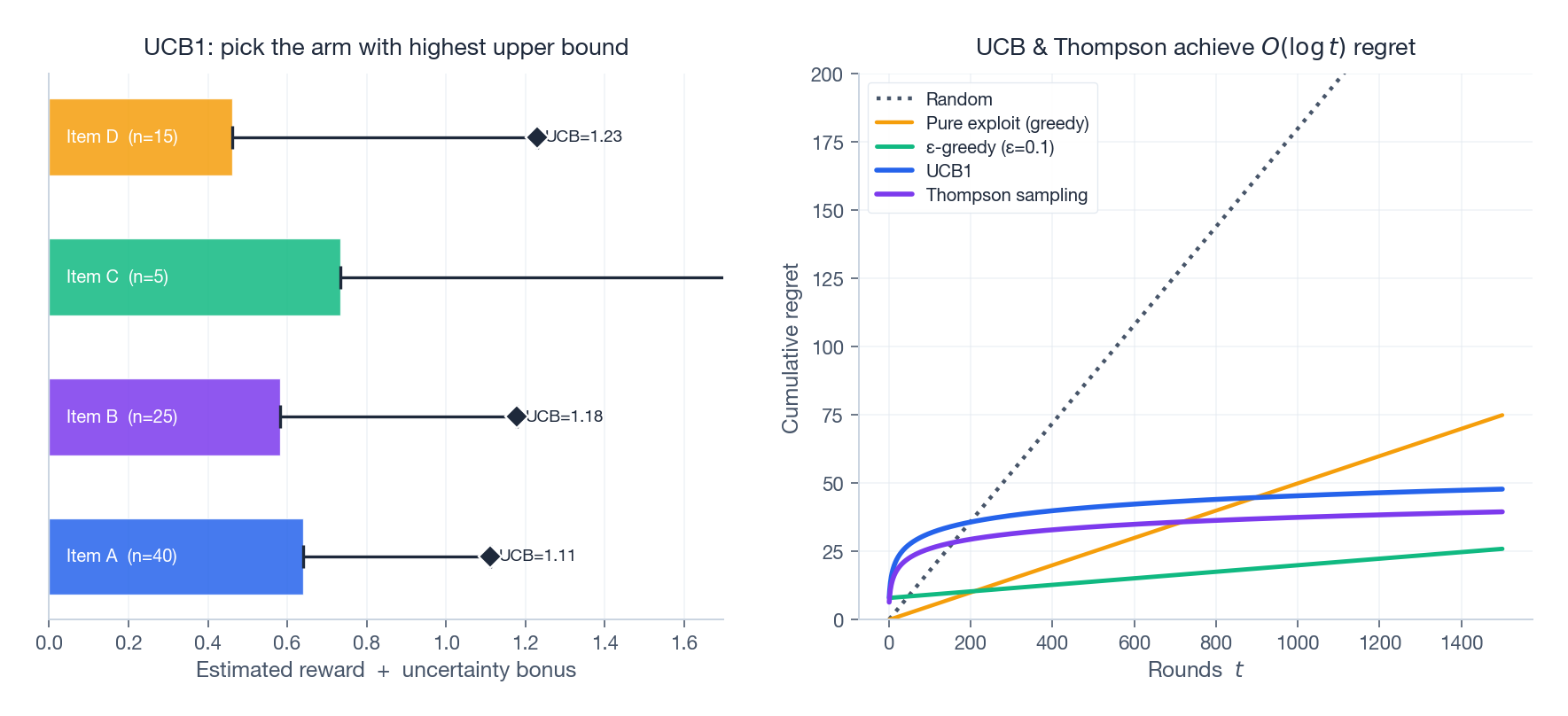

UCB1: confidence bounds

UCB1 (Auer et al., 2002) keeps, for each arm $a$, the empirical mean reward $\hat\mu_a$ and the number of pulls $n_a$. At round $t$ it plays

$$a_t = \arg\max_a \left[ \hat\mu_a + \sqrt{\frac{2 \ln t}{n_a}} \right]$$

This rule achieves $O(\log t)$ cumulative regret, i.e., the gap between UCB1 and an oracle that always picks the best arm grows only logarithmically in the number of rounds. The formula has a clean interpretation: pick the item whose upper confidence bound is highest. Items with few pulls $n_a$ get a large exploration bonus and are tried; items with many pulls have tight bounds and are picked only if their estimated mean is genuinely high.
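The rule translates almost directly into code. The sketch below assumes Bernoulli click rewards and a simulated environment with made-up click-through rates; `ucb1_select` and `run_ucb1` are illustrative names.

```python
import math
import random

def ucb1_select(counts, means, t):
    """Pick an arm by UCB1. counts[a] = pulls so far, means[a] = empirical mean reward."""
    for arm, n in enumerate(counts):
        if n == 0:                      # play every arm once before using the bound
            return arm
    scores = [m + math.sqrt(2.0 * math.log(t) / n) for m, n in zip(means, counts)]
    return max(range(len(scores)), key=scores.__getitem__)

def run_ucb1(true_ctr, rounds, seed=0):
    """Simulate UCB1 against Bernoulli arms with the given (hidden) click rates."""
    rng = random.Random(seed)
    k = len(true_ctr)
    counts, means = [0] * k, [0.0] * k
    for t in range(1, rounds + 1):
        a = ucb1_select(counts, means, t)
        reward = 1.0 if rng.random() < true_ctr[a] else 0.0
        counts[a] += 1
        means[a] += (reward - means[a]) / counts[a]   # incremental mean update
    return counts, means

counts, means = run_ucb1([0.1, 0.2, 0.6], rounds=5000)
```

With gaps this large, most pulls end up on the best arm while the weaker arms still receive a slowly growing number of exploratory pulls.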

Thompson sampling: Bayesian alternative

Thompson sampling maintains a posterior over each arm’s reward and samples from it; pick the arm whose sample is highest. Empirically it usually matches or beats UCB1 and is trivial to implement for Beta-Bernoulli rewards.

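A minimal Beta-Bernoulli version, assuming binary click rewards, a uniform Beta(1, 1) prior on every arm, and a simulated environment with made-up click rates. The function names are illustrative.

```python
import random

def thompson_select(successes, failures, rng):
    """Sample one value per arm from its Beta posterior and play the largest."""
    samples = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
    return max(range(len(samples)), key=samples.__getitem__)

def run_thompson(true_ctr, rounds, seed=0):
    """Simulate Thompson sampling against Bernoulli arms with hidden click rates."""
    rng = random.Random(seed)
    k = len(true_ctr)
    successes, failures = [0] * k, [0] * k
    for _ in range(rounds):
        a = thompson_select(successes, failures, rng)
        if rng.random() < true_ctr[a]:
            successes[a] += 1
        else:
            failures[a] += 1
    return successes, failures

successes, failures = run_thompson([0.1, 0.2, 0.6], rounds=3000)
pulls = [s + f for s, f in zip(successes, failures)]
```

The posterior update is just two counters per arm, which is why this variant is so easy to run next to an existing ranker.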

In production, contextual bandits like LinUCB and neural variants extend these ideas to use user / item features rather than just per-arm counters. They are the canonical tool for the few-shot regime where meta-learning has produced a model but you still need to gather data efficiently.

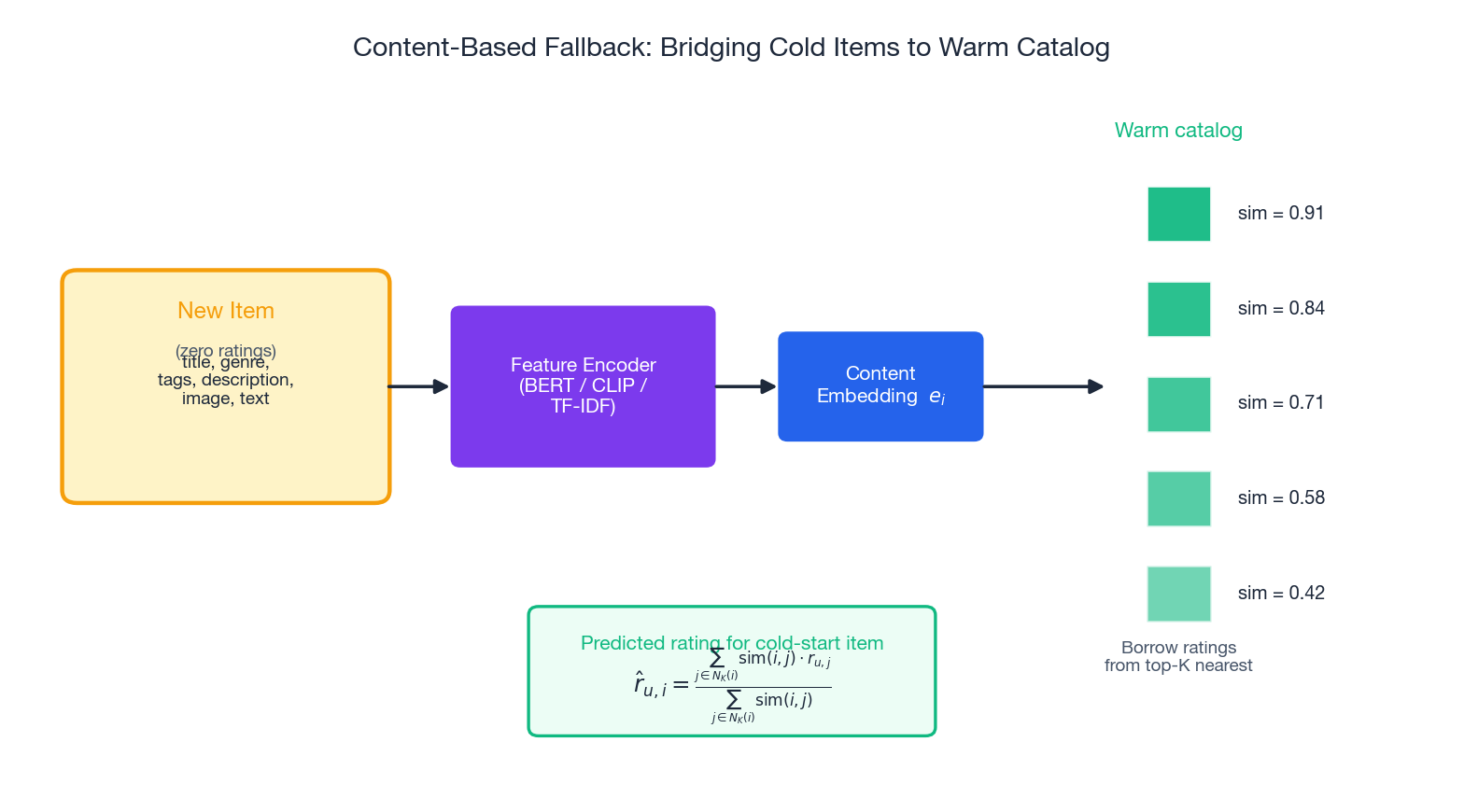

Content-Based Fallback

For new items, no amount of meta-learning helps if there’s no signal at all. Content-based retrieval is the always-on safety net.

The recipe is mechanical:

- Encode the new item with a domain-appropriate encoder. Text → BERT / sentence-transformers. Images → CLIP. Tabular → handcrafted features + a small MLP.

- Find the K nearest warm items by cosine similarity on the content embedding.

- Predict the rating as a similarity-weighted average of those neighbors’ ratings: $\hat r_{u,i} = \frac{\sum_{j \in N_K(i)} \mathrm{sim}(i, j) \cdot r_{u,j}}{\sum_{j \in N_K(i)} \mathrm{sim}(i, j)}$

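The recipe can be sketched in a few lines, assuming content embeddings are already computed. Two choices here are assumptions that the formula leaves open: neighbors are restricted to warm items this user has actually rated, and neighbors with non-positive similarity are dropped so the weights stay valid. Names and toy vectors are illustrative.

```python
import math

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def predict_cold_item(new_item_emb, warm_embs, user_ratings, k=2):
    """Similarity-weighted average of the user's ratings on the k nearest warm items.

    warm_embs: item_id -> content embedding; user_ratings: item_id -> rating.
    Returns None when no rated neighbor has positive similarity.
    """
    sims = [(cosine(new_item_emb, warm_embs[j]), j) for j in user_ratings if j in warm_embs]
    neighbors = [(s, j) for s, j in sorted(sims, reverse=True)[:k] if s > 0]
    denom = sum(s for s, _ in neighbors)
    if denom == 0:
        return None
    return sum(s * user_ratings[j] for s, j in neighbors) / denom

warm_embs = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
user_ratings = {"a": 5.0, "b": 1.0, "c": 3.0}
pred = predict_cold_item([1.0, 0.0], warm_embs, user_ratings, k=2)
```

Here the new item is identical in content to item `a` and partially similar to `c`, so the prediction lands between the two ratings, pulled toward the rating of `a`.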

The same pattern works for user cold-start if the user provides any content-style signal (a survey on sign-up, a single onboarding click): encode the signal, find similar warm users, borrow their preferences.

The Cold-to-Warm Production Stack

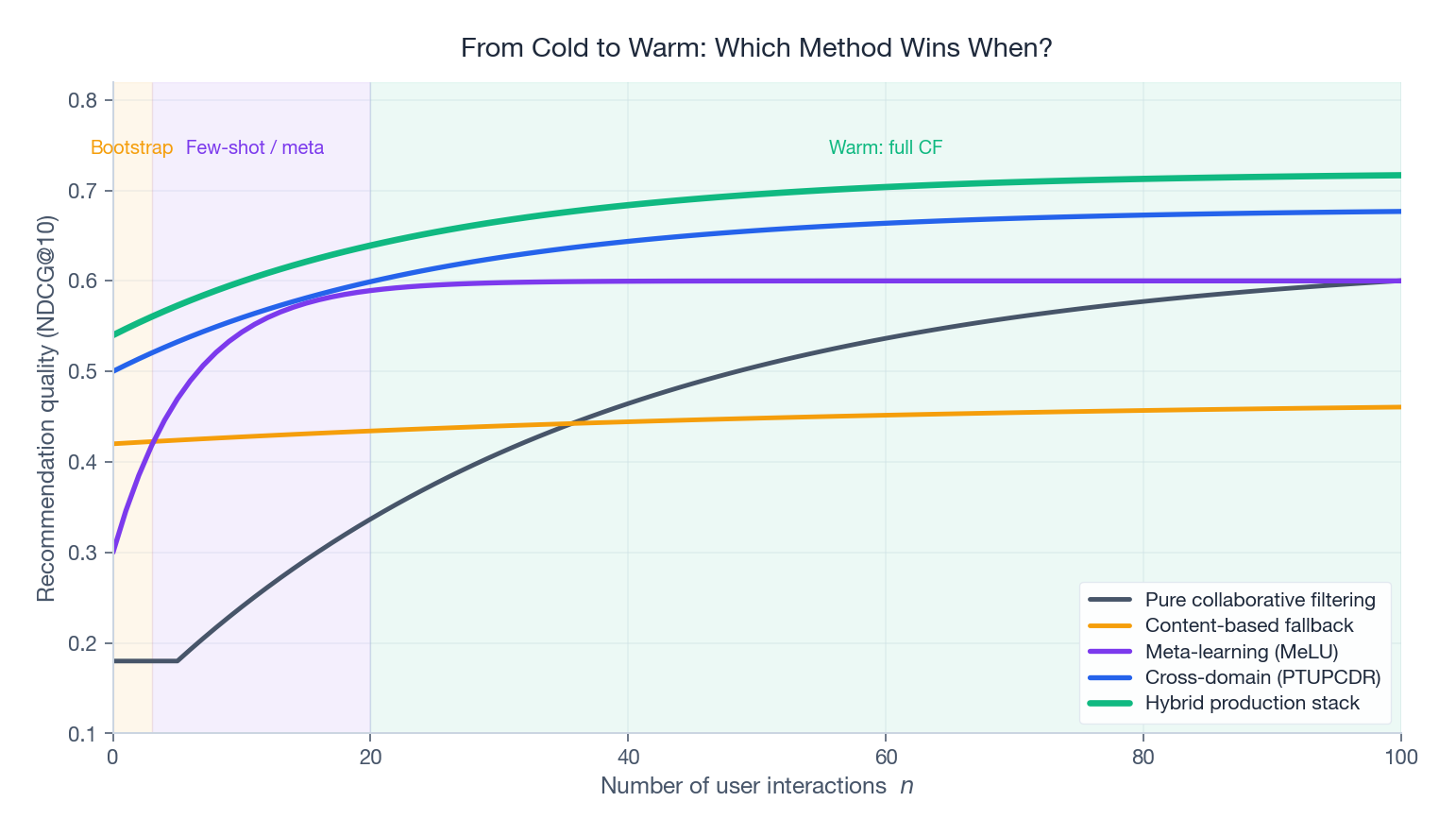

No single method dominates across the whole interaction-count axis. Production systems route requests to different methods based on how much data the user has accumulated.

The shape of each curve tells a story:

- Pure CF is useless below ~5 interactions and slow to climb until ~20.

- Content-based has a flat floor — never great, never terrible, always available.

- Meta-learning (MeLU) is the steepest mover in the 1–10 interaction range — exactly what it’s designed for.

- Cross-domain (PTUPCDR) starts highest because it inherits source-domain knowledge for free, but flattens earlier.

- Hybrid stack is the upper envelope: route to whichever method dominates at the current interaction count.

A practical routing rule:

| Interactions | Primary method | Fallbacks |
|---|---|---|
| 0 | Cross-domain or popularity | Content-based |
| 1–2 | Cross-domain + bandit | Content-based |
| 3–19 | Meta-learning (MeLU) | Cross-domain |
| 20+ | Full CF / DIN / sequential | Meta-learning for new sessions |

Keep a popularity baseline running in parallel as a circuit breaker — if any of the above methods produces low-confidence predictions, fall back to popular items in the user’s inferred segment.
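The routing rule plus circuit breaker can be written as a small dispatch function. This sketch assumes each request carries an interaction count and a model confidence score in [0, 1]; the `min_confidence` threshold and the string labels are hypothetical, and counts of exactly 3 and 20 are assigned to the later tier.

```python
def route(n_interactions, confidence=1.0, min_confidence=0.5):
    """Return (primary_method, fallback) for a request.

    The popularity circuit breaker takes precedence over the interaction-count tiers.
    """
    if confidence < min_confidence:
        return ("segment_popularity", None)
    if n_interactions == 0:
        return ("cross_domain_or_popularity", "content_based")
    if n_interactions < 3:
        return ("cross_domain_plus_bandit", "content_based")
    if n_interactions < 20:
        return ("meta_learning", "cross_domain")
    return ("full_cf", "meta_learning")

# A user with 7 interactions and a confident model goes to the meta-learned path;
# the same user with a low-confidence prediction trips the circuit breaker.
normal = route(7, confidence=0.9)
tripped = route(7, confidence=0.2)
```

Keeping the thresholds in one function makes them easy to tune per surface once you have the stratified offline metrics to justify a change.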

FAQ

My platform has no related domain to transfer from. Where do I start?

Lead with content-based fallback for items and a popularity prior for users, then meta-train MeLU on whatever interaction data you accumulate. Cross-domain is a 10–20% relative boost when available, not a prerequisite.

How many interactions before MeLU outperforms a content baseline?

In published benchmarks (MovieLens, Book-Crossing) MeLU starts winning at 2–3 interactions and dominates by 5. Your mileage depends on how informative your support interactions are: diverse genres beat homogeneous ones.

Is FOMAML really good enough, or should I bite the bullet on second-order MAML?

For recommendation, FOMAML is within 1–2% of MAML on standard metrics and trains 3× faster. Use second-order MAML only when you’ve measured that the gap matters for your business metric.

How do I detect negative transfer in cross-domain?

Run a target-only baseline alongside the cross-domain model. If target performance on cold users does not improve, the domains are too distant. Common culprit: aligning users by ID across domains where the same user has wildly different behavior (work account vs personal account).

Are bandits worth it for top-K recommendation, or just for single-pick problems?

Worth it for the first slot or two of a feed — that’s where exploration value is highest. After the first few items, switch to ranking. Combinatorial bandits exist but are operationally expensive.

How do I evaluate a cold-start system offline?

Hold out users entirely (not random interactions). For each held-out user, expose only their first $K$ interactions to the model as “support” and predict on the rest. Report metrics stratified by $K \in \{1, 3, 5, 10\}$ — a single number hides the cold-to-warm transition.
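A sketch of that protocol, assuming each user's history is a chronologically ordered list of interactions. The function name, the 20% test fraction, and the toy histories are illustrative.

```python
import random

def cold_start_split(user_histories, test_fraction=0.2, ks=(1, 3, 5, 10), seed=0):
    """Hold out whole users, then build (user, support, query) tasks per support size K.

    user_histories: user_id -> list of interactions, assumed chronologically ordered.
    Users with K or fewer interactions are skipped for that K (no query items left).
    """
    rng = random.Random(seed)
    users = sorted(user_histories)
    rng.shuffle(users)
    n_test = max(1, int(len(users) * test_fraction))
    test_users, train_users = users[:n_test], users[n_test:]
    tasks = {k: [] for k in ks}
    for u in test_users:
        history = user_histories[u]
        for k in ks:
            if len(history) > k:
                tasks[k].append((u, history[:k], history[k:]))
    return train_users, tasks

# Toy data: 10 users with 12 interactions each.
histories = {f"u{n}": [f"item{n}_{j}" for j in range(12)] for n in range(10)}
train_users, tasks = cold_start_split(histories)
```

Metrics are then computed per key of `tasks`, which gives one number per support size instead of a single aggregate.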

References

- Finn, C., Abbeel, P., & Levine, S. (2017). Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks. ICML.

- Lee, H., Im, J., Jang, S., Cho, H., & Chung, S. (2019). MeLU: Meta-Learned User Preference Estimator for Cold-Start Recommendation. KDD.

- Man, T., Shen, H., Jin, X., & Cheng, X. (2017). Cross-Domain Recommendation: An Embedding and Mapping Approach. IJCAI.

- Zhu, Y., Tang, Z., Liu, Y., Zhuang, F., Xie, R., Zhang, X., Lin, L., & He, Q. (2022). Personalized Transfer of User Preferences for Cross-domain Recommendation. WSDM.

- Auer, P., Cesa-Bianchi, N., & Fischer, P. (2002). Finite-time Analysis of the Multiarmed Bandit Problem. Machine Learning, 47, 235-256.

- Snell, J., Swersky, K., & Zemel, R. (2017). Prototypical Networks for Few-shot Learning. NeurIPS.

Cold-start treatment: which method for which cold

The taxonomy in section 1 says cold = user / item / system. The honest mapping from method to flavour is narrower than most papers suggest.

| Cold flavour | What works in production | What sounds good but doesn’t |
|---|---|---|
| New user, no history | Bandits + onboarding signal collection | Meta-learning (too slow per-user, too much variance) |
| New item, has metadata | Content-based fallback + cross-domain transfer | Pure CF (no signal to learn from) |
| New item, no metadata | Bandits with high exploration ε | Anything embedding-based (random embeddings dominate) |
| New domain, has overlapping users | Cross-domain (CMF, EMCDR, BiTGCF) | Independent training |
| New domain, no overlap | Content + foundation-model embeddings | Cross-domain (nothing to bridge) |

The single highest-leverage trick I’ve seen for new-item cold-start is using a pretrained foundation model to seed embeddings. For a new product on an e-commerce platform with title + description + image:

- Run the title+description through a multilingual sentence encoder (bge-m3 or e5-multilingual-large).

- Run the image through CLIP or a domain-specific vision encoder.

- Concatenate or average. This is the item’s embedding for day 1.

After ~50 interactions, blend with the learned ID embedding using a sigmoid gate. After ~500 interactions, drop the content embedding entirely.

This single intervention typically lifts new-item CTR by 15–30% in the first week vs random init. It’s three lines of code and it works.
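The hand-off from content embedding to learned ID embedding might look like the sketch below. It assumes both embeddings live in the same space (for example after a projection layer) and uses a sigmoid centered halfway between the two thresholds; the midpoint and scale are hypothetical choices, not tuned values.

```python
import math

def gate(n_interactions, start=50, end=500):
    """Weight on the learned ID embedding: 0 before `start`, 1 from `end` on."""
    if n_interactions < start:
        return 0.0
    if n_interactions >= end:
        return 1.0
    mid = (start + end) / 2       # hypothetical midpoint of the blend window
    scale = (end - start) / 8     # hypothetical steepness
    return 1.0 / (1.0 + math.exp(-(n_interactions - mid) / scale))

def blend(content_emb, id_emb, n_interactions):
    """Convex combination of content and ID embeddings, controlled by the gate."""
    g = gate(n_interactions)
    return [(1.0 - g) * c + g * e for c, e in zip(content_emb, id_emb)]

content_emb = [1.0, 0.0]   # stand-in for the encoder output
id_emb = [0.0, 1.0]        # stand-in for the learned ID embedding

day_one = blend(content_emb, id_emb, 0)      # pure content embedding
halfway = blend(content_emb, id_emb, 275)    # equal mix at the midpoint
mature = blend(content_emb, id_emb, 1000)    # pure ID embedding
```

A learned gate that takes the interaction count as a feature is a natural next step once there is enough data to train it.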

Latency budget for cold-start serving

Cold-start methods often have higher serving cost than warm-path. Watch for these.

Bandits with Thompson sampling. Pulling a sample from the posterior at request time costs O(d²) per arm if you’re using a Gaussian posterior with full covariance. With 10K candidate items at d=128, that’s roughly 160M ops per request. Unworkable. Use a diagonal covariance (O(d) per arm, which brings the total down to about 1.3M ops) or precompute samples in batches every few seconds.
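The arithmetic behind those figures is easy to reproduce. This back-of-the-envelope sketch counts one multiply-add per covariance entry touched and ignores constant factors; `sampling_ops` is an illustrative helper.

```python
def sampling_ops(n_arms, d, full_covariance):
    """Rough op count for drawing one Gaussian posterior sample per arm."""
    per_arm = d * d if full_covariance else d
    return n_arms * per_arm

full_cov_ops = sampling_ops(10_000, 128, full_covariance=True)
diag_cov_ops = sampling_ops(10_000, 128, full_covariance=False)
reduction = full_cov_ops // diag_cov_ops
```

The diagonal approximation cuts the cost by a factor of d, which is what moves per-request sampling from unworkable to affordable at this candidate count.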

Meta-learning at inference. MAML-style methods require running k gradient steps per cold user at request time. At k=5, this is ~5 forward and backward passes through the model, typically 50–200 ms extra latency per request. Unacceptable for top-of-funnel. Reserve for a backend “learn-from-onboarding” flow that runs offline after the user finishes onboarding.

Cross-domain models with two encoders. EMCDR-style models with separate source-domain and target-domain encoders effectively double inference cost. Mitigate by precomputing source-domain embeddings nightly and only running the lightweight target-domain encoder online.

Content-based fallback. Cheap if you precompute item content embeddings and store them in the same ANN index as collaborative embeddings. Use a flag column to mark “cold” items and apply a popularity prior to their scores during ranking.

The pattern: keep the online path simple (an ANN call + a small ranker), push the cold-start intelligence into batch jobs that update the ANN index every few minutes.

Summary

Cold-start is not a single problem with a single solution; it’s a regime that demands different machinery at different points on the interaction-count axis.

- The taxonomy (user / item / system) determines which lever — exploration, content, transfer — is actually available.

- EMCDR and PTUPCDR turn a data-rich source domain into a prior on a data-sparse target. PTUPCDR’s per-user bridge is the current practical sweet spot.

- MAML and its recsys specialisation MeLU train models whose initialisations adapt in a few steps — perfect for the 3-10 interaction window.

- UCB1 and Thompson sampling give the few-shot regime a principled exploration rule with logarithmic regret.

- Content-based fallback is the unglamorous always-on safety net.

- The hybrid stack routes by interaction count and keeps a popularity circuit breaker.

Build the stack incrementally. Start with content + popularity, layer in meta-learning once you have enough users to meta-train, add cross-domain when a related domain becomes available. Measure cold-user metrics separately from warm metrics; aggregate numbers will hide the regime where you’re actually losing money.
