Recommendation Systems (15): Real-Time Recommendation and Online Learning

A practitioner's guide to real-time recommendation: streaming pipelines (Kafka + Flink), online learning (SGD, FTRL, AdaGrad), bandits (UCB, Thompson Sampling, LinUCB), latency budgets, feature freshness, concept drift, and the cache-vs-compute trade-off you actually tune in production.

A user opens your app at 14:02 and searches for ’trail running shoes’. By 15:30, they’ve moved on to reading kitchen reviews. A model that retrains nightly still shows them Salomon ads at 16:00 — and that gap is exactly the bug a real-time system fixes. The interesting part isn’t ‘make it faster’ but ‘what should be fast’ — most features add nothing to AUC even when made real-time, and the wrong design point wastes money without improving performance.

What You Will Learn#

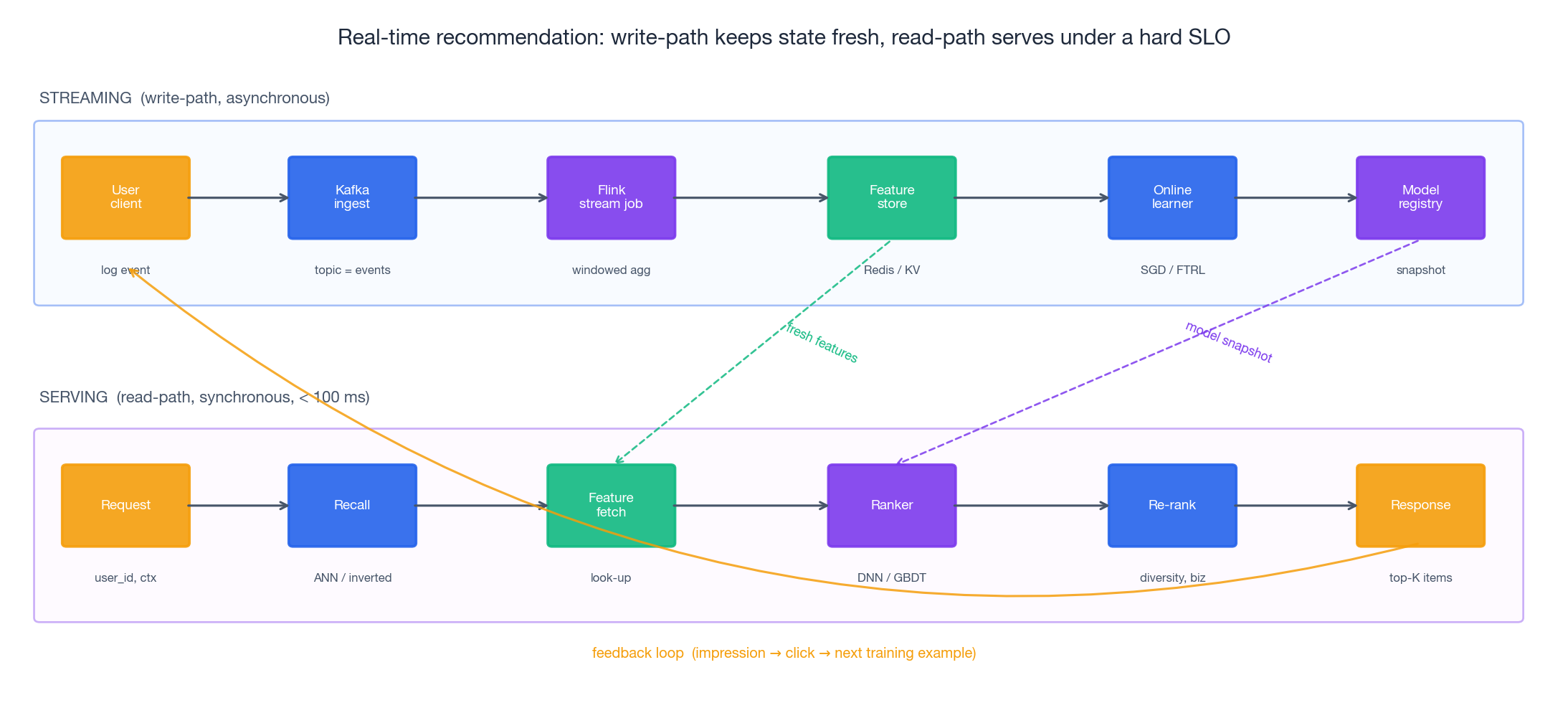

- The two paths: a real-time recommender is two pipelines glued together — an asynchronous write-path (events → state → model) and a synchronous read-path (request → recall → rank → response).

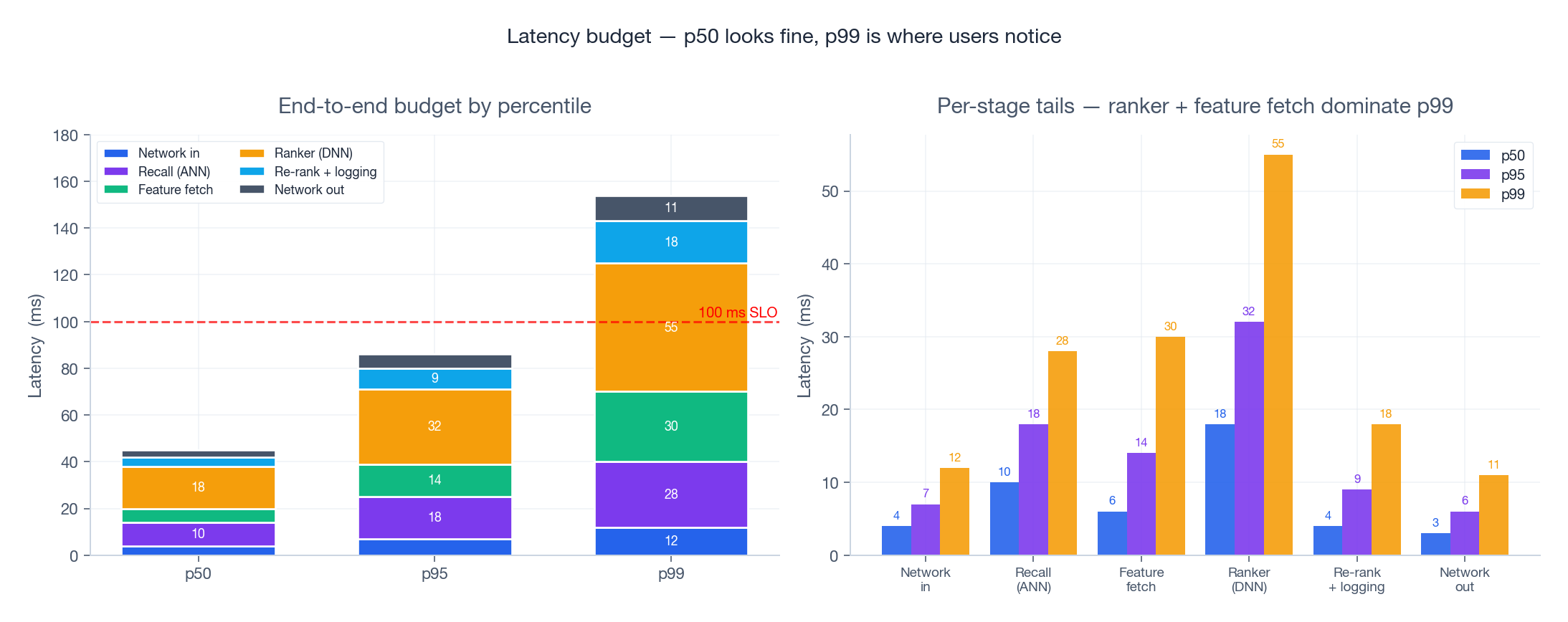

- Where the milliseconds go: latency is not an average; the p99 tail is what users feel. We break down the 100 ms budget by stage and by percentile.

- Online learning in practice: SGD, AdaGrad, and the production workhorse — FTRL-Proximal — that powers Google’s Ad Click Prediction.

- Bandits: UCB1, Thompson Sampling, and contextual LinUCB — what their regret bounds actually mean when items churn daily.

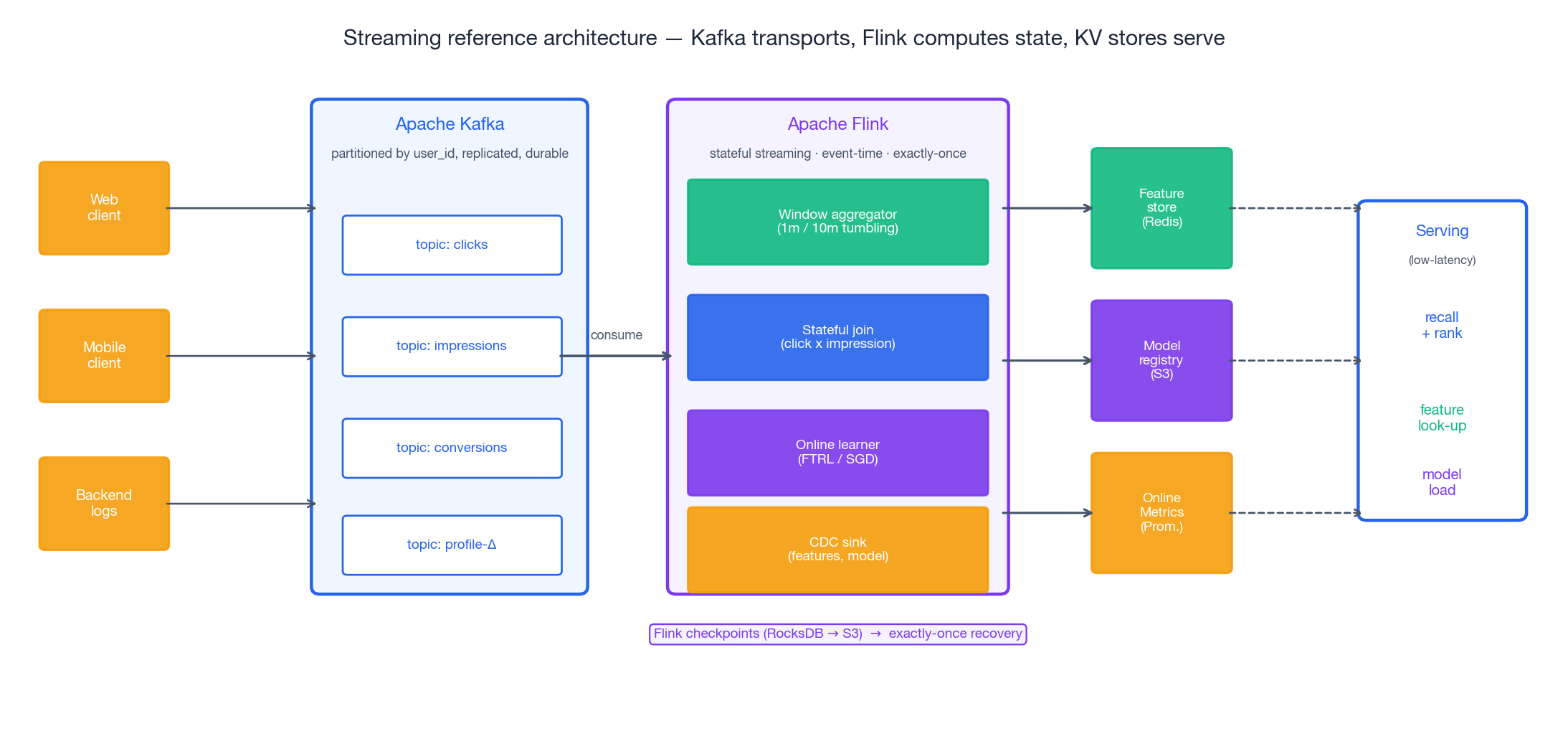

- Streaming architecture: a concrete Kafka + Flink + KV-store layout, with checkpointing and exactly-once semantics.

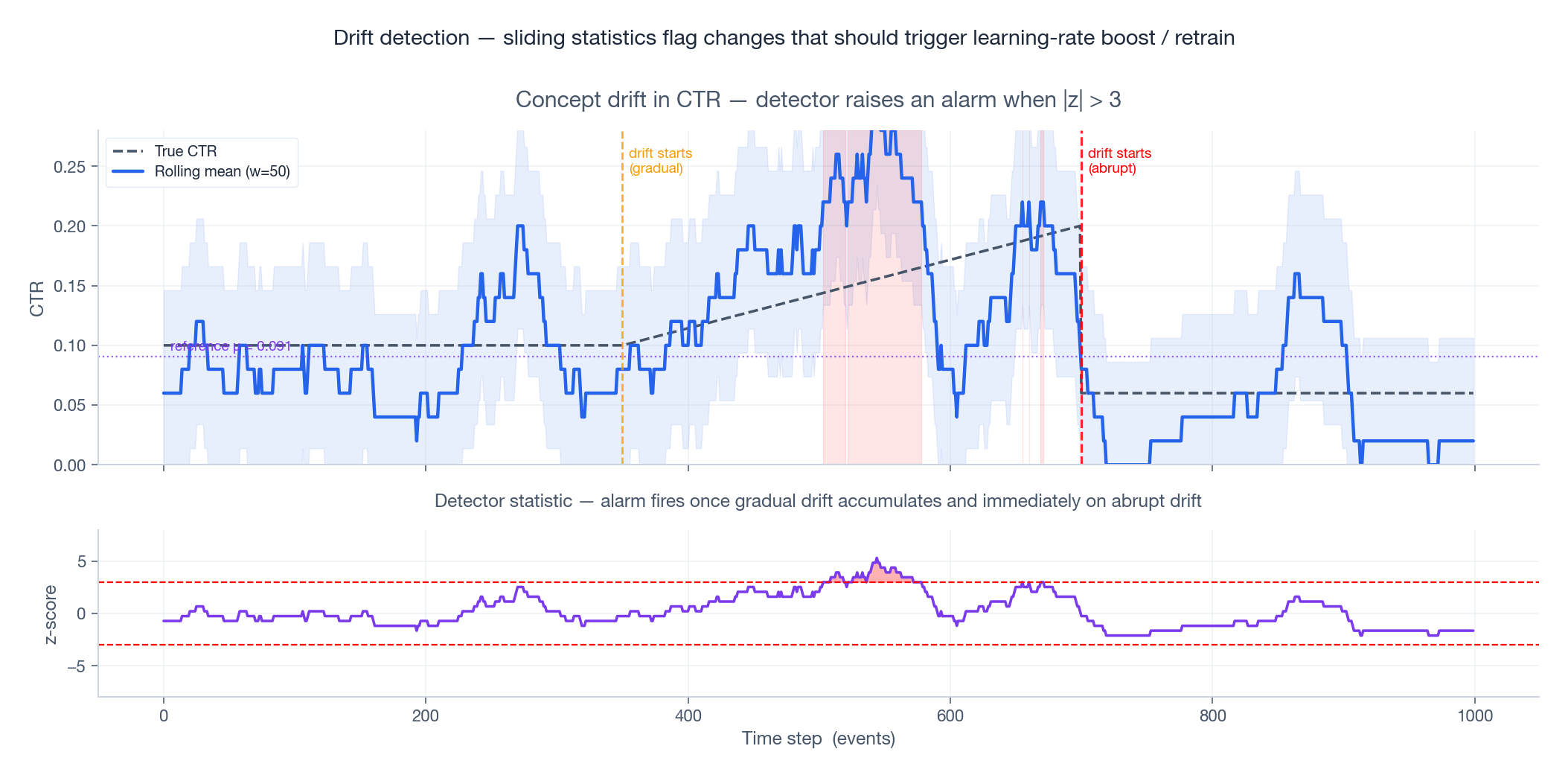

- Concept drift: how to detect it (ADWIN, DDM, page-Hinkley) and what to do once you have.

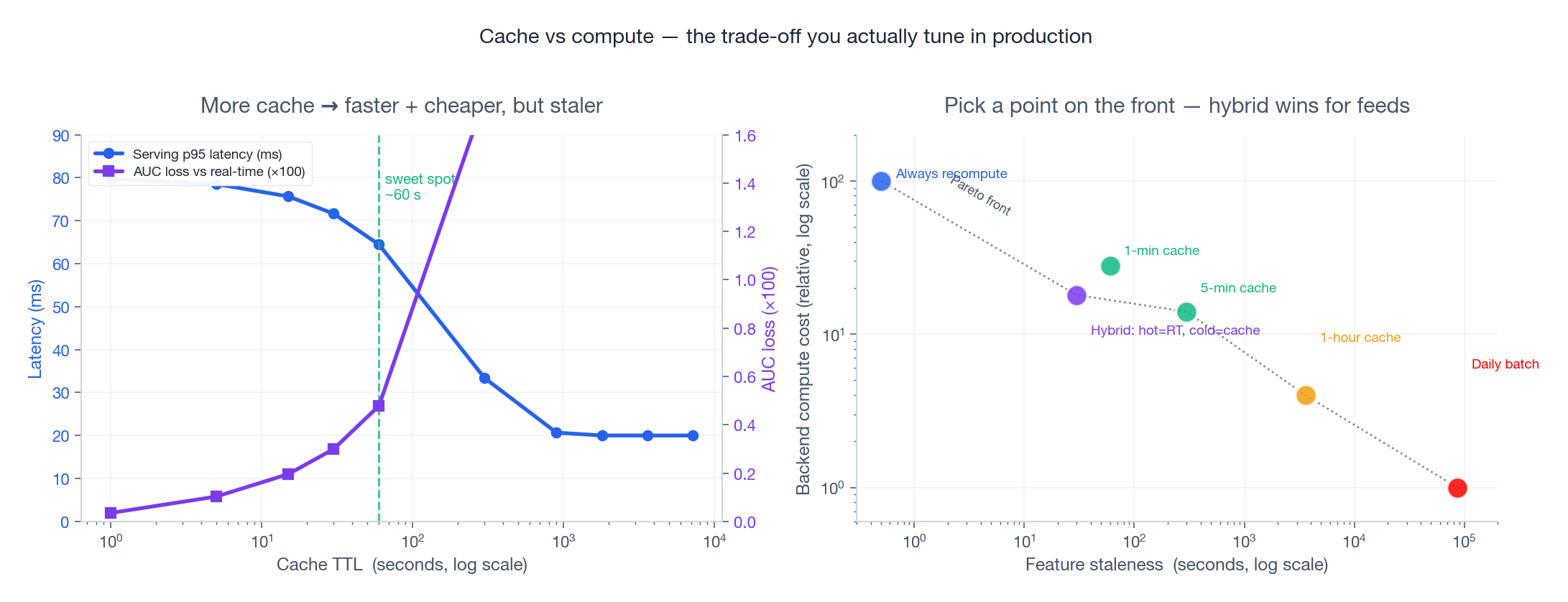

- Cache vs compute: the trade-off you actually tune — and why the answer is almost always hybrid.

Prerequisites#

- Python and NumPy (Parts 1-2)

- SGD and loss functions (Part 7 )

- The recommendation pipeline overview (Part 11 )

Why real-time, and what “real-time” actually means#

Three realities push toward real time:

- Sessions are short. Median session length on a feed app is 3-7 minutes. A model that updates daily literally never sees most sessions before they end.

- Trends are short-lived. A meme video can hit 80 % of its lifetime engagement in the first 6 hours. Yesterday’s batch model has no way to surface it.

- The feedback loop is the model. Once you serve a recommendation, the click that comes back is the next training example. Closing that loop in seconds vs days is the difference between a learning system and a stale one.

But “real-time” is not a single thing — it is a spectrum:

| Tier | Update cadence | Typical use |

|---|---|---|

| Real-time | < 1 second | session intent, in-feed dedup, abuse signals |

| Near-real-time | 1 second – 1 hour | recent-click sequences, per-creator CTR |

| Hourly | 1 – 24 hours | trending topics, item popularity decay |

| Batch | 1+ days | user demographics, item embeddings, retrieval index |

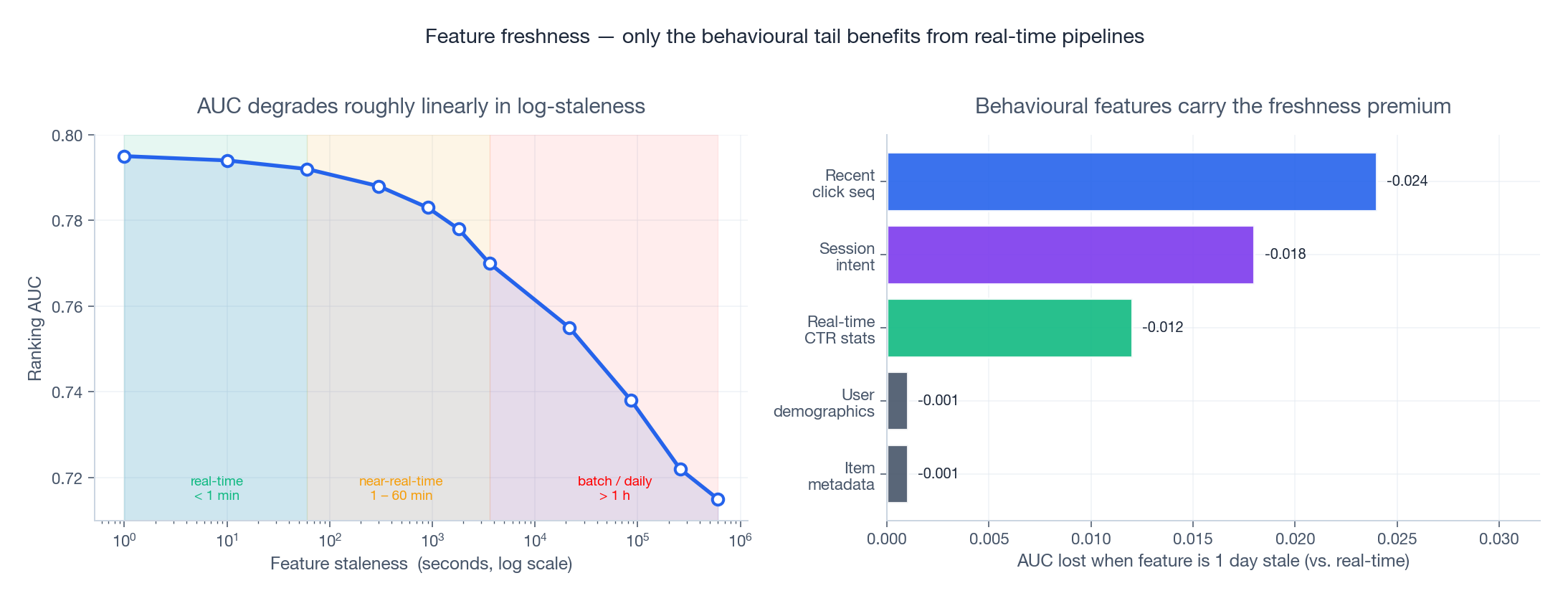

The mistake is making everything real-time. As Figure 4 shows, demographics and item metadata gain almost nothing from a real-time pipeline, but recent click sequences gain 2-3 AUC points. Real-time is a budget you spend on the features that move the metric.

The two-path architecture#

A real-time recommender decomposes cleanly into two paths:

Write-path (asynchronous, throughput-bound). Events leave the client, hit a Kafka topic partitioned by user_id, get aggregated by a Flink job into rolling windows (last-N clicks, 10-minute CTR, etc.), and land in two places: a feature store (Redis or RocksDB) for the read-path to consume, and an online learner that updates model weights. A new model snapshot is pushed to a registry every few minutes.

Read-path (synchronous, latency-bound). A serving request arrives. We perform recall (ANN over embeddings, plus inverted indexes for fresh items), fetch features in a single round-trip from the store, rank, re-rank for diversity and business rules, and return. Total budget: < 100 ms.

The discipline is keeping these two paths decoupled. The serving path never writes to the model, never trains, never blocks on stream processing. If the streaming side falls behind, serving keeps working with slightly stale features — degraded, not down.

The latency budget — where every millisecond goes#

A 100 ms end-to-end SLO is the industry norm for feed-style products (perception research puts the “feels instant” threshold around 100-200 ms for visual updates). Inside that budget, the split looks roughly like this:

| Stage | p50 | p95 | p99 | Notes |

|---|---|---|---|---|

| Network in | 4 | 7 | 12 | TLS handshake amortised by keep-alive |

| Recall (ANN) | 10 | 18 | 28 | HNSW or ScaNN over 100 M items |

| Feature fetch | 6 | 14 | 30 | Redis pipeline; tail = GC / network |

| Ranker (DNN) | 18 | 32 | 55 | Batched scoring over ~500 candidates |

| Re-rank + logging | 4 | 9 | 18 | Diversity, biz rules, async log |

| Network out | 3 | 6 | 11 | |

| End-to-end | 45 | 86 | 154 | p99 blows the SLO — that’s normal |

Two practical lessons:

- Average latency lies. A p50 of 45 ms looks like room to spare. The p99 of 154 ms means 1 % of requests miss the SLO — that’s millions per day on a billion-request platform.

- The ranker dominates. Batching candidates, model distillation, and TensorRT/ONNX-Runtime quantization buy more than any single optimization elsewhere. Pinterest reported a 30 % p99 reduction by moving from a 6-layer DNN to a distilled 2-layer student plus feature crosses.

Streaming: Kafka + Flink in production#

Kafka — the durable transport#

Kafka’s role is narrow but essential: a durable, partitioned, and replayable log. Three properties matter:

- Partitioning by

user_idkeeps a single user’s events on a single partition, which preserves causal order — critical for stateful joins like “did the click come before or after the impression?”. - Replication (typically

replication-factor=3) means a broker can die without data loss. - Retention lets you replay the last 7 days for backfilling a new model — the same code path serves online and recovery.

What Kafka does not do: aggregation, joins, machine learning. It is a postal service.

| |

Flink — the stateful compute#

Where Kafka is a transport, Flink is a stateful stream processor. The killer feature is exactly-once processing under failure, achieved by Chandy-Lamport-style distributed snapshots: every checkpoint interval (default 60 s), Flink atomically captures the state of every operator and writes it to durable storage (S3). On failure, it rewinds Kafka offsets to the last checkpoint and replays — the externally visible effect is as if no failure occurred.

A canonical Flink job for click attribution:

| |

The watermark is the part most people get wrong. It says “I will not see any event with event_time < watermark.” That gives Flink permission to close and emit a window. Set it too tight and you drop late events; set it too loose and your features are systematically delayed.

Online learning: from SGD to FTRL#

The core update#

$$\theta_{t+1} = \theta_t - \eta_t \, \nabla_\theta \mathcal{L}(\sigma(\theta_t^\top x_t), y_t)$$That is plain SGD. It works, but on web-scale CTR data — millions of sparse features, each appearing in a small fraction of examples — it has two problems:

- No per-feature learning rate: a feature that fires 1 in 10 000 needs bigger steps than one that fires every example.

- No sparsity: weights drift away from zero on noise. A model with 10⁹ parameters that never zeros any of them is unservable.

AdaGrad — adaptive per-feature step#

$$\theta_{t+1, i} = \theta_{t, i} - \frac{\eta}{\sqrt{G_{t, i} + \varepsilon}} g_{t, i}, \quad G_{t, i} = \sum_{s=1}^{t} g_{s, i}^2$$A rare feature has small $G$ , so it gets a big step the few times it does fire. A common feature has large $G$ and is updated cautiously.

FTRL-Proximal — the production workhorse#

$$ z_{t,i} \leftarrow z_{t-1,i} + g_{t,i} - \frac{\sigma_{t,i}}{\eta} \theta_{t-1,i}, \qquad \theta_{t,i} = \begin{cases} 0 & \text{if } |z_{t,i}| \le \lambda_1 \\ -\frac{1}{\eta_{t,i}} \big(z_{t,i} - \mathrm{sign}(z_{t,i}) \lambda_1\big) & \text{otherwise} \end{cases} $$where $\sigma_{t,i} = \frac{1}{\eta_{t,i}} - \frac{1}{\eta_{t-1,i}}$ and $\eta_{t,i} = \alpha / (\beta + \sqrt{\sum_s g_{s,i}^2})$ .

The crucial property: the model is genuinely sparse. Google reported FTRL-Proximal cutting model size by an order of magnitude versus naive SGD with $L_2$ — at the same AUC.

| |

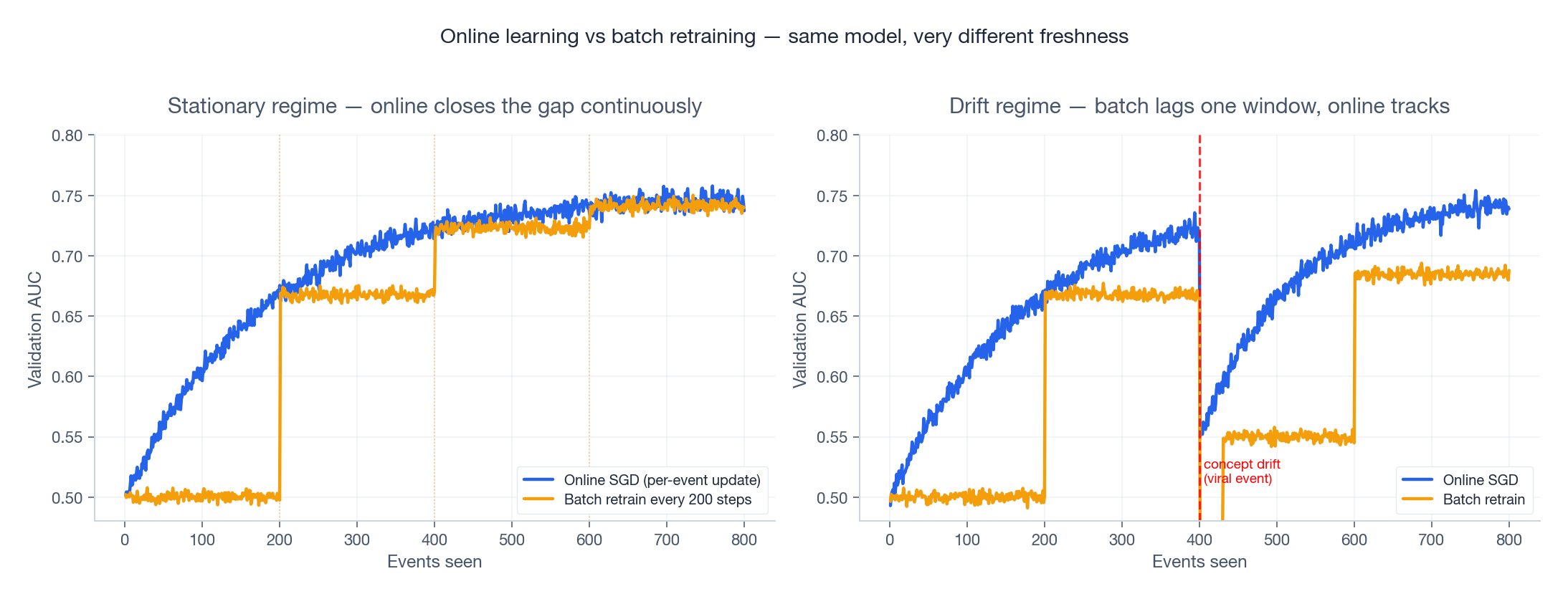

Online vs batch — the picture#

The left panel of Figure 3 shows the stationary case: online learning converges smoothly while batch retraining produces a staircase — a fresh snapshot every 200 events, plateaus in between. On a stable task the gap closes in expectation, but online wins on integral over time.

The right panel is where it matters: a sudden distribution shift (a viral event, a new product launch, a holiday). The batch model, blind to anything outside its training window, takes a full window to recover. Online learning starts adjusting on the very first new example and is back to near-peak AUC in roughly 100 events. In production, drift is the rule, not the exception — and that is what makes online learning a real lever, not just an academic preference.

Feature freshness — how much does it actually matter?#

The freshness question is what a serious team argues about, because making something real-time is expensive. The empirical pattern from public reports (Meta’s deep learning recommendation models, Pinterest’s PinnerSAGE, ByteDance’s Monolith) is consistent:

- AUC drops roughly linearly in log-staleness. Going from 1 second to 1 minute: tiny loss. From 1 minute to 1 hour: meaningful (~0.005 AUC). From 1 hour to 1 day: substantial (~0.015-0.020 AUC).

- The loss is concentrated in behavioral features. Recent click sequences and session intent are responsible for ~80 % of the freshness premium. Demographics and item metadata are essentially flat — you could update them weekly with no measurable impact.

This shapes the architecture: do not pay the streaming cost for features that don’t pay back. A typical feed system runs three pipelines side by side — real-time for behavioral, hourly for popularity-style aggregates, daily for embeddings and demographics.

Bandits — the principled exploration story#

The problem in one sentence#

$$R_T = T \mu^* - \mathbb{E}\!\left[\sum_{t=1}^T \mu_{a_t}\right]$$A “good” algorithm has sublinear regret: $R_T = o(T)$ , i.e. average per-round loss → 0.

UCB1 — be optimistic in the face of uncertainty#

$$a_t = \arg\max_i \left( \hat\mu_i + \sqrt{\frac{2 \ln t}{n_i}} \right)$$where $n_i$ is how many times arm $i$ has been pulled. The bonus shrinks as $n_i$ grows — explore until you’re sure, then exploit. UCB1 achieves $O(\log T)$ regret, which is provably optimal up to constants for stationary bandits (Lai-Robbins lower bound).

Thompson Sampling — the Bayesian way#

$$\theta_i \sim \text{Beta}(\alpha_i, \beta_i), \quad \text{update: } (\alpha_i, \beta_i) \leftarrow (\alpha_i + r, \beta_i + 1 - r)$$Each round, sample one $\theta_i$ from each arm’s posterior and pick the highest sample. Wide posteriors get explored (their samples are noisy and occasionally land high); narrow posteriors get exploited. Thompson Sampling matches UCB1’s $O(\log T)$ asymptotically (Agrawal & Goyal, 2012) and beats it in practice on most benchmarks, while being simpler to extend to delayed feedback, batched updates, and complex reward models.

| |

LinUCB — context that actually matters#

$$\mathbb{E}[r_a \mid x_t] = x_t^\top \theta_a$$ $$a_t = \arg\max_a \left( x_t^\top \hat\theta_a + \alpha \sqrt{x_t^\top A_a^{-1} x_t} \right), \quad \hat\theta_a = A_a^{-1} b_a$$The bonus term $\sqrt{x_t^\top A_a^{-1} x_t}$ is the predictive standard deviation of ridge regression — large when the current context is unlike anything we’ve seen for this arm. Regret is $\tilde O(\sqrt{d T})$ where $d$ is the context dimension.

| |

In practice you almost never use a “pure” LinUCB. The serving system runs a deep ranker; bandits live one layer above, deciding which strategy (creator-boost, fresh-item-boost, exploitation) to allocate to a given request. The arms are policies, not items.

Concept drift — detect, don’t pretend it isn’t there#

Online learning adapts to drift only if the learner can adapt — if the learning rate has decayed to nothing, it can’t. Production systems explicitly detect drift and react.

Three classical detectors#

- Page-Hinkley test: cumulative sum of deviations from the mean; alarm when CUSUM exceeds a threshold. Good for monotonic drift.

- DDM (Drift Detection Method, Gama et al. 2004): tracks the binomial error rate $p_t$ and its standard deviation. Warning at $p_t + s_t \ge p_{\min} + 2 s_{\min}$ , alarm at $\ge p_{\min} + 3 s_{\min}$ .

- ADWIN (Bifet & Gavaldà 2007): maintains an adaptive window; whenever the mean of two sub-windows differs by more than a Hoeffding bound, the older half is dropped. Provably bounded false-alarm rate.

The simple z-score variant in Figure 6 is what you actually deploy for a v1: keep a reference window of “known-good” performance, compute the z-score of recent rolling-mean CTR vs that reference, alarm at $|z| > 3$ .

Reacting to drift#

Detection without a response is just an alert. Practical reactions, in order of cost:

- Bump the learning rate (cheap, reversible). Multiply $\eta$ by 2-5x for a fixed window.

- Reset bandit posteriors (medium). Halve $\alpha, \beta$ for affected arms — keeps the prior shape but doubles uncertainty.

- Force a checkpoint reload (expensive). Roll back to the last known-good snapshot, replay from Kafka, retrain on the post-drift window.

| |

Cache vs compute — the trade-off you actually tune#

Every read-path decision lands somewhere on this trade-off:

- Always recompute is correct but expensive — every request hits the model, every feature is computed fresh.

- Aggressive caching is cheap and fast but stale — minutes-old features for a session that just changed intent.

- The hybrid pattern is what production systems converge to:

- Hot path (top users / active sessions / recent items): compute live, no cache.

- Cold path (everyone else): serve from cache with a 30-60 s TTL.

- Negative cache: explicitly cache “nothing changed” responses to avoid repeated computation on idle users.

The right panel of Figure 7 shows why: a 60-second TTL captures most of the latency win at a tiny freshness cost; pure recompute spends 5x the compute for ~0.005 AUC. The “always recompute” point is on the front but rarely worth it.

Putting it together — a minimal but realistic system#

| |

A few things that look like trivia but matter in production:

- The bandit operates on strategies, not items. The action space of “pick one item out of 100 M” is too large for any contextual bandit to converge — but “pick one of 4 ranking heuristics” is exactly its sweet spot.

- Snapshots are a circular buffer of the online learner state, not the predictions. If a bad batch of training data corrupts weights, you roll back the state and replay clean events from Kafka.

DriftDetectorreads calibration error (|p - y|), not raw CTR. Calibration drift catches more failure modes than rate drift alone.

FAQ#

Q: How do I A/B test an online learning system? Standard A/B tests assume independent observations, but online learners learn from their treatment group. The fix is to use interleaved comparisons (Chapelle et al., 2012) or to hold a separate “frozen” model in one arm and the live online model in the other, then compare cumulative reward, not per-request CTR.

Q: What is the actual difference between Flink and Spark Streaming? Flink processes events one at a time with millisecond latency; Spark Streaming processes them in micro-batches with seconds of latency. For recommendations, Flink’s lower latency and its more mature exactly-once state management both matter — virtually every recently-built feed system at scale uses Flink (or proprietary equivalents like Twitter’s Heron, ByteDance’s Aiops).

Q: Is online learning unstable? It can be, and that is the failure mode you must engineer against. Three protections: (1) bound the learning rate; (2) clip gradients; (3) keep snapshots and a rollback path. Google’s FTRL paper devotes a full section to “tricks of the trade” — saturation guards, per-coordinate learning rate floors, calibration loss monitoring — that exist solely to make production online learning behave.

Q: How do bandits handle delayed reward? Two patterns. Batched updates: collect $k$ observations, update with all of them, repeat. Both UCB and Thompson Sampling have proofs that batching multiplies regret by a factor of at most $\log k$ . Optimistic counters: when an arm is pulled, increment $n_i$ immediately and assume reward = mean (or 0 for safety) — correct it once the real reward arrives. The optimistic variant is what production systems use because it keeps the system from over-pulling a single arm during a delay window.

Q: When should I not use a real-time pipeline? When the feature is slow-moving (demographics, long-term taste embeddings), when the cost of a stale recommendation is small (warehouse search, browsing taxonomies), or when you can’t measure improvement (a freshness bump from 1 h to 1 s on a low-traffic surface is invisible in the noise). A real-time pipeline costs ~10-100x of a daily batch — earn it.

Summary#

- A real-time recommender is two pipelines glued together by a feature store and a model registry: an asynchronous write-path that keeps state fresh, and a synchronous read-path that serves under a hard SLO.

- Latency is a tail problem. Optimise for p99, not p50 — the ranker dominates and is where distillation and quantisation pay back.

- Online learning is FTRL-Proximal in production. It is the algorithm Google published for ad CTR; SGD/AdaGrad are stepping stones.

- Streaming is Kafka + Flink. Kafka is durable transport; Flink is stateful compute with exactly-once semantics via distributed snapshots.

- Freshness has a price curve. Behavioral features pay back the streaming cost; demographics and item metadata don’t.

- Bandits work above the ranker, not at the item level. Their action space is “which policy”, not “which item”.

- Drift will happen. Detect it (z-score, ADWIN, DDM), react cheaply (bump $\eta$ ), keep a rollback path.

- Cache vs compute is not a binary. The production answer is hybrid — hot path live, cold path cached, with a 30-60 s TTL covering 95 % of traffic at a fraction of the cost.

The rule of thumb that has held across every system I have seen at scale: make real-time only what moves AUC, and budget the rest by how often it actually changes.

Recommendation Systems 16 parts

- 01 Recommendation Systems (1): Fundamentals and Core Concepts

- 02 Recommendation Systems (2): Collaborative Filtering and Matrix Factorization

- 03 Recommendation Systems (3): Deep Learning Foundations

- 04 Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

- 05 Recommendation Systems (5): Embedding and Representation Learning

- 06 Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

- 07 Recommendation Systems (7): Graph Neural Networks and Social Recommendation

- 08 Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

- 09 Recommendation Systems (9): Multi-Task Learning and Multi-Objective Optimization

- 10 Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

- 11 Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

- 12 Recommendation Systems (12): Large Language Models and Recommendation

- 13 Recommendation Systems (13): Fairness, Debiasing, and Explainability

- 14 Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

- 15 Recommendation Systems (15): Real-Time Recommendation and Online Learning you are here

- 16 Recommendation Systems (16): Industrial Architecture and Best Practices