Recommendation Systems (16): Industrial Architecture and Best Practices

Production recommendation systems serve hundreds of millions of users with sub-100ms latency. This final article covers the industrial multi-stage pipeline (recall, coarse ranking, fine ranking, reranking), feature stores, A/B testing, model optimization, deployment, and team responsibilities, drawing on patterns from YouTube, TikTok, Taobao, and ByteDance.

The hardest part of a production recommendation system isn’t the model. It’s the system around the model: the feature store that prevents training/serving skew, the canary deployment that catches regressions before they hit 100M users, and the orchestration that meets a 100ms p95 latency budget while running four ML models in sequence. This final article describes the architecture that every major tech company has converged on — and the trade-offs within each layer.

What You Will Learn#

- Multi-stage pipeline — recall, coarse ranking, fine ranking, and reranking, with the constraints that determine each stage’s design

- Multi-channel recall — combining collaborative filtering, two-tower deep learning, graph traversal, and real-time behaviour signals

- Ranking models in production — Wide & Deep, DeepFM, and DIN, with concrete code

- Reranking strategies — diversity (MMR), business rules, and freshness boosts

- Feature store — the offline + online architecture that decouples training from serving

- A/B testing — consistent assignment, z-test for proportions, and how long to run

- Performance optimisation — quantisation, distillation, and prediction caching

- Deployment and monitoring — canary rollouts, drift detection, and auto-rollback

- Team responsibilities — who owns recall, ranking, the feature store, and serving

Prerequisites#

- All previous parts of this series (especially Parts 7, 11, 15)

- Basic familiarity with distributed systems (load balancers, message queues)

- Comfortable with Python, PyTorch, and REST APIs

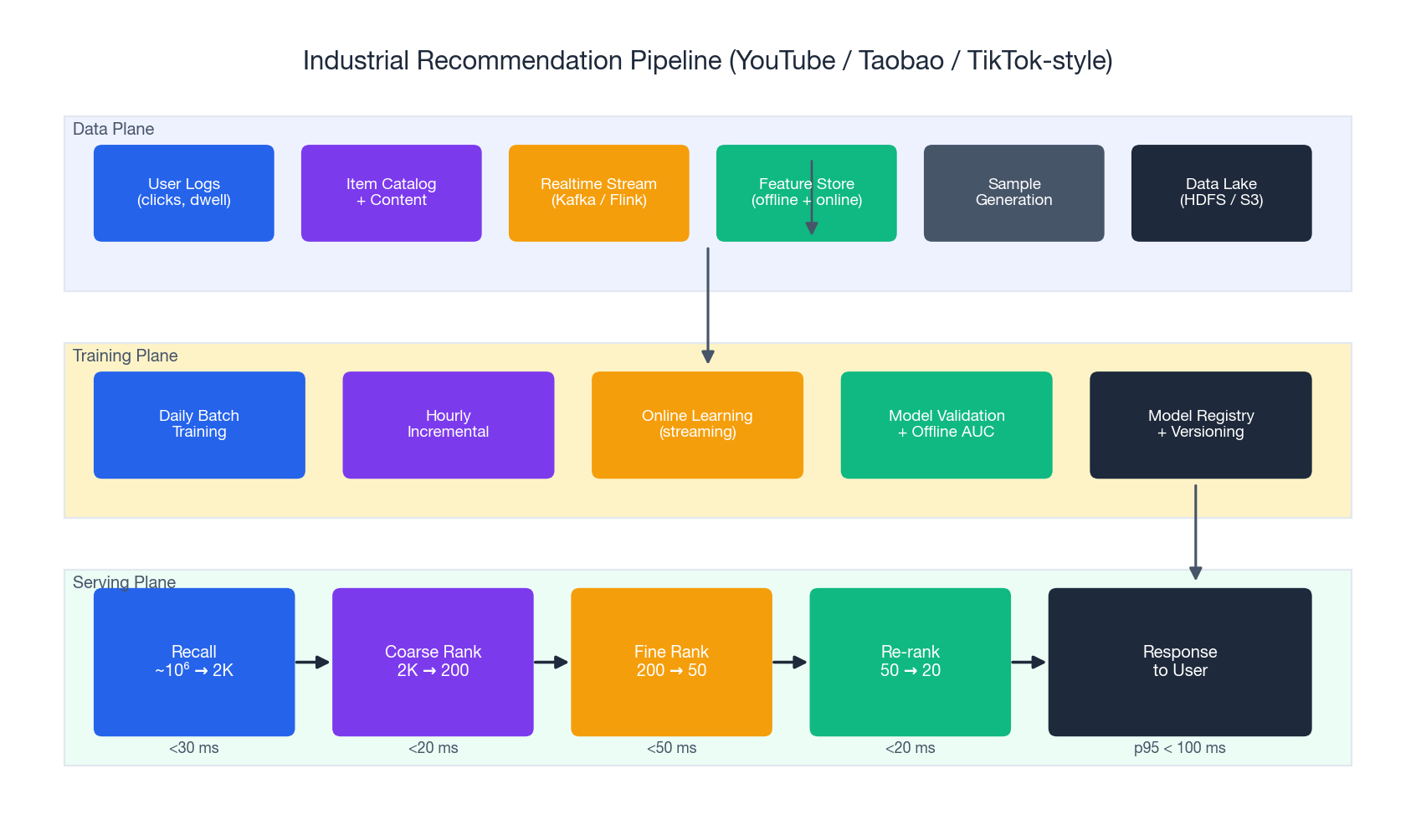

The Industrial Recommendation Pipeline#

Architecture Overview#

Every major tech company — Google, Amazon, Alibaba, ByteDance — has converged on the same three-plane architecture:

- Data plane generates samples and features from logs and content. This is where Hive, Spark, Flink, and Kafka live.

- Training plane turns samples into models, validates them offline, and writes the result to a model registry.

- Serving plane is the real-time funnel that the user actually waits on. It is the only plane with a strict latency budget.

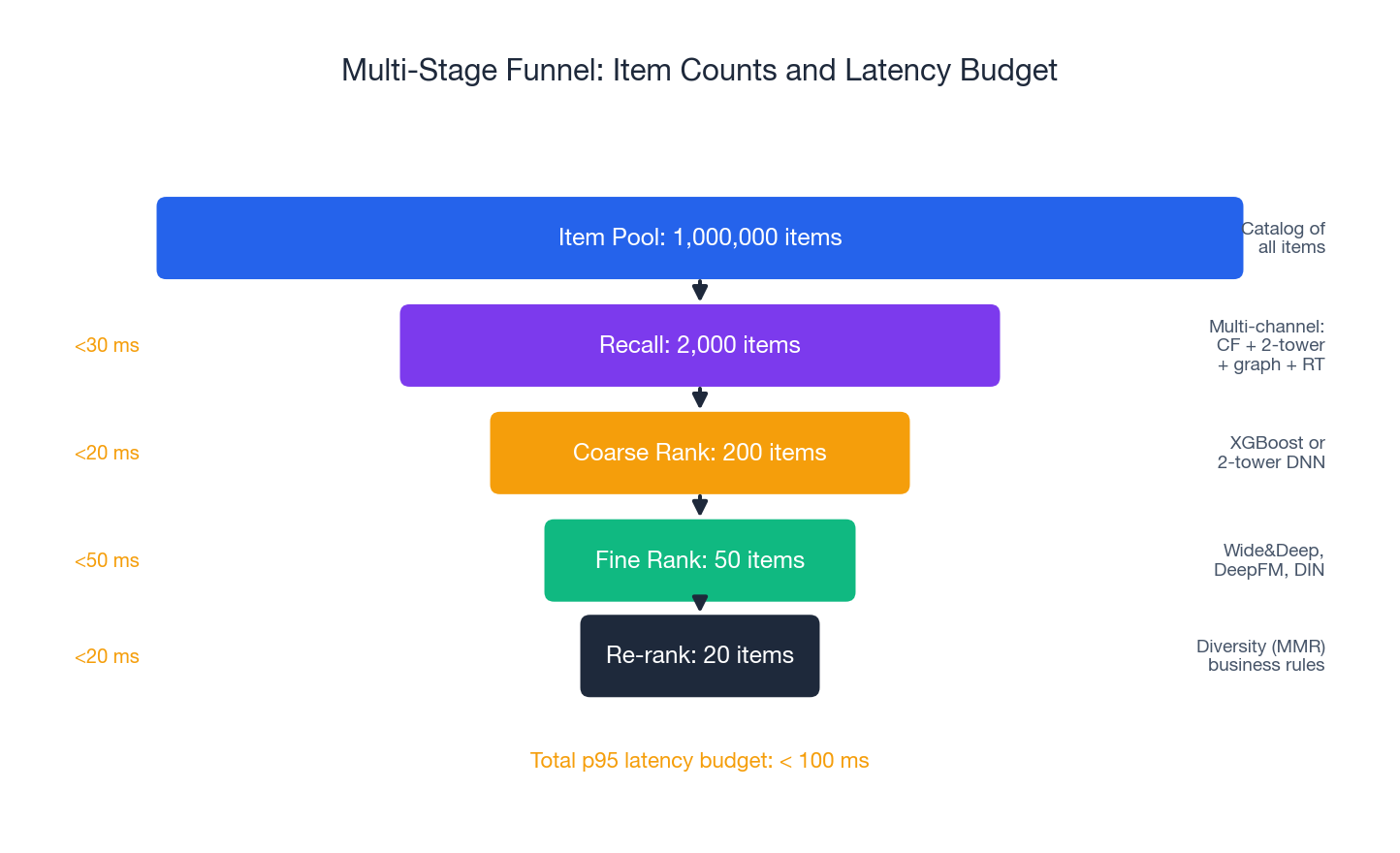

The serving plane itself is a funnel that progressively narrows the candidate set and increases scoring precision:

| Stage | Input → Output | Model class | Latency budget |
|---|---|---|---|
| Recall | 10⁶ → ~2,000 | Two-tower DNN, ANN, simple CF | 20-30 ms |
| Coarse ranking | ~2,000 → ~200 | Shallow DNN or XGBoost | 10-20 ms |
| Fine ranking | ~200 → ~50 | Wide & Deep, DeepFM, DIN | 30-50 ms |
| Reranking | ~50 → ~20 | Rules + lightweight ML | 10-20 ms |
| Total | | | < 100 ms p95 |

Think of it as a hiring funnel: recall is like a resume screen (fast, wide net), coarse ranking is the phone screen, fine ranking is the on-site interview, and reranking is the hiring committee that makes the final adjustments for diversity and team fit.

Why a Funnel Instead of One Big Model?#

The brute-force alternative, scoring every item with one heavy model, would take minutes per request: at ~250 microseconds per item, 10⁶ items cost roughly 250 seconds. The funnel provides orders of magnitude more speed because each stage uses a model appropriate for its candidate count: cheap models for many items, expensive models for few. Recall does not even score the full corpus; an ANN index visits only a small fraction of the items and answers in a few milliseconds. The fine ranker can afford ~250 microseconds per item precisely because it sees only ~200 items: 200 × 250 µs = 50 ms, which fits its budget.

Key Design Principles#

Stateless services. Every service must be horizontally scalable. State (user embeddings, recent behaviour) lives in Redis, KV stores, or feature stores — never in process memory.


Graceful degradation. Every component must have a fallback. If the deep recall channel times out, the system falls back to collaborative filtering. If everything fails, it serves popular items. The user must never see an empty page.


Latency budget enforcement. Every call has an aggressive timeout, and the orchestrator enforces it. A slow recall channel does not delay the whole pipeline — it is dropped on the floor.
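A minimal asyncio sketch of the last two principles. Every recall channel gets a hard timeout, and a channel that times out or raises is dropped. If nothing survives, a precomputed popular list is served. The channel functions, `POPULAR_ITEMS`, and the 25 ms budget are hypothetical placeholders, not a real service API.

```python
import asyncio

POPULAR_ITEMS = ["pop_1", "pop_2", "pop_3"]  # precomputed fallback, always available

# Hypothetical recall channels: each returns a ranked list of item IDs.
async def fast_cf(user_id):
    return ["item_1", "item_2", "item_3"]

async def slow_two_tower(user_id):
    await asyncio.sleep(0.2)  # simulates a channel that blows its budget
    return ["item_9"]

async def failing_channel(user_id):
    raise RuntimeError("index unavailable")

async def call_with_budget(channel, user_id, timeout_s):
    """Return the channel's list, or None if it times out or raises."""
    try:
        return await asyncio.wait_for(channel(user_id), timeout=timeout_s)
    except Exception:  # TimeoutError included: any failure drops the channel
        return None

async def recall(user_id, channels, timeout_s=0.025):
    # Channels run concurrently, each under the same hard timeout.
    results = await asyncio.gather(
        *(call_with_budget(ch, user_id, timeout_s) for ch in channels)
    )
    # Keep only channels that returned a non-empty list in time.
    surviving = {ch.__name__: r for ch, r in zip(channels, results) if r}
    # Last resort: never hand an empty page to the user.
    return surviving or {"popular": POPULAR_ITEMS}
```

With `[fast_cf, slow_two_tower]` only the collaborative-filtering list comes back. With `[failing_channel, slow_two_tower]` the popular-items fallback is served.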

The Funnel in Detail#

The funnel above shows the order-of-magnitude reduction at each stage. Two design rules are worth memorising:

- The recall stage sets the upper bound on quality. If a great item never enters the funnel, no amount of fine ranking can save it. This is why production systems run multiple recall channels in parallel.

- The narrower the stage, the heavier the model can be. Fine ranking on 200 items can afford a 100M-parameter DIN. Recall on a million items cannot afford anything heavier than two embedding lookups and a dot product.

Multi-Channel Recall#

A single recall strategy will always miss something. Collaborative filtering misses cold items, content recall misses serendipitous discoveries, and real-time signals miss the user’s longer-term interests. Therefore, production systems run 3-5 recall channels in parallel and merge the results.

Channel 1: Two-Tower Deep Recall#

The two-tower architecture is the workhorse of modern recall. The user tower runs at request time; the item tower runs offline and its embeddings are loaded into an ANN index (Faiss or HNSW). At serving time, recall is a single ANN query in 5-10 ms.


Two practical notes. First, the loss matters: in-batch sampled softmax with logQ correction for popularity bias is now standard (Yi et al., RecSys 2019, the YouTube paper). Second, the item index needs to be rebuilt when item embeddings drift — typically hourly for fast-moving catalogues, daily otherwise.
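A minimal sketch of the in-batch sampled-softmax loss with logQ correction, written in plain Python so the arithmetic is visible. Row i's positive item is item i, and every other item in the batch serves as a negative. Subtracting log q(item) from each logit compensates for popular items turning up as in-batch negatives more often than rare ones. The `item_log_q` values are assumed inputs here. The Yi et al. paper estimates them with a streaming frequency estimator, which this sketch does not implement.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def in_batch_softmax_logq_loss(user_embs, item_embs, item_log_q):
    """Mean -log softmax of the positive (diagonal) pair for each row.

    user_embs[i] and item_embs[i] are the positive pair for row i; the other
    items in the batch are negatives. item_log_q[j] is the (assumed) log
    sampling probability of item j, subtracted from its logit.
    """
    batch = len(user_embs)
    total = 0.0
    for i in range(batch):
        logits = [dot(user_embs[i], item_embs[j]) - item_log_q[j] for j in range(batch)]
        m = max(logits)  # log-sum-exp with max subtraction for numerical stability
        log_z = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_z - logits[i]
    return total / batch
```

A uniform log q shifts every logit equally, so it leaves the loss unchanged. The correction only matters when items are sampled at different rates.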

Channel 2: Graph-Based Recall#

Graph recall finds items through multi-hop traversal: user A liked items X and Y; user B liked Y and Z; therefore Z is a candidate for A. This catches discoveries that pure embedding similarity misses.
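A sketch of the traversal just described, assuming interactions arrive as hypothetical `(user, item)` pairs. It walks user → item → other user → item on the bipartite graph and scores each unseen item by the number of paths that reach it. Production graph recall usually weights or samples these paths (for example with random walks or a GNN), which this sketch omits.

```python
from collections import Counter, defaultdict

def build_graph(interactions):
    """Turn (user, item) pairs into user->items and item->users adjacency maps."""
    user_items, item_users = defaultdict(set), defaultdict(set)
    for user, item in interactions:
        user_items[user].add(item)
        item_users[item].add(user)
    return user_items, item_users

def graph_recall(user, user_items, item_users, k=10):
    """Candidates reachable via user -> item -> other user -> item."""
    seen = user_items.get(user, set())
    scores = Counter()
    for item in seen:
        for neighbour in item_users[item]:
            if neighbour == user:
                continue
            for candidate in user_items[neighbour]:
                if candidate not in seen:
                    scores[candidate] += 1  # one more co-engagement path
    return [item for item, _ in scores.most_common(k)]
```

With A liking X and Y and B liking Y and Z, `graph_recall("A", ...)` returns `["Z"]`, matching the example above.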


Channel 3: Real-Time Behaviour Recall#

A recall channel that captures what the user is doing right now. If a user just clicked three items in the same category, the next recommendation should reflect that within seconds, not days.
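A sketch of a session-level recall channel, assuming hypothetical `item_category` and `items_by_category` lookups. It keeps a short window of recent clicks per user and recalls popular items from the dominant category in that window. The per-user state sits in process memory only to keep the example self-contained. Under the stateless-services principle above, a real deployment would keep it in Redis or a feature store updated by a stream processor.

```python
from collections import Counter, deque

class RealTimeRecall:
    def __init__(self, item_category, items_by_category, window=5):
        self.item_category = item_category          # item -> category
        self.items_by_category = items_by_category  # category -> items, most popular first
        self.window = window
        self.recent = {}                            # user -> deque of recent item IDs

    def on_click(self, user, item):
        # The deque drops the oldest click once the window is full.
        self.recent.setdefault(user, deque(maxlen=self.window)).append(item)

    def recall(self, user, k=3):
        clicks = self.recent.get(user)
        if not clicks:
            return []
        # The most frequent category in the recent window drives the candidates.
        top_category, _ = Counter(self.item_category[i] for i in clicks).most_common(1)[0]
        return [i for i in self.items_by_category[top_category] if i not in clicks][:k]
```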


Channel Fusion#

Each channel returns a ranked list. The merger uses rank-based fusion rather than raw scores, because scores from different channels are not comparable.

This rank-based scheme is reciprocal rank fusion (RRF). It is robust to scale differences and well known from search engines. The per-channel timeout (25 ms in this design) is non-negotiable: a slow channel is dropped, never blocked on.
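A minimal RRF sketch. Each item earns 1 / (k + rank) from every channel list it appears in, and the items are sorted by their summed scores. The constant k = 60 is the value used in the original RRF paper (Cormack et al., 2009) and a common default; treat it as tunable. The channel names in the usage example are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(channel_results, k=60, limit=None):
    """Fuse ranked lists by summing 1 / (k + rank) over the lists an item appears in.

    channel_results: dict of channel name -> ranked list of item IDs (best first).
    Raw channel scores are never used, so differently scaled channels fuse cleanly.
    """
    fused = defaultdict(float)
    for ranked in channel_results.values():
        for rank, item in enumerate(ranked, start=1):
            fused[item] += 1.0 / (k + rank)
    merged = sorted(fused, key=fused.get, reverse=True)
    return merged if limit is None else merged[:limit]
```

An item ranked moderately high by two channels (here `"b"`) beats an item ranked first by only one channel.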

Ranking: Coarse and Fine#

Coarse Ranking#

Coarse ranking trims thousands of candidates down to hundreds with a fast, lightweight model. The point is eliminating obviously bad candidates cheaply — not perfect ranking. Two patterns dominate:

- A shallow two-tower model whose item-side runs offline (similar to recall but with richer features).

- An XGBoost ranker on simple features (popularity, CTR, basic user/item stats).

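A common mistake is making coarse ranking too good. If its top-200 already matches what the fine ranker would choose, the extra capacity spent in the coarse stage is wasted: it pays for precision that the fine ranker supplies anyway. A practical target is a coarse-stage recall@200 of around 0.7, measured against the fine ranker's own top-200 over the full candidate set. That is enough to filter, but not enough to dominate the compute budget.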

Fine Ranking: Wide & Deep, DeepFM, DIN#

Fine ranking runs heavy models on the reduced candidate set. Three architectures dominate production CTR prediction.

Wide & Deep (Google, 2016) combines memorisation (a wide linear model on cross features) with generalisation (a deep MLP on embeddings).

DeepFM (Huawei, 2017) replaces the hand-crafted wide cross features with a factorisation machine that learns pairwise interactions automatically. This is the right default if you do not want to hand-curate cross features.
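The key trick behind the FM component is computing all pairwise interactions in linear time. Below is a plain-Python check of that identity. It assumes each row is one field's embedding already multiplied by its feature value (for one-hot fields the value is 1). In the full DeepFM model, this second-order term is added to the first-order term and the DNN output before the sigmoid.

```python
def fm_pairwise_naive(embeddings):
    """Sum of <v_i, v_j> over all field pairs i < j: O(n^2 * d)."""
    n = len(embeddings)
    return sum(
        sum(a * b for a, b in zip(embeddings[i], embeddings[j]))
        for i in range(n) for j in range(i + 1, n)
    )

def fm_pairwise_fast(embeddings):
    """Same quantity in O(n * d): 0.5 * sum_f[(sum_i v_if)^2 - sum_i v_if^2]."""
    dim = len(embeddings[0])
    total = 0.0
    for f in range(dim):
        column = [v[f] for v in embeddings]
        total += sum(column) ** 2 - sum(x * x for x in column)
    return 0.5 * total
```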

DIN (Deep Interest Network, Alibaba, 2018) adds an attention mechanism over the user’s behaviour sequence. Instead of averaging the embeddings of all past items, DIN attends to the past items most similar to the current candidate.

The attention trick matters: a user who has bought 50 books in 5 categories does not have a single “average interest” — they have category-specific interests, and DIN unlocks them per candidate.
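A simplified sketch of candidate-conditioned pooling. It weights each history item by its similarity to the candidate before summing, so the same history yields a different user vector for each candidate. Note the simplification: DIN itself scores each (history item, candidate) pair with a small learned MLP (the "activation unit") and deliberately does not softmax-normalise the weights. Here a dot product followed by a softmax stands in for that unit.

```python
import math

def attention_pool(history, candidate):
    """Similarity-weighted sum of history embeddings (softmax over dot products)."""
    scores = [sum(h * c for h, c in zip(item, candidate)) for item in history]
    m = max(scores)  # subtract the max before exp for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    weights = [e / z for e in exps]
    return [sum(w * item[d] for w, item in zip(weights, history)) for d in range(len(candidate))]

def mean_pool(history):
    """The candidate-independent baseline DIN improves on."""
    return [sum(col) / len(history) for col in zip(*history)]
```

For a history of two book-like vectors and one shoe-like vector, mean pooling blends them. Attention pooling against a shoe candidate returns almost exactly the shoe vector.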

Reranking#

Reranking is where business logic meets algorithmic output. Three patterns appear in almost every production system.

Diversity (MMR)#

Pure CTR optimisation produces a list in which every item looks the same: the user clicks the first item, then drops off. Maximal Marginal Relevance (MMR) greedily picks items that balance relevance against novelty with respect to the items already selected.
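A greedy MMR sketch. At each step it picks the item maximising (1 - w) * relevance - w * (max similarity to the items already chosen), where w is the diversity weight. The `similarity` function is a caller-supplied assumption and should return values in [0, 1] (for example cosine similarity of item embeddings, or 1 for items in the same category).

```python
def mmr_rerank(candidates, relevance, similarity, k, diversity_weight=0.25):
    """Greedy Maximal Marginal Relevance over `candidates`.

    relevance: dict item -> relevance score (e.g. predicted CTR).
    similarity: callable (item_a, item_b) -> value in [0, 1].
    """
    selected, remaining = [], list(candidates)

    def marginal(item):
        # Redundancy is the similarity to the closest already-selected item.
        redundancy = max((similarity(item, s) for s in selected), default=0.0)
        return (1 - diversity_weight) * relevance[item] - diversity_weight * redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=marginal)
        selected.append(best)
        remaining.remove(best)
    return selected
```

With two similar high-relevance items and one dissimilar item, a weight of 0.25 interleaves the dissimilar item second. A weight of 0 falls back to pure relevance order.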

The diversity weight (typically 0.2-0.3) is itself an A/B test parameter. Too low and the feed becomes monotone; too high and CTR drops because relevance is sacrificed.

Business Rules#

Hard constraints live here, not in the ML model. Out-of-stock filtering, regulatory compliance, promoted-item boosting — these are deterministic rules, easier to reason about as code than as features.
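A sketch of a deterministic rule layer, assuming a hypothetical `Candidate` record with stock and promotion flags. Hard filters run first, then boosts, then a re-sort. The blocklist and the 1.2× promotion boost are illustrative values, not recommendations. Real systems usually load them from an auditable config or policy service.

```python
from dataclasses import dataclass, replace

@dataclass
class Candidate:
    item_id: str
    score: float
    in_stock: bool = True
    promoted: bool = False

def apply_business_rules(candidates, blocklist, promo_boost=1.2):
    """Hard filters, then boosts, then re-sort; input objects are not mutated."""
    kept = [c for c in candidates if c.in_stock and c.item_id not in blocklist]
    adjusted = [
        replace(c, score=c.score * promo_boost) if c.promoted else c
        for c in kept
    ]
    return sorted(adjusted, key=lambda c: c.score, reverse=True)
```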


Freshness Boost#

For news, video, and short-form content, recency is a feature in itself. An exponential decay gives recent items a bounded boost without letting them dominate the list.
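A sketch of a bounded exponential freshness boost. The score is multiplied by 1 + max_boost * 2^(-age / half_life). A brand-new item therefore gets at most a (1 + max_boost)× lift, which halves every `half_life_hours` and fades towards no boost for old items. The 30% cap and 6-hour half-life are illustrative assumptions, and both are natural A/B test parameters.

```python
import math

def freshness_boost(score, age_hours, max_boost=0.3, half_life_hours=6.0):
    """Multiply `score` by a recency factor in (1, 1 + max_boost]."""
    decay = math.exp(-math.log(2) * age_hours / half_life_hours)  # = 2^(-age / half_life)
    return score * (1.0 + max_boost * decay)
```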

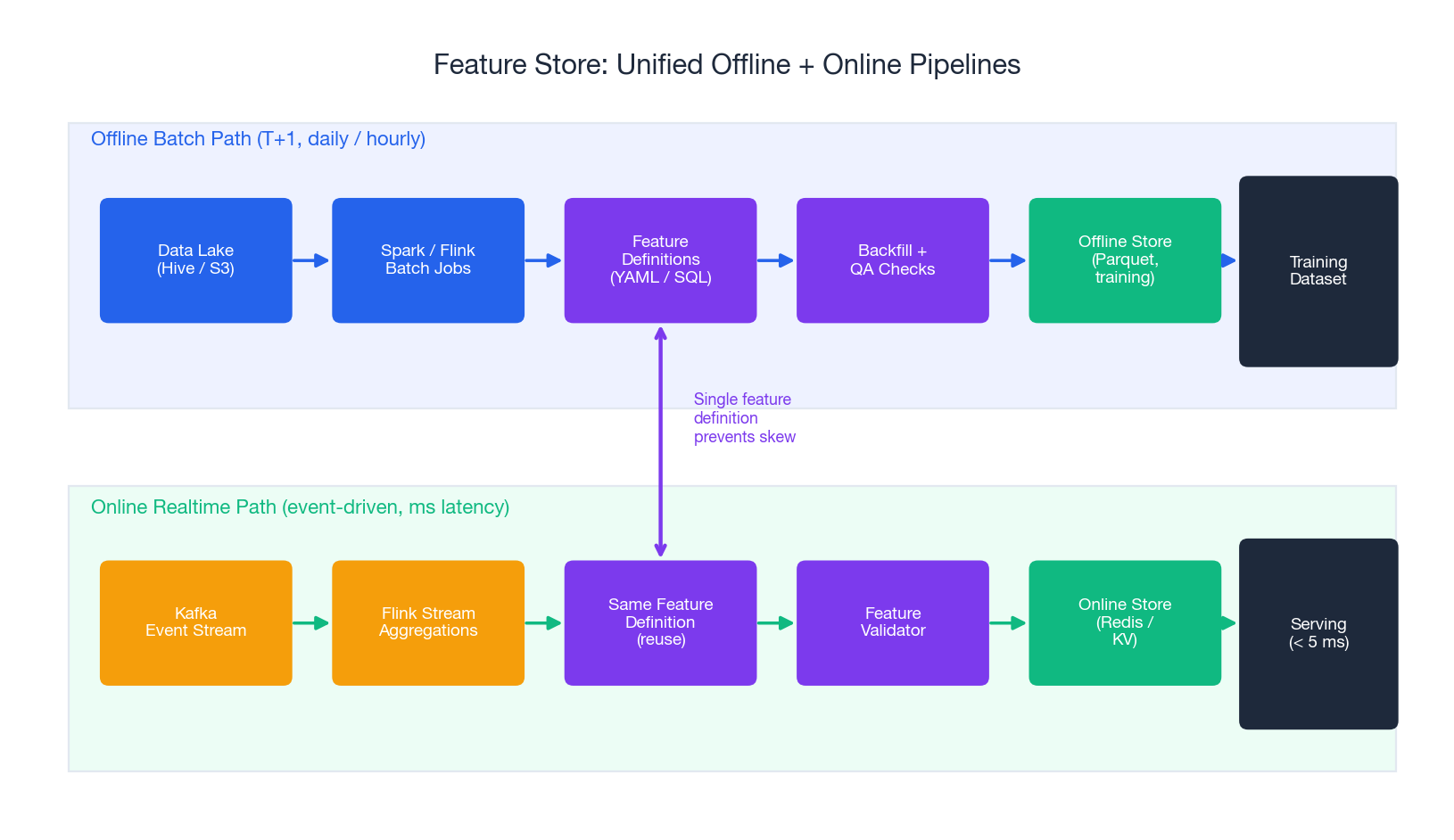

The Feature Store#

The feature store is the single most important piece of infrastructure in a mature recommendation system, and the one most often built last. Its job is to eliminate training/serving skew: the guarantee that a feature computed offline for training has exactly the same definition as the feature computed online at serving time.

The architecture has two paths sharing one feature definition:

- Offline path runs Spark/Flink jobs over the data lake, materialises features into Parquet, and feeds the training pipeline.

- Online path consumes Kafka events with Flink, writes aggregated features to Redis, and serves them at p99 < 5 ms.

Both paths execute the same feature definition (typically a SQL or YAML spec). When a feature is changed, both pipelines change together. Without this discipline you will, eventually, train a model on a feature that means one thing offline and a different thing online — and the AUC drop will be silent and brutal.
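A minimal sketch of the "one definition, two paths" idea, assuming a hypothetical `Event` record and a 7-day user CTR feature. The offline path evaluates the definition at each label's timestamp (a point-in-time join, so training never sees future events). The online path evaluates the same function at request time. In a real system the online path would read a pre-aggregated value from Redis, maintained by a Flink job built from the same spec, rather than rescanning raw events.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    user_id: str
    item_id: str
    ts: float      # seconds since epoch
    clicked: bool

WINDOW_SECONDS = 7 * 24 * 3600

# The single feature definition, shared by the offline and online paths.
def user_ctr_7d(events, user_id, as_of):
    """CTR of `user_id` over the 7 days strictly before `as_of`."""
    window = [e for e in events
              if e.user_id == user_id and as_of - WINDOW_SECONDS <= e.ts < as_of]
    if not window:
        return 0.0
    return sum(e.clicked for e in window) / len(window)

def offline_training_rows(events, labels):
    """Offline path: point-in-time join, each label sees only earlier events."""
    return [(user_ctr_7d(events, l.user_id, l.ts), l.clicked) for l in labels]

def online_feature(events, user_id, now):
    """Online path: the same definition, evaluated at request time."""
    return user_ctr_7d(events, user_id, now)
```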


Feature stores worth knowing: Feast (the most popular open-source option), Feathr (open-sourced by LinkedIn), and Tecton (commercial). They all follow the same offline+online pattern.

Cyclical Encoding for Time Features#

A small but important detail. Hour 23 and hour 0 are adjacent in time, but a linear model fed the raw hour treats them as 23 units apart. Encoding the hour as a (sin, cos) pair places it on a circle, so the model sees them as neighbours.
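A sketch of the encoding. The same function works for day-of-week with a period of 7.

```python
import math

def encode_cyclical(value, period):
    """Map a periodic feature (e.g. hour of day, period 24) onto the unit circle."""
    angle = 2 * math.pi * value / period
    return math.sin(angle), math.cos(angle)
```

After encoding, 23:00 and 00:00 are exactly as far apart as 00:00 and 01:00, and opposite hours such as 00:00 and 12:00 are the farthest apart.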

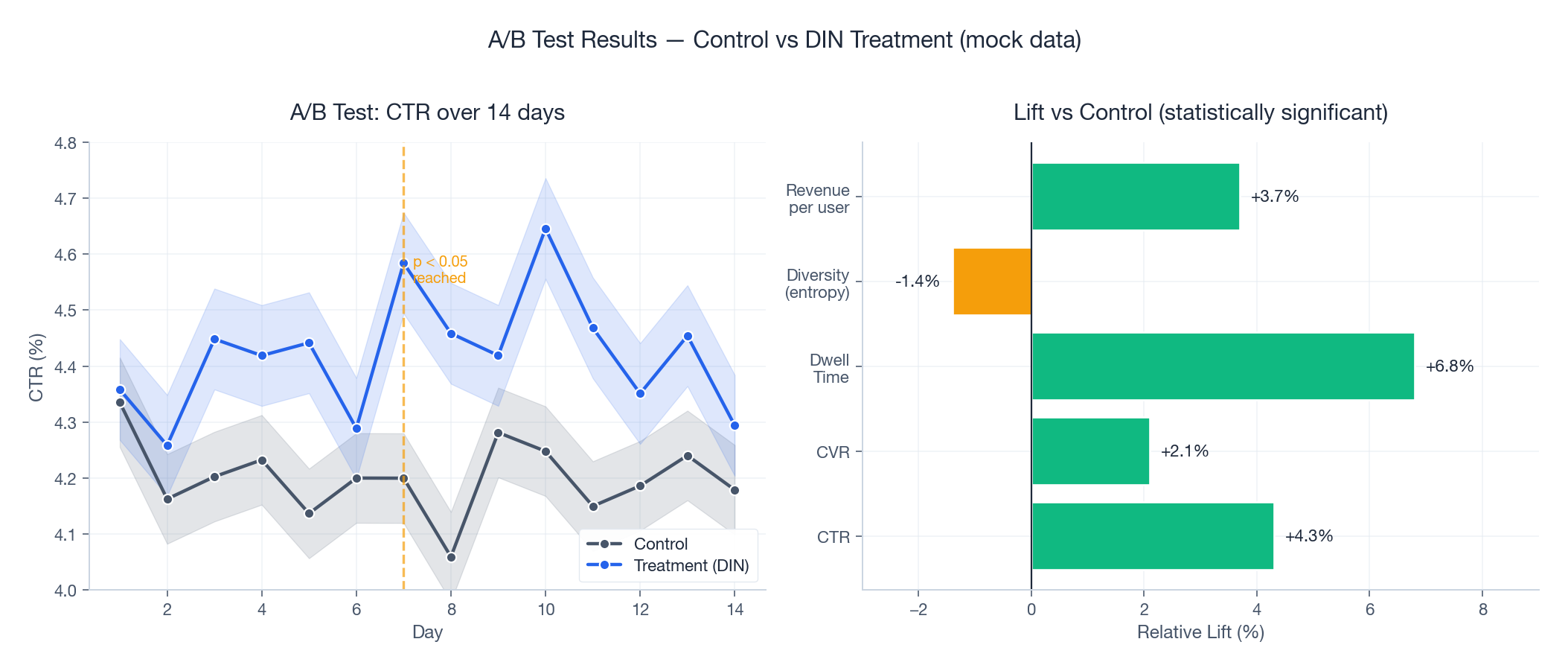

A/B Testing Framework#

A/B testing is how you discover that the model that beat baseline by 3% offline actually loses 1.5% online — which happens more often than people admit. Three properties matter:

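- Deterministic hash-based assignment (often loosely called consistent hashing): hashing the user ID together with the experiment name means a user always sees the same variant. Flickering between variants destroys both UX and statistical validity.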

- Pre-registered metrics, including guardrail metrics (latency, error rate, revenue) that block a launch even if the primary metric wins.

- Power analysis up front to know how many samples you need before the experiment starts. Stopping early because the result “looks good” inflates false positives dramatically.
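A minimal sketch of the three pieces, using only the Python standard library.

- `assign_variant` hashes the user ID salted with a hypothetical experiment name, so assignment is stable for a user and independent across experiments.
- `two_proportion_z_test` compares CTRs using a pooled standard error.
- `sample_size_per_arm` is the usual normal-approximation power calculation.

Real platforms add layering, exposure logging, and variance reduction on top of this.

```python
import hashlib
import math
from statistics import NormalDist

def assign_variant(user_id, experiment, variants=("control", "treatment")):
    """Same (user, experiment) always maps to the same variant."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 1000           # 1000 fine-grained buckets
    return variants[bucket * len(variants) // 1000]

def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a CTR difference, pooled standard error."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

def sample_size_per_arm(base_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate users per arm needed to detect `relative_lift` on `base_rate`."""
    p1, p2 = base_rate, base_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)
```

Detecting a 5% relative lift on a 5% CTR requires on the order of 100,000+ users per arm. This is why small experiments rarely detect small wins.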


How long should an A/B test run? Long enough to: (1) cover full weekly cycles, ideally two of them, since weekday and weekend behaviour differ; (2) reach the sample size given by power analysis; and (3) outlast novelty effects (users sometimes click new things just because they are new). Two weeks is the modal answer. Anything shorter than one week is suspect.

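Common pitfalls. Interference between variants (treatment users influencing control users via shared content), which violates SUTVA, the assumption that one user's outcome does not depend on another user's assignment; heterogeneous treatment effects across user segments; and cumulative effects (the treatment helps long-term retention but hurts short-term CTR). Handling these takes dedicated experimentation infrastructure: layered experiments so that many tests can run concurrently, long-running holdout groups for cumulative effects, and cluster-level randomisation for interference. This is why Google, Facebook, and ByteDance all built their own.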

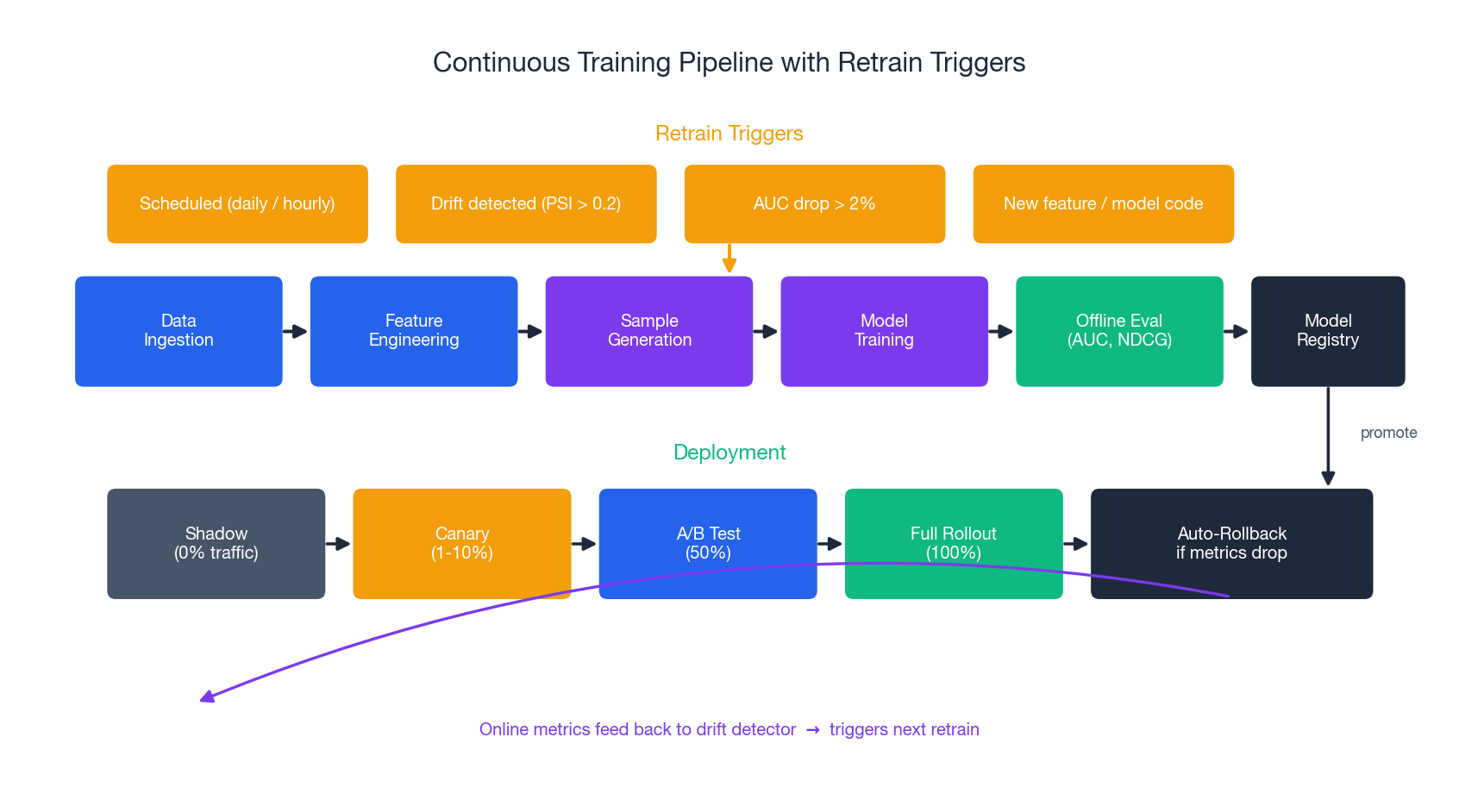

Continuous Training#

Models decay. User behaviour drifts, the catalogue changes, seasonality shifts. A model that was state-of-the-art last month will be a liability next month if it is not retrained. The training pipeline must run automatically, triggered by:

- Schedule — daily for fine ranking, hourly for incremental updates, near-real-time for online learning on the most volatile features.

- Drift detection — PSI (Population Stability Index) > 0.2 on an important feature triggers a retrain even if the schedule has not fired.

- Metric decay — offline AUC drops by more than 2% between checkpoints.

- Code change — new feature definition or model architecture.
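A sketch of the PSI drift check, in plain Python. The bins come from the quantiles of the reference (training-time) sample. PSI then sums (actual - expected) * ln(actual / expected) over the bins. The small `eps` floor avoids taking the log of zero for empty bins. The 0.2 threshold above is a widely used rule of thumb, not a law.

```python
import bisect
import math

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample (`expected`) and a live sample (`actual`)."""
    ref = sorted(expected)
    # Interior cut points at the reference sample's quantiles (deciles for bins=10).
    cuts = [ref[len(ref) * i // bins] for i in range(1, bins)]

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[bisect.bisect_right(cuts, v)] += 1
        # Floor at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))
```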

The output of training is not a deployed model. It is an artifact in a model registry with metadata: version, training data window, offline metrics, lineage. Deployment is a separate, gated step.

Deployment: Shadow → Canary → A/B → Full Rollout#

A new model never goes from registry to 100% traffic in one step. The standard staircase:

- Shadow — 0% traffic, but the model runs in parallel and predictions are logged. This catches latency regressions, schema mismatches, and serving bugs without risking users.

- Canary — 1-10% traffic for 1-24 hours. Auto-rollback if guardrail metrics breach.

- A/B test — 50% traffic for 1-2 weeks for proper statistical validation.

- Full rollout — 100% traffic.

Auto-rollback is non-negotiable. The criteria are blunt: if p95 latency exceeds the SLO, error rate exceeds 1%, or CTR drops more than 5%, roll back automatically and page a human.
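A sketch of the guardrail check behind auto-rollback, using the thresholds above: p95 latency over the SLO (assumed here to be 100 ms), an error rate over 1%, or a relative CTR drop over 5%. The `CanaryMetrics` record is hypothetical. A production check would also require enough canary traffic for the CTR comparison to be statistically meaningful before acting on it.

```python
from dataclasses import dataclass

@dataclass
class CanaryMetrics:
    p95_latency_ms: float
    error_rate: float   # fraction of failed requests, e.g. 0.004 = 0.4%
    ctr: float

def rollback_reasons(canary, baseline, latency_slo_ms=100.0,
                     max_error_rate=0.01, max_relative_ctr_drop=0.05):
    """Return the breached guardrails; an empty list means the canary may continue."""
    reasons = []
    if canary.p95_latency_ms > latency_slo_ms:
        reasons.append(f"p95 latency {canary.p95_latency_ms:.0f} ms > SLO {latency_slo_ms:.0f} ms")
    if canary.error_rate > max_error_rate:
        reasons.append(f"error rate {canary.error_rate:.2%} > {max_error_rate:.0%}")
    relative_drop = (baseline.ctr - canary.ctr) / baseline.ctr
    if relative_drop > max_relative_ctr_drop:
        reasons.append(f"CTR down {relative_drop:.1%} vs baseline")
    return reasons
```

A non-empty result would trigger the rollback and the page to a human.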


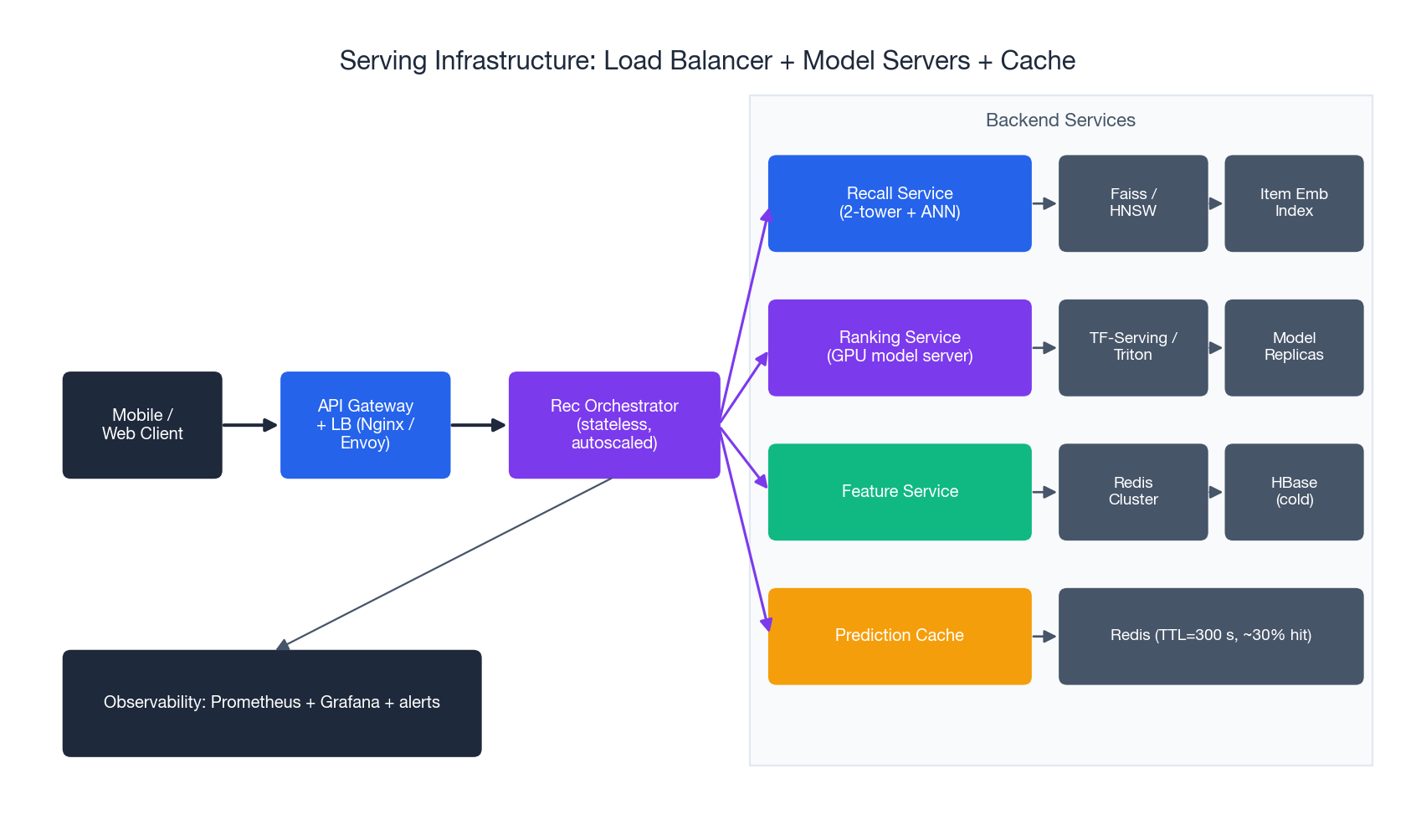

Serving Infrastructure#

The serving stack has four layers, all stateless and horizontally scaled:

- API gateway + load balancer (Nginx, Envoy, or a cloud LB). Handles TLS, auth, rate limiting, and routing.

- Recommendation orchestrator — the stateless service that runs the funnel. It calls recall, ranking, and reranking in sequence and merges the results.

- Backend services for each stage: a recall service backed by Faiss/HNSW; a ranking service running TensorFlow Serving or Triton on GPU; a feature service backed by Redis (hot) and HBase (cold).

- Caches at multiple levels: feature cache, embedding cache, and full-prediction cache. Hit rates of 30-50% on the prediction cache are typical and cut compute cost roughly proportionally.

Performance Optimisation#

Three techniques compound, often giving 5-10x speedups with little or no measurable quality loss:

Quantisation (INT8 from FP32) gives 2-4x inference speedup on CPU and modest GPU gains.
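A plain-Python sketch of symmetric per-tensor INT8 quantisation, showing what the conversion does to the weights. Each weight is stored as an 8-bit integer times one shared FP32 scale, and the rounding error is at most half a scale step. In PyTorch, `torch.ao.quantization.quantize_dynamic` applies dynamic INT8 quantisation to `nn.Linear` layers; this sketch does not depend on it.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantisation: w ~= scale * q with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate FP32 weights from the integers and the shared scale."""
    return [scale * v for v in q]
```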


Knowledge distillation trains a small student model to mimic a large teacher. The student learns from soft probabilities (not just hard labels), which carry information about relative item quality.
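A sketch of the standard Hinton-style distillation loss, shown for a softmax over a small set of classes. The loss is a weighted sum of two terms: the usual cross-entropy on the hard label, and the KL divergence between temperature-softened teacher and student distributions scaled by T². For binary CTR models, the same idea applies with the teacher's predicted click probability as the soft label. The temperature and `alpha` defaults are illustrative.

```python
import math

def softmax(logits, temperature=1.0):
    scaled = [l / temperature for l in logits]
    m = max(scaled)  # subtract the max before exp for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    z = sum(exps)
    return [e / z for e in exps]

def distillation_loss(student_logits, teacher_logits, hard_label, temperature=2.0, alpha=0.5):
    """alpha * CE(hard label) + (1 - alpha) * T^2 * KL(teacher_T || student_T)."""
    hard_loss = -math.log(softmax(student_logits)[hard_label])
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(t * math.log(t / s) for t, s in zip(p_t, p_s) if t > 0)
    # The T^2 factor keeps the soft-target gradients on a comparable scale as T changes.
    return alpha * hard_loss + (1 - alpha) * temperature ** 2 * kl
```

When the student matches the teacher exactly, the KL term vanishes and only the hard-label loss remains.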


Prediction caching for the long tail of repeated requests. A 5-minute TTL is a good default — long enough to amortise compute, short enough to reflect new behaviour.
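A sketch of a TTL prediction cache with a 5-minute default. The in-process dict and the injectable `clock` (useful for testing) are simplifications. A production cache would usually live in Redis with per-key expiry and an eviction policy. Its key would also include the model version, so that a rollout does not serve stale scores.

```python
import time

class PredictionCache:
    def __init__(self, ttl_seconds=300.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}          # key -> (timestamp, value); no eviction in this sketch
        self.hits = self.misses = 0

    def get_or_compute(self, key, compute):
        """Return a cached value younger than the TTL, else call `compute()` and cache it."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        value = compute()
        self._store[key] = (now, value)
        return value
```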

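A common recipe is to distill first, then prune, then quantise. In that order, each step compresses the output of the previous one rather than undoing its gains: pruning works on an already-small student, and quantisation is applied last to the final weights.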

Monitoring#

Monitoring covers three categories of metrics, all wired to alerts.

The most subtle alert is prediction distribution shift. If the average predicted CTR jumps by two standard deviations, something is broken upstream: a corrupted feature, a stale embedding index, or a model that is silently serving the wrong version. By the time business metrics move, you have lost an hour. Distribution monitoring catches it in minutes.
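A sketch of the distribution check described above. It compares the current window's mean predicted CTR against the means of recent historical windows and alerts beyond two standard deviations. The window sizes and how the baseline windows are chosen are assumptions left to the caller.

```python
import math
from statistics import mean, stdev

def prediction_shift_alert(baseline_window_means, current_predictions, threshold_sigmas=2.0):
    """Return (alert, z): z is how many baseline std devs the current mean has moved."""
    mu = mean(baseline_window_means)
    sigma = stdev(baseline_window_means)   # needs at least two baseline windows
    current = mean(current_predictions)
    if sigma == 0:
        z = 0.0 if current == mu else math.copysign(math.inf, current - mu)
    else:
        z = (current - mu) / sigma
    return abs(z) > threshold_sigmas, z
```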

Team Responsibilities#

A production recommendation system is too big for one person and too coupled for fully independent teams. The role boundaries that work in practice:

- Algorithm engineers own model code, feature design, and A/B experiments. They write the recall, ranking, and reranking models.

- Data engineers own ETL pipelines, sample generation, and the offline side of the feature store. They are the firewall against data quality bugs.

- MLOps / platform engineers own training infrastructure, the model registry, CI/CD, and the serving runtime. They make it possible to ship a new model in a day rather than a month.

- SRE / infra own latency SLOs, capacity planning, and incident response. They are the ones paged at 3 a.m.

- Analysts / research own long-horizon evaluation, causal inference, and ranking diagnostics. They catch the metrics-look-good-but-revenue-is-flat problem.

- Product owns business KPIs and content policy.

The matrix on the right side of the figure shows primary owners by pipeline stage. The pattern: every stage has at least two owners, because every stage has both a model-quality dimension and an operational dimension.

Industrial Frameworks#

Alibaba EasyRec (open source). End-to-end framework with feature engineering, pre-built models (Wide & Deep, DeepFM, DIN, MMoE), training on PAI/MaxCompute, and PAI-EAS for serving. The fastest path to a production-quality baseline if you are on Alibaba Cloud.

Meta’s Looper / TorchRec. TorchRec is the open-source library powering Meta’s internal recommendation stack. Strong support for sharded embedding tables, which is the hard distributed-systems problem of recommendation training.

ByteDance Monolith (open source). Designed for online learning at billion-parameter scale. Built around collisionless embedding hash tables and asynchronous training that updates the model from production logs in near-real-time. Powers parts of TikTok’s recommendation stack.

YouTube’s two-stage system is described in the classic 2016 paper (Covington et al.) — a two-tower deep candidate generator plus a deep ranker. The architecture has evolved since (the 2019 sampled-softmax paper is the most influential follow-up), but the two-stage skeleton remains the template most teams copy.

Q&A: Common Questions#

How many recall channels should we use?#

Start with three: collaborative filtering, two-tower deep, and real-time behaviour. Add specialised channels (graph, content, geo, social) only when an offline gap analysis shows they would catch items the existing channels miss. Beyond ten channels you spend more on plumbing than on quality.

What is the right coarse-to-fine reduction ratio?#

Typically 10:1 (2,000 → 200 → 20). Monitor recall@K end-to-end: too aggressive a coarse stage drops good candidates that the fine ranker would have surfaced; too lax wastes the fine ranker’s compute budget.

How complex should the fine ranker be?#

Start with Wide & Deep or DeepFM. Add DIN-style attention over user history only after you have measured that the existing model under-uses sequential information (look for users with rich history but flat predictions). Each step up in complexity needs to justify its serving cost.

Model-based or rule-based reranking?#

Hybrid. Hard constraints (compliance, stock, blocklists) belong in deterministic rules where you can audit them. Soft optimisation (diversity, freshness, exploration) is where learned rerankers add value. Mixing them is normal.

How to choose between quantisation, pruning, and distillation?#

Quantisation gives the biggest speed-up per unit of effort (2-4x on CPU). Distillation is the right tool when you also need a smaller model footprint, not just a faster one. Pruning is the most fragile — it works but needs careful retraining. The recommended sequence is distill → prune → quantise.

How do you handle new users?#

Multi-stage fallback: (1) popular and trending items for truly new users; (2) demographics-based content recommendations once minimal profile is known; (3) bandit-style exploration to bootstrap signal in 3-10 interactions; (4) the full personalised model after ~50 interactions. See Part 14 for the meta-learning angle.

How do you decide to retire a model?#

A model is retired when (a) a successor wins an A/B test on the primary metric and does not regress any guardrail, and (b) the operational cost of the new model is acceptable. Always keep the previous model deployable for 30 days in case of a delayed regression.

Summary#

This article assembled the complete industrial recommendation stack:

- Three planes — data, training, serving — with clear interfaces between them

- A four-stage funnel — recall, coarse rank, fine rank, rerank — that fits hundreds of millions of users into a 100ms budget

- Multi-channel recall with reciprocal rank fusion, because no single channel covers all of quality

- Wide & Deep, DeepFM, and DIN as the production-grade ranking architectures

- A feature store that eliminates training/serving skew by sharing one feature definition between offline and online paths

- A/B testing with consistent hashing, z-tests, and pre-registered guardrails

- Continuous training triggered by schedule, drift, and metric decay

- Canary deployment with auto-rollback on latency, error rate, and CTR

- Serving infrastructure — gateway, orchestrator, GPU model servers, Redis feature store, prediction cache

- Team responsibilities clearly mapped to pipeline stages

The single most valuable lesson from industrial practice is also the simplest: start small, measure everything, and let A/B tests decide what stays. A pipeline with popular items, simple two-tower recall, a DeepFM ranker, and disciplined experimentation will beat an exotic GNN that is launched without an A/B framework. The frameworks in this article are not the source of competitive advantage — the loops they enable are.

References#

- Covington, P., Adams, J., Sargin, E. “Deep Neural Networks for YouTube Recommendations.” RecSys 2016.

- Yi, X., et al. “Sampling-Bias-Corrected Neural Modeling for Large Corpus Item Recommendations.” RecSys 2019. (the “two-tower with logQ correction” paper)

- Cheng, H., et al. “Wide & Deep Learning for Recommender Systems.” DLRS 2016. arXiv:1606.07792

- Guo, H., et al. “DeepFM: A Factorization-Machine based Neural Network for CTR Prediction.” IJCAI 2017. arXiv:1703.04247

- Zhou, G., et al. “Deep Interest Network for Click-Through Rate Prediction.” KDD 2018. arXiv:1706.06978

- Liu, Z., et al. “Monolith: Real Time Recommendation System With Collisionless Embedding Table.” 2022. arXiv:2209.07663

- Alibaba EasyRec: github.com/alibaba/EasyRec

- TorchRec: github.com/pytorch/torchrec

- Feast (open-source feature store): feast.dev

Recommendation Systems 16 parts

- 01 Recommendation Systems (1): Fundamentals and Core Concepts

- 02 Recommendation Systems (2): Collaborative Filtering and Matrix Factorization

- 03 Recommendation Systems (3): Deep Learning Foundations

- 04 Recommendation Systems (4): CTR Prediction and Click-Through Rate Modeling

- 05 Recommendation Systems (5): Embedding and Representation Learning

- 06 Recommendation Systems (6): Sequential Recommendation and Session-based Modeling

- 07 Recommendation Systems (7): Graph Neural Networks and Social Recommendation

- 08 Recommendation Systems (8): Knowledge Graph-Enhanced Recommendation

- 09 Recommendation Systems (9): Multi-Task Learning and Multi-Objective Optimization

- 10 Recommendation Systems (10): Deep Interest Networks and Attention Mechanisms

- 11 Recommendation Systems (11): Contrastive Learning and Self-Supervised Learning

- 12 Recommendation Systems (12): Large Language Models and Recommendation

- 13 Recommendation Systems (13): Fairness, Debiasing, and Explainability

- 14 Recommendation Systems (14): Cross-Domain Recommendation and Cold-Start Solutions

- 15 Recommendation Systems (15): Real-Time Recommendation and Online Learning

- 16 Recommendation Systems (16): Industrial Architecture and Best Practices