Reinforcement Learning (1): Fundamentals and Core Concepts

A beginner-friendly guide to the mathematical foundations of reinforcement learning -- MDPs, Bellman equations, dynamic programming, Monte Carlo methods, and temporal difference learning -- with working Python code, all explained through the analogy of learning to ride a bicycle.

The first time you sat on a bicycle, nobody handed you a manual that said “if your tilt angle exceeds 7.4 degrees, apply 12% counter-steer.” You wobbled, you over-corrected, you fell, you got back on. After a few hundred attempts your body simply knew what to do, even though you could not put it into words.

That trial-feedback-improvement loop is not just how we learn to ride bikes. It is how AlphaGo learned to defeat the world Go champion, how Boston Dynamics robots learn to walk, and how recommendation systems quietly improve every time you click. They all share one mathematical framework called reinforcement learning (RL).

This article builds RL from the ground up. We will use the bicycle as our running analogy and translate every piece of intuition into the math that powers modern algorithms.

What You Will Learn#

- The Markov Decision Process (MDP) — the mathematical skeleton of every RL problem

- Bellman equations and why they make value functions tractable

- Dynamic programming for environments where the rules are known

- Monte Carlo methods for learning purely from experience

- Temporal difference (TD) learning — the bridge between DP and MC that powers DQN, PPO, and beyond

- Working Python implementations you can run on your laptop

Prerequisites: Basic probability and a little Python. Familiarity with supervised learning helps but is not required.

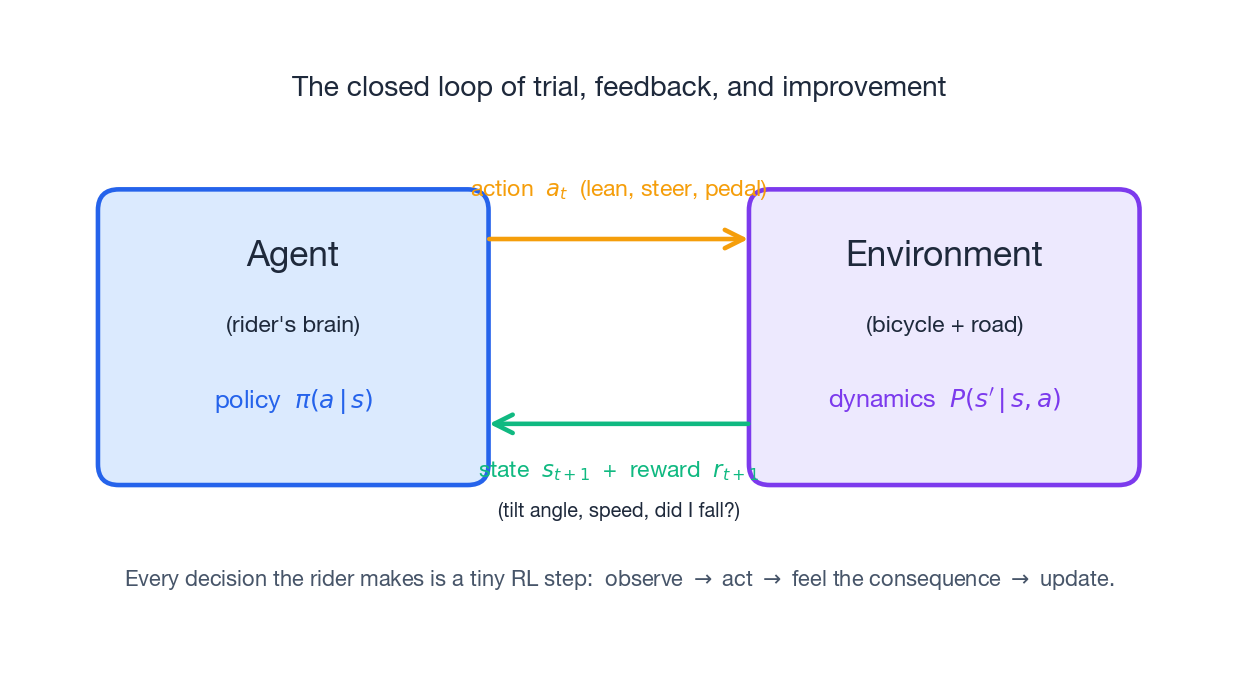

The Bicycle Loop#

Picture yourself on a bicycle for the first time. At every instant, three things are happening in a tight loop:

- You observe: tilt angle, speed, where the curb is.

- You act: lean a little, steer a little, pedal a little.

- The world responds: the bike either steadies, drifts further off balance, or drops you on the pavement.

That third step gives you a signal — a small reward when you stay upright, a sharp punishment when you fall. Over many trials, your brain assembles a policy: a mapping from “what I am sensing right now” to “what I should do next.” The policy is never written down; it is etched into your reflexes by the loop itself.

Reinforcement learning is the mathematical formalism of this loop. The “you” in the diagram is called the agent; the bicycle plus road is the environment; the lean and steer commands are actions; the tilt and speed readouts are states; and the don’t-fall feeling is the reward. Everything else in this article is just careful bookkeeping on top of this picture.

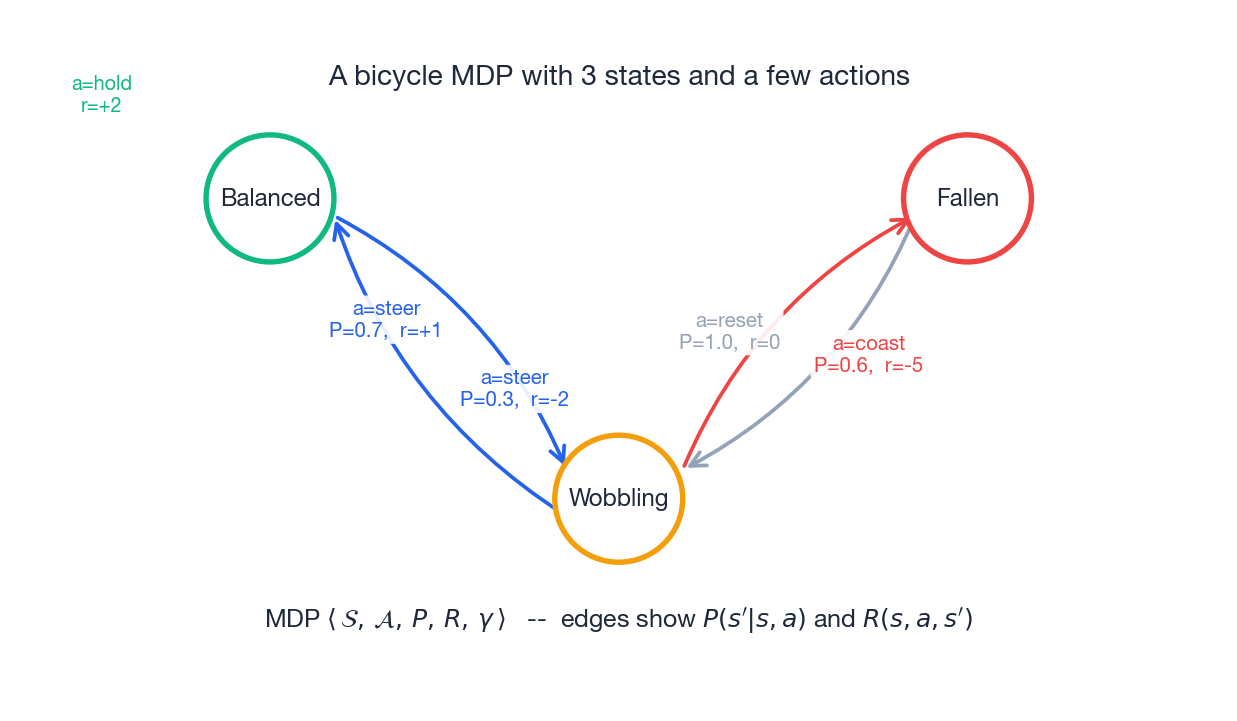

Markov Decision Process: The Mathematical Foundation#

The figure above shows a deliberately tiny MDP: just three states (Balanced, Wobbling, Fallen), a few actions, and the transition probabilities and rewards labelled directly on the edges. Every real RL problem — robot control, Go, large-language-model fine-tuning — is a (much larger) instance of this same structure.

The Five Components#

State space $\mathcal{S}$ : every situation the agent can find itself in. For a bicycle: tilt angle, angular velocity, forward speed, road curvature. States can be discrete (board positions in chess) or continuous (joint angles of a robot).

Action space $\mathcal{A}$ : everything the agent can do. Discrete ({lean-left, lean-right, hold}) or continuous (apply a torque of 0.37 Nm to the handlebars).

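Transition probability $P(s' \mid s, a)$ : the probability of landing in state $s'$ after taking action $a$ in state $s$. Even a perfectly balanced bicycle is not a deterministic system: a gust of wind, a pebble, or a slightly uneven pedal stroke can each push you to a different next state. The transition probability captures all of that uncertainty.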

Reward function $R(s, a, s')$ : the immediate payoff for the transition $s \xrightarrow{a} s'$ . Rewards are the agent’s only learning signal. Get them wrong and the agent will obediently optimise the wrong thing — a phenomenon known as reward hacking.

Discount factor $\gamma \in [0, 1)$ : how much the agent cares about future reward versus immediate reward. We will see this is far more than a numerical convenience.

The Markov Property#

An MDP assumes the Markov property: the next state depends only on the current state and action, not on the full history.

$$P(S_{t+1} \mid S_t, A_t, S_{t-1}, A_{t-1}, \ldots) = P(S_{t+1} \mid S_t, A_t).$$

For the bicycle this looks suspicious: surely which way I was leaning a moment ago matters? It does, but the trick is to fold history into the state itself. If we redefine the state as (tilt, angular velocity, speed) instead of just tilt, the Markov property holds again. In Atari, DeepMind famously stacked the last 4 frames into the state for exactly this reason.

Policy: From States to Actions#

A policy $\pi$ is the agent’s strategy — the function that turns observations into decisions:

- Deterministic: $a = \pi(s)$ . Always the same action for the same state.

- Stochastic: $\pi(a \mid s) = \Pr(A_t = a \mid S_t = s)$ . A probability distribution over actions.

Stochastic policies matter for two reasons: they let the agent explore new behaviours, and they are the natural output of neural networks (a softmax over actions).

Return and Value Functions#

The return $G_t$ is the discounted sum of all rewards from time $t$ onwards:

$$G_t = r_t + \gamma r_{t+1} + \gamma^2 r_{t+2} + \cdots = \sum_{k=0}^{\infty} \gamma^k r_{t+k}.$$

Why discount? Three independent reasons all point the same way:

- Mathematical: when every reward satisfies $|r_t| \le R_{\max}$, the geometric sum stays finite: $|G_t| \le R_{\max} / (1 - \gamma)$. Without discounting, the return of an infinite-horizon task could diverge.

- Cognitive: a reward today is worth more than the same reward tomorrow — there is uncertainty between you and tomorrow.

- Operational: without discounting, an agent that earns any positive reward per step could stall forever and still claim infinite return. Discounting forces it to get on with it.
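The mathematical point can be checked in a few lines. This is a minimal sketch: the reward stream (+1 per step for 200 steps) and the name `discounted_return` are assumptions made for illustration.

```python
def discounted_return(rewards, gamma):
    """Compute G_0 = sum_k gamma**k * rewards[k], accumulating from the last reward backwards."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


gamma = 0.9
r_max = 1.0
# Hypothetical reward stream: +1 for each of 200 steps the rider stays upright.
rewards = [r_max] * 200
g0 = discounted_return(rewards, gamma)
bound = r_max / (1.0 - gamma)  # the R_max / (1 - gamma) bound from the text
print(f"G_0 = {g0:.6f}  (bound {bound:.6f})")
```

The backward accumulation `g = r + gamma * g` is the same recursion that the Bellman equations exploit.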

Bellman Equations: The Recursive Heart of RL#

Value functions have a beautiful recursive structure. This is the single most important idea in RL theory — once it clicks, every algorithm in the rest of the series will feel like a variation on a theme.

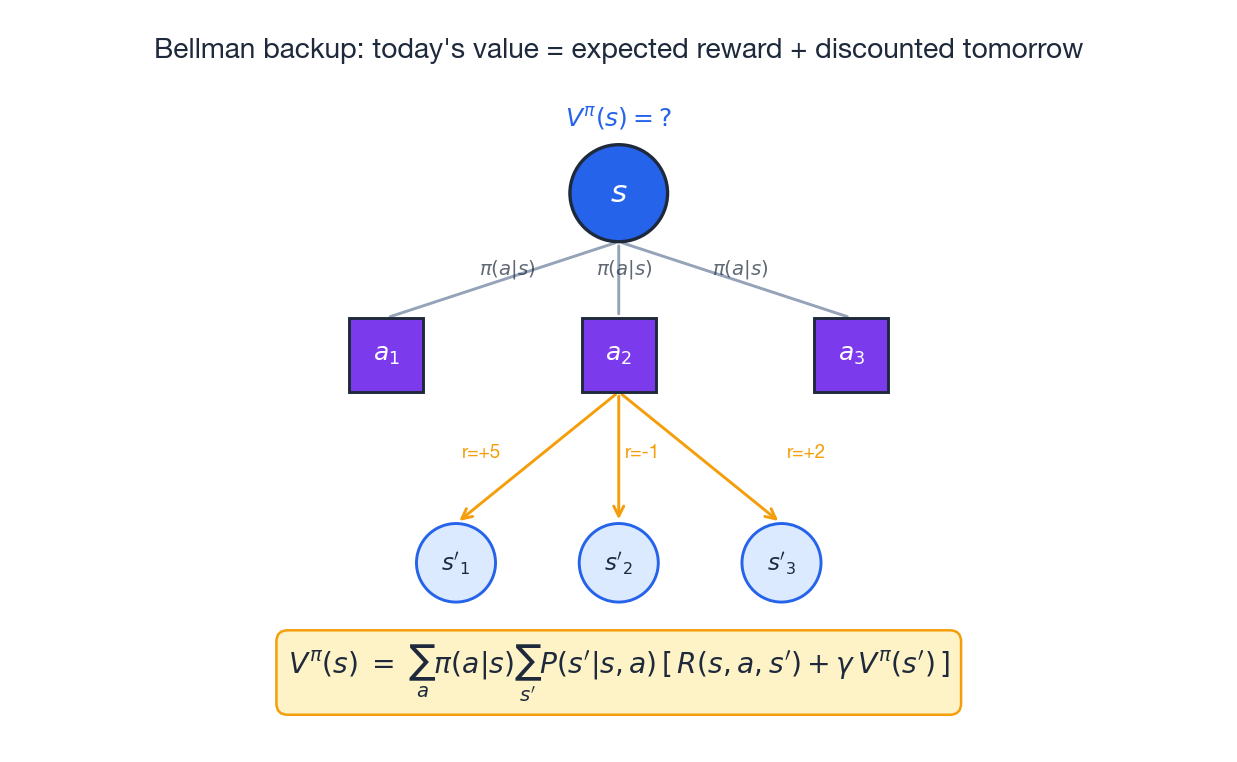

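Define the state-value function of a policy as the expected return when starting in $s$ and following $\pi$, $V^\pi(s) = \mathbb{E}_\pi[G_t \mid S_t = s]$. Splitting the return into its first reward and everything after gives the Bellman expectation equation:

$$V^\pi(s) = \sum_a \pi(a \mid s) \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma V^\pi(s')\right].$$

Read it out loud: the value of being here equals the expected immediate reward plus the discounted value of where I land next. The tree in the figure is exactly this equation drawn out: the root is the current state, the middle layer is the actions weighted by $\pi$, the leaves are the next states weighted by $P$, and the rewards live on the arrows.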

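The optimal value functions obey the same recursion with the average over actions replaced by a maximum. Writing $Q^*(s, a)$ for the best achievable expected return after taking action $a$ in state $s$, the Bellman optimality equations are:

$$V^*(s) = \max_a \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma V^*(s')\right],$$

$$Q^*(s, a) = \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma \max_{a'} Q^*(s', a')\right].$$

An optimal policy then acts greedily with respect to $Q^*$:

$$\pi^*(s) = \arg\max_{a} Q^*(s, a).$$

A Numerical Example#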

Let us pin this down with a small two-state MDP, $\{s_1, s_2\}$ , one action $a_1$ that we always pick, and $\gamma = 0.9$ .

| State | Action | Next State | Prob | Reward |
|---|---|---|---|---|
| $s_1$ | $a_1$ | $s_1$ | 0.5 | 5 |
| $s_1$ | $a_1$ | $s_2$ | 0.5 | 10 |
| $s_2$ | $a_1$ | $s_1$ | 0.7 | 2 |
| $s_2$ | $a_1$ | $s_2$ | 0.3 | 8 |

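Because there is only one action, the Bellman expectation equation reduces to two linear equations in two unknowns:

$$V(s_1) = 0.5\,[5 + 0.9\,V(s_1)] + 0.5\,[10 + 0.9\,V(s_2)],$$

$$V(s_2) = 0.7\,[2 + 0.9\,V(s_1)] + 0.3\,[8 + 0.9\,V(s_2)],$$

with solution $V(s_1) \approx 60.9$ and $V(s_2) \approx 57.8$. The values are large because the rewards keep coming forever and $\gamma$ is close to 1; that is the geometric-series effect at work.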
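With a single action, the Bellman equation for the table above is the linear system $(I - \gamma P)\,V = r$, where $r$ holds the expected one-step reward of each state. A minimal sketch that solves it with Cramer's rule, using only the numbers from the table (the variable names are illustrative):

```python
# Transition model of the two-state example under the single action a_1.
# P[s][s2] is the transition probability, R[s][s2] the reward on that transition.
P = [[0.5, 0.5], [0.7, 0.3]]
R = [[5.0, 10.0], [2.0, 8.0]]
gamma = 0.9

# Expected one-step reward in each state: r[s] = sum_s2 P[s][s2] * R[s][s2].
r = [sum(p * rew for p, rew in zip(P[s], R[s])) for s in range(2)]

# Coefficients of (I - gamma * P) V = r, solved as a 2x2 system by Cramer's rule.
a, b = 1 - gamma * P[0][0], -gamma * P[0][1]
c, d = -gamma * P[1][0], 1 - gamma * P[1][1]
det = a * d - b * c
V1 = (r[0] * d - b * r[1]) / det
V2 = (a * r[1] - c * r[0]) / det
print(f"V(s1) = {V1:.2f}, V(s2) = {V2:.2f}")
```

Substituting the printed values back into the two Bellman equations is a quick way to confirm that both sides agree.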

Dynamic Programming: When You Know the Rules#

When the environment model ($P$ and $R$ ) is fully known, dynamic programming (DP) computes the optimal policy exactly. There are no samples, no noise — just deterministic iteration on the Bellman equation.

Policy Evaluation#

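Given a fixed policy $\pi$, policy evaluation turns the Bellman expectation equation into an update rule:

$$V_{k+1}(s) = \sum_a \pi(a \mid s) \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma V_k(s')\right].$$

Start with $V_0 \equiv 0$ and iterate. The Bellman operator is a $\gamma$-contraction in the sup-norm, so $V_k \to V^\pi$ geometrically: the worst-case error shrinks by at least a factor of $\gamma$ per sweep.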

Policy Improvement#

Given $V^\pi$, policy improvement picks the action with the best one-step lookahead in every state:

$$\pi'(s) = \arg\max_{a} \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma V^\pi(s')\right].$$

The policy improvement theorem guarantees $V^{\pi'}(s) \ge V^\pi(s)$ for every state. You never get worse by being greedier with respect to a correct value function.

Policy Iteration#

Alternate between the two:

- Start with any policy $\pi_0$ .

- Evaluate: compute $V^{\pi_k}$ .

- Improve: build the greedy $\pi_{k+1}$ .

- If $\pi_{k+1} = \pi_k$ , stop — we have hit a fixed point, which is the optimal policy.
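The four steps above can be sketched on a three-state bicycle MDP. The transition probabilities and rewards in `MODEL` are hypothetical numbers chosen for illustration; they are not taken from the figure earlier in the article.

```python
GAMMA = 0.9
BALANCED, WOBBLING, FALLEN = 0, 1, 2
HOLD, CORRECT = 0, 1

# Hypothetical model: MODEL[state][action] -> list of (probability, next_state, reward).
MODEL = {
    BALANCED: {HOLD: [(0.8, BALANCED, 1.0), (0.2, WOBBLING, 0.0)],
               CORRECT: [(0.9, BALANCED, 0.5), (0.1, WOBBLING, 0.0)]},
    WOBBLING: {HOLD: [(0.3, WOBBLING, 0.0), (0.7, FALLEN, -10.0)],
               CORRECT: [(0.6, BALANCED, 0.5), (0.3, WOBBLING, 0.0), (0.1, FALLEN, -10.0)]},
    FALLEN: {HOLD: [(1.0, FALLEN, 0.0)], CORRECT: [(1.0, FALLEN, 0.0)]},  # absorbing
}


def q_value(V, s, a):
    """One-step lookahead: expected reward plus discounted value of the next state."""
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in MODEL[s][a])


def evaluate(policy, tol=1e-10):
    """Iterative policy evaluation with in-place sweeps."""
    V = {s: 0.0 for s in MODEL}
    while True:
        delta = 0.0
        for s in MODEL:
            v_new = q_value(V, s, policy[s])
            delta = max(delta, abs(v_new - V[s]))
            V[s] = v_new
        if delta < tol:
            return V


def policy_iteration():
    policy = {s: HOLD for s in MODEL}          # step 1: start with any policy
    while True:
        V = evaluate(policy)                   # step 2: evaluate
        improved = {s: max(MODEL[s], key=lambda a: q_value(V, s, a)) for s in MODEL}  # step 3
        if improved == policy:                 # step 4: fixed point reached
            return policy, V
        policy = improved


policy, V = policy_iteration()
print("policy:", policy)
print("values:", {s: round(v, 3) for s, v in V.items()})
```

Ties are broken by taking the first action in dictionary order, which keeps the stopping test `improved == policy` from oscillating between equally good policies.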

Value Iteration#

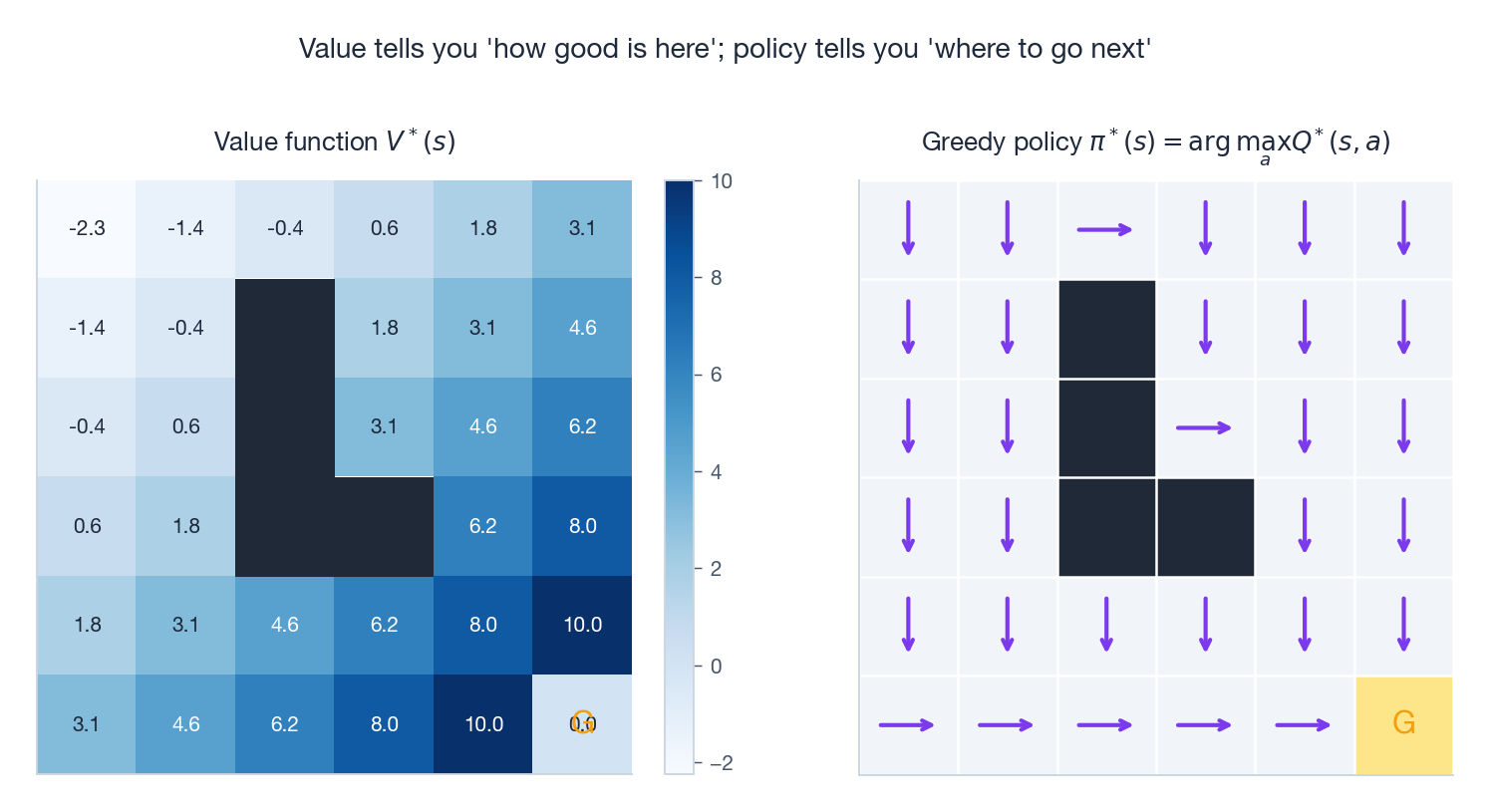

Value iteration merges evaluation and improvement into a single update by applying the Bellman optimality equation directly:

$$V_{k+1}(s) = \max_a \sum_{s'} P(s' \mid s, a)\!\left[R(s, a, s') + \gamma V_k(s')\right].$$

Each sweep contracts the error by a factor of $\gamma$. The figure above shows what the converged value function and the resulting greedy policy look like on a small grid world.

The two panels are inseparable halves of the same answer: the heatmap tells you how good is here?, and the arrows tell you where should I go next?. The arrows always point uphill on the heatmap, which is exactly what “greedy with respect to $V$ ” means.

Code: GridWorld with Value Iteration#

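A minimal sketch of value iteration on a grid world. The setup is an assumption made for illustration: a 4×4 grid, deterministic moves, a reward of -1 per step, a terminal goal in the bottom-right corner, and $\gamma = 0.9$.

```python
GAMMA = 0.9
SIZE = 4
GOAL = (SIZE - 1, SIZE - 1)
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
ARROWS = {"up": "^", "down": "v", "left": "<", "right": ">"}


def step(state, action):
    """Deterministic model: return (next_state, reward). Walls block movement."""
    dr, dc = ACTIONS[action]
    row = min(max(state[0] + dr, 0), SIZE - 1)
    col = min(max(state[1] + dc, 0), SIZE - 1)
    return (row, col), -1.0


def backup(V, state, action):
    """One-step lookahead: reward plus discounted value of the next state."""
    next_state, reward = step(state, action)
    return reward + GAMMA * V[next_state]


def value_iteration(tol=1e-8):
    V = {(r, c): 0.0 for r in range(SIZE) for c in range(SIZE)}
    while True:
        delta = 0.0
        for s in V:
            if s == GOAL:  # the terminal state keeps value 0
                continue
            best = max(backup(V, s, a) for a in ACTIONS)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break
    # Greedy policy: in each state pick the action with the best lookahead.
    policy = {s: max(ACTIONS, key=lambda a: backup(V, s, a)) for s in V if s != GOAL}
    return V, policy


V, policy = value_iteration()
for r in range(SIZE):
    values = "  ".join(f"{V[(r, c)]:6.2f}" for c in range(SIZE))
    arrows = " ".join("G" if (r, c) == GOAL else ARROWS[policy[(r, c)]] for c in range(SIZE))
    print(values, "   ", arrows)
```

Each printed row shows the converged values on the left and the greedy arrows on the right, so every arrow can be checked against the neighbouring values.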

DP is exact and elegant, but it has two crippling limitations. First, it requires the model: in real life we rarely know $P$ and $R$ in closed form. Second, it sweeps over the entire state space on every iteration — impossible when the state space is the set of all $84 \times 84$ Atari frames. The next two sections fix both issues.

Monte Carlo Methods: Learning Without a Model#

When we do not know $P$ and $R$ , we must learn from experience. The most direct way is also the oldest in statistics: average a lot of samples.

Core Idea#

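Monte Carlo estimates the value of a state by averaging the returns observed after visiting it:

$$V(s) \approx \frac{1}{N} \sum_{i=1}^{N} G_t^{(i)},$$

where $G_t^{(i)}$ is the return observed in the $i$-th episode that passed through $s$. With one return per episode, the sample mean is unbiased and converges to $V^\pi(s)$ as $N \to \infty$.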

The recipe is brutally simple:

- Run the policy from start to terminal state, recording $s_0, a_0, r_0, s_1, a_1, r_1, \ldots, s_T$ .

- For each state visited, compute the return from that point onwards: $G_t = \sum_{k=0}^{T-t-1} \gamma^k r_{t+k}$ .

- Update the running mean.

First-Visit vs Every-Visit MC#

Within a single episode, the same state may appear multiple times. We have two options:

- First-visit MC: only use the return from the first occurrence.

- Every-visit MC: use returns from every occurrence.

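Both converge to $V^\pi$ as the number of episodes grows. First-visit MC averages one independent return per episode, so it is unbiased; every-visit MC reuses correlated returns from the same episode, which makes it biased for finite samples but still consistent. First-visit is the default choice in textbooks.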

MC Control: Finding Better Policies#

To improve a policy without a model, MC control estimates action values $Q(s, a)$ from sampled returns and acts $\varepsilon$-greedily with respect to them:

$$ \pi(a \mid s) = \begin{cases} 1 - \varepsilon + \varepsilon / |\mathcal{A}| & \text{if } a = \arg\max_{a'} Q(s, a'), \\ \varepsilon / |\mathcal{A}| & \text{otherwise.} \end{cases} $$

That tiny $\varepsilon$ is the engine of exploration: without it, the agent might lock onto a mediocre action and never discover the better one.
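The $\varepsilon$-greedy rule takes only a few lines. This is a minimal sketch; the function name `epsilon_greedy` and the example action values are assumptions made for illustration.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Return an action index: uniform with probability epsilon, greedy otherwise.

    The uniform draw can also land on the greedy action, which is why its total
    probability is 1 - epsilon + epsilon / len(q_values).
    """
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return q_values.index(max(q_values))


rng = random.Random(0)
q = [0.1, 0.7, 0.3, 0.2]   # hypothetical action values; action 1 is greedy
epsilon = 0.2
draws = [epsilon_greedy(q, epsilon, rng) for _ in range(20000)]
greedy_share = draws.count(1) / len(draws)
expected_share = 1 - epsilon + epsilon / len(q)
print(f"greedy action chosen {greedy_share:.3f} of the time (formula: {expected_share:.3f})")
```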

Code: MC Policy Evaluation and Control#

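A minimal sketch of first-visit MC policy evaluation. The environment is an assumption made for illustration: a five-state random walk (states 1 to 5, terminals at 0 and 6, reward +1 only when exiting on the right), evaluated under the uniformly random policy with $\gamma = 1$, which is safe here because every episode terminates. The true values are $V(s) = s/6$. Control would wrap the same averaging around $Q(s, a)$ and an $\varepsilon$-greedy policy.

```python
import random
from collections import defaultdict


def run_episode(rng, start=3):
    """Random walk on states 1..5; returns a list of (state, reward) pairs."""
    s, episode = start, []
    while 1 <= s <= 5:
        s_next = s + rng.choice((-1, 1))
        episode.append((s, 1.0 if s_next == 6 else 0.0))
        s = s_next
    return episode


def first_visit_mc(num_episodes, gamma=1.0, seed=0):
    rng = random.Random(seed)
    total = defaultdict(float)   # sum of first-visit returns per state
    count = defaultdict(int)     # number of first visits per state
    for _ in range(num_episodes):
        episode = run_episode(rng)
        # Backward pass: returns[t] holds G_t for every time step.
        g, returns = 0.0, [0.0] * len(episode)
        for t in range(len(episode) - 1, -1, -1):
            g = episode[t][1] + gamma * g
            returns[t] = g
        seen = set()
        for t, (s, _) in enumerate(episode):
            if s not in seen:    # only the first occurrence of s counts
                seen.add(s)
                total[s] += returns[t]
                count[s] += 1
    return {s: total[s] / count[s] for s in sorted(count)}


V = first_visit_mc(20000)
for s, v in V.items():
    print(f"V({s}) = {v:.3f}   true value {s / 6:.3f}")
```

Removing the `seen` check turns this into every-visit MC.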

Pros: model-free, conceptually transparent, unbiased.

Cons: needs complete episodes (so non-terminating tasks are out), high variance because the entire return depends on a long noisy trajectory, and no online updates.

Temporal Difference Learning: The Best of Both Worlds#

Temporal difference (TD) learning is the conceptual centrepiece of modern RL. It combines MC’s model-free spirit with DP’s bootstrapping in a single line of code, and it is the reason DQN, A3C, PPO, and SAC all exist.

TD(0): One-Step Updates#

After every single step, TD(0) moves $V(S_t)$ towards a one-step target:

$$V(S_t) \leftarrow V(S_t) + \alpha\,\big[\,r_t + \gamma V(S_{t+1}) - V(S_t)\,\big].$$

The bracketed quantity is the TD error:

$$\delta_t = r_t + \gamma V(S_{t+1}) - V(S_t).$$

It is the difference between what just happened ($r_t + \gamma V(S_{t+1})$) and what we expected ($V(S_t)$). The agent nudges its estimate towards reality, by an amount controlled by the learning rate $\alpha$.

Three things are special about this rule:

| Method | Update target | Bias / variance | Online? |
|---|---|---|---|
| Monte Carlo | $G_t$ (actual return) | Unbiased / high variance | No |
| TD(0) | $r_t + \gamma V(S_{t+1})$ | Biased initially / low variance | Yes |
| DP backup | $\mathbb{E}[r_t + \gamma V(S_{t+1})]$ | No noise, requires model | Yes |
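A minimal sketch of tabular TD(0) prediction. The environment is an assumption made for illustration: a five-state random walk (states 1 to 5, terminals at 0 and 6, reward +1 only when exiting on the right) with $\gamma = 1$, whose true values are $V(s) = s/6$. Unlike Monte Carlo, the estimate changes after every step rather than at the end of the episode.

```python
import random


def td0_random_walk(num_episodes, alpha=0.02, gamma=1.0, seed=0):
    rng = random.Random(seed)
    V = [0.0] * 7                 # indices 0 and 6 are terminal and stay at 0
    for _ in range(num_episodes):
        s = 3
        while 1 <= s <= 5:
            s_next = s + rng.choice((-1, 1))
            r = 1.0 if s_next == 6 else 0.0
            delta = r + gamma * V[s_next] - V[s]   # TD error
            V[s] += alpha * delta                  # online update, no model needed
            s = s_next
    return V[1:6]


estimates = td0_random_walk(5000)
for s, v in enumerate(estimates, start=1):
    print(f"V({s}) = {v:.3f}   true value {s / 6:.3f}")
```

Because $\alpha$ is constant, the estimates keep fluctuating around the true values instead of settling exactly; a decaying step size would remove that residual noise.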

The figure above shows how the choice of $\gamma$ alone reshapes how fast a Q-learning agent (a TD method) climbs out of the early-training swamp on a small grid task. Lower $\gamma$ propagates credit more locally and learns faster initially; higher $\gamma$ is slower to converge but values long-term success more.

Sarsa: On-Policy TD Control#

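Sarsa applies the TD idea to action values, bootstrapping from the action the agent actually takes next:

$$Q(S_t, A_t) \leftarrow Q(S_t, A_t) + \alpha\,\big[\,r_t + \gamma Q(S_{t+1}, A_{t+1}) - Q(S_t, A_t)\,\big].$$

The name spells out the quintuple it depends on: $(S_t, A_t, R_t, S_{t+1}, A_{t+1})$.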

Q-Learning: Off-Policy TD Control#

Q-learning bootstraps from the best next action instead:

$$Q(S_t, A_t) \leftarrow Q(S_t, A_t) + \alpha\,\big[\,r_t + \gamma \max_{a'} Q(S_{t+1}, a') - Q(S_t, A_t)\,\big].$$

That one-term difference, $\max_{a'} Q(S_{t+1}, a')$ in place of $Q(S_{t+1}, A_{t+1})$, changes everything. Sarsa evaluates the policy it follows (on-policy); Q-learning evaluates the greedy policy regardless of what it actually does (off-policy). Q-learning can therefore learn the optimal policy while exploring randomly.

Sarsa vs Q-Learning: Cliff Walking#

The classic illustration is cliff walking:


The agent starts at S, must reach G, and falls into the cliff C if it ever steps below the top row.

- Sarsa factors in the risk of an exploratory step pushing it off the cliff. It learns a safer path that hugs the top edge of the grid.

- Q-learning evaluates the greedy policy (which never explores), so it learns the optimal path right along the cliff edge — but during training it falls in much more often.

This is the cleanest possible illustration of the on-policy/off-policy trade-off: Sarsa is conservative, Q-learning is asymptotically optimal but more reckless during learning.

TD($\lambda$ ) and Eligibility Traces#

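TD($\lambda$) averages $n$-step returns with geometrically decaying weights:

$$G_t^\lambda = (1 - \lambda) \sum_{n=1}^{\infty} \lambda^{n-1} G_t^{(n)},$$

where $G_t^{(n)} = \sum_{k=0}^{n-1} \gamma^k r_{t+k} + \gamma^n V(S_{t+n})$ is the $n$-step return: $n$ real rewards followed by a bootstrap from the current estimate. The interpolation parameter $\lambda \in [0, 1]$ lets you smoothly trade between TD(0) ($\lambda = 0$) and Monte Carlo ($\lambda = 1$).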

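Eligibility traces implement the same idea online. Every state carries a trace that is bumped when the state is visited and decays otherwise, and each TD error is applied to all states in proportion to their trace:

$$e_t(s) = \gamma \lambda \, e_{t-1}(s) + \mathbf{1}[S_t = s], \qquad V(s) \leftarrow V(s) + \alpha \, \delta_t \, e_t(s)\quad\text{for all } s.$$

Recently visited states get more credit when a reward arrives, so a single TD error propagates information backwards through the whole recent trajectory in one step.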
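The effect of the trace is easiest to see on a single episode. A minimal sketch, assuming a hypothetical corridor of five states walked left to right with a reward of +1 on the final step and accumulating traces as in the update above:

```python
def td_lambda_episode(states, rewards, V, alpha, gamma, lam):
    """Run one episode of tabular TD(lambda) with accumulating traces, updating V in place.

    states has one more entry than rewards: states[t] -> rewards[t] -> states[t + 1].
    The last entry of states is terminal and its value is assumed to stay at 0.
    """
    e = [0.0] * len(V)
    for t, r in enumerate(rewards):
        s, s_next = states[t], states[t + 1]
        delta = r + gamma * V[s_next] - V[s]       # TD error at step t
        e = [gamma * lam * x for x in e]           # decay every trace
        e[s] += 1.0                                # bump the state just visited
        for i in range(len(V)):
            V[i] += alpha * delta * e[i]           # credit all states by their trace
    return V


# Hypothetical corridor: states 0..4, terminal state 5, reward only on the last step.
states = [0, 1, 2, 3, 4, 5]
rewards = [0.0, 0.0, 0.0, 0.0, 1.0]
v_td0 = td_lambda_episode(states, rewards, [0.0] * 6, alpha=0.5, gamma=0.9, lam=0.0)
v_tdl = td_lambda_episode(states, rewards, [0.0] * 6, alpha=0.5, gamma=0.9, lam=0.8)
print("lambda = 0.0:", [round(v, 3) for v in v_td0])
print("lambda = 0.8:", [round(v, 3) for v in v_tdl])
```

With $\lambda = 0$ only the state next to the reward changes after the first episode; with $\lambda = 0.8$ every state along the path receives a share that decays by $\gamma\lambda$ per step of distance.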

Code: Sarsa and Q-Learning#

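A minimal sketch of both algorithms on cliff walking. The layout and hyperparameters are assumptions borrowed from the standard textbook setup rather than taken from this article: a 4×12 grid with the cliff along the bottom row between S and G, a reward of -1 per step and -100 for falling (which sends the agent back to S), $\alpha = 0.5$, $\varepsilon = 0.1$, and $\gamma = 1$.

```python
import random

ROWS, COLS = 4, 12
START, GOAL = (3, 0), (3, 11)
MOVES = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def step(state, action):
    """Return (next_state, reward, done). Falling off the cliff resets the agent to START."""
    row = min(max(state[0] + MOVES[action][0], 0), ROWS - 1)
    col = min(max(state[1] + MOVES[action][1], 0), COLS - 1)
    if row == ROWS - 1 and 0 < col < COLS - 1:
        return START, -100.0, False
    return (row, col), -1.0, (row, col) == GOAL


def epsilon_greedy(Q, state, epsilon, rng):
    if rng.random() < epsilon:
        return rng.randrange(len(MOVES))
    return Q[state].index(max(Q[state]))


def train(method, episodes=500, alpha=0.5, gamma=1.0, epsilon=0.1, seed=0):
    """Tabular TD control; method is 'sarsa' or 'q_learning'."""
    rng = random.Random(seed)
    Q = {(r, c): [0.0] * len(MOVES) for r in range(ROWS) for c in range(COLS)}
    for _ in range(episodes):
        s, done = START, False
        a = epsilon_greedy(Q, s, epsilon, rng)
        while not done:
            s2, reward, done = step(s, a)
            a2 = epsilon_greedy(Q, s2, epsilon, rng)  # the action the agent takes next
            if method == "sarsa":
                bootstrap = Q[s2][a2]                 # on-policy: value of that action
            else:
                bootstrap = max(Q[s2])                # off-policy: value of the greedy action
            # Q at the goal is never updated, so the bootstrap is 0 on the final step.
            Q[s][a] += alpha * (reward + gamma * bootstrap - Q[s][a])
            s, a = s2, a2
    return Q


def greedy_path(Q, max_steps=100):
    """Follow the greedy policy from START for at most max_steps moves."""
    s, path = START, [START]
    for _ in range(max_steps):
        s, _, done = step(s, Q[s].index(max(Q[s])))
        path.append(s)
        if done:
            break
    return path


Q_sarsa = train("sarsa")
Q_qlearn = train("q_learning")
for name, Q in (("Sarsa", Q_sarsa), ("Q-learning", Q_qlearn)):
    path = greedy_path(Q)
    print(f"{name}: greedy path of {len(path) - 1} steps, reached goal: {path[-1] == GOAL}")
```

The two training loops differ only in the `bootstrap` line, which mirrors the one-term difference between the two update rules.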

Two Themes That Run Through Everything#

Two ideas appear and reappear in every algorithm above. It is worth pulling them out so they are easy to recognise later in the series.

Exploration vs Exploitation#

Every learning agent faces a permanent dilemma: use what I know, or test what I don’t? If you only ever pick the action you currently believe is best, you may never discover a better one. If you constantly randomise, you learn a lot but earn very little.

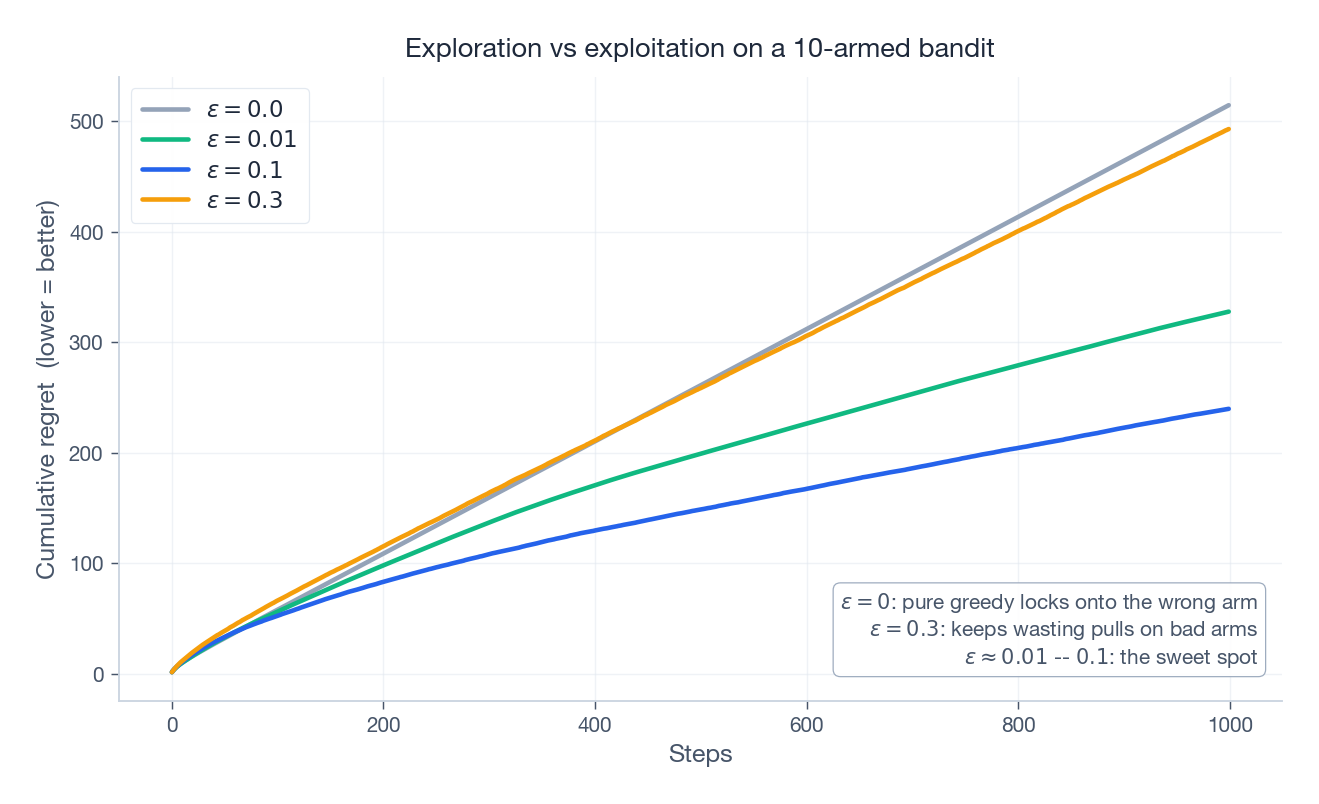

The figure above runs a classic 10-armed bandit experiment: each “arm” is a slot machine with an unknown average payoff, and the agent has 1000 pulls. Cumulative regret is the gap between the reward you would have collected by always pulling the best arm and the reward you actually collected.

- $\varepsilon = 0$ (pure greedy) often locks onto an arm that seemed good after a few pulls but isn’t actually the best — regret grows linearly forever.

- $\varepsilon = 0.3$ keeps wasting pulls on bad arms.

- $\varepsilon \in [0.01, 0.1]$ sits in the sweet spot.
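An experiment of this kind can be sketched in a few lines. The details are assumptions made for illustration and need not match the figure: arm means drawn from a standard normal, unit-variance Gaussian rewards, sample-average estimates, and regret measured in expected reward, averaged over 20 seeds.

```python
import random


def run_bandit(epsilon, pulls=1000, arms=10, seed=0):
    """Play one epsilon-greedy run and return the cumulative expected regret."""
    rng = random.Random(seed)
    means = [rng.gauss(0.0, 1.0) for _ in range(arms)]  # hidden average payoff of each arm
    best_mean = max(means)
    estimates = [0.0] * arms
    counts = [0] * arms
    regret = 0.0
    for _ in range(pulls):
        if rng.random() < epsilon:
            arm = rng.randrange(arms)                   # explore
        else:
            arm = estimates.index(max(estimates))       # exploit the current best guess
        reward = rng.gauss(means[arm], 1.0)
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # running mean
        regret += best_mean - means[arm]
    return regret


def average_regret(epsilon, runs=20):
    return sum(run_bandit(epsilon, seed=seed) for seed in range(runs)) / runs


for eps in (0.0, 0.01, 0.1, 0.3):
    print(f"epsilon = {eps:<4}  average regret after 1000 pulls: {average_regret(eps):7.1f}")
```

The same seed produces the same set of arms for every value of $\varepsilon$, so the comparison is between strategies rather than between lucky and unlucky bandits.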

This same trade-off shows up dressed in different clothes throughout the series: $\varepsilon$-greedy in DQN, entropy bonuses in PPO, intrinsic motivation in Part 4, Thompson sampling in Bayesian RL. The names differ, the problem doesn’t.

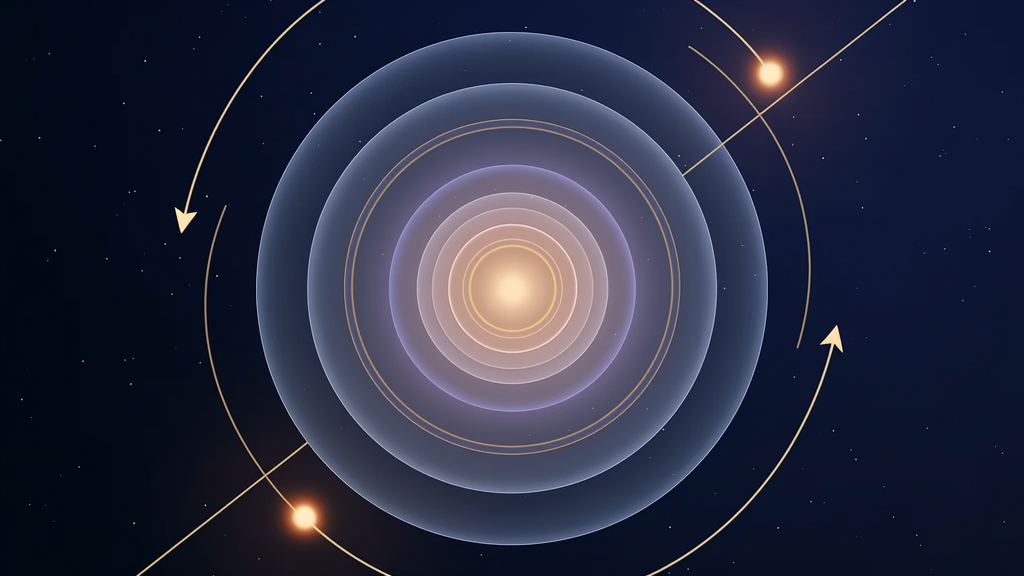

The Discount Factor as a Planning Horizon#

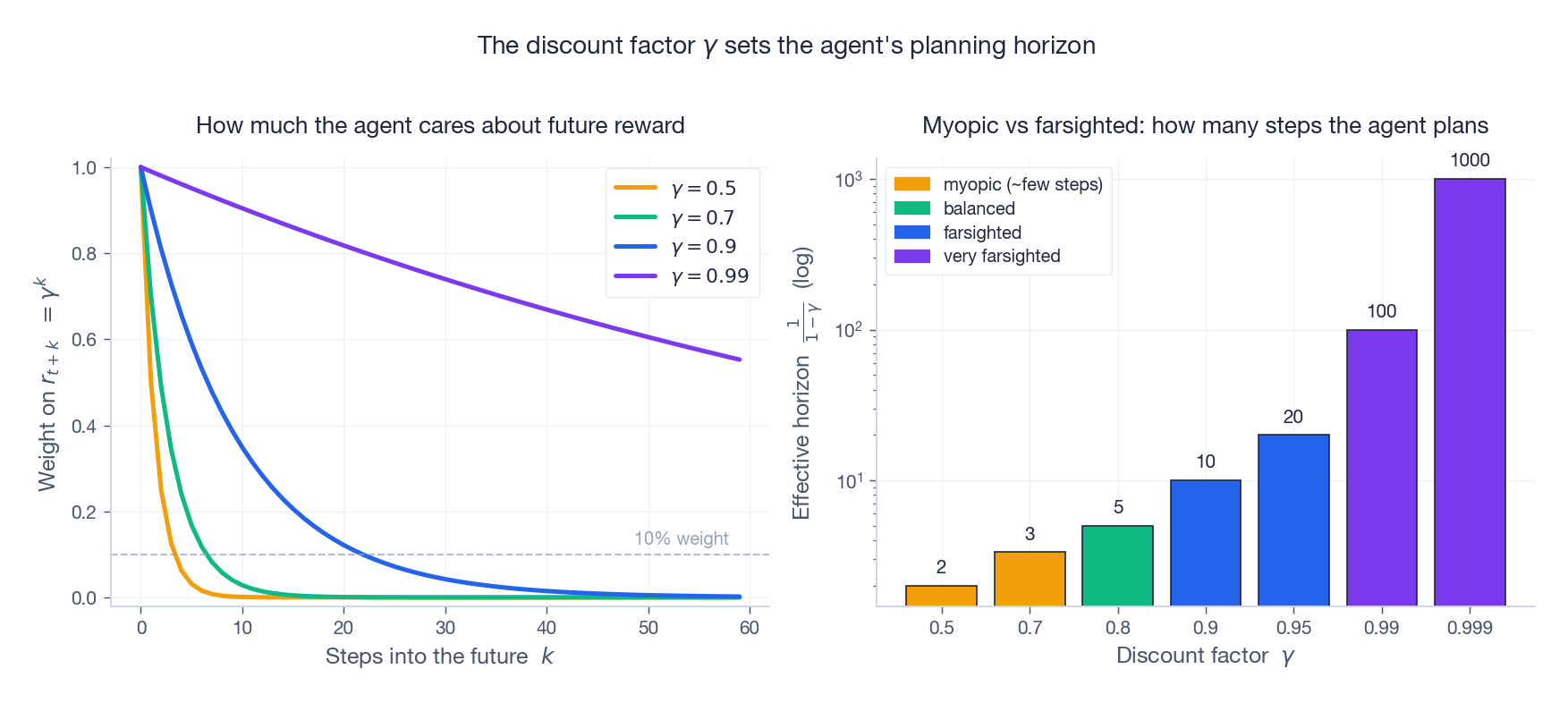

The discount factor $\gamma$ is more than a numerical knob. It quietly defines how far into the future the agent thinks. Two ways to see it:

- Reward weighting (left panel): a reward $k$ steps away is worth $\gamma^k$ of an immediate reward. With $\gamma = 0.5$ , a reward 10 steps out is already worth less than 0.1%; with $\gamma = 0.99$ , it still carries 90%.

- Effective horizon (right panel): the rough number of future steps that meaningfully contribute to $G_t$ is $1 / (1 - \gamma)$ . So $\gamma = 0.9$ means “I plan about 10 steps ahead,” $\gamma = 0.99$ means “100 steps,” and $\gamma = 0.999$ means “1000 steps.” Notice the y-axis is logarithmic — pushing $\gamma$ from 0.99 to 0.999 is a tenfold expansion of the planning horizon, not a 1% tweak.
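Both readings of $\gamma$ are one-line formulas, sketched here with illustrative function names:

```python
def reward_weight(gamma, k):
    """Weight of a reward that arrives k steps in the future."""
    return gamma ** k


def effective_horizon(gamma):
    """Rough number of future steps that matter: 1 / (1 - gamma)."""
    return 1.0 / (1.0 - gamma)


for gamma in (0.5, 0.9, 0.99, 0.999):
    print(f"gamma = {gamma:<5}  weight at k = 10: {reward_weight(gamma, 10):8.4%}"
          f"   horizon: {effective_horizon(gamma):7.0f} steps")
```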

Practical consequence: $\gamma$ should match the time scale of your task. Game-playing agents often use $\gamma \approx 0.99$ . Recommendation systems that care about decade-long lifetime value might push it higher. Robot reflex controllers might use $\gamma = 0.9$ or lower because anything more than a second is irrelevant.

Choosing the Right Method#

A quick decision guide:

| Situation | Recommended method | Why |
|---|---|---|
| Model is known | Dynamic programming | Exact, no sampling needed |
| Short episodes, no model | Monte Carlo | Unbiased, conceptually simple |
| Long or continuing tasks | TD (Q-learning / Sarsa) | Online, low variance |
| Need optimal policy | Q-learning | Off-policy, converges to $Q^*$ |
| Safety matters | Sarsa | Factors exploration risk into learning |
| Long credit-assignment chains | TD($\lambda$) / Sarsa($\lambda$) | Eligibility traces propagate fast |

Three sentences to memorise:

Value = immediate reward + discounted future value.

Policy improvement = greedily prefer high-value actions.

Learning = use experience to correct value estimates.

Summary#

This chapter built the foundation that the rest of the series stands on:

- MDPs formalise the agent-environment loop: states, actions, transitions, rewards, discount.

- Bellman equations give value functions a recursive structure that turns long-horizon planning into local arithmetic.

- Dynamic programming solves an MDP exactly when the model is known.

- Monte Carlo methods learn from complete episodes without a model.

- Temporal difference methods combine the best of both worlds: model-free and online.

- Exploration vs exploitation and the discount factor are the two hidden levers that show up in every algorithm to come.

All of these methods assume small, discrete state and action spaces where you can store one value per cell of a table. When state spaces explode — think $256^{84 \times 84}$ Atari frames — tabular methods break down completely.

Next up: Part 2 introduces Deep Q-Networks (DQN) — using neural networks to approximate $Q$ , with experience replay and target networks to keep training stable. That is the bridge from textbook RL to the algorithms that beat humans at Atari.

