Reinforcement Learning (2): Q-Learning and Deep Q-Networks (DQN)

How DQN combined neural networks with Q-Learning to master Atari games -- covering experience replay, target networks, Double DQN, Dueling DQN, Prioritized Experience Replay, and Rainbow.

In December 2013, a small DeepMind team uploaded a paper to arXiv with a striking claim: a single neural network, trained from raw pixels and the score, learned to play seven Atari games — and beat the previous best on six of them. No game-specific features. No hand-coded heuristics. The same architecture for Pong, Breakout, and Space Invaders. The algorithm was Deep Q-Network (DQN), and it kicked off the deep reinforcement learning era.

DQN was not invented from scratch. It is Q-Learning — a 1989 tabular algorithm by Chris Watkins — with a neural network in place of the lookup table, plus two engineering tricks that keep training from blowing up. This article explains exactly which problems those tricks solve, walks through a complete PyTorch implementation, and surveys the variants that turned DQN from an Atari demo into a workhorse of modern RL.

What You Will Learn#

- Why tabular Q-Learning collapses in high-dimensional state spaces

- The Deadly Triad — the three ingredients that make naive deep RL diverge

- DQN’s two innovations: experience replay and target networks — and which Triad failure each one fixes

- A complete, runnable Atari DQN agent in PyTorch

- The post-DQN family: Double DQN, Dueling DQN, Prioritized Experience Replay, and Rainbow

Prerequisites: Part 1 for MDPs, the Bellman equations, and the temporal-difference (TD) intuition.

Q-Learning Foundations#

The Bellman optimality equation, reread#

$$Q^*(s, a) \;=\; \mathbb{E}_{s' \sim P(\cdot|s,a)}\Big[R(s,a,s') + \gamma \max_{a'} Q^*(s', a')\Big]$$Read it as a contract: the value of taking action $a$ in state $s$ today equals the immediate reward plus the discounted best future you can achieve from $s'$ . Once you know $Q^*$ , the optimal policy is just $\pi^*(s) = \arg\max_a Q^*(s, a)$ — no planning, no search, just a table lookup.

So the entire problem reduces to: estimate $Q^*$ . Q-Learning is one way.

The Q-Learning update rule#

$$Q(S_t, A_t) \;\leftarrow\; Q(S_t, A_t) + \alpha \underbrace{\Big[r_t + \gamma \max_{a'} Q(S_{t+1}, a') - Q(S_t, A_t)\Big]}_{\text{TD error } \delta_t}$$The bracketed quantity is the TD error — the gap between a one-step bootstrapped estimate and the current value. Positive $\delta_t$ means we underestimated and should nudge up; negative means the opposite.

Two properties make Q-Learning special:

- Off-policy. The target uses $\max_{a'}$ — the greedy action — regardless of which action the agent actually picks next. The behaviour policy (typically epsilon-greedy) can explore freely; the target policy stays greedy. This decoupling is the source of Q-Learning’s power and, later, its instability.

- Convergence guarantee. Watkins and Dayan (1992) proved that if every state-action pair is visited infinitely often and the learning rate satisfies the Robbins-Monro conditions ($\sum_t \alpha_t = \infty$ , $\sum_t \alpha_t^2 < \infty$ ), then $Q(s, a) \to Q^*(s, a)$ with probability one.

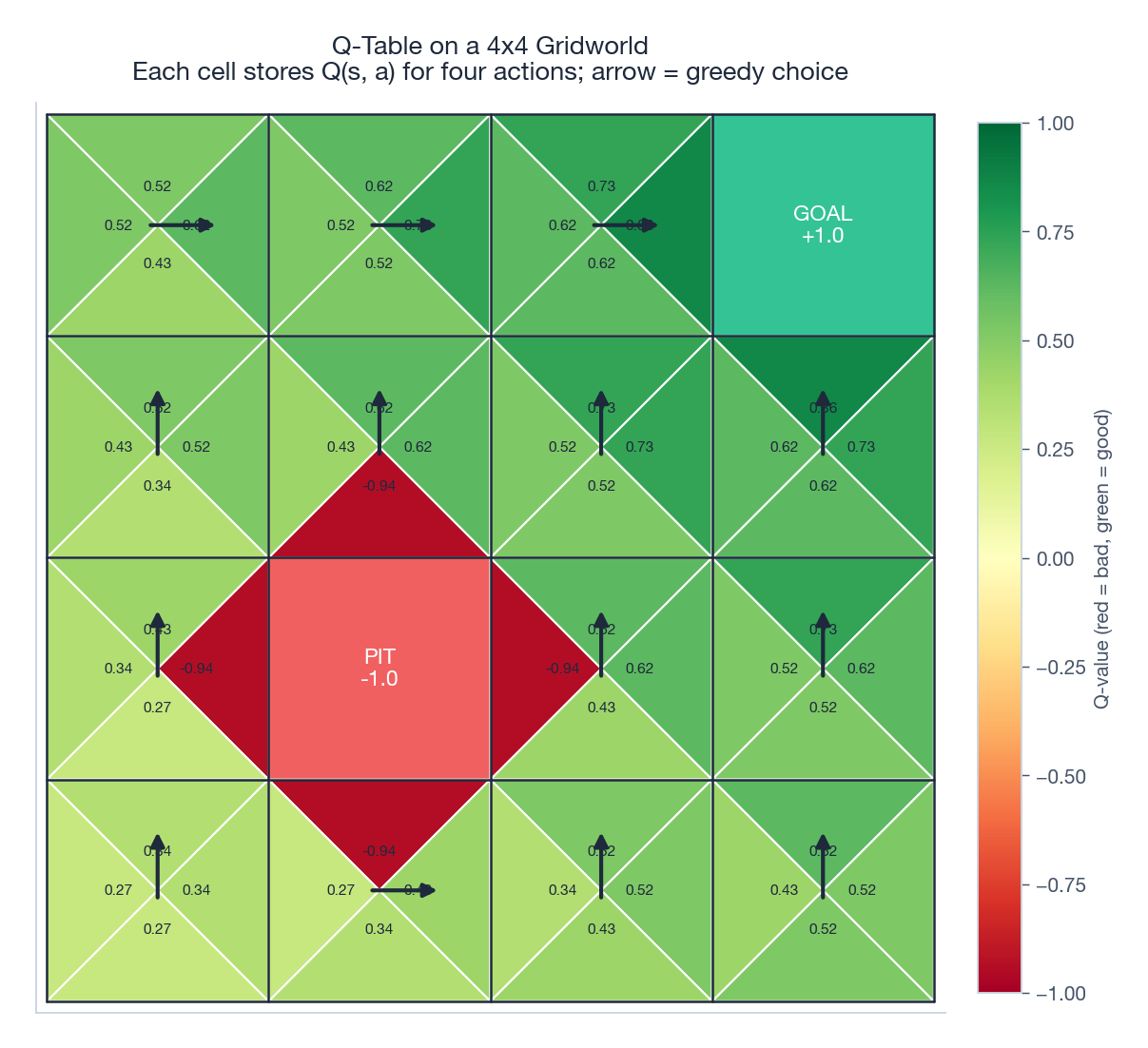

A trained Q-table is a small but informative object. The figure below shows one for a 4x4 gridworld with a goal, a pit, and a -0.04 step cost. Each cell carries four numbers (one per action); the arrow marks the greedy choice that you would extract via $\arg\max_a Q(s, a)$ .

A worked example: Cliff Walking#

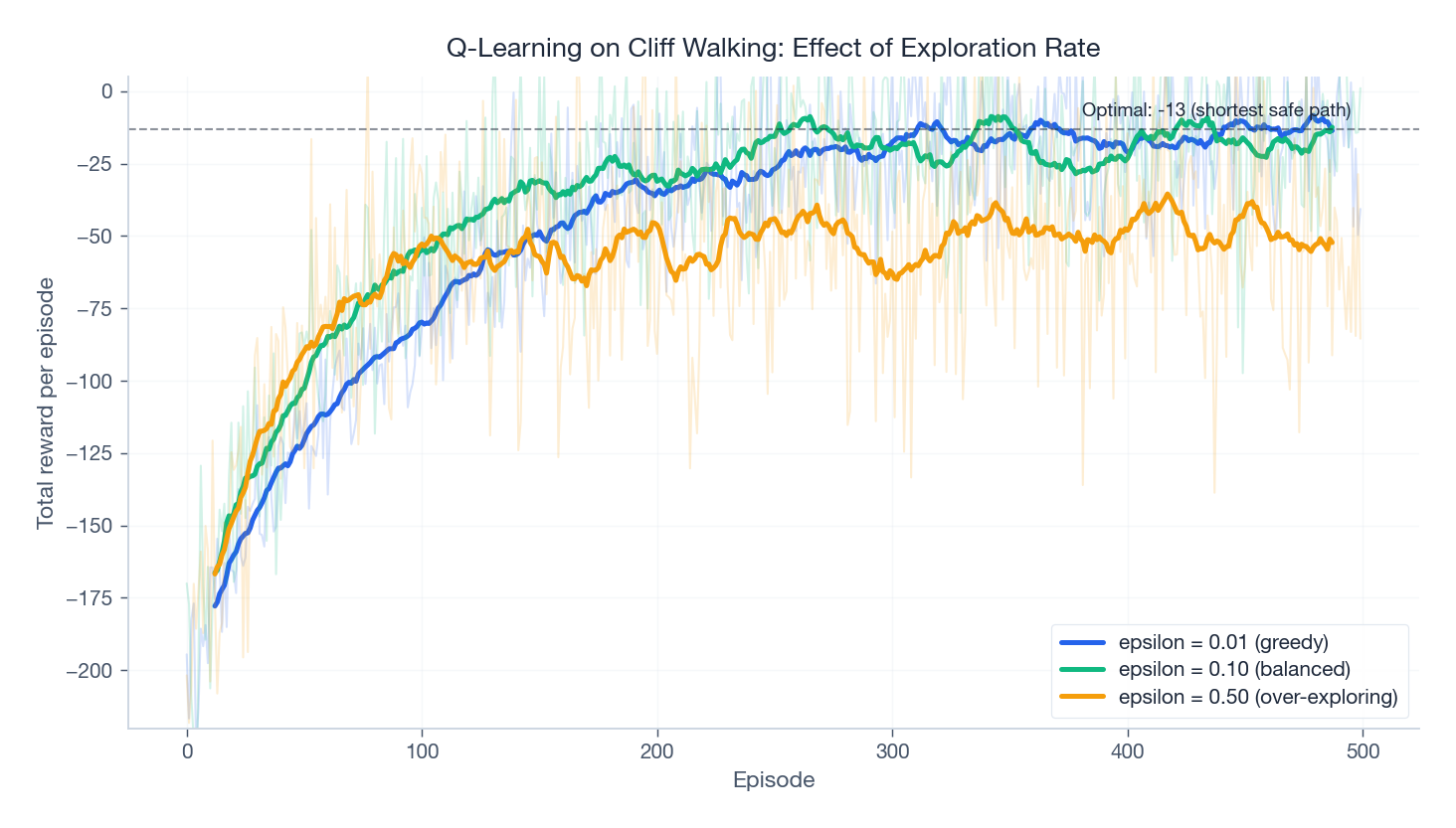

Sutton & Barto’s Cliff Walking is the standard sanity check for Q-Learning. The agent walks on a 4 x 12 grid; the bottom row between start and goal is a cliff that costs -100 and resets the episode. Every other step costs -1, so the optimal route is a one-step-above-the-cliff shortcut with return -13.

| |

The interesting thing is what happens when you sweep $\varepsilon$ . Too greedy and the agent never finds the optimal route. Too exploratory and it keeps falling off the cliff.

The 0.10 curve climbs fast and stabilises near the optimum. The 0.50 curve plateaus far from optimal because the agent keeps choosing random (often fatal) actions. The 0.01 curve eventually converges but takes longer because exploration is so sparse early on. This trade-off recurs everywhere in RL.

Why Tables Are Not Enough#

Cliff Walking has 48 states — a Q-table is 192 floats. Now consider Atari Breakout. The DQN paper preprocesses the screen into 84 x 84 grayscale frames and stacks the last four to give the agent a sense of motion. The state is a vector in $\{0, \ldots, 255\}^{84 \times 84 \times 4}$ , a space of size $256^{28224}$ . There are not enough atoms in the universe.

Two failures follow immediately:

- Storage is impossible. No table can hold a Q-value per state.

- Visit count is zero. Q-Learning’s convergence theorem requires every state-action pair to be visited infinitely often. The agent will never see the exact same 84x84x4 array twice.

The fix is to replace the table with a parametric function approximator: a function $Q(s, a; \theta)$ with parameters $\theta$ — in DQN, a convolutional network — that generalises across visually similar states. Two screens that differ only in noise or brick colour should map to similar Q-values.

The Deadly Triad#

Generalisation comes at a price. Sutton & Barto identify three properties that, together, can make a value-based RL algorithm diverge. They call this the Deadly Triad:

- Bootstrapping. The TD target $r + \gamma \max_{a'} Q(s', a'; \theta)$ uses the network’s own current estimate as ground truth.

- Function approximation. Updating $\theta$ to fix the Q-value at one state shifts the Q-values at every nearby state too — you cannot edit one cell of a “neural table” in isolation.

- Off-policy data. The transitions used for updates do not come from the policy whose value we are trying to learn.

Each pair is fine; tabular Q-Learning is off-policy + bootstrapping but has no function approximation. Linear function approximation + on-policy is fine. But all three at once is genuinely unsafe — you can construct toy MDPs where Q-values diverge to infinity.

DQN’s two innovations target the off-policy and bootstrapping legs of the Triad respectively.

DQN’s Core Innovations#

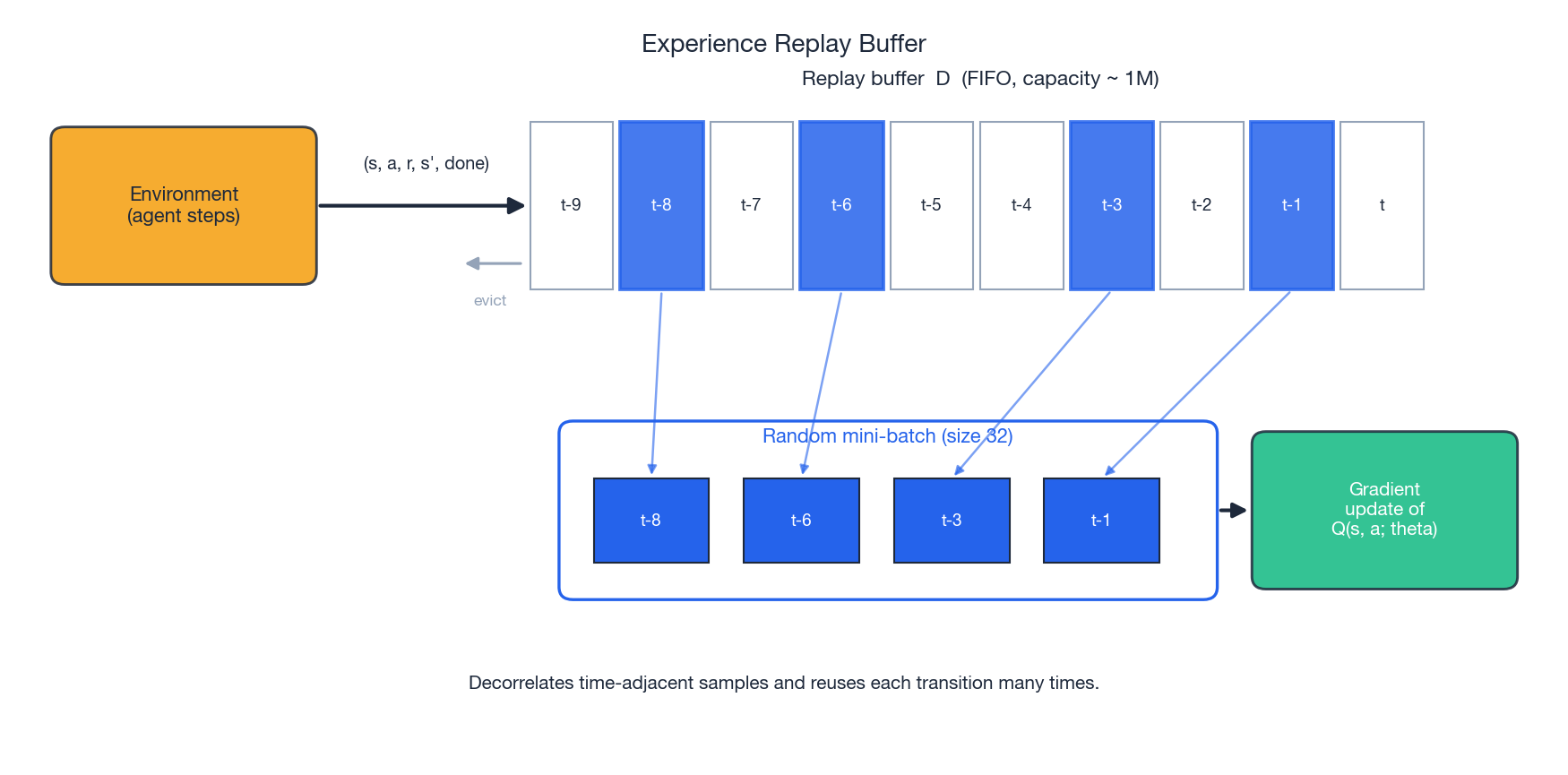

Experience replay: breaking temporal correlation#

In supervised learning, mini-batch SGD assumes the data are i.i.d. — you shuffle and sample. In RL, consecutive transitions $(s_t, a_t, r_t, s_{t+1})$ and $(s_{t+1}, a_{t+1}, r_{t+1}, s_{t+2})$ are wildly correlated: the next state is literally a function of the current one. Train on this stream directly and the gradient updates oscillate, the network forgets old experiences as soon as it leaves them, and convergence stalls.

DQN’s fix is the replay buffer: store every observed transition in a large FIFO queue (typically 1 million entries) and, at each gradient step, draw a random mini-batch from it.

This buys three benefits:

- Decorrelated mini-batches. A random sample of 32 transitions is approximately i.i.d., satisfying the assumption SGD relies on.

- Sample efficiency. Each transition is replayed many times — DeepMind reports roughly an order-of-magnitude improvement in sample efficiency.

- Smoother distribution shift. The buffer mixes data from many recent policies, so the training distribution evolves slowly even when the policy changes quickly.

| |

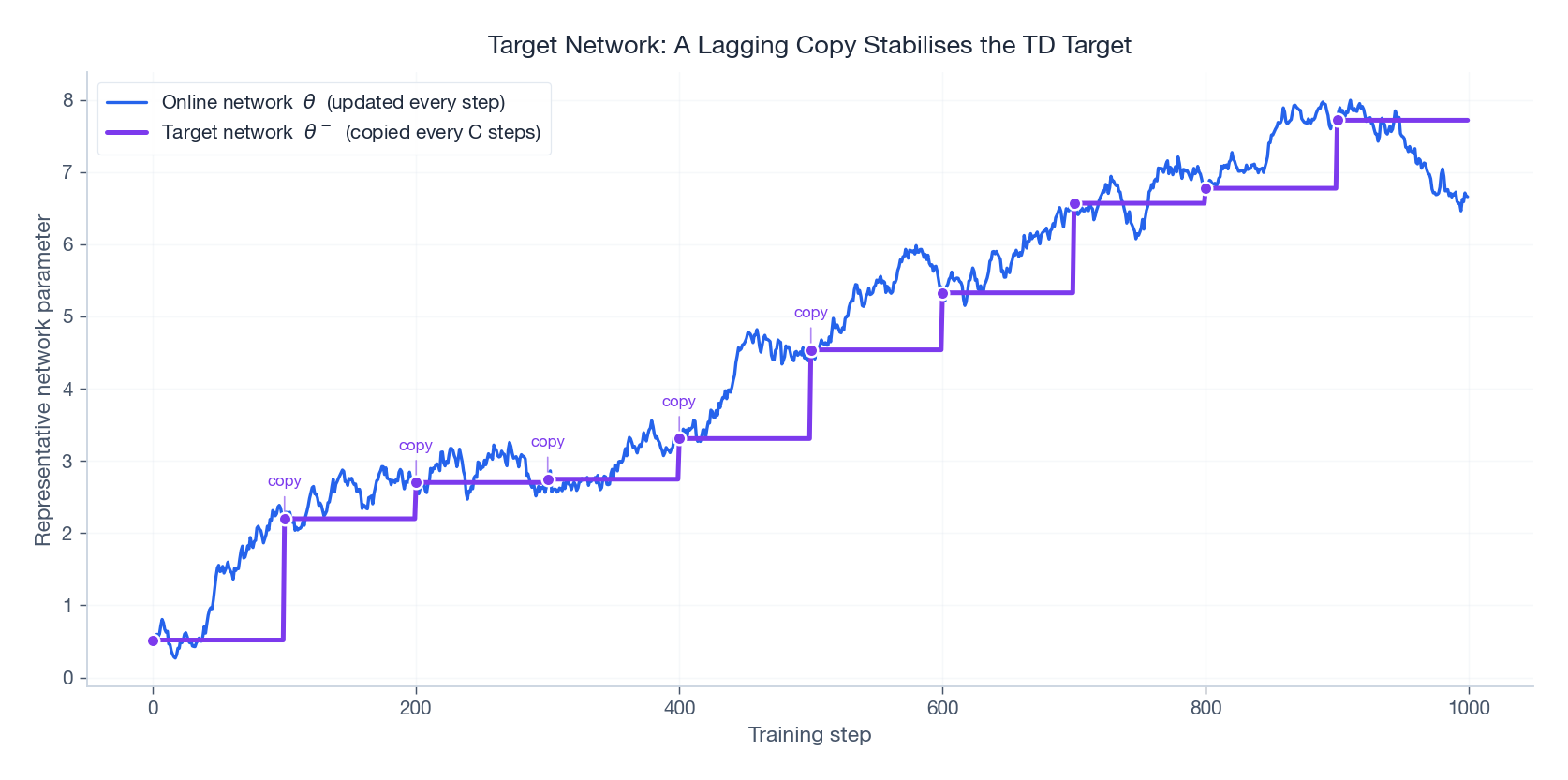

Target network: stop chasing your own tail#

Even with replay, the TD target $r + \gamma \max_{a'} Q(s', a'; \theta)$ moves every time we update $\theta$ — the target is a function of the parameters we are optimising. The geometry of the loss surface changes underfoot at every step. Empirically, this causes oscillation and divergence.

DQN keeps two copies of the network:

- The online network $Q(\cdot; \theta)$ , updated every step by gradient descent.

- The target network $Q(\cdot; \theta^-)$ , a frozen copy that the online network is periodically reset to.

Every $C$ steps (10,000 in the Nature paper), copy: $\theta^- \leftarrow \theta$ . Between copies the target is constant, turning each $C$ -step window into a near-supervised regression problem.

A continuous variant — the Polyak (soft) update $\theta^- \leftarrow \tau \theta + (1-\tau) \theta^-$ with $\tau \approx 0.005$ — is used by DDPG and SAC and works equally well.

A Complete Atari DQN#

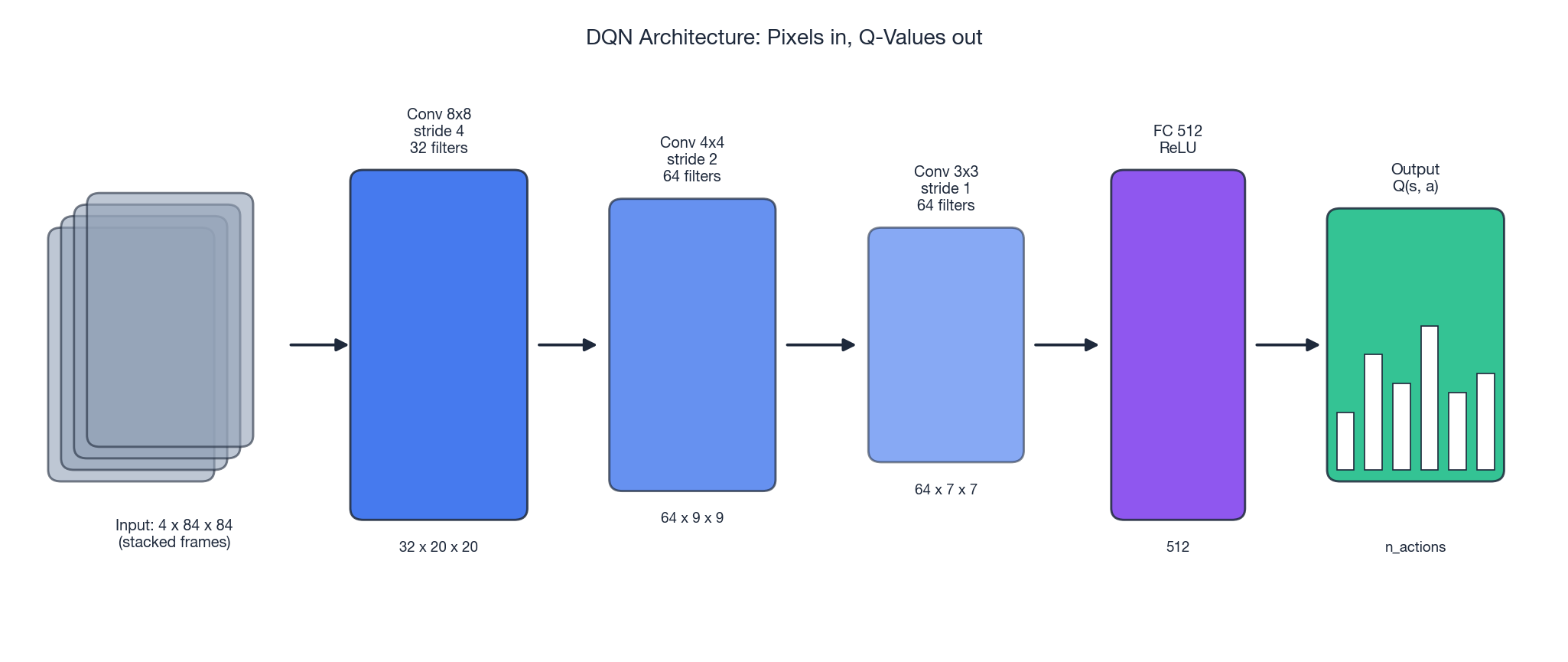

The DQN architecture is small by 2026 standards: three convolutional layers and two fully-connected layers, around 1.7M parameters. The convolutions look at local patches of the 84x84 screen; the FC layers map the flattened feature map to one Q-value per action.

| |

A few practical notes you will not find in the paper:

- Use Huber loss (

smooth_l1_loss), not pure MSE — a few wild TD errors will not throw the optimiser if the gradient is clipped to magnitude 1. - Gradient clipping to 10 (or norm 1) prevents the rare large update from destabilising everything.

- Frame-skip 4 with max-pool over the last two frames handles Atari’s flickering sprites; this is environment preprocessing, not part of the agent.

- On Pong, this agent reaches near-optimal play (mean episode reward $\approx +21$ ) in roughly 200-300 episodes on a single GPU.

DQN Variants: From Double to Rainbow#

The original DQN was published in 2013 (workshop) and 2015 (Nature). In the years that followed, a procession of papers each plugged one specific weakness. Eventually they were assembled into a single agent — Rainbow — whose components compose almost additively.

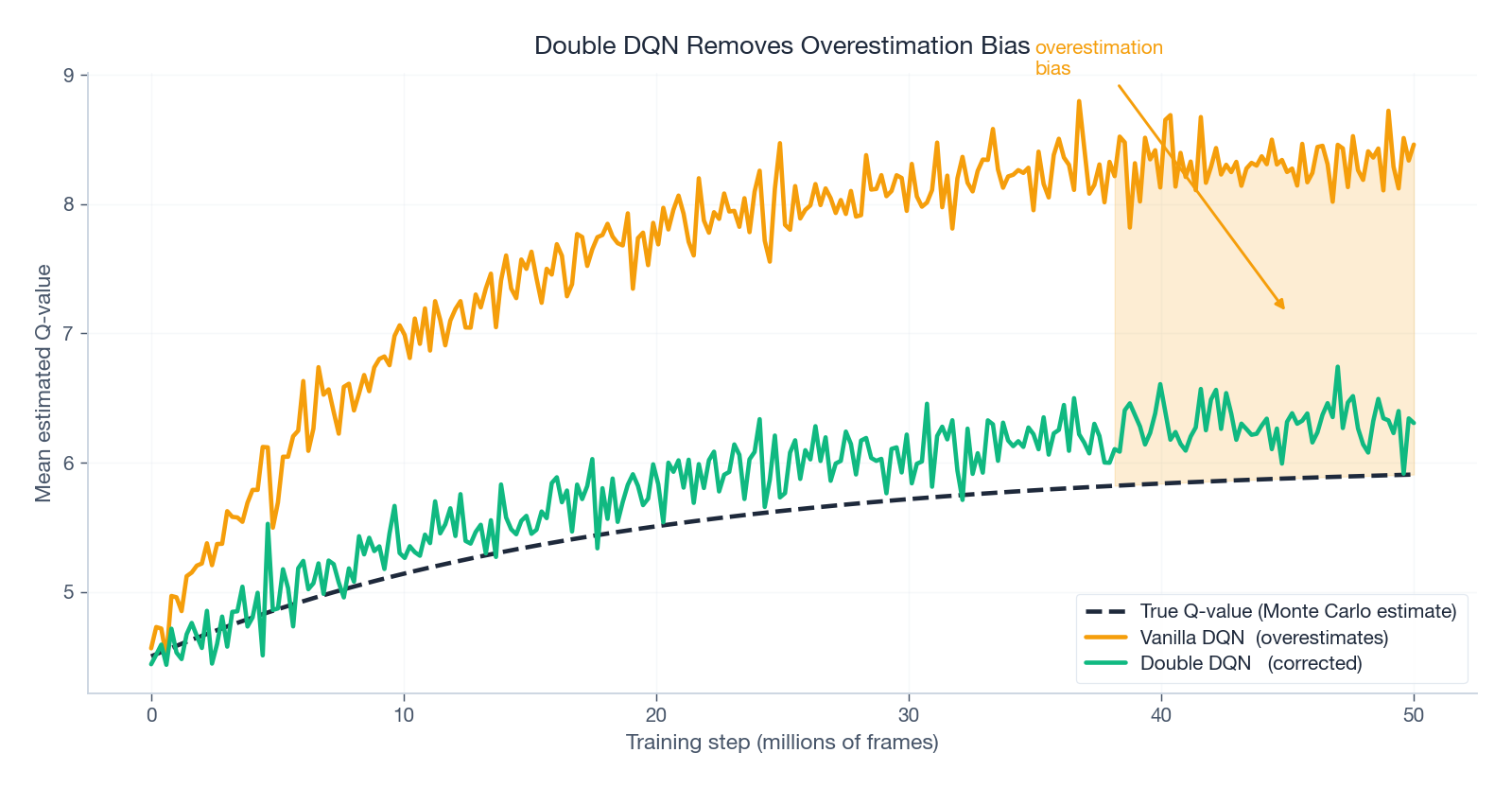

Double DQN: removing the maximisation bias#

$$\mathbb{E}\Big[\max_a \big(Q^*(s, a) + \varepsilon_a\big)\Big] \;\geq\; \max_a Q^*(s, a)$$The max preferentially picks whichever action got a positive error. Through bootstrapping, this overestimation seeps into every other state’s target. Empirically, vanilla DQN’s predicted Q-values drift far above the true return.

$$y_t = r_t + \gamma\, Q\big(s_{t+1},\; \arg\max_{a'} Q(s_{t+1}, a'; \theta);\; \theta^-\big)$$Errors in the two networks are partially independent, so the systematic bias largely cancels.

The code change is two lines:

| |

Dueling DQN: separating “how good is here?” from “how good is this action?”#

In a great many Atari frames, the choice of action barely matters — the ball is far away, the enemies are off-screen, you cannot influence anything for a few frames. Lumping state value and action advantage into one Q-head wastes capacity learning the same $V(s)$ over and over.

$$Q(s, a) \;=\; V(s) + \Big(A(s, a) - \tfrac{1}{|\mathcal{A}|} \sum_{a'} A(s, a')\Big)$$- $V(s)$ — “How good is it to be in this state?” — is action-independent.

- $A(s, a)$ — “How much better is action $a$ than average?” — is action-specific.

The mean-subtraction trick is what makes the decomposition identifiable; without it $V$ and $A$ could absorb arbitrary constants from each other.

| |

Dueling pairs especially well with Double DQN — the two changes attack different weaknesses and stack cleanly.

Prioritized Experience Replay#

Uniform sampling treats every transition as equally informative, but that is plainly false: a transition where the prediction was already correct teaches almost nothing, while one with a large TD error contains a strong learning signal.

$$p_i \;\propto\; \big(|\delta_i| + \varepsilon\big)^\alpha$$ $$w_i = \Big(\tfrac{1}{N \cdot p_i}\Big)^\beta$$with $\beta$ annealed from 0.4 toward 1.0 across training — low $\beta$ early when the bias hurts less, full correction late when stability matters more.

Multi-step targets, distributional RL, NoisyNets#

Three more pieces complete Rainbow:

- $$ y_t = \sum_{k=0}^{n-1} \gamma^k r_{t+k} + \gamma^n \max_{a'} Q(s_{t+n}, a'; \theta^-) $$

Distributional RL (C51), from Bellemare et al. (2017), learns the entire distribution of returns rather than just the mean. The categorical projection onto a fixed support of 51 atoms gives the method its name. The full distribution carries information that the mean discards, especially in stochastic environments.

NoisyNet replaces epsilon-greedy with parameterised noise injected directly into the FC layer weights. Exploration is thus state-dependent and learned, rather than a hand-tuned schedule.

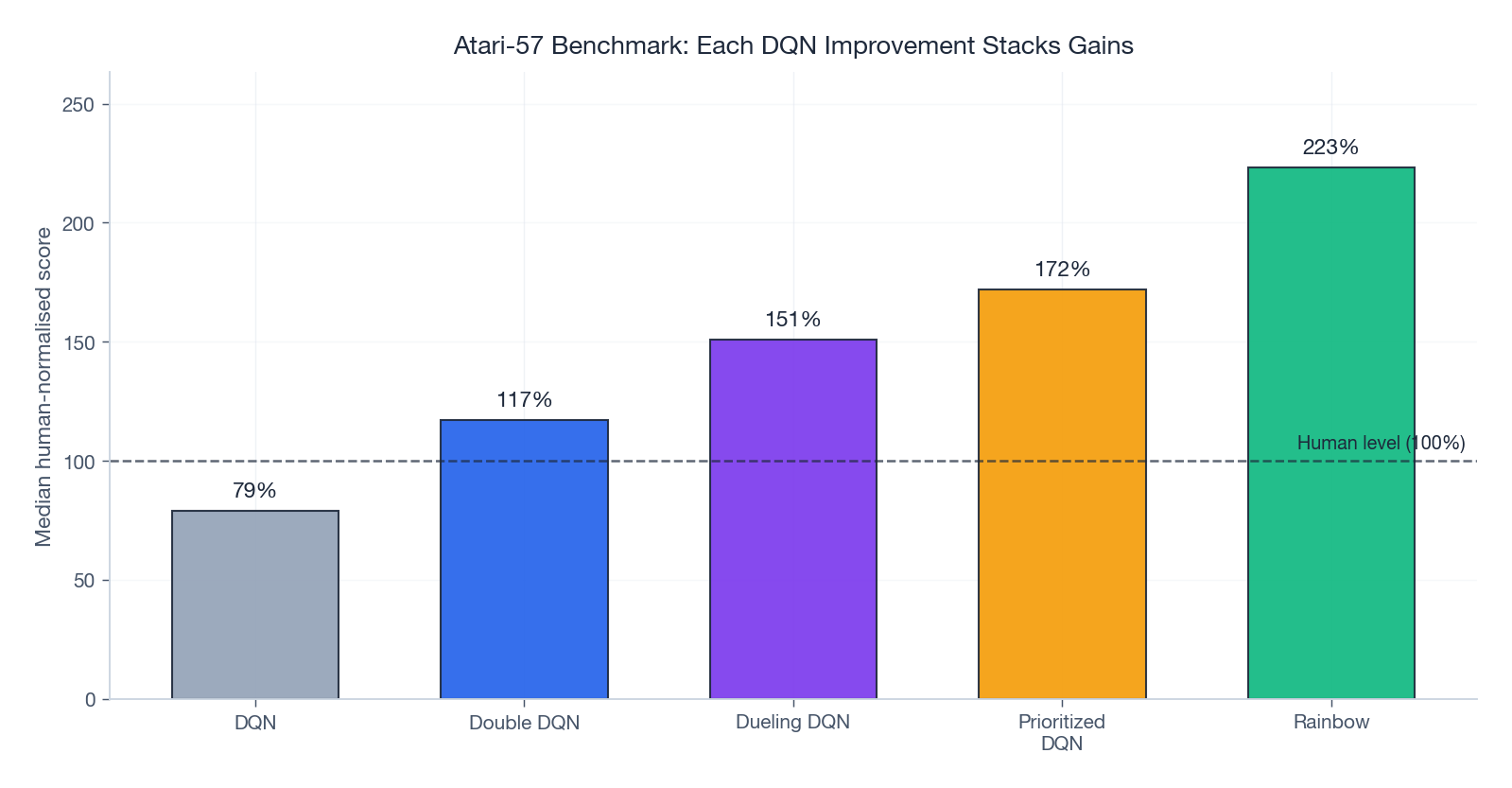

Rainbow#

Hessel et al. (2018) put all six together (DQN base + Double + Dueling + PER + n-step + distributional + NoisyNet) and ran a careful ablation. Every single component contributed; their combination beat each individual variant by a clear margin.

The numbers above are median scores normalised so that the human baseline = 100% and a random agent = 0%. DQN alone is below human. Each subsequent improvement adds tens of percentage points; Rainbow more than doubles human performance on the median game.

Practical Notes#

A starter hyperparameter table#

| Hyperparameter | Typical value | Comment |

|---|---|---|

| Replay capacity | 100K - 1M transitions | Larger is better, RAM-bound |

| Mini-batch size | 32 - 128 | 32 is the Nature default |

| Learning rate | 1e-4 to 3e-4 | Adam, 2.5e-4 is a safe starting point |

| Discount $\gamma$ | 0.99 | Lower for short horizons |

| $\varepsilon$ schedule | 1.0 -> 0.01 | Linear over ~1M frames |

| Target update period $C$ | 10K steps | Or soft update with $\tau \approx 0.005$ |

| Frame skip | 4 | Atari preprocessing |

| Gradient clipping | norm 10 | Use Huber loss as well |

A debugging checklist#

- Solve CartPole first. A correctly implemented DQN solves CartPole in under 200 episodes on CPU. If yours cannot, the bug is in your code, not your hyperparameters.

- Watch the Q-value magnitudes. They should rise and then plateau near a sensible scale (for Atari, single or double digits). If they drift past 1000 you have a divergence bug.

- Track the TD error. It should fall and stay bounded. A monotonic upward trend means the target is escaping.

- Inspect the action distribution. If the agent only ever takes one action, the network’s output is collapsing — check for dead ReLUs, broken initialisation, or a runaway learning rate.

When DQN, when something else?#

| Aspect | DQN family | Policy gradient (PPO, SAC, …) |

|---|---|---|

| Action space | Discrete only | Discrete and continuous |

| Sample efficiency | High (replay reuses data) | Lower (PPO is on-policy) |

| Stability | Needs the target network + tricks | Generally easier to tune |

| Best fit | Atari, board games, discrete control | Robotics, locomotion, continuous control |

DQN’s hard limitation is the discrete action space: $\arg\max_a Q(s, a)$ is trivial over five buttons but infeasible over a real-valued joint angle. The next article picks up exactly there.

Summary#

DQN’s contribution was as much engineering as it was theory. The neural network in place of the table is the obvious change; the experience replay buffer and the target network are the two ideas that turn an unstable algorithm into one that actually trains. Together they neutralise the off-policy and bootstrapping legs of the Deadly Triad enough to get useful learning out of a non-linear function approximator.

The post-DQN variants each add a clean fix to a clean failure: Double DQN removes overestimation bias, Dueling separates state value from action advantage, Prioritized Replay focuses learning on surprising transitions, and Rainbow shows that these fixes compose. The same building blocks survive into modern agents — you will see replay buffers and target networks in SAC, in offline RL, and in many recent LLM-RL hybrids.

Next: Part 3 introduces policy gradient methods and actor-critic architectures — the family that handles continuous actions and underlies PPO, SAC, and the policy half of every modern algorithm.

References#

- Mnih, V., et al. (2013). Playing Atari with Deep Reinforcement Learning. NIPS Deep Learning Workshop.

- Mnih, V., et al. (2015). Human-level control through deep reinforcement learning. Nature, 518, 529-533.

- Watkins, C. J., & Dayan, P. (1992). Q-learning. Machine Learning, 8(3-4), 279-292.

- van Hasselt, H., Guez, A., & Silver, D. (2016). Deep RL with Double Q-learning. AAAI.

- Wang, Z., et al. (2016). Dueling Network Architectures for Deep RL. ICML.

- Schaul, T., et al. (2016). Prioritized Experience Replay. ICLR.

- Bellemare, M., Dabney, W., & Munos, R. (2017). A Distributional Perspective on Reinforcement Learning. ICML.

- Fortunato, M., et al. (2018). Noisy Networks for Exploration. ICLR.

- Hessel, M., et al. (2018). Rainbow: Combining Improvements in Deep Reinforcement Learning. AAAI.

- Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). MIT Press. — Chapter 11 for the Deadly Triad.

Reinforcement Learning 12 parts

- 01 Reinforcement Learning (1): Fundamentals and Core Concepts

- 02 Reinforcement Learning (2): Q-Learning and Deep Q-Networks (DQN) you are here

- 03 Reinforcement Learning (3): Policy Gradient and Actor-Critic Methods

- 04 Reinforcement Learning (4): Exploration Strategies and Curiosity-Driven Learning

- 05 Reinforcement Learning (5): Model-Based RL and World Models

- 06 Reinforcement Learning (6): PPO and TRPO — Trust Region Policy Optimization

- 07 Reinforcement Learning (7): Imitation Learning and Inverse RL

- 08 Reinforcement Learning (8): AlphaGo and Monte Carlo Tree Search

- 09 Reinforcement Learning (9): Multi-Agent Reinforcement Learning

- 10 Reinforcement Learning (10): Offline Reinforcement Learning

- 11 Reinforcement Learning (11): Hierarchical RL and Meta-Learning

- 12 Reinforcement Learning (12): RLHF and LLM Applications