Reinforcement Learning (3): Policy Gradient and Actor-Critic Methods

From REINFORCE to SAC: how policy gradient methods optimise policies directly, handle continuous actions naturally, and power modern algorithms such as PPO, TD3, and SAC.

DQN showed that deep RL can master Atari, but it has a hard ceiling: it only works in discrete action spaces. Ask it to control a robot arm with seven continuous joint angles and it breaks down, because choosing each action would require solving an inner optimisation problem over $a$.

Policy gradient methods take a fundamentally different route. Instead of learning a value function and deriving a policy from it, they directly optimise the policy. That single change opens the door to continuous actions, stochastic strategies, and problems where the optimal play is itself random (think rock-paper-scissors).

What You Will Learn

- Why policy gradients exist, and what the Policy Gradient Theorem actually says

- REINFORCE: the simplest policy-gradient algorithm and why its variance is so painful

- The Actor-Critic architecture and how the advantage function $A = Q - V$ shrinks variance

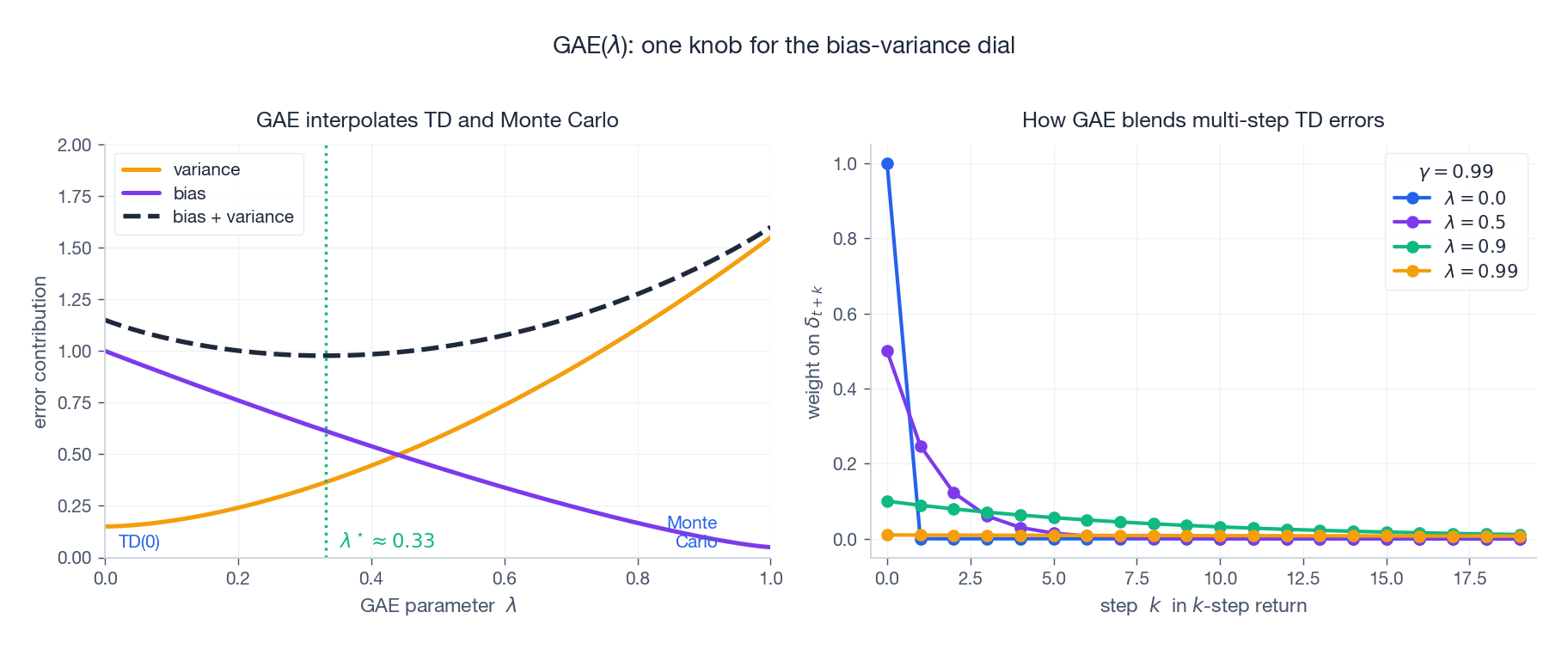

- GAE($\lambda$ ): one knob for the bias-variance trade-off

- DDPG / TD3 / SAC for continuous control

- A practical algorithm-selection guide grounded in current industrial usage

Prerequisites: Part 1 (MDPs, value functions, TD learning) and Part 2 (DQN, target networks, replay buffers).

Why Policy Gradients?

DQN learns $Q(s,a)$ and acts greedily: $\pi(s) = \arg\max_a Q(s,a)$. That indirect recipe creates four pain points:

- Discrete actions only. Computing $\arg\max$ over a continuous space is itself a non-trivial optimisation, repeated at every environment step.

- No stochastic policies. Greedy policies are deterministic, yet in matching-pennies-style games the optimal policy is genuinely random.

- Error amplification. The $\max$ operator amplifies Q-value approximation errors into a systematic overestimation bias, which Double DQN only partially fixed in Part 2.

- Ad-hoc exploration. $\epsilon$-greedy is a heuristic: it injects uniformly random actions at a fixed rate, with no principled link to what the agent does or does not know.

Policy gradient methods sidestep all four by parameterising the policy directly as $\pi_\theta(a|s)$:

- For discrete actions: a linear layer followed by a softmax produces a categorical distribution.

- For continuous actions: the network outputs the parameters of a Gaussian (mean $\mu_\theta(s)$ and log-std $\log\sigma_\theta(s)$), often squashed through $\tanh$ to bound the action.

Either way, the loss machinery is identical: pick an action by sampling from $\pi_\theta$, then push the parameters in a direction that makes good actions more likely.
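Here is a minimal sketch of the sampling step for both heads. It is written in plain Python so it runs without a deep-learning framework, and it assumes the network has already produced logits (discrete case) or a mean and log-std (continuous case). The function names are hypothetical, and the `tanh` squashing is left out for brevity.

```python
import math
import random

def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_categorical(logits, rng):
    """Discrete head (hypothetical helper): sample an action, return (a, log pi(a|s))."""
    probs = softmax(logits)
    a = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    return a, math.log(probs[a])

def sample_gaussian(mu, log_std, rng):
    """Continuous head, 1-D and unsquashed (hypothetical helper): a ~ N(mu, sigma^2)."""
    std = math.exp(log_std)
    a = rng.gauss(mu, std)
    log_prob = -0.5 * ((a - mu) / std) ** 2 - log_std - 0.5 * math.log(2.0 * math.pi)
    return a, log_prob

rng = random.Random(0)
action, log_prob = sample_categorical([2.0, 0.5, -1.0], rng)
```

The returned `log_prob` is the quantity whose gradient drives every update in this article. In a framework such as PyTorch, you would compute it on tensors so that autograd can differentiate it with respect to $\theta$.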

The Policy Gradient Theorem

Let $J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}[G_0]$ be the expected return of the trajectories produced by $\pi_\theta$. We want $\nabla_\theta J(\theta)$ so we can do gradient ascent. The Policy Gradient Theorem (Sutton et al., 2000) gives it in a form that can be estimated from samples:

$$\nabla_\theta J(\theta) \;=\; \mathbb{E}_{\pi_\theta}\!\Big[\sum_{t=0}^{T-1} \nabla_\theta \log \pi_\theta(a_t \mid s_t)\;\cdot\;Q^{\pi_\theta}(s_t, a_t)\Big]$$

Three points are worth noting:

- $\nabla_\theta \log \pi_\theta(a|s)$ is called the score function. It is the gradient that, on its own, would make action $a$ slightly more likely.

- $Q^\pi(s,a)$ acts as a scalar weight on that direction: a large positive value pushes hard toward $a$, and a negative value pushes away from it.

- The environment dynamics $P(s'|s,a)$ disappear from the formula. We never need to know them; sampled trajectories are enough. This is what makes the method model-free.
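To make the score function concrete, consider the simplest case: a softmax policy whose parameters $\theta$ are the logits themselves. This is a hypothetical, state-free setting chosen because the gradient has a closed form, $\nabla_\theta \log \pi_\theta(a) = \mathbf{e}_a - \pi_\theta$. The sketch below checks that form against finite differences. The function names are illustrative.

```python
import math

def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def score_softmax(theta, a):
    """Analytic score of a softmax policy whose logits are theta: onehot(a) - softmax(theta)."""
    probs = softmax(theta)
    return [(1.0 if i == a else 0.0) - p for i, p in enumerate(probs)]

def score_numeric(theta, a, eps=1e-6):
    """Central finite differences of log softmax(theta)[a], to check the analytic form."""
    grad = []
    for i in range(len(theta)):
        up, down = list(theta), list(theta)
        up[i] += eps
        down[i] -= eps
        grad.append((math.log(softmax(up)[a]) - math.log(softmax(down)[a])) / (2.0 * eps))
    return grad

theta = [0.3, -1.2, 0.8]
g = score_softmax(theta, a=1)   # positive in slot 1, negative elsewhere
```

A small step of $\theta$ along `g` raises $\pi(1)$ and lowers the other two actions. Multiplying `g` by $Q^\pi(s,1)$ gives exactly the weighting that appears in the theorem.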

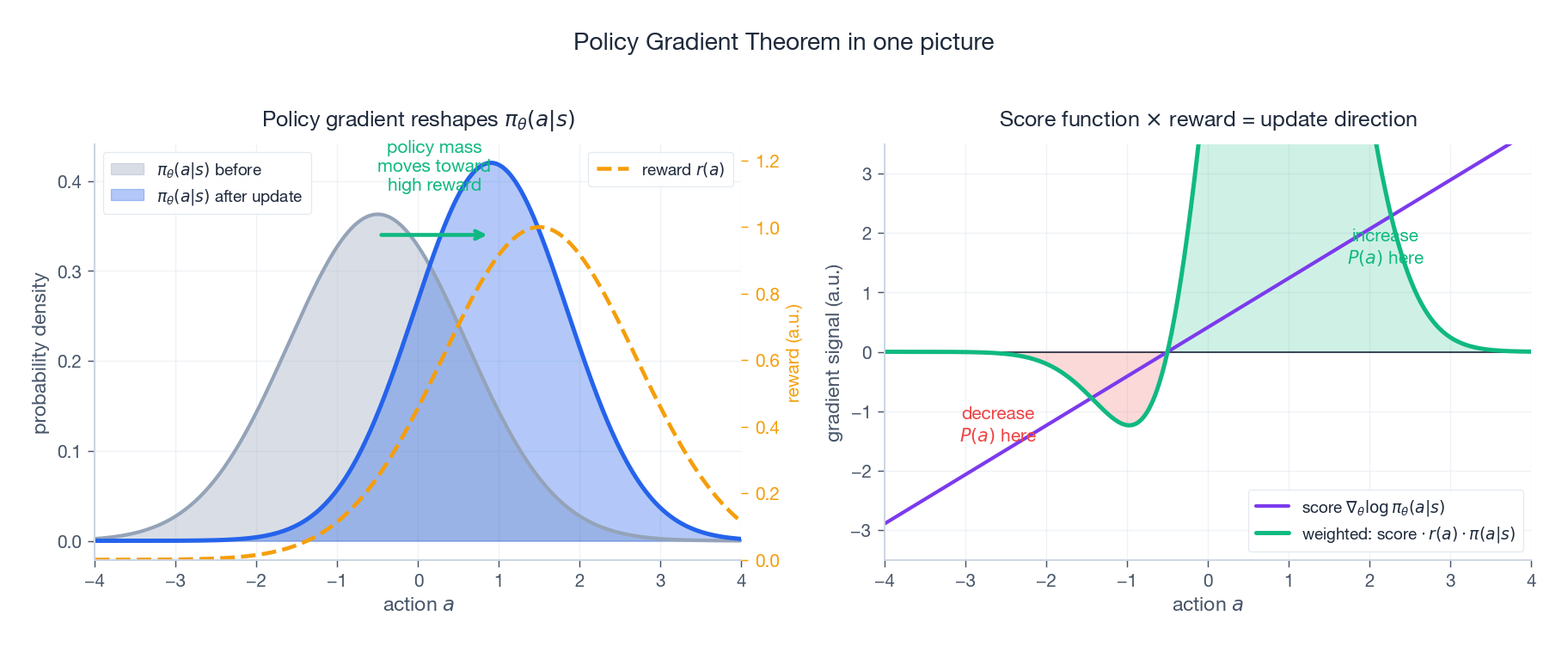

Visually, the theorem says “shift probability mass toward actions whose realised return was high”:

The left panel shows $\pi_\theta(a|s)$ before and after one (large, illustrative) update — mass migrates toward the reward bump. The right panel shows the update direction itself: the score function multiplied by the reward and the current density. Where the product is positive we increase $\pi(a)$ ; where it is negative we decrease it.

The Variance Problem and the Baseline Trick

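The raw estimator above is unbiased, but its variance is horrible. Returns can run into the hundreds or thousands, so one lucky episode can shove $\theta$ in an arbitrary direction.

The fix is to subtract a baseline $b(s)$ that depends only on the state. This leaves the gradient unbiased, because the baseline term has zero expectation:

$$\mathbb{E}_{a \sim \pi_\theta}\!\big[\,\nabla_\theta \log \pi_\theta(a|s)\,\cdot\,b(s)\,\big] \;=\; 0.$$

The natural choice is $b(s) = V^\pi(s)$, which turns $Q^\pi$ into the advantage

$$A^\pi(s,a) \;=\; Q^\pi(s,a) - V^\pi(s).$$

“Advantage” is exactly what it sounds like: how much better is action $a$ than the average action in state $s$? Because the advantage is centred around zero, the gradient signal stops swinging wildly.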
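The zero-expectation property of the baseline term takes one line to verify. Because $b(s)$ does not depend on $a$, it factors out of the expectation over actions. For continuous actions, replace the sum with an integral:

$$
\begin{aligned}
\mathbb{E}_{a \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(a|s)\,\cdot\,b(s)\big]
&= b(s) \sum_a \pi_\theta(a|s)\,\frac{\nabla_\theta \pi_\theta(a|s)}{\pi_\theta(a|s)} \\
&= b(s)\,\nabla_\theta \sum_a \pi_\theta(a|s) \;=\; b(s)\,\nabla_\theta 1 \;=\; 0.
\end{aligned}
$$

The argument breaks if $b$ depends on $a$, which is why the baseline must be a function of the state only.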

Actions with positive advantage are worth reinforcing, and actions with negative advantage should be suppressed. The greedy DQN view (“pick the biggest $Q$”) becomes a continuous, signed update: “lift the policy in proportion to how above-average each action turned out to be.”

REINFORCE: Monte Carlo Policy Gradient

REINFORCE (Williams, 1992) is the textbook starting point. It uses the actual discounted return $G_t$ as a Monte Carlo estimate of $Q^\pi(s_t, a_t)$.

Algorithm

- Roll out one full trajectory $\tau = (s_0, a_0, r_0, \ldots, s_T)$ under $\pi_\theta$.

- Compute the discounted return for every step: $G_t = \sum_{k=0}^{T-t-1} \gamma^k r_{t+k}$.

- Estimate the gradient: $\hat g = \sum_t \nabla_\theta \log \pi_\theta(a_t|s_t)\,(G_t - b(s_t))$.

- Ascend: $\theta \leftarrow \theta + \alpha\,\hat g$.

That is the whole algorithm. The simplicity is the point.
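Step 2 is usually computed backwards with the recursion $G_t = r_t + \gamma G_{t+1}$. This costs $O(T)$ instead of re-summing the tail for every $t$. A small sketch follows; the function name is hypothetical.

```python
def discounted_returns(rewards, gamma):
    """Return [G_0, ..., G_{T-1}] where G_t = sum_k gamma^k * r_{t+k}.

    Walks the episode backwards so each G_t reuses G_{t+1}: G_t = r_t + gamma * G_{t+1}.
    """
    returns = [0.0] * len(rewards)
    g = 0.0
    for t in reversed(range(len(rewards))):
        g = rewards[t] + gamma * g
        returns[t] = g
    return returns

print(discounted_returns([1.0, 1.0, 1.0], gamma=0.5))  # [1.75, 1.5, 1.0]
```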

REINFORCE with a Learned Baseline on CartPole

A REINFORCE agent with a learned baseline typically solves CartPole in 100-200 episodes. On harder tasks, REINFORCE quickly shows its weaknesses.
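CartPole needs an environment library, so the sketch below swaps in a dependency-free stand-in: a hypothetical three-state corridor where every step costs $-1$ and moving right from state 2 ends the episode. The policy is a tabular softmax, and the baseline is a tabular $V(s)$ regressed toward observed returns. The four numbered comments follow the four steps of the algorithm. All names and hyperparameters are illustrative assumptions, not the article's original CartPole code.

```python
import math
import random

# Hypothetical toy corridor standing in for CartPole: states 0, 1, 2; reaching 3 ends the episode.
N_STATES = 3
LEFT, RIGHT = 0, 1
MAX_STEPS = 50  # cap so a poor early policy cannot wander forever

def step(s, a):
    """Move left (bounded at 0) or right; every step costs -1, so shorter episodes are better."""
    s_next = max(0, s - 1) if a == LEFT else s + 1
    return s_next, -1.0, s_next == N_STATES

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_reinforce(episodes=3000, lr_pi=0.01, lr_v=0.1, gamma=1.0, seed=0):
    rng = random.Random(seed)
    theta = [[0.0, 0.0] for _ in range(N_STATES)]  # tabular policy logits theta[s][a]
    v = [0.0] * N_STATES                           # learned baseline b(s) = V(s)
    for _ in range(episodes):
        # 1. Roll out one full episode under the current policy.
        s, traj = 0, []
        for _ in range(MAX_STEPS):
            a = rng.choices([LEFT, RIGHT], weights=softmax(theta[s]), k=1)[0]
            s_next, r, done = step(s, a)
            traj.append((s, a, r))
            s = s_next
            if done:
                break
        # 2. Discounted returns, computed backwards.
        g, returns = 0.0, []
        for _, _, r in reversed(traj):
            g = r + gamma * g
            returns.append(g)
        returns.reverse()
        # 3. Gradient estimate: sum_t score(a_t|s_t) * (G_t - b(s_t)), using the pre-update baseline.
        grad = [[0.0, 0.0] for _ in range(N_STATES)]
        for (s_t, a_t, _), g_t in zip(traj, returns):
            probs = softmax(theta[s_t])
            for i in range(2):
                score = (1.0 if i == a_t else 0.0) - probs[i]  # d log pi / d theta[s_t][i]
                grad[s_t][i] += score * (g_t - v[s_t])
        # Regress the baseline toward the observed returns.
        for (s_t, _, _), g_t in zip(traj, returns):
            v[s_t] += lr_v * (g_t - v[s_t])
        # 4. Gradient ascent on the policy logits.
        for s_i in range(N_STATES):
            for i in range(2):
                theta[s_i][i] += lr_pi * grad[s_i][i]
    return theta, v

theta, v = train_reinforce()
print([round(softmax(row)[RIGHT], 3) for row in theta])  # P(right | s) per state
```

The whole episode's gradient is accumulated before $\theta$ changes, so every term is evaluated under the policy that generated the data, as REINFORCE requires.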

Strengths: simple, unbiased, action-space agnostic. Weaknesses: very high gradient variance, every trajectory is used exactly once (no off-policy reuse), and updates only happen at episode boundaries.

Actor-Critic: Replacing Returns with TD Estimates

REINFORCE waits until the end of the episode to compute $G_t$. That return contains noise from every future state, action, and reward. Can we do better?

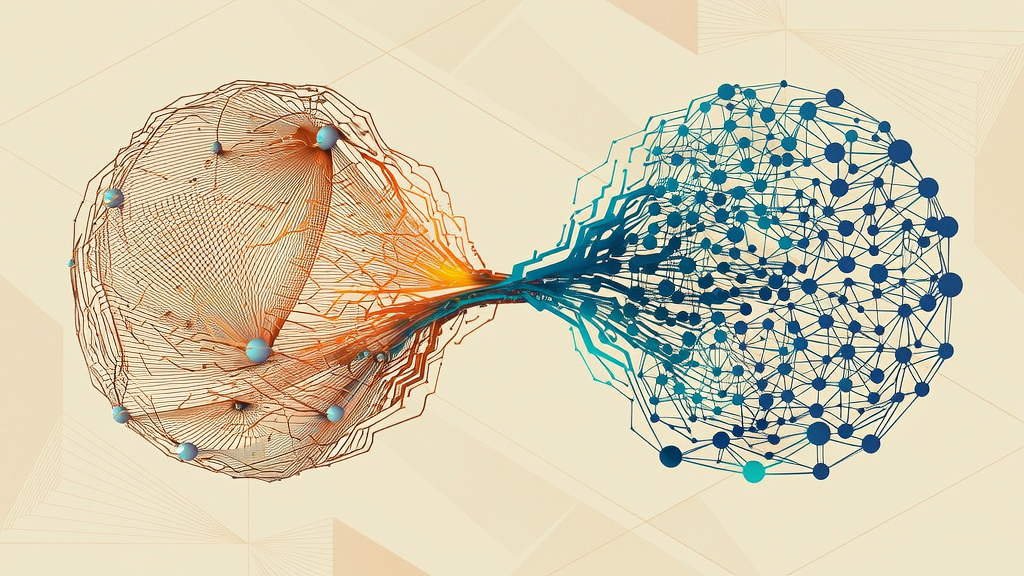

Actor-Critic says: train a second network — a critic $V_\phi(s)$ — and use it to bootstrap the gradient signal.

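- Actor $\pi_\theta(a|s)$: decides what to do.

- Critic $V_\phi(s)$: scores how good a state is.

Instead of the full return $G_t$, the actor's gradient is weighted by the one-step TD error

$$\delta_t \;=\; r_t + \gamma V_\phi(s_{t+1}) - V_\phi(s_t),$$

which estimates the advantage $A^\pi(s_t, a_t)$. This depends on only one step of randomness instead of the entire tail of the trajectory. The variance collapses; in exchange we accept some bias from the imperfect critic.

The two networks usually share a backbone, with separate actor and critic heads on top.

The TD error $\delta_t$ does double duty. It is the advantage weighting the actor's gradient, and its square is the critic's regression loss.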
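Here is a one-step actor-critic sketch on a hypothetical three-state corridor (reward $-1$ per step, terminal at the right end). The actor uses tabular logits and the critic a tabular $V(s)$. It updates after every environment step, using the same $\delta_t$ for both roles. The names and hyperparameters are illustrative assumptions.

```python
import math
import random

# Hypothetical toy corridor: states 0..2, state 3 is terminal, -1 reward per step.
N_STATES = 3
LEFT, RIGHT = 0, 1
MAX_STEPS = 50

def step(s, a):
    s_next = max(0, s - 1) if a == LEFT else s + 1
    return s_next, -1.0, s_next == N_STATES

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_actor_critic(episodes=2000, lr_pi=0.05, lr_v=0.1, gamma=0.99, seed=0):
    rng = random.Random(seed)
    theta = [[0.0, 0.0] for _ in range(N_STATES)]  # actor: tabular logits
    v = [0.0] * N_STATES                           # critic: tabular V(s)
    for _ in range(episodes):
        s = 0
        for _ in range(MAX_STEPS):
            probs = softmax(theta[s])
            a = rng.choices([LEFT, RIGHT], weights=probs, k=1)[0]
            s_next, r, done = step(s, a)
            # TD error: bootstrap from V(s'), or from 0 if s' is terminal.
            delta = r + (0.0 if done else gamma * v[s_next]) - v[s]
            # Actor: score function weighted by delta (the advantage estimate).
            for i in range(2):
                theta[s][i] += lr_pi * delta * ((1.0 if i == a else 0.0) - probs[i])
            # Critic: semi-gradient step that shrinks delta^2.
            v[s] += lr_v * delta
            if done:
                break
            s = s_next
    return theta, v

theta, v = train_actor_critic()
```

No episode has to finish before learning starts. Each transition updates both networks immediately, which is the practical payoff of bootstrapping from the critic.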

How Big a Deal Is the Variance Reduction?

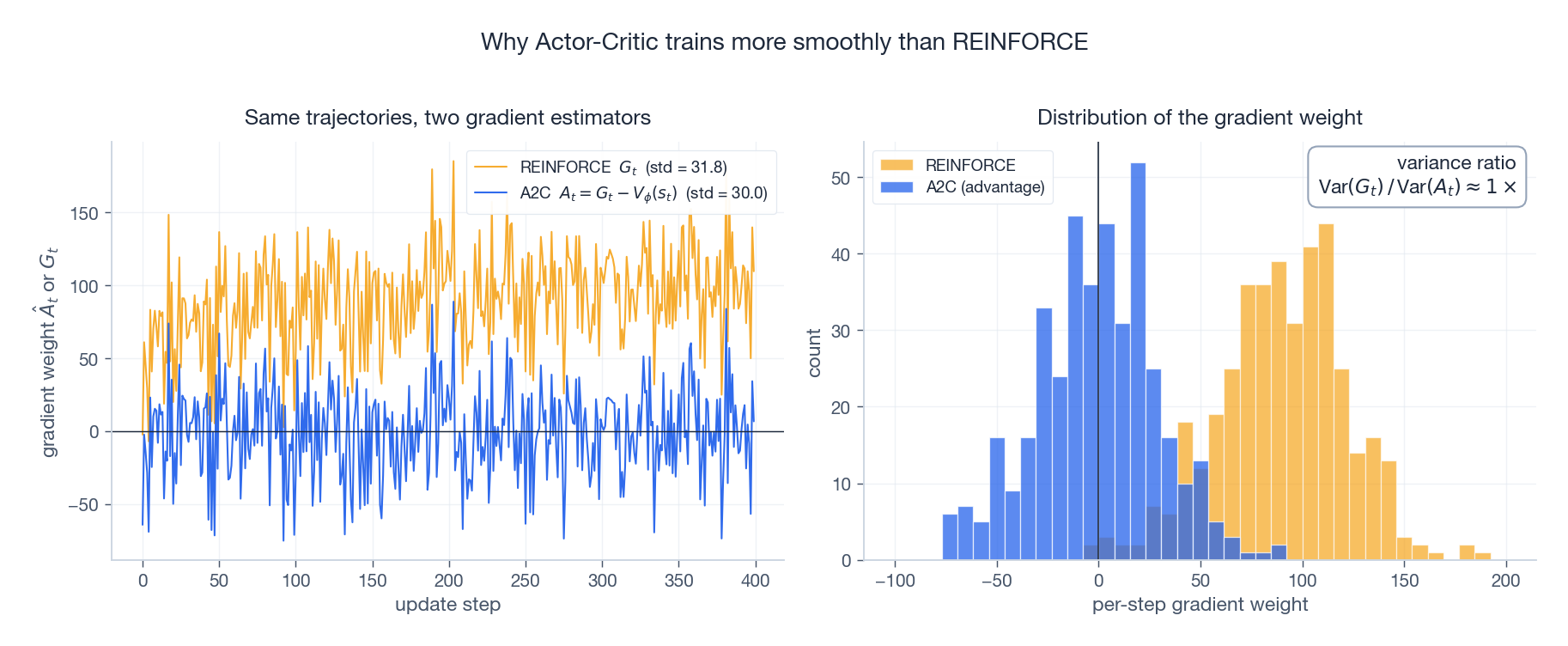

A simulated comparison on the same set of trajectories: the orange line uses raw Monte Carlo returns, the blue line uses the advantage produced by a learned baseline.

Same trajectories, same expected gradient. The right panel shows the practical payoff: variance shrinks by an order of magnitude. This is a large part of why A2C/PPO training curves look so much smoother than those of REINFORCE.
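You can reproduce the effect with a self-contained experiment. This is not the simulation behind the figure. It is a hypothetical two-armed bandit whose rewards share a large common offset (about 100 versus 101). To isolate the effect, the baseline is set to the exact $V = 100.5$ rather than learned. Because both runs use the same seed, they see identical (action, reward) samples and differ only in the baseline.

```python
import math
import random
import statistics

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def gradient_samples(n, baseline, seed=0):
    """Per-sample estimates of dJ/dtheta_0 for a hypothetical 2-armed bandit.

    The policy is uniform (theta = (0, 0)); arm 1 is slightly better on average.
    """
    rng = random.Random(seed)
    probs = softmax([0.0, 0.0])
    mean_reward = [100.0, 101.0]
    samples = []
    for _ in range(n):
        a = rng.choices([0, 1], weights=probs, k=1)[0]
        r = rng.gauss(mean_reward[a], 1.0)
        score_0 = (1.0 if a == 0 else 0.0) - probs[0]   # d log pi(a) / d theta_0
        samples.append(score_0 * (r - baseline))
    return samples

raw = gradient_samples(20_000, baseline=0.0)
centred = gradient_samples(20_000, baseline=100.5)   # b = V = expected reward under pi
print(statistics.fmean(raw), statistics.pvariance(raw))
print(statistics.fmean(centred), statistics.pvariance(centred))
```

Both estimators target the same true gradient, $\pi(0)\,(100 - 100.5) = -0.25$. Without the baseline, each sample is roughly $\pm 50$. With it, each sample is a fraction of a unit, so the variance drops by several orders of magnitude in this deliberately extreme setup.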

A2C in Code

A3C (Mnih et al., 2016) parallelised this across asynchronous workers. Modern practice prefers the synchronous version A2C: collect rollouts from $N$ environments in lockstep, then take one combined gradient step. Same idea, much friendlier to GPUs.
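The "collect in lockstep, then take one step" structure of A2C can be sketched as follows. A rollout of $T$ steps from $N$ environments is turned into bootstrapped return targets and advantages. Plain nested lists stand in for tensors, and the helper names are hypothetical.

```python
def n_step_targets(rewards, dones, last_values, gamma):
    """Bootstrapped return targets for a synchronous A2C rollout.

    rewards[t][e], dones[t][e]: step t of environment e (T steps x N envs);
    dones[t][e] = 1.0 if that step ended the episode.
    last_values[e]: critic estimate V(s_T) for each env after the rollout.
    """
    T, N = len(rewards), len(rewards[0])
    targets = [[0.0] * N for _ in range(T)]
    running = list(last_values)
    for t in reversed(range(T)):
        for e in range(N):
            # A finished episode must not bootstrap from the next episode's value.
            running[e] = rewards[t][e] + gamma * running[e] * (1.0 - dones[t][e])
            targets[t][e] = running[e]
    return targets

def advantages(targets, values):
    """A_t = target_t - V(s_t), elementwise over the T x N rollout."""
    return [[g - v for g, v in zip(g_row, v_row)] for g_row, v_row in zip(targets, values)]

targets = n_step_targets(rewards=[[1.0, 1.0], [1.0, 1.0]],
                         dones=[[0.0, 0.0], [0.0, 1.0]],
                         last_values=[10.0, 10.0], gamma=0.5)
print(targets)  # [[4.0, 1.5], [6.0, 1.0]]
```

From here, the actor loss is the mean of $-\log\pi_\theta(a_t|s_t)\,A_t$ over all $T \times N$ entries, and the critic regresses $V_\phi(s_t)$ onto the targets. Both are handled in one combined gradient step.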

GAE: A Dial Between TD and Monte Carlo

GAE (Schulman et al., 2016) replaces the single TD error with an exponentially weighted sum of future TD errors:

$$ \hat A_t^{\text{GAE}(\lambda)} \;=\; \sum_{k=0}^{\infty} (\gamma\lambda)^k\,\delta_{t+k}, \quad \delta_t = r_t + \gamma V_\phi(s_{t+1}) - V_\phi(s_t). $$

$\lambda = 0$ recovers the one-step TD advantage; $\lambda = 1$ recovers the full Monte Carlo return minus the baseline. Practitioners almost always pick something in $[0.9, 0.97]$.

Larger $\lambda$ spreads credit across many future TD errors, which reduces bias from an imperfect critic at the cost of more variance. Smaller $\lambda$ trusts mostly the immediate TD error, giving lower variance but more bias. PPO’s common defaults ($\lambda = 0.95$, $\gamma = 0.99$) sit near the sweet spot.
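In practice the infinite sum is computed backwards with the recursion $\hat A_t = \delta_t + \gamma\lambda\,\hat A_{t+1}$, cut at episode boundaries. A single-environment sketch follows; the function name and argument layout are assumptions.

```python
def gae(rewards, values, dones, last_value, gamma=0.99, lam=0.95):
    """Generalized Advantage Estimation for one rollout from a single environment.

    values[t] = V(s_t); last_value = V(s_T) bootstraps the step after the rollout.
    dones[t] = 1.0 if s_{t+1} is terminal, which zeroes both the bootstrap and the carry.
    Uses the backward recursion A_t = delta_t + gamma * lam * A_{t+1}.
    """
    adv = [0.0] * len(rewards)
    next_value, running = last_value, 0.0
    for t in reversed(range(len(rewards))):
        mask = 1.0 - dones[t]
        delta = rewards[t] + gamma * next_value * mask - values[t]
        running = delta + gamma * lam * mask * running
        adv[t] = running
        next_value = values[t]
    return adv
```

As a check, take rewards `[1.0, 0.0, 2.0]`, values `[0.5, 0.2, 1.0]`, an episode that terminates after the last step, and $\gamma = 0.9$. With `lam=0.0`, the result is just the TD errors, approximately `[0.68, 0.7, 1.0]`. With `lam=1.0`, it equals the Monte Carlo returns `[2.62, 1.8, 2.0]` minus the values, approximately `[2.12, 1.6, 1.0]`.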

Continuous Control: DDPG and TD3

For continuous actions such as joint torques, the policy is naturally Gaussian: $a \sim \mathcal{N}(\mu_\theta(s),\,\sigma_\theta(s))$. But sampling injects noise that hurts precise control. Deterministic policies $a = \mu_\theta(s)$ avoid that noise and admit a particularly clean gradient.

DDPG: Deep Deterministic Policy Gradient

DDPG (Lillicrap et al., 2016) couples DQN-style stability tricks with an Actor-Critic structure:

- Replay buffer + target networks (inherited from DQN).

- The deterministic policy gradient (Silver et al., 2014): $\nabla_\theta J \;=\; \mathbb{E}_{s \sim \rho^\beta}\!\Big[\,\nabla_a Q_\phi(s,a)\big|_{a=\mu_\theta(s)}\;\nabla_\theta \mu_\theta(s)\,\Big]$, where $\rho^\beta$ is the state distribution of the behaviour policy that filled the replay buffer. Read it as the chain rule: $\nabla_a Q_\phi$ says which way to nudge the action to raise $Q$, and $\nabla_\theta \mu_\theta$ converts that action-space direction into a parameter update.

Exploration is added externally as action noise: $a_t = \mu_\theta(s_t) + \mathcal{N}(0,\sigma)$.
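To see the chain rule at work without a neural network, here is a sketch with a hypothetical fixed critic $Q(s,a) = -(a - 2s)^2$, whose best action is $a^* = 2s$, and a one-parameter linear actor $\mu_\theta(s) = \theta s$. Real DDPG learns $Q_\phi$ from the replay buffer and differentiates through it with autograd. Here both derivatives are written by hand, and all names are illustrative.

```python
import random

def q_grad_action(s, a):
    """dQ/da for a hypothetical fixed critic Q(s, a) = -(a - 2s)^2."""
    return -2.0 * (a - 2.0 * s)

def dpg_step(theta, states, lr):
    """One deterministic-policy-gradient ascent step for the linear actor mu(s) = theta * s."""
    grad = 0.0
    for s in states:
        a = theta * s                                  # a = mu_theta(s)
        dmu_dtheta = s                                 # d mu / d theta
        grad += q_grad_action(s, a) * dmu_dtheta       # chain rule: dQ/da * dmu/dtheta
    return theta + lr * grad / len(states)

rng = random.Random(0)
states = [rng.uniform(-1.0, 1.0) for _ in range(64)]   # stand-in for a replay-buffer batch
theta = 0.0
for _ in range(200):
    theta = dpg_step(theta, states, lr=0.5)
print(theta)  # converges to the critic's optimum, theta = 2
```

Notice that no action is ever sampled inside the update. The gradient flows through $Q$ and directly into the actor's parameters.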

TD3: Three Tricks That Stabilise DDPG

DDPG inherits DQN’s overestimation bias and is famously brittle. TD3 (Fujimoto et al., 2018) fixes it with three independent ideas, each useful on its own:

- Clipped double Q-learning. Train two critics and use the minimum of their target predictions: $y = r + \gamma \min_{i=1,2} Q_{\phi_i'}(s', \tilde a')$.

- Delayed policy updates. Update the actor only every $d$ critic updates (typically $d=2$). This lets the critic settle before the actor shifts its targets again.

- Target policy smoothing. Add clipped noise to the target action: $\tilde a' = \mu_{\theta'}(s') + \mathrm{clip}(\epsilon, -c, c)$, $\epsilon \sim \mathcal{N}(0, \sigma)$. This forces the critic to be smooth in $a$, so the actor cannot exploit narrow Q-spikes.
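Here is a minimal sketch of how the three tricks enter a TD3 update, for a scalar action. The networks are replaced by plain callables. `td3_target`, `is_actor_step`, and their arguments are hypothetical names. The defaults `sigma=0.2` and `noise_clip=0.5` are values commonly used with TD3 but should be treated as assumptions here. The smoothed target action is also clipped to the action bounds.

```python
import random

def td3_target(r, done, s_next, actor_targ, q1_targ, q2_targ, rng,
               gamma=0.99, sigma=0.2, noise_clip=0.5, a_low=-1.0, a_high=1.0):
    """Critic target y for one transition with a scalar action (hypothetical helper).

    actor_targ(s) and q1_targ/q2_targ(s, a) stand in for the target networks.
    """
    # Target policy smoothing: clipped Gaussian noise, then clip to the action bounds.
    eps = max(-noise_clip, min(noise_clip, rng.gauss(0.0, sigma)))
    a_next = max(a_low, min(a_high, actor_targ(s_next) + eps))
    # Clipped double Q-learning: trust the more pessimistic of the two target critics.
    q_next = min(q1_targ(s_next, a_next), q2_targ(s_next, a_next))
    return r + gamma * (1.0 - done) * q_next

def is_actor_step(critic_step, policy_delay=2):
    """Delayed policy updates: actor and target nets update once every policy_delay critic steps."""
    return critic_step % policy_delay == 0

rng = random.Random(0)
y = td3_target(r=1.0, done=0.0, s_next=0.0, actor_targ=lambda s: 0.0,
               q1_targ=lambda s, a: 5.0, q2_targ=lambda s, a: 3.0, rng=rng, gamma=0.9)
```

Here the two target critics disagree (5.0 versus 3.0), and the target uses the smaller value, giving $y = 1 + 0.9 \times 3 = 3.7$.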


These three changes turn DDPG from “sometimes works after careful tuning” into a competitive, reproducible baseline.

SAC: Maximum Entropy RL

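SAC (Haarnoja et al., 2018) adds an entropy bonus to the return:

$$J(\pi) \;=\; \mathbb{E}\!\Big[\sum_t \gamma^t\big(r_t + \alpha\,\mathcal H[\pi(\cdot|s_t)]\big)\Big].$$

The entropy term $\mathcal H[\pi]$ pays the agent for keeping its action distribution spread out, and the temperature $\alpha$ sets the exchange rate between reward and entropy. The Bellman backups are modified to match: the “soft” Q-target subtracts $\alpha \log \pi(a'|s')$ from the next-state value, with $a'$ sampled from the current policy.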
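Here is a sketch of the two SAC-specific pieces for a single transition: the soft Q-target and one temperature update. It assumes the next action $a'$ has already been sampled from the current policy and its log-probability computed. The helper names are hypothetical. Optimising $\log\alpha$ rather than $\alpha$, which keeps $\alpha$ positive, is a common implementation choice and an assumption here.

```python
def soft_q_target(r, done, q1_next, q2_next, logp_next, alpha, gamma=0.99):
    """Soft Bellman target: r + gamma * (min(Q1', Q2') - alpha * log pi(a'|s')).

    q1_next, q2_next: target critics evaluated at (s', a') with a' ~ pi(.|s').
    logp_next: log pi(a'|s'); subtracting alpha * logp adds the entropy bonus.
    """
    soft_value = min(q1_next, q2_next) - alpha * logp_next
    return r + gamma * (1.0 - done) * soft_value

def update_log_alpha(log_alpha, logps, target_entropy, lr=3e-4):
    """One gradient-descent step on J(log_alpha) = -mean(log_alpha * (logp + target_entropy)).

    If the policy's entropy estimate (-mean logp) falls below target_entropy, alpha grows;
    if it rises above, alpha shrinks. A common heuristic sets target_entropy = -action_dim.
    """
    grad = -sum(lp + target_entropy for lp in logps) / len(logps)
    return log_alpha - lr * grad
```

For example, suppose $Q_1' = 5$, $Q_2' = 4$, $\log\pi(a'|s') = -1$, $\alpha = 0.2$, $\gamma = 0.9$, and $r = 1$. The target is then $1 + 0.9\,(4 + 0.2) = 4.78$. The entropy bonus makes uncertain next actions worth slightly more.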

Three engineering details make SAC the workhorse it is:

- Automatic temperature tuning. $\alpha$ is itself learned by gradient descent against a target-entropy constraint, so you do not have to guess.

- Stochastic squashed-Gaussian policy. The actor outputs $(\mu, \log\sigma)$ ; samples are passed through $\tanh$ and the log-prob is corrected by the change-of-variables Jacobian.

- Twin critics with clipped double-Q, off-policy replay, and soft target updates — the familiar TD3 stack.
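The change-of-variables correction for the squashed Gaussian is easy to get wrong, so here is a 1-D sketch; the function names are hypothetical. With $a = \tanh(u)$ and $u \sim \mathcal{N}(\mu, \sigma)$, we have $\log\pi(a) = \log \mathcal{N}(u;\mu,\sigma) - \log(1 - \tanh^2 u)$. The Jacobian term is computed in a numerically stable form. For multi-dimensional actions, implementations sum both terms over action dimensions.

```python
import math
import random

def softplus(x):
    """log(1 + e^x), computed without overflow for large |x|."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def log_tanh_jacobian(u):
    """log(1 - tanh(u)^2), rewritten as 2 * (log 2 - u - softplus(-2u)) for numerical stability."""
    return 2.0 * (math.log(2.0) - u - softplus(-2.0 * u))

def squashed_log_prob(u, mu, log_std):
    """log pi(a) for a = tanh(u), u ~ N(mu, sigma^2): Gaussian log-density minus the Jacobian term."""
    std = math.exp(log_std)
    log_normal = -0.5 * ((u - mu) / std) ** 2 - log_std - 0.5 * math.log(2.0 * math.pi)
    return log_normal - log_tanh_jacobian(u)

def sample_squashed(mu, log_std, rng):
    """Reparameterised sample u = mu + sigma * eps, squashed to a = tanh(u) in (-1, 1)."""
    u = mu + math.exp(log_std) * rng.gauss(0.0, 1.0)
    return math.tanh(u), squashed_log_prob(u, mu, log_std)

rng = random.Random(0)
action, log_prob = sample_squashed(mu=0.3, log_std=math.log(0.5), rng=rng)
```

A quick sanity check is that $\exp(\log\pi(a))$ integrates to 1 over $(-1, 1)$. Without the Jacobian term it would not, and SAC's entropy estimates would be systematically off.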

In practice, SAC matches or beats TD3 on MuJoCo benchmarks while being noticeably less sensitive to hyperparameters. For continuous control on real hardware, SAC is the default starting point in many labs.

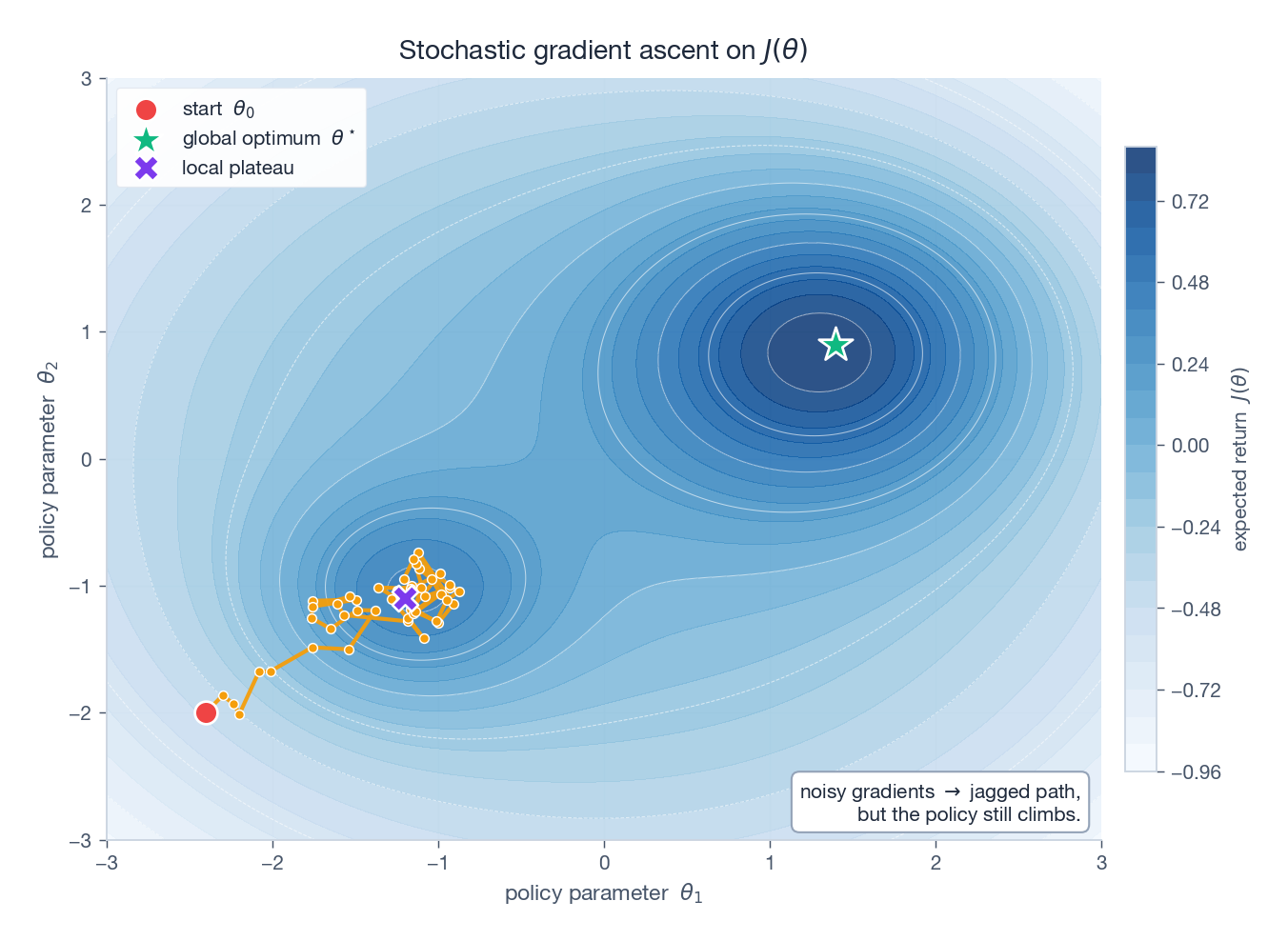

Why Does This Even Work? Climbing a Noisy Hill in $\theta$-Space

It is worth zooming out. Every algorithm in this article is a special case of one idea: stochastic gradient ascent on $J(\theta)$ in policy parameter space. The “stochastic” is doing a lot of work — our gradient estimates are noisy, sometimes wildly so, and the loss surface itself is non-convex.

A few things this picture makes obvious:

- The path is jagged, not smooth. Variance reduction (baseline, advantage, GAE) buys us a less jagged path, not a different destination.

- Local plateaus exist. Entropy regularisation (SAC) and stochastic policies are partly defences against getting stuck on them.

- The data we estimate gradients from changes as $\theta$ changes, because it is collected by the current policy. This is why off-policy methods (DDPG, TD3, SAC) learn from replayed data through a Q-function critic rather than from raw returns, and why PPO (Part 6) clips the size of each policy update.

Algorithm Selection Guide

| Situation | Recommended | Why |
|---|---|---|
| Discrete actions, fast prototyping | PPO | Stable, simple, well-supported by every library |
| Continuous actions, expensive samples | SAC or TD3 | Off-policy, high sample efficiency |
| Continuous actions, abundant simulation | PPO | Smoother training curves, easy to scale across environments |
| Need a stochastic policy by design | SAC | Maximum-entropy framework gives you one for free |
| Sparse rewards | SAC | Entropy keeps the policy exploring long enough to find them |
| Learning the field, building intuition | REINFORCE -> A2C -> PPO | Each step adds exactly one new idea on top of the last |

What major labs actually use, as of 2026:

- OpenAI (Dota 2, ChatGPT RLHF): PPO

- DeepMind (continuous control research): SAC and variants

- Berkeley robotics: SAC for real-world manipulation

- TD3 remains the standard reference baseline in continuous-control benchmarks

Summary

Policy-gradient methods opened RL to the world beyond discrete actions:

- REINFORCE showed that policies can be optimised directly via gradient ascent on expected return — conceptually clean, practically noisy.

- Actor-Critic + advantage traded a touch of bias for an order-of-magnitude variance reduction, making training tractable.

- GAE($\lambda$) turned the bias-variance choice into a single tunable knob.

- DDPG / TD3 brought off-policy efficiency to continuous control with deterministic policies and DQN-style stabilisation.

- SAC added entropy regularisation and became the go-to method for continuous control.

- PPO — the subject of Part 6 — simplified trust-region ideas into a clipped surrogate that became the industry workhorse.

All of these methods are model-free: they learn from interaction without ever building an explicit model of the environment. That generality is their strength; the millions of samples they consume are their weakness.

Next up: Part 4 tackles the exploration problem — how do agents discover rewards in the first place when the environment provides almost no feedback?

References

- Williams, R. J. (1992). Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine Learning, 8(3-4), 229-256.

- Sutton, R. S., McAllester, D., Singh, S., & Mansour, Y. (2000). Policy gradient methods for reinforcement learning with function approximation. NeurIPS.

- Silver, D., Lever, G., Heess, N., Degris, T., Wierstra, D., & Riedmiller, M. (2014). Deterministic policy gradient algorithms. ICML.

- Mnih, V., et al. (2016). Asynchronous methods for deep reinforcement learning. ICML.

- Lillicrap, T. P., et al. (2016). Continuous control with deep reinforcement learning. ICLR.

- Schulman, J., Moritz, P., Levine, S., Jordan, M., & Abbeel, P. (2016). High-dimensional continuous control using generalized advantage estimation. ICLR.

- Schulman, J., Wolski, F., Dhariwal, P., Radford, A., & Klimov, O. (2017). Proximal policy optimization algorithms. arXiv:1707.06347.

- Fujimoto, S., van Hoof, H., & Meger, D. (2018). Addressing function approximation error in actor-critic methods. ICML.

- Haarnoja, T., Zhou, A., Abbeel, P., & Levine, S. (2018). Soft actor-critic: off-policy maximum entropy deep reinforcement learning with a stochastic actor. ICML.
