Reinforcement Learning (7): Imitation Learning and Inverse RL

A practical, theory-grounded tour of imitation learning: behavioral cloning and its quadratic compounding error, DAgger and the no-regret reduction, MaxEnt inverse RL for recovering reward functions, and adversarial methods (GAIL, AIRL). Includes runnable PyTorch code, a method-selection ladder, and seven publication-quality figures.

Every algorithm in the previous chapters assumed access to a reward function. In practice, designing that reward is often the hardest part of an RL project. Try writing one paragraph that captures “drive like a careful human”, “fold a shirt the way a tailor would”, or “summarise this document the way an expert editor would”. You can show those behaviours far more easily than you can specify them.

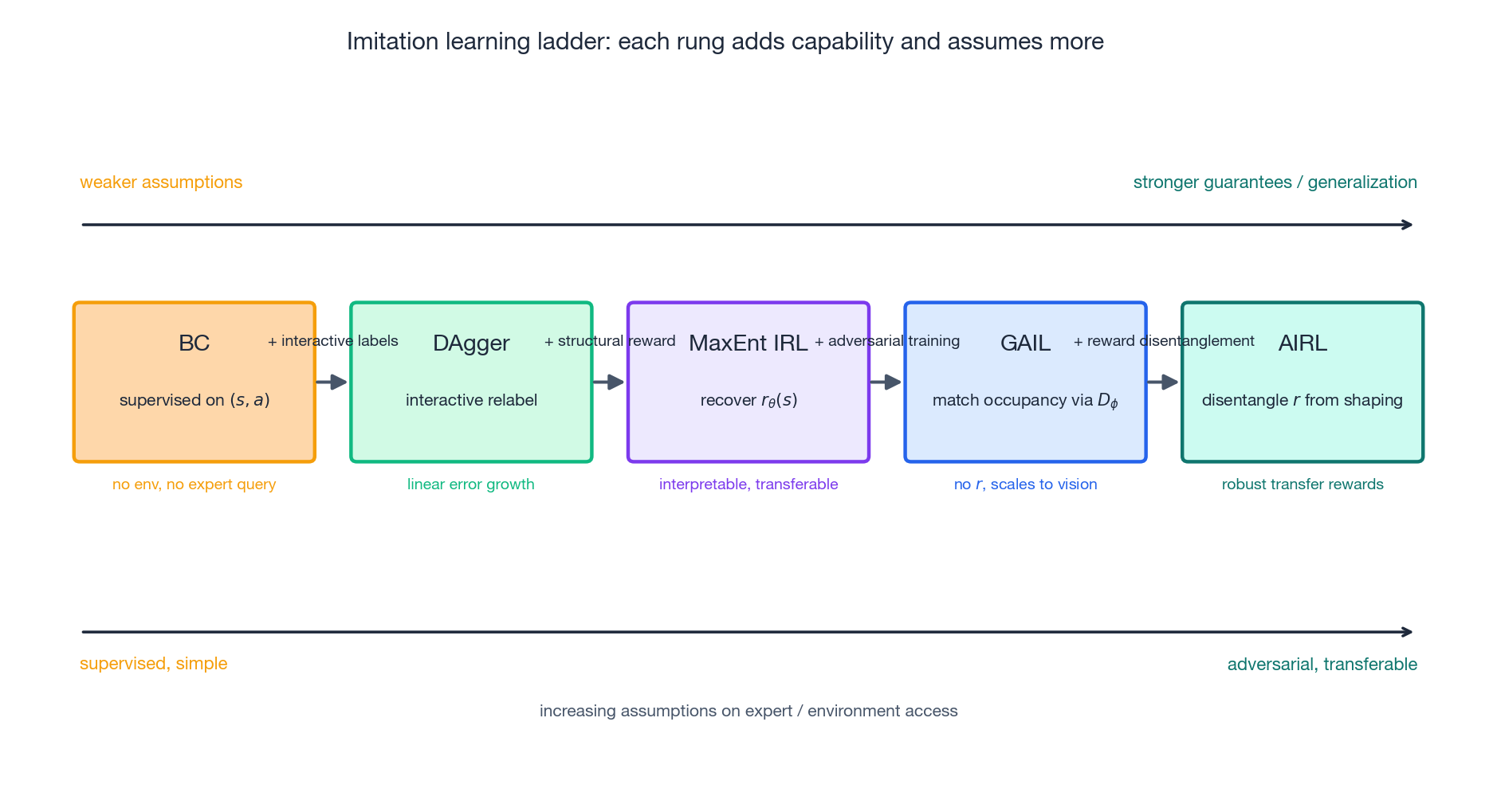

Imitation learning takes that intuition seriously: instead of optimising a hand-engineered scalar, it learns from expert demonstrations $\mathcal{D} = \{(s_t, a_t)\}$. This chapter walks through the canonical methods (behavioral cloning, DAgger, maximum-entropy IRL, GAIL, and AIRL) not as isolated tricks but as a single ladder where each rung relaxes one assumption and pays for it with new structure.

What You Will Learn#

- Behavioral cloning (BC): imitation as supervised learning, why it works on short tasks, and the precise reason it breaks on long ones.

- DAgger: how interactive relabelling turns BC’s quadratic error into a linear one, with the no-regret theorem behind it.

- Maximum-entropy IRL: recovering an interpretable reward whose optimum reproduces the demonstrations.

- GAIL and AIRL: matching expert occupancy measures end-to-end through adversarial training.

- A selection rubric: which method to reach for given expert availability, environment access, and need for transfer.

Prerequisites: policy gradients (Part 3) and PPO (Part 6). PyTorch fundamentals are assumed for the code snippets.

Problem setting#

Given a dataset of expert state-action pairs $$\mathcal{D} = \{(s_1, a_1), (s_2, a_2), \ldots, (s_N, a_N)\},$$ the goal is to learn a policy $\pi_\theta$ whose behaviour is close to that of an unknown expert policy $\pi^*$. We never observe $\pi^*$ directly, only its samples, and there is no reward signal. Sometimes we can also query the expert on new states (DAgger); often we cannot.

| Aspect | Reinforcement learning | Imitation learning |
|---|---|---|
| Signal | Reward $r(s, a)$ | Expert dataset $\mathcal{D}$ |
| Interaction | Required (must explore) | Sometimes optional (offline BC) |
| Objective | Maximise $\mathbb{E}[\sum \gamma^t r_t]$ | Match expert behaviour distribution |
| Failure mode | Reward hacking, exploration | Distribution shift, mode collapse |
| Typical use | Game-playing, robotics, RLHF | Driving, surgery, language style |

The methods we cover trade these axes against each other. The five-rung ladder (BC, DAgger, MaxEnt IRL, GAIL, AIRL) is summarised in the Method selection table near the end; the rest of the chapter unpacks each rung.

Behavioral cloning#

Behavioral cloning reduces imitation to supervised learning: fit $\pi_\theta$ to the expert's state-action pairs by minimising $$\mathcal{L}(\theta) \;=\; \mathbb{E}_{(s,a)\sim \mathcal{D}}\big[ \ell\big(\pi_\theta(s),\, a\big) \big],$$ where $\ell$ is cross-entropy for discrete actions or MSE / negative log-likelihood for continuous ones. The historical first instance is ALVINN (Pomerleau, 1989), which trained a fully connected network to steer a van from camera images.


Three implementation details matter much more than they look:

- Standardise inputs. BC is a small supervised model — unscaled features dominate the loss surface and produce overconfident actions in rare states.

- Stop early on a held-out validation set. Long training overfits the expert’s noise, which makes the next problem worse, not better.

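- Action representation. For continuous control, predicting Gaussian parameters with an NLL loss often outperforms MSE on Tanh outputs when the expert's actions are noisy. A single Gaussian still averages distinct modes, so a genuinely multimodal expert needs a richer output head (see the FAQ).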
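The loss and the three details above can be sketched in PyTorch as follows. This is a minimal illustration on synthetic data, not the chapter's original code: the dataset, the `GaussianPolicy` class, the network size, and the patience value are all assumptions, and the log-std is kept state-independent for brevity.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Synthetic stand-in for an expert dataset (assumption): 4-D states with very
# different scales, 2-D continuous actions with a little expert noise.
states = torch.randn(512, 4) * torch.tensor([1.0, 10.0, 0.1, 5.0])
actions = torch.tanh((states @ torch.randn(4, 2)) * 0.1) + 0.05 * torch.randn(512, 2)
train_s, val_s = states[:400], states[400:]
train_a, val_a = actions[:400], actions[400:]

# Detail 1: standardise inputs with training-set statistics only.
mean, std = train_s.mean(0), train_s.std(0) + 1e-8

def normalise(s):
    return (s - mean) / std

class GaussianPolicy(nn.Module):
    """Hypothetical policy head: state-dependent mean, learned global log-std."""
    def __init__(self, s_dim=4, a_dim=2, hidden=64):
        super().__init__()
        self.body = nn.Sequential(nn.Linear(s_dim, hidden), nn.ReLU(),
                                  nn.Linear(hidden, a_dim))
        self.log_std = nn.Parameter(torch.zeros(a_dim))

    def nll(self, s, a):
        # Detail 3: negative log-likelihood instead of plain MSE.
        dist = torch.distributions.Normal(self.body(s), self.log_std.exp())
        return -dist.log_prob(a).sum(-1).mean()

policy = GaussianPolicy()
opt = torch.optim.Adam(policy.parameters(), lr=1e-3)

# Detail 2: early stopping on held-out validation NLL.
best_val, best_state, patience, bad_epochs = float("inf"), None, 10, 0
for epoch in range(200):
    opt.zero_grad()
    loss = policy.nll(normalise(train_s), train_a)
    loss.backward()
    opt.step()
    with torch.no_grad():
        val = policy.nll(normalise(val_s), val_a).item()
    if val < best_val - 1e-4:
        best_val, bad_epochs = val, 0
        best_state = {k: v.clone() for k, v in policy.state_dict().items()}
    else:
        bad_epochs += 1
        if bad_epochs >= patience:
            break

policy.load_state_dict(best_state)  # restore the best validation checkpoint
```

At deployment the same `normalise` function must be applied to every observation before it reaches the policy; forgetting this is a common source of silent failures.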

Why BC fails on long horizons#

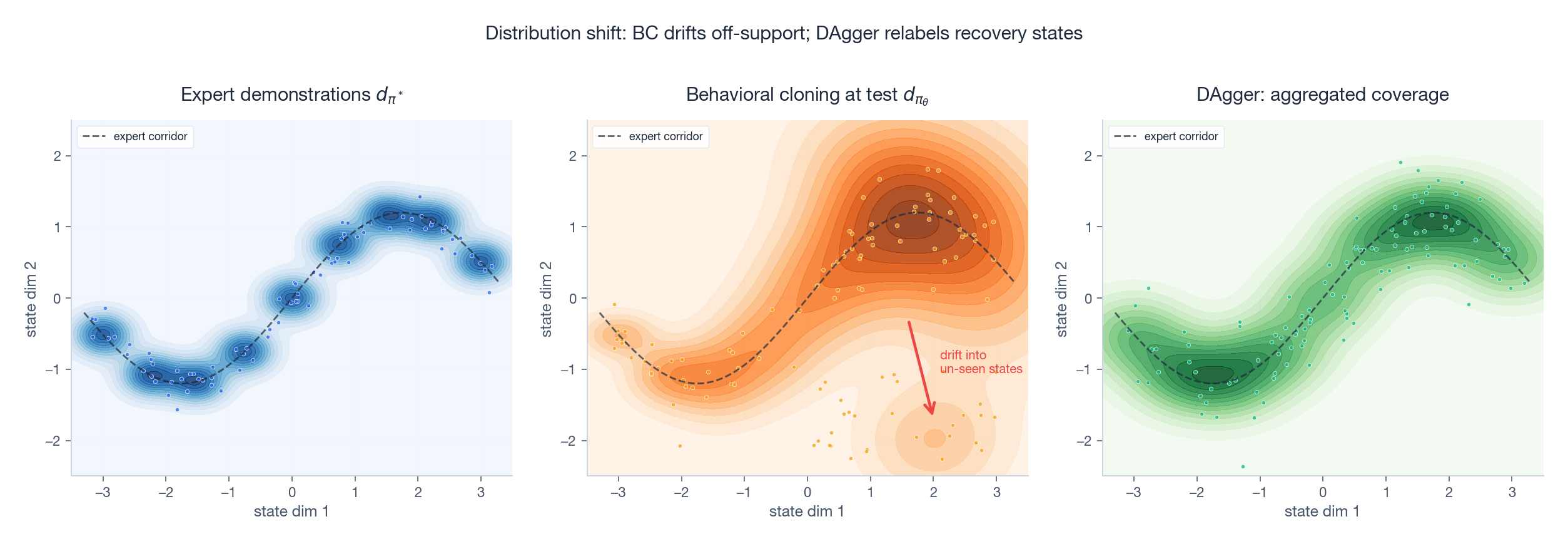

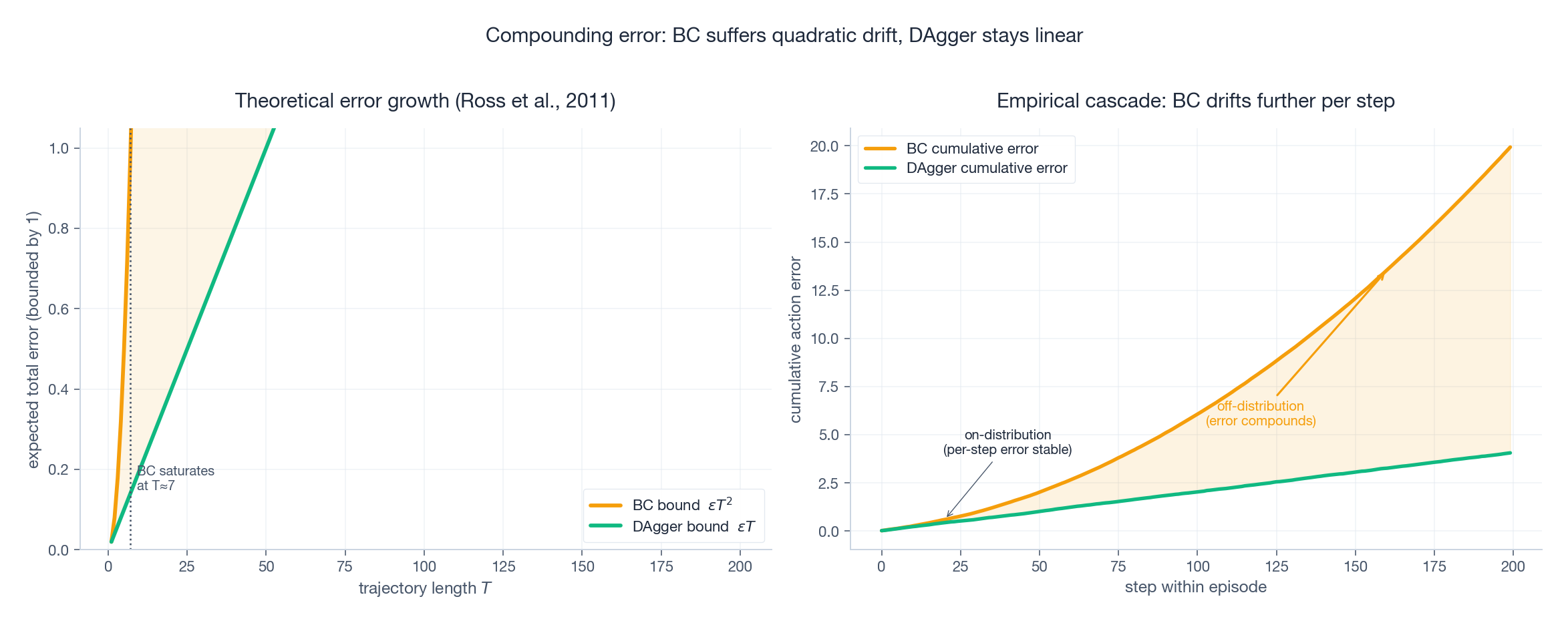

BC is trained under the expert’s state distribution $d_{\pi^*}$ but, at deployment, it visits states drawn from its own distribution $d_{\pi_\theta}$ . Because the policy is imperfect, those distributions diverge with every step.

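The resulting performance gap is quadratic in horizon (Ross & Bagnell, 2010): if the learned policy disagrees with the expert with probability at most $\varepsilon$ per step under $d_{\pi^*}$, its expected $T$-step cost satisfies $$J(\pi_\theta) \;\le\; J(\pi^*) + T^2 \varepsilon.$$ Even a 99% accurate policy struggles on a 200-step task: the chance of completing all 200 steps without a single mistake is only $0.99^{200} \approx 0.13$, and after the first mistake the policy lands in states the expert never visited, where it has no useful training signal.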
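A back-of-the-envelope check of the compounding argument, under a deliberately crude assumption: mistakes are independent with probability `eps` per step, and once the policy has made its first mistake it never recovers, paying unit cost on every remaining step. The function names are illustrative only.

```python
def survival_probability(eps, horizon):
    """Probability of making no mistake in `horizon` independent steps."""
    return (1.0 - eps) ** horizon

def expected_cost_no_recovery(eps, horizon):
    """Expected number of off-expert steps when the first mistake is never undone.

    The first mistake happens at step t with probability (1 - eps)^(t-1) * eps,
    and every step from t to the end of the episode then costs 1.
    """
    return sum((1.0 - eps) ** (t - 1) * eps * (horizon - t + 1)
               for t in range(1, horizon + 1))

eps = 0.01
for T in (10, 50, 200):
    print(f"T={T:3d}  P(no mistake)={survival_probability(eps, T):.3f}  "
          f"E[cost]={expected_cost_no_recovery(eps, T):.2f}  "
          f"T^2*eps={eps * T * T:.1f}")
```

For short horizons the expected cost grows roughly like $T^2\varepsilon/2$, and it always stays below the $T^2\varepsilon$ bound; for long horizons it saturates only because the episode cannot cost more than $T$.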

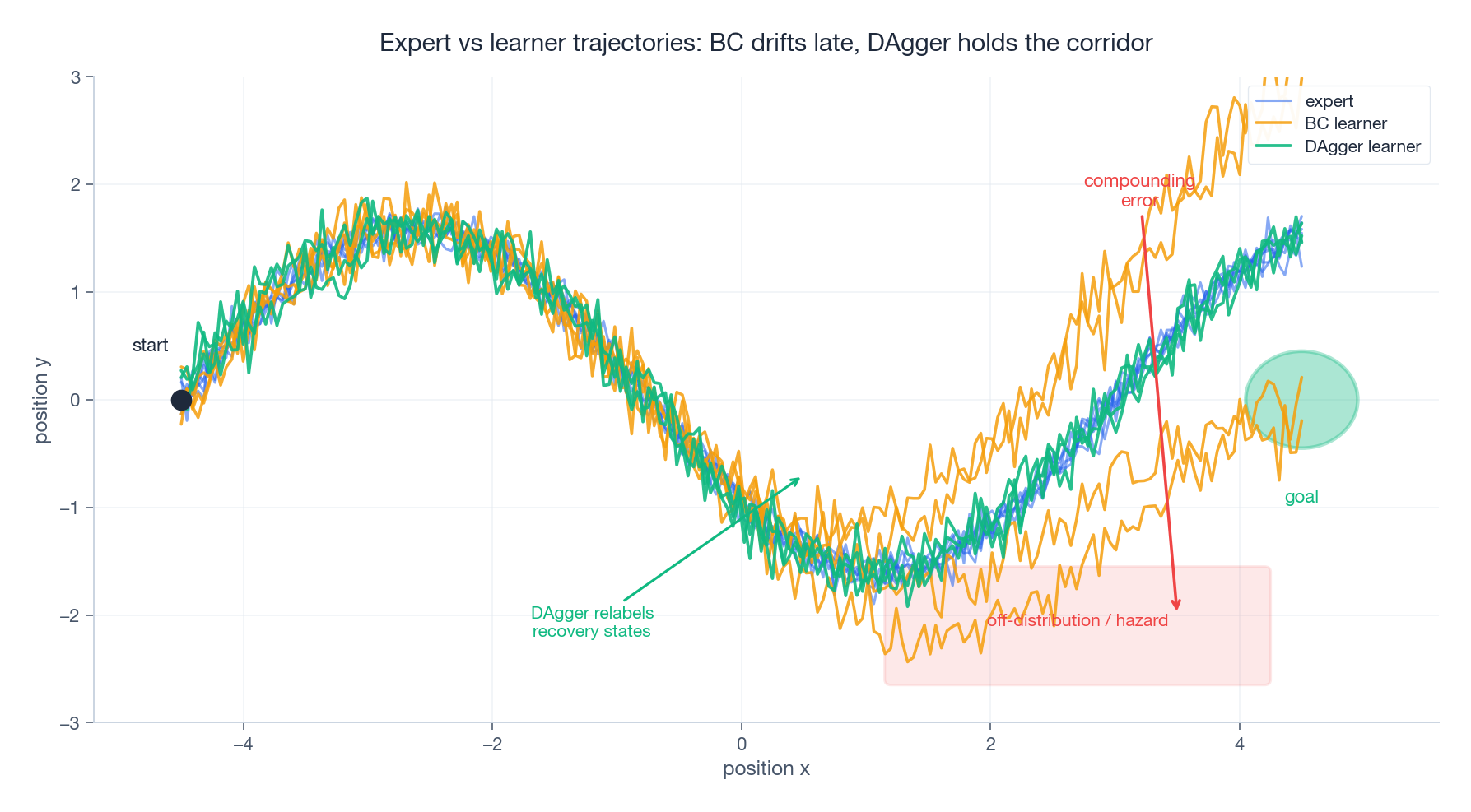

You can see the cascade clearly when you roll the trained policy forward:

Early in the episode the BC learner stays on the expert corridor. Around the midpoint a small error appears, the policy enters off-distribution states, and from there it drifts steadily into the hazard zone. The same compounding effect appears in the per-step error curve below.

DAgger: dataset aggregation#

DAgger (Ross, Gordon & Bagnell, 2011) breaks the cascade by collecting expert labels on the states the learner actually visits. Each iteration adds a new slice of $(s, a^*)$ pairs sampled from $d_{\pi_\theta}$ and retrains on the aggregated dataset.

Algorithm.

- Train $\pi_1$ on the initial expert demonstrations.

- For $i = 1, \ldots, N$:

  - Roll out a mixture policy that follows the expert with probability $\beta_i$ and $\pi_i$ otherwise, under a schedule $\beta_i \to 0$.

  - Query the expert for the correct action $a^*$ at every visited state.

  - Aggregate: $\mathcal{D} \leftarrow \mathcal{D} \cup \{(s, a^*)\}$.

  - Retrain $\pi_{i+1}$ on all of $\mathcal{D}$.
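The loop can be sketched on a toy problem using only the standard library. Everything here is an assumption made for illustration: a 1-D "lane offset" state, an expert that steers back to the centre, random lateral pushes as dynamics, a tabular majority-vote learner, and the schedule $\beta_i = 0.5^i$.

```python
import random

def expert(s):
    # Hypothetical queryable expert: steer back toward the lane centre s = 0.
    return -1 if s > 0 else (1 if s < 0 else 0)

def step(s, a, rng):
    # Toy dynamics: action plus a random lateral push, clipped to [-5, 5].
    return max(-5, min(5, s + a + rng.choice([-1, 0, 1])))

def fit(dataset):
    # Supervised learning for a tabular policy: majority label per state.
    votes = {}
    for s, a in dataset:
        counts = votes.setdefault(s, {})
        counts[a] = counts.get(a, 0) + 1
    return {s: max(counts, key=counts.get) for s, counts in votes.items()}

def act(policy, s):
    return policy.get(s, 0)  # unseen state: the learner does nothing

def dagger(n_iters=5, horizon=30, seed=0):
    rng = random.Random(seed)
    # Initial demonstrations: the expert drives, we record (s, a*).
    dataset, s = [], 0
    for _ in range(horizon):
        a = expert(s)
        dataset.append((s, a))
        s = step(s, a, rng)
    policy = fit(dataset)

    for i in range(1, n_iters + 1):
        beta = 0.5 ** i                      # probability of letting the expert act
        s = 0
        for _ in range(horizon):
            dataset.append((s, expert(s)))   # relabel the state actually visited
            a = expert(s) if rng.random() < beta else act(policy, s)
            s = step(s, a, rng)
        policy = fit(dataset)                # retrain on the aggregated dataset
    return policy, dataset

policy, dataset = dagger()
print(f"{len(dataset)} labelled pairs covering states {sorted(policy)}")
```

The important line is the `dataset.append((s, expert(s)))` inside the rollout: the state comes from the learner's own trajectory, while the label always comes from the expert.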

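Under the no-regret analysis of Ross, Gordon & Bagnell (2011), the best policy in the sequence satisfies $$J(\hat\pi) \;\le\; J(\pi^*) + u\,T\,\varepsilon_N + O(1),$$ where $\varepsilon_N$ is the best achievable per-step imitation loss on the aggregated data and $u$ bounds the extra cost-to-go caused by a single deviation from the expert, i.e. the gap is linear in horizon. The right way to read this is: every additional iteration supplies recovery information that BC never had.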


When DAgger applies. DAgger needs an expert that can be queried at run-time. That is realistic when:

- the expert is an algorithmic planner (e.g. an MPC controller, a search-based oracle);

- the expert is a more capable model you want to distil (e.g. teacher-student RL, model-based oracle, language-model self-distillation);

- a human is in the loop and willing to label batches.

It does not apply when the only demonstrations are a static log — e.g. a recorded driving dataset. For that regime, jump to GAIL.

Inverse reinforcement learning#

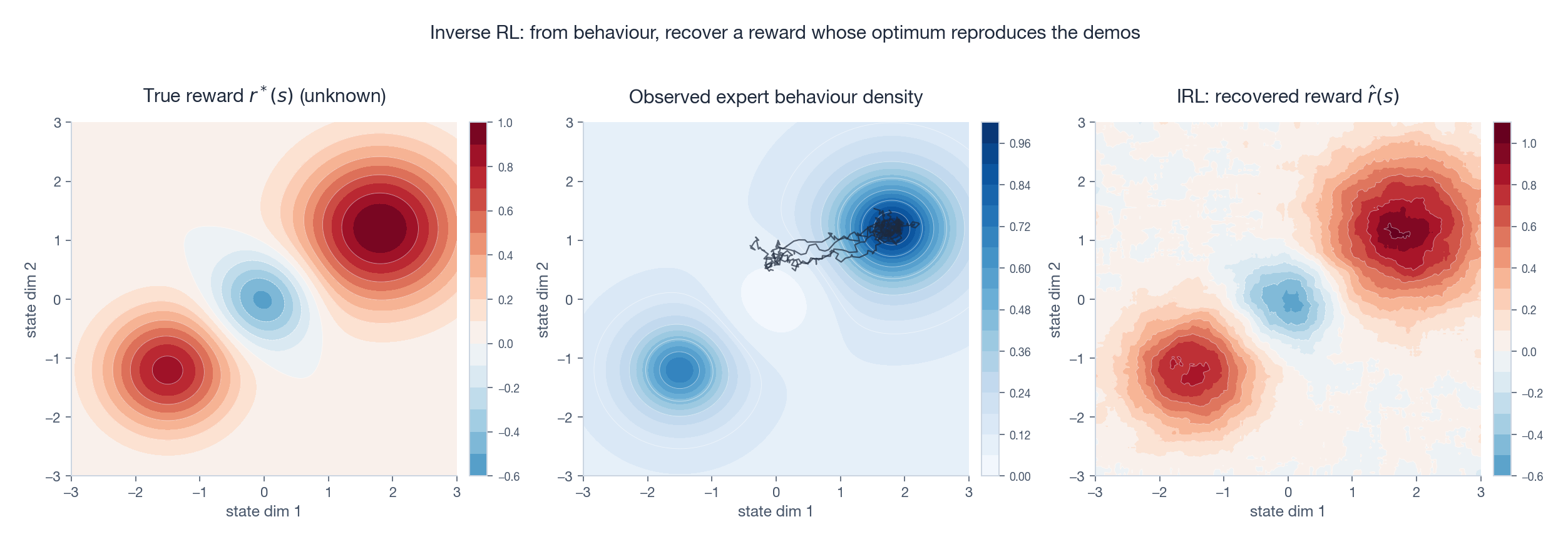

BC and DAgger both imitate the action. IRL asks a deeper question: why is that action good? It posits that the expert is (approximately) optimising some unknown reward $r^*$ , recovers a candidate $\hat r$ from the demonstrations, and then runs standard RL under $\hat r$ .

Why bother going through reward? Two reasons:

- Interpretability. $\hat r$ tells you what the expert cares about, which is auditable and editable.

- Transfer. A reward function survives changes to dynamics, embodiment, and start-state distribution that would break a behaviour-level model.

Maximum-entropy IRL#

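MaxEnt IRL (Ziebart et al., 2008) chooses among the many rewards consistent with the demonstrations by modelling the expert as sampling trajectories with probability exponential in their cumulative reward: $$p_\theta(\tau) \;\propto\; \exp\!\left( \sum_t r_\theta(s_t, a_t) \right).$$ Maximising the log-likelihood $\mathcal{L}(\theta)$ of the demonstrations under this model gives the gradient $$\nabla_\theta \mathcal{L}(\theta) \;=\; \mathbb{E}_{\tau \sim \pi^*}\!\left[\nabla_\theta r_\theta(\tau)\right] \;-\; \mathbb{E}_{\tau \sim \pi_\theta}\!\left[\nabla_\theta r_\theta(\tau)\right],$$ where $r_\theta(\tau) = \sum_t r_\theta(s_t, a_t)$ and $\pi_\theta$ denotes the (soft-)optimal policy under the current reward $r_\theta$. Read this as a contrastive update: push reward up where the expert goes, push it down where the current policy goes. At convergence, the two expectations match and the policy reproduces the expert occupancy.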

The cost is the second expectation. Computing $\mathbb{E}_{\pi_\theta}$ requires solving an RL problem (or doing trajectory sampling) at every reward update — a nested loop that limited classical IRL to small grid worlds. Guided cost learning (Finn, Levine & Abbeel, 2016) replaces the inner RL solve with sampled importance-weighted trajectories, scaling MaxEnt IRL to continuous control.
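The contrastive gradient is easiest to see on a problem small enough that every trajectory can be enumerated, so the second expectation is exact rather than sampled. The sketch below assumes a linear reward $r_\theta(\tau) = \theta^\top f(\tau)$ (so $\nabla_\theta r_\theta(\tau) = f(\tau)$) and a hypothetical route-choice task with three trajectories; the feature names, demonstration counts, step size, and iteration count are all made up for illustration.

```python
import math

# Three possible trajectories, each summarised by a feature vector
# f(tau) = (uses_highway, uses_side_street). Hypothetical example.
features = [(1.0, 0.0), (0.0, 1.0), (0.0, 0.0)]
# Ten expert demonstrations, given as indices into `features`.
demos = [0] * 6 + [1] * 3 + [2] * 1

def trajectory_probs(theta):
    """p_theta(tau) proportional to exp(theta . f(tau)) over all trajectories."""
    scores = [math.exp(sum(t * f for t, f in zip(theta, feat))) for feat in features]
    z = sum(scores)
    return [s / z for s in scores]

def expected_features(probs):
    """Feature expectation under a distribution over trajectories."""
    return [sum(p * feat[k] for p, feat in zip(probs, features)) for k in range(2)]

# First expectation: empirical feature average of the expert demonstrations.
expert_f = [sum(features[i][k] for i in demos) / len(demos) for k in range(2)]

theta = [0.0, 0.0]
for _ in range(500):
    # Second expectation: features under the current MaxEnt model.
    model_f = expected_features(trajectory_probs(theta))
    # Gradient ascent on the demonstration log-likelihood.
    theta = [t + 1.0 * (e - m) for t, e, m in zip(theta, expert_f, model_f)]

print("theta:", [round(t, 3) for t in theta])
print("model trajectory probabilities:", [round(p, 3) for p in trajectory_probs(theta)])
```

Here the learned weights approach $(\ln 6, \ln 3)$, which makes the model's trajectory probabilities match the expert's empirical frequencies of 0.6, 0.3, and 0.1. In any realistic MDP the enumeration in `trajectory_probs` is exactly the step that becomes an inner RL solve or a sampling problem.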

Reward ambiguity#

Even with the max-entropy regulariser, $\hat r$ is recovered only up to shaping invariances: adding a potential-based term $\gamma\Phi(s') - \Phi(s)$ leaves the optimal policy unchanged but changes $r$. The recovered reward is therefore a useful ranking over states, not an absolute scale. Adversarial inverse RL (AIRL, below) explicitly disentangles the shaping component.
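The shaping invariance can be verified numerically. The sketch below assumes a hypothetical three-state chain with deterministic left/right moves, a reward of 1 for arriving in the rightmost state, and an arbitrary potential `PHI`; it runs value iteration under the original and the shaped reward and compares the greedy policies.

```python
GAMMA = 0.9
N_STATES = 3
ACTIONS = (0, 1)                      # 0 = left, 1 = right

def next_state(s, a):
    # Deterministic chain; moves past either end leave the state unchanged.
    return max(0, s - 1) if a == 0 else min(N_STATES - 1, s + 1)

def reward(s, a, s2):
    return 1.0 if s2 == N_STATES - 1 else 0.0

PHI = [5.0, -3.0, 2.0]                # arbitrary potential function over states

def shaped_reward(s, a, s2):
    # Original reward plus the potential-based term gamma * Phi(s') - Phi(s).
    return reward(s, a, s2) + GAMMA * PHI[s2] - PHI[s]

def greedy_policy(r_fn, iters=500):
    """Value iteration followed by greedy action selection."""
    def q(s, a, v):
        s2 = next_state(s, a)
        return r_fn(s, a, s2) + GAMMA * v[s2]

    v = [0.0] * N_STATES
    for _ in range(iters):
        v = [max(q(s, a, v) for a in ACTIONS) for s in range(N_STATES)]
    return [max(ACTIONS, key=lambda a: q(s, a, v)) for s in range(N_STATES)]

print(greedy_policy(reward), greedy_policy(shaped_reward))
```

Both calls return the same policy (move right in every state) even though the two reward functions assign very different numbers to individual transitions, which is why demonstrations alone cannot distinguish them.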

Adversarial imitation: GAIL and AIRL#

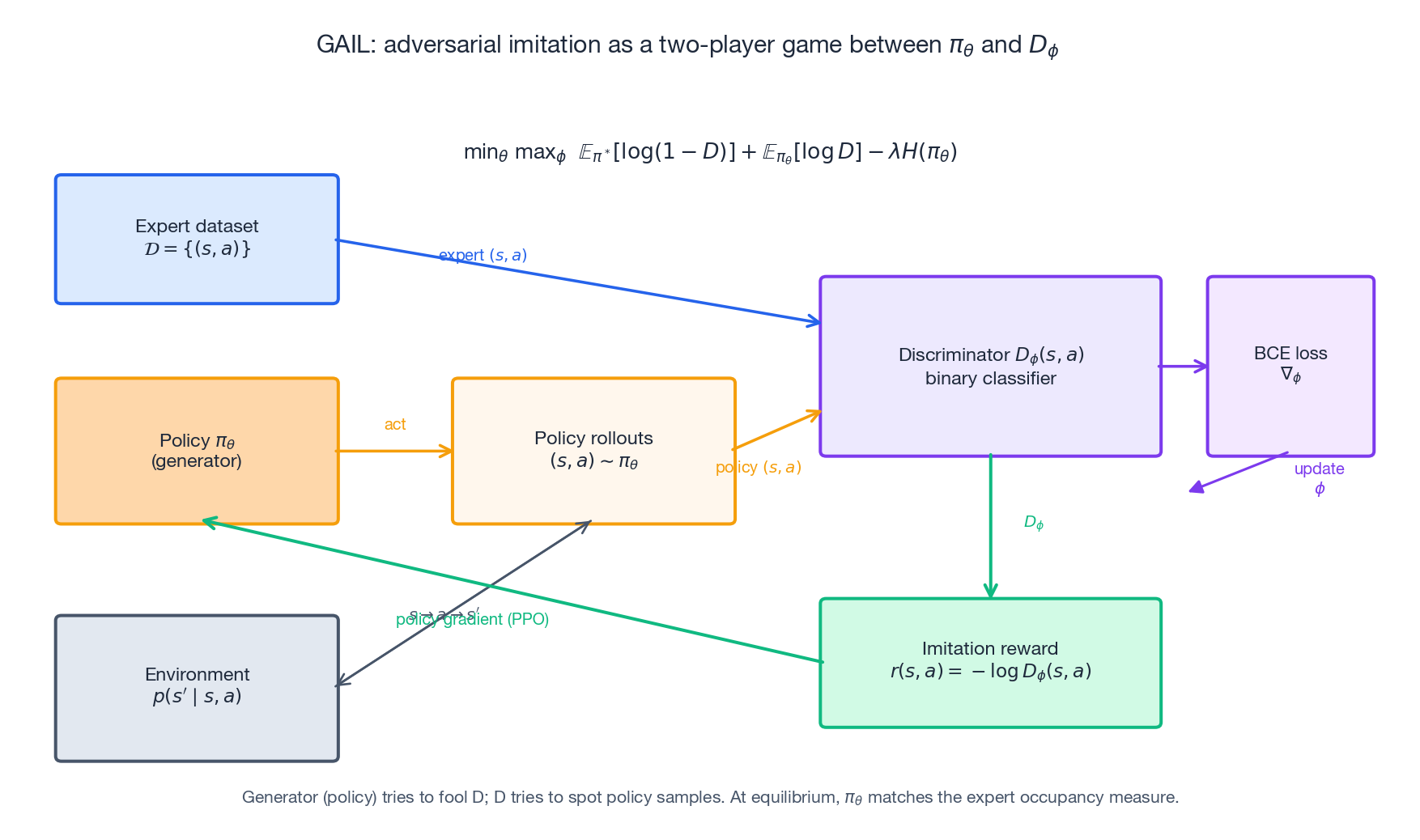

The IRL inner loop is expensive. GAIL (Ho & Ermon, 2016) noticed that for imitation we don’t actually need $r$ — we only need the policy whose state-action occupancy matches the expert’s. So GAIL replaces “recover reward, then re-solve RL” with a single adversarial game.

GAIL trains a discriminator $D_\phi$ to tell expert state-action pairs from the policy's, while the policy $\pi_\theta$ is trained to fool it: $$ \min_\theta \max_\phi \;\; \mathbb{E}_{(s,a)\sim \pi^*}\!\left[\log D_\phi(s, a)\right] \,+\, \mathbb{E}_{(s,a)\sim \pi_\theta}\!\left[\log\big(1 - D_\phi(s, a)\big)\right] \,-\, \lambda H(\pi_\theta). $$ The entropy term $\lambda H(\pi_\theta)$ stabilises the generator and discourages mode collapse. At the saddle point, $\pi_\theta$'s occupancy measure equals $\pi^*$'s, which is exactly what BC was trying to achieve but without the supervision-vs-rollout mismatch.

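In practice the policy is updated with a policy-gradient method (TRPO in the original paper, often PPO today) using a surrogate reward derived from the discriminator, either $r(s, a) = -\log\big(1 - D_\phi(s, a)\big)$ or $r(s, a) = \log D_\phi(s, a)$. Both forms appear in the literature; under the sign convention used here, the first is the reward used in the original GAIL paper, the second has lower variance early in training.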
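A minimal PyTorch sketch of the discriminator side of this game, using the chapter's convention that $D \to 1$ on expert data. The network size, learning rate, and the synthetic "expert" and "policy" batches are assumptions; the policy update itself (for example PPO on the returned rewards) is omitted.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
S_DIM, A_DIM = 3, 1

# Discriminator outputs a logit x, with D(s, a) = sigmoid(x).
disc = nn.Sequential(nn.Linear(S_DIM + A_DIM, 32), nn.Tanh(), nn.Linear(32, 1))
opt = torch.optim.Adam(disc.parameters(), lr=3e-4)

def discriminator_step(expert_sa, policy_sa):
    """One gradient step that increases E_expert[log D] + E_policy[log(1 - D)]."""
    logits = disc(torch.cat([expert_sa, policy_sa]))
    labels = torch.cat([torch.ones(len(expert_sa), 1),    # expert -> 1
                        torch.zeros(len(policy_sa), 1)])  # policy -> 0
    # Minimising binary cross-entropy maximises the objective above.
    loss = F.binary_cross_entropy_with_logits(logits, labels)
    opt.zero_grad()
    loss.backward()
    opt.step()
    return loss.item()

@torch.no_grad()
def gail_reward(policy_sa, form="neg_log_one_minus_d"):
    """Surrogate reward for the policy, computed stably from logits."""
    logits = disc(policy_sa)
    if form == "neg_log_one_minus_d":
        return F.softplus(logits)       # -log(1 - sigmoid(x)) = softplus(x) >= 0
    return -F.softplus(-logits)         # log(sigmoid(x)) <= 0

# Synthetic stand-ins for batches of concatenated (state, action) vectors.
expert_sa = torch.randn(64, S_DIM + A_DIM) + 1.0
policy_sa = torch.randn(64, S_DIM + A_DIM) - 1.0
for _ in range(5):
    discriminator_step(expert_sa, policy_sa)
rewards = gail_reward(policy_sa)        # would be passed to the policy optimiser
```

Working with logits and `softplus` rather than calling `log` on a sigmoid output avoids infinities when the discriminator becomes confident.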


Tuning notes. Three failure modes dominate GAIL in practice:

- Discriminator wins too quickly. Gradient through the policy reward vanishes. Mitigations: clip discriminator logits, use spectral normalisation, or enforce a Lipschitz constraint (WGAIL).

- Reward signal collapses. As $D \to 0$ on policy samples, the reward drifts to zero. Reward normalisation per batch (running mean / std) restores learning.

- Policy mode-collapses. Increase the entropy bonus $\lambda$ or use a maximum-entropy actor (SAC-style) as the generator.
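As one concrete instance of the reward-normalisation mitigation, the sketch below keeps a running mean and standard deviation with Welford's online algorithm and rescales each batch of discriminator rewards before it reaches the policy optimiser. The class name and interface are hypothetical.

```python
import math

class RunningRewardNorm:
    """Running mean/std over all rewards seen so far (Welford's algorithm)."""

    def __init__(self, eps=1e-8):
        self.count, self.mean, self.m2, self.eps = 0, 0.0, 0.0, eps

    def update(self, batch):
        for x in batch:
            self.count += 1
            delta = x - self.mean
            self.mean += delta / self.count
            self.m2 += delta * (x - self.mean)

    def std(self):
        # Population standard deviation of everything seen so far.
        return math.sqrt(self.m2 / max(self.count, 1))

    def normalise(self, batch):
        return [(x - self.mean) / (self.std() + self.eps) for x in batch]

norm = RunningRewardNorm()
raw = [0.02, 0.01, 0.03, 0.015]   # tiny rewards from a dominant discriminator
norm.update(raw)
print(norm.normalise(raw))
```

The rescaled rewards have roughly zero mean and unit variance, so the policy still receives a usable learning signal even when the raw values have collapsed toward zero.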

What does “matching occupancy” actually mean?#

GAIL is not minimising an action-prediction loss; it is minimising the Jensen-Shannon divergence between the expert occupancy $\rho_{\pi^*}(s, a)$ and the learner occupancy $\rho_{\pi_\theta}(s, a)$ . That is a much stronger objective than BC — it is aware of the rollout distribution. The price: it requires environment interaction during training (to sample $\rho_{\pi_\theta}$ ), so it does not work in fully offline settings without modification.
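To make the objective concrete, the sketch below estimates discounted occupancy measures from sampled trajectories over a small discrete state-action space and computes their Jensen-Shannon divergence directly. GAIL never forms these tables explicitly (the discriminator estimates the divergence implicitly); the helper names and the toy trajectories are assumptions.

```python
import math
from collections import Counter

def occupancy(trajectories, gamma=0.99):
    """Normalised discounted visitation frequency of each (state, action) pair."""
    rho = Counter()
    for traj in trajectories:
        for t, sa in enumerate(traj):
            rho[sa] += gamma ** t
    total = sum(rho.values())
    return {sa: w / total for sa, w in rho.items()}

def js_divergence(p, q):
    """Jensen-Shannon divergence (in nats) between two discrete distributions."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl(a):
        return sum(pa * math.log(pa / m[k]) for k, pa in a.items() if pa > 0)

    return 0.5 * kl(p) + 0.5 * kl(q)

expert = [[("s0", "right"), ("s1", "right"), ("s2", "stay")]]
drifting = [[("s0", "right"), ("s1", "left"), ("s0", "right")]]

rho_expert = occupancy(expert)
print(js_divergence(rho_expert, occupancy(expert)))    # identical rollouts
print(js_divergence(rho_expert, occupancy(drifting)))  # learner drifts off-course
```

The divergence is zero only when the learner visits the same state-action pairs with the same discounted frequency, and it is bounded above by $\ln 2$, reached when the two occupancies share no support.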

AIRL: disentangling reward from shaping#

AIRL (Fu, Luo & Levine, 2018) keeps the adversarial game but restricts the discriminator to the form $$ D_\phi(s, a, s') \;=\; \frac{\exp\big(f_\phi(s, a, s')\big)}{\exp\big(f_\phi(s, a, s')\big) + \pi_\theta(a \mid s)}, \qquad f_\phi(s, a, s') \;=\; r_\psi(s) + \gamma \Phi_\xi(s') - \Phi_\xi(s), $$ so that $r_\psi$ is the state-only reward and $\Phi_\xi$ absorbs the shaping. Training with this parameterisation yields a recovered reward $\hat r = r_\psi$ that transfers across dynamics changes, the empirical headline result of the AIRL paper.
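The structured discriminator translates directly into a small PyTorch module. This is a sketch under assumptions: the class name, layer sizes, and random inputs are illustrative, and terminal-state handling is left out. It relies on the identity $\exp(f) / (\exp(f) + \pi) = \sigma(f - \log \pi)$, so the discriminator logit is simply $f - \log \pi_\theta(a \mid s)$.

```python
import torch
import torch.nn as nn

class AIRLDiscriminator(nn.Module):
    """f(s, a, s') = r(s) + gamma * Phi(s') - Phi(s), with a state-only reward."""

    def __init__(self, s_dim, hidden=32, gamma=0.99):
        super().__init__()
        self.gamma = gamma
        self.reward = nn.Sequential(nn.Linear(s_dim, hidden), nn.ReLU(),
                                    nn.Linear(hidden, 1))      # r_psi(s)
        self.potential = nn.Sequential(nn.Linear(s_dim, hidden), nn.ReLU(),
                                       nn.Linear(hidden, 1))   # Phi_xi(s)

    def f(self, s, s_next):
        return self.reward(s) + self.gamma * self.potential(s_next) - self.potential(s)

    def logits(self, s, s_next, log_pi):
        # sigmoid(f - log pi) equals exp(f) / (exp(f) + pi).
        return self.f(s, s_next) - log_pi

torch.manual_seed(0)
disc = AIRLDiscriminator(s_dim=3)
s, s_next = torch.randn(8, 3), torch.randn(8, 3)
log_pi = torch.randn(8, 1)            # stand-in for log pi_theta(a | s)

d = torch.sigmoid(disc.logits(s, s_next, log_pi))
recovered_reward = disc.reward(s)     # the part intended to transfer
```

The logits can be trained with the same binary cross-entropy loss as in GAIL; after training, only `reward` is kept and `potential` is discarded.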

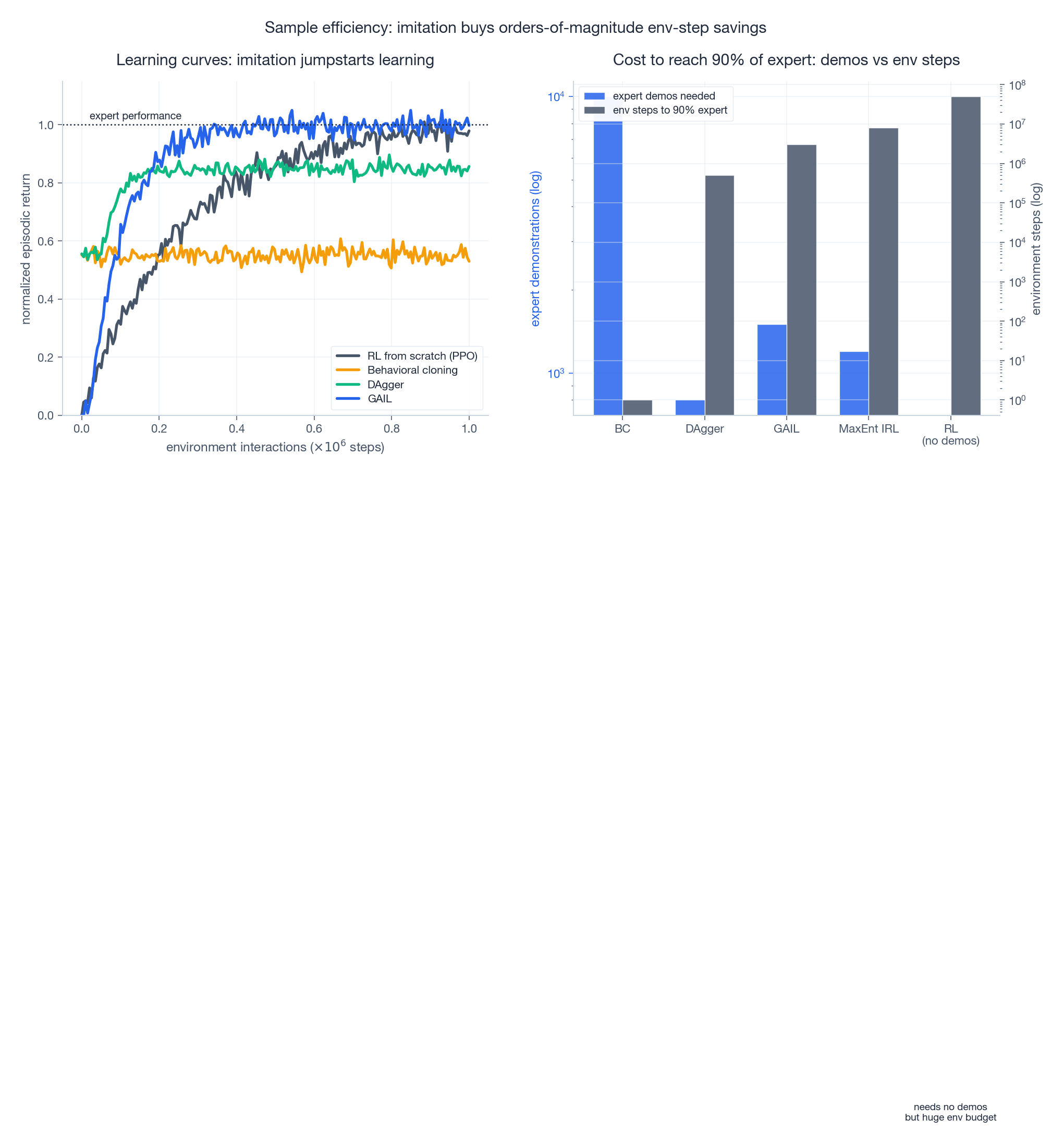

Sample efficiency: imitation vs RL#

The strongest practical argument for imitation is sample efficiency. A few thousand expert demonstrations can substitute for tens of millions of environment interactions, especially in high-dimensional control.

The pattern in the left panel is robust across robotics benchmarks: BC saturates below the expert (it cannot exceed its training data), DAgger climbs once it sees recovery states, GAIL eventually matches the expert, and pure RL takes much longer to reach the same level. The right panel makes the trade-off concrete: methods that consume more expert data tend to need fewer environment steps, and vice versa.

This is also why imitation pre-training + RL fine-tuning is the dominant recipe in modern systems — AlphaStar, robotic manipulation, and InstructGPT all start with imitation and then improve with RL.

Method selection#

| Method | Needs interactive expert? | Needs env interaction? | Sample efficiency | Interpretable reward? | Typical regime |
|---|---|---|---|---|---|
| BC | no | no | high (offline) | no | short tasks, abundant logs |
| DAgger | yes | yes | medium-high | no | algorithmic / model expert available |
| MaxEnt IRL | no | yes (inner loop) | low | yes | small state spaces, need transfer |
| GAIL | no | yes | medium | no | high-dim continuous control |
| AIRL | no | yes | medium | yes (state reward) | want to transfer reward across tasks |

A short decision rule:

- Static log of demonstrations, short horizon → start with BC. Add early stopping and standardisation; consider an ensemble for uncertainty.

- You can query the expert at training time → DAgger. The linear-error guarantee is the cheapest improvement you will ever buy.

- You need to understand what the expert wants, or to transfer it to a different environment → MaxEnt IRL for small problems, AIRL for continuous control.

- High-dimensional continuous control with a static dataset and an environment you can interact with → GAIL. Budget for adversarial-training instability.

FAQ#

Can imitation learning exceed the expert? Pure imitation cannot, by construction — the optimal imitator matches the expert. The standard fix is imitation as initialisation: start from the BC/GAIL policy and fine-tune with RL on whatever reward you can specify (or with RLHF). Most large-scale systems use exactly this two-stage recipe.

How do I handle noisy or suboptimal expert demonstrations? Three lines of defence: (i) weight samples by an estimated quality score (e.g. return, human preference); (ii) for continuous actions, replace MSE with a robust loss (Huber, log-cosh) to discount outliers; (iii) use offline RL on the demonstrations: methods such as IQL or CQL handle suboptimal trajectories gracefully.

The expert is multimodal — different actions in the same state. What now? Standard MSE-BC averages the modes and produces dangerous in-between actions (the classic “drive into the obstacle because half the demos go left and half go right” failure). Use a Mixture Density Network, a discrete-action quantised policy, a conditional VAE, or a diffusion policy. Diffusion policies (Chi et al., 2023) currently dominate on multimodal manipulation tasks.
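The mode-averaging failure takes only a few lines to reproduce. Assume a single state in which half of the demonstrations steer hard left (`-1.0`) and half steer hard right (`+1.0`), and search a grid of candidate predictions for the one with the lowest mean squared error; the numbers are made up for illustration.

```python
demo_actions = [-1.0] * 50 + [1.0] * 50   # half steer left, half steer right

def mse(prediction, targets):
    return sum((prediction - a) ** 2 for a in targets) / len(targets)

# Candidate steering commands from -1.0 to 1.0 in steps of 0.1.
candidates = [x / 10 for x in range(-10, 11)]
best = min(candidates, key=lambda c: mse(c, demo_actions))

print("MSE-optimal action:", best)
print("appears in the demonstrations:", best in demo_actions)
```

The MSE-optimal prediction is `0.0`, straight ahead, an action no demonstrator ever took. A mixture, quantised, or diffusion-based head avoids this by representing both modes instead of their mean.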

Can BC handle distribution shift if I just collect more data? Up to a point. More data shrinks $\varepsilon$ but does not change the $T^2$ exponent. Once your horizon is long enough that the policy ever leaves the support of $\mathcal{D}$ , you need either DAgger-style relabelling, GAIL-style occupancy matching, or conservative offline RL (CQL, IQL) that explicitly penalises out-of-support actions.

Is RLHF a kind of imitation learning? Partly. The supervised fine-tuning stage of RLHF is BC on human-written demonstrations. The preference-modelling stage is closer to an IRL variant: you learn a reward model from comparisons, then optimise it with PPO. We unpack the full pipeline in Part 12.

References#

- Pomerleau, D. (1989). ALVINN: An Autonomous Land Vehicle in a Neural Network. NIPS.

- Ross, S., & Bagnell, J. A. (2010). Efficient Reductions for Imitation Learning. AISTATS.

- Ross, S., Gordon, G., & Bagnell, J. A. (2011). A Reduction of Imitation Learning and Structured Prediction to No-Regret Online Learning. AISTATS. arXiv:1011.0686.

- Ziebart, B., Maas, A., Bagnell, J. A., & Dey, A. (2008). Maximum Entropy Inverse Reinforcement Learning. AAAI.

- Finn, C., Levine, S., & Abbeel, P. (2016). Guided Cost Learning. ICML. arXiv:1603.00448 .

- Ho, J., & Ermon, S. (2016). Generative Adversarial Imitation Learning. NeurIPS. arXiv:1606.03476 .

- Fu, J., Luo, K., & Levine, S. (2018). Learning Robust Rewards with Adversarial Inverse Reinforcement Learning. ICLR. arXiv:1710.11248 .

- Chi, C. et al. (2023). Diffusion Policy: Visuomotor Policy Learning via Action Diffusion. RSS. arXiv:2303.04137 .
