Reinforcement Learning (9): Multi-Agent Reinforcement Learning

A working tour of multi-agent RL: Markov games, the non-stationarity and credit-assignment problems, CTDE, value decomposition (VDN, QMIX), counterfactual baselines (COMA), MADDPG, communication topologies, and the league-training pipeline behind AlphaStar and OpenAI Five — with a runnable QMIX mixer in PyTorch.

Single-agent RL rests on one quiet but enormous assumption: the environment is stationary. The transition kernel does not change while the agent learns. The moment a second learner shares the world, that assumption collapses. Each agent now sees an environment whose dynamics shift as its peers update, rewards become entangled across agents, and the joint action space explodes combinatorially. These are not engineering nuisances. They are the reason multi-agent RL needs its own algorithms instead of just running DQN n times in parallel.

The payoff for solving this is large. AlphaStar reached Grandmaster on the StarCraft II ladder, OpenAI Five won against the world champions in Dota 2, and cooperative MARL is increasingly the workhorse for warehouse robotics, traffic-signal control, and multi-LLM agent systems. This chapter builds the conceptual backbone — Markov games, CTDE, value decomposition, counterfactual credit — and lands on a clean QMIX mixer you can drop into your own training loop.

What You Will Learn#

- The four hard problems unique to MARL: non-stationarity, credit assignment, partial observability, and scalability

- The game-theoretic vocabulary you actually need: Markov games, Nash equilibria, Pareto fronts, social dilemmas

- CTDE — centralised training, decentralised execution — and why it dominates

- Value decomposition: VDN, QMIX, and the monotonicity constraint that makes greedy execution sound

- Multi-agent actor-critic: MADDPG (centralised critic per agent) and COMA (counterfactual baselines)

- Communication: when broadcast, sparse, and attention-based message passing each pay off

- The engineering of AlphaStar’s league and OpenAI Five’s scale-out training

Prerequisites#

- Q-learning and DQN (Part 2 )

- Actor-critic and policy gradients (Part 3 , Part 6 )

- Comfort with expectations and conditional probabilities; we keep formal game theory minimal

Why MARL is genuinely harder than single-agent RL#

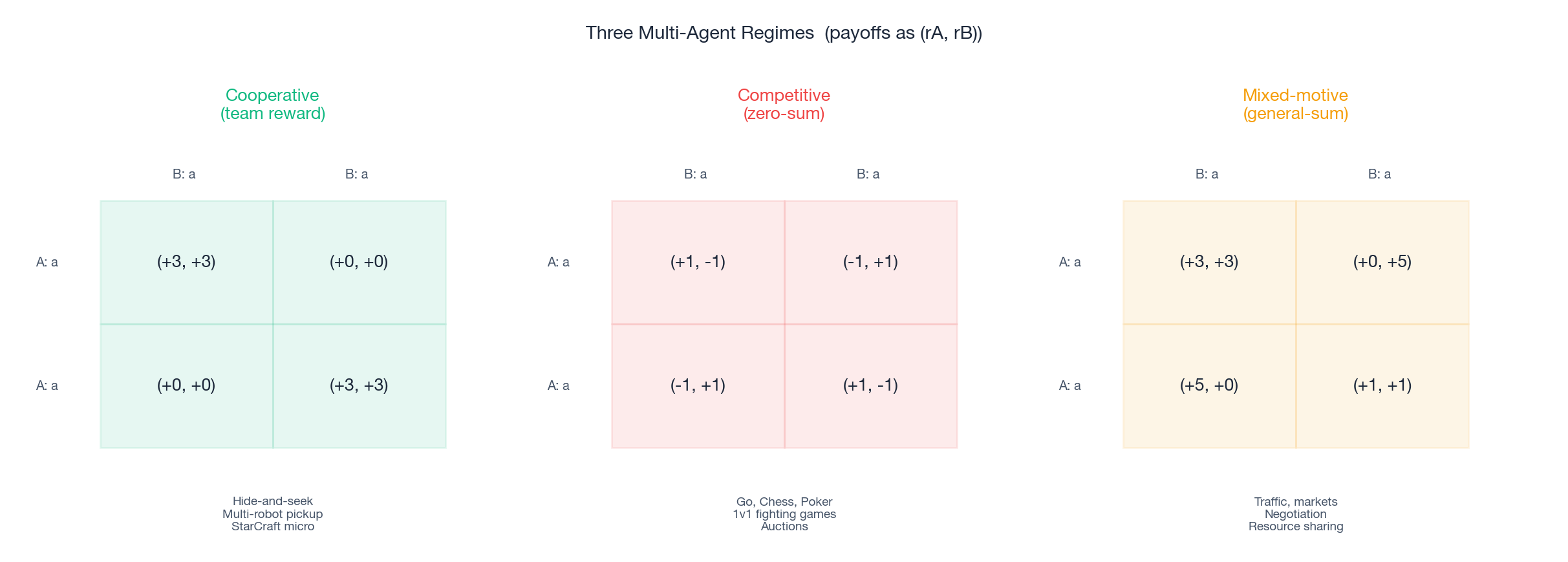

The reward structure decides almost everything else. In cooperative games every agent shares the team reward — coordinated soccer plays, swarm logistics, StarCraft micro-management. Competitive games are zero-sum: one agent’s gain is another’s loss, and the right tool is some form of self-play. The interesting middle ground is mixed-motive (general-sum) games — markets, traffic, negotiation, congestion control — where short-term self-interest can torpedo long-term collective welfare (the prisoner’s dilemma is the canonical 2×2 case, and the figure shows it as the third payoff matrix).

Within any of these regimes, four obstacles break the single-agent toolbox.

Non-stationarity. From agent $i$ ’s point of view the transition is $P(s' \mid s, a_i, a_{-i})$ , where $a_{-i}$ denotes everyone else’s actions. As the others’ policies $\pi_{-i}$ keep updating during training, the effective transition kernel that $i$ sees keeps drifting. Off-policy methods like DQN, which assume a fixed data-generating distribution, can diverge or oscillate.

Credit assignment. When the team gets a single reward $r$ for an episode-long collaboration, who actually earned it? A naive shared gradient encourages free-riding: an agent that does nothing receives the same credit as one that did the heavy lifting. Counterfactual baselines (COMA, Section 5 ) and value decomposition (Section 4 ) are the two principled answers.

Partial observability. Each agent sees only its own observation $o_i$ . There is no Markov state available at execution time. Recurrent networks plus belief-state-style representations are typical, but the deeper fix is to architect training so that the centralised critic sees more than execution does.

Scalability. With $n$ agents and $|A|$ actions each, the joint action space is $|A|^n$ . Without exploiting structure, even modest cooperative tasks become intractable.

The dominant resolution to all four — and the conceptual backbone of the chapter — is CTDE.

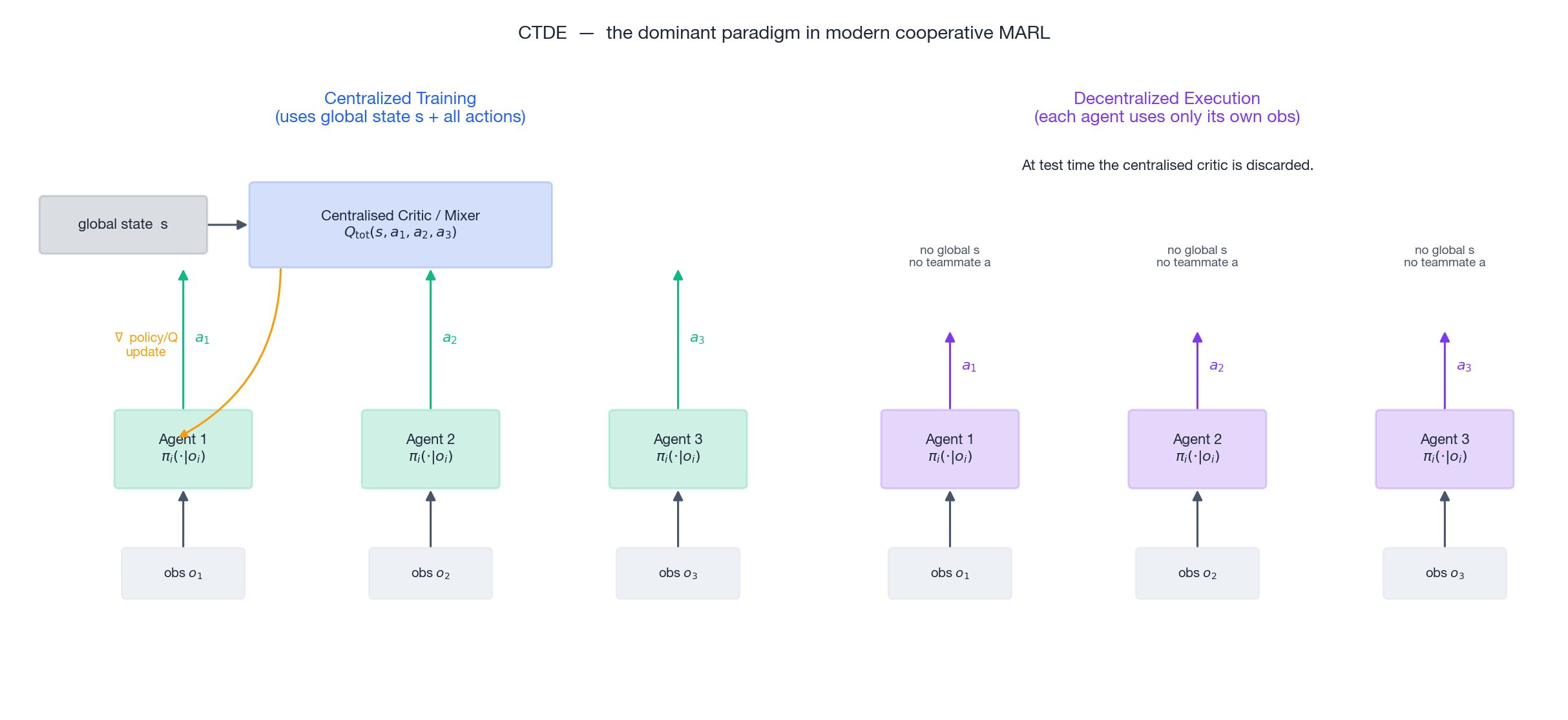

CTDE: train with everything, execute with almost nothing#

The idea is asymmetric. During training we let the algorithm peek at the full state and at every agent’s action — a centralised critic, mixer, or value function. At deployment we keep only the per-agent policies that condition on local observations. The execution interface is decentralised; the optimisation interface is not.

CTDE works because the things that make MARL hard (non-stationarity, credit assignment, partial observability) are properties of the learning phase, while the constraints that make MARL practical (limited bandwidth, asynchronous control, privacy) are properties of the execution phase. CTDE puts each problem in its own corner. Almost every modern cooperative MARL algorithm — VDN, QMIX, MADDPG, COMA, MAPPO — is a CTDE method; they differ only in what the centralised object is and how it is decomposed.

Markov games and Nash equilibria, just enough to be useful#

$$\langle \mathcal{N}, \mathcal{S}, \{A_i\}_{i\in\mathcal{N}}, P, \{r_i\}_{i\in\mathcal{N}}, \gamma \rangle.$$ $$V_i(\pi_i^*, \pi_{-i}^*) \;\geq\; V_i(\pi_i, \pi_{-i}^*) \qquad \forall \pi_i,\, \forall i.$$Two facts make Nash equilibria a slippery target. First, they are typically not unique; second, they are not always Pareto optimal. In the fully cooperative case, where $r_1 = \cdots = r_n$ , the picture simplifies dramatically — the problem reduces to maximising a single team return, and Nash equilibrium and Pareto optimality collapse onto the same set. That is why most of the algorithmic machinery in this chapter targets the cooperative setting first; competitive and mixed-motive cases borrow from it but layer additional ideas (self-play, opponent modelling, regularisation) on top.

Value decomposition: VDN and QMIX#

VDN: additive decomposition#

$$Q_\text{tot}(s, \mathbf{a}) \;=\; \sum_{i=1}^{n} Q_i(o_i, a_i).$$Sums are monotonic in each summand, so IGM holds trivially. The cost is expressivity: VDN cannot represent any non-additive interaction between agents.

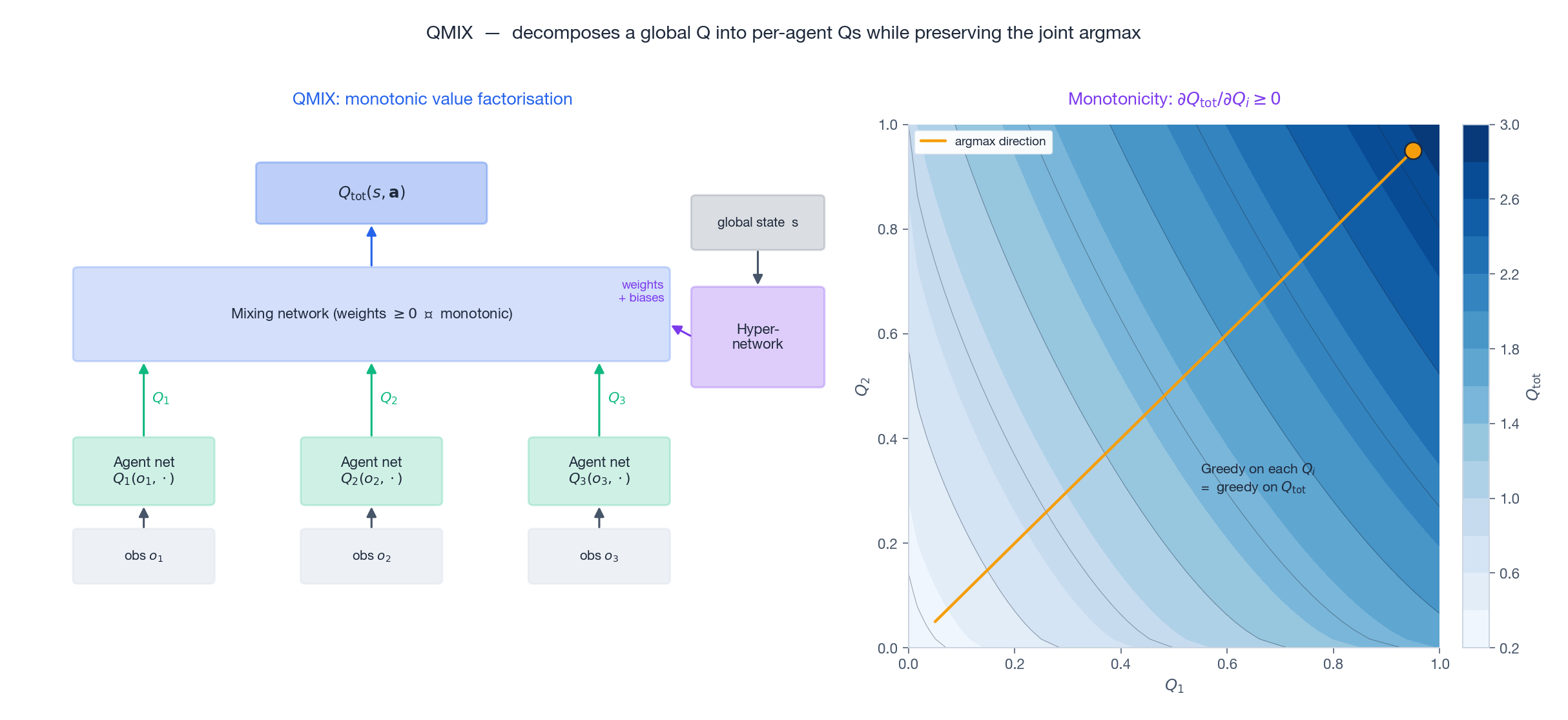

QMIX: monotonic mixing#

$$\frac{\partial Q_\text{tot}}{\partial Q_i} \;\geq\; 0 \qquad \forall i.$$The constraint is enforced by constructing the mixer’s weights to be non-negative — by passing them through an absolute value or a softplus. Crucially, the weights themselves are produced by a hypernetwork conditioned on the global state $s$ , so different states can reweight agents differently (and the mixer can express interactions that pure summation cannot). The right panel of the figure shows the resulting $Q_\text{tot}$ surface: monotone in each $Q_i$ , so the joint argmax aligns with the pair of per-agent argmaxes.

| |

Train it exactly like a DQN target — sample transitions, build the TD target on $Q_\text{tot}$ , backprop through the mixer into all per-agent networks. At execution time, every agent runs its own $Q_i$ and never touches the mixer.

A practical note: QMIX cannot represent every IGM-decomposable function (its monotonicity is sufficient but not necessary). QTRAN and QPLEX push this frontier, at the cost of more complex losses and engineering.

Multi-agent actor-critic: MADDPG and COMA#

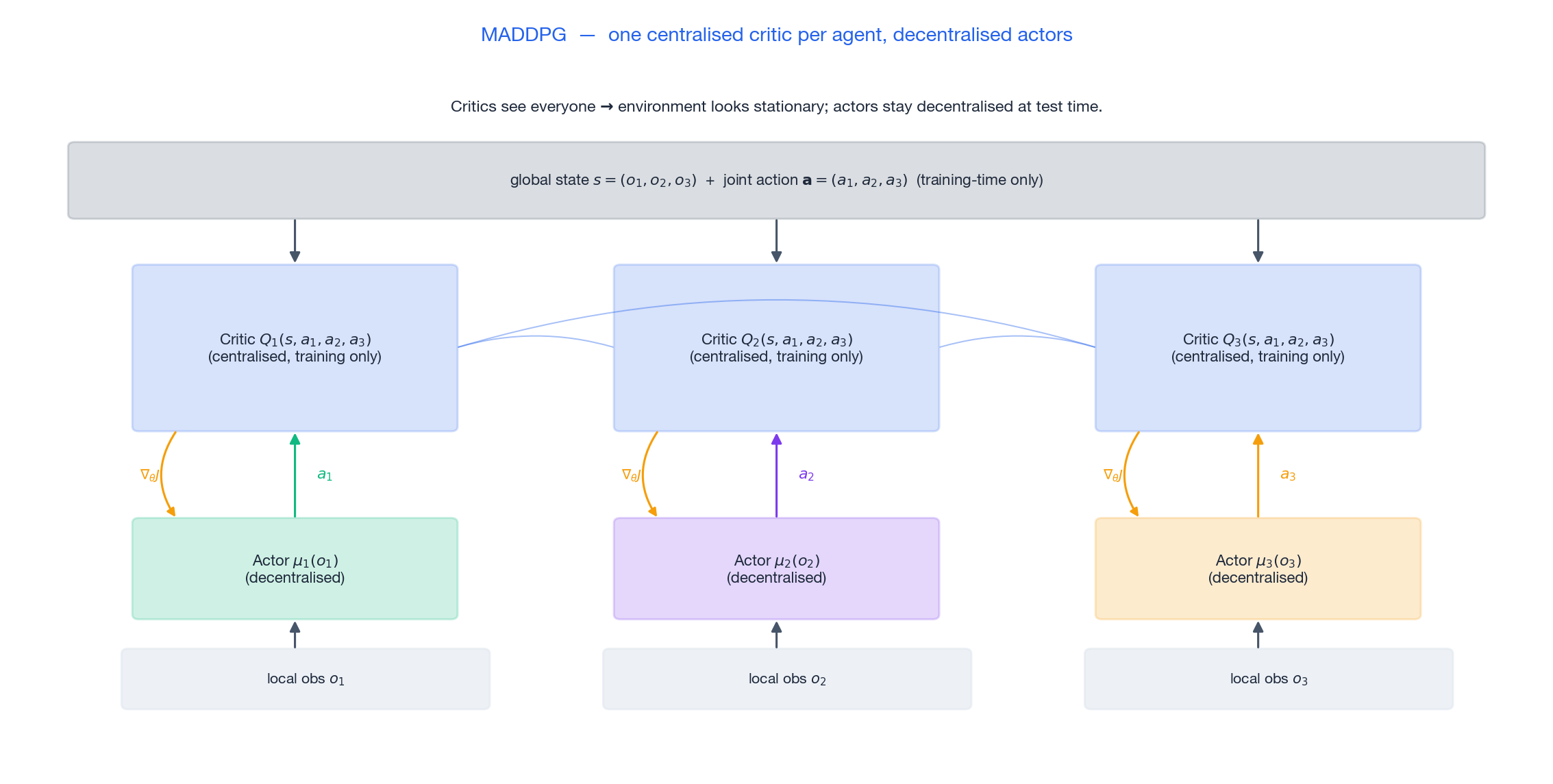

MADDPG: a centralised critic per agent#

MADDPG is the natural CTDE extension of DDPG. Each agent $i$ has

- an actor $\mu_i(o_i)$ that conditions only on its local observation, and

- a critic $Q_i(s, a_1, \ldots, a_n)$ that conditions on the full state and every agent’s action.

Because the critic conditions on $a_{-i}$ , the environment looks stationary to the critic — its inputs include the very thing that was changing under it. The actor remains decentralised, so deployment is unchanged. MADDPG works in cooperative, competitive, and mixed-motive settings; the replay buffer simply stores joint trajectories.

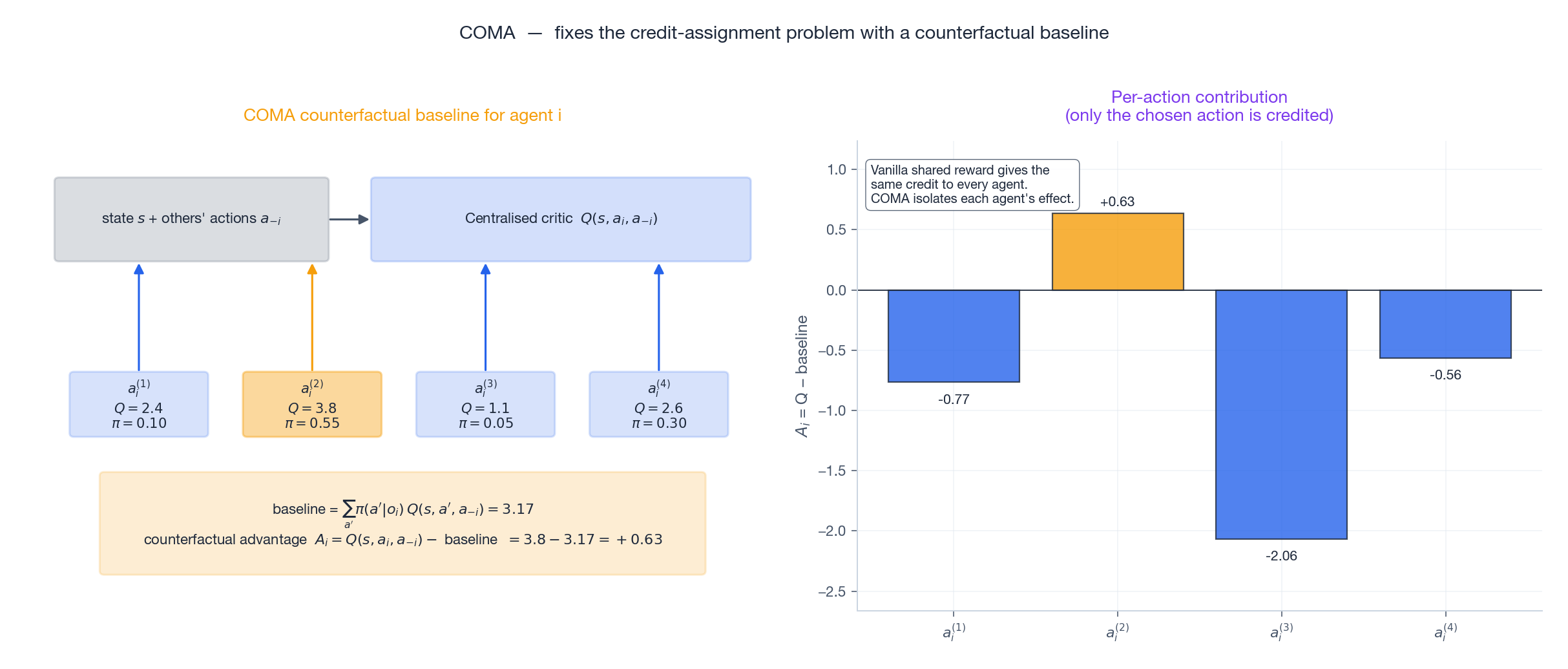

COMA: counterfactual credit assignment#

Read this carefully. The first term is the value of what actually happened. The second is the expected value if agent $i$ had sampled some other action while everyone else did exactly what they did. The difference is precisely $i$ ’s marginal contribution. When the team reward is shared, this is the right object to do policy gradient on — it removes the noise from $a_{-i}$ without introducing bias. The figure shows the four-action case: only the chosen action receives a non-trivial advantage.

The trick that makes COMA tractable is that the centralised critic outputs a vector of $Q$ -values for all candidate actions of agent $i$ at once, so the baseline is a single dot product against the policy probabilities — no extra environment rollouts needed.

Communication: how much should agents tell each other?#

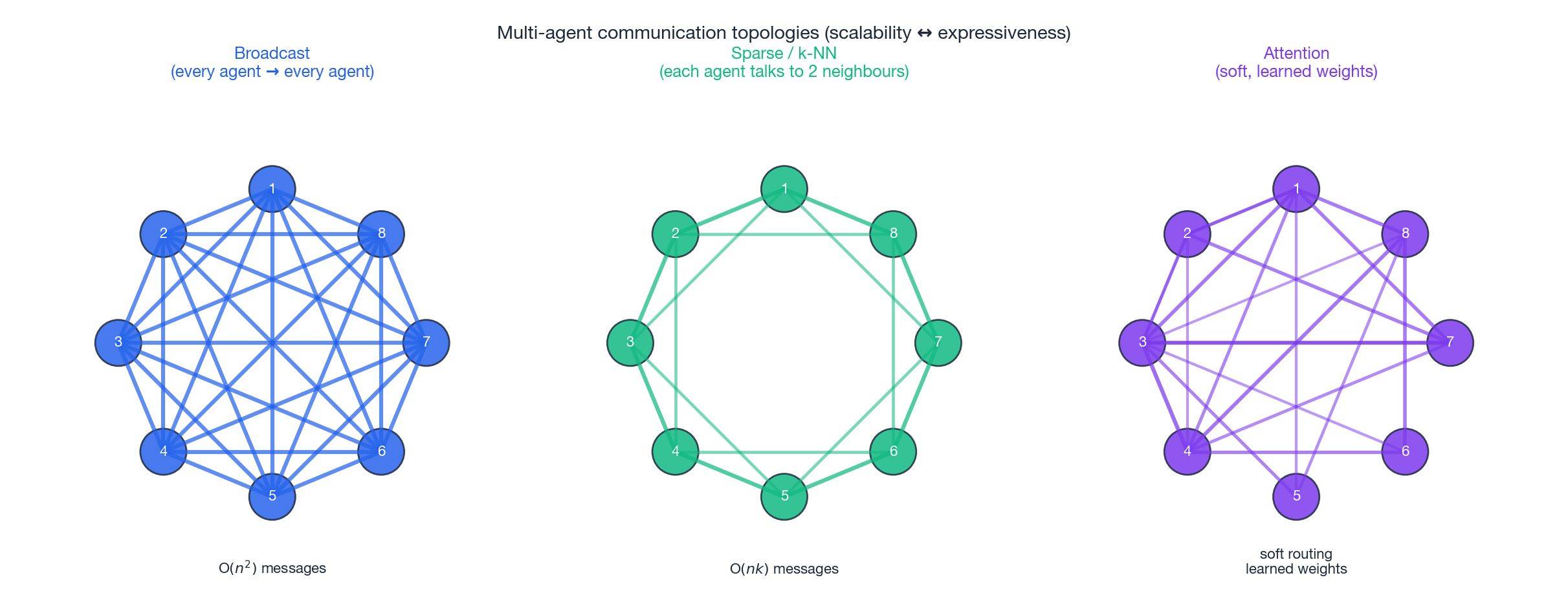

Sometimes decentralised execution is too restrictive — agents really do need to share information at test time. The design space is a spectrum.

Broadcast — every agent sends a fixed-size message to every other agent. Conceptually clean, but message volume grows as $O(n^2)$ per step, which is fatal beyond a few dozen agents.

Sparse / k-NN — each agent only talks to its $k$ nearest neighbours (in space, in a graph, in role). Linear in $n$ for fixed $k$ . CommNet, IC3Net and TarMAC all live near this end.

Attention — soft, learned routing. Each agent issues a query, peers respond with keys, and message weights are computed by softmax. The cost is $O(n^2)$ in attention scores but messages can be heavily pruned (top-$k$ attention) and the structure is end-to-end differentiable. This is the dominant choice in modern MARL and the natural bridge to multi-LLM-agent systems, where attention-style routing maps directly onto tool-use and agent orchestration patterns.

The right knob is task-dependent: for tightly-coupled robotics teams, dense communication is fine; for thousands of microservices or vehicles, sparse or attention-pruned schemes are the only feasible designs.

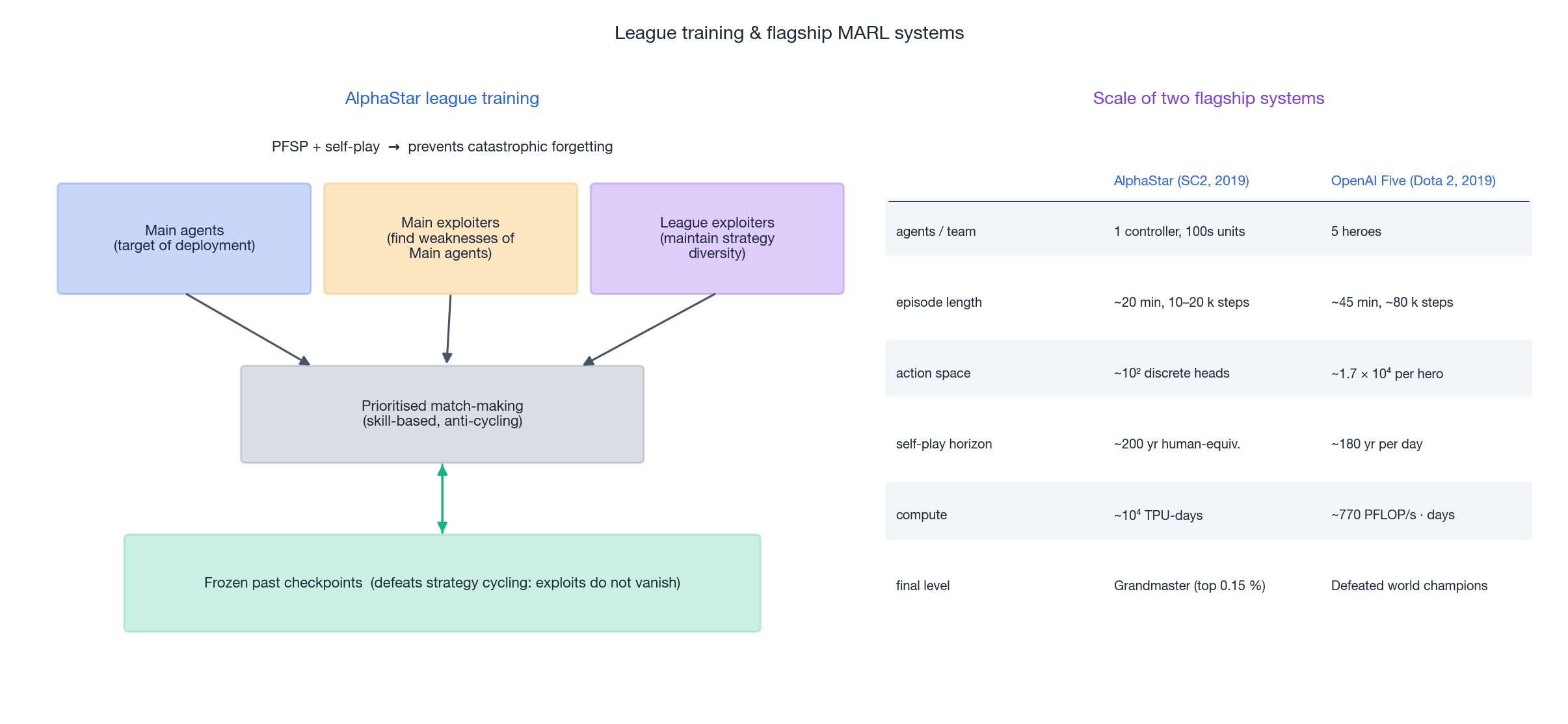

Scaling MARL: AlphaStar, OpenAI Five, and league training#

Both AlphaStar (StarCraft II) and OpenAI Five (Dota 2) succeed by treating training-population design as a first-class engineering concern. Naive self-play tends to cycle — agent A learns to beat B, B learns to beat A’s new policy and forgets why it used to beat A’s old policy, and so on. AlphaStar’s league explicitly decouples three populations:

- Main agents — the agents that will actually be deployed; they are the optimisation target.

- Main exploiters — separate agents whose only job is to find weaknesses in the main agents, forcing them to round out their game.

- League exploiters — periodically reset agents that maintain strategic diversity so the league does not collapse onto a narrow style.

A pool of frozen historical checkpoints is matched against current learners with prioritised fictitious self-play (PFSP), which biases match-making toward opponents the current learner is just barely beating. Together these mechanisms prevent the catastrophic forgetting that breaks naive self-play.

OpenAI Five takes a different cut. Each Dota hero is controlled by an independent LSTM policy with a shared parameter set; the team-level coordination problem is handed off to a hand-engineered shared reward and an enormous compute budget — roughly 180 years of self-play per day. The lesson from both systems is the same: at industrial scale, the training curriculum matters at least as much as the per-agent algorithm.

These ideas are migrating outside games. RLHF (Part 12 ) and tool-use agent orchestration both increasingly look like multi-population training — a population of policies, a population of evaluators, and prioritised match-making between them.

Credit Assignment in MARL: A Worked Example#

Credit assignment — figuring out which agent’s action caused a team-level reward — is the real hard problem in cooperative MARL, and it deserves more than a passing mention. Consider a 4-agent grid task where the team gets +10 only when all four agents are on a goal cell at the same step. Train naive independent Q-learners and watch them oscillate forever: each agent’s $Q$ -update treats the other three as part of the environment, so the gradient of “I should move to my goal” gets buried in noise from teammates moving in and out of theirs.

Three families of solutions, in order of complexity.

Difference rewards#

$$ D_i = R(s, a) - R(s, (a_{-i}, c_i)), $$where $c_i$ is a default action (e.g. “do nothing”). Each agent’s effective reward is what the team got because of agent $i$ ’s actual choice, marginalised over a baseline. Difference rewards predate deep RL by 15 years (Wolpert & Tumer, 2002) and remain shockingly effective when you can compute the counterfactual cheaply — for example in any simulator you can roll back.

Counterfactual baselines (COMA)#

$$ A_i(s, a) = Q(s, a) - \sum_{a_i'} \pi_i(a_i' | \tau_i)\, Q(s, (a_{-i}, a_i')), $$which marginalises over agent $i$ ’s alternative actions while holding the rest fixed. The magic is that the marginal $Q$ -value uses the centralised critic — at training time you have everyone’s observation — so the baseline is well-defined even though each agent acts on its own history at test time.

Reward shaping with potentials#

For domains where you cannot afford either, fall back to potential-based reward shaping: add an extra reward term $F(s, s') = \gamma \Phi(s') - \Phi(s)$ where $\Phi$ is a hand-designed potential (e.g. negative distance to goal). The Ng-Harada-Russell theorem guarantees the optimal policy is preserved. In MARL this trick applied per agent often does most of the work that fancier credit-assignment methods do, and it is trivially easy to implement.

A concrete failure I have seen#

On a multi-robot warehouse pick task, an early team trained QMIX with a sparse “all packages picked” reward. After 100 M environment steps the policy was still suboptimal because robot 4 was idling — its individual $Q$ -value barely moved because robot 4’s action almost never determined the team success on its own. We added a difference reward (each robot’s bonus = team reward $-$ team reward if that robot stood still), and the policy converged in 12 M steps. The fancy credit-assignment paper turned out to be dominated by a 30-line shaping function.

Reward Shaping and Curricula in MARL#

Sparse team rewards rarely converge in MARL. Two engineering tricks help more than another algorithm switch.

Curriculum over team size#

Train with 2 agents first, then 3, then 4. The gradient signal at $n=2$ is much stronger because the joint action space is small enough that random exploration occasionally hits the reward. Once policies are sensible, scale up — add agents whose initial policy is the best learned single-agent policy, and continue training with the full team. AlphaStar uses an extreme version of this (population-based training over leagues), but you do not need that machinery to benefit from the basic curriculum.

Self-play with frozen opponents#

For competitive MARL, the standard recipe is fictitious self-play: at each iteration the active learner trains against a uniform mixture of past versions of itself. This avoids the cycle where today’s policy beats yesterday’s but loses to last week’s (the rock-paper-scissors trap). OpenAI Five maintained 80 % of training against the latest checkpoint and 20 % against random older snapshots. The 20 % is what kept the population diverse enough to generalise.

A note on hyperparameter sensitivity#

MARL is much more sensitive to hyperparameters than single-agent RL. The same QMIX implementation, with all defaults, converges on StarCraft II micromanagement at one learning rate and diverges on a multi-particle environment at a 2× different learning rate. The community lore is that the right starting point is roughly half the learning rate you would use for single-agent PPO — and to keep the entropy bonus higher for longer, because exploration is a public good in MARL: when one agent stops exploring, all the others lose information.

FAQ#

Why does QMIX need the monotonicity constraint?#

So that decentralised greedy execution is consistent with centralised joint optimisation. If $\partial Q_\text{tot}/\partial Q_i$ could go negative, an agent acting greedily on its own $Q_i$ might lower the team value. Monotonicity makes the per-agent argmax and the joint argmax coincide.

When do MARL systems get stuck in suboptimal equilibria?#

Whenever the optimal joint action requires coordinated exploration — both agents must simultaneously try the risky move for it to look good. If random exploration almost never produces that joint action, the system converges to a safer but Pareto-dominated equilibrium. The fixes are joint exploration (committed-exploration schedules), explicit communication, and opponent modelling.

How does sample complexity scale with the number of agents?#

For cooperative tasks where value decomposition holds, sample complexity grows roughly linearly in $n$ — that is the entire point of QMIX. Without decomposition, learning $Q(s, \mathbf{a})$ over the joint action space is exponential in $n$ .

Independent learners vs CTDE — is CTDE always worth it?#

For very small populations or when global state is genuinely unavailable, independent Q-learning can work and is much simpler to implement. As soon as credit assignment matters or the team is more than 3–4 agents, CTDE methods pull ahead and the gap widens fast.

Does any of this transfer to multi-LLM-agent systems?#

Yes. Centralised critics map onto outer evaluators, decentralised actors onto sub-agents, attention-based communication onto tool routing, and league training onto the population-of-evaluators pattern that is becoming standard in agentic RLHF.

References#

- Sunehag et al., Value-Decomposition Networks for Cooperative Multi-Agent Learning, AAMAS 2018. arXiv:1706.05296

- Rashid et al., QMIX: Monotonic Value Function Factorisation for Deep Multi-Agent RL, ICML 2018. arXiv:1803.11485

- Lowe et al., Multi-Agent Actor-Critic for Mixed Cooperative-Competitive Environments (MADDPG), NeurIPS 2017. arXiv:1706.02275

- Foerster et al., Counterfactual Multi-Agent Policy Gradients (COMA), AAAI 2018. arXiv:1705.08926

- Vinyals et al., Grandmaster level in StarCraft II using multi-agent reinforcement learning, Nature 575, 2019.

- OpenAI et al., Dota 2 with Large Scale Deep Reinforcement Learning, 2019. arXiv:1912.06680

- Yu et al., The Surprising Effectiveness of PPO in Cooperative Multi-Agent Games (MAPPO), NeurIPS 2022. arXiv:2103.01955

Reinforcement Learning 12 parts

- 01 Reinforcement Learning (1): Fundamentals and Core Concepts

- 02 Reinforcement Learning (2): Q-Learning and Deep Q-Networks (DQN)

- 03 Reinforcement Learning (3): Policy Gradient and Actor-Critic Methods

- 04 Reinforcement Learning (4): Exploration Strategies and Curiosity-Driven Learning

- 05 Reinforcement Learning (5): Model-Based RL and World Models

- 06 Reinforcement Learning (6): PPO and TRPO — Trust Region Policy Optimization

- 07 Reinforcement Learning (7): Imitation Learning and Inverse RL

- 08 Reinforcement Learning (8): AlphaGo and Monte Carlo Tree Search

- 09 Reinforcement Learning (9): Multi-Agent Reinforcement Learning you are here

- 10 Reinforcement Learning (10): Offline Reinforcement Learning

- 11 Reinforcement Learning (11): Hierarchical RL and Meta-Learning

- 12 Reinforcement Learning (12): RLHF and LLM Applications