Reinforcement Learning (10): Offline Reinforcement Learning

Master offline RL: learn policies from fixed datasets without environment interaction. Covers distributional shift, Conservative Q-Learning (CQL), BCQ, Implicit Q-Learning (IQL), Decision Transformer, with a complete CQL implementation.

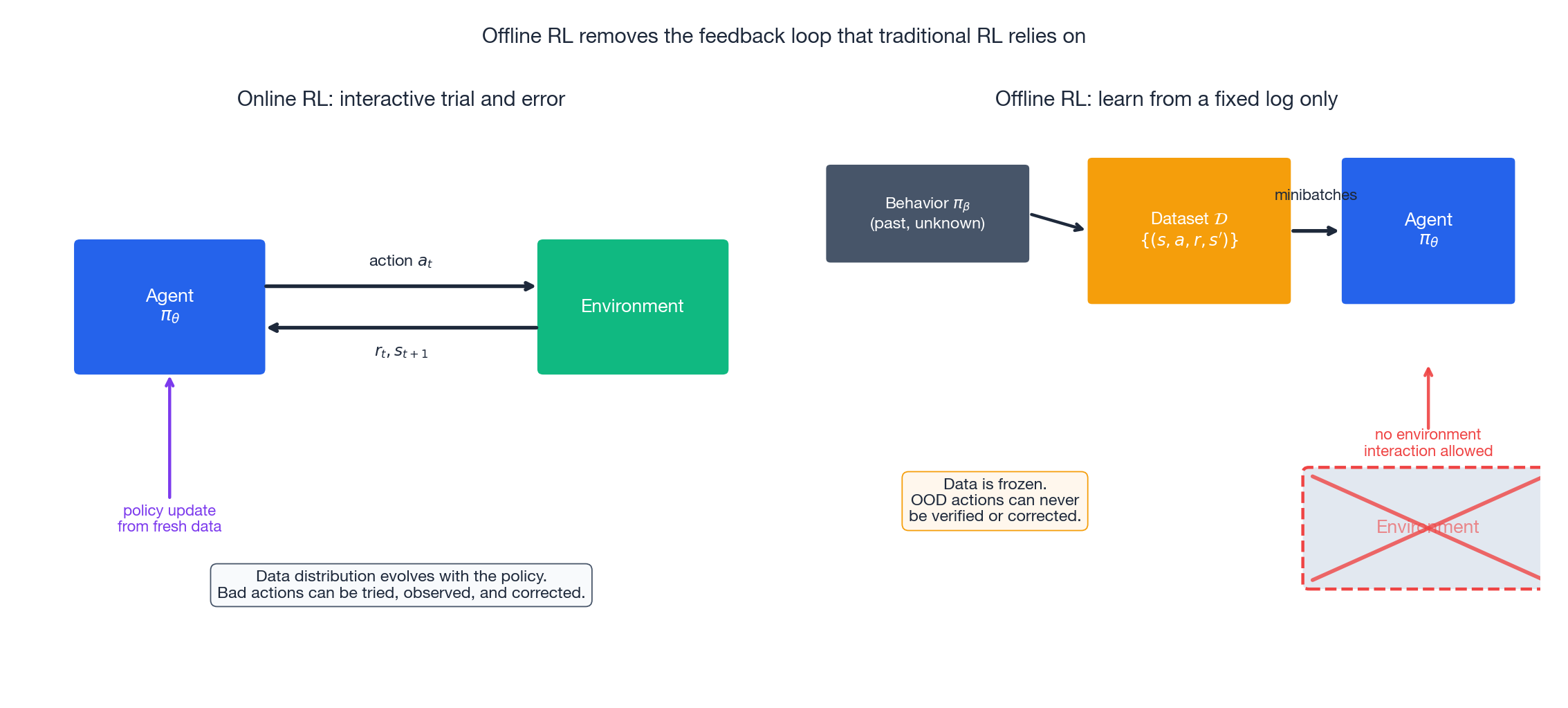

Every algorithm we’ve studied so far has the same core loop: act, observe, update. This loop makes RL work, but it also prevents RL from being deployed. A self-driving system can’t practice intersections by crashing. A clinical decision-support model can’t run a randomized policy on real patients. A factory robot can’t test ten thousand grasp variants on a production line.

These settings do have logs — millions of hours of human driving, decades of de-identified patient records, and terabytes of behavior cloning data. Offline RL (also called batch RL) is the subfield that asks: can we extract a strong policy from a fixed dataset without any new interaction with the environment?

The answer is “yes, but only if we are very careful.” The reason for this caveat is the central theme of this post: distributional shift between the behavior policy that generated the data and the learned policy that aims to improve on it.

What You Will Learn#

- Why naive off-policy RL fails offline: extrapolation error, value overestimation, and the death spiral.

- CQL (Conservative Q-Learning): a pessimistic regularizer that lower-bounds the true value.

- BCQ (Batch-Constrained Q-Learning): generative action proposals that stay inside the data manifold.

- IQL (Implicit Q-Learning): expectile regression that approximates $\max$ without ever querying OOD actions.

- Decision Transformer: reframing RL as conditional sequence modeling on returns-to-go.

- D4RL benchmark numbers so you know which algorithm to reach for first.

- A complete, runnable CQL implementation in PyTorch.

Prerequisites#

- Q-learning, target networks, and actor-critic (Parts 2-6).

- Comfort with the Bellman optimality operator and importance sampling.

- PyTorch and the Gym/Gymnasium API.

Why Offline RL Is Genuinely Hard#

In online RL the policy and the data distribution are coupled. As soon as the policy starts overestimating some action, the next rollout puts it under the microscope and the Bellman update corrects it. In offline RL the dataset is frozen. There is no second chance: any error the model makes about an unseen action stays in the Q-function forever, and the argmax operator will happily exploit it.

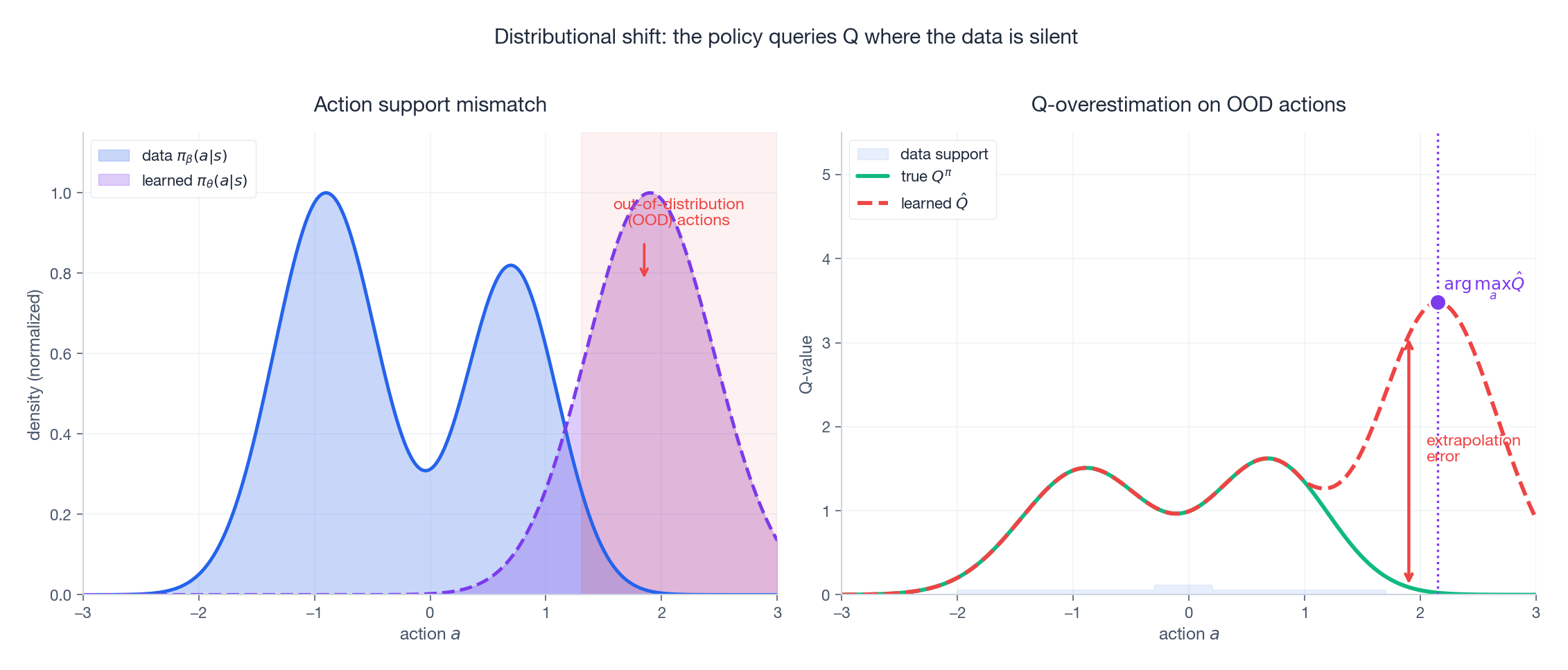

Distributional Shift#

Let $\pi_\beta$ be the behavior policy that generated the dataset $\mathcal{D}$ and $\pi_\theta$ the policy being learned. The state-action distributions they induce generally differ:

$$d_{\pi_\theta}(s,a)\neq d_{\pi_\beta}(s,a).$$

This becomes a problem the moment $\pi_\theta$ wants to take an action $a$ for which $\pi_\beta(a\mid s)\approx 0$. The Q-network has never seen a target for that action; whatever value it returns is pure extrapolation from a network trained to fit a different region of the input space.

Extrapolation Error and the Death Spiral#

The max operator selects the most optimistic extrapolation. If $Q$

overestimates even one out-of-distribution action by $\epsilon$

, that bias becomes the bootstrap target for the previous timestep, then for the timestep before that, and so on. Empirically this diverges within a few thousand gradient steps on standard benchmarks (Fujimoto et al., 2019

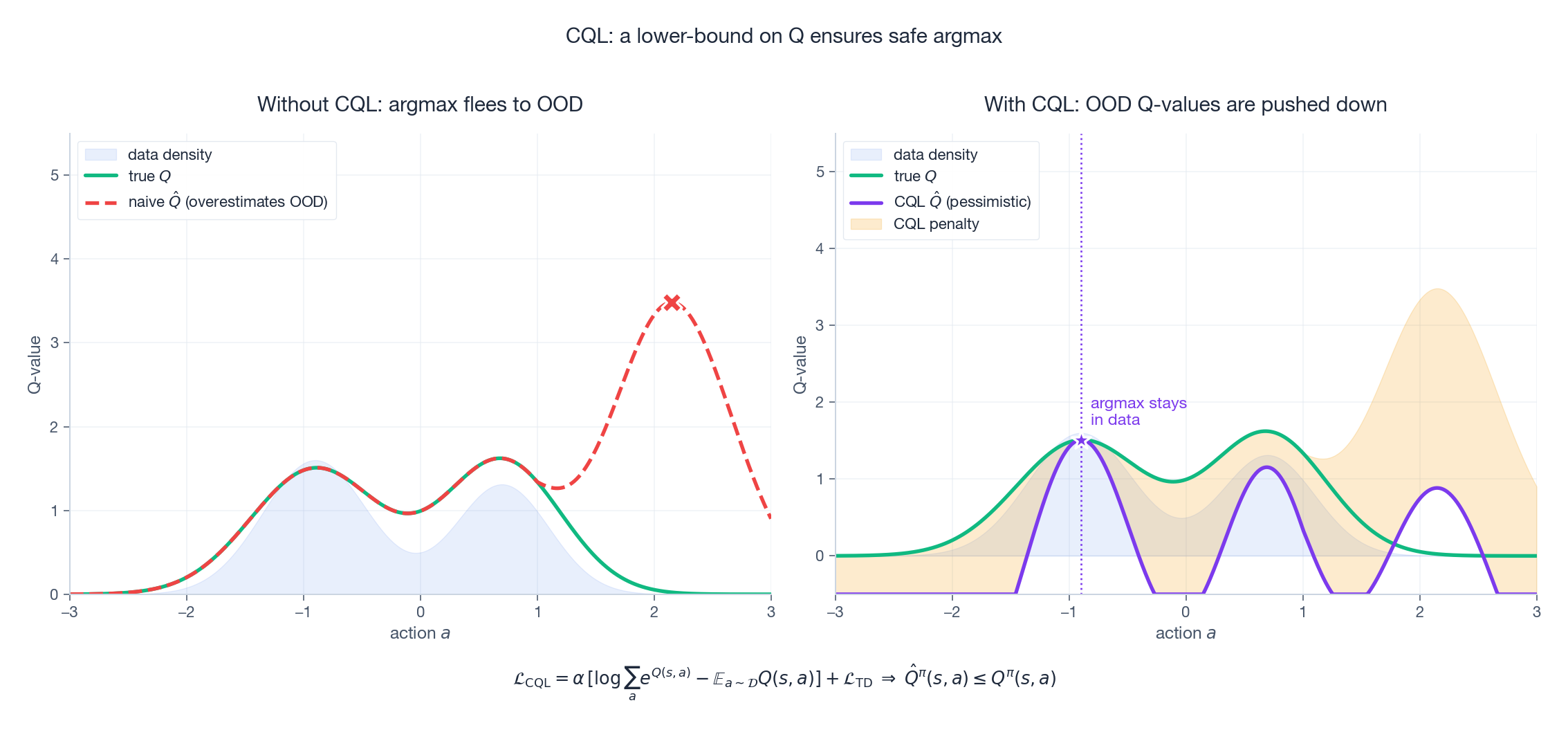

). The right panel of the figure above is the picture to keep in mind: the green curve is reality, the red curve is what the network believes, and the policy walks straight off the cliff at the OOD peak.
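To make the backward propagation concrete, here is a minimal tabular sketch. The chain MDP, its size, and the names `propagate_ood_error`, `eps`, and `in_sample` are illustrative assumptions, not taken from the cited paper. Every logged transition has reward 0, so the true value is 0 everywhere; a single never-observed action at the last state carries a spurious value `eps`, and the max backup copies it to every earlier state.

```python
import numpy as np

def propagate_ood_error(n_states=5, eps=1.0, gamma=0.9, sweeps=50, in_sample=False):
    """Tabular Q-iteration on a chain where only action 0 appears in the data.

    All logged rewards are 0, so the true Q is 0 everywhere. Action 1 is never
    in the dataset, so its entries are never updated; we plant a spurious
    value `eps` on it at the last state to mimic extrapolation error.
    """
    q = np.zeros((n_states, 2))
    q[-1, 1] = eps  # out-of-distribution action with an optimistic guess
    for _ in range(sweeps):
        for s in range(n_states - 1):
            # Logged transition: (s, a=0, r=0, s+1).
            if in_sample:
                target = q[s + 1, 0]      # only look at the logged action
            else:
                target = q[s + 1].max()   # max lets the OOD entry leak in
            q[s, 0] = 0.0 + gamma * target
    return q

q_max = propagate_ood_error(in_sample=False)
q_in = propagate_ood_error(in_sample=True)
print("max backup:      ", q_max[:, 0])
print("in-sample backup:", q_in[:, 0])
```

With the max backup, the value of the logged action at a state `k` steps before the corrupted one settles at `gamma**k * eps` even though no reward was ever observed; restricting the backup to logged actions keeps everything at zero.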

The four families of algorithms below all attack this problem; they differ in what they constrain.

| Family | Slogan | Constraint placed on |
|---|---|---|
| Policy-constraint (BCQ, BEAR, TD3+BC) | “Only act like the data” | $\pi_\theta$ |
| Value-pessimism (CQL, MOPO) | “Distrust unfamiliar Q-values” | $Q$ |
| In-sample learning (IQL, AWAC) | “Never query OOD actions” | the loss itself |
| Sequence modeling (Decision Transformer, Trajectory Transformer) | “Skip Bellman entirely” | the problem formulation |

Conservative Q-Learning (CQL)#

CQL (Kumar et al., 2020) is one of the most widely used baselines. It does not change the network, the actor, or the data pipeline. It adds one regularization term to the critic loss.

The Idea in One Sentence#

Push down the Q-values of any action the policy might consider, then push back up the Q-values of actions actually present in the data. The net effect is a Q-function that is pessimistic exactly where it lacks evidence.

The Objective#

$$\mathcal{L}_{\mathrm{CQL}} \;=\; \alpha\,\Big[\,\underbrace{\log\sum_{a}\exp Q(s,a)}_{\text{push down everywhere}} \;-\; \underbrace{\mathbb{E}_{a\sim\mathcal{D}}\big[Q(s,a)\big]}_{\text{push up on data}}\Big] \;+\; \mathcal{L}_{\mathrm{TD}}.$$

The `logsumexp` is a soft maximum over actions: minimizing it pulls all Q-values down. Subtracting the expectation under the data distribution lets the in-data actions float back up. Out-of-distribution actions only feel the downward force.
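The two forces are easiest to see in the gradient of the bracketed term. The sketch below assumes a small discrete action set so the `logsumexp` can be computed exactly; the function name `cql_penalty_and_grad` and the example numbers are made up for illustration.

```python
import numpy as np

def cql_penalty_and_grad(q_values, data_actions):
    """CQL regularizer for a batch of discrete-action Q-values.

    q_values:     (batch, n_actions) array of Q(s, a) for every action.
    data_actions: (batch,) integer actions that appear in the dataset.
    Returns the mean penalty and its gradient with respect to q_values.
    """
    batch = np.arange(len(data_actions))
    # Numerically stable logsumexp over the action axis.
    q_max = q_values.max(axis=1, keepdims=True)
    lse = q_max[:, 0] + np.log(np.exp(q_values - q_max).sum(axis=1))
    penalty = (lse - q_values[batch, data_actions]).mean()

    # d logsumexp / dQ is the softmax; the data term subtracts a one-hot.
    grad = np.exp(q_values - lse[:, None])
    grad[batch, data_actions] -= 1.0
    return penalty, grad / len(data_actions)

q = np.array([[1.0, 2.0, 5.0]])   # action 2 looks best but is not in the data
penalty, grad = cql_penalty_and_grad(q, np.array([1]))
print("penalty:", penalty)
print("gradient:", grad)
```

Under gradient descent, a positive gradient entry lowers that Q-value and a negative one raises it. The entry for the logged action is softmax minus one, so it is never positive; every other entry is a softmax probability, so it is never negative, and the largest push lands on the action with the highest Q-value.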

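A pessimistic estimate is exactly what we need. Kumar et al. show that, for a large enough $\alpha$, the learned Q-function lower-bounds the true value of the policy in expectation, so a policy that maximizes it has no incentive to chase actions whose apparent value is pure extrapolation.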

The left panel shows what happens with a vanilla SAC-style critic on offline data: the argmax flees to the OOD bump. The right panel shows CQL’s correction: the orange shaded region is the pessimism penalty, and the new argmax sits comfortably inside the data support.

Practical Notes#

- The `logsumexp` is intractable for continuous actions, so implementations approximate it with `n_random` uniform samples plus `n_actor` samples from the current policy. 10-20 samples is usually enough.

- The `logsumexp` form above is what the original paper calls “CQL($\mathcal{H}$)”. The paper also describes a Lagrangian version that auto-tunes $\alpha$ to hit a target gap; this is what the open-source `d3rlpy` and `JaxRL` implementations ship by default.

- Empirically CQL is robust on the `medium-replay` and `medium` D4RL splits but can be slightly conservative on `medium-expert`, where IQL or DT often win.
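For the first note, one way to approximate the continuous `logsumexp` is importance sampling. The sketch below covers only the uniform-sample half and assumes actions live in a box `[low, high]^d`; `sampled_logsumexp` and the quadratic stand-in for the critic are hypothetical names chosen so the result can be checked against a closed form. Samples from the current policy would be handled the same way, with the policy's log-probability in place of the uniform log-density.

```python
import math
import numpy as np

def sampled_logsumexp(q_fn, n_samples, action_dim, rng, low=-1.0, high=1.0):
    """Monte Carlo estimate of log(integral of exp(Q(a)) da) over [low, high]^d.

    Actions are drawn uniformly, so every sample has density (high - low)^-d
    and the importance-weighted estimate is log(mean_i exp(Q(a_i)) / density).
    """
    actions = rng.uniform(low, high, size=(n_samples, action_dim))
    log_density = -action_dim * math.log(high - low)
    z = q_fn(actions) - log_density
    z_max = z.max()  # subtract the max for numerical stability
    return z_max + math.log(np.exp(z - z_max).mean())

# Stand-in critic: Q(a) = -a^2 in one dimension, where the integral is known.
toy_q = lambda a: -(a ** 2).sum(axis=1)
exact = math.log(math.sqrt(math.pi) * math.erf(1.0))

rng = np.random.default_rng(0)
few = sampled_logsumexp(toy_q, 16, 1, rng)
many = sampled_logsumexp(toy_q, 200_000, 1, rng)
print("exact:", exact, "16 samples:", few, "200k samples:", many)
```

A handful of samples gives a noisy estimate, which is usually acceptable because the noise averages out over minibatches; the large-sample run is there only to confirm the estimator targets the right quantity.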

A Complete CQL Implementation#
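As a hedged starting point, here is a minimal sketch of the CQL update for discrete states and actions, written in NumPy with a tabular Q-function rather than a PyTorch critic. The class name `TabularCQL`, its hyperparameters, and the toy bandit at the bottom are assumptions made for illustration. A continuous-control agent would swap the table for networks and the exact `logsumexp` for sampled actions, but the loss has the same two pieces.

```python
import numpy as np

class TabularCQL:
    """Discrete-state, discrete-action CQL with a tabular Q-function."""

    def __init__(self, n_states, n_actions, alpha=1.0, gamma=0.99, lr=0.1, tau=0.05):
        self.q = np.zeros((n_states, n_actions))
        self.q_target = self.q.copy()
        self.alpha, self.gamma, self.lr, self.tau = alpha, gamma, lr, tau

    def update(self, s, a, r, s2, done):
        """One gradient step on alpha * CQL penalty + 0.5 * TD error^2."""
        # Bellman target from the slowly moving target table.
        target = r + self.gamma * (1.0 - done) * self.q_target[s2].max(axis=1)
        td_error = self.q[s, a] - target

        # Gradient of logsumexp_a Q(s, a) is softmax(Q(s, .)).
        q_s = self.q[s]
        soft = np.exp(q_s - q_s.max(axis=1, keepdims=True))
        soft /= soft.sum(axis=1, keepdims=True)

        grad = np.zeros_like(self.q)
        # np.add.at accumulates correctly when a state repeats in the batch.
        np.add.at(grad, s, self.alpha * soft)             # push down everywhere
        np.add.at(grad, (s, a), -self.alpha + td_error)   # push up on data + TD
        self.q -= self.lr * grad / len(s)

        # Polyak averaging of the target table.
        self.q_target += self.tau * (self.q - self.q_target)
        return float(np.mean(td_error ** 2))

    def act(self, s):
        return int(self.q[s].argmax())

# Toy check: one state, three actions, and action 2 never appears in the data.
s, a = np.array([0, 0]), np.array([0, 1])
r, s2, done = np.array([1.0, 0.5]), np.array([0, 0]), np.array([1.0, 1.0])

def train(alpha, steps=2000):
    agent = TabularCQL(n_states=1, n_actions=3, alpha=alpha)
    agent.q[0, 2] = 5.0  # planted extrapolation error on the unseen action
    for _ in range(steps):
        agent.update(s, a, r, s2, done)
    return agent

print("alpha=0 picks action", train(0.0).act(0))
print("alpha=1 picks action", train(1.0).act(0))
```

The `update` signature here takes arrays of `(s, a, r, s2, done)`; a deep version would take the same batch as tensors. With `alpha=0` the planted value on the unseen action is never touched and the greedy policy follows it, while with `alpha=1` the penalty drives it below the logged actions.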


Plug an offline replay buffer (D4RL via `minari` or `d4rl-pybullet`) into `update`, train for ~1M gradient steps, and you should hit roughly the published numbers in the benchmark figure further down.

BCQ: Generative Action Proposals#

CQL constrains the value; BCQ (Fujimoto et al., 2019) constrains the policy directly. The principle is mechanical: never even query the Q-function on an action that the behavior policy would not have produced.

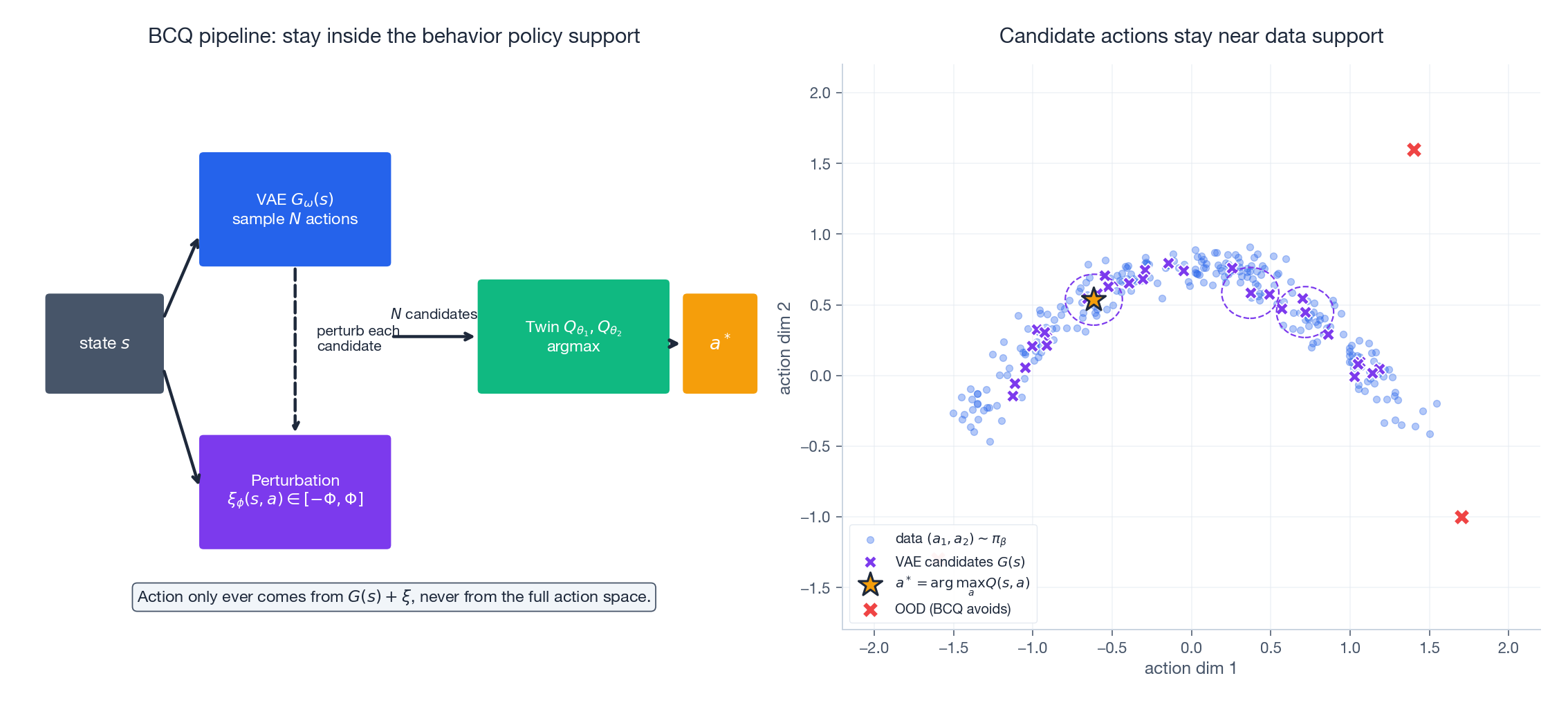

The architecture has three pieces:

- A conditional VAE $G_\omega(s)$ trained on $\mathcal{D}$ via the standard ELBO. It models $\pi_\beta(a\mid s)$, so samples drawn from it stay on the behavior manifold.

- A perturbation network $\xi_\phi(s,a)\in[-\Phi,\Phi]^{\dim(a)}$ that adds a small, bounded refinement to each VAE sample. With $\Phi$ small (typically 0.05), the refined action cannot drift far from the data; with $\Phi=0$ BCQ simply picks the highest-scoring VAE sample, which is close to filtered imitation.

- Twin Q-networks that score the $N$ refined candidates; the argmax is the action that gets executed.

The clever part is that the perturbation network is trained to maximize Q. So BCQ gets the benefit of policy improvement (it is not pure imitation) without the cost of OOD extrapolation (it cannot stray far from the data). The price is a more elaborate pipeline — four networks instead of two — and the VAE itself must be trained well, otherwise the candidates are biased.
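The action-selection path through these three pieces can be sketched as follows. The callables `generator`, `perturb`, `q1`, and `q2` are hypothetical stand-ins for the trained VAE decoder, perturbation network, and twin critics, and scoring candidates with the minimum of the two critics is an assumption of this sketch rather than a detail confirmed by the text above.

```python
import numpy as np

def bcq_select_action(state, generator, perturb, q1, q2, n_candidates=10,
                      phi=0.05, max_action=1.0, rng=None):
    """Sample candidates near the data, refine them slightly, return the best.

    generator(state, n, rng) -> (n, action_dim) samples near the behavior policy
    perturb(state, actions)  -> (n, action_dim) unbounded refinement
    q1, q2(state, actions)   -> (n,) critic scores
    """
    if rng is None:
        rng = np.random.default_rng()
    candidates = generator(state, n_candidates, rng)
    # tanh bounds the refinement to [-phi * max_action, phi * max_action].
    delta = phi * max_action * np.tanh(perturb(state, candidates))
    refined = np.clip(candidates + delta, -max_action, max_action)
    # Assumption: take the more pessimistic of the two critics per candidate.
    scores = np.minimum(q1(state, refined), q2(state, refined))
    return refined[int(scores.argmax())], candidates

# Stand-ins: data actions cluster around 0.3, critics prefer larger actions,
# and the perturbation network pushes as hard as it can in that direction.
gen = lambda s, n, rng: rng.normal(0.3, 0.05, size=(n, 1))
push_up = lambda s, acts: np.full_like(acts, 10.0)
critic_a = lambda s, acts: acts[:, 0]
critic_b = lambda s, acts: acts[:, 0] + 0.1

action, candidates = bcq_select_action(
    None, gen, push_up, critic_a, critic_b, rng=np.random.default_rng(0))
print("chosen:", action, "largest candidate:", candidates.max())
```

Even though both critics would prefer an action far above anything in the data, the chosen action sits within `phi` of a generated candidate, which is the whole point of the bounded perturbation.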

IQL: Avoid the Bootstrap Problem Entirely#

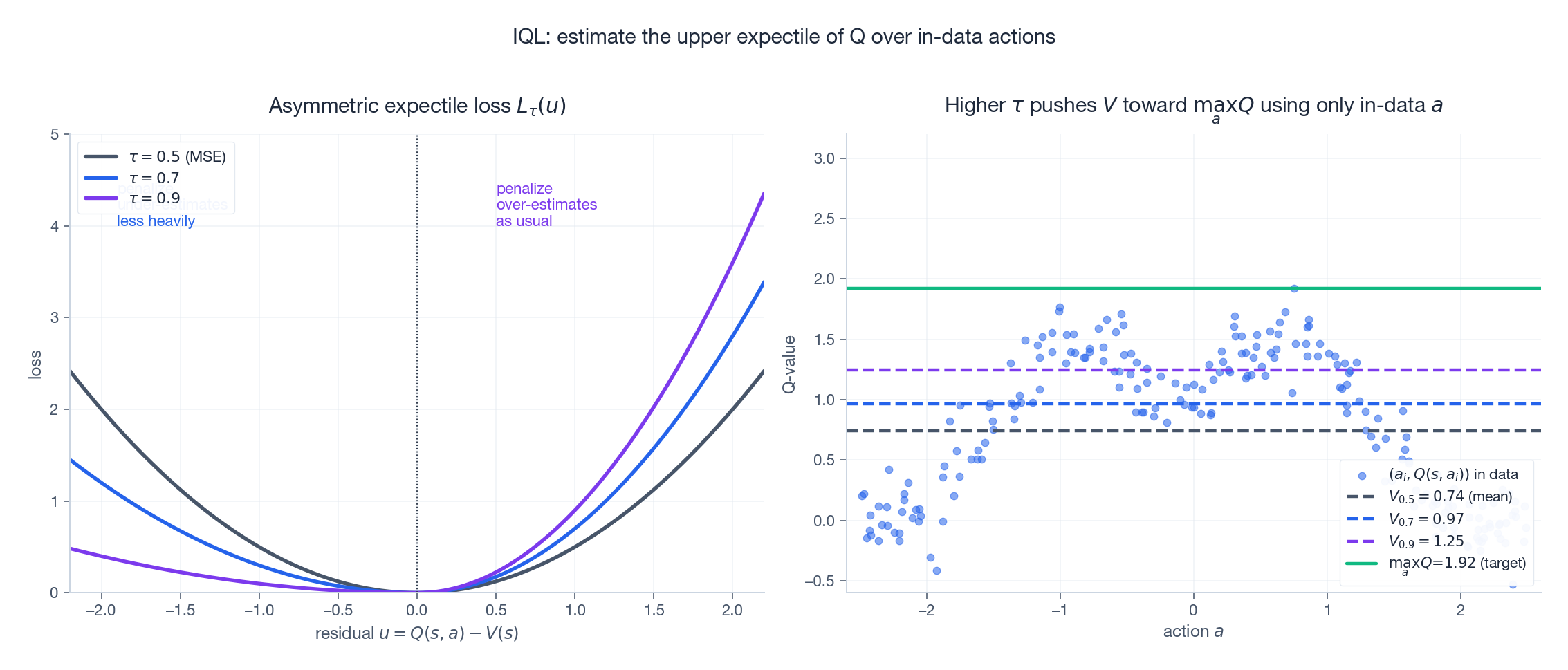

IQL (Kostrikov et al., 2022) is the most elegant of the three. Its observation: the pathologies above enter through the $\max_{a'}Q(s',a')$ in the Bellman target, because that is where OOD actions are queried. So… just don’t use it.

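Instead, IQL learns a separate state-value function $V(s)$ by expectile regression toward $Q(s,a)$, using only the $(s,a)$ pairs in the dataset:

$$\mathcal{L}_V \;=\; \mathbb{E}_{(s,a)\sim\mathcal{D}}\big[L_2^{\tau}\big(Q(s,a)-V(s)\big)\big],\qquad L_2^{\tau}(u)=\big|\tau-\mathbb{1}(u<0)\big|\,u^{2}.$$

When $\tau=0.5$ this is plain MSE and $V$ learns the mean. When $\tau\to 1$ it asymmetrically penalizes under-estimates much more than over-estimates, so $V$ converges to the upper expectile of $Q(s,a)$ over the actions seen in the data. The Q-function is then trained toward the target $r+\gamma V(s')$, which involves no maximization over actions, and the policy is extracted afterwards by advantage-weighted regression on dataset actions.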
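A small numerical sketch shows how $\tau$ moves $V$ from the mean toward the in-data maximum. The four Q-values and the helper names `expectile_loss` and `fit_expectile` are made up for illustration; a real implementation would minimize the same loss by gradient descent on a value network rather than by the fixed-point iteration used here.

```python
import numpy as np

def expectile_loss(u, tau):
    """L_2^tau(u) = |tau - 1(u < 0)| * u^2, averaged over the batch."""
    weight = np.where(u < 0, 1.0 - tau, tau)
    return float(np.mean(weight * u ** 2))

def fit_expectile(q_samples, tau, iters=200):
    """Minimize the expectile loss over a scalar V by fixed-point iteration.

    Setting the derivative to zero gives V = sum(w_i q_i) / sum(w_i), where
    w_i = tau if q_i > V else 1 - tau, so we alternate between the two.
    """
    v = float(np.mean(q_samples))
    for _ in range(iters):
        w = np.where(q_samples > v, tau, 1.0 - tau)
        v = float(np.sum(w * q_samples) / np.sum(w))
    return v

q_in_data = np.array([0.0, 1.0, 2.0, 10.0])  # Q(s, a) for actions logged at s
fits = {tau: fit_expectile(q_in_data, tau) for tau in (0.5, 0.7, 0.9, 0.99)}
for tau, v in fits.items():
    print(f"tau={tau}: V={v:.3f}")
```

The fitted value starts at the mean for `tau=0.5` and climbs toward the largest logged Q-value as `tau` approaches 1, without ever evaluating an action outside the list.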

IQL has few moving parts compared with most modern offline-RL algorithms, and it is among the strongest published methods on AntMaze and Adroit, environments where good data is sparse and bootstrapping with $\max$ is most dangerous.

Decision Transformer: RL as Sequence Modeling#

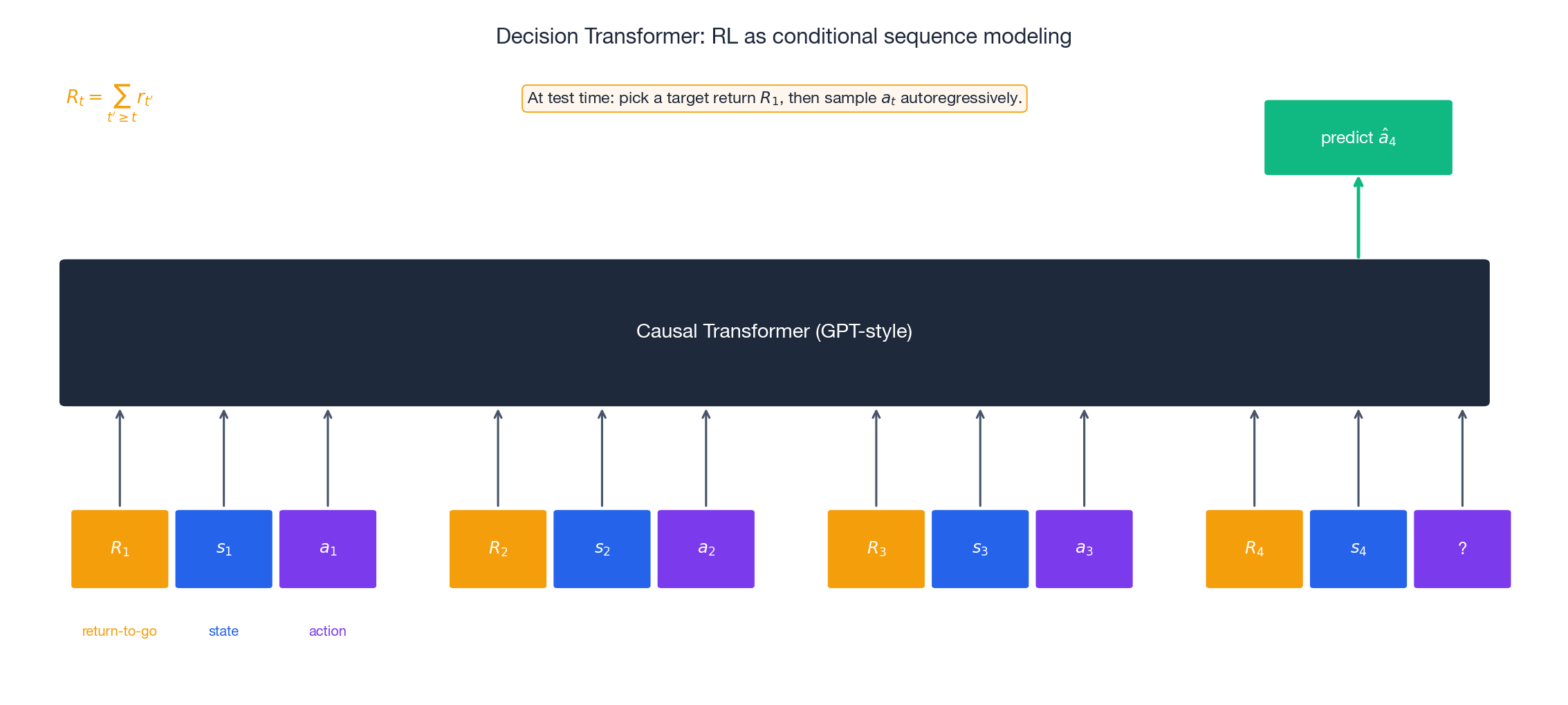

If we are going to drop the Bellman equation, why stop at IQL? The Decision Transformer (Chen et al., 2021) drops the value function entirely and treats RL as next-token prediction.

A trajectory is laid out as a sequence of triplets $(\hat{R}_1, s_1, a_1, \hat{R}_2, s_2, a_2, \ldots)$, where $\hat{R}_t = \sum_{t'\geq t} r_{t'}$ is the return-to-go from timestep $t$. A standard causal (GPT-style) Transformer is trained to predict $a_t$ given everything before it, with cross-entropy for discrete actions or MSE for continuous ones.

At test time you feed in your desired return-to-go as $\hat{R}_1$, run the model autoregressively, and out come the actions. The return-to-go acts as a knob: ask for more, get a more aggressive policy.
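The data preparation this implies is short enough to sketch. The helper names `returns_to_go`, `interleave`, and `next_rtg`, along with the string placeholders for states and actions, are illustrative assumptions; a real pipeline would embed each token type separately before feeding the Transformer.

```python
import numpy as np

def returns_to_go(rewards):
    """R_hat_t = sum of rewards from step t to the end (undiscounted)."""
    return np.cumsum(rewards[::-1])[::-1]

def interleave(rtg, states, actions):
    """Lay a trajectory out as (R_hat_1, s_1, a_1, R_hat_2, s_2, a_2, ...)."""
    tokens = []
    for r, s, a in zip(rtg, states, actions):
        tokens += [("rtg", float(r)), ("state", s), ("action", a)]
    return tokens

def next_rtg(current_rtg, reward):
    """At test time, subtract each observed reward from the remaining target."""
    return current_rtg - reward

rewards = np.array([1.0, 0.0, 2.0])
rtg = returns_to_go(rewards)
tokens = interleave(rtg, ["s1", "s2", "s3"], ["a1", "a2", "a3"])
print(rtg)
print(tokens[:3])
```

During training the returns-to-go come from the logged rewards, as above. At test time the first value is whatever return you ask for, and `next_rtg` keeps the conditioning consistent as rewards arrive.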

What this buys you:

- No bootstrapping, so no extrapolation error.

- Long context lets the model condition on partial-observation history “for free.”

- Works out of the box with all the Transformer engineering (LayerNorm, RoPE, FlashAttention, mixed precision…).

- One paradigm scales from D4RL to Atari to large multi-task setups (e.g. Gato).

What it costs:

- Cannot reliably exceed the best return seen in the data: if no trajectory in $\mathcal{D}$ ever scored 90, asking for 90 conditions the model on a return it has never seen, and the output is unreliable.

- No causal credit assignment — the model is trained to imitate trajectories conditioned on outcome, not to reason about which action caused the outcome.

- Generally requires more parameters and more data than CQL/IQL to reach the same score on small benchmarks.

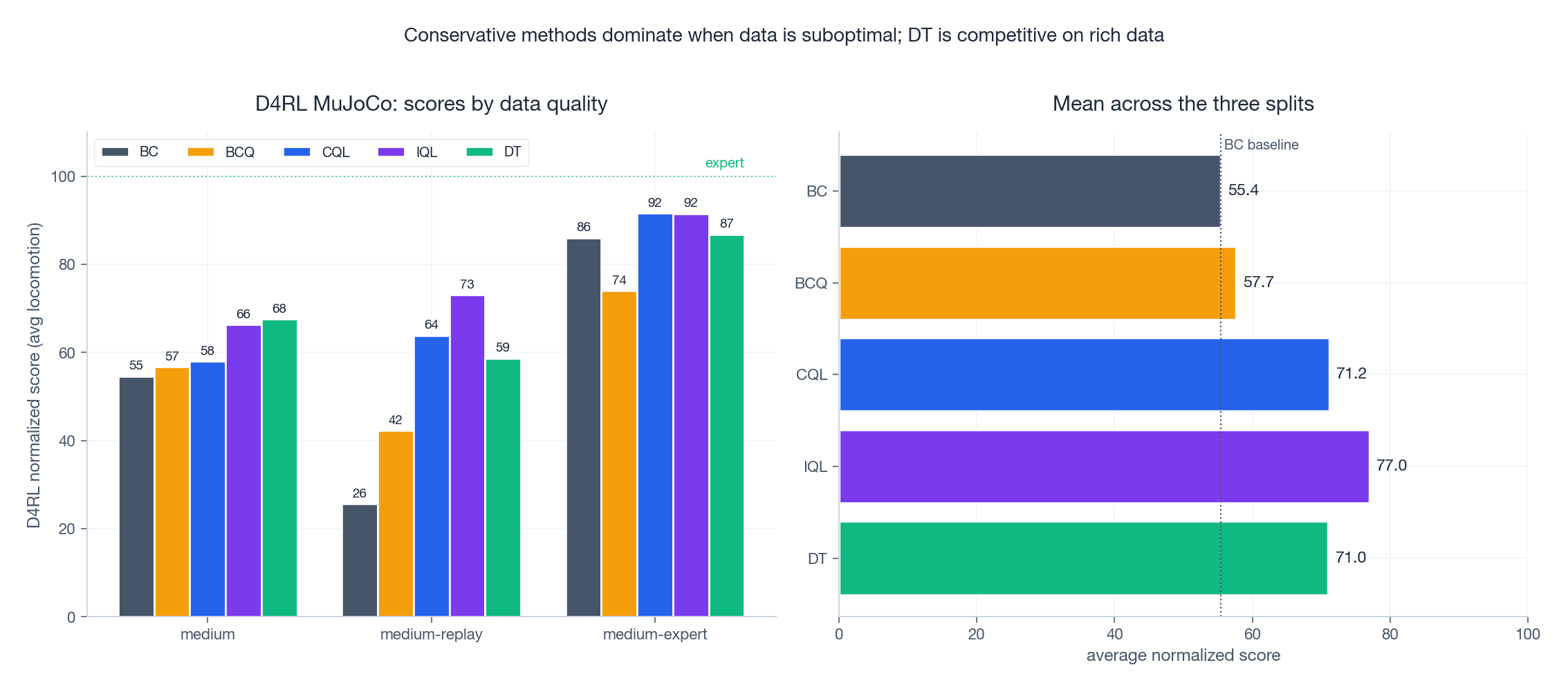

Putting Numbers to It: D4RL#

The figure compiles representative normalized scores from the original CQL (Kumar et al., 2020), IQL (Kostrikov et al., 2022) and DT (Chen et al., 2021) papers on the canonical D4RL MuJoCo locomotion suite (hopper, halfcheetah, walker2d averaged). 100 = expert performance, 0 = random.
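The normalization behind that 0-to-100 scale is a linear rescaling between a random policy's return and an expert's. The function name and the reference returns below are placeholders for illustration, not actual D4RL reference values.

```python
def normalized_score(raw_return, random_return, expert_return):
    """Rescale a raw episode return so that random = 0 and expert = 100."""
    return 100.0 * (raw_return - random_return) / (expert_return - random_return)

# Placeholder reference returns, chosen only to make the arithmetic obvious.
RANDOM_RETURN, EXPERT_RETURN = -20.0, 3180.0

for raw in (-20.0, 1580.0, 3180.0, 3500.0):
    print(raw, "->", normalized_score(raw, RANDOM_RETURN, EXPERT_RETURN))
```

Scores above 100 are possible when a learned policy beats the reference expert, and scores below 0 mean it does worse than random.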

Three takeaways:

- `medium-replay` is where conservatism matters most. This split is the replay buffer of a SAC agent trained up to medium performance, so it contains lots of bad actions and lots of recovery transitions. CQL and IQL roughly double BC’s score; DT trails because it has no value bootstrapping to stitch together good sub-trajectories.

- `medium-expert` is where DT shines. When the data already contains near-optimal trajectories, sequence modeling wins on simplicity.

- IQL is the most consistent. It is rarely the absolute best on any single dataset, but it is rarely worse than third, and its training is the most stable of the four.

When in doubt, start with IQL for stability or CQL for simplicity, and reach for DT when the dataset already contains near-expert demonstrations.

FAQ#

Q: When does offline RL fail outright? Three failure modes are well-documented: (i) narrow data coverage: only expert trajectories with no recovery examples, so the policy cannot learn to handle its own mistakes; (ii) very low data quality, such as random-policy data on long-horizon tasks; (iii) evaluation-environment shift, where the test MDP’s transition dynamics differ from those that produced $\mathcal{D}$.

Q: How do I tune CQL’s $\alpha$? As rough starting points: $\alpha=0.5$ to $1.0$ for expert data, $1.0$ to $5.0$ for medium data, and $5.0$ to $10.0$ for random or replay data. Better: use the Lagrangian variant from the original paper, which auto-tunes $\alpha$ to keep the gap $\mathbb{E}_{a\sim\pi}Q - \mathbb{E}_{a\sim\mathcal{D}}Q$ near a target threshold (typically 5-10).
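The auto-tuning amounts to dual gradient ascent on $\alpha$: raise it while the gap is above the threshold, lower it otherwise. The sketch below parameterizes $\alpha$ through its logarithm to keep it positive; `update_log_alpha`, the learning rate, and especially the toy `gap_given_alpha` response are assumptions for illustration, since in a real agent the gap comes from the critic being trained alongside.

```python
import math

def update_log_alpha(log_alpha, gap, target_gap, lr=0.01):
    """One ascent step on alpha * (gap - target_gap), with alpha = exp(log_alpha)."""
    alpha = math.exp(log_alpha)
    # d/d(log_alpha) of alpha * (gap - target_gap) is alpha * (gap - target_gap).
    return log_alpha + lr * alpha * (gap - target_gap)

def gap_given_alpha(alpha):
    """Made-up stand-in: a stronger penalty shrinks the policy-vs-data Q gap."""
    return 20.0 / (1.0 + alpha)

log_alpha, target_gap = 0.0, 5.0
for _ in range(2000):
    gap = gap_given_alpha(math.exp(log_alpha))
    log_alpha = update_log_alpha(log_alpha, gap, target_gap)

alpha = math.exp(log_alpha)
print("alpha:", alpha, "gap:", gap_given_alpha(alpha))
```

In this toy setup the loop settles where the gap equals the target. The threshold is still a hyperparameter, but it is expressed in units of Q-value and is often easier to reason about than $\alpha$ itself.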

Q: Offline RL vs imitation learning: what’s actually different? Behavior cloning (BC) ignores rewards and, at best, matches the demonstrator. Offline RL uses the reward signal and can stitch good segments from different trajectories, which can yield a policy better than any single trajectory in $\mathcal{D}$. The classic stitching example: trajectories A reach state $s$ via a clumsy route then act well, while trajectories B reach $s$ efficiently then act poorly. Offline RL can produce “B’s beginning + A’s end” while BC cannot.
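The stitching example can be checked on a five-transition dataset. Everything below is a made-up toy: the state and action names, the rewards, and the helper `in_sample_q_iteration`, which runs Q-iteration with the max restricted to actions that were actually logged at the next state.

```python
def in_sample_q_iteration(transitions, gamma=1.0, sweeps=20):
    """Q-iteration whose max only ranges over actions logged at the next state."""
    q = {(s, a): 0.0 for s, a, _, _, _ in transitions}
    logged = {}
    for s, a, _, _, _ in transitions:
        logged.setdefault(s, set()).add(a)
    for _ in range(sweeps):
        for s, a, r, s2, done in transitions:
            nxt = 0.0 if done else max(q[(s2, a2)] for a2 in logged[s2])
            q[(s, a)] = r + gamma * nxt
    return q, logged

# Trajectory A: clumsy route to "s", then the good action. Return = 8.
traj_a = [("start", "slow", -1, "detour", False),
          ("detour", "go", -1, "s", False),
          ("s", "good", 10, "end", True)]
# Trajectory B: efficient route to "s", then the bad action. Return = -1.
traj_b = [("start", "fast", -1, "s", False),
          ("s", "bad", 0, "end", True)]

q, logged = in_sample_q_iteration(traj_a + traj_b)
greedy = {s: max(acts, key=lambda a: q[(s, a)]) for s, acts in logged.items()}
print(greedy)
print("stitched return from start:", q[("start", greedy["start"])])
```

The greedy policy takes B's fast route and then A's good action, for a return higher than either logged trajectory. Behavior cloning on the same data has no reward signal to tell it which half of each trajectory to keep.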

Q: Should I use a model-based offline method instead? For high-dimensional continuous control, MOPO/MOReL/COMBO are competitive but add a learned dynamics model with its own pessimism term. They shine when the dataset is small (because the model adds inductive bias) but are heavier to engineer. As a default, model-free CQL/IQL are still the right starting point in 2025.

Q: What about offline-to-online fine-tuning? This is where IQL pulled ahead in the last two years. Methods like AWAC and Cal-QL initialize from an offline-trained agent and continue training online with a small amount of fresh data, while RLPD skips pretraining and mixes the offline data into online training. Plain CQL initialization tends to be over-conservative for fine-tuning, which is the problem Cal-QL was designed to address; IQL or AWAC initializations are usually preferred.

References#

- Levine, Kumar, Tucker, Fu. Offline Reinforcement Learning: Tutorial, Review, and Perspectives on Open Problems. 2020.

- Fujimoto, Meger, Precup. Off-Policy Deep Reinforcement Learning without Exploration (BCQ). ICML 2019.

- Kumar, Zhou, Tucker, Levine. Conservative Q-Learning for Offline Reinforcement Learning (CQL). NeurIPS 2020.

- Kostrikov, Nair, Levine. Offline Reinforcement Learning with Implicit Q-Learning (IQL). ICLR 2022.

- Chen, Lu, Rajeswaran, Lee, Grover, Laskin, Abbeel, Srinivas, Mordatch. Decision Transformer: Reinforcement Learning via Sequence Modeling. NeurIPS 2021.

- Fu, Kumar, Nachum, Tucker, Levine. D4RL: Datasets for Deep Data-Driven RL. 2020.
