Reinforcement Learning (11): Hierarchical RL and Meta-Learning

A deep dive into hierarchical RL (Options, MAXQ, Feudal Networks, goal-conditioned policies) and meta-RL (MAML, FOMAML, RL^2). Covers temporal abstraction, semi-MDPs, manager-worker architectures, second-order meta-gradients and recurrent meta-learners, with annotated PyTorch implementations.

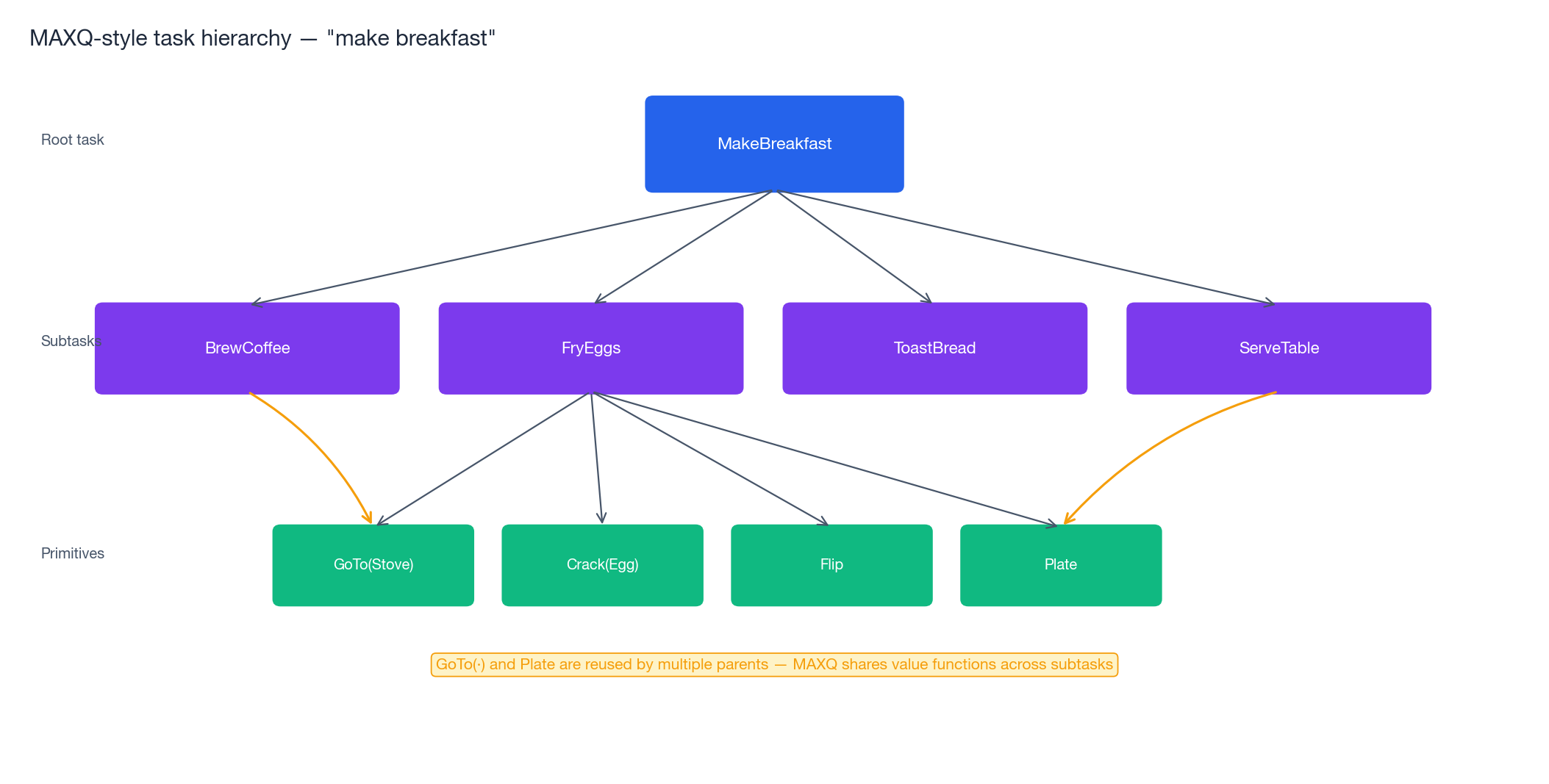

Standard RL treats every problem as a flat sequence of atomic decisions: observe state, pick an action, receive a reward, repeat. That works when the horizon is short and rewards are dense, but it breaks down on the kind of tasks humans solve effortlessly. “Make breakfast” is not one decision; it is a tree of subtasks — brew coffee, fry eggs, toast bread, plate it up — each of which is itself a small policy. Hierarchical RL (HRL) lets agents reason and act at multiple timescales by treating macro-actions as first-class citizens.

A second weakness of standard RL is that every new task is learned from scratch. A bicycle rider becomes a motorcycle rider in an afternoon, not in 10 million environment steps. Meta-RL attacks this by training across a distribution of tasks so that adaptation to a held-out task takes a handful of episodes — or even a single forward pass through a recurrent network.

This post unifies the two ideas: hierarchy buys temporal abstraction, meta-learning buys task abstraction. Both reduce the effective dimensionality of the learning problem. FuN and HIRO push the first idea to deep networks, MAML and RL$^2$ push the second, and goal-conditioned policies bridge the two.

What You Will Learn

- Options framework — semi-Markov decision processes and intra-option Q-learning

- MAXQ — value-function decomposition along a task hierarchy

- Feudal RL (FuN, HIRO) — continuous subgoals with manager-worker architectures

- Goal-conditioned RL — universal value functions and HER

- MAML / FOMAML — learning an initialisation that adapts in a few gradient steps

- RL$^2$ — folding the inner RL algorithm into an RNN’s hidden state

- Working code — intra-option Q-learning in Four Rooms and a MAML policy gradient on 2-D navigation

Prerequisites

- Q-learning, policy gradients and value functions (Parts 1–6)

- Comfort with RNN unrolling and second-order autodiff

- PyTorch

Hierarchy: the Options framework

Why temporal abstraction matters

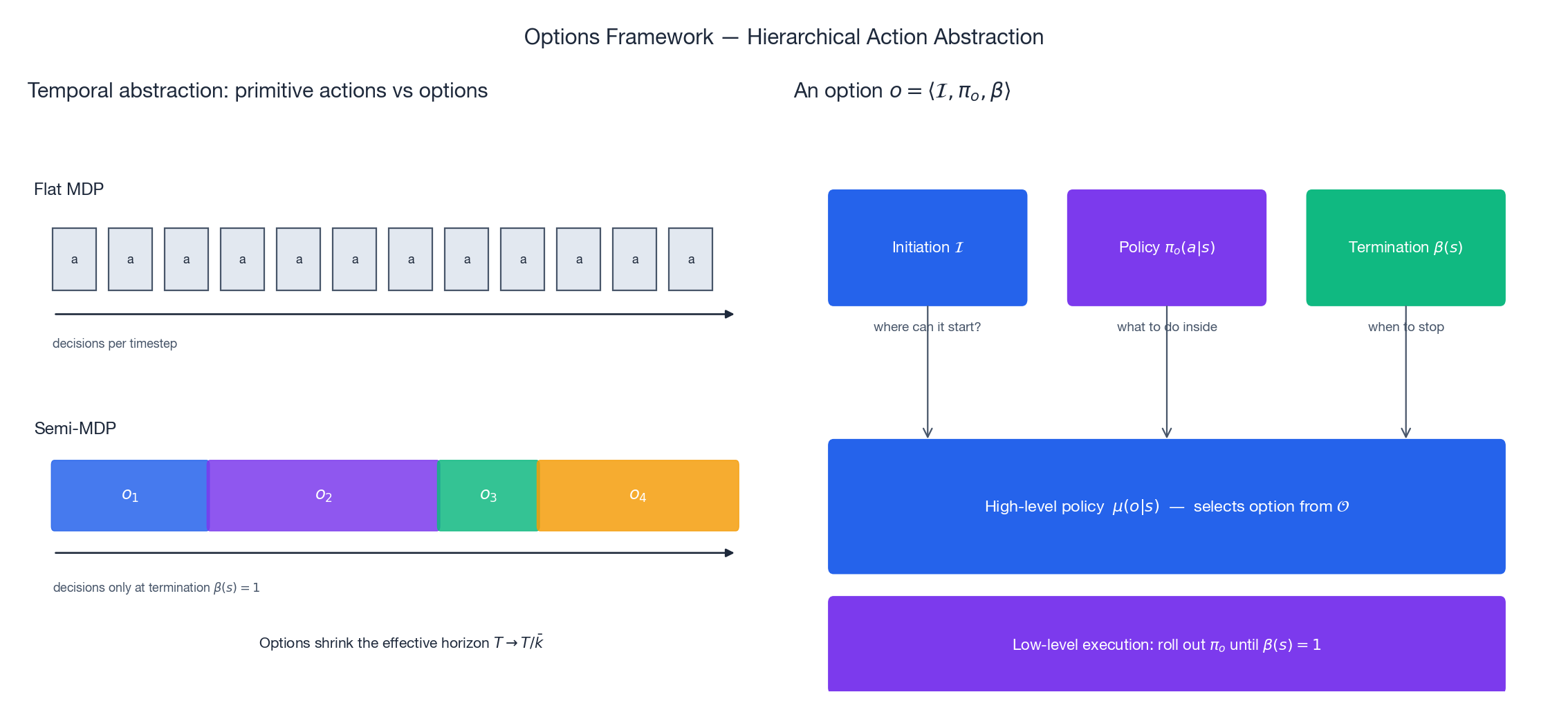

A flat policy makes one decision per environment step, so on a horizon-$T$ task its credit-assignment path has length $T$ and its exploration tree has $|\mathcal{A}|^T$ leaves: the first grows linearly with $T$, the second exponentially. A hierarchy with average macro-action length $\bar k$ shrinks the decision count to $T/\bar k$ and the exploration tree to $|\mathcal{O}|^{T/\bar k}$, where $\mathcal{O}$ is a (typically small) set of options. The figure above contrasts the two views.
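A back-of-the-envelope calculation makes the exponents concrete. The numbers below ($|\mathcal{A}| = 4$ primitive actions, $T = 100$, $|\mathcal{O}| = 8$ options of average length $\bar k = 10$) are illustrative assumptions rather than values from a benchmark, and the sketch compares base-10 logarithms because the raw leaf counts are astronomically large.

```python
import math

def log10_tree_size(branching: int, depth: float) -> float:
    """log10 of branching**depth, i.e. the number of leaves in an exploration tree."""
    return depth * math.log10(branching)

# Illustrative assumptions: 4 primitive actions and horizon 100,
# versus 8 options that each last 10 steps on average.
num_actions, horizon = 4, 100
num_options, mean_option_len = 8, 10

flat = log10_tree_size(num_actions, horizon)                    # |A|^T
hier = log10_tree_size(num_options, horizon / mean_option_len)  # |O|^(T / k)

print(f"flat: {horizon} decisions, leaves ~ 10^{flat:.1f}")
print(f"hierarchical: {horizon // mean_option_len} decisions, leaves ~ 10^{hier:.1f}")
```

With these numbers the flat tree has roughly $10^{60}$ leaves and the hierarchical one roughly $10^{9}$, even though there are twice as many options as primitive actions: what matters is the shrunken exponent.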

Beyond the asymptotic argument, hierarchies offer three practical benefits:

- Modularity — options trained on one task transfer to others (e.g., go-to-door generalizes across navigation problems).

- Interpretability — the high-level policy is small enough to inspect, and decisions are made at semantically meaningful checkpoints.

- Reward Shaping — subgoals provide dense intrinsic rewards even when extrinsic rewards are sparse.

The option triple

An option is a triple

$$o = \langle \mathcal{I}, \pi_o, \beta \rangle,$$

where $\mathcal{I} \subseteq \mathcal{S}$ is the set of states from which the option may be initiated, $\pi_o(a \mid s)$ is the option’s internal policy and $\beta(s) \in [0, 1]$ is its termination probability. Once options are introduced, decision making at the option level becomes a semi-MDP: the high-level policy $\mu(o \mid s)$ chooses an option, the option runs until $\beta$ fires, and only then does the next high-level decision happen.
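A minimal sketch of the option triple and of semi-MDP execution, assuming a deterministic internal policy and an environment exposed as a plain `step(s, a) -> (s_next, reward)` function. The corridor environment and the `go_right` option are hypothetical, chosen only to keep the example self-contained.

```python
import random
from dataclasses import dataclass
from typing import Callable, Set, Tuple

State, Action = int, int

@dataclass
class Option:
    initiation: Set[State]                 # I: states where the option may start
    policy: Callable[[State], Action]      # pi_o: a deterministic internal policy here
    termination: Callable[[State], float]  # beta(s) in [0, 1]

def run_option(option: Option, s: State,
               step: Callable[[State, Action], Tuple[State, float]],
               gamma: float, rng: random.Random, max_steps: int = 1000):
    """Execute one option until beta fires; return (s', discounted reward, duration k)."""
    assert s in option.initiation, "option not available in this state"
    total, discount, k = 0.0, 1.0, 0
    while k < max_steps:
        a = option.policy(s)
        s, r = step(s, a)
        total += discount * r
        discount *= gamma
        k += 1
        if rng.random() < option.termination(s):   # beta fires with probability beta(s)
            break
    return s, total, k

# Hypothetical 1-D corridor with states 0..9; reward 1 on reaching state 9.
def corridor_step(s: State, a: Action) -> Tuple[State, float]:
    s_next = min(max(s + a, 0), 9)
    return s_next, (1.0 if s_next == 9 else 0.0)

go_right = Option(initiation=set(range(9)),
                  policy=lambda s: +1,
                  termination=lambda s: 1.0 if s == 9 else 0.0)

s_end, reward, k = run_option(go_right, 2, corridor_step, gamma=0.9, rng=random.Random(0))
```

The returned duration `k` is what makes the option level a semi-MDP: an SMDP Q-learning backup on `(s, o)` would discount its bootstrap term by $\gamma^k$ rather than $\gamma$.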

Intra-option Q-learning

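After every primitive transition $(s, a, r, s')$, intra-option Q-learning updates every option $o$ whose internal policy would also have chosen $a$ in $s$, not just the option currently in control:

$$Q(s, o) \leftarrow Q(s, o) + \alpha \big[r + \gamma U(s', o) - Q(s, o)\big],$$

$$U(s', o) = (1 - \beta(s'))\, Q(s', o) + \beta(s')\, \max_{o'} Q(s', o').$$

The continuation value $U$ is the key piece: if the option keeps going we keep its Q-value, otherwise we hand control back to the high-level policy and take the best alternative.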
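A tabular sketch of the update, assuming deterministic option policies (so "compatible" means the option would have picked the same action), every option being available in every state, and a `defaultdict` Q-table keyed by `(state, option)`. The hallway example and the option names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Hashable, List

def intra_option_update(Q: Dict, options: Dict[str, dict], s: Hashable, a: Hashable,
                        r: float, s_next: Hashable, alpha: float, gamma: float) -> List[str]:
    """Apply the intra-option Q-learning update to every option consistent with (s, a).

    Each option is a dict with a deterministic 'policy' (s -> a) and 'beta' (s -> [0, 1]).
    Returns the names of the options that were updated.
    """
    best_next = max(Q[(s_next, o)] for o in options)     # max_{o'} Q(s', o')
    updated = []
    for name, opt in options.items():
        if opt["policy"](s) != a:                         # option would not have chosen a
            continue
        beta = opt["beta"](s_next)
        U = (1.0 - beta) * Q[(s_next, name)] + beta * best_next   # continuation value
        Q[(s, name)] += alpha * (r + gamma * U - Q[(s, name)])
        updated.append(name)
    return updated

# Hypothetical example: two options move right, one moves left.
Q = defaultdict(float)
options = {
    "to_door": {"policy": lambda s: "R", "beta": lambda s: 1.0 if s == "door" else 0.0},
    "explore_right": {"policy": lambda s: "R", "beta": lambda s: 0.5},
    "go_left": {"policy": lambda s: "L", "beta": lambda s: 0.0},
}
Q[("door", "to_door")], Q[("door", "go_left")] = 1.0, 2.0
updated = intra_option_update(Q, options, "hall", "R", 0.0, "door", alpha=0.5, gamma=0.9)
```

A single rightward step updates both options that would have moved right, which is exactly the extra data efficiency intra-option learning buys over updating only the option in control.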


In the canonical Four Rooms benchmark, intra-option Q-learning with four hand-crafted “go through doorway” options converges 3–5$\times$ faster than flat Q-learning, and the same options transfer unchanged to new goal locations.

MAXQ: value decomposition along a task tree

MAXQ (Dietterich, 2000) decomposes the value of invoking child subtask $a$ inside parent task $i$ as

$$Q_i(s, a) = V_a(s) + C_i(s, a),$$

where $V_a(s)$ is the expected reward accumulated while executing the child subtask $a$ from $s$, and $C_i(s, a)$ is the completion function: the value of finishing the parent task once the child returns. Because $V_a$ does not depend on the parent $i$, it can be reused by every parent that invokes $a$, which is where the sample-efficiency win comes from.

MAXQ pays for that win with recursive optimality rather than global optimality: each subtask’s policy is optimal given its children’s policies, without regard to the context in which its parent calls it. If your task graph cannot express the truly optimal solution, MAXQ will not find it.
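A toy sketch of the recursive evaluation behind the decomposition: a primitive action's value is its expected reward, and a composite task's value is the best $V_a(s) + C_i(s, a)$ over its children. The task tree, the single state `s0` and all reward and completion values are hypothetical and hand-specified; MAXQ-Q learning would estimate them from experience.

```python
from typing import Dict, List, Tuple

# Hypothetical task tree: Root -> {Fetch, Deliver}; each composite has two primitive children.
children: Dict[str, List[str]] = {
    "Root": ["Fetch", "Deliver"],
    "Fetch": ["north", "pickup"],
    "Deliver": ["south", "drop"],
}
# Expected immediate reward of each primitive action (state-independent for brevity).
primitive_reward: Dict[str, float] = {"north": -1.0, "pickup": -1.0, "south": -1.0, "drop": 10.0}
# Completion function C_i(s, a): value of finishing parent i after child a returns.
completion: Dict[Tuple[str, str, str], float] = {
    ("Root", "s0", "Fetch"): 12.0, ("Root", "s0", "Deliver"): 0.0,
    ("Fetch", "s0", "north"): -1.0, ("Fetch", "s0", "pickup"): -5.0,
    ("Deliver", "s0", "south"): 9.0, ("Deliver", "s0", "drop"): -3.0,
}

def V(task: str, s: str) -> float:
    """V_task(s): expected reward for a primitive, else the best child's Q_task(s, a)."""
    if task in primitive_reward:
        return primitive_reward[task]
    return max(Q(task, s, a) for a in children[task])

def Q(task: str, s: str, a: str) -> float:
    """MAXQ decomposition: Q_i(s, a) = V_a(s) + C_i(s, a)."""
    return V(a, s) + completion[(task, s, a)]
```

`V("Fetch", "s0")` is computed without any reference to `Root`, so any other parent that invokes `Fetch` could reuse it unchanged.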

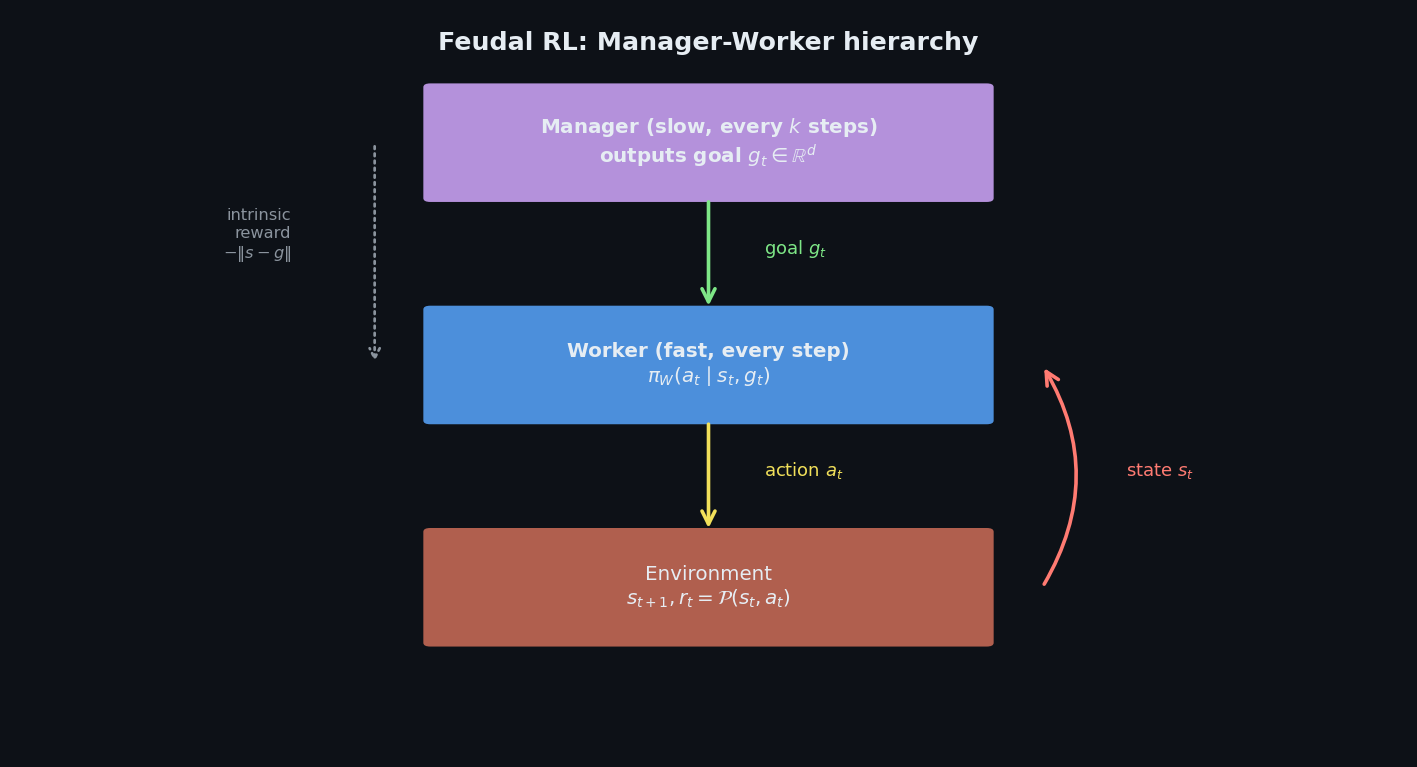

Feudal RL: continuous subgoals with manager-worker

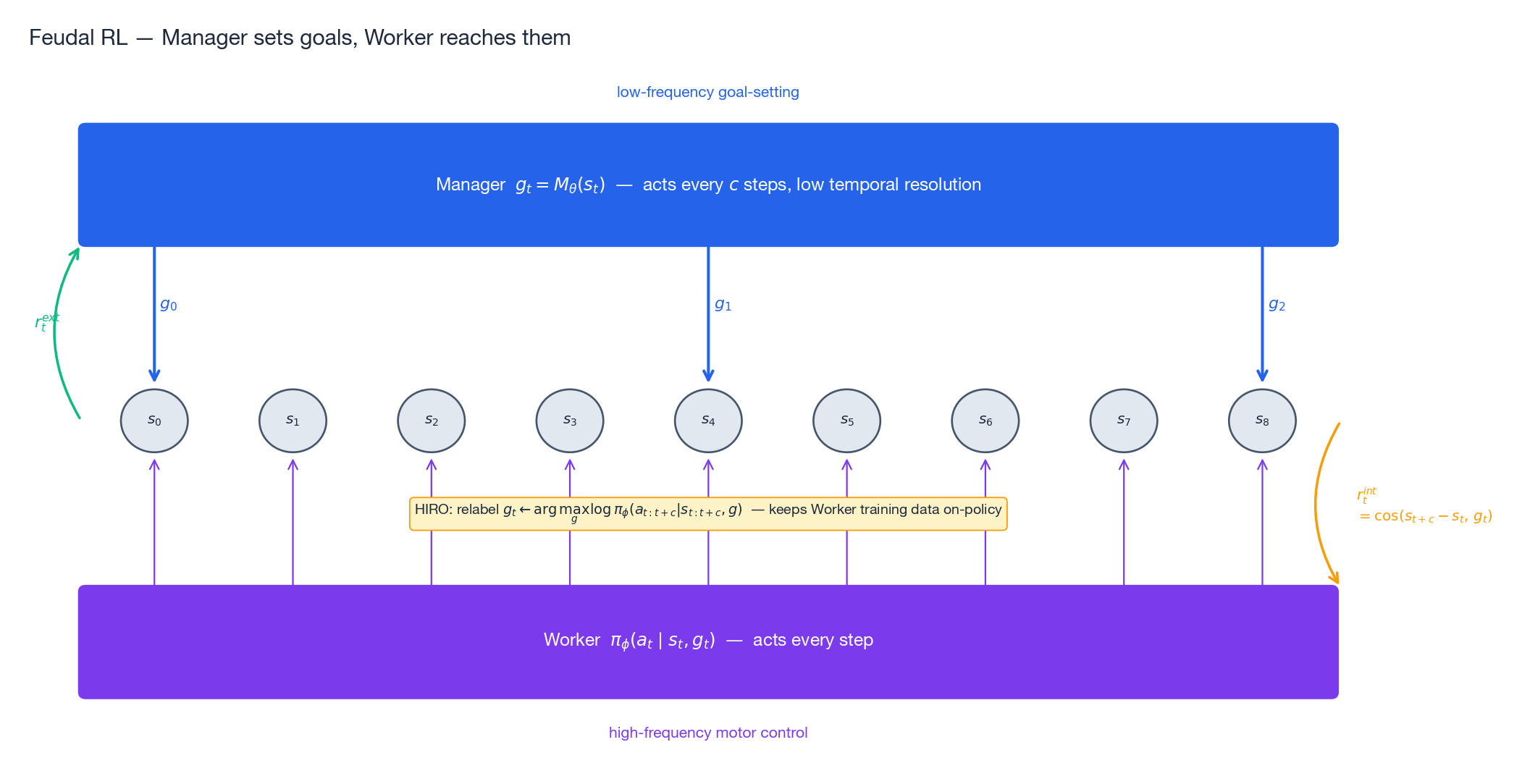

Discrete options scale poorly: in continuous-control or pixel-input domains we cannot enumerate a useful option set by hand. Feudal Networks (FuN, Vezhnevets et al., 2017) and HIRO (Nachum et al., 2018) replace the discrete option set with a continuous goal vector produced by a high-level Manager.

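The Manager emits a goal $g_t$ that the Worker should achieve over the next $c$ steps. The Worker is trained on a dense intrinsic reward for making progress toward that goal, while the Manager is trained on the extrinsic environment reward. This decoupling is what makes Feudal architectures so attractive: the Worker learns motor control on a dense, geometric reward, and the Manager handles long-horizon credit assignment over a much smaller effective horizon ($T/c$).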
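One concrete dense, geometric Worker reward is HIRO's: the Manager's goal $g_t$ is a desired change in state, the Worker receives $-\lVert s_t + g_t - s_{t+1} \rVert_2$, and between Manager decisions the goal is carried along as $g_{t+1} = s_t + g_t - s_{t+1}$ so that the absolute target stays fixed. Below is a minimal sketch with plain lists as state vectors; FuN instead uses a cosine-similarity reward in a learned latent space, which is not shown, and the trajectory in the example is made up.

```python
import math
from typing import List, Tuple

Vec = List[float]

def worker_reward(s: Vec, g: Vec, s_next: Vec) -> float:
    """HIRO-style intrinsic reward: -|| s + g - s_next ||_2 (g is a desired state change)."""
    return -math.sqrt(sum((si + gi - ni) ** 2 for si, gi, ni in zip(s, g, s_next)))

def goal_transition(s: Vec, g: Vec, s_next: Vec) -> Vec:
    """Keep the absolute target s + g fixed while the Worker moves: g' = s + g - s_next."""
    return [si + gi - ni for si, gi, ni in zip(s, g, s_next)]

def rollout_rewards(states: List[Vec], g0: Vec) -> Tuple[List[float], Vec]:
    """Worker rewards along one segment that starts with Manager goal g0."""
    g, rewards = g0, []
    for s, s_next in zip(states[:-1], states[1:]):
        rewards.append(worker_reward(s, g, s_next))
        g = goal_transition(s, g, s_next)
    return rewards, g

# Made-up segment: the Manager asks to move by (3, 4) and the Worker gets there in 3 steps.
rewards, g_final = rollout_rewards([[0.0, 0.0], [1.0, 1.0], [2.0, 3.0], [3.0, 4.0]], [3.0, 4.0])
```

The reward rises from about -3.61 to 0 as the Worker closes in on the target $s_t + g_t$, and the carried goal shrinks to the zero vector once the target is reached.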

HIRO’s relabelling trick

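HIRO trains the Manager off-policy, but the Worker keeps changing, so a goal stored in the replay buffer may no longer elicit the low-level behaviour recorded alongside it. HIRO therefore relabels each stored goal with the one under which the current Worker $\pi_\phi$ is most likely to have produced the recorded actions:

$$\tilde g_t = \arg\max_{g} \log \pi_\phi(a_{t:t+c} \mid s_{t:t+c},\, g).$$

This keeps the Manager’s stored transitions consistent with the Worker’s current parameters and dramatically stabilises off-policy learning of the Manager.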
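A sketch of the relabelling step under loudly stated assumptions: the current Worker is a hypothetical Gaussian policy with mean `worker_mean(s, g)` and a fixed standard deviation, goals are carried along the segment with the transition $g' = s + g - s'$, and the $\arg\max$ is approximated over a small candidate set (the stored goal, the observed change $s_{t+c} - s_t$, and Gaussian perturbations of that change), in the spirit of HIRO's candidate sampling.

```python
import math
import random
from typing import Callable, List, Optional

Vec = List[float]

def gaussian_log_prob(a: Vec, mean: Vec, std: float) -> float:
    """Log-density of an isotropic Gaussian N(mean, std^2 I) evaluated at a."""
    return sum(-0.5 * ((ai - mi) / std) ** 2 - math.log(std * math.sqrt(2 * math.pi))
               for ai, mi in zip(a, mean))

def segment_log_prob(states: List[Vec], actions: List[Vec], g: Vec,
                     worker_mean: Callable[[Vec, Vec], Vec], std: float) -> float:
    """log pi(actions | states, g), carrying the goal with g' = s + g - s_next."""
    total = 0.0
    for s, a, s_next in zip(states[:-1], actions, states[1:]):
        total += gaussian_log_prob(a, worker_mean(s, g), std)
        g = [si + gi - ni for si, gi, ni in zip(s, g, s_next)]
    return total

def relabel_goal(states: List[Vec], actions: List[Vec], original_goal: Vec,
                 worker_mean: Callable[[Vec, Vec], Vec], std: float = 0.5,
                 num_samples: int = 8, rng: Optional[random.Random] = None) -> Vec:
    """Pick the candidate goal under which the current Worker best explains the actions."""
    if rng is None:
        rng = random.Random(0)
    delta = [e - b for b, e in zip(states[0], states[-1])]        # s_{t+c} - s_t
    candidates = [original_goal, delta] + [
        [d + rng.gauss(0.0, 1.0) for d in delta] for _ in range(num_samples)]
    return max(candidates,
               key=lambda g: segment_log_prob(states, actions, g, worker_mean, std))

def worker_mean(s: Vec, g: Vec) -> Vec:
    """Hypothetical current Worker: step half-way toward the remaining goal each step."""
    return [0.5 * gi for gi in g]

states = [[0.0, 0.0], [2.0, 0.0], [3.0, 0.0], [3.5, 0.0]]
actions = [[2.0, 0.0], [1.0, 0.0], [0.5, 0.0]]
stale_goal = [0.0, 4.0]   # goal stored back when the Worker behaved differently
new_goal = relabel_goal(states, actions, stale_goal, worker_mean)
```

The stale goal points the wrong way, so the relabelled goal ends up close to the rightward displacement the Worker actually produced.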

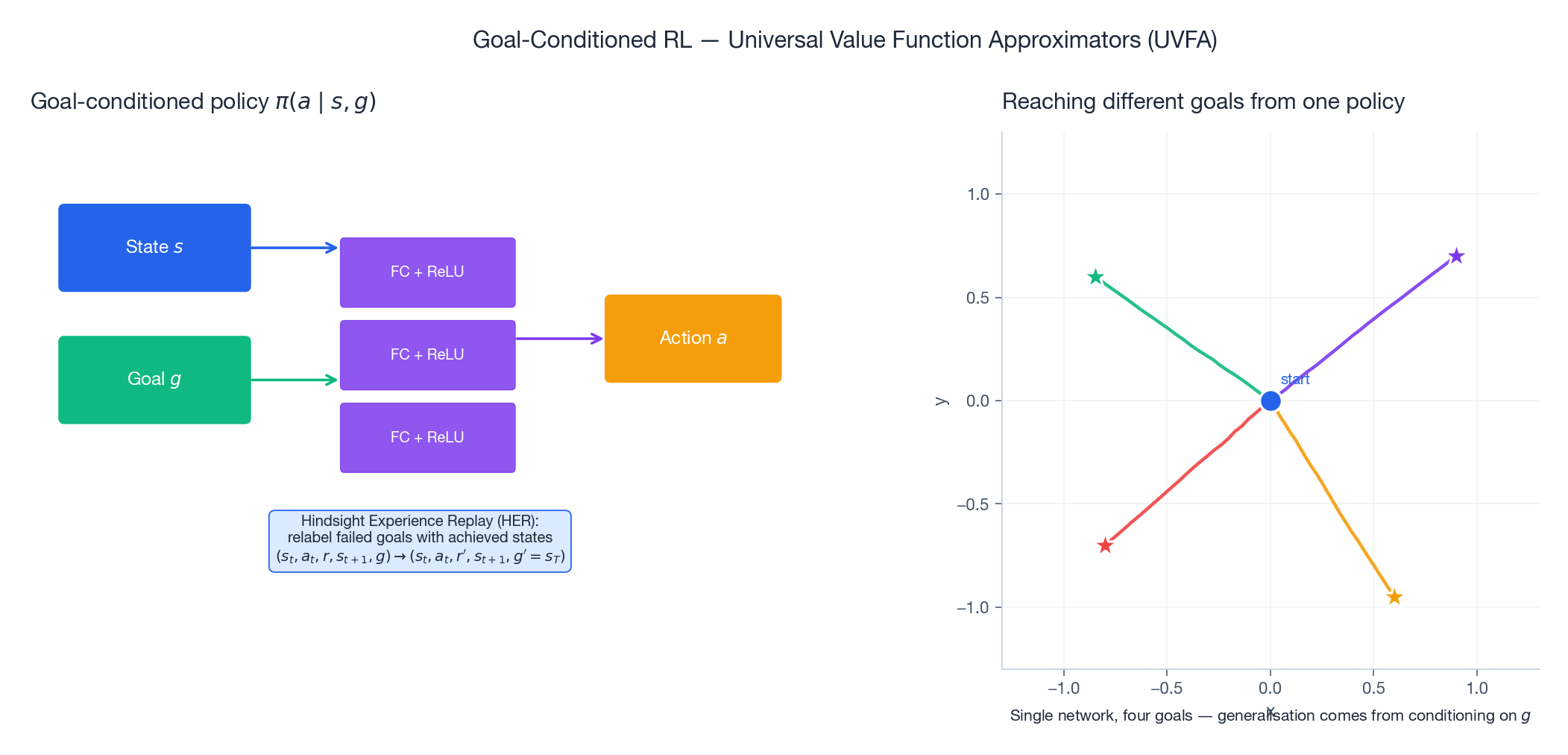

Goal-conditioned RL and HER

Goal-conditioned policies $\pi(a \mid s, g)$ deserve a section of their own, because they are the bridge between hierarchy and meta-learning. The same network can pursue many goals; the goal $g$ is just an additional input.

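The classical formalisation is Universal Value Function Approximators (UVFA, Schaul et al., 2015): learn $V(s, g)$ or $Q(s, a, g)$ instead of $V(s)$ and $Q(s, a)$. Without further tricks UVFAs suffer badly from sparse rewards: most goals are never reached during exploration, so the reward signal is essentially zero.

Hindsight Experience Replay (HER, Andrychowicz et al., 2017) fixes this by relabelling: each transition of a failed episode is also stored with the goal replaced by the state the episode actually reached, $s_T$, and with the reward $r'$ recomputed for that goal:

$$(s_t, a_t, r, s_{t+1}, g) \;\longrightarrow\; (s_t, a_t, r', s_{t+1},\, g' = s_T).$$

Combined with off-policy methods (DDPG, SAC), HER turns sparse-reward goal reaching from “essentially impossible” into “routine”.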
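A minimal sketch of HER's "final" relabelling strategy, the $g' = s_T$ rule in the equation above, assuming a sparse reward of our own choosing: $0$ if the goal is reached and $-1$ otherwise. HER also proposes other strategies, such as "future", which samples $g'$ from later states of the same episode; those are not shown.

```python
from typing import List, NamedTuple, Tuple

Cell = Tuple[int, int]

class Transition(NamedTuple):
    s: Cell
    a: str
    r: float
    s_next: Cell
    g: Cell

def sparse_reward(s_next: Cell, g: Cell) -> float:
    """Assumed sparse goal reward: 0 on success, -1 otherwise."""
    return 0.0 if s_next == g else -1.0

def her_final(episode: List[Transition]) -> List[Transition]:
    """Return the original transitions plus copies relabelled with g' = s_T."""
    achieved = episode[-1].s_next                        # final state s_T
    relabelled = [t._replace(g=achieved, r=sparse_reward(t.s_next, achieved))
                  for t in episode]
    return episode + relabelled

# A failed episode: the goal (5, 5) is never reached.
episode = [Transition((0, 0), "right", -1.0, (1, 0), (5, 5)),
           Transition((1, 0), "up", -1.0, (1, 1), (5, 5))]
buffer = her_final(episode)
```

The original transitions still carry only $-1$ rewards, but the relabelled copy of the last step now records a success, which gives the value function something to propagate.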

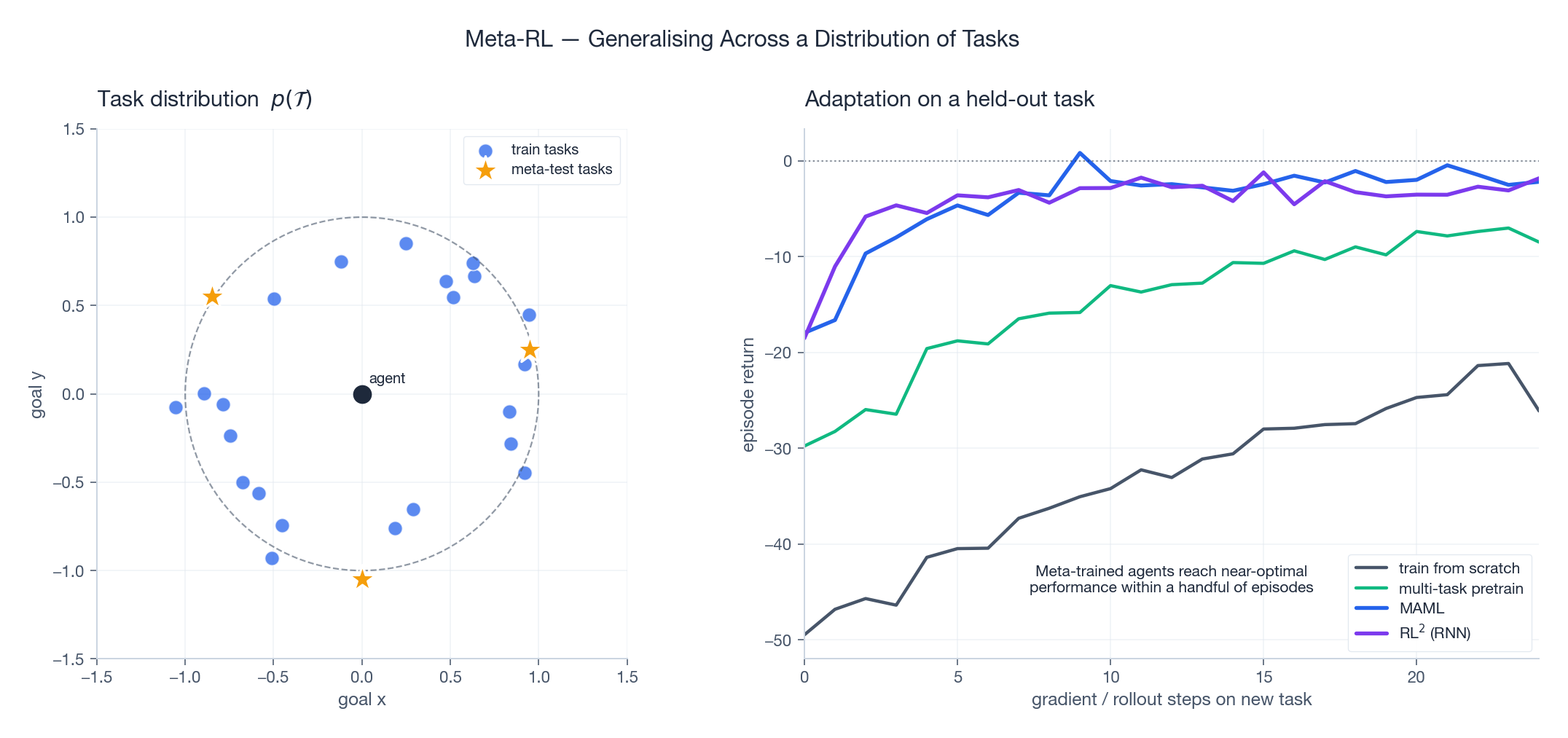

Meta-RL: learning to learn

Meta-RL assumes a task distribution $p(\mathcal{T})$ rather than a single MDP. At meta-train time the agent sees many tasks $\mathcal{T}_i \sim p(\mathcal{T})$; at meta-test time it sees a fresh task and must adapt with as few interactions as possible.
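A sketch of the meta-train / meta-test split for a 2-D navigation task family. As an assumption for illustration, each task is a goal position drawn uniformly from $[-0.5, 0.5]^2$, and all tasks share dynamics but differ in reward (negative squared distance to the goal); the meta-learner itself is left out.

```python
import random
from typing import Callable, List, Tuple

Goal = Tuple[float, float]

def sample_task(rng: random.Random) -> Goal:
    """Assumed task distribution p(T): a goal position uniform in [-0.5, 0.5]^2."""
    return (rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5))

def make_reward(goal: Goal) -> Callable[[Goal], float]:
    """Tasks share dynamics but not reward: negative squared distance to this task's goal."""
    return lambda pos: -((pos[0] - goal[0]) ** 2 + (pos[1] - goal[1]) ** 2)

rng = random.Random(42)
meta_train: List[Goal] = [sample_task(rng) for _ in range(100)]  # tasks seen during meta-training
meta_test: List[Goal] = [sample_task(rng) for _ in range(10)]    # fresh tasks, adapted to at test time
reward_fn = make_reward(meta_test[0])
```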

The two dominant families are:

- Optimisation-based (MAML, Reptile, ANIL): adaptation = a few gradient steps on the test task.

- Recurrent / context-based (RL$^2$, PEARL): adaptation = updating a recurrent hidden state (RL$^2$) or an inferred task embedding (PEARL) as data arrives, with no gradients needed at test time.

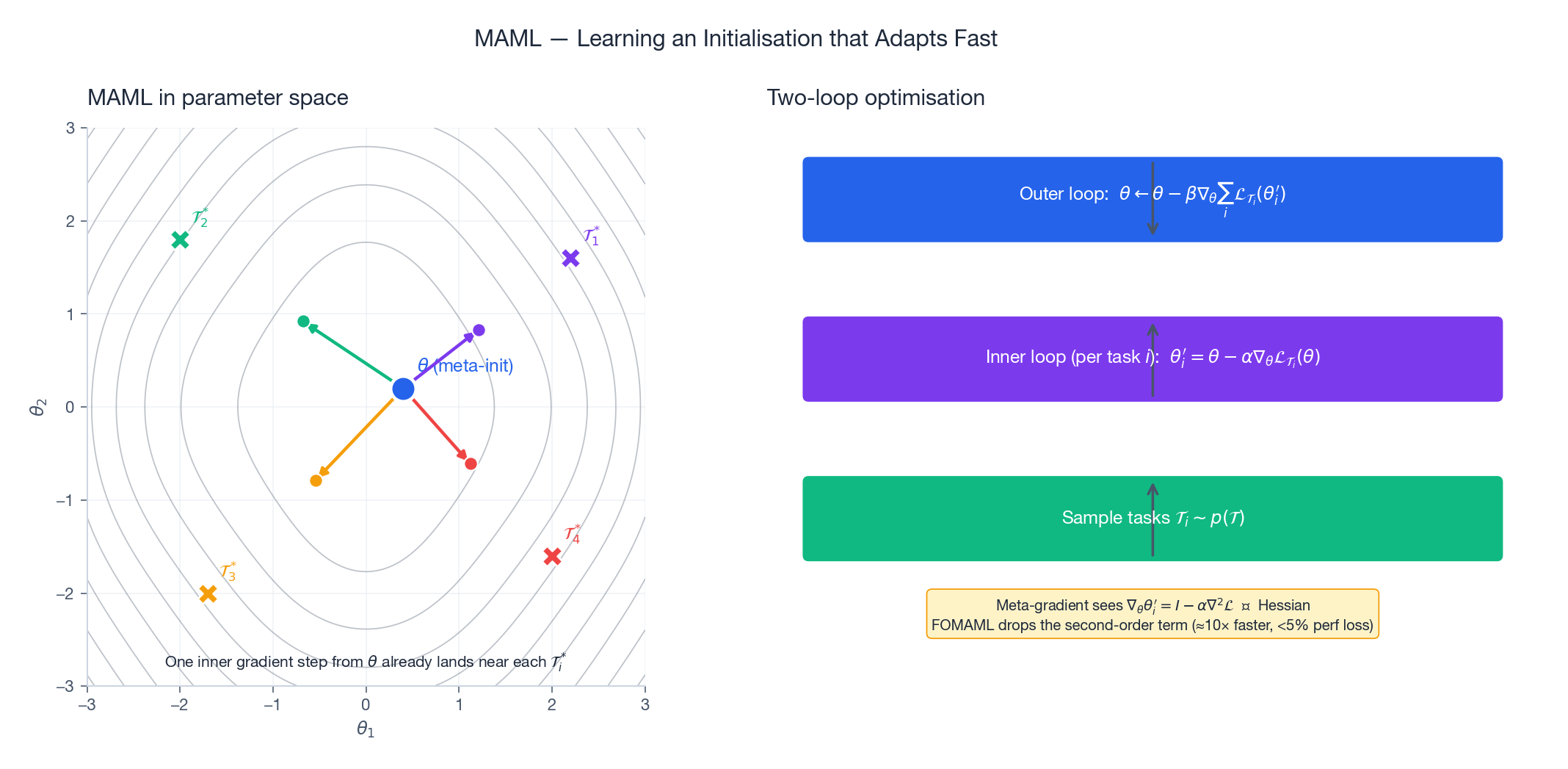

MAML: learning a good initialisation

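MAML (Finn, Abbeel & Levine, 2017) meta-learns an initialisation $\theta$ through a bilevel update: an inner gradient step adapts $\theta$ to each task, and an outer step improves $\theta$ according to how well the adapted parameters perform:

$$ \theta_i' = \theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(\theta), \qquad \theta \leftarrow \theta - \beta \nabla_\theta \sum_{i} \mathcal{L}_{\mathcal{T}_i}(\theta_i'). $$

The outer gradient differentiates through the inner update, so it contains a Hessian term $\nabla^2 \mathcal{L}$. That is what makes MAML expensive, and what motivates FOMAML, which simply ignores the second-order term. Dropping the Hessian-vector products makes each outer step considerably cheaper, and the original MAML paper found that the first-order approximation performs nearly as well as full MAML on its benchmarks.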
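The Hessian term is easiest to see on a toy problem where everything is analytic. Below, each hypothetical task is a 1-D quadratic loss $\mathcal{L}_{\mathcal{T}_i}(\theta) = \tfrac12 h_i (\theta - c_i)^2$ with curvature $h_i$ and optimum $c_i$, so $\nabla^2 \mathcal{L}_{\mathcal{T}_i} = h_i$ and the exact meta-gradient of one task is $(1 - \alpha h_i)\, h_i (\theta_i' - c_i)$, while FOMAML keeps only $h_i (\theta_i' - c_i)$. This sketches the gradient algebra only, not a policy-gradient implementation.

```python
from typing import List, Tuple

Task = Tuple[float, float]  # (curvature h, optimum c) of L(theta) = 0.5 * h * (theta - c)^2

def grad(task: Task, theta: float) -> float:
    h, c = task
    return h * (theta - c)

def meta_grad(tasks: List[Task], theta: float, alpha: float, first_order: bool) -> float:
    """d/dtheta of sum_i L_i(theta_i'), where theta_i' = theta - alpha * grad_i(theta)."""
    total = 0.0
    for h, c in tasks:
        theta_i = theta - alpha * grad((h, c), theta)      # inner adaptation step
        outer = grad((h, c), theta_i)                      # gradient at the adapted parameters
        # d theta_i / d theta = 1 - alpha * Hessian; FOMAML replaces it with 1.
        jacobian = 1.0 if first_order else (1.0 - alpha * h)
        total += jacobian * outer
    return total

def train(tasks: List[Task], theta: float = 0.0, alpha: float = 0.1, beta: float = 0.05,
          steps: int = 2000, first_order: bool = False) -> float:
    """Plain gradient descent on the meta-objective (outer step size beta)."""
    for _ in range(steps):
        theta -= beta * meta_grad(tasks, theta, alpha, first_order)
    return theta

tasks = [(1.0, -2.0), (4.0, 3.0)]   # a flat task and a sharply curved task
theta_maml = train(tasks)
theta_fomaml = train(tasks, first_order=True)
```

The two variants settle on different initialisations ($\theta = 1.2$ versus $\theta = 5.4/3.3 \approx 1.64$ with these numbers). The exact Jacobian $1 - \alpha h_i$ down-weights the sharply curved task, whose inner step already does more of the work; FOMAML ignores that and leans further toward it.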

The picture on the left is the most useful intuition: meta-training does not try to find an initialisation that is good on any single task. It finds an initialisation that sits in a “sweet spot” from which one gradient step lands close to each task-specific optimum.


A simple, readable MAML implementation can swap the adapted weights into the network for the meta-evaluation rollout rather than relying on a functional forward pass; that is good enough for small networks. For research-scale MAML you want a proper functional API such as higher or torch.func.functional_call.

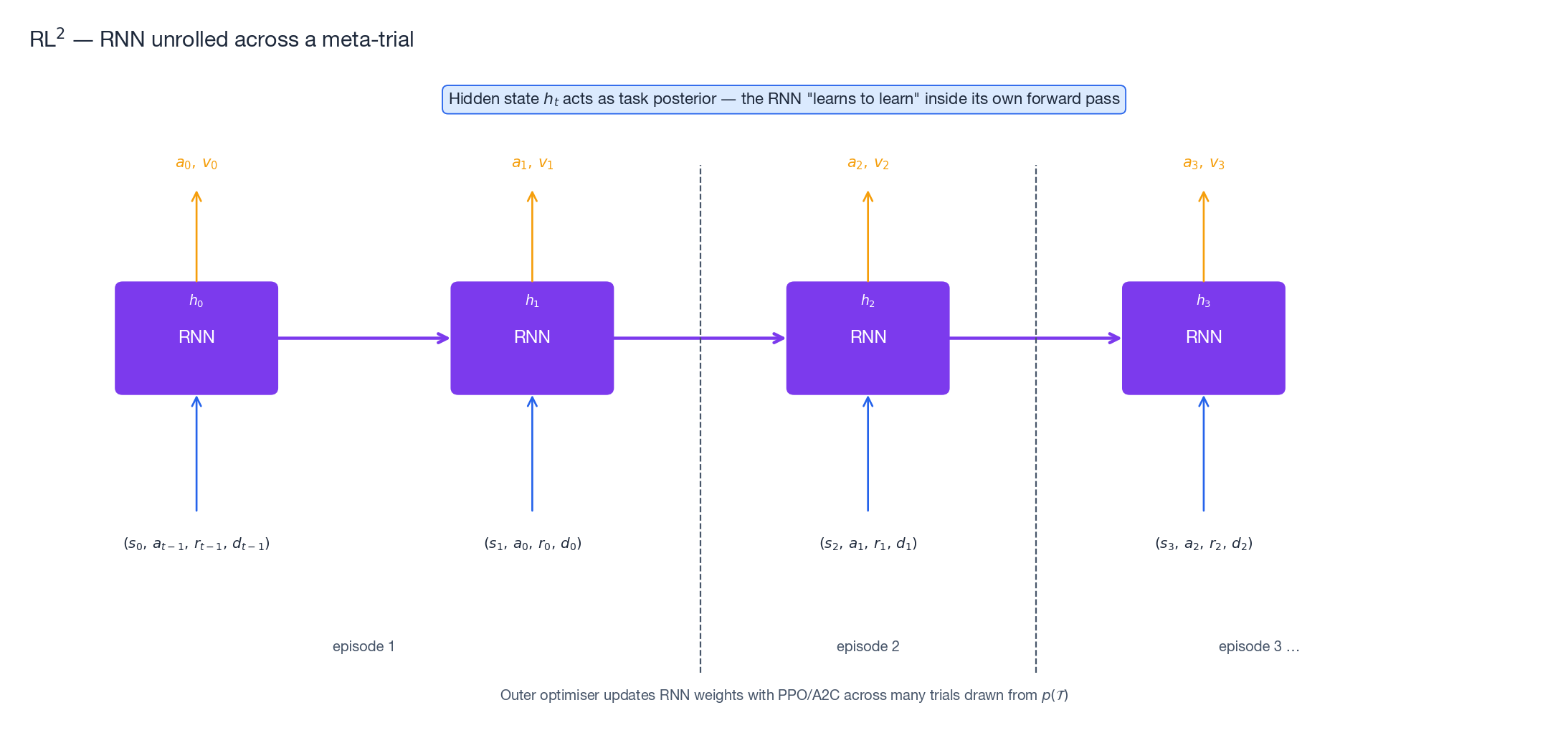

RL$^2$: folding the algorithm into an RNN

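RL$^2$ (Duan et al., 2016; Wang et al., 2016) dispenses with the inner gradient step altogether. A recurrent policy receives at each step

$$x_t = (s_t,\, a_{t-1},\, r_{t-1},\, d_{t-1}),$$

i.e. the state plus the previous action, reward and done-flag, and its hidden state is not reset between episodes of the same task. Across episodes within a meta-trial the hidden state $h_t$ therefore accumulates information about the current task, implicitly performing something like a Bayesian belief update. At meta-train time the outer optimiser (PPO or A2C) shapes the RNN weights so that this implicit “inner algorithm” is sample-efficient on $p(\mathcal{T})$.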
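A sketch of how RL$^2$ inputs are assembled and why the hidden state is reset only between trials, not between episodes. The toy recurrent cell (a fixed exponential moving average standing in for a trained GRU or LSTM), the one-hot action encoding and the use of `-1` for "no previous action" are illustrative assumptions; in real RL$^2$ the actions would be sampled from a policy head on $h_t$ rather than given.

```python
from typing import List, Tuple

Episode = List[Tuple[List[float], int, float]]   # (state, action, reward) per step

def one_hot(a: int, n: int) -> List[float]:
    return [1.0 if i == a else 0.0 for i in range(n)]

def rl2_input(s: List[float], prev_a: int, prev_r: float, prev_done: bool,
              n_actions: int) -> List[float]:
    """x_t = (s_t, a_{t-1}, r_{t-1}, d_{t-1}); prev_a = -1 encodes 'no previous action'."""
    a_vec = one_hot(prev_a, n_actions) if prev_a >= 0 else [0.0] * n_actions
    return s + a_vec + [prev_r, 1.0 if prev_done else 0.0]

def toy_cell(h: List[float], x: List[float], decay: float = 0.9) -> List[float]:
    """Stand-in for a trained RNN cell: an exponential moving average of the inputs."""
    return [decay * hi + (1 - decay) * xi for hi, xi in zip(h, x)]

def run_trial(episodes: List[Episode], n_actions: int) -> List[List[float]]:
    """Feed one trial (several episodes of the same task) through the recurrent cell.

    The hidden state persists across episode boundaries, which is where task
    information accumulates; it is reset only when a new trial (task) begins.
    """
    state_dim = len(episodes[0][0][0])
    h = [0.0] * (state_dim + n_actions + 2)       # reset at the start of the trial only
    prev_a, prev_r, prev_done = -1, 0.0, False
    hidden_states = []
    for ep in episodes:
        for t, (s, a, r) in enumerate(ep):
            h = toy_cell(h, rl2_input(s, prev_a, prev_r, prev_done, n_actions))
            hidden_states.append(h)
            prev_a, prev_r, prev_done = a, r, (t == len(ep) - 1)
    return hidden_states

# Two one-step episodes of the same task: the reward from episode 1 reaches episode 2.
hs = run_trial([[([0.0], 0, 1.0)], [([0.0], 1, 0.0)]], n_actions=2)
```

In the second episode the hidden state already contains the previous action, the reward of 1 and the done-flag from the first episode; resetting `h` at episode boundaries would erase exactly the information RL$^2$ relies on.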

RL$^2$ has two attractive properties: (i) zero gradient computation at test time, so adaptation is one forward pass per step; (ii) the adaptation procedure is learned, so it can in principle exceed any hand-designed optimiser on the training distribution. The price is a hard credit-assignment problem during meta-training — the gradient must flow through hundreds of recurrent steps spanning multiple episodes.

FAQ

Why does the Options framework actually accelerate learning? Three compounding effects: (i) the effective horizon shrinks from $T$ to roughly $T/\bar k$; (ii) the exploration tree shrinks from $|\mathcal{A}|^T$ to $|\mathcal{O}|^{T/\bar k}$, which is smaller whenever $|\mathcal{O}| < |\mathcal{A}|^{\bar k}$, so the option set may even be larger than the primitive action set; (iii) intra-option learning means every primitive transition contributes to the value of every compatible option, not just the one in control.

Hierarchically optimal vs globally optimal — what’s the difference? A hierarchically optimal policy is the best policy that respects the given decomposition (option set or task graph). A globally optimal policy is the best policy on the underlying flat MDP. They diverge whenever the decomposition cannot express the optimum — e.g. an option that always runs for at least 10 steps cannot implement a policy that needs to switch behaviour every 3 steps.

Why does MAML need second-order gradients, and how much does FOMAML lose? The meta-loss is $\mathcal{L}_{\mathcal{T}_i}(\theta_i')$ where $\theta_i' = \theta - \alpha \nabla_\theta \mathcal{L}_{\mathcal{T}_i}(\theta)$. Differentiating with respect to $\theta$ yields $(I - \alpha \nabla^2_\theta \mathcal{L}_{\mathcal{T}_i}(\theta))\, \nabla_{\theta_i'} \mathcal{L}_{\mathcal{T}_i}(\theta_i')$, which contains the Hessian. FOMAML drops the $-\alpha \nabla^2 \mathcal{L}$ term by treating the inner gradient $\nabla_\theta \mathcal{L}_{\mathcal{T}_i}(\theta)$ as a constant (a stop-gradient), so that $\partial \theta_i' / \partial \theta = I$. The original MAML paper reports <5% performance loss on the standard few-shot benchmarks.

When should I use MAML vs RL$^2$? Use MAML when you can afford gradient computation at adaptation time and your task distribution is broad enough that a fixed policy cannot do well on all of it. Use RL$^2$ when the task family is narrow and exploitative (e.g. multi-armed bandits with shifting arms), or when test-time gradients are infeasible. PEARL, which infers a task embedding with a separate encoder, is often a happy medium.

Why is HER specific to off-policy algorithms? HER changes the goal that a transition was collected for, which violates on-policy assumptions: the action distribution that produced the data was conditioned on the original goal, not the relabelled one. Off-policy algorithms (DQN, DDPG, SAC) do not require the data to come from the current policy; at update time they only need to evaluate the current policy $\pi(a \mid s, g')$ (or $\max_a Q(s, a, g')$) for the relabelled goal, which we can always do, so the relabelling is harmless.

References

- Sutton, Precup & Singh. Between MDPs and semi-MDPs: A framework for temporal abstraction in reinforcement learning. Artificial Intelligence, 1999.

- Dietterich. Hierarchical Reinforcement Learning with the MAXQ Value Function Decomposition. JAIR, 2000.

- Vezhnevets et al. FeUdal Networks for Hierarchical Reinforcement Learning. ICML 2017. arXiv:1703.01161.

- Nachum et al. Data-Efficient Hierarchical Reinforcement Learning (HIRO). NeurIPS 2018. arXiv:1805.08296.

- Schaul et al. Universal Value Function Approximators. ICML 2015.

- Andrychowicz et al. Hindsight Experience Replay. NeurIPS 2017. arXiv:1707.01495.

- Finn, Abbeel & Levine. Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks. ICML 2017. arXiv:1703.03400.

- Duan et al. RL$^2$: Fast Reinforcement Learning via Slow Reinforcement Learning. 2016. arXiv:1611.02779.

- Wang et al. Learning to reinforcement learn. 2016. arXiv:1611.05763.

- Rakelly et al. Efficient Off-Policy Meta-RL via Probabilistic Context Variables (PEARL). ICML 2019.
