LLMGR: Integrating Large Language Models with Graphical Session-Based Recommendation

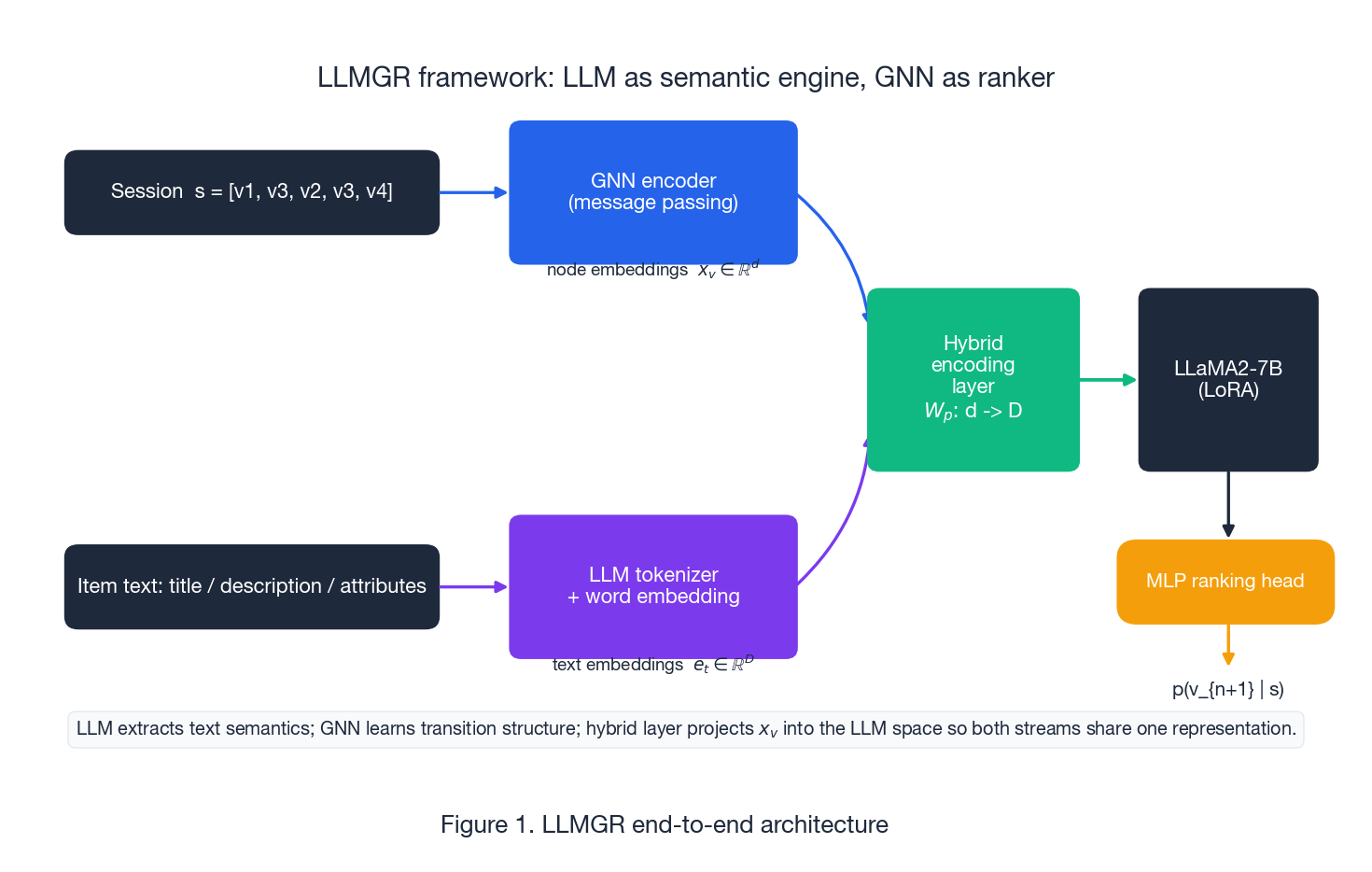

LLMGR uses an LLM as the semantic engine for session-based recommendation and a GNN as the ranker. Covers the hybrid encoding layer, two-stage prompt tuning, ~8.68% HR@20 lift, and how to deploy without running an LLM per request.

Session-based recommendation relies on the click graph. New items lack edges, and long-tail items have a few noisy ones. Each item has a title and description, but the model never uses them. LLMGR addresses this by treating the LLM as a “semantic engine” that converts text into representations a graph encoder can use, then lets a GNN handle ranking. On Amazon Music/Beauty/Pantry, the results show HR@20 up ~8.68%, NDCG@20 up ~10.71%, and MRR@20 up ~11.75% over the strongest GNN baseline, with the biggest gains for cold-start items.

What You Will Learn#

- Why pure GNN session recommenders break on cold-start and long-tail items

- The LLMGR architecture: LLM semantic stream + GNN structural stream + hybrid fusion

- The two prompt families (auxiliary node-text alignment + main next-item prediction)

- The hybrid encoding layer: a single linear $W_p$ that bridges 64-d ID space and 4096-d LLM space

- Why two-stage prompt tuning beats one-stage joint training

- Reported numbers on Amazon Music/Beauty/Pantry, broken out by warm/cold buckets

- How to ship this in production without paying LLM cost per request

Prerequisites#

- Session-based recommendation basics (SR-GNN, GC-SAN)

- LLM fine-tuning basics (LoRA, prompt tuning)

- Recommendation metrics: HR@K, NDCG@K, MRR@K

Paper#

Why pure GNN session recommenders stall on sparsity#

A session is a short click stream $s = [v_1, v_2, \dots, v_n]$ — usually only 3 to 20 clicks — and the task is to score the next item or rank a candidate set. Three structural problems make this hard for any model that only sees IDs and edges:

- Short sequences. Three to twenty clicks contain a lot of exploration noise; extracting a stable intent signal from so few points is genuinely hard.

- Long tail dominates the catalogue. Most items have a handful of edges, and the edges they do have are unreliable. A GNN trained on these edges learns noise.

- IDs carry no semantics. A neighbour relationship in the click graph could mean similar, complementary, or substitute; transition edges alone cannot tell you which.

Text is often the lifeline. Even a new SKU has a title, description, and category, and long-tail items do too. But the common trick of “concatenating a frozen BERT embedding with the ID embedding” rarely works for two reasons:

- Space mismatch. Text embeddings (768 or 4096-d) and graph embeddings (64-d) live in different geometries. Concatenation gives the optimiser no reason to align them.

- Domain mismatch. Pre-trained encoders are optimised on Wikipedia and CommonCrawl, not on shopping intent. “iPhone” and “charger” are unrelated in general English but tightly complementary in retail.

LLMGR’s contribution is a way to actually train through that mismatch.

Architecture: LLM as semantic engine, GNN as ranker#

The cleanest way to read LLMGR is as a two-stream model with a fusion layer in the middle and a ranking head at the top.

A common pitfall is asking the LLM to generate the next item. This fails for three reasons:

- Candidate sets are huge. Tens of thousands of items will not fit in any token budget.

- Ranking needs calibrated scores and negatives. Free-form generation does not give you a calibrated $p(v \mid s)$ .

- Online cost. Running a 7B model per request blows out latency and cost.

LLMGR takes a more pragmatic approach: let the LLM extract semantics, and let a GNN + MLP head handle the ranking. The LLM doesn’t produce item IDs at inference time; instead, it generates a hidden state that the ranker uses to score the candidate set.

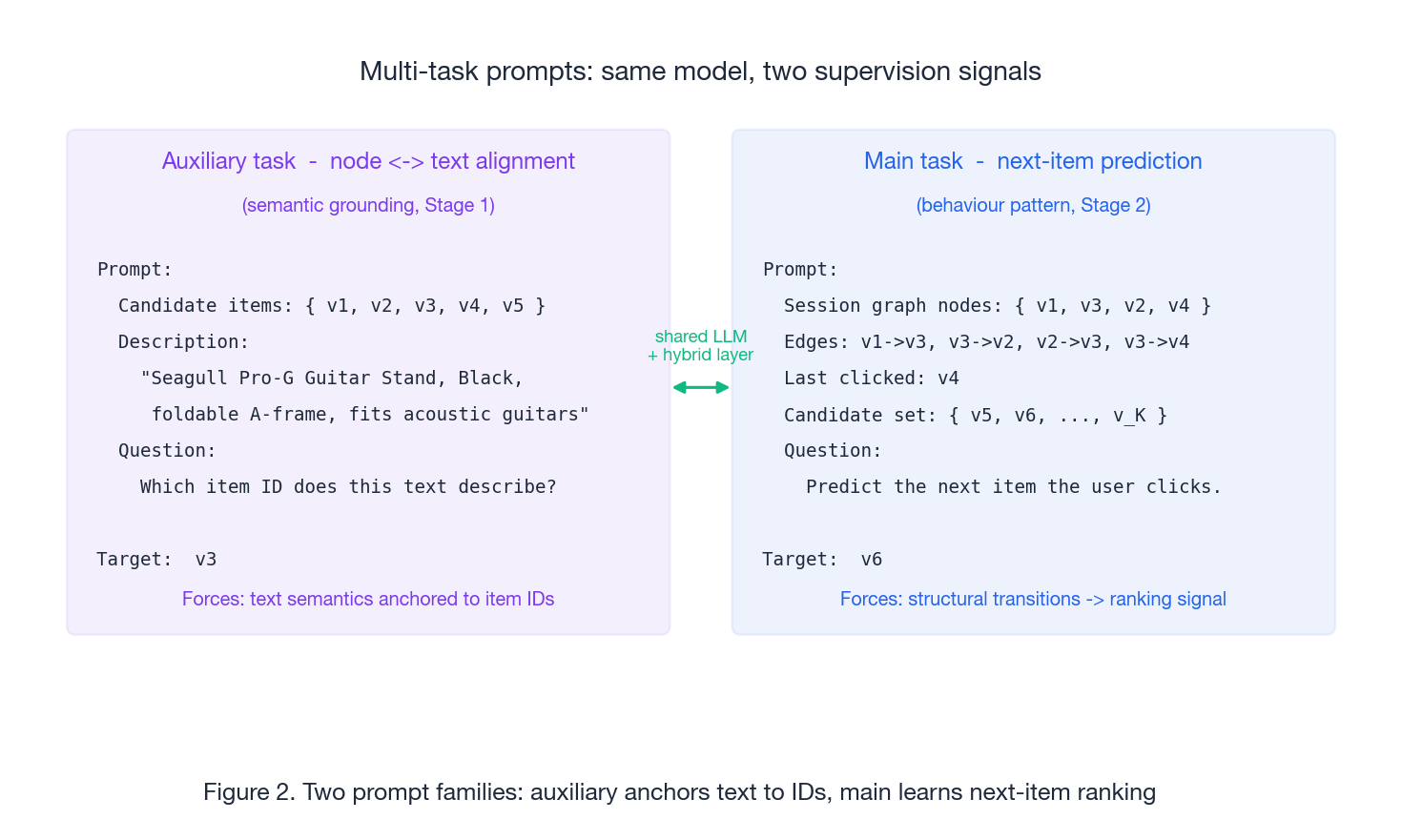

Multi-task prompts as supervision interfaces#

Prompts in LLMGR are not a UI feature. They are training-time supervision signals that force the model to learn the cross-modal alignment we actually want. Two prompt families do all the work.

The auxiliary task is node-text alignment. You hand the model a description and a small set of candidate item IDs and ask which ID the text describes. This is the lever that anchors text semantics to ID embeddings; without it, the LLM has no reason to map “Seagull Pro-G Guitar Stand” to anything in particular in the ID space.

The main task is next-item prediction. The model gets a session graph (nodes plus directed edges plus the last-clicked node) and a candidate set, and produces a hidden state that the ranking head turns into $p(v_{n+1} \mid s)$ .

Both tasks share the same LLM weights, the same hybrid encoding layer, and the same cross-entropy loss. The split is purely about which supervision signal the gradient carries.

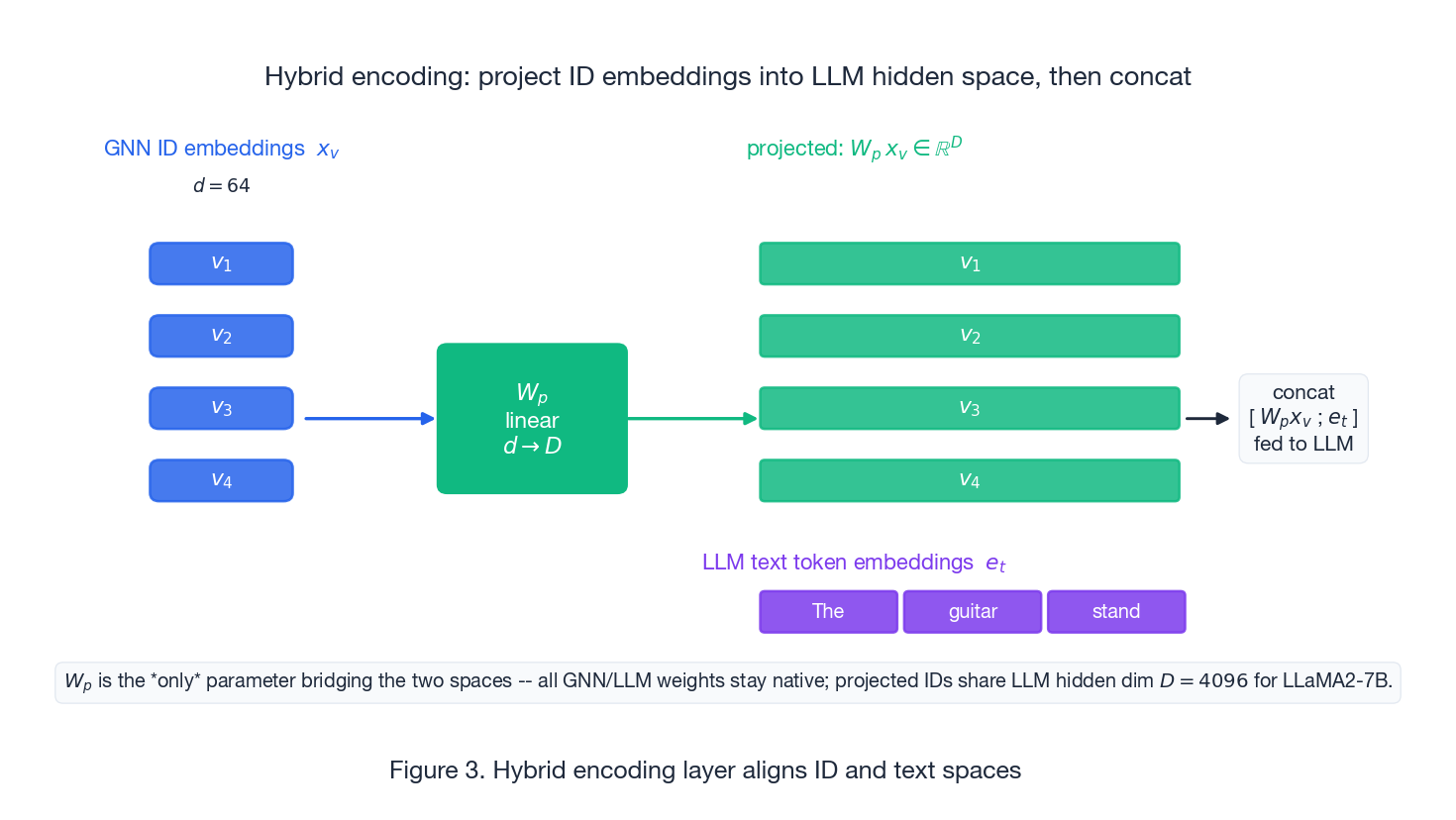

The hybrid encoding layer: one linear map bridges two spaces#

$$\tilde{x}_v = W_p\, x_v, \quad x_v \in \mathbb{R}^{d=64}, \quad \tilde{x}_v \in \mathbb{R}^{D=4096}$$Projected ID vectors are then concatenated with text token embeddings and fed to the LLM as if they were extra tokens.

A few properties of this design are worth highlighting:

- $W_p$ is the only bridge parameter. Both the GNN and the LLM keep their native weights; the projection is a tiny $D \times d$ matrix that is cheap to train and cheap to swap.

- The fusion is structural, not statistical. Concatenation in the LLM’s own input space lets self-attention learn the cross-modal interactions instead of forcing them through a hand-designed gate.

- Text and IDs become interchangeable as far as the LLM is concerned. This is what makes the auxiliary “which ID is this text?” task expressible at all.

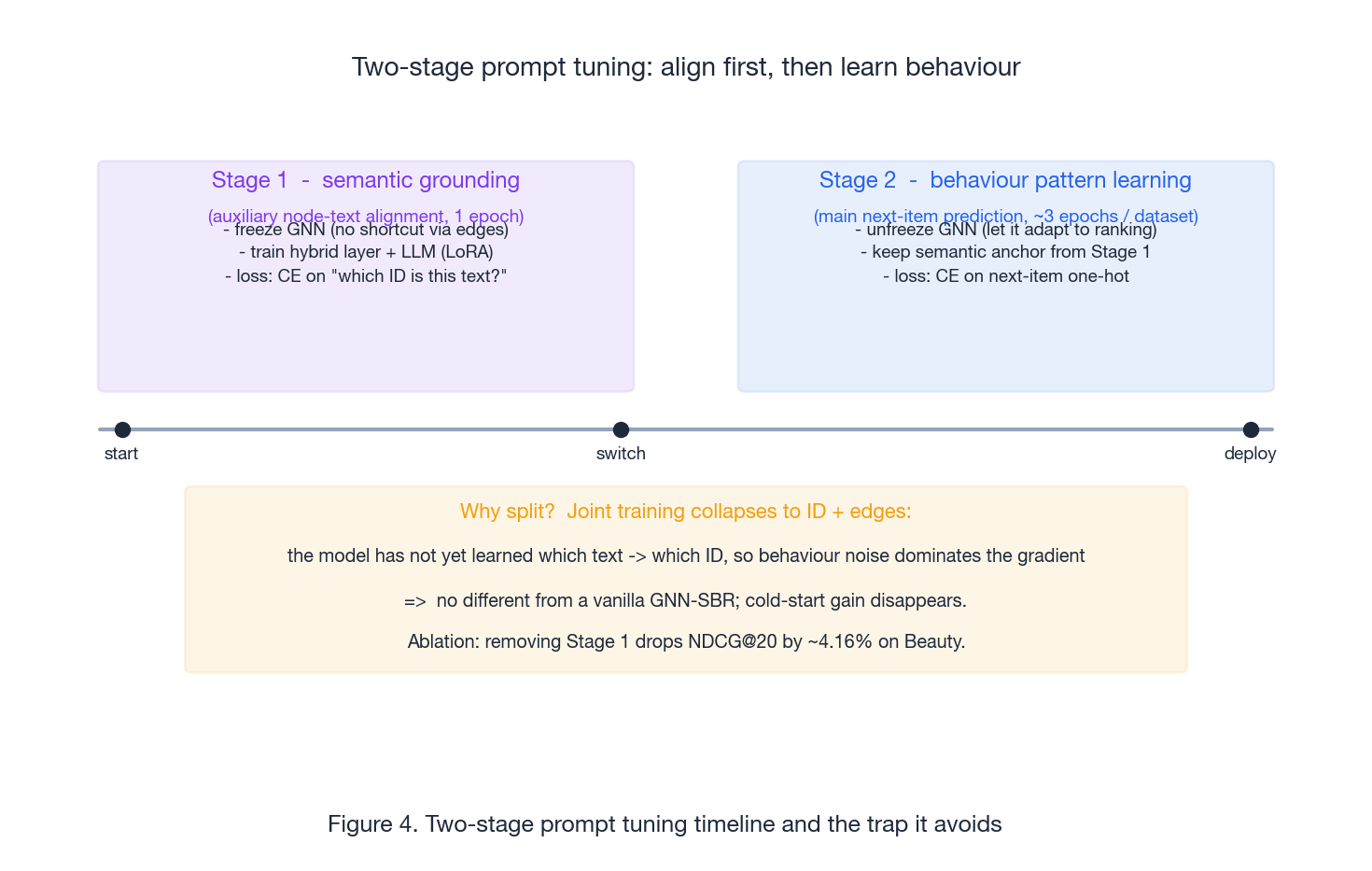

Two-stage prompt tuning: align first, learn behaviour second#

Joint training of the auxiliary and main tasks does not work. LLMGR splits training into two stages and the split is the entire point.

Stage 1 — semantic grounding (1 epoch). The GNN is frozen. Only the hybrid layer and the LLM (via LoRA) are updated. Loss is cross-entropy on the auxiliary “which ID does this text describe?” task. Freezing the GNN here is critical: if the GNN can move, the model can short-circuit the alignment task by overfitting transition edges and the text channel never has to learn anything.

Stage 2 — behaviour pattern learning (~3 epochs per dataset). The GNN is unfrozen. Loss is cross-entropy on the main next-item task. The semantic anchors learned in Stage 1 are preserved by the joint optimisation; the model now learns transition structure on top of grounded semantics rather than instead of them.

Why split? If you train both losses jointly from scratch, the model has not yet learned which text corresponds to which ID, so behaviour noise dominates the gradient. The text channel collapses to noise, the model becomes a vanilla GNN-SBR, and the cold-start gain disappears. The paper’s RQ3 ablation confirms this: removing Stage 1 drops NDCG@20 by ~4.16% on Beauty.

The training schedule is engineered, not arbitrary: 1 epoch is enough for alignment because text-to-ID is a near-deterministic mapping; behaviour patterns are noisier and need more passes.

The math that matters#

Session graph#

For a click stream $s = [v_1, \dots, v_n]$ , build $G_s = (V_s, E_s)$ where $V_s$ is the set of unique items and $E_s$ contains a directed edge $(v_i, v_{i+1})$ for each consecutive click. Repeated items appear once in $V_s$ but their incoming and outgoing edges are all preserved.

GNN message passing#

$$t_v^{(l+1)} = f_{\text{agg}}\!\left(\{x_u^{(l)} : u \in N(v)\}\right), \qquad x_v^{(l+1)} = f_{\text{upd}}\!\left(x_v^{(l)}, t_v^{(l+1)}\right)$$After $L$ layers, $x_v^{(L)}$ has aggregated information from $L$ -hop neighbours.

Graph readout#

$$z_s = f_{\text{readout}}\!\left(\{x_v^{(L)} : v \in V_s\}\right)$$Common choices: mean / max pooling, or attention pooling that puts most weight on the last clicked node.

Hybrid encoding and ranking head#

$$\tilde{x}_v = W_p\, x_v, \qquad H = \mathrm{LLM}\!\left([\tilde{x}_{v_1}, \dots, \tilde{x}_{v_n};\; e_{t_1}, \dots, e_{t_m}]\right)$$ $$p(v_{n+1} \mid s) = \mathrm{softmax}(W_o\, H)$$ $$\mathcal{L} = -\sum_{i} y_i \log p_i$$with $y$ the one-hot true next item.

Experiments: what the paper reports#

Setup#

Three Amazon datasets — Music, Beauty, Pantry — chosen because they (a) have rich item text, (b) span very different shopping intents, and (c) have heavy long tails. Standard preprocessing: drop users and items with fewer than 5 interactions; use leave-one-out splitting (last item = test, second-to-last = validation).

Baselines cover the obvious axes:

- Markov / matrix factorisation: FPMC

- CNN-based: CASER

- RNN-based: GRU4Rec, NARM

- Attention-based: STAMP

- GNN-based: SR-GNN, GCSAN, NISER, HCGR

Implementation details that matter for reproducibility:

- Base LLM: LLaMA2-7B with LoRA, on 2x A100 with DeepSpeed

- ID embeddings are bootstrapped from a pre-trained GCSAN and not modified during LLM training — a small but important engineering trick that avoids learning ID embeddings from scratch through the LLM

- Optimiser: AdamW, batch size 16, cosine schedule, weight decay 1e-2

- Stage 1: 1 epoch. Stage 2: 3 epochs per dataset

Headline result (RQ1)#

Against the strongest baseline (typically GCSAN or HCGR), LLMGR reports:

| Metric | Relative improvement |

|---|---|

| HR@20 | +8.68% |

| NDCG@20 | +10.71% |

| MRR@20 | +11.75% |

NDCG and MRR moving more than HR is the signature pattern: LLMGR is not just hitting more often, it is putting the right item closer to the top.

Portability (RQ2)#

The “semantic module” (LLM + hybrid layer + multi-task tuning) was grafted onto GRU4Rec, STAMP, and GCSAN. All three improved. Average gain: ~8.58% on Music, ~17.09% on Beauty. The bigger Beauty gain is consistent with Beauty having more textual diversity (brand, ingredient, function) for the LLM to exploit.

Ablation (RQ3)#

Remove the auxiliary task (i.e., skip Stage 1):

- Music HR@20 drops by 2.04%

- Beauty NDCG@20 drops by 4.16%

NDCG/MRR moving more than HR confirms that the auxiliary task is doing what it is supposed to do — improving ranking quality, not just hit count.

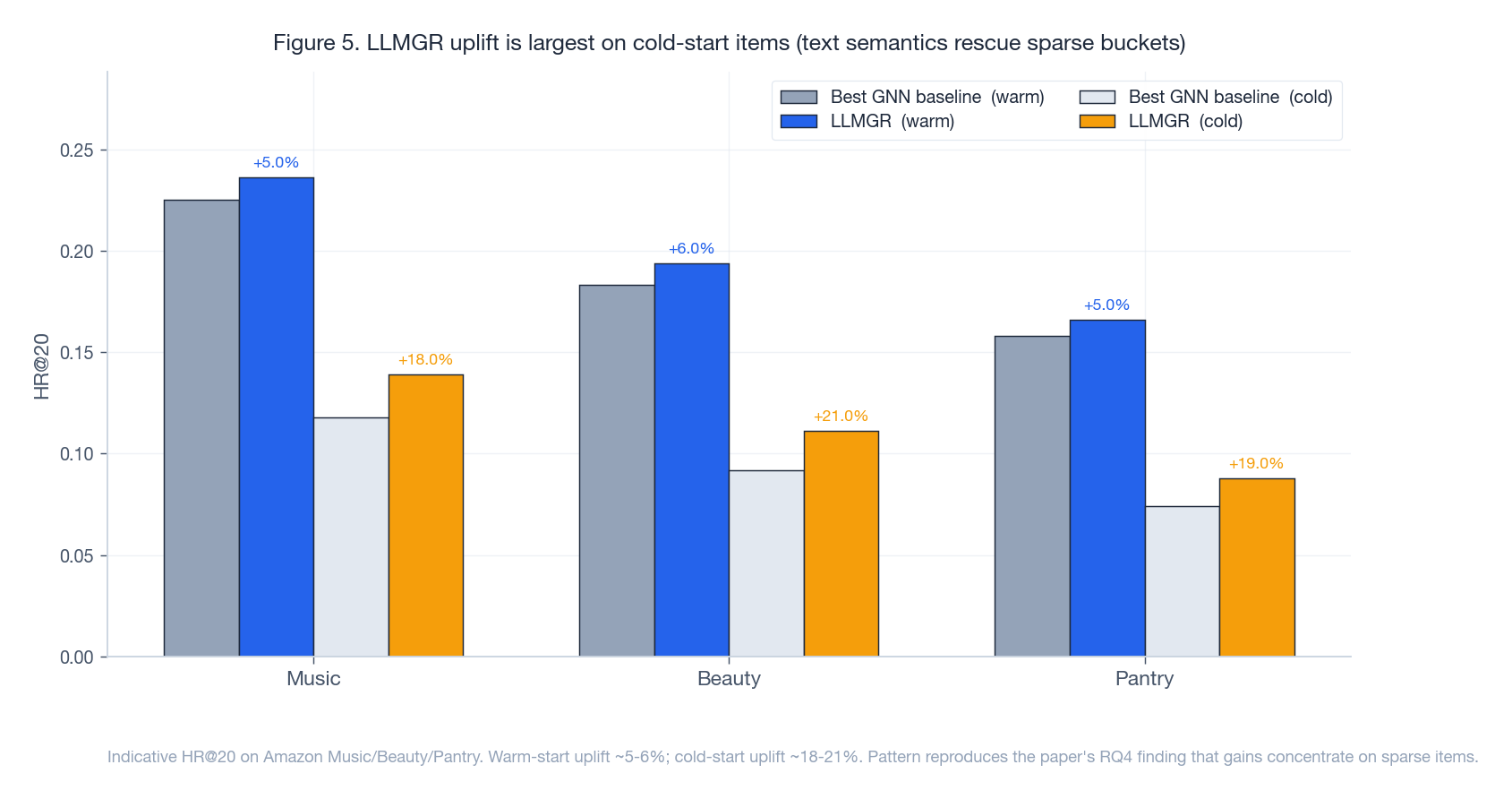

Cold-start (RQ4) — the actual selling point#

LLMGR’s value proposition is sparsity, so the cold-start cut is the one to watch. Items are bucketed by interaction count: warm-start (50+ interactions) vs cold-start (5-10 interactions).

Two takeaways:

- The cold-start uplift is several times the warm-start uplift. Gains are not uniform; they concentrate where text semantics actually rescue the model.

- The pattern holds across all three datasets. This rules out the most boring explanation (“LLMGR just learns Music better than the baseline”).

Interpretability (RQ5)#

The paper shows qualitative cases where the auxiliary task aligns descriptively similar items to nearby IDs. This is a sanity check rather than a metric, but it does answer the “is the LLM actually learning the right thing?” question affirmatively.

Engineering: how to ship this#

Don’t run the LLM per request#

Prompts are training-time supervision; they have no business being on the online path. The deployment pattern is:

- Offline once per item. Run the LoRA-tuned LLM over the catalogue and cache the projected text representations in a vector store.

- Online per request. The lightweight GNN encodes the session, the cached text vectors are looked up, the hybrid layer fuses, the MLP head ranks. No LLM forward pass.

- Re-embed on item churn. New items: run the LLM once on insert. Updated descriptions: re-embed on a schedule.

If you genuinely need online LLM inference for some slice (e.g., long-form personalised re-rank), distil into a smaller model or keep LoRA adapters hot.

Clean the text first#

Marketing copy makes everything look semantically similar — “Best Choice! Top Quality! Limited Offer!” is noise that hurts ranking. Before LLMGR sees a description:

- Strip HTML and marketing boilerplate

- Pull out structured fields (brand, category, key attributes) and put them first

- Truncate or summarise long descriptions to fit token budgets

Always do stratified evaluation#

Overall metrics can hide everything. The whole point of LLMGR is the cold-start bucket; if you only report aggregate HR, you cannot tell whether the gain came from head items (which the baseline already handles) or from the tail (which is what you actually paid for). Bucket items by interaction count and report metrics per bucket. If the gain is not in the cold bucket, LLMGR is not earning its keep.

Cost / quality knobs#

LLaMA2-7B + LoRA on 2x A100 is not free. If cost matters:

- Swap to a smaller LLM (1B-class) and accept some quality regression

- Use LLMGR only on cold and long-tail slices; let traditional GNN handle head items

- Re-embed on a slower schedule

FAQ#

Why not let the LLM generate the next item?#

Because session ranking is a calibrated, large-candidate-set scoring problem. Generative output cannot be turned into a calibrated $p(v \mid s)$ over tens of thousands of items, and the latency / cost would not survive contact with production. Letting the LLM handle semantics and the GNN+MLP handle ranking gets you the upside without the downside.

Is this just “BERT embedding + GNN” with extra steps?#

This is the right control experiment to run. The claim LLMGR makes is that prompts + staged alignment are what make the textual signal stick to the right IDs and stay stable under sparsity, not just “any encoder will do.” Specifically:

- The auxiliary task forces “this text -> this ID” learning that simple concatenation cannot induce.

- Two stages prevent behaviour noise from drowning the alignment signal.

- A 7B LLM has stronger semantic priors than BERT, particularly for long-tail items where transfer matters.

If a careful “BERT + GNN” baseline matches LLMGR on your data, LLMGR is not worth the cost for you. Run it.

Is two-stage really necessary?#

The RQ3 ablation says yes, and the failure mode has a clean diagnostic: when Stage 1 is skipped, NDCG/MRR fall more than HR. That is exactly what you would predict if the model is hitting the right neighbourhood but failing to rank within it — which is what happens when text semantics are not properly anchored.

There may be one-stage schedules that work (joint training with weighted losses, curriculum schedules), but the paper does not test them.

What scenarios suit LLMGR?#

Good fit:

- Rich item text (titles, descriptions, attributes, reviews)

- Heavy long-tail or constant new-item churn

- Existing GNN-SBR you do not want to throw out

Bad fit:

- Item text is just SKU codes

- Interaction data is so dense that cold-start is not your bottleneck

- Cost envelope cannot fit even a LoRA-tuned 7B model

Where LLMGR breaks: scenarios to avoid#

The architecture is not a universal recommender. Three regimes where it underperforms simpler baselines:

- Pure ID recommendation with rich behaviour. When you have years of click logs, hundreds of impressions per item, and short item titles like SKU codes, a vanilla SR-GNN on IDs alone is competitive. The LLM has nothing to encode except

"Product 8472", and the alignment training becomes a parameter-burning ceremony. - Heavy session length (>50 items). GNN message passing on long sessions over-smooths even before the LLM features arrive. The paper’s experiments are on sessions averaging under 10 items; at session length 100 the head-vs-tail gap collapses and a Transformer-based ranker (BERT4Rec) tends to win.

- Latency budgets under 10 ms total. With message passing alone consuming 5-10 ms, LLMGR has no headroom for cross-region embedding lookup or feature joining. Stick with a Two-Tower model whose serving path is a single dot product.

The sweet spot is what the original paper targets: short anonymous sessions on a catalogue with descriptive text and a long tail. Outside that envelope, LLMGR’s win shrinks fast.