Prefix-Tuning: Optimizing Continuous Prompts for Generation

Prefix-Tuning adapts frozen LLMs by learning continuous key/value vectors injected into attention. Covers the method, reparameterization, KV-cache mechanics, and comparisons with prompt tuning, adapters, and LoRA.

Fine-tuning a 1.5B-parameter GPT-2 model for each downstream task means saving a fresh 1.5B-parameter checkpoint every time. Across a dozen tasks, that is a substantial storage and serving headache, and it makes sharing a single base model essentially impossible. Prefix-Tuning (Li & Liang, 2021) takes the opposite stance: freeze every weight of the language model, and learn a tiny block of continuous vectors — the prefix — that is fed into the attention layers as if it were context the model already attended to. The model never changes; only the prefix does, and a different prefix produces a different “personality” on demand.

What You Will Learn#

- What a prefix actually is, and why injecting it as K/V at every layer is more powerful than prepending soft tokens at the input

- The exact attention math, parameter count, and the role of the reparameterization MLP

- How the prefix interacts with the KV-cache during autoregressive decoding

- Where Prefix-Tuning sits relative to prompt tuning, adapters, and LoRA

- Practical guidance on prefix length, multi-task storage, and common failure modes

Prerequisites#

- Transformer attention (Q/K/V projections, multi-head structure)

- Why parameter-efficient fine-tuning (PEFT) exists at all

- Basic familiarity with autoregressive language modeling

Motivation: adapt large models without touching their weights#

Full fine-tuning updates every parameter of a large LM. That is expensive in compute, ruinous in storage when you have many tasks, and incompatible with a single-model multi-tenant serving setup. PEFT methods aim to:

- reduce trainable parameters (less GPU memory for optimizer states)

- shrink per-task checkpoints from gigabytes to megabytes

- keep a single frozen backbone shared across many tasks

Prefix-Tuning is one of the earliest PEFT methods designed specifically for generation tasks (table-to-text, summarization, dialogue), and it remains a clean conceptual baseline.

What is a “prefix” in Prefix-Tuning?#

There are two natural places to insert learned parameters that look like additional context:

- Input-prefix model. Prepend learnable embeddings to the token embeddings. This is what prompt tuning (Lester et al., 2021) does. Only the input layer sees them; later layers receive their influence indirectly.

- Layer-wise K/V prefix model. At every layer, prepend learnable key/value vectors directly to $K$ and $V$ . This is Prefix-Tuning. Every layer gets its own dedicated knob.

The second formulation is strictly more expressive: it adds capacity at every layer, not just at the input. In practice it is also what works well for generation tasks.

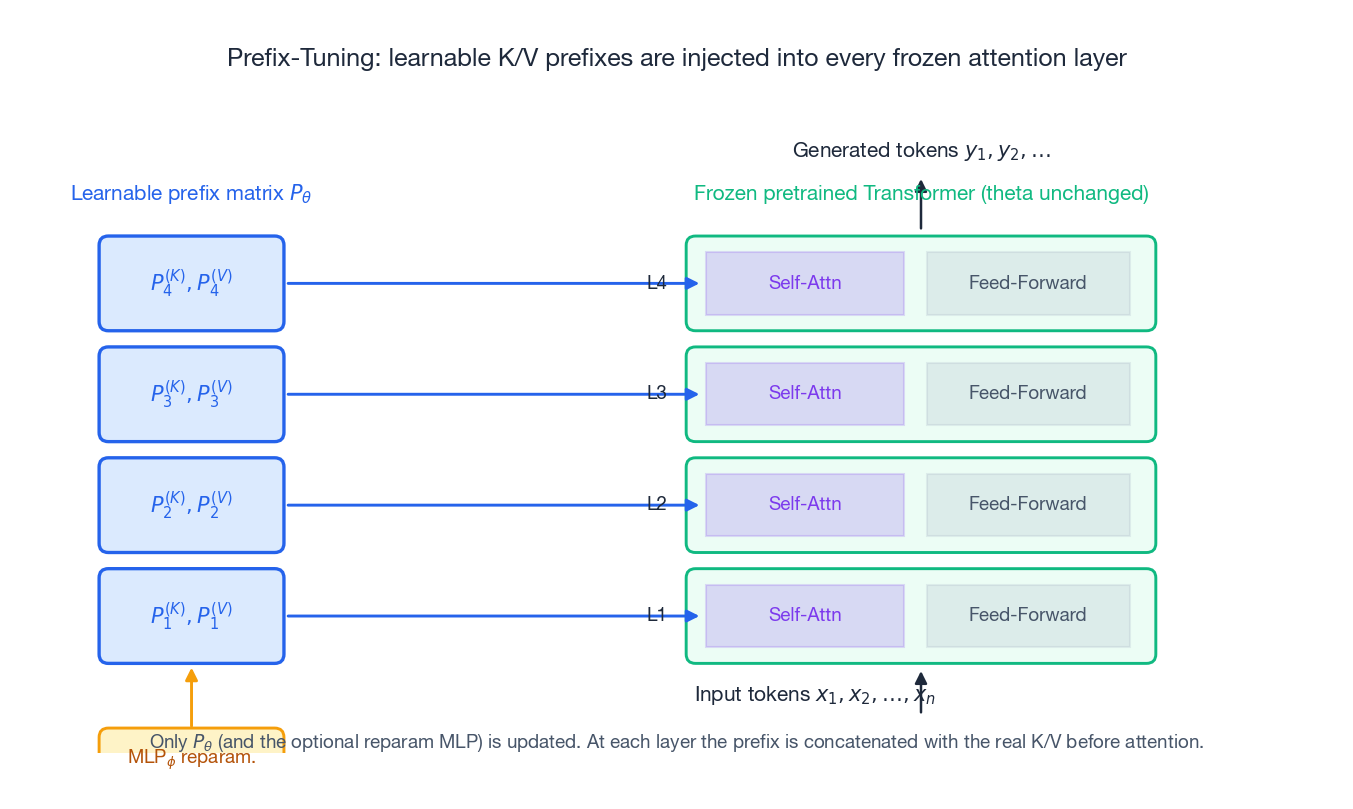

The attention-prefix formulation#

$$ P^{(\ell)} = \big[\, P^{(\ell)}_K \;\Vert\; P^{(\ell)}_V \,\big], \quad P^{(\ell)}_K, P^{(\ell)}_V \in \mathbb{R}^{L_{\text{prefix}} \times d}. $$ $$ \tilde{K}^{(\ell)} = \big[\, P^{(\ell)}_K \;;\; K^{(\ell)} \,\big], \qquad \tilde{V}^{(\ell)} = \big[\, P^{(\ell)}_V \;;\; V^{(\ell)} \,\big], $$ $$\text{Attn}\!\big(Q^{(\ell)},\, \tilde{K}^{(\ell)},\, \tilde{V}^{(\ell)}\big).$$Two things are worth noting:

- The queries $Q^{(\ell)}$ are not augmented. The prefix only ever appears on the “context” side of attention, so token positions still attend to it but it does not produce its own output.

- Because $K$ and $V$ are extended, every generated token can read from the prefix at every layer. The prefix behaves like a learned, differentiable working memory threaded through the whole stack.

Parameter count: why it is efficient#

$$| \theta_{\text{prefix}} | = 2 \cdot L \cdot d \cdot L_{\text{prefix}} .$$For GPT-2 medium ($L = 24$ , $d = 1024$ ) and a typical $L_{\text{prefix}} = 10$ , this is about 0.5 M parameters versus the full model’s 355 M — roughly a 700× reduction. For GPT-2 XL the ratio gets even more dramatic.

Per-task storage drops from gigabytes to a few hundred kilobytes. That is the practical hook: you can ship one base model and a directory of small prefix files, one per task.

Why reparameterization helps#

$$P^{(\ell)}_K, P^{(\ell)}_V = \text{MLP}_\phi\!\big( P'^{(\ell)} \big),$$where $P'^{(\ell)} \in \mathbb{R}^{L_{\text{prefix}} \times d'}$ is a smaller latent prefix and $d' \ll d$ . Why this helps:

- The MLP smooths the optimization landscape; gradients flowing into a low-dimensional latent are better-conditioned than those landing on a wide $L_{\text{prefix}} \times 2d$ matrix.

- The non-linearity adds capacity that the prefix matrix alone cannot express, without unfreezing the backbone.

- A small shared MLP gives you partial parameter sharing across layers while still producing layer-specific outputs.

After training, the MLP can be discarded — only the materialized $P^{(\ell)}_K, P^{(\ell)}_V$ tensors are kept for inference, so the extra capacity is “free” at deploy time.

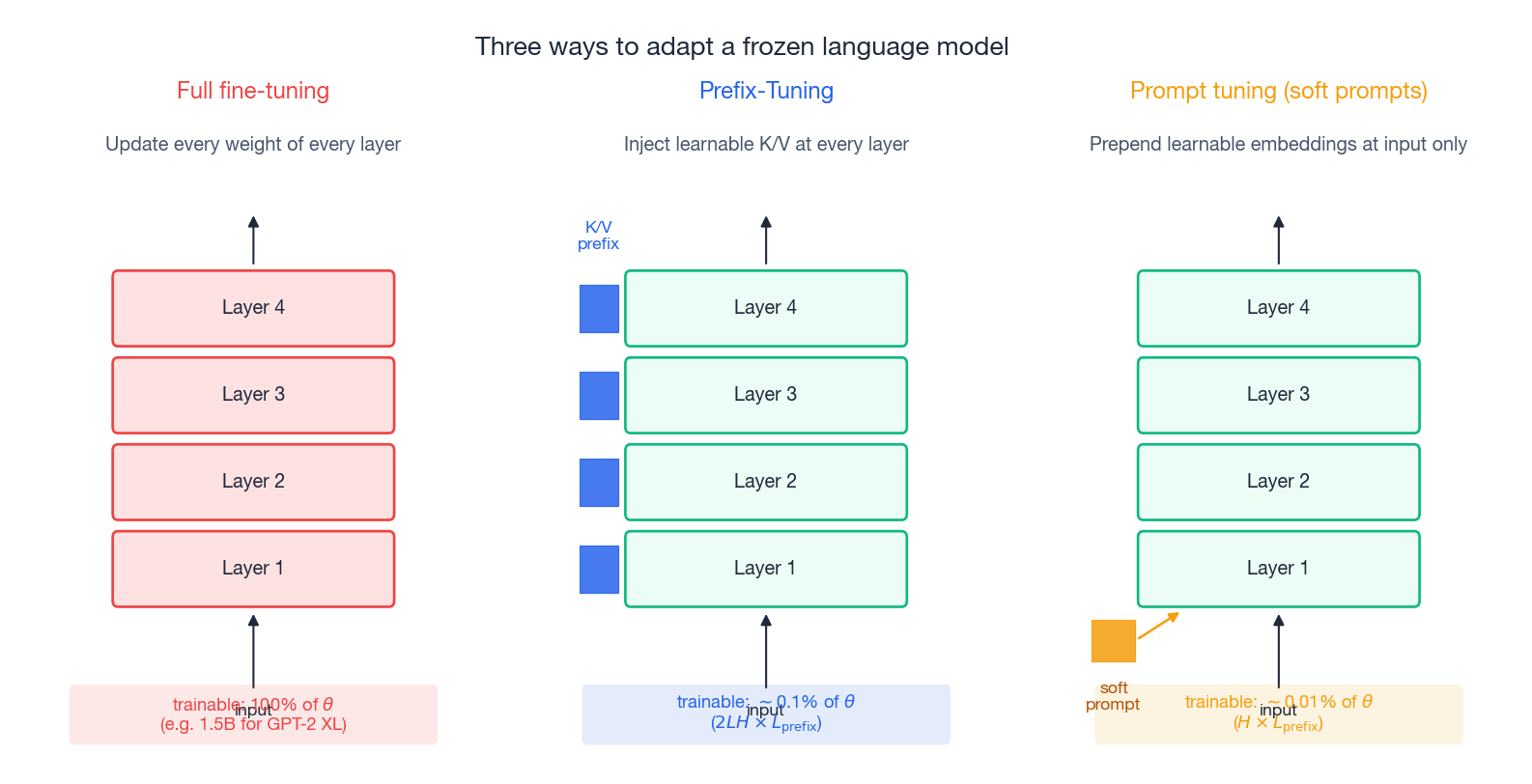

Three ways to adapt a frozen LM, at a glance#

The three approaches sit on a clear continuum:

- Full fine-tuning updates everything. Maximum capacity, maximum cost, no model sharing.

- Prefix-Tuning updates a small layer-wise K/V tensor. ~0.1 % of parameters, strong on generation, frozen backbone.

- Prompt tuning updates only an input-side soft prompt. ~0.01 % of parameters, simplest to implement, generally weaker than Prefix-Tuning at small model scale but catches up at very large scale (Lester et al., 2021).

LoRA (Hu et al., 2021) is a fourth option that is structurally different: it leaves the activations alone and instead adds a low-rank delta to the weight matrices, $W \mapsto W + \alpha BA$ with $A, B$ low rank. LoRA has dominated practical PEFT for instruction tuning, mostly because (a) it merges into the weights at inference with zero latency overhead and (b) the rank knob is more intuitive than prefix length for many users. Prefix-Tuning is still the right tool when you need to keep weights bit-identical, when you want per-request swappable adapters at decode time, or when the task is generation-flavored and you want the inductive bias of “extra context”.

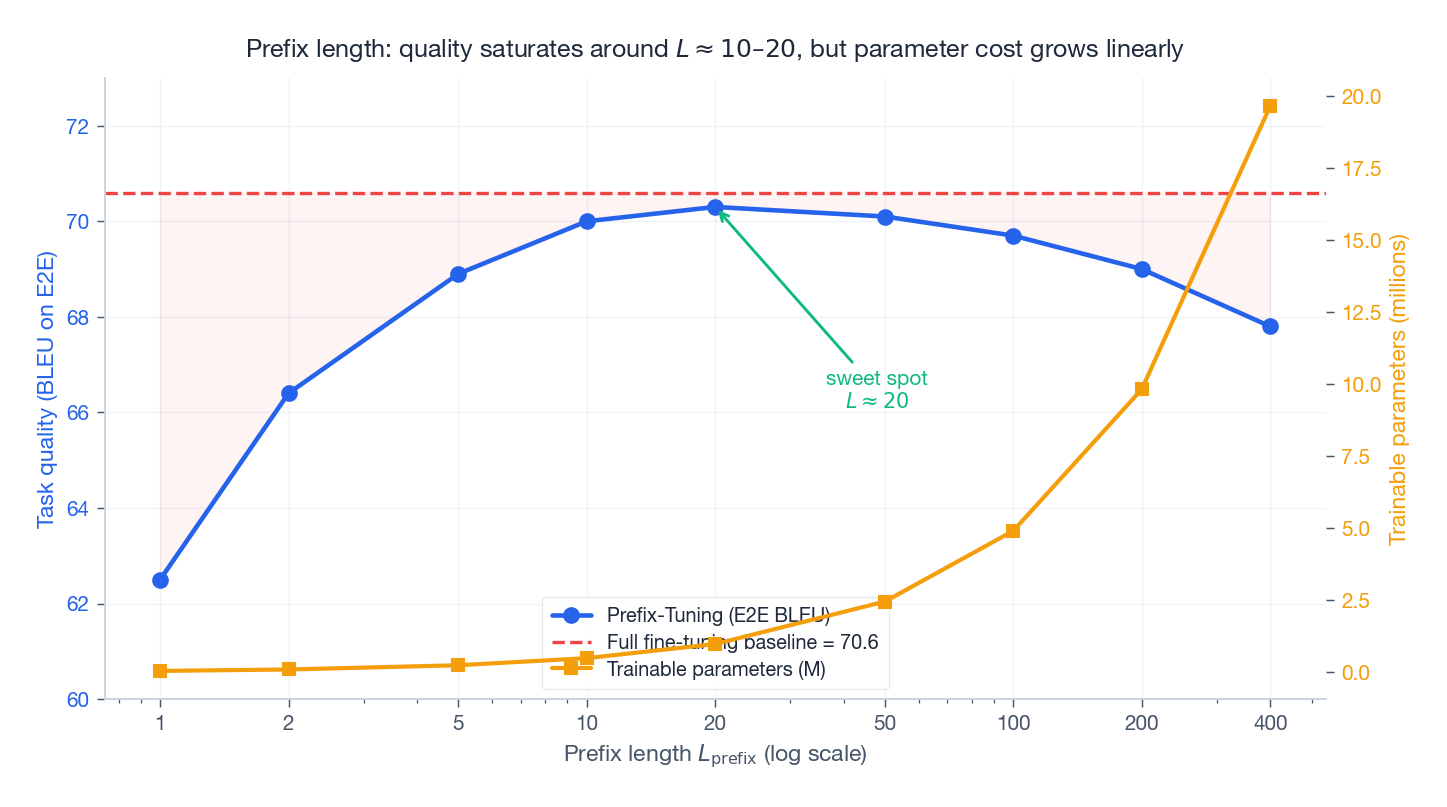

Prefix length: a sweet spot, not a hyperparameter to maximize#

Empirically, BLEU on E2E table-to-text rises sharply from $L_{\text{prefix}} = 1$ to about $L_{\text{prefix}} = 10$ , then flattens, and very long prefixes ($> 200$ ) begin to slightly hurt — likely a mild optimization issue rather than a capacity one, but the consequence is that simply making the prefix longer is not free. A useful starting point:

- Classification-style or short generation: $L_{\text{prefix}} \in [5, 10]$

- Long-form generation, few-shot regime: $L_{\text{prefix}} \in [10, 20]$

- More than ~100: rarely worth the parameter cost or the longer effective sequence at inference

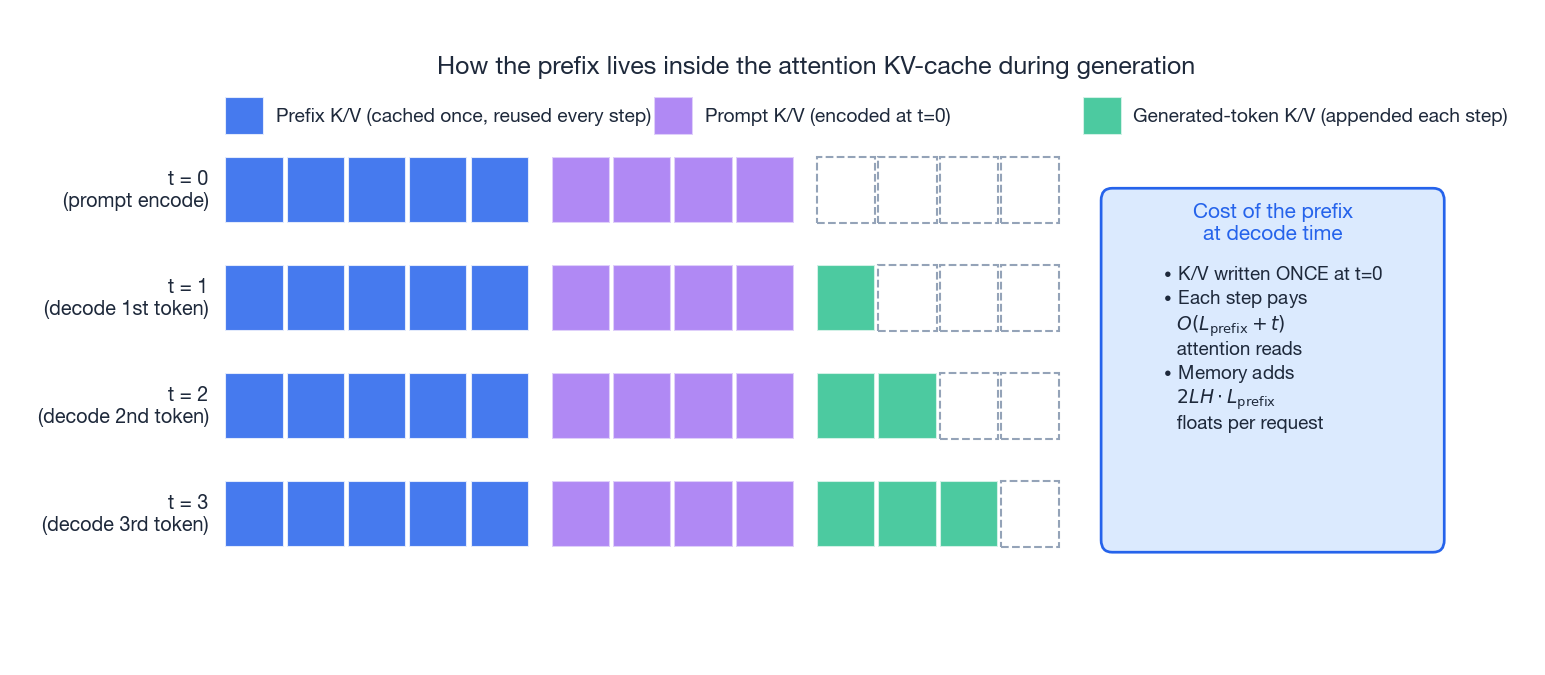

KV-cache mechanics during autoregressive decoding#

The prefix integrates very naturally with the standard KV-cache used by autoregressive decoders. At each layer, you maintain a tensor of cached $(K, V)$ pairs for everything the model has already attended to. With Prefix-Tuning:

- At step $t = 0$ , the cache is initialized with the prefix K/V tensors (they are produced once, at request load time, by reading $P^{(\ell)}_K, P^{(\ell)}_V$ from disk). Then the prompt is encoded and its K/V are appended.

- At each decode step $t$ , the newly generated token’s K/V are appended to the cache, exactly as in normal decoding.

- Attention at every step runs against the full extended cache: prefix + prompt + everything generated so far.

The runtime cost is therefore an additive $O(L_{\text{prefix}})$ on top of normal attention at each step, and a fixed extra memory of $2 \cdot L \cdot d \cdot L_{\text{prefix}}$ floats per active request. With $L_{\text{prefix}} = 10$ this is negligible; with $L_{\text{prefix}} = 200$ you are effectively decoding against a 200-token-longer context, which is a real cost worth measuring.

This mechanism also makes per-request task switching cheap: load a different prefix tensor, reset the cache, and the same base model now behaves like a different fine-tune.

Application: GPT-2 generation tasks#

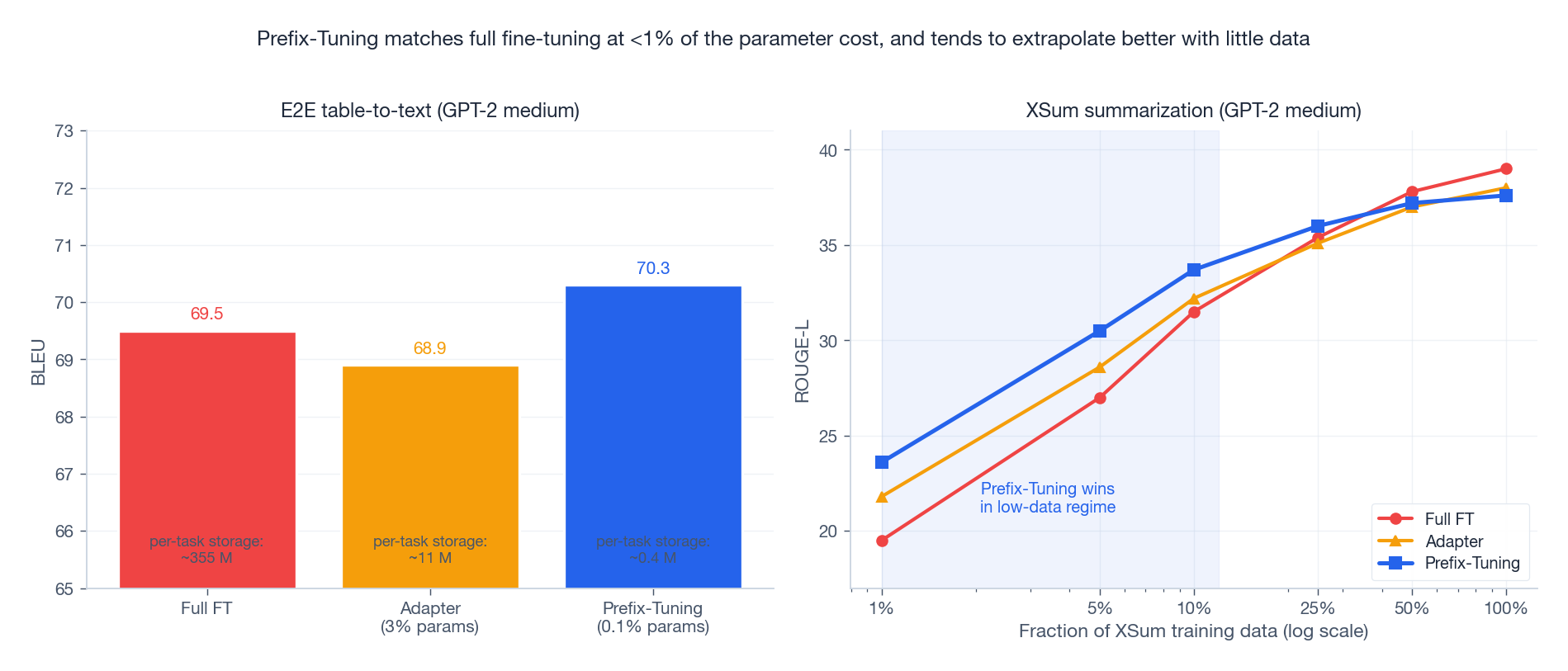

The original paper’s headline experiments are on GPT-2 medium for two generation benchmarks:

- E2E (table-to-text), where Prefix-Tuning matches or slightly beats full fine-tuning at 0.1 % of the parameter budget.

- XSum (abstractive summarization), where the gap to full fine-tuning closes as data grows but Prefix-Tuning has a clear edge in the low-data regime — under ~5 % of training data, the inductive bias of “you only have a small contextual knob to turn” prevents the kind of overfitting that full fine-tuning falls into.

The low-data result has aged well. It is the same story you see later with LoRA on instruction-tuning data: when you have very little task data, restricting the hypothesis class to a low-dimensional adapter is a regularizer, and the regularizer is usually worth more than the extra capacity full fine-tuning would give you.

Practical engineering notes#

Where to apply prefixes. In a decoder-only model, applying them to self-attention at every layer is the standard recipe. In an encoder-decoder model, you have to decide: encoder self-attention only, decoder self-attention, decoder cross-attention, or all three. For conditional generation tasks the original paper found cross-attention prefixes to be the most important.

Initialization. Random init works but is slow to warm up. Initializing the prefix from the embedding of a real prompt sentence (“summarize the following article:”) gives faster convergence on the same task — a small trick, but a real one.

Multi-task serving. Store one prefix tensor per task. A directory of 30 prefixes for GPT-2 medium is a handful of megabytes, versus ~10 GB for 30 full fine-tunes. Switching tasks in a serving stack means re-loading the tiny prefix file, not the model.

Common failure modes.

- No improvement after long training. Usually $L_{\text{prefix}}$ too small, learning rate too low, or the reparameterization MLP missing on a task that needs it.

- Loss is stable but generation is gibberish. Check that you are concatenating into the correct attention dimension and that the prefix is on the context side only. A surprisingly common bug is also putting it on $Q$ .

- Quality collapses with very long prefixes. Likely an optimization issue; try smaller LR, stronger reparameterization, or just shorten the prefix.

When to choose Prefix-Tuning#

Choose Prefix-Tuning when:

- The base model weights must remain bit-identical (regulatory or sharing reasons).

- You serve many tasks behind a single base model and want per-request hot-swap.

- The task is generation-flavored and the data budget is modest.

Choose LoRA instead when:

- You want the absolute lowest inference latency (LoRA can be merged into the weights).

- You need broad performance across instruction-tuning style mixtures.

- You prefer reasoning about “rank” rather than “prefix length”.

Choose full fine-tuning when:

- You truly have abundant task data and care about every last point of quality.

- Per-task storage is not a concern.

Memory and latency budget in production#

The “0.5 M parameters” headline hides three other costs you actually pay at serving time. None are deal-breakers, but they decide whether your stack scales to thousands of tasks or stalls at a few dozen.

Per-request prefix-cache memory. With $L = 24$ , $d = 1024$ , $L_{\text{prefix}} = 10$ , fp16, the prefix K/V tensors are $2 \cdot 24 \cdot 1024 \cdot 10 \cdot 2 = 983{,}040$ bytes per request — about 960 KB. With 100 concurrent requests on a single GPU that is 96 MB of VRAM dedicated purely to prefixes, on top of normal KV-cache. A 1 % overhead on an A10G’s 24 GB, but with $L_{\text{prefix}} = 200$ the same calculation yields 19 GB. The lesson: long prefixes turn into a real VRAM bill very quickly under load.

Prefix load latency. Reading a 1 MB prefix from local SSD costs sub-millisecond, from S3 it is 30-80 ms. If your serving stack switches tasks per request and loads from object storage, that 30-80 ms dominates total latency for short generations. The fix is a prefix LRU cache pinned in host memory — with 30 prefixes at 1 MB each, the entire working set is 30 MB and fits in any reasonable container.

Decode throughput hit. Adding 10 prefix tokens to the KV-cache costs roughly 10/N extra attention flops per generated token, where N is the cache length at that step. For a 200-token prompt with 100-token generation, the average extra cost is about 6-8 %. For a 4 K-token chat history the same prefix is closer to 0.3 %. Long-context serving makes Prefix-Tuning effectively free; tight conversational loops feel the hit.

The combined picture: Prefix-Tuning is cheap to store, cheap to swap, modest to serve, and only gets expensive when you push prefix length into the hundreds. A pragmatic deployment posture is “$L_{\text{prefix}} \in [8, 16]$ , in-memory LRU of 50 prefixes, S3 as the cold store”. You never have to think about it again.

Summary#

Prefix-Tuning reframes adaptation as learning a small, layer-wise contextual memory that is fed into a frozen Transformer’s attention. The architecture is simple, the parameter cost is in the sub-percent regime, and the low-data inductive bias is real. Even after LoRA largely took over the practical PEFT landscape, Prefix-Tuning remains the cleanest mental model for “adapting a model by giving it learned context” — and it is still the right tool for several real-world serving scenarios.

References#

- Li, X. L., & Liang, P. (2021). Prefix-Tuning: Optimizing Continuous Prompts for Generation . ACL 2021.

- Lester, B., Al-Rfou, R., & Constant, N. (2021). The Power of Scale for Parameter-Efficient Prompt Tuning . EMNLP 2021.

- Houlsby, N., et al. (2019). Parameter-Efficient Transfer Learning for NLP . ICML 2019 (Adapters).

- Hu, E. J., et al. (2021). LoRA: Low-Rank Adaptation of Large Language Models . ICLR 2022.

- He, J., et al. (2022). Towards a Unified View of Parameter-Efficient Transfer Learning . ICLR 2022 (unified PEFT framework).

- Yang, Z., et al. (2022). Robust Prefix-Tuning for Text Classification . THUMT blog companion to the ICLR 2022 paper.