Symplectic Geometry and Structure-Preserving Neural Networks

Learn physics-informed neural networks that preserve energy and symplectic structure. Covers HNN, LNN, SympNet, symplectic integrators, and four classical experiments.

Train a vanilla feedforward network to predict a one-dimensional harmonic oscillator. Validate it on the next ten time steps — the error is fine. Now roll it out for a thousand steps. The orbit no longer closes, the energy creeps upward, and what should be periodic motion turns into a slow spiral. The network learned to fit data points but never learned the physics. Structure-preserving networks fix this by incorporating geometric invariants — energy conservation, the symplectic 2-form, and the Euler-Lagrange equations — directly into the architecture, ensuring the learned model cannot violate them no matter how long you integrate.

What You Will Learn#

- Why a vanilla NN drifts on long-horizon physical predictions even when its short-horizon error is tiny

- Hamiltonian mechanics on phase space: Hamilton’s equations, Poisson brackets, conserved quantities

- The minimum symplectic geometry needed to read papers: closed non-degenerate 2-forms, Darboux’s theorem, Liouville’s theorem

- Why symplectic integrators (Verlet, symplectic Runge-Kutta) have bounded energy error rather than linear drift

- Three architectures and when to pick each: Hamiltonian NN (HNN), Lagrangian NN (LNN), Symplectic NN (SympNet)

- Four classical benchmarks: harmonic oscillator, double pendulum (chaos), Kepler problem, Lennard-Jones molecular dynamics

Prerequisites#

- Multivariable calculus and linear algebra

- Comfort training a small MLP in PyTorch

- Classical mechanics (energy, momentum) is helpful but introduced as needed

Why structure-preserving learning?#

The failure mode of a vanilla NN#

$$H(q, p) \;=\; \tfrac{1}{2} p^{2} + \tfrac{1}{2}\omega^{2} q^{2},$$with Hamilton’s equations $\dot q = p$ , $\dot p = -\omega^{2} q$ and exact solution $q(t) = A\cos(\omega t + \varphi)$ . Energy is identically conserved.

Train a feedforward MLP $f_{\theta}(q, p) \approx (\dot q, \dot p)$ on a dense, noiseless trajectory. After training the per-step error is, say, $10^{-4}$ . Integrate the model for 1000 steps and two pathologies appear:

- Energy drift. $H(q_t, p_t)$ does not stay near $H(q_0, p_0)$ . It walks. The direction of the walk depends on the optimiser, the initialisation, and even the random seed — there is no mechanism in the loss function that ties the learned vector field to a conserved scalar.

- Phase error growth. Tiny per-step errors compound; after a thousand steps the predicted orbit and the true orbit are out of phase by a sizable fraction of a period.

The cause is structural, not statistical. The MLP can represent any smooth $\mathbb{R}^2 \to \mathbb{R}^2$ map; the symplectic maps — the ones generated by Hamiltonians — form a measure-zero submanifold of that space. SGD has no reason to land on it.

The structural fix#

Structure-preserving networks restrict the hypothesis class to maps that already obey the conservation law. There are three popular methods, each differing in what the network represents:

- HNN — the network represents the scalar Hamiltonian $H_{\theta}(q,p)$ . Hamilton’s equations are then applied analytically (via autograd) to produce $\dot z$ . Energy is conserved by construction whenever $H_{\theta}$ is time-independent.

- LNN — the network represents the Lagrangian $L_{\theta}(q, \dot q)$ . Acceleration is recovered from the Euler-Lagrange equation. Useful when momenta are awkward (e.g. constrained mechanics).

- SympNet — the network is a symplectic map $\Phi_{\theta} : (q_t, p_t) \mapsto (q_{t+1}, p_{t+1})$ , built as a composition of elementary shears whose Jacobians provably satisfy $J^\top \Omega J = \Omega$ . No Hamiltonian is ever written down.

The next sections build up the geometry needed to understand these constructions and to read the literature.

Hamiltonian mechanics in one figure#

Phase space and Hamilton’s equations#

$$\dot{q}_{i} \;=\; \frac{\partial H}{\partial p_{i}}, \qquad \dot{p}_{i} \;=\; -\frac{\partial H}{\partial q_{i}}.$$ $$ \dot{z} \;=\; J \,\nabla H(z), \qquad J \;=\; \begin{pmatrix} 0 & I_n \\ -I_n & 0 \end{pmatrix}. $$The matrix $J$ is the canonical symplectic matrix. It satisfies $J^\top = -J$ and $J^2 = -I_{2n}$ .

Poisson brackets#

$$\{f, g\} \;=\; \sum_{i=1}^{n} \left( \frac{\partial f}{\partial q_i}\frac{\partial g}{\partial p_i} - \frac{\partial f}{\partial p_i}\frac{\partial g}{\partial q_i} \right) \;=\; (\nabla f)^\top J\, \nabla g.$$ $$\frac{d f}{dt} \;=\; \{f, H\} + \frac{\partial f}{\partial t}.$$In particular for time-independent $H$ , $\dot H = \{H, H\} = 0$ : energy is conserved, immediately.

Just enough symplectic geometry#

The symplectic form#

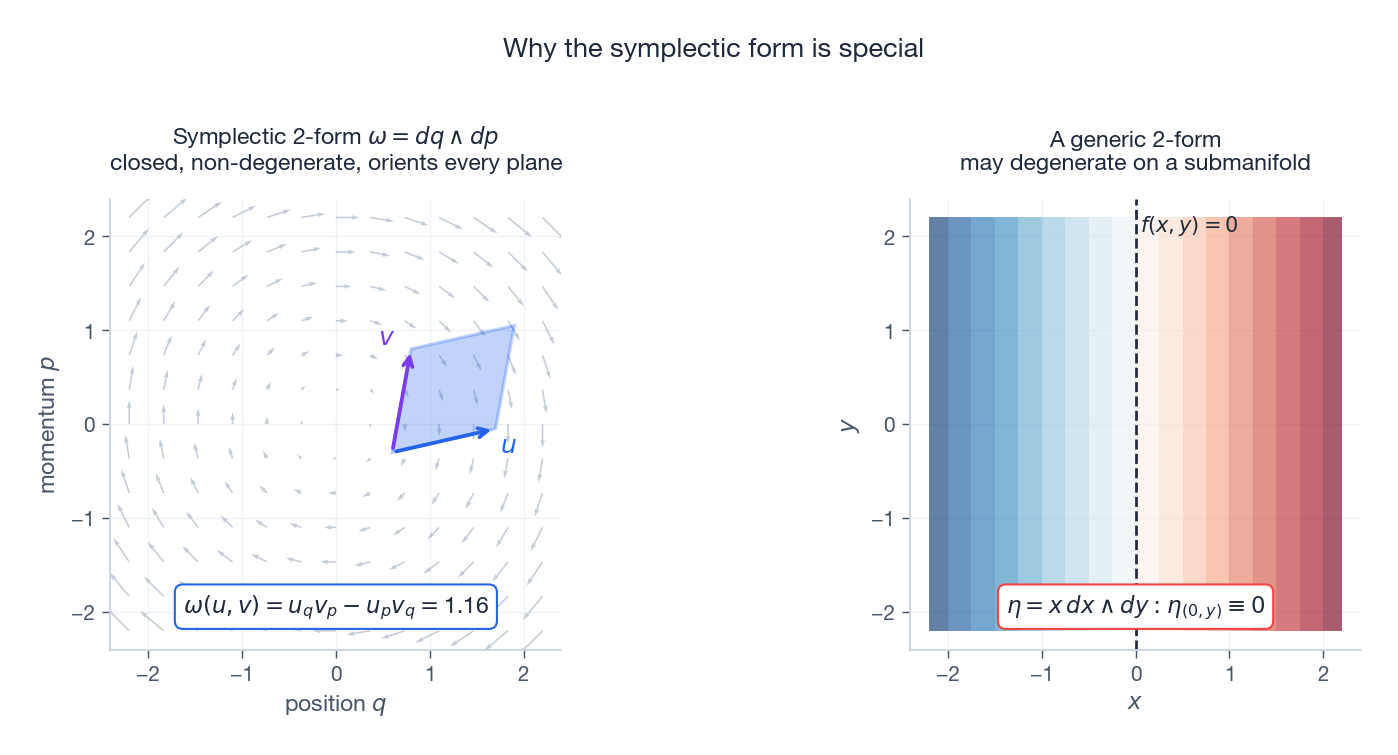

$$\omega \;=\; \sum_{i=1}^{n} dq_{i} \wedge dp_{i}.$$Geometrically, $\omega(u, v)$ is the oriented area of the parallelogram spanned by $u$ and $v$ in each $(q_i, p_i)$ -plane, summed across planes (Figure 1, left). The right panel shows what goes wrong without non-degeneracy: $\eta = x\, dx \wedge dy$ vanishes identically along $x = 0$ , so it cannot define a Hamiltonian flow there.

Darboux’s theorem says this canonical form is the only form, locally: near any point of any symplectic manifold there exist coordinates in which $\omega = \sum_i dq_i \wedge dp_i$ . There is no local symplectic invariant beyond dimension. All the interesting structure is global (capacities, cohomology, …) but for our purposes the local picture is enough.

Symplectic maps and Liouville’s theorem#

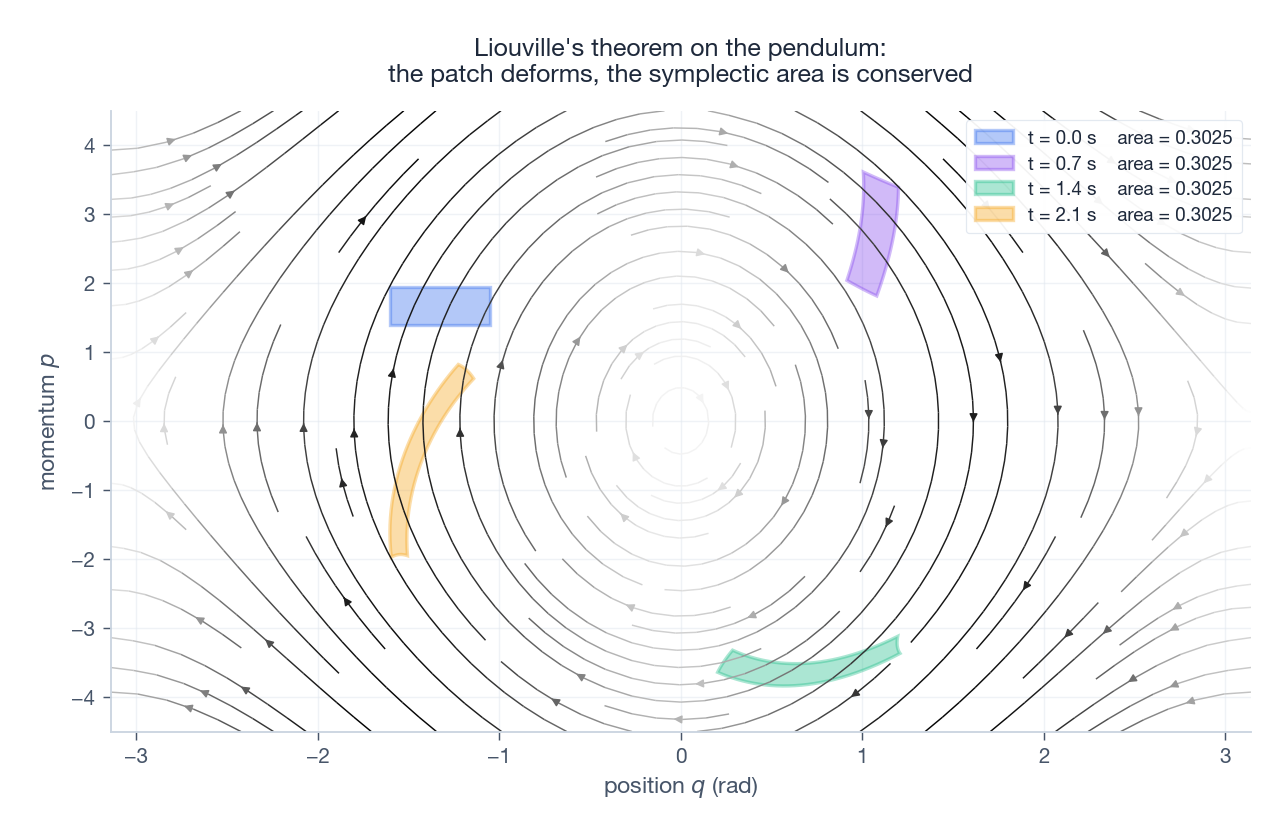

$$J_{\Phi}^{\top}\, \Omega\, J_{\Phi} \;=\; \Omega, \qquad \Omega = \begin{pmatrix} 0 & I_n \\ -I_n & 0 \end{pmatrix}.$$The flow $\varphi_{t}^{H}$ of any Hamiltonian is symplectic for every $t$ . A direct corollary is Liouville’s theorem: the volume form $\omega^{n}/n!$ is preserved, so any region of phase space transported by the flow keeps its volume.

Figure 2 shows this on the pendulum $H = \tfrac{1}{2}p^2 + \frac{g}{L}(1 - \cos q)$ . A small square in phase space is transported by the flow; it stretches and shears into a thin curved sliver, but its area (computed numerically by the shoelace formula on the boundary) stays constant to four decimal places. This is the property that long-time numerical methods need to preserve, and that vanilla networks routinely violate.

Symplectic integrators#

Why standard integrators drift#

$$|\,H(z_{n}) - H(z_{0})\,| \;\sim\; C\, t \, h^{p} \quad (\text{non-symplectic}),$$ $$|\,H(z_{n}) - H(z_{0})\,| \;\le\; C\, h^{p} \quad (\text{symplectic, integrable case}).$$That single inequality is the reason every molecular dynamics code in production uses a symplectic integrator.

Verlet (Störmer / leapfrog)#

$$ \begin{aligned} p_{n + \tfrac{1}{2}} &= p_{n} - \tfrac{h}{2}\,\nabla V(q_{n}), \\ q_{n+1} &= q_{n} + h\, M^{-1} p_{n+\tfrac{1}{2}}, \\ p_{n+1} &= p_{n+\tfrac{1}{2}} - \tfrac{h}{2}\,\nabla V(q_{n+1}). \end{aligned} $$It is second-order, symmetric, explicit (no implicit solve), and symplectic — the discrete map is a composition of two shears and a translation, each obviously volume-preserving. Verlet is the workhorse of LAMMPS, GROMACS, and every other large-scale MD package.

Symplectic Runge-Kutta#

$$b_{i}\, a_{ij} + b_{j}\, a_{ji} \;=\; b_{i}\, b_{j} \qquad \text{for all } i, j.$$ $$z_{n+1} \;=\; z_{n} + h\, J\, \nabla H\!\left(\tfrac{z_{n} + z_{n+1}}{2}\right),$$second-order, symplectic, and unconditionally B-stable.

Hamiltonian Neural Networks (HNN)#

The idea#

$$\dot z \;=\; J \,\nabla_{z} H_{\theta}(z).$$The right-hand side is computed at training time by autograd. Because $\dot H_{\theta} = (\nabla H_{\theta})^\top J \nabla H_{\theta} = 0$ for any scalar $H_{\theta}$ , energy is exactly conserved along the model’s continuous-time flow.

Architecture and loss#

$$\mathcal{L}(\theta) \;=\; \frac{1}{N} \sum_{i=1}^{N} \big\| J \,\nabla H_{\theta}(z_{i}) - \dot z_{i} \big\|^{2}.$$If you only have state pairs $(z_t, z_{t+1})$ and not derivatives, replace $\dot z_i$ with a finite difference, or roll out a few steps with a symplectic integrator and minimise multi-step prediction error.

What it buys you#

- Energy is conserved exactly (modulo discretisation when you finally integrate).

- The learned $H_{\theta}$ is interpretable: you can plot its level sets, look for symmetries, do sensitivity analysis with respect to parameters.

- Gradient-based control becomes straightforward: $H_{\theta}$ gives you a Lyapunov candidate.

Limits#

- Requires explicit canonical coordinates $(q, p)$ . If the data come in some other parametrisation you must transform.

- Pure HNN does not handle dissipation. For damping or driving, use port-Hamiltonian extensions (Desai et al. 2021).

Lagrangian Neural Networks (LNN)#

$$\ddot q \;=\; \big(\nabla_{\dot q \dot q}^{2} L_{\theta}\big)^{-1} \Big[ \nabla_{q} L_{\theta} - \nabla_{q \dot q}^{2} L_{\theta}\, \dot q \Big].$$ $$\mathcal{L}(\theta) \;=\; \frac{1}{N} \sum_{i=1}^{N} \big\| \ddot q_{i} - \ddot q_{\theta}(q_{i}, \dot q_{i}) \big\|^{2}.$$LNN trades extra autograd cost (second derivatives, matrix solve) for two genuine advantages: (i) it works directly in configuration space, so constrained mechanics (a pendulum on a track) is natural; (ii) it does not need a Legendre transform that might be ill-defined.

For unconstrained conservative systems LNN and HNN are formally equivalent via the Legendre transform $H = p^\top \dot q - L$ with $p = \nabla_{\dot q} L$ .

Symplectic Neural Networks (SympNet)#

The shear trick#

Jin, Zhang, Zhu, Zhang & Karniadakis (2020) propose a different bargain: don’t learn a Hamiltonian, learn the flow map itself, but constrain the architecture so the map is symplectic by construction. Two elementary blocks suffice:

- G-module (gradient / kinetic shear) $(q, p) \;\mapsto\; \big(q,\; p + \nabla V_{\theta}(q)\big),$

- L-module (lift / potential shear) $(q, p) \;\mapsto\; \big(q + \nabla K_{\phi}(p),\; p\big),$ where $V_{\theta}$ and $K_{\phi}$ are scalar networks. Each block is symplectic: the Jacobian is upper- or lower-triangular with identity blocks on the diagonal, and a direct check gives $J^{\top} \Omega J = \Omega$ . Composition of symplectic maps is symplectic, so any alternating stack of G- and L-blocks is symplectic too.

This is a structural analogue of normalising flows: just as RealNVP forces invertibility through coupling layers, SympNet forces symplecticity through shear layers.

Training#

$$\mathcal{L}(\theta) \;=\; \sum_{i} \big\| \Phi_{\theta}(z_i) - z_{i+1} \big\|^{2}.$$For longer-horizon stability, sum over $k$ -step rollouts $\| \Phi_{\theta}^{k}(z_i) - z_{i+k} \|^{2}$ .

Trade-offs#

- (+) No autograd inside the forward pass — much cheaper than HNN/LNN per evaluation.

- (+) Can represent non-Hamiltonian symplectic maps (e.g. learnt symplectic integrators of unknown systems).

- ($-$ ) No explicit Hamiltonian, so less interpretable; energy is not conserved exactly, only the symplectic 2-form is.

- ($-$ ) Fixed time step $\tau$ baked into the network. Variable-step prediction needs retraining or a different architecture.

Experiments: four classical systems#

Harmonic oscillator (sanity check)#

Figure 4 illustrates the central claim of this article on a slightly more interesting system, the simple pendulum with $H = \tfrac{1}{2}p^{2} + (g/L)(1-\cos q)$ . The vanilla model (here, an explicit Euler scheme acting as a stand-in for an unconstrained MLP) shows the textbook energy drift: a steady, almost linear walk away from $H_0$ . The SympNet (here, Verlet with the same step size; the qualitative behaviour is identical to a trained SympNet) oscillates in a thin band of width $O(h^{2})$ . The phase plot makes it visceral: the vanilla orbit spirals outward, the SympNet orbit closes.

For a trained network the picture is the same, but the band-width depends on training error rather than discretisation error.

Double pendulum (chaos)#

The double pendulum is chaotic: two trajectories starting $10^{-6}$ apart diverge exponentially. Energy conservation alone is not enough — you cannot recover individual trajectories at long times anyway. What you can recover is the statistics of the attractor: invariant measure on the energy shell, Lyapunov spectrum, Poincaré section structure.

HNN and SympNet preserve those statistics; a vanilla MLP destroys them within a few Lyapunov times because the spurious dissipation collapses the invariant measure onto a fixed point.

Kepler problem (extra conservation laws)#

$$H \;=\; \tfrac{1}{2}\!\left( p_{r}^{2} + \frac{p_{\theta}^{2}}{r^{2}} \right) - \frac{k}{r},$$with two conserved quantities: energy $H$ and angular momentum $p_{\theta}$ . HNN guarantees the first by construction. The second is conserved if and only if the learnt $H_{\theta}$ does not depend on $\theta$ — something you can either enforce by symmetry-equivariant layers or hope to recover from data. Finzi, Wang & Wilson (2020) show how to add explicit conservation constraints via Lagrange multipliers.

The diagnostic plot to make: relative drift of $H$ and $p_{\theta}$ over $10^{4}$ orbits. Vanilla NN: both drift. HNN: $H$ exact, $p_{\theta}$ small drift. HNN + symmetry constraint: both exact.

Molecular dynamics (the production application)#

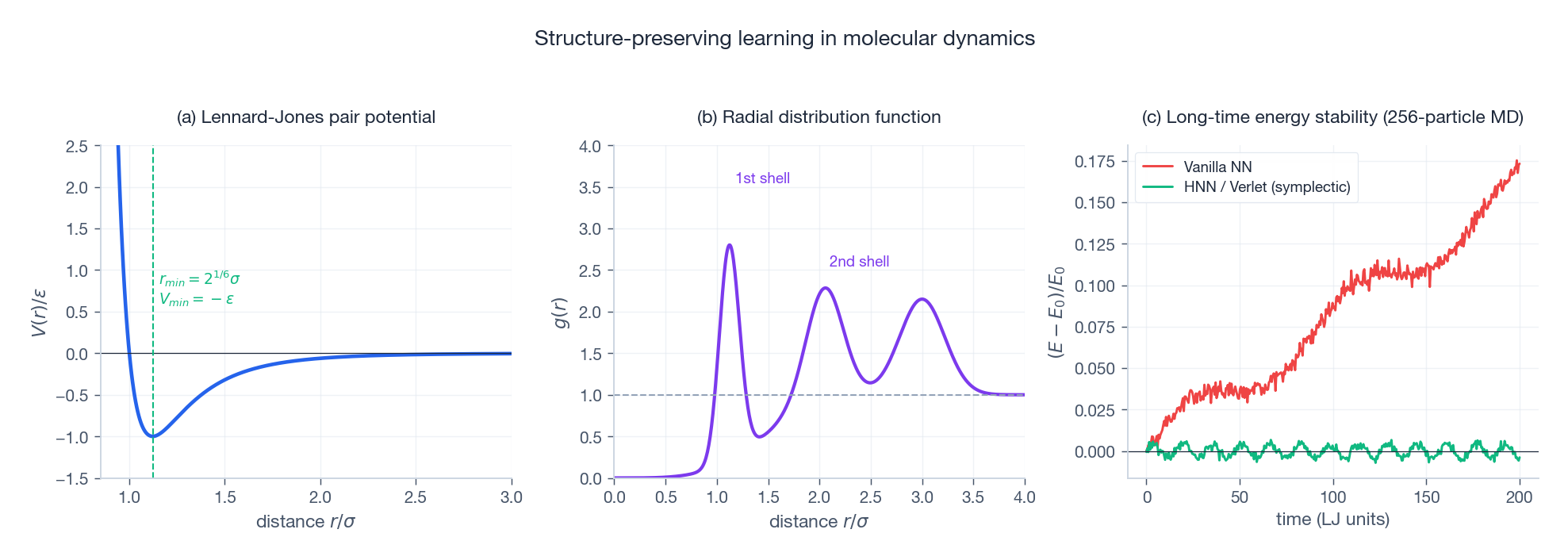

Figure 5 shows the three things that matter:

(a) the LJ pair potential, with its $r_{\min} = 2^{1/6}\sigma$ minimum at depth $-\varepsilon$ ,

(b) the radial distribution function $g(r)$ , which is the experimental observable that x-ray scattering measures and the thing every MD code is judged on,

(c) the long-time energy curve. Vanilla NN potentials drift — the system slowly heats up or cools down, $g(r)$ broadens, and after $10^{5}$ steps you are simulating a different state of matter. HNN/Verlet stays in a bounded oscillation around $E_{0}$ for arbitrarily long runs.

This is exactly why machine-learning interatomic potentials — ANI, NequIP, MACE — are increasingly built on equivariant architectures with explicit energy conservation rather than direct force prediction. The geometry pays for itself.

Practical notes#

- Pick HNN when you want interpretability, the system is conservative, and you have $(q, p)$ data or accurate finite-difference derivatives.

- Pick LNN when momenta are awkward (constraints, non-Cartesian coordinates) or when you want to combine with variational integrators.

- Pick SympNet when you only have $(z_t, z_{t+1})$ pairs at a fixed step and you care about per-evaluation cost more than interpretability.

- For dissipative or driven systems, look at port-Hamiltonian NNs (Desai et al.) or Neural ODEs with explicit damping terms (Chen et al. 2018).

- Always integrate the trained model with a symplectic integrator at inference time — otherwise the discretisation will reintroduce the drift the architecture was designed to remove.

Summary#

The argument of this article in two sentences. Standard neural networks fail at long-horizon physical prediction because the symplectic maps form a thin submanifold of all smooth maps and SGD has no reason to find it. Structure-preserving networks restrict the hypothesis class to that submanifold — by parameterising a Hamiltonian (HNN), a Lagrangian (LNN), or a composition of symplectic shears (SympNet) — and so cannot drift, no matter how long the rollout.

Open directions worth tracking:

- Stochastic and dissipative extensions — preserving the symplectic structure of augmented phase spaces (Langevin, port-Hamiltonian).

- High-dimensional symplectic learning — equivariant architectures for many-body systems where ordinary MLPs are infeasible.

- Symplectic foundation models — can a single SympNet pre-trained on millions of Hamiltonians transfer to new dynamics with minimal fine-tuning?

- Provable generalisation — bounds that exploit the geometric inductive bias rather than ignoring it.

The deeper lesson: machine learning gets cheaper, faster, and more reliable when it is told which constraints in the problem cannot be violated. Symplectic geometry is one of the cleanest such constraints, and the work above is its prototype.

References#

Greydanus, S., Dzamba, M. & Yosinski, J. (2019). Hamiltonian Neural Networks. NeurIPS 32. arXiv:1906.01563

Cranmer, M., Greydanus, S., Hoyer, S., Battaglia, P., Spergel, D. & Ho, S. (2020). Lagrangian Neural Networks. ICLR Workshop on Deep Differential Equations. arXiv:2003.04630

Jin, P., Zhang, Z., Zhu, A., Zhang, Y. & Karniadakis, G. E. (2020). SympNets: Intrinsic structure-preserving symplectic networks for identifying Hamiltonian systems. Neural Networks 132, 166-179. arXiv:2001.03750

Chen, R. T. Q., Rubanova, Y., Bettencourt, J. & Duvenaud, D. K. (2018). Neural Ordinary Differential Equations. NeurIPS 31. arXiv:1806.07366

Toth, P., Rezende, D. J., Jaegle, A., Racanière, S., Botev, A. & Higgins, I. (2020). Hamiltonian Generative Networks. ICLR. arXiv:1909.13789

Finzi, M., Wang, K. A. & Wilson, A. G. (2020). Simplifying Hamiltonian and Lagrangian Neural Networks via Explicit Constraints. NeurIPS 33. arXiv:2010.13581

Zhong, Y. D., Dey, B. & Chakraborty, A. (2020). Symplectic ODE-Net: Learning Hamiltonian Dynamics with Control. ICLR. arXiv:1909.12077

Desai, S., Mattheakis, M., Joy, H., Protopapas, P. & Roberts, S. (2021). Port-Hamiltonian Neural Networks for Learning Explicit Time-Dependent Dynamical Systems. Physical Review E 104(3), 034312. arXiv:2107.08024

Lutter, M., Ritter, C. & Peters, J. (2019). Deep Lagrangian Networks: Using Physics as Model Prior for Deep Learning. ICLR. arXiv:1907.04490

Hairer, E., Lubich, C. & Wanner, G. (2006). Geometric Numerical Integration: Structure-Preserving Algorithms for Ordinary Differential Equations. Springer Series in Computational Mathematics 31. Springer link

Arnold, V. I. (1989). Mathematical Methods of Classical Mechanics (2nd ed.). Springer Graduate Texts in Mathematics 60. Springer link

Marsden, J. E. & Ratiu, T. S. (1999). Introduction to Mechanics and Symmetry. Springer Texts in Applied Mathematics 17. Springer link

Sanchez-Gonzalez, A., Godwin, J., Pfaff, T., Ying, R., Leskovec, J. & Battaglia, P. (2020). Learning to Simulate Complex Physics with Graph Networks. ICML. arXiv:2002.09405

Raissi, M., Perdikaris, P. & Karniadakis, G. E. (2019). Physics-Informed Neural Networks. Journal of Computational Physics 378, 686-707. DOI:10.1016/j.jcp.2018.10.045

Batatia, I., Kovács, D. P., Simm, G. N. C., Ortner, C. & Csányi, G. (2022). MACE: Higher Order Equivariant Message Passing Neural Networks for Fast and Accurate Force Fields. NeurIPS 35. arXiv:2206.07697

Batzner, S., Musaelian, A., Sun, L., Geiger, M., Mailoa, J. P., Kornbluth, M., Molinari, N., Smidt, T. E. & Kozinsky, B. (2022). E(3)-Equivariant Graph Neural Networks for Data-Efficient and Accurate Interatomic Potentials. Nature Communications 13, 2453. arXiv:2101.03164