Tennis-Scene Computer Vision: From Paper Survey to Production

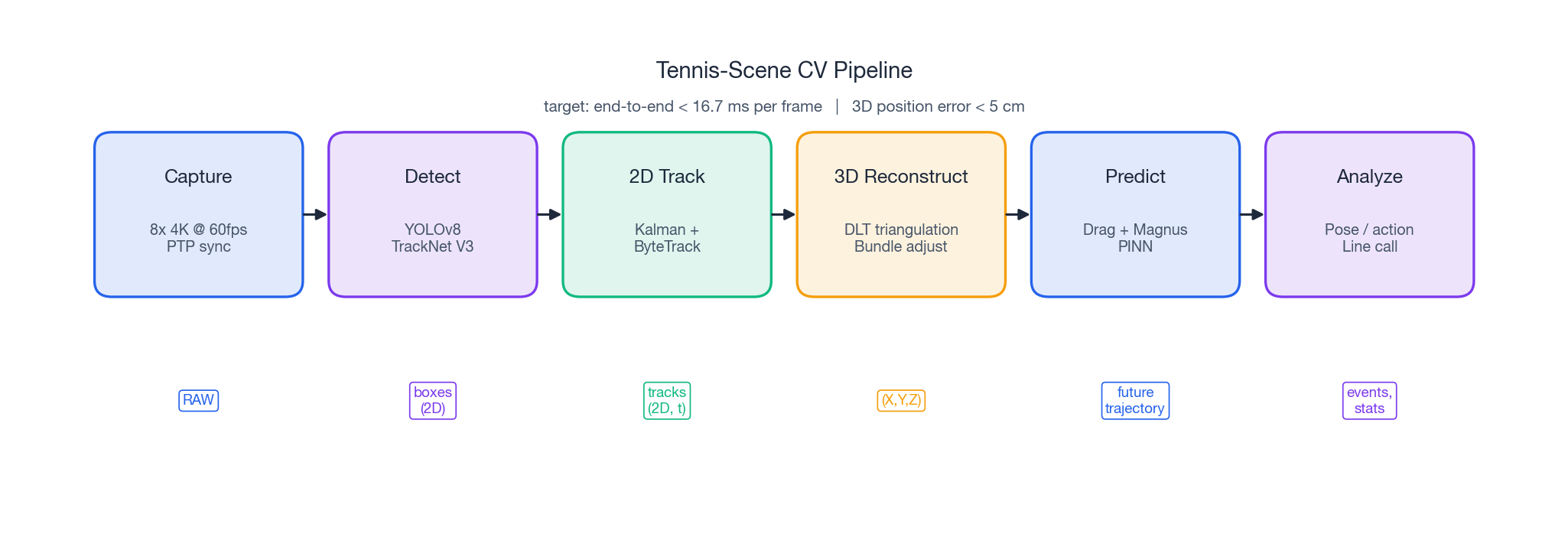

A complete CV system for tennis: small high-speed object detection, multi-camera 3D reconstruction, physics-based trajectory prediction, and pose-based action recognition. From the literature down to a 16.7 ms-per-frame deployment budget.

A 6.7 cm tennis ball travels at over 200 km/h. Reconstructing its 3D trajectory from eight 4K cameras in real time, while also classifying each player’s stroke, involves small-object detection, multi-view geometry, Kalman filtering, physics modeling, and human-pose estimation — all at once. This post follows the same steps as in deployment: state the constraints, survey the literature, choose, build, and lay out a millisecond-by-millisecond budget for production.

What You Will Learn#

- Why traditional detectors collapse on 10–20 px tennis balls and how the TrackNet line fixes it

- Multi-camera calibration, PTP synchronisation, and DLT triangulation in code and math

- A 9-state Kalman filter coupled with a drag-plus-Magnus ODE for trajectory prediction

- Action recognition: rule-based templates vs. end-to-end learning, and when each wins

- How to fit detection → 3D → tracking → pose → analytics into a 16.7 ms / frame budget

Prerequisites: pinhole camera model, basic Kalman filtering, and some PyTorch inference experience.

The entire pipeline has 16.7 ms per frame at 60 fps, so each step must complete in single-digit milliseconds. Each section allocates part of this budget.

Requirements: quantify “hard”#

Before building anything, pin down the non-negotiable numbers.

Capability matrix#

| Capability | Input | Output | Latency budget |

|---|---|---|---|

| Ball detection | Single 4K frame | 2D bbox + confidence | < 5 ms / camera |

| Multi-view 3D | Synced 2D detections (≥2 views) | $(X, Y, Z)$ + covariance | < 2 ms |

| Trajectory tracking | 3D observation sequence | position / velocity / accel | < 1 ms |

| Landing prediction | Current state + spin estimate | 1–2 s future trajectory | < 3 ms |

| Player pose | Single-camera frame | 17 keypoints | < 6 ms / person |

| Action classification | Keypoint sequence | Class + confidence | < 1 ms |

If any stage exceeds its budget, the pipeline drops from 60 fps to 30 fps, causing visible stutter.

The physics of “hard”#

Pixel budget for a small target. A tennis ball seen from the opposite baseline (~28 m away) at a 35 mm-equivalent focal length occupies about 12 px. A one-pixel center error translates to 2.3 cm of lateral 3D error — already at the threshold for a baseline call.

Motion blur. At 50 m/s and a 1/250 s shutter, the ball smears 20 cm in a single frame, creating a 30 px streak. Without sub-1/1000 s global shutters, no detection algorithm can fully recover the ball’s position.

Synchronisation, geometrically amplified. A 5 ms offset between two cameras puts the ball at different image positions by ~25 cm. Triangulation degenerates and the recovered 3D point drifts along an “anti-epipolar” line. PTP synchronization under 1 ms is therefore a hard requirement.

Occlusion and brief disappearance. The ball is occluded by the net band as it crosses, and there is always a blind region right after the bounce. Any single-shot detector misses several consecutive frames, so a tracker with strong physics priors must take over.
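The blur and synchronisation figures above are plain distance = speed × time arithmetic. A small sketch that reproduces them; the function names are illustrative only:

```python
def blur_length_m(speed_mps: float, shutter_s: float) -> float:
    """Distance the ball travels while the shutter is open."""
    return speed_mps * shutter_s


def sync_error_m(speed_mps: float, offset_s: float) -> float:
    """Apparent ball displacement between two cameras firing offset_s apart."""
    return speed_mps * offset_s


BALL_SPEED = 50.0  # m/s, the figure used in the text

blur_slow = blur_length_m(BALL_SPEED, 1 / 250)    # 0.20 m smear
blur_fast = blur_length_m(BALL_SPEED, 1 / 1000)   # 0.05 m smear
sync_5ms = sync_error_m(BALL_SPEED, 0.005)        # 0.25 m disagreement
sync_1ms = sync_error_m(BALL_SPEED, 0.001)        # 0.05 m disagreement
```

Both errors scale linearly with ball speed, which is why the shutter and the sync tolerance have to be specified for the fastest serve rather than the average rally shot.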

Literature survey and selection#

Six years of papers, sorted by sub-task. Each section ends with the choice that goes into production.

Small-object detection#

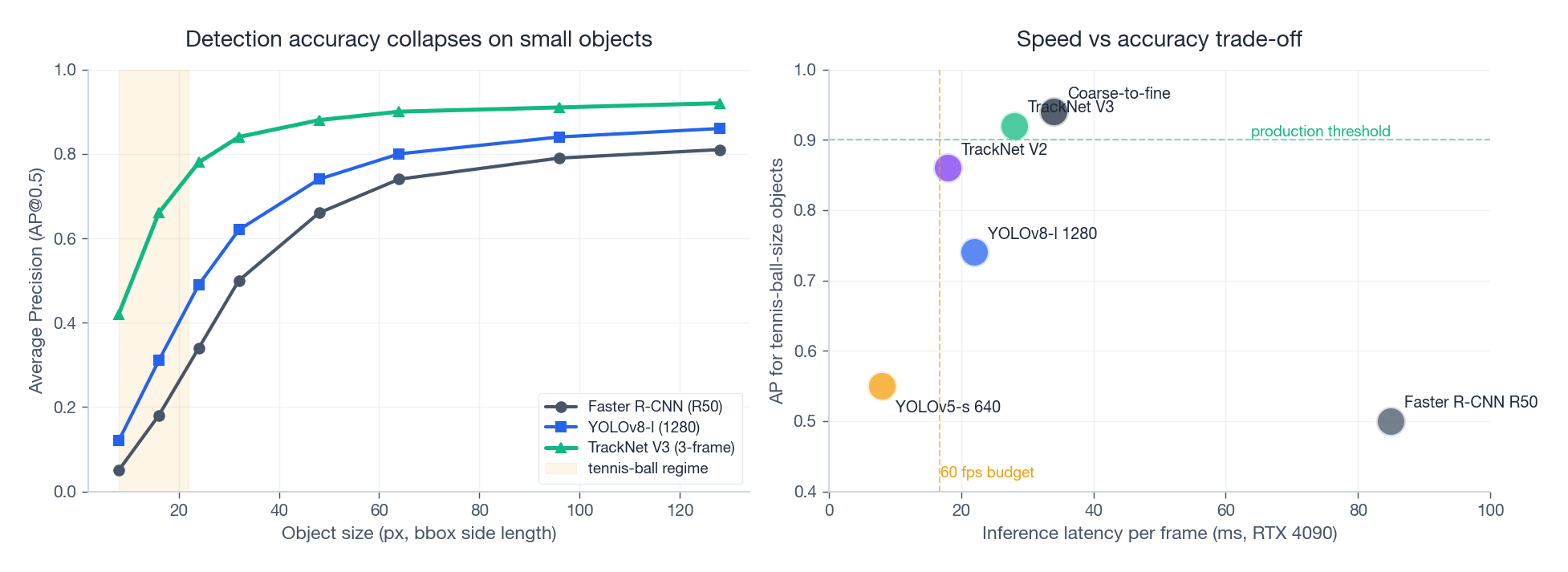

The failure of generic detectors on small objects is evident in the data:

Left: AP@0.5 vs. object side length in pixels — the tennis-ball range is 8–22 px, where Faster R-CNN scores 0.05–0.2 and even YOLOv8 at 1280 input only reaches 0.3–0.5. Right: latency vs. accuracy. The only models meeting both 60 fps (< 16.7 ms) and AP > 0.9 are on the TrackNet V2/V3 specialized track.

TrackNet (2019–2023) reframes “ball” as a temporal problem rather than per-frame detection:

- V1 used a VGG-U-Net consuming 3 consecutive frames and produced a heatmap; the first stable solution

- V2 swapped in MobileNetV2, dropping params from 15 M to 2.8 M, 3× faster, only 1.2 pp worse

- V3 added Transformer cross-frame self-attention and pushed high-speed detection success from 92.3% to 96.7%

A coarse-to-fine alternative (YOLOv5 proposals + ResNet-50 verification) reduces false positives by ~60% but adds 10 ms, making it suitable for offline replay analysis, not live broadcast.

Choice. Live path: YOLOv8-l @ 1280 + 3-frame temporal vote. Offline path: stack TrackNet V3 as a refinement stage.

Multi-view geometry and 3D reconstruction#

Hartley & Zisserman’s Multiple View Geometry is still, more than twenty years on, the right book to read. Three things to internalise:

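Pinhole projection:

$$s\,\mathbf{x} = K\,[R \mid t]\,\mathbf{X}$$

where $\mathbf{x}$ is the homogeneous pixel coordinate, $s$ is a projective scale factor, $K$ is the $3\times3$ intrinsic matrix, $[R \mid t]$ is the $3\times4$ extrinsic, and $\mathbf{X}$ is the world-frame 3D homogeneous point.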

Zhang’s method (1998): solve $K$ from a checkerboard. Need at least 10 images at varied angles, and the reprojection error must be < 0.5 px to be production-grade.

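DLT triangulation: each view $i$ with projection matrix $P_i = K_i\,[R_i \mid t_i]$ and observed pixel $(x_i, y_i)$ contributes two linear constraints on $\mathbf{X}$, where $P_i^{(k)}$ denotes the $k$-th row of $P_i$:

$$ \begin{aligned} \left(x_i\,P_i^{(3)} - P_i^{(1)}\right)\mathbf{X} &= 0 \\ \left(y_i\,P_i^{(3)} - P_i^{(2)}\right)\mathbf{X} &= 0 \end{aligned} $$

Stack the rows from $n$ views into $A_{2n\times4}\mathbf{X}=0$ and take the right singular vector of the smallest singular value via SVD.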
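A minimal NumPy sketch of this linear triangulation, checked on two synthetic cameras. The function name `triangulate_dlt`, the intrinsics, and the camera poses are illustrative assumptions, not the production code:

```python
import numpy as np


def triangulate_dlt(projections, pixels):
    """Linear (DLT) triangulation of one 3D point from n >= 2 views.

    projections: list of 3x4 camera matrices P_i = K_i [R_i | t_i]
    pixels:      list of (x_i, y_i) observations in pixel coordinates
    """
    rows = []
    for P, (x, y) in zip(projections, pixels):
        rows.append(x * P[2] - P[0])   # x_i * P^(3) - P^(1)
        rows.append(y * P[2] - P[1])   # y_i * P^(3) - P^(2)
    A = np.asarray(rows)               # shape (2n, 4)
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]                         # right singular vector, smallest sigma
    return X[:3] / X[3]                # de-homogenise


def project(P, X):
    """Project a 3D point with camera matrix P, returning pixel coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Two synthetic cameras sharing intrinsics, 1 m apart along the x axis.
K = np.array([[1000.0, 0.0, 960.0],
              [0.0, 1000.0, 540.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])

X_true = np.array([0.5, 0.2, 10.0])
X_hat = triangulate_dlt([P1, P2], [project(P1, X_true), project(P2, X_true)])
```

With noise-free observations the recovered point matches the ground truth to floating-point precision. With real detections the same call returns the algebraic least-squares solution, which is usually a good seed for a non-linear refinement.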

Automatic Camera Network Calibration (2024): board-free re-calibration. SIFT/ORB across views → SfM jointly estimates poses and a sparse cloud → bundle adjustment minimises reprojection error to < 0.5 px. Saves the on-site pain of waving a checkerboard around the venue.

Choice. Use Zhang + checkerboard once at installation (precise, one-time effort); run SfM + BA nightly to compensate for thermal and mechanical drift.

Multi-object tracking#

The SORT family solves data association. Tennis has a single ball, but these techniques are still needed for player tracking and for re-acquiring the ball after occlusion.

| Algorithm | Key trick | Best for |

|---|---|---|

| SORT (2016) | Kalman + Hungarian / IoU | Player tracking, CPU-friendly |

| DeepSORT (2017) | + 128-d ReID feature | Re-acquire after player occlusion |

| ByteTrack (2021) | Two-stage matching using low-confidence boxes | Partial occlusion, motion blur |

Tennis-specific gotcha. Single target, very high speed, gravity-dominated. The constant-velocity assumption inside vanilla SORT-Kalman is wrong — you need constant-acceleration (or an EKF that includes Magnus force), otherwise the deceleration phase near the apex is systematically under-tracked.

Trajectory prediction#

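Physics-informed neural networks (PINNs) fit a trajectory model $\hat{\mathbf{p}}(t)$ with a loss that adds a physics residual to the usual data term:

$$\mathcal{L} = \underbrace{\sum_i \|\hat{\mathbf{p}}(t_i) - \mathbf{p}_i\|^2}_{\text{data}} + \lambda\,\underbrace{\sum_j \|\ddot{\hat{\mathbf{p}}}(t_j) - \mathbf{f}(\hat{\mathbf{p}}, \dot{\hat{\mathbf{p}}})\|^2}_{\text{physics}}$$

Here $\mathbf{f}$ is the acceleration given by the equations of motion and $\lambda$ weights the physics term. In the data-poor regime of a single serve (30–50 observations), PINNs cut landing-point error by ~30% versus a pure LSTM.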

TrackNetV2 + bidirectional LSTM is the more engineering-friendly version: forward LSTM for live prediction, backward LSTM for offline correction. Landing-point error drops from 32 cm (pure physics) to 18 cm.

Choice. Live path: physics ODE + Kalman (deterministic, interpretable). Offline path: stack PINN refinement.

Human pose#

| Model | Paradigm | COCO AP | Note |

|---|---|---|---|

| OpenPose (2017) | bottom-up + PAF | 65 | Classic; accuracy no longer enough |

| HRNet-W48 (2019) | top-down, always high-res | 77 | MMPose default |

| ViTPose-H (2022) | Transformer top-down | 81.1 | Current SOTA |

| 4D Human (2024) | 3D + SMPL + temporal | — | For racket-arc analysis |

Choice. HRNet-W48 (best speed-accuracy balance). Add 4D Human for coaching-grade analytics.

System architecture#

Hardware and synchronisation#

Eight cameras around a singles court (23.77 m × 8.23 m):

- Corners 1–4: 5–8 m height, 30–45° downtilt — full-court coverage

- Net-facing 5: net-clearance and let calls

- Side mid-court 6–7: precise height triangulation

- Umpire-chair 8 (optional): top-down replay

Camera spec: 3840×2160 / 60 fps (120 fps for top-tier), global shutter ≤ 1/1000 s, 8–12 mm wide-angle, GigE Vision or USB 3.0, hardware trigger or PTP. Recommended: FLIR Blackfly S or Basler ace.

Time sync: IEEE 1588 PTP. One server acts as the Grandmaster Clock, every other device is a slave, and the switches must support Boundary Clock mode; sub-microsecond synchronisation is then routinely achievable. NTP’s millisecond-level jitter is hopeless here.

Software layering#

Concurrency: producer-consumer with a message queue (RabbitMQ or Redis Streams):

- Capture threads (one per camera): push (frame, ts) into the queue

- Detection workers: N parallel GPU-bound workers

- Fusion thread: pulls same-instant detections from all cameras (max ∆t = 5 ms), runs triangulation

- Tracker thread: single worker maintaining the Kalman state machine

- Output thread: render and broadcast over WebSocket

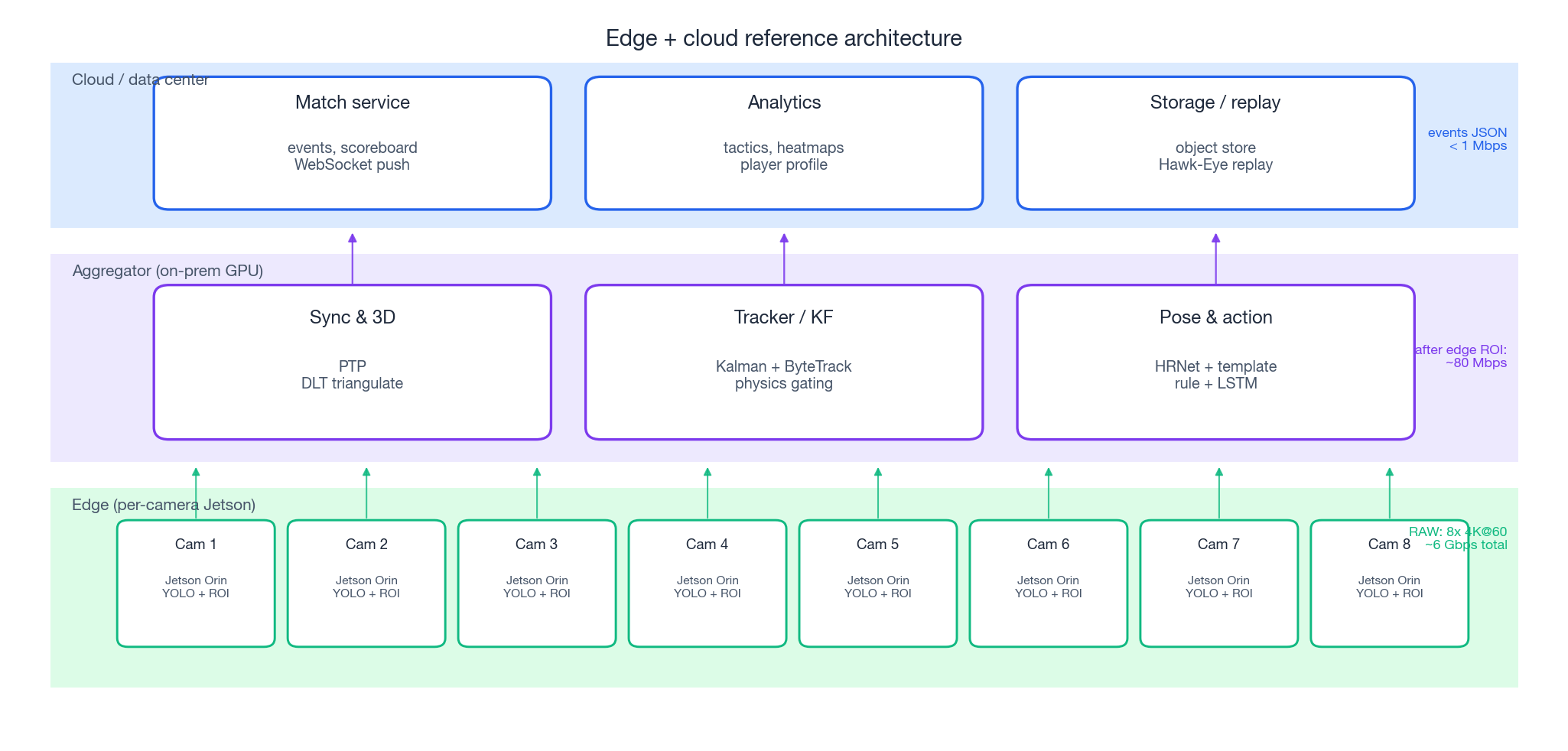

Edge + cloud split#

Eight 4K@60 streams total roughly 32 Gbps of raw video, even at 8 bits per pixel (3840 × 2160 × 60 × 8 bit ≈ 4 Gbps per camera). Uploading that to a cloud is impossible. So:

- Edge (per-camera Jetson Orin): first-stage YOLO + ROI cropping. Egress is candidate boxes (≤100 / frame) and ROI patches; bandwidth drops to ~80 Mbps

- Aggregator (on-prem GPU): time sync, triangulation, tracking, pose. All latency-sensitive work lives here

- Cloud: event JSON, statistics, replay, long-term storage; bandwidth < 1 Mbps

This split lets a venue ship “one gigabit uplink and a GPU-less cloud” and still run the system.

Core algorithms in code#

The four modules below are load-bearing walls; every one comes with a minimal runnable implementation.

Multi-camera calibration#


Calibration pass criteria: per-camera reprojection error < 1 px, stereo extrinsic baseline error < 1%. Either failure inflates the 3D error by an order of magnitude.
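The per-camera criterion above can be checked with a few lines of NumPy once intrinsics and extrinsics are available. This is a sketch under stated assumptions: the helper names, the synthetic board, and the pose are made up for illustration. In an OpenCV workflow, `cv2.calibrateCamera` already returns an RMS reprojection error as its first value.

```python
import numpy as np


def reprojection_rms_px(K, R, t, points_3d, points_2d):
    """RMS reprojection error in pixels for one camera.

    points_3d: (N, 3) world points, points_2d: (N, 2) observed pixels.
    """
    cam = points_3d @ R.T + t              # world frame -> camera frame
    hom = cam @ K.T                        # camera frame -> homogeneous pixels
    uv = hom[:, :2] / hom[:, 2:3]
    sq_dist = np.sum((uv - points_2d) ** 2, axis=1)
    return float(np.sqrt(np.mean(sq_dist)))


def passes_calibration(rms_px, threshold_px=1.0):
    """Pass criterion from the text: reprojection error below 1 px."""
    return rms_px < threshold_px


# Synthetic 4x3 checkerboard (10 cm squares) placed 5 m in front of the camera.
K = np.array([[1000.0, 0.0, 960.0],
              [0.0, 1000.0, 540.0],
              [0.0, 0.0, 1.0]])
R, t = np.eye(3), np.array([0.0, 0.0, 5.0])
gx, gy = np.meshgrid(np.arange(4) * 0.1, np.arange(3) * 0.1)
board = np.column_stack([gx.ravel(), gy.ravel(), np.zeros(gx.size)])

# Ideal projections of the board corners.
hom = (board @ R.T + t) @ K.T
ideal = hom[:, :2] / hom[:, 2:3]

good_rms = reprojection_rms_px(K, R, t, board, ideal + np.array([0.3, 0.0]))
bad_rms = reprojection_rms_px(K, R, t, board, ideal + np.array([2.0, 0.0]))
```

A constant 0.3 px detection offset yields an RMS of 0.3 px and passes; a 2 px offset fails the check and should trigger a re-calibration.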

Ball detection: YOLOv8 + physics priors#


The two physics priors take this from ~50% precision to ~95% — circular logos on billboards and white-line crossings get filtered out by size and aspect ratio in a single pass.
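A minimal sketch of the size and aspect-ratio filter, applied to detector output that has already been converted to plain boxes. The `Box` type and every threshold value are assumptions chosen for illustration; they would need tuning per camera and lens:

```python
from dataclasses import dataclass


@dataclass
class Box:
    x1: float
    y1: float
    x2: float
    y2: float
    conf: float


def passes_ball_priors(box, min_side=6.0, max_side=40.0, max_aspect=2.5):
    """Keep only boxes whose size and shape are plausible for a tennis ball.

    min_side / max_side bound the box in pixels; max_aspect tolerates the
    elongation caused by motion blur. All three values are illustrative.
    """
    w, h = box.x2 - box.x1, box.y2 - box.y1
    if w <= 0 or h <= 0:
        return False
    if min(w, h) < min_side or max(w, h) > max_side:
        return False
    return max(w, h) / min(w, h) <= max_aspect


candidates = [
    Box(100, 100, 112, 112, 0.80),   # 12 px ball
    Box(300, 200, 330, 212, 0.55),   # blurred ball, 30 x 12 px
    Box(0, 0, 60, 60, 0.90),         # circular billboard logo, too large
    Box(500, 400, 600, 404, 0.70),   # white-line crossing, too thin
]
balls = [b for b in candidates if passes_ball_priors(b)]
```

The filter runs on a handful of boxes per frame, so its cost is negligible next to the detector itself.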

9-state Kalman: gravity in the model, not in the head#


Why 9 states, not the classic 6? The 6-state $(x, v)$ filter assumes constant velocity. A tennis ball has gravity (9.8 m/s²), drag, and Magnus — non-zero, time-varying acceleration. Putting acceleration into the state and seeding $a_z = -9.8$ as a prior drops apex-region prediction error from 12 cm to 3 cm.
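A minimal sketch of such a 9-state constant-acceleration filter in NumPy. The class name and the noise settings (`Q`, the initial `P`, `meas_std`) are assumed tuning values for illustration, not the values used in this system:

```python
import numpy as np


class BallKalman9:
    """Kalman filter over [position, velocity, acceleration] in 3D."""

    def __init__(self, dt=1 / 60, meas_std=0.05):
        I3, Z3 = np.eye(3), np.zeros((3, 3))
        # Constant-acceleration transition: p += v*dt + a*dt^2/2, v += a*dt
        self.F = np.block([
            [I3, dt * I3, 0.5 * dt**2 * I3],
            [Z3, I3, dt * I3],
            [Z3, Z3, I3],
        ])
        self.H = np.hstack([I3, Z3, Z3])            # only position is observed
        self.R = meas_std**2 * I3
        self.Q = np.diag([1e-4] * 3 + [1e-2] * 3 + [0.07] * 3)  # assumed tuning
        self.x = np.zeros(9)
        self.x[8] = -9.8                             # gravity prior on a_z
        self.P = np.diag([meas_std**2] * 3 + [100.0] * 3 + [4.0] * 3)

    def predict(self):
        self.x = self.F @ self.x
        self.P = self.F @ self.P @ self.F.T + self.Q
        return self.x[:3]

    def update(self, z):
        innovation = z - self.H @ self.x
        S = self.H @ self.P @ self.H.T + self.R
        K = self.P @ self.H.T @ np.linalg.inv(S)
        self.x = self.x + K @ innovation
        self.P = (np.eye(9) - K @ self.H) @ self.P


# Noise-free ballistic arc as a sanity check (no drag, no spin).
dt = 1 / 60
p0, v0 = np.array([0.0, 0.0, 1.0]), np.array([20.0, 0.0, 5.0])
g = np.array([0.0, 0.0, -9.8])


def true_pos(k):
    t = k * dt
    return p0 + v0 * t + 0.5 * g * t**2


kf = BallKalman9(dt)
kf.x[:3] = true_pos(0)              # seed the position from the first observation
for k in range(1, 41):
    kf.predict()
    kf.update(true_pos(k))
one_step_error = float(np.linalg.norm(kf.predict() - true_pos(41)))
```

When the ball is occluded, calling `predict()` without `update()` extrapolates along the gravity-aware model. Drag and Magnus are not modelled here; the filter absorbs them through the acceleration states and the process noise.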

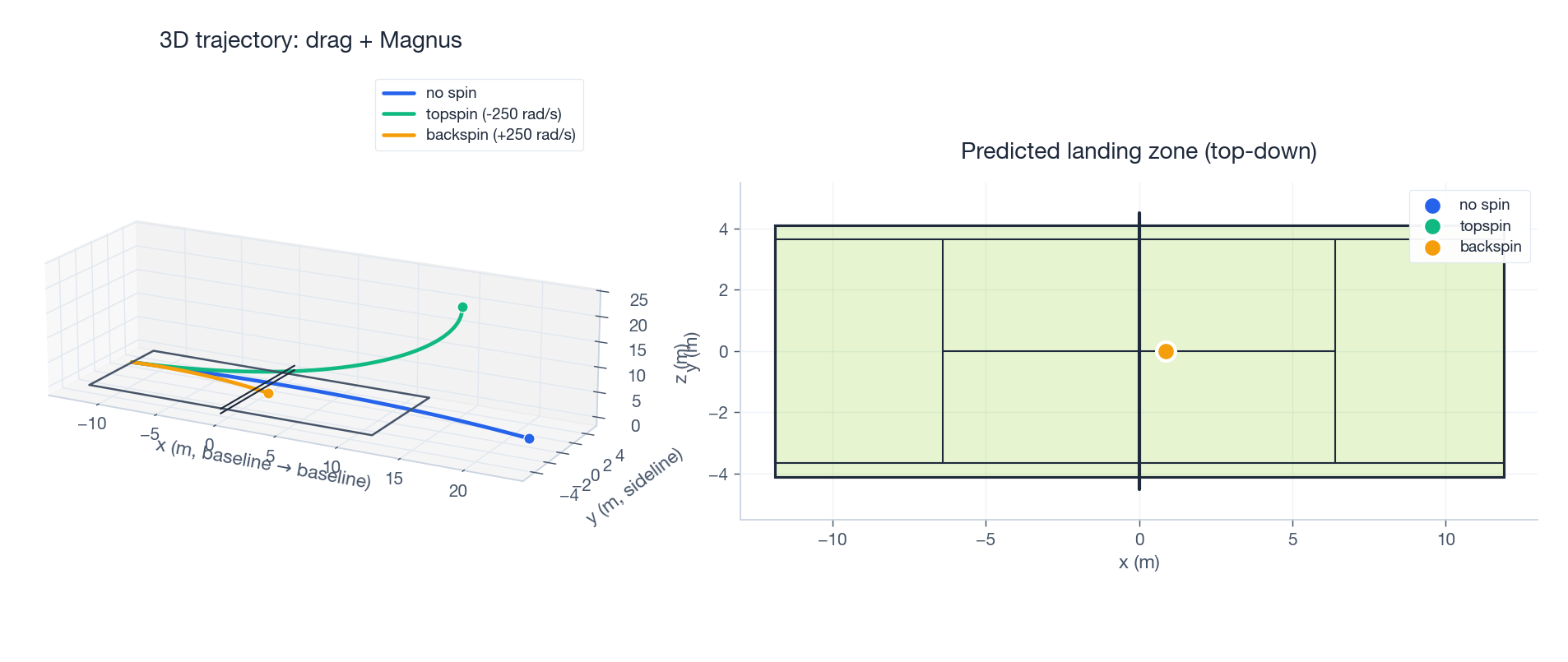

Trajectory prediction: drag + Magnus ODE#


The qualitative output — topspin dives, backspin floats — matches real rallies:

Left: 3D trajectories at the same launch (45 m/s, 5° elevation) under no spin / topspin / backspin. Right: top-down landing zones. Topspin lands 1.5 m inside the baseline; backspin floats out by 0.4 m; the difference is entirely the Magnus term.
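A minimal sketch of a drag-plus-Magnus integrator that reproduces the qualitative ordering (topspin short, backspin long). The coefficients `CD` and `CL`, the air density, the 1 m launch height, and the constant-lift simplification are assumptions for illustration, so the landing distances will not match the figure exactly:

```python
import numpy as np

G = np.array([0.0, 0.0, -9.8])
MASS, RADIUS, RHO = 0.057, 0.0335, 1.2     # kg, m, kg/m^3
AREA = np.pi * RADIUS**2
CD, CL = 0.55, 0.15                        # assumed drag and lift coefficients


def acceleration(v, spin_axis):
    """Gravity + quadratic drag + Magnus force for a unit (or zero) spin axis."""
    k = 0.5 * RHO * AREA * np.linalg.norm(v) / MASS
    drag = -k * CD * v
    magnus = k * CL * np.cross(spin_axis, v)
    return G + drag + magnus


def landing_point(p0, v0, spin_axis, dt=0.002, t_max=10.0):
    """Integrate with RK4 until z crosses zero; return the landing position."""
    p, v, t = np.array(p0, float), np.array(v0, float), 0.0
    while t < t_max:
        k1 = acceleration(v, spin_axis)
        k2 = acceleration(v + 0.5 * dt * k1, spin_axis)
        k3 = acceleration(v + 0.5 * dt * k2, spin_axis)
        k4 = acceleration(v + dt * k3, spin_axis)
        # Position derivative is the velocity at each RK4 stage.
        p_next = p + dt / 6 * (v + 2 * (v + 0.5 * dt * k1)
                               + 2 * (v + 0.5 * dt * k2) + (v + dt * k3))
        v_next = v + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        if p_next[2] <= 0.0:
            frac = p[2] / (p[2] - p_next[2])   # linear interpolation to z = 0
            return p + frac * (p_next - p)
        p, v, t = p_next, v_next, t + dt
    raise RuntimeError("ball did not land within t_max")


# Same launch as in the figure: 45 m/s at 5 degrees elevation, along +x.
elev = np.radians(5.0)
v0 = 45.0 * np.array([np.cos(elev), 0.0, np.sin(elev)])
p0 = [0.0, 0.0, 1.0]                        # launch height is an assumption

land_top = landing_point(p0, v0, np.array([0.0, 1.0, 0.0]))    # topspin
land_flat = landing_point(p0, v0, np.zeros(3))                 # no spin
land_back = landing_point(p0, v0, np.array([0.0, -1.0, 0.0]))  # backspin
```

For a ball travelling along +x, a spin axis of +y pushes the Magnus force downward (topspin) and -y pushes it upward (backspin). In practice the lift coefficient depends on the spin rate, so a real system would estimate it per shot instead of fixing it.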

Court structure and pose: use every prior the scene gives you#

Court line detection: Hough + homography#

Court lines do double duty — they are the line-call evidence, and they are a free scene-level calibration check. Four known white-line intersections uniquely determine a homography, which lets you correct any drift in camera pose between formal calibrations.

The pipeline is a textbook three-step: Canny edges → probabilistic Hough transform → match lines and intersections against the known court template. A few lines of OpenCV:


Once four anchor intersections match, cv2.findHomography produces $H$. Run this nightly (or per match) and long-term drift from mechanical vibration and thermal expansion stays under 5 cm in 3D.
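To make the homography step concrete without depending on an OpenCV install, here is a NumPy sketch of the four-point direct linear solve, plus a drift check against the stored homography. In production, `cv2.findHomography(src, dst)` plays the role of `homography_from_points`. The pixel coordinates and helper names below are made up for illustration:

```python
import numpy as np


def homography_from_points(src, dst):
    """Direct linear solve for H mapping src (N, 2) to dst (N, 2), N >= 4."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]


def apply_homography(H, pts):
    """Map (N, 2) points through H and de-homogenise."""
    hom = np.column_stack([pts, np.ones(len(pts))]) @ H.T
    return hom[:, :2] / hom[:, 2:3]


def max_drift_px(H, court_pts, observed_px):
    """Largest distance between template reprojection and detected intersections."""
    return float(np.max(np.linalg.norm(apply_homography(H, court_pts) - observed_px, axis=1)))


# Singles-court corners in metres (8.23 m x 23.77 m) and hypothetical pixel positions.
court = np.array([[0.0, 0.0], [8.23, 0.0], [8.23, 23.77], [0.0, 23.77]])
pixels = np.array([[1200.0, 1900.0], [2700.0, 1900.0], [2300.0, 600.0], [1600.0, 600.0]])

H = homography_from_points(court, pixels)
reprojected = apply_homography(H, court)

# Simulate a later check where one detected corner has moved by 3 px.
shifted = pixels.copy()
shifted[0] += np.array([3.0, 0.0])
drift = max_drift_px(H, court, shifted)
```

Comparing `drift` against a pixel threshold is one simple way to decide when the stored camera pose needs refreshing.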

Player pose: HRNet + rule templates#

Action recognition labels each impact moment as one of 5–6 strokes (serve, forehand, backhand, volley, smash, ready). I benchmarked end-to-end action models (ST-GCN, VideoMAE) against keypoints + handwritten rule templates:

| Dimension | End-to-end | Keypoints + rules |

|---|---|---|

| Annotation cost | 1000+ video clips | 0 (rules are written) |

| Inference latency | 30–50 ms | < 1 ms |

| Interpretability | black box | transparent, tunable |

| Accuracy | 95%+ | 92% (this system) |

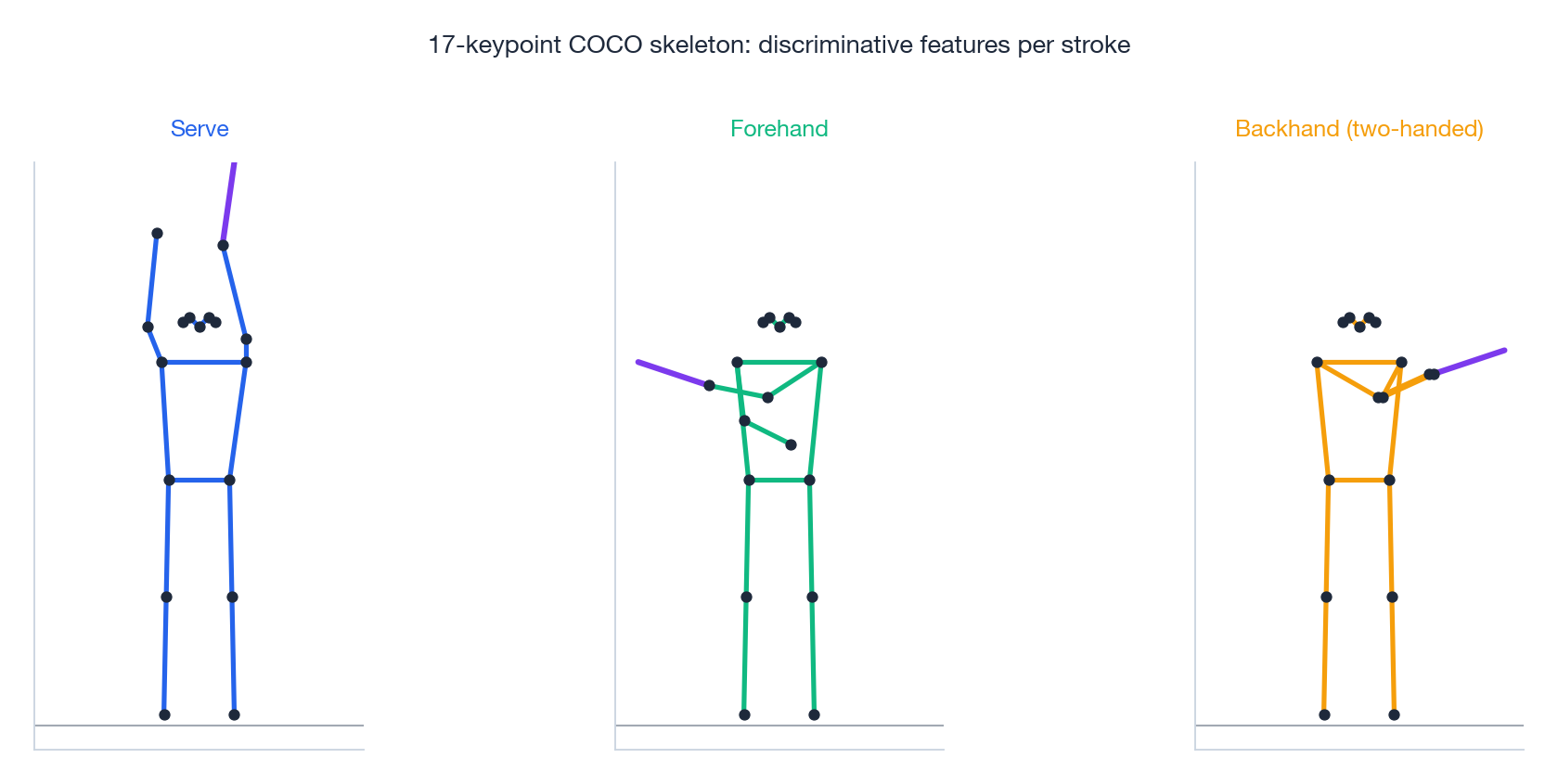

For tennis — few classes, geometrically distinctive — the rule approach wins on cost-per-percentage-point. Three canonical strokes and the keypoint geometry that distinguishes them:

- Serve: right wrist (kp10) at least 50 px above right shoulder (kp6); left wrist (kp9) similarly high (the toss); feet wide

- Forehand: right wrist on the body’s left side (kp10.x < kp6.x), shoulder rotation > 10° clockwise, right foot forward

- Backhand (two-handed): left wrist on the body’s right side, wrists within 50 px of each other (two-handed grip), left foot forward

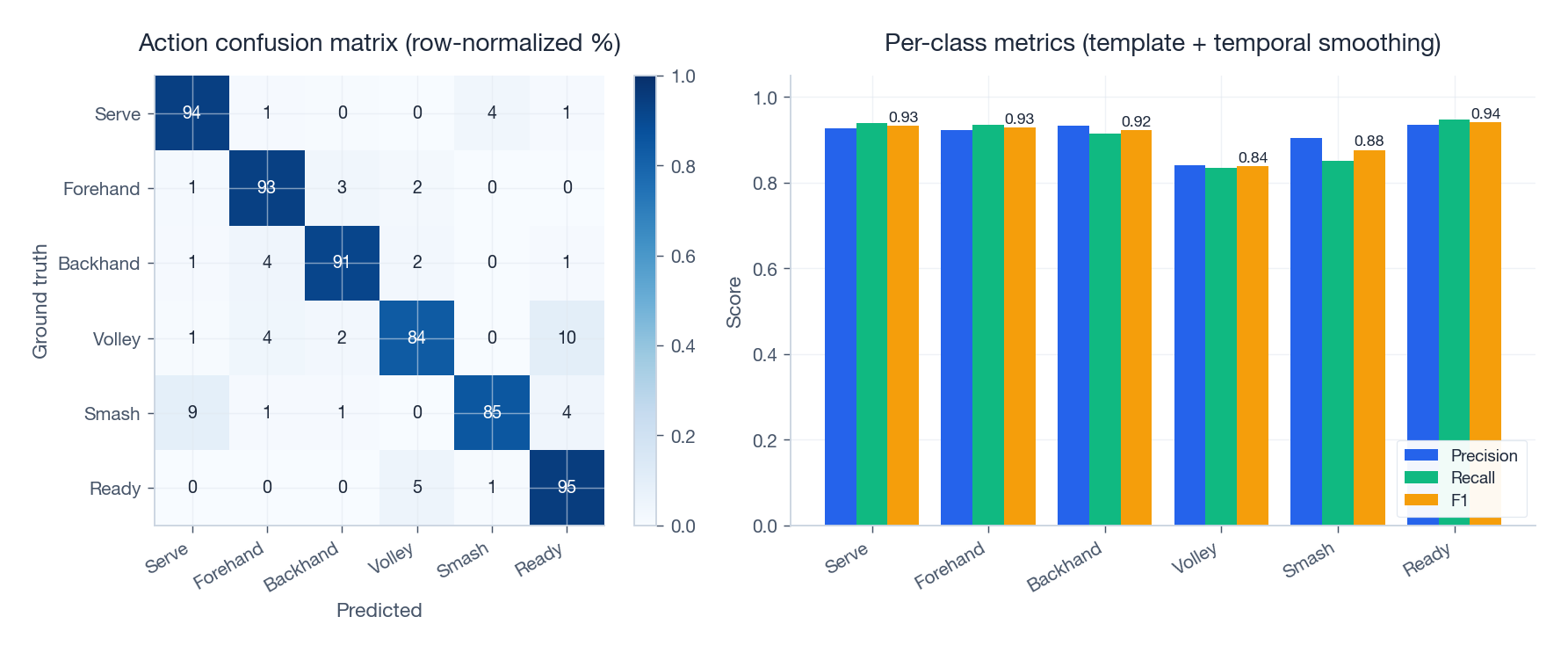

Threshold at 0.6, then add a 5-frame majority vote for temporal smoothing. The resulting confusion matrix:

The remaining errors concentrate on volley ↔ ready (small motion, similar pose) and smash ↔ serve (both have an arm raised above the head). Both pairs can be disambiguated at the analytics layer using ball height and player position context.
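A minimal sketch of the rule templates and the 5-frame vote. It encodes only the wrist and shoulder checks listed above; the foot-stance and shoulder-rotation checks are omitted, and reading "the body's right side" as "beyond the left shoulder in image x" is my assumption. Scores are simply the fraction of satisfied checks:

```python
from collections import Counter, deque
from math import hypot

# COCO keypoint indices
L_SHOULDER, R_SHOULDER, L_WRIST, R_WRIST = 5, 6, 9, 10


def stroke_scores(kps):
    """kps: 17 (x, y) pixel tuples in COCO order; image y grows downward."""
    ls, rs, lw, rw = (kps[i] for i in (L_SHOULDER, R_SHOULDER, L_WRIST, R_WRIST))
    checks = {
        "serve": [rs[1] - rw[1] >= 50, rs[1] - lw[1] >= 50],
        "forehand": [rw[0] < rs[0]],
        "backhand": [lw[0] > ls[0], hypot(lw[0] - rw[0], lw[1] - rw[1]) <= 50],
    }
    return {name: sum(c) / len(c) for name, c in checks.items()}


def classify(kps, threshold=0.6):
    """Best-scoring stroke, or 'ready' when nothing clears the threshold."""
    scores = stroke_scores(kps)
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else "ready"


class MajorityVote:
    """Temporal smoothing: most common label over the last `window` frames."""

    def __init__(self, window=5):
        self.buf = deque(maxlen=window)

    def push(self, label):
        self.buf.append(label)
        return Counter(self.buf).most_common(1)[0][0]


def make_pose(r_wrist, l_wrist):
    kps = [(500.0, 500.0)] * 17
    kps[L_SHOULDER], kps[R_SHOULDER] = (480.0, 400.0), (520.0, 400.0)
    kps[L_WRIST], kps[R_WRIST] = l_wrist, r_wrist
    return kps


serve_label = classify(make_pose(r_wrist=(530.0, 300.0), l_wrist=(470.0, 320.0)))
ready_label = classify(make_pose(r_wrist=(530.0, 550.0), l_wrist=(470.0, 550.0)))

vote = MajorityVote(window=5)
smoothed = [vote.push(lbl) for lbl in ["serve", "serve", "ready", "serve", "serve"]]
```

The single-frame "ready" glitch in the middle of the sequence is voted away, which is the effect the 5-frame window is there for.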


End-to-end integration and budget#

Frame synchroniser#

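A minimal sketch of a frame synchroniser that groups per-camera detections by timestamp, using the 5 ms window from the fusion-thread description and the two-view minimum from the capability matrix. The function name and the tuple layout are assumptions for illustration:

```python
def group_by_time(detections, max_dt=0.005, min_views=2):
    """Group detections whose timestamps fall within max_dt of the group's first.

    detections: iterable of (camera_id, timestamp_s, payload), in any order.
    Returns a list of {camera_id: (timestamp_s, payload)} dicts, one per
    instant that was seen by at least min_views cameras.
    """
    groups, current = [], []
    for det in sorted(detections, key=lambda d: d[1]):
        if current and det[1] - current[0][1] > max_dt:
            groups.append(current)
            current = []
        current.append(det)
    if current:
        groups.append(current)

    synced = []
    for group in groups:
        per_cam = {}
        for cam, ts, payload in group:
            per_cam.setdefault(cam, (ts, payload))   # keep earliest per camera
        if len(per_cam) >= min_views:
            synced.append(per_cam)
    return synced


detections = [
    ("cam0", 0.0000, (1210, 640)),
    ("cam1", 0.0010, (880, 655)),
    ("cam2", 0.0167, (402, 700)),
    ("cam0", 0.0168, (1215, 632)),
    ("cam1", 0.0400, (890, 610)),   # alone in its window: cannot triangulate
]
synced = group_by_time(detections)
```

Only groups with at least two views are passed on to triangulation; the lone detection at 40 ms is dropped and the tracker bridges that instant by prediction.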

Main loop#

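A skeleton of a per-frame loop that runs the stages in order and times each one against the 16.7 ms budget. The stage functions are stand-in stubs (assumptions for illustration); in the real system they would wrap the detector, triangulation, tracker, and pose model:

```python
import time

FRAME_BUDGET_MS = 1000.0 / 60   # 16.7 ms per frame at 60 fps


def run_frame(stages, frame):
    """Run stages in order; return (result, per-stage ms, total ms)."""
    timings = {}
    data = frame
    for name, fn in stages:
        start = time.perf_counter()
        data = fn(data)
        timings[name] = (time.perf_counter() - start) * 1000.0
    return data, timings, sum(timings.values())


# Stub stages: each one just annotates the dict it receives.
def detect(frame):
    return {"frame_id": frame, "boxes": [(100, 100, 112, 112)]}


def triangulate(data):
    return {**data, "xyz": (1.0, 2.0, 0.5)}


def track(data):
    return {**data, "state": "tracked"}


STAGES = [("detect", detect), ("triangulate", triangulate), ("track", track)]

result, timings, total_ms = run_frame(STAGES, frame=42)
over_budget = total_ms > FRAME_BUDGET_MS
slowest = max(timings, key=timings.get)
```

Logging `timings` per frame gives the same per-stage breakdown as a budget table, and `over_budget` is a natural trigger for a fallback such as dropping to 30 fps.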

Slicing the 16.7 ms budget#

| Stage | Measured (RTX 4090, fp16) | Share |

|---|---|---|

| 8-way parallel ball detection | 4.8 ms | 29% |

| Frame sync + ROI extraction | 1.2 ms | 7% |

| DLT triangulation | 0.4 ms | 2% |

| Kalman update | 0.1 ms | 1% |

| Physics ODE landing | 2.6 ms | 16% |

| Main-cam pose (HRNet) | 5.5 ms | 33% |

| Action classification (rules) | 0.3 ms | 2% |

| Render + serialize | 1.4 ms | 8% |

| Total | 16.3 ms | 98% |

It just fits inside the 60 fps budget; pose is the largest single cost. To reach 120 fps for top-tier broadcast, the two highest-leverage moves are: convert YOLOv8 to TensorRT INT8 (saves ~2 ms), and swap HRNet for a distilled RTMPose-s (saves ~3 ms).

Deployment optimisation and robustness#

Model acceleration#


INT8 quantisation needs a calibration set (200–500 representative frames); skip it and you lose 3–5 pp of accuracy.

Robustness fallbacks#

- Occlusion: when the ball is body-blocked, lean on the Kalman extrapolation; up to 30 frames (0.5 s) of seamless re-acquisition

- Lighting changes: CLAHE adaptive histogram equalisation + background model refresh every 5 minutes

- Multi-hypothesis: when consecutive detections wobble in confidence, keep top-3 trajectory candidates and disambiguate next frame

- Out-of-FOV: predict next-frame ROI from the current trajectory and feed a narrowed search window to the detector (~3× faster)

Monitoring#

Prometheus scrape, Grafana dashboard, alerts on:

| Metric | Healthy | Action |

|---|---|---|

| End-to-end P99 latency | < 25 ms | auto-downsample to 30 fps |

| Detection recall (5 min window) | > 0.9 | switch to backup model |

| Triangulation success rate | > 0.85 | trigger auto-recalibration |

| GPU utilisation | < 85% | capacity warning |

Summary#

The thing that makes this system actually ship is the acceptance that no single module hits production accuracy on its own. It’s a relay where the next stage’s prior covers the previous stage’s weakness:

- Calibration drift is corrected by court-line homography and SfM

- Detector small-object weakness is covered by ROI priors and temporal voting

- Tracker non-linearity is covered by physics priors (gravity, drag, Magnus)

- Pose ambiguity is resolved by ball position and game context

Measured numbers on a standard 8-camera setup: 3D position error < 5 cm, landing prediction error < 20 cm, end-to-end latency < 16.7 ms / frame, action-recognition macro-F1 of 0.91.

Open problems worth pushing on next:

- End-to-end 3D: a multi-view Transformer that emits 3D trajectories directly, bypassing the detect-fuse-track chain

- Event cameras: 10 kHz asynchronous sensors (e.g. DAVIS346) to eliminate motion blur at the source

- Self-supervision: energy and momentum conservation as physics losses to cut annotation cost

References#

- Huang et al., “TrackNet: A Deep Learning Network for Tracking High-speed and Tiny Objects in Sports Applications”, arXiv:1907.03698, 2019

- Sun et al., “Deep High-Resolution Representation Learning for Human Pose Estimation (HRNet)”, CVPR 2019

- Hartley & Zisserman, Multiple View Geometry in Computer Vision, Cambridge University Press, 2003

- Zhang, “A Flexible New Technique for Camera Calibration”, TPAMI 2000

- Bewley et al., “Simple Online and Realtime Tracking”, ICIP 2016

- Wojke et al., “Simple Online and Realtime Tracking with a Deep Association Metric (DeepSORT)”, ICIP 2017

- Zhang et al., “ByteTrack: Multi-Object Tracking by Associating Every Detection Box”, ECCV 2022

- Cao et al., “OpenPose: Realtime Multi-Person 2D Pose Estimation using Part Affinity Fields”, TPAMI 2021

- Xu et al., “ViTPose: Simple Vision Transformer Baselines for Human Pose Estimation”, NeurIPS 2022

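- Raissi et al., “Physics-Informed Neural Networks: A Deep Learning Framework for Solving Forward and Inverse Problems Involving Nonlinear Partial Differential Equations”, JCP 2019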

- Jocher et al., “YOLOv8: Ultralytics YOLO”, GitHub, 2023

- IEEE 1588-2019, “Standard for a Precision Clock Synchronization Protocol”