Tags

LSTM

NLP (3): RNN and Sequence Modeling

How RNNs, LSTMs, and GRUs process sequences with memory. We derive vanishing gradients from first principles, build a character-level text generator, and implement a Seq2Seq translator in PyTorch.

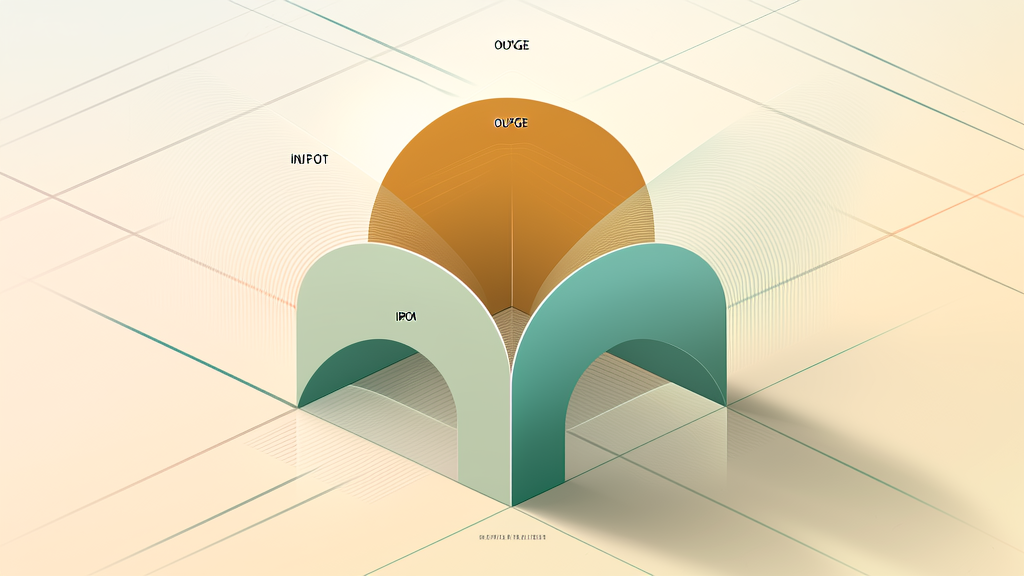

Time Series Forecasting (2): LSTM — Gate Mechanisms and Long-Term Dependencies

How LSTM's forget, input, and output gates mitigate the vanishing gradient problem. Complete PyTorch code for time series forecasting with practical tuning tips.